
Observation & Experiments: Watch, listen, and learn…

Observing Users
- Not as easy as you think
- One of the best ways to gather feedback about your interface
- Watch, listen, and learn as a person interacts with your system
- Qualitative & quantitative, end users, experimental or naturalistic

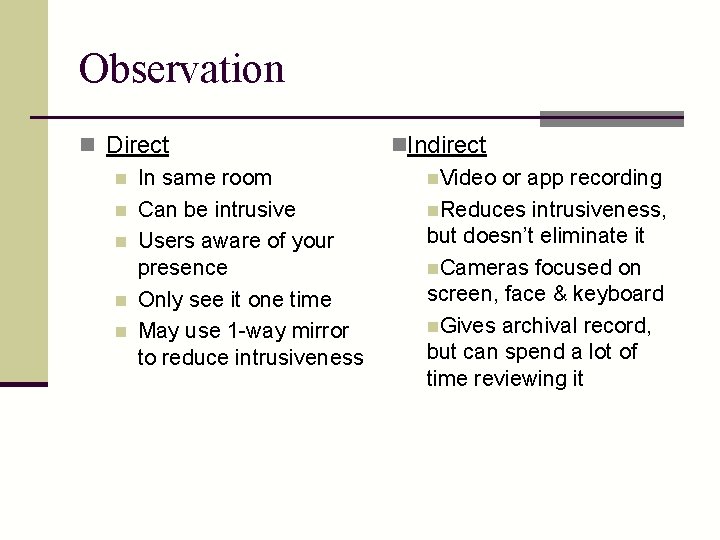

Observation
- Direct
  - In the same room
  - Can be intrusive: users are aware of your presence
  - You only see it one time
  - May use a one-way mirror to reduce intrusiveness
- Indirect
  - Video or app recording
  - Reduces intrusiveness, but doesn't eliminate it
  - Cameras focused on screen, face & keyboard
  - Gives an archival record, but you can spend a lot of time reviewing it

Location
- Observations may be
  - In the lab (maybe a specially built usability lab)
    - Easier to control
    - Can have the user complete a set of tasks
  - In the field
    - Watch their everyday actions
    - More realistic
    - Harder to control other factors

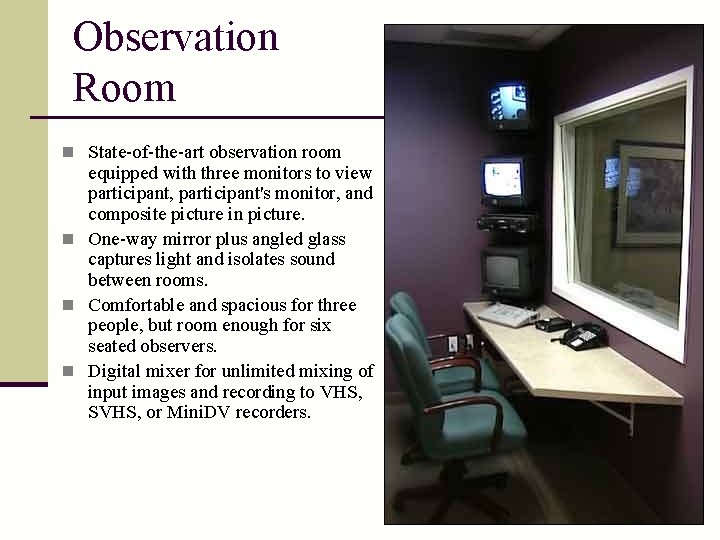

Observation Room
- State-of-the-art observation room equipped with three monitors to view the participant, the participant's monitor, and a composite picture-in-picture.
- One-way mirror plus angled glass captures light and isolates sound between rooms.
- Comfortable and spacious for three people, but with room enough for six seated observers.
- Digital mixer for unlimited mixing of input images and recording to VHS, SVHS, or Mini DV recorders.

Task Selection
- What tasks are people performing?
- Are the tasks representative and realistic?
- Do they deal with the specific parts of the interface you want to test?
- Are there problematic tasks?
- Don't forget to pilot your entire evaluation!

Engaging Users in Evaluation
- What's going on in the user's head?
- Use a verbal protocol, in which users describe their thoughts
- Qualitative techniques
  - Think-aloud: can be very helpful
  - Post-hoc verbal protocol: review video
  - Critical incident logging: positive & negative
  - Structured interviews: good questions
    - “What did you like best/least?”
    - “How would you change…?”

Think Aloud
- User describes verbally what s/he is thinking and doing
  - What they believe is happening
  - Why they take an action
  - What they are trying to do
- Widely used, popular protocol
- Potential problems:
  - Can be awkward for the participant
  - Thinking aloud can change the way the user performs the task

Cooperative approach
- Another technique: co-discovery learning (constructive interaction)
  - Join pairs of participants to work together
  - Use think-aloud
  - Perhaps have one person be a semi-expert (coach) and one be a novice
  - More natural (like conversation), so it removes some of the awkwardness of individual think-aloud
- Variant: let the coach be from the design team (cooperative evaluation)

Alternative
- What if thinking aloud during the session would be too disruptive?
- Use a post-event protocol
  - User performs the session, then watches the video afterwards and describes what s/he was thinking
  - Sometimes difficult to recall
  - Opens the door to interpretation

What if a user gets stuck?
- Decide ahead of time what you will do.
- Offer assistance or not? What kind of assistance?
- You can ask (in cooperative evaluation):
  - “What are you trying to do…?”
  - “What made you think…?”
  - “How would you like to perform…?”
  - “What would make this easier to accomplish…?”
- Maybe offer hints
- This is why cooperative approaches are used

Inputs
- Need an operational prototype
  - Could use a Wizard of Oz simulation
- Need tasks and descriptions
  - Reflect real tasks
  - Avoid choosing only tasks that your design best supports
  - Minimize necessary background knowledge
  - Pay attention to the time and training required

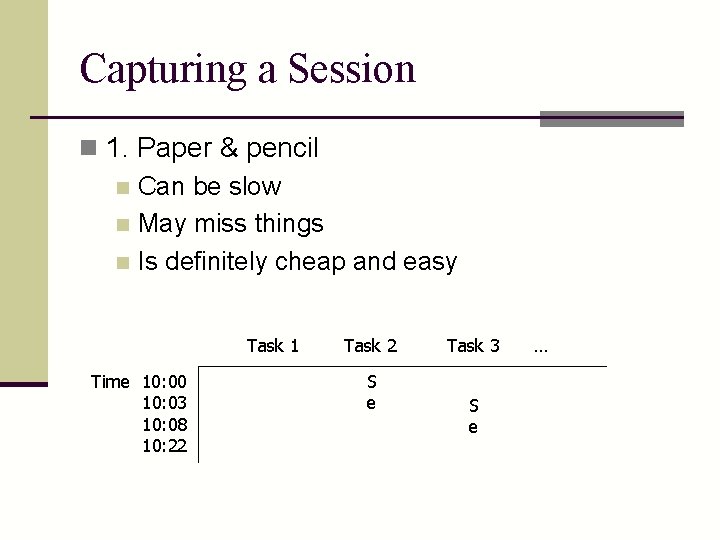

Capturing a Session (1): Paper & pencil
- Can be slow
- May miss things
- Is definitely cheap and easy
- Example coding sheet: one column per task (Task 1, Task 2, Task 3, …) with observed time stamps, e.g. 10:00, 10:03, 10:08, 10:22 under Task 1

Capturing a Session (2): Recording (screen, audio and/or video)
- Good for think-aloud
- Multiple cameras may be needed
- Good, rich record of the session
- Can be intrusive
- Can be painful to transcribe and analyze

Recording (2b): With usability software (such as Morae)
- Combines audio/video, screen, mouse and keyboard clicks, window opening/closing, etc.
- Synchronizes everything
- Allows for annotations
- Can create clips or presentations

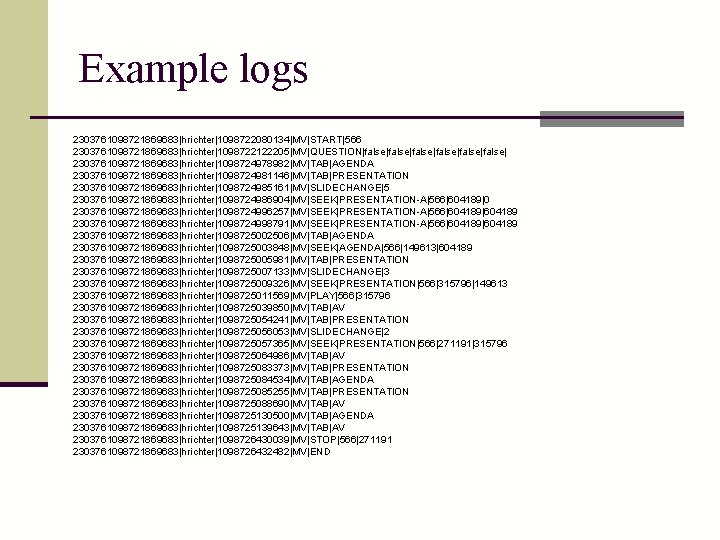

Capturing a Session (3): Software logging
- Modify the software to log user actions
- Can give a time-stamped key press or mouse event
- Two problems:
  - Too low-level; you want higher-level events
  - Massive amount of data; you need analysis tools

Example logs

    2303761098721869683|hrichter|1098722080134|MV|START|566
    2303761098721869683|hrichter|1098722122205|MV|QUESTION|false|false|false|
    2303761098721869683|hrichter|1098724978982|MV|TAB|AGENDA
    2303761098721869683|hrichter|1098724981146|MV|TAB|PRESENTATION
    2303761098721869683|hrichter|1098724985161|MV|SLIDECHANGE|5
    2303761098721869683|hrichter|1098724986904|MV|SEEK|PRESENTATION-A|566|604189|0
    2303761098721869683|hrichter|1098724996257|MV|SEEK|PRESENTATION-A|566|604189
    2303761098721869683|hrichter|1098724998791|MV|SEEK|PRESENTATION-A|566|604189
    2303761098721869683|hrichter|1098725002506|MV|TAB|AGENDA
    2303761098721869683|hrichter|1098725003848|MV|SEEK|AGENDA|566|149613|604189
    2303761098721869683|hrichter|1098725005981|MV|TAB|PRESENTATION
    2303761098721869683|hrichter|1098725007133|MV|SLIDECHANGE|3
    2303761098721869683|hrichter|1098725009326|MV|SEEK|PRESENTATION|566|315796|149613
    2303761098721869683|hrichter|1098725011569|MV|PLAY|566|315796
    2303761098721869683|hrichter|1098725039850|MV|TAB|AV
    2303761098721869683|hrichter|1098725054241|MV|TAB|PRESENTATION
    2303761098721869683|hrichter|1098725056053|MV|SLIDECHANGE|2
    2303761098721869683|hrichter|1098725057365|MV|SEEK|PRESENTATION|566|271191|315796
    2303761098721869683|hrichter|1098725064986|MV|TAB|AV
    2303761098721869683|hrichter|1098725083373|MV|TAB|PRESENTATION
    2303761098721869683|hrichter|1098725084534|MV|TAB|AGENDA
    2303761098721869683|hrichter|1098725085255|MV|TAB|PRESENTATION
    2303761098721869683|hrichter|1098725088690|MV|TAB|AV
    2303761098721869683|hrichter|1098725130500|MV|TAB|AGENDA
    2303761098721869683|hrichter|1098725139643|MV|TAB|AV
    2303761098721869683|hrichter|1098726430039|MV|STOP|566|271191
    2303761098721869683|hrichter|1098726432482|MV|END
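
Raw logs like the one above are too low-level to analyze directly, so a first step is to parse them and roll them up into higher-level events. The Python sketch below is minimal. It assumes, from reading the sample only, that each line holds these pipe-separated fields: session ID, user, a Unix timestamp in milliseconds, an application code, an event name, and event-specific arguments. `LogEvent`, `parse_line`, and `time_per_tab` are hypothetical names. As one example of a higher-level measure, it turns consecutive `TAB` events into seconds spent on each tab.

```python
from collections import defaultdict
from dataclasses import dataclass

# A few lines copied from the sample log above.
SAMPLE = [
    "2303761098721869683|hrichter|1098724978982|MV|TAB|AGENDA",
    "2303761098721869683|hrichter|1098724981146|MV|TAB|PRESENTATION",
    "2303761098721869683|hrichter|1098724985161|MV|SLIDECHANGE|5",
    "2303761098721869683|hrichter|1098725002506|MV|TAB|AGENDA",
    "2303761098721869683|hrichter|1098725005981|MV|TAB|PRESENTATION",
]


@dataclass
class LogEvent:
    session: str
    user: str
    time_ms: int      # assumed: Unix epoch time in milliseconds
    app: str          # assumed: application code ("MV" in the sample)
    name: str         # event type, e.g. START, TAB, SEEK, END
    args: list[str]   # event-specific arguments, kept as strings


def parse_line(line: str) -> LogEvent:
    """Split one pipe-delimited log line into its assumed fields."""
    session, user, time_ms, app, name, *args = line.strip().split("|")
    # Lines such as QUESTION end with '|', which leaves an empty last field.
    return LogEvent(session, user, int(time_ms), app, name, [a for a in args if a])


def time_per_tab(events: list[LogEvent]) -> dict[str, float]:
    """Higher-level summary: seconds spent on each tab, measured from one TAB
    event to the next TAB event (or to the last event in the list)."""
    if not events:
        return {}
    tabs = [ev for ev in events if ev.name == "TAB"]
    end_of_log = events[-1].time_ms
    totals: dict[str, float] = defaultdict(float)
    for i, ev in enumerate(tabs):
        end = tabs[i + 1].time_ms if i + 1 < len(tabs) else end_of_log
        totals[ev.args[0]] += (end - ev.time_ms) / 1000
    return dict(totals)


if __name__ == "__main__":
    events = [parse_line(line) for line in SAMPLE]
    print(time_per_tab(events))
```

The same parsed events can feed other summaries, such as counts per event type or time between `SEEK` events. What counts as a meaningful higher-level event depends on the questions your study asks.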

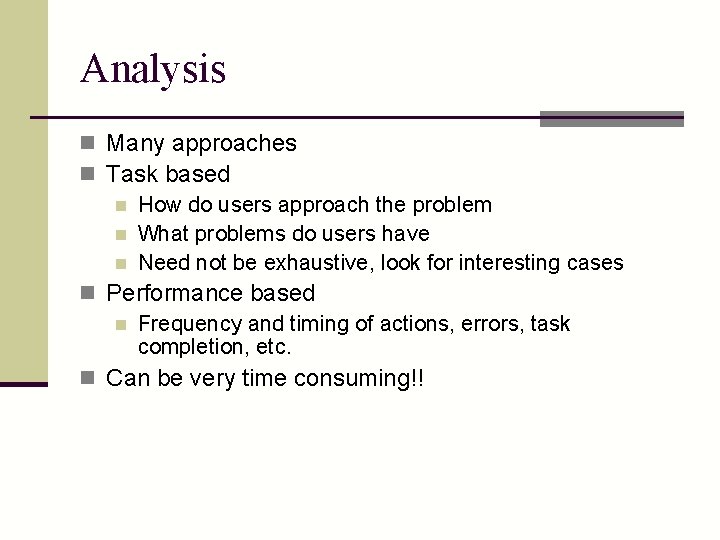

Analysis
- Many approaches
- Task-based
  - How do users approach the problem?
  - What problems do users have?
  - Need not be exhaustive; look for interesting cases
- Performance-based
  - Frequency and timing of actions, errors, task completion, etc.
- Can be very time-consuming!

Experiments: Testing hypotheses…

Experiments
- Test hypotheses in your design
- More controlled examination than observation alone
- Generally quantitative, experimental, with end users

Types of Variables
- Independent
  - What you're studying, what you intentionally vary (e.g., interface feature, interaction device, selection technique, design)
- Dependent
  - Performance measures you record or examine (e.g., time, number of errors)
- Controlled
  - Factors you do not want to affect your study, so you hold them constant or balance them across conditions

“Controlling” Variables
- Prevent a variable from affecting the results in any systematic way
- Methods of controlling for a variable:
  - Don't allow it to vary
    - e.g., all males
  - Allow it to vary randomly
    - e.g., randomly assign participants to different groups
  - Counterbalance: systematically vary it
    - e.g., equal numbers of males and females in each group
- The appropriate option depends on circumstances
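
The last two methods of control can be automated when you recruit. The sketch below assumes participants arrive as a list and that you can read the variable to balance (here, gender) from each record. `assign_randomly` and `assign_counterbalanced` are hypothetical helper names, not a standard API.

```python
import random


def assign_randomly(participants, conditions, seed=None):
    """'Allow it to vary randomly': shuffle, then deal participants to
    conditions in turn so group sizes differ by at most one."""
    rng = random.Random(seed)
    shuffled = list(participants)
    rng.shuffle(shuffled)
    return {c: shuffled[i::len(conditions)] for i, c in enumerate(conditions)}


def assign_counterbalanced(participants, conditions, key, seed=None):
    """'Counterbalance': within each level of `key` (e.g. gender), shuffle and
    deal participants evenly across conditions, so every condition gets the
    same mix of levels (exactly equal when each level's count divides evenly)."""
    rng = random.Random(seed)
    by_level = {}
    for p in participants:
        by_level.setdefault(key(p), []).append(p)
    groups = {c: [] for c in conditions}
    for members in by_level.values():
        rng.shuffle(members)
        for i, p in enumerate(members):
            groups[conditions[i % len(conditions)]].append(p)
    return groups


if __name__ == "__main__":
    # Hypothetical participants: (ID, gender)
    people = [("P1", "F"), ("P2", "F"), ("P3", "F"), ("P4", "F"),
              ("P5", "M"), ("P6", "M"), ("P7", "M"), ("P8", "M")]
    print(assign_randomly(people, ["color", "b/w"], seed=1))
    print(assign_counterbalanced(people, ["color", "b/w"], key=lambda p: p[1], seed=1))
```

Fixing the seed makes an assignment reproducible, which helps when you need to document exactly how groups were formed.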

Hypotheses
- What you predict will happen
- More specifically, the way you predict the dependent variable (e.g., accuracy) will depend on the independent variable(s)
- “Null” hypothesis (H0)
  - States that there will be no effect
  - e.g., “There will be no difference in performance between the two groups”
  - Data are used to try to reject this null hypothesis

Defining Performance
- Based on the task
- Specific, objective measures/metrics
- Examples:
  - Speed (reaction time, time to complete)
  - Accuracy (errors, hits/misses)
  - Production (number of files processed)
  - Score (number of points earned)
  - …others…?
- Preference, satisfaction, etc. (e.g., questionnaire responses) are also valid measurements

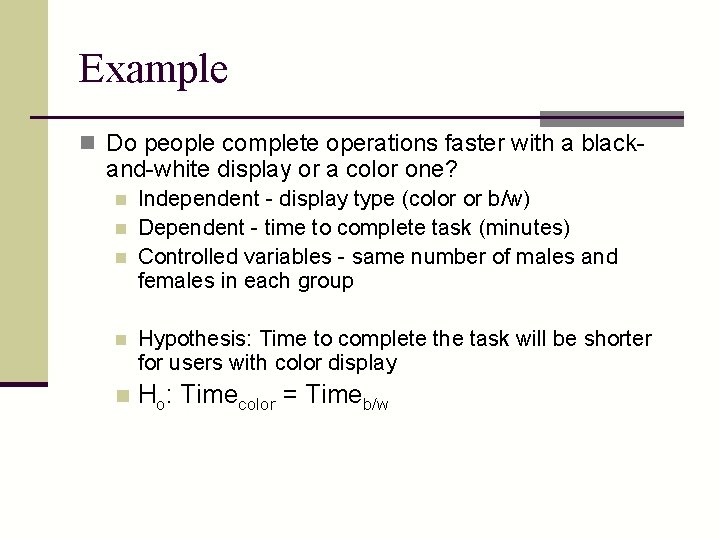

Example
- Do people complete operations faster with a black-and-white display or a color one?
  - Independent variable: display type (color or b/w)
  - Dependent variable: time to complete the task (minutes)
  - Controlled variables: same number of males and females in each group
- Hypothesis: time to complete the task will be shorter for users with a color display
- H0: Time(color) = Time(b/w)

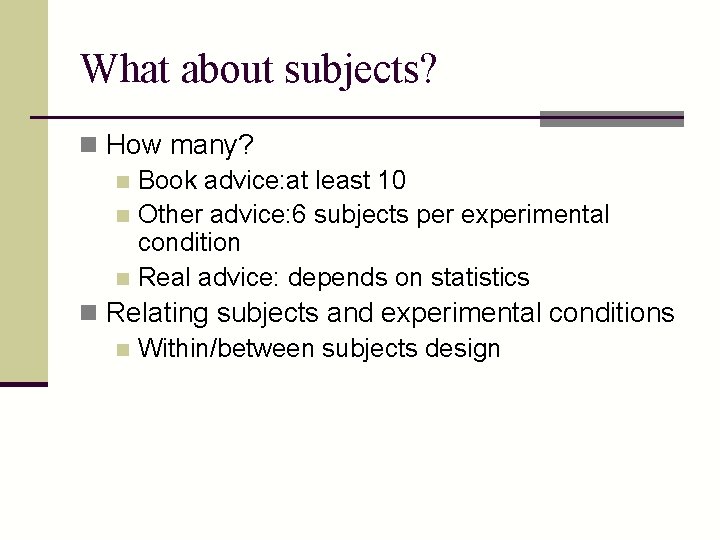

What about subjects?
- How many?
  - Book advice: at least 10
  - Other advice: 6 subjects per experimental condition
  - Real advice: depends on the statistics
- Relating subjects and experimental conditions
  - Within/between-subjects design
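
"Depends on the statistics" usually means a power analysis. You choose the smallest effect you care about, the significance level, and the power (the probability of detecting the effect if it really exists), then solve for the sample size. The sketch below uses the normal approximation for a two-group, between-subjects comparison of means. The effect size `d` (difference in means divided by the standard deviation) is an input you have to estimate, for example from a pilot study. `n_per_group` is a hypothetical helper.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate participants per group for a two-sided, two-sample
    comparison of means: n = 2 * (z_{1-alpha/2} + z_{power})**2 / d**2."""
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return math.ceil(2 * (z_alpha + z_power) ** 2 / effect_size ** 2)


print(n_per_group(0.5))  # a "medium" effect
print(n_per_group(1.0))  # a large effect needs far fewer people
```

For d = 0.5 at alpha = 0.05 and 80% power, this gives 63 per group. Exact t-based tables give a slightly larger number (64), so treat the approximation as a lower bound. Within-subjects designs usually need fewer people, because each person is their own control.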

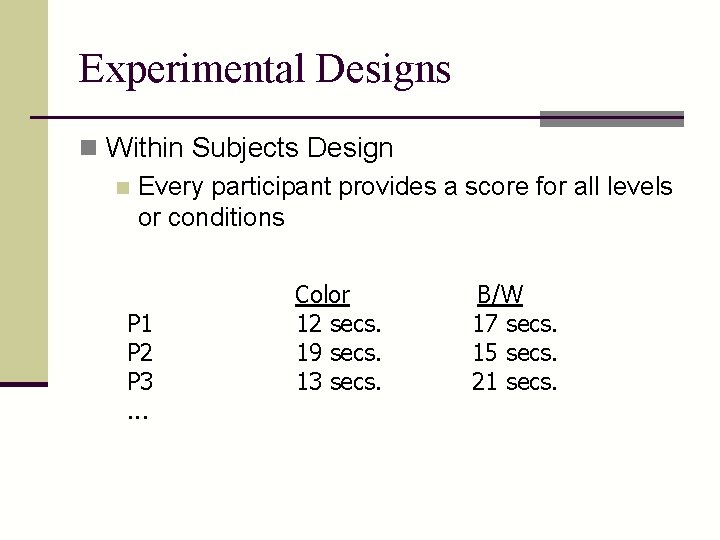

Experimental Designs
- Within-subjects design
  - Every participant provides a score for all levels or conditions

|       | P1      | P2      | P3      | … |
|-------|---------|---------|---------|---|
| Color | 12 secs | 19 secs | 13 secs | … |
| B/W   | 17 secs | 15 secs | 21 secs | … |

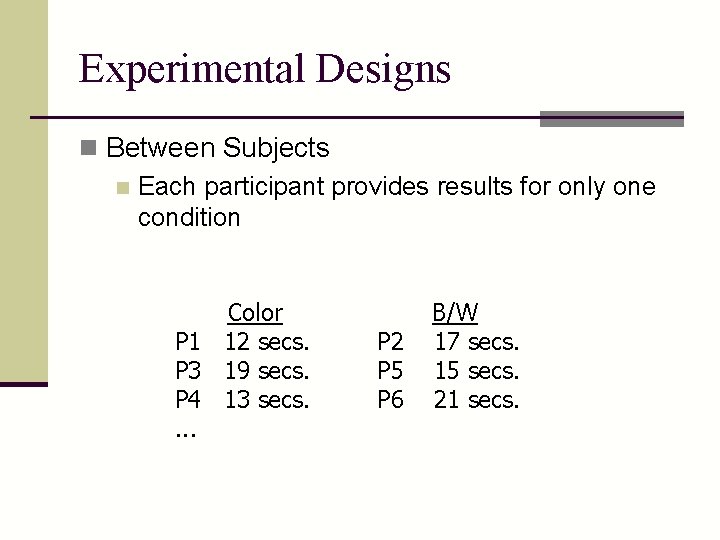

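Experimental Designs
- Between subjects
  - Each participant provides results for only one condition

| Color participant | Time    | B/W participant | Time    |
|-------------------|---------|-----------------|---------|
| P1                | 12 secs | P2              | 17 secs |
| P3                | 19 secs | P5              | 15 secs |
| P4                | 13 secs | P6              | 21 secs |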

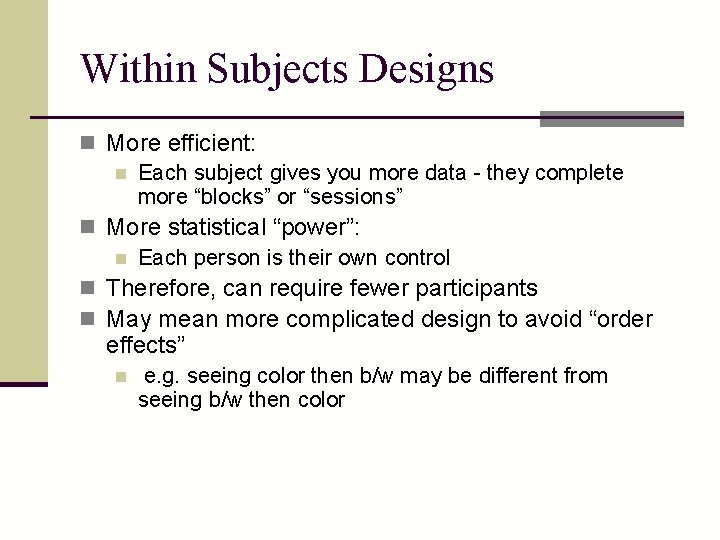

Within-Subjects Designs
- More efficient:
  - Each subject gives you more data: they complete more “blocks” or “sessions”
- More statistical “power”:
  - Each person is their own control
  - Therefore, can require fewer participants
- May mean a more complicated design to avoid “order effects”
  - e.g., seeing color then b/w may be different from seeing b/w then color
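
The "own control" idea shows up directly in the analysis. With within-subjects data, you work with each participant's difference between conditions rather than with two separate groups. Below is a sketch of the paired t statistic, applied to the three illustrative participants from the within-subjects table (color 12, 19, 13 s; b/w 17, 15, 21 s). `paired_t` is a hypothetical helper. Turning t into a p-value needs a t table or a statistics package, which this sketch leaves out.

```python
import math
from statistics import mean, stdev


def paired_t(cond_a, cond_b):
    """Paired t statistic for a within-subjects design: each participant's
    score in condition A is compared with their own score in condition B."""
    if len(cond_a) != len(cond_b):
        raise ValueError("need one score per participant in each condition")
    diffs = [a - b for a, b in zip(cond_a, cond_b)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / math.sqrt(n))
    return t, n - 1  # t statistic and its degrees of freedom


t, df = paired_t([12, 19, 13], [17, 15, 21])
print(f"t = {t:.2f} with {df} degrees of freedom")
```

Here the per-person differences are -5, 4 and -8 seconds, giving t of about -0.83 with 2 degrees of freedom. The differences vary too much across only three people to say anything yet.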

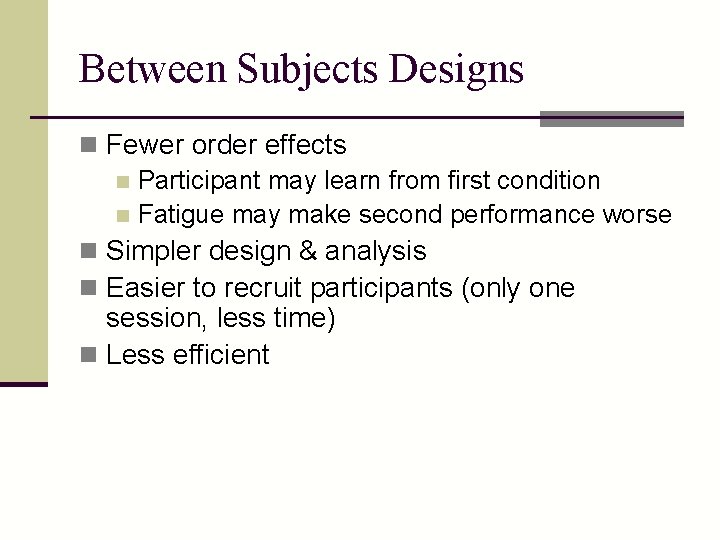

Between-Subjects Designs
- Avoids order effects, where:
  - A participant may learn from the first condition
  - Fatigue may make the second performance worse
- Simpler design & analysis
- Easier to recruit participants (only one session, less time)
- Less efficient: each participant contributes data for only one condition, so you need more participants

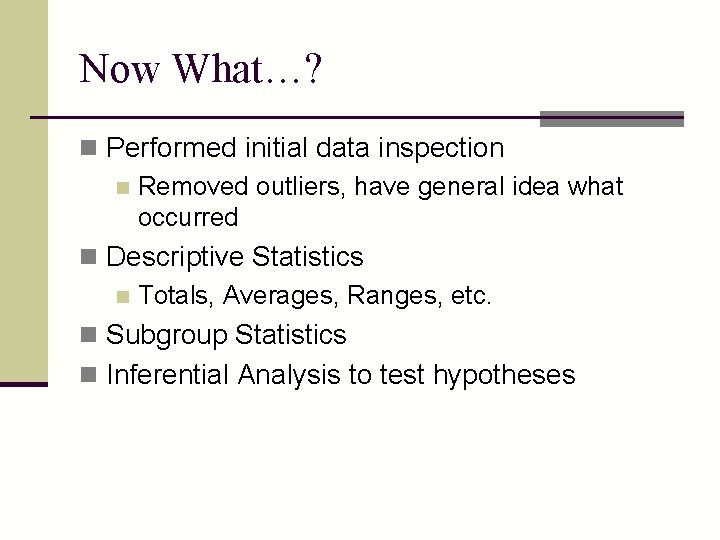

Now What…?
- Performed initial data inspection
  - Removed outliers, have a general idea of what occurred
- Descriptive statistics
  - Totals, averages, ranges, etc.
- Subgroup statistics
- Inferential analysis to test hypotheses

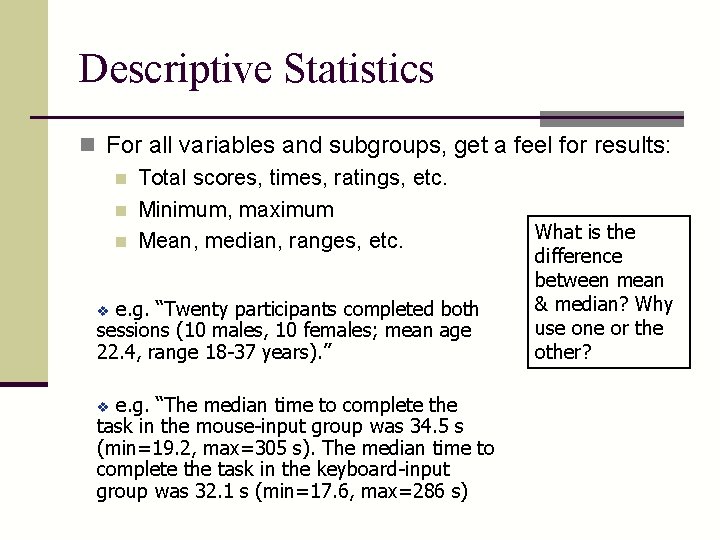

Descriptive Statistics
- For all variables and subgroups, get a feel for the results:
  - Total scores, times, ratings, etc.
  - Minimum, maximum
  - Mean, median, ranges, etc.
- e.g., “Twenty participants completed both sessions (10 males, 10 females; mean age 22.4, range 18-37 years).”
- e.g., “The median time to complete the task in the mouse-input group was 34.5 s (min = 19.2, max = 305 s). The median time to complete the task in the keyboard-input group was 32.1 s (min = 17.6, max = 286 s).”
- What is the difference between mean & median? Why use one or the other?
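
A quick way to see why you would pick the median over the mean: task times are often skewed by a few very slow trials. The times below are made up purely for illustration, and `describe` is a hypothetical helper built on Python's standard `statistics` module.

```python
from statistics import mean, median


def describe(values):
    """Summary numbers worth reporting for each variable and subgroup."""
    return {
        "n": len(values),
        "min": min(values),
        "max": max(values),
        "mean": mean(values),
        "median": median(values),
    }


# Hypothetical task times (seconds): most people are quick, one is very slow.
times = [18.0, 27.5, 30.0, 33.0, 35.5, 41.0, 290.0]
summary = describe(times)
print(f"mean = {summary['mean']:.1f} s, median = {summary['median']:.1f} s")
```

The one slow participant pulls the mean up to about 67.9 s, while the median stays at 33.0 s, close to what a typical participant experienced. This is why skewed measures such as completion time are often reported as medians.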

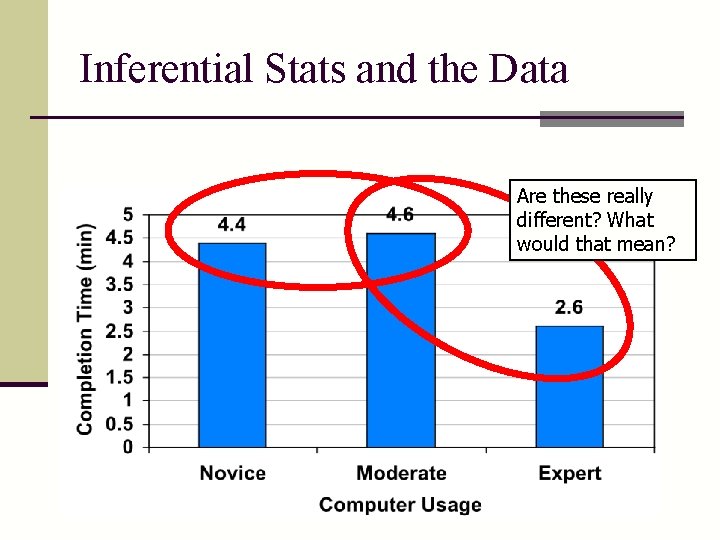

Inferential Stats and the Data
- Are these really different? What would that mean?

Goal of analysis
- Get >95% confidence in the significance of the result
  - that is, reject the null hypothesis (H0: Time(color) = Time(b/w))
  - OR, conclude that there is an influence
  - OR, if there were no real difference, a difference this large would arise by chance less than 1 time in 20
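
A permutation test makes the "1 in 20" idea concrete. It is a substituted technique, not one these slides use, chosen here because it needs no distribution tables. If the null hypothesis is true, the condition labels are arbitrary. So you can count how often relabeling the pooled scores produces a difference at least as large as the one observed. This minimal Python sketch assumes groups small enough that every relabeling can be enumerated. `permutation_p_value` is a hypothetical helper name.

```python
from itertools import combinations
from statistics import fmean


def permutation_p_value(group_a, group_b):
    """Exact two-sided permutation test for a difference in means: the
    fraction of all ways to split the pooled scores into groups of the
    original sizes whose mean difference is at least the observed one."""
    pooled = list(group_a) + list(group_b)
    observed = abs(fmean(group_a) - fmean(group_b))
    extreme = total = 0
    for idx in combinations(range(len(pooled)), len(group_a)):
        chosen = set(idx)
        a = [pooled[i] for i in chosen]
        b = [pooled[i] for i in range(len(pooled)) if i not in chosen]
        total += 1
        if abs(fmean(a) - fmean(b)) >= observed - 1e-9:  # tolerance for float rounding
            extreme += 1
    return extreme / total


print(permutation_p_value([12, 19, 13], [17, 15, 21]))
```

For the illustrative between-subjects times (color 12, 19, 13 s; b/w 17, 15, 21 s), 8 of the 20 possible splits give a mean difference of 3 s or more, so p = 0.4. That is far above 0.05, so with three people per group the null hypothesis cannot be rejected. For larger groups, sample random relabelings instead of enumerating all of them.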

Hypothesis Testing
- Tests to determine differences
  - t-test to compare two means
  - ANOVA (Analysis of Variance) to compare several means
- Need to determine “statistical significance”
- p-value: the probability of getting a result at least as extreme as yours if the null hypothesis were true, i.e., simply by chance
- Significance level (“alpha”): the cutoff for p, often set at 0.05; when there is no real effect, 5% of the time you'll still get a “significant” result just by chance
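
Python's standard `statistics` module has no t-test, so the sketch below computes only the two-sample t statistic and its degrees of freedom. It uses Welch's variant, which does not require equal variances in the two groups. That choice is an assumption, since the slide does not say which t-test to use. To get a p-value, compare t against a t table or use a statistics package. SciPy's `scipy.stats.ttest_ind(a, b, equal_var=False)` runs the same Welch test and reports p. `welch_t` is a hypothetical helper.

```python
import math
from statistics import mean, variance


def welch_t(group_a, group_b):
    """Two-sample t statistic (Welch's version) and its approximate degrees
    of freedom from the Welch-Satterthwaite formula."""
    va = variance(group_a) / len(group_a)  # squared standard error, group A
    vb = variance(group_b) / len(group_b)  # squared standard error, group B
    t = (mean(group_a) - mean(group_b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(group_a) - 1) + vb ** 2 / (len(group_b) - 1))
    return t, df


t, df = welch_t([12, 19, 13], [17, 15, 21])
print(f"t = {t:.2f}, df = {df:.1f}")
```

With the illustrative color and b/w times from the between-subjects example, t is about -1.07 with about 3.8 degrees of freedom. That is well short of the critical value for a two-sided test at alpha = 0.05 (roughly 2.8 to 3.2 for 3 to 4 degrees of freedom).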

Feeding Back Into Design
- What were the conclusions you reached?
- How can you improve on the design?
- What are the quantitative benefits of the redesign?
  - e.g., 2 minutes saved per transaction, which means a 24% increase in production, or $45,000 per year in increased profit
- What are the qualitative, less tangible benefit(s)?
  - e.g., workers will be less bored and less tired, and therefore more interested --> better customer service
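
The step from "2 minutes saved per transaction" to "a 24% increase in production" depends on how long a transaction took before the redesign, which the slide does not state. Here is a small sketch of that arithmetic with hypothetical inputs. `throughput_gain` is an illustrative helper, not from the slides.

```python
def throughput_gain(old_minutes, saved_minutes):
    """Fractional increase in transactions completed per unit time when each
    transaction takes `saved_minutes` less than before."""
    new_minutes = old_minutes - saved_minutes
    if new_minutes <= 0:
        raise ValueError("time saved must be less than the original time")
    # Throughput is proportional to 1 / (time per transaction).
    return old_minutes / new_minutes - 1


# Hypothetical: a 10-minute transaction made 2 minutes faster.
print(f"{throughput_gain(10, 2):.0%}")
```

Working backwards, a 24% gain from 2 saved minutes implies a baseline of about 10.3 minutes per transaction. Converting the gain into dollars needs further inputs (transactions per year, profit per transaction) that the slide does not give.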

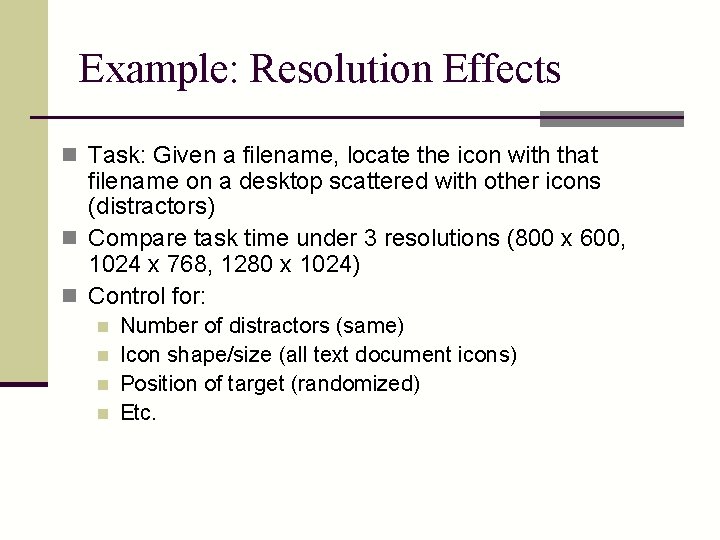

Example: Resolution Effects
- Task: given a filename, locate the icon with that filename on a desktop scattered with other icons (distractors)
- Compare task time under 3 resolutions (800 x 600, 1024 x 768, 1280 x 1024)
- Control for:
  - Number of distractors (same)
  - Icon shape/size (all text document icons)
  - Position of target (randomized)
  - Etc.

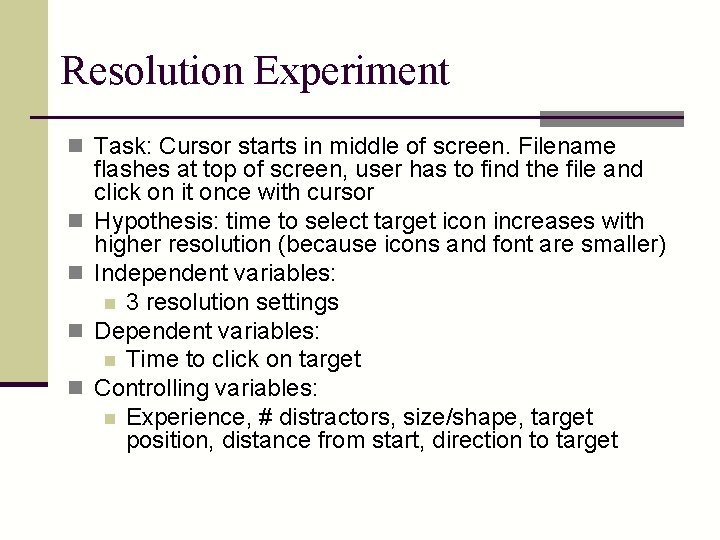

Resolution Experiment
- Task: the cursor starts in the middle of the screen. A filename flashes at the top of the screen; the user has to find the file and click on it once with the cursor
- Hypothesis: time to select the target icon increases with higher resolution (because icons and font are smaller)
- Independent variables:
  - 3 resolution settings
- Dependent variables:
  - Time to click on target
- Controlling variables:
  - Experience, # distractors, size/shape, target position, distance from start, direction to target

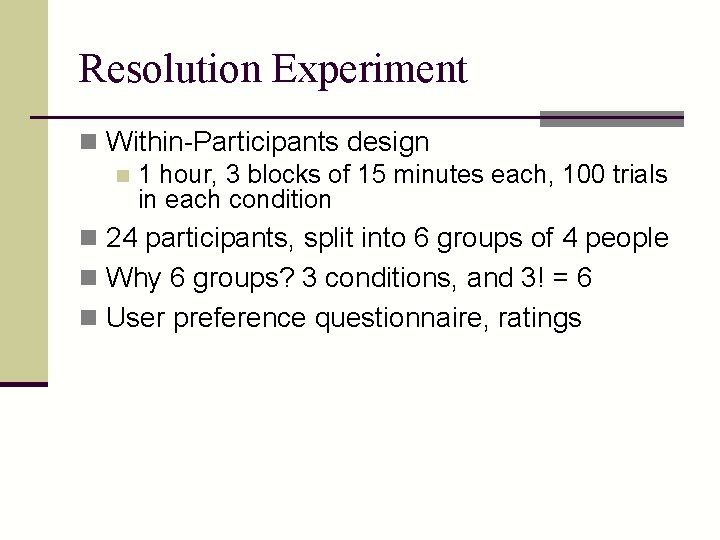

Resolution Experiment
- Within-participants design
  - 1 hour, 3 blocks of 15 minutes each, 100 trials in each condition
  - 24 participants, split into 6 groups of 4 people
    - Why 6 groups? 3 conditions, and 3! = 6
- User preference questionnaire, ratings

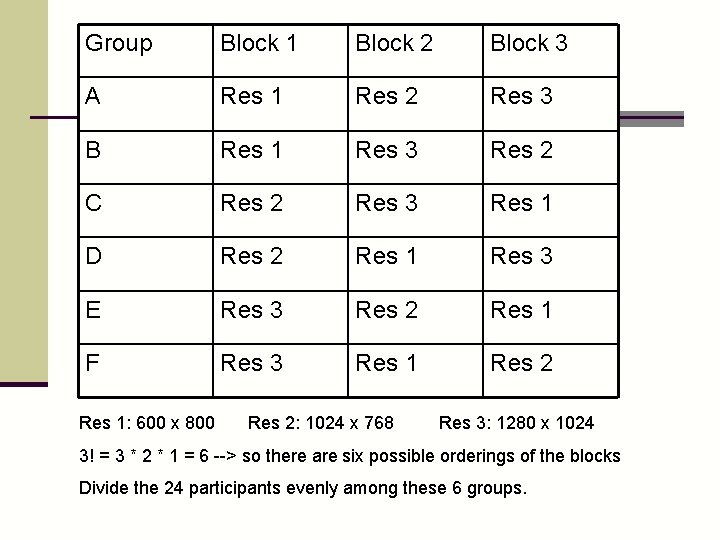

| Group | Block 1 | Block 2 | Block 3 |
|-------|---------|---------|---------|
| A     | Res 1   | Res 2   | Res 3   |
| B     | Res 1   | Res 3   | Res 2   |
| C     | Res 2   | Res 3   | Res 1   |
| D     | Res 2   | Res 1   | Res 3   |
| E     | Res 3   | Res 2   | Res 1   |
| F     | Res 3   | Res 1   | Res 2   |

Res 1: 800 x 600; Res 2: 1024 x 768; Res 3: 1280 x 1024

3! = 3 * 2 * 1 = 6, so there are six possible orderings of the blocks. Divide the 24 participants evenly among these 6 groups.
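
Full counterbalancing means one group per possible ordering of the conditions. `itertools.permutations` generates those orderings directly, and the sketch below deals participants across them. The helper name is hypothetical. It lists orderings in lexicographic order, so its group numbering does not match the A-F labels in the table, although the set of six orderings is the same. In practice you would shuffle the participant list first so that who gets which ordering is random.

```python
from itertools import permutations

RESOLUTIONS = ["800 x 600", "1024 x 768", "1280 x 1024"]


def counterbalanced_schedule(participants, conditions):
    """Map each participant to a block order, using every ordering of the
    conditions equally often (3 conditions -> 3! = 6 orderings)."""
    orders = list(permutations(conditions))
    if len(participants) % len(orders):
        raise ValueError("participant count should be a multiple of the number of orderings")
    return {p: orders[i % len(orders)] for i, p in enumerate(participants)}


schedule = counterbalanced_schedule([f"P{i}" for i in range(1, 25)], RESOLUTIONS)
for participant, order in list(schedule.items())[:6]:
    print(participant, " -> ".join(order))
```

With 24 participants, each ordering is used 4 times, and each resolution appears in each block position for 8 people. That is what lets the group ANOVA check whether order mattered.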

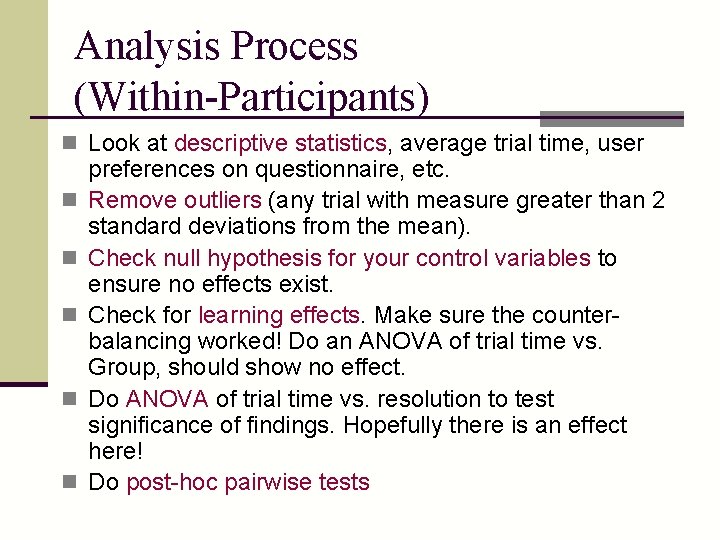

Analysis Process (Within-Participants)
- Look at descriptive statistics: average trial time, user preferences on the questionnaire, etc.
- Remove outliers (any trial with a measure more than 2 standard deviations from the mean).
- Check the null hypothesis for your control variables to ensure no effects exist.
- Check for learning effects. Make sure the counterbalancing worked!
  - Do an ANOVA of trial time vs. group; it should show no effect.
- Do an ANOVA of trial time vs. resolution to test the significance of the findings. Hopefully there is an effect here!
- Do post-hoc pairwise tests.
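
The outlier rule from the steps above can be written as a short filter. This sketch makes three assumptions the slide leaves open: distance from the mean is taken in either direction, the sample standard deviation is used, and the rule is applied to one participant's trials in one condition at a time. `remove_outliers` is a hypothetical helper.

```python
from statistics import mean, stdev


def remove_outliers(trial_times, k=2.0):
    """Drop trials more than k standard deviations from the mean (k = 2 per
    the slide). Mean and SD are computed once, over all the given trials."""
    m, s = mean(trial_times), stdev(trial_times)
    return [t for t in trial_times if abs(t - m) <= k * s]


# Hypothetical trial times (seconds) with one very slow trial.
trials = [7.1, 7.4, 7.6, 7.9, 8.0, 8.2, 7.7, 25.0]
print(remove_outliers(trials))
```

Report how many trials were removed. Dropping data silently makes results hard to interpret or replicate.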

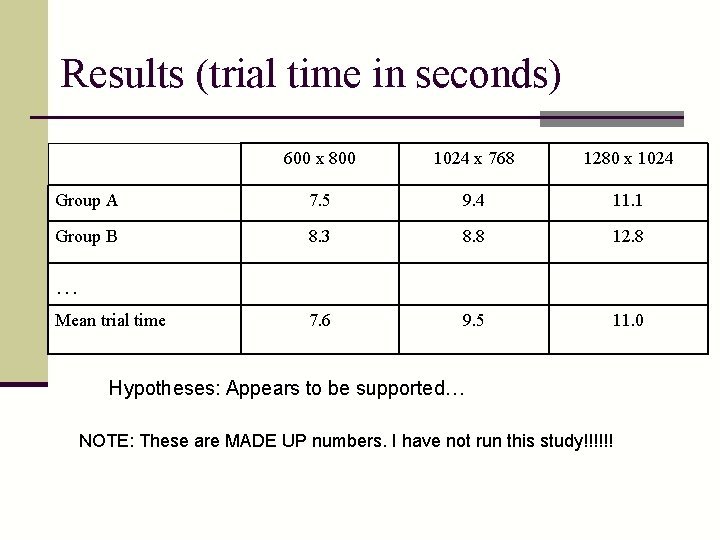

Results (trial time in seconds)

|                 | 800 x 600 | 1024 x 768 | 1280 x 1024 |
|-----------------|-----------|------------|-------------|
| Group A         | 7.5       | 9.4        | 11.1        |
| Group B         | 8.3       | 8.8        | 12.8        |
| …               |           |            |             |
| Mean trial time | 7.6       | 9.5        | 11.0        |

- Hypotheses: appear to be supported…
- NOTE: These are MADE UP numbers. I have not run this study!

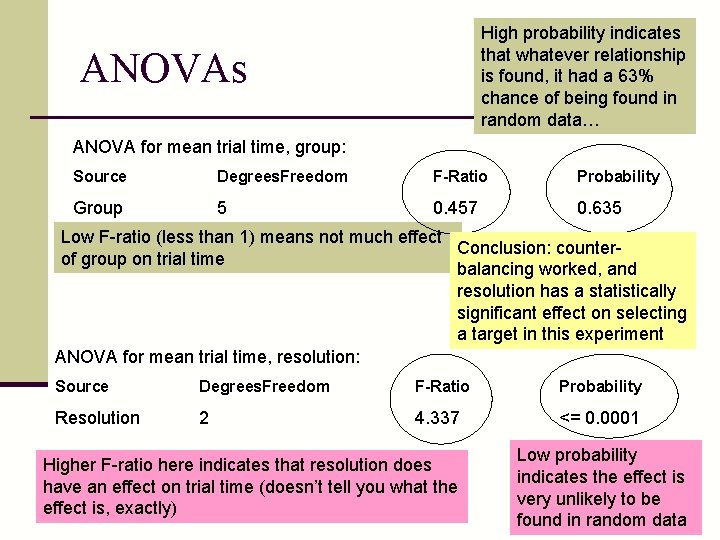

ANOVAs

ANOVA for mean trial time, group:

| Source | Degrees of freedom | F-ratio | Probability |
|--------|--------------------|---------|-------------|
| Group  | 5                  | 0.457   | 0.635       |

- Low F-ratio (less than 1) means not much effect of group on trial time.
- High probability indicates that whatever relationship is found, it had a 63.5% chance of being found in random data.

ANOVA for mean trial time, resolution:

| Source     | Degrees of freedom | F-ratio | Probability |
|------------|--------------------|---------|-------------|
| Resolution | 2                  | 4.337   | <= 0.0001   |

- Higher F-ratio here indicates that resolution does have an effect on trial time (it doesn't tell you exactly what the effect is).
- Low probability indicates the effect is very unlikely to be found in random data.

Conclusion: counterbalancing worked, and resolution has a statistically significant effect on selecting a target in this experiment.
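
To see what the F-ratio measures, here is a sketch of a one-way, between-groups ANOVA. Note the simplification: the resolution study is within-participants, and a proper analysis would use a repeated-measures ANOVA, which also removes variation between participants. This version only illustrates the ratio of between-group to within-group variation. SciPy's `scipy.stats.f_oneway` computes the same between-groups F together with its p-value. `one_way_anova` is a hypothetical helper.

```python
from statistics import fmean


def one_way_anova(*groups):
    """F-ratio for a one-way, between-groups ANOVA.
    Returns (F, df_between, df_within)."""
    all_scores = [x for g in groups for x in g]
    grand = fmean(all_scores)
    # Variation of group means around the grand mean, weighted by group size.
    ss_between = sum(len(g) * (fmean(g) - grand) ** 2 for g in groups)
    # Variation of individual scores around their own group's mean.
    ss_within = sum((x - fmean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f = (ss_between / df_between) / (ss_within / df_within)
    return f, df_between, df_within


print(one_way_anova([1, 2, 3], [4, 5, 6], [7, 8, 9]))
```

An F near 1 means the group means differ about as much as you would expect from the spread within groups, as in the group ANOVA above. A large F means the grouping explains much more variation than noise alone.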

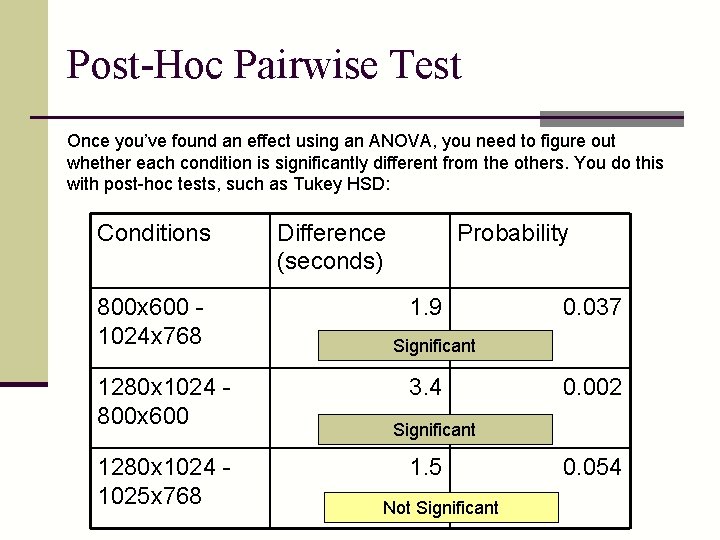

Post-Hoc Pairwise Test

Once you've found an effect using an ANOVA, you need to figure out whether each condition is significantly different from the others. You do this with post-hoc tests, such as Tukey HSD:

| Conditions                 | Difference (seconds) | Probability | Result          |
|----------------------------|----------------------|-------------|-----------------|
| 800 x 600 vs. 1024 x 768   | 1.9                  | 0.037       | Significant     |
| 800 x 600 vs. 1280 x 1024  | 3.4                  | 0.002       | Significant     |
| 1024 x 768 vs. 1280 x 1024 | 1.5                  | 0.054       | Not significant |
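
For equal group sizes, Tukey's test declares a pair of conditions different when their means differ by more than the "honestly significant difference", HSD = q * sqrt(MS_within / n). Here q is the studentized range critical value for your alpha, number of conditions, and within-group degrees of freedom. The sketch below takes q as an input from a table rather than computing it. Like the ANOVA sketch, it ignores the within-participants structure of the study. The helper name and the inputs in the example are hypothetical. Recent SciPy versions also provide `scipy.stats.tukey_hsd` for the full test.

```python
import math
from itertools import combinations


def tukey_hsd_pairs(means, ms_within, n_per_group, q_crit):
    """Compare every pair of condition means against Tukey's HSD.
    `means` maps condition name -> mean; q_crit comes from a table."""
    hsd = q_crit * math.sqrt(ms_within / n_per_group)
    results = []
    for (a, mean_a), (b, mean_b) in combinations(means.items(), 2):
        diff = abs(mean_a - mean_b)
        results.append((a, b, diff, diff > hsd))  # True means "significant"
    return hsd, results


hsd, pairs = tukey_hsd_pairs({"A": 2.0, "B": 5.0, "C": 5.5},
                             ms_within=1.0, n_per_group=4, q_crit=3.0)
for a, b, diff, significant in pairs:
    print(a, b, diff, "significant" if significant else "not significant")
```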

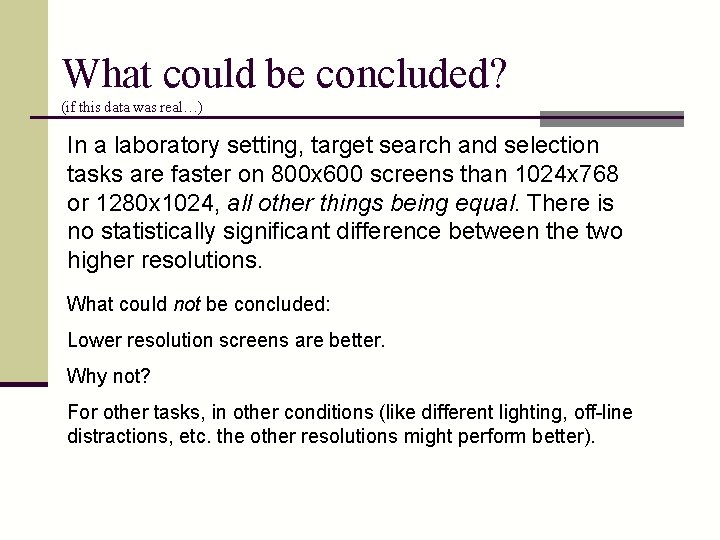

What could be concluded? (if these data were real…)
- In a laboratory setting, target search and selection tasks are faster on 800 x 600 screens than on 1024 x 768 or 1280 x 1024 screens, all other things being equal.
- There is no statistically significant difference between the two higher resolutions.

What could not be concluded:
- Lower-resolution screens are better.
- Why not? For other tasks, or in other conditions (like different lighting, off-line distractions, etc.), the other resolutions might perform better.

Another Example: Heather’s simple experiment
- Designing an interface for categorizing keywords in a transcript
- Wanted a baseline for comparison
- Experiment comparing:
  - Pen and paper, not real time
  - Pen and paper, real time
  - Simulated interface, real time

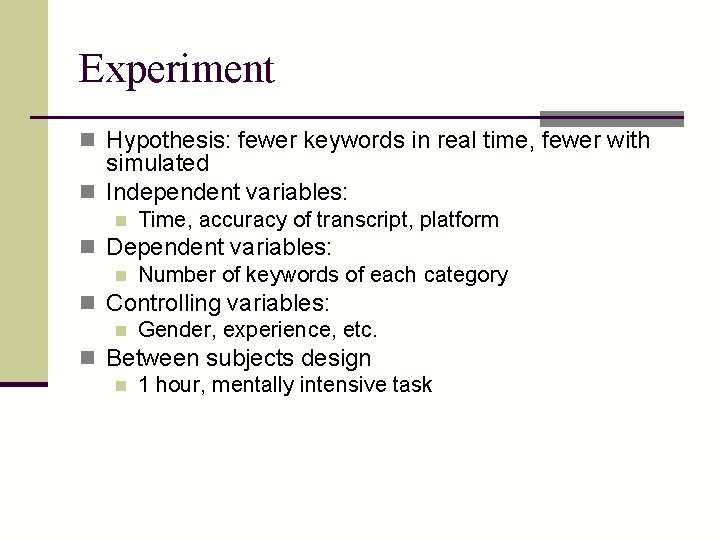

Experiment
- Hypothesis: fewer keywords will be tagged in real time, and fewer with the simulated interface
- Independent variables:
  - Time, accuracy of transcript, platform
- Dependent variables:
  - Number of keywords of each category
- Controlling variables:
  - Gender, experience, etc.
- Between-subjects design
- 1 hour, mentally intensive task

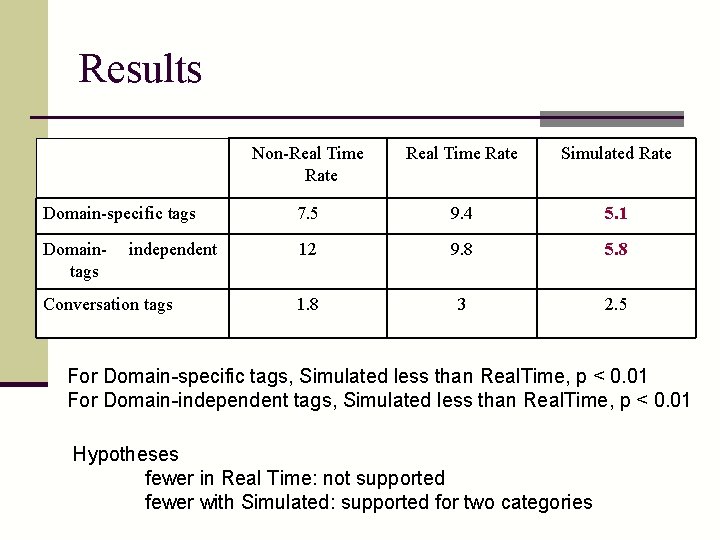

Results

| Tag category            | Non-real time (rate) | Real time (rate) | Simulated (rate) |
|-------------------------|----------------------|------------------|------------------|
| Domain-specific tags    | 7.5                  | 9.4              | 5.1              |
| Domain-independent tags | 12                   | 9.8              | 5.8              |
| Conversation tags       | 1.8                  | 3                | 2.5              |

- For domain-specific tags, Simulated less than Real Time, p < 0.01
- For domain-independent tags, Simulated less than Real Time, p < 0.01
- Hypotheses:
  - Fewer in Real Time: not supported
  - Fewer with Simulated: supported for two categories

Example: add video to IM voice chat?
- Compare voice chat with and without video
- Plan an experiment:
  - Compare message time, difficulty in communicating, or frequency…
- Consider:
  - Tasks
  - What data you want to gather
  - How you would gather it
  - What analysis you would do afterwards

Questions:
- What are the independent variables?
- What are the dependent variables?
- What could the hypothesis be?
- Between or within subjects?
- What was controlled?
- What are the tasks?
