Object Oriented Data Analysis

Object Orie’d Data Analysis, Last Time:
• Gene Cell Cycle Data
• Microarrays and HDLSS visualization
• DWD bias adjustment
• NCI 60 Data
Today: Detailed (math’cal) look at PCA

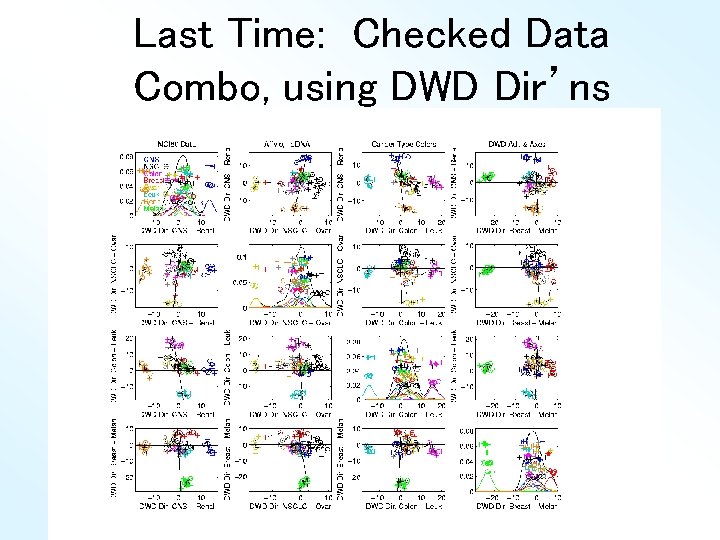

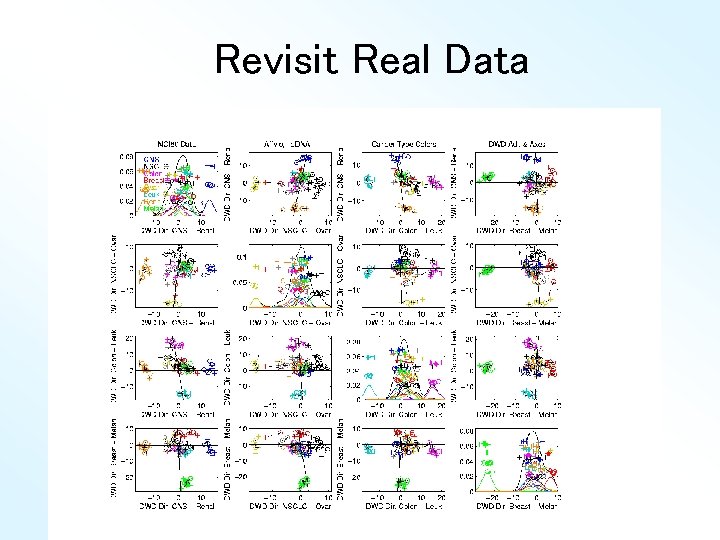

Last Time: Checked Data Combo, using DWD Dir’ns

DWD Views of NCI 60 Data
Interesting Question: Which clusters are really there?
Issues:
• DWD great at finding dir’ns of separation
• And will do so even if no real structure
• Is this happening here?
• Or: which clusters are important?
• What does “important” mean?

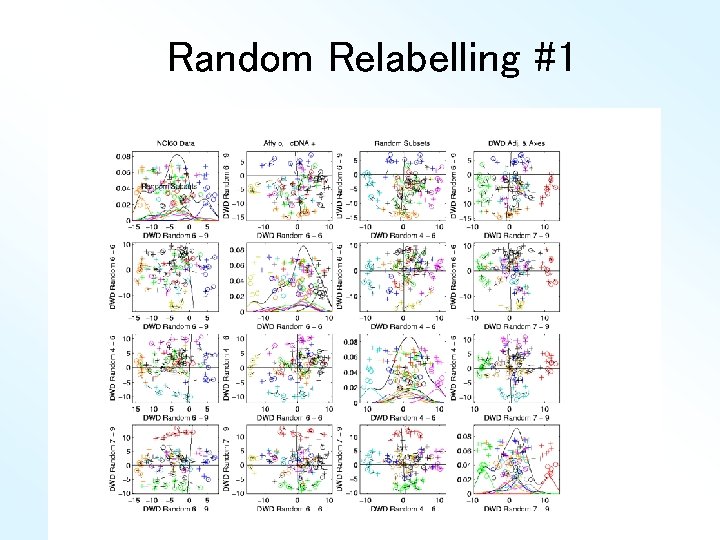

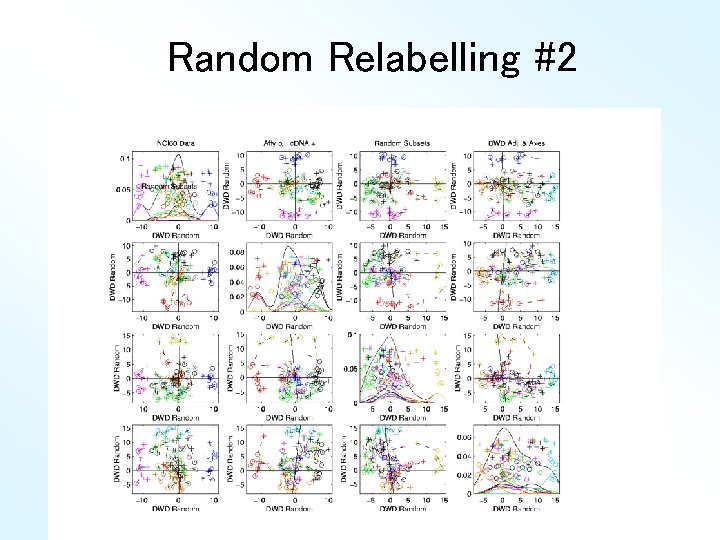

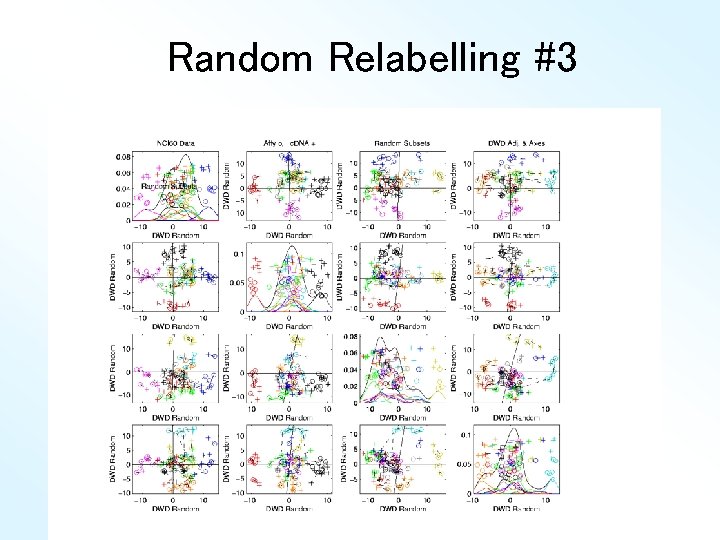

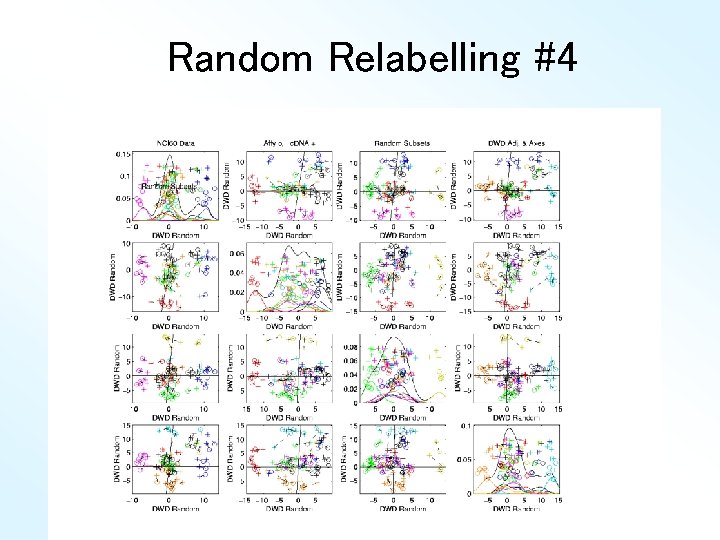

Real Clusters in NCI 60 Data
Simple Visual Approach:
• Randomly relabel data (Cancer Types)
• Recompute DWD dir’ns & visualization
• Get heuristic impression from this
Deeper Approach:
• Formal Hypothesis Testing (Done later)

Random Relabelling #1

Random Relabelling #2

Random Relabelling #3

Random Relabelling #4

Revisit Real Data

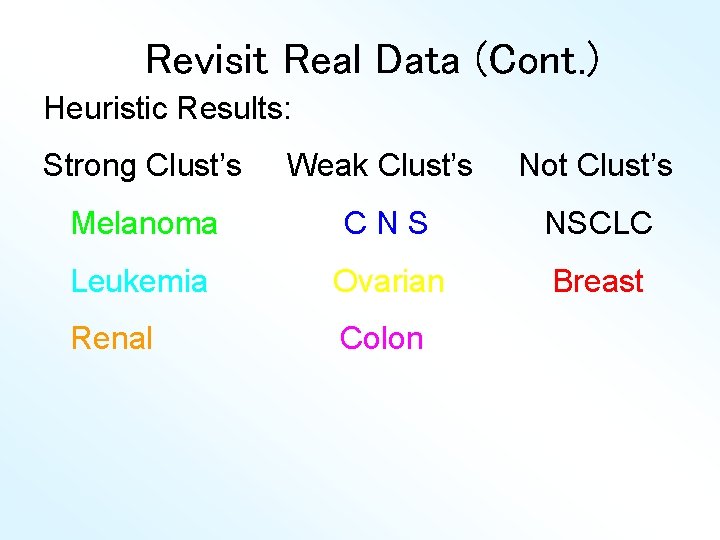

Revisit Real Data (Cont.)
Heuristic Results:
• Strong Clust’s: Melanoma, Leukemia, Renal, Colon
• Weak Clust’s: CNS, Ovarian
• Not Clust’s: NSCLC, Breast

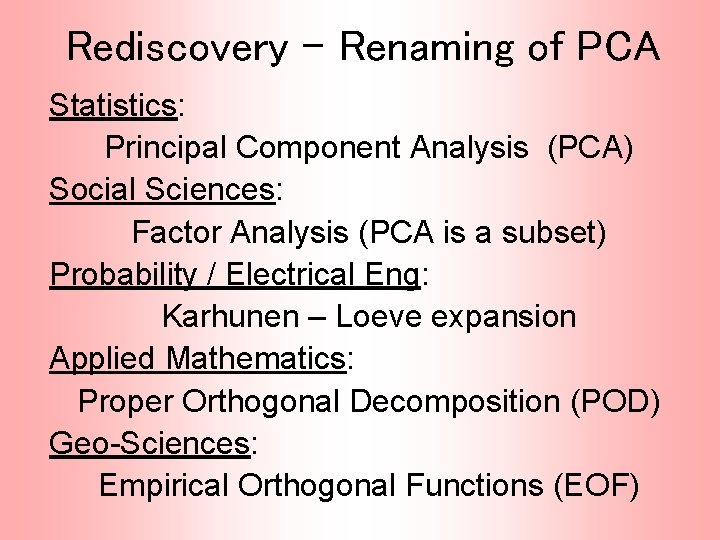

Rediscovery – Renaming of PCA
• Statistics: Principal Component Analysis (PCA)
• Social Sciences: Factor Analysis (PCA is a subset)
• Probability / Electrical Eng: Karhunen–Loève expansion
• Applied Mathematics: Proper Orthogonal Decomposition (POD)
• Geo-Sciences: Empirical Orthogonal Functions (EOF)

An Interesting Historical Note
The 1st (?) application of PCA to Functional Data Analysis:
Rao, C. R. (1958) Some statistical methods for comparison of growth curves, Biometrics, 14, 1–17.
1st paper with “Curves as Data” viewpoint

Detailed Look at PCA
Three important (and interesting) viewpoints:
1. Mathematics
2. Numerics
3. Statistics
1st: Review linear alg. and multivar. prob.

Review of Linear Algebra
Vector Space:
• set of “vectors”, $x$,
• and “scalars” (coefficients), $a$,
• “closed” under “linear combination” ($\sum_i a_i x_i$ in space)
• e.g. $\mathbb{R}^d$, “$d$ dim Euclid’n space”

Review of Linear Algebra (Cont.)
Subspace:
• subset that is again a vector space
• i.e. closed under linear combination
• e.g. lines through the origin
• e.g. planes through the origin
• e.g. subsp. “generated by” a set of vectors (all linear combos of them = containing hyperplane through origin)

Review of Linear Algebra (Cont.)
Basis of subspace: set of vectors that:
• span, i.e. everything is a lin. comb. of them
• are linearly indep’t, i.e. lin. comb. is unique
• e.g. “unit vector basis” of $\mathbb{R}^d$: $e_1 = (1, 0, \dots, 0)^t, \dots, e_d = (0, \dots, 0, 1)^t$

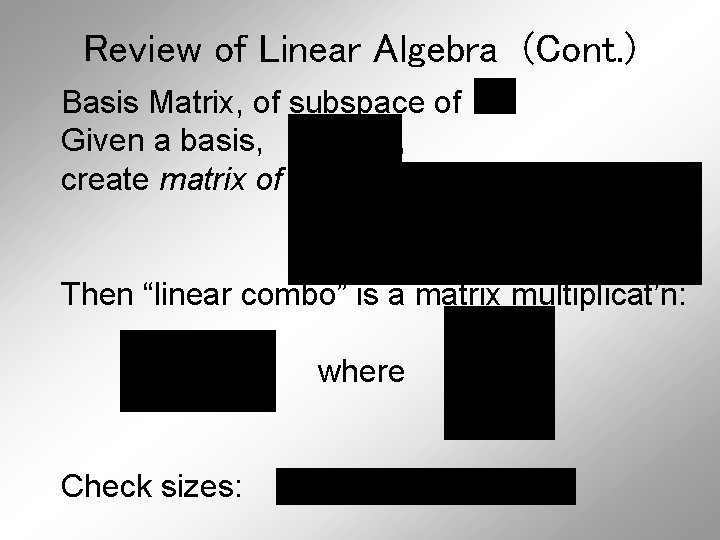

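Review of Linear Algebra (Cont.)
Basis Matrix, of subspace of $\mathbb{R}^d$:
Given a basis, $v_1, \dots, v_n$, create matrix of columns: $B = \left[v_1 \cdots v_n\right]_{d \times n}$
Then “linear combo” is a matrix multiplicat’n: $\sum_{j=1}^n a_j v_j = B a$, where $a = (a_1, \dots, a_n)^t$
Check sizes: $(d \times 1) = (d \times n)(n \times 1)$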
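A minimal numerical check of the basis-matrix identity $\sum_j a_j v_j = B a$, assuming NumPy is available; the basis vectors `v1`, `v2` and the coefficients `a` are hypothetical values chosen only for illustration.

```python
import numpy as np

# Hypothetical basis of a 2-dim subspace of R^3 (d = 3, n = 2)
v1 = np.array([1.0, 0.0, 1.0])
v2 = np.array([0.0, 1.0, 1.0])
B = np.column_stack([v1, v2])    # basis matrix: columns are the basis vectors, shape (3, 2)

a = np.array([2.0, -1.0])        # coefficient vector, shape (2,)

combo_sum = a[0] * v1 + a[1] * v2   # linear combination written out term by term
combo_mat = B @ a                   # the same combination as a matrix product

# Check sizes: (d x n)(n x 1) -> (d x 1)
print(B.shape, a.shape, combo_mat.shape)   # (3, 2) (2,) (3,)
print(np.allclose(combo_sum, combo_mat))   # True
```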

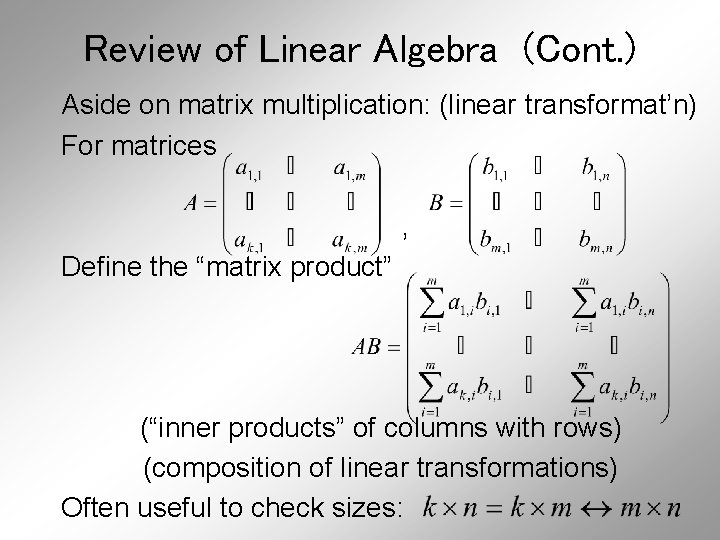

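Review of Linear Algebra (Cont.)
Aside on matrix multiplication (linear transformat’n):
For matrices $A_{m \times n}$, $B_{n \times k}$, define the “matrix product” $AB$ by $(AB)_{ij} = \sum_{l=1}^n a_{il} b_{lj}$
(“inner products” of rows of $A$ with columns of $B$)
(composition of linear transformations)
Often useful to check sizes: $(m \times n)(n \times k) = (m \times k)$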
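A small sketch, assuming NumPy and randomly generated matrices, that checks the size rule, reads one entry of $AB$ as a row-by-column inner product, and confirms that multiplying by $AB$ is the same as applying $B$ and then $A$.

```python
import numpy as np

rng = np.random.default_rng(0)
A = rng.normal(size=(2, 3))   # m x n = 2 x 3
B = rng.normal(size=(3, 4))   # n x k = 3 x 4
AB = A @ B                    # m x k = 2 x 4

# Entry (i, j) is the inner product of row i of A with column j of B
i, j = 1, 2
entry = A[i, :] @ B[:, j]

# Matrix product = composition of the linear maps x -> Bx -> A(Bx)
x = rng.normal(size=4)
composed = A @ (B @ x)

print(AB.shape)                       # (2, 4)
print(np.isclose(AB[i, j], entry))    # True
print(np.allclose(AB @ x, composed))  # True
```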

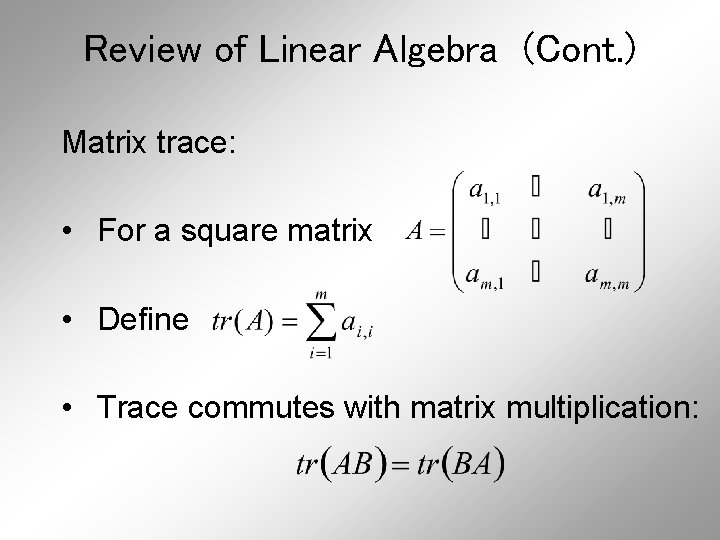

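Review of Linear Algebra (Cont.)
Matrix trace:
• For a square matrix $A_{m \times m}$
• Define $\mathrm{tr}(A) = \sum_{i=1}^m a_{ii}$
• Trace is unchanged when the factors of a product are swapped: $\mathrm{tr}(AB) = \mathrm{tr}(BA)$ for $A_{m \times n}$, $B_{n \times m}$, even though in general $AB \neq BA$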
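A quick sketch, assuming NumPy and random non-square matrices, showing that $AB$ and $BA$ can even differ in size while their traces agree.

```python
import numpy as np

rng = np.random.default_rng(1)
A = rng.normal(size=(3, 5))
B = rng.normal(size=(5, 3))

AB = A @ B   # 3 x 3
BA = B @ A   # 5 x 5: a different matrix, even a different size

# Trace = sum of diagonal entries; the two traces agree
tr_AB = np.trace(AB)
tr_BA = np.trace(BA)
print(AB.shape, BA.shape, np.isclose(tr_AB, tr_BA))   # (3, 3) (5, 5) True
```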

Review of Linear Algebra (Cont.)
Dimension of subspace (a notion of “size”):
• number of elements in a basis (unique)
• $\dim(V) = n$ for a subspace $V$ with basis $v_1, \dots, v_n$ (use basis above)
• e.g. dim of a line is 1
• e.g. dim of a plane is 2
• dimension is “degrees of freedom”
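One way to compute the dimension of the subspace generated by a set of vectors is the rank of the matrix with those vectors as columns. This sketch assumes NumPy's `matrix_rank` and uses hypothetical vectors, one of which is a linear combination of the other two.

```python
import numpy as np

v1 = np.array([1.0, 0.0, 1.0])
v2 = np.array([0.0, 1.0, 1.0])
v3 = v1 + 2.0 * v2        # a linear combination of v1, v2: adds no new direction

G = np.column_stack([v1, v2, v3])     # generating set of 3 vectors in R^3
dim_span = np.linalg.matrix_rank(G)   # dimension of the subspace they generate

print(dim_span)   # 2: the span is a plane through the origin, not all of R^3
```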

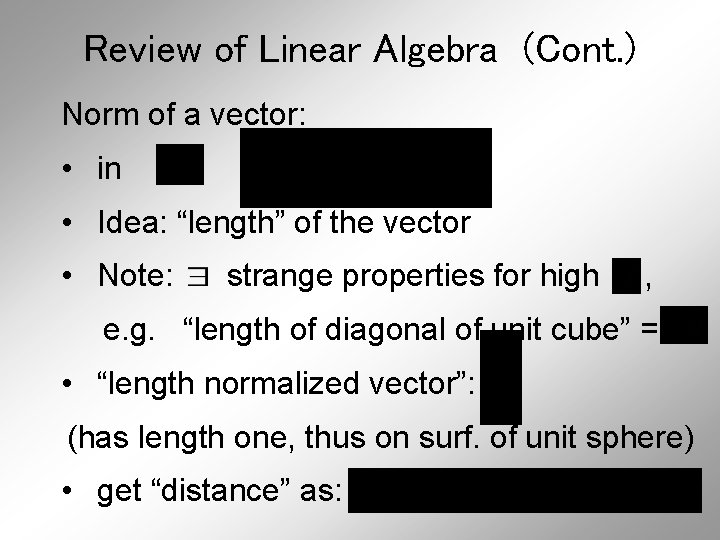

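Review of Linear Algebra (Cont.)
Norm of a vector:
• in $\mathbb{R}^d$, $\|x\| = \left(\sum_{j=1}^d x_j^2\right)^{1/2} = \left(x^t x\right)^{1/2}$
• Idea: “length” of the vector
• Note: strange properties for high $d$, e.g. “length of diagonal of unit cube” $= \sqrt{d}$
• “length normalized vector”: $x / \|x\|$ (has length one, thus on surf. of unit sphere)
• get “distance” as: $d(x, y) = \|x - y\|$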
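A sketch assuming NumPy: the diagonal of the unit cube has length $\sqrt{d}$, which grows without bound as $d$ increases, and the normalization and distance formulas above are one line each. The vectors `x` and `y` are hypothetical.

```python
import numpy as np

# Length of the diagonal of the unit cube [0, 1]^d grows like sqrt(d)
for d in [1, 2, 10, 100, 10_000]:
    diag = np.ones(d)
    print(d, np.linalg.norm(diag))

x = np.array([3.0, 4.0])
y = np.array([0.0, 1.0])
x_unit = x / np.linalg.norm(x)   # length-normalized: lies on the unit sphere
dist = np.linalg.norm(x - y)     # Euclidean distance d(x, y) = ||x - y||
print(np.linalg.norm(x), x_unit, dist)
```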

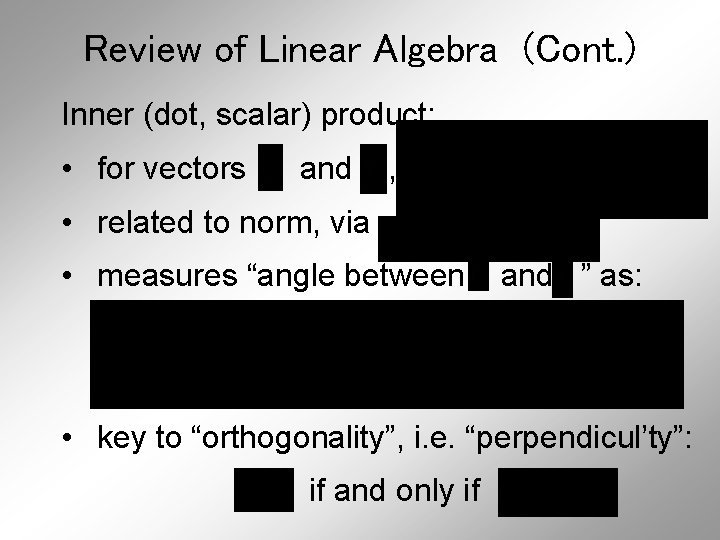

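Review of Linear Algebra (Cont.)
Inner (dot, scalar) product:
• for vectors $x$ and $y$, $\langle x, y \rangle = \sum_{j=1}^d x_j y_j = x^t y$
• related to norm, via $\|x\| = \langle x, x \rangle^{1/2}$
• measures “angle between $x$ and $y$” as: $\operatorname{angle}(x, y) = \cos^{-1}\left(\frac{\langle x, y \rangle}{\|x\|\,\|y\|}\right)$
• key to “orthogonality”, i.e. “perpendicul’ty”: $x \perp y$ if and only if $\langle x, y \rangle = 0$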
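A minimal sketch of the angle formula, assuming NumPy; `angle_deg` is a hypothetical helper, and the `np.clip` call only guards against rounding pushing the cosine slightly outside $[-1, 1]$.

```python
import numpy as np

def angle_deg(x, y):
    """Angle between x and y in degrees, via arccos(<x, y> / (||x|| ||y||))."""
    cos_theta = (x @ y) / (np.linalg.norm(x) * np.linalg.norm(y))
    # clip guards against rounding slightly outside [-1, 1]
    return np.degrees(np.arccos(np.clip(cos_theta, -1.0, 1.0)))

x = np.array([1.0, 0.0])
y = np.array([1.0, 1.0])
z = np.array([0.0, 3.0])

print(x @ y)             # inner product <x, y> = 1.0
print(angle_deg(x, y))   # approximately 45 degrees
print(x @ z == 0.0)      # True: x and z are orthogonal
print(np.isclose(np.sqrt(y @ y), np.linalg.norm(y)))   # norm from the inner product
```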

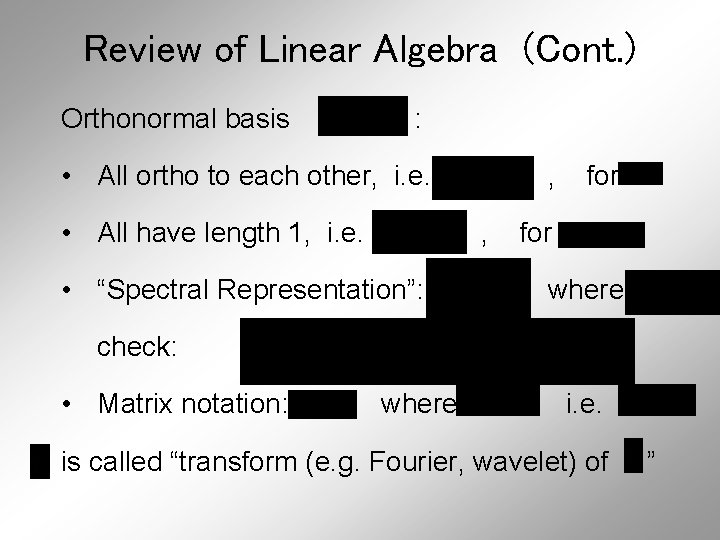

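Review of Linear Algebra (Cont.)
Orthonormal basis $v_1, \dots, v_n$:
• All ortho to each other, i.e. $\langle v_j, v_{j'} \rangle = 0$ for $j \neq j'$
• All have length 1, i.e. $\langle v_j, v_j \rangle = 1$, $j = 1, \dots, n$
• “Spectral Representation”: for $x$ in the subspace they generate, $x = \sum_{j=1}^n a_j v_j$, where $a_j = \langle x, v_j \rangle$; check: $\langle x, v_{j'} \rangle = \sum_{j=1}^n a_j \langle v_j, v_{j'} \rangle = a_{j'}$
• Matrix notation: $x = B a$, where $a = B^t x$, i.e. $a$ is called “transform (e.g. Fourier, wavelet) of $x$”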
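A sketch of the spectral representation, assuming NumPy; the orthonormal basis is taken (as an illustrative choice) from the QR decomposition of a random matrix, so here $n = d$.

```python
import numpy as np

rng = np.random.default_rng(2)
d = 4
# An orthonormal basis of R^d: the Q factor of a QR decomposition of a random matrix
B, _ = np.linalg.qr(rng.normal(size=(d, d)))

x = rng.normal(size=d)
a = B.T @ x      # transform: a_j = <x, v_j>
x_rec = B @ a    # spectral representation: x = sum_j a_j v_j

print(np.allclose(B.T @ B, np.eye(d)))                   # True: orthonormal columns
print(np.allclose(a, [x @ B[:, j] for j in range(d)]))   # True
print(np.allclose(x_rec, x))                             # True
```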

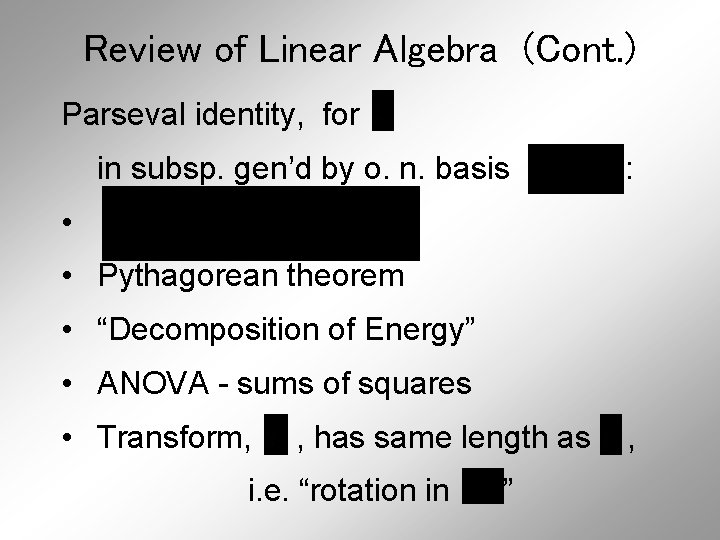

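Review of Linear Algebra (Cont.)
Parseval identity, for $x$ in subsp. gen’d by o.n. basis $v_1, \dots, v_n$:
• $\|x\|^2 = \sum_{j=1}^n \langle x, v_j \rangle^2 = \sum_{j=1}^n a_j^2 = \|a\|^2$
• Pythagorean theorem
• “Decomposition of Energy”
• ANOVA: sums of squares
• Transform, $a$, has same length as $x$, i.e. “rotation in $\mathbb{R}^d$”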
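A sketch of Parseval's identity for a vector in a 2-dim subspace of $\mathbb{R}^5$, assuming NumPy; the basis (from a reduced QR decomposition) and the coefficients $(1.5, -2)$ are hypothetical. Here $\|x\|^2 = 1.5^2 + 2^2 = 6.25$.

```python
import numpy as np

rng = np.random.default_rng(3)
# Orthonormal basis v_1, v_2 of a 2-dim subspace V of R^5 (reduced QR gives a 5 x 2 Q)
B, _ = np.linalg.qr(rng.normal(size=(5, 2)))

x = B @ np.array([1.5, -2.0])   # a vector lying in V
a = B.T @ x                     # its coefficients (transform)

energy_x = np.sum(x ** 2)       # ||x||^2
energy_a = np.sum(a ** 2)       # sum of squared coefficients
print(np.isclose(energy_x, energy_a))   # True: Parseval identity
```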

Review of Linear Algebra (Cont.)
Next time: add part about Gram–Schmidt ortho-normalization

Review of Linear Algebra (Cont.)
Projection of a vector $x$ onto a subspace $V$:
• Idea: member of $V$ that is closest to $x$ (i.e. “approx’n”)
• Find $P_V x \in V$ that solves: $\min_{v \in V} \|x - v\|$ (“least squa’s”)
• For inner product (Hilbert) space: $P_V x$ exists and is unique
• General solution in $\mathbb{R}^d$: for basis matrix $B$ of $V$, $P_V x = B\left(B^t B\right)^{-1} B^t x$
• So “proj’n operator” is “matrix mult’n”: $P_V = B\left(B^t B\right)^{-1} B^t$ (thus projection is another linear operation) (note same operation underlies least squares)
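A sketch of the general projection formula, assuming NumPy and a random (non-orthonormal) basis matrix. It uses `np.linalg.solve` rather than an explicit inverse, a common numerical choice, and checks the result against NumPy's least-squares solver.

```python
import numpy as np

rng = np.random.default_rng(4)
d, n = 5, 2
B = rng.normal(size=(d, n))   # basis matrix of V (columns need not be orthonormal)
x = rng.normal(size=d)

# P_V = B (B^t B)^{-1} B^t, using solve() instead of forming the inverse explicitly
P = B @ np.linalg.solve(B.T @ B, B.T)
proj = P @ x

# Same answer from least squares: coefficients c minimizing ||x - B c||
c_ls, *_ = np.linalg.lstsq(B, x, rcond=None)

print(np.allclose(proj, B @ c_ls))          # True
print(np.allclose(P @ P, P))                # True: projecting twice changes nothing
print(np.allclose(B.T @ (x - proj), 0.0))   # True: residual is orthogonal to V
```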

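Review of Linear Algebra (Cont.)
Projection using orthonormal basis $v_1, \dots, v_n$:
• Basis matrix is “orthonormal”: $B^t B = I_{n \times n}$
• So $P_V x = B B^t x$ = Recon(Coeffs of $x$ “in $V$ dir’n”)
• For “orthogonal complement”, $V^\perp$: $x = P_V x + P_{V^\perp} x$ and $\|x\|^2 = \|P_V x\|^2 + \|P_{V^\perp} x\|^2$
• Parseval inequality: $\|P_V x\|^2 = \sum_{j=1}^n \langle x, v_j \rangle^2 \le \|x\|^2$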
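A sketch assuming NumPy: orthonormalizing a basis (via QR) reduces the projection to $B B^t x$, and the Pythagorean split and Parseval inequality can be checked directly. The data are random and purely illustrative.

```python
import numpy as np

rng = np.random.default_rng(5)
B = rng.normal(size=(5, 2))   # a (non-orthonormal) basis of V
Q, _ = np.linalg.qr(B)        # orthonormal basis of the same V, Q^t Q = I
x = rng.normal(size=5)

proj = Q @ (Q.T @ x)          # P_V x = Q Q^t x: reconstruct from the coefficients
perp = x - proj               # component in the orthogonal complement

# Agrees with the general formula B (B^t B)^{-1} B^t x
print(np.allclose(proj, B @ np.linalg.solve(B.T @ B, B.T @ x)))   # True
# Pythagoras: ||x||^2 = ||P_V x||^2 + ||P_{V-perp} x||^2
print(np.isclose(x @ x, proj @ proj + perp @ perp))               # True
# Parseval inequality: sum of squared coefficients <= ||x||^2
print(np.sum((Q.T @ x) ** 2) <= x @ x)                            # True
```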

Review of Linear Algebra (Cont.)
(Real) Unitary Matrices: $U_{d \times d}$ with $U^t U = I_{d \times d}$
• Orthonormal basis matrix (so all of above applies)
• Follows that $U U^t = I_{d \times d}$ (since $U$ has full rank, so $U^{-1}$ exists …)
• Lin. trans. (mult. by $U$) is like “rotation” of $\mathbb{R}^d$
• But also includes “mirror images”
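Two $2 \times 2$ examples, assuming NumPy: a rotation (determinant $+1$) and a mirror image (determinant $-1$) are both unitary and both preserve length. The angle $\pi/6$ and the vector `x` are arbitrary choices.

```python
import numpy as np

theta = np.pi / 6
rotation = np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta),  np.cos(theta)]])
mirror = np.array([[1.0, 0.0],
                   [0.0, -1.0]])   # reflection across the horizontal axis

x = np.array([2.0, 1.0])
for name, U in [("rotation", rotation), ("mirror", mirror)]:
    print(name,
          np.allclose(U.T @ U, np.eye(2)),                         # U^t U = I
          np.allclose(U @ U.T, np.eye(2)),                         # U U^t = I
          np.isclose(np.linalg.norm(U @ x), np.linalg.norm(x)),    # lengths preserved
          np.linalg.det(U))                                        # +1 rotation, -1 mirror
```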

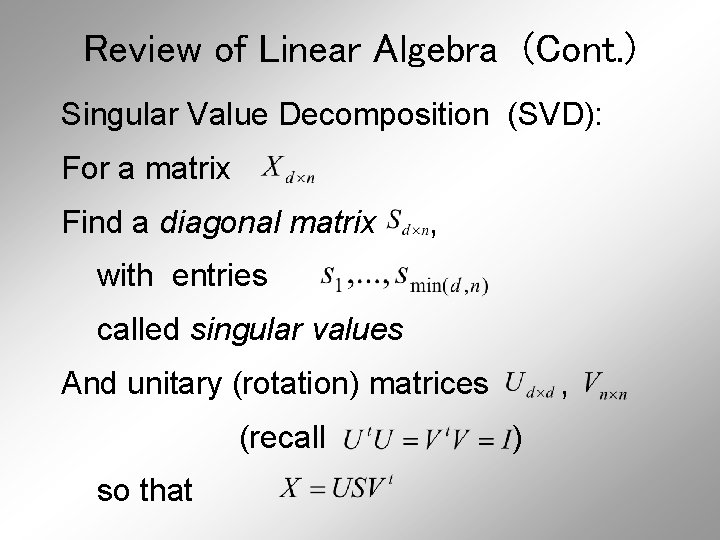

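Review of Linear Algebra (Cont.)
Singular Value Decomposition (SVD):
For a matrix $X_{d \times n}$
Find a diagonal matrix $S_{d \times n}$, with entries $s_1, \dots, s_{\min(d,n)}$ called singular values
And unitary (rotation) matrices $U_{d \times d}$, $V_{n \times n}$ (recall $U^t U = I$, $V^t V = I$)
so that $X = U S V^t$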
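A sketch of the full SVD with NumPy, assuming a random $4 \times 3$ matrix. Note that `np.linalg.svd` returns $V^t$ (not $V$) and the singular values as a 1-d array, so $S$ has to be assembled by hand.

```python
import numpy as np

rng = np.random.default_rng(6)
d, n = 4, 3
X = rng.normal(size=(d, n))

# NumPy returns U (d x d), the singular values as a 1-d array, and V^t (n x n)
U, s, Vt = np.linalg.svd(X)        # full_matrices=True by default
S = np.zeros((d, n))
S[:len(s), :len(s)] = np.diag(s)   # embed the singular values in a d x n "diagonal" matrix

print(np.allclose(U @ S @ Vt, X))                                           # True: X = U S V^t
print(np.allclose(U.T @ U, np.eye(d)), np.allclose(Vt @ Vt.T, np.eye(n)))   # True True
print(s)                                                                    # nonnegative, decreasing
```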

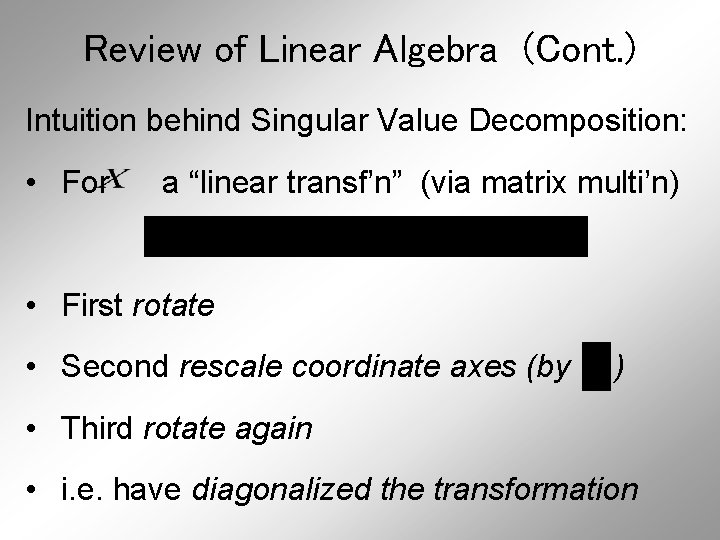

Review of Linear Algebra (Cont.)
Intuition behind Singular Value Decomposition:
• For $X$ as a “linear transf’n” (via matrix multi’n): $X v = U\left(S\left(V^t v\right)\right)$
• First rotate (by $V^t$)
• Second rescale coordinate axes (by the $s_j$)
• Third rotate again (by $U$)
• i.e. have diagonalized the transformation
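A sketch of the rotate / rescale / rotate reading, assuming NumPy and a square random matrix (so the rescaling step is simply elementwise multiplication by the singular values).

```python
import numpy as np

rng = np.random.default_rng(7)
d = n = 3
X = rng.normal(size=(d, n))
U, s, Vt = np.linalg.svd(X)

v = rng.normal(size=n)
step1 = Vt @ v       # 1st: rotate (length unchanged)
step2 = s * step1    # 2nd: rescale coordinate axes by the singular values
step3 = U @ step2    # 3rd: rotate again

print(np.isclose(np.linalg.norm(step1), np.linalg.norm(v)))   # True
print(np.allclose(step3, X @ v))                              # True
```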

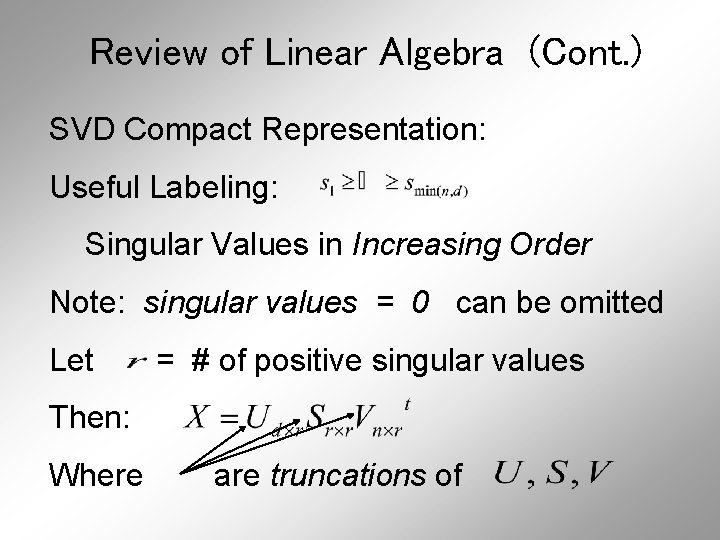

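Review of Linear Algebra (Cont.)
SVD Compact Representation:
Useful Labeling: Singular Values in Decreasing Order, $s_1 \ge s_2 \ge \cdots \ge s_{\min(d,n)} \ge 0$
Note: singular values $= 0$ can be omitted
Let $r$ = # of positive singular values
Then: $X = U_{d \times r} S_{r \times r} V_{n \times r}^t$
Where $U_{d \times r}$, $S_{r \times r}$, $V_{n \times r}$ are truncations of $U$, $S$, $V$ (keep the first $r$ columns of $U$ and $V$, and the upper left $r \times r$ block of $S$)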
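A sketch of the compact SVD, assuming NumPy and a hypothetical matrix built to have rank 2. In floating point the "zero" singular values come out as tiny numbers, so counting the positive ones needs a tolerance; the relative threshold `1e-10 * s[0]` is an assumption, not a standard.

```python
import numpy as np

rng = np.random.default_rng(8)
d, n, true_rank = 5, 4, 2
X = rng.normal(size=(d, true_rank)) @ rng.normal(size=(true_rank, n))   # rank-2 matrix

U, s, Vt = np.linalg.svd(X)   # s is returned in decreasing order
# Count the "positive" singular values; this relative tolerance is an assumption
tol = 1e-10 * s[0]
r = int(np.sum(s > tol))

U_r = U[:, :r]          # d x r
S_r = np.diag(s[:r])    # r x r
V_r = Vt[:r, :].T       # n x r

print(r)                                   # 2
print(np.allclose(U_r @ S_r @ V_r.T, X))   # True: zero singular values dropped
```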

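Review of Linear Algebra (Cont.)
Eigenvalue Decomposition:
For a (symmetric) square matrix $X_{d \times d}$
Find a diagonal matrix $D = \mathrm{diag}(\lambda_1, \dots, \lambda_d)$
And an orthonormal matrix $B_{d \times d}$ (i.e. $B^t B = B B^t = I_{d \times d}$)
So that: $X B = B D$, i.e. $X = B D B^t$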

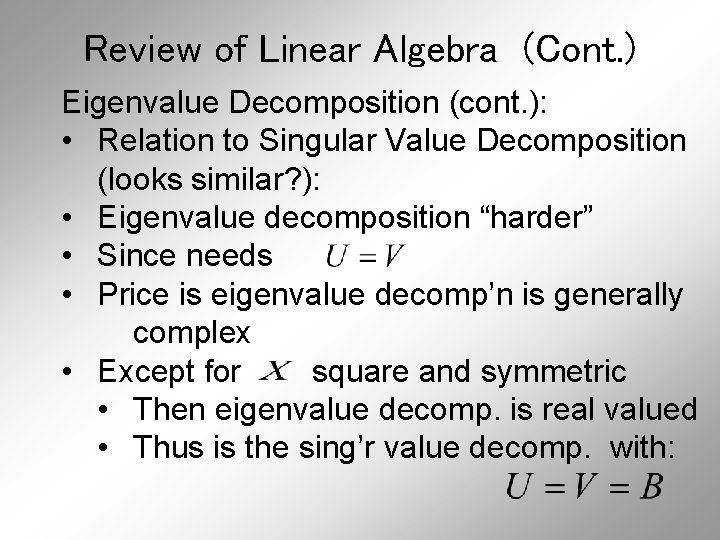

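Review of Linear Algebra (Cont.)
Eigenvalue Decomposition (cont.):
• Relation to Singular Value Decomposition (looks similar?): $X = B D B^t$ vs. $X = U S V^t$
• Eigenvalue decomposition “harder”
• Since needs $U = V$
• Price is eigenvalue decomp’n is generally complex
• Except for $X$ square and symmetric
• Then eigenvalue decomp. is real valued
• Thus, when also all $\lambda_j \ge 0$ (nonnegative definite $X$), it is the sing’r value decomp. with $U = V = B$ and $S = D$; otherwise the singular values are the $|\lambda_j|$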
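A sketch assuming NumPy's `eigh` (for symmetric matrices; it returns eigenvalues in increasing order, so they are reversed here). It checks $X = B D B^t$, compares with the SVD for a nonnegative definite matrix, and shows two caveats: a symmetric indefinite matrix, whose singular values are the $|\lambda_j|$, and a non-symmetric rotation, whose eigenvalues are complex.

```python
import numpy as np

rng = np.random.default_rng(9)
Y = rng.normal(size=(3, 5))
A = Y @ Y.T                     # symmetric, nonnegative definite 3 x 3

lam, B = np.linalg.eigh(A)      # eigh: for symmetric matrices; eigenvalues ascending
order = np.argsort(lam)[::-1]   # relabel in decreasing order
lam, B = lam[order], B[:, order]
D = np.diag(lam)

print(np.allclose(A, B @ D @ B.T))       # True: A = B D B^t
print(np.allclose(B.T @ B, np.eye(3)))   # True: B orthonormal
# For symmetric nonnegative definite A the singular values are the eigenvalues
print(np.allclose(np.linalg.svd(A, compute_uv=False), lam))   # True

# Symmetric but indefinite: singular values are |eigenvalues|
M = np.array([[0.0, 1.0], [1.0, 0.0]])   # eigenvalues +1 and -1
print(np.linalg.svd(M, compute_uv=False), np.linalg.eigvalsh(M))

# Non-symmetric: eigenvalues can be complex (90-degree rotation has eigenvalues +i and -i)
R = np.array([[0.0, -1.0], [1.0, 0.0]])
print(np.linalg.eigvals(R))
```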

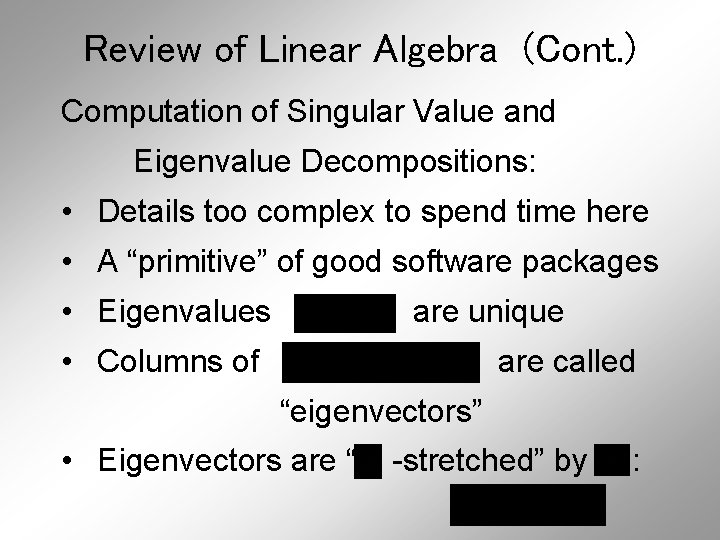

Review of Linear Algebra (Cont.)
Computation of Singular Value and Eigenvalue Decompositions:
• Details too complex to spend time here
• A “primitive” of good software packages
• Eigenvalues $\lambda_1, \dots, \lambda_d$ are unique
• Columns of $B = \left[b_1 \cdots b_d\right]$ are called “eigenvectors”
• Eigenvectors are “$\lambda$-stretched” by $X$: $X b_j = \lambda_j b_j$
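A short check, assuming NumPy and a random symmetric matrix, that each column of $B$ is stretched by its eigenvalue; it also shows that $-b_j$ works equally well, so eigenvectors are only determined up to sign.

```python
import numpy as np

rng = np.random.default_rng(10)
Y = rng.normal(size=(4, 6))
A = Y @ Y.T                  # symmetric 4 x 4

lam, B = np.linalg.eigh(A)   # columns of B are eigenvectors
for j in range(4):
    b = B[:, j]
    print(np.allclose(A @ b, lam[j] * b),     # A b_j = lambda_j b_j
          np.allclose(A @ -b, lam[j] * -b))   # -b_j works too: unique only up to sign
```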

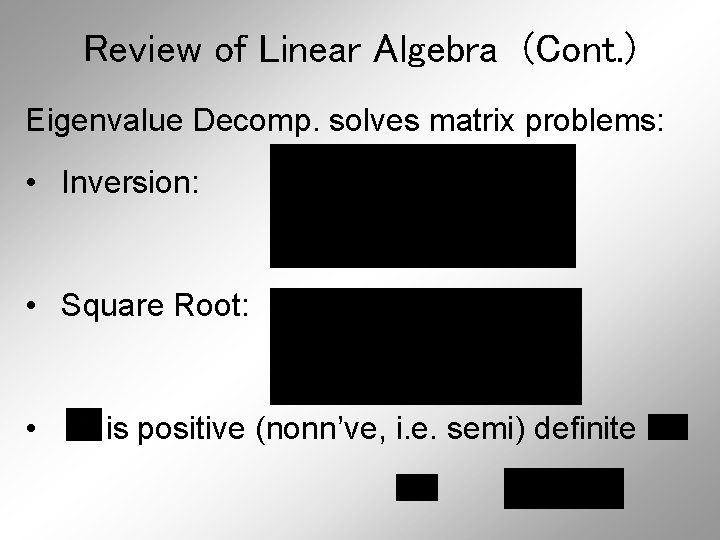

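Review of Linear Algebra (Cont.)
Eigenvalue Decomp. solves matrix problems:
• Inversion: $X^{-1} = B D^{-1} B^t = B\,\mathrm{diag}\left(\lambda_1^{-1}, \dots, \lambda_d^{-1}\right) B^t$ (requires all $\lambda_j \neq 0$)
• Square Root: $X^{1/2} = B D^{1/2} B^t = B\,\mathrm{diag}\left(\lambda_1^{1/2}, \dots, \lambda_d^{1/2}\right) B^t$ (requires all $\lambda_j \ge 0$)
• $X$ is positive (nonn’ve, i.e. semi) definite $\iff$ all $\lambda_j > 0$ ($\ge 0$)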
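A sketch assuming NumPy and a random positive definite matrix (adding the identity keeps all eigenvalues at least 1), computing the inverse and the symmetric square root from the eigenvalue decomposition.

```python
import numpy as np

rng = np.random.default_rng(11)
Y = rng.normal(size=(3, 6))
A = Y @ Y.T + np.eye(3)      # symmetric positive definite

lam, B = np.linalg.eigh(A)
print(np.all(lam > 0))       # True: positive definite <=> all eigenvalues > 0

A_inv = B @ np.diag(1.0 / lam) @ B.T        # X^{-1} = B D^{-1} B^t
A_half = B @ np.diag(np.sqrt(lam)) @ B.T    # X^{1/2} = B D^{1/2} B^t

print(np.allclose(A_inv, np.linalg.inv(A)))   # True
print(np.allclose(A_half @ A_half, A))        # True
```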

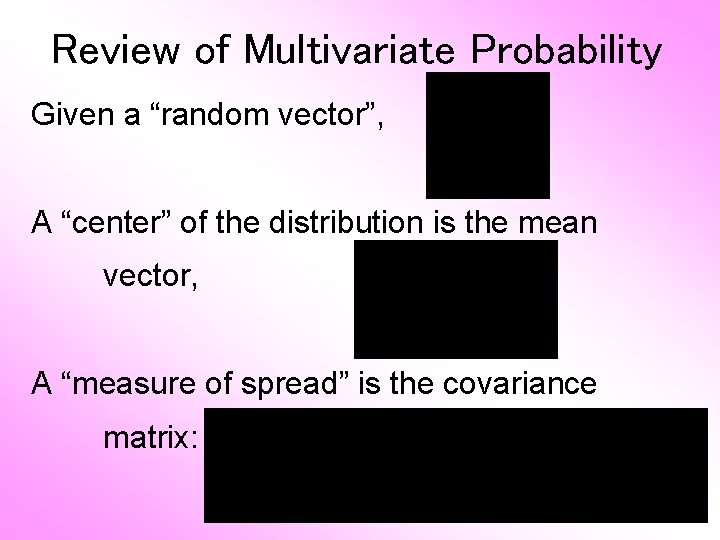

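Review of Multivariate Probability
Given a “random vector”, $X \in \mathbb{R}^d$,
A “center” of the distribution is the mean vector, $\mu = E X$ (entrywise expectations)
A “measure of spread” is the covariance matrix: $\Sigma = \mathrm{cov}(X) = E\left[(X - \mu)(X - \mu)^t\right]$, the $d \times d$ matrix of variances (diagonal) and covariances (off-diagonal) of the entries of $X$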

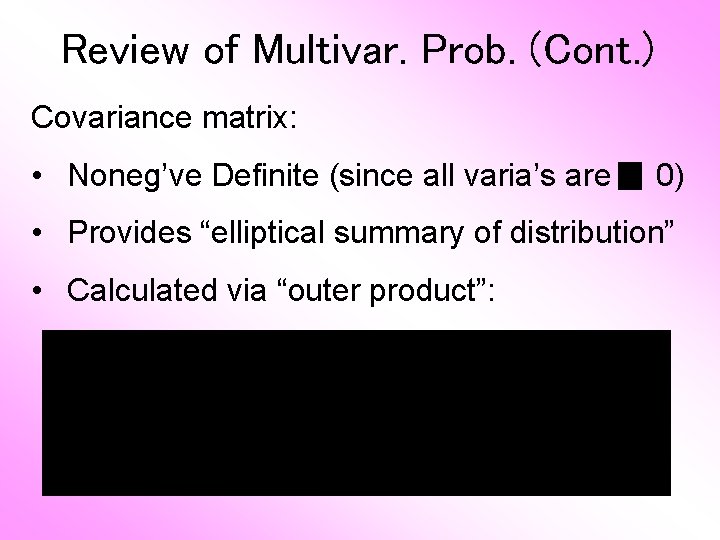

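Review of Multivar. Prob. (Cont.)
Covariance matrix:
• Nonneg’ve Definite (since all varia’s are $\ge 0$: $u^t \Sigma u = \mathrm{var}\left(u^t X\right) \ge 0$ for every $u$)
• Provides “elliptical summary of distribution”
• Calculated via “outer product”: $\Sigma = E\left(X X^t\right) - \mu \mu^t$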
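To see the two covariance formulas agree without simulation error, this sketch uses a hypothetical discrete random vector in $\mathbb{R}^2$ taking four values with given probabilities (assumes NumPy), so all expectations are exact finite sums. Here $\mu = (1.2, 1.7)^t$.

```python
import numpy as np

# Hypothetical discrete random vector in R^2: takes value pts[:, i] with probability p[i]
pts = np.array([[0.0, 1.0, 2.0, 1.0],
                [0.0, 2.0, 1.0, 3.0]])
p = np.array([0.1, 0.4, 0.3, 0.2])

mu = pts @ p                                          # mean vector E X
centered = pts - mu[:, None]
Sigma = (centered * p) @ centered.T                   # E[(X - mu)(X - mu)^t]
Sigma_outer = (pts * p) @ pts.T - np.outer(mu, mu)    # E(X X^t) - mu mu^t

print(mu)
print(np.allclose(Sigma, Sigma_outer))   # True
print(np.linalg.eigvalsh(Sigma))         # nonnegative definite: no negative eigenvalues
```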

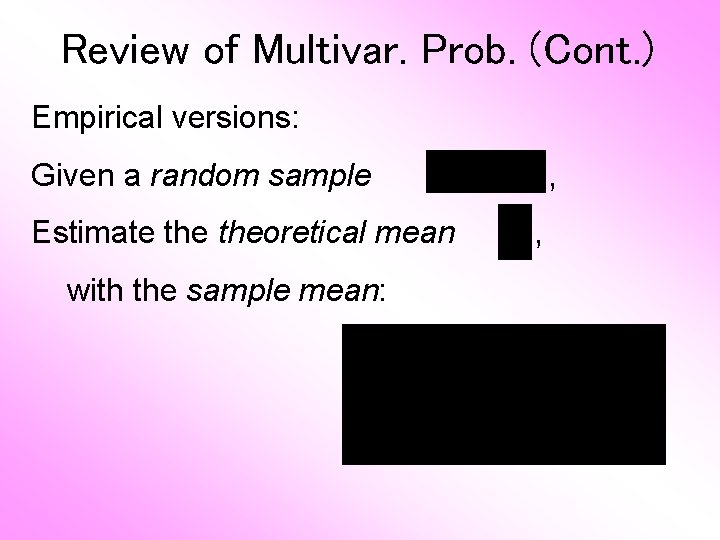

Review of Multivar. Prob. (Cont.)
Empirical versions:
Given a random sample $X_1, \dots, X_n$,
Estimate theoretical mean $\mu$ with the sample mean: $\bar X = \frac{1}{n}\sum_{i=1}^n X_i$

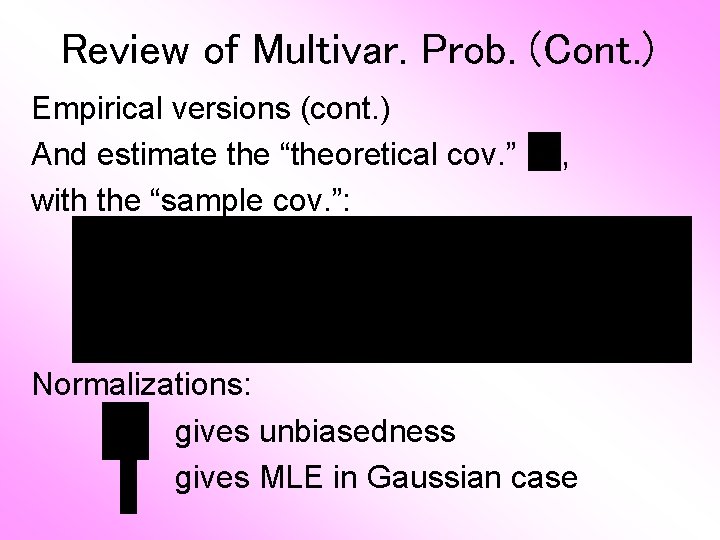

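Review of Multivar. Prob. (Cont.)
Empirical versions (cont.):
And estimate the “theoretical cov.” $\Sigma$ with the “sample cov.”: $\hat\Sigma = \frac{1}{n-1}\sum_{i=1}^n \left(X_i - \bar X\right)\left(X_i - \bar X\right)^t$
Normalizations:
• $\frac{1}{n-1}$ gives unbiasedness
• $\frac{1}{n}$ gives MLE in Gaussian case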
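A sketch assuming NumPy, with the data points stored as the columns of a $d \times n$ matrix as in these notes. NumPy's `np.cov` uses the same layout by default (rows are variables, columns are observations) and divides by $n - 1$ unless `bias=True`.

```python
import numpy as np

rng = np.random.default_rng(12)
d, n = 3, 10
X = rng.normal(size=(d, n))   # data matrix: columns X_1, ..., X_n are the data points

xbar = X.mean(axis=1)         # sample mean (average of the columns)
resid = X - xbar[:, None]

# Sample covariance as a sum of outer products, with both normalizations
S_sum = sum(np.outer(resid[:, i], resid[:, i]) for i in range(n))
Sigma_unbiased = S_sum / (n - 1)
Sigma_mle = S_sum / n

print(np.allclose(Sigma_unbiased, np.cov(X)))         # True: default divides by n - 1
print(np.allclose(Sigma_mle, np.cov(X, bias=True)))   # True: bias=True divides by n
```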

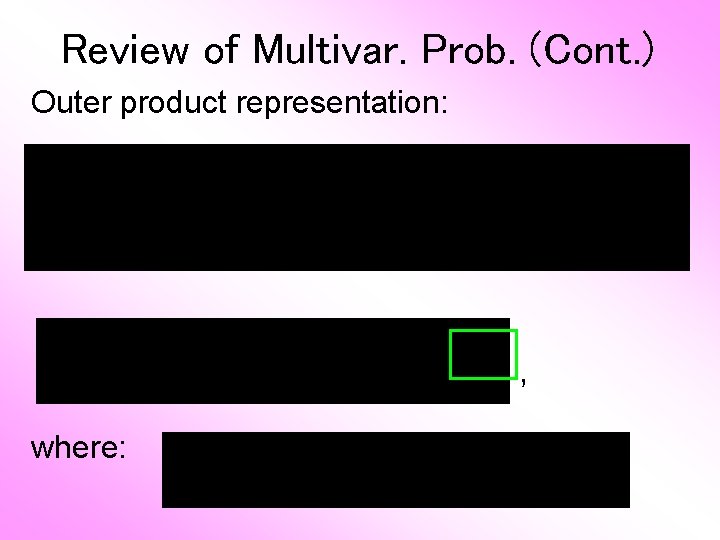

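Review of Multivar. Prob. (Cont.)
Outer product representation: $\hat\Sigma = \frac{1}{n-1}\tilde X \tilde X^t$,
where: $\tilde X = \left[X_1 - \bar X \; \cdots \; X_n - \bar X\right]_{d \times n}$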
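The same sample covariance as a single matrix product, as a sketch assuming NumPy and random data; `keepdims=True` keeps the mean as a $d \times 1$ column so it broadcasts across the $n$ columns.

```python
import numpy as np

rng = np.random.default_rng(13)
d, n = 4, 8
X = rng.normal(size=(d, n))                   # columns X_1, ..., X_n
X_tilde = X - X.mean(axis=1, keepdims=True)   # recentered data matrix, d x n

Sigma_hat = X_tilde @ X_tilde.T / (n - 1)     # (d x n)(n x d) -> d x d
print(Sigma_hat.shape)                        # (4, 4)
print(np.allclose(Sigma_hat, np.cov(X)))      # True
```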

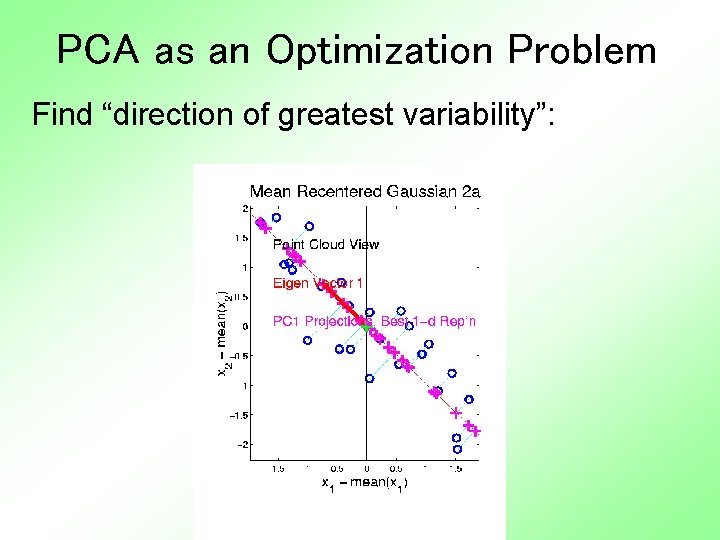

PCA as an Optimization Problem Find “direction of greatest variability”:

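PCA as Optimization (Cont.)
Find “direction of greatest variability”:
Given a “direction vector”, $u \in \mathbb{R}^d$ (i.e. $\|u\| = 1$),
Projection of $X_i$ in the direction $u$: $P_u X_i = \langle X_i, u \rangle u$
Variability in the direction $u$: $\sum_{i=1}^n \left\|P_u X_i - P_u \bar X\right\|^2 = \sum_{i=1}^n \langle X_i - \bar X, u \rangle^2$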

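PCA as Optimization (Cont.)
Variability in the direction $u$: $\sum_{i=1}^n \langle X_i - \bar X, u \rangle^2 = u^t \tilde X \tilde X^t u = (n-1)\, u^t \hat\Sigma u$,
i.e. (proportional to) a quadratic form in the covariance matrix
Simple solution comes from the eigenvalue representation of $\hat\Sigma$: $\hat\Sigma = B \Lambda B^t$,
where $B$ is orthonormal, & $\Lambda = \mathrm{diag}(\lambda_1, \dots, \lambda_d)$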
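A numerical check of the identity above, assuming NumPy and hypothetical data with unequal spread per coordinate: the sum of squared projections onto a unit vector $u$ equals $(n-1)\,u^t \hat\Sigma u$.

```python
import numpy as np

rng = np.random.default_rng(14)
d, n = 3, 50
X = rng.normal(size=(d, n)) * np.array([[3.0], [1.0], [0.5]])   # unequal spread per coordinate
X_tilde = X - X.mean(axis=1, keepdims=True)
Sigma_hat = np.cov(X)

u = rng.normal(size=d)
u /= np.linalg.norm(u)                      # unit direction vector

scores = X_tilde.T @ u                      # <X_i - Xbar, u>, one per data point
variability = np.sum(scores ** 2)           # sum of squared projections
quad_form = (n - 1) * (u @ Sigma_hat @ u)   # (n - 1) u^t Sigma_hat u

print(np.isclose(variability, quad_form))   # True
```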

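PCA as Optimization (Cont.)
Variability in the direction $u$ (up to the factor $n-1$): $u^t \hat\Sigma u = u^t B \Lambda B^t u = \left(B^t u\right)^t \Lambda \left(B^t u\right)$
But $B^t u$ = “transform of $u$” = “$u$ rotated into $B$ coordinates”,
and the diagonalized quadratic form becomes $\left(B^t u\right)^t \Lambda \left(B^t u\right) = \sum_{j=1}^d \lambda_j \left(B^t u\right)_j^2$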

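PCA as Optimization (Cont.)
Now since $B$ is an orthonormal basis matrix, $\left\|B^t u\right\| = \|u\| = 1$, and $\sum_{j=1}^d \left(B^t u\right)_j^2 = 1$
So the rotation $B^t u$ gives a distribution of the (unit) energy of $u$ over the eigendirections
And $\sum_{j=1}^d \lambda_j \left(B^t u\right)_j^2$ is max’d (over $u$), by putting all energy in the “largest direction”, i.e. $u = b_1$, where “eigenvalues are ordered”, $\lambda_1 \ge \lambda_2 \ge \cdots \ge \lambda_d$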
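A sketch assuming NumPy: in the rotated coordinates $w = B^t u$ the energy $\sum_j w_j^2$ is 1, the quadratic form is $\sum_j \lambda_j w_j^2 \le \lambda_1$, and $u = b_1$ attains the bound. The data and random directions are illustrative only.

```python
import numpy as np

rng = np.random.default_rng(15)
d, n = 4, 100
X = rng.normal(size=(d, n)) * np.array([[3.0], [2.0], [1.0], [0.5]])
Sigma_hat = np.cov(X)

lam, B = np.linalg.eigh(Sigma_hat)
lam, B = lam[::-1], B[:, ::-1]   # order eigenvalues: lambda_1 >= ... >= lambda_d

def quad(u):
    """u^t Sigma_hat u, computed in rotated coordinates as sum_j lambda_j w_j^2, w = B^t u."""
    w = B.T @ u
    return np.sum(lam * w ** 2)

# Random unit directions: energy of w sums to 1, and the quadratic form never exceeds lambda_1
for _ in range(5):
    u = rng.normal(size=d)
    u /= np.linalg.norm(u)
    w = B.T @ u
    print(np.isclose(np.sum(w ** 2), 1.0),
          np.isclose(quad(u), u @ Sigma_hat @ u),
          quad(u) <= lam[0])

print(np.isclose(quad(B[:, 0]), lam[0]))   # True: all energy on b_1 attains the maximum
```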

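PCA as Optimization (Cont.)
Notes:
• Solution is unique (up to sign) when $\lambda_1 > \lambda_2$
• Else have sol’ns in subsp. gen’d by 1st $b$’s (those with eigenvalue $\lambda_1$)
• Projecting onto subspace $\perp b_1$ gives $b_2$ as next direction
• Continue through $b_3, \dots, b_d$
• Replace $\hat\Sigma$ by $\Sigma$ to get theoretical PCA
• Theoretical PCA is estimated by the empirical version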
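A sketch of the iteration step, assuming NumPy and hypothetical data: maximizing again after projecting onto the subspace $\perp b_1$ recovers the second eigenvector. `top_direction` is a hypothetical helper, and the check is up to sign.

```python
import numpy as np

rng = np.random.default_rng(16)
d, n = 4, 200
X = rng.normal(size=(d, n)) * np.array([[3.0], [2.0], [1.0], [0.5]])
Sigma_hat = np.cov(X)

def top_direction(S):
    """Unit vector maximizing u^t S u: eigenvector of the largest eigenvalue."""
    _, vecs = np.linalg.eigh(S)   # eigenvalues in ascending order
    return vecs[:, -1]

b1 = top_direction(Sigma_hat)

# Project onto the subspace orthogonal to b1, then maximize again
P_perp = np.eye(d) - np.outer(b1, b1)
b2 = top_direction(P_perp @ Sigma_hat @ P_perp)

lam, B = np.linalg.eigh(Sigma_hat)
print(np.isclose(abs(b2 @ B[:, -2]), 1.0))   # True: b2 is the 2nd eigenvector (up to sign)
print(np.isclose(b1 @ b2, 0.0))              # True: b2 is orthogonal to b1
```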

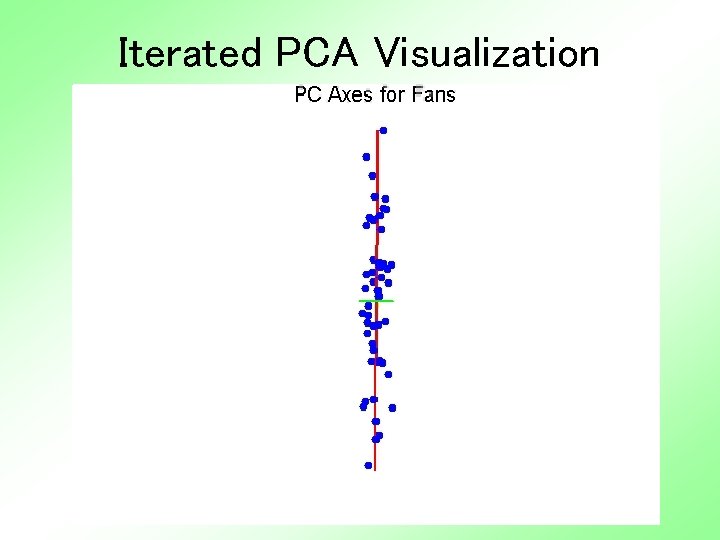

Iterated PCA Visualization

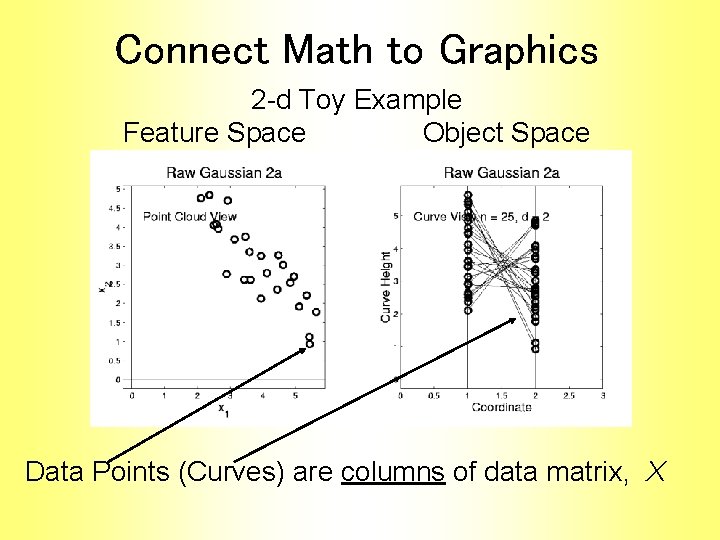

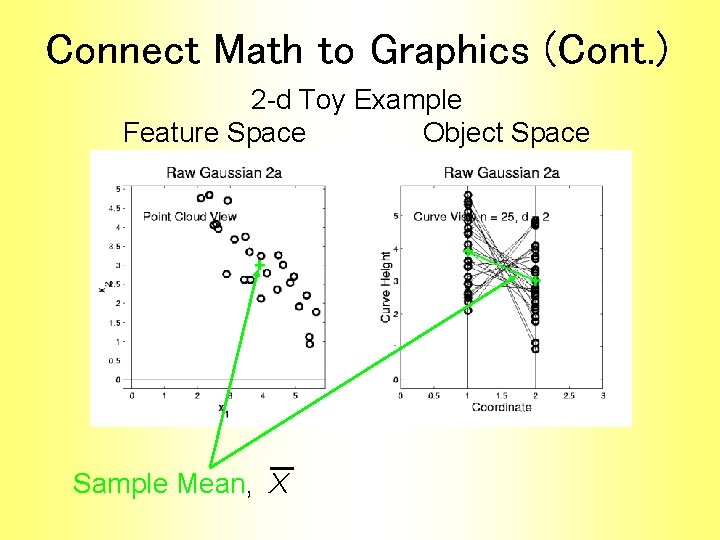

Connect Math to Graphics
2-d Toy Example, Feature Space & Object Space:
Data Points (Curves) are columns of data matrix, $X$

Connect Math to Graphics (Cont.)
2-d Toy Example, Feature Space & Object Space:
Sample Mean, $\bar X$

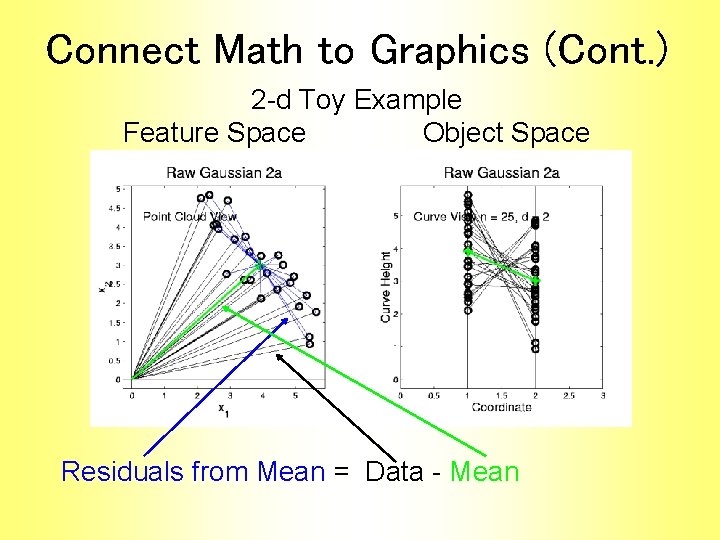

Connect Math to Graphics (Cont.)
2-d Toy Example, Feature Space & Object Space:
Residuals from Mean = Data - Mean

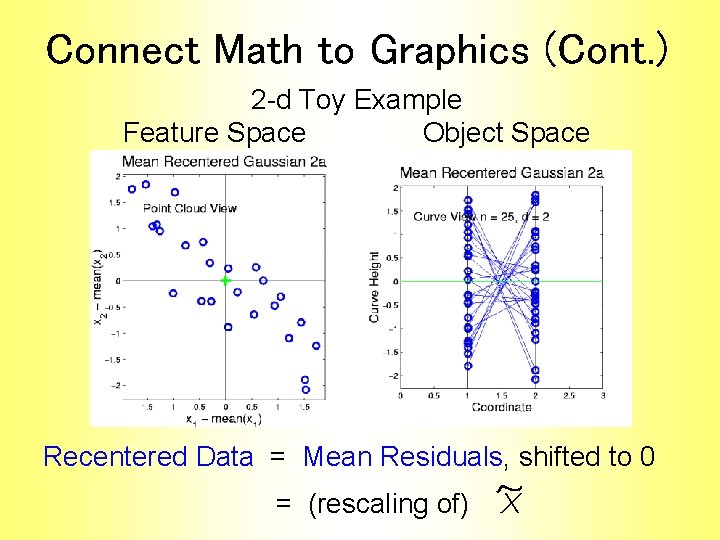

Connect Math to Graphics (Cont.)
2-d Toy Example, Feature Space & Object Space:
Recentered Data = Mean Residuals, shifted to 0 = (rescaling of) $\tilde X$

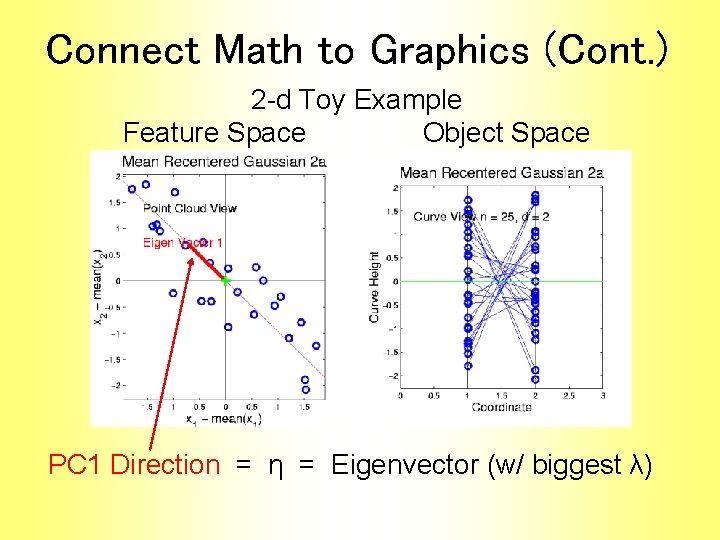

Connect Math to Graphics (Cont.)
2-d Toy Example, Feature Space & Object Space:
PC 1 Direction = $\eta$ = Eigenvector (w/ biggest $\lambda$)

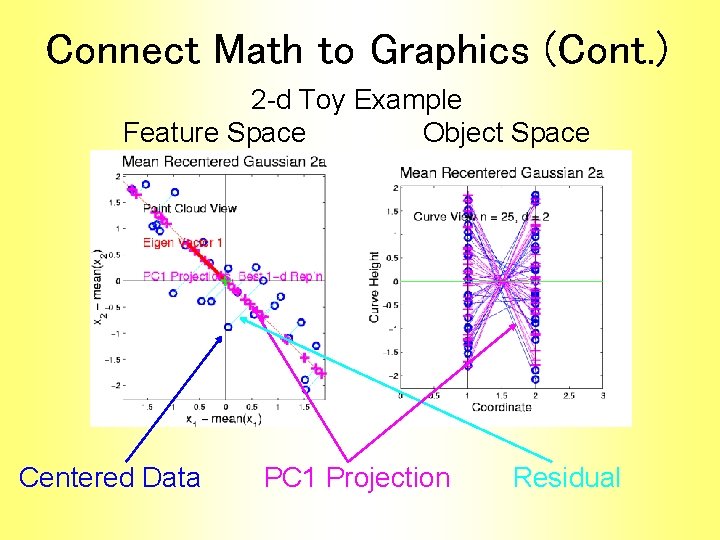

Connect Math to Graphics (Cont.)
2-d Toy Example, Feature Space & Object Space:
Centered Data, PC 1 Projection, Residual
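A sketch of the PC 1 decomposition for hypothetical 2-d toy data (assumes NumPy; the mixing matrix and shift are arbitrary): each centered data point splits into its projection on $\eta$ plus a residual orthogonal to $\eta$.

```python
import numpy as np

rng = np.random.default_rng(17)
n = 30
# Hypothetical 2-d toy data (d = 2); columns are data points, coordinates correlated
Z = rng.normal(size=(2, n))
X = np.array([[2.0, 0.0], [1.2, 0.6]]) @ Z + np.array([[5.0], [1.0]])

X_tilde = X - X.mean(axis=1, keepdims=True)   # centered data
lam, B = np.linalg.eigh(np.cov(X))
eta1 = B[:, -1]                               # PC 1 direction (largest eigenvalue)

proj1 = np.outer(eta1, eta1 @ X_tilde)        # PC 1 projections, one column per point
resid1 = X_tilde - proj1                      # PC 1 residuals

print(np.allclose(proj1 + resid1, X_tilde))   # True: centered data = projection + residual
print(np.allclose(eta1 @ resid1, 0.0))        # True: residuals are orthogonal to eta1
```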

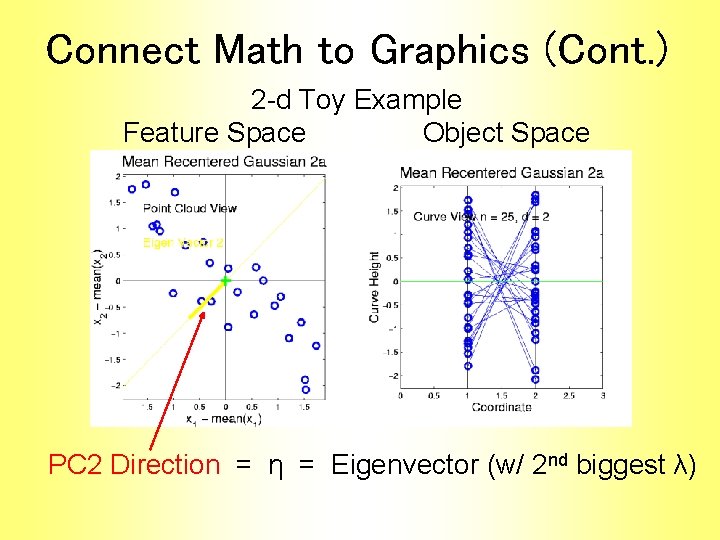

Connect Math to Graphics (Cont.)
2-d Toy Example, Feature Space & Object Space:
PC 2 Direction = $\eta$ = Eigenvector (w/ 2nd biggest $\lambda$)

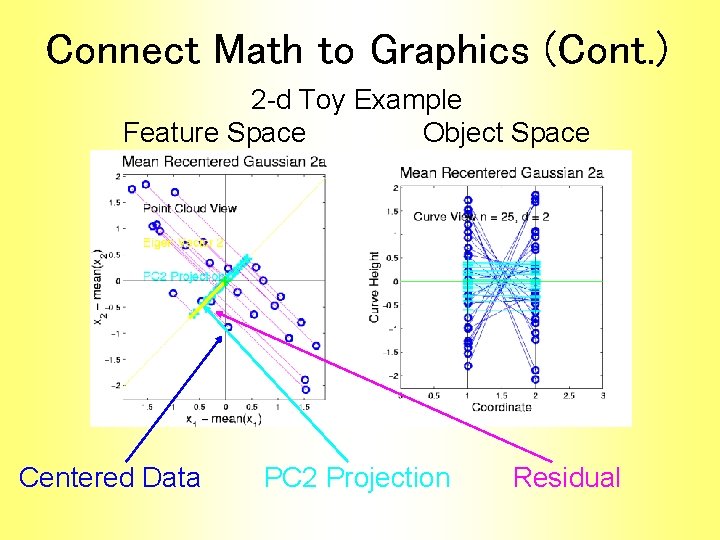

Connect Math to Graphics (Cont.)
2-d Toy Example, Feature Space & Object Space:
Centered Data, PC 2 Projection, Residual

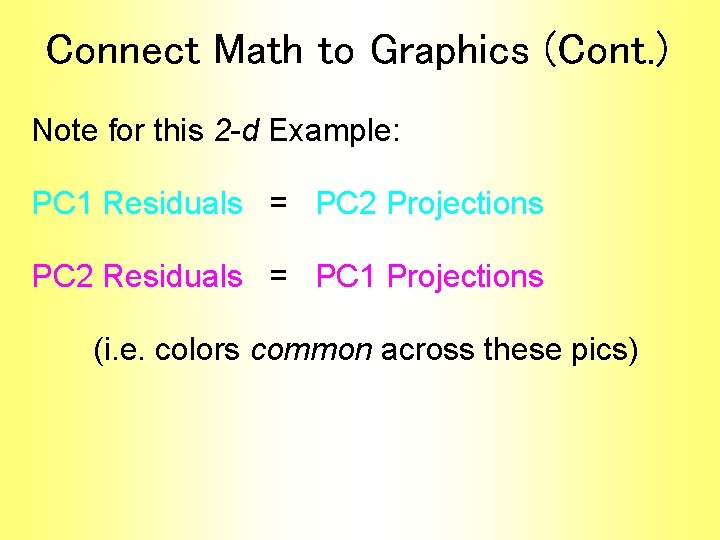

Connect Math to Graphics (Cont.)
Note for this 2-d Example:
• PC 1 Residuals = PC 2 Projections
• PC 2 Residuals = PC 1 Projections
(i.e. colors common across these pics)
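Why the colors match: in 2-d the PC 1 and PC 2 directions $\eta_1, \eta_2$ form an orthonormal basis, so $\eta_1\eta_1^t + \eta_2\eta_2^t = I$ and removing one projection leaves exactly the other. A sketch of this check, assuming NumPy and hypothetical toy data:

```python
import numpy as np

rng = np.random.default_rng(18)
n = 30
Z = rng.normal(size=(2, n))
X = np.array([[2.0, 0.0], [1.2, 0.6]]) @ Z   # hypothetical 2-d toy data
X_tilde = X - X.mean(axis=1, keepdims=True)

lam, B = np.linalg.eigh(np.cov(X))
eta1, eta2 = B[:, 1], B[:, 0]                # PC 1 and PC 2 directions (eigh sorts ascending)

def project(eta):
    """Projections of the centered data onto the line spanned by unit vector eta."""
    return np.outer(eta, eta @ X_tilde)

resid1 = X_tilde - project(eta1)
resid2 = X_tilde - project(eta2)

print(np.allclose(resid1, project(eta2)))   # True: PC 1 residuals = PC 2 projections
print(np.allclose(resid2, project(eta1)))   # True: PC 2 residuals = PC 1 projections
```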
