
Obfuscation and Strict Online Anonymity • Tony Doyle, Hunter College Library • 2015 LACUNY Institute, May 8, 2015

Assumptions • Privacy good • Surveillance, tracking, monitoring, profiling bad

Privacy and Technology • Pervasive surveillance • “Greased” data (Moor 1997) • Radically altered flow of info

My thesis • Fully anonymous web searching through obfuscation justified • Obfuscation: “produces misleading, false, or ambiguous data with the intention of confusing an adversary or simply adding to the time or cost of separating good data from bad” (Brunton and Nissenbaum 2011).

Why worry? • Who’s gathering? • What are they doing with it? • What’s happening to us as a result?

Why worry? • Manipulation • Reduced autonomy

Good Old Days

Profiling • More likely obese if. . . – Premium cable and frequent fast-food dining – No kids and a minivan – Frequent online shopping for clothes

Asymmetries • Information • Power

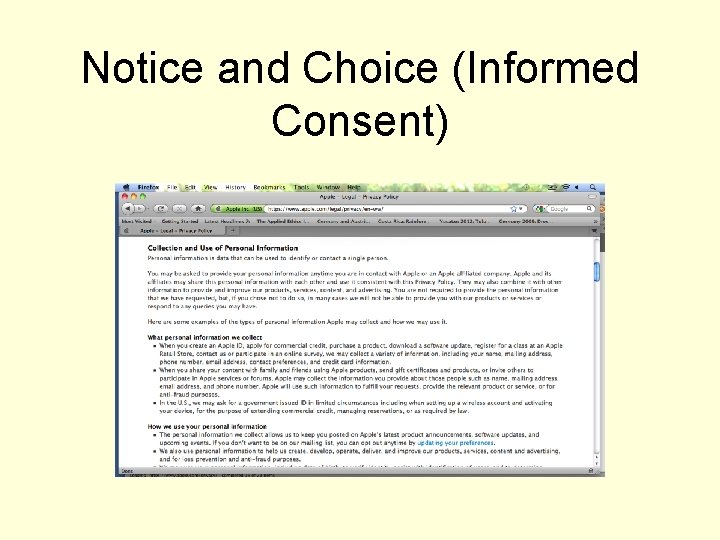

Notice and Choice (Informed Consent)

Market Solution

Legislation

Privacy and Anonymity

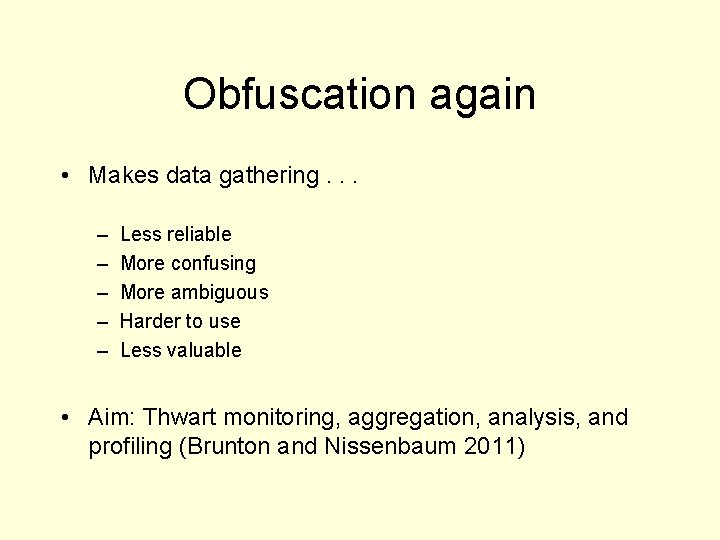

Obfuscation again • Makes data gathering. . . – Less reliable – More confusing – More ambiguous – Harder to use – Less valuable • Aim: Thwart monitoring, aggregation, analysis, and profiling (Brunton and Nissenbaum 2011)

Obfuscation and Natural Selection

Non-Jews and the Yellow Star

Loyalty Card Swaps

Digital Solutions • Tor • TrackMeNot

Justification I • Coercion • Power imbalance

Justification II • Disguises justified?

Dogma Challenged (Rule 2007)

Dogma • Blame technology: – Tech an “autonomous force” – Driven by “inner logic” or “inherent imperatives” – Irresistibly demands more info

Dogma • Tech sweeps away human values and intentions • Inevitably destroys privacy and anonymity

References
• Brunton, F. and H. Nissenbaum (2013). Political and ethical perspectives on data obfuscation. In Privacy, due process and the computational turn: The philosophy of law meets the philosophy of technology. Eds. M. Hildebrandt and K. De Vries (New York: Routledge): 164-88.
• Brunton, F. and H. Nissenbaum (2011). Vernacular resistance to data collection and analysis: A political theory of obfuscation. First Monday 16(5).
• Howe, D. and H. Nissenbaum (2009). TrackMeNot: Resisting surveillance in web search. In Lessons from the identity trail: Anonymity, privacy, and identity in a networked society. Eds. I. Kerr, C. Lucock, and V. Steeves (Oxford: Oxford University Press): 418-36.
• Moor, J. (1997). Towards a theory of privacy in the information age. Computers and Society 27(3): 27-32.
• Nissenbaum, H. (2011). A contextual approach to privacy online. Daedalus 140(4): 32-48.
• Rule, J. (2007). Privacy in peril. New York: Oxford University Press.
