Numerical Methods SOLUTION OF EQUATION Root Finding Methods


- Slides: 23

Numerical Methods SOLUTION OF EQUATION

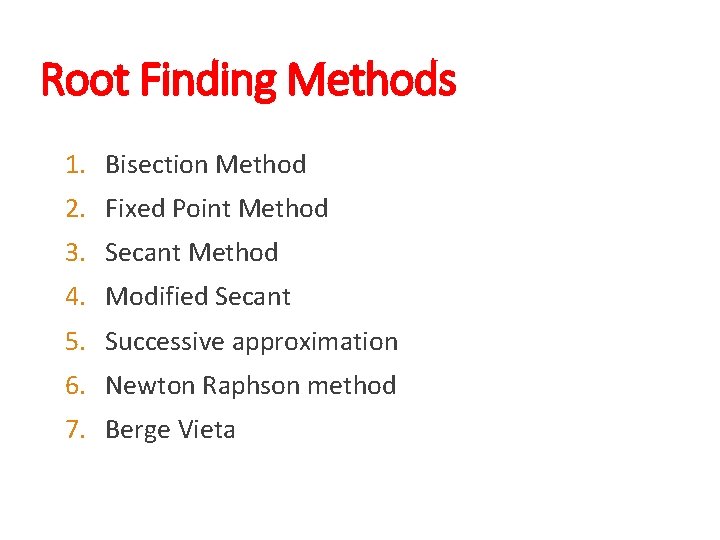

Root Finding Methods

1. Bisection Method
2. Fixed Point Method
3. Secant Method
4. Modified Secant
5. Successive approximation
6. Newton-Raphson method
7. Birge-Vieta method

Motivation

Many problems can be rewritten in a form such as:

- f(x, y, z, …) = 0
- f(x, y, z, …) = g(s, q, …)

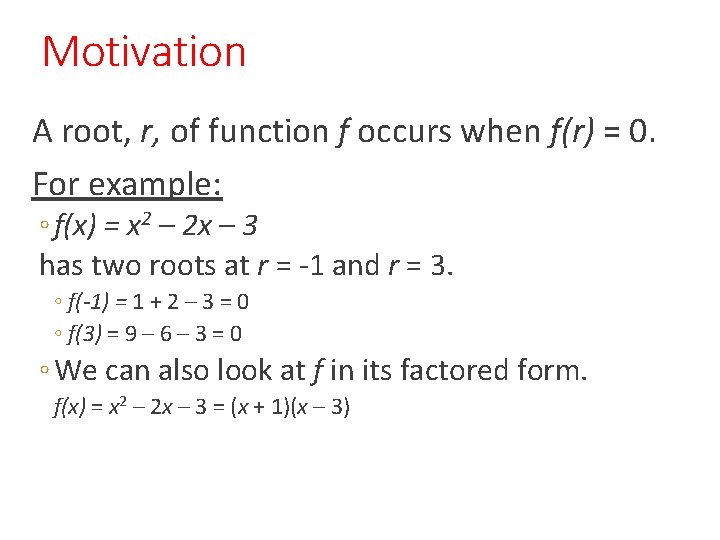

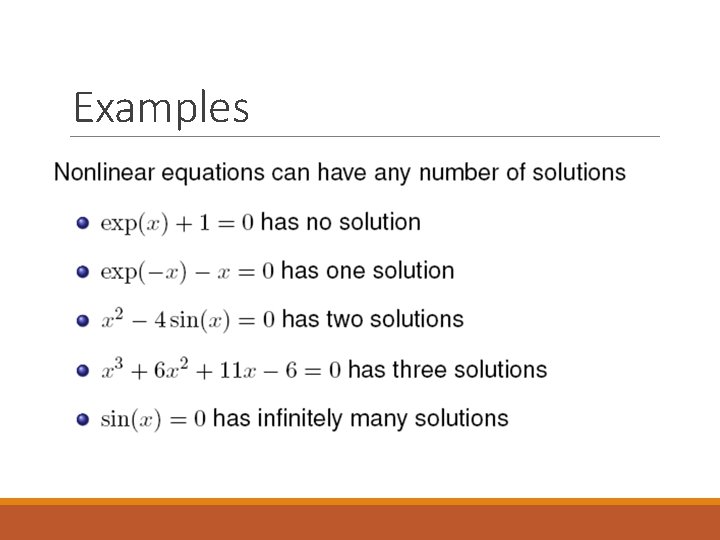

Motivation

A root, r, of a function f occurs when f(r) = 0. For example:

- $f(x) = x^2 - 2x - 3$ has two roots, at r = -1 and r = 3.
- $f(-1) = 1 + 2 - 3 = 0$
- $f(3) = 9 - 6 - 3 = 0$
- We can also look at f in its factored form: $f(x) = x^2 - 2x - 3 = (x + 1)(x - 3)$
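As a quick check of the example above, the short Python sketch below evaluates f at the two claimed roots and compares the expanded and factored forms. The function names `f` and `f_factored` are chosen here for illustration only.

```python
def f(x):
    # Expanded form: f(x) = x^2 - 2x - 3
    return x**2 - 2*x - 3


def f_factored(x):
    # Factored form: f(x) = (x + 1)(x - 3)
    return (x + 1) * (x - 3)


# Both forms vanish at the roots r = -1 and r = 3.
for r in (-1, 3):
    print(r, f(r), f_factored(r))
```

Evaluating the function at a candidate value is also the basic operation every root-finding method in these slides relies on.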

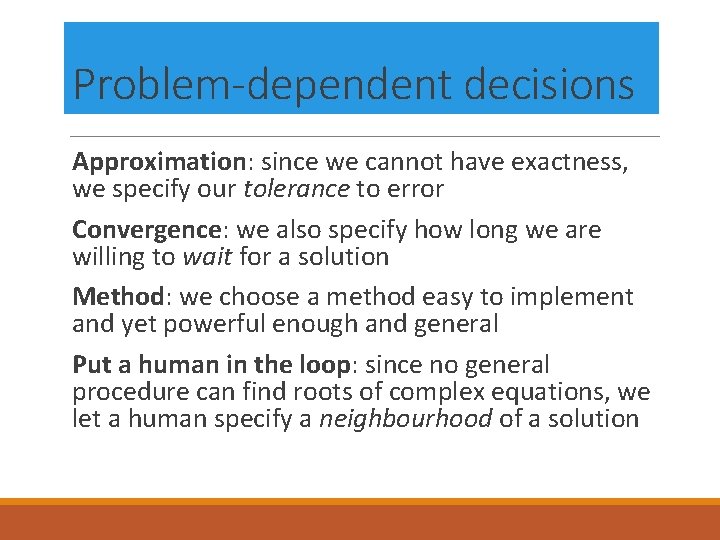

Finding roots / solving equations

- A general solution exists for equations such as $ax^2 + bx + c = 0$.
- The quadratic formula provides a quick answer to all quadratic equations.
- However, no exact general solution (formula) exists for polynomial equations of degree greater than 4.
- Transcendental equations: equations involving trigonometric functions (sin, cos), log, or exp. These equations cannot be reduced to the solution of a polynomial.

Examples

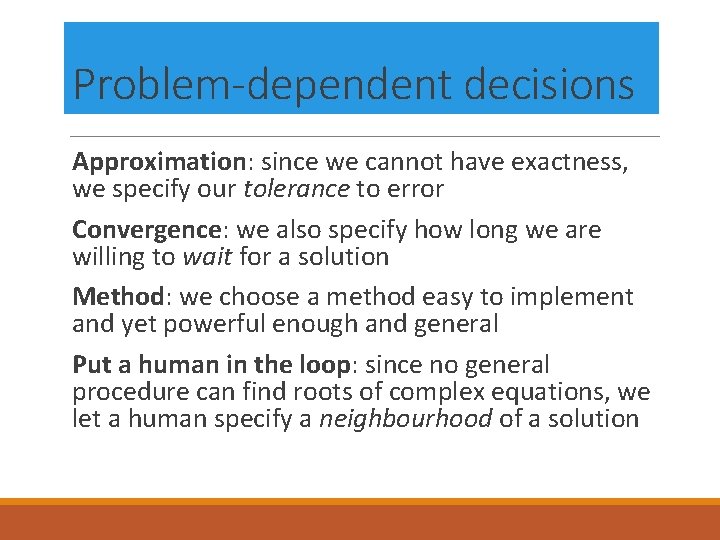

Problem-dependent decisions

- Approximation: since we cannot have exactness, we specify our tolerance to error.
- Convergence: we also specify how long we are willing to wait for a solution.
- Method: we choose a method that is easy to implement and yet powerful and general enough.
- Put a human in the loop: since no general procedure can find roots of complex equations, we let a human specify a neighbourhood of a solution.

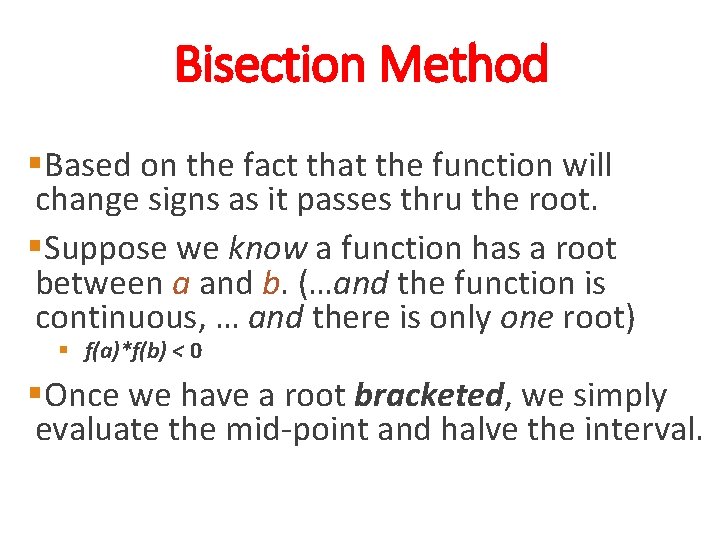

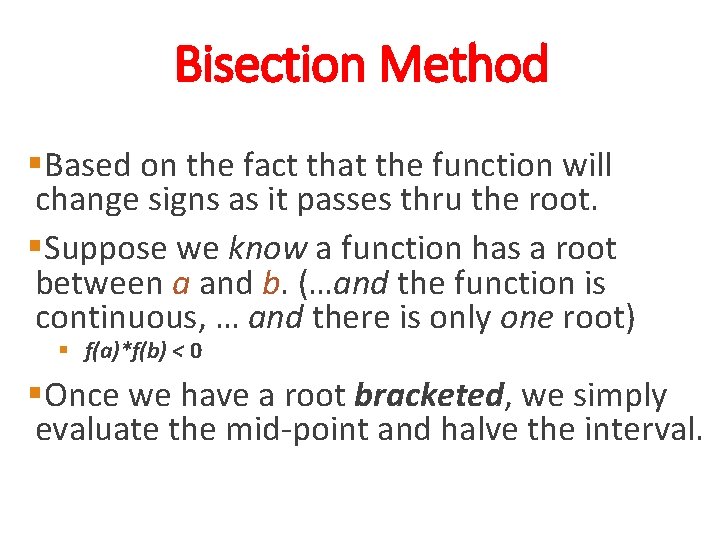

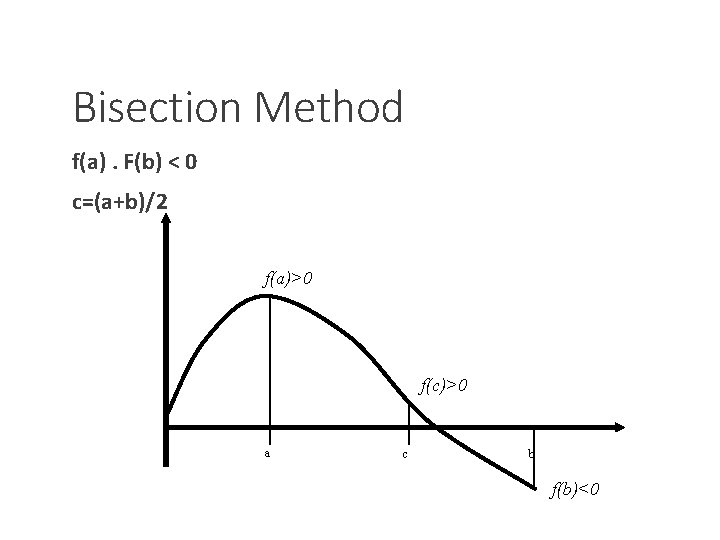

Bisection Method

- Based on the fact that the function changes sign as it passes through the root.
- Suppose we know a function has a root between a and b (and the function is continuous, and there is only one root).
- Then f(a)*f(b) < 0.
- Once we have a root bracketed, we simply evaluate the midpoint and halve the interval.

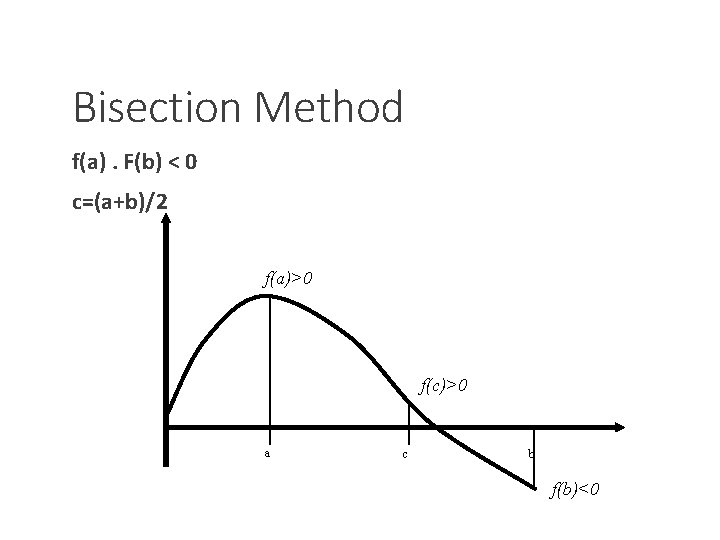

Bisection Method

Condition: f(a)*f(b) < 0. Midpoint: c = (a + b)/2.

Figure: c lies between a and b, with f(a) > 0, f(c) > 0 and f(b) < 0.

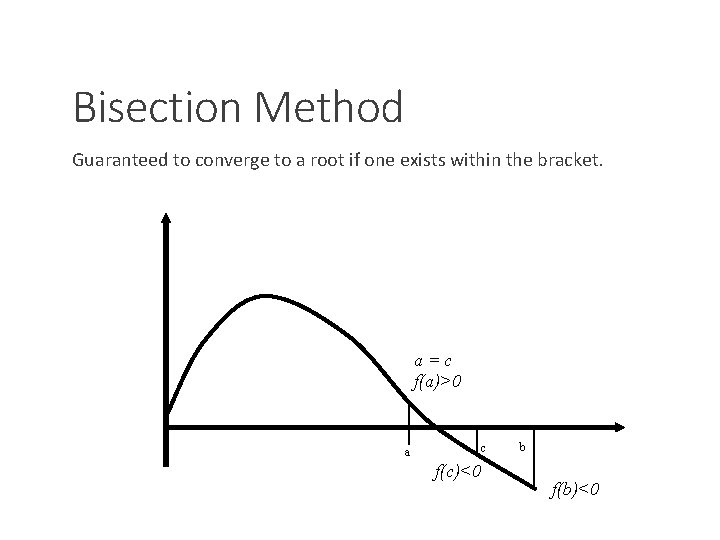

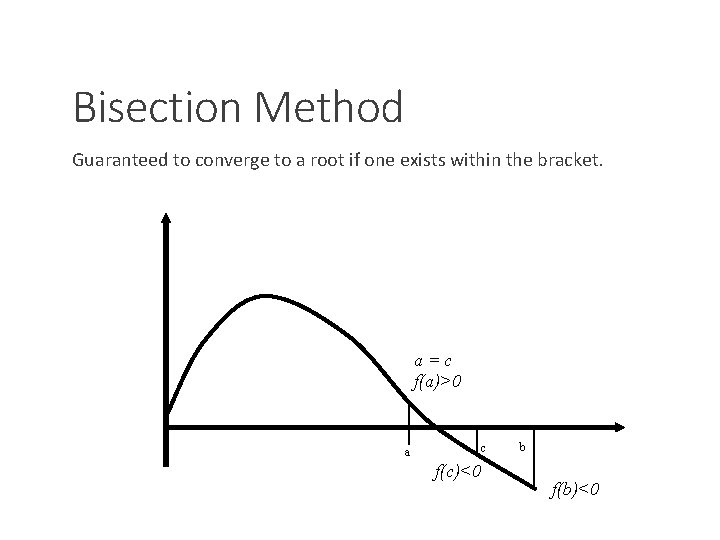

Bisection Method

Guaranteed to converge to a root if one exists within the bracket.

Figure: after setting a = c, f(a) > 0; at the new midpoint c, f(c) < 0, and f(b) < 0.

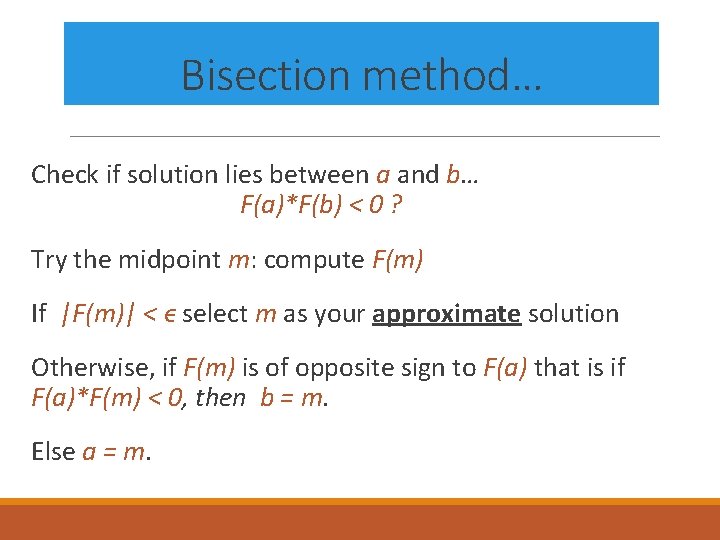

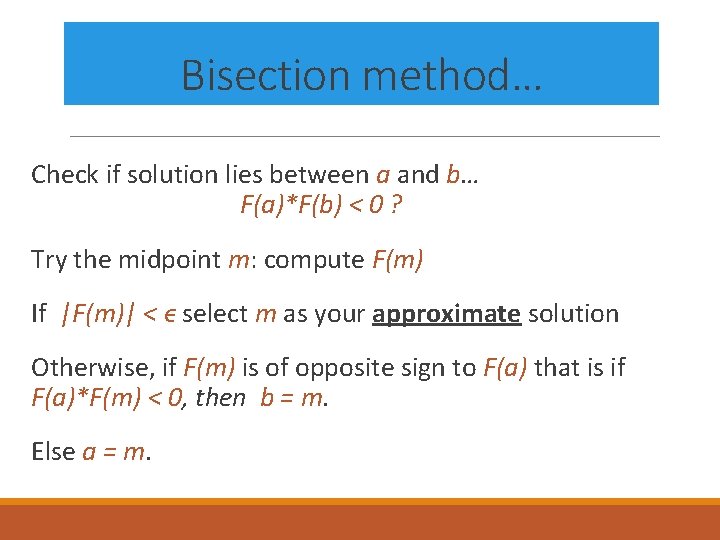

Bisection method…

- Check if a solution lies between a and b: is F(a)*F(b) < 0?
- Try the midpoint m: compute F(m).
- If |F(m)| < ϵ, select m as your approximate solution.
- Otherwise, if F(m) is of opposite sign to F(a), that is if F(a)*F(m) < 0, then b = m.
- Else a = m.

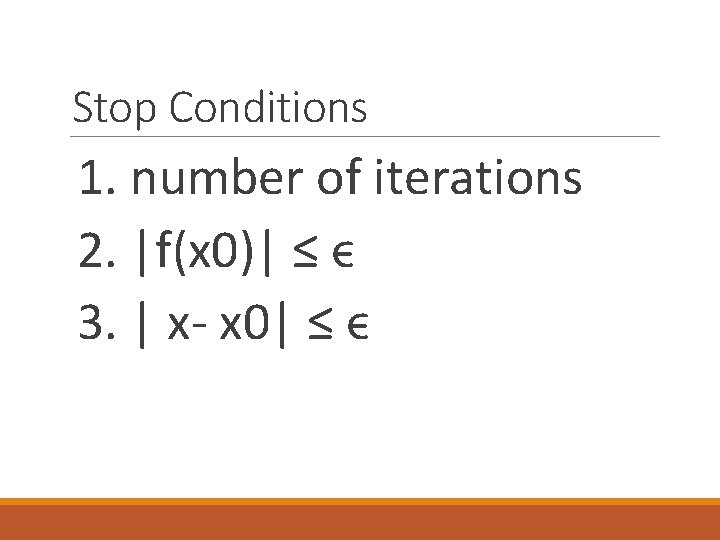

Stop Conditions

1. Number of iterations
2. $|f(x_0)| \le \epsilon$
3. $|x - x_0| \le \epsilon$

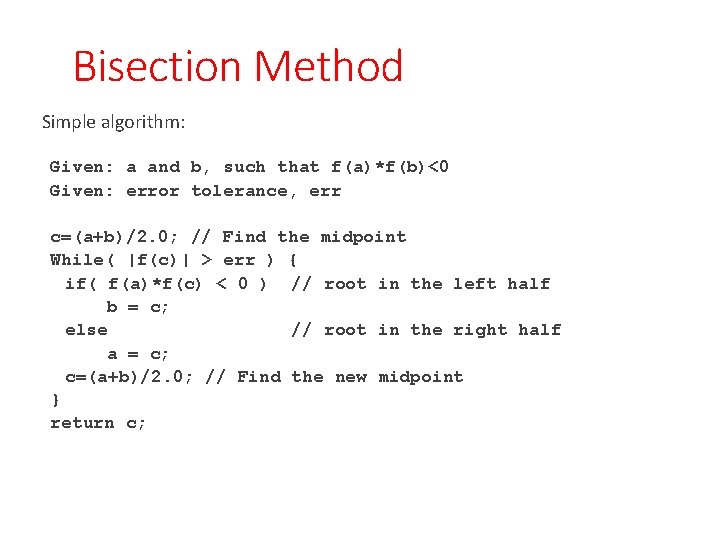

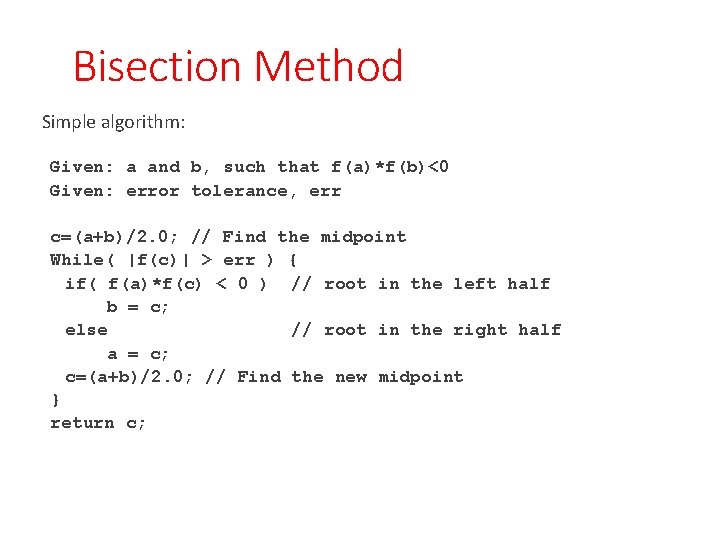

Bisection Method

Simple algorithm:

- Given: a and b, such that f(a)*f(b) < 0
- Given: error tolerance, err

    c = (a + b) / 2.0;          // Find the midpoint
    while ( |f(c)| > err ) {
        if ( f(a)*f(c) < 0 )    // root in the left half
            b = c;
        else                    // root in the right half
            a = c;
        c = (a + b) / 2.0;      // Find the new midpoint
    }
    return c;
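The simple algorithm can be sketched in runnable Python as follows. This is a minimal illustration, not the slides' own program: the function name `bisect`, the defaults `tol` and `max_iter`, and the combination of all three stop conditions (iteration count, |f(c)|, interval width) are assumptions made here.

```python
def bisect(f, a, b, tol=1e-8, max_iter=100):
    """Approximate a root of f in [a, b] by repeated halving.

    Assumes f is continuous on [a, b] and f(a), f(b) have opposite signs.
    """
    fa, fb = f(a), f(b)
    if fa == 0:
        return a
    if fb == 0:
        return b
    if fa * fb > 0:
        raise ValueError("f(a) and f(b) must have opposite signs")

    for _ in range(max_iter):          # stop condition 1: iteration count
        c = (a + b) / 2.0              # midpoint of the current bracket
        fc = f(c)
        # stop condition 2: small residual; stop condition 3: small bracket
        if abs(fc) <= tol or abs(b - a) / 2.0 <= tol:
            return c
        if fa * fc < 0:                # sign change in the left half
            b = c
        else:                          # sign change in the right half
            a, fa = c, fc
    return (a + b) / 2.0


# Usage: the root r = 3 of x^2 - 2x - 3, bracketed by [0, 5].
root = bisect(lambda x: x**2 - 2*x - 3, 0.0, 5.0)
```

Storing f(a) in `fa` avoids re-evaluating the function at the left endpoint on every pass, which the pseudocode does for simplicity.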

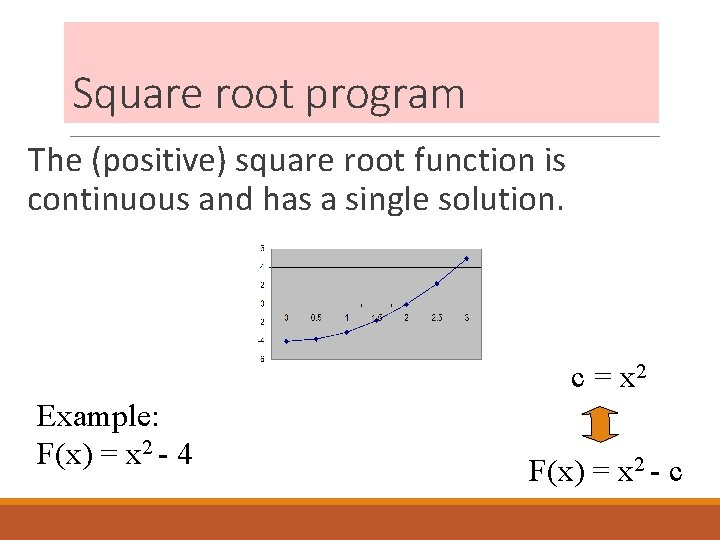

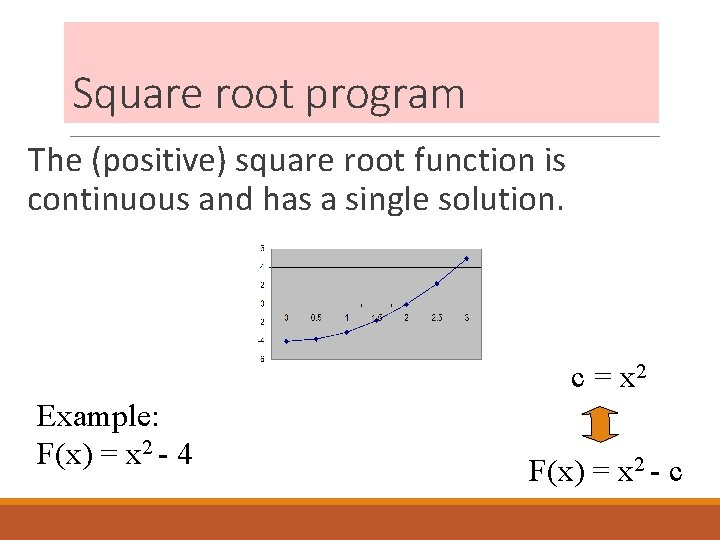

Square root program

The (positive) square root function is continuous and has a single solution. To compute $x = \sqrt{c}$, solve $c = x^2$, that is, find the positive root of $F(x) = x^2 - c$.

Example: $F(x) = x^2 - 4$
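A square root program along these lines might look like the sketch below. The function name `bisect_sqrt`, the starting bracket [0, max(1, c)] and the default tolerance are assumptions made for this illustration; the slides' own program is in the images and may differ.

```python
def bisect_sqrt(c, tol=1e-10, max_iter=200):
    """Approximate sqrt(c) as the positive root of F(x) = x^2 - c."""
    if c < 0:
        raise ValueError("c must be non-negative")
    # F(0) = -c <= 0 and F(max(1, c)) >= 0, so the root is bracketed.
    lo, hi = 0.0, max(1.0, c)
    for _ in range(max_iter):
        if hi - lo <= tol:
            break
        mid = (lo + hi) / 2.0
        if mid * mid - c > 0:   # F(mid) > 0: root is in the left half
            hi = mid
        else:                   # F(mid) <= 0: root is in the right half
            lo = mid
    return (lo + hi) / 2.0


# Usage: F(x) = x^2 - 4 has the positive root 2.
approx = bisect_sqrt(4.0)
```

The iteration cap guards against a tolerance smaller than the floating-point spacing near the root, in which case the bracket can no longer shrink.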

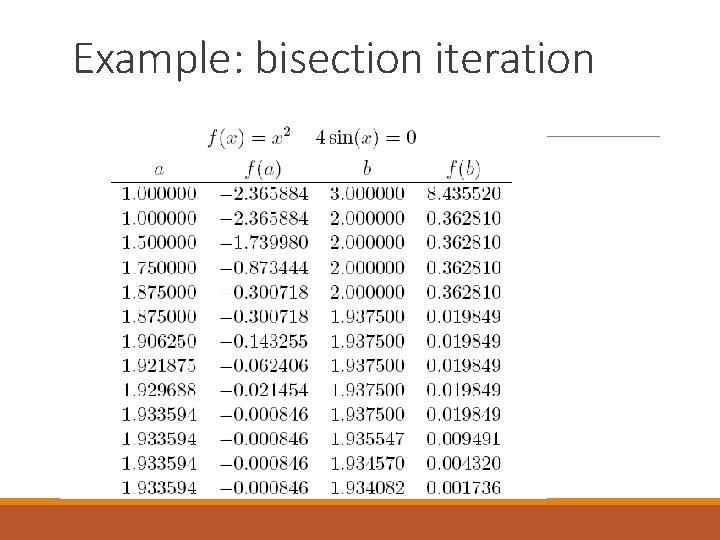

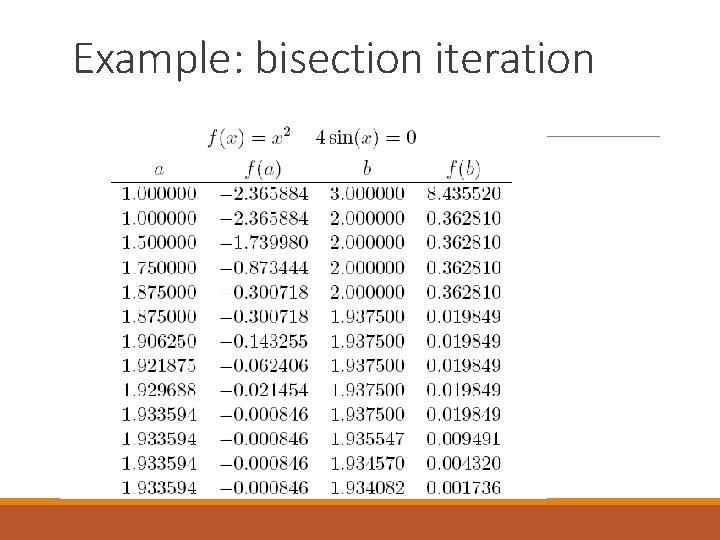

Example: bisection iteration

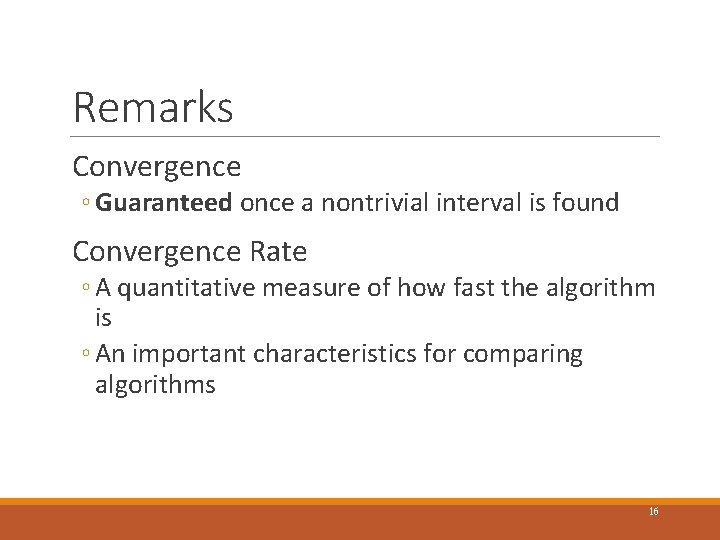

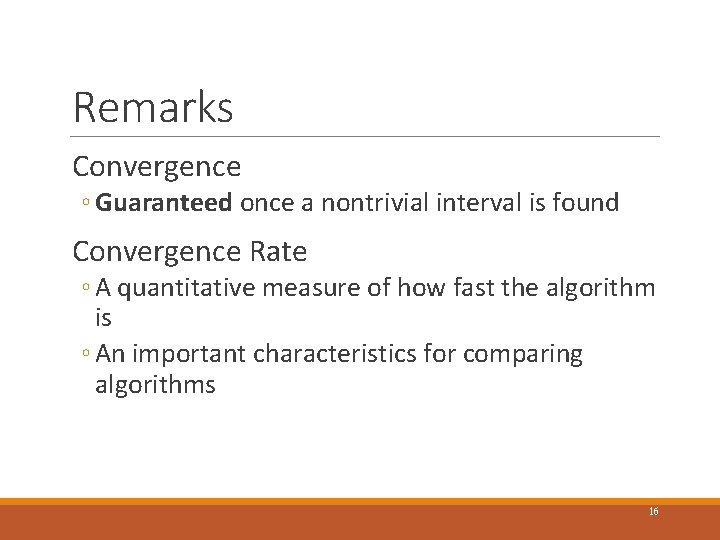

Remarks

- Convergence: guaranteed once a nontrivial interval is found.
- Convergence rate: a quantitative measure of how fast the algorithm is, and an important characteristic for comparing algorithms.

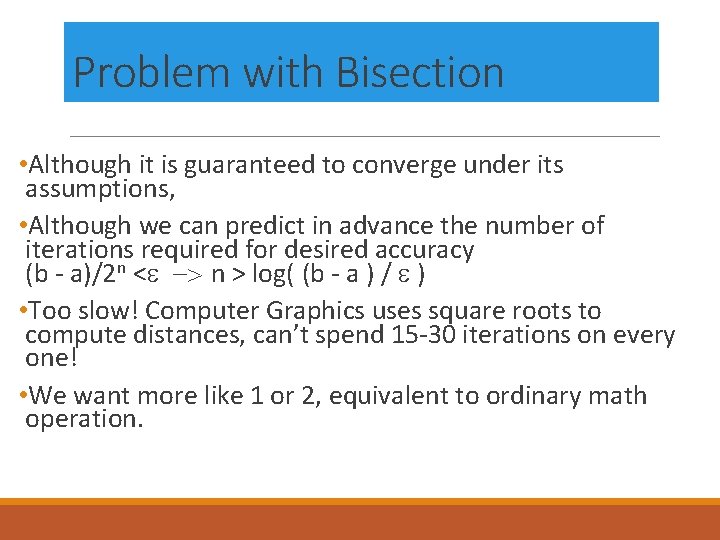

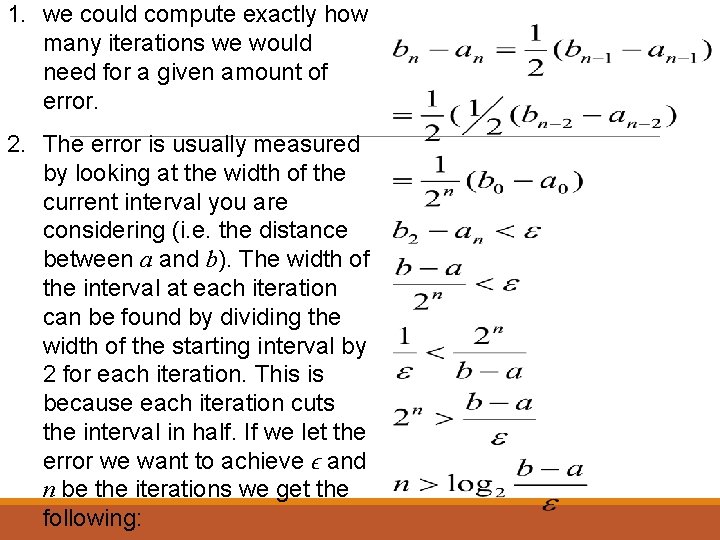

1. We can compute in advance exactly how many iterations are needed for a given amount of error.
2. The error is usually measured by the width of the current interval (i.e. the distance between a and b). Each iteration cuts the interval in half, so the width after n iterations is the width of the starting interval divided by $2^n$. If we let ϵ be the error we want to achieve and n the number of iterations, we get the following:

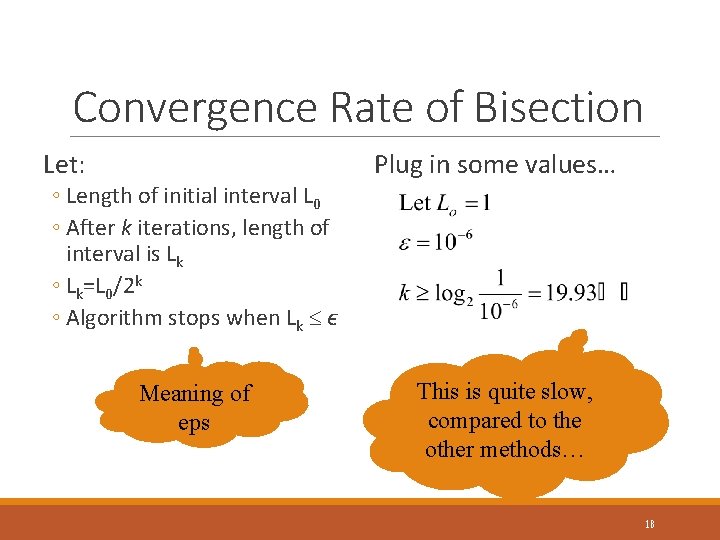

Convergence Rate of Bisection

Let:

- the length of the initial interval be $L_0$;
- the length of the interval after k iterations be $L_k$, so that $L_k = L_0 / 2^k$.

The algorithm stops when $L_k \le \epsilon$, where ϵ (eps) is the error tolerance. Plugging in some values shows that this is quite slow compared to the other methods.
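Solving $L_0 / 2^k \le \epsilon$ for k gives $k \ge \log_2(L_0 / \epsilon)$. The sketch below turns that into a small helper for plugging in values; the name `bisection_iterations` is hypothetical.

```python
import math


def bisection_iterations(a, b, eps):
    """Smallest k such that (b - a) / 2**k <= eps."""
    if eps <= 0:
        raise ValueError("eps must be positive")
    length = abs(b - a)
    if length <= eps:
        return 0
    return math.ceil(math.log2(length / eps))


# Usage: an interval of length 1 and a tolerance of 1e-3 needs 10 halvings,
# since 1/2**10 = 1/1024 <= 1e-3 while 1/2**9 = 1/512 > 1e-3.
k = bisection_iterations(0.0, 1.0, 1e-3)
```

Each extra decimal digit of accuracy costs about 3.3 more iterations, because $\log_2 10 \approx 3.32$.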

![How to get initial nontrivial interval a b Hint from the physical problem How to get initial (nontrivial) interval [a, b] ? Hint from the physical problem](https://slidetodoc.com/presentation_image_h2/52bccb80307b61d905f5ea6d3c06cbb1/image-19.jpg)

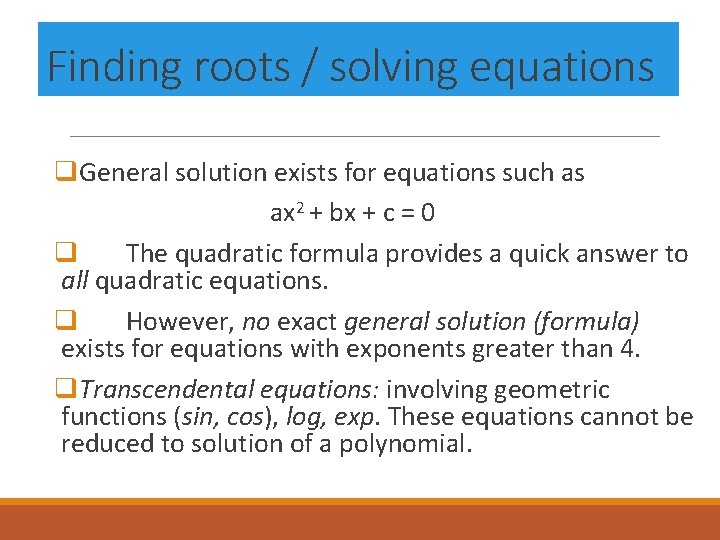

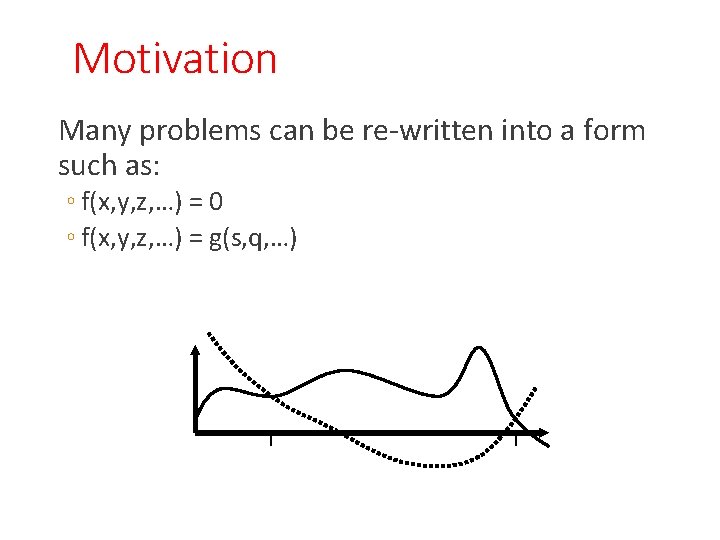

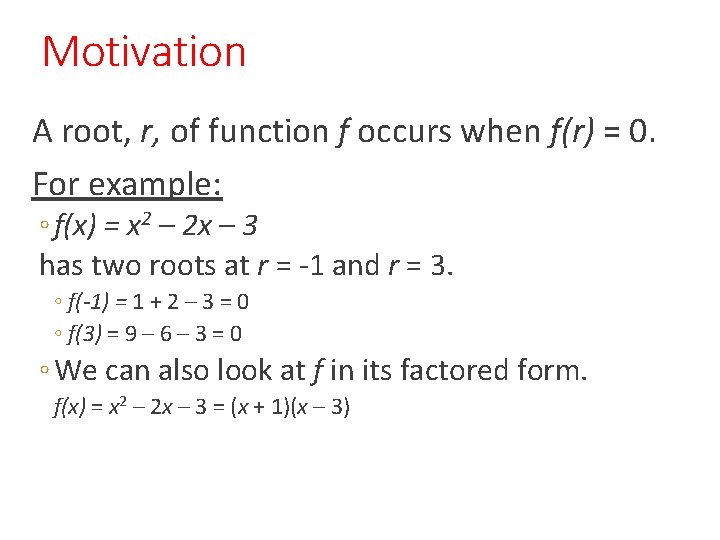

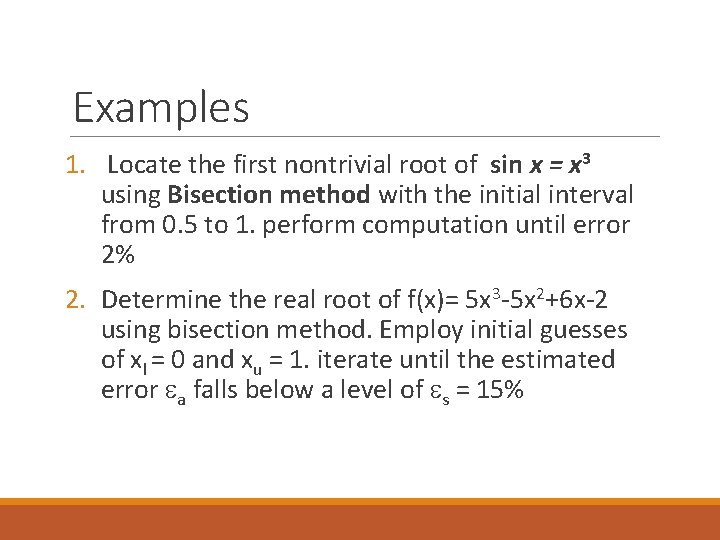

How to get initial (nontrivial) interval [a, b]?

- Hint from the physical problem.
- For polynomial equations, the following theorem is applicable: the roots (real and complex) of the polynomial $f(x) = a_n x^n + a_{n-1} x^{n-1} + \dots + a_1 x + a_0$ satisfy the bound:

![Example Roots are bounded by Hence real roots are in 10 10 Roots Example Roots are bounded by Hence, real roots are in [ -10, 10] Roots](https://slidetodoc.com/presentation_image_h2/52bccb80307b61d905f5ea6d3c06cbb1/image-20.jpg)

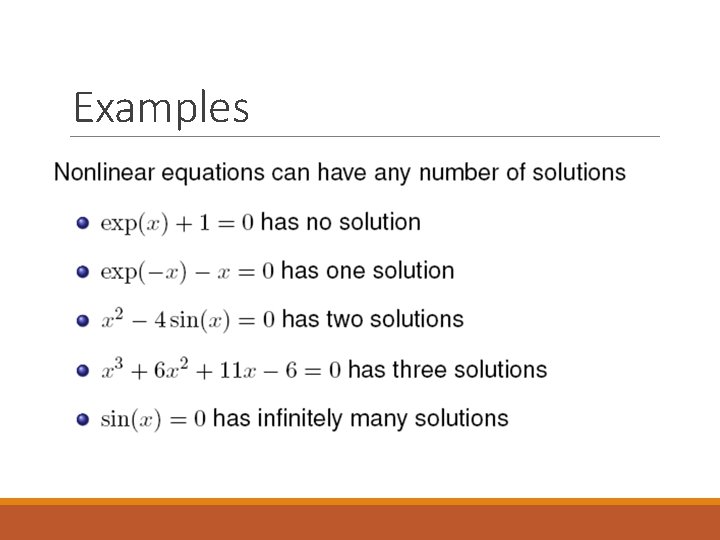

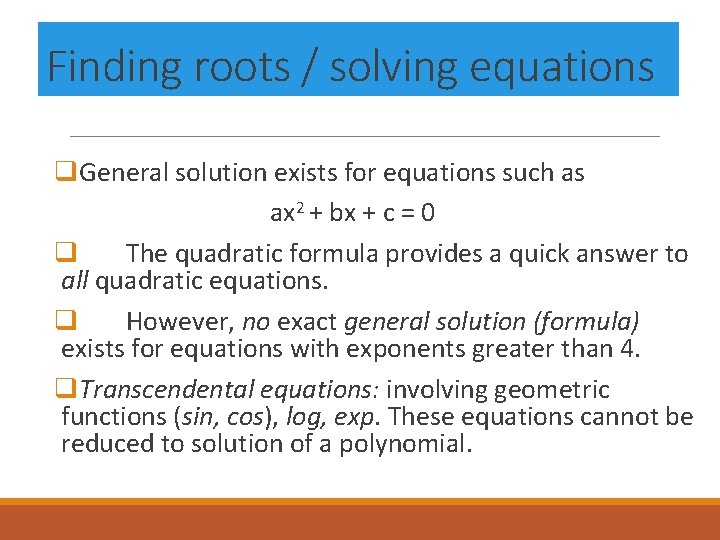

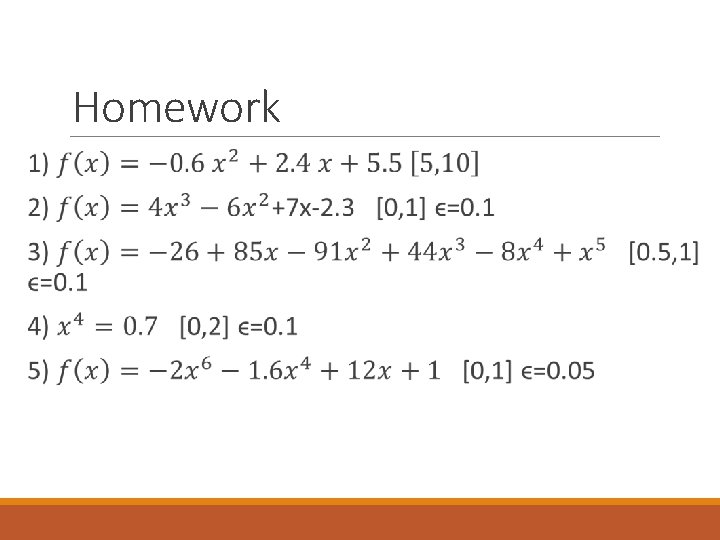

Example

The roots are bounded in magnitude by 10. Hence, real roots are in [-10, 10]. The roots are -1.5251 and 2.2626 ± 0.8844i (complex).
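The bound and the example polynomial are only in the slide images and are not reproduced in the text. The sketch below therefore rests on two assumptions: that the theorem is the classical Cauchy bound $|x| \le 1 + \max_{i<n} |a_i| / |a_n|$, and that the example polynomial is $x^3 - 3x^2 - x + 9$, which is reconstructed here from the three listed roots. Both assumptions are consistent with the interval [-10, 10], but they are not confirmed by the source. The function names are hypothetical.

```python
def cauchy_root_bound(coeffs):
    """Cauchy bound for coeffs = [a_n, ..., a_1, a_0] (assumed theorem).

    Every real or complex root x satisfies |x| <= the returned value.
    """
    if len(coeffs) < 2 or coeffs[0] == 0:
        raise ValueError("need a leading coefficient a_n != 0 and degree >= 1")
    return 1.0 + max(abs(a) for a in coeffs[1:]) / abs(coeffs[0])


def horner(coeffs, x):
    """Evaluate the polynomial at x using Horner's rule."""
    result = 0
    for a in coeffs:
        result = result * x + a
    return result


# Assumed example polynomial: x^3 - 3x^2 - x + 9 (reconstructed from the roots).
coeffs = [1, -3, -1, 9]
bound = cauchy_root_bound(coeffs)         # 1 + 9/1 = 10.0
residual = horner(coeffs, -1.5251)        # close to zero at the real root
```

With the bound in hand, a scan of [-bound, bound] for a sign change yields a starting interval for bisection.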

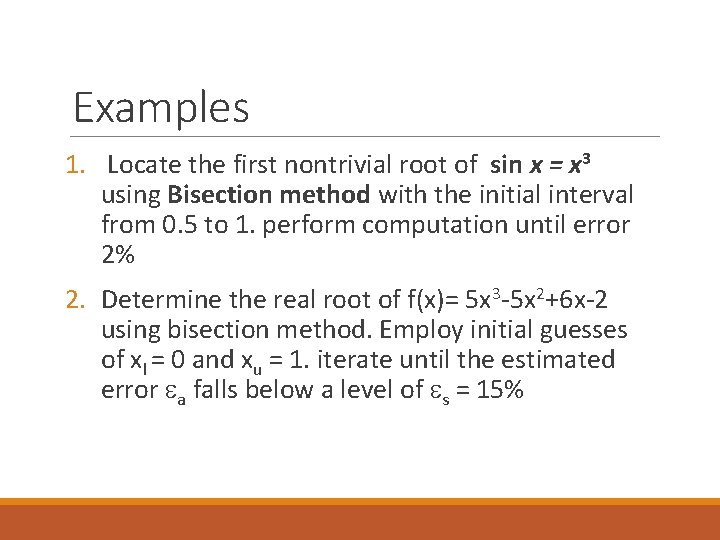

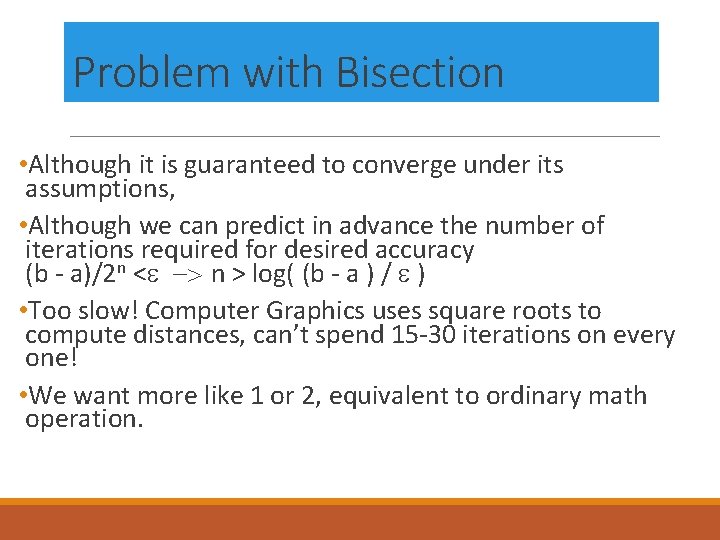

Problem with Bisection

- Although it is guaranteed to converge under its assumptions, and
- although we can predict in advance the number of iterations required for a desired accuracy, $(b - a)/2^n < \epsilon \Rightarrow n > \log_2((b - a)/\epsilon)$,
- it is too slow! Computer graphics uses square roots to compute distances and can't spend 15-30 iterations on every one.
- We want more like 1 or 2 iterations, equivalent to an ordinary math operation.

Examples

1. Locate the first nontrivial root of $\sin x = x^3$ using the bisection method with the initial interval from 0.5 to 1. Perform the computation until the error falls below 2%.
2. Determine the real root of $f(x) = 5x^3 - 5x^2 + 6x - 2$ using the bisection method. Employ initial guesses of $x_l = 0$ and $x_u = 1$. Iterate until the estimated error $\epsilon_a$ falls below a level of $\epsilon_s = 15\%$.
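For problems stated with a percentage error, one common choice is the approximate relative error $\epsilon_a = |(x_r^{new} - x_r^{old}) / x_r^{new}| \times 100\%$ between successive midpoints. The sketch below assumes that definition (the slides do not spell it out) and uses the hypothetical name `bisect_rel_error`; it is set up with the data of problem 2.

```python
def bisect_rel_error(f, xl, xu, es_percent, max_iter=50):
    """Bisection that stops when the approximate relative error
    between successive midpoints drops below es_percent.

    Returns (midpoint, iterations used, last approximate error in percent).
    """
    if f(xl) * f(xu) >= 0:
        raise ValueError("f(xl) and f(xu) must have opposite signs")
    xr_old = None
    ea = float("inf")
    xr = xl
    for i in range(1, max_iter + 1):
        xr = (xl + xu) / 2.0
        if xr_old is not None and xr != 0:
            ea = abs((xr - xr_old) / xr) * 100.0
            if ea < es_percent:
                return xr, i, ea
        fr = f(xr)
        if fr == 0:                 # landed exactly on the root
            return xr, i, 0.0
        if f(xl) * fr < 0:          # root in the lower half
            xu = xr
        else:                       # root in the upper half
            xl = xr
        xr_old = xr
    return xr, max_iter, ea


# Problem 2: f(x) = 5x^3 - 5x^2 + 6x - 2 on [0, 1] with es = 15%.
root, n, ea = bisect_rel_error(
    lambda x: 5 * x**3 - 5 * x**2 + 6 * x - 2, 0.0, 1.0, 15.0
)
# The midpoints are 0.5, 0.25, 0.375, 0.4375; the run stops at the fourth,
# where ea = 0.0625 / 0.4375 * 100, about 14.3%.
```

The approximate error measures the change between iterates, not the distance to the true root, so it is only an estimate of the accuracy reached.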

Homework