
NTNU SPEECH AND MACHINE INTELLIGENCE LABORATORY

A Survey of Multitask Learning

2015/09/22

Ming-Han Yang

Outline
- An overview of multitask learning
- The history of multitask learning

What is multitask learning?
- Multitask learning (MTL) is a machine learning technique that aims to improve the generalization performance of a learning task by jointly learning multiple related tasks.
- The key to the successful application of MTL is that the tasks need to be related. Here "related" does not mean the tasks are similar.
- Instead, it means that at some level of abstraction these tasks share part of the representation.
- If the tasks are indeed similar, learning them together can help transfer knowledge among tasks, since it effectively increases the amount of training data for each task.

D. Yu and L. Deng (2014). Automatic Speech Recognition: A Deep Learning Approach, Springer, pp. 219–220.

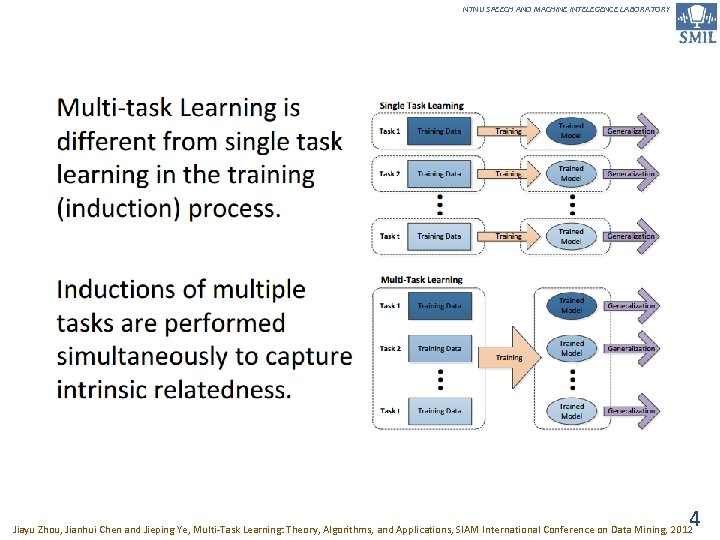

Jiayu Zhou, Jianhui Chen and Jieping Ye, Multi-Task Learning: Theory, Algorithms, and Applications, SIAM International Conference on Data Mining, 2012

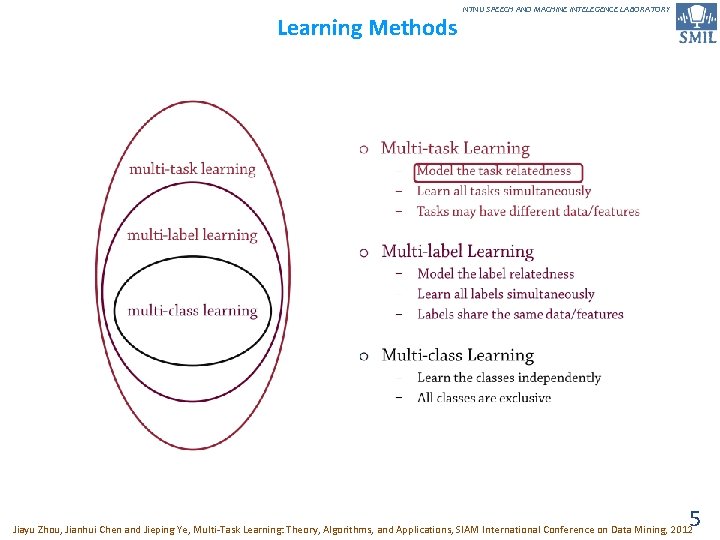

Learning Methods

Jiayu Zhou, Jianhui Chen and Jieping Ye, Multi-Task Learning: Theory, Algorithms, and Applications, SIAM International Conference on Data Mining, 2012

How to do multitask learning?
- Multi-task learning is a technique wherein a primary learning task is solved jointly with additional related tasks using a shared input representation.
- If these secondary tasks are chosen well, the shared structure serves to improve the generalization of the model and its accuracy on an unseen test set.
- In multi-task learning, the key aspect is choosing appropriate secondary tasks for the network to learn.
- When choosing secondary tasks, one should select a task that is related to the primary task but gives more information about the structure of the problem.

M. L. Seltzer and J. Droppo (2013). Multi-task learning in deep neural networks for improved phoneme recognition, ICASSP.
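The shared-representation idea can be sketched in a few lines of NumPy (an arrangement often called hard parameter sharing): one hidden layer is shared, each task gets its own output head, and training minimizes a weighted sum of the primary and secondary losses, so gradients from both tasks shape the shared layer. The tanh layer, squared-error losses, the secondary-task weight `alpha`, and all function names are illustrative assumptions, not the setup used by Seltzer and Droppo.

```python
import numpy as np

def init_params(d_in, d_hidden, rng):
    """Shared hidden layer plus one linear output head per task."""
    return {
        "W": rng.normal(scale=0.5, size=(d_in, d_hidden)),  # shared input-to-hidden weights
        "b": np.zeros(d_hidden),                             # shared hidden bias
        "V1": rng.normal(scale=0.5, size=(d_hidden, 1)),     # primary-task head
        "V2": rng.normal(scale=0.5, size=(d_hidden, 1)),     # secondary-task head
    }

def joint_loss_and_grads(p, X, y1, y2, alpha):
    """Loss = (SSE_primary + alpha * SSE_secondary) / (2n), with gradients for every parameter."""
    n = X.shape[0]
    H = np.tanh(X @ p["W"] + p["b"])       # shared representation, shape (n, d_hidden)
    e1 = H @ p["V1"] - y1                  # primary-task residuals, shape (n, 1)
    e2 = H @ p["V2"] - y2                  # secondary-task residuals, shape (n, 1)
    loss = (np.sum(e1 ** 2) + alpha * np.sum(e2 ** 2)) / (2 * n)
    g1, g2 = e1 / n, alpha * e2 / n        # dLoss/d(output) for each head
    dH = g1 @ p["V1"].T + g2 @ p["V2"].T   # both tasks send gradient into the shared layer
    dZ = dH * (1.0 - H ** 2)               # back-propagate through tanh
    grads = {"W": X.T @ dZ, "b": dZ.sum(axis=0), "V1": H.T @ g1, "V2": H.T @ g2}
    return loss, grads

def train(X, y1, y2, alpha=0.5, d_hidden=8, lr=0.1, steps=300, seed=0):
    """Plain full-batch gradient descent on the joint loss; returns params and loss history."""
    rng = np.random.default_rng(seed)
    p = init_params(X.shape[1], d_hidden, rng)
    history = []
    for _ in range(steps):
        loss, grads = joint_loss_and_grads(p, X, y1, y2, alpha)
        history.append(loss)
        for name in p:
            p[name] -= lr * grads[name]
    return p, history
```

Setting `alpha=0` recovers single-task training of the primary head; a positive `alpha` lets the secondary targets act as an extra training signal for the shared layer.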

The beginning of multitask learning
- Multitask learning has many names and incarnations, including learning-to-learn, meta-learning, lifelong learning, and inductive transfer [1][2][3][4].
- Early implementations of multitask learning primarily investigated neural network or nearest-neighbor learners [1][3][4].
- In addition to neural approaches, Bayesian methods have been explored that implement multitask learning by assuming dependencies between the various models and tasks [5][6].

[1] J. Baxter. Learning internal representations. In Proceedings of the International ACM Workshop on Computational Learning Theory, 1995.
[2] S. Thrun and L. Y. Pratt. Learning to Learn. Kluwer Academic, 1997.
[3] R. Caruana. Multitask learning. Machine Learning, 28: 41–75, 1997.
[4] S. Thrun. Is learning the n-th thing any easier than learning the first? NIPS, 1995.
[5] T. Heskes. Solving a huge number of similar tasks: A combination of multi-task learning and a hierarchical Bayesian approach. ICML, 1998.
[6] T. Heskes. Empirical Bayes for learning to learn. ICML, 2000.

T. Jebara (2011). Multitask Sparsity via Maximum Entropy Discrimination. Journal of Machine Learning Research, 12: 75–110.

1997: Multitask learning (1)
- Multitask learning is an approach to inductive transfer that improves learning for one task by using the information contained in the training signals of other related tasks.
- It does this by learning tasks in parallel while using a shared representation; what is learned for each task can help other tasks be learned better.
- A task will be learned better if we can leverage the information contained in the training signals of other related tasks during learning.

R. Caruana (1997). Multitask learning. Machine Learning, 28(1), 41–75.

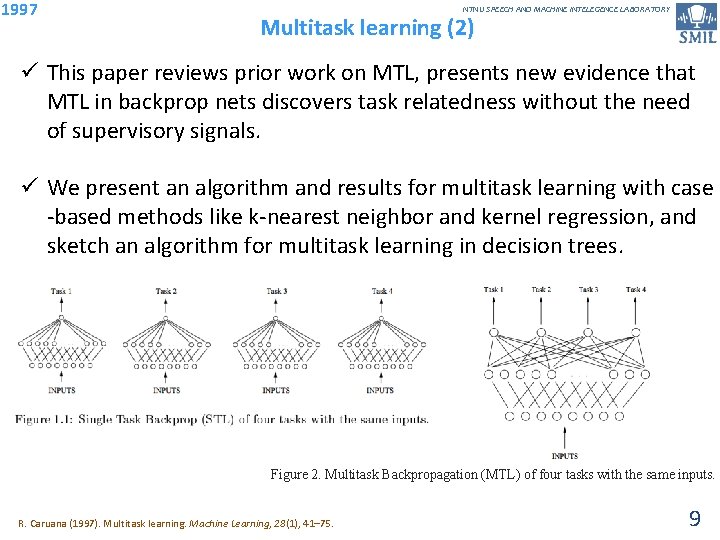

1997: Multitask learning (2)
- This paper reviews prior work on MTL and presents new evidence that MTL in backprop nets discovers task relatedness without the need for supervisory signals.
- We present an algorithm and results for multitask learning with case-based methods like k-nearest neighbor and kernel regression, and sketch an algorithm for multitask learning in decision trees.

Figure 2. Multitask Backpropagation (MTL) of four tasks with the same inputs.

R. Caruana (1997). Multitask learning. Machine Learning, 28(1), 41–75.

1997: Multitask learning (3)

Learning rate in backprop MTL
- Better performance is usually obtained in backprop MTL when all tasks learn at similar rates and reach their best performance at roughly the same time.
  - If the main task trains long before the extra tasks, it cannot benefit from what has not yet been learned for the extra tasks.
  - If the main task trains long after the extra tasks, it cannot shape what is learned for the extra tasks.
- Moreover, if the extra tasks begin to overtrain, they may cause the main task to overtrain too because of the overlap in the hidden-layer representation.

R. Caruana (1997). Multitask learning. Machine Learning, 28(1), 41–75.

1997: Learning to learn
- Given (1) a family of tasks, (2) training experience for each of these tasks, and (3) a family of performance measures (e.g., one for each task), an algorithm is said to learn to learn if its performance at each task improves with experience and with the number of tasks.
- Put differently, a learning algorithm whose performance does not depend on the number of learning tasks, and which hence would not benefit from the presence of other learning tasks, is not said to learn to learn.
- For an algorithm to fit this definition, some kind of transfer must occur between multiple tasks, and that transfer must have a positive impact on expected task performance.

S. Thrun and L. Pratt (1997). Learning to Learn. Norwell, MA, USA: Kluwer.

2004: Regularized multi-task learning (1)
- Past empirical work has shown that learning multiple related tasks from data simultaneously can be advantageous in terms of predictive performance relative to learning these tasks independently.
- In this paper we present an approach to multi-task learning based on the minimization of regularization functionals similar to existing ones, such as the one for Support Vector Machines (SVMs), that have been successfully used in the past for single-task learning.
- Our approach allows one to model the relation between tasks in terms of a novel kernel function that uses a task-coupling parameter.

T. Evgeniou and M. Pontil (2004). Regularized multi-task learning. In Proc. of the 10th ACM SIGKDD Int'l Conf. on Knowledge Discovery and Data Mining.
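In Evgeniou and Pontil's formulation each task's weight vector is split into a shared part and a task-specific part, $w_t = w_0 + v_t$, and the regularizer is $\frac{\lambda_1}{T}\sum_t \|v_t\|^2 + \lambda_2 \|w_0\|^2$, so the balance between $\lambda_1$ and $\lambda_2$ controls how strongly the tasks are coupled. The paper builds this on the SVM hinge loss; the minimal sketch below swaps in squared loss (an assumption made so the problem has a closed-form ridge-style solution), and the function name and stacked-feature construction are hypothetical.

```python
import numpy as np

def regularized_mtl_ls(Xs, ys, lam1, lam2):
    """Squared-loss variant of w_t = w0 + v_t with penalty (lam1/T) sum ||v_t||^2 + lam2 ||w0||^2.

    Xs, ys: lists of per-task design matrices (n_t, d) and targets (n_t,).
    Returns the shared vector w0 and a (T, d) array whose rows are the task weights w_t.
    """
    T, d = len(Xs), Xs[0].shape[1]
    blocks = []
    for t, X in enumerate(Xs):
        Z = np.zeros((X.shape[0], (T + 1) * d))
        Z[:, :d] = X                         # columns for the shared part w0
        Z[:, (t + 1) * d:(t + 2) * d] = X    # columns for this task's v_t
        blocks.append(Z)
    Z, y = np.vstack(blocks), np.concatenate(ys)
    # Diagonal penalty: lam2 on w0, lam1 / T on every v_t.
    penalty = np.concatenate([np.full(d, lam2), np.full(T * d, lam1 / T)])
    theta = np.linalg.solve(Z.T @ Z + np.diag(penalty), Z.T @ y)
    w0, V = theta[:d], theta[d:].reshape(T, d)
    return w0, w0 + V
```

A very large `lam1` drives every $v_t$ toward zero, so all tasks share one model; a small `lam1` with a larger `lam2` lets each task fit its own weights almost independently.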

2004: Regularized multi-task learning (2)
- When there are relations between the tasks to learn, it can be advantageous to learn all tasks simultaneously instead of following the more traditional approach of learning each task independently of the others.
- There has been a lot of experimental work showing the benefits of such multi-task learning relative to individual task learning when tasks are related; see:
  - B. Bakker and T. Heskes. Task clustering and gating for Bayesian multi-task learning. JMLR, 4: 83–99, 2003.
  - R. Caruana. Multi-task learning. Machine Learning, 28, pp. 41–75, 1997.
  - T. Heskes. Empirical Bayes for learning to learn. Proceedings of ICML-2000, ed. P. Langley, pp. 367–374, 2000.
  - S. Thrun and L. Pratt. Learning to Learn. Kluwer Academic Publishers, 1997.
- In this paper we develop methods for multi-task learning that are natural extensions of existing kernel-based learning methods for single-task learning, such as Support Vector Machines (SVMs).
- To the best of our knowledge, this is the first generalization of regularization-based methods from single-task to multi-task learning.

T. Evgeniou and M. Pontil (2004). Regularized multi-task learning. In Proc. of the 10th ACM SIGKDD Int'l Conf. on Knowledge Discovery and Data Mining.

2004: Regularized multi-task learning (3)
- A statistical learning theory based approach to multi-task learning has been developed in [1–3].
- The problem of multi-task learning has also been studied in the statistics literature [4–5].
- Finally, a number of approaches for learning multiple tasks or for learning to learn are Bayesian, where a probability model capturing the relations between the different tasks is estimated simultaneously with the models' parameters for each of the individual tasks [6–9].

[1] J. Baxter. A Bayesian/Information Theoretic Model of Learning to Learn via Multiple Task Sampling. Machine Learning, 28, pp. 7–39, 1997.
[2] J. Baxter. A Model for Inductive Bias Learning. Journal of Artificial Intelligence Research, 12, pp. 149–198, 2000.
[3] S. Ben-David and R. Schuller. Exploiting Task Relatedness for Multiple Task Learning. COLT, 2003.
[4] L. Breiman and J. H. Friedman. Predicting Multivariate Responses in Multiple Linear Regression. Journal of the Royal Statistical Society, Series B, 1998.
[5] P. J. Brown and J. V. Zidek. Adaptive Multivariate Ridge Regression. The Annals of Statistics, Vol. 8, No. 1, pp. 64–74, 1980.
[6] G. M. Allenby and P. E. Rossi. Marketing Models of Consumer Heterogeneity. Journal of Econometrics, 89, pp. 57–78, 1999.
[7] N. Arora, G. M. Allenby, and J. Ginter. A Hierarchical Bayes Model of Primary and Secondary Demand. Marketing Science, 17(1), pp. 29–44, 1998.
[8] B. Bakker and T. Heskes. Task clustering and gating for Bayesian multi-task learning. JMLR, 4: 83–99, 2003.
[9] T. Heskes. Empirical Bayes for learning to learn. Proceedings of ICML-2000, ed. P. Langley, pp. 367–374, 2000.

T. Evgeniou and M. Pontil (2004). Regularized multi-task learning. In Proc. of the 10th ACM SIGKDD Int'l Conf. on Knowledge Discovery and Data Mining.

2008: Convex multitask feature learning
- We study the problem of learning data representations that are common across multiple related supervised learning tasks. This is a problem of interest in many research areas.
- In this paper, we present a novel method for learning sparse representations common across many supervised learning tasks.
- In particular, we develop a novel non-convex multi-task generalization of the 1-norm regularization known to provide sparse variable selection in the single-task case (the authors show that the resulting problem is equivalent to a convex one, hence the title).
- Our method learns a few features common across the tasks using a novel regularizer which both couples the tasks and enforces sparsity.

A. Argyriou, T. Evgeniou and M. Pontil (2008). Convex multitask feature learning. Machine Learning, 73(3): 243–272.
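The coupling regularizer in this line of work is built on the $(2,1)$-norm of the $d \times T$ weight matrix $W$ (one row per feature, one column per task): the sum over features of the Euclidean norm of each row. Because it drives whole rows to zero, a feature ends up used by all tasks or by none. The sketch below computes that norm and its proximal operator (row-wise group soft-thresholding), the step a proximal-gradient solver would apply. This is a common generic way to optimize $(2,1)$-regularized objectives, not the algorithm of Argyriou et al., who also learn an orthogonal feature transformation; the function names are hypothetical.

```python
import numpy as np

def l21_norm(W):
    """Sum over rows (features) of the Euclidean norm of each row across tasks."""
    return float(np.sum(np.linalg.norm(W, axis=1)))

def prox_l21(W, tau):
    """Proximal operator of tau * ||W||_{2,1}: shrink each row's norm by tau, zeroing rows below tau."""
    norms = np.linalg.norm(W, axis=1, keepdims=True)
    scale = np.maximum(0.0, 1.0 - tau / np.maximum(norms, 1e-12))  # guard against division by zero
    return W * scale
```

For example, with rows `[3, 4]`, `[0.3, 0.4]`, `[0, 0]` and `tau=1`, the first row keeps its direction with its norm reduced from 5 to 4, while the second row (norm 0.5) is removed for every task at once.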

2008: Clustered multi-task learning: A convex formulation
- In multi-task learning, several related tasks are considered simultaneously, with the hope that, by appropriately sharing information across tasks, each task may benefit from the others.
- In this paper, we assume that tasks are clustered into groups, which are unknown beforehand, and that tasks within a group have similar weight vectors.
- We design a new spectral norm that encodes this a priori assumption without prior knowledge of the partition of tasks into groups, resulting in a new convex optimization formulation for multi-task learning.

L. Jacob, F. Bach, and J. Vert (2008). Clustered multi-task learning: A convex formulation. NIPS.
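The cluster assumption can be made concrete for a known partition: split the spread of the task weight vectors into a global-mean term, a between-cluster term, and a within-cluster term, then penalize within-cluster spread most heavily so that tasks in the same group are pulled together. As we understand it, a penalty of this kind is the starting point that Jacob et al. then relax into a convex problem with an unknown partition; the sketch assumes the partition is given, and the weights `eps_m`, `eps_b`, `eps_w` and the function names are illustrative.

```python
import numpy as np

def cluster_terms(W, labels):
    """Split the spread of task vectors W (T, d) into mean, between-cluster and within-cluster terms."""
    labels = np.asarray(labels)
    T = W.shape[0]
    w_bar = W.mean(axis=0)
    mean_term = T * np.sum(w_bar ** 2)                     # size of the average task vector
    between, within = 0.0, 0.0
    for c in np.unique(labels):
        Wc = W[labels == c]
        wc_bar = Wc.mean(axis=0)
        between += len(Wc) * np.sum((wc_bar - w_bar) ** 2)  # cluster centers vs. global mean
        within += np.sum((Wc - wc_bar) ** 2)                # tasks vs. their own cluster center
    return mean_term, between, within

def cluster_penalty(W, labels, eps_m, eps_b, eps_w):
    """Weighted sum of the three terms; eps_w > eps_b pulls tasks in the same cluster together."""
    m, b, w = cluster_terms(W, labels)
    return eps_m * m + eps_b * b + eps_w * w
```

With `eps_m == eps_b == eps_w` the three terms add back up to $\sum_t \|w_t\|^2$, i.e., ordinary independent ridge regularization; it is the unequal weights that encode the clustering assumption.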

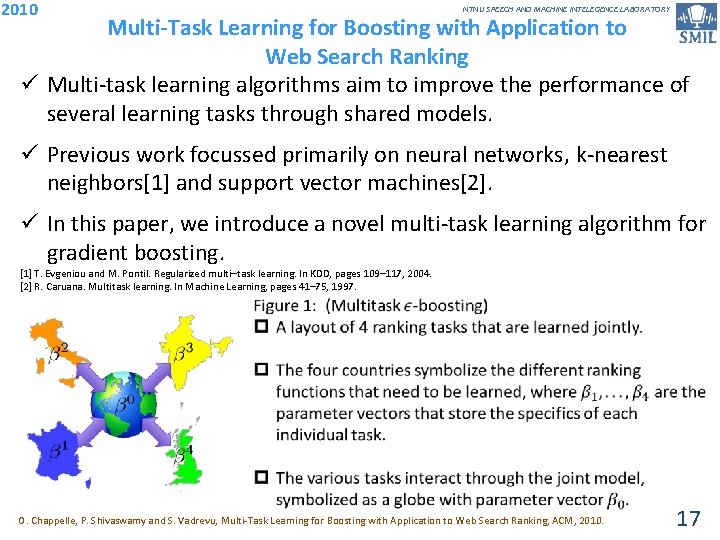

2010: Multi-Task Learning for Boosting with Application to Web Search Ranking
- Multi-task learning algorithms aim to improve the performance of several learning tasks through shared models.
- Previous work focused primarily on neural networks, k-nearest neighbors [1], and support vector machines [2].
- In this paper, we introduce a novel multi-task learning algorithm for gradient boosting.

[1] R. Caruana. Multitask learning. In Machine Learning, pages 41–75, 1997.
[2] T. Evgeniou and M. Pontil. Regularized multi-task learning. In KDD, pages 109–117, 2004.

O. Chapelle, P. Shivaswamy and S. Vadrevu (2010). Multi-Task Learning for Boosting with Application to Web Search Ranking, ACM.

2011: Multitask sparsity via maximum entropy discrimination
- A multitask learning framework is developed for discriminative classification and regression, where multiple large-margin linear classifiers are estimated for different prediction problems.
- Most machine learning approaches take a single-task perspective, where one large homogeneous repository of uniformly collected i.i.d. (independent and identically distributed) samples is given and labeled consistently.
- A more realistic multitask learning approach is to combine data from multiple smaller sources and synergistically leverage heterogeneous labeling or annotation efforts.
  - Feature selection, kernel selection, adaptive pooling, and graphical model structure

T. Jebara (2011). Multitask Sparsity via Maximum Entropy Discrimination. Journal of Machine Learning Research, 12: 75–110.

2012: Learning task grouping and overlap in multi-task learning (1)
- The key aspect in all multi-task learning methods is the introduction of an inductive bias in the joint hypothesis space of all tasks that reflects our prior beliefs about the task-relatedness structure.
- Assuming that task parameters lie close to each other in some geometric sense [1], share a common prior [2][3][4], lie in a low-dimensional subspace [1], or lie on a manifold [5] are some examples of introducing an inductive bias in the hope of achieving better generalization.
- A major challenge in multi-task learning is how to selectively screen the sharing of information so that unrelated tasks do not end up influencing each other.

[1] A. Argyriou, T. Evgeniou and M. Pontil. Convex multitask feature learning. Machine Learning, 73(3): 243–272, 2008.
[2] K. Yu, V. Tresp, and A. Schwaighofer. Learning Gaussian Processes from Multiple Tasks. In ICML, 2005.
[3] S. I. Lee, V. Chatalbashev, D. Vickrey, and D. Koller. Learning a meta-level prior for feature relevance from multiple related tasks. In ICML, 2007.
[4] H. Daumé III. Bayesian Multitask Learning with Latent Hierarchies. In UAI, 2009.
[5] A. Agarwal, H. Daumé III, and S. Gerber. Learning Multiple Tasks using Manifold Regularization. In NIPS, 2010.

A. Kumar and H. Daumé III (2012). Learning Task Grouping and Overlap in Multi-Task Learning. The 29th International Conference on Machine Learning (ICML).

2012: Learning task grouping and overlap in multi-task learning (2)
- Sharing information between two unrelated tasks can worsen the performance of both tasks. This phenomenon is known as negative transfer.
- We propose a framework for multi-task learning that enables one to selectively share information across the tasks.
- We assume that each task parameter vector is a linear combination of a finite number of underlying basis tasks.
- Our model assumes that the parameters of tasks within a group lie in a low-dimensional subspace, but allows tasks in different groups to overlap with each other in one or more bases.

A. Kumar and H. Daumé III (2012). Learning Task Grouping and Overlap in Multi-Task Learning. The 29th International Conference on Machine Learning (ICML).
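The basis-task assumption above stacks all task weight vectors as $W = LS$: the $k$ columns of $L$ ($d \times k$) are latent basis tasks, and each column $s_t$ of $S$ ($k \times T$) is a sparse combination of them, so two tasks overlap when their columns share a nonzero basis. The sketch below shows one ingredient under squared loss (an assumption): with $L$ fixed, fit $s_t$ by $\ell_1$-regularized least squares using ISTA, plus a helper that lists which task pairs share a basis. The full method also updates $L$, alternating between the two; the ISTA solver, the loss, and all function names here are illustrative.

```python
import numpy as np

def soft_threshold(z, t):
    """Elementwise soft-thresholding, the proximal operator of t * ||z||_1."""
    return np.sign(z) * np.maximum(np.abs(z) - t, 0.0)

def sparse_code_task(X, y, L, mu, iters=500):
    """Minimize ||y - X L s||^2 + mu * ||s||_1 over s with the basis L held fixed (ISTA)."""
    A = X @ L                                        # task features projected onto the basis tasks
    step = 1.0 / (2.0 * np.linalg.norm(A, 2) ** 2)   # 1 / Lipschitz constant of the smooth part
    s = np.zeros(L.shape[1])
    for _ in range(iters):
        grad = 2.0 * A.T @ (A @ s - y)
        s = soft_threshold(s - step * grad, step * mu)
    return s

def shared_bases(S, tol=1e-8):
    """Pairs of tasks (columns of S) that use at least one common basis task."""
    active = np.abs(S) > tol                         # (k, T) mask of nonzero coefficients
    T = S.shape[1]
    return [(a, b) for a in range(T) for b in range(a + 1, T)
            if np.any(active[:, a] & active[:, b])]
```

In this representation, tasks in the same group draw on the same few columns of $L$, while a shared column between groups is exactly the overlap the slide describes.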

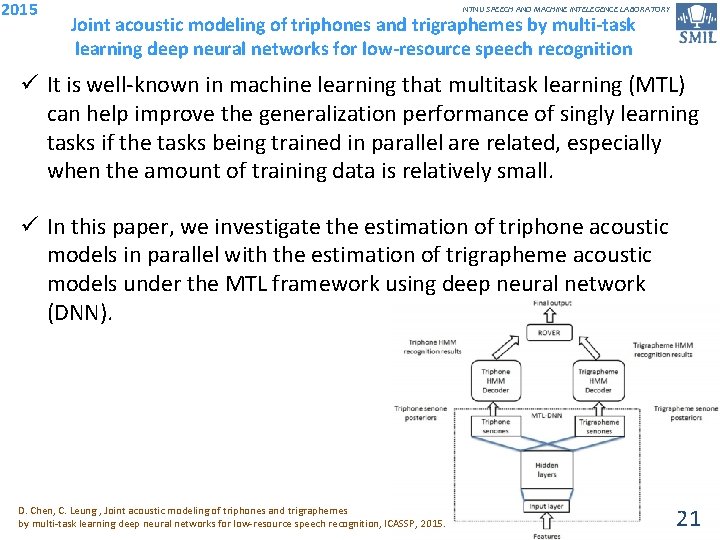

2015: Joint acoustic modeling of triphones and trigraphemes by multi-task learning deep neural networks for low-resource speech recognition
- It is well known in machine learning that multitask learning (MTL) can improve generalization performance over learning each task singly if the tasks trained in parallel are related, especially when the amount of training data is relatively small.
- In this paper, we investigate the estimation of triphone acoustic models in parallel with the estimation of trigrapheme acoustic models under the MTL framework using a deep neural network (DNN).

D. Chen, C. Leung. Joint acoustic modeling of triphones and trigraphemes by multi-task learning deep neural networks for low-resource speech recognition, ICASSP, 2015.

