Nonparametric Density Estimation
Riu Baring
CIS 8526 Machine Learning, Temple University, Fall 2007

Christopher M. Bishop, Pattern Recognition and Machine Learning, Chapter 2.5
Some slides from http://courses.cs.tamu.edu/rgutier/cpsc689_f07/

Overview
- Density Estimation
  - Given: a finite set $x_1, \dots, x_N$
  - Task: to model the probability distribution $p(x)$
- Parametric Distribution
  - Governed by adaptive parameters
    - Mean and variance – Gaussian distribution
  - Need a procedure to determine suitable values for the parameters
  - Discrete rv – binomial and multinomial distributions
  - Continuous rv – Gaussian distributions

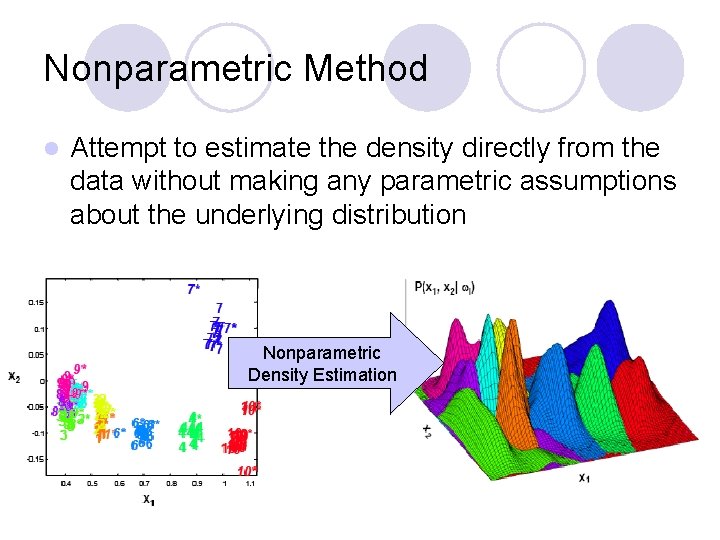

Nonparametric Method
- Attempt to estimate the density directly from the data without making any parametric assumptions about the underlying distribution
- Nonparametric Density Estimation

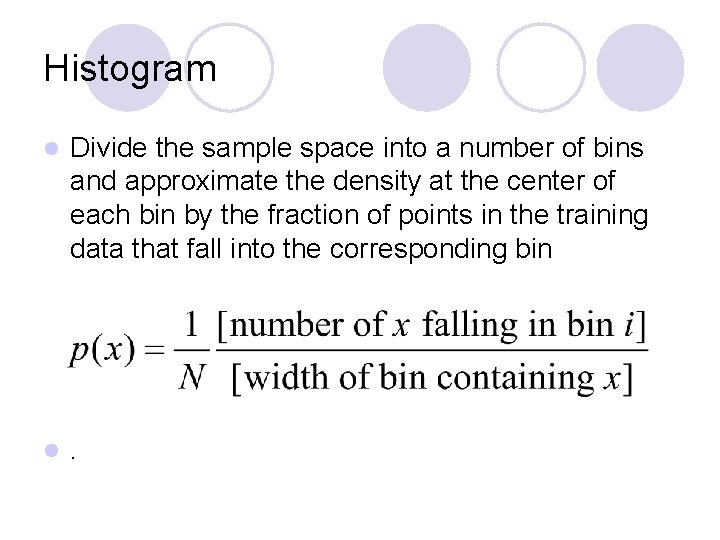

Histogram
- Divide the sample space into a number of bins and approximate the density at the center of each bin by the fraction of points in the training data that fall into the corresponding bin

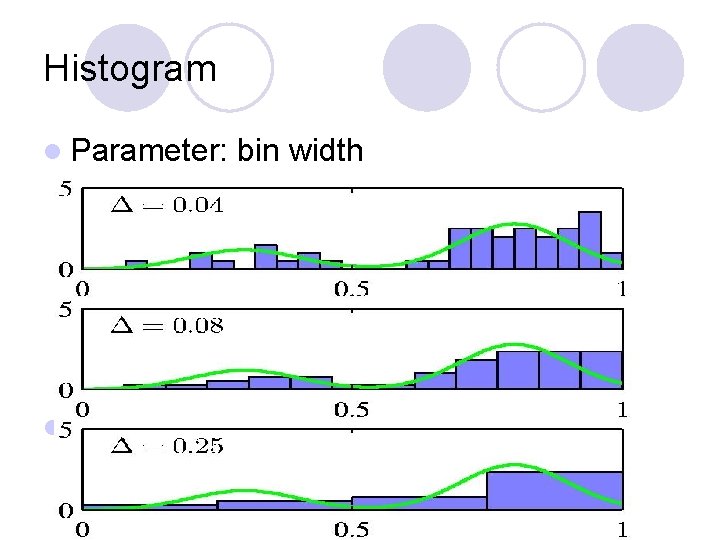

Histogram
- Parameter: bin width

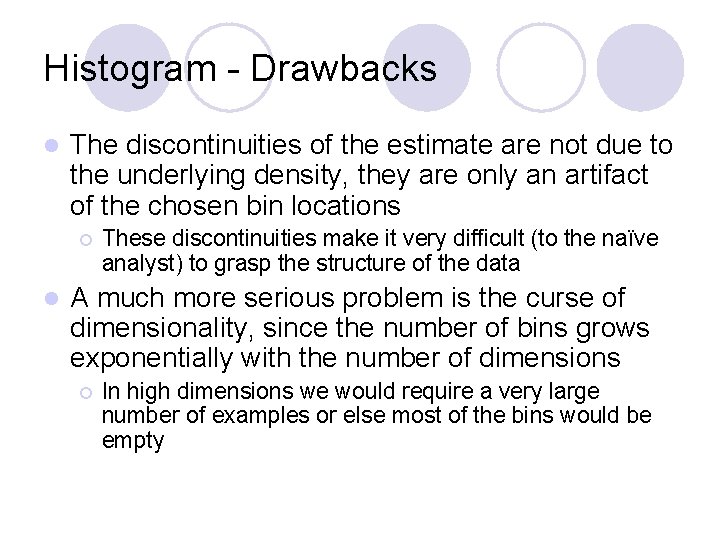
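The histogram estimate can be sketched in a few lines. This is a minimal one-dimensional illustration, assuming equal-width bins and the usual normalization by bin width so that the estimate integrates to one; the function name `histogram_density` and the `origin` parameter are hypothetical, not taken from the slides.

```python
import math
import random


def histogram_density(data, x, bin_width, origin=0.0):
    """Histogram density estimate at x: (points in x's bin) / (N * bin_width).

    Assumes 1-D data and equal-width bins whose edges sit at
    origin + i * bin_width (an illustrative choice).
    """
    target_bin = math.floor((x - origin) / bin_width)
    count = sum(
        1 for v in data if math.floor((v - origin) / bin_width) == target_bin
    )
    return count / (len(data) * bin_width)


# The bin width is the single parameter: small widths give spiky estimates,
# large widths smooth away structure.
random.seed(0)
sample = [random.gauss(0.0, 1.0) for _ in range(1000)]
for width in (0.05, 0.5, 2.0):
    print(width, histogram_density(sample, 0.0, width))
```

Shifting `origin` moves the bin edges and changes the estimate, which is one way to see that the discontinuities come from the bin placement rather than from the data.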

Histogram - Drawbacks
- The discontinuities of the estimate are not due to the underlying density; they are only an artifact of the chosen bin locations
  - These discontinuities make it very difficult (for the naïve analyst) to grasp the structure of the data
- A much more serious problem is the curse of dimensionality, since the number of bins grows exponentially with the number of dimensions
  - In high dimensions we would require a very large number of examples, or else most of the bins would be empty
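The exponential growth in the number of bins is easy to check with arithmetic. The sketch below assumes the same number of bins along every axis; the helper names `num_bins` and `average_points_per_bin` and the figures used (10 bins per axis, one million points) are illustrative assumptions.

```python
def num_bins(bins_per_axis, dims):
    """Total bins when every one of `dims` axes is cut into `bins_per_axis` bins."""
    return bins_per_axis ** dims


def average_points_per_bin(n_points, bins_per_axis, dims):
    """Average occupancy per bin: N divided by the total number of bins."""
    return n_points / num_bins(bins_per_axis, dims)


# With 10 bins per axis and one million points, the average occupancy
# falls below one point per bin once the dimension exceeds 6.
for d in (1, 2, 5, 10):
    print(d, num_bins(10, d), average_points_per_bin(1_000_000, 10, d))
```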

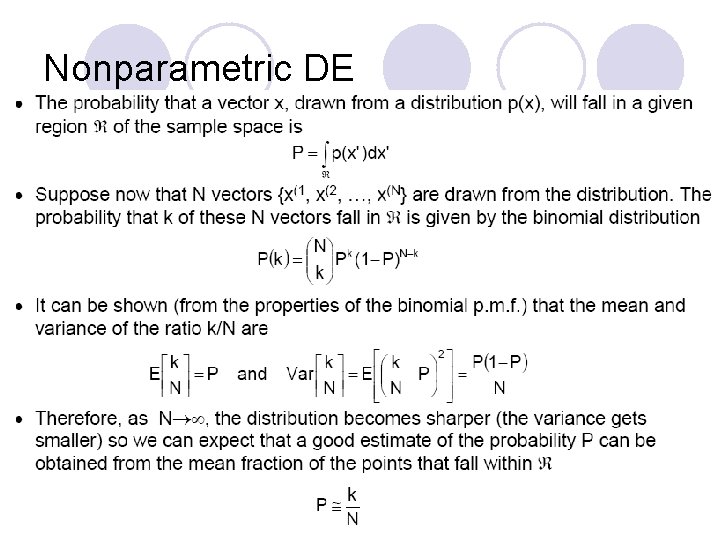

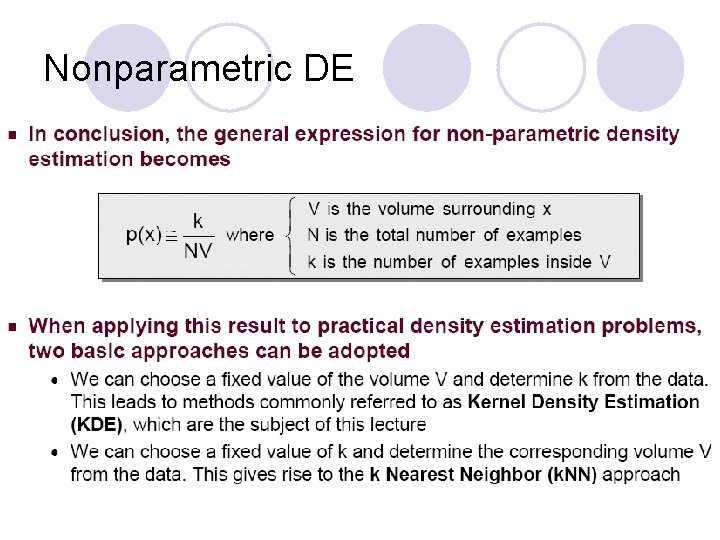

Nonparametric DE

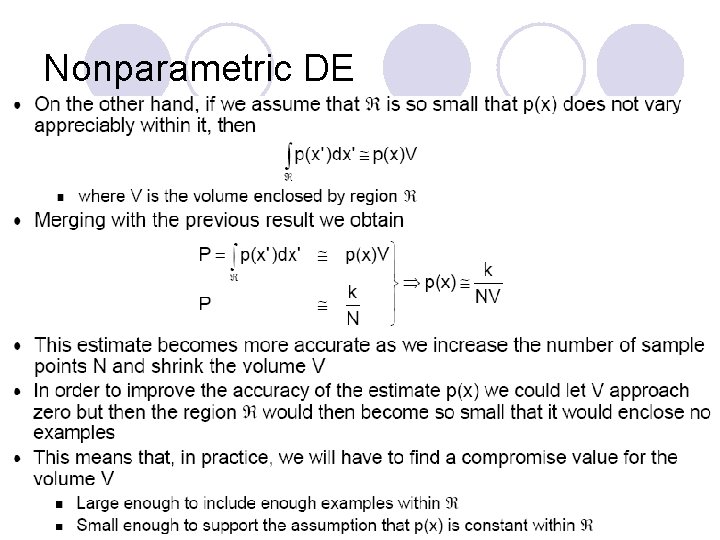

Nonparametric DE

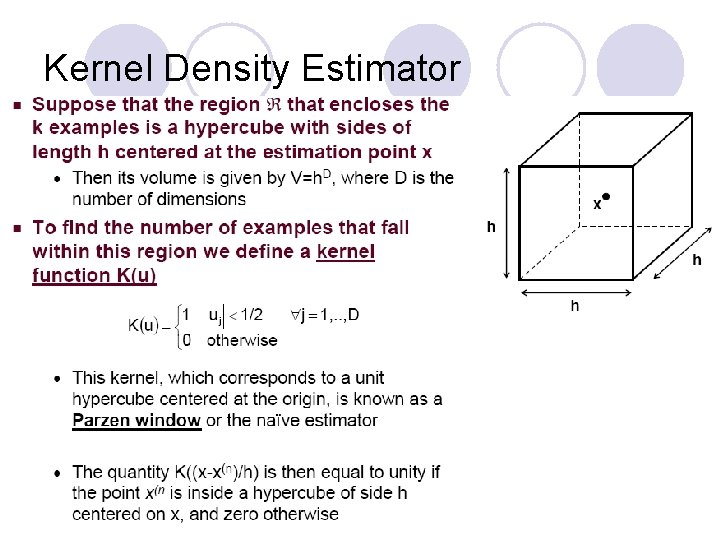

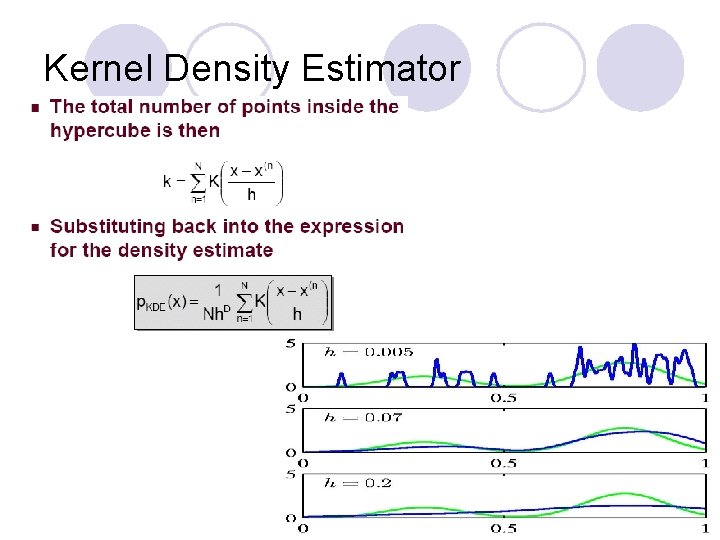

Kernel Density Estimator

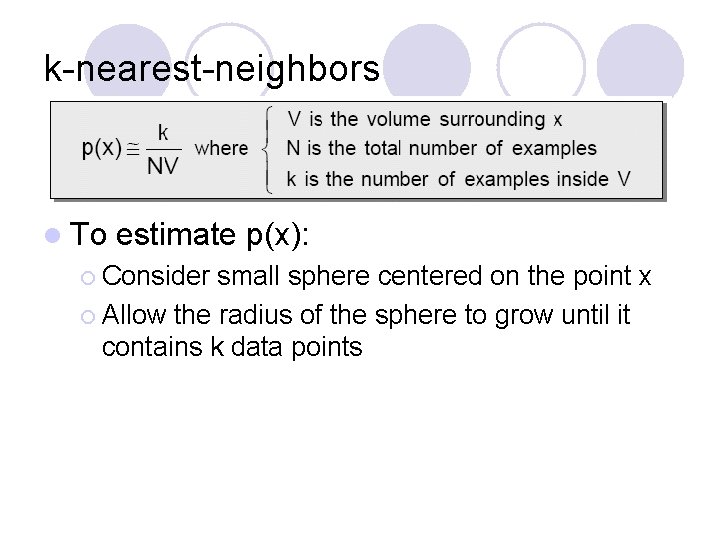
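The formulas on these slides did not survive as text, so the following is only a sketch of a kernel density estimator under stated assumptions: one-dimensional data and a Gaussian kernel with bandwidth `h`, the smooth-kernel form described in Bishop Section 2.5.1. The function name `gaussian_kde` is hypothetical.

```python
import math


def gaussian_kde(data, x, h):
    """Kernel density estimate at x with a Gaussian kernel of bandwidth h.

    p(x) = (1/N) * sum_n Normal(x | x_n, h^2), for 1-D data (an assumption).
    """
    norm = 1.0 / (math.sqrt(2.0 * math.pi) * h)
    total = sum(math.exp(-0.5 * ((x - xn) / h) ** 2) for xn in data)
    return norm * total / len(data)


# Every evaluation touches every stored data point, so the cost of one
# query grows linearly with the size of the training set.
points = [0.0, 0.2, 1.5, 1.7, 1.9]
for h in (0.05, 0.3, 1.0):
    print(h, gaussian_kde(points, 1.6, h))
```

A small `h` places a narrow bump on each data point and gives a noisy estimate; a large `h` blurs the bumps together and oversmooths.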

k-nearest-neighbors
- To estimate $p(x)$:
  - Consider a small sphere centered on the point $x$
  - Allow the radius of the sphere to grow until it contains $K$ data points

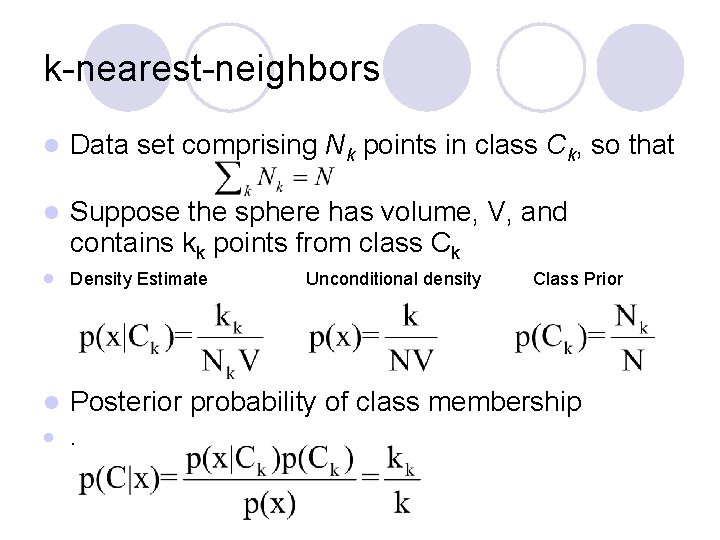
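The growing-sphere procedure can be sketched for one-dimensional data, where the "sphere" is an interval of length 2r and r is the distance to the K-th nearest data point. The estimate K / (N V) follows Bishop Section 2.5.2; the function name `knn_density` and the error handling are illustrative assumptions.

```python
def knn_density(data, x, k):
    """K-nearest-neighbor density estimate at x for 1-D data: K / (N * V).

    V = 2 * r, where r is the distance from x to its k-th nearest data point.
    """
    if not 1 <= k <= len(data):
        raise ValueError("k must be between 1 and the number of data points")
    distances = sorted(abs(x - xn) for xn in data)
    radius = distances[k - 1]
    if radius == 0.0:
        # x coincides with at least k data points, so the volume is zero.
        raise ValueError("zero radius: estimate is undefined at this point")
    volume = 2.0 * radius
    return k / (len(data) * volume)


points = [0.0, 1.0, 2.0, 3.0]
print(knn_density(points, 1.5, 2))
```

Unlike the kernel estimator, this quantity is not a true density, because its integral over all of space diverges.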

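k-nearest-neighbors
- Data set comprising $N_k$ points in class $C_k$, with $N$ points in total, so that $\sum_k N_k = N$
- Suppose the sphere has volume $V$ and contains $K_k$ points from class $C_k$
- Density estimate: $p(x \mid C_k) = \dfrac{K_k}{N_k V}$
- Unconditional density: $p(x) = \dfrac{K}{N V}$
- Class prior: $p(C_k) = \dfrac{N_k}{N}$
- Posterior probability of class membership: $p(C_k \mid x) = \dfrac{p(x \mid C_k)\, p(C_k)}{p(x)} = \dfrac{K_k}{K}$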

k-nearest-neighbors
- To classify a new point $x$:
  - Identify the $K$ nearest neighbors from the training data
  - Assign $x$ to the class having the largest number of representatives among them
- Parameter: $K$
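The classification rule above can be sketched as a majority vote over the K nearest training points. This is a minimal illustration assuming Euclidean distance and a training set stored as `(point, label)` pairs; the function name `knn_classify` and the toy data are hypothetical.

```python
import math
from collections import Counter


def knn_classify(train, x, k):
    """Return the most common label among the k training points nearest to x.

    train: list of (point, label) pairs, each point a tuple of floats.
    Distance is Euclidean (an assumption). Ties between classes are
    resolved in favor of the class whose neighbor is encountered first.
    """
    neighbors = sorted(train, key=lambda pair: math.dist(pair[0], x))[:k]
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


train = [
    ((0.0, 0.0), "a"),
    ((0.0, 1.0), "a"),
    ((5.0, 5.0), "b"),
    ((5.0, 6.0), "b"),
    ((6.0, 5.0), "b"),
]
print(knn_classify(train, (0.2, 0.3), 3))
print(knn_classify(train, (5.0, 5.2), 3))
```

Sorting the whole training set for every query is the simplest approach and shows why the method becomes expensive on large data sets.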

My thoughts
- KDE and KNN require the entire training data set to be stored
  - Leads to expensive computation
- Tweak "parameters"
  - KDE: bandwidth, h
  - KNN: K
