
Nonlinear Knowledge in Kernel Machines

Data Mining and Mathematical Programming Workshop, Centre de Recherches Mathématiques, Université de Montréal, Québec, October 10-13, 2006

- Olvi Mangasarian, UW Madison & UCSD La Jolla
- Edward Wild, UW Madison

Objectives

- Primary objective: incorporate prior knowledge over completely arbitrary sets into:
  - function approximation, and
  - classification
  - without transforming (kernelizing) the knowledge
- Secondary objective: achieve transparency of the prior knowledge for practical applications

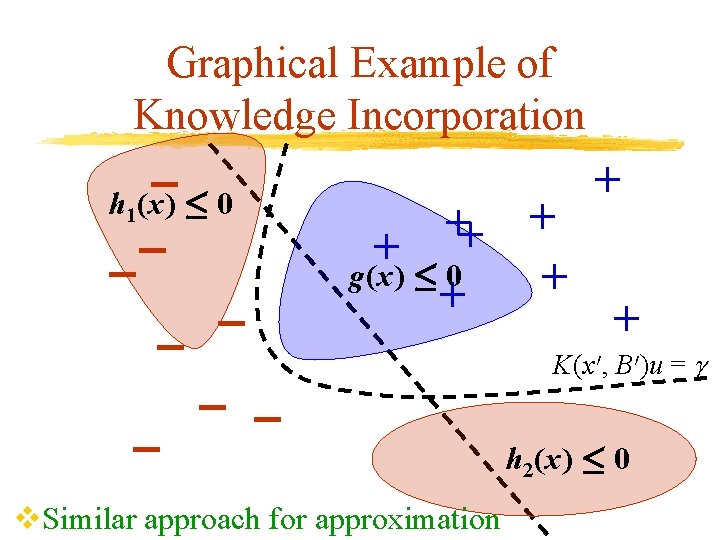

Graphical Example of Knowledge Incorporation

[Figure: knowledge regions $g(x) \le 0$, $h_1(x) \le 0$ and $h_2(x) \le 0$, data points marked +, and the nonlinear separating surface $K(x', B')u = \gamma$]

- Similar approach for approximation

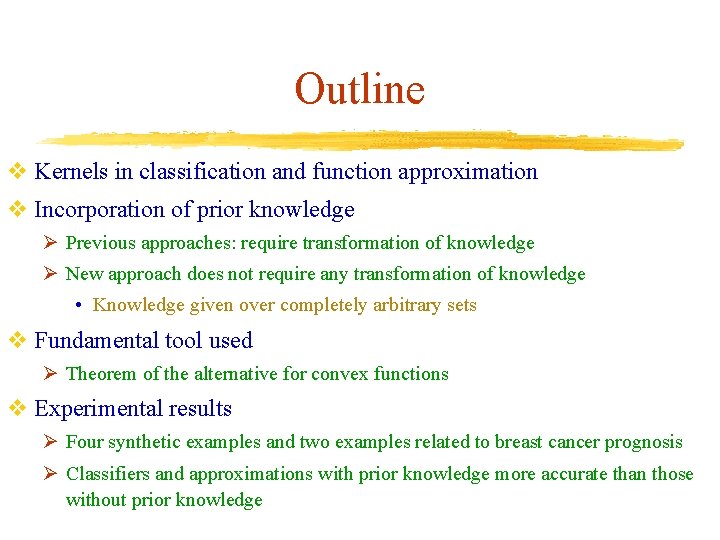

Outline

- Kernels in classification and function approximation
- Incorporation of prior knowledge
  - Previous approaches: require transformation of knowledge
  - New approach does not require any transformation of knowledge
    - Knowledge given over completely arbitrary sets
- Fundamental tool used
  - Theorem of the alternative for convex functions
- Experimental results
  - Four synthetic examples and two examples related to breast cancer prognosis
  - Classifiers and approximations with prior knowledge more accurate than those without prior knowledge

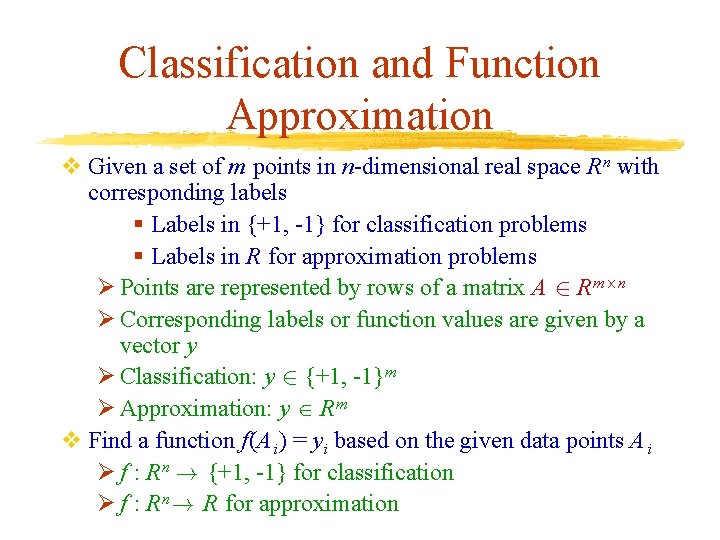

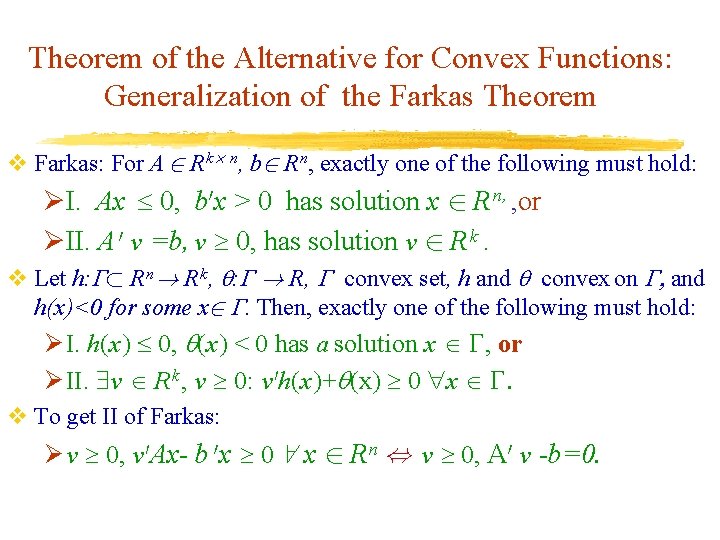

Theorem of the Alternative for Convex Functions: Generalization of the Farkas Theorem

- Farkas: for $A \in R^{k \times n}$, $b \in R^n$, exactly one of the following must hold:
  - I. $Ax \le 0$, $b'x > 0$ has a solution $x \in R^n$, or
  - II. $A'v = b$, $v \ge 0$ has a solution $v \in R^k$.
- Let $h: \Gamma \subset R^n \to R^k$, $\theta: \Gamma \to R$, $\Gamma$ a convex set, $h$ and $\theta$ convex on $\Gamma$, and $h(x) < 0$ for some $x \in \Gamma$. Then exactly one of the following must hold:
  - I. $h(x) \le 0$, $\theta(x) < 0$ has a solution $x \in \Gamma$, or
  - II. $\exists v \in R^k$, $v \ge 0$: $v'h(x) + \theta(x) \ge 0$ $\forall x \in \Gamma$.
- To get II of Farkas, take $h(x) = Ax$ and $\theta(x) = -b'x$:
  - $v \ge 0$, $v'Ax - b'x \ge 0$ $\forall x \in R^n$ $\iff$ $v \ge 0$, $A'v - b = 0$.
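The Farkas alternative can be checked numerically on a tiny instance. The sketch below uses a hypothetical 2×2 matrix `A` and vector `b` (not taken from the talk): a nonnegative `v` with $A'v = b$ certifies alternative II, so a grid search should find no point satisfying alternative I. A grid search is only an illustration, not a proof.

```python
from itertools import product

# Hypothetical 2x2 instance: A is the identity and b = (1, 1).
A = [[1.0, 0.0], [0.0, 1.0]]
b = [1.0, 1.0]

def system_two_holds(v):
    """Check alternative II: A'v = b with v >= 0."""
    n = len(b)
    At_v = [sum(A[i][j] * v[i] for i in range(len(A))) for j in range(n)]
    nonnegative = all(vi >= 0 for vi in v)
    return nonnegative and all(abs(At_v[j] - b[j]) < 1e-12 for j in range(n))

def system_one_holds(x):
    """Check alternative I at a single point: Ax <= 0 and b'x > 0."""
    Ax = [sum(A[i][j] * x[j] for j in range(len(x))) for i in range(len(A))]
    return all(r <= 0 for r in Ax) and sum(bj * xj for bj, xj in zip(b, x)) > 0

# v = (1, 1) certifies II, so no grid point should satisfy I.
certificate = [1.0, 1.0]
grid = [i / 2 for i in range(-10, 11)]
violations = [x for x in product(grid, repeat=2) if system_one_holds(x)]
print(system_two_holds(certificate), len(violations))
```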

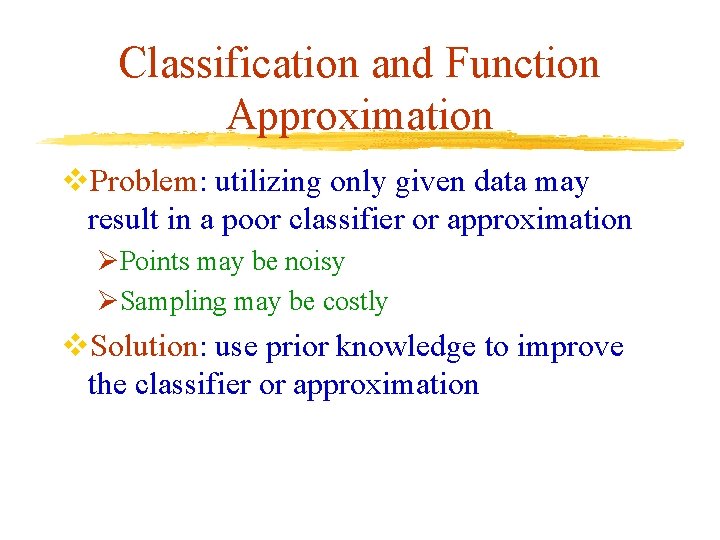

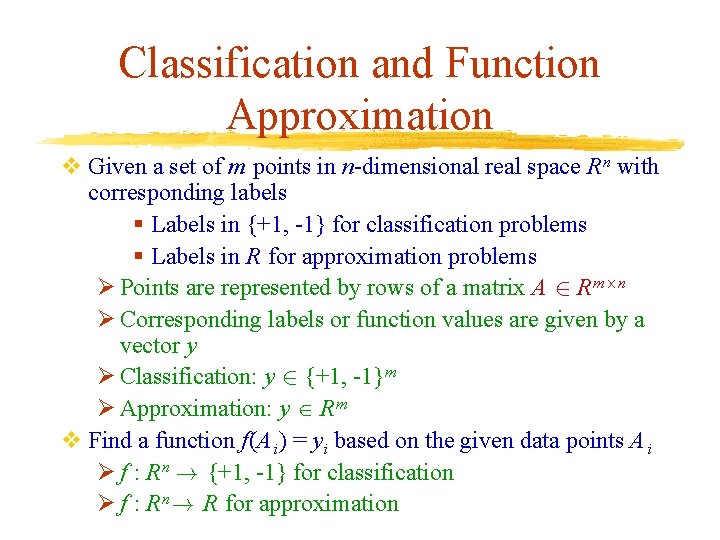

Classification and Function Approximation

- Given a set of $m$ points in $n$-dimensional real space $R^n$ with corresponding labels
  - Labels in $\{+1, -1\}$ for classification problems
  - Labels in $R$ for approximation problems
- Points are represented by rows of a matrix $A \in R^{m \times n}$
- Corresponding labels or function values are given by a vector $y$
  - Classification: $y \in \{+1, -1\}^m$
  - Approximation: $y \in R^m$
- Find a function $f(A_i) = y_i$ based on the given data points $A_i$
  - $f: R^n \to \{+1, -1\}$ for classification
  - $f: R^n \to R$ for approximation

Classification and Function Approximation

- Problem: utilizing only given data may result in a poor classifier or approximation
  - Points may be noisy
  - Sampling may be costly
- Solution: use prior knowledge to improve the classifier or approximation

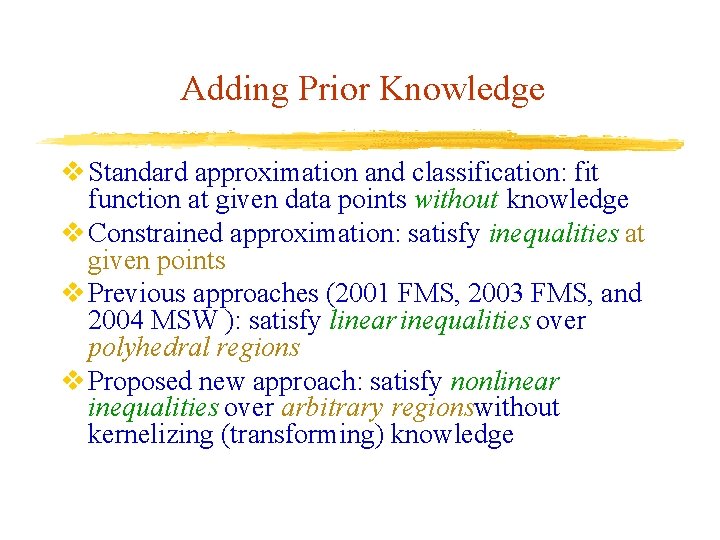

Adding Prior Knowledge

- Standard approximation and classification: fit function at given data points without knowledge
- Constrained approximation: satisfy inequalities at given points
- Previous approaches (2001 FMS, 2003 FMS, and 2004 MSW): satisfy linear inequalities over polyhedral regions
- Proposed new approach: satisfy nonlinear inequalities over arbitrary regions without kernelizing (transforming) knowledge

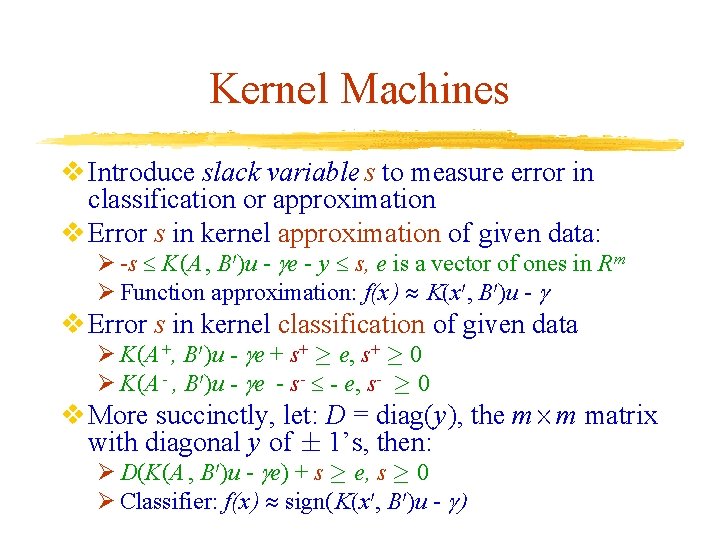

Kernel Machines

- Approximate $f$ by a nonlinear kernel function $K$ using parameters $u \in R^k$ and $\gamma$ in $R$
- A kernel function is a nonlinear generalization of the scalar product
- $f(x) \approx K(x', B')u - \gamma$, $x \in R^n$, $K: R^n \times R^{n \times k} \to R^k$
  - Gaussian: $K(x', B')_i = \exp(-\mu \|x - B_i\|^2)$, $i = 1, \ldots, k$, where $\mu > 0$ is the kernel parameter
- $B \in R^{k \times n}$ is a basis matrix
  - Usually, $B = A \in R^{m \times n}$, the input data matrix
  - In Reduced Support Vector Machines, $B$ is a small subset of the rows of $A$
  - $B$ may be any matrix with $n$ columns
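As a minimal sketch of the kernel model described above, the following evaluates a Gaussian kernel row $K(x', B')$ and the function $K(x', B')u - \gamma$. The function names, the basis matrix `B`, and the values of `u`, `gamma` and `mu` are hypothetical and chosen only for illustration.

```python
import math

def gaussian_kernel_row(x, B, mu):
    """Return the row vector K(x', B') with entries exp(-mu * ||x - B_i||^2)."""
    return [math.exp(-mu * sum((xj - bj) ** 2 for xj, bj in zip(x, row)))
            for row in B]

def kernel_function(x, B, u, gamma, mu):
    """Evaluate the kernel model K(x', B')u - gamma at a point x."""
    k_row = gaussian_kernel_row(x, B, mu)
    return sum(ki * ui for ki, ui in zip(k_row, u)) - gamma

# Hypothetical basis matrix with k = 3 rows in R^2 and made-up parameters.
B = [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0]]
u = [1.0, -0.5, 0.25]
row = gaussian_kernel_row([0.0, 0.0], B, mu=1.0)
value = kernel_function([0.0, 0.0], B, u, gamma=0.1, mu=1.0)
```

The first entry of `row` equals 1 because the query point coincides with the first basis row; entries decay with squared distance from each basis row.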

Kernel Machines

- Introduce slack variable $s$ to measure error in classification or approximation
- Error $s$ in kernel approximation of given data:
  - $-s \le K(A, B')u - e\gamma - y \le s$, where $e$ is a vector of ones in $R^m$
  - Function approximation: $f(x) \approx K(x', B')u - \gamma$
- Error $s$ in kernel classification of given data:
  - $K(A_+, B')u - e\gamma + s_+ \ge e$, $s_+ \ge 0$
  - $K(A_-, B')u - e\gamma - s_- \le -e$, $s_- \ge 0$
- More succinctly, let $D = \mathrm{diag}(y)$, the $m \times m$ matrix with diagonal $y$ of $\pm 1$'s; then:
  - $D(K(A, B')u - e\gamma) + s \ge e$, $s \ge 0$
  - Classifier: $f(x) = \mathrm{sign}(K(x', B')u - \gamma)$
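For fixed $u$ and $\gamma$, the smallest slack satisfying each constraint above has a closed form. The sketch below computes it componentwise; the function names and the sample values of $K(A, B')u$ are hypothetical, and mapping a zero value to +1 in `classify` is a convention chosen here, not something the slides specify.

```python
def approximation_slack(kernel_values, y, gamma):
    """Smallest s with -s <= K(A, B')u - e*gamma - y <= s, componentwise."""
    return [abs(kv - gamma - yi) for kv, yi in zip(kernel_values, y)]

def classification_slack(kernel_values, y, gamma):
    """Smallest s >= 0 with D(K(A, B')u - e*gamma) + s >= e, D = diag(y)."""
    return [max(0.0, 1.0 - yi * (kv - gamma))
            for kv, yi in zip(kernel_values, y)]

def classify(kernel_value, gamma):
    """Classifier sign(K(x', B')u - gamma); a zero value is mapped to +1 here."""
    return 1 if kernel_value - gamma >= 0 else -1

# Hypothetical values of K(A, B')u for m = 3 points.
Ku = [2.0, 0.5, -1.5]
labels = [1, 1, -1]
s_class = classification_slack(Ku, labels, gamma=0.0)
s_approx = approximation_slack(Ku, [2.0, 1.0, -1.0], gamma=0.0)
```

Only the second point needs positive classification slack: it is on the correct side but inside the margin.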

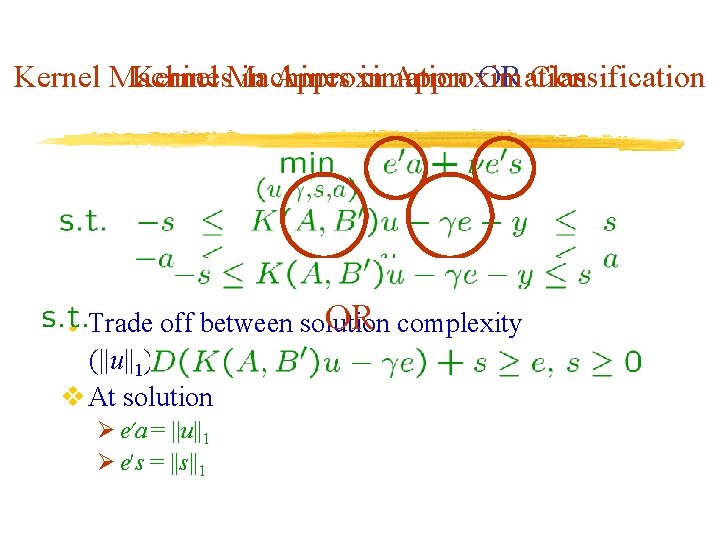

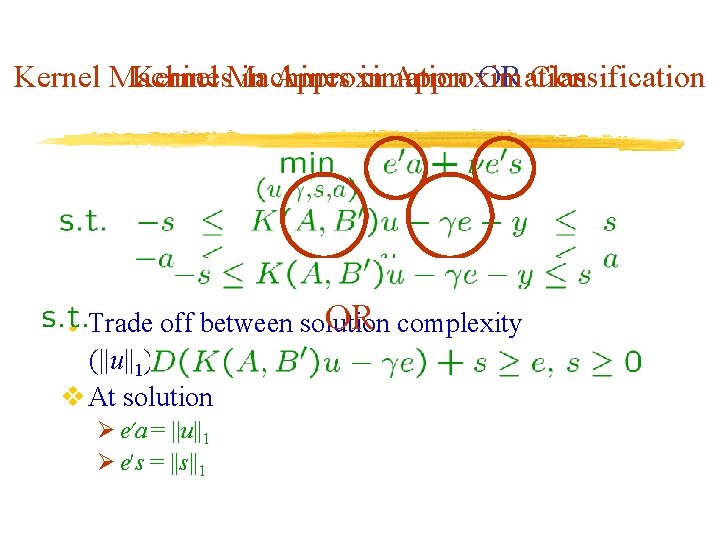

Kernel Machines in Approximation or Classification

- Trade off between solution complexity ($\|u\|_1$) and data fitting ($\|s\|_1$)
- At solution:
  - $e'a = \|u\|_1$
  - $e's = \|s\|_1$
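The linear programs on this slide were shown as images and are not recoverable from the text. A sketch consistent with the constraints on the preceding slide and with the identities $e'a = \|u\|_1$, $e's = \|s\|_1$ is given below; the trade-off weight $\nu > 0$ is an assumed symbol, and each $e$ is a vector of ones of the appropriate dimension.

```latex
% Approximation (assumed form):
\min_{u,\gamma,s,a} \; \nu\, e's + e'a
\quad \text{s.t.} \quad
-s \le K(A,B')u - e\gamma - y \le s, \qquad -a \le u \le a.

% Classification (assumed form):
\min_{u,\gamma,s,a} \; \nu\, e's + e'a
\quad \text{s.t.} \quad
D\bigl(K(A,B')u - e\gamma\bigr) + s \ge e, \qquad s \ge 0, \qquad -a \le u \le a.
```

At a minimizer each $a_i$ is pushed down to $|u_i|$, which is why $e'a$ equals the 1-norm of $u$; the same argument applies to $s$ in the approximation problem.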

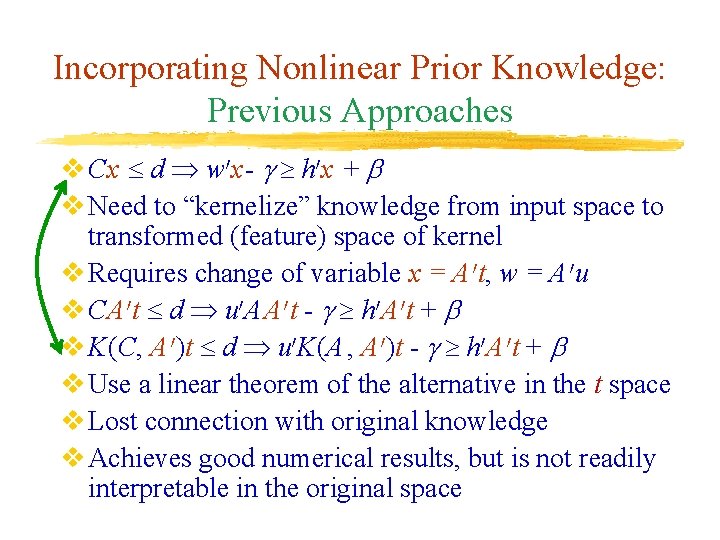

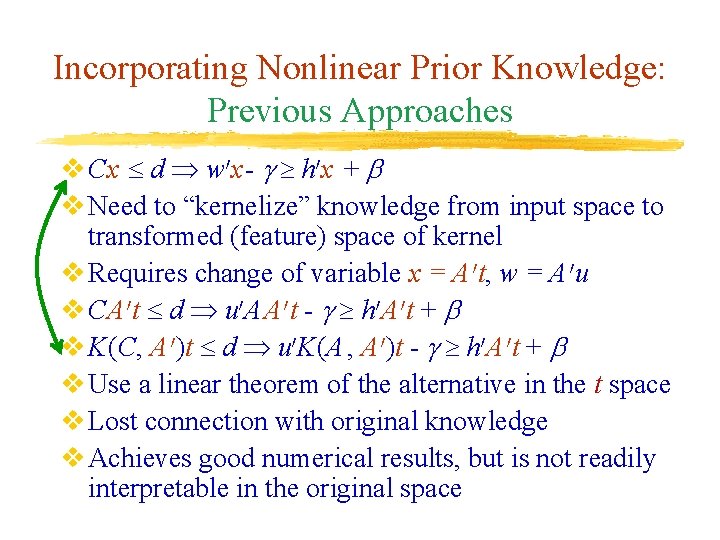

Incorporating Nonlinear Prior Knowledge: Previous Approaches

- $Cx \le d \Rightarrow w'x - \gamma \ge h'x + \beta$
- Need to "kernelize" knowledge from input space to transformed (feature) space of kernel
- Requires change of variable $x = A't$, $w = A'u$:
  - $CA't \le d \Rightarrow u'AA't - \gamma \ge h'A't + \beta$
  - $K(C, A')t \le d \Rightarrow u'K(A, A')t - \gamma \ge h'A't + \beta$
- Use a linear theorem of the alternative in the $t$ space
- Lost connection with original knowledge
- Achieves good numerical results, but is not readily interpretable in the original space

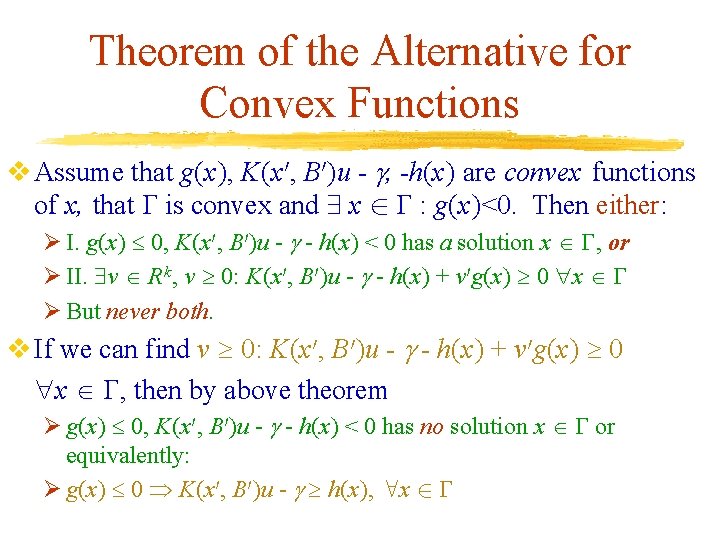

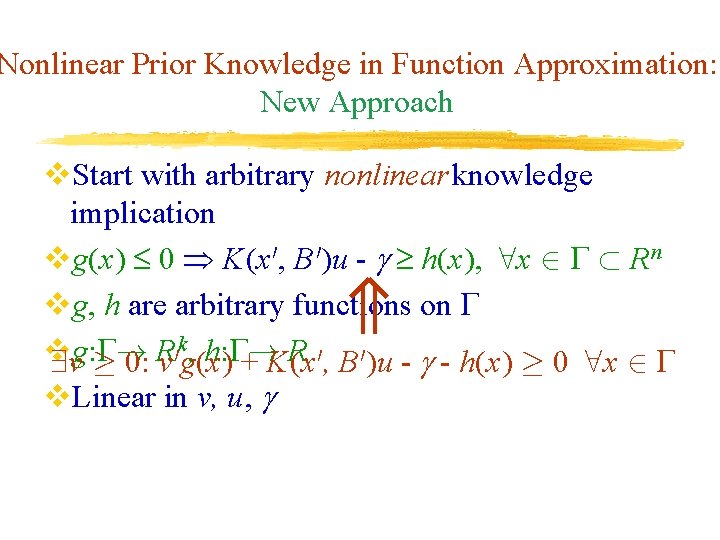

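Nonlinear Prior Knowledge in Function Approximation: New Approach

- Start with arbitrary nonlinear knowledge implication:
  - $g(x) \le 0 \Rightarrow K(x', B')u - \gamma \ge h(x)$, $\forall x \in \Gamma \subset R^n$
- $g$, $h$ are arbitrary functions on $\Gamma$: $g: \Gamma \to R^k$, $h: \Gamma \to R$
- The implication is implied by:
  - $\exists v \ge 0$: $v'g(x) + K(x', B')u - \gamma - h(x) \ge 0$ $\forall x \in \Gamma$
- Linear in $v$, $u$, $\gamma$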

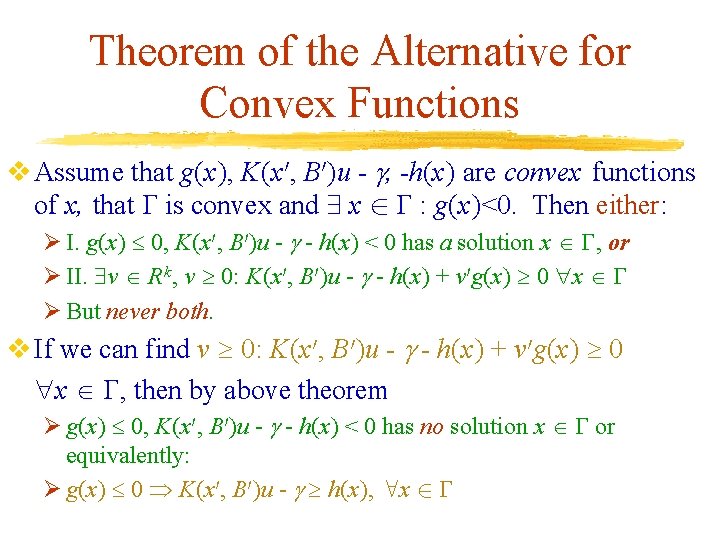

Theorem of the Alternative for Convex Functions

- Assume that $g(x)$, $K(x', B')u - \gamma$, $-h(x)$ are convex functions of $x$, that $\Gamma$ is convex and $\exists x \in \Gamma$: $g(x) < 0$. Then either:
  - I. $g(x) \le 0$, $K(x', B')u - \gamma - h(x) < 0$ has a solution $x \in \Gamma$, or
  - II. $\exists v \in R^k$, $v \ge 0$: $K(x', B')u - \gamma - h(x) + v'g(x) \ge 0$ $\forall x \in \Gamma$
  - But never both.
- If we can find $v \ge 0$: $K(x', B')u - \gamma - h(x) + v'g(x) \ge 0$ $\forall x \in \Gamma$, then by the above theorem
  - $g(x) \le 0$, $K(x', B')u - \gamma - h(x) < 0$ has no solution $x \in \Gamma$, or equivalently:
  - $g(x) \le 0 \Rightarrow K(x', B')u - \gamma \ge h(x)$, $\forall x \in \Gamma$

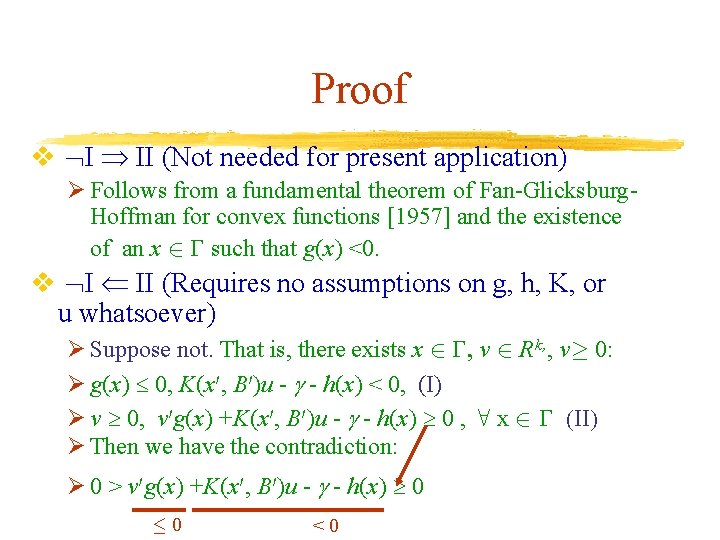

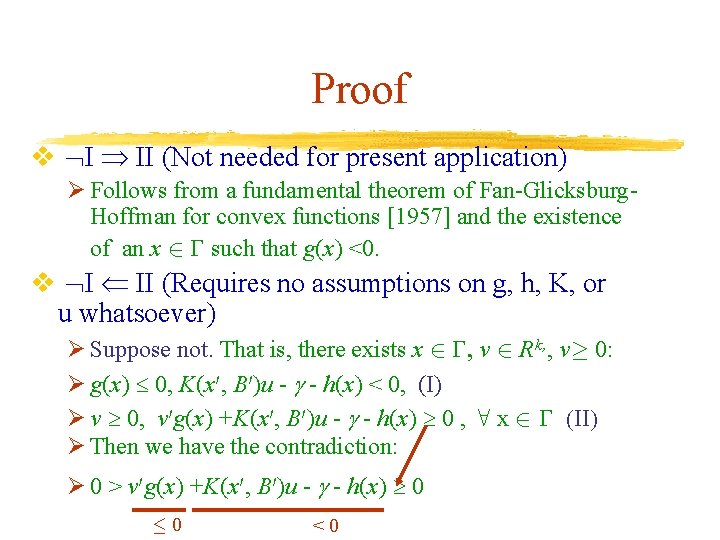

Proof

- $\neg$I $\Rightarrow$ II (not needed for present application)
  - Follows from a fundamental theorem of Fan-Glicksberg-Hoffman for convex functions [1957] and the existence of an $x \in \Gamma$ such that $g(x) < 0$.
- I $\Rightarrow$ $\neg$II (requires no assumptions on $g$, $h$, $K$, or $u$ whatsoever)
  - Suppose not. That is, there exist $x \in \Gamma$ and $v \in R^k$, $v \ge 0$, such that:
    - $g(x) \le 0$, $K(x', B')u - \gamma - h(x) < 0$ (I)
    - $v \ge 0$, $v'g(x) + K(x', B')u - \gamma - h(x) \ge 0$, $\forall x \in \Gamma$ (II)
  - Then we have the contradiction: $0 > v'g(x) + K(x', B')u - \gamma - h(x) \ge 0$, since $v'g(x) \le 0$ and $K(x', B')u - \gamma - h(x) < 0$.
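The direction used in practice (a multiplier $v \ge 0$ satisfying II rules out any violation of the knowledge) can be illustrated on a one-dimensional toy problem. In the sketch below, `g`, `h`, and `f` are hypothetical functions chosen so that the arithmetic is easy to follow, with `f` standing in for the kernel model $K(x', B')u - \gamma$; sampling an interval is an illustration, not a proof.

```python
def g(x):
    """Knowledge region g(x) <= 0, here the interval [-1, 1]."""
    return x * x - 1.0

def h(x):
    """Lower bound required on the region."""
    return 2.0 * x * x - 1.0

def f(x):
    """Stand-in for the kernel model K(x', B')u - gamma."""
    return x * x

v = 1.0  # candidate multiplier, must be nonnegative
samples = [i / 100 for i in range(-300, 301)]

# Condition II: v*g(x) + f(x) - h(x) >= 0 at every sample point.
# For these functions the sum is identically zero, so a small tolerance is used.
condition_two = all(v * g(x) + f(x) - h(x) >= -1e-12 for x in samples)

# System I: a point with g(x) <= 0 and f(x) - h(x) < 0 would violate the knowledge.
counterexamples = [x for x in samples if g(x) <= 0 and f(x) - h(x) < -1e-12]
```

Here $v g(x) + f(x) - h(x) = (x^2 - 1) + x^2 - (2x^2 - 1) = 0$ for all $x$, so II holds with $v = 1$, and indeed $f(x) \ge h(x)$ wherever $x^2 \le 1$.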

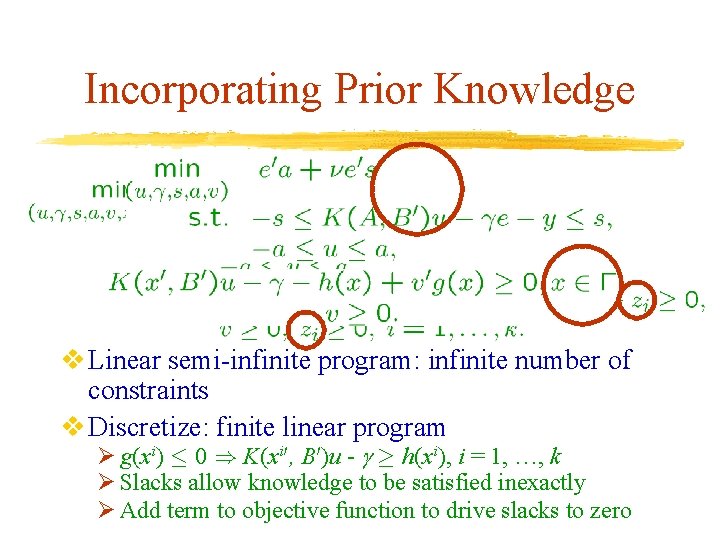

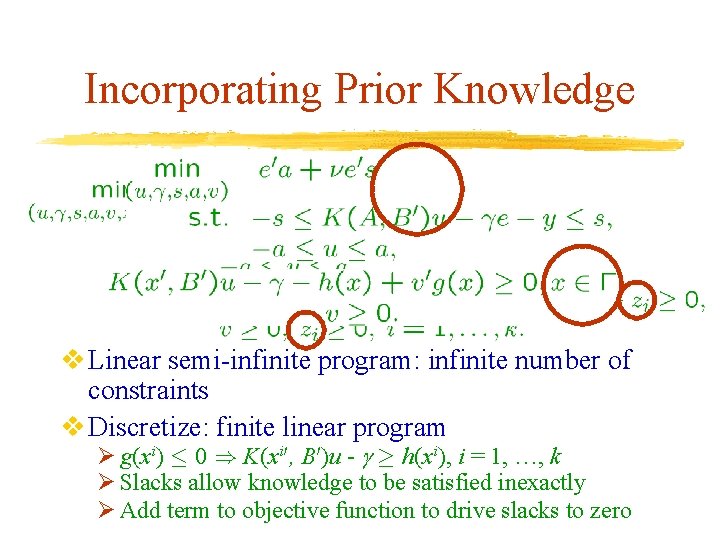

Incorporating Prior Knowledge

- Linear semi-infinite program: infinite number of constraints
- Discretize: finite linear program
  - $g(x_i) \le 0 \Rightarrow K(x_i', B')u - \gamma \ge h(x_i)$, $i = 1, \ldots, k$
- Slacks allow knowledge to be satisfied inexactly
- Add term to objective function to drive slacks to zero
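One way the discretized knowledge constraints and their slacks might be combined with the approximation linear program is sketched below. This is an assumed form, not a transcription of the slide images: the weights $\nu$ and $\sigma$, the slack vector $z$, and the use of $\ell$ for the number of discretization points (to avoid reusing $k$, the number of basis rows) are all notation introduced here.

```latex
\min_{u,\gamma,s,a,v,z} \; \nu\, e's + e'a + \sigma \sum_{i=1}^{\ell} z_i
\quad \text{s.t.} \quad
\begin{aligned}
& -s \le K(A,B')u - e\gamma - y \le s, \qquad -a \le u \le a, \\
& v'g(x_i) + K(x_i',B')u - \gamma - h(x_i) + z_i \ge 0, \quad i = 1,\ldots,\ell, \\
& v \ge 0, \qquad z \ge 0.
\end{aligned}
```

Every constraint is linear in $(u, \gamma, s, a, v, z)$ because $g(x_i)$ and $h(x_i)$ are just numbers once the points $x_i$ are fixed, which is why no kernelization of the knowledge is needed.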

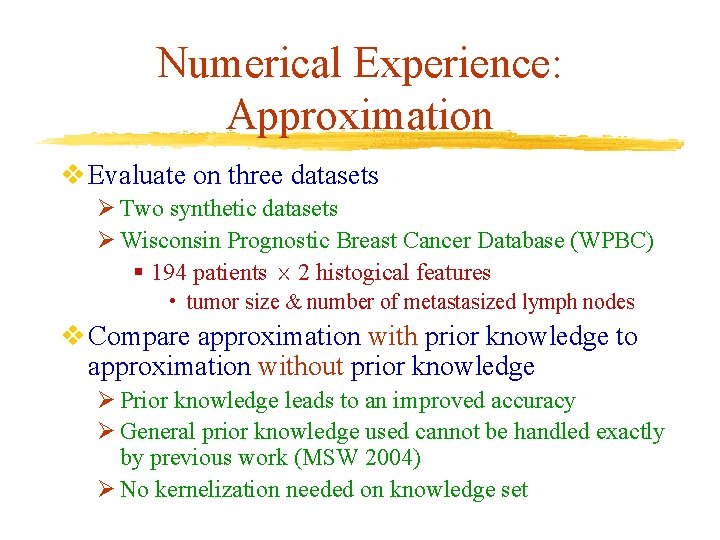

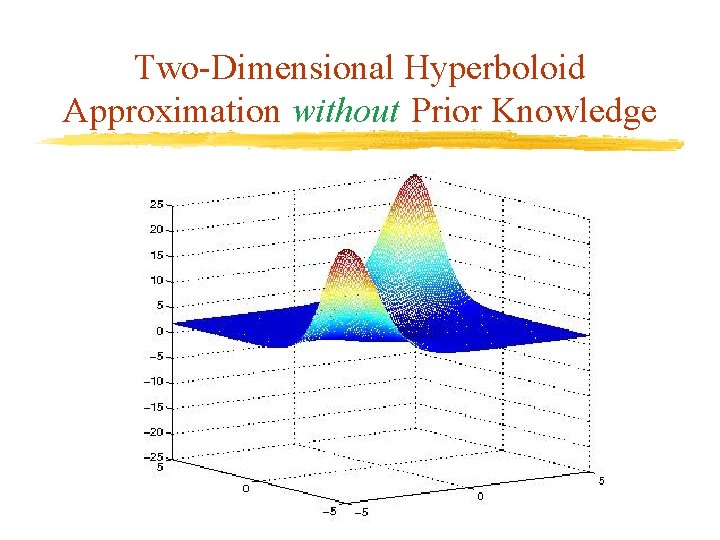

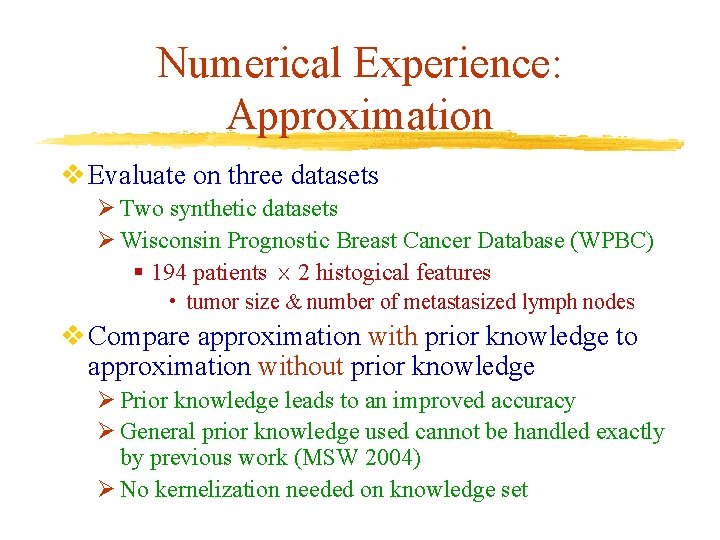

Numerical Experience: Approximation

- Evaluate on three datasets
  - Two synthetic datasets
  - Wisconsin Prognostic Breast Cancer Database (WPBC)
    - 194 patients × 2 histological features: tumor size and number of metastasized lymph nodes
- Compare approximation with prior knowledge to approximation without prior knowledge
  - Prior knowledge leads to an improved accuracy
  - General prior knowledge used cannot be handled exactly by previous work (MSW 2004)
  - No kernelization needed on knowledge set

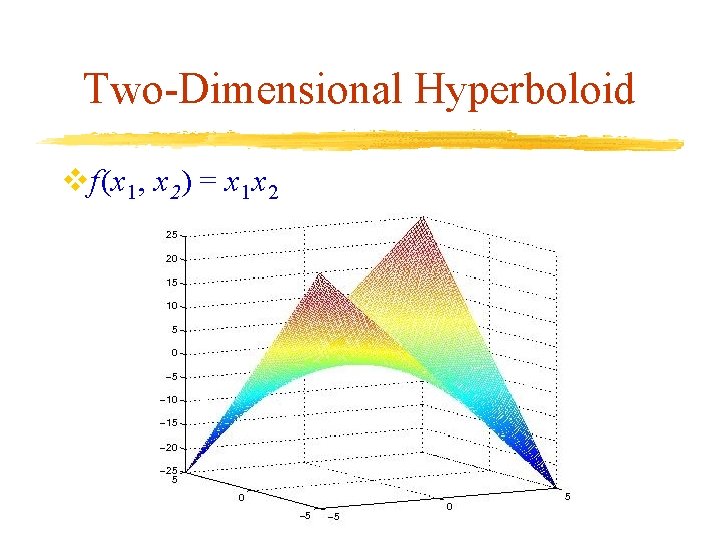

Two-Dimensional Hyperboloid

- $f(x_1, x_2) = x_1 x_2$

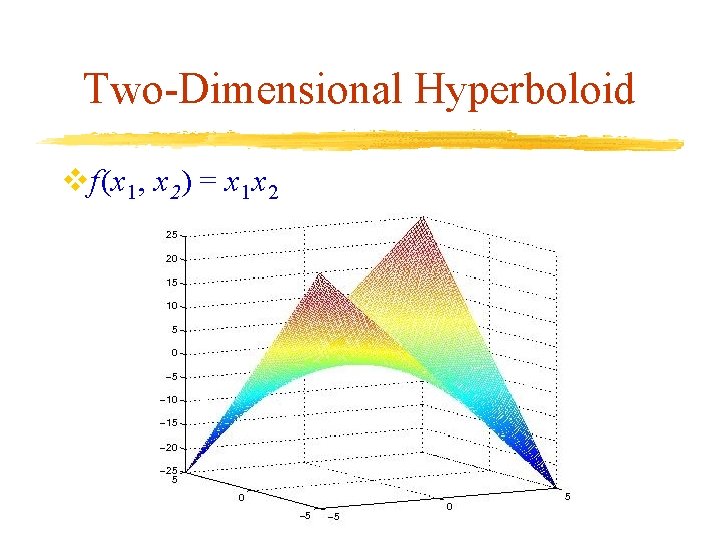

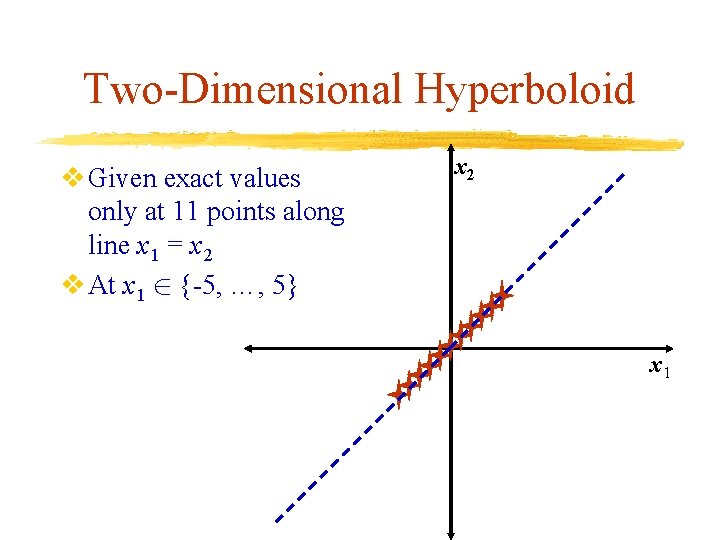

Two-Dimensional Hyperboloid

- Given exact values only at 11 points along the line $x_1 = x_2$
- At $x_1 \in \{-5, \ldots, 5\}$

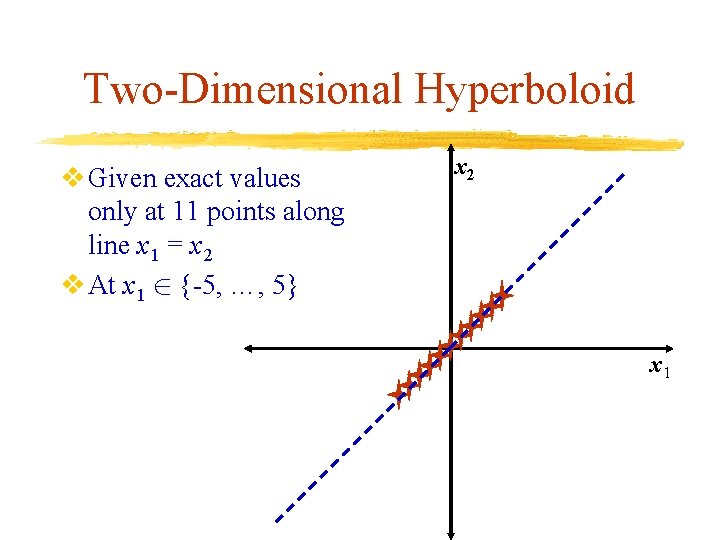

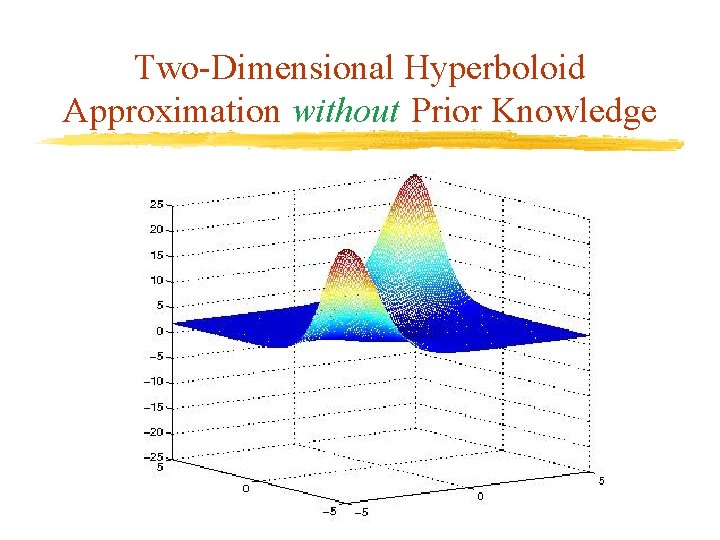

Two-Dimensional Hyperboloid Approximation without Prior Knowledge

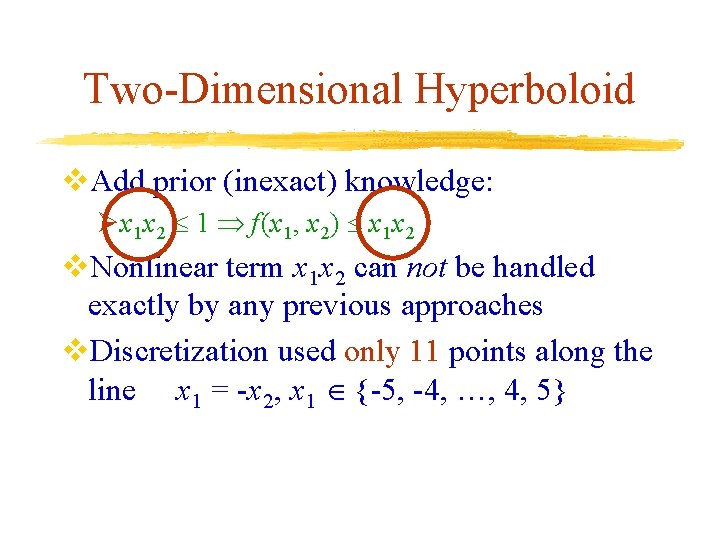

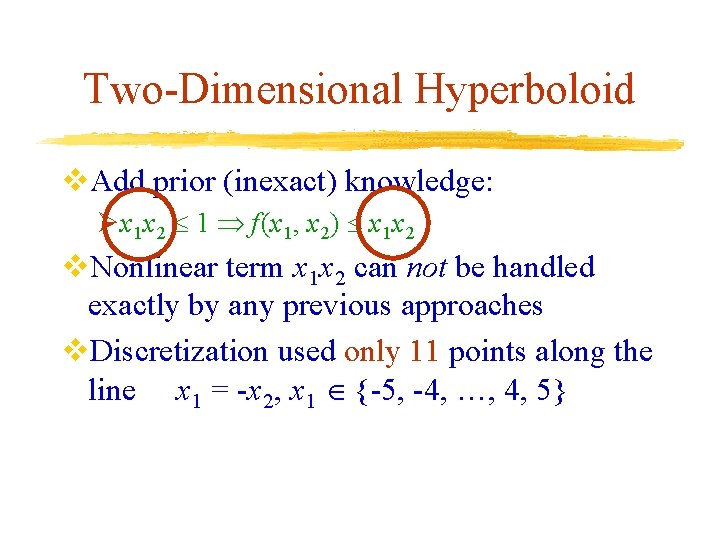

Two-Dimensional Hyperboloid

- Add prior (inexact) knowledge:
  - $x_1 x_2 \le 1 \Rightarrow f(x_1, x_2) \le x_1 x_2$
- Nonlinear term $x_1 x_2$ cannot be handled exactly by any previous approaches
- Discretization used only 11 points along the line $x_1 = -x_2$, $x_1 \in \{-5, -4, \ldots, 4, 5\}$
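The data and discretization points of this example are simple enough to generate directly. The sketch below assumes the knowledge reads $x_1 x_2 \le 1 \Rightarrow f(x_1, x_2) \le x_1 x_2$, written as $g(x) \le 0$ with $g(x) = x_1 x_2 - 1$; the function and variable names are hypothetical.

```python
def f_exact(x1, x2):
    """The hyperboloid f(x1, x2) = x1 * x2."""
    return x1 * x2

def g(x1, x2):
    """Knowledge region written as g(x) <= 0, i.e. x1 * x2 <= 1."""
    return x1 * x2 - 1.0

def h(x1, x2):
    """Bound appearing in the knowledge implication."""
    return x1 * x2

# 11 data points with exact values along x1 = x2.
data = [((t, t), f_exact(t, t)) for t in range(-5, 6)]

# 11 discretization points along x1 = -x2 where the knowledge is imposed.
knowledge_points = [(t, -t) for t in range(-5, 6)]
inside_region = [g(x1, x2) <= 0 for x1, x2 in knowledge_points]
```

Every discretization point lies inside the knowledge region because $x_1 x_2 = -x_1^2 \le 0$ on the line $x_1 = -x_2$, so the knowledge supplies information exactly where the data (all on $x_1 = x_2$) provide none.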

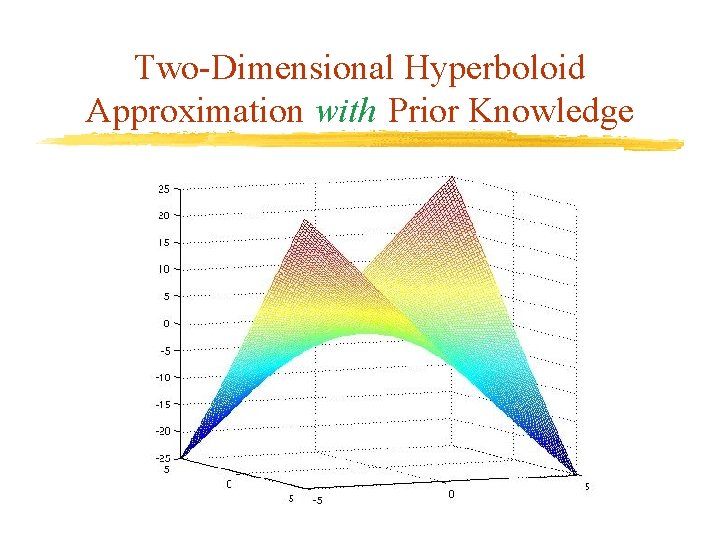

Two-Dimensional Hyperboloid Approximation with Prior Knowledge

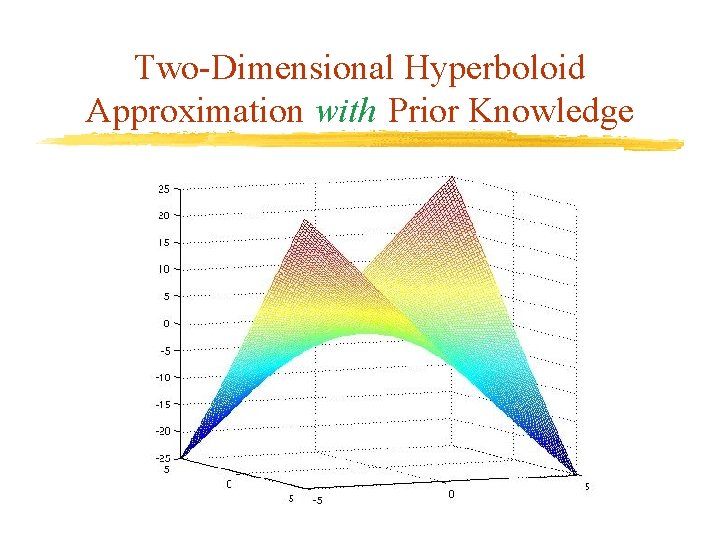

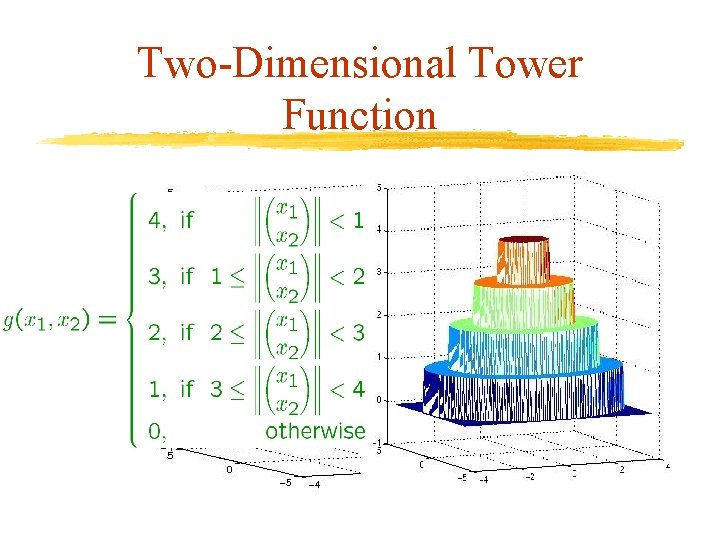

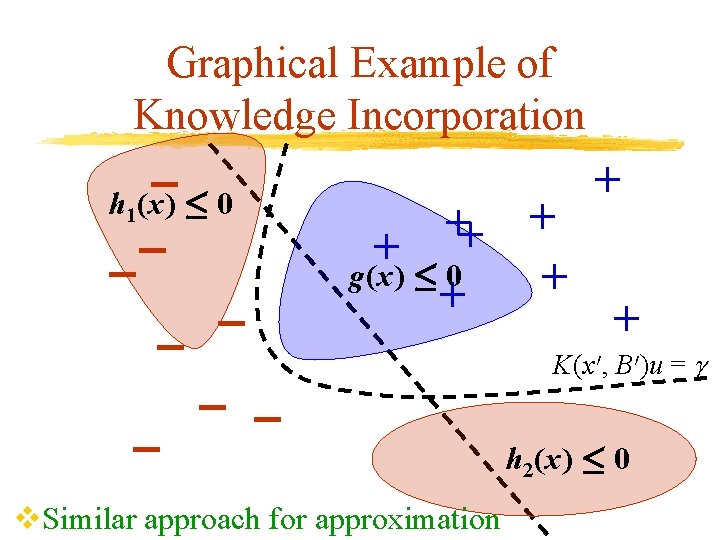

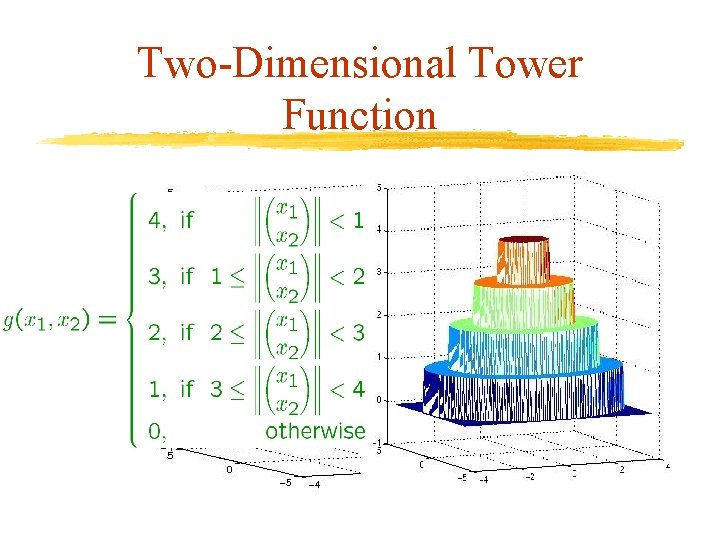

Two-Dimensional Tower Function

![TwoDimensional Tower Function Misleading Data v Given 400 points on the grid 4 4 Two-Dimensional Tower Function (Misleading) Data v. Given 400 points on the grid [-4, 4]](https://slidetodoc.com/presentation_image_h2/87023d01d0440b4671de15fb90f9b6c5/image-24.jpg)

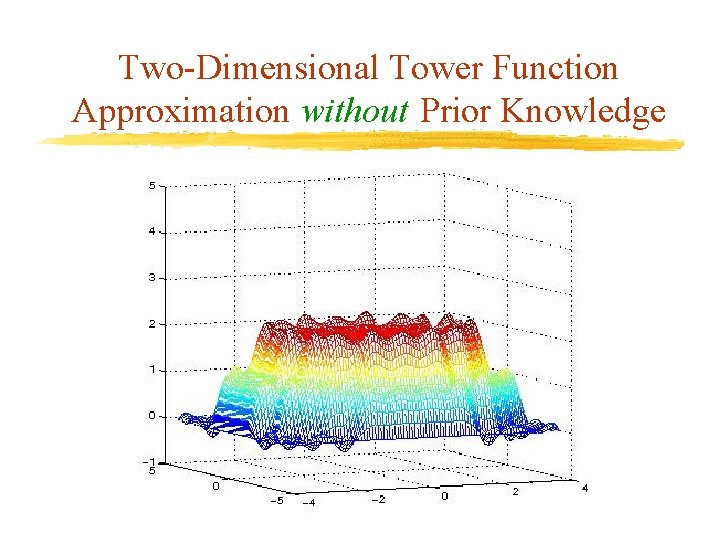

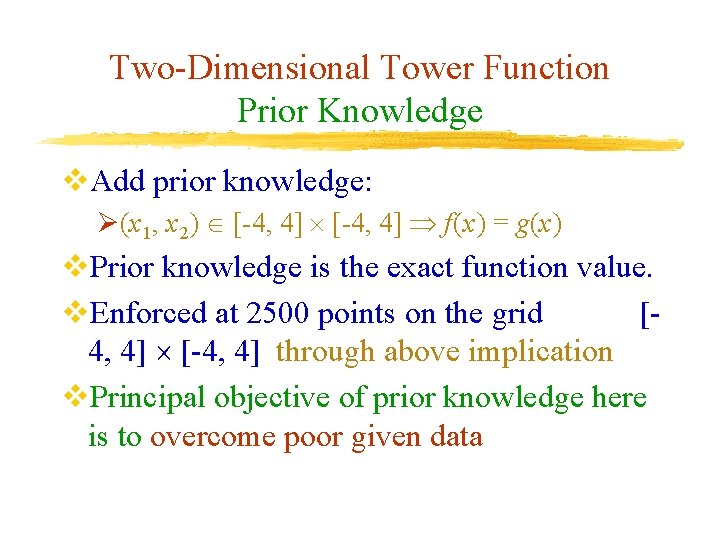

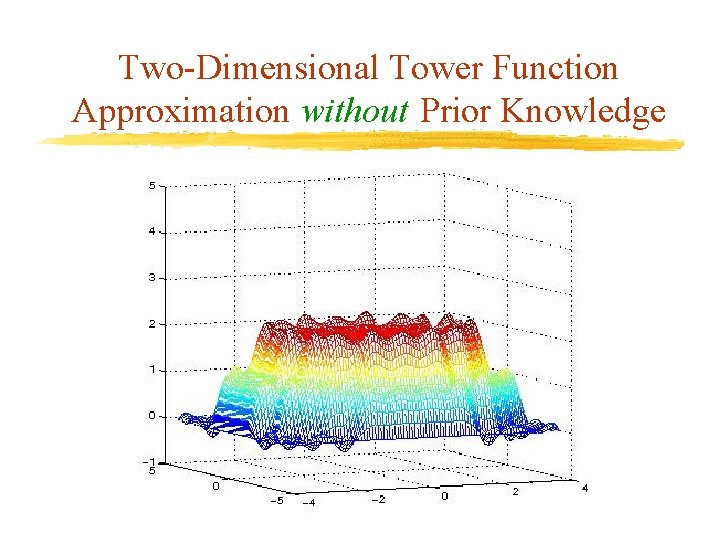

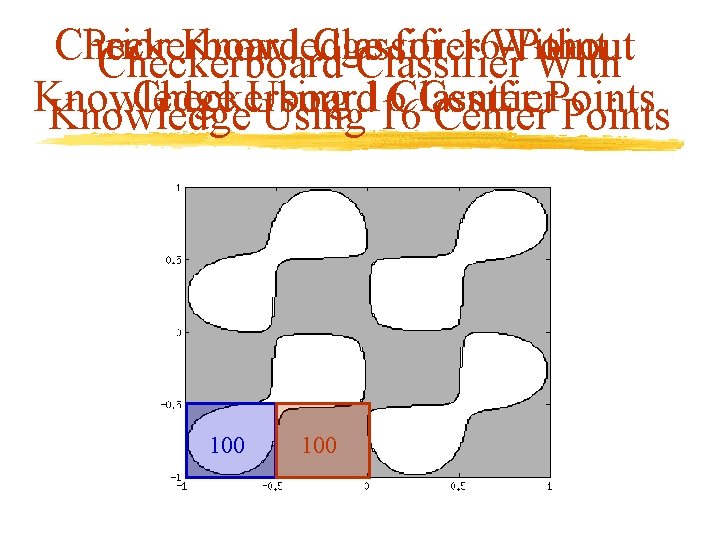

Two-Dimensional Tower Function (Misleading) Data

- Given 400 points on the grid $[-4, 4] \times [-4, 4]$
- Values are $\min\{g(x), 2\}$, where $g(x)$ is the exact tower function

Two-Dimensional Tower Function Approximation without Prior Knowledge

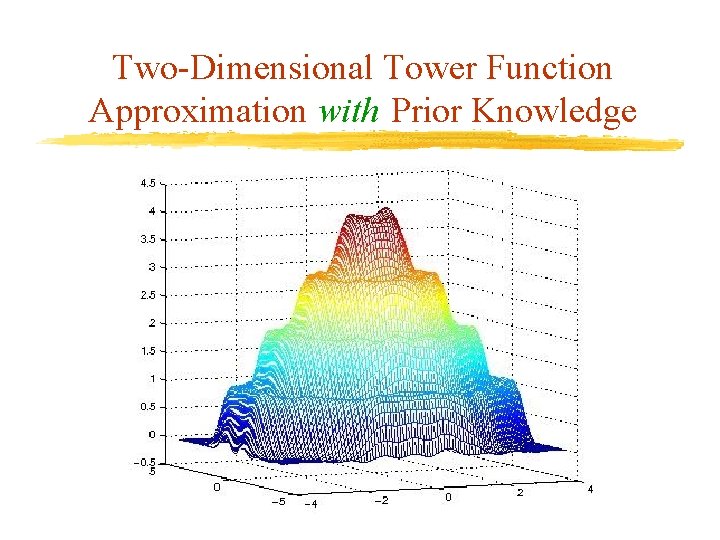

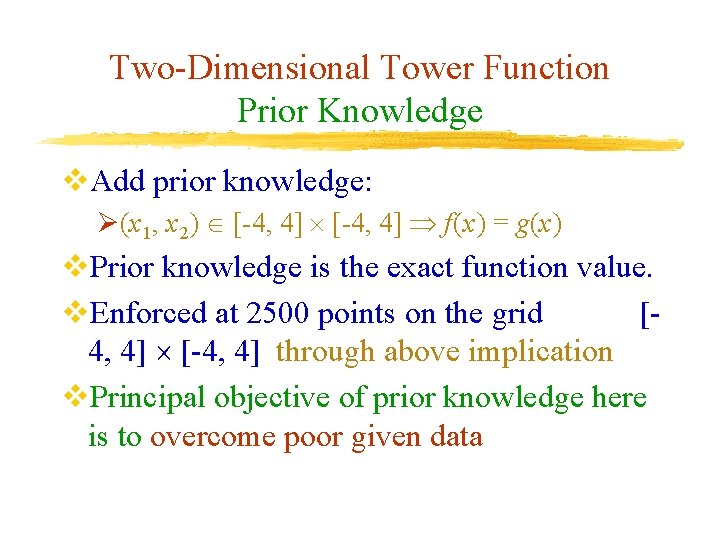

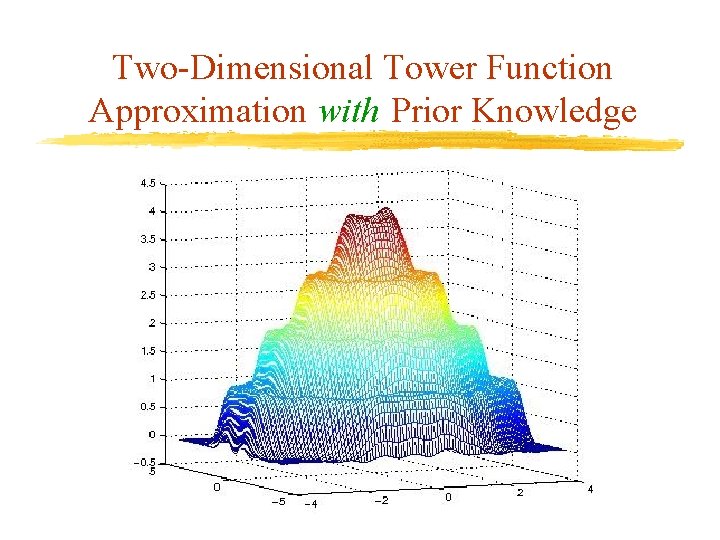

Two-Dimensional Tower Function Prior Knowledge

- Add prior knowledge:
  - $(x_1, x_2) \in [-4, 4] \times [-4, 4] \Rightarrow f(x) = g(x)$
- Prior knowledge is the exact function value
- Enforced at 2500 points on the grid $[-4, 4] \times [-4, 4]$ through the above implication
- Principal objective of prior knowledge here is to overcome poor given data

Two-Dimensional Tower Function Approximation with Prior Knowledge

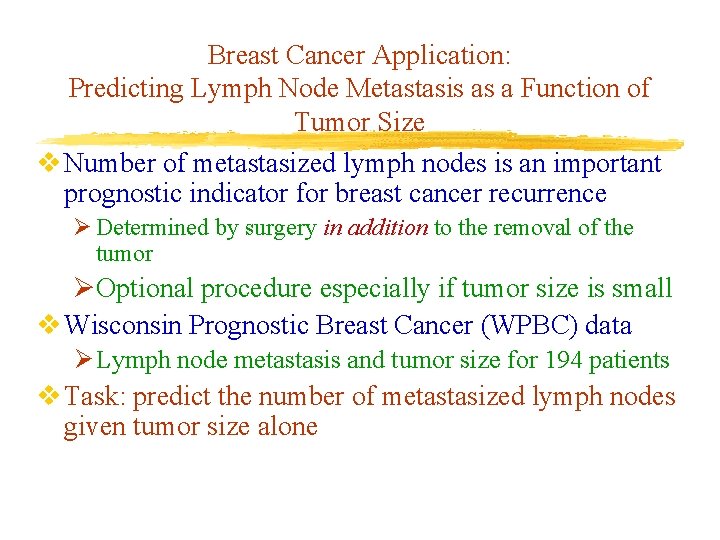

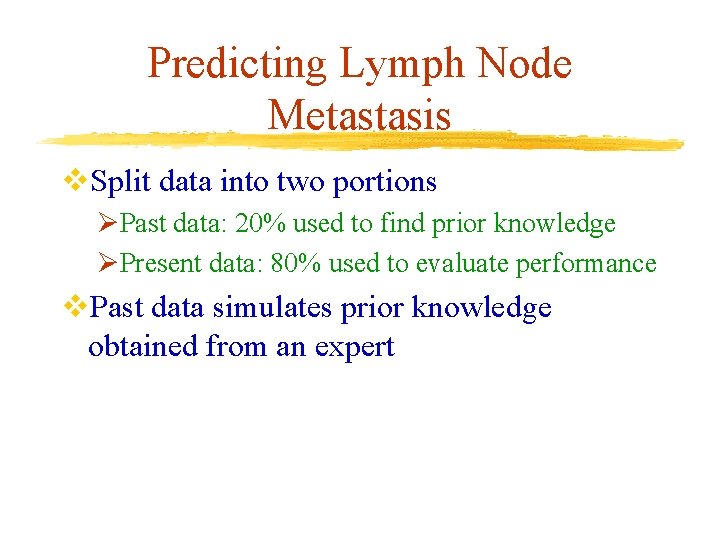

Breast Cancer Application: Predicting Lymph Node Metastasis as a Function of Tumor Size

- Number of metastasized lymph nodes is an important prognostic indicator for breast cancer recurrence
  - Determined by surgery in addition to the removal of the tumor
  - Optional procedure, especially if tumor size is small
- Wisconsin Prognostic Breast Cancer (WPBC) data
  - Lymph node metastasis and tumor size for 194 patients
- Task: predict the number of metastasized lymph nodes given tumor size alone

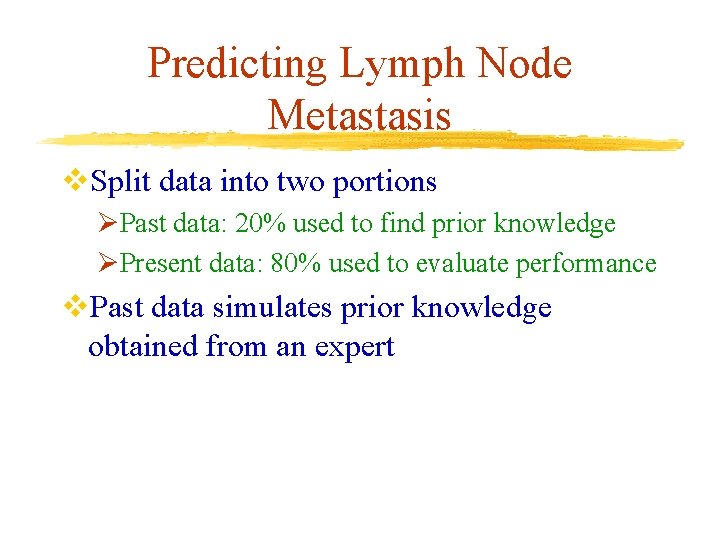

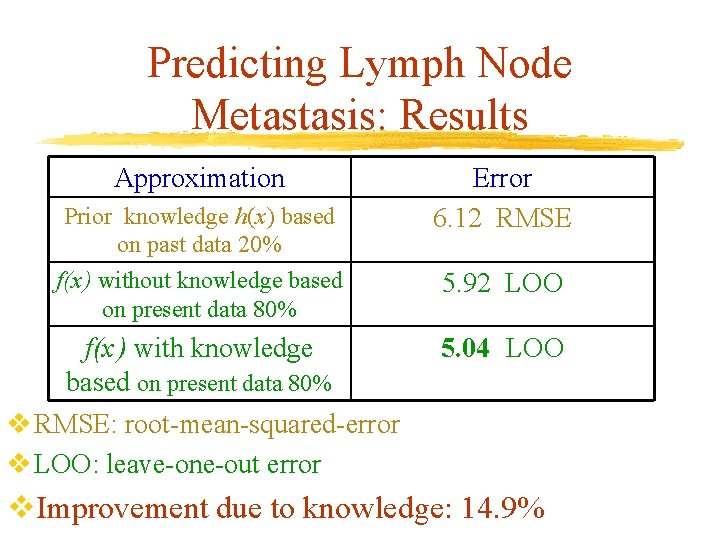

Predicting Lymph Node Metastasis

- Split data into two portions
  - Past data: 20% used to find prior knowledge
  - Present data: 80% used to evaluate performance
- Past data simulates prior knowledge obtained from an expert

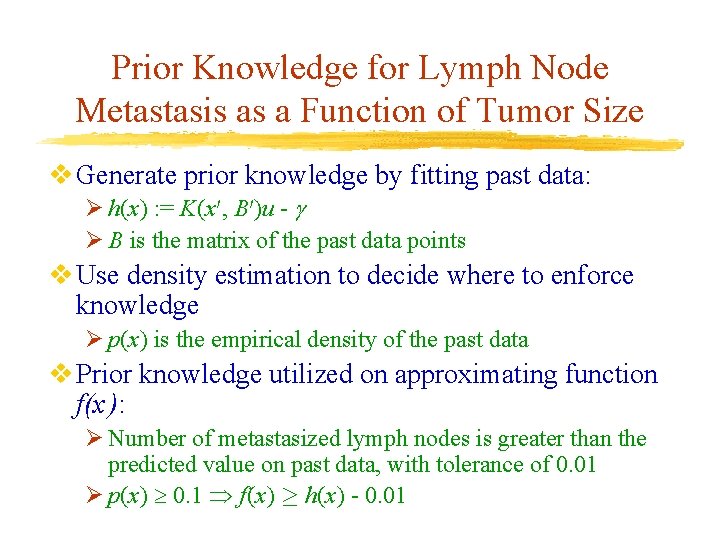

Prior Knowledge for Lymph Node Metastasis as a Function of Tumor Size

- Generate prior knowledge by fitting past data:
  - $h(x) := K(x', B')u - \gamma$
  - $B$ is the matrix of the past data points
- Use density estimation to decide where to enforce knowledge
  - $p(x)$ is the empirical density of the past data
- Prior knowledge utilized on approximating function $f(x)$:
  - Number of metastasized lymph nodes is greater than the predicted value on past data, with tolerance of 0.01
  - $p(x) \ge 0.1 \Rightarrow f(x) \ge h(x) - 0.01$
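The density-based knowledge region can be written in the $g(x) \le 0$ form used earlier by setting $g(x) = 0.1 - p(x)$. The sketch below assumes a one-dimensional Gaussian kernel density estimate for $p(x)$; the slides only say "empirical density", so the estimator, the bandwidth, and the sample tumor sizes are all assumptions made for illustration.

```python
import math

def gaussian_kde(x, samples, bandwidth):
    """One-dimensional Gaussian kernel density estimate at x (assumed estimator)."""
    norm = len(samples) * bandwidth * math.sqrt(2.0 * math.pi)
    total = sum(math.exp(-((x - xi) ** 2) / (2.0 * bandwidth ** 2))
                for xi in samples)
    return total / norm

def knowledge_g(x, samples, bandwidth, threshold=0.1):
    """g(x) <= 0 exactly when the estimated density p(x) >= threshold."""
    return threshold - gaussian_kde(x, samples, bandwidth)

def knowledge_bound(h_value, tolerance=0.01):
    """Right-hand side h(x) - tolerance of the implication."""
    return h_value - tolerance

# Hypothetical tumor sizes (cm) standing in for the past data.
past_sizes = [1.0, 1.5, 2.0, 2.5, 3.0]
g_dense = knowledge_g(2.0, past_sizes, bandwidth=0.5)    # inside the data
g_sparse = knowledge_g(10.0, past_sizes, bandwidth=0.5)  # far from the data
```

With these made-up values the knowledge is active at a tumor size of 2.0 cm (`g_dense` is negative) and inactive at 10.0 cm (`g_sparse` is positive), so the fitted past model only constrains $f$ where past data were actually observed.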

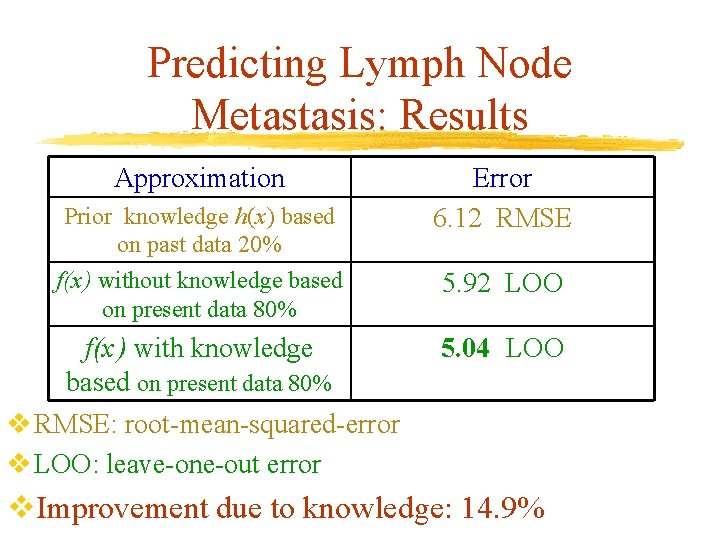

Predicting Lymph Node Metastasis: Results

| Approximation | Error |
| --- | --- |
| Prior knowledge $h(x)$ based on past data (20%) | 6.12 RMSE |
| $f(x)$ without knowledge based on present data (80%) | 5.92 LOO |
| $f(x)$ with knowledge based on present data (80%) | 5.04 LOO |

- RMSE: root-mean-squared error
- LOO: leave-one-out error
- Improvement due to knowledge: 14.9%
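The reported improvement is the relative reduction in leave-one-out error, which can be reproduced from the two LOO figures above. The helper name below is hypothetical.

```python
def relative_improvement(error_without, error_with):
    """Percentage reduction in error relative to the baseline."""
    return 100.0 * (error_without - error_with) / error_without

improvement = relative_improvement(5.92, 5.04)
print(round(improvement, 1))  # 14.9
```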

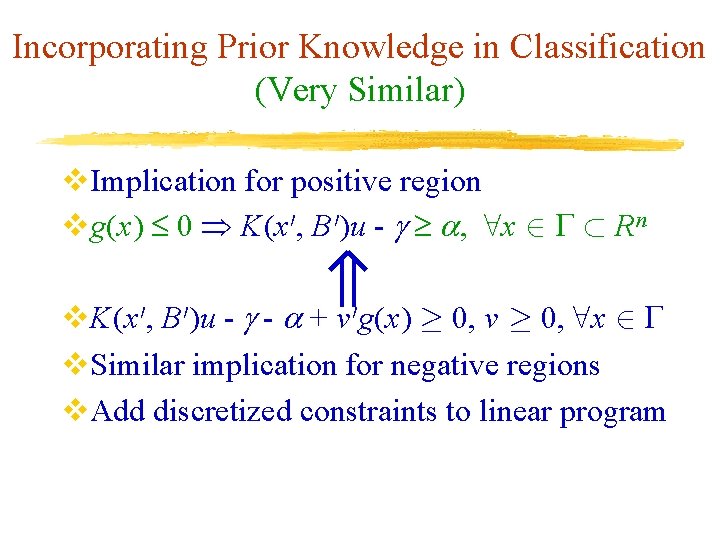

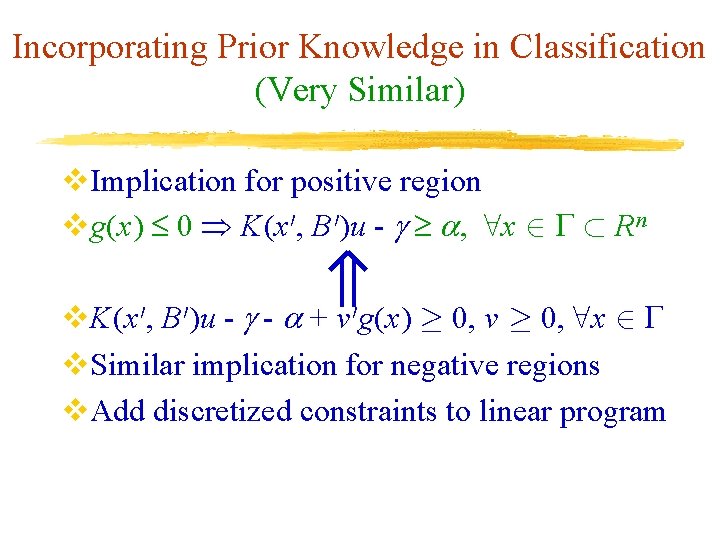

Incorporating Prior Knowledge in Classification (Very Similar)

- Implication for positive region:
  - $g(x) \le 0 \Rightarrow K(x', B')u - \gamma \ge \alpha$, $\forall x \in \Gamma \subset R^n$
  - $K(x', B')u - \gamma - \alpha + v'g(x) \ge 0$, $v \ge 0$, $\forall x \in \Gamma$
- Similar implication for negative regions
- Add discretized constraints to linear program

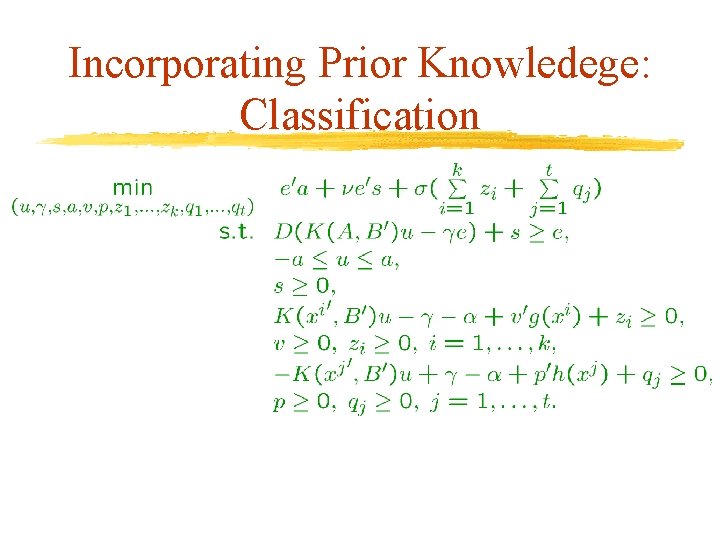

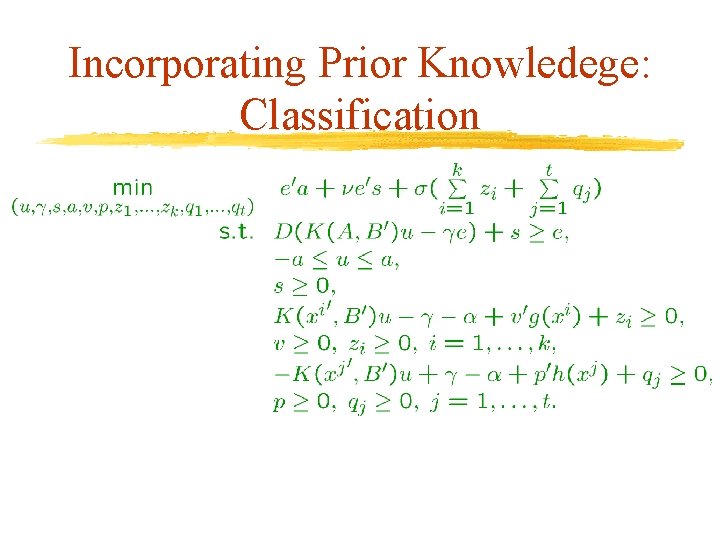

Incorporating Prior Knowledge: Classification

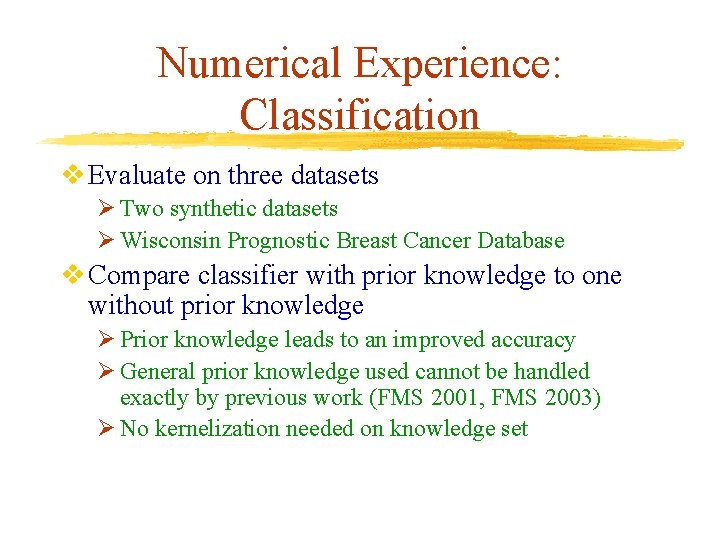

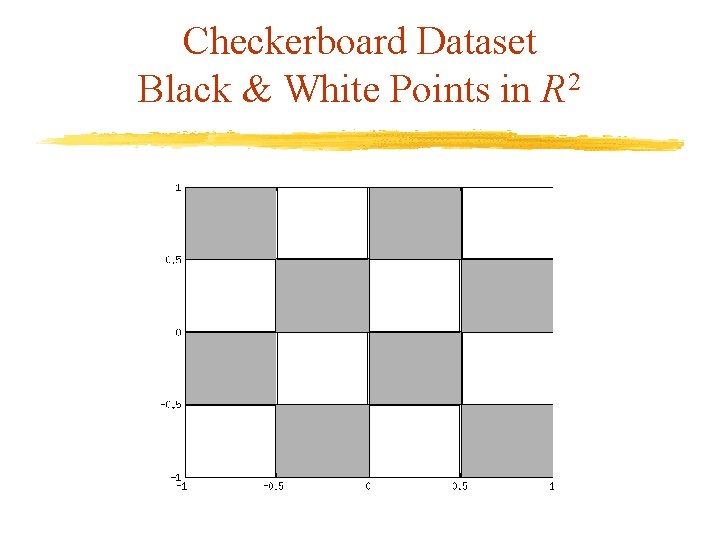

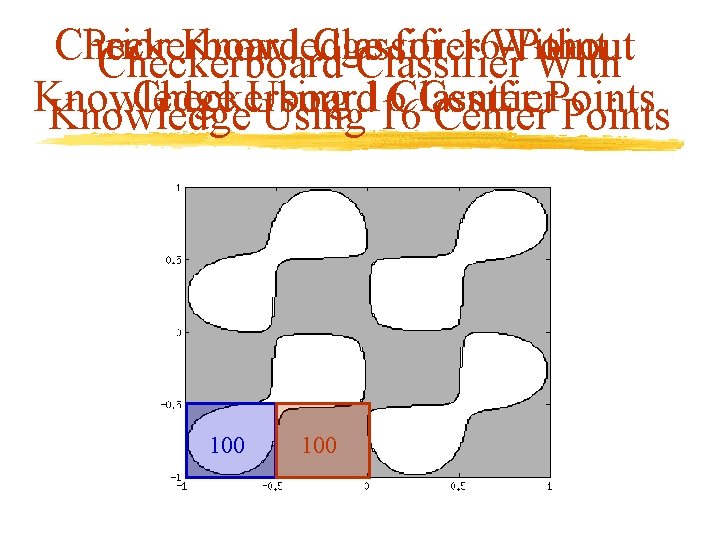

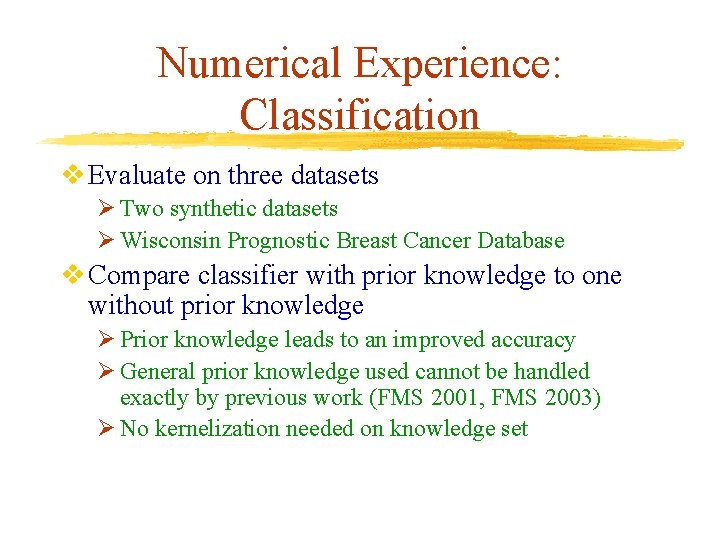

Numerical Experience: Classification

- Evaluate on three datasets
  - Two synthetic datasets
  - Wisconsin Prognostic Breast Cancer Database
- Compare classifier with prior knowledge to one without prior knowledge
  - Prior knowledge leads to an improved accuracy
  - General prior knowledge used cannot be handled exactly by previous work (FMS 2001, FMS 2003)
  - No kernelization needed on knowledge set

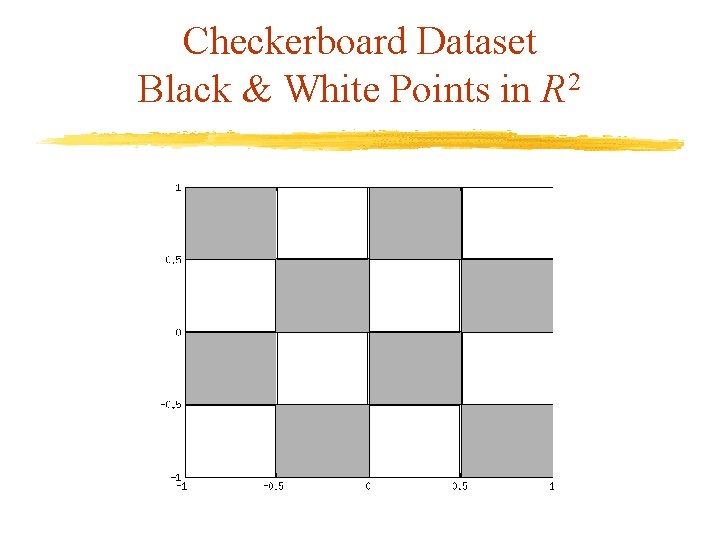

Checkerboard Dataset: Black & White Points in $R^2$

[Figure panels: Prior Knowledge for 16-Point Checkerboard Classifier; Checkerboard Classifier Without Knowledge Using 16 Center Points; Checkerboard Classifier With Knowledge Using 16 Center Points]

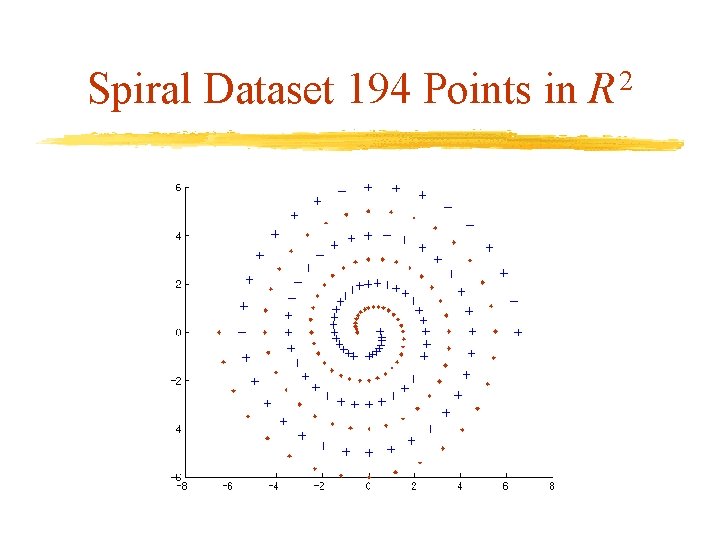

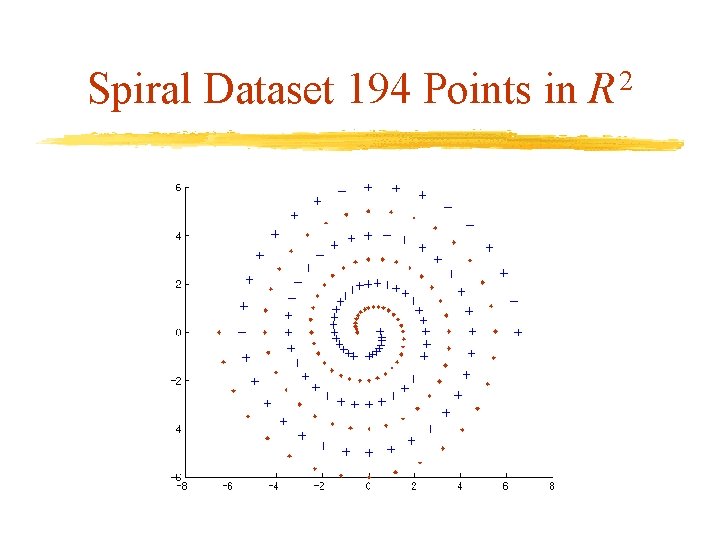

Spiral Dataset: 194 Points in $R^2$

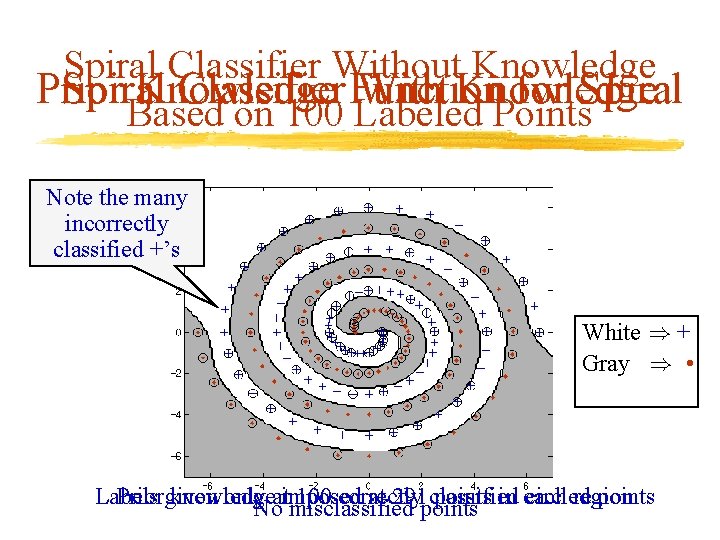

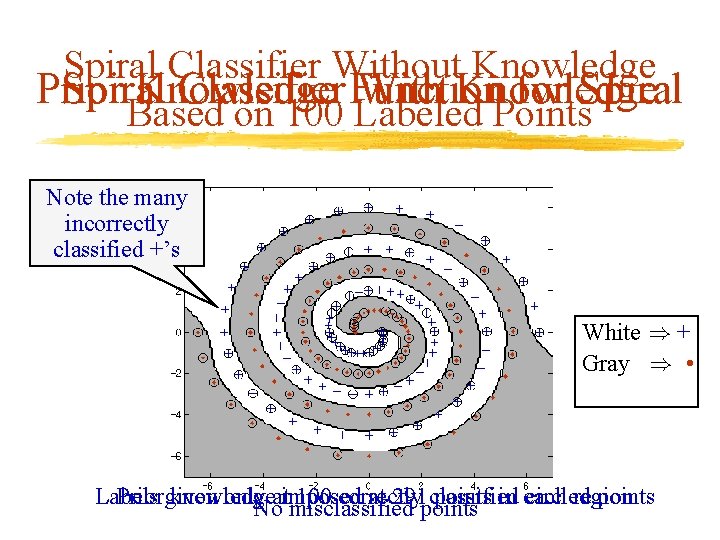

[Figure panels: Spiral Classifier Without Knowledge Based on 100 Labeled Points; Prior Knowledge Function for Spiral; Spiral Classifier With Knowledge]

- White $\Rightarrow$ +, Gray $\Rightarrow$ •
- Note the many incorrectly classified +'s
- Labels given only at 100 correctly classified circled points
- Prior knowledge imposed at 291 points in each region
- No misclassified points

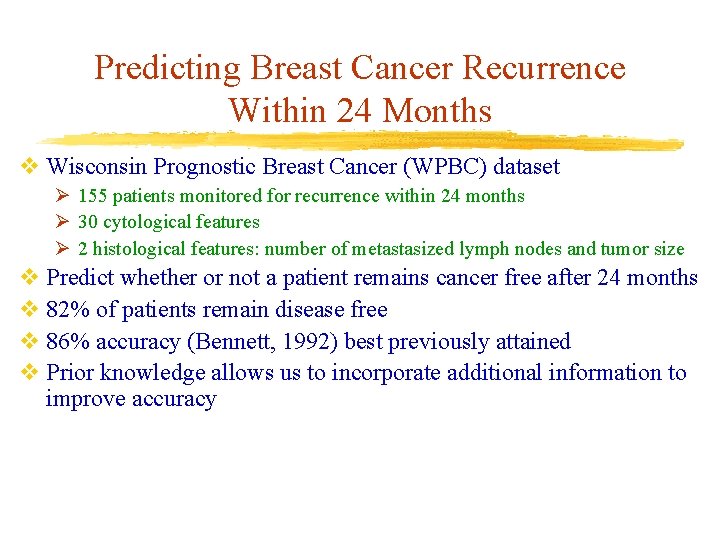

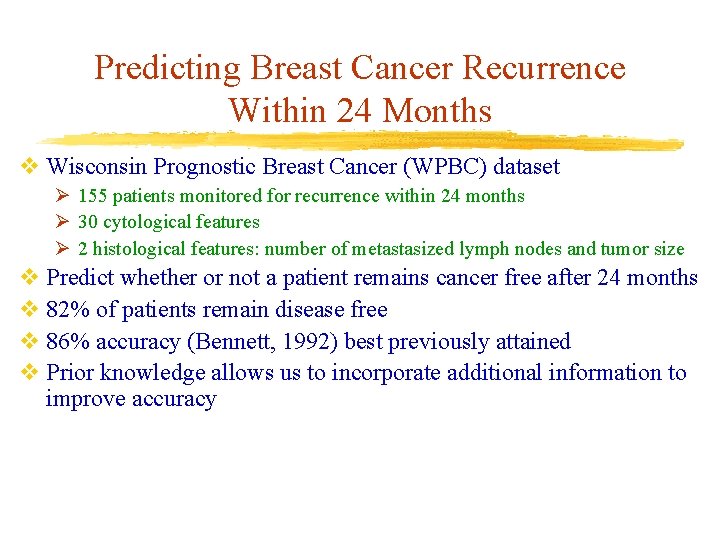

Predicting Breast Cancer Recurrence Within 24 Months

- Wisconsin Prognostic Breast Cancer (WPBC) dataset
  - 155 patients monitored for recurrence within 24 months
  - 30 cytological features
  - 2 histological features: number of metastasized lymph nodes and tumor size
- Predict whether or not a patient remains cancer free after 24 months
  - 82% of patients remain disease free
  - 86% accuracy (Bennett, 1992) best previously attained
  - Prior knowledge allows us to incorporate additional information to improve accuracy

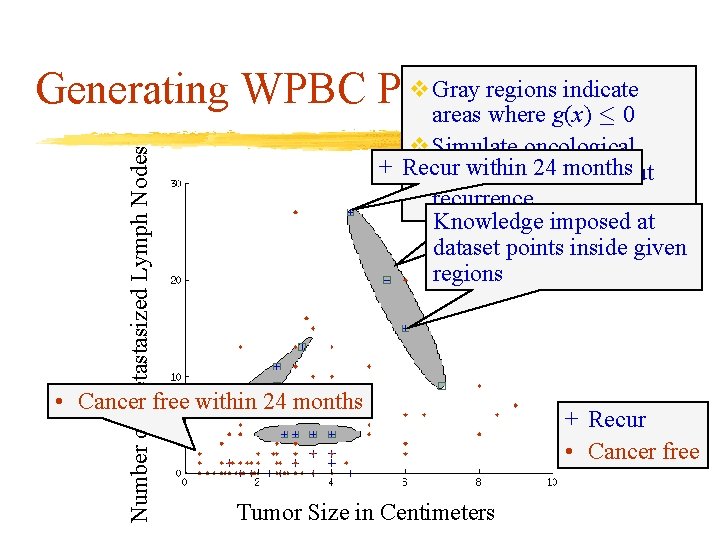

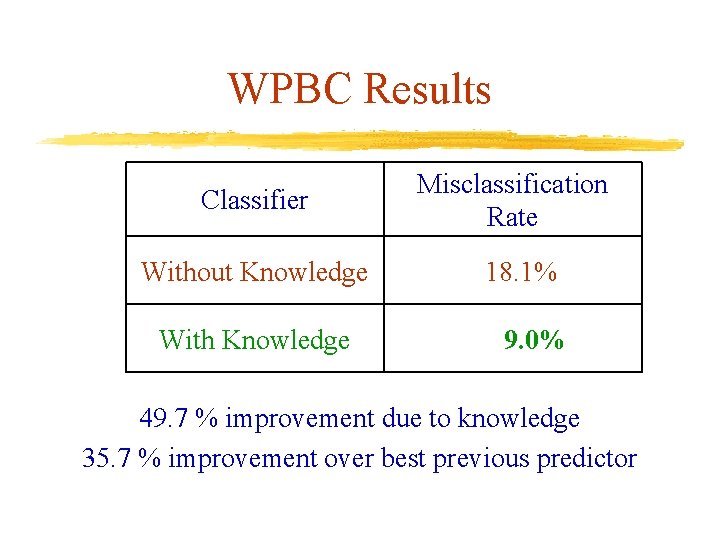

Generating WPBC Prior Knowledge

[Figure: number of metastasized lymph nodes versus tumor size in centimeters; + recur within 24 months, • cancer free within 24 months]

- Gray regions indicate areas where $g(x) \le 0$
- Simulate oncological surgeon's advice about recurrence
- Knowledge imposed at dataset points inside given regions

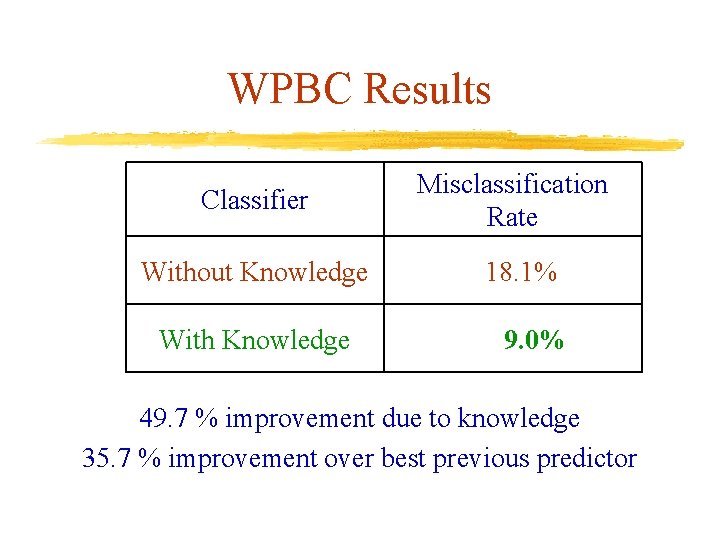

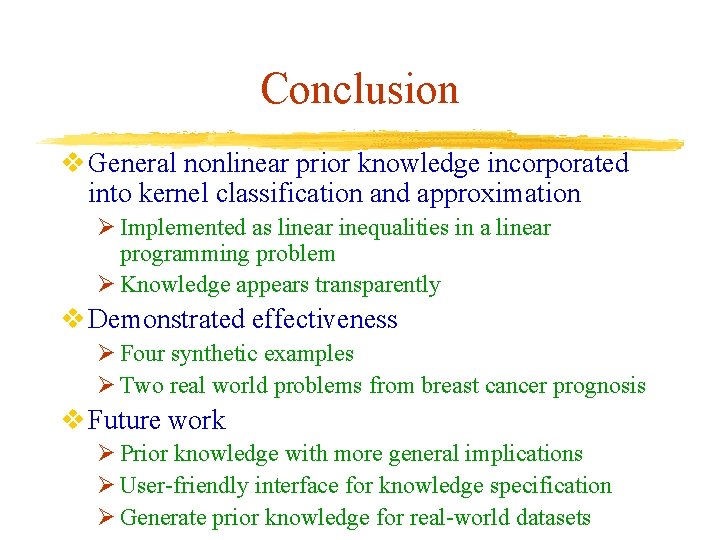

WPBC Results

| Classifier | Misclassification Rate |
| --- | --- |
| Without Knowledge | 18.1% |
| With Knowledge | 9.0% |

- 49.7% improvement due to knowledge
- 35.7% improvement over best previous predictor

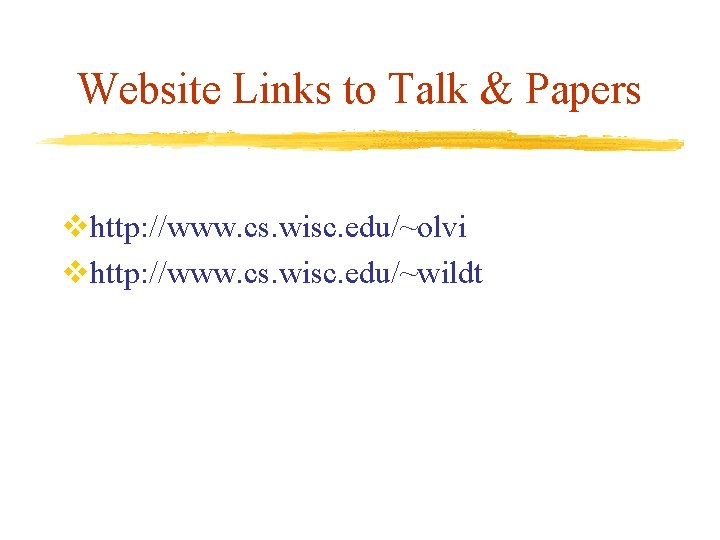

Conclusion

- General nonlinear prior knowledge incorporated into kernel classification and approximation
  - Implemented as linear inequalities in a linear programming problem
  - Knowledge appears transparently
- Demonstrated effectiveness
  - Four synthetic examples
  - Two real-world problems from breast cancer prognosis
- Future work
  - Prior knowledge with more general implications
  - User-friendly interface for knowledge specification
  - Generate prior knowledge for real-world datasets

Website Links to Talk & Papers

- http://www.cs.wisc.edu/~olvi
- http://www.cs.wisc.edu/~wildt