Introduction to NLP: TAG and the Chomsky Hierarchy

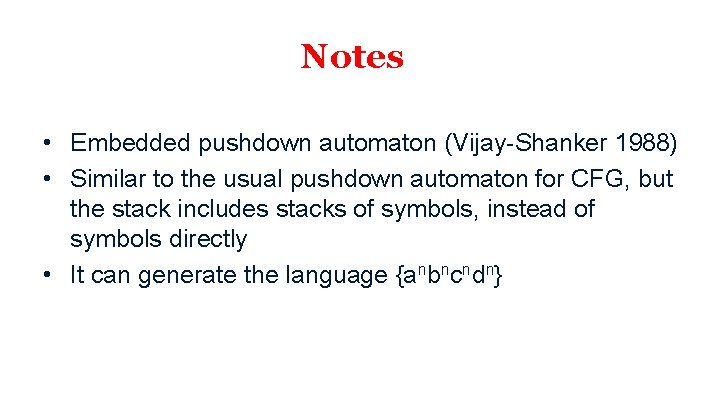

- Slides: 22

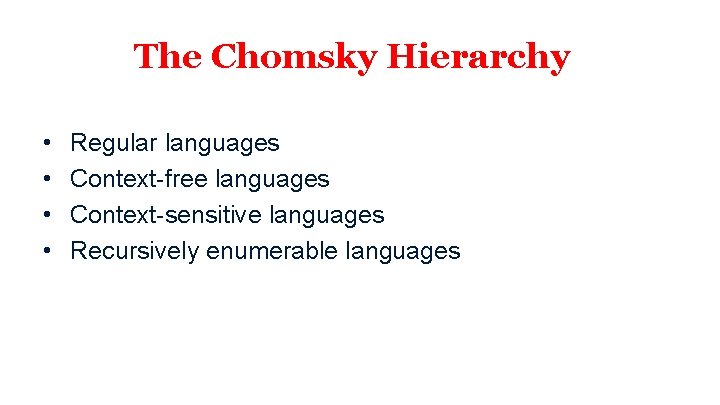

The Chomsky Hierarchy

- Regular languages
- Context-free languages
- Context-sensitive languages
- Recursively enumerable languages

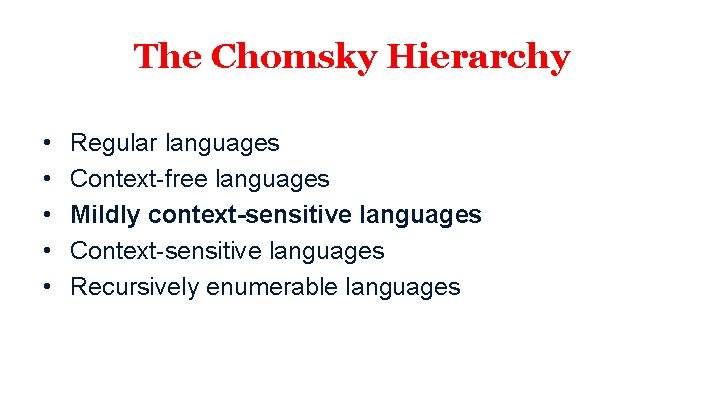

The Chomsky Hierarchy

- Regular languages
- Context-free languages
- Mildly context-sensitive languages
- Context-sensitive languages
- Recursively enumerable languages

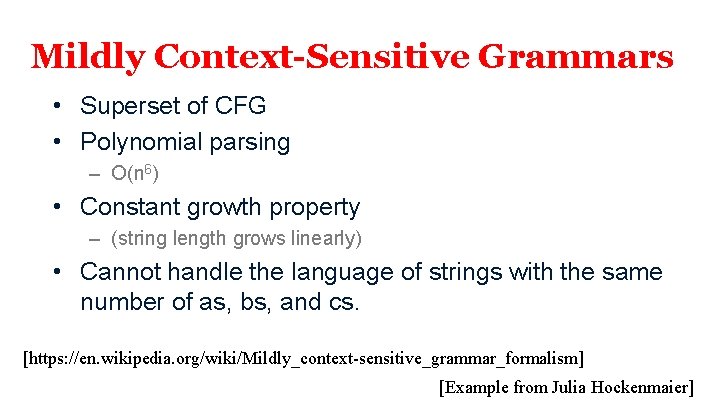

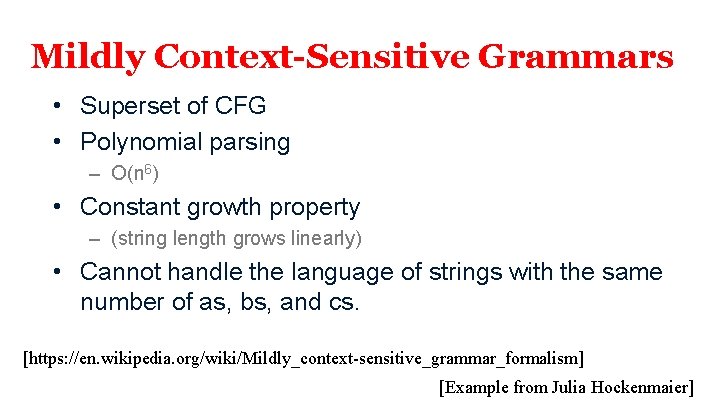

Mildly Context-Sensitive Grammars

- Superset of CFG
- Polynomial-time parsing, e.g. O(n^6) for TAG
- Constant growth property (string length grows linearly)
- Cannot handle the language of strings with the same number of a's, b's, and c's in any order (the MIX language)

[https://en.wikipedia.org/wiki/Mildly_context-sensitive_grammar_formalism] [Example from Julia Hockenmaier]
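
The last restriction is about strings with equal counts of a, b, and c in any order (often called MIX), which differs from the ordered language a^n b^n c^n that TAG can generate. A minimal sketch of the two membership tests, with hypothetical function names chosen only for illustration:

```python
from collections import Counter


def in_mix(s: str) -> bool:
    """True if s uses only a, b, c and contains equally many of each, in any order."""
    counts = Counter(s)
    return set(counts) <= {"a", "b", "c"} and counts["a"] == counts["b"] == counts["c"]


def in_anbncn(s: str) -> bool:
    """True if s is exactly n a's, then n b's, then n c's."""
    n, remainder = divmod(len(s), 3)
    return remainder == 0 and s == "a" * n + "b" * n + "c" * n


for s in ["aabbcc", "cab", "aab"]:
    print(s, in_mix(s), in_anbncn(s))
```

Every string in a^n b^n c^n is also in MIX, but a scrambled string such as `cab` is only in MIX; the free word order is what the slide's restriction refers to.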

Other Formalisms

- Tree Substitution Grammar (TSG)
  - Terminals generate entire tree fragments
  - TSG and CFG are weakly equivalent (they generate the same string languages)

Mildly Context-Sensitive Grammars

- More powerful than TSG
- Examples:
  - Tree Adjoining Grammar (TAG)
  - Combinatory Categorial Grammar (CCG)

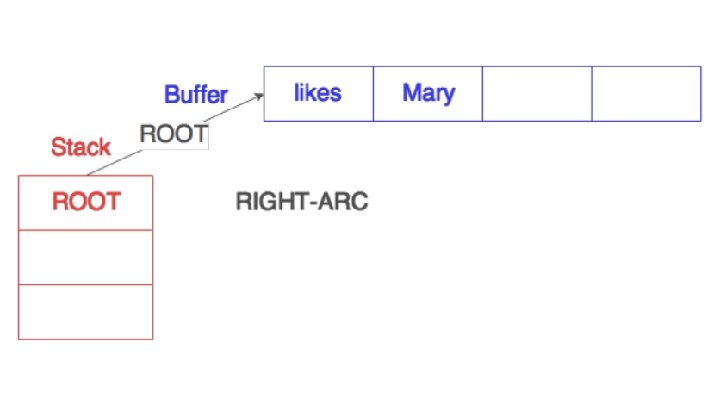

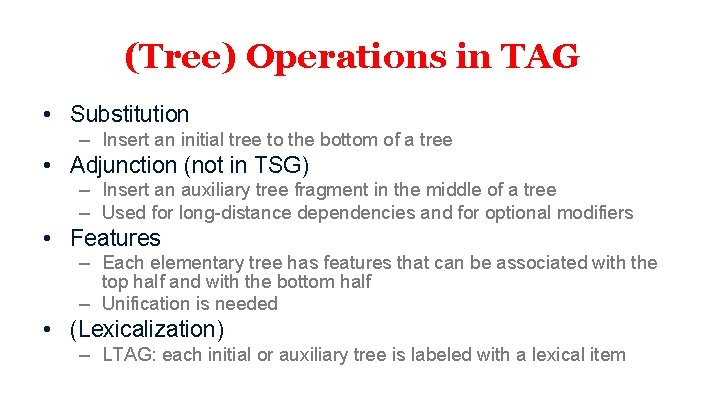

(Tree) Operations in TAG

- Substitution
  - Insert an initial tree at the bottom (frontier) of a tree
- Adjunction (not in TSG)
  - Insert an auxiliary tree fragment in the middle of a tree
  - Used for long-distance dependencies and for optional modifiers
- Features
  - Each elementary tree has features that can be associated with the top half and with the bottom half
  - Unification is needed
- (Lexicalization)
  - LTAG: each initial or auxiliary tree is labeled with a lexical item

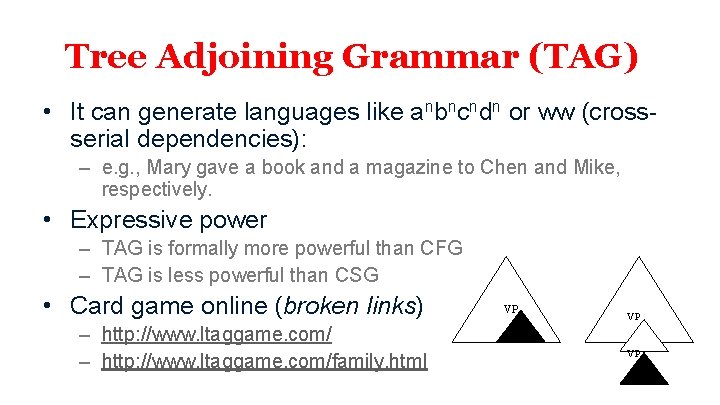

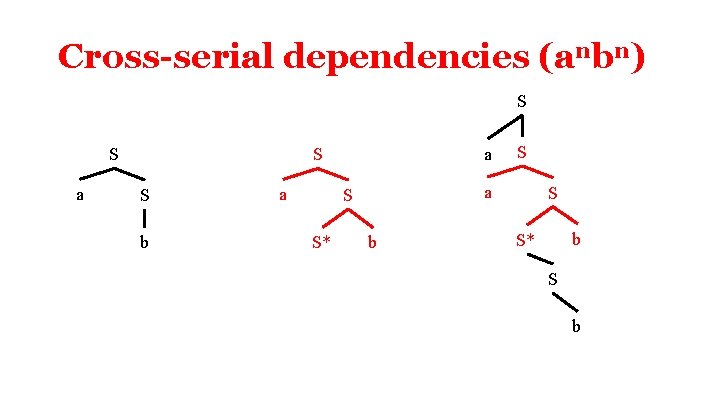

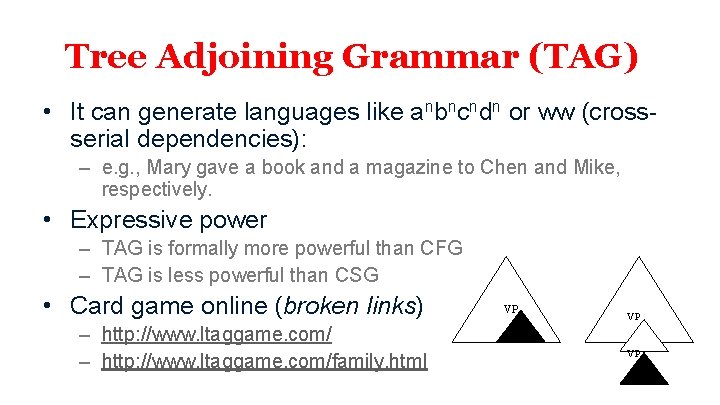

Tree Adjoining Grammar (TAG)

- It can generate languages like a^n b^n c^n d^n or ww (cross-serial dependencies):
  - e.g., Mary gave a book and a magazine to Chen and Mike, respectively.
- Expressive power
  - TAG is formally more powerful than CFG
  - TAG is less powerful than CSG
- Card game online (broken links)
  - http://www.ltaggame.com/family.html
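
To make the two example languages concrete, here is a small sketch of direct membership tests. These are plain string checks, not a TAG parser, and the function names are hypothetical.

```python
def in_anbncndn(s: str) -> bool:
    """True if s is n a's, n b's, n c's, then n d's."""
    n, remainder = divmod(len(s), 4)
    return remainder == 0 and s == "a" * n + "b" * n + "c" * n + "d" * n


def in_copy_language(s: str) -> bool:
    """True if s has the form ww: the second half repeats the first."""
    half, remainder = divmod(len(s), 2)
    return remainder == 0 and s[:half] == s[half:]


def cross_serial_pairs(s: str):
    """For a string ww, pair position i with position i + |w|."""
    half = len(s) // 2
    return [(i, i + half) for i in range(half)]


print(in_anbncndn("aabbccdd"), in_copy_language("abab"))
print(cross_serial_pairs("abab"))
```

The pairs returned for ww cross each other rather than nest, which mirrors the "respectively" sentence: the first object goes with the first recipient and the second with the second.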

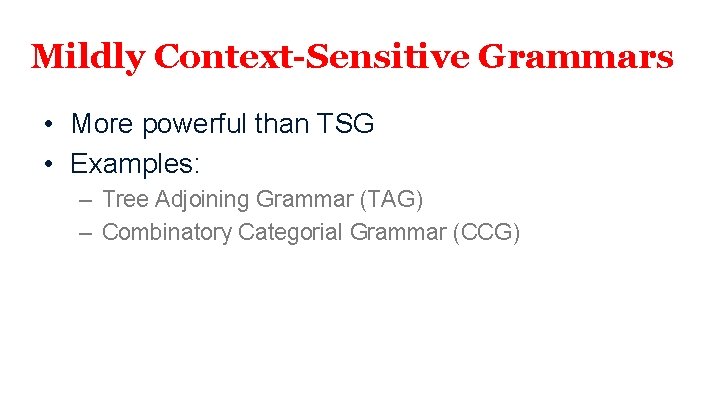

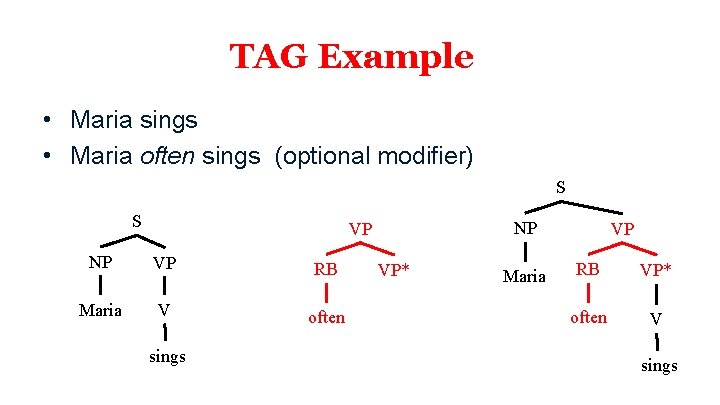

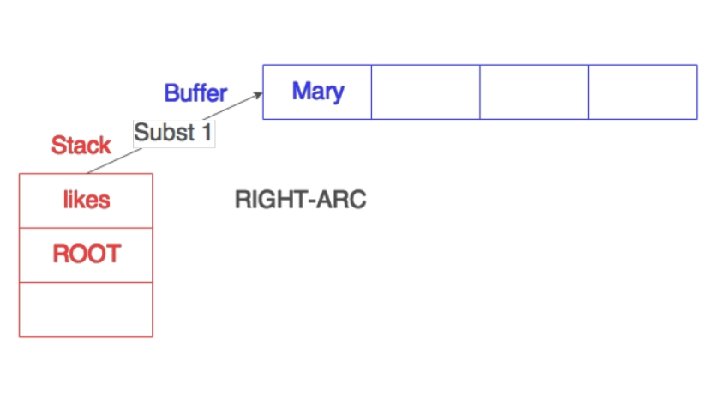

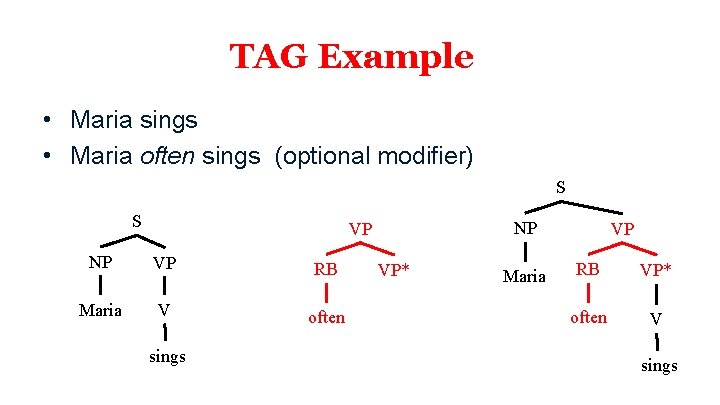

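TAG Example

- Maria sings
- Maria often sings (optional modifier)

Trees, in bracket notation:

- Initial tree: `[S [NP Maria] [VP [V sings]]]`
- Auxiliary tree: `[VP [RB often] VP*]`
- Derived tree after adjunction: `[S [NP Maria] [VP [RB often] [VP [V sings]]]]`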
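
The example can be sketched in code to show how substitution and adjunction rewrite a tree. This is a minimal illustration, not a TAG implementation: the `Node` class and the helper names are hypothetical, features and adjunction constraints are ignored, and treating `Maria` as a separately substituted NP tree is an assumption made here to exercise both operations.

```python
import copy
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    label: str
    children: List["Node"] = field(default_factory=list)
    subst: bool = False  # substitution site, e.g. NP with a down arrow
    foot: bool = False   # foot node of an auxiliary tree, e.g. VP*


def find(node, pred):
    """Return the first node (pre-order) satisfying pred, or None."""
    if pred(node):
        return node
    for child in node.children:
        hit = find(child, pred)
        if hit is not None:
            return hit
    return None


def substitute(tree, initial):
    """Fill the first matching substitution site with a copy of an initial tree."""
    site = find(tree, lambda n: n.subst and n.label == initial.label)
    if site is None:
        raise ValueError("no substitution site labeled " + initial.label)
    site.children = copy.deepcopy(initial.children)
    site.subst = False


def adjoin(target, aux):
    """Splice a copy of an auxiliary tree in at target; target's subtree moves to the foot."""
    aux = copy.deepcopy(aux)
    foot = find(aux, lambda n: n.foot)
    if foot is None or not (aux.label == target.label == foot.label):
        raise ValueError("root, foot, and target labels must match")
    foot.children = target.children
    foot.foot = False
    target.children = aux.children


def words(tree):
    """Read the words off the leaves, skipping unfilled sites and foot nodes."""
    if not tree.children:
        return "" if (tree.subst or tree.foot) else tree.label
    return " ".join(w for w in (words(c) for c in tree.children) if w)


# Elementary trees (assumed decomposition of the example).
sings = Node("S", [Node("NP", subst=True), Node("VP", [Node("V", [Node("sings")])])])
maria = Node("NP", [Node("Maria")])
often = Node("VP", [Node("RB", [Node("often")]), Node("VP", foot=True)])

substitute(sings, maria)
print(words(sings))  # Maria sings

adjoin(find(sings, lambda n: n.label == "VP"), often)
print(words(sings))  # Maria often sings
```

Adjunction is what makes the modifier optional: the sentence is complete before `often` is spliced in, and the original VP material ends up under the foot node afterwards.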

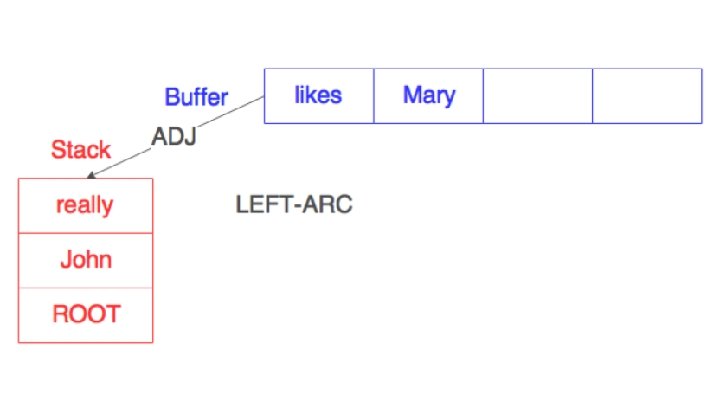

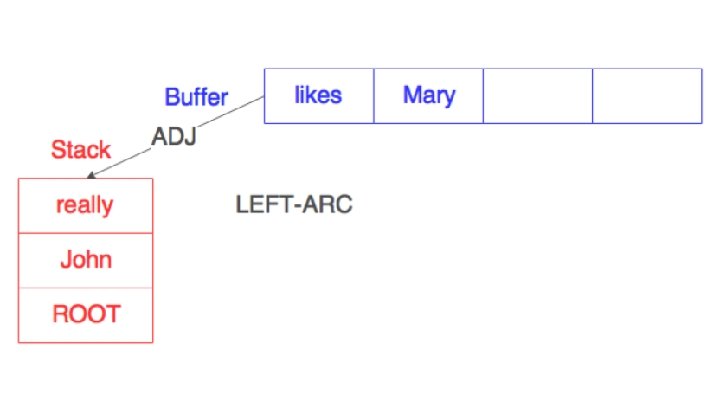

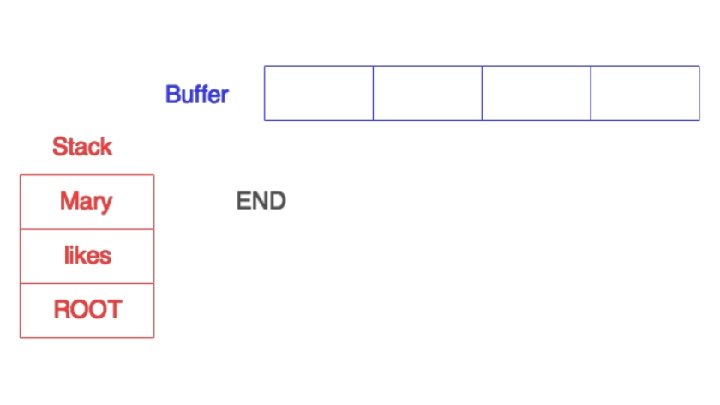

![example from Jungo Kasai](https://slidetodoc.com/presentation_image_h2/5ecf786b6d4892f229c68be67ab1e107/image-11.jpg)

[example from Jungo Kasai]

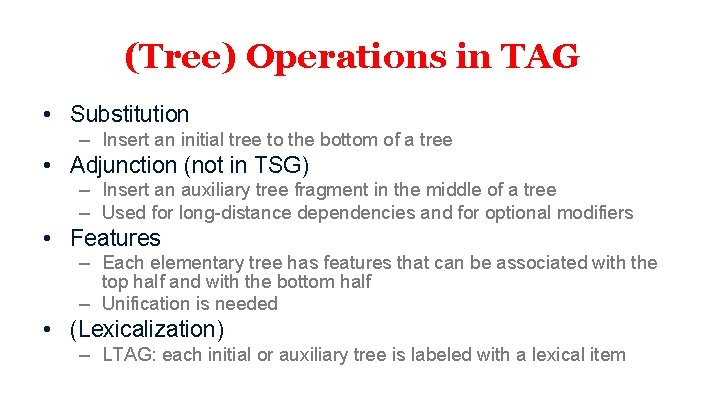

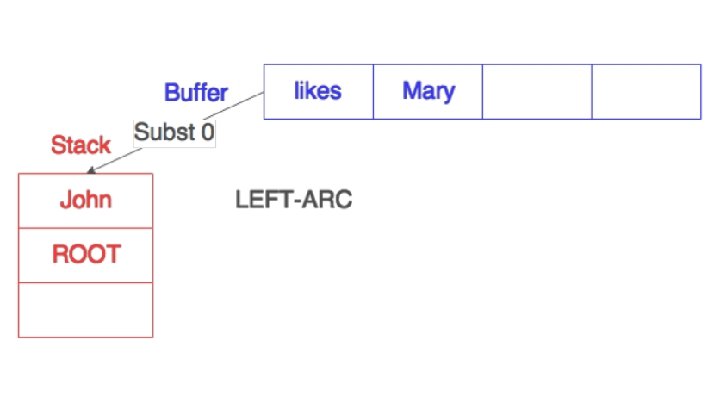

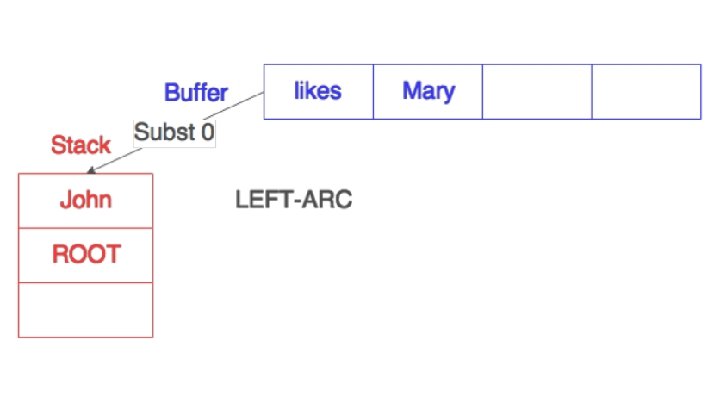

![example from Jungo Kasai](https://slidetodoc.com/presentation_image_h2/5ecf786b6d4892f229c68be67ab1e107/image-12.jpg)

[example from Jungo Kasai]

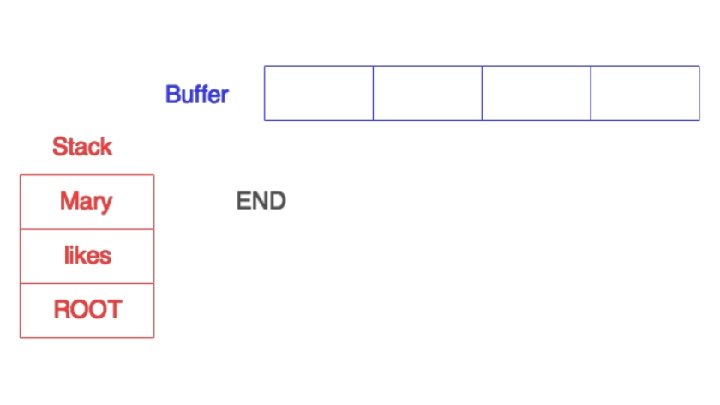

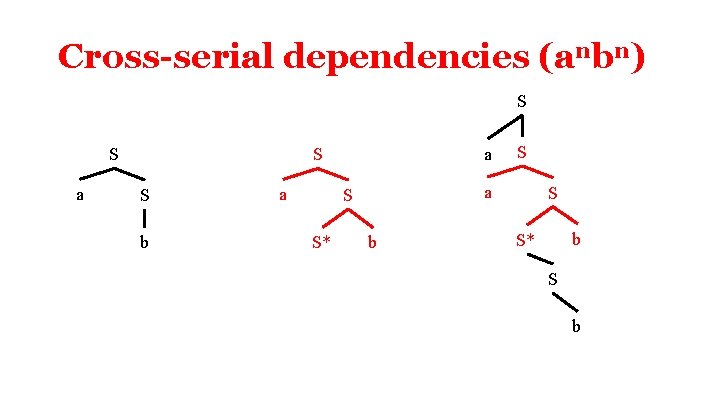

Cross-serial dependencies (a^n b^n)

[Figure: TAG trees built from S, a, and b, with foot node S*]

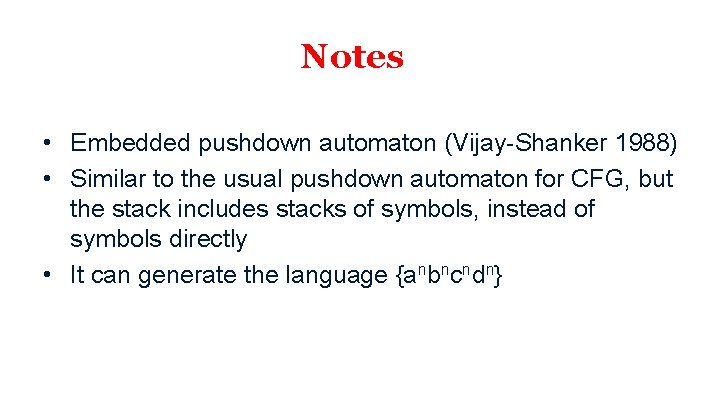

Notes

- Embedded pushdown automaton (Vijay-Shanker 1988)
- Similar to the usual pushdown automaton for CFG, but the stack holds stacks of symbols instead of symbols directly
- It can recognize the language {a^n b^n c^n d^n}
