NIST Blog PreTrack 14 Nov 2006 Blog Track

NIST Blog Pre-Track, 14 Nov 2006
Blog Track Open Task: Spam Blog Detection
Tim Finin
Pranam Kolari, Akshay Java, Tim Finin, Anupam Joshi, Justin Martineau, University of Maryland, Baltimore County
James Mayfield, Johns Hopkins University Applied Physics Laboratory
http://ebiquity.umbc.edu/paper/html/id/318/

Blogosphere Reputation at Stake!

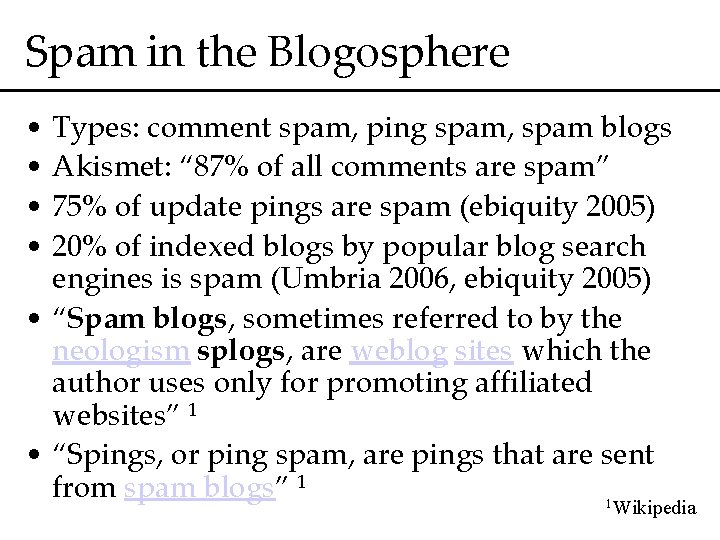

Spam in the Blogosphere
• Types: comment spam, ping spam, spam blogs
• Akismet: “87% of all comments are spam”
• 75% of update pings are spam (ebiquity 2005)
• 20% of the blogs indexed by popular blog search engines are spam (Umbria 2006, ebiquity 2005)
• “Spam blogs, sometimes referred to by the neologism splogs, are weblog sites which the author uses only for promoting affiliated websites” ¹
• “Spings, or ping spam, are pings that are sent from spam blogs” ¹
¹ Wikipedia

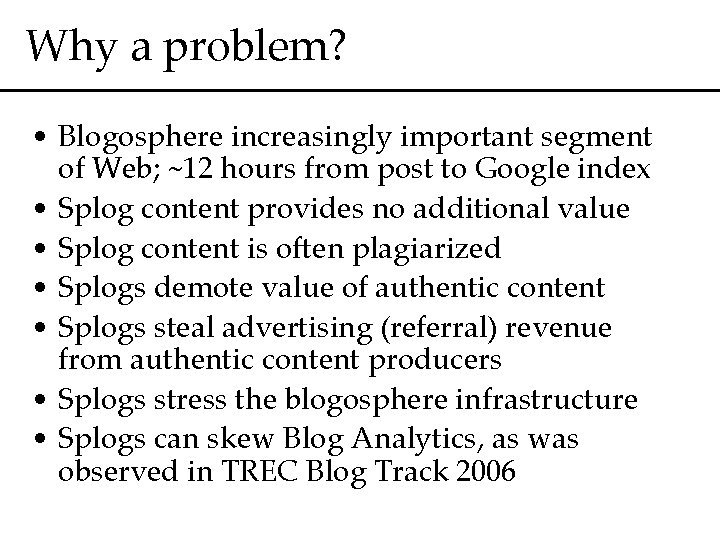

Why a problem?
• Blogosphere increasingly important segment of Web; ~12 hours from post to Google index
• Splog content provides no additional value
• Splog content is often plagiarized
• Splogs demote value of authentic content
• Splogs steal advertising (referral) revenue from authentic content producers
• Splogs stress the blogosphere infrastructure
• Splogs can skew Blog Analytics, as was observed in TREC Blog Track 2006

Nature of Splogs in TREC 2006
• Around 83K identifiable blog home-pages in the collection, with 3.2M permalinks
• 81K blogs could be processed
• We use splog detection models developed on blog home-pages; 87% accuracy
• We identified 13,542 splogs
• Blacklisted 543K permalinks from these splogs
• ~16% of the entire collection
• ~17% splog posts injected into TREC dataset ¹
¹ The TREC Blog 06 Collection: Creating and Analyzing a Blog Test Collection, C. Macdonald, I. Ounis
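The percentages on this slide can be checked against the counts it quotes. The sketch below uses only the slide's rounded figures; the variable names are illustrative, and the slide does not say which ratio the "~16%" refers to, so both candidate ratios are shown.

```python
# Rounded figures quoted on the slide (TREC Blog 2006 collection).
blogs_total = 83_000              # identifiable blog home-pages
blogs_processed = 81_000          # blogs that could be processed
splogs = 13_542                   # blogs flagged as splogs
permalinks_total = 3_200_000      # permalinks in the collection
permalinks_blacklisted = 543_000  # permalinks belonging to flagged splogs


def share(part, whole):
    """Return part/whole as a percentage rounded to one decimal place."""
    return round(100 * part / whole, 1)


splog_share_all = share(splogs, blogs_total)
splog_share_processed = share(splogs, blogs_processed)
permalink_share = share(permalinks_blacklisted, permalinks_total)

print("splogs / all home-pages:       ", splog_share_all, "%")
print("splogs / processed blogs:      ", splog_share_processed, "%")
print("blacklisted / all permalinks:  ", permalink_share, "%")
```

All three ratios land between 16% and 17%, which is consistent with the "~16%" and "~17%" bullets.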

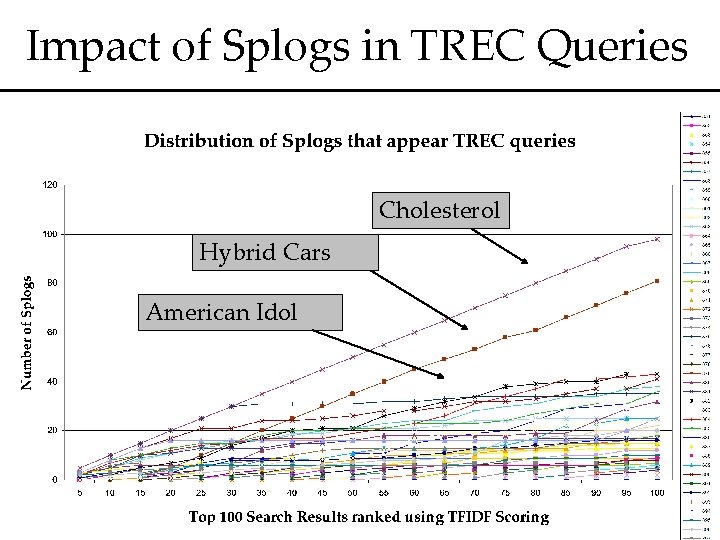

Impact of Splogs in TREC Queries
• Cholesterol
• Hybrid Cars
• American Idol

Splog Detection Task Proposal
• Motivation
– Detecting and eliminating spam is an essential requirement for any blog analysis
– Splog detection has characteristics that set it apart from e-mail and web spam detection
• Constraint
– Simulate how blog search systems operate
• Task Statement
– Is an input permalink (post) spam?

Relation to E-mail Spam Detection
• TREC has an E-mail Spam Classification Task
• Similar in
– Fast online spam detection
• Different in
– Nature of spamming: links, RSS feeds, web graph, metadata
– Users targeted indirectly through search engines, e.g. “N1ST” not relevant for “NIST” query

Relation to Web Spam Detection
• TREC does not have a web spam track
• Similar in
– Spamming web link structure
• Different in
– Coverage: Blog Analytics Engines don’t look beyond blogosphere
– Speed of detection is important, 150K posts/hour
– Presence of structured text through RSS feeds presents new opportunities, and challenges

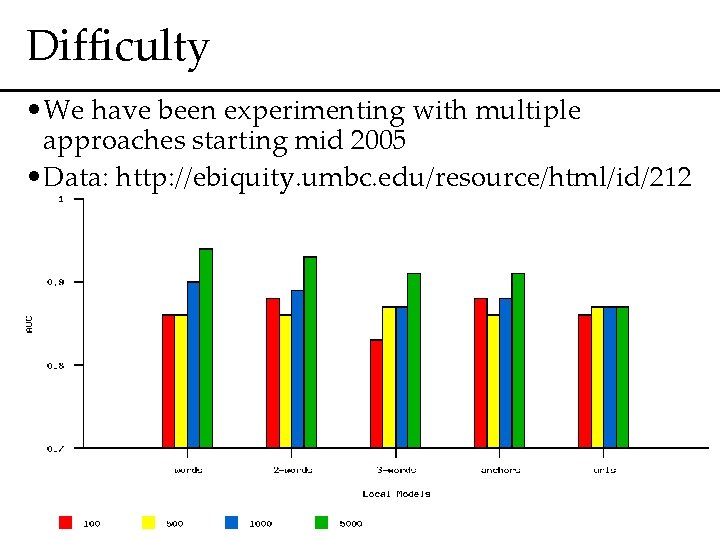

Difficulty
• We have been experimenting with multiple approaches starting mid 2005
• Data: http://ebiquity.umbc.edu/resource/html/id/212

Difficulty
• Evolving spamming techniques and splog creation genres
• Most basic spam techniques
– Generate content by stuffing key dictionary words
– Generate links to affiliates, through link dumps on blogrolls, linkrolls or after post content
• Evolving spam techniques
– Scrape contextually similar content to generate posts
– RSS hijacking
– Aggregation software, e.g. Planet X
– Intersperse links randomly
– Make link placement meaningful
– Add spam comments and then ping. Repeat.
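To make the most basic technique concrete, here is a minimal sketch of a keyword-density feature that keyword stuffing tends to inflate. This is an illustration only, not the detection model used by the authors; the function name, the tokenizer, and the keyword list are all assumptions.

```python
import re


def keyword_density(text, keywords):
    """Fraction of word tokens in `text` that appear in `keywords`.

    Hypothetical feature for illustration; real splog detectors combine
    many signals and this one alone is easy to evade.
    """
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    if not tokens:
        return 0.0
    hits = sum(1 for token in tokens if token in keywords)
    return hits / len(tokens)


# Assumed keyword list, chosen only for this example.
spam_keywords = {"casino", "coupon"}

stuffed = keyword_density("casino casino bonus casino coupon codes casino", spam_keywords)
normal = keyword_density("We visited the museum on Sunday", spam_keywords)
print(stuffed, normal)
```

The evolving techniques listed on the slide (scraping, weaving links into real text) are designed precisely to keep a feature like this one low, which is part of why the task is difficult.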

Task Details - Dataset Creation
• Similar to TREC Blog 2006, a collection of feeds, blog home-pages and permalinks
• View dataset D as two sets
– Dbase, Dtest
• Dbase to span (n-x) days, and Dtest to span the remaining x days, for x ≤ 1
• D could be collected as a combination of
– D as collected in 2006
– A sample of pings from a ping server over the period in which D is collected
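The Dbase/Dtest division can be sketched as a cut on post timestamps. The code below is a minimal illustration under assumptions: posts are represented as (timestamp, URL) pairs, the collection window ends at the latest timestamp, and Dtest is taken to be the final x days. The function name and the example URLs are hypothetical.

```python
from datetime import datetime, timedelta


def split_collection(posts, x_days=1.0):
    """Split (timestamp, url) pairs into (d_base, d_test).

    d_test holds posts from the final `x_days` of the collection window;
    d_base holds everything at or before that cutoff.
    """
    if not posts:
        return [], []
    end = max(timestamp for timestamp, _ in posts)
    cutoff = end - timedelta(days=x_days)
    d_base = [post for post in posts if post[0] <= cutoff]
    d_test = [post for post in posts if post[0] > cutoff]
    return d_base, d_test


# Five hypothetical posts, one per day at noon.
posts = [
    (datetime(2006, 11, day, 12, 0), f"http://example.org/post-{day}")
    for day in range(1, 6)
]
d_base, d_test = split_collection(posts, x_days=1.0)
print(len(d_base), "base posts;", len(d_test), "test posts")
```

Cutting on time rather than sampling at random keeps the test set strictly later than the training data, which matches the stated constraint of simulating how blog search systems operate.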

Task Details - Assessment
• Assessors classify each spam post into one or more classes, based on the kind of spam that the post, or the blog hosting it, features
– Non-blog
– Keyword-stuffed
– Post-stitching
– Post-plagiarism
– Post-weaving
– Blog/link-roll spam
• Each assessment typically takes 1-2 minutes
• Detailed assessment will enable participants to identify classes they handle well and where they can improve
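One way a participant might use the per-class assessments is to compute recall separately for each spam class. The slides do not specify a metric, so per-class recall is an assumption here, as are the function name and the data layout (a mapping from post ID to its set of assessed classes, plus the set of posts a system flagged).

```python
from collections import defaultdict

# The six classes named on the slide.
SPAM_CLASSES = (
    "non-blog",
    "keyword-stuffed",
    "post-stitching",
    "post-plagiarism",
    "post-weaving",
    "blog/link-roll spam",
)


def recall_by_class(assessments, flagged):
    """For each spam class, the fraction of assessed posts the system flagged.

    `assessments` maps post id -> set of spam classes (empty set = not spam).
    `flagged` is the set of post ids the system called spam.
    """
    hits = defaultdict(int)
    totals = defaultdict(int)
    for post, classes in assessments.items():
        for spam_class in classes:
            totals[spam_class] += 1
            if post in flagged:
                hits[spam_class] += 1
    return {spam_class: hits[spam_class] / totals[spam_class] for spam_class in totals}


# Hypothetical assessments; a post may carry more than one class.
assessments = {
    "p1": {"keyword-stuffed"},
    "p2": {"post-weaving", "blog/link-roll spam"},
    "p3": {"post-weaving"},
    "p4": set(),
}
flagged = {"p1", "p2"}
per_class = recall_by_class(assessments, flagged)
print(per_class)
```

In this toy example the system catches every keyword-stuffed post but only half of the post-weaving ones, which is the kind of breakdown the detailed assessment is meant to expose.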

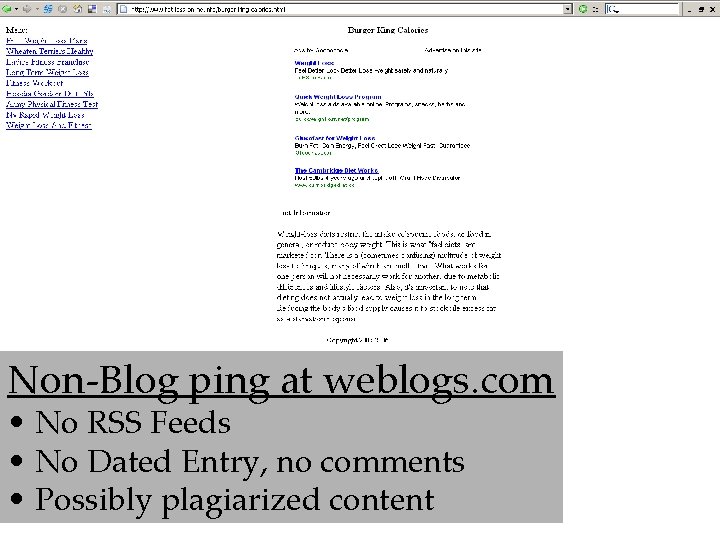

Non-Blog ping at weblogs.com
• No RSS Feeds
• No Dated Entry, no comments
• Possibly plagiarized content

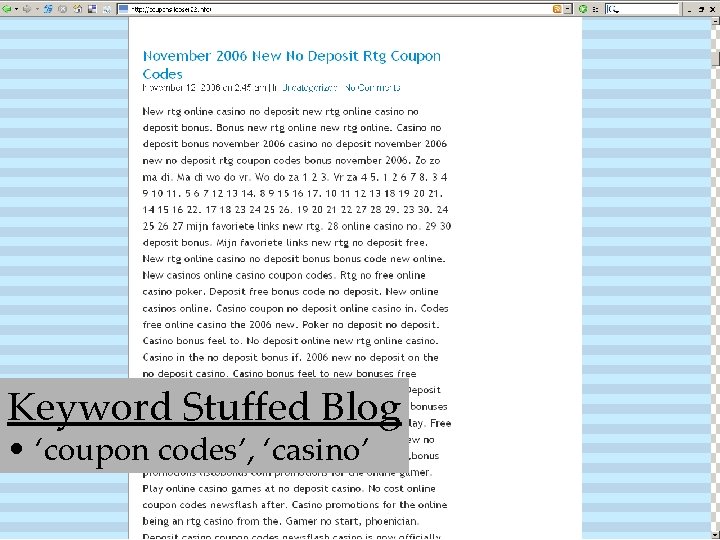

Keyword Stuffed Blog
• ‘coupon codes’, ‘casino’

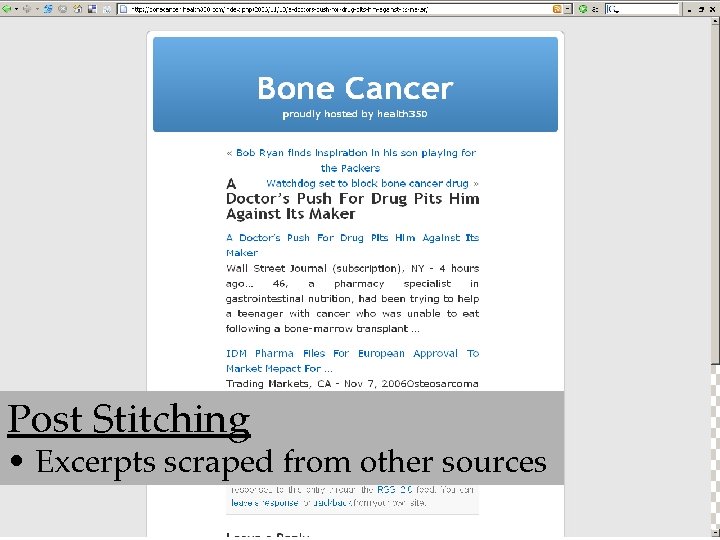

Post Stitching
• Excerpts scraped from other sources

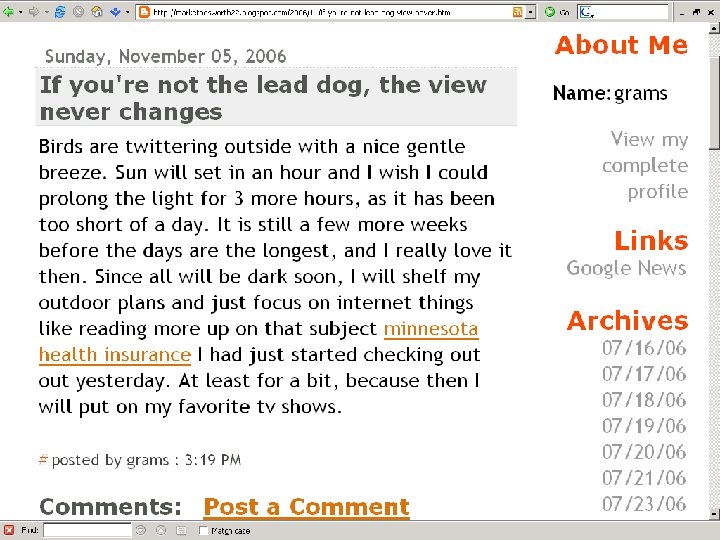

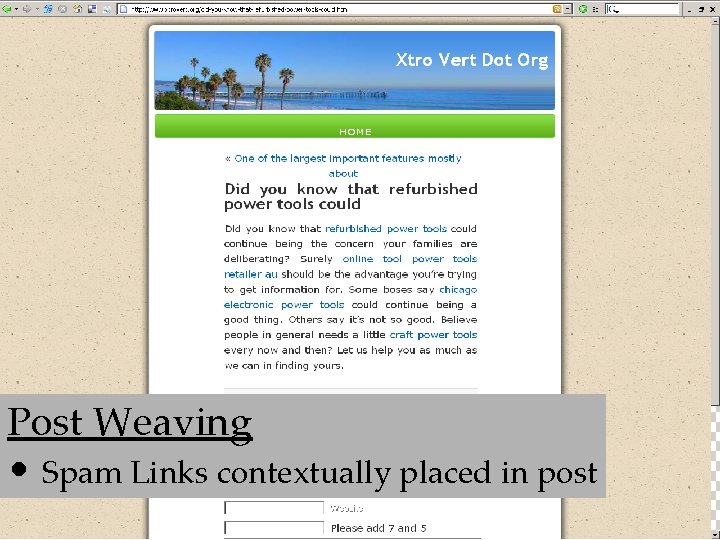

Post Weaving
• Spam Links contextually placed in post

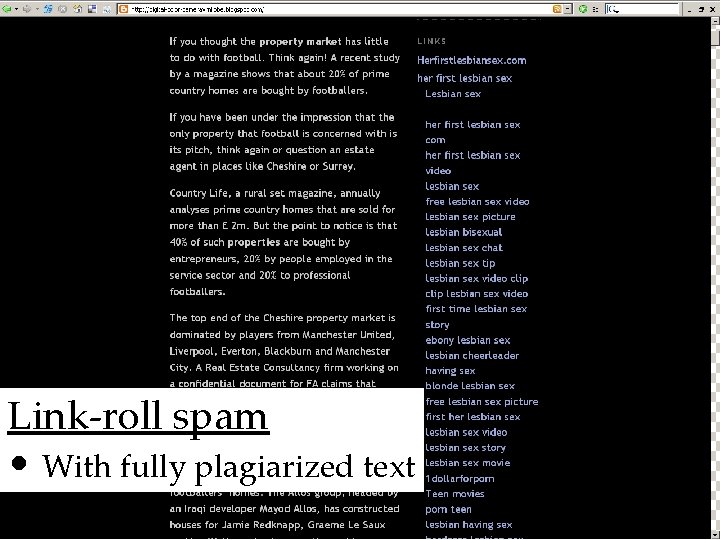

Link-roll spam
• With fully plagiarized text

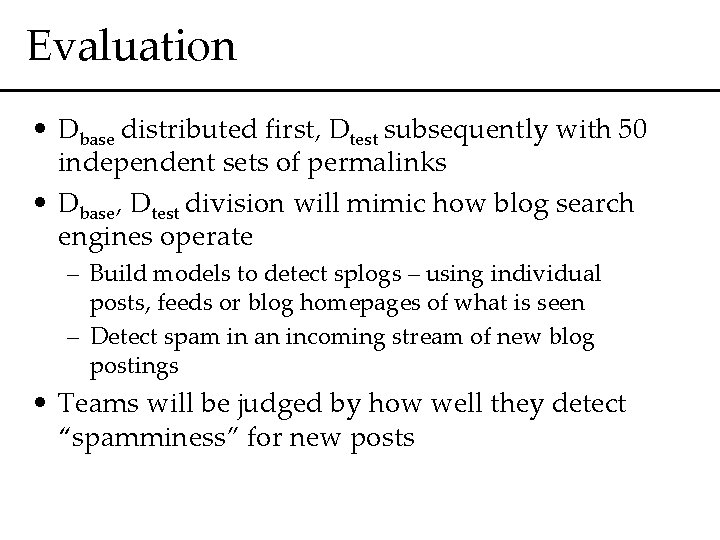

Evaluation
• Dbase distributed first, Dtest subsequently with 50 independent sets of permalinks
• Dbase, Dtest division will mimic how blog search engines operate
– Build models to detect splogs, using the individual posts, feeds or blog home-pages seen so far
– Detect spam in an incoming stream of new blog postings
• Teams will be judged by how well they detect “spamminess” for new posts

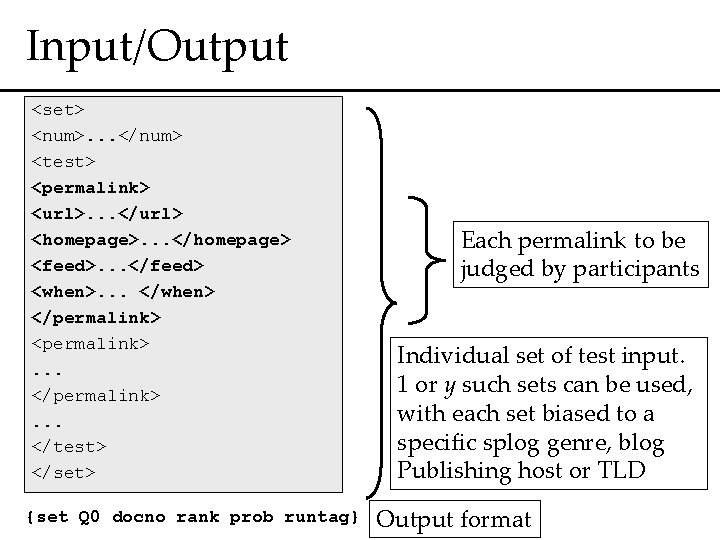

Input/Output
• Input: an individual set of test input. 1 or y such sets can be used, with each set biased to a specific splog genre, blog publishing host or TLD
<set> <num>...</num> <test> <permalink> <url>...</url> <homepage>...</homepage> <feed>...</feed> <when>...</when> </permalink> <permalink>... </test> </set>
• Each permalink is to be judged by participants
• Output format: {set Q0 docno rank prob runtag}
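The input and output formats above can be connected with a short sketch that reads one test set and writes one result line per permalink. Several details are assumptions, since the slide elides them: the sample XML values, the `<when>` timestamp format, the use of the permalink URL as `docno`, ranking by descending spam probability, and the placeholder scorer `toy_score`.

```python
import xml.etree.ElementTree as ET

# Hypothetical test set; element names follow the slide, values are invented.
SAMPLE = """<set>
  <num>1</num>
  <test>
    <permalink>
      <url>http://example.org/a/post-1</url>
      <homepage>http://example.org/a/</homepage>
      <feed>http://example.org/a/feed</feed>
      <when>2006-11-14T10:00:00</when>
    </permalink>
    <permalink>
      <url>http://example.org/casino-deals/post-7</url>
      <homepage>http://example.org/casino-deals/</homepage>
      <feed>http://example.org/casino-deals/feed</feed>
      <when>2006-11-14T10:05:00</when>
    </permalink>
  </test>
</set>"""


def toy_score(url):
    """Placeholder spam probability; stands in for a real splog model."""
    return 0.9 if "casino" in url else 0.1


def format_run(xml_text, score, runtag):
    """Return output lines in the form: set Q0 docno rank prob runtag."""
    root = ET.fromstring(xml_text)
    set_id = root.findtext("num").strip()
    urls = [p.findtext("url").strip() for p in root.iter("permalink")]
    # Most spam-like first; the URL serves as docno (an assumption).
    scored = sorted(((score(u), u) for u in urls), reverse=True)
    return [
        f"{set_id} Q0 {url} {rank} {prob:.4f} {runtag}"
        for rank, (prob, url) in enumerate(scored, start=1)
    ]


lines = format_run(SAMPLE, toy_score, "demo")
print("\n".join(lines))
```

The six-column layout mirrors the usual TREC run format, so existing evaluation tooling could plausibly be reused if the task adopted it.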

Summary
• Spam blogs present a major challenge to the quality of blog mining/analytics
• Splog detection is different from spam detection in other communication platforms
• Development of a TREC task will help further the state of the art
• Task requirements can be easily aligned with the existing task of opinion identification
