NFSv4 and Linux

Peter Honeyman
Linux Scalability Project
Center for Information Technology Integration
University of Michigan, Ann Arbor

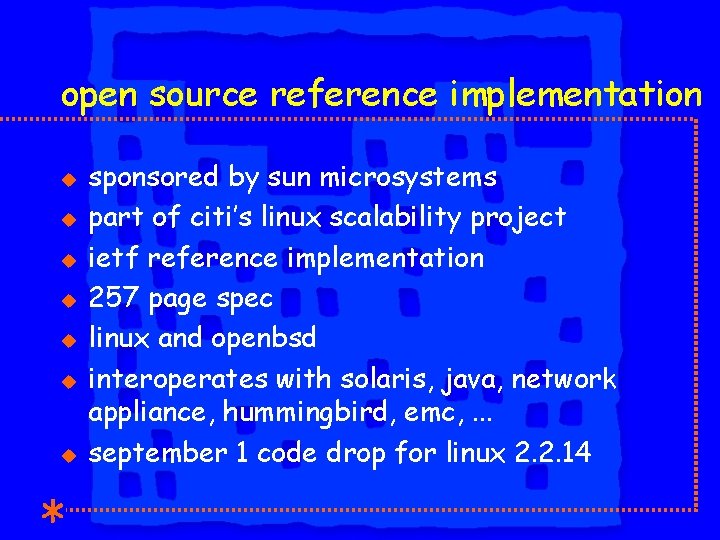

Open source reference implementation

- Sponsored by Sun Microsystems
  - Part of CITI’s Linux Scalability Project
- IETF reference implementation
  - 257-page spec
- Linux and OpenBSD
- Interoperates with Solaris, Java, Network Appliance, Hummingbird, EMC, ...
  - September 1 code drop for Linux 2.2.14

What’s new?

- Lots of state
- Compound RPC
- Extensible security added to RPC layer
- Delegation for files: client cache consistency
- Lease-based, non-blocking, byte-range locks
- Win32 share locks
- mountd: gone
- lockd, statd: gone

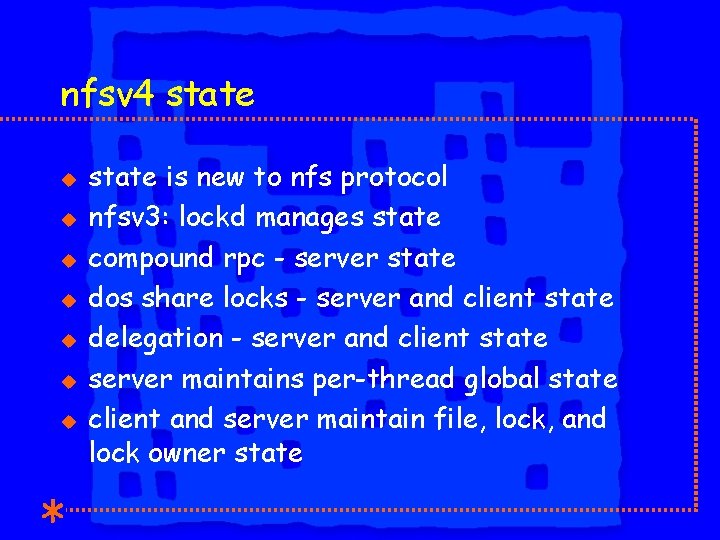

NFSv4 state

- State is new to the NFS protocol
- NFSv3: lockd manages state
- Compound RPC: server state
- DOS share locks: server and client state
- Delegation: server and client state
- Server maintains per-thread global state
- Client and server maintain file, lock, and lock owner state

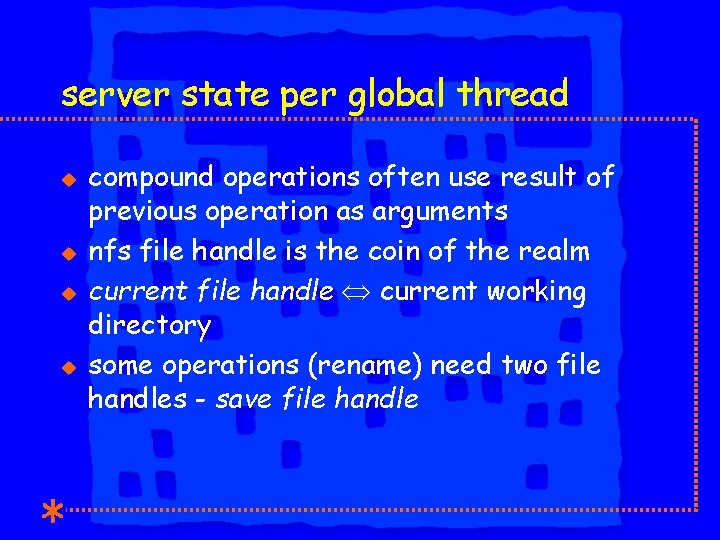

Server state: per-thread global state

- Compound operations often use the result of a previous operation as arguments
- The NFS file handle is the coin of the realm
  - Current file handle
  - Current working directory
- Some operations (rename) need two file handles: the saved file handle
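A minimal sketch of how a server might thread the current and saved file handles through one compound request. It is an illustration only, not the CITI code: file handles are plain path strings, `tree` and `run_compound` are hypothetical names, and only a handful of operations are modelled. Evaluation stops at the first failing operation and returns the partial results.

```python
from typing import Optional

NFS4_OK = "NFS4_OK"
NFS4ERR_NOENT = "NFS4ERR_NOENT"
NFS4ERR_NOFILEHANDLE = "NFS4ERR_NOFILEHANDLE"


def run_compound(tree, ops):
    """Evaluate ops in order; stop at the first failure.

    tree maps a directory handle (a path string in this sketch) to the set
    of names it contains. Returns a list of (op, status, value) tuples.
    """
    current_fh: Optional[str] = None   # set by PUTROOTFH / LOOKUP
    saved_fh: Optional[str] = None     # set by SAVEFH, used by RENAME
    results = []
    for op, *args in ops:
        status, value = NFS4_OK, None
        if op == "PUTROOTFH":
            current_fh = "/"
        elif current_fh is None:
            status = NFS4ERR_NOFILEHANDLE
        elif op == "LOOKUP":
            name = args[0]
            if name in tree.get(current_fh, set()):
                current_fh = current_fh.rstrip("/") + "/" + name
            else:
                status = NFS4ERR_NOENT
        elif op == "SAVEFH":
            saved_fh = current_fh
        elif op == "GETFH":
            value = current_fh
        elif op == "RENAME":
            # Source directory is the saved handle, target is the current one.
            old, new = args
            if saved_fh is None:
                status = NFS4ERR_NOFILEHANDLE
            elif old not in tree.get(saved_fh, set()):
                status = NFS4ERR_NOENT
            else:
                tree[saved_fh].discard(old)
                tree.setdefault(current_fh, set()).add(new)
        else:
            raise ValueError("operation not modelled in this sketch: " + op)
        results.append((op, status, value))
        if status != NFS4_OK:
            break   # later operations are not evaluated
    return results
```

A rename from `/src/f` to `/dst/g` would be sent as PUTROOTFH, LOOKUP src, SAVEFH, PUTROOTFH, LOOKUP dst, RENAME f g.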

Compound RPC

- Hope is to reduce traffic
- Complex calling interface
- Partial results used
- RPC/XDR layering
- Variable length: kmalloc buffer for args and recv
- Want to XDR args directly into RPC buffer
- Want to allow variable-length receive buffer

RPC/XDR layering

- RPC layer does not interpret compound ops
- Replay cache: locking vs. regular
- Have to decode to decide which replay cache to use
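A rough sketch of why the compound has to be decoded before a replay cache can be chosen. Everything here is assumed for illustration: the set of "locking" operations, the cache keys (RPC xid for regular requests, lock owner plus sequence number for locking requests), and the names `ReplayCaches` and `SEQUENCED_OPS`. The slides do not say how the reference implementation keys its caches.

```python
# Assumption: these are the operations that carry a lock-owner sequence number.
SEQUENCED_OPS = {"OPEN", "CLOSE", "LOCK", "LOCKU", "OPEN_DOWNGRADE"}


class ReplayCaches:
    def __init__(self):
        self.regular = {}   # xid -> cached reply
        self.locking = {}   # (lock owner, seqid) -> cached reply

    def key_for(self, xid, ops):
        """Look inside the decoded compound to pick a cache and a key."""
        for op in ops:
            if op["name"] in SEQUENCED_OPS:
                return "locking", (op["owner"], op["seqid"])
        return "regular", xid

    def handle(self, xid, ops, execute):
        """Return (reply, replayed). execute(ops) runs only on a cache miss."""
        which, key = self.key_for(xid, ops)
        cache = self.locking if which == "locking" else self.regular
        if key in cache:
            return cache[key], True
        reply = execute(ops)
        cache[key] = reply
        return reply, False
```

The RPC layer sees only an opaque compound, so `key_for` cannot run until the XDR decode has happened; that is the layering problem the slide points at.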

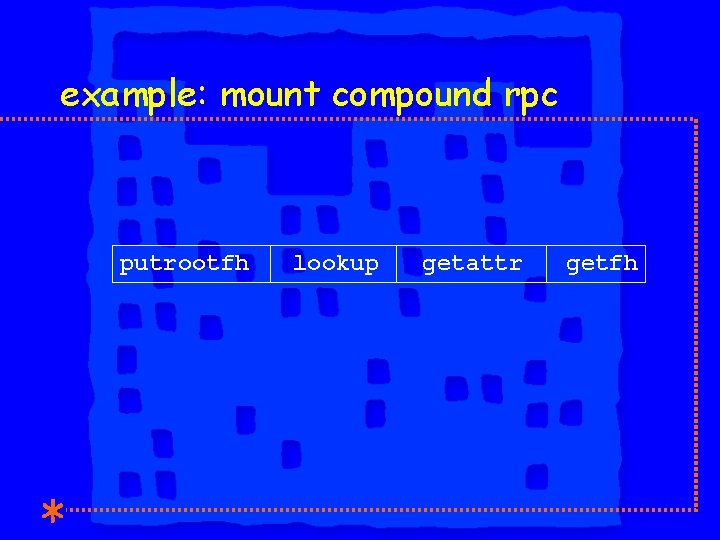

Example: mount

Compound RPC:

- PUTROOTFH
- LOOKUP
- GETATTR
- GETFH
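The mount compound can be written down as a list of operations. This is a sketch with a hypothetical helper, `mount_compound`; issuing one LOOKUP per path component is an assumption, since the slide shows a single LOOKUP.

```python
def mount_compound(path):
    """Build the operation list a client could send to mount `path`."""
    ops = [("PUTROOTFH",)]                                   # start at the server root
    ops += [("LOOKUP", name) for name in path.split("/") if name]
    ops += [("GETATTR",), ("GETFH",)]                        # attributes, then the handle
    return ops
```

For a single-component path such as `/export` this yields exactly the four operations on the slide.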

NFSv4 mount

- Server pseudofs joins exported subtrees with a read-only virtual file system
- Any client can mount into the pseudofs
- Users browse the pseudofs (via LOOKUP)
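One way to picture the pseudofs is as the set of ancestor directories of every export. The sketch below assumes the export list is just a list of absolute paths; `build_pseudofs` is a hypothetical name, and nested exports are not handled.

```python
def build_pseudofs(exports):
    """Map each pseudo directory to the sorted names visible inside it.

    Every proper ancestor of an exported path becomes a pseudo directory.
    The exported directory itself appears as a name in its parent; the real
    file system is what gets mounted there.
    """
    pseudo = {"/": set()}
    for path in exports:
        parts = [p for p in path.split("/") if p]
        parent = "/"
        for depth, name in enumerate(parts):
            pseudo.setdefault(parent, set()).add(name)
            parent = "/" + "/".join(parts[: depth + 1])
    return {directory: sorted(names) for directory, names in pseudo.items()}
```

With exports `/export/home`, `/export/src` and `/usr/local`, a client browsing from the root sees `export` and `usr`, and nothing else from the server's local file system.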

NFSv4 pseudofs

- Access into exported subtrees is based on the user’s credentials and permissions
- Client /etc/fstab doesn’t change with the server’s export list
- Server /etc/exports doesn’t need to maintain an IP-based access list

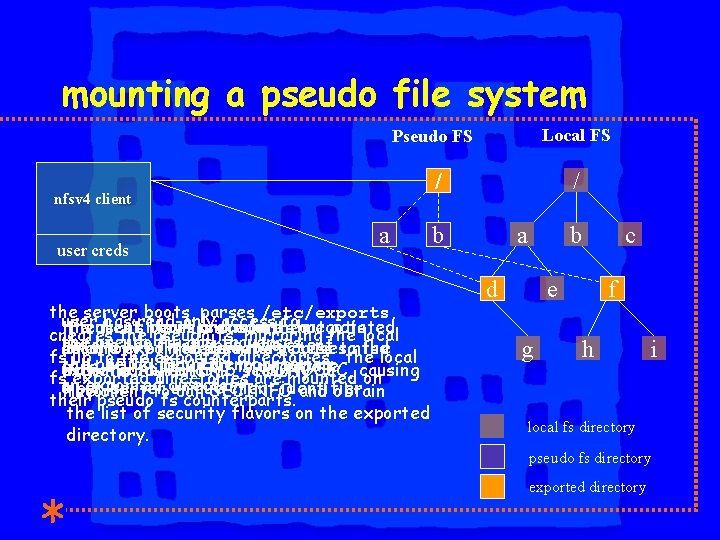

Mounting a pseudo file system

- The server boots, parses /etc/exports, and creates the pseudo fs, mirroring the local fs up to the exported directories. Exported directories are mounted on their pseudo fs counterparts.
- The NFSv4 client boots and mounts the pseudo fs with the AUTH_SYS security flavor.
- Before the first open, the client calls SETCLIENTID to negotiate a per-server unique client identifier.
- The user has read-only access to the pseudo fs, and traverses the pseudo fs until encountering an exported directory.
- The first NFSv4 procedure that acts on the exported directory causes nfsd to return NFS4ERR_WRONGSEC, causing the client to call SECINFO and obtain the list of security flavors on the exported directory.
- The user’s permissions in the negotiated security realm determine access to the exported directory.

Diagram panels: Local FS, Pseudo FS, NFSv4 client, user creds. Legend: local fs directory, pseudo fs directory, exported directory.
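The NFS4ERR_WRONGSEC and SECINFO exchange on this slide can be sketched as a retry loop on the client. `FakeServer`, `lookup_with_negotiation` and the flavor label `"krb5"` are stand-ins invented for the example; a real client would issue RPCs rather than method calls.

```python
NFS4_OK = "NFS4_OK"
NFS4ERR_WRONGSEC = "NFS4ERR_WRONGSEC"


class FakeServer:
    """Stand-in server: pseudo directories accept AUTH_SYS, exports list their own flavors."""

    def __init__(self, flavors_by_dir):
        self.flavors_by_dir = flavors_by_dir   # hypothetical per-export policy

    def lookup(self, directory, flavor):
        allowed = self.flavors_by_dir.get(directory, ["AUTH_SYS"])
        return NFS4_OK if flavor in allowed else NFS4ERR_WRONGSEC

    def secinfo(self, directory):
        return list(self.flavors_by_dir.get(directory, ["AUTH_SYS"]))


def lookup_with_negotiation(server, directory, client_flavors, flavor="AUTH_SYS"):
    """Try the current flavor; on WRONGSEC ask SECINFO and retry with a shared flavor."""
    status = server.lookup(directory, flavor)
    if status != NFS4ERR_WRONGSEC:
        return status, flavor
    for offered in server.secinfo(directory):
        if offered in client_flavors:
            return server.lookup(directory, offered), offered
    return NFS4ERR_WRONGSEC, flavor   # no flavor in common
```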

RPCSEC_GSS

- MIT krb5 gssrpc and SESAME are open source, but neither is really RPCSEC_GSS
- Sun released their RPCSEC_GSS, a complete rewrite of ONC
- GSS and Sun ONC: a tough match
  - Both are transport independent
  - GSS channel bindings / ONC xprt
  - Overloading of programs’ NULL_PROC

Kernel RPCSEC_GSS

- RPC layering had to be violated
- GSS implementations are not kernel safe
- Security service code is not kernel safe (Kerberos 5)
- Kernel security services are implemented as RPC upcalls to a user-level daemon, gssd
- But some services (e.g. encryption) need to be in the kernel

RPCSEC_GSS: where are we now?

- (Mostly) complete user-level Kerberos 5 implementation
- Linux kernel implementation with Kerberos 5
  - Mutual authentication
  - Session key setup
  - No encryption
  - gssd

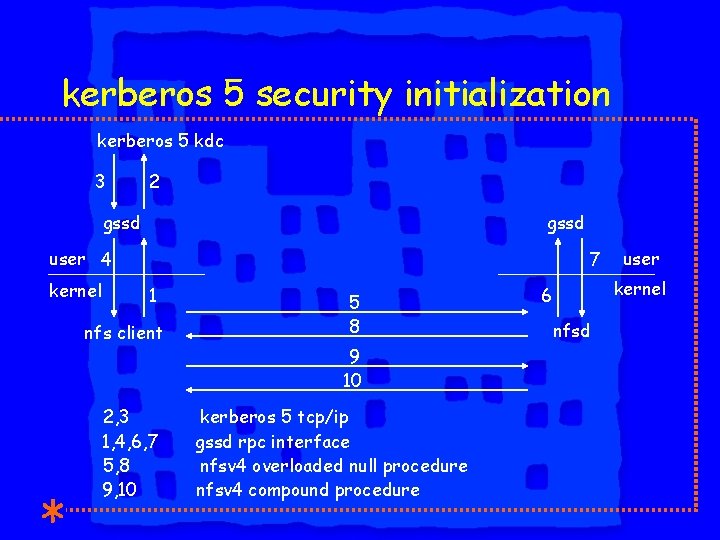

Kerberos 5 security initialization

Diagram labels: Kerberos 5 KDC, gssd, NFS client, nfsd, with user/kernel boundaries; message steps numbered 1 to 10.

- Steps 2, 3: Kerberos 5 (TCP/IP)
- Steps 1, 4, 6, 7: gssd RPC interface
- Steps 5, 8: NFSv4 overloaded null procedure
- Steps 9, 10: NFSv4 compound procedure

Locking

- Lease-based locks
  - No byte-range callback mechanism
- Server defines a lease for per-client lock state
- Server can reclaim client state if the lease is not renewed
- OPEN sets lock state, including lock owner (clientid, pid)
- Server returns lock stateid
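A minimal sketch of lease-based state on the server, assuming a single lease per client and an explicit clock passed in as `now`. `LeaseTable` and its method names are hypothetical; the slides do not describe the actual data structure at this level.

```python
class LeaseTable:
    def __init__(self, lease_seconds):
        self.lease_seconds = lease_seconds
        self.expiry = {}       # clientid -> time at which the lease runs out
        self.lock_state = {}   # clientid -> list of stateids held

    def renew(self, clientid, now):
        """Any renewal pushes the client's expiry forward by one lease period."""
        self.expiry[clientid] = now + self.lease_seconds
        self.lock_state.setdefault(clientid, [])

    def add_state(self, clientid, stateid, now):
        self.renew(clientid, now)
        self.lock_state[clientid].append(stateid)

    def reclaim_expired(self, now):
        """Drop all state of clients whose lease has lapsed; return their ids."""
        expired = [c for c, t in self.expiry.items() if t <= now]
        for clientid in expired:
            del self.expiry[clientid]
            del self.lock_state[clientid]
        return expired
```

Because the server can simply let a lease lapse, it never has to call back to a client to recover byte-range locks.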

Locking

- Stateid-mutating operations are ordered (OPEN, CLOSE, LOCKU, OPEN_DOWNGRADE)
- Lock owner can request a byte-range lock and then:
  - Upgrade the initial lock
  - Unlock a sub-range of the initial lock
- Server is not required to support sub-range lock semantics
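Unlocking a sub-range splits the original lock into at most two pieces. The helper below is an illustrative sketch (`unlock_subrange` is not a protocol or kernel function); ranges are `(offset, length)` pairs.

```python
def unlock_subrange(lock, unlock):
    """Return the ranges that stay locked after `unlock` is removed from `lock`."""
    lock_start, lock_end = lock[0], lock[0] + lock[1]
    # Clip the unlock request to the part that overlaps the lock.
    cut_start = max(unlock[0], lock_start)
    cut_end = min(unlock[0] + unlock[1], lock_end)
    if cut_start >= cut_end:
        return [lock]                       # no overlap, lock unchanged
    remaining = []
    if lock_start < cut_start:
        remaining.append((lock_start, cut_start - lock_start))
    if cut_end < lock_end:
        remaining.append((cut_end, lock_end - cut_end))
    return remaining
```

A server that does not support sub-range semantics would reject the request instead of tracking the two resulting pieces.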

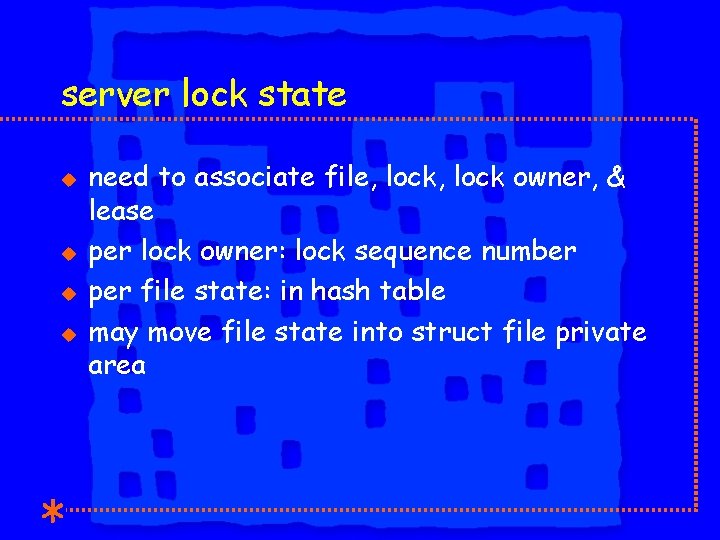

Server lock state

- Need to associate file, lock owner, and lease
- Per lock owner: lock sequence number
- Per-file state: in hash table
- May move file state into struct file private area
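The per-lock-owner sequence number lets the server order state-changing operations and recognise retransmissions. A sketch, assuming the usual rule that the next request carries the previous sequence number plus one; `LockOwner` and `sequenced` are hypothetical names.

```python
class LockOwner:
    def __init__(self, clientid, pid):
        self.key = (clientid, pid)   # lock owner as (clientid, pid)
        self.seqid = 0               # last sequence number processed
        self.last_reply = None       # cached reply for that sequence number


def sequenced(owner, seqid, operation):
    """Run `operation` only if seqid is the next in order for this owner."""
    if seqid == owner.seqid and owner.last_reply is not None:
        return owner.last_reply      # retransmission: replay the cached reply
    if seqid != owner.seqid + 1:
        return "NFS4ERR_BAD_SEQID"   # out of order
    owner.seqid = seqid
    owner.last_reply = operation()
    return owner.last_reply
```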

Server lock state

- Lock owners in hash table
- Server doesn’t own the inode
- Lock state in linked list off file
- Stateid: handle to server lock state
- Per-client state: in hash table (lock lease)

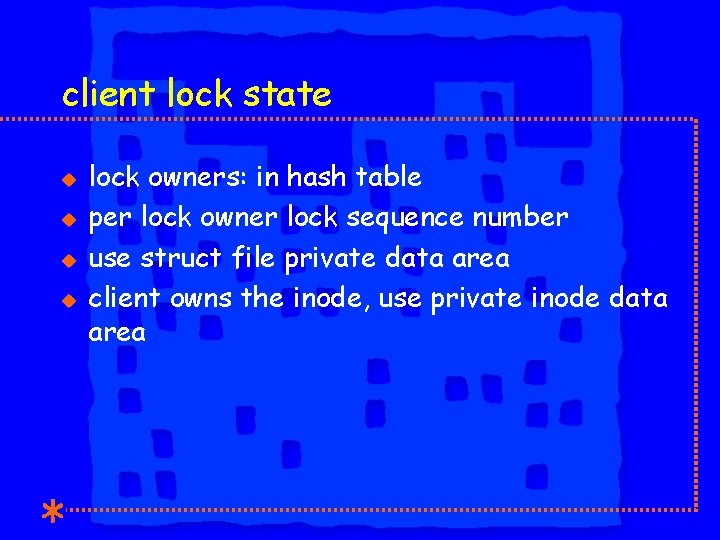

Client lock state

- Lock owners: in hash table
- Per lock owner: lock sequence number
- Use struct file private data area
- Client owns the inode, so use the private inode data area

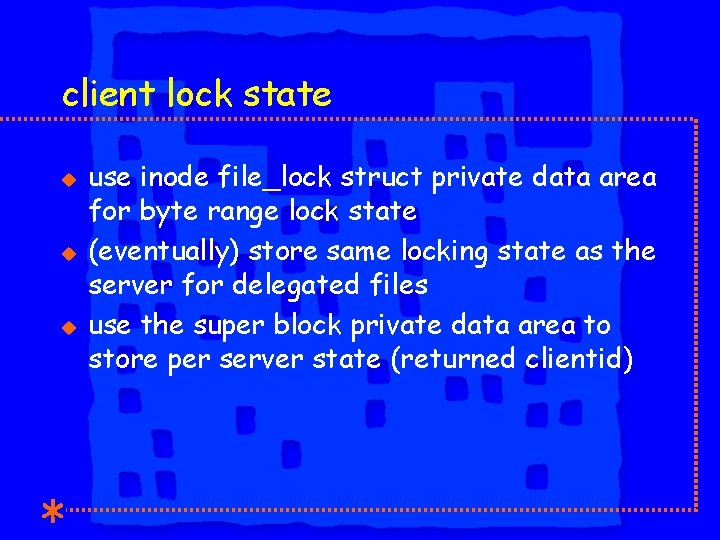

Client lock state

- Use inode file_lock struct private data area for byte-range lock state
- (Eventually) store the same locking state as the server for delegated files
- Use the super block private data area to store per-server state (returned clientid)

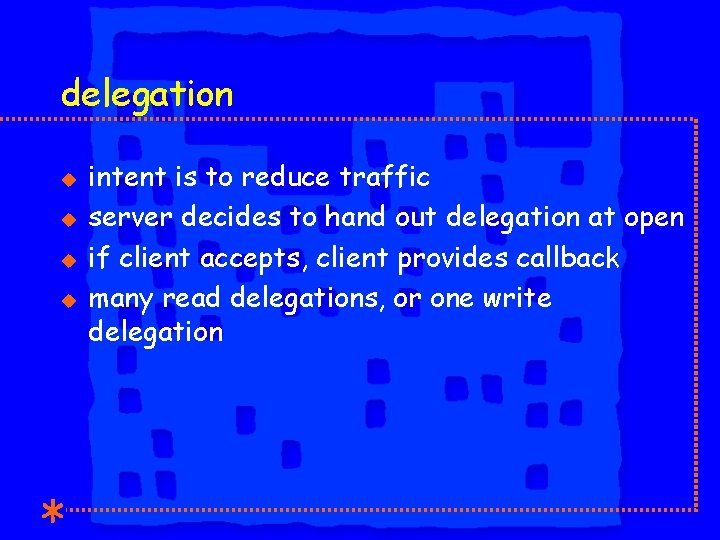

Delegation

- Intent is to reduce traffic
- Server decides to hand out delegation at open
- If client accepts, client provides callback
- Many read delegations, or one write delegation
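The "many read delegations, or one write delegation" rule can be sketched as a small per-file grant check. `FileDelegations` is a hypothetical name, and the policy here is deliberately the simplest one consistent with the slide: a write delegation is granted only when nothing else is outstanding.

```python
class FileDelegations:
    def __init__(self):
        self.readers = set()   # clientids holding read delegations
        self.writer = None     # clientid holding the write delegation, if any

    def try_grant(self, clientid, kind):
        """Return True if the server may hand out the requested delegation."""
        if kind == "read" and self.writer is None:
            self.readers.add(clientid)
            return True
        if kind == "write" and self.writer is None and not self.readers:
            self.writer = clientid
            return True
        return False           # conflict: serve the open without a delegation
```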

Delegation

- When a client holds a delegation for a cached file, it handles:
  - All locking, share and byte range
  - Future opens
- Client can’t reclaim a delegation without a new open
- No delegation for directories

Server delegation state

- Associates delegation with a file
- Delegation state in linked list off file
- Stateid: separate from the lock stateid
- Client callback path

Linux VFS changes

- Shared problem: open with O_EXCL, described by Peter Braam
- NFSv4 implements Win32 share locks, which require atomic open with create
- Linux 2.2.x and Linux 2.4 VFS is problematic

Linux VFS changes

- To create and open a file, three inode operations are called in sequence
  - lookup resolves the last name component
  - create is called to create an inode
  - open is called to open the file

xopen

- Inherent race condition means no atomicity
- We partially solved this problem
- We added a new inode operation which performs the open system call in one step:
  `int xopen(struct file *filep, struct inode *dir_i, struct dentry *dentry, int mode)`
- If the xopen() inode operation is null, the current two-step code is used
- NFSv4 OPEN subsumes lookup, create, open, access
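A toy model of the race and of the null-check fallback. It does not reproduce the kernel code: `DirOps`, `atomic_xopen` and `open_excl` are invented for the example, and the `between_steps` hook stands in for another client that gets scheduled between lookup and create.

```python
class DirOps:
    """Toy directory exposing the separate lookup/create/open operations."""

    def __init__(self, xopen=None):
        self.entries = {}     # name -> creator
        self.xopen = xopen    # optional one-step operation; None means not provided

    def lookup(self, name):
        return name in self.entries

    def create(self, name, creator):
        self.entries[name] = creator

    def open(self, name):
        return self.entries[name]


def atomic_xopen(ops, name, creator):
    """Exclusive create and open in one step: nothing can run between check and create."""
    if name in ops.entries:
        raise FileExistsError(name)
    ops.entries[name] = creator
    return creator


def open_excl(ops, name, creator, between_steps=lambda: None):
    """Use xopen when the operation is provided, else fall back to the stepwise path."""
    if ops.xopen is not None:
        return ops.xopen(ops, name, creator)
    if ops.lookup(name):
        raise FileExistsError(name)
    between_steps()           # race window: someone else may create the file here
    ops.create(name, creator)
    return ops.open(name)
```

In the stepwise path a competing create inside the window is silently overwritten, so two callers can both believe they created the file exclusively; with the one-step operation the second caller gets an error instead.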

User name space

- Local file system uses uid/gid
- Protocol specifies <user name>@<realm>
- Different security can produce different name spaces

User name space

- <user name>@<realm> forms:
  - UNIX user name
  - Kerberos 5 realm
  - PKI realm: X.500 or DN naming
- gssd resolves <user name>@<realm> to the local file system representation
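A sketch of the kind of translation gssd performs, assuming a simple lookup table from `(user, realm)` to a local `(uid, gid)` pair. The function name, the table and the example realm are all hypothetical; the slides do not say how gssd stores or looks up the mapping.

```python
def resolve_principal(principal, local_realm, id_map):
    """Map '<user name>@<realm>' to a local (uid, gid), or None if unknown.

    A bare user name without '@' is treated as belonging to local_realm.
    """
    user, sep, realm = principal.partition("@")
    if not sep:
        realm = local_realm
    return id_map.get((user, realm))
```

The same user name in two realms can map to two different local identities, which is how different security mechanisms end up producing different name spaces.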

Open issues

- Local file system choices
  - Currently ext2
  - ACL implementation will determine fs for Linux 2.4
- Kernel additions and changes
  - RPC rewrite
  - Crypto in the kernel
  - Atomic open

Next steps

- March 31: full Linux 2.4 implementation, without ACLs
- June 30: ACLs added
- Network Appliance sponsored NFSv3/v4 Linux performance project

Any questions?

http://www.citi.umich.edu/projects/nfsv4
http://www.nfsv4.org
