News and Blog Analysis with Lydia Steven Skiena

News and Blog Analysis with Lydia Steven Skiena Dept. of Computer Science Stony Brook University http://www.cs.sunysb.edu/~skiena

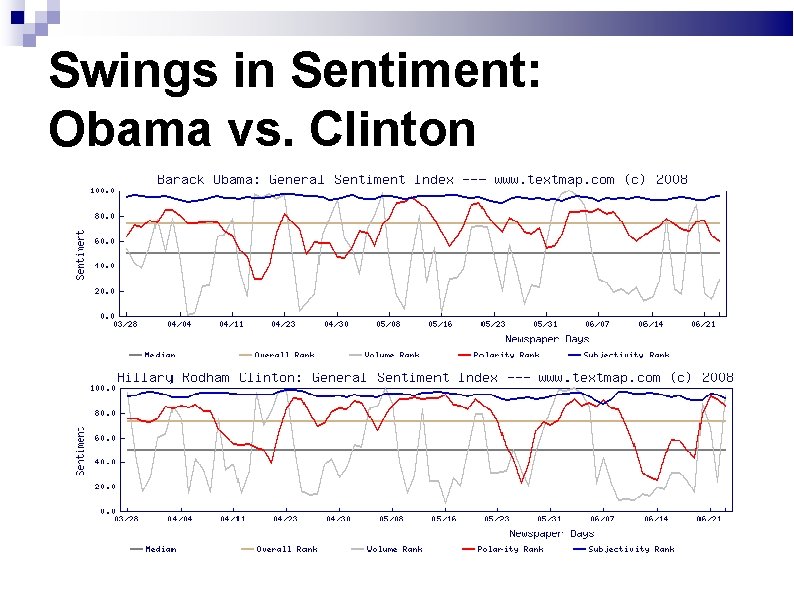

Battle for the Nomination: 2008 4/7: Obama calls Pennsylvanians “bitter” 4/8: Clinton strategist steps aside 4/23: Clinton wins Pennsylvania 5/6: Obama wins NC; race seems settled 5/17: Clinton wins West Virginia 5/23: Clinton raises RFK assassination 6/7: Clinton concedes

Swings in Sentiment: Obama vs. Clinton

Large-Scale News Analysis Our Lydia news analysis system performs a daily analysis of more than 1000 online English and foreign-language newspapers, plus blogs, RSS feeds, and other news sources. We currently track over 10,000 news entities, providing spatial, temporal, relational, and sentiment analysis. We believe our data and analysis are of great interest in political science and many related fields.

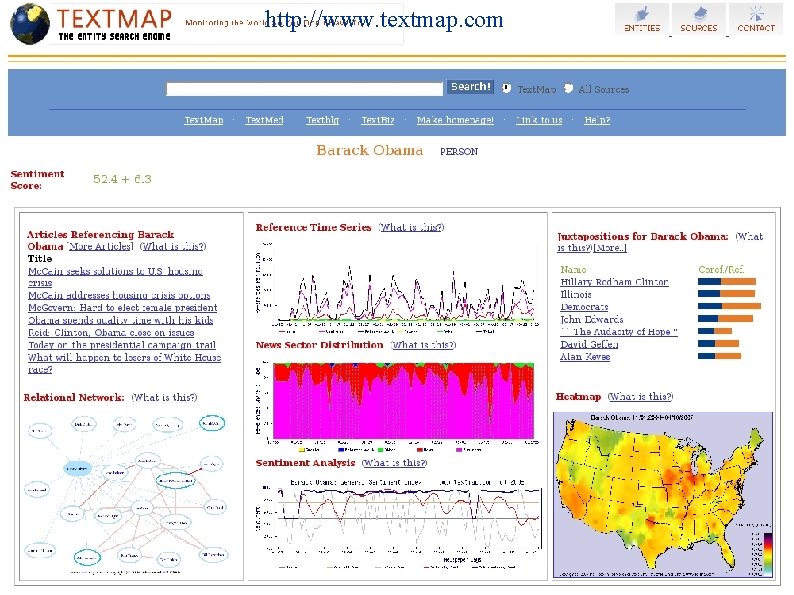

www.textmap.com

http://www.textmap.com The textmap.com website

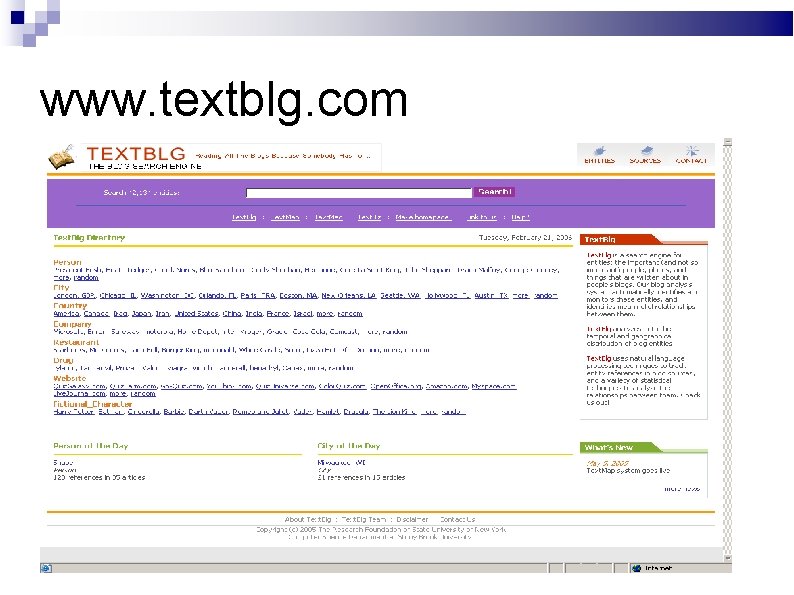

www.textblg.com

Outline of Talk Lydia system architecture Spatial and temporal analysis Sentiment analysis Entity-oriented search Social network analysis Future directions

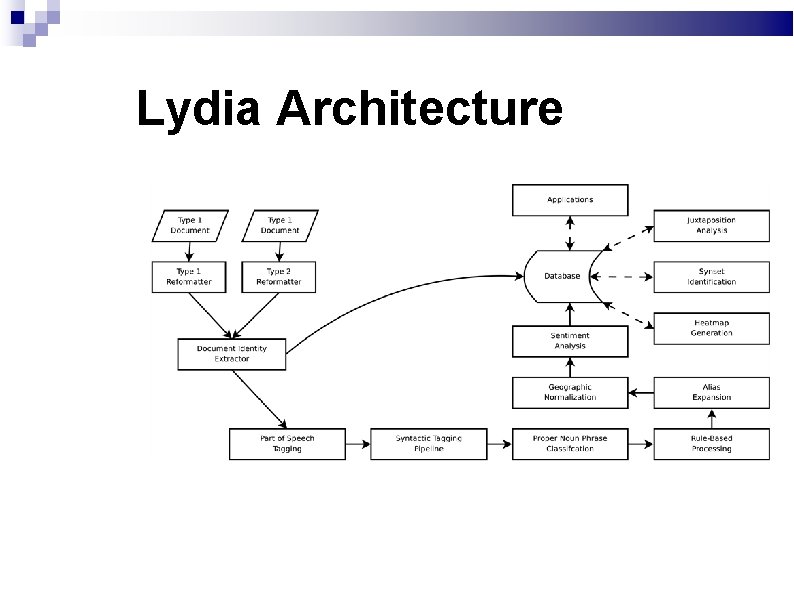

System Architecture Spidering – text is retrieved from a given site on a daily basis using semi-custom spidering agents. Normalization – clean text is extracted with semi-custom parsers and formatted for our pipeline. Text Markup – annotates parts of the source text for storage and analysis. Back Office Operations – we aggregate entity frequency and relational data for a variety of statistical analyses. Levon Lloyd, Dimitrios Kechagias, and Steven Skiena. Lydia: A System for Large-Scale News Analysis. In String Processing and Information Retrieval: 12th International Conference (SPIRE 2005).

Text Markup We apply natural language processing (NLP) techniques to annotate interesting features of the document. Full parsing techniques are too slow to keep up with our volume of text, so we employ shallow parsing instead. We can currently mark up approximately 2000 newspapers per day per CPU. Analysis phases include…

Input Dr. Judith Rodin, the former president of the University of Pennsylvania, will become president of the Rockefeller Foundation next year, the foundation announced yesterday in New York. She will take over in March 2005, succeeding Gordon Conway, the foundation's first non-American president. Mr. Conway announced last year that he would retire at 66 in December and return to Britain, where his children and grandchildren live.

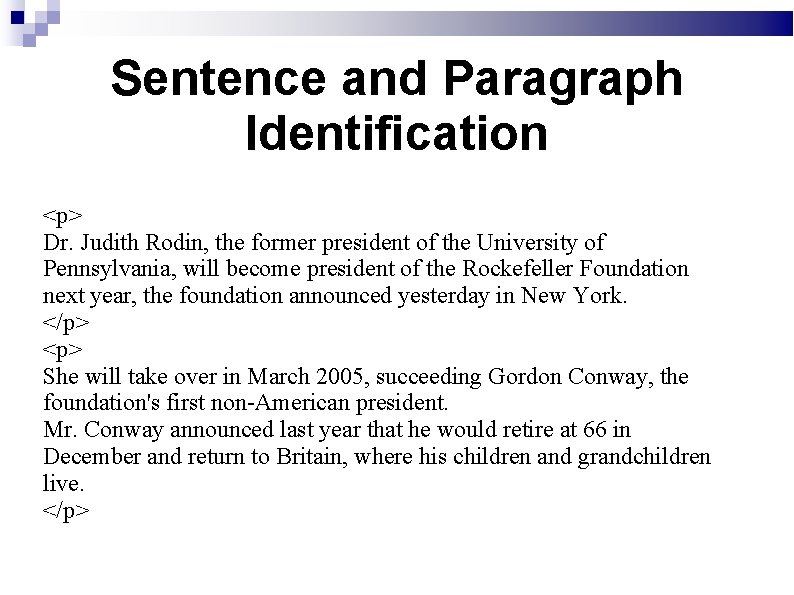

Sentence and Paragraph Identification <p> Dr. Judith Rodin, the former president of the University of Pennsylvania, will become president of the Rockefeller Foundation next year, the foundation announced yesterday in New York. </p> <p> She will take over in March 2005, succeeding Gordon Conway, the foundation's first non-American president. Mr. Conway announced last year that he would retire at 66 in December and return to Britain, where his children and grandchildren live. </p>

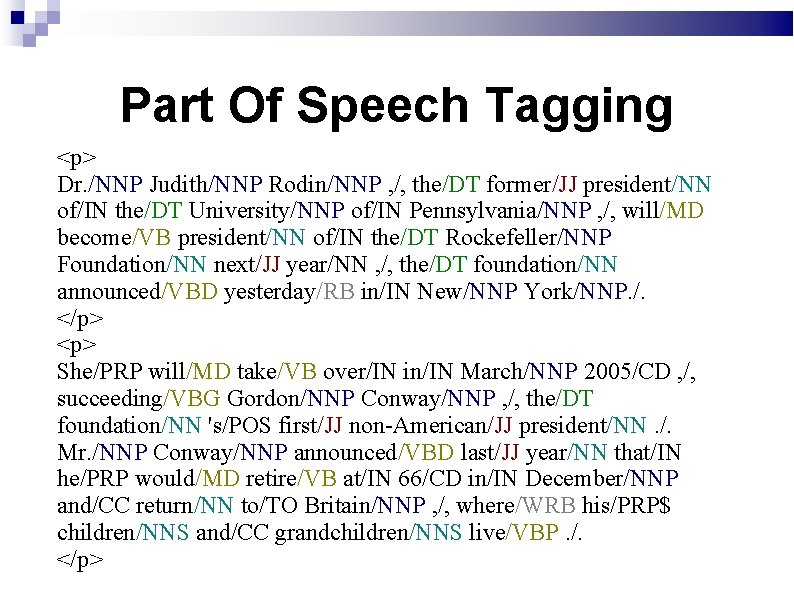

Part Of Speech Tagging <p> Dr./NNP Judith/NNP Rodin/NNP ,/, the/DT former/JJ president/NN of/IN the/DT University/NNP of/IN Pennsylvania/NNP ,/, will/MD become/VB president/NN of/IN the/DT Rockefeller/NNP Foundation/NN next/JJ year/NN ,/, the/DT foundation/NN announced/VBD yesterday/RB in/IN New/NNP York/NNP ./. </p> <p> She/PRP will/MD take/VB over/IN in/IN March/NNP 2005/CD ,/, succeeding/VBG Gordon/NNP Conway/NNP ,/, the/DT foundation/NN 's/POS first/JJ non-American/JJ president/NN ./. Mr./NNP Conway/NNP announced/VBD last/JJ year/NN that/IN he/PRP would/MD retire/VB at/IN 66/CD in/IN December/NNP and/CC return/NN to/TO Britain/NNP ,/, where/WRB his/PRP$ children/NNS and/CC grandchildren/NNS live/VBP ./. </p>

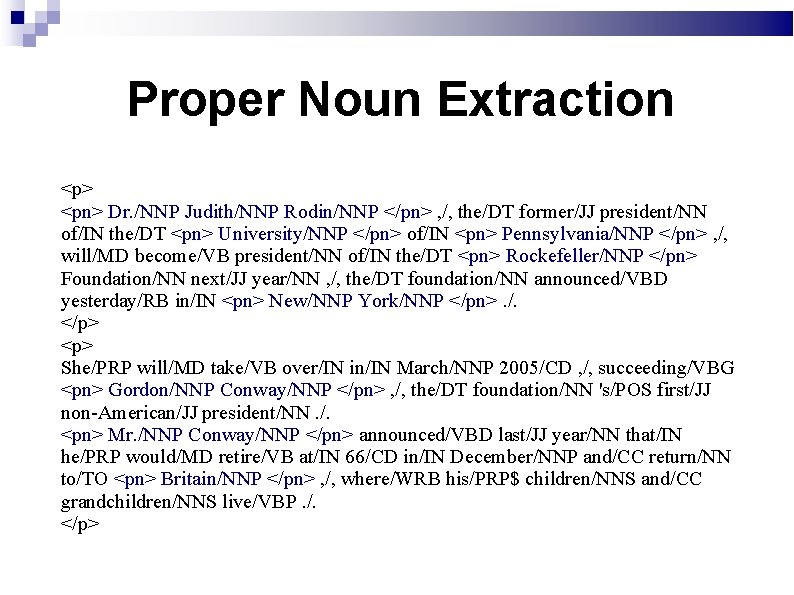

Proper Noun Extraction <p> <pn> Dr./NNP Judith/NNP Rodin/NNP </pn> ,/, the/DT former/JJ president/NN of/IN the/DT <pn> University/NNP </pn> of/IN <pn> Pennsylvania/NNP </pn> ,/, will/MD become/VB president/NN of/IN the/DT <pn> Rockefeller/NNP </pn> Foundation/NN next/JJ year/NN ,/, the/DT foundation/NN announced/VBD yesterday/RB in/IN <pn> New/NNP York/NNP </pn> ./. </p> <p> She/PRP will/MD take/VB over/IN in/IN March/NNP 2005/CD ,/, succeeding/VBG <pn> Gordon/NNP Conway/NNP </pn> ,/, the/DT foundation/NN 's/POS first/JJ non-American/JJ president/NN ./. <pn> Mr./NNP Conway/NNP </pn> announced/VBD last/JJ year/NN that/IN he/PRP would/MD retire/VB at/IN 66/CD in/IN December/NNP and/CC return/NN to/TO <pn> Britain/NNP </pn> ,/, where/WRB his/PRP$ children/NNS and/CC grandchildren/NNS live/VBP ./. </p>

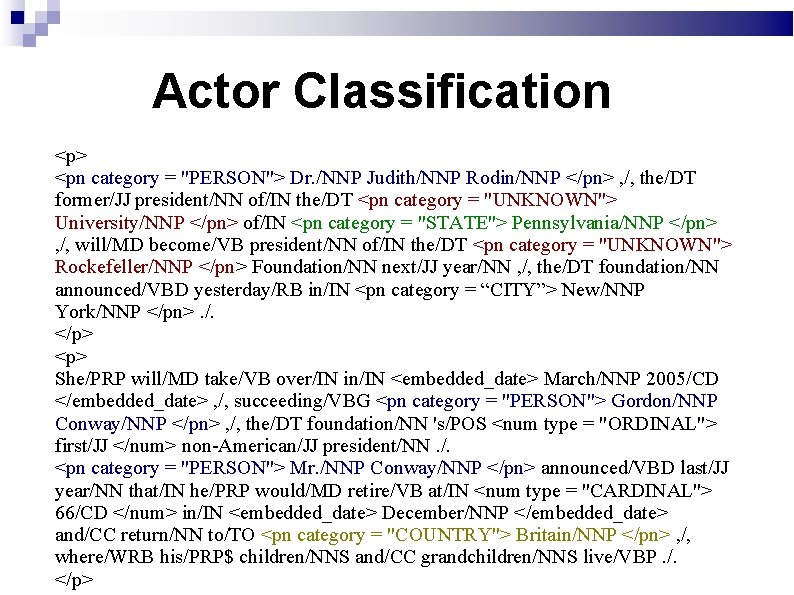

Actor Classification <p> <pn category = "PERSON"> Dr./NNP Judith/NNP Rodin/NNP </pn> ,/, the/DT former/JJ president/NN of/IN the/DT <pn category = "UNKNOWN"> University/NNP </pn> of/IN <pn category = "STATE"> Pennsylvania/NNP </pn> ,/, will/MD become/VB president/NN of/IN the/DT <pn category = "UNKNOWN"> Rockefeller/NNP </pn> Foundation/NN next/JJ year/NN ,/, the/DT foundation/NN announced/VBD yesterday/RB in/IN <pn category = "CITY"> New/NNP York/NNP </pn> ./. </p> <p> She/PRP will/MD take/VB over/IN in/IN <embedded_date> March/NNP 2005/CD </embedded_date> ,/, succeeding/VBG <pn category = "PERSON"> Gordon/NNP Conway/NNP </pn> ,/, the/DT foundation/NN 's/POS <num type = "ORDINAL"> first/JJ </num> non-American/JJ president/NN ./. <pn category = "PERSON"> Mr./NNP Conway/NNP </pn> announced/VBD last/JJ year/NN that/IN he/PRP would/MD retire/VB at/IN <num type = "CARDINAL"> 66/CD </num> in/IN <embedded_date> December/NNP </embedded_date> and/CC return/NN to/TO <pn category = "COUNTRY"> Britain/NNP </pn> ,/, where/WRB his/PRP$ children/NNS and/CC grandchildren/NNS live/VBP ./. </p>

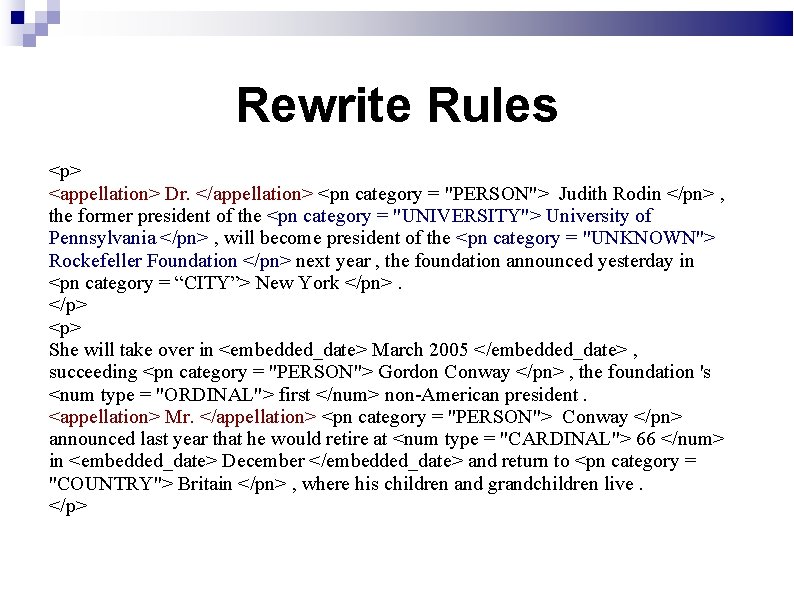

Rewrite Rules <p> <appellation> Dr. </appellation> <pn category = "PERSON"> Judith Rodin </pn> , the former president of the <pn category = "UNIVERSITY"> University of Pennsylvania </pn> , will become president of the <pn category = "UNKNOWN"> Rockefeller Foundation </pn> next year , the foundation announced yesterday in <pn category = "CITY"> New York </pn>. </p> <p> She will take over in <embedded_date> March 2005 </embedded_date> , succeeding <pn category = "PERSON"> Gordon Conway </pn> , the foundation 's <num type = "ORDINAL"> first </num> non-American president. <appellation> Mr. </appellation> <pn category = "PERSON"> Conway </pn> announced last year that he would retire at <num type = "CARDINAL"> 66 </num> in <embedded_date> December </embedded_date> and return to <pn category = "COUNTRY"> Britain </pn> , where his children and grandchildren live. </p>

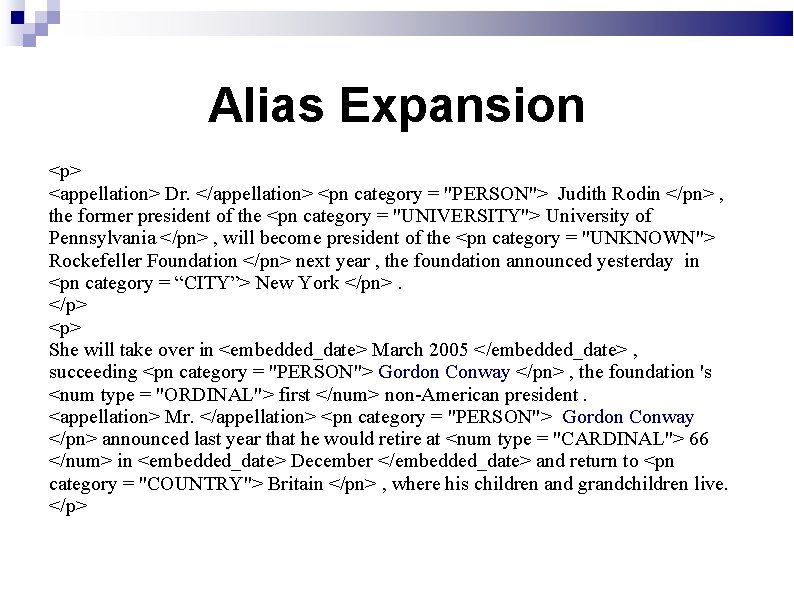

Alias Expansion <p> <appellation> Dr. </appellation> <pn category = "PERSON"> Judith Rodin </pn> , the former president of the <pn category = "UNIVERSITY"> University of Pennsylvania </pn> , will become president of the <pn category = "UNKNOWN"> Rockefeller Foundation </pn> next year , the foundation announced yesterday in <pn category = "CITY"> New York </pn>. </p> <p> She will take over in <embedded_date> March 2005 </embedded_date> , succeeding <pn category = "PERSON"> Gordon Conway </pn> , the foundation 's <num type = "ORDINAL"> first </num> non-American president. <appellation> Mr. </appellation> <pn category = "PERSON"> Gordon Conway </pn> announced last year that he would retire at <num type = "CARDINAL"> 66 </num> in <embedded_date> December </embedded_date> and return to <pn category = "COUNTRY"> Britain </pn> , where his children and grandchildren live. </p>
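The alias expansion step above turns the later mention "Conway" into "Gordon Conway". The slides do not say how Lydia resolves such aliases, so the following is only a minimal sketch under one assumed rule: a single-token person mention is replaced by the most recent multi-token mention ending in the same token. The function name `expand_aliases` is hypothetical.

```python
def expand_aliases(mentions):
    """Replace a single-token person mention with the most recent fuller
    mention whose last token matches (e.g. "Conway" -> "Gordon Conway").

    This is an assumed heuristic for illustration, not Lydia's actual rule.
    """
    last_seen = {}   # family name -> most recent multi-token mention
    expanded = []
    for name in mentions:
        tokens = name.split()
        if len(tokens) > 1:
            last_seen[tokens[-1]] = name
            expanded.append(name)
        else:
            # Fall back to the mention itself when no fuller form was seen.
            expanded.append(last_seen.get(name, name))
    return expanded


sample_mentions = ["Judith Rodin", "Gordon Conway", "Conway"]
sample_expanded = expand_aliases(sample_mentions)
```

A real system would also have to handle ambiguous family names and titles; this sketch ignores both.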

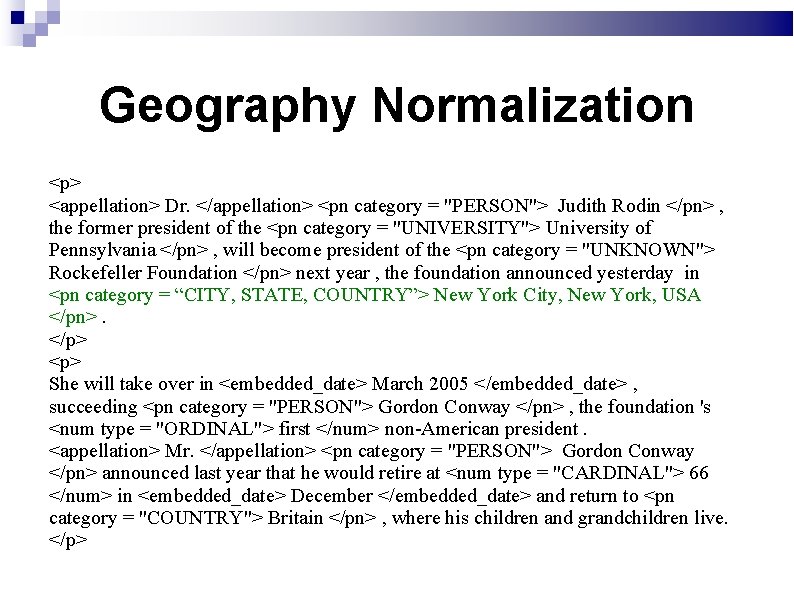

Geography Normalization <p> <appellation> Dr. </appellation> <pn category = "PERSON"> Judith Rodin </pn> , the former president of the <pn category = "UNIVERSITY"> University of Pennsylvania </pn> , will become president of the <pn category = "UNKNOWN"> Rockefeller Foundation </pn> next year , the foundation announced yesterday in <pn category = "CITY, STATE, COUNTRY"> New York City, New York, USA </pn>. </p> <p> She will take over in <embedded_date> March 2005 </embedded_date> , succeeding <pn category = "PERSON"> Gordon Conway </pn> , the foundation 's <num type = "ORDINAL"> first </num> non-American president. <appellation> Mr. </appellation> <pn category = "PERSON"> Gordon Conway </pn> announced last year that he would retire at <num type = "CARDINAL"> 66 </num> in <embedded_date> December </embedded_date> and return to <pn category = "COUNTRY"> Britain </pn> , where his children and grandchildren live. </p>

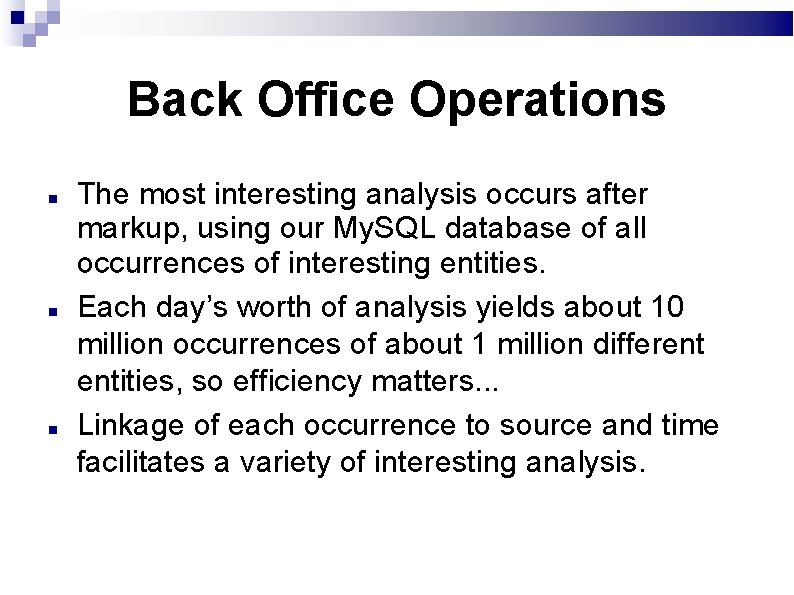

Back Office Operations The most interesting analysis occurs after markup, using our MySQL database of all occurrences of interesting entities. Each day’s worth of analysis yields about 10 million occurrences of about 1 million different entities, so efficiency matters. Linkage of each occurrence to source and time facilitates a variety of interesting analyses.

Lydia Architecture

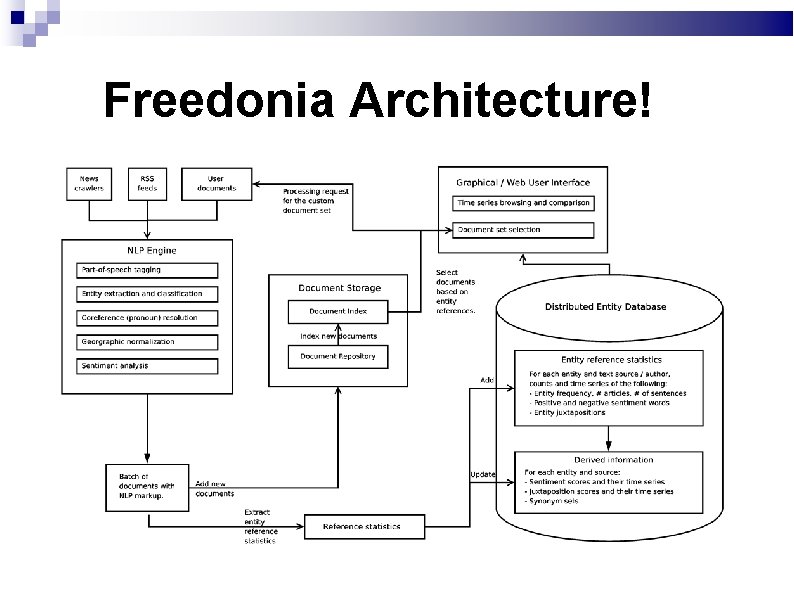

Freedonia Architecture!

Eliminating the Database Bottleneck Separating NLP analysis from backend analysis eliminates daily processing cycles. Distributed Map-Reduce processing with Hadoop aggregates years of counts in under an hour on our cluster! Such throughput facilitates large-scale evaluation to improve NLP and sentiment analysis. The server architecture is now oriented around time series rather than daily processing.

Juxtaposition Analysis We want to compute the significance of the co-occurrences between two entities. This is similar to collaborative filtering, which determines which customers are most similar in order to predict future buying preferences. Just counting the number of co-occurrences causes the most popular entities to be related to everyone.
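To illustrate why raw co-occurrence counts favor popular entities, here is a small sketch that scores a pair by pointwise mutual information (PMI) instead. PMI is a stand-in chosen for brevity; the slides do not state which significance measure Lydia uses, and the counts below are made up.

```python
import math


def pmi(n_ab, n_a, n_b, n_total):
    """Pointwise mutual information of two entities from article counts.

    n_ab: articles mentioning both; n_a, n_b: articles mentioning each;
    n_total: all articles. A value of 0 means the pair co-occurs exactly
    as often as independence would predict.
    """
    if n_ab == 0:
        return float("-inf")
    return math.log2((n_ab * n_total) / (n_a * n_b))


# Two very popular entities that co-occur only at the chance rate ...
popular_pair = pmi(n_ab=100, n_a=1000, n_b=1000, n_total=10000)
# ... versus two rarer entities that almost always appear together.
rare_pair = pmi(n_ab=50, n_a=100, n_b=100, n_total=10000)
```

The popular pair has twice as many raw co-occurrences, yet the rarer pair scores far higher once expected frequency is taken into account.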

Synonym Sets JFK, John Kennedy, John F. Kennedy, and John Fitzgerald Kennedy all refer to the same person. We need a mechanism to link multiple entities that have slightly different names but refer to the same thing. We say that two actors belong in the same synonym set if: Their names are morphologically compatible. The sets of entities that they are related to are similar. ➔Levon Lloyd, Andrew Mehler, and Steven Skiena. Identifying Co-referential Names Across Large Corpora. In Proc. Combinatorial Pattern Matching (CPM 2006)
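The two criteria above can be sketched as follows. Both tests are assumptions made for illustration: "morphologically compatible" is approximated by in-order token matching with initials, and "similar related entities" by Jaccard similarity with an arbitrary threshold of 0.5. Acronyms such as JFK are not handled, and the related-entity sets are invented.

```python
def morphologically_compatible(a, b):
    """True if every token of the shorter name matches, in order, a token of
    the longer name, either exactly or as an initial ("F." vs "Fitzgerald")."""
    short, long_ = sorted((a.split(), b.split()), key=len)
    if short[-1] != long_[-1]:          # require the same final (family) name
        return False
    i = 0
    for tok in short:
        stem = tok.rstrip(".")
        while i < len(long_):
            cand = long_[i].rstrip(".")
            i += 1
            if (cand == stem
                    or (len(stem) == 1 and cand.startswith(stem))
                    or (len(cand) == 1 and stem.startswith(cand))):
                break
        else:
            return False                # ran out of tokens without a match
    return True


def jaccard(s1, s2):
    """Size of the intersection over size of the union of two sets."""
    return len(s1 & s2) / len(s1 | s2) if (s1 or s2) else 0.0


def same_synonym_set(a, b, related, threshold=0.5):
    """Both criteria must hold: compatible names and similar neighborhoods."""
    return (morphologically_compatible(a, b)
            and jaccard(related[a], related[b]) >= threshold)


# Hypothetical related-entity sets for illustration only.
related = {
    "John Kennedy": {"Jacqueline Kennedy", "Dallas", "Cuba"},
    "John F. Kennedy": {"Jacqueline Kennedy", "Dallas", "Cuba", "NASA"},
    "Robert Kennedy": {"Los Angeles", "Sirhan Sirhan"},
}
```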

Duplicate Article Elimination Supreme Court Justice David Souter suffered minor injuries when a group of young men assaulted him as he jogged on a city street, a court spokeswoman and Metropolitan Police said Saturday. Supreme Court Justice David Souter suffered minor injuries when a group of young men assaulted him as he jogged on a city street, a court spokeswoman and Metropolitan Police said. Hashing techniques can efficiently identify duplicate and near-duplicate articles appearing in different news sources.
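One common hashing approach to near-duplicate detection is to hash overlapping word windows (shingles) and compare the resulting sets. The slides do not specify which technique Lydia uses, so this is only a sketch of that general idea; the window size `k=5` and the choice of SHA-256 are arbitrary assumptions.

```python
import hashlib


def shingle_hashes(text, k=5):
    """Hash every window of k consecutive words in the text."""
    words = text.lower().split()
    n_windows = max(1, len(words) - k + 1)
    shingles = (" ".join(words[i:i + k]) for i in range(n_windows))
    return {hashlib.sha256(s.encode("utf-8")).hexdigest() for s in shingles}


def resemblance(a, b, k=5):
    """Jaccard similarity of the two shingle-hash sets (1.0 = identical)."""
    ha, hb = shingle_hashes(a, k), shingle_hashes(b, k)
    return len(ha & hb) / len(ha | hb)


# The two versions of the article lead from the slide above.
version_a = ("Supreme Court Justice David Souter suffered minor injuries when "
             "a group of young men assaulted him as he jogged on a city street, "
             "a court spokeswoman and Metropolitan Police said Saturday.")
version_b = ("Supreme Court Justice David Souter suffered minor injuries when "
             "a group of young men assaulted him as he jogged on a city street, "
             "a court spokeswoman and Metropolitan Police said.")
unrelated = "Roger Federer won the final in straight sets on Sunday afternoon."
```

Articles whose resemblance exceeds a chosen threshold would be treated as duplicates. At scale, the hash sets are usually sampled rather than compared in full.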

Outline of Talk Lydia system architecture Spatial and temporal analysis Sentiment analysis Entity search Social network analysis Future directions

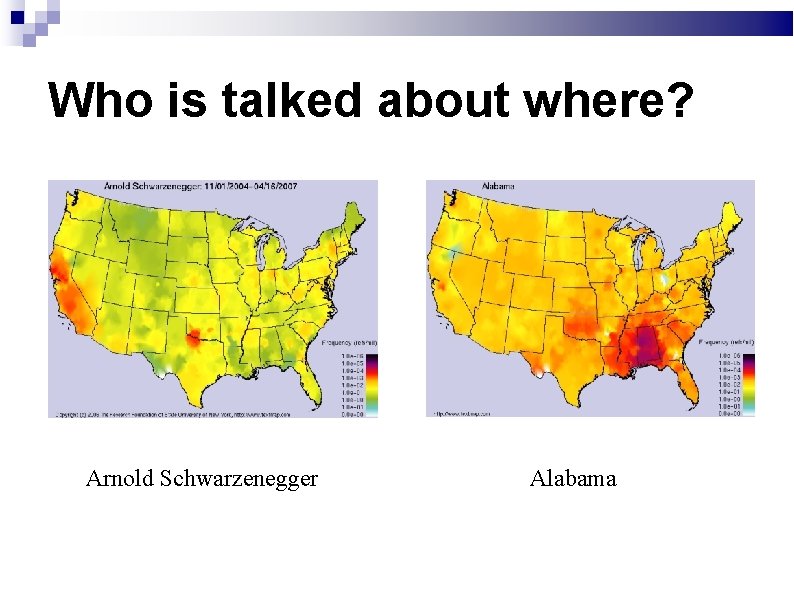

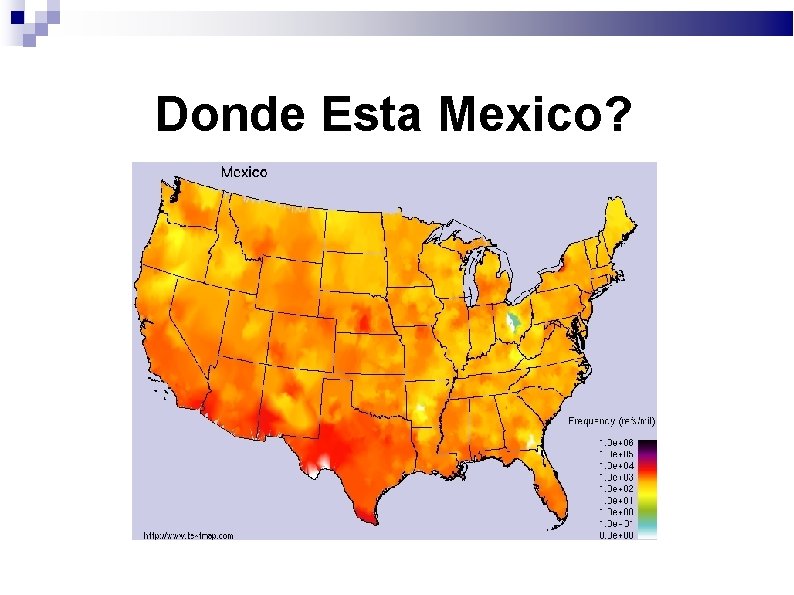

Heatmaps Where are people talking about particular topics? Newspapers have a sphere of influence based on: Power of the source – circulation, website popularity Population density of surrounding cities The heat a given entity generates in a particular location is a function of the frequency with which it is mentioned in local sources ➔A. Mehler, Y. Bao, X. Li, Y. Wang, and S. Skiena. Spatial analysis of News Sources, IEEE Trans. Visualization (2006)

Who is talked about where? Arnold Schwarzenegger Alabama

Donde Esta Mexico?

Comparative Entity Maps Dizziness vs. Insomnia Jews vs. Christians

Outline of Talk Lydia system architecture Spatial and temporal analysis Sentiment analysis Entity-oriented search Social network analysis Future directions

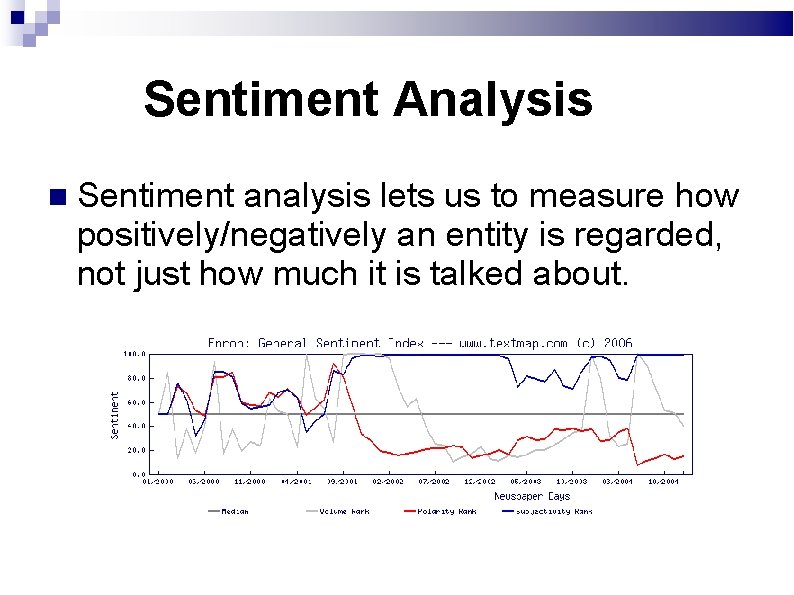

Sentiment Analysis Sentiment analysis lets us measure how positively or negatively an entity is regarded, not just how much it is talked about.

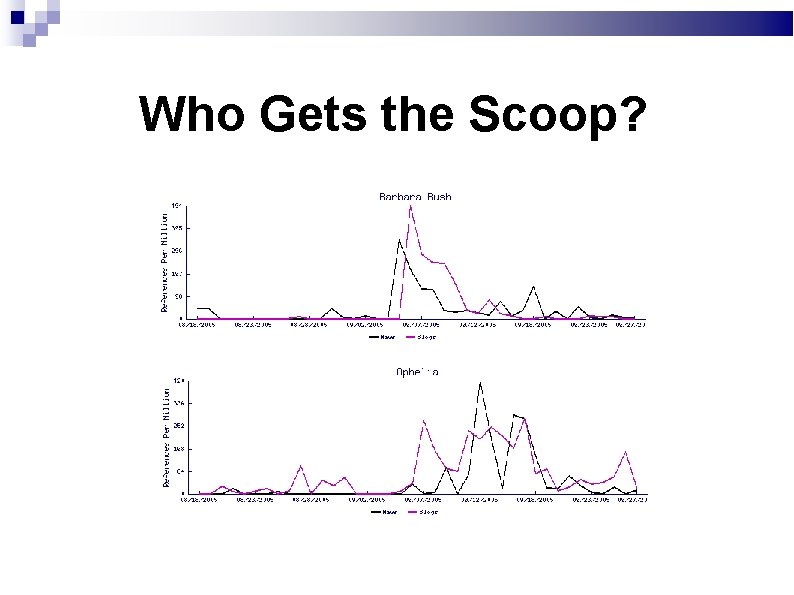

Blog Analysis with Lydia Blogs represent a different view of the world than newspapers. Less objective Greater diversity of topics We adapted Lydia to process LiveJournal blogs, and compared blog content to that of newspapers. ➔Levon Lloyd, Prachi Kaulgud, and Steven Skiena. News vs. Blogs: Who Gets the Scoop? In AAAI Spring Symposium: Computational Approaches to Analyzing Weblogs.

Most Positive Actors in News and Blogs News: Felicity Huffman, Fernando Alonso, Dan Rather, Warren Buffett, Joe Paterno, Ray Charles, Bill Frist, Ben Wallace, John Negroponte, George Clooney, Alicia Keys, Roy Moore, Jay Leno, Roger Federer Blogs: Joe Paterno, Phil Mickelson, Tom Brokaw, Sasha Cohen, Ted Stevens, Rafael Nadal, Felicity Huffman, Warren Buffett, Fernando Alonso, Chauncey Billups, Maria Sharapova, Earl Woods, Kasey Kahne, Tom Brady

Most Negative Actors in News and Blogs News: Slobodan Milosevic, John Ashcroft, Zacarias Moussaoui, John Allen Muhammad, Lionel Tate, Charles Taylor, George Ryan, Al Sharpton, Peter Jennings, Saddam Hussein, Jose Padilla, Abdul Rahman, Adolf Hitler, Harriet Miers, King Gyanendra Blogs: John Allen Muhammad, Sammy Sosa, George Ryan, Lionel Tate, Esteban Loaiza, Slobodan Milosevic, Charles Schumer, Scott Peterson, Zacarias Moussaoui, William Jefferson, King Gyanendra, Ricky Williams, Ernie Fletcher, Edward Kennedy, John Gotti

How Do We Do it? We use large-scale statistical analysis instead of careful NLP of individual reviews. We expand small seed lists of +/- terms into large vocabularies using WordNet and path-counting algorithms. We correct for modifiers and negation. Statistical methods turn these counts into indices. ➔N. Godbole, M. Srinivasaiah, and S. Skiena. Large-Scale Sentiment Analysis for News and Blogs. Int. Conf. Weblogs and Social Media, 2007
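The seed-expansion idea can be sketched on a toy word graph. This is an illustration under stated assumptions, not the published algorithm: the synonym and antonym dictionaries are invented rather than taken from WordNet, polarity is kept along synonym edges and flipped along antonym edges, and each extra hop is down-weighted by an arbitrary `decay` factor. Every path from a seed contributes to a word's score, which is the sense in which paths are counted.

```python
from collections import defaultdict


def expand_seeds(seeds, synonyms, antonyms, max_hops=2, decay=0.5):
    """seeds: word -> +1 or -1. Returns word -> accumulated signed score."""
    scores = defaultdict(float)
    frontier = [(word, float(polarity)) for word, polarity in seeds.items()]
    for word, weight in frontier:
        scores[word] += weight
    for _ in range(max_hops):
        next_frontier = []
        for word, weight in frontier:
            for syn in synonyms.get(word, ()):
                next_frontier.append((syn, weight * decay))    # same polarity
            for ant in antonyms.get(word, ()):
                next_frontier.append((ant, -weight * decay))   # flipped
        for word, weight in next_frontier:
            scores[word] += weight
        frontier = next_frontier
    return dict(scores)


# Toy graph, invented for illustration.
toy_synonyms = {"good": ["fine"], "bad": ["awful"], "fine": ["good"]}
toy_antonyms = {"good": ["bad"]}
one_hop = expand_seeds({"good": 1, "bad": -1}, toy_synonyms, toy_antonyms,
                       max_hops=1)
```

Words whose positive and negative paths roughly cancel end up near zero, which is one simple way to discard ambiguous vocabulary.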

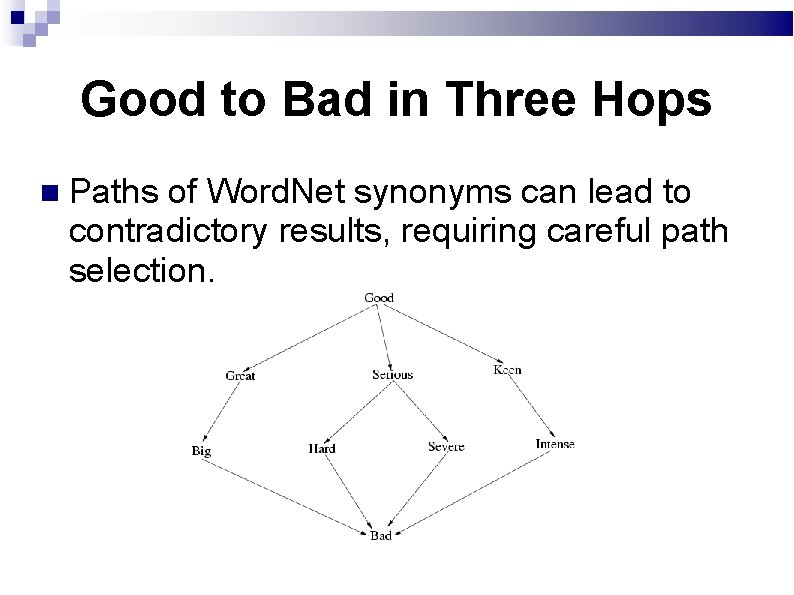

Good to Bad in Three Hops Paths of WordNet synonyms can lead to contradictory results, requiring careful path selection.

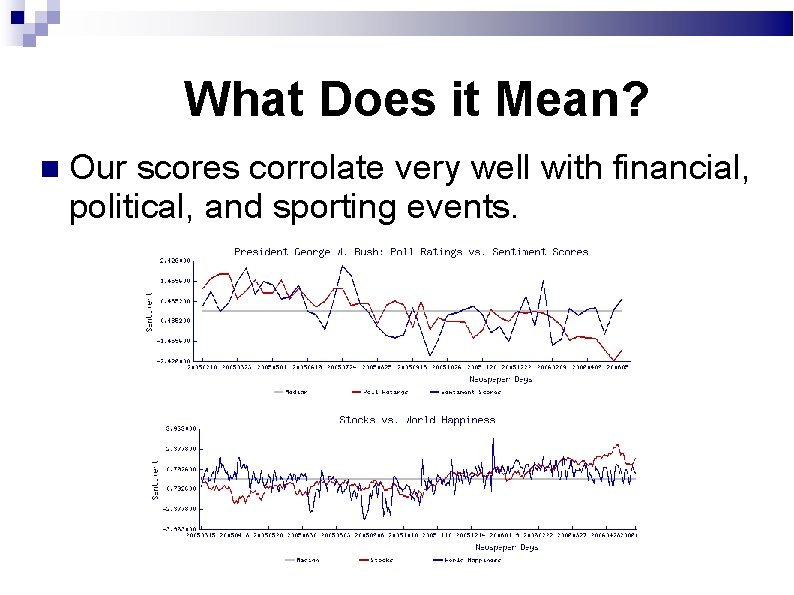

What Does it Mean? Our scores correlate very well with financial, political, and sporting events.

Seasonal Effects on Sentiment The low point is not September 2001 but April 2004, with the Madrid bombings and war in Iraq.

Bush vs. Putin: who’s more popular where?

Multilingual Sentiment Analysis Implementing language-specific sentiment analysis requires: 1. Language-specific NLP software (e.g. POS tagger, NER) 2. Language-specific linguistic resources (e.g. WordNet) Instead, we couple machine translation with our English sentiment analysis: Foreign text → Translator → English sentiment analyzer. M. Bautin, L. Venu-reddy, S. Skiena, International Sentiment Analysis for News and Blogs, ICWSM 2008

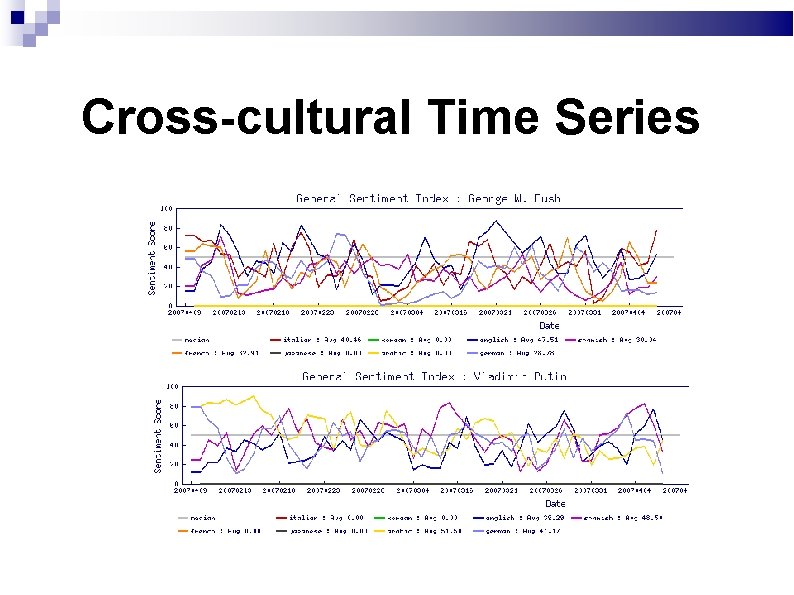

Cross-cultural Time Series

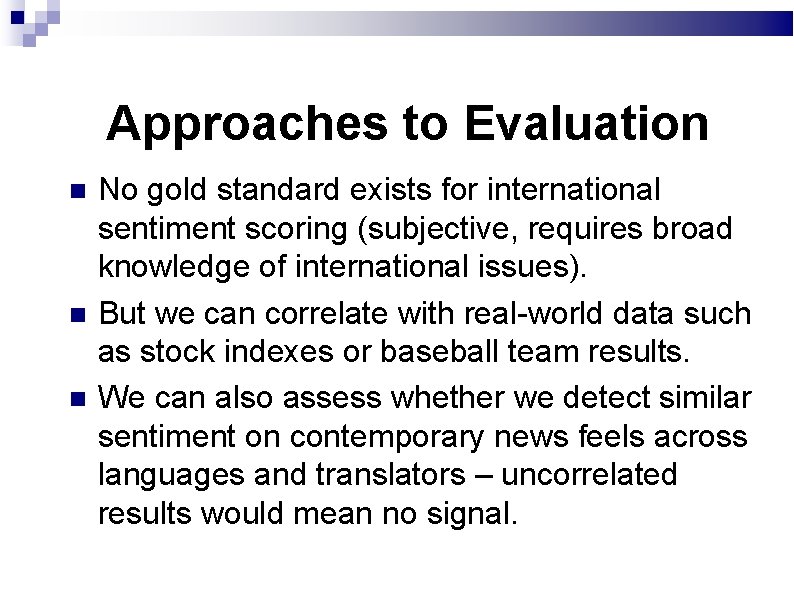

But what does it mean? The key question here is assessment: Do meaningful sentiment signals survive the limitations of mechanical translation? Motivations for multilingual sentiment analysis include Foreign market research Public opinion analysis

Approaches to Evaluation No gold standard exists for international sentiment scoring (subjective, requires broad knowledge of international issues). But we can correlate with real-world data such as stock indexes or baseball team results. We can also assess whether we detect similar sentiment on contemporary news feeds across languages and translators – uncorrelated results would mean no signal.

Our Methodology We evaluated news from May 1-10, 2007 from dozens of English, Spanish, Arabic, French, German, Italian, Arabic, Chinese, Japanese, and Korean newspapers. We correlated aggregate detected sentiment across classes of named entities (countries). We do not expect (or desire) perfect correlation, but seek significant correlation. Time zones make daily correlations problematic.

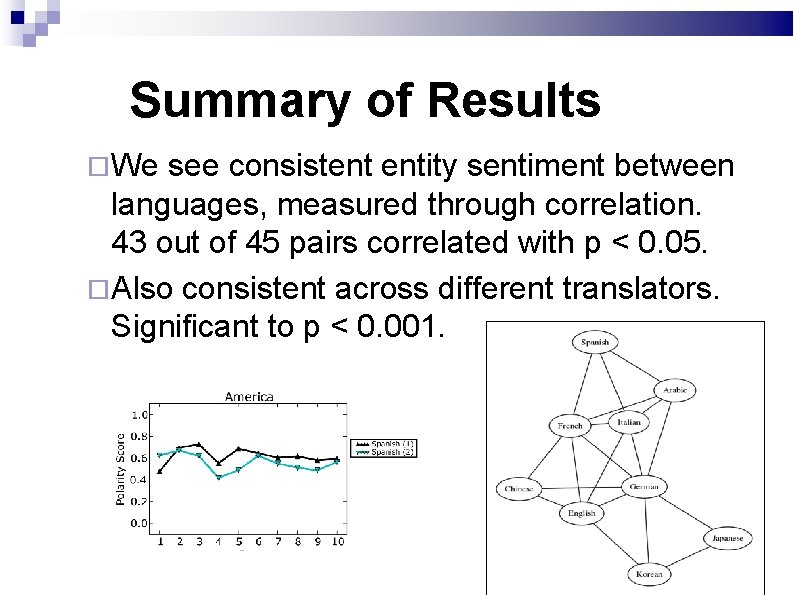

Summary of Results We see consistent entity sentiment between languages, measured through correlation. 43 out of 45 pairs correlated with p < 0.05. Also consistent across different translators. Significant to p < 0.001.

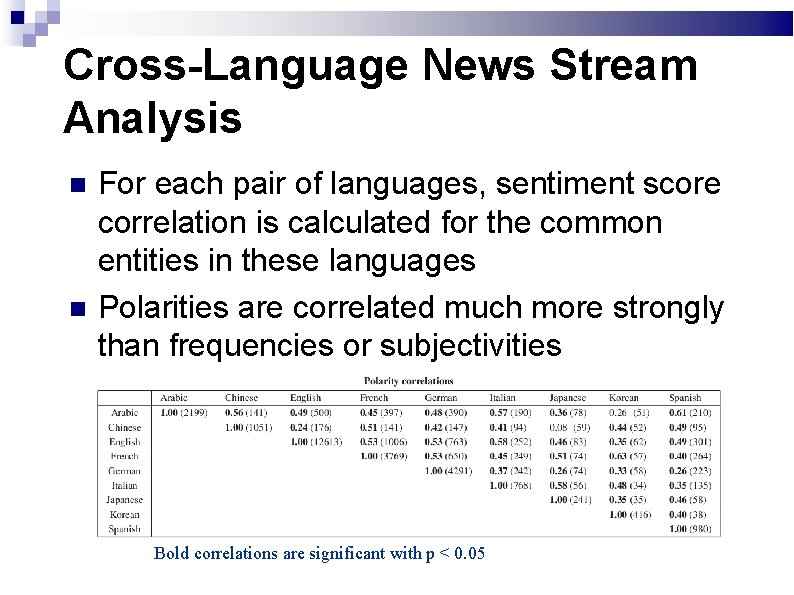

Cross-Language News Stream Analysis For each pair of languages, sentiment score correlation is calculated for the common entities in these languages. Polarities are correlated much more strongly than frequencies or subjectivities. Bold correlations are significant with p < 0.05.
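The per-pair computation described above can be sketched as a Pearson correlation over the entities that two languages share. Using Pearson's r is an assumption, since the slides say only "correlation". The scores below are invented, and the significance test (the p-values) is omitted because it needs a t-distribution routine from a statistics library.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)


def cross_language_correlation(scores_a, scores_b):
    """Correlate sentiment over the entities present in both languages."""
    common = sorted(set(scores_a) & set(scores_b))
    return pearson([scores_a[e] for e in common],
                   [scores_b[e] for e in common])


# Hypothetical per-country polarity scores in two languages.
lang_a = {"France": 0.2, "Iraq": -0.6, "Japan": 0.4, "Peru": 0.1}
lang_b = {"France": 0.4, "Iraq": -1.2, "Japan": 0.8, "Chile": 0.3}
r_ab = cross_language_correlation(lang_a, lang_b)
```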

Parallel Corpus Analysis European Commission Joint Research Centre's Acquis multilingual parallel corpus. No temporal information. Greater frequency and subjectivity correlation than in the news. Bold correlations are significant with p < 0.05.

Outline of Talk Lydia system architecture Spatial and temporal analysis Sentiment analysis Entity-oriented search Social network analysis Future directions

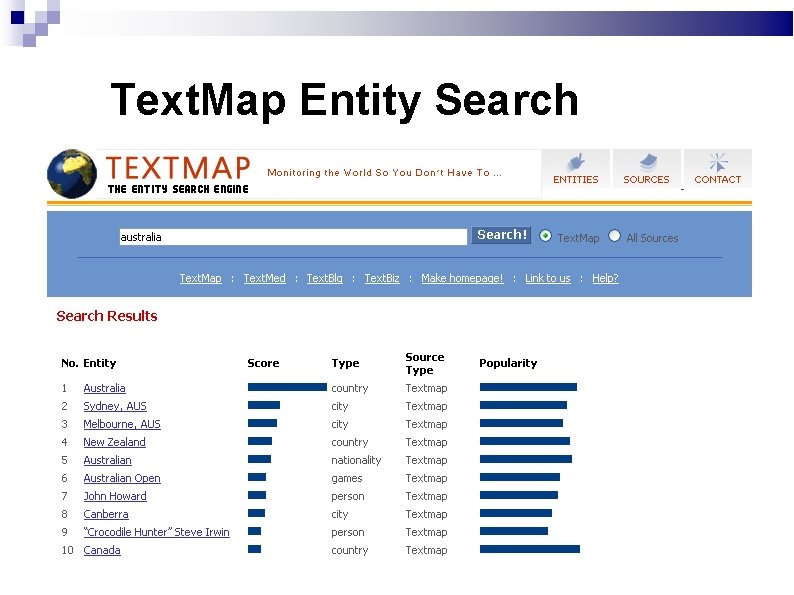

Entity-Oriented Search What if the user is not looking for documents, but for entities? Input: a text corpus Problem: return a ranked list of entities relevant to arbitrary search queries Mikhail Bautin and Steven Skiena. Concordance-Based Entity-Oriented Search. In Proc. IEEE/ACM Web Intelligence (WI 2007) 586-592.

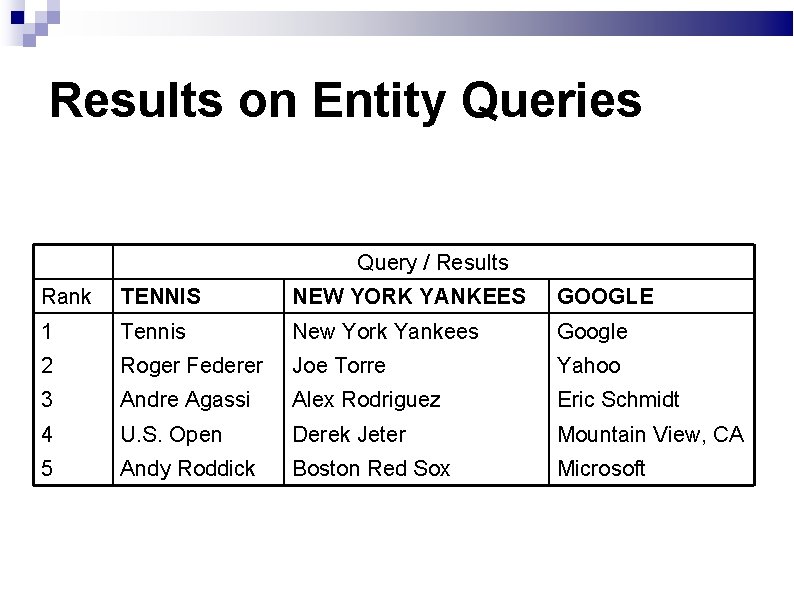

Results on Entity Queries

| Rank | TENNIS | NEW YORK YANKEES | GOOGLE |
|---|---|---|---|
| 1 | Tennis | New York Yankees | Google |
| 2 | Roger Federer | Joe Torre | Yahoo |
| 3 | Andre Agassi | Alex Rodriguez | Eric Schmidt |
| 4 | U.S. Open | Derek Jeter | Mountain View, CA |
| 5 | Andy Roddick | Boston Red Sox | Microsoft |

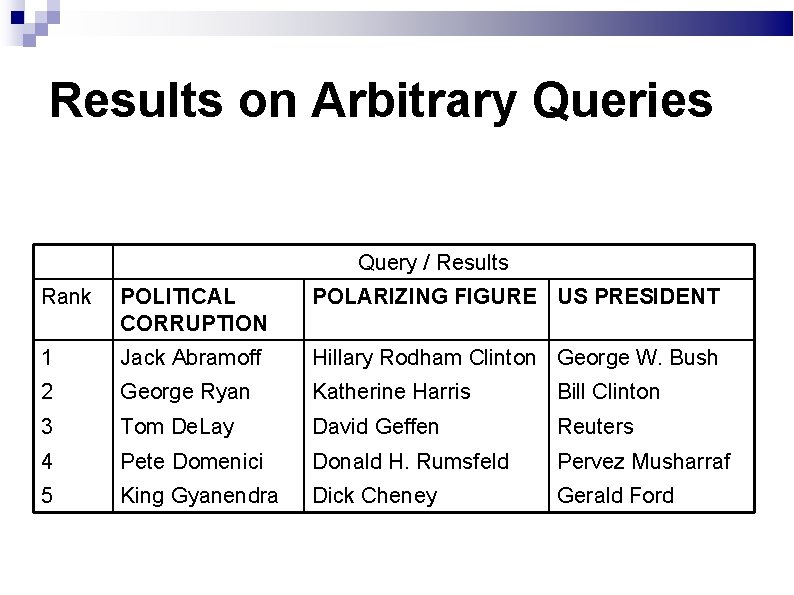

Results on Arbitrary Queries

| Rank | POLITICAL CORRUPTION | POLARIZING FIGURE | US PRESIDENT |
|---|---|---|---|
| 1 | Jack Abramoff | Hillary Rodham Clinton | George W. Bush |
| 2 | George Ryan | Katherine Harris | Bill Clinton |
| 3 | Tom DeLay | David Geffen | Reuters |
| 4 | Pete Domenici | Donald H. Rumsfeld | Pervez Musharraf |
| 5 | King Gyanendra | Dick Cheney | Gerald Ford |

Applications of Entity Search Augmented news and web search Provide entity results in addition to document results Provide hints for navigation (“see also” links) Improved encyclopedia search (e.g. “Microsoft Chairman” should return Bill Gates instead of Helmut Panke)

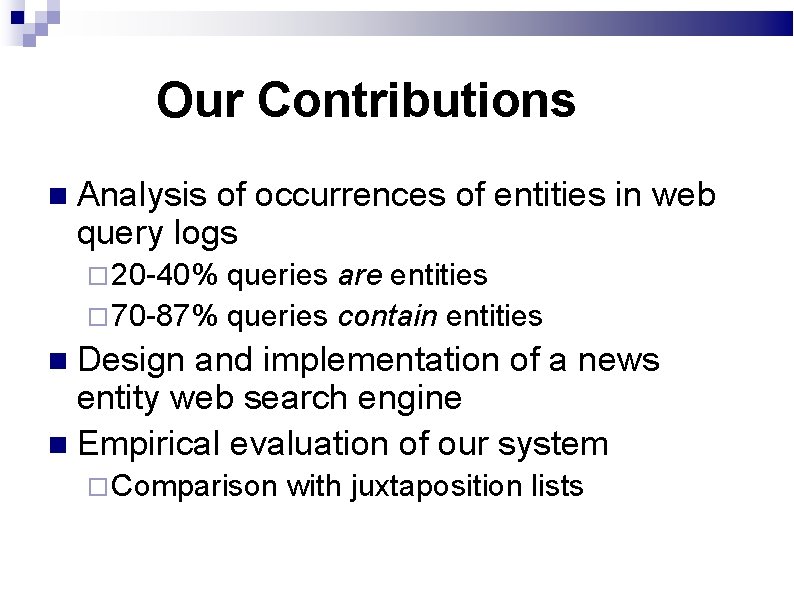

Our Contributions Analysis of occurrences of entities in web query logs 20-40% of queries are entities 70-87% of queries contain entities Design and implementation of a news entity web search engine Empirical evaluation of our system Comparison with juxtaposition lists

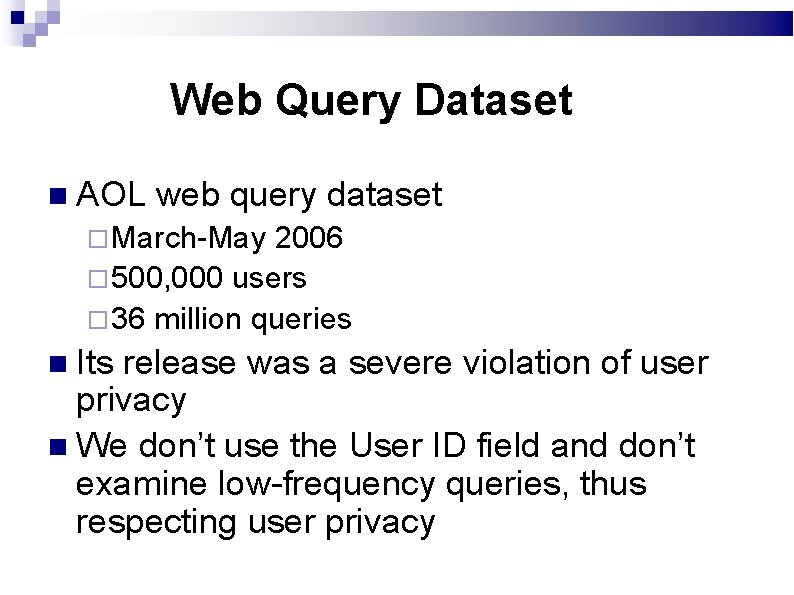

Web Query Dataset AOL web query dataset March-May 2006 500,000 users 36 million queries Its release was a severe violation of user privacy We don’t use the User ID field and don’t examine low-frequency queries, thus respecting user privacy

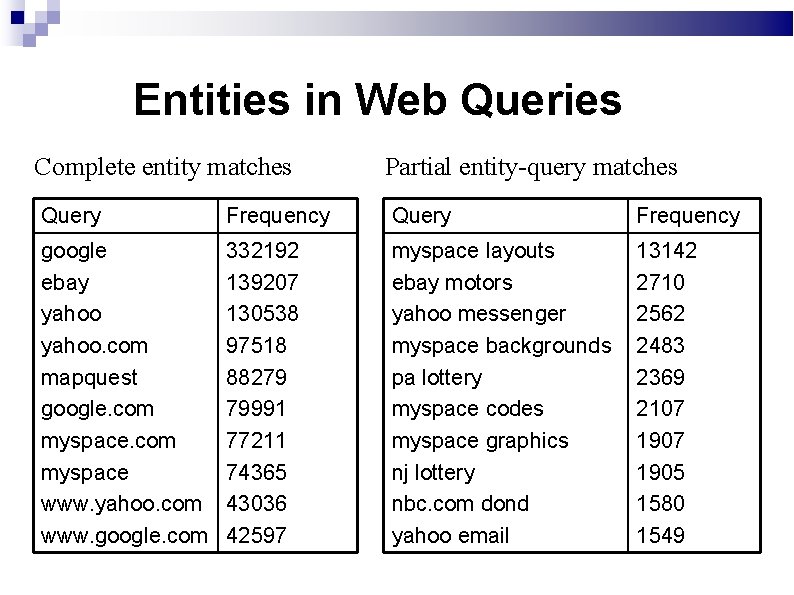

Entities in Web Queries Complete entity matches. Queries: google, ebay, yahoo.com, mapquest, google.com, myspace, www.yahoo.com, www.google.com. Frequencies: 332192, 139207, 130538, 97518, 88279, 79991, 77211, 74365, 43036, 42597. Partial entity-query matches. Queries: myspace layouts, ebay motors, yahoo messenger, myspace backgrounds, pa lottery, myspace codes, myspace graphics, nj lottery, nbc.com dond, yahoo email. Frequencies: 13142, 2710, 2562, 2483, 2369, 2107, 1905, 1580, 1549.

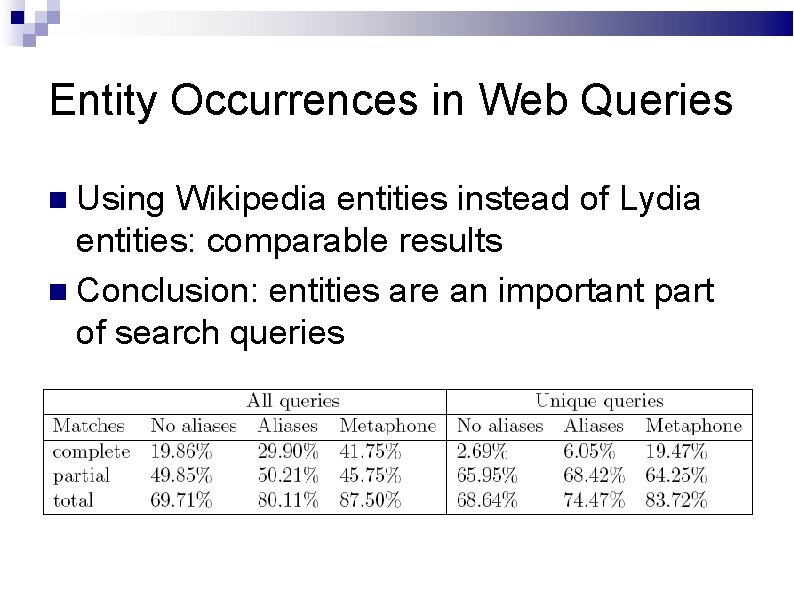

Entity Occurrences in Web Queries Comparing Lydia entities with AOL queries Complete queries: 18% (all) vs 2% (unique) Complete matches increase from left to right: more entities recognized Partial matches decrease: partial matches become complete matches

Entity Occurrences in Web Queries Using Wikipedia entities instead of Lydia entities: comparable results Conclusion: entities are an important part of search queries

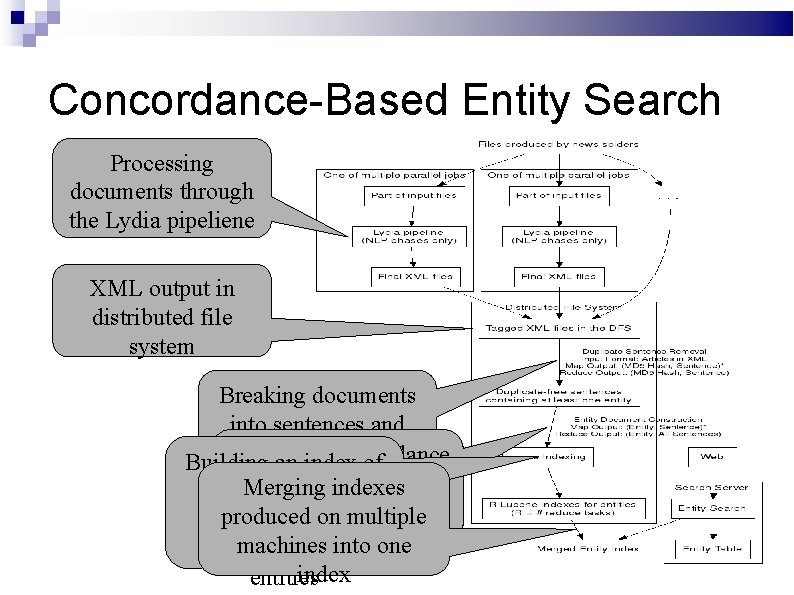

Concordance-Based Entity Search Our approach: Identify entities in the given text Construct a text document for each entity Search these documents using an existing search library (e. g. Lucene)

Concordance-Based Entity Search 1. Processing documents through the Lydia pipeline (XML output in distributed file system) 2. Breaking documents into sentences and removing duplicate sentences 3. Producing concordance documents for every entity by merging sentences corresponding to entities 4. Building an index of concordance documents 5. Merging indexes produced on multiple machines into one index
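The concordance idea can be sketched in a few lines: collect the de-duplicated sentences mentioning each entity into one document per entity, then rank entities by how well their document matches a query. This is an illustration only. Plain substring matching stands in for Lydia's entity recognition, and a raw term count stands in for a search library such as Lucene. Document-length normalization is left out on purpose.

```python
import re
from collections import defaultdict


def build_concordances(sentences, entities):
    """Map each entity to the de-duplicated sentences that mention it."""
    concordance = defaultdict(list)
    seen = set()
    for sentence in sentences:
        if sentence in seen:            # drop exact duplicate sentences
            continue
        seen.add(sentence)
        for entity in entities:
            if entity in sentence:
                concordance[entity].append(sentence)
    return concordance


def search(concordance, query):
    """Rank entities by the raw count of query terms in their concordance."""
    terms = query.lower().split()
    scores = {}
    for entity, sentences in concordance.items():
        words = re.findall(r"[a-z0-9']+", " ".join(sentences).lower())
        score = sum(words.count(term) for term in terms)
        if score:
            scores[entity] = score
    # Highest score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda e: (-scores[e], e))


toy_sentences = [
    "George W. Bush defended the missile defense plan.",
    "George W. Bush defended the missile defense plan.",
    "Roger Federer won the tennis final.",
    "Andre Agassi retired from tennis.",
]
toy_entities = ["George W. Bush", "Roger Federer", "Andre Agassi"]
toy_concordance = build_concordances(toy_sentences, toy_entities)
```

Calling `search(toy_concordance, "tennis")` returns the two tennis players and omits George W. Bush, whose concordance never mentions the term.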

Concordance File for George W. Bush Earlier this week George W. Bush defended the missile defense plan for Eastern Europe. 70 names were on the list , including national figures like George W. Bush. I would n’t approve of him again unless George W. Bush stopped the war in Iraq. And , for that opportunity , the Democrats can thank George W. Bush. Another State Department official confirmed her status as the most senior Arab-American in the George W. Bush administration. Now , more than 2 years later , the majority of those voters say they are satisfied with the George W. Bush presidency.

Searching the Concordances Indexing and searching these concordance documents using Lucene To remove bias towards infrequent entities, we remove document length normalization To deal with duplicate and near-duplicate articles, we remove duplicate sentences

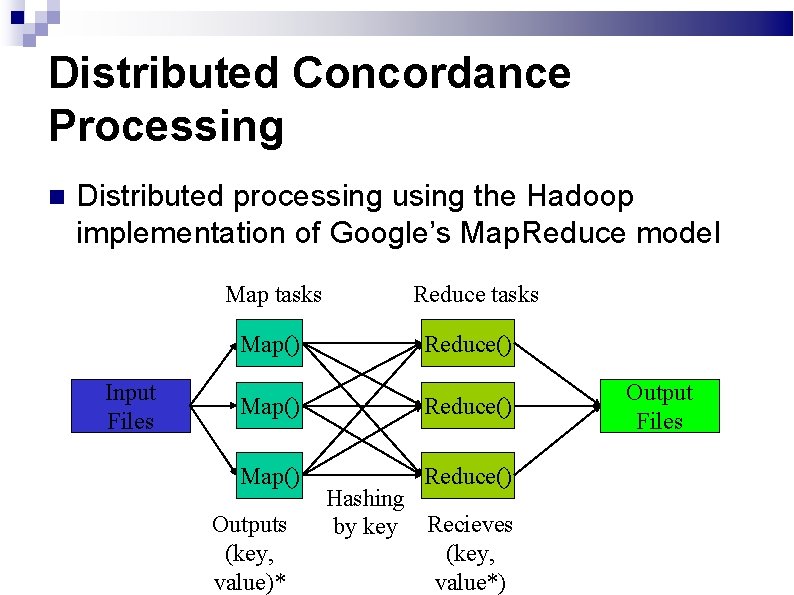

Distributed Concordance Processing Distributed processing using the Hadoop implementation of Google’s MapReduce model: Input files → Map tasks, where Map() outputs (key, value)* → Hashing by key → Reduce tasks, where Reduce() receives (key, value*) → Output files
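The data flow above can be mimicked in a single Python process to make the roles of the phases concrete. This is a sketch of the MapReduce model only and does not use the Hadoop API. The record layout and the names `map_fn`, `reduce_fn`, and `run_mapreduce` are hypothetical.

```python
from collections import defaultdict


def map_fn(record):
    """Map(): emit (entity, 1) for every entity mentioned in one article."""
    _day, entities = record
    for entity in entities:
        yield (entity, 1)


def reduce_fn(key, values):
    """Reduce(): receives one key with all of its values and sums them."""
    return key, sum(values)


def run_mapreduce(records, map_fn, reduce_fn, n_reducers=2):
    # Shuffle phase: hash each key to pick the reducer that will own it.
    partitions = [defaultdict(list) for _ in range(n_reducers)]
    for record in records:
        for key, value in map_fn(record):
            partitions[hash(key) % n_reducers][key].append(value)
    # Reduce phase: each partition is processed independently.
    output = {}
    for partition in partitions:
        for key, values in partition.items():
            out_key, out_value = reduce_fn(key, values)
            output[out_key] = out_value
    return output


toy_records = [
    ("2007-05-01", ["George W. Bush", "Iraq"]),
    ("2007-05-02", ["Iraq"]),
    ("2007-05-03", ["George W. Bush", "Iraq", "Google"]),
]
entity_counts = run_mapreduce(toy_records, map_fn, reduce_fn)
```

Because every occurrence of a key lands on the same reducer, the result does not depend on how many reducers are used.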

TextMap Entity Search

Decreasing Relevance with Time Time-dependent indexing and search: news arrives every day, so time should take part in scoring. Solution: a separate document for each (entity, month). The search result score is a function of time.

Concordance-Based Entity Search Max-score aggregation of results
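With one document per (entity, month), each entity can match a query several times, so the per-month scores have to be weighted by age and then combined. The sketch below takes the maximum, following the max-score aggregation named above. The exponential decay and its six-month half-life are assumptions made for illustration. The slides say only that the score is a function of time.

```python
def time_weighted_entity_scores(hits, query_month, half_life=6.0):
    """Combine per-(entity, month) hits into one score per entity.

    hits: iterable of (entity, month_index, text_score), where month_index
    counts months from an arbitrary origin. Older hits are down-weighted by
    an assumed exponential decay, and the best weighted hit wins.
    """
    best = {}
    for entity, month, score in hits:
        age = max(0, query_month - month)
        weighted = score * 0.5 ** (age / half_life)
        if weighted > best.get(entity, 0.0):
            best[entity] = weighted
    return best


# Hypothetical hits: a strong but year-old match loses to a recent one.
toy_hits = [
    ("Eliot Spitzer", 24, 4.0),
    ("Eliot Spitzer", 12, 6.0),
    ("Bill Gates", 18, 6.0),
]
toy_scores = time_weighted_entity_scores(toy_hits, query_month=24)
```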

Evaluating Search Quality No standardized benchmarks exist for entity search TREC benchmarks: ad hoc, QA Not applicable directly to entity search Juxtaposition score: a measure of how strongly entities are related

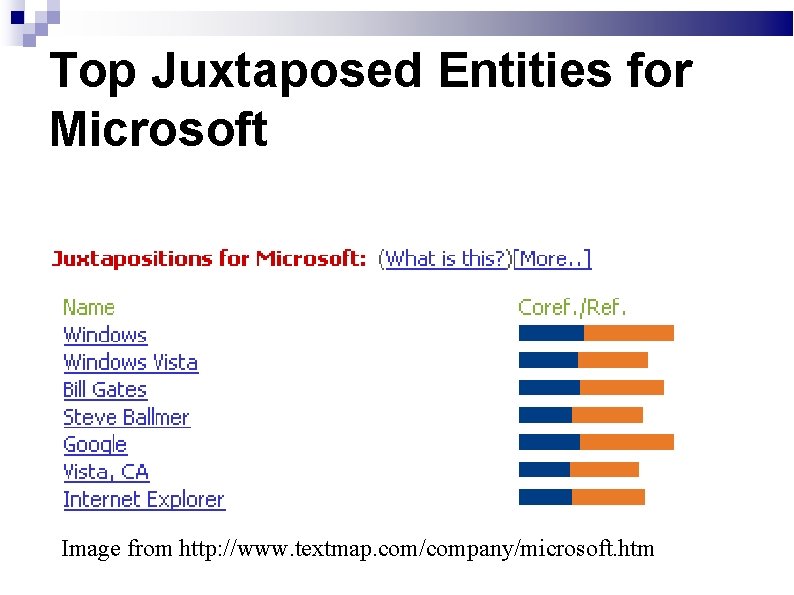

Top Juxtaposed Entities for Microsoft Image from http://www.textmap.com/company/microsoft.htm

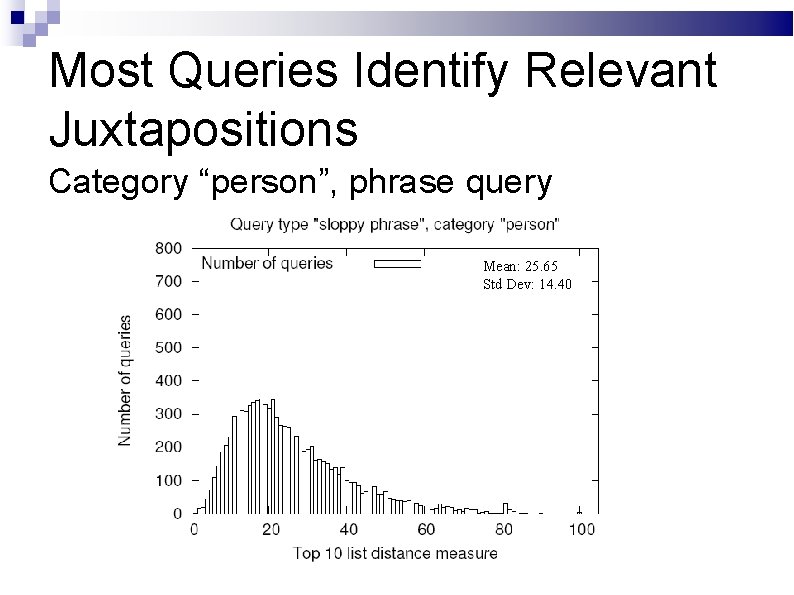

Most Queries Identify Relevant Juxtapositions Category “person”, phrase query Mean: 25.65 Std Dev: 14.40

Performance by Category Kendall distance scores are divided by n(n-1)/2 = 45, so that 1 = reverse lists and 2.2 ≈ disjoint lists. Last name queries: results are dominated by persons with that last name.

Outline of Talk Lydia system architecture Spatial and temporal analysis Sentiment analysis Entity-oriented search Social network analysis Future directions

Entity Networks Statistically-significant entity juxtapositions naturally define networks of news entities. Our experiments show that these networks are scale-free, i.e. observe power law distributions on both entity reference frequency and vertex degree. These networks are useful for visualization, traversal, and more.

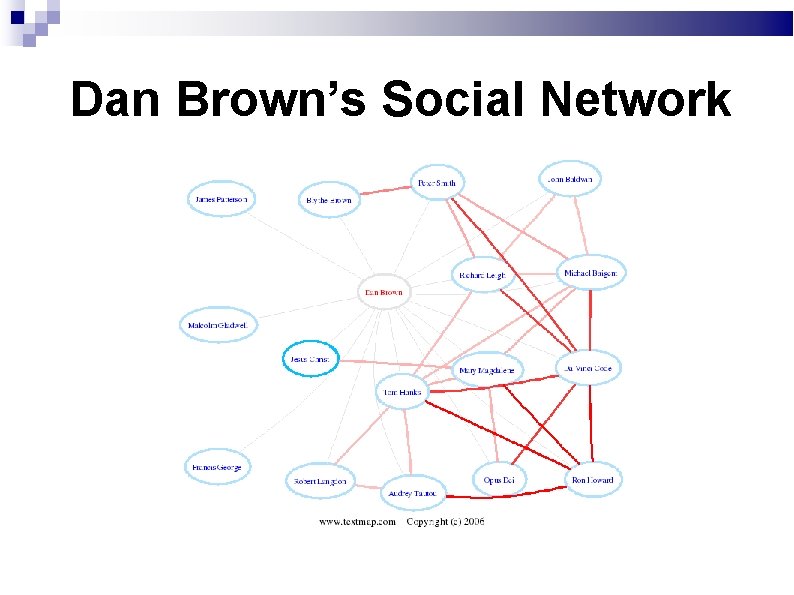

Dan Brown’s Social Network

Discovering Entity Communities Identifying natural communities of news entities facilitates interesting analysis, e.g. Democrats, technologists, Italians, actors, baseball players, New Yorkers. Curated rosters of such communities are usually unobtainable, incomplete, and/or ambiguous.

Expanding Entity Communities Our approach: grow a large community from a small seed set of known members. Selected representatives of any distinct community are easy to identify (e. g. Wikipedia) We incrementally expand the network to include the entity with the most significant in-group neighborhood.
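The incremental expansion can be sketched as a greedy loop over an entity graph. The slides do not define "most significant in-group neighborhood", so this sketch substitutes a simple proxy: the fraction of a candidate's neighbors that already belong to the community. The toy graph is invented.

```python
def expand_community(graph, seeds, steps):
    """Greedily grow a community from seed members.

    graph: entity -> set of neighboring entities. At each step the candidate
    with the largest fraction of in-community neighbors is added (an assumed
    stand-in for a proper significance test).
    """
    community = list(seeds)
    members = set(seeds)

    def in_group_fraction(entity):
        neighbors = graph.get(entity, ())
        if not neighbors:
            return 0.0
        return sum(1 for n in neighbors if n in members) / len(neighbors)

    for _ in range(steps):
        candidates = {n for m in members for n in graph.get(m, ())} - members
        if not candidates:
            break
        # sorted() makes tie-breaking deterministic.
        best = max(sorted(candidates), key=in_group_fraction)
        community.append(best)
        members.add(best)
    return community


# Toy graph: four baseball figures plus three politicians.
toy_graph = {
    "Jeter": {"Rodriguez", "Torre", "Ortiz"},
    "Rodriguez": {"Jeter", "Torre", "Ortiz"},
    "Torre": {"Jeter", "Rodriguez", "Bloomberg"},
    "Ortiz": {"Jeter", "Rodriguez"},
    "Bloomberg": {"Torre", "Clinton", "Spitzer"},
    "Clinton": {"Bloomberg", "Spitzer"},
    "Spitzer": {"Bloomberg", "Clinton"},
}
grown = expand_community(toy_graph, ["Jeter", "Rodriguez"], steps=2)
```

In this toy graph a third step would add a politician, which is the failure mode that motivates a stopping rule.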

Community Extension Criteria

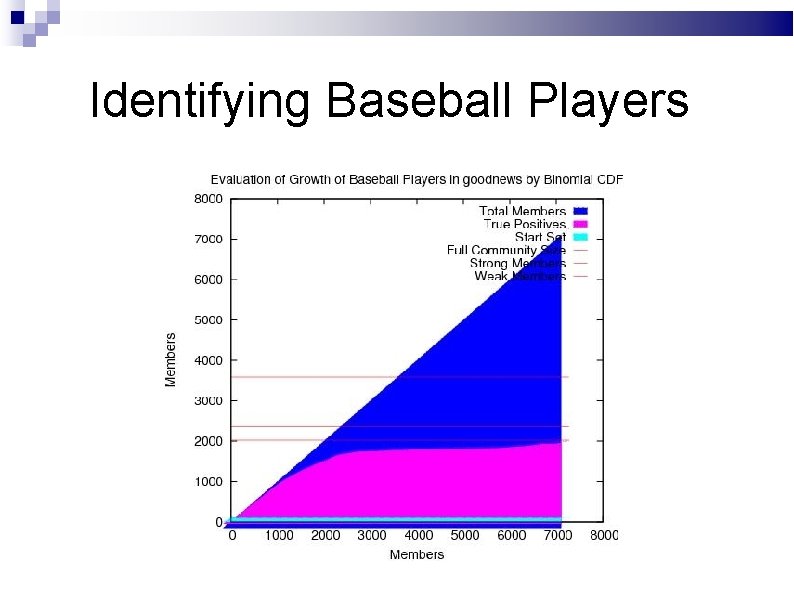

Identifying Baseball Players

But when do we stop? This incremental process eventually starts adding members from outside the community, destroying the result. We partition our known members into seed and validation sets. The gap between insertions of validation members grows substantially as soon as we leave the community.
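One way to turn the validation-gap observation into a stopping rule is to cut the insertion sequence once too many consecutive insertions pass without a validation member. The threshold `max_gap` and the choice to truncate at the last validation hit are both assumptions. The slides do not give the exact rule.

```python
def cutoff_by_validation_gap(insertions, validation, max_gap):
    """Truncate the insertion order once validation members stop appearing.

    insertions: entities in the order the expansion added them.
    validation: held-out known members of the community.
    Returns the prefix ending at the last validation member seen before a
    run of more than max_gap insertions with no validation member.
    """
    last_hit = -1   # index of the most recent validation member
    for i, entity in enumerate(insertions):
        if entity in validation:
            last_hit = i
        elif i - last_hit > max_gap:
            return insertions[:last_hit + 1]
    return insertions


toy_order = ["a", "v1", "b", "v2", "x", "y", "z", "v3"]
kept = cutoff_by_validation_gap(toy_order, {"v1", "v2", "v3"}, max_gap=2)
```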

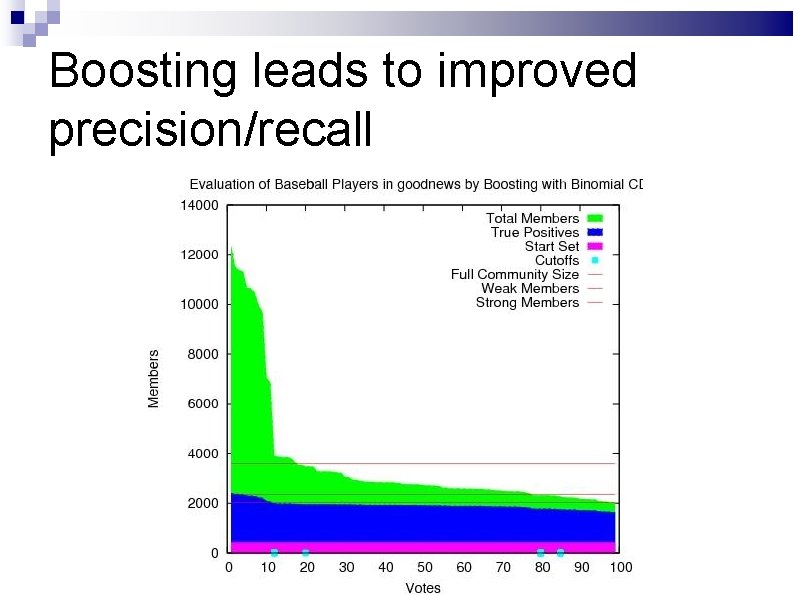

Boosting leads to improved precision/recall

When is a baseball player not a baseball player?

Outline of Talk Lydia system architecture Spatial and temporal analysis Sentiment analysis Entity-oriented search Social network analysis Future directions

Future Directions Improved data access, to support… Larger scale / historical document corpus analysis Foreign-language news analysis Event-focused relation extraction Financial modeling and analysis Collaboration with political and social scientists Our technology is now being commercialized by General Sentiment

The Lydia Team

… and the Lydia Cluster

Who Gets the Scoop?

New Orleans – Animation

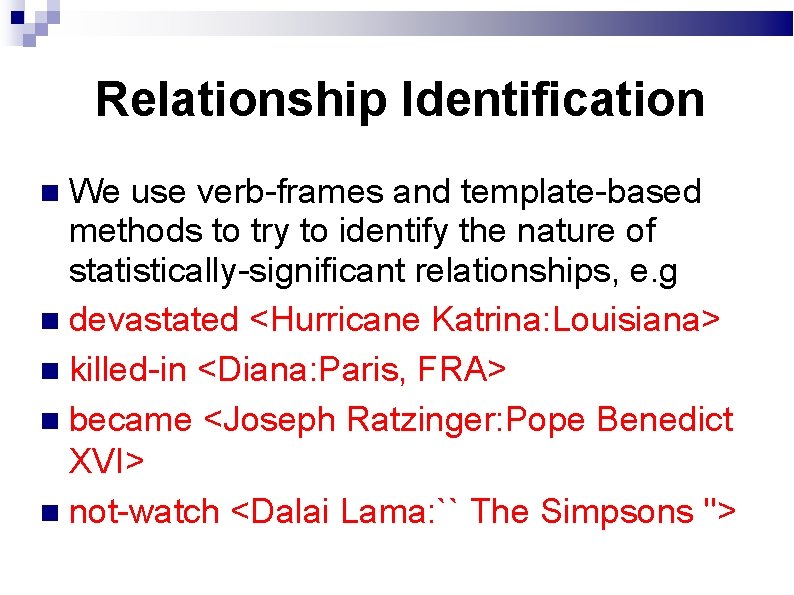

Relationship Identification We use verb-frames and template-based methods to try to identify the nature of statistically-significant relationships, e.g. devastated <Hurricane Katrina: Louisiana> killed-in <Diana: Paris, FRA> became <Joseph Ratzinger: Pope Benedict XVI> not-watch <Dalai Lama: `` The Simpsons ''>

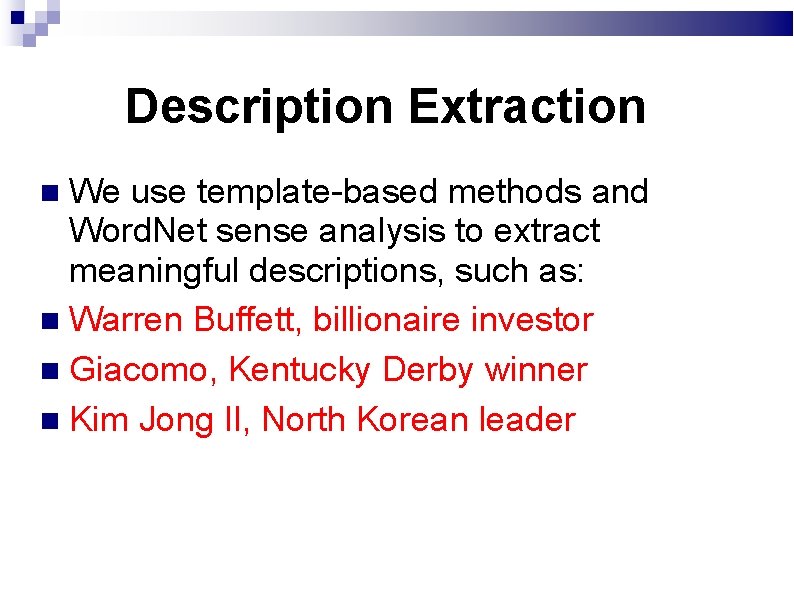

Description Extraction We use template-based methods and WordNet sense analysis to extract meaningful descriptions, such as: Warren Buffett, billionaire investor Giacomo, Kentucky Derby winner Kim Jong Il, North Korean leader

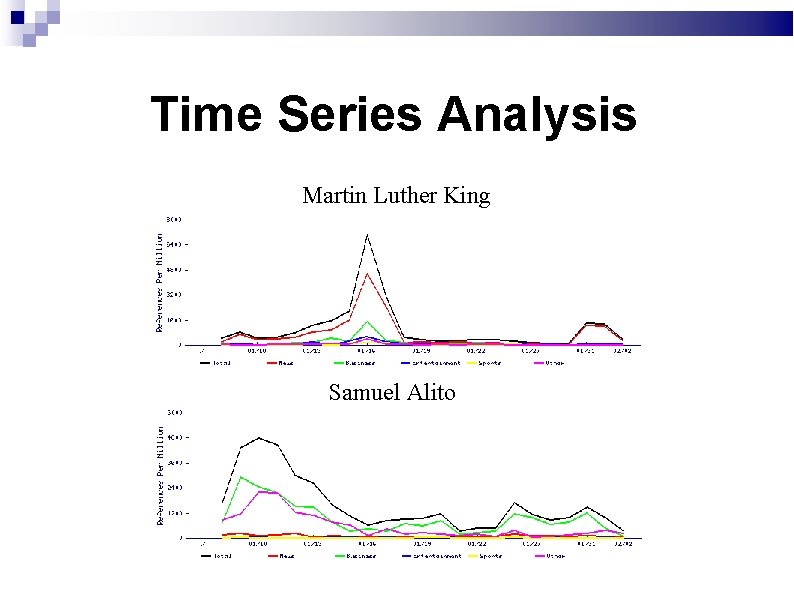

Time Series Analysis Martin Luther King Samuel Alito

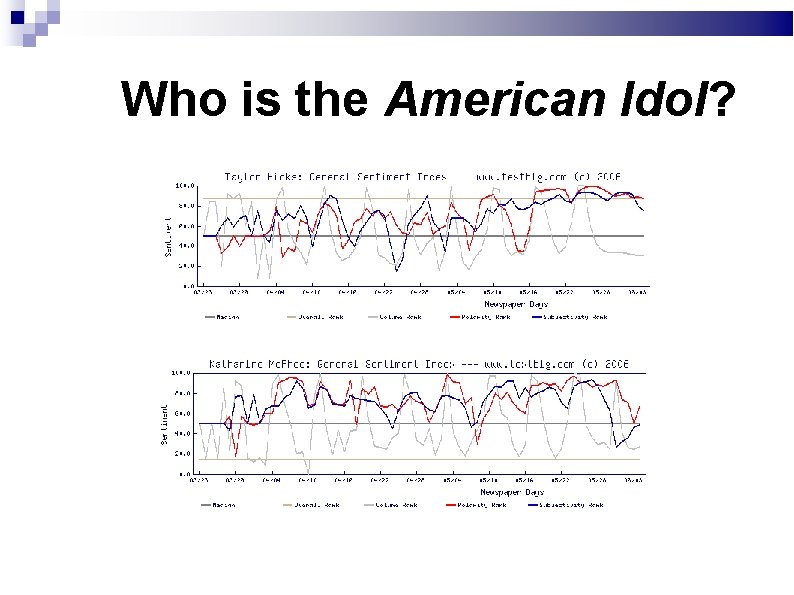

Who is the American Idol?

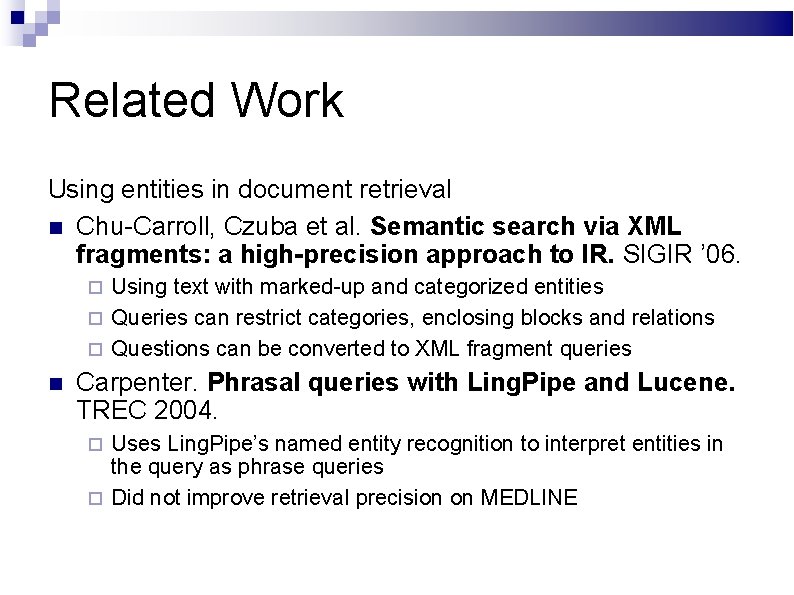

Related Work Using entities in document retrieval Chu-Carroll, Czuba et al. Semantic search via XML fragments: a high-precision approach to IR. SIGIR ’06. Using text with marked-up and categorized entities Queries can restrict categories, enclosing blocks and relations Questions can be converted to XML fragment queries Carpenter. Phrasal queries with LingPipe and Lucene. TREC 2004. Uses LingPipe’s named entity recognition to interpret entities in the query as phrase queries Did not improve retrieval precision on MEDLINE

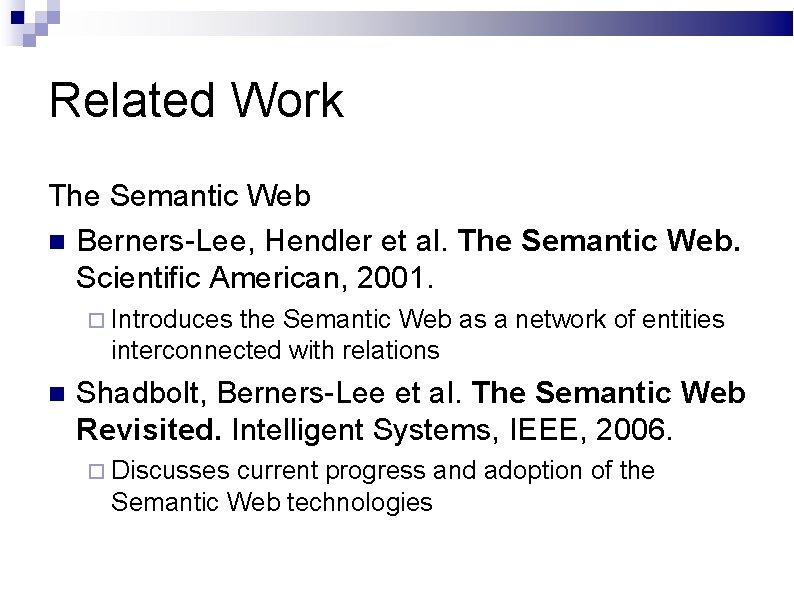

Related Work The Semantic Web Berners-Lee, Hendler et al. The Semantic Web. Scientific American, 2001. Introduces the Semantic Web as a network of entities interconnected with relations Shadbolt, Berners-Lee et al. The Semantic Web Revisited. Intelligent Systems, IEEE, 2006. Discusses current progress and adoption of the Semantic Web technologies

Related Work Relation extraction Banko et al. Open Information Extraction from the Web. International Joint Conference on Artificial Intelligence (IJCAI), 2007. Open Information Extraction – unsupervised relation extraction from unstructured text TextRunner: a scalable system using lightweight techniques Pasca et al. (Google Labs). Names and Similarities on the Web: Fact Extraction in the Fast Lane. ACL ’06. Iterative approach for relation extraction starting from seed facts No domain knowledge or lexicons are used Precision of 90% and recall of 70-90% are reported on Person-BornIn-Year relations.

Entity Occurrences in Web Queries Observations: Variations in tokenization (e.g. “toys r us”), plurals, ‘&’ vs ‘and’, quotation etc. Constructing a list of aliases Misspellings Using Metaphone hashing

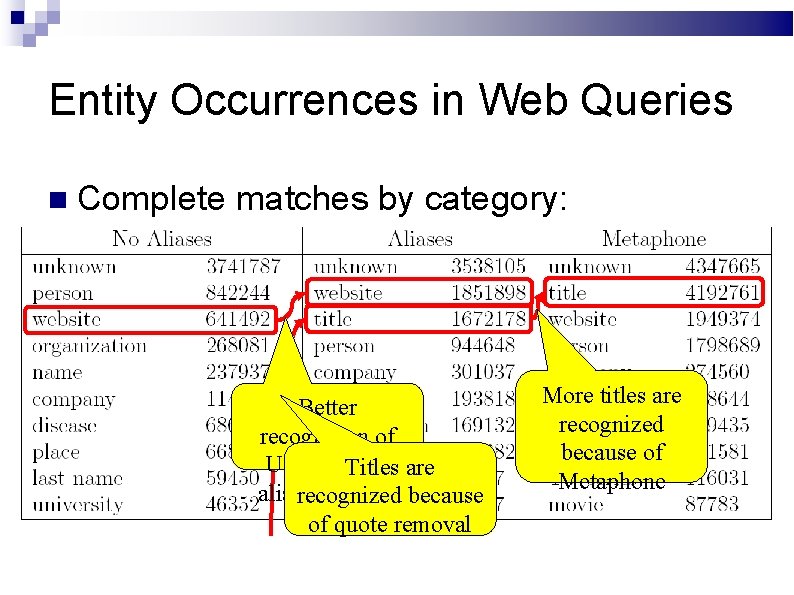

Entity Occurrences in Web Queries Complete matches by category: Better recognition of URLs due to alias detection. Titles are recognized because of quote removal. More titles are recognized because of Metaphone.

Entity Occurrences in Web Queries Partial matches by category: <300,000 partial website matches vs. nearly 2 million complete matches: most website queries have nothing but a URL. Half of the partial matches turned out to be misspelled complete matches.

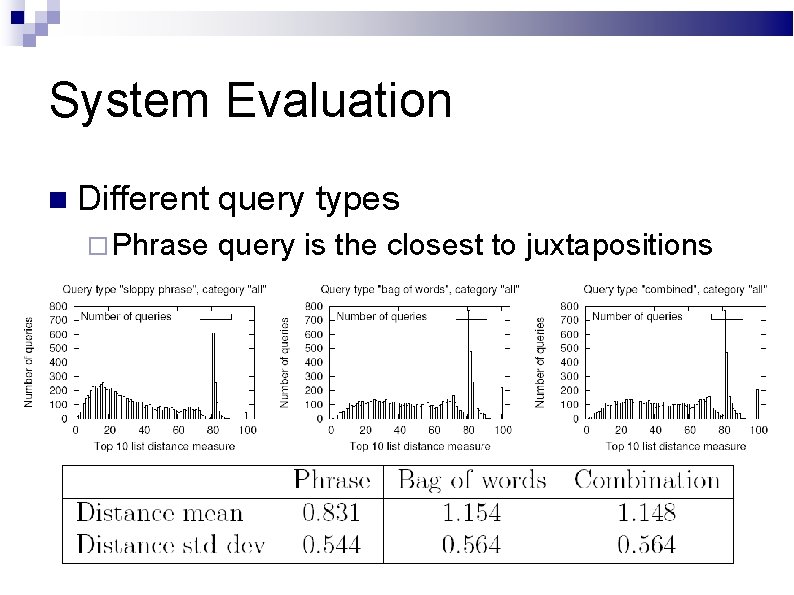

System Evaluation Search results vs. juxtapositions comparison Adding the queried entity at the top of the juxtaposition list Kendall’s distance measure: R. Fagin, R. Kumar, and D. Sivakumar. Comparing top k lists. ACM-SIAM SODA 2003. For top-10 lists: 0 = identical lists, 45 = reverse lists, 100 = disjoint lists.
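The three reference values quoted above (0, 45, and 100 for top-10 lists) are what Fagin, Kumar, and Sivakumar's Kendall distance with penalty parameter p = 0 produces. The sketch below implements that distance from its case-by-case definition. The choice of p = 0 is inferred from the quoted values, not stated in the slides.

```python
from itertools import combinations


def kendall_topk(list1, list2, p=0.0):
    """Kendall distance between two top-k lists with penalty parameter p."""
    r1 = {x: i for i, x in enumerate(list1)}
    r2 = {x: i for i, x in enumerate(list2)}
    total = 0.0
    for i, j in combinations(sorted(set(r1) | set(r2)), 2):
        in1 = (i in r1, j in r1)
        in2 = (i in r2, j in r2)
        if all(in1) and all(in2):
            # Both items ranked in both lists: penalize opposite order.
            if (r1[i] - r1[j]) * (r2[i] - r2[j]) < 0:
                total += 1
        elif all(in1) and any(in2):
            # Both in list1, exactly one in list2 (implicitly ranked first
            # there): penalize if list1 ranks the missing item ahead.
            present, absent = (i, j) if in2[0] else (j, i)
            if r1[absent] < r1[present]:
                total += 1
        elif all(in2) and any(in1):
            present, absent = (i, j) if in1[0] else (j, i)
            if r2[absent] < r2[present]:
                total += 1
        elif all(in1) or all(in2):
            # Both in one list and neither in the other: order unknown.
            total += p
        else:
            # Each item appears in a different list only: always a penalty.
            total += 1
    return total


top10 = list("abcdefghij")
identical = kendall_topk(top10, top10)
reverse = kendall_topk(top10, top10[::-1])
disjoint = kendall_topk(top10, list("klmnopqrst"))
```

Dividing by n(n-1)/2 = 45 rescales these so that reversed lists score 1 and disjoint lists score about 2.2.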

System Evaluation Different query types Phrase query is the closest to juxtapositions

Future Work Alternative evaluation techniques Converting TREC QA list queries to keyword-based entity queries Manually scoring entity retrieval results Customizing our approach for product review search Predicting target entity category based on query and filtering the results accordingly

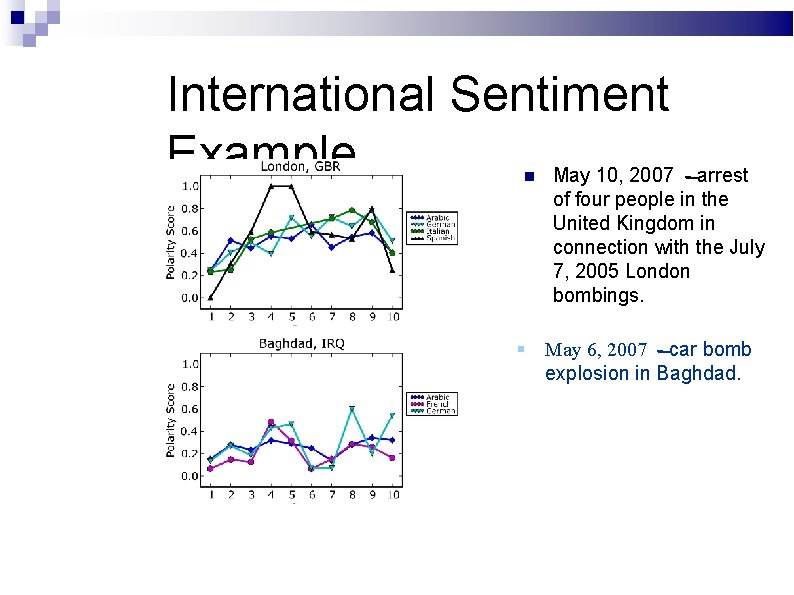

International Sentiment Example May 10, 2007 - arrest of four people in the United Kingdom in connection with the July 7, 2005 London bombings. May 6, 2007 - car bomb explosion in Baghdad.

Related Work Cesarano, C.; Picariello, A.; Reforgiato, D.; and Subrahmanian, V. 2007. The OASYS 2.0 Opinion Analysis System. In ICWSM ’07, 313–314. Mihalcea, R.; Banea, C.; and Wiebe, J. 2007. Learning multilingual subjective language via cross-lingual projections. In ACL ’07, 976–983. Yao, J.; Wu, G.; Liu, J.; and Zheng, Y. 2006. Using bilingual lexicon to judge sentiment orientation of Chinese words. Hiroshi, K.; Tetsuya, N.; and Hideo, W. 2004. Deeper sentiment analysis using machine translation technology. In COLING ’04, 494. Morristown, NJ, USA: ACL.

Overview of Lydia / TextMap Spidering – text is retrieved from a given site on a daily basis using semi-custom spidering agents. Normalization – clean text is extracted with semi-custom parsers and formatted for our pipeline. Text Markup – annotates parts of the source text for storage and analysis. Back Office Operations – we aggregate entity frequency and relational data for a variety of statistical analyses.

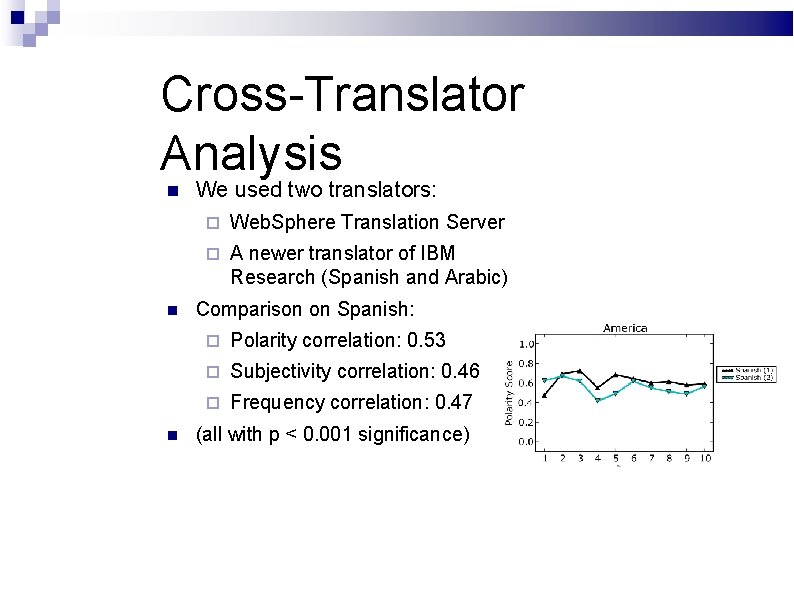

Cross-Translator Analysis We used two translators: WebSphere Translation Server, and a newer translator from IBM Research (Spanish and Arabic). Comparison on Spanish: Polarity correlation: 0.53 Subjectivity correlation: 0.46 Frequency correlation: 0.47 (all with p < 0.001 significance)

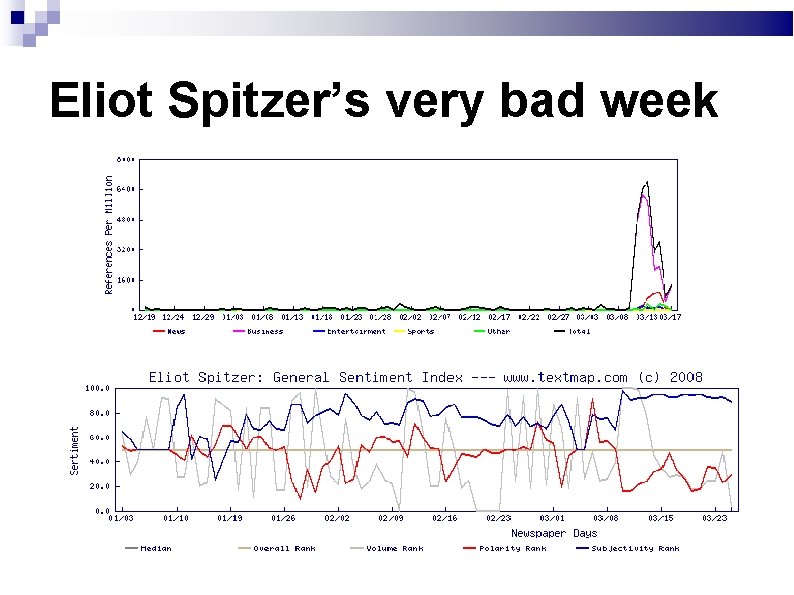

Eliot Spitzer’s very bad week

Eliot Spitzer’s new friends
