Neurocognitive Informatics Manifesto. Włodzisław Duch, Department of Informatics


- Slides: 55

Neurocognitive Informatics Manifesto

Włodzisław Duch, Department of Informatics, Nicolaus Copernicus University, Toruń, Poland. Google: W. Duch. IMS’09, Kunming.

Plan

1. Neurocognitive informatics and information management.
2. Meaning and words in the brain.
3. Neurocognitive approach to natural language.
4. Insight and the mute half of the brain.
5. Intuition and non-conscious thinking.
6. Examples of practical applications.
7. What else is needed? Mental models.

Neurocognitive informatics

Computational Intelligence: An International Journal (1984), plus 10 other journals with “Computational Intelligence” in the title. D. Poole, A. Mackworth, R. Goebel, Computational Intelligence: A Logical Approach (OUP 1998) is a GOFAI book on logic and reasoning.

- CI: lower cognitive functions, perception, signal analysis, action control, sensorimotor behavior.
- AI: higher cognitive functions, thinking, reasoning, planning etc.
- Neurocognitive informatics: brain processes can be a great inspiration for AI algorithms, if only we could understand them.

What are the neurons doing? Perceptrons, the basic units in multilayer perceptron networks, use threshold logic; this is the inspiration behind artificial neural networks.

What are the networks doing? Specific sensory/motor system transformations, implementing various types of memory, estimating similarity.

How do higher cognitive functions map to brain activity? Still hard, but … Neurocognitive informatics = abstractions of this process.

Info organization

Best: ask an expert. Second best: organize info the way it is organized in the brain of an expert.

- Many books on mind maps.
- Many software packages.
- TheBrain (www.thebrain.com): an interface that makes hierarchical maps of Internet links.
- Other software for graphical representation of info.
- Our implementation (Szymanski): WordNet and Wikipedia graphs; an extension to similarity is coming.

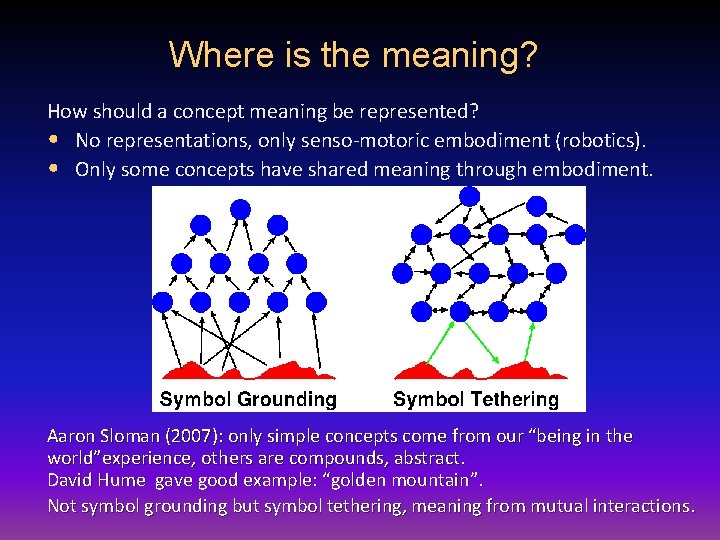

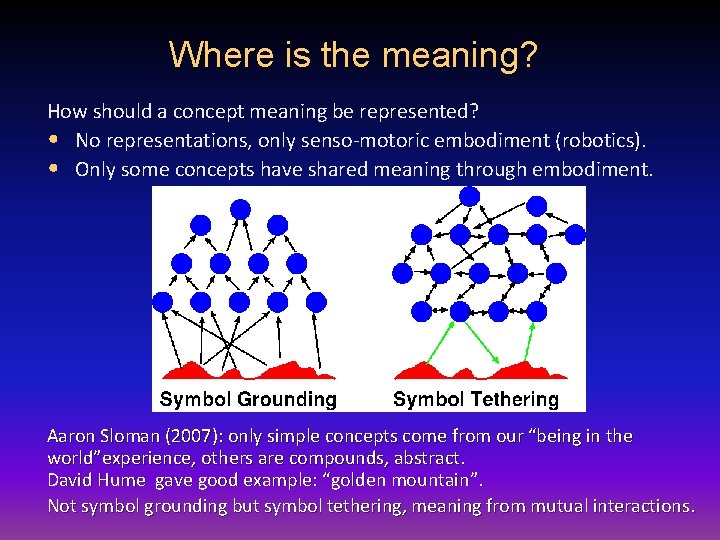

Where is the meaning?

How should the meaning of a concept be represented?

- No representations, only sensorimotor embodiment (robotics).
- Only some concepts have shared meaning through embodiment.

Aaron Sloman (2007): only simple concepts come from our “being in the world” experience; others are compound and abstract. David Hume gave a good example: the “golden mountain”. Not symbol grounding but symbol tethering: meaning comes from mutual interactions.

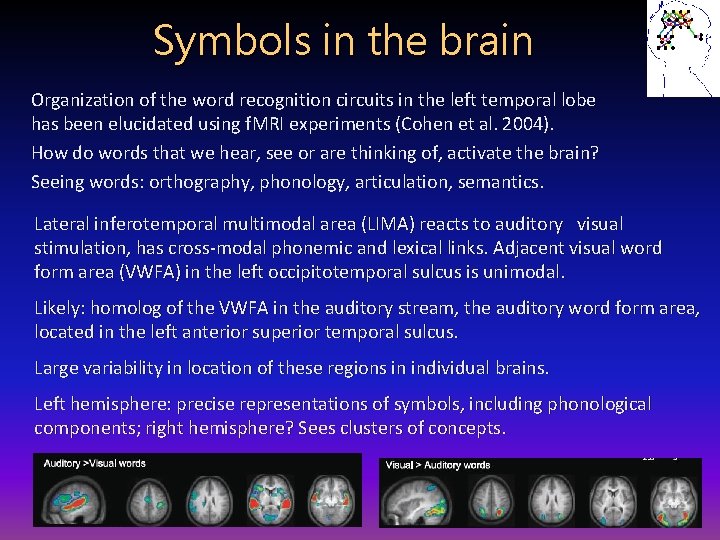

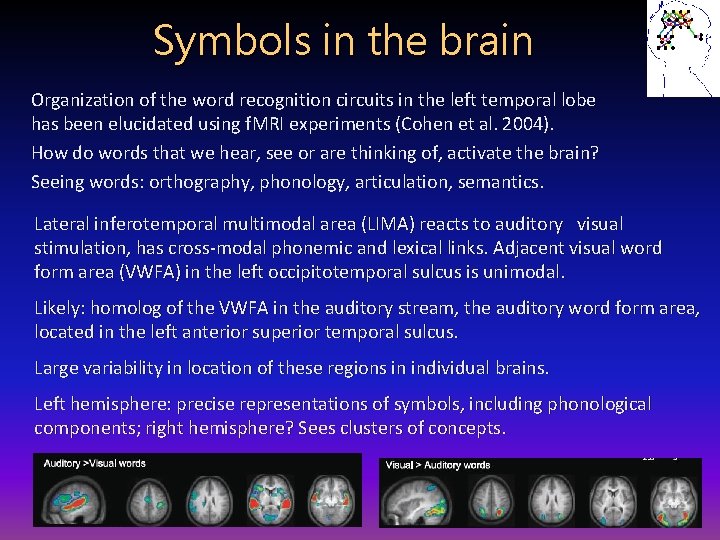

Symbols in the brain

The organization of the word recognition circuits in the left temporal lobe has been elucidated using fMRI experiments (Cohen et al. 2004). How do words that we hear, see or think of activate the brain?

Seeing words: orthography, phonology, articulation, semantics.

The lateral inferotemporal multimodal area (LIMA) reacts to both auditory and visual stimulation and has cross-modal phonemic and lexical links. The adjacent visual word form area (VWFA) in the left occipitotemporal sulcus is unimodal. Likely: a homolog of the VWFA in the auditory stream, the auditory word form area, located in the left anterior superior temporal sulcus. The location of these regions varies considerably between individual brains.

Left hemisphere: precise representations of symbols, including phonological components. Right hemisphere? It sees clusters of concepts.

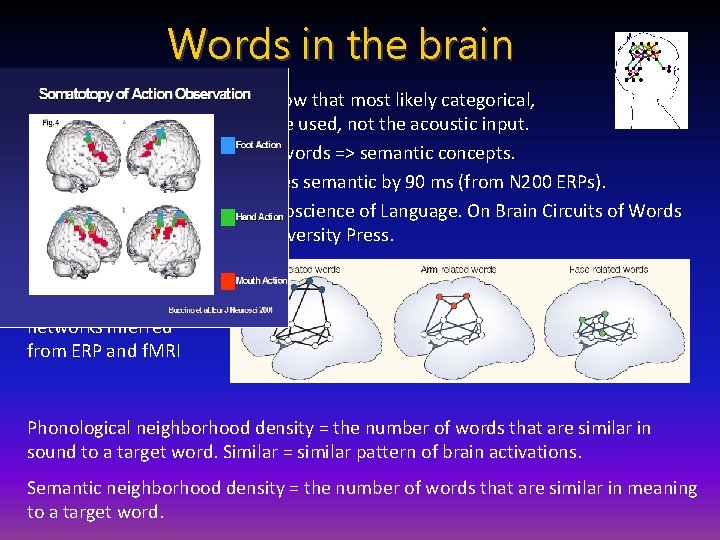

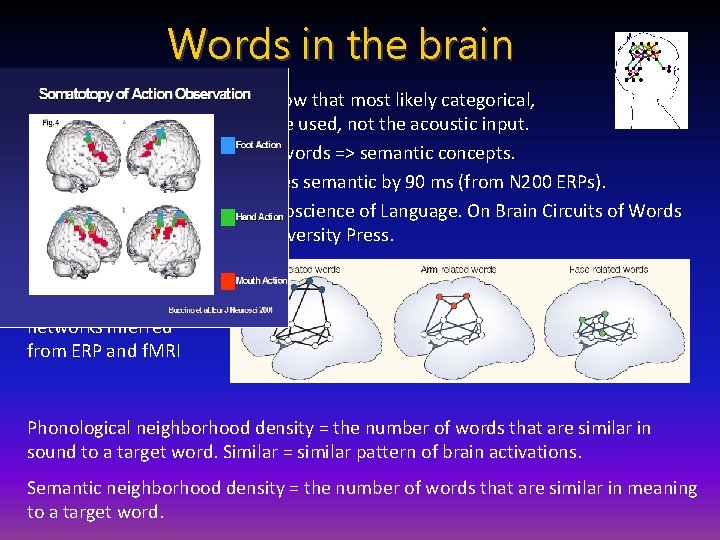

Words in the brain

Psycholinguistic experiments show that categorical, phonological representations are most likely used, not the acoustic input. Acoustic signal => phonemes => words => semantic concepts. Phonological processing precedes semantic processing by 90 ms (from N200 ERPs).

F. Pulvermüller (2003), The Neuroscience of Language: On Brain Circuits of Words and Serial Order. Cambridge University Press. Action-perception networks are inferred from ERP and fMRI.

Phonological neighborhood density = the number of words that are similar in sound to a target word. Similar = similar pattern of brain activations.

Semantic neighborhood density = the number of words that are similar in meaning to a target word.
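Neighborhood density can be made concrete with a small sketch. The slide defines similarity through brain activation patterns; the code below swaps in a common psycholinguistic stand-in, assumed here for illustration only: two words are neighbors when they differ by one phoneme substitution, insertion or deletion. The toy lexicon and its transcriptions are hypothetical.

```python
def one_edit_apart(a, b):
    """True if b differs from a by one substitution, insertion, or deletion."""
    if a == b or abs(len(a) - len(b)) > 1:
        return False
    if len(a) > len(b):
        a, b = b, a  # make a the shorter (or equal-length) sequence
    i = 0
    while i < len(a) and a[i] == b[i]:
        i += 1  # skip the common prefix
    if len(a) == len(b):
        return a[i + 1:] == b[i + 1:]  # exactly one substituted phoneme
    return a[i:] == b[i + 1:]  # exactly one extra phoneme in b


def neighborhood_density(target, lexicon):
    """Number of lexicon entries that are one phoneme edit away from target."""
    return sum(one_edit_apart(target, word) for word in lexicon)


# Toy lexicon: tuples of phoneme symbols (hypothetical transcriptions).
LEXICON = [("k", "ae", "t"), ("b", "ae", "t"), ("k", "ae", "p"),
           ("ae", "t"), ("k", "ae", "t", "s"), ("d", "o", "g")]
print(neighborhood_density(("k", "ae", "t"), LEXICON))
```

The same counting scheme would give a semantic neighborhood density if the edit-distance test were replaced by a similarity threshold on concept vectors.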

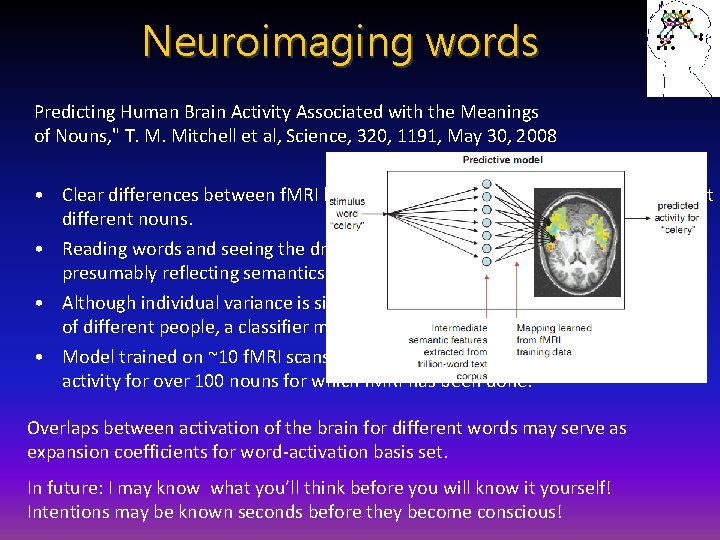

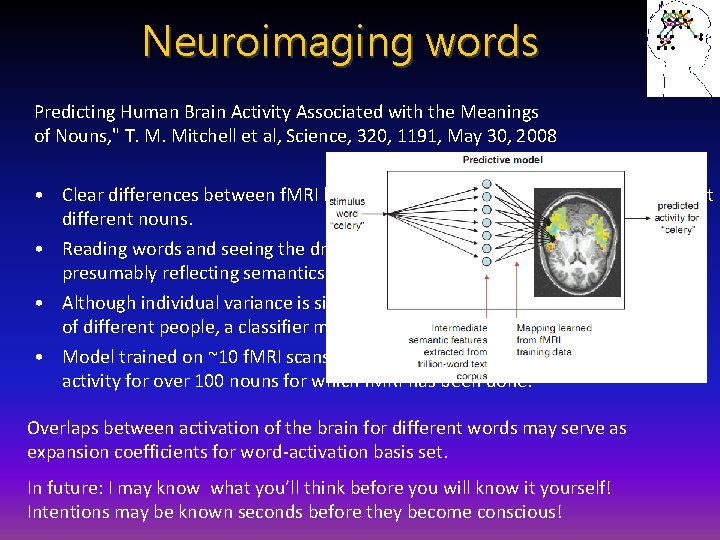

Neuroimaging words

“Predicting Human Brain Activity Associated with the Meanings of Nouns,” T. M. Mitchell et al., Science 320, 1191, May 30, 2008.

- Clear differences in fMRI brain activity when people read and think about different nouns.
- Reading a word and seeing the corresponding drawing invoke similar brain activations, presumably reflecting the semantics of concepts.
- Although individual variance is significant, similar activations are found in the brains of different people, so a classifier may still be trained on pooled data.
- A model trained on ~10 fMRI scans + a very large corpus (10^12 words) predicts brain activity for over 100 nouns for which fMRI has been done.

Overlaps between brain activations for different words may serve as expansion coefficients for a word-activation basis set.

In future: I may know what you’ll think before you know it yourself! Intentions may be known seconds before they become conscious!

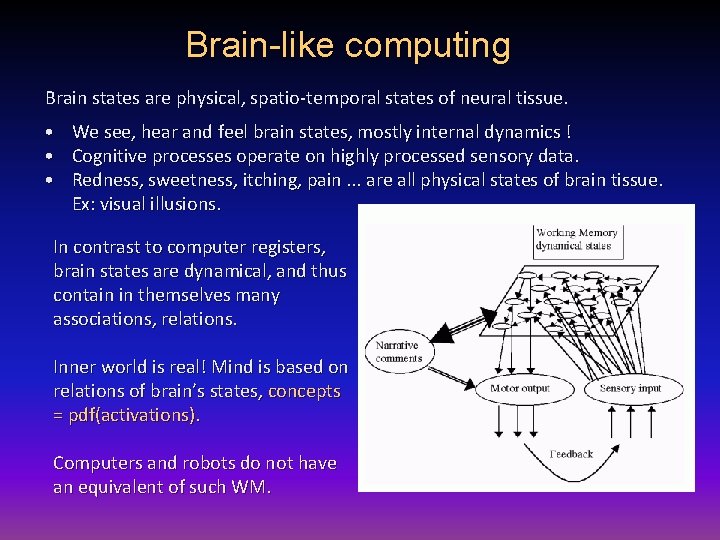

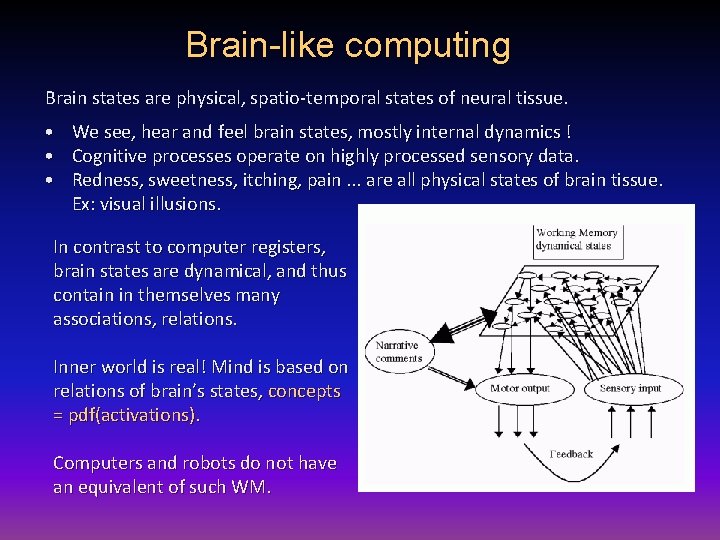

Brain-like computing

Brain states are physical, spatio-temporal states of neural tissue.

- We see, hear and feel brain states, mostly internal dynamics!
- Cognitive processes operate on highly processed sensory data.
- Redness, sweetness, itching, pain ... are all physical states of brain tissue. Ex: visual illusions.

In contrast to computer registers, brain states are dynamical, and thus contain in themselves many associations and relations. The inner world is real! Mind is based on relations between the brain’s states, concepts = pdf(activations). Computers and robots do not have an equivalent of such WM.

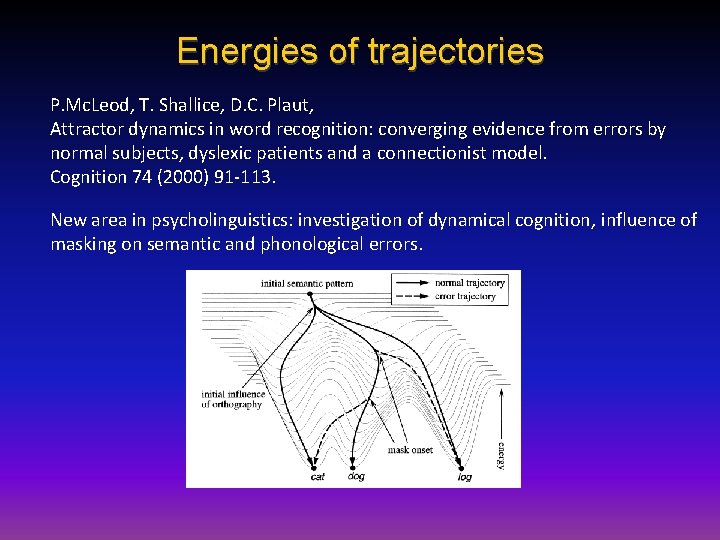

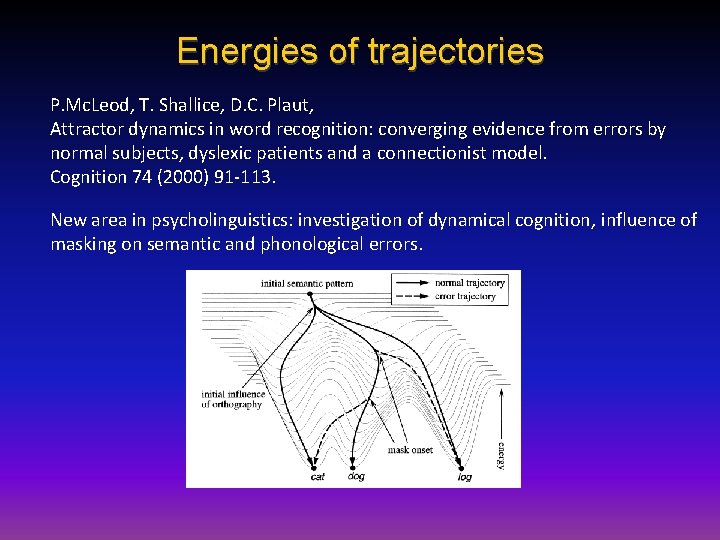

Energies of trajectories

P. McLeod, T. Shallice, D. C. Plaut, Attractor dynamics in word recognition: converging evidence from errors by normal subjects, dyslexic patients and a connectionist model. Cognition 74 (2000) 91-113.

New area in psycholinguistics: investigation of dynamical cognition, influence of masking on semantic and phonological errors.

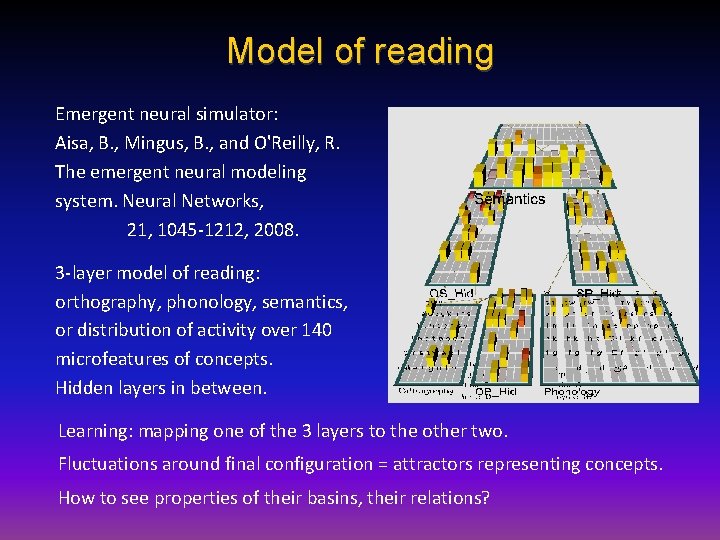

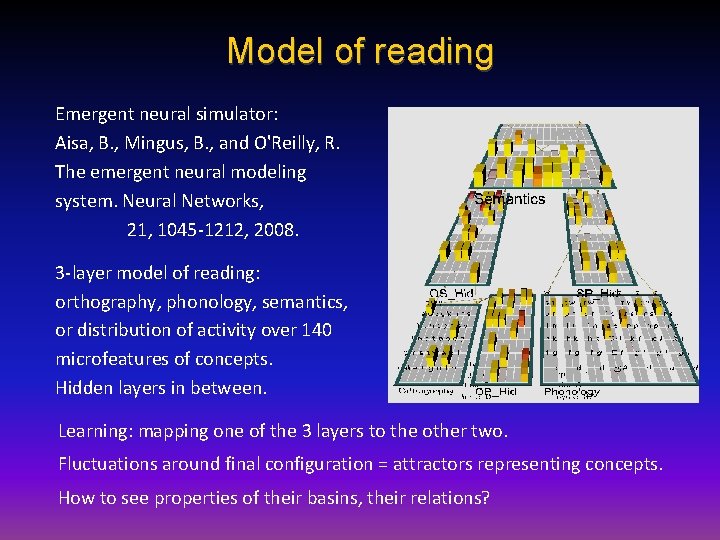

Model of reading

Emergent neural simulator: Aisa, B., Mingus, B., and O'Reilly, R. The emergent neural modeling system. Neural Networks, 21, 1045-1212, 2008.

3-layer model of reading: orthography, phonology, semantics, or distribution of activity over 140 microfeatures of concepts. Hidden layers in between.

Learning: mapping one of the 3 layers to the other two. Fluctuations around the final configuration = attractors representing concepts. How to see the properties of their basins and their relations?

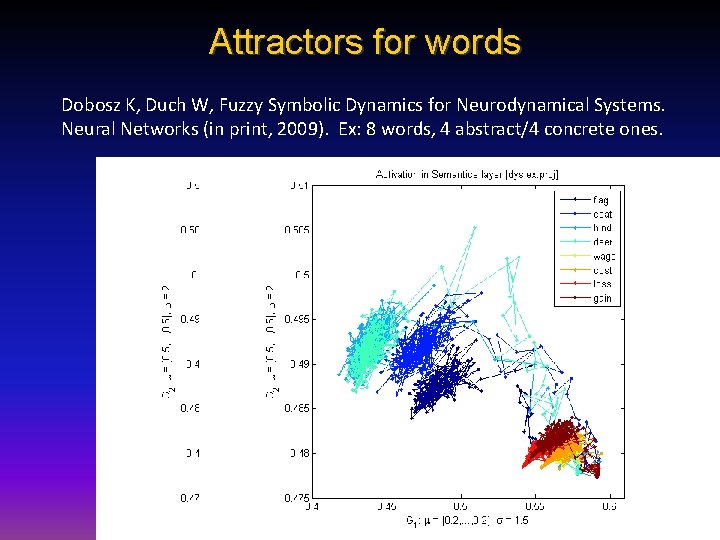

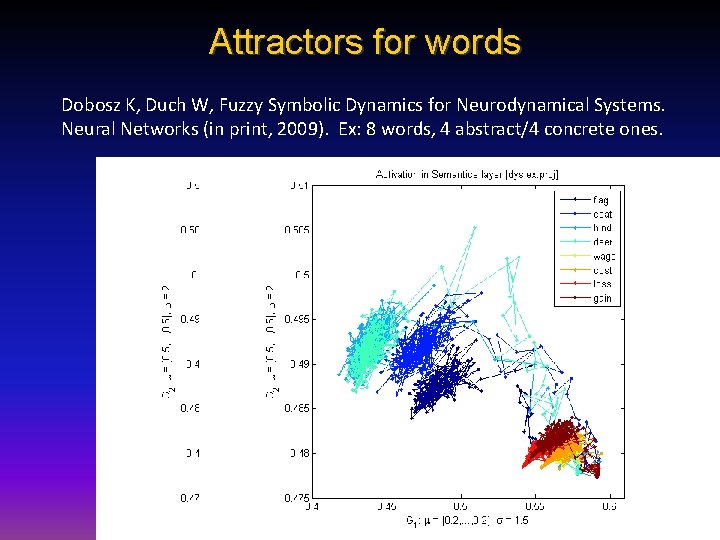

Attractors for words

Dobosz K., Duch W., Fuzzy Symbolic Dynamics for Neurodynamical Systems. Neural Networks (in print, 2009).

Ex: 8 words, 4 abstract and 4 concrete ones.

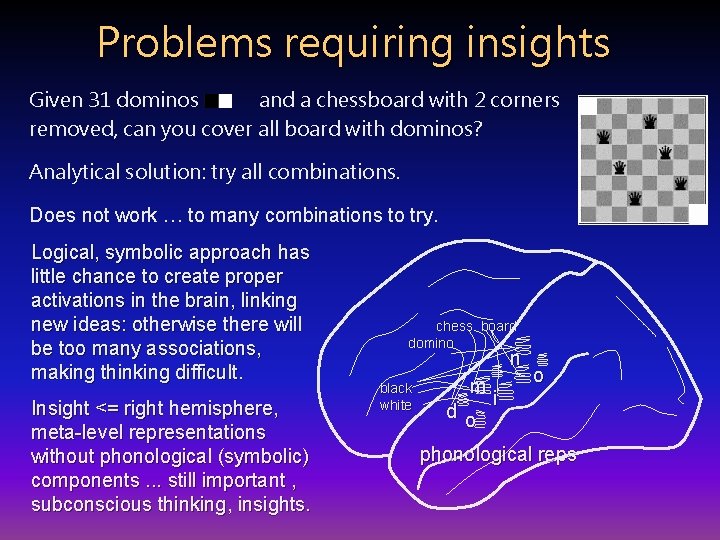

Problems requiring insights

Given 31 dominoes and a chessboard with 2 opposite corners removed, can you cover the whole board with dominoes?

Analytical solution: try all combinations. It does not work … there are too many combinations to try.

A logical, symbolic approach has little chance of creating proper activations in the brain that link new ideas: otherwise there would be too many associations, making thinking difficult.

Insight <= right hemisphere, meta-level representations without phonological (symbolic) components ... still important: subconscious thinking, insights.

[Figure: chessboard and dominoes with black and white squares; phonological representations of the words.]

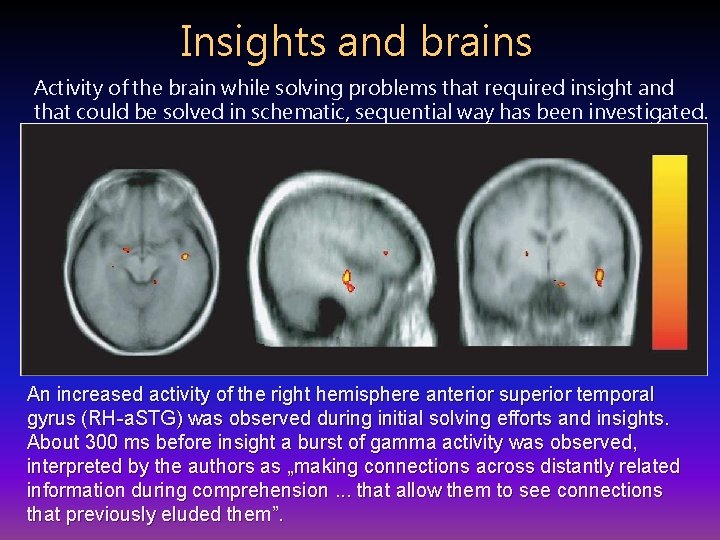

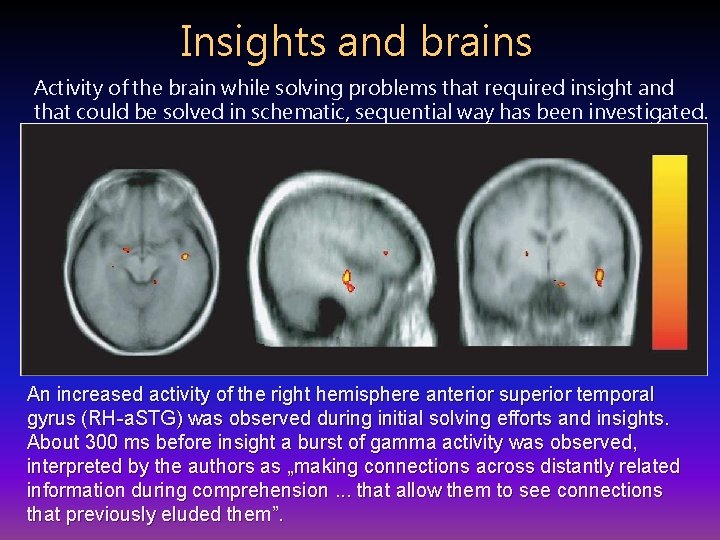

Insights and brains

Brain activity has been investigated during the solving of problems that require insight and of problems that can be solved in a schematic, sequential way.

E. M. Bowden, M. Jung-Beeman, J. Fleck, J. Kounios, “New approaches to demystifying insight”. Trends in Cognitive Sciences 2005.

After solving a problem presented verbally, subjects themselves indicated whether they had an insight or not. Increased activity of the right hemisphere anterior superior temporal gyrus (RH-aSTG) was observed during initial solving efforts and during insights. About 300 ms before insight a burst of gamma activity was observed, interpreted by the authors as “making connections across distantly related information during comprehension ... that allow them to see connections that previously eluded them”.

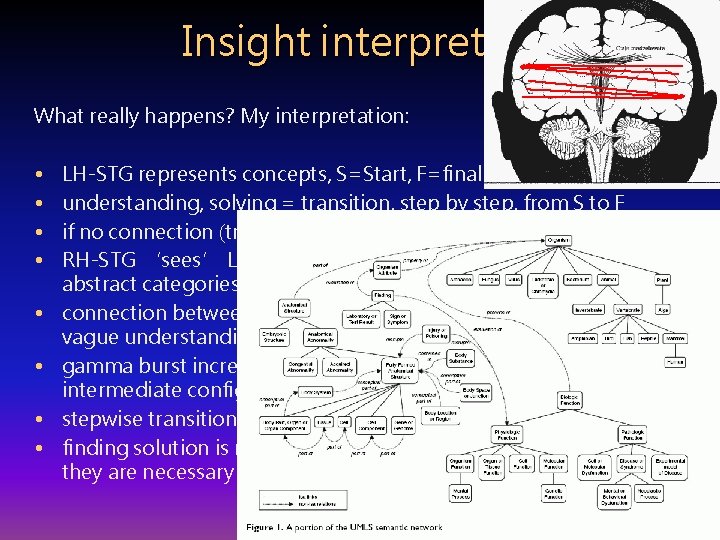

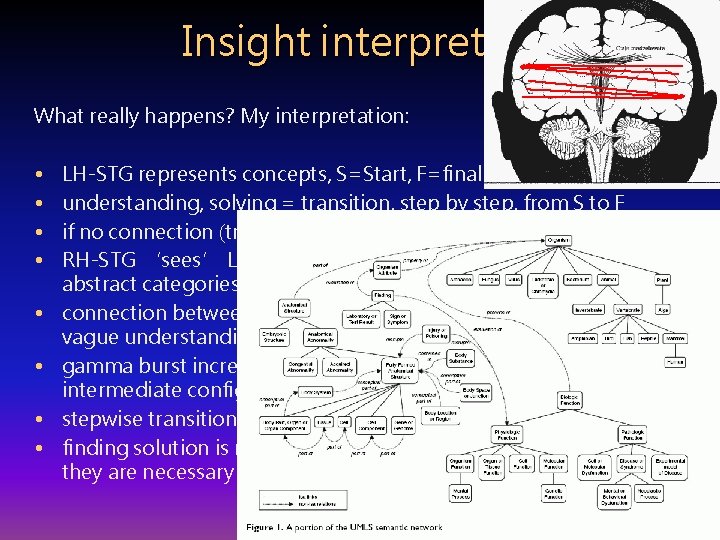

Insight interpreted

What really happens? My interpretation:

- LH-STG represents concepts, S = start, F = final;
- understanding, solving = transition, step by step, from S to F;
- if no connection (transition) is found this leads to an impasse;
- RH-STG ‘sees’ LH activity on a meta-level, clustering concepts into abstract categories (cosets, or constrained sets);
- a connection between S and F is found in RH, leading to a feeling of vague understanding;
- the gamma burst increases the activity of LH representations for S, F and intermediate configurations; a feeling of imminent solution arises;
- a stepwise transition between S and F is found;
- finding the solution is rewarded by emotions during the Aha! experience; they are necessary to increase plasticity and create permanent links.

Intuition

Intuition is a concept that is difficult to grasp, but it is commonly believed to play an important role in business and other decision making: “knowing without being able to explain how we know”.

Sinclair and Ashkanasy (2005): intuition is a “non-sequential information-processing mode, which comprises both cognitive and affective elements and results in direct knowing without any use of conscious reasoning”.

The first tests of intuition were introduced by Westcott (1961); now 3 tests are used: the Rational-Experiential Inventory (REI), the Myers-Briggs Type Indicator (MBTI) and the Accumulated Clues Task (ACT). Different intuition measures are not correlated, which shows how hard it is to construct a theoretical concept of intuition. Significant correlations were found between the REI intuition scale and some measures of creativity.

Intuition in chess has been studied in detail (Newell, Simon 1975). Intuition may result from implicit learning of complex similarity-based evaluations that are difficult to express in a symbolic (logical) way.

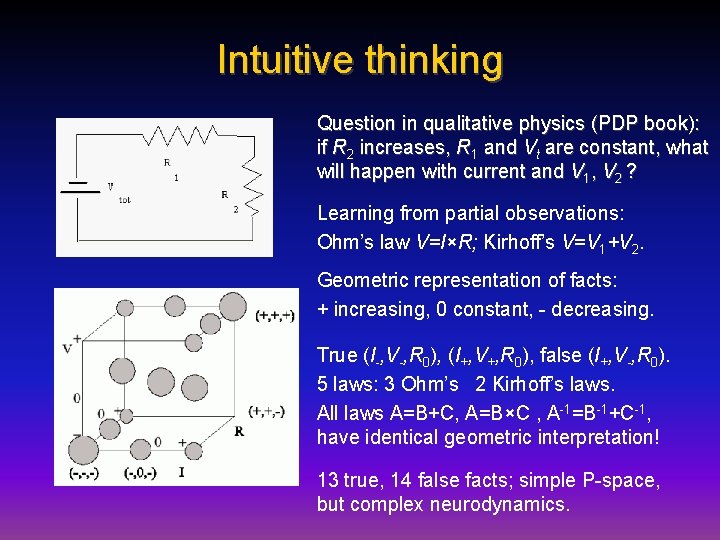

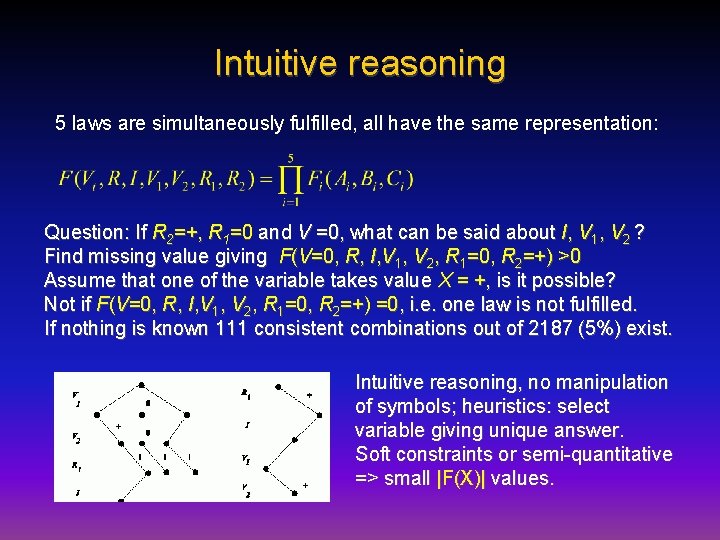

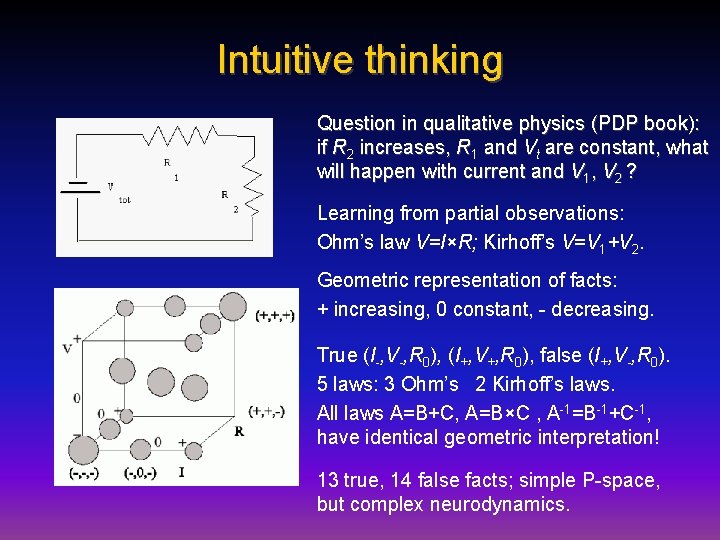

Intuitive thinking

Question in qualitative physics (PDP book): if $R_2$ increases while $R_1$ and $V_t$ are constant, what will happen with the current and with $V_1$, $V_2$?

Learning from partial observations: Ohm’s law $V = I \times R$; Kirchhoff’s law $V = V_1 + V_2$.

Geometric representation of facts: + increasing, 0 constant, - decreasing. True: (I-, V-, R0), (I+, V+, R0); false: (I+, V-, R0).

5 laws: 3 Ohm’s and 2 Kirchhoff’s laws. All laws of the form $A = B + C$, $A = B \times C$, $A^{-1} = B^{-1} + C^{-1}$ have an identical geometric interpretation! 13 true, 14 false facts; simple P-space, but complex neurodynamics.

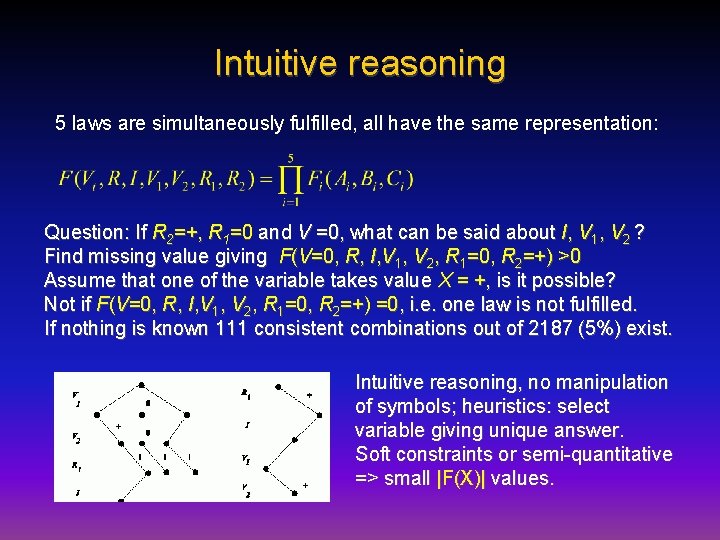

Intuitive reasoning

5 laws are simultaneously fulfilled, and all have the same representation.

Question: if $R_2 = +$, $R_1 = 0$ and $V = 0$, what can be said about $I$, $V_1$, $V_2$?

Find the missing values giving $F(V=0, R, I, V_1, V_2, R_1=0, R_2=+) > 0$.

Assume that one of the variables takes the value X = +; is it possible? Not if $F(V=0, R, I, V_1, V_2, R_1=0, R_2=+) = 0$, i.e. if one law is not fulfilled.

If nothing is known, 111 consistent combinations out of 2187 (5%) exist.

Intuitive reasoning, no manipulation of symbols; heuristics: select the variable giving a unique answer. Soft constraints or semi-quantitative reasoning => small |F(X)| values.
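The counts on these two slides can be checked by brute force. The sketch below is one possible reading, not the model from the PDP book: it assumes the five laws are V = I×R, V1 = I×R1, V2 = I×R2, V = V1+V2 and R = R1+R2, encodes -, 0, + as -1, 0, 1, and treats F as a hard constraint (every law either holds or does not). The function and variable names are illustrative.

```python
from itertools import product

SIGNS = (-1, 0, 1)  # decreasing, constant, increasing
NAMES = ("V", "R", "I", "V1", "V2", "R1", "R2")


def consistent(a, b, c):
    """Qualitative check of A = B + C (or A = B x C) for positive quantities."""
    if b * c < 0:           # opposite changes: A may go either way
        return True
    return a == (b or c)    # otherwise A follows the non-constant term


def laws_hold(s):
    """All five assumed laws: V=I*R, V1=I*R1, V2=I*R2, V=V1+V2, R=R1+R2."""
    return (consistent(s["V"], s["I"], s["R"])
            and consistent(s["V1"], s["I"], s["R1"])
            and consistent(s["V2"], s["I"], s["R2"])
            and consistent(s["V"], s["V1"], s["V2"])
            and consistent(s["R"], s["R1"], s["R2"]))


def solutions(**known):
    """All sign assignments that satisfy the laws and the known values."""
    states = (dict(zip(NAMES, values)) for values in product(SIGNS, repeat=7))
    return [s for s in states
            if laws_hold(s) and all(s[k] == v for k, v in known.items())]


true_facts = sum(consistent(*t) for t in product(SIGNS, repeat=3))
print(true_facts, 27 - true_facts)      # 13 14: true and false facts per law
print(len(solutions()), 3 ** 7)         # 111 2187: consistent states
print(solutions(V=0, R1=0, R2=1))       # the question from the slide
```

Under these assumptions the question has a single consistent answer: R increases, I decreases, V1 decreases and V2 increases.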

Types of memory

The neurocognitive approach needs at least 4 types of memory: three long-term memories (LTM: recognition, semantic, episodic) + working memory.

Input (text, speech) is pre-processed using a recognition memory model to correct spelling errors, expand acronyms etc. For dialogue/text understanding, episodic memory models are needed. Working memory: an active subset of semantic/episodic memory. All 3 LTMs are mutually coupled, providing context for recognition.

Semantic memory is a permanent storage of conceptual data.

- “Permanent”: data is collected throughout the whole lifetime of the system; old information is overridden/corrected by newer input.
- “Conceptual”: contains semantic relations between words and uses them to create concept definitions.

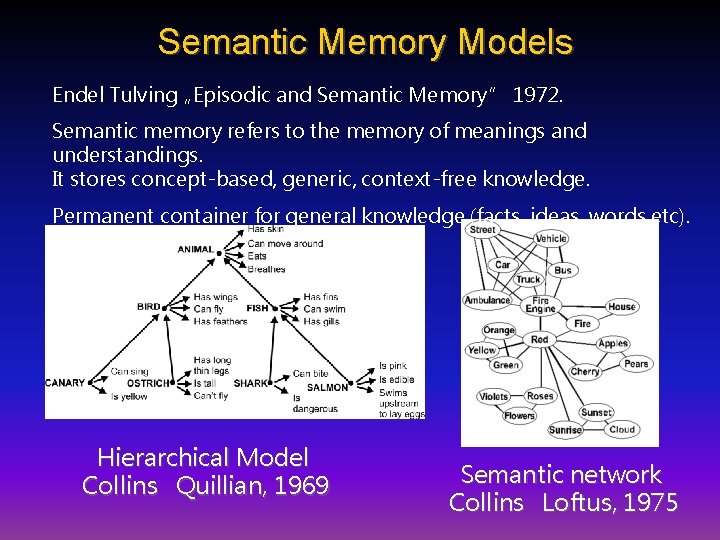

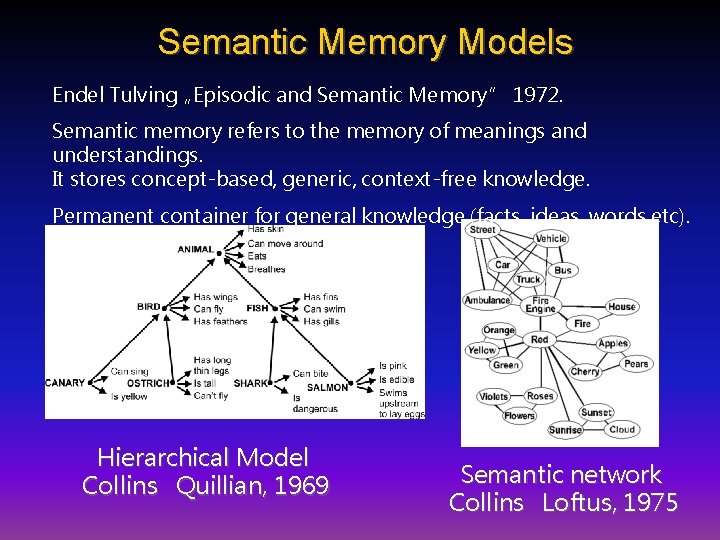

Semantic Memory Models

Endel Tulving, “Episodic and Semantic Memory”, 1972. Semantic memory refers to the memory of meanings and understandings. It stores concept-based, generic, context-free knowledge. It is a permanent container for general knowledge (facts, ideas, words etc).

Hierarchical model: Collins and Quillian, 1969. Semantic network: Collins and Loftus, 1975.

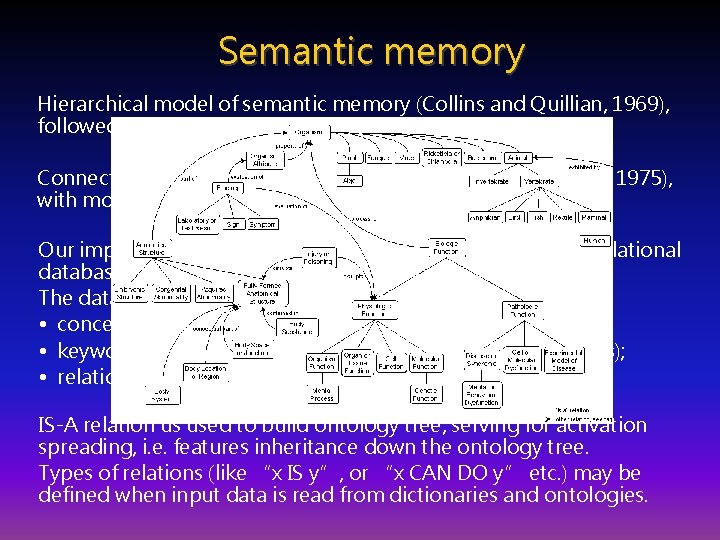

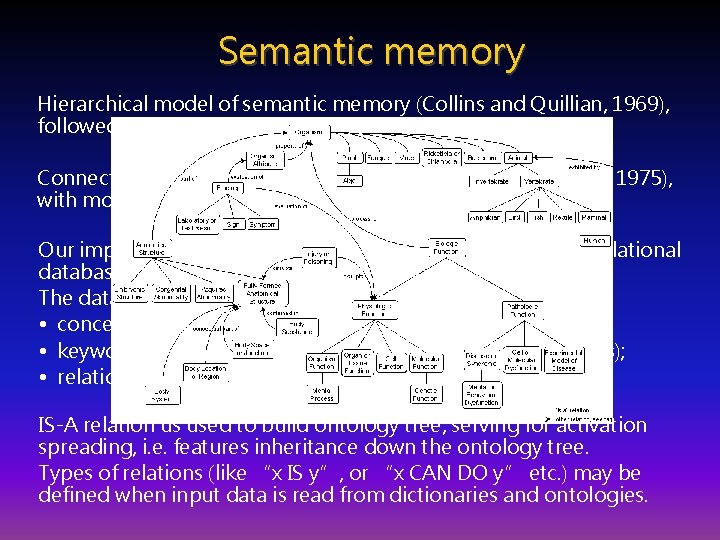

Semantic memory

Hierarchical model of semantic memory (Collins and Quillian, 1969), followed by most ontologies. Connectionist spreading activation model (Collins and Loftus, 1975), with mostly lateral connections.

Our implementation is based on the connectionist model and uses a relational database and an object access layer API. The database stores three types of data:

- concepts, or objects being described;
- keywords (features of concepts extracted from data sources);
- relations between them.

The IS-A relation is used to build the ontology tree, which serves for activation spreading, i.e. inheritance of features down the ontology tree. Types of relations (like “x IS y”, or “x CAN DO y” etc.) may be defined when input data is read from dictionaries and ontologies.
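A minimal in-memory sketch of this storage scheme follows. It is not the relational database or the API of the actual implementation; the class name, the relation labels and the example entries are assumptions made for illustration. It stores concept-relation-keyword triples and lets a concept inherit features from more general concepts along IS_A links.

```python
from collections import defaultdict


class SemanticMemory:
    """Toy in-memory store of (concept, relation, keyword) triples."""

    def __init__(self):
        self.relations = defaultdict(set)   # concept -> {(relation, keyword)}
        self.parents = defaultdict(set)     # concept -> more general concepts

    def add(self, concept, relation, keyword):
        if relation == "IS_A":
            self.parents[concept].add(keyword)
        else:
            self.relations[concept].add((relation, keyword))

    def features(self, concept):
        """Own features plus everything inherited along IS_A links."""
        found, stack, seen = set(), [concept], set()
        while stack:
            node = stack.pop()
            if node in seen:
                continue                    # guard against IS_A cycles
            seen.add(node)
            found |= self.relations[node]
            stack.extend(self.parents[node])
        return found


sm = SemanticMemory()
sm.add("cobra", "IS_A", "snake")
sm.add("snake", "IS_A", "reptile")
sm.add("reptile", "HAS", "scales")
sm.add("snake", "CAN_DO", "bite")
sm.add("cobra", "HAS", "hood")
print(sorted(sm.features("cobra")))
```

A relational version would keep the same three kinds of data in separate tables and resolve inheritance with the same upward walk.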

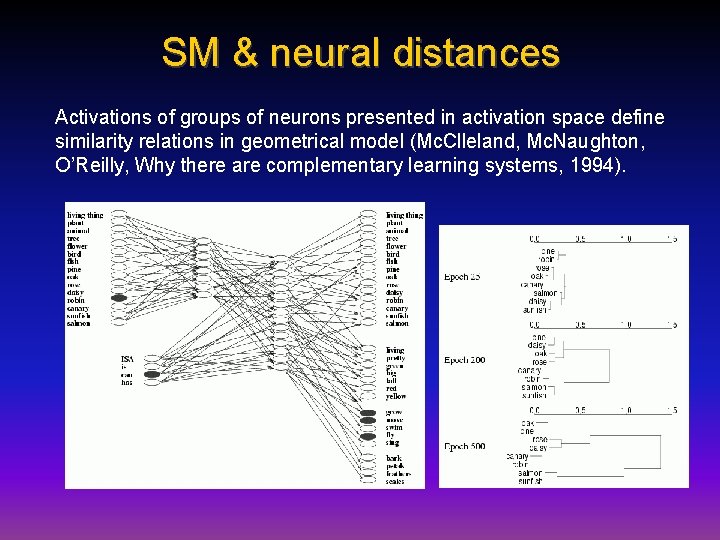

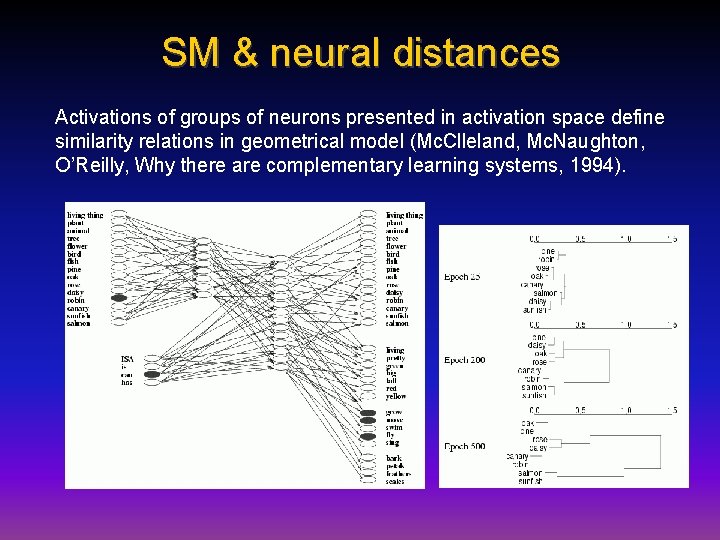

SM & neural distances

Activations of groups of neurons presented in activation space define similarity relations in a geometrical model (McClelland, McNaughton, O’Reilly, Why there are complementary learning systems, 1994).

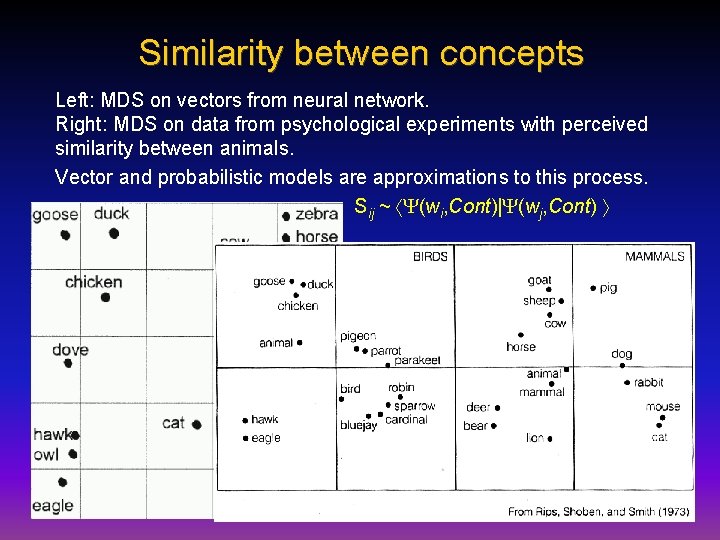

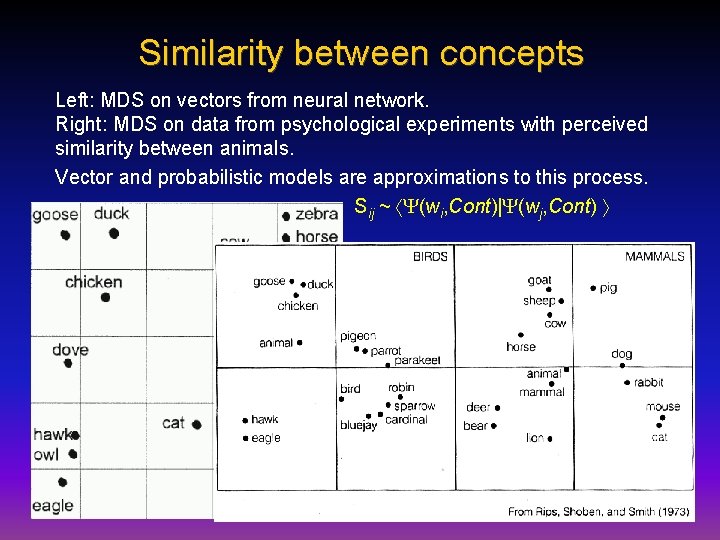

Similarity between concepts

Left: MDS on vectors from a neural network. Right: MDS on data from psychological experiments on perceived similarity between animals.

Vector and probabilistic models are approximations to this process.

$S_{ij} \sim \langle \Psi(w_i, Cont) \mid \Psi(w_j, Cont) \rangle$

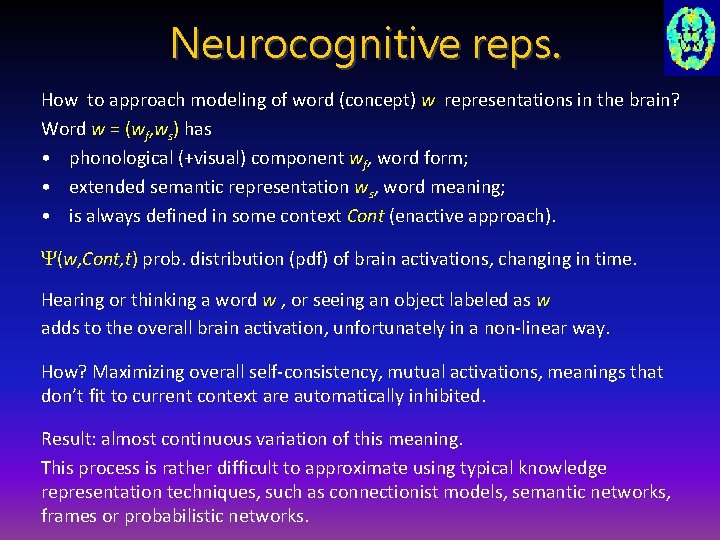

Neurocognitive reps.

How should the representation of a word (concept) w in the brain be modeled? A word $w = (w_f, w_s)$ has:

- a phonological (+ visual) component $w_f$, the word form;
- an extended semantic representation $w_s$, the word meaning;
- is always defined in some context Cont (enactive approach).

$\Psi(w, Cont, t)$ is a probability distribution (pdf) of brain activations, changing in time. Hearing or thinking a word w, or seeing an object labeled as w, adds to the overall brain activation, unfortunately in a non-linear way.

How? By maximizing overall self-consistency and mutual activations; meanings that do not fit the current context are automatically inhibited. Result: almost continuous variation of this meaning.

This process is rather difficult to approximate using typical knowledge representation techniques, such as connectionist models, semantic networks, frames or probabilistic networks.

Approximate reps.

States $\Psi(w, Cont)$ => lexicographical meanings:

- clusterize $\Psi(w, Cont)$ for all contexts;
- define prototypes $\Psi(w_k, Cont)$ for different meanings $w_k$.

A1: use spreading activation in semantic networks to define $\Psi$.
A2: take a snapshot of activation in discrete space (vector approach).

The meaning of a word is a result of priming, spreading activation to speech, motor and associative brain areas, creating affordances.

$\Psi(w, Cont)$ ~ quasi-stationary wave, with phonological/visual core activations $w_f$ and a variable extended representation $w_s$ selected by Cont.

… $\Psi(w, Cont)$ state into components, because the semantic representation …

E. Schrödinger (1935): best possible knowledge of a whole does not include the best possible knowledge of its parts! Not only in the quantum case.

The left semantic network LH contains $w_f$ coupled with the RH. What is the role of the right semantic network RH?

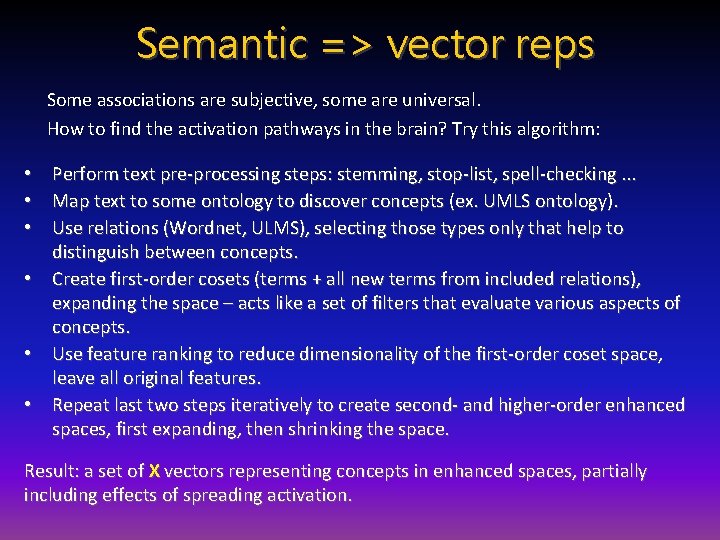

Semantic => vector reps

Some associations are subjective, some are universal. How to find the activation pathways in the brain? Try this algorithm:

- Perform text pre-processing steps: stemming, stop-list, spell-checking ...
- Map text to some ontology to discover concepts (ex. UMLS ontology).
- Use relations (WordNet, UMLS), selecting only those types that help to distinguish between concepts.
- Create first-order cosets (terms + all new terms from included relations), expanding the space; this acts like a set of filters that evaluate various aspects of concepts.
- Use feature ranking to reduce the dimensionality of the first-order coset space, leaving all original features.
- Repeat the last two steps iteratively to create second- and higher-order enhanced spaces, first expanding, then shrinking the space.

Result: a set of X vectors representing concepts in enhanced spaces, partially including the effects of spreading activation.
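The expand-then-shrink loop in the last three steps can be sketched as follows. This is an illustration under stated assumptions, not the method used in the talk: documents are plain sets of concept names, `relations` is a hypothetical dictionary standing in for WordNet/UMLS relations, and feature ranking is replaced by a simple score (the spread of per-class document frequencies).

```python
def expand(doc_terms, relations):
    """First-order coset: the terms plus all terms they are related to."""
    extra = set()
    for term in doc_terms:
        extra |= relations.get(term, set())
    return set(doc_terms) | extra


def rank_new_features(docs, labels, original, keep):
    """Keep all original features and up to `keep` informative new ones.

    Score (an assumed stand-in for feature ranking): difference between
    the largest and smallest per-class document frequency of a feature.
    """
    classes = sorted(set(labels))
    new = sorted(set().union(*docs) - original)

    def score(feature):
        freqs = []
        for c in classes:
            members = [d for d, l in zip(docs, labels) if l == c]
            freqs.append(sum(feature in d for d in members) / len(members))
        return max(freqs) - min(freqs)

    ranked = sorted(new, key=score, reverse=True)[:keep]
    return original | {f for f in ranked if score(f) > 0}


def enhance(docs, labels, relations, steps=2, keep=2):
    """Alternate expansion and shrinking of the feature space."""
    for _ in range(steps):
        original = set().union(*docs)
        docs = [expand(d, relations) for d in docs]
        selected = rank_new_features(docs, labels, original, keep)
        docs = [d & selected for d in docs]
    return docs


relations = {"cough": {"respiratory"}, "ear pain": {"ear"}, "fever": {"symptom"}}
docs = [{"cough", "fever"}, {"ear pain", "fever"}]
print(enhance(docs, ["pneumonia", "otitis"], relations))
```

In this toy run the related term shared by both classes ("symptom") is discarded, while the class-specific ones are kept alongside the original features.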

Creating SM

The API serves as a data access layer providing logical operations between raw data and higher application layers. Data stored in the database is mapped into application objects, and the API allows for retrieving specific concepts/keywords.

Two major types of data sources for semantic memory:

1. machine-readable structured dictionaries directly convertible into semantic memory data structures;
2. blocks of text, definitions of concepts from dictionaries/encyclopedias.

3 machine-readable data sources are used:

- The Suggested Upper Merged Ontology (SUMO) and the MId-Level Ontology (MILO), over 20,000 terms and 60,000 axioms.
- WordNet lexicon, more than 200,000 word-sense pairs.
- ConceptNet, a concise knowledge base with 200,000 assertions.

Creating SM – free text

WordNet hypernymic (a kind of …) IS-A relation + hyponym and meronym relations between synsets (converted into concept-concept relations), combined with ConceptNet relations such as CapableOf, PropertyOf, PartOf, MadeOf ... Relations were added only if present in both WordNet and ConceptNet.

Free-text data: Merriam-Webster, WordNet and Tiscali. Whole word definitions are stored in SM, linked to concepts.

A set of the most characteristic words is extracted from the definitions of a given concept. For each concept definition, one set of words per source dictionary is used and replaced with synset words; the subset common to all 3 is mapped back to synsets. These are most likely related to the initial concept, and were stored as a separate relation type.

Articles and prepositions were removed using a manually created stop-word list. Phrases were extracted using ApplePieParser; concept-phrase relations were compared with concept-keyword relations, and only phrases that matched keywords were used.

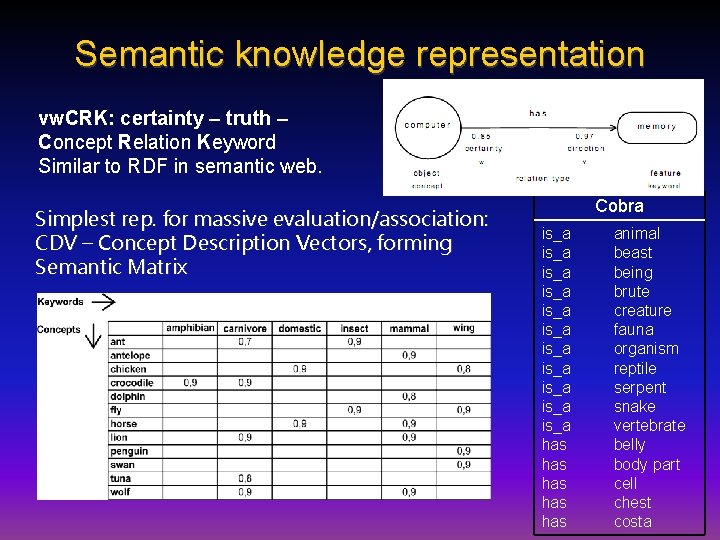

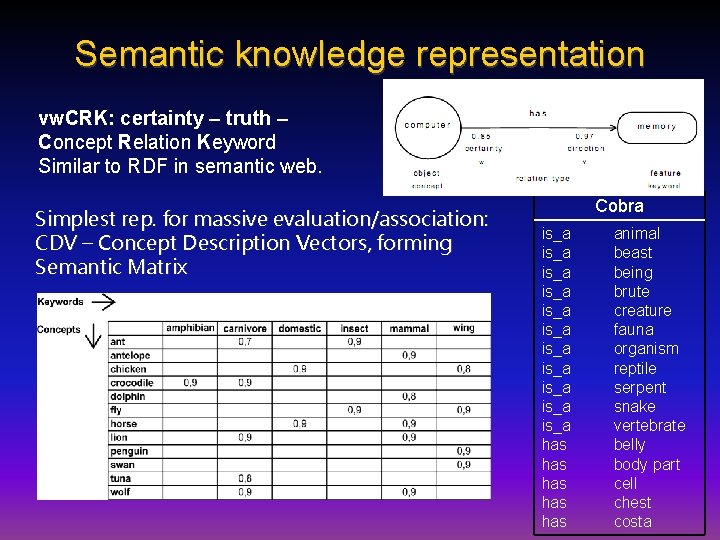

Semantic knowledge representation

vwCRK: certainty (v), truth (w), Concept, Relation, Keyword. Similar to RDF in the semantic web.

Simplest rep. for massive evaluation/association: CDV, Concept Description Vectors, forming the Semantic Matrix.

Example for the concept “cobra”:

- is_a: animal, beast, being, brute, creature, fauna, organism, reptile, serpent, snake, vertebrate;
- has: belly, body part, cell, chest, costa.

Concept Description Vectors

Drastic simplification: for some applications SM is used more efficiently through a vector-based knowledge representation. Merging all types of relations gives the most general one, “x IS RELATED TO y”, which defines a vector (semantic) space.

{Concept, relations} => Concept Description Vector, CDV. A binary vector that shows which properties are related to, or make sense for, a given concept (not the same as a context vector). Semantic memory => CDV matrix, very sparse, allowing easy storage of large amounts of semantic data.

Search engines: {keywords} => concept descriptions (Web pages). CDVs enable efficient implementation of reversed queries: find a unique subset of properties for a given concept or a class of concepts = a concept higher in the ontology. What are the unique features of a sparrow? Proteoglycan? Neutrino?
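A reversed query over CDVs might look like the sketch below. Sparse binary vectors are stored as keyword sets; the function names, the greedy selection strategy and the toy data are assumptions for illustration, not the implementation described in the talk.

```python
def build_cdv(concept_keywords):
    """Binary Concept Description Vectors stored sparsely as keyword sets."""
    return {c: frozenset(k) for c, k in concept_keywords.items()}


def unique_features(cdv, concept):
    """Keywords that the given concept has and no other concept has."""
    others = set()
    for name, keywords in cdv.items():
        if name != concept:
            others |= keywords
    return set(cdv[concept]) - others


def identifying_subset(cdv, concept):
    """Greedily pick keywords until only the given concept matches them all."""
    candidates = {c for c in cdv if c != concept}
    chosen = []
    remaining = set(cdv[concept])
    while candidates and remaining:
        # pick the keyword shared with the fewest remaining candidates
        best = min(sorted(remaining),
                   key=lambda k: sum(k in cdv[c] for c in candidates))
        chosen.append(best)
        remaining.discard(best)
        candidates = {c for c in candidates if best in cdv[c]}
    return chosen if not candidates else None


cdv = build_cdv({
    "sparrow": {"bird", "wings", "small", "brown"},
    "eagle": {"bird", "wings", "large", "brown"},
    "bat": {"mammal", "wings", "small"},
})
print(unique_features(cdv, "bat"))
print(identifying_subset(cdv, "sparrow"))
```

The sparrow has no single unique keyword in this toy matrix, so the reversed query returns a combination of properties that no other concept shares. The greedy choice is not guaranteed to give the smallest such subset.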

Relations

- IS_A: specific features from more general objects. Features are inherited with w from superior relations; v is decreased by 10% and corrected during interaction with the user.
- Similar: defines objects which share features with each other; new knowledge is acquired from similar objects by swapping unknown features with given certainty factors.
- Excludes: exchange some unknown features, but reverse the sign of the w weights.
- Entail: analogous to logical implication; one feature automatically entails a few more features (connected via the entail relation).

An atom of knowledge contains the strength and the direction of relations between concepts and keywords, coming from 3 components:

- directly entered into the knowledge base;
- deduced using predefined relation types from stored information;
- obtained during the system's interaction with the human user.

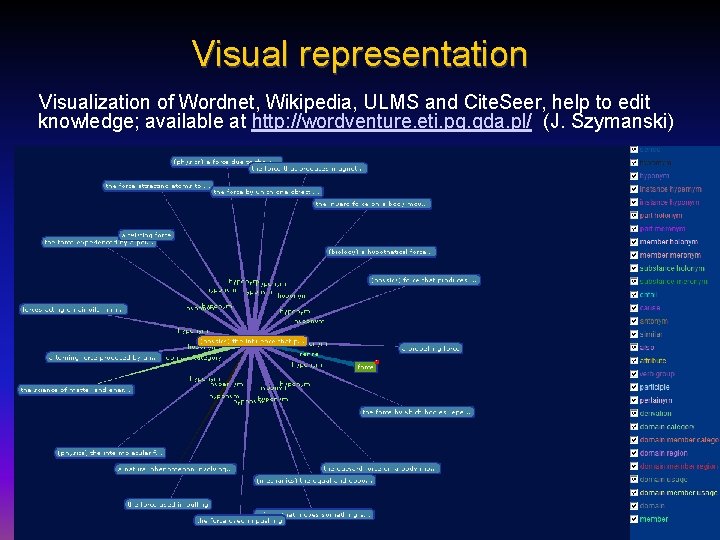

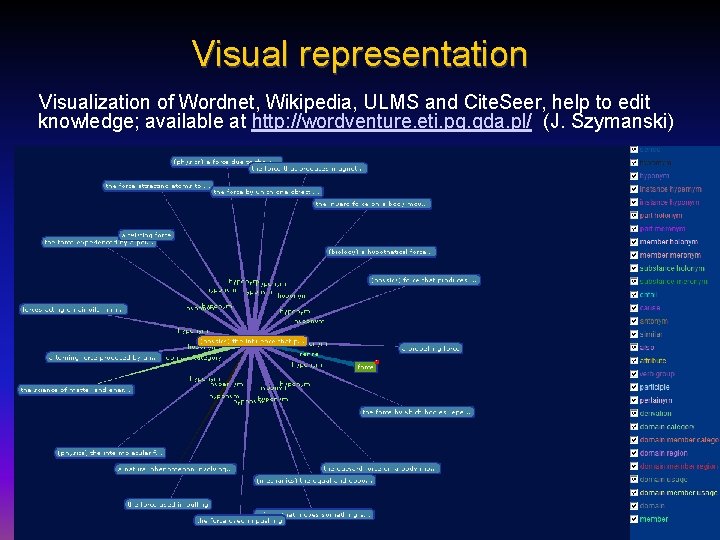

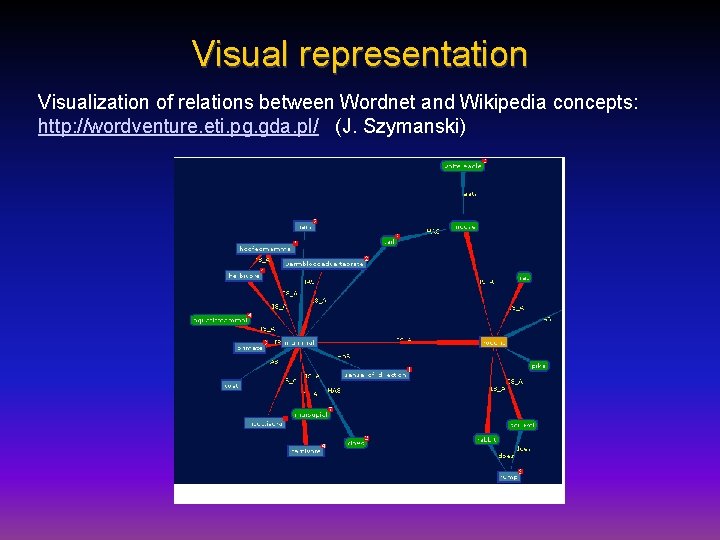

Visual representation

Visualization of WordNet, Wikipedia, UMLS and CiteSeer helps to edit knowledge; available at http://wordventure.eti.pg.gda.pl/ (J. Szymanski)

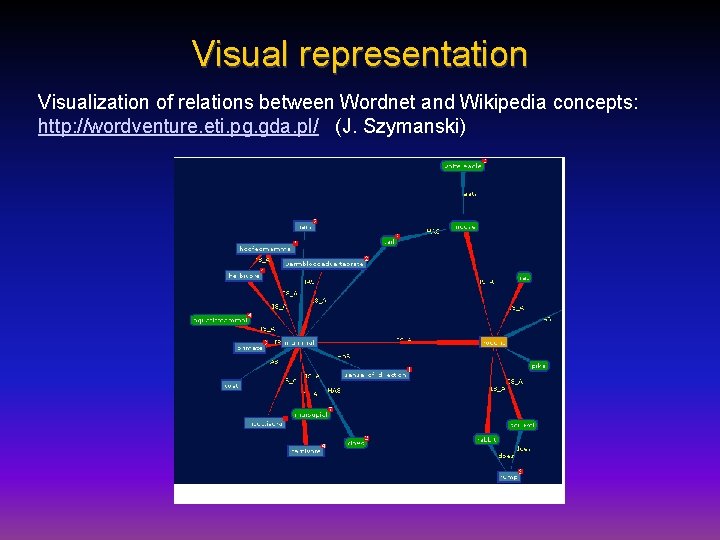

Visual representation

Visualization of relations between WordNet and Wikipedia concepts: http://wordventure.eti.pg.gda.pl/ (J. Szymanski)

Ambitious approaches…

- CYC, Douglas Lenat, started in 1984, developed by Cycorp: millions of assertions link over 150,000 concepts, using thousands of micro-theories. Cyc-NL is still a “potential application”; knowledge representation in frames is quite complicated and thus difficult to use. Open-CYC is free.
- Open Mind Common Sense Computing Project (MIT): WWW collaboration with over 14,000 authors, who contributed 710,000 sentences; used to generate ConceptNet, a very large semantic network.
- FrameNet (Berkeley), various large-scale ontologies.
- HowNet (Chinese Academy of Science).
- LifeNet collects info about everyday events, based on the Multi-Lingual ConceptNet semantic network with 300,000 nodes; part of the info will come from sensors.
- Open Mind Indoor Common Sense (Honda) extends it by asking questions.

The focus of these projects is to understand all relations in text/dialogue. NLP is hard and messy! Many people have lost hope that good NLP systems can be created without deep embodiment.

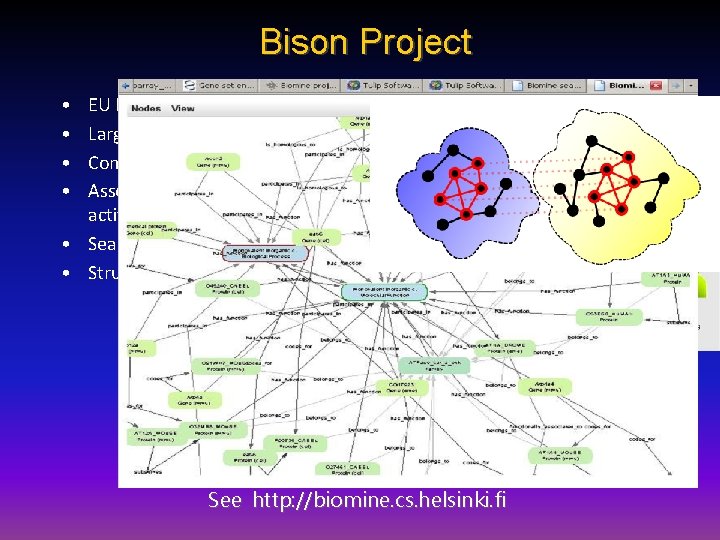

Bison Project

- EU FP7 Project.
- Large semantic networks. Concepts = activations. Associations = spreading activations.
- Searching for bisociations.
- Structural similarity.

See http://biomine.cs.helsinki.fi

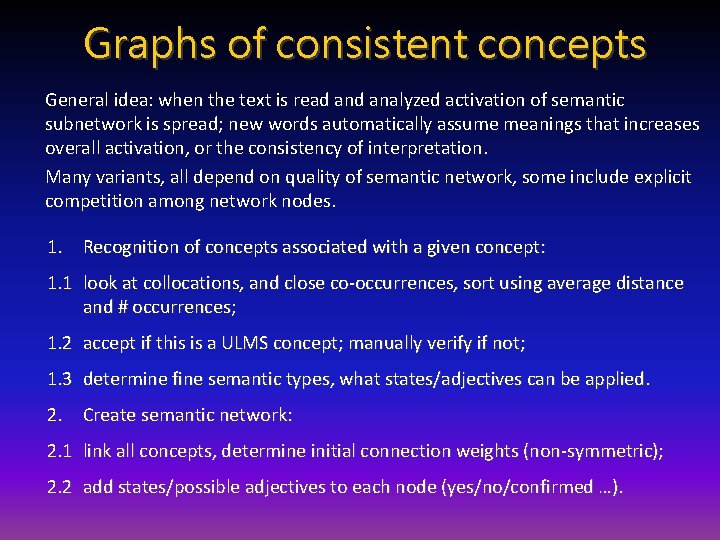

Graphs of consistent concepts

General idea: when the text is read and analyzed, activation spreads through a semantic subnetwork; new words automatically assume the meanings that increase the overall activation, or the consistency of interpretation. There are many variants; all depend on the quality of the semantic network, and some include explicit competition among network nodes.

1. Recognition of concepts associated with a given concept:
   - 1.1 look at collocations and close co-occurrences, sort using average distance and number of occurrences;
   - 1.2 accept if this is a UMLS concept; manually verify if not;
   - 1.3 determine fine semantic types, and which states/adjectives can be applied.
2. Create semantic network:
   - 2.1 link all concepts, determine initial connection weights (non-symmetric);
   - 2.2 add states/possible adjectives to each node (yes/no/confirmed …).

GCC analysis

After recognition of concepts and creation of the semantic network:

3. Analyze text, create an active subnetwork (episodic working memory) to make inferences, disambiguate, and interpret the text.
   - 3.1 Find the main unambiguous concepts, activate them and spread their activations within the semantic network; all linked concepts become partially active, depending on connection weights.
   - 3.2 Polysemous words and acronyms/abbreviations in expanded form add to the overall activation; the active subnetwork activates appropriate meanings more strongly than other meanings, and inhibition between competing interpretations decreases alternative meanings.
   - 3.3 Use grammatical parsing and hierarchical semantic type constraints (Optimality Theory) to infer the state of the concepts.
   - 3.4 Leave only nodes with activity above some threshold (activity decay).
4. Associate combinations of particular activations with billing codes etc.
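Steps 3.1, 3.2 and 3.4 can be illustrated with a small spreading-activation sketch. The graph, the weights, the `decay` and `threshold` parameters and the winner-takes-all choice between senses are all assumptions made for this example; the actual GCC analysis is more elaborate.

```python
def spread(weights, active, steps=2, decay=0.5, threshold=0.1):
    """Spread activation over a weighted, directed concept graph.

    weights[a][b] is the (non-symmetric) connection weight from a to b.
    """
    activation = dict(active)
    for _ in range(steps):
        incoming = {}
        for node, value in activation.items():
            for neighbor, w in weights.get(node, {}).items():
                incoming[neighbor] = incoming.get(neighbor, 0.0) + decay * w * value
        for node, value in incoming.items():
            activation[node] = min(1.0, activation.get(node, 0.0) + value)
    # activity decay: keep only nodes above the threshold
    return {n: a for n, a in activation.items() if a >= threshold}


def disambiguate(activation, senses):
    """Pick the most active sense; competing senses are inhibited (dropped)."""
    return max(senses, key=lambda s: activation.get(s, 0.0))


weights = {
    "pneumonia": {"lung": 0.9, "cold (infection)": 0.6},
    "lung": {"cold (infection)": 0.4},
    "winter": {"cold (temperature)": 0.8},
}
active = spread(weights, {"pneumonia": 1.0})
print(disambiguate(active, ["cold (infection)", "cold (temperature)"]))
```

Here the unambiguous concept "pneumonia" primes the infection sense of "cold", while the temperature sense never receives activation and does not survive the threshold.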

Text understanding

Graphs of consistent concepts = the active part of semantic memory, with spreading activation and inhibition, which allows for disambiguation of concept meanings.

For medical texts we have > 2 mln concepts and 15 mln relations … The graphs become too complicated to view.

Medical applications: goals & questions

- Can we capture an expert’s intuition in evaluating a document’s similarity and finding its category? Learn from insights?
- How to include a priori knowledge in document categorization; this is especially important for rare diseases.
- Provide unambiguous annotation of all concepts.
- Acronym/abbreviation expansion and disambiguation.
- How to make inferences from the information in the text and assign values to concepts (true, possible, unlikely, false).
- How to deal with negative knowledge (not been found, not consistent with ...).
- Automatic creation of medical billing codes from text.
- Semantic search support, better specification of queries.
- Question/answer system.
- Integration of text analysis with molecular medicine.

Provide support for billing, knowledge discovery, dialog systems.

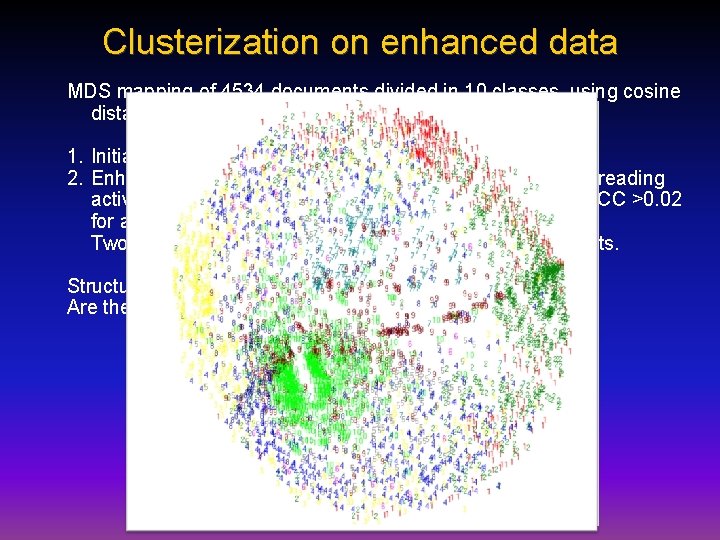

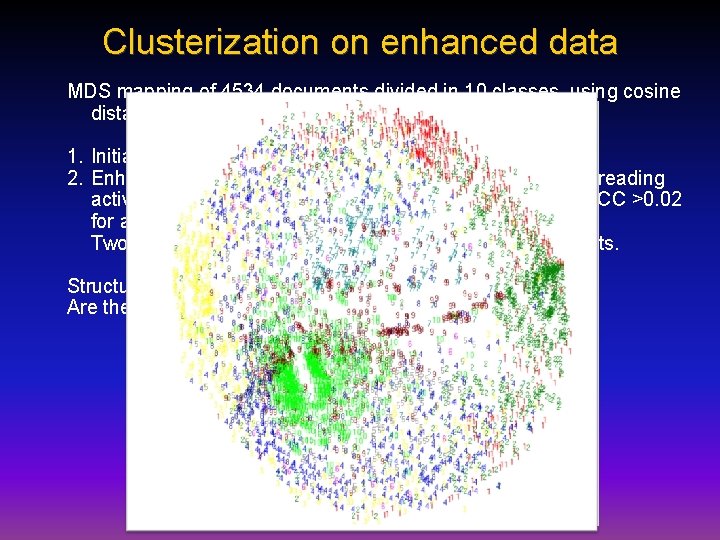

Clusterization on enhanced data

MDS mapping of 4534 documents divided into 10 classes, using cosine distances.

1. Initial representation, 807 features.
2. Enhanced by 26 selected semantic types, two steps of spreading activation through the semantic network, 2237 concepts with CC > 0.02 for at least one class.

Two steps create feedback loops A ↔ B between concepts.

Structure appears ... is it interesting to experts? Are these specific subtypes (clinotypes)?

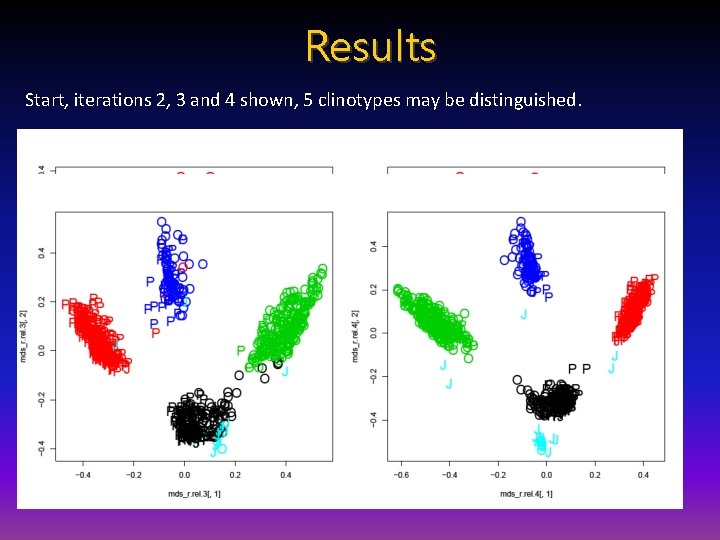

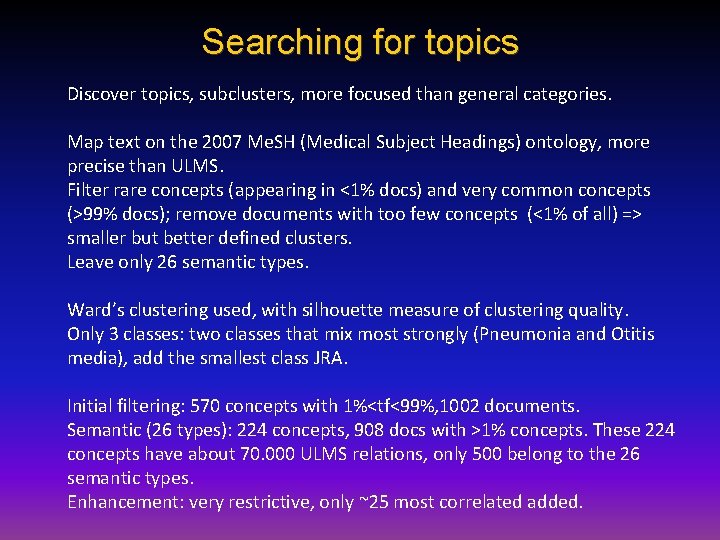

Searching for topics

Discover topics and subclusters that are more focused than general categories. Map text on the 2007 MeSH (Medical Subject Headings) ontology, which is more precise than UMLS.

Filter rare concepts (appearing in <1% of docs) and very common concepts (>99% of docs); remove documents with too few concepts (<1% of all) => smaller but better defined clusters. Leave only 26 semantic types.

Ward’s clustering is used, with the silhouette measure of clustering quality.

Only 3 classes: the two classes that mix most strongly (Pneumonia and Otitis media), plus the smallest class, JRA.

Initial filtering: 570 concepts with 1% < tf < 99%, 1002 documents. Semantic (26 types): 224 concepts, 908 docs with >1% concepts. These 224 concepts have about 70,000 UMLS relations; only 500 belong to the 26 semantic types. Enhancement: very restrictive, only ~25 most correlated added.
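The initial filtering step can be sketched in a few lines. Documents are assumed to be sets of concept identifiers; the function names and the toy data are illustrative, and the default thresholds simply mirror the 1% and 99% limits quoted above.

```python
def filter_concepts(docs, low=0.01, high=0.99):
    """Drop concepts that occur in fewer than `low` or more than `high` of docs."""
    n = len(docs)
    counts = {}
    for doc in docs:
        for concept in set(doc):
            counts[concept] = counts.get(concept, 0) + 1
    kept = {c for c, k in counts.items() if low <= k / n <= high}
    return [set(doc) & kept for doc in docs], kept


def drop_sparse_docs(docs, kept, min_fraction=0.01):
    """Remove documents that contain fewer than `min_fraction` of kept concepts."""
    return [d for d in docs if kept and len(d) / len(kept) >= min_fraction]


# Toy data with looser thresholds so that the effect is visible on 4 documents.
docs = [{"fever", "cough"}, {"fever", "otitis"}, {"fever"}, {"fever", "cough"}]
filtered, kept = filter_concepts(docs, low=0.3, high=0.9)
print(kept)
print(drop_sparse_docs(filtered, kept, min_fraction=0.5))
```

Clustering (for example Ward's method with a silhouette check) would then run on the documents that survive both filters.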

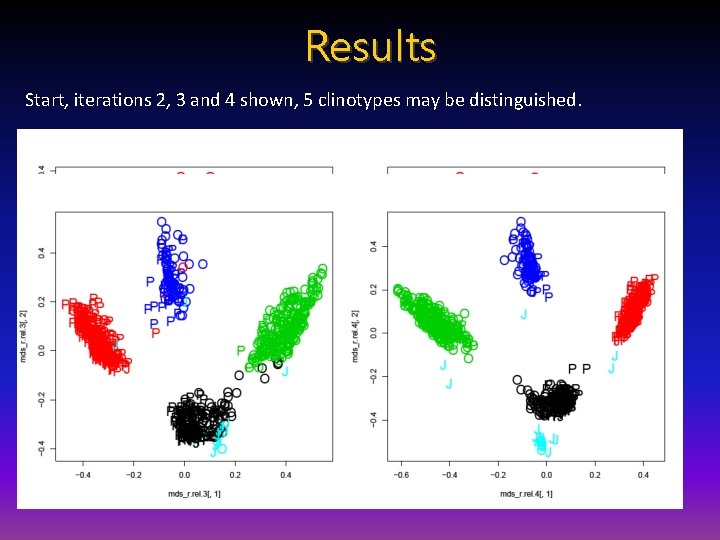

Results

The start and iterations 2, 3 and 4 are shown; 5 clinotypes may be distinguished.

![PubMed queries](https://slidetodoc.com/presentation_image/afad8d67a56dfda26b449eec5efe8513/image-43.jpg)

PubMed queries

Searching for: "Alzheimer disease"[MeSH Terms] AND "apolipoproteins e"[MeSH Terms] AND "humans"[MeSH Terms] returns 2899 citations with 1924 MeSH terms.

Out of 16 MeSH hierarchical trees only 4 have been selected: Anatomy; Diseases; Chemicals & Drugs; Analytical, Diagnostic and Therapeutic Techniques & Equipment. The number of concepts is 1190.

Loop over:

- cluster analysis;
- feature space enhancement through UMLS relations between MeSH concepts;
- inhibition, leading to filtering of concepts.

Create graphical representation.

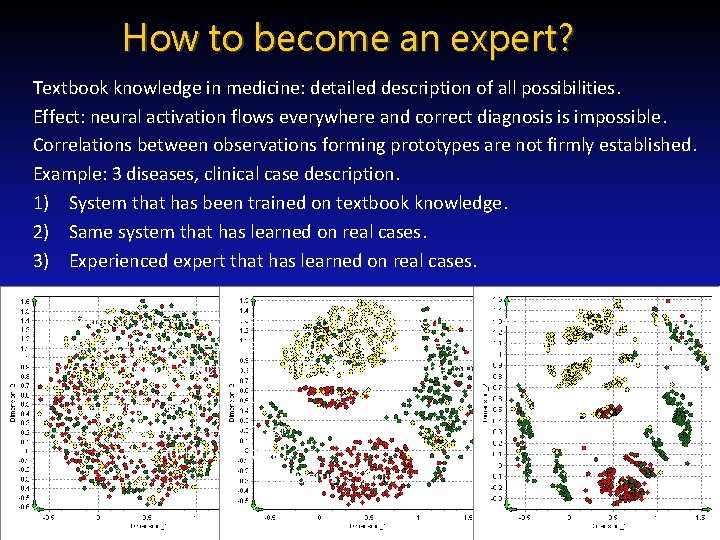

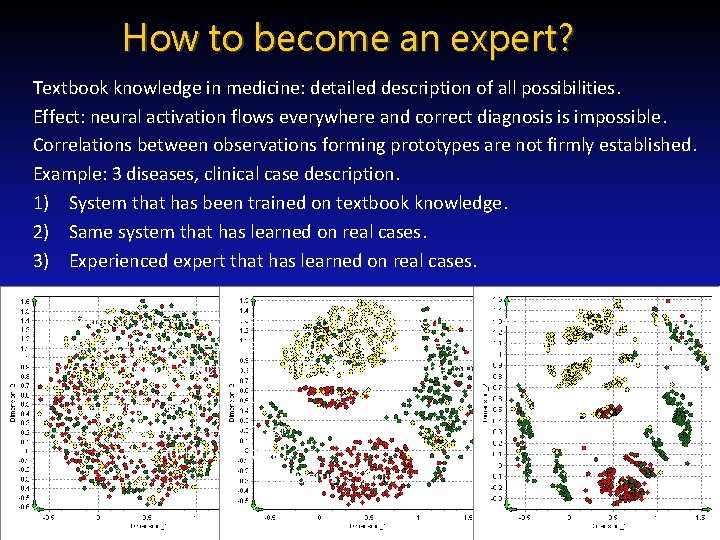

How to become an expert?

Textbook knowledge in medicine: detailed description of all possibilities. Effect: neural activation flows everywhere and correct diagnosis is impossible. Correlations between observations forming prototypes are not firmly established.

Example: 3 diseases, clinical case description.

1. System that has been trained on textbook knowledge.
2. Same system that has learned on real cases.
3. Experienced expert that has learned on real cases.

Conclusion: abstract presentation of knowledge in complex domains leads to poor expertise; learning from random real cases is a bit better; learning from real cases that cover the whole spectrum of different cases is best.

I hear and I forget. I see and I remember. I do and I understand. (Confucius, ca. 500 BC)

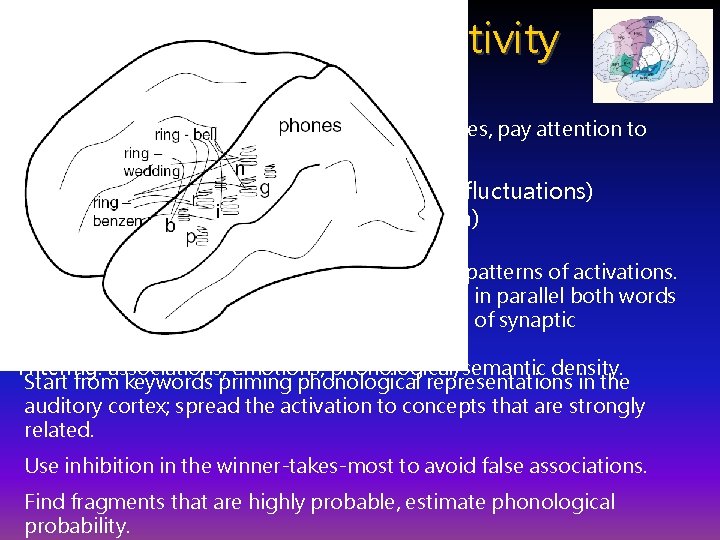

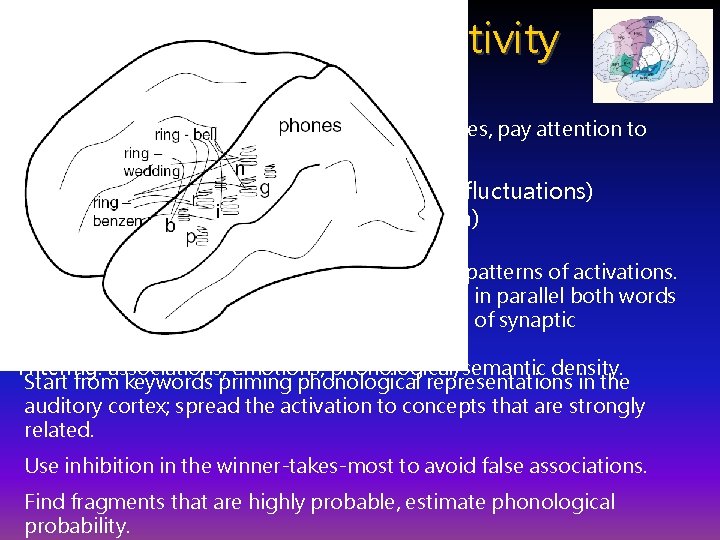

Computational creativity

Go to the lower level … construct words from combinations of phonemes, pay attention to morphemes, inflection etc.

Creativity = space + imagination (fluctuations) + filtering (competition)

- Space: neural tissue providing space for infinite patterns of activations.
- Imagination: many chains of phonemes activate in parallel both word and non-word reps, depending on the strength of synaptic connections.
- Filtering: associations, emotions, phonological/semantic density.

Start from keywords priming phonological representations in the auditory cortex; spread the activation to concepts that are strongly related. Use inhibition in the winner-takes-most to avoid false associations. Find fragments that are highly probable, estimate phonological probability.

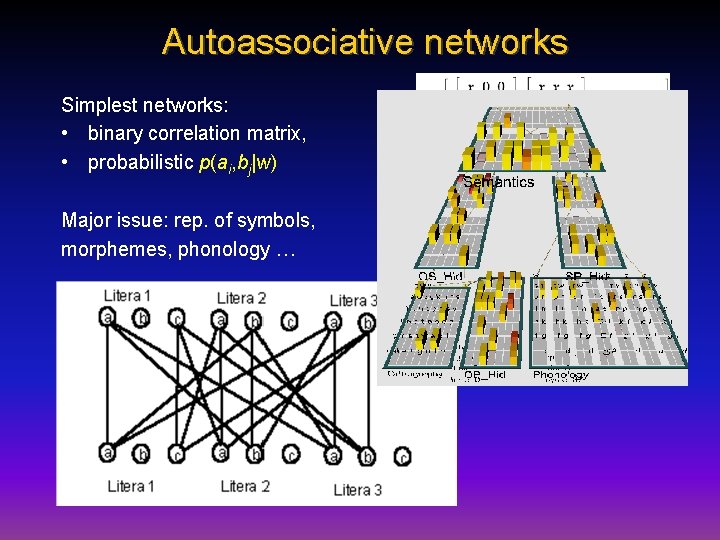

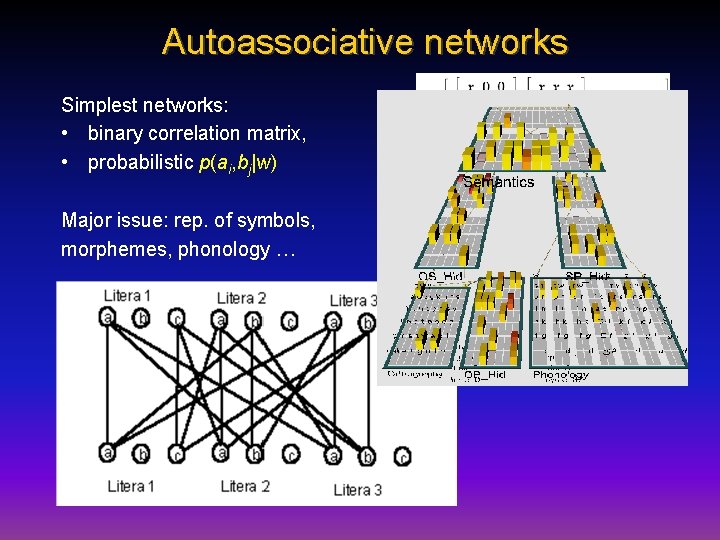

Autoassociative networks

Simplest networks:

- binary correlation matrix,
- probabilistic p(ai, bj|w).

Major issue: rep. of symbols, morphemes, phonology …
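A binary correlation matrix memory can be sketched in a few lines. The version below follows the general idea of Willshaw-style associative memories and is an assumption about what "binary correlation matrix" refers to here: patterns are stored by OR-ing their outer products, and a unit is recalled when it is connected to every active unit of the cue.

```python
def store(patterns):
    """Binary correlation matrix: OR of the outer products of stored patterns."""
    n = len(patterns[0])
    matrix = [[0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if p[i] and p[j]:
                    matrix[i][j] = 1
    return matrix


def recall(matrix, cue):
    """A unit fires if it is connected to every active unit of the cue."""
    active = sum(cue)
    return [int(active > 0 and sum(m * v for m, v in zip(row, cue)) == active)
            for row in matrix]


memory = store([[1, 1, 0, 0], [0, 0, 1, 1]])
print(recall(memory, [1, 0, 0, 0]))   # partial cue for the first pattern
```

With symbols, morphemes or phonemes coded as sparse binary patterns, the same matrix completes a partial cue to the stored pattern, which is the autoassociative behavior the slide refers to.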

Words: experiments

A real letter from a friend: I am looking for a word that would capture the following qualities: portal to new worlds of imagination and creativity, a place where visitors embark on a journey discovering their inner selves, awakening the Peter Pan within. A place where we can travel through time and space (from the origin to the future and back), so, it’s about time, about space, infinite possibilities. FAST!!! I need it soooooooooooon.

- creativital, creatival (creativity, portal), used in creatival.com
- creativery (creativity, discovery), creativery.com (strategy + creativity)
- discoverity = {disc, discover, verity} (discovery, creativity, verity)
- digventure = {dig, digital, venture, adventure}, still new!
- imativity (imagination, creativity); infinitime (infinitive, time)
- infinition (infinitive, imagination), already a company name
- portravel (portal, travel); sportal (space, sport, portal), taken
- timagination (time, imagination); timativity (time, creativity)
- tivery (time, discovery); trime (travel, time)

Server at: http://www-users.mat.uni.torun.pl/~macias/mambo
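Some of the blends listed above share a run of letters between the two source words. The sketch below reproduces only that surface pattern; it is a simplified letter-overlap stand-in, not the phoneme-based priming and filtering procedure described in the talk, and the function name is hypothetical.

```python
def blend(first, second):
    """Join two words where a middle part of `first` matches the start of `second`."""
    best_start, best_len = None, 0
    for start in range(1, len(first)):
        k = 0
        while (start + k < len(first) and k < len(second)
               and first[start + k] == second[k]):
            k += 1
        if k > best_len:
            best_start, best_len = start, k
    if best_start is None:
        return None                      # no shared letters to hinge on
    return first[:best_start] + second


print(blend("portal", "travel"))         # portravel
print(blend("time", "imagination"))      # timagination
print(blend("sport", "portal"))          # sportal
```

A fuller generator would work on phonemes, produce many candidates in parallel and then filter them by association strength and phonological probability.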

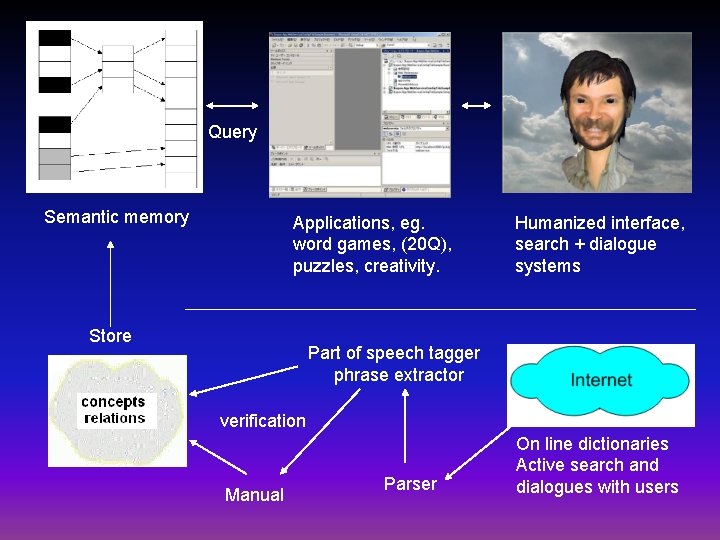

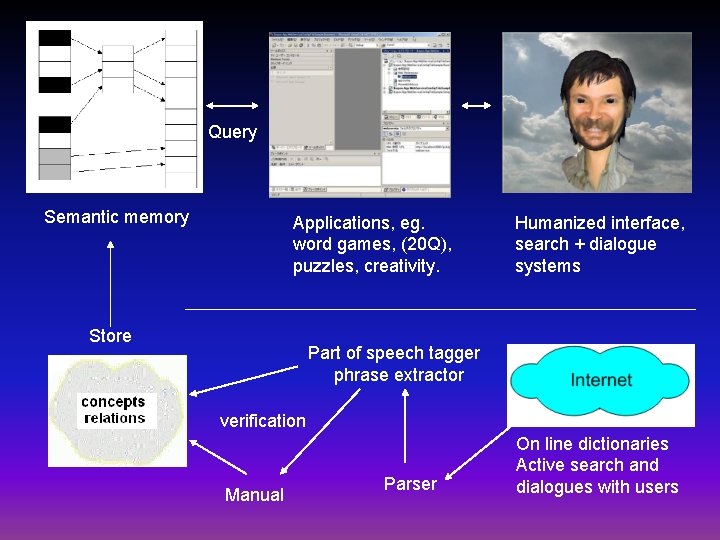

[Figure: semantic memory architecture. Components: query; semantic memory; store; applications, e.g. word games (20Q), puzzles, creativity; humanized interface, search + dialogue systems; part-of-speech tagger and phrase extractor; parser; on-line dictionaries; manual verification; active search and dialogues with users.]

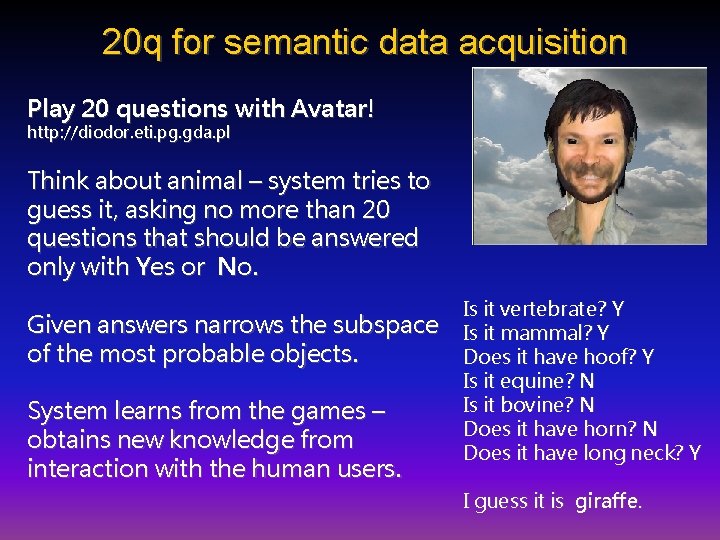

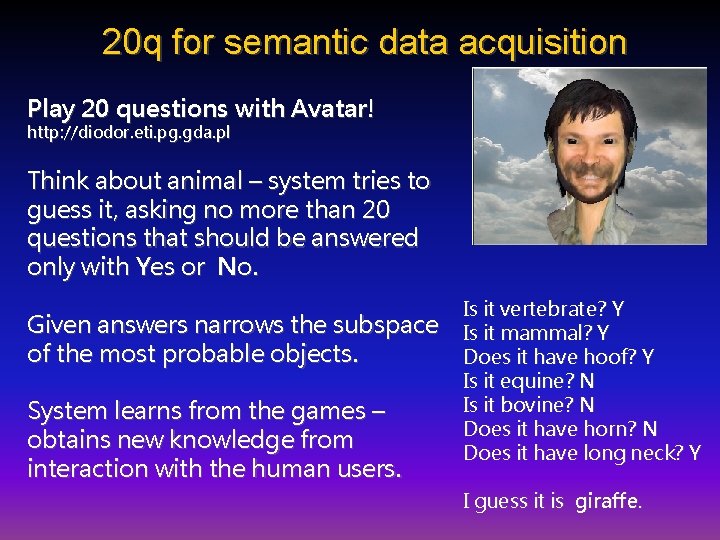

20Q for semantic data acquisition

Play 20 questions with Avatar! http://diodor.eti.pg.gda.pl

Think of an animal: the system tries to guess it, asking no more than 20 questions that should be answered only with Yes or No. The answers given narrow the subspace of the most probable objects. The system learns from the games: it obtains new knowledge from interaction with human users.

- Is it vertebrate? Y
- Is it mammal? Y
- Does it have hoof? Y
- Is it equine? N
- Is it bovine? N
- Does it have horn? N
- Does it have long neck? Y
- I guess it is giraffe.

20Q

The goal of the 20 questions game is to guess a concept that the opponent has in mind by asking appropriate questions. www.20q.net has a version that is now implemented in some toys! It is based on a concepts × questions table, T(C, Q) = usefulness of Q for C. It learns T(C, Q) values, increasing them after successful games and decreasing them after lost games. Guess: distance-based.

SM does not assume fixed questions. Use of CDV admits only the simplest forms, “Is it related to X?” or “Can it be associated with X?”, where X = a concept stored in the SM. It only needs to select a concept, not to build the whole question.

Once the keyword has been selected it is possible to use the full power of semantic memory to analyze the type of relations and ask more sophisticated questions.
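Keyword selection over CDVs can be sketched as follows. The selection rule (ask about the keyword that splits the remaining candidates most evenly) and the strict elimination of candidates are assumptions made for this example; the slide only states that a concept has to be selected and that guessing is distance-based, which would tolerate wrong answers better than strict elimination does. The toy data are hypothetical.

```python
def best_question(cdv, candidates, asked):
    """Keyword that splits the remaining candidates most evenly."""
    keywords = set().union(*(cdv[c] for c in candidates)) - asked
    if not keywords:
        return None
    half = len(candidates) / 2
    return min(sorted(keywords),
               key=lambda k: abs(sum(k in cdv[c] for c in candidates) - half))


def play(cdv, answer_for, max_questions=20):
    """Ask 'Is it related to X?' until one candidate is left."""
    candidates, asked = set(cdv), set()
    for _ in range(max_questions):
        if len(candidates) <= 1:
            break
        keyword = best_question(cdv, candidates, asked)
        if keyword is None:
            break
        asked.add(keyword)
        yes = answer_for(keyword)
        candidates = {c for c in candidates if (keyword in cdv[c]) == yes}
    return candidates


cdv = {
    "giraffe": {"vertebrate", "mammal", "hoof", "long neck"},
    "horse": {"vertebrate", "mammal", "hoof", "equine"},
    "cow": {"vertebrate", "mammal", "hoof", "bovine", "horn"},
    "cobra": {"vertebrate", "reptile"},
}
# The player thinks of a giraffe and answers truthfully.
print(play(cdv, lambda keyword: keyword in cdv["giraffe"]))
```

Learning from games would then amount to adding the keywords confirmed by the player to the CDV of the concept that was finally guessed.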

Mental models

Kenneth Craik, 1943 book “The Nature of Explanation”; G-H Luquet attributed mental models to children in 1927. P. Johnson-Laird, 1983 book and papers.

Imagination: mental rotation, time ~ angle, about 60°/sec.

Internal models of relations between objects, hypothesized to play a major role in cognition and decision-making. AI: direct representations are very useful, but direct in some aspects only!

Reasoning: imaging relations, “seeing” a mental picture, semantic? Systematic fallacies: a sort of cognitive illusion.

- If the test is to continue then the turbine must be rotating fast enough to generate emergency electricity.
- The turbine is not rotating fast enough to generate this electricity.
- What, if anything, follows? Chernobyl disaster …

If A => B, then ~B => ~A, but only about 2/3 of students answer correctly.
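The turbine inference is an instance of modus tollens, which can be checked against a truth table. In the sketch below A stands for "the test is to continue" and B for "the turbine rotates fast enough"; the helper names are illustrative.

```python
from itertools import product


def implies(a, b):
    return (not a) or b


def entails(premises, conclusion):
    """True if the conclusion holds in every assignment satisfying the premises."""
    return all(conclusion(a, b)
               for a, b in product((False, True), repeat=2)
               if all(p(a, b) for p in premises))


# A: the test is to continue; B: the turbine rotates fast enough.
modus_tollens = entails([lambda a, b: implies(a, b), lambda a, b: not b],
                        lambda a, b: not a)
affirming_consequent = entails([lambda a, b: implies(a, b), lambda a, b: b],
                               lambda a, b: a)
print(modus_tollens)          # True: the test is not to continue
print(affirming_consequent)   # False: B alone does not establish A
```

The valid conclusion is that the test is not to continue; the second check shows the corresponding fallacy, where knowing B says nothing about A.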

Reasoning & models

Easy reasoning: A => B, B => C, so A => C.

- All mammals suck milk.
- Humans are mammals.
- => Humans suck milk.

... but almost no one can draw a conclusion from:

- All academics are scientists.
- No wise man is an academic.
- What can we say about wise men and scientists?

Surprisingly, only ~10% of students get it right; all kinds of errors are made! There are no simulations explaining why some mental models are difficult. Creativity: non-schematic thinking?
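The second syllogism can be explored by enumerating small models. The sketch below describes each individual by three properties (academic, scientist, wise), builds every world consistent with the premises, and tests a few candidate conclusions. It assumes, as traditional syllogistic logic does, that at least one academic exists; the candidate list is illustrative.

```python
from itertools import product

# An individual is a triple (academic, scientist, wise).
KINDS = list(product((False, True), repeat=3))


def models():
    """Every non-empty world, described by which kinds of individuals exist."""
    for mask in range(1, 2 ** len(KINDS)):
        world = [k for i, k in enumerate(KINDS) if mask >> i & 1]
        all_academics_scientists = all(s for a, s, w in world if a)
        no_wise_academic = not any(a and w for a, s, w in world)
        some_academic = any(a for a, s, w in world)   # assumed: academics exist
        if all_academics_scientists and no_wise_academic and some_academic:
            yield world


CANDIDATES = {
    "No wise man is a scientist": lambda m: not any(s and w for a, s, w in m),
    "Some wise men are not scientists": lambda m: any(w and not s for a, s, w in m),
    "Some scientists are not wise men": lambda m: any(s and not w for a, s, w in m),
}

valid = [text for text, holds in CANDIDATES.items()
         if all(holds(m) for m in models())]
print(valid)
```

Only "Some scientists are not wise men" holds in every model. The tempting conclusions about wise men fail because the premises say nothing about wise men who are scientists without being academics.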

Mental models summary

The mental model theory is an alternative to the view that deduction depends on formal rules of inference.

1. MM represent explicitly what is true, but not what is false; this may lead a naive reasoner into systematic error.
2. Large number of complex models => poor performance.
3. Tendency to focus on a few possible models => erroneous conclusions and irrational decisions.

Cognitive illusions are just like visual illusions.

- M. Piattelli-Palmarini, Inevitable Illusions: How Mistakes of Reason Rule Our Minds (1996).
- R. Pohl, Cognitive Illusions: A Handbook on Fallacies and Biases in Thinking, Judgement and Memory (2005).

Amazing, but mental models theory ignores everything we know about learning in any form! How and why do we reason the way we do? I’m innocent! My brain made me do it!

Few conclusions

- Information management the human way requires neurocognitive informatics: inspirations beyond the perceptron.
- Various approximations to knowledge representation in brain networks should be studied: reference vectors, ontology-based enhancements, spreading activation networks.
- Relations between spreading activation networks, semantic networks and vector models have not yet been analyzed in detail.
- A neurocognitive approach to language has been developed (Sydney Lamb, Rice Uni.), but it is not practical.
- Large-scale semantic networks and spreading activation models may be constructed starting from large ontologies + active info search.
- Semantic networks may capture background knowledge, but vector models offer more flexibility and should be combined with networks.
- Some interesting algorithms have already been created.
- Creation of useful numerical representations of various concepts, and linking them in semantic networks, is at the foundation of information management.

Thank you for lending your ears ...

Google: W. Duch => Papers/presentations/projects