Neural Machine Translation

Adapted from slides by Abigail See, course CS224n, Stanford University

1990s-2010s: Statistical Machine Translation

- SMT is a huge research field
  - The best systems are extremely complex
  - Hundreds of important details, too many to mention
  - Systems have many separately designed subcomponents
  - Lots of feature engineering
    - Need to design features to capture particular language phenomena
- Requires compiling and maintaining extra resources
  - Like tables of equivalent phrases
- Lots of human effort to maintain
  - Repeated effort for each language pair!

2014: Neural Machine Translation

Huge impact on Machine Translation research

What is Neural Machine Translation?

- Neural Machine Translation (NMT) is a way to do Machine Translation with a single neural network
- The neural network architecture is called sequence-to-sequence (aka seq2seq) and it involves two RNNs

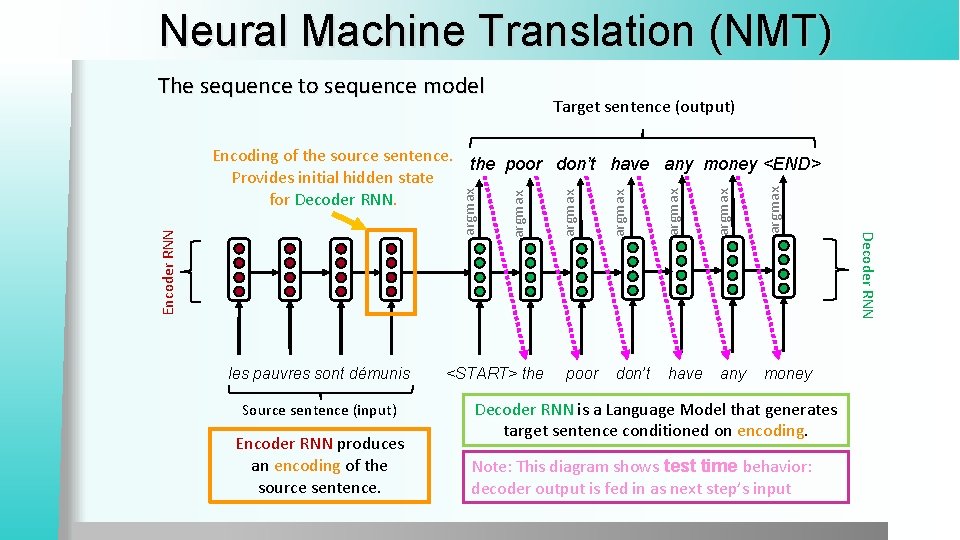

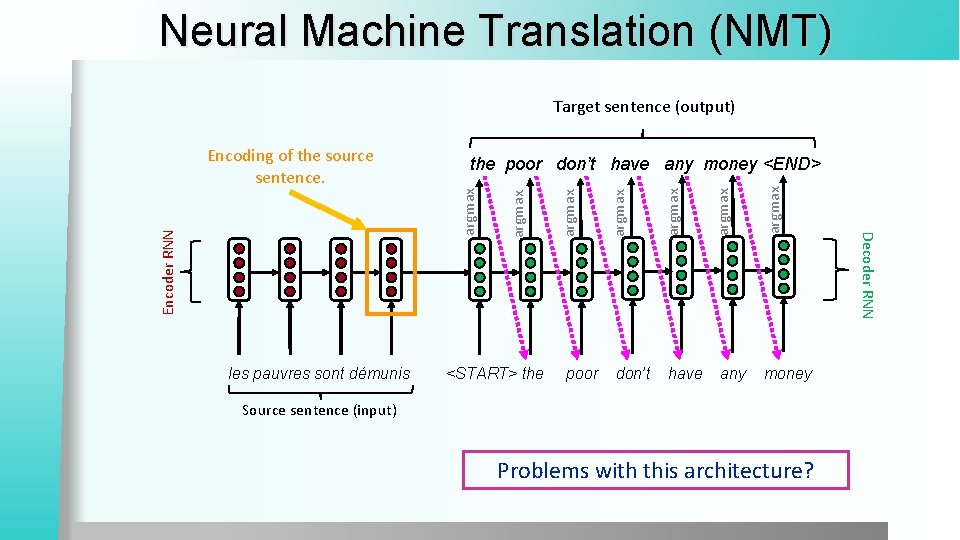

Neural Machine Translation (NMT): the sequence-to-sequence model

- Source sentence (input): les pauvres sont démunis
- Target sentence (output): the poor don’t have any money <END>
- The Encoder RNN produces an encoding of the source sentence.
- The encoding of the source sentence provides the initial hidden state for the Decoder RNN.
- The Decoder RNN is a Language Model that generates the target sentence conditioned on the encoding, taking the argmax on each step.
- Note: this diagram shows test-time behavior: the decoder output is fed in as the next step’s input.
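To make the data flow concrete, here is a minimal sketch of the encoder/decoder hand-off in plain Python. It is not the lecture's implementation: the class name `TinySeq2Seq`, the simple tanh RNN cell, the hidden size, and the random (untrained) weights are all assumptions chosen for illustration, so the predicted probabilities are meaningless. The point is only that the encoder's final hidden state becomes the decoder's initial hidden state.

```python
import math
import random


def make_matrix(rows, cols, rng):
    """Random weight matrix (untrained; illustration only)."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


def matvec(matrix, vector):
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def rnn_step(w_hidden, w_input, hidden, embedding):
    """Simple RNN cell: h' = tanh(W_h h + W_x x)."""
    a = matvec(w_hidden, hidden)
    b = matvec(w_input, embedding)
    return [math.tanh(p + q) for p, q in zip(a, b)]


def softmax(scores):
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


class TinySeq2Seq:
    """Hypothetical toy model: one encoder RNN and one decoder RNN."""

    def __init__(self, src_vocab, tgt_vocab, hidden_size=4, seed=0):
        rng = random.Random(seed)
        self.src_vocab, self.tgt_vocab = src_vocab, tgt_vocab
        self.hidden_size = hidden_size
        self.src_emb = make_matrix(len(src_vocab), hidden_size, rng)
        self.tgt_emb = make_matrix(len(tgt_vocab), hidden_size, rng)
        self.enc_w_h = make_matrix(hidden_size, hidden_size, rng)
        self.enc_w_x = make_matrix(hidden_size, hidden_size, rng)
        self.dec_w_h = make_matrix(hidden_size, hidden_size, rng)
        self.dec_w_x = make_matrix(hidden_size, hidden_size, rng)
        self.out_w = make_matrix(len(tgt_vocab), hidden_size, rng)

    def encode(self, source_words):
        """Run the encoder RNN; the final hidden state is the encoding."""
        hidden = [0.0] * self.hidden_size
        for word in source_words:
            emb = self.src_emb[self.src_vocab.index(word)]
            hidden = rnn_step(self.enc_w_h, self.enc_w_x, hidden, emb)
        return hidden

    def decode_step(self, prev_word, hidden):
        """One decoder step: returns P(next word) and the new hidden state."""
        emb = self.tgt_emb[self.tgt_vocab.index(prev_word)]
        hidden = rnn_step(self.dec_w_h, self.dec_w_x, hidden, emb)
        return softmax(matvec(self.out_w, hidden)), hidden


src_vocab = ["les", "pauvres", "sont", "démunis"]
tgt_vocab = ["<START>", "<END>", "the", "poor", "don't", "have", "any", "money"]
model = TinySeq2Seq(src_vocab, tgt_vocab)

encoding = model.encode(["les", "pauvres", "sont", "démunis"])
# The encoding is used as the decoder's initial hidden state.
probs, hidden = model.decode_step("<START>", encoding)
```

At test time, the word chosen from `probs` would be passed as `prev_word` to the next `decode_step` call, together with the returned `hidden`.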

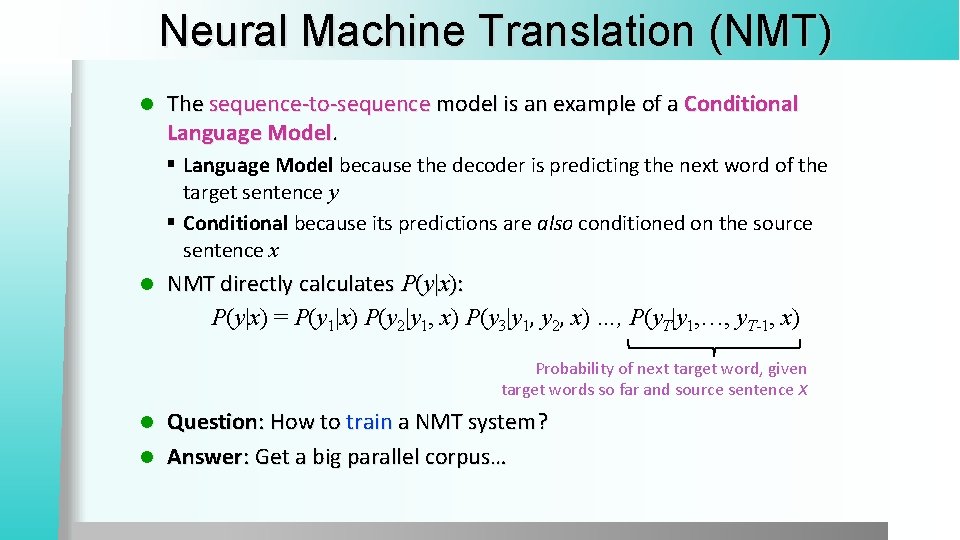

Neural Machine Translation (NMT)

- The sequence-to-sequence model is an example of a Conditional Language Model.
  - Language Model because the decoder is predicting the next word of the target sentence y
  - Conditional because its predictions are also conditioned on the source sentence x
- NMT directly calculates $P(y|x)$:

  $$P(y|x) = P(y_1|x)\,P(y_2|y_1,x)\,P(y_3|y_1,y_2,x)\cdots P(y_T|y_1,\dots,y_{T-1},x)$$

  Each factor is the probability of the next target word, given the target words so far and the source sentence x.
- Question: How to train an NMT system?
- Answer: Get a big parallel corpus…
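As a small worked example of the factorization of P(y|x), the sketch below multiplies per-step conditional probabilities by summing their logs, which is how this is usually done in practice to avoid numerical underflow. The function name `sequence_log_prob` and the three probabilities are made up for illustration.

```python
import math


def sequence_log_prob(step_probs):
    """log P(y|x) = sum over t of log P(y_t | y_1..y_{t-1}, x)."""
    return sum(math.log(p) for p in step_probs)


# Hypothetical per-step probabilities the decoder assigned to the
# words of one target sentence (values invented for this example).
step_probs = [0.5, 0.4, 0.8]

log_p = sequence_log_prob(step_probs)
p = math.exp(log_p)  # equals 0.5 * 0.4 * 0.8 up to rounding
```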

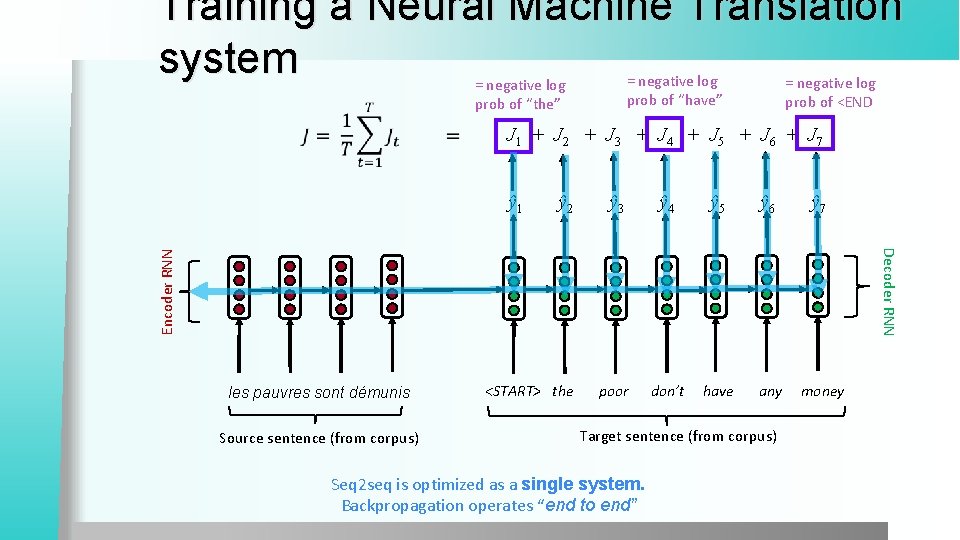

Training a Neural Machine Translation system

- Source sentence (from corpus): les pauvres sont démunis
- Target sentence (from corpus): <START> the poor don’t have any money
- On each decoder step t the model outputs a prediction $\hat{y}_t$, and the loss $J_t$ is the negative log probability of the correct next word. For example, $J_1$ = negative log prob of “the”, $J_4$ = negative log prob of “have”, $J_7$ = negative log prob of <END>.
- The total loss is $J_1 + J_2 + J_3 + J_4 + J_5 + J_6 + J_7$.
- Seq2seq is optimized as a single system. Backpropagation operates “end to end”.
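The per-step losses can be sketched as follows. This is an illustration under assumptions, not the course's training code: the helper `step_losses` and the predicted distributions are invented, and the losses are summed as on the slide (many implementations divide by the number of steps instead).

```python
import math


def step_losses(predicted_dists, target_words):
    """J_t = -log of the probability the decoder gave to the correct word at step t."""
    return [-math.log(dist[word])
            for dist, word in zip(predicted_dists, target_words)]


# Hypothetical decoder output distributions for a 3-step target sentence.
predicted_dists = [
    {"the": 0.7, "a": 0.2, "<END>": 0.1},
    {"poor": 0.5, "people": 0.4, "<END>": 0.1},
    {"<END>": 0.9, "the": 0.1},
]
target_words = ["the", "poor", "<END>"]

losses = step_losses(predicted_dists, target_words)
total_loss = sum(losses)  # J_1 + J_2 + J_3
```

Because each term is a negative log probability, `total_loss` equals the negative log of the probability the model assigns to the whole target sentence. During training the decoder is fed the correct target words from the corpus rather than its own predictions.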

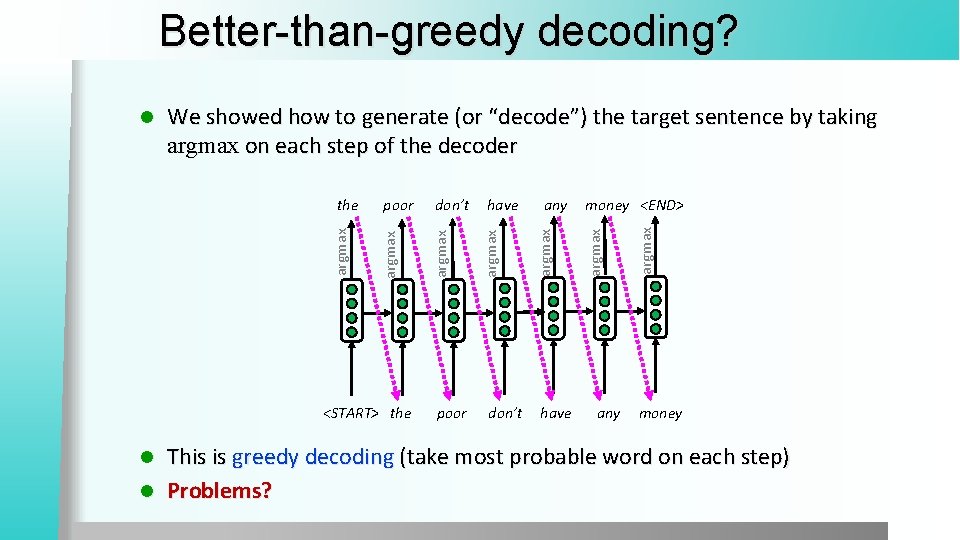

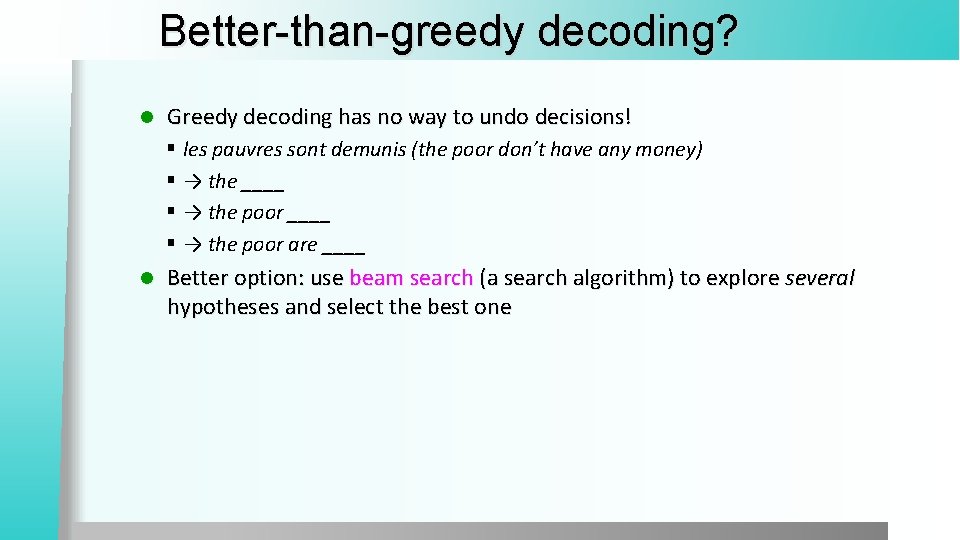

Better-than-greedy decoding?

- We showed how to generate (or “decode”) the target sentence by taking argmax on each step of the decoder: <START> → the → poor → don’t → have → any → money → <END>
- This is greedy decoding (take most probable word on each step)
- Problems?
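Greedy decoding can be sketched in a few lines. The decoder is abstracted here as a function from the words generated so far to a distribution over the next word; `TOY_MODEL`, `toy_next_word_probs`, and all probabilities are hypothetical stand-ins for a trained Decoder RNN.

```python
def greedy_decode(next_word_probs, max_len=10):
    """Pick the most probable word on each step and feed it back in."""
    words = ["<START>"]
    while len(words) <= max_len:
        dist = next_word_probs(tuple(words))
        best = max(dist, key=dist.get)  # argmax over the vocabulary
        words.append(best)
        if best == "<END>":
            break
    return words[1:]


# Hypothetical lookup table standing in for a trained decoder.
TOY_MODEL = {
    ("<START>",): {"the": 0.6, "a": 0.4},
    ("<START>", "the"): {"poor": 0.9, "<END>": 0.1},
    ("<START>", "the", "poor"): {"<END>": 1.0},
}


def toy_next_word_probs(prefix):
    # Unknown prefixes simply end the sentence.
    return TOY_MODEL.get(prefix, {"<END>": 1.0})


translation = greedy_decode(toy_next_word_probs)
```

Once a word has been appended it is never revisited.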

Better-than-greedy decoding?

- Greedy decoding has no way to undo decisions!
  - les pauvres sont démunis (the poor don’t have any money)
  - → the ____
  - → the poor are ____
- Better option: use beam search (a search algorithm) to explore several hypotheses and select the best one

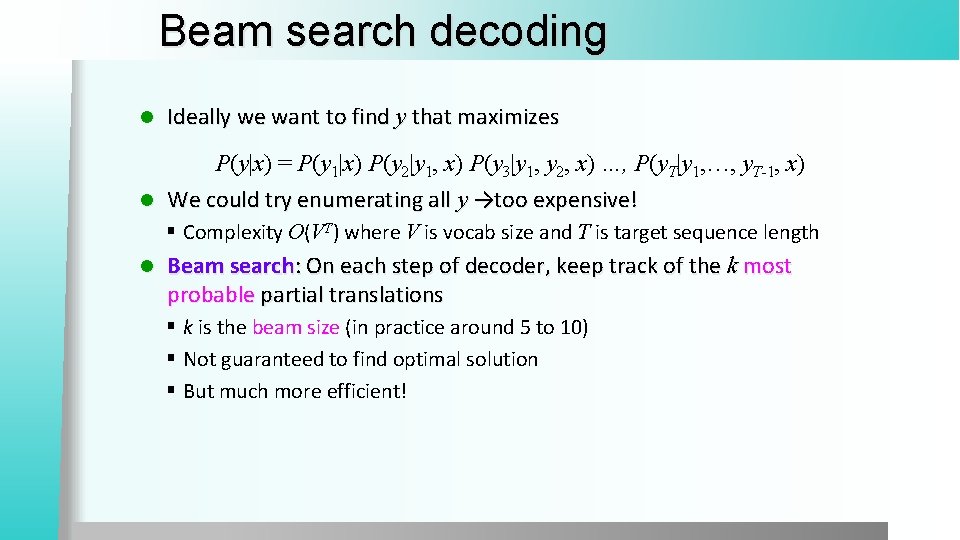

Beam search decoding

- Ideally we want to find y that maximizes

  $$P(y|x) = P(y_1|x)\,P(y_2|y_1,x)\,P(y_3|y_1,y_2,x)\cdots P(y_T|y_1,\dots,y_{T-1},x)$$
- We could try enumerating all y → too expensive!
  - Complexity $O(V^T)$ where V is vocab size and T is target sequence length
- Beam search: on each step of the decoder, keep track of the k most probable partial translations
  - k is the beam size (in practice around 5 to 10)
  - Not guaranteed to find the optimal solution
  - But much more efficient!
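A minimal beam search sketch, under the same kind of assumption as any toy example: the decoder is replaced by a hypothetical lookup table (`TOY_MODEL`, `toy_next_word_probs`) with invented probabilities. Hypotheses are scored by summed log probabilities, and the handling of finished hypotheses is one simple choice among several.

```python
import math


def beam_search(next_word_probs, beam_size=2, max_len=10):
    """Keep the beam_size most probable partial translations on each step."""
    beams = [(0.0, ("<START>",))]  # (log probability, words so far)
    finished = []
    for _ in range(max_len):
        candidates = []
        for log_prob, words in beams:
            for word, p in next_word_probs(words).items():
                if p > 0:
                    candidates.append((log_prob + math.log(p), words + (word,)))
        candidates.sort(key=lambda c: c[0], reverse=True)
        beams = []
        for cand in candidates[:beam_size]:
            # Hypotheses that produced <END> are set aside as complete.
            (finished if cand[1][-1] == "<END>" else beams).append(cand)
        if not beams:
            break
    finished.extend(beams)  # keep unfinished ones if max_len was reached
    best_log_prob, best_words = max(finished, key=lambda c: c[0])
    return list(best_words[1:]), best_log_prob


# Hypothetical model: "the" is the best first word, but "a poor"
# is the more probable sentence overall (0.4 * 0.95 > 0.6 * 0.55).
TOY_MODEL = {
    ("<START>",): {"the": 0.6, "a": 0.4},
    ("<START>", "the"): {"poor": 0.55, "people": 0.45},
    ("<START>", "a"): {"poor": 0.95, "person": 0.05},
}


def toy_next_word_probs(prefix):
    return TOY_MODEL.get(prefix, {"<END>": 1.0})


words, log_prob = beam_search(toy_next_word_probs, beam_size=2)
greedy_words, greedy_log_prob = beam_search(toy_next_word_probs, beam_size=1)
```

With `beam_size=1` the procedure reduces to greedy decoding and commits to "the" on the first step. With `beam_size=2` the hypothesis starting with "a" survives long enough to win.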

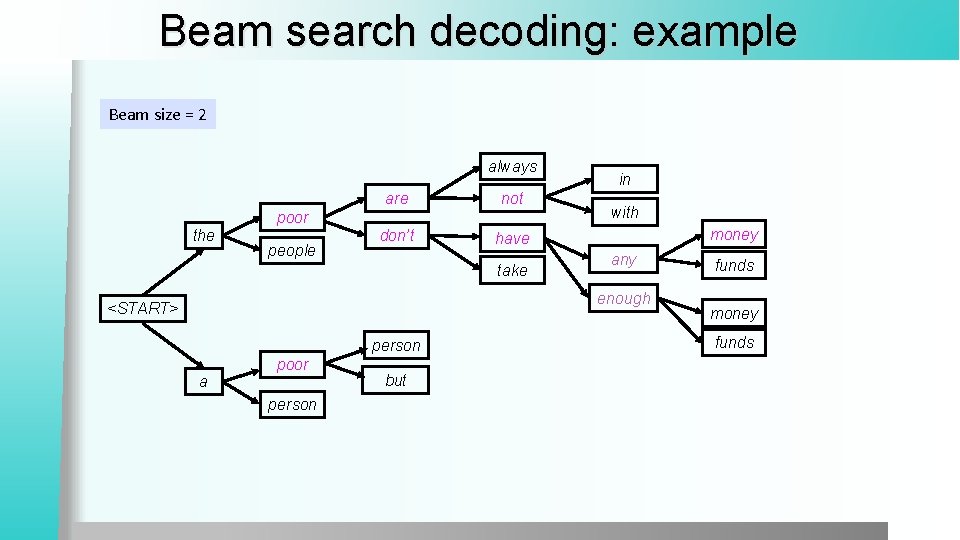

Beam search decoding: example (beam size = 2)

[Figure: beam search tree rooted at <START>. Words appearing in the explored hypotheses include: the, a, poor, people, person, are, don’t, not, always, have, take, but, in, with, any, enough, money, funds.]

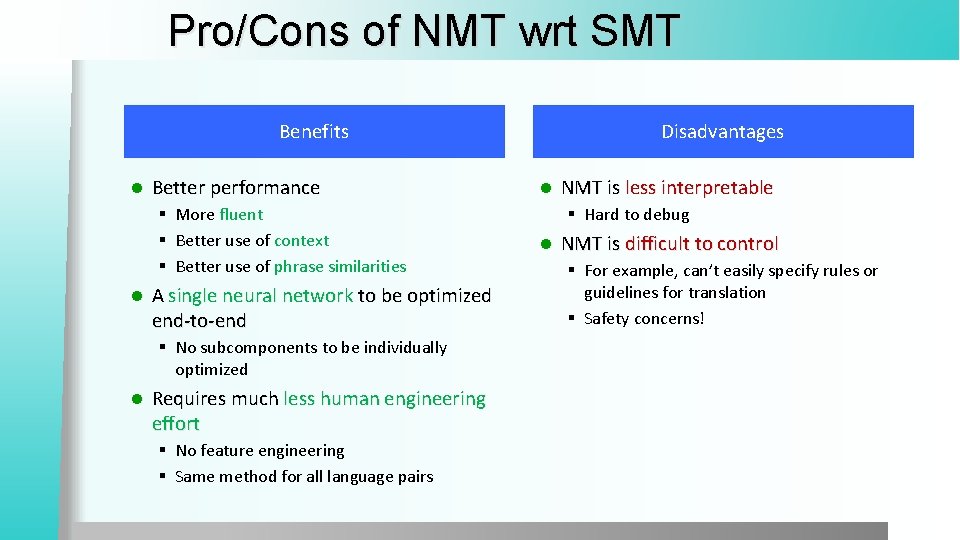

Pros and cons of NMT compared with SMT

Benefits

- Better performance
  - More fluent
  - Better use of context
  - Better use of phrase similarities
- A single neural network to be optimized end-to-end
  - No subcomponents to be individually optimized
- Requires much less human engineering effort
  - No feature engineering
  - Same method for all language pairs

Disadvantages

- NMT is less interpretable
  - Hard to debug
- NMT is difficult to control
  - For example, can’t easily specify rules or guidelines for translation
  - Safety concerns!

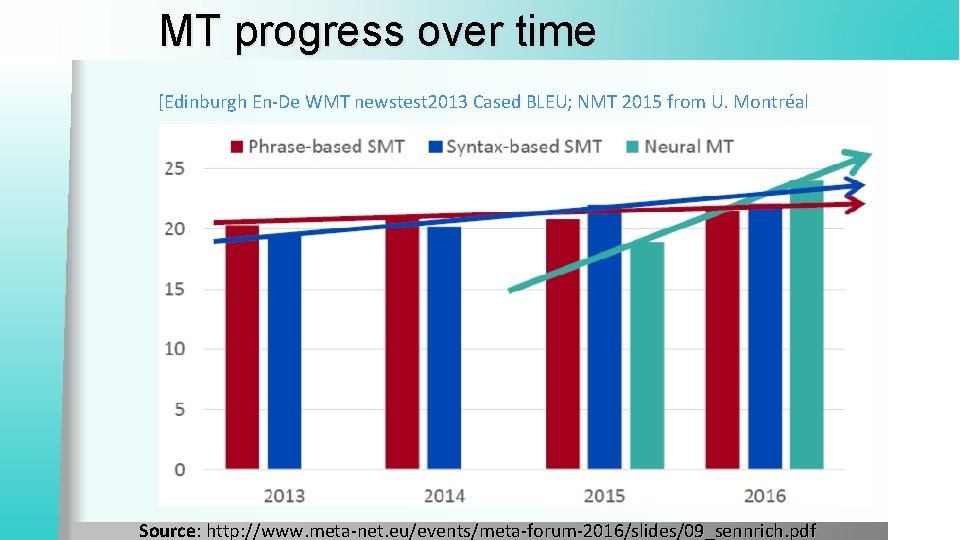

MT progress over time

[Edinburgh En-De WMT newstest2013 Cased BLEU; NMT 2015 from U. Montréal]

Source: http://www.meta-net.eu/events/meta-forum-2016/slides/09_sennrich.pdf

NMT: the biggest success story of NLP Deep Learning

Neural Machine Translation went from a fringe research activity in 2014 to the leading standard method in 2016

- 2014: First seq2seq paper published
- 2016: Google Translate switches from SMT to NMT
- This is amazing!
  - SMT systems, built by hundreds of engineers over many years, outperformed by NMT systems trained by a handful of engineers in a few months

Is MT a solved problem?

- Nope!
- Many difficulties remain:
  - Out-of-vocabulary words
  - Domain mismatch between train and test data
  - Maintaining context over longer text
  - Low-resource language pairs

NMT research continues

- NMT is the flagship task for NLP Deep Learning
- NMT research has pioneered many of the recent innovations of NLP Deep Learning
- In 2018: NMT research continues to thrive
  - Researchers have found many, many improvements to the “vanilla” seq2seq NMT system we’ve presented today
  - But one improvement is so integral that it is the new vanilla… ATTENTION

Neural Machine Translation (NMT)

- Source sentence (input): les pauvres sont démunis
- Target sentence (output): the poor don’t have any money <END>
- The Decoder RNN starts from the encoding of the source sentence.
- Problems with this architecture?

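Attention

- Attention provides a solution to the bottleneck problem: in the plain sequence-to-sequence architecture, a single encoding of the source sentence has to carry all the information the decoder needs.
- Core idea: on each step of the decoder, focus on a particular part of the source sequence
- First we will show via diagram (no equations), then we will show with equations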

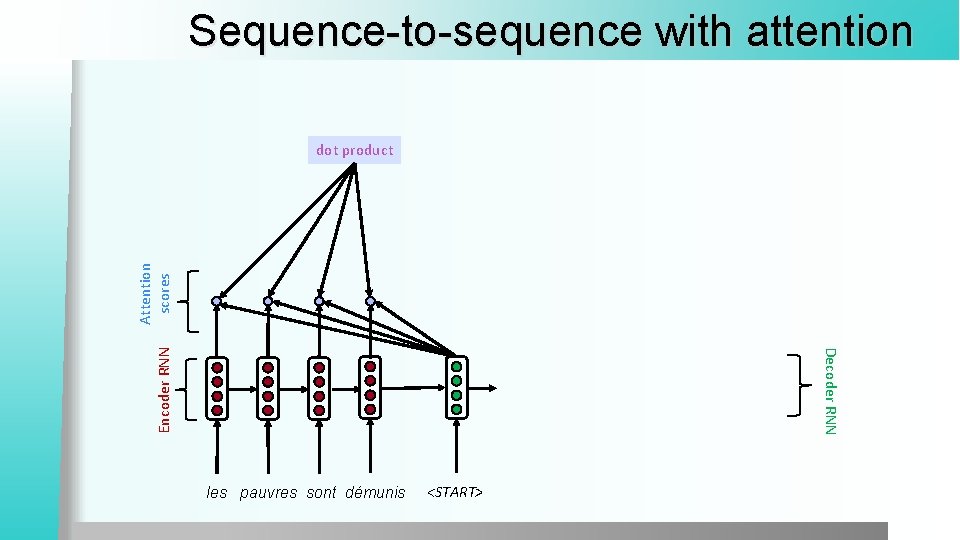

Sequence-to-sequence with attention

- Encoder RNN input: les pauvres sont démunis
- Decoder RNN input: <START>
- Attention scores: dot product of the decoder hidden state with each encoder hidden state

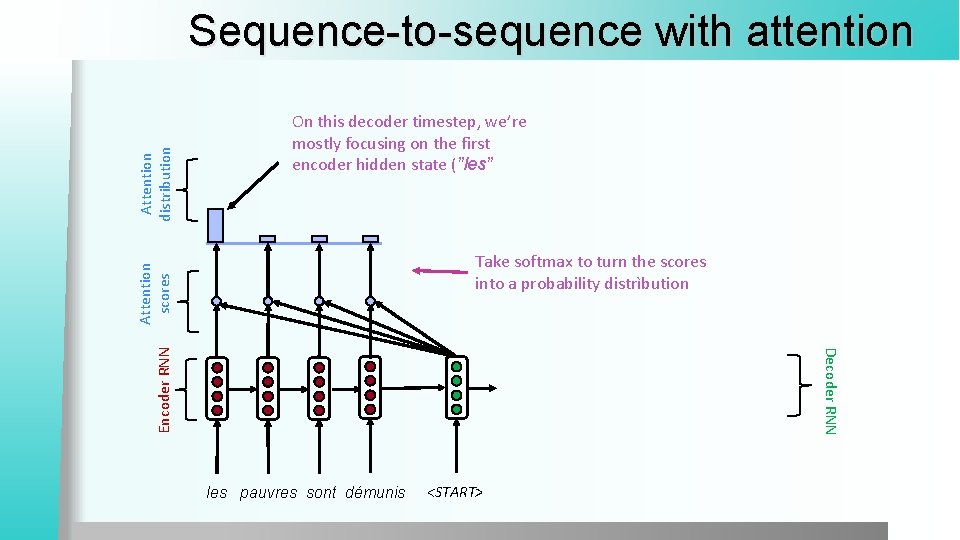

Sequence-to-sequence with attention

- Take softmax to turn the attention scores into a probability distribution (the attention distribution).
- On this decoder timestep, we’re mostly focusing on the first encoder hidden state (“les”).

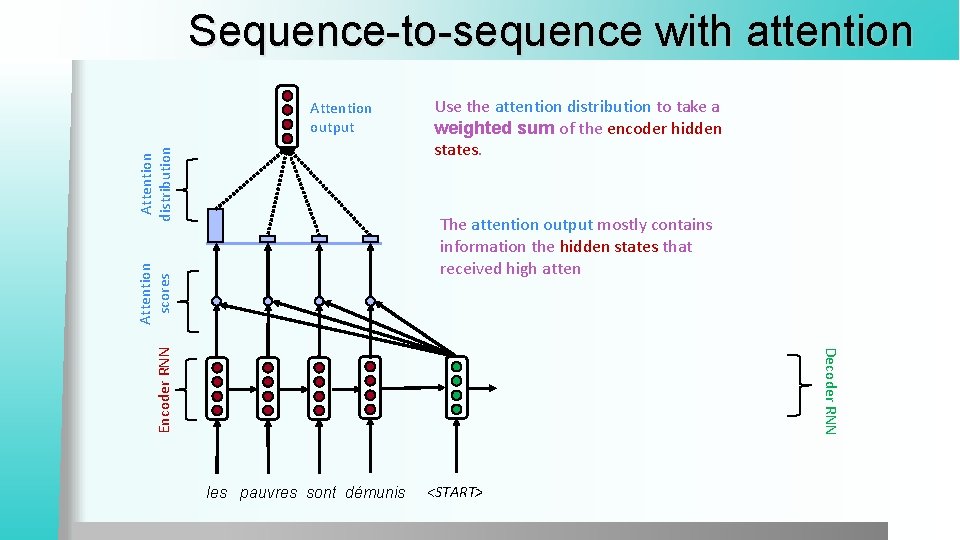

Sequence-to-sequence with attention

- Use the attention distribution to take a weighted sum of the encoder hidden states.
- The attention output mostly contains information from the hidden states that received high attention.

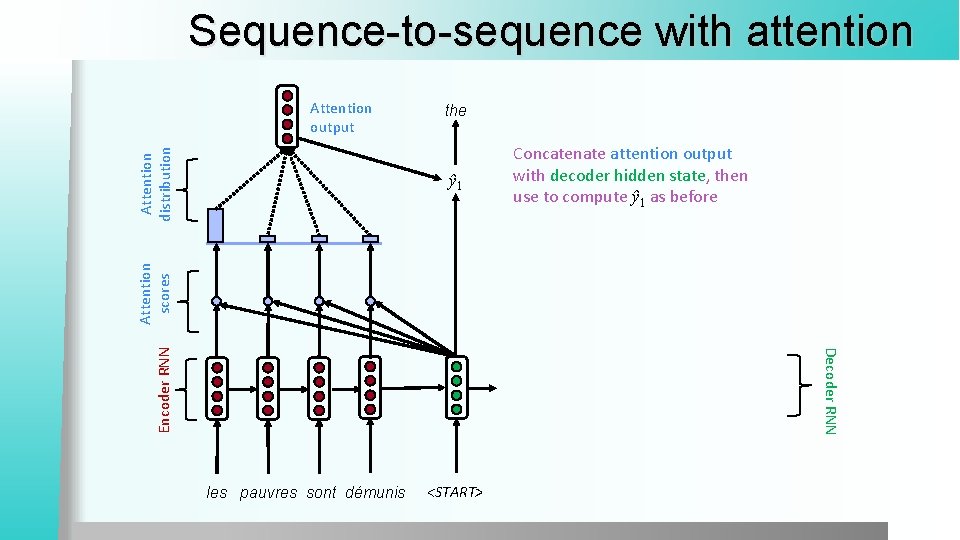

Sequence-to-sequence with attention

- Concatenate the attention output with the decoder hidden state, then use it to compute $\hat{y}_1$ as before.
- Output on this step: the
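The attention steps shown in the diagrams (dot-product scores, softmax, weighted sum, concatenation) can be sketched in plain Python. The hidden-state values below are invented so that the first encoder state receives most of the attention, and the function name `attention` is just a label for this sketch.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def softmax(scores):
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(decoder_state, encoder_states):
    # 1. Attention scores: dot product with each encoder hidden state.
    scores = [dot(decoder_state, h) for h in encoder_states]
    # 2. Attention distribution: softmax over the scores.
    distribution = softmax(scores)
    # 3. Attention output: weighted sum of the encoder hidden states.
    size = len(encoder_states[0])
    output = [sum(w * h[i] for w, h in zip(distribution, encoder_states))
              for i in range(size)]
    return distribution, output


# Hypothetical hidden states for "les pauvres sont démunis" (4 source words).
encoder_states = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5], [0.0, 0.0]]
decoder_state = [4.0, 0.0]

distribution, attention_output = attention(decoder_state, encoder_states)
# 4. Concatenate attention output with the decoder hidden state;
#    this combined vector is what would be used to predict the next word.
combined = attention_output + decoder_state
```

Here the scores are 4, 0, 2, 0, so the first source position dominates the distribution and `attention_output` lies close to the first encoder hidden state.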

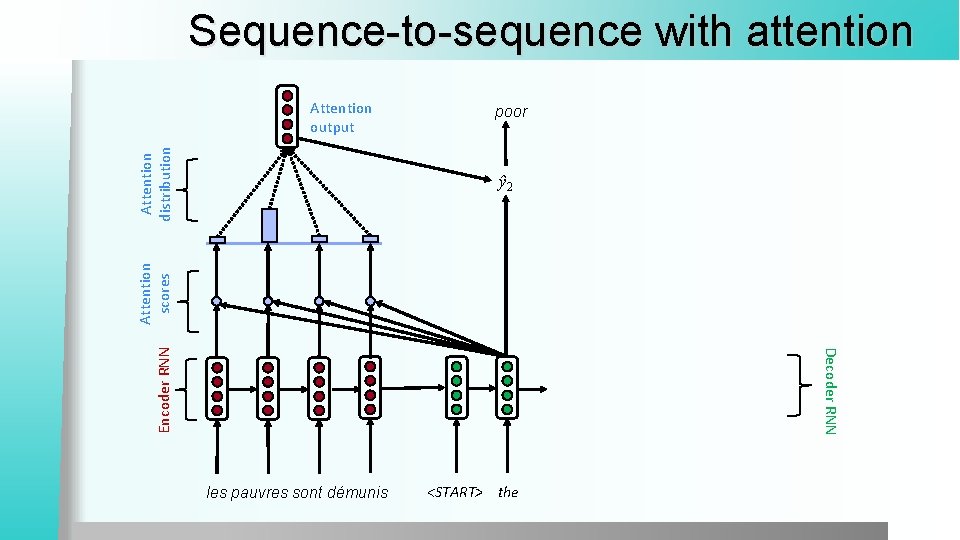

Sequence-to-sequence with attention, decoder step 2. Decoder inputs so far: <START> the. The attention scores, attention distribution and attention output are computed again, giving $\hat{y}_2$ = poor.

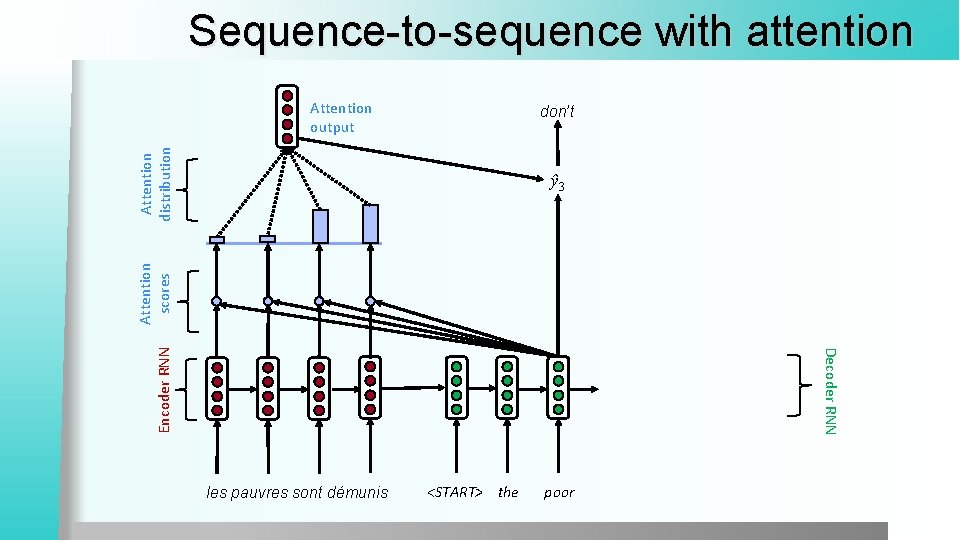

Sequence-to-sequence with attention, decoder step 3. Decoder inputs so far: <START> the poor. The attention scores, attention distribution and attention output are computed again, giving $\hat{y}_3$ = don’t.

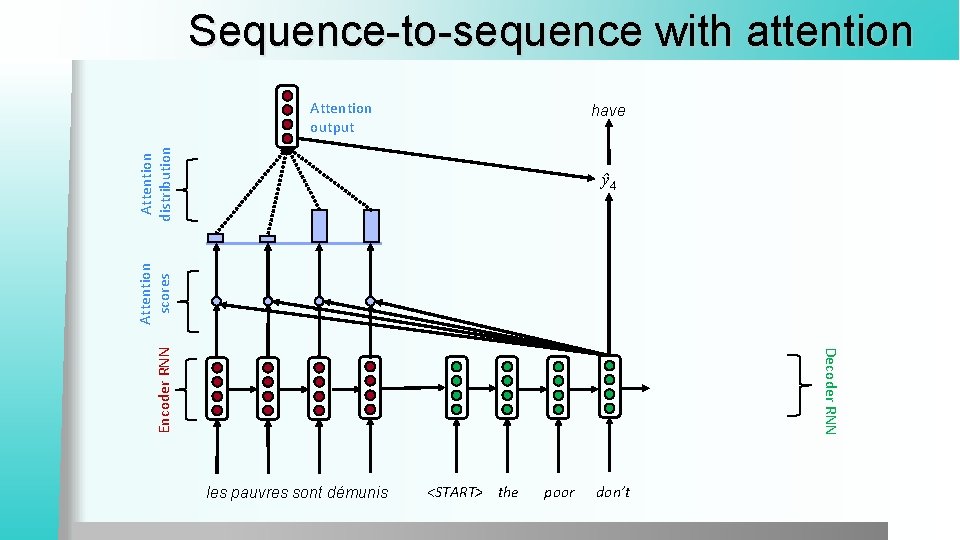

Sequence-to-sequence with attention, decoder step 4. Decoder inputs so far: <START> the poor don’t. The attention scores, attention distribution and attention output are computed again, giving $\hat{y}_4$ = have.

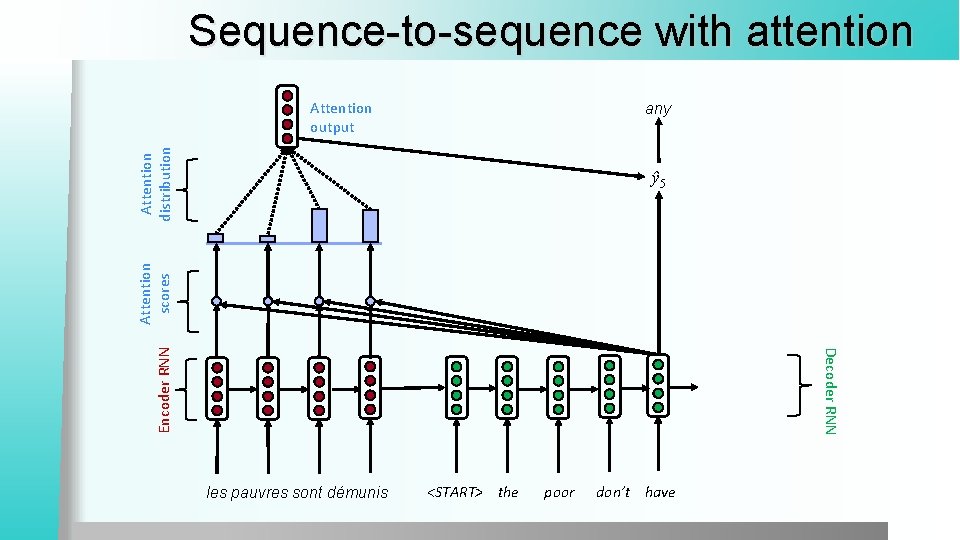

Sequence-to-sequence with attention, decoder step 5. Decoder inputs so far: <START> the poor don’t have. The attention scores, attention distribution and attention output are computed again, giving $\hat{y}_5$ = any.

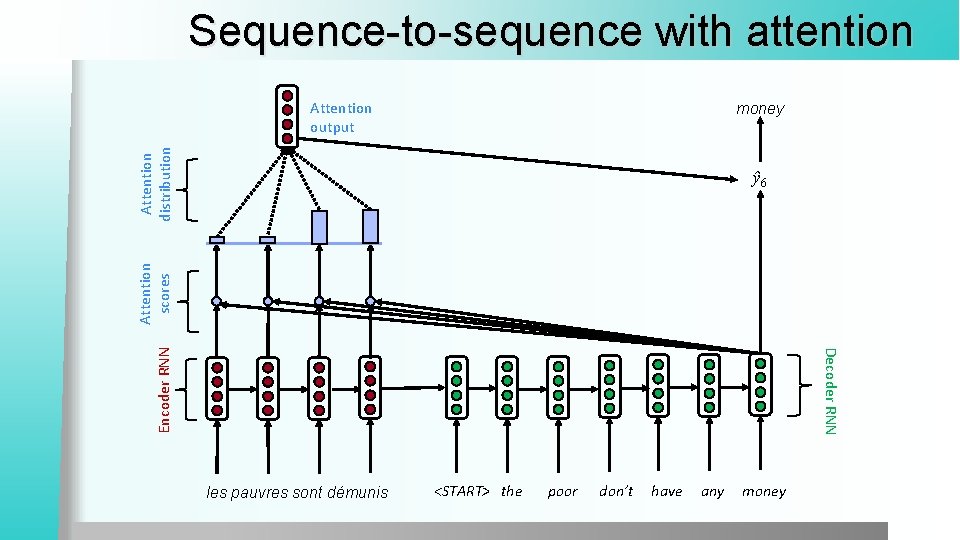

Sequence-to-sequence with attention, decoder step 6. Decoder inputs so far: <START> the poor don’t have any. The attention scores, attention distribution and attention output are computed again, giving $\hat{y}_6$ = money.

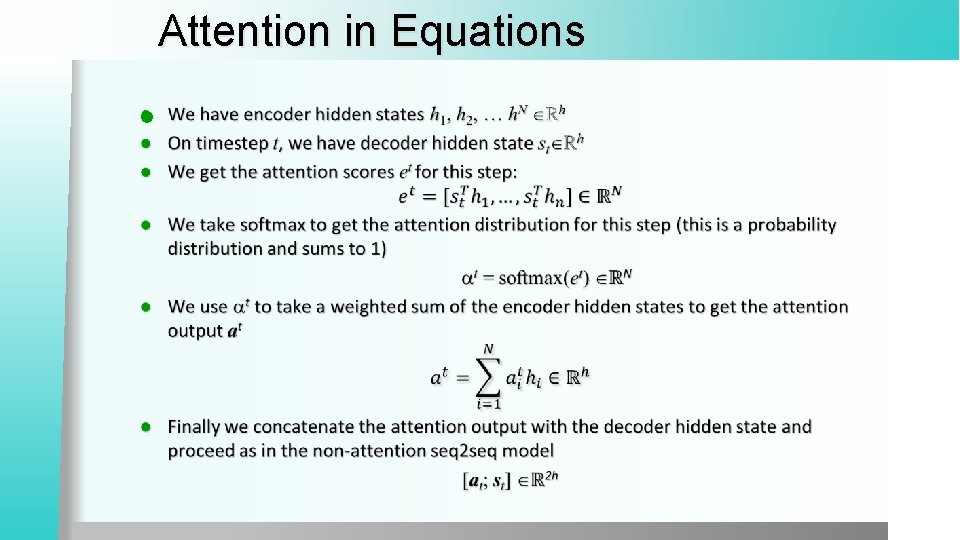

Attention in Equations

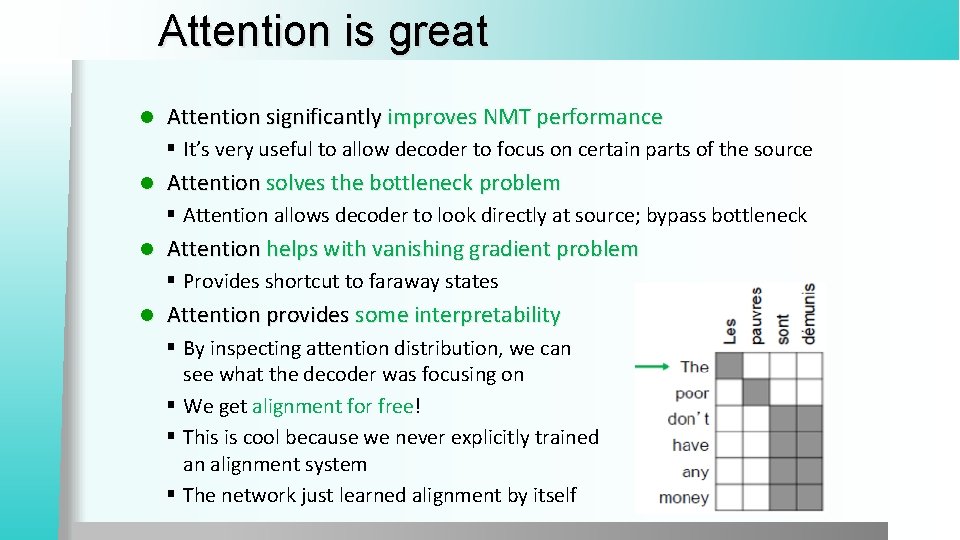
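The slide's equations survive only as an image, so the following is a reconstruction of standard dot-product attention that matches the steps shown in the diagrams, not a transcription. The notation is an assumption: $h_1, \dots, h_N$ are the encoder hidden states, $s_t$ is the decoder hidden state on step $t$, and both have dimension $h$.

$$e^t = [\,s_t^\top h_1, \dots, s_t^\top h_N\,] \in \mathbb{R}^N \qquad \text{(attention scores)}$$

$$\alpha^t = \operatorname{softmax}(e^t) \in \mathbb{R}^N \qquad \text{(attention distribution)}$$

$$a_t = \sum_{i=1}^{N} \alpha^t_i \, h_i \in \mathbb{R}^h \qquad \text{(attention output)}$$

$$[\,a_t ; s_t\,] \in \mathbb{R}^{2h} \qquad \text{(concatenation used to compute } \hat{y}_t\text{)}$$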

Attention is great

- Attention significantly improves NMT performance
  - It’s very useful to allow the decoder to focus on certain parts of the source
- Attention solves the bottleneck problem
  - Attention allows the decoder to look directly at the source and bypass the bottleneck
- Attention helps with the vanishing gradient problem
  - Provides a shortcut to faraway states
- Attention provides some interpretability
  - By inspecting the attention distribution, we can see what the decoder was focusing on
  - We get alignment for free!
  - This is cool because we never explicitly trained an alignment system
  - The network just learned alignment by itself

Sequence-to-sequence is versatile

- Sequence-to-sequence is useful for more than just MT
- Many NLP tasks can be phrased as sequence-to-sequence:
  - Summarization (long text → short text)
  - Dialogue (previous utterances → next utterance)
  - Parsing (input text → output parse as sequence)
  - Code generation (natural language → Python code)

Conclusions

- We learned the history of Machine Translation (MT)
- Since 2014, Neural MT rapidly replaced intricate Statistical MT
- Sequence-to-sequence is the architecture for NMT (uses 2 RNNs)
- Attention is a way to focus on particular parts of the input
  - Improves sequence-to-sequence a lot!
