Network-based Intrusion Detection and Prevention in Challenging and Emerging Environments: High-speed Data Center, Web 2.0, and Social Networks

Yan Chen
Lab for Internet and Security Technology (LIST)
Department of Electrical Engineering and Computer Science
Northwestern University

Chicago

Northwestern

Statistics
• Chicago: 3rd largest city in the US
• NU: ranked #12 by US News & World Report
– Established in 1851
– ~8000 undergrads
• McCormick School of Engineering: ranked #20
– 180 faculty members
– ~1400 undergrads and a similar number of grad students

Statistics of McCormick
• National academy memberships:
– National Academy of Engineering (NAE): 12 active, 7 emeriti
– National Academy of Sciences (NAS): 3 active
– Institute of Medicine (IoM): 1 emeritus
– American Academy of Arts and Sciences (AAAS): 5 active, 3 emeriti
– National Medal of Technology: 1 active

NetShield: Massive Semantics-Based Vulnerability Signature Matching for High-Speed Networks
Zhichun Li, Gao Xia, Hongyu Gao, Yi Tang, Yan Chen, Bin Liu, Junchen Jiang, and Yuezhou Lv
NEC Laboratories America, Inc.; Northwestern University; Tsinghua University

• Keeping the network safe is a grand challenge
• Worms and botnets are still popular
– e.g., the Conficker worm outbreak in 2008 infected 9~15 million hosts.

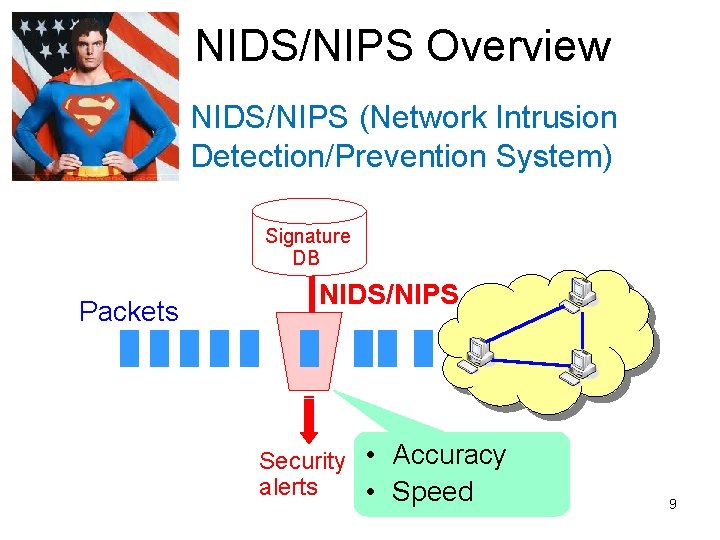

NIDS/NIPS Overview
NIDS/NIPS (Network Intrusion Detection/Prevention System)
[Diagram: packets and a signature DB feed the NIDS/NIPS, which outputs security alerts]
• Accuracy
• Speed

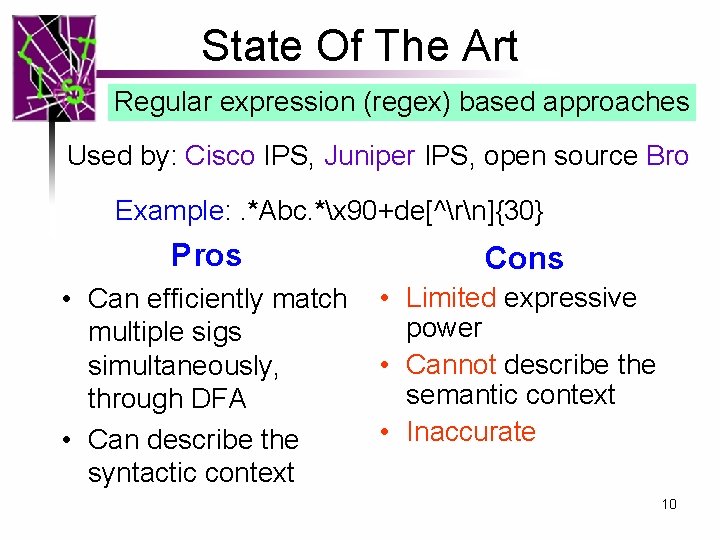

State Of The Art
Regular expression (regex) based approaches
Used by: Cisco IPS, Juniper IPS, open source Bro
Example: .*Abc.*\x90+de[^\r\n]{30}
Pros
• Can efficiently match multiple sigs simultaneously, through DFA
• Can describe the syntactic context
Cons
• Limited expressive power
• Cannot describe the semantic context
• Inaccurate
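As a rough illustration of what a purely syntactic signature does, the sketch below runs the slide's example pattern over raw payload bytes. It assumes the pattern reads `.*Abc.*\x90+de[^\r\n]{30}` (the backslashes appear to have been lost in extraction); the function name `regex_alert` is hypothetical and not part of any product named on the slide.

```python
import re

# Pattern as reconstructed from the slide (assumption: the extraction dropped
# the backslashes in \x90 and \r\n). It is applied to raw bytes, with no
# knowledge of protocol fields.
SIGNATURE = re.compile(rb".*Abc.*\x90+de[^\r\n]{30}")


def regex_alert(payload: bytes) -> bool:
    """Return True if the payload matches the byte-level signature."""
    return SIGNATURE.match(payload) is not None
```

The check only sees a byte string; it cannot tell which protocol field the bytes belong to, which is the "cannot describe the semantic context" limitation listed under Cons.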

![State Of The Art Vulnerability Signature [Wang et al. 04] Blaster Worm (WINRPC) Example](http://slidetodoc.com/presentation_image/65a72bb8ec96a4bef2d6a06fe3df441c/image-11.jpg)

State Of The Art
Vulnerability Signature [Wang et al. 04]
Vulnerability: design flaws enable bad inputs to lead the program to a bad state
[Diagram: a bad input moves the program from a good state to a bad state; the vulnerability signature describes that bad input]
Blaster Worm (WINRPC) Example:
BIND: rpc_vers==5 && rpc_vers_minor==1 && packed_drep==\x10\x00\x00 && context[0].abstract_syntax.uuid==UUID_RemoteActivation
BIND-ACK: rpc_vers==5 && rpc_vers_minor==1
CALL: rpc_vers==5 && rpc_vers_minor==1 && packed_drep==\x10\x00\x00 && opnum==0x00 && stub.RemoteActivationBody.actual_length>=40 && matchRE(stub.buffer, /^\x5c\x00/)
Pros
• Directly describe semantic context
• Very expressive, can express the vulnerability condition exactly
• Accurate
Cons
• Slow!
• Existing approaches all use sequential matching
• Require protocol parsing
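A minimal sketch of how the Blaster signature on this slide could be checked sequentially, one predicate per PDU. It assumes the PDUs have already been parsed into dictionaries; the key names (`type`, `uuid`, `actual_length`, `stub_buffer`) and the function `session_matches` are hypothetical, and `UUID_REMOTE_ACTIVATION` is a placeholder string rather than the real UUID bytes.

```python
import re

UUID_REMOTE_ACTIVATION = "UUID_RemoteActivation"  # placeholder, not the real UUID
DREP = b"\x10\x00\x00"  # byte string as it appears on the slide


def bind(p):
    return (p["rpc_vers"] == 5 and p["rpc_vers_minor"] == 1
            and p["packed_drep"] == DREP
            and p["uuid"] == UUID_REMOTE_ACTIVATION)


def bind_ack(p):
    return p["rpc_vers"] == 5 and p["rpc_vers_minor"] == 1


def call(p):
    return (p["rpc_vers"] == 5 and p["rpc_vers_minor"] == 1
            and p["packed_drep"] == DREP
            and p["opnum"] == 0x00
            and p["actual_length"] >= 40
            and re.match(rb"^\x5c\x00", p["stub_buffer"]) is not None)


# The signature is a sequence of (PDU type, predicate) steps.
SIGNATURE = [("BIND", bind), ("BIND-ACK", bind_ack), ("CALL", call)]


def session_matches(pdus):
    """Advance one step each time the next expected PDU satisfies its predicate."""
    step = 0
    for pdu in pdus:
        kind, predicate = SIGNATURE[step]
        if pdu["type"] == kind and predicate(pdu):
            step += 1
            if step == len(SIGNATURE):
                return True
    return False
```

Checking one signature at a time like this is the sequential matching the slide lists under Cons: the cost grows with the number of signatures, since each one re-examines the same PDUs.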

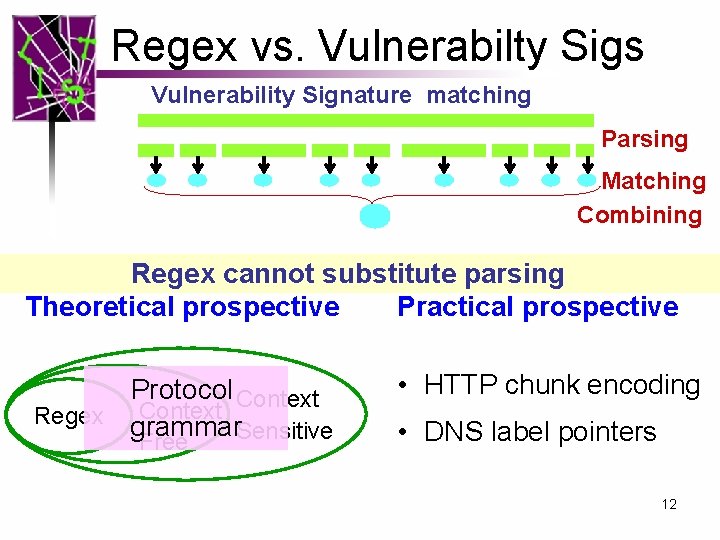

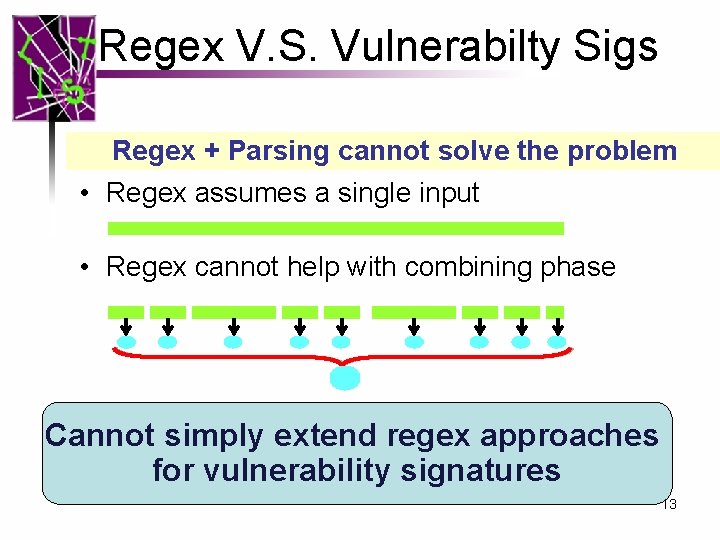

Regex vs. Vulnerability Sigs
Vulnerability signature matching: Parsing → Matching → Combining
Regex cannot substitute for parsing
• Theoretical perspective
– Protocol grammar: context sensitive
– Regex: context free
• Practical perspective
– HTTP chunk encoding
– DNS label pointers

Regex vs. Vulnerability Sigs
Regex + Parsing cannot solve the problem
• Regex assumes a single input
• Regex cannot help with the combining phase
Cannot simply extend regex approaches for vulnerability signatures

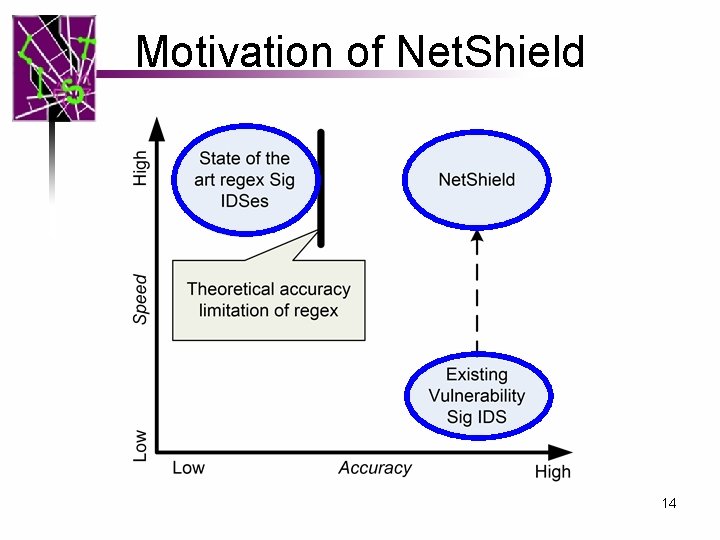

Motivation of NetShield

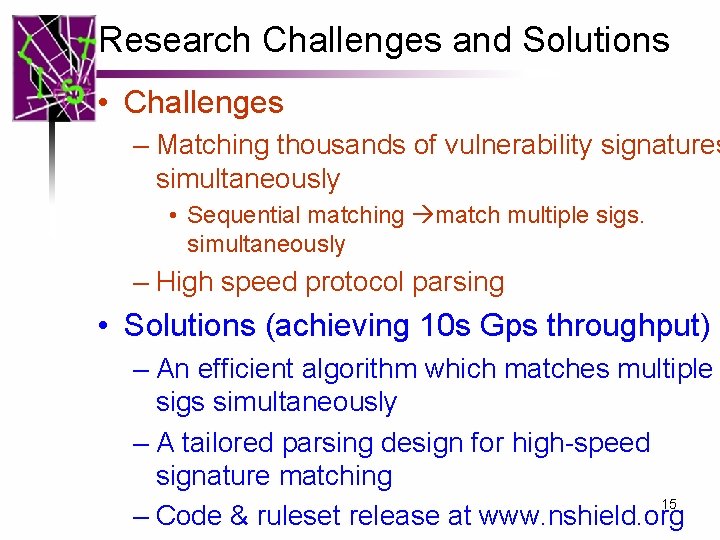

Research Challenges and Solutions
• Challenges
– Matching thousands of vulnerability signatures simultaneously
• Sequential matching → match multiple sigs. simultaneously
– High speed protocol parsing
• Solutions (achieving 10s of Gbps throughput)
– An efficient algorithm which matches multiple sigs simultaneously
– A tailored parsing design for high-speed signature matching
– Code & ruleset release at www.nshield.org

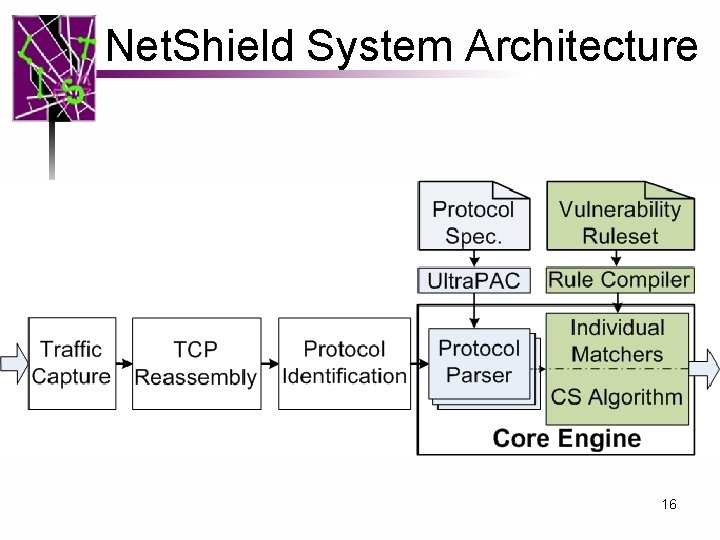

NetShield System Architecture

Outline
• Motivation
• High Speed Matching for Large Rulesets
• High Speed Parsing
• Evaluation
• Research Contributions

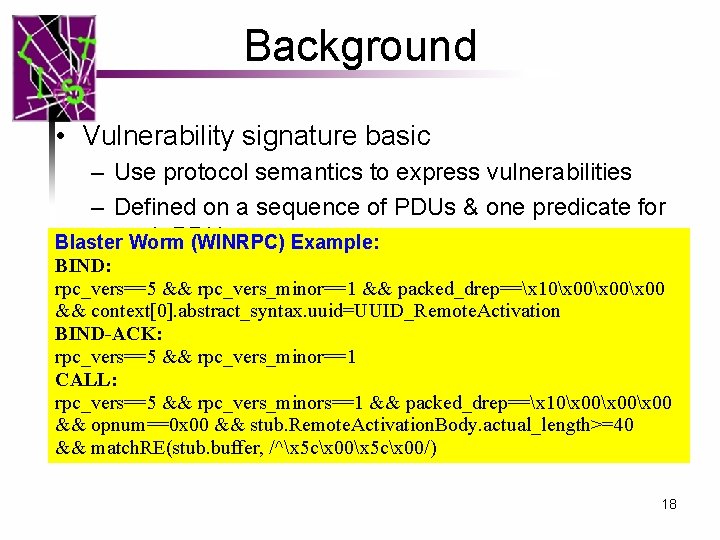

Background
• Vulnerability signature basic
– Use protocol semantics to express vulnerabilities
– Defined on a sequence of PDUs & one predicate for each PDU
– Example: ver==1 && method=="put" && len(buf)>300
• Data representations
– The basic data types used in predicates: numbers and strings
– Number operators: ==, >, <, >=, <=
– String operators: ==, match_re(., .), len(.)
Blaster Worm (WINRPC) Example:
BIND: rpc_vers==5 && rpc_vers_minor==1 && packed_drep==\x10\x00\x00 && context[0].abstract_syntax.uuid==UUID_RemoteActivation
BIND-ACK: rpc_vers==5 && rpc_vers_minor==1
CALL: rpc_vers==5 && rpc_vers_minor==1 && packed_drep==\x10\x00\x00 && opnum==0x00 && stub.RemoteActivationBody.actual_length>=40 && matchRE(stub.buffer, /^\x5c\x00/)

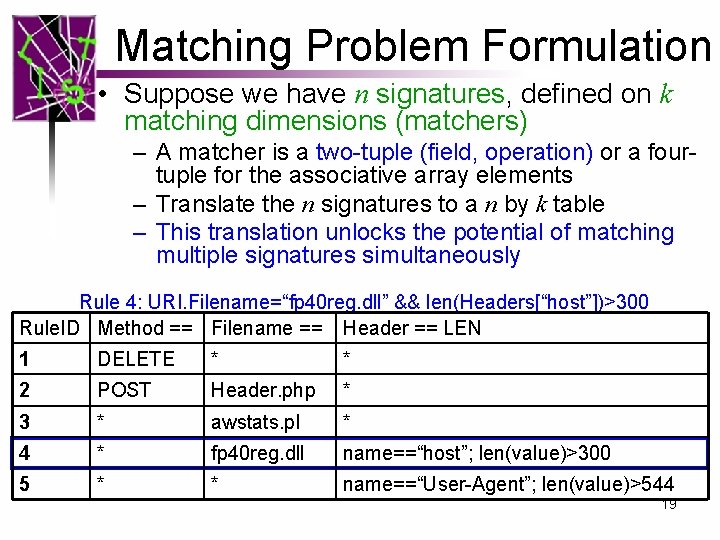

Matching Problem Formulation
• Suppose we have n signatures, defined on k matching dimensions (matchers)
– A matcher is a two-tuple (field, operation) or a four-tuple for the associative array elements
– Translate the n signatures to an n-by-k table
– This translation unlocks the potential of matching multiple signatures simultaneously

Rule 4: URI.Filename=="fp40reg.dll" && len(Headers["host"])>300

| RuleID | Method == | Filename == | Header == LEN |
|---|---|---|---|
| 1 | DELETE | * | * |
| 2 | POST | Header.php | * |
| 3 | * | awstats.pl | * |
| 4 | * | fp40reg.dll | name=="host"; len(value)>300 |
| 5 | * | * | name=="User-Agent"; len(value)>544 |
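One way to picture the n-by-k translation is sketched below, using the five rules from the table. The dictionary layout, the matcher names (`method`, `filename`, `header_len`), and the helpers `to_table` and `column_index` are assumptions made for illustration, not the NetShield data structures.

```python
from collections import defaultdict

# Columns of the table (hypothetical matcher names).
MATCHERS = ["method", "filename", "header_len"]

# One entry per rule; a missing matcher means "don't care" (*).
RULES = {
    1: {"method": "DELETE"},
    2: {"method": "POST", "filename": "Header.php"},
    3: {"filename": "awstats.pl"},
    4: {"filename": "fp40reg.dll", "header_len": ("host", 300)},
    5: {"header_len": ("User-Agent", 544)},
}


def to_table(rules, matchers):
    """Build the n-by-k table; None stands for a don't-care cell."""
    return {rid: [conds.get(m) for m in matchers] for rid, conds in rules.items()}


def column_index(rules, matcher):
    """Index one column: required value -> set of rule IDs requiring it."""
    index = defaultdict(set)
    for rid, conds in rules.items():
        if matcher in conds:
            index[conds[matcher]].add(rid)
    return dict(index)
```

With a per-column index, a single lookup on a parsed field value returns every rule that the value satisfies for that matcher, which is what makes matching many signatures at once possible.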

Signature Matching
• Basic scheme for single PDU case
• Refinement
– Allow negative conditions
– Handle array cases
– Handle associative array cases
– Handle mutually exclusive cases
• Extend to Multiple PDU Matching (MPM)
– Allow checkpoints.

Difficulty of the Single PDU Matching
Bad News
– A well-known computational geometry problem can be reduced to this problem.
– That problem has a bad worst-case bound: O((log N)^(K-1)) time or O(N^K) space (worst-case ruleset)
Good News
– Measurement study on Snort and Cisco rulesets
– The real-world rulesets are good: the matchers are selective.
– With our design: O(K)

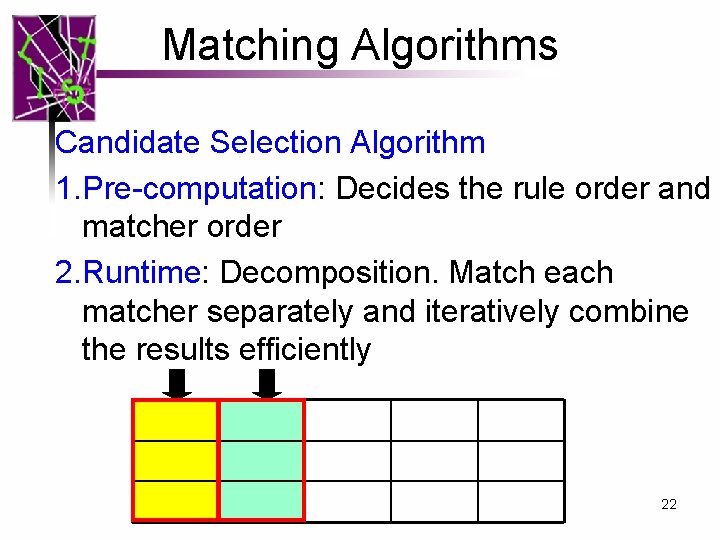

Matching Algorithms
Candidate Selection Algorithm
1. Pre-computation: decides the rule order and matcher order
2. Runtime: decomposition. Match each matcher separately and iteratively combine the results efficiently

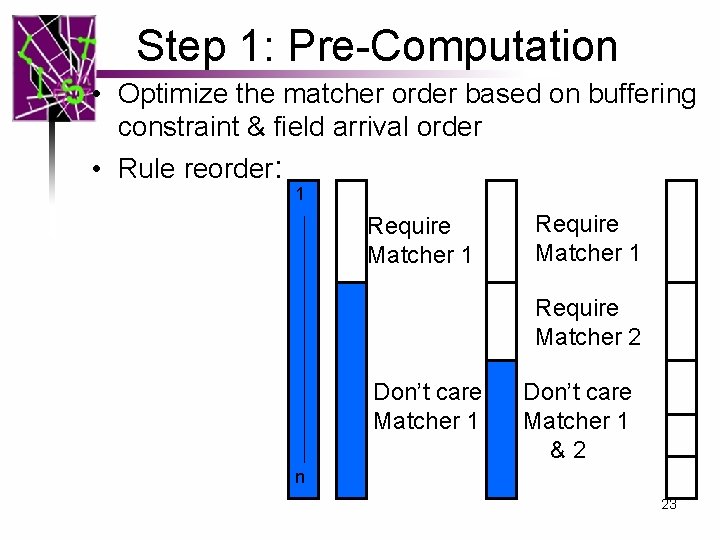

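Step 1: Pre-Computation
• Optimize the matcher order based on buffering constraint & field arrival order
• Rule reorder:
[Figure: rules 1 to n grouped by which matchers they require or do not care about; recoverable labels: "Require Matcher", "Don't care Matcher 1 & 2"]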

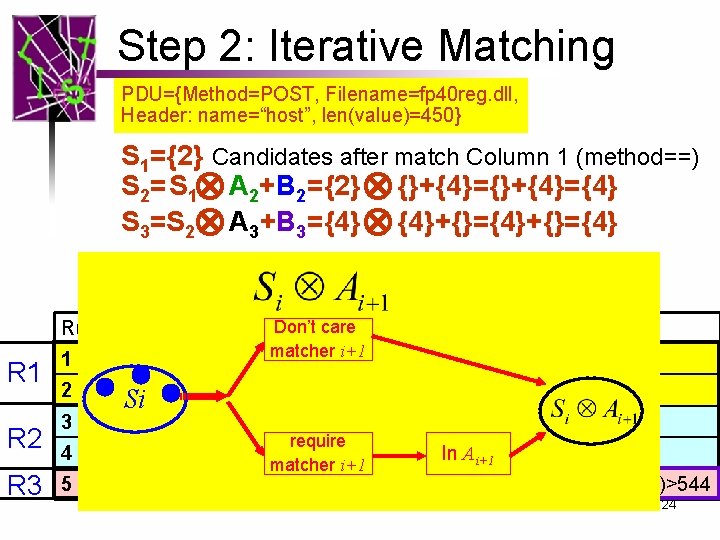

Step 2: Iterative Matching
PDU = {Method=POST, Filename=fp40reg.dll, Header: name="host", len(value)=450}
S1 = {2} (candidates after matching column 1, Method ==)
S2 = S1 ∩ A2 + B2 = {2} ∩ {} + {4} = {4}
S3 = S2 ∩ A3 + B3 = {4} ∩ {4} + {} = {4}
Each candidate in Si either does not care about matcher i+1, or requires matcher i+1 and must then be in Ai+1.

| RuleID | Method == | Filename == | Header == LEN |
|---|---|---|---|
| 1 | DELETE | * | * |
| 2 | POST | Header.php | * |
| 3 | * | awstats.pl | * |
| 4 | * | fp40reg.dll | name=="host"; len(value)>300 |
| 5 | * | * | name=="User-Agent"; len(value)>544 |
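The iteration on this slide can be sketched as follows. This is a simplified illustration under stated assumptions, not the NetShield implementation: the rule layout, the matcher names, and the helpers `satisfied` and `candidate_selection` are hypothetical; A is taken to be the matched rules that also require an earlier matcher, and B the matched rules whose first required matcher is the current one. For brevity the matched set is found by scanning all rules, where a real system would use a per-matcher lookup structure.

```python
MATCHERS = ["method", "filename", "header_len"]

# A missing matcher means "don't care" (*).
RULES = {
    1: {"method": "DELETE"},
    2: {"method": "POST", "filename": "Header.php"},
    3: {"filename": "awstats.pl"},
    4: {"filename": "fp40reg.dll", "header_len": ("host", 300)},
    5: {"header_len": ("User-Agent", 544)},
}


def satisfied(matcher, cond, pdu):
    """Evaluate one table cell against the parsed PDU."""
    if matcher == "header_len":
        name, min_len = cond
        value = pdu["headers"].get(name)
        return value is not None and len(value) > min_len
    return pdu.get(matcher) == cond


def candidate_selection(rules, matchers, pdu):
    """Return the candidate set after each matcher (S1, S2, ...)."""
    candidates, history = set(), []
    for i, m in enumerate(matchers):
        matched = {rid for rid, conds in rules.items()
                   if m in conds and satisfied(m, conds[m], pdu)}
        # B: matched rules that do not care about any earlier matcher.
        b = {rid for rid in matched
             if all(prev not in rules[rid] for prev in matchers[:i])}
        a = matched - b  # A: matched rules that also require an earlier matcher
        # Keep a candidate if it does not care about m, or if it is in A.
        survivors = {rid for rid in candidates
                     if m not in rules[rid] or rid in a}
        candidates = survivors | b
        history.append(set(candidates))
    return history
```

For the PDU on the slide (Method=POST, Filename=fp40reg.dll, a 450-byte "host" header), this sketch produces the same sequence of candidate sets as the slide: {2}, then {4}, then {4}.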

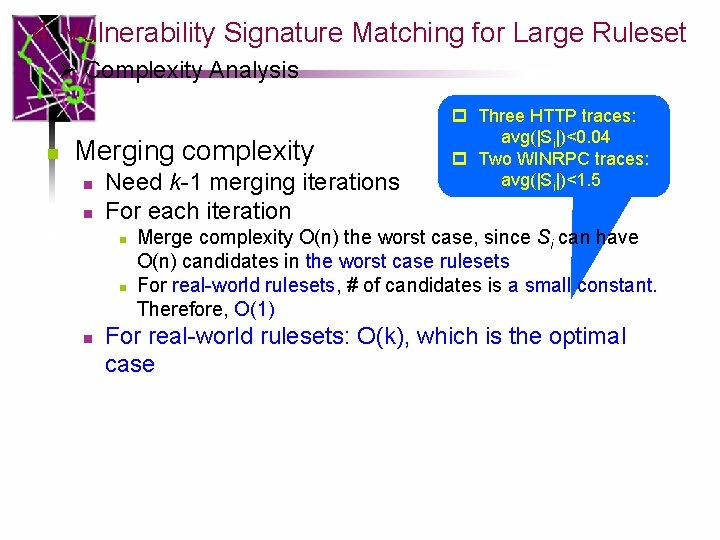

Complexity Analysis
• Merging complexity
– Need k-1 merging iterations
– For each iteration
• Merge complexity is O(n) in the worst case, since Si can have O(n) candidates for worst-case rulesets
• For real-world rulesets, the # of candidates is a small constant. Therefore, O(1)
– For real-world rulesets: O(k), which is the optimal we can get
Measurements:
– Three HTTP traces: avg(|Si|)<0.04
– Two WINRPC traces: avg(|Si|)<1.5

Outline
• Motivation
• High Speed Matching for Large Rulesets
• High Speed Parsing
• Evaluation
• Research Contribution

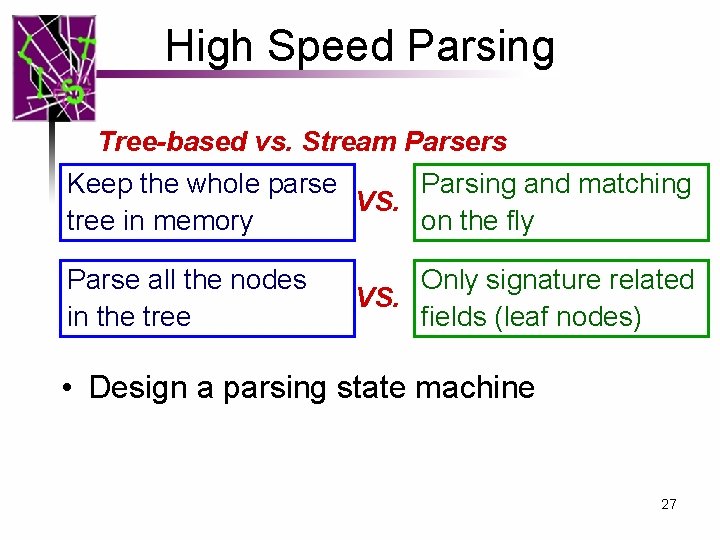

High Speed Parsing
Tree-based vs. Stream Parsers
• Keep the whole parse tree in memory VS. parsing and matching on the fly
• Parse all the nodes in the tree VS. only signature-related fields (leaf nodes)
• Design a parsing state machine

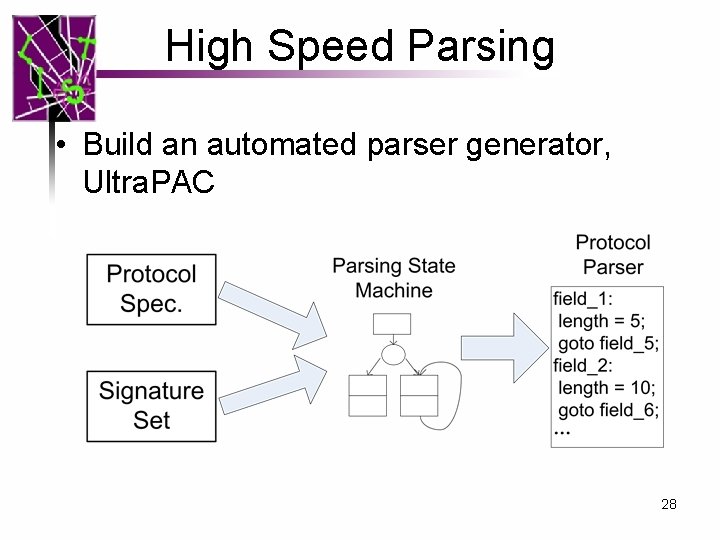

High Speed Parsing
• Build an automated parser generator, UltraPAC

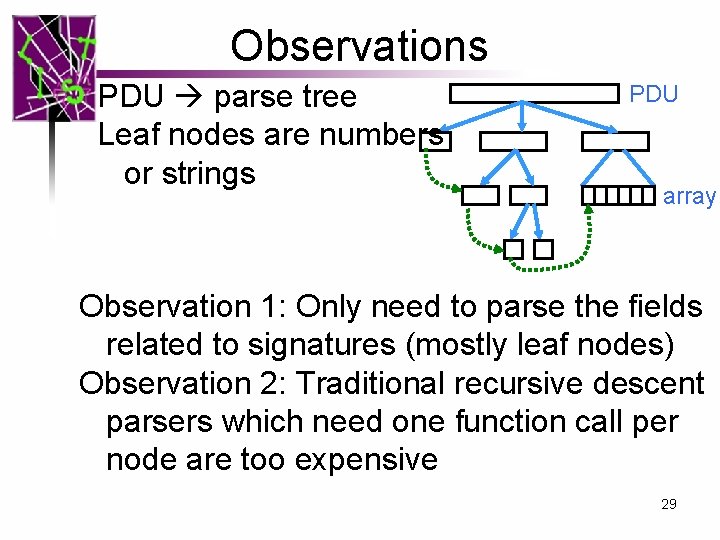

Observations
[Figure: PDU parse tree with a PDU array; leaf nodes are numbers or strings]
Observation 1: Only need to parse the fields related to signatures (mostly leaf nodes)
Observation 2: Traditional recursive descent parsers, which need one function call per node, are too expensive

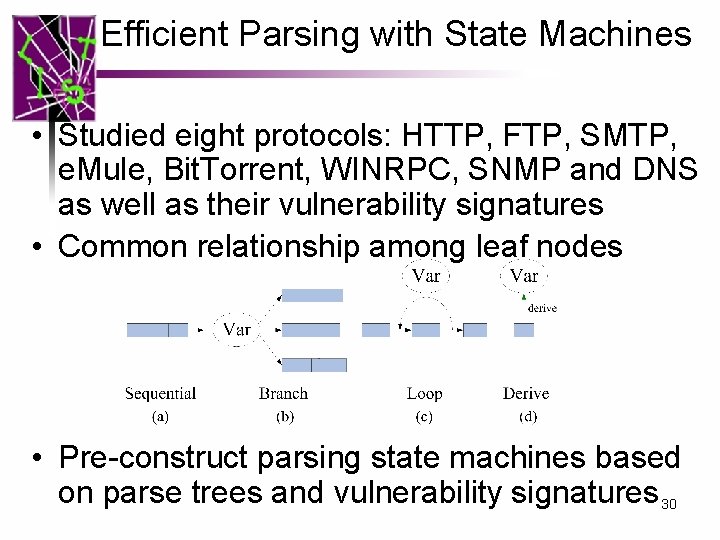

Efficient Parsing with State Machines
• Studied eight protocols: HTTP, FTP, SMTP, eMule, BitTorrent, WINRPC, SNMP and DNS, as well as their vulnerability signatures
• Common relationship among leaf nodes
• Pre-construct parsing state machines based on parse trees and vulnerability signatures
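To make the idea of a stream parser concrete, here is a hand-written toy state machine for an HTTP request line. It is only a sketch: the class `RequestLineParser` and its states are hypothetical and are not output of the parser generator described in the talk. It consumes bytes as they arrive, keeps only the two fields a signature might need (method and URI filename), and never builds a parse tree.

```python
class RequestLineParser:
    """Byte-at-a-time parser that extracts only the method and URI filename."""

    METHOD, URI, DONE = range(3)

    def __init__(self):
        self.state = self.METHOD
        self.method = bytearray()
        self.filename = bytearray()

    def feed(self, chunk: bytes) -> None:
        """Consume one chunk of the stream; may be called repeatedly."""
        for byte in chunk:
            ch = bytes([byte])
            if self.state == self.METHOD:
                if ch == b" ":
                    self.state = self.URI
                else:
                    self.method += ch
            elif self.state == self.URI:
                if ch == b"/":
                    self.filename.clear()  # keep only the last path segment
                elif ch in (b" ", b"?"):
                    self.state = self.DONE  # rest of the line is not needed
                else:
                    self.filename += ch
            # In DONE, remaining bytes are skipped without being stored.
```

Because the state lives in a few small fields, the parser can be suspended between packets and resumed on the next one, and memory per connection stays small regardless of how long the request is.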

Outline
• Motivation
• High Speed Matching for Large Rulesets
• High Speed Parsing
• Evaluation
• Research Contributions

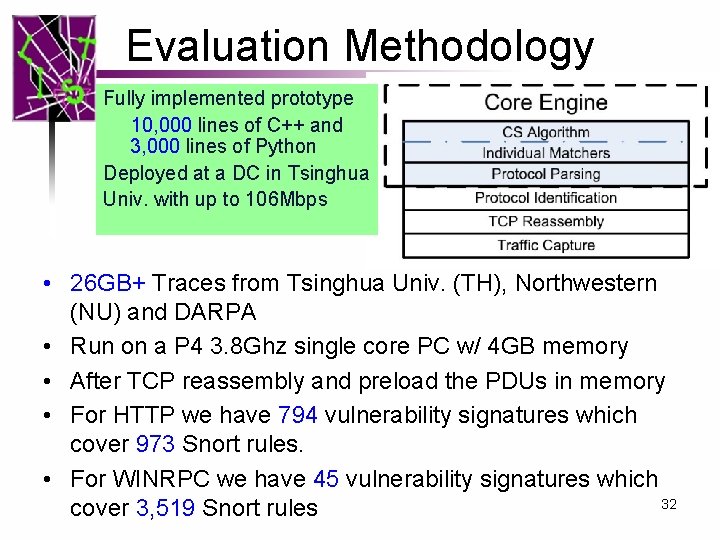

Evaluation Methodology
• Fully implemented prototype
– 10,000 lines of C++ and 3,000 lines of Python
– Deployed at a DC in Tsinghua Univ. with up to 106 Mbps
• 26 GB+ traces from Tsinghua Univ. (TH), Northwestern (NU) and DARPA
• Run on a P4 3.8 GHz single-core PC w/ 4 GB memory
• After TCP reassembly, with the PDUs preloaded in memory
• For HTTP we have 794 vulnerability signatures which cover 973 Snort rules.
• For WINRPC we have 45 vulnerability signatures which cover 3,519 Snort rules

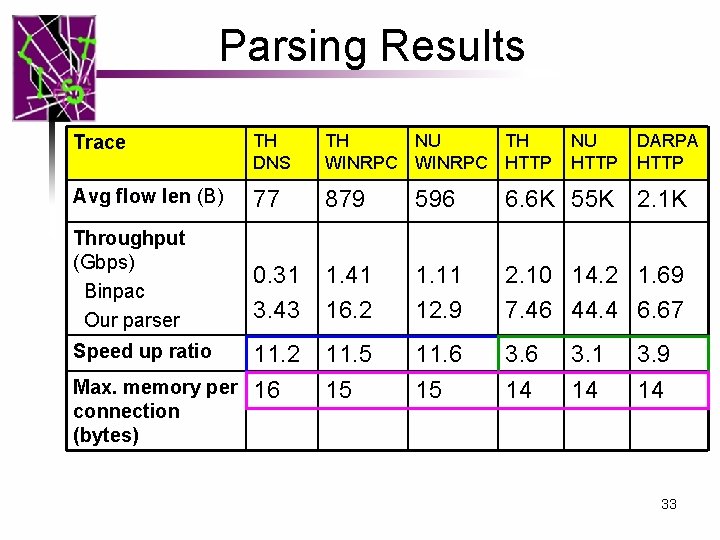

Parsing Results

| Trace | TH DNS | TH WINRPC | NU WINRPC | TH HTTP | NU HTTP | DARPA HTTP |
|---|---|---|---|---|---|---|
| Avg flow len (B) | 77 | 879 | 596 | 6.6K | 55K | 2.1K |
| Throughput (Gbps), Binpac | 0.31 | 1.41 | 1.11 | 2.10 | 14.2 | 1.69 |
| Throughput (Gbps), our parser | 3.43 | 16.2 | 12.9 | 7.46 | 44.4 | 6.67 |
| Speed up ratio | 11.2 | 11.5 | 11.6 | 3.6 | 3.1 | 3.9 |
| Max. memory per connection (bytes) | 16 | 15 | 15 | 14 | 14 | 14 |

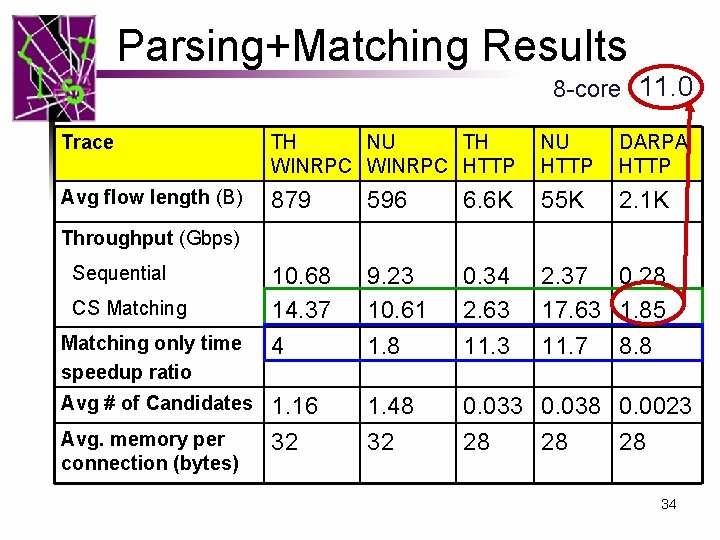

Parsing+Matching Results

| Trace | TH WINRPC | NU WINRPC | TH HTTP | NU HTTP | DARPA HTTP |
|---|---|---|---|---|---|
| Avg flow length (B) | 879 | 596 | 6.6K | 55K | 2.1K |
| Throughput (Gbps), sequential | 10.68 | 9.23 | 0.34 | 2.37 | 0.28 |
| Throughput (Gbps), CS matching | 14.37 | 10.61 | 2.63 | 17.63 | 1.85 |
| Matching only time speedup ratio | 4 | 1.8 | 11.3 | 11.7 | 8.8 |
| Avg # of candidates | 1.16 | 1.48 | 0.033 | 0.038 | 0.0023 |
| Avg. memory per connection (bytes) | 32 | 32 | 28 | 28 | 28 |

Callout on the slide: 8-core, 11.0

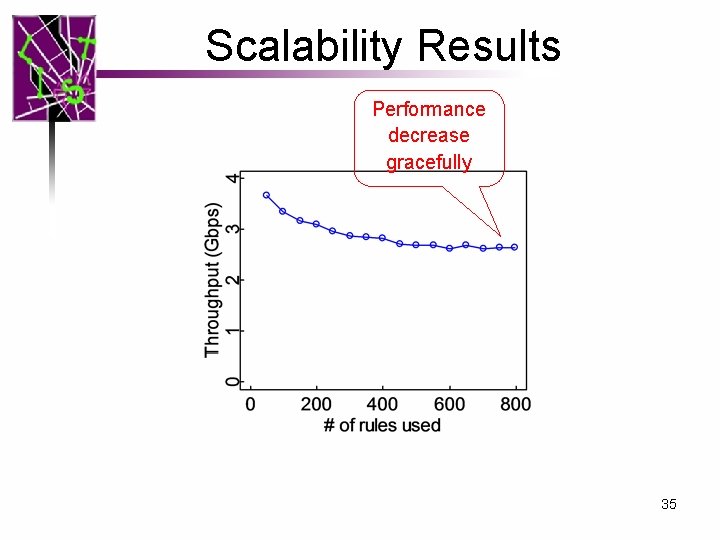

Scalability Results
Performance decreases gracefully

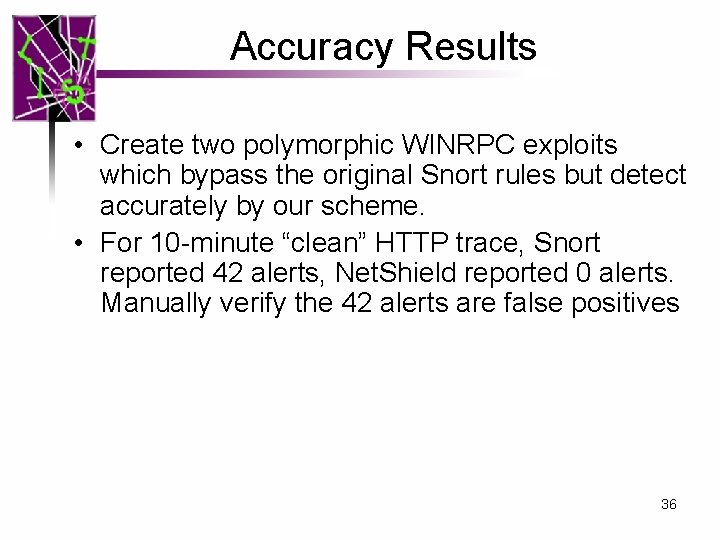

Accuracy Results
• Created two polymorphic WINRPC exploits which bypass the original Snort rules but are detected accurately by our scheme.
• For a 10-minute "clean" HTTP trace, Snort reported 42 alerts and NetShield reported 0 alerts. We manually verified that the 42 alerts are false positives.

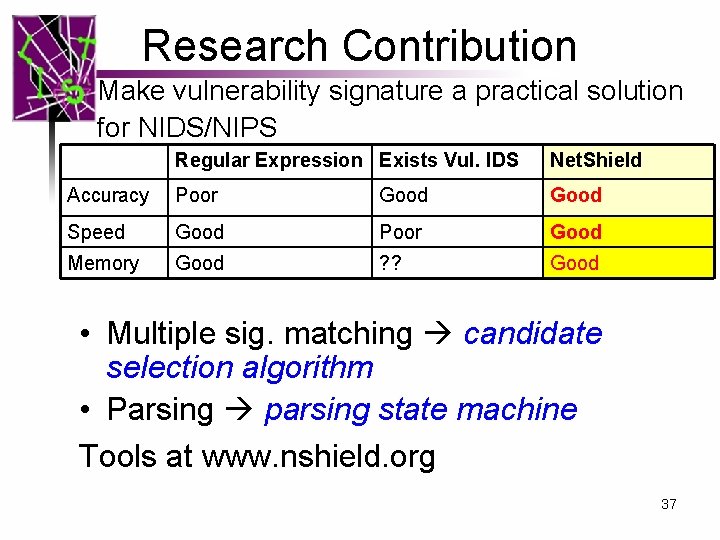

Research Contribution
Make vulnerability signature a practical solution for NIDS/NIPS

| | Regular Expression | Existing Vul. IDS | NetShield |
|---|---|---|---|
| Accuracy | Poor | Good | Good |
| Speed | Good | Poor | Good |
| Memory | Good | ?? | Good |

• Multiple sig. matching → candidate selection algorithm
• Parsing → parsing state machine
Tools at www.nshield.org

Q&A

4. Vulnerability Signature Matching for Large Ruleset
Complexity Analysis
• Merging complexity
– Need k-1 merging iterations
– For each iteration
• Merge complexity is O(n) in the worst case, since Si can have O(n) candidates for worst-case rulesets
• For real-world rulesets, the # of candidates is a small constant. Therefore, O(1)
– For real-world rulesets: O(k), which is the optimal case
Measurements:
– Three HTTP traces: avg(|Si|)<0.04
– Two WINRPC traces: avg(|Si|)<1.5

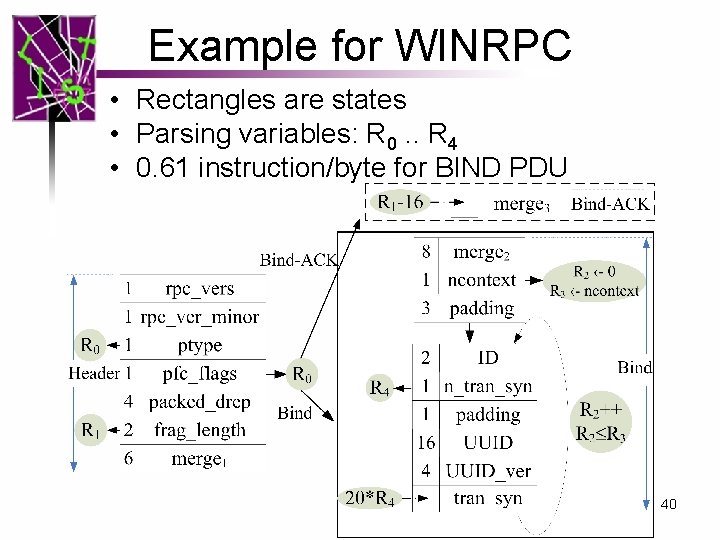

Example for WINRPC
• Rectangles are states
• Parsing variables: R0..R4
• 0.61 instruction/byte for BIND PDU

Parser generator
• We reuse the front-end of BinPAC (a Yacc-like tool for protocol parsing)
• Redesign the backend to generate a parser based on the parsing state machine
