
Network-aware OS
ESCC, Miami, February 5, 2003
Tom Dunigan thd@ornl.gov
Matt Mathis mathis@psc.edu
Brian Tierney bltierney@lbl.gov

Roadmap
• Motivation
• Net100 project overview
  – Web100
  – network probes & sensors
  – protocol analysis and tuning
• Year 1 results
  – a TCP tuning daemon
  – tuning experiments
• Year 2
  – ongoing research, Web100 update (Mathis)

www.net100.org
DOE-funded project (Office of Science): $1M/yr, 3 yrs beginning 9/01. LBL, ORNL, PSC, NCAR.

Net100 project objectives (network-aware operating systems):
• measure, understand, and improve end-to-end network/application performance
• tune network protocols and applications (grid and bulk transfer)
• first-year emphasis: TCP bulk transfer over high delay/bandwidth nets

U.S. Department of Energy, Oak Ridge National Laboratory, UT-BATTELLE

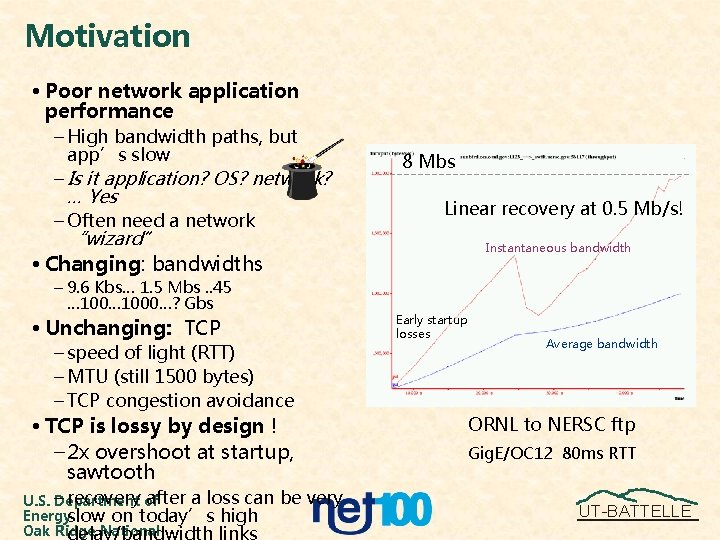

Motivation
• Poor network application performance
  – high-bandwidth paths, but apps are slow
  – Is it the application? OS? network? … Yes
  – often need a network "wizard"
• Changing: bandwidths
  – 9.6 Kbs … 1.5 Mbs … 45 … 1000 … ? Gbs
• Unchanging: TCP
  – speed of light (RTT)
  – MTU (still 1500 bytes)
  – TCP congestion avoidance
• TCP is lossy by design!
  – 2x overshoot at startup, sawtooth
  – recovery after a loss can be very slow on today's high delay/bandwidth nets

Figure (ORNL to NERSC ftp, GigE/OC12, 80 ms RTT): instantaneous and average bandwidth. Annotations: early startup losses; 8 Mbs; linear recovery at 0.5 Mb/s!

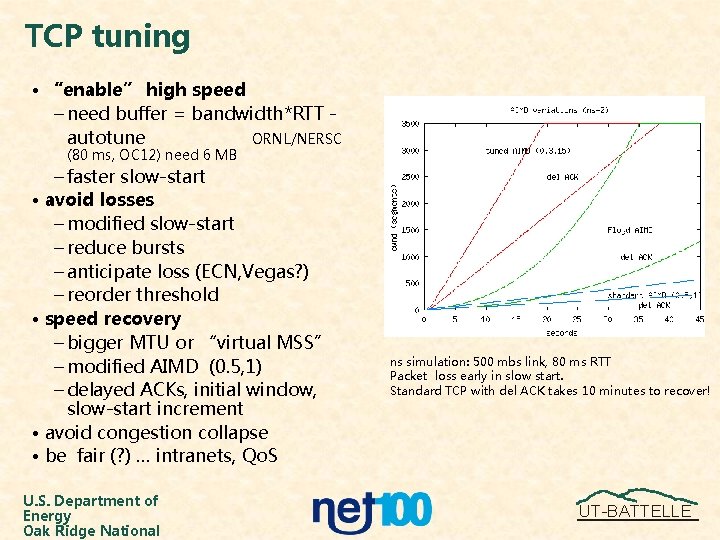

TCP tuning
• "enable" high speed
  – need buffer = bandwidth*RTT; autotune
    ORNL/NERSC (80 ms, OC12) needs 6 MB
  – faster slow-start
• avoid losses
  – modified slow-start
  – reduce bursts
  – anticipate loss (ECN, Vegas?)
  – reorder threshold
• speed recovery
  – bigger MTU or "virtual MSS"
  – modified AIMD (0.5, 1)
  – delayed ACKs, initial window, slow-start increment
• avoid congestion collapse
• be fair (?) … intranets, QoS

Figure (ns simulation: 500 Mbs link, 80 ms RTT): packet loss early in slow-start. Standard TCP with delayed ACKs takes 10 minutes to recover!
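The buffer rule above (buffer = bandwidth*RTT) is simple arithmetic, and a short sketch makes the 6 MB figure concrete. The function names are illustrative, and the 622.08 Mb/s OC-12 line rate is an assumption (the usable payload rate is somewhat lower).

```python
def bdp_bytes(bandwidth_bps, rtt_s):
    """Bandwidth-delay product in bytes: the buffer needed to keep the pipe full."""
    return bandwidth_bps * rtt_s / 8.0


def window_limited_bps(buffer_bytes, rtt_s):
    """Best-case throughput when one buffer's worth of data is sent per RTT."""
    return buffer_bytes * 8.0 / rtt_s


OC12_BPS = 622.08e6  # assumed OC-12 line rate, not taken from the slides
RTT_S = 0.080        # ORNL to NERSC round-trip time from the slide

needed = bdp_bytes(OC12_BPS, RTT_S)
print(f"buffer needed: {needed / 1e6:.2f} MB")  # buffer needed: 6.22 MB

# The inverse view: a small buffer caps throughput regardless of link speed.
print(f"100000-byte buffer: {window_limited_bps(100000, RTT_S) / 1e6:.1f} Mb/s")
```

Read the other way round, the same formula shows why an untuned application stays slow on a fast path: the window, not the link, sets the ceiling.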

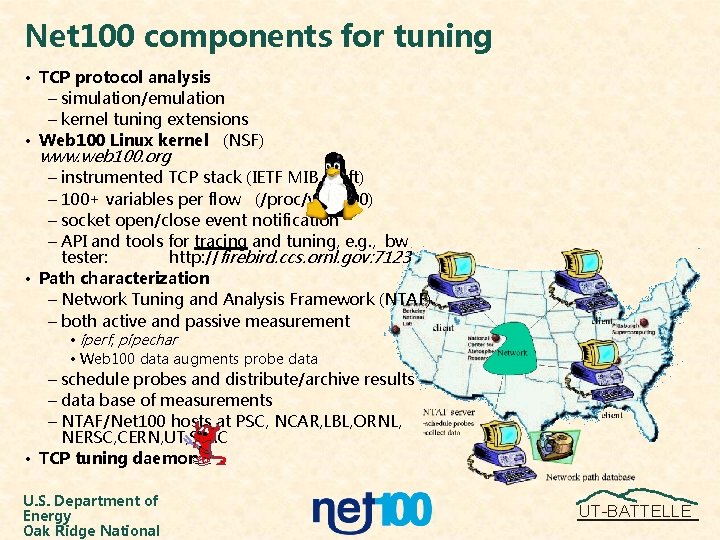

Net100 components for tuning
• TCP protocol analysis
  – simulation/emulation
  – kernel tuning extensions
• Web100 Linux kernel (NSF) www.web100.org
  – instrumented TCP stack (IETF MIB draft)
  – 100+ variables per flow (/proc/web100)
  – socket open/close event notification
  – API and tools for tracing and tuning, e.g., bw tester: http://firebird.ccs.ornl.gov:7123
• Path characterization
  – Network Tuning and Analysis Framework (NTAF)
  – both active and passive measurement
    • iperf, pipechar
    • Web100 data augments probe data
  – schedule probes and distribute/archive results
  – database of measurements
  – NTAF/Net100 hosts at PSC, NCAR, LBL, ORNL, NERSC, CERN, UT, SLAC
• TCP tuning daemon

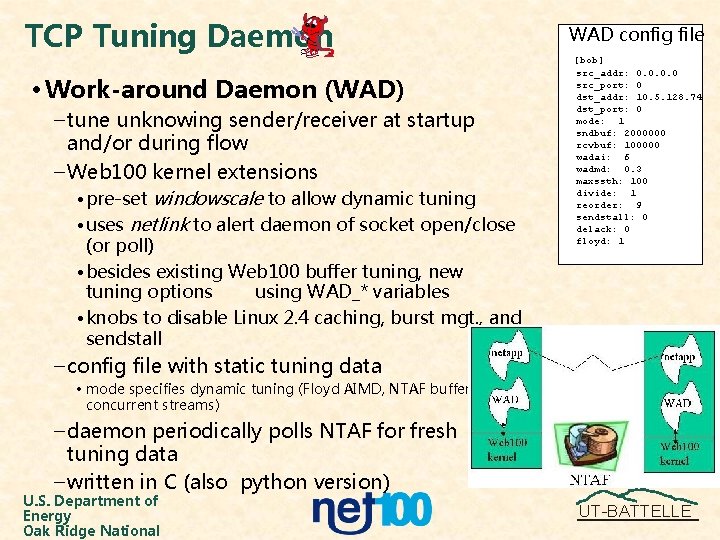

TCP Tuning Daemon
• Work-around Daemon (WAD)
  – tune unknowing sender/receiver at startup and/or during flow
  – Web100 kernel extensions
    • pre-set windowscale to allow dynamic tuning
    • uses netlink to alert daemon of socket open/close (or poll)
    • besides existing Web100 buffer tuning, new tuning options using WAD_* variables
    • knobs to disable Linux 2.4 caching, burst management, and sendstall
  – config file with static tuning data
    • mode specifies dynamic tuning (Floyd AIMD, NTAF buffer size, concurrent streams)
  – daemon periodically polls NTAF for fresh tuning data
  – written in C (also Python version)

WAD config file:
    [bob]
    src_addr: 0.0
    src_port: 0
    dst_addr: 10.5.128.74
    dst_port: 0
    mode: 1
    sndbuf: 2000000
    rcvbuf: 100000
    wadai: 6
    wadmd: 0.3
    maxssth: 100
    divide: 1
    reorder: 9
    sendstall: 0
    delack: 0
    floyd: 1

Experimental results (year 1)
• Evaluating the tuning daemon in the wild
  – emphasis: bulk transfers over high delay/bandwidth nets (Internet2, ESnet)
  – tests over: 10GigE, OC48, OC12, OC3, ATM/VBR, GigE, FDDI, 100/10T, cable, ISDN, wireless (802.11b), dialup
  – tests over NistNet 100T testbed
• Various TCP tuning options
  – buffer tuning
  – AIMD mods (including Floyd, both in-kernel and in WAD)
  – slow-start mods
  – parallel streams vs. single tuned
• Results are anecdotal
  – more systematic testing is ongoing
  – Your mileage may vary …

Network professionals on a closed course. Do not attempt this at home.

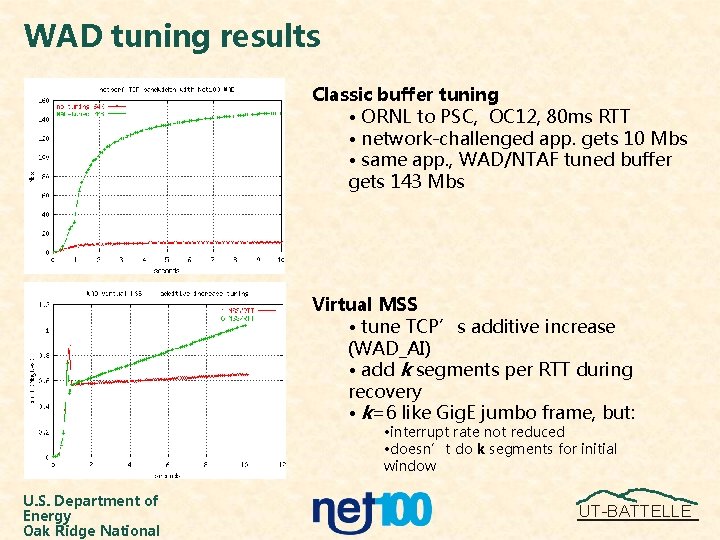

WAD tuning results

Classic buffer tuning
• ORNL to PSC, OC12, 80 ms RTT
• network-challenged app gets 10 Mbs
• same app with WAD/NTAF-tuned buffer gets 143 Mbs

Virtual MSS
• tune TCP's additive increase (WAD_AI)
• add k segments per RTT during recovery
• k=6 is like a GigE jumbo frame, but:
  – interrupt rate not reduced
  – doesn't do k segments for initial window

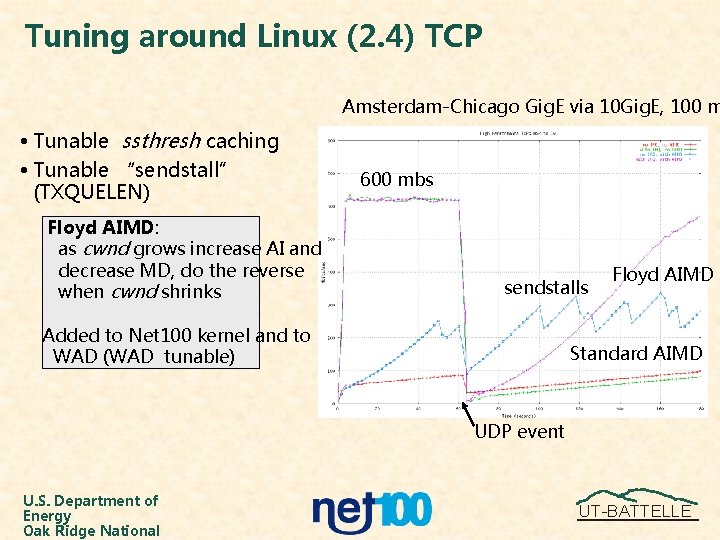

Tuning around Linux (2.4) TCP
• Tunable ssthresh caching
• Tunable "sendstall" (TXQUELEN)

Floyd AIMD: as cwnd grows, increase AI and decrease MD; do the reverse when cwnd shrinks. Added to Net100 kernel and to WAD (WAD tunable).

Figure (Amsterdam-Chicago GigE via 10GigE, 100 m): Floyd AIMD vs. standard AIMD. Annotations: 600 Mbs; sendstalls; UDP event.

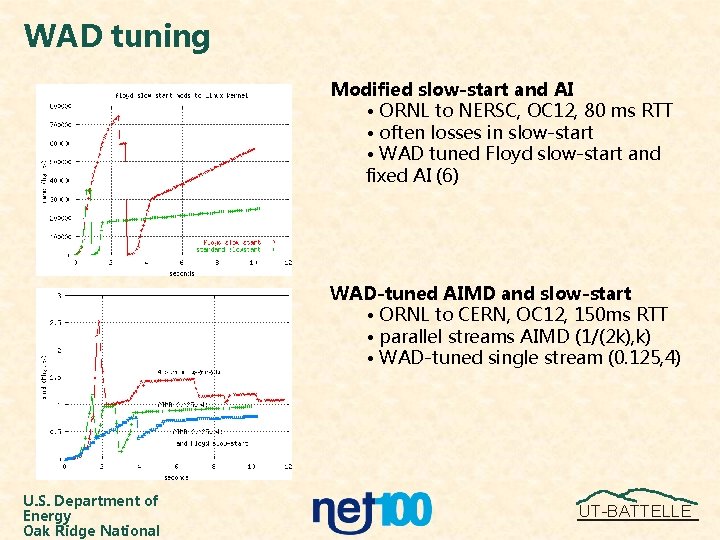

WAD tuning

Modified slow-start and AI
• ORNL to NERSC, OC12, 80 ms RTT
• often losses in slow-start
• WAD-tuned Floyd slow-start and fixed AI (6)

WAD-tuned AIMD and slow-start
• ORNL to CERN, OC12, 150 ms RTT
• parallel streams AIMD (1/(2k), k)
• WAD-tuned single stream (0.125, 4)
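The (1/(2k), k) pair above can be read as the aggregate behavior of k standard TCP streams, under the simplifying assumption that a loss hits only one stream: that stream halves its share (a 1/(2k) cut of the total) while all k streams together add k segments per RTT. A small sketch with illustrative function names shows how the single-stream setting (0.125, 4) falls out for k = 4, and what it does to recovery time.

```python
def equivalent_aimd(k):
    """(MD, AI) for one flow that mimics k parallel standard-TCP streams.

    Assumes a loss affects only one of the k streams.
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    return 1.0 / (2 * k), float(k)


def recovery_rtts(window_segments, md, ai):
    """RTTs of additive increase needed to regain the pre-loss window.

    After a loss the window drops by md * window_segments and then grows
    by ai segments per RTT.
    """
    return window_segments * md / ai


md, ai = equivalent_aimd(4)
print(md, ai)                          # 0.125 4.0
print(recovery_rtts(1000, 0.5, 1.0))   # 500.0  (standard AIMD)
print(recovery_rtts(1000, md, ai))     # 31.25  (4-stream equivalent)
```

The recovery time shrinks by a factor of k squared, which is one way to see why parallel streams and aggressive single-stream tuning both raise fairness questions.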

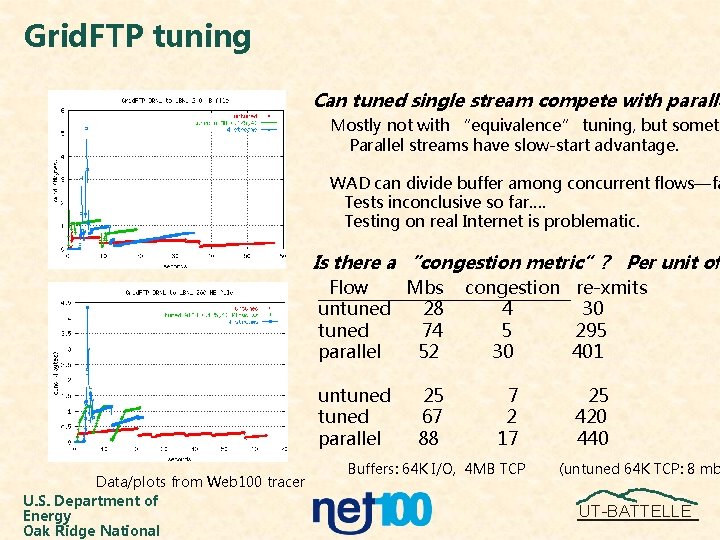

GridFTP tuning
Can tuned single stream compete with paralle…
Mostly not with "equivalence" tuning, but someti…
Parallel streams have slow-start advantage.
WAD can divide buffer among concurrent flows: fa…
Tests inconclusive so far…
Testing on real Internet is problematic.
Is there a "congestion metric"? Per unit of…

Table (flattened; column headings: Flow, Mbs, congestion, re-xmits; flow labels: untuned, tuned, parallel): untuned 28, tuned 74, parallel 52; remaining values in source order: 25 67 88; 4 30 5; 295 30 401; 7 2 17; 25 420 440.

Buffers: 64K I/O, 4 MB TCP (untuned 64K TCP: 8 mb…
Data/plots from Web100 tracer

Ongoing/planned Net100 research (year 2)
– analyze effectiveness/fairness of current tuning options
  • simulation
  • emulation
  • on the net (systematic tests)
– NTAF probes: characterizing a path to tune a flow
  • router data (passive)
  • monitoring applications with Web100
  • latest probe tools
– additional tuning algorithms
  • Vegas
  • slow-start increment, reorder resilience, delayed ACKs
  • non-TCP (SABUL, FOBS, TSUNAMI, ?)
  • identify non-congestive loss, ECN?
– parallel/multipath selection/tuning
– WAD-to-WAD tuning
– jumbo frames experiments … the quest for bigger and bigger MTUs
– more user-friendly, usable accelerants
– port to Cray X1 network front-end
– port to other OSs

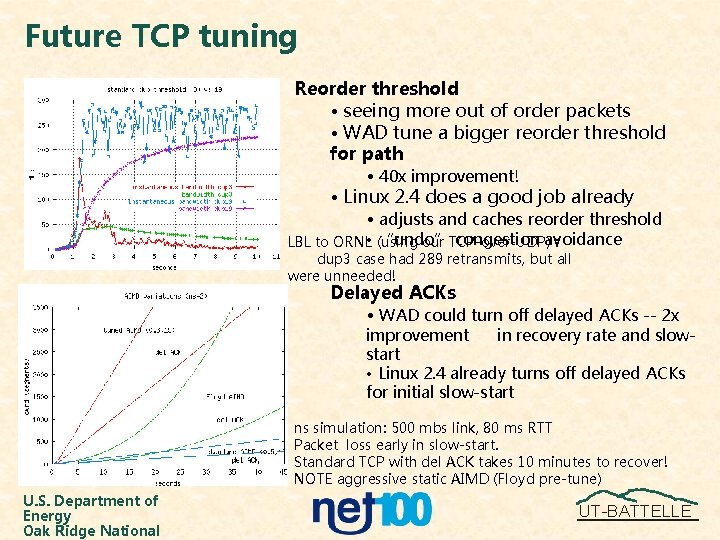

Future TCP tuning

Reorder threshold
• seeing more out-of-order packets
• WAD tunes a bigger reorder threshold for path
• LBL to ORNL (using our TCP-over-UDP): dup 3 case had 289 retransmits, but all were unneeded!
• 40x improvement!
• Linux 2.4 does a good job already
  – adjusts and caches reorder threshold
  – "undo" congestion avoidance

Delayed ACKs
• WAD could turn off delayed ACKs: 2x improvement in recovery rate and slow-start
• Linux 2.4 already turns off delayed ACKs for initial slow-start

Figure (ns simulation: 500 Mbs link, 80 ms RTT): packet loss early in slow-start. Standard TCP with delayed ACKs takes 10 minutes to recover! NOTE: aggressive static AIMD (Floyd pre-tune).
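The "10 minutes to recover" figure and the 2x gain from disabling delayed ACKs can be checked with a back-of-the-envelope model. This is a rough sketch, not the ns simulation: it assumes a 1460-byte MSS, a window that restarts near zero after the early loss, and delayed ACKs halving the additive increase to about half a segment per RTT.

```python
def linear_recovery_seconds(link_bps, rtt_s, mss_bytes=1460,
                            segs_per_rtt=1.0, start_segs=0.0):
    """Seconds of linear window growth needed to fill the path.

    link_bps * rtt_s / (8 * mss_bytes) is the window (in segments) that
    fills the pipe; the window grows by segs_per_rtt each round trip.
    """
    target_segs = link_bps * rtt_s / (8.0 * mss_bytes)
    rtts = max(target_segs - start_segs, 0.0) / segs_per_rtt
    return rtts * rtt_s


LINK_BPS = 500e6  # from the slide
RTT_S = 0.080     # from the slide

with_delack = linear_recovery_seconds(LINK_BPS, RTT_S, segs_per_rtt=0.5)
without_delack = linear_recovery_seconds(LINK_BPS, RTT_S, segs_per_rtt=1.0)
print(f"delayed ACKs on:  {with_delack / 60:.1f} min")
print(f"delayed ACKs off: {without_delack / 60:.1f} min")
```

Under these assumptions the delayed-ACK case comes out at roughly nine minutes, the same order as the simulation result, and turning delayed ACKs off halves it.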

Summary
www.net100.org
• Novel approaches
  – non-invasive dynamic tuning of legacy applications
  – using TCP to tune TCP (Web100)
  – tuning on a per-flow/path basis
• Effective evaluation framework
  – protocol analysis and tuning + net/app/OS debugging
  – out-of-kernel tuning
• Beneficial interactions
  – TCP protocols (Floyd, Wu Feng (DRS), Web100, parallel/non-TCP)
  – path characterization research (SciDAC, CAIDA, PingER, pathrate, SCNM)
  – scientific application and data grids (SciDAC, CERN)
• Performance improvements
