Network Power Scheduling for Wireless Sensor Networks
Barbara Hohlt
Intel Communications Technology Lab, Hillsboro, OR
August 9, 2005

Outline
- Introduction
- Radio Scheduling
- FPS Overview
- Implementation
- Micro Benchmarks
- Application Evaluation

Wireless Sensor Networks
- Networks of small, low-cost, low-power devices
- Sensing/actuation, processing, wireless communication
- Dispersed near phenomena of interest
- Self-organizing, wireless multi-hop networks
- Unattended for long periods of time

Berkeley Motes
- Mica2Dot
- Mica2

Example Applications
- Pursuer-Evader
- Environmental Monitoring
- Home Automation
- Security
- Indoor Building Monitoring
- Inventory Tracking

[Figure: mote layout]

Power Consumption
- Power consumption limits the utility of sensor networks
  - Must survive on own energy stores for months or years
  - 2 AA batteries or 1 lithium coin cell
- Replacing batteries is a laborious task and not possible in some environments
- Conserving energy is critical for prolonging the lifetime of these networks

Where the power goes
- Main energy draws
  - Central processing unit
  - Sensors/actuators
  - Radio
- Radio dominates the cost of power consumption

Radio Power Consumption
- Primary cost is idle listening
  - Time spent listening while waiting to receive packets
  - Nodes sleep most of the time to conserve energy
- Secondary cost is overhearing
  - Nodes overhear their neighbors' communication
  - Broadcast medium
  - Dense networks
- Must turn the radio off
  - Need a schedule

Flexible Power Scheduling
- Flexible Power Scheduling
  - Reduces radio power consumption
  - Supports fluctuating demand (multiple queries, aggregates)
  - Adaptive and decentralized schedules
- Improves power savings over approaches used in existing deployments
  - 4.3x over TinyDB duty cycling
  - 2–4.6x over GDI low-power listening
- High end-to-end packet reception
  - Reduces contention
  - Increases end-to-end fairness and yield
- Optimized per-hop latency

FPS Two-Level Architecture

[Diagram: Network Power Schedule layered on top of CSMA MAC]

- Coarse-grain scheduling
  - At the network layer
  - Planned radio on-off times
- Fine-grain CSMA MAC underneath
- Reduces contention and increases end-to-end fairness
  - Distributes traffic
  - Decouples events from correlated traffic
  - Reserves bandwidth from source to sink
- Does not require perfect schedules or precise time synchronization

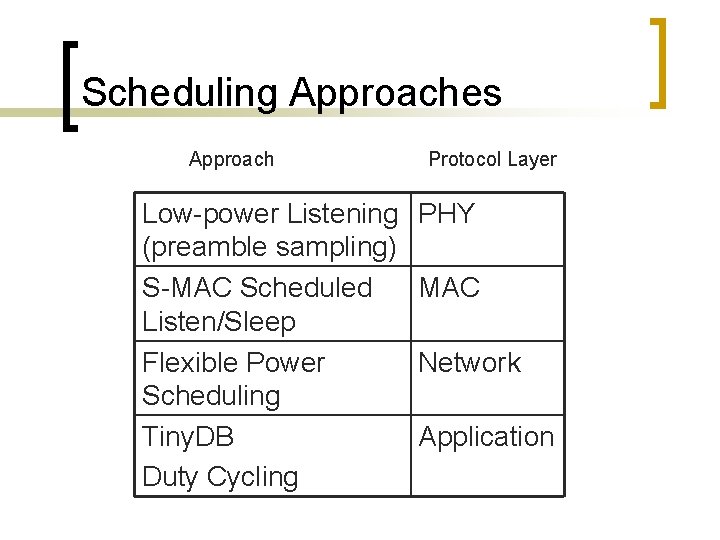

Scheduling Approaches

| Approach | Protocol Layer |
|---|---|
| Low-power Listening (preamble sampling) | PHY |
| S-MAC Scheduled Listen/Sleep | MAC |
| Flexible Power Scheduling | Network |
| TinyDB Duty Cycling | Application |

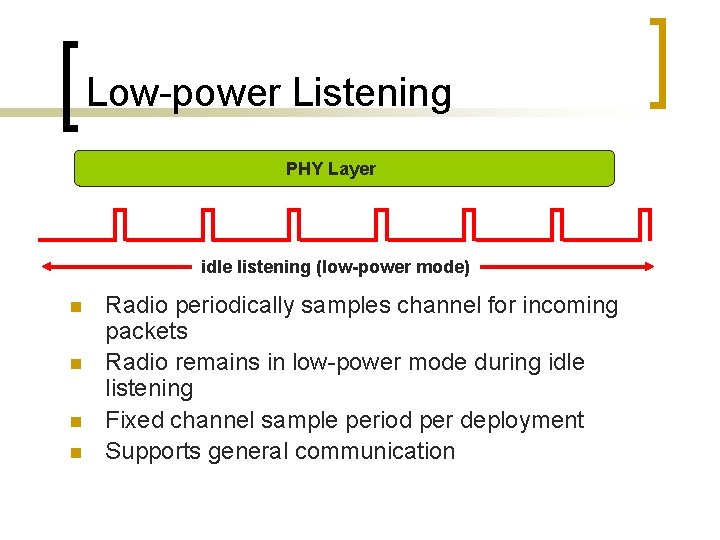

Low-power Listening (PHY Layer)

[Diagram: idle listening (low-power mode)]

- Radio periodically samples the channel for incoming packets
- Radio remains in low-power mode during idle listening
- Fixed channel sample period per deployment
- Supports general communication

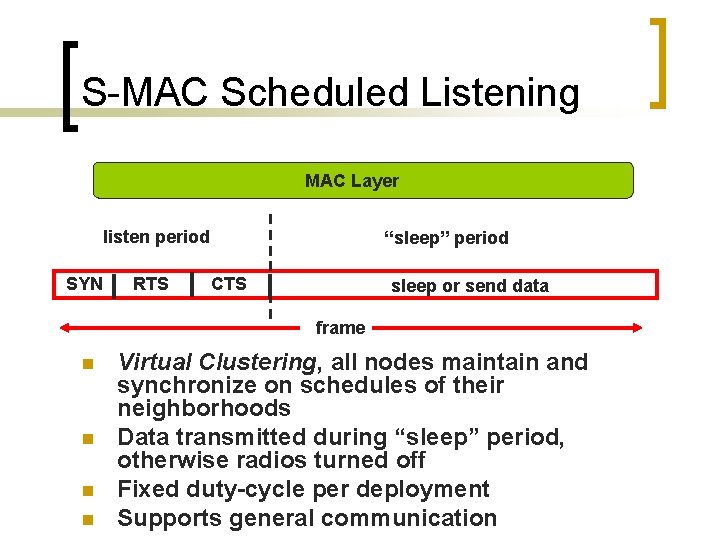

S-MAC Scheduled Listening (MAC Layer)

[Diagram: listen period (SYN, RTS, CTS) followed by a "sleep" period in which a node sleeps or sends a data frame]

- Virtual clustering: all nodes maintain and synchronize on the schedules of their neighborhoods
- Data is transmitted during the "sleep" period; otherwise radios are turned off
- Fixed duty cycle per deployment
- Supports general communication

TinyDB Duty Cycling (Application Layer)

[Diagram: waking period within each epoch]

- All nodes sleep and wake at the same time every epoch
- All transmissions occur during the waking period
- Fixed duty cycle per deployment
- Supports a tree topology

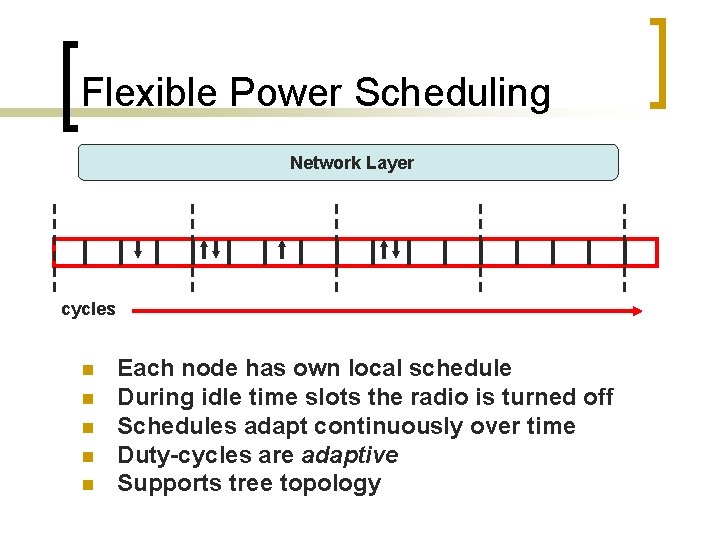

Flexible Power Scheduling (Network Layer)

[Diagram: cycles]

- Each node has its own local schedule
- During idle time slots the radio is turned off
- Schedules adapt continuously over time
- Duty cycles are adaptive
- Supports a tree topology

Assumptions
- Sense-to-gateway applications
- Multihop network
- Majority of traffic is periodic
- Nodes are sleeping most of the time
- Available bandwidth >> traffic demand
- Routing component

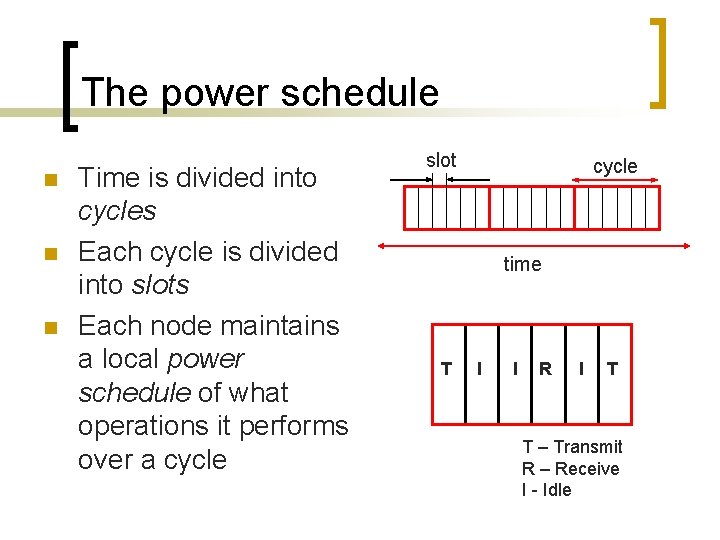

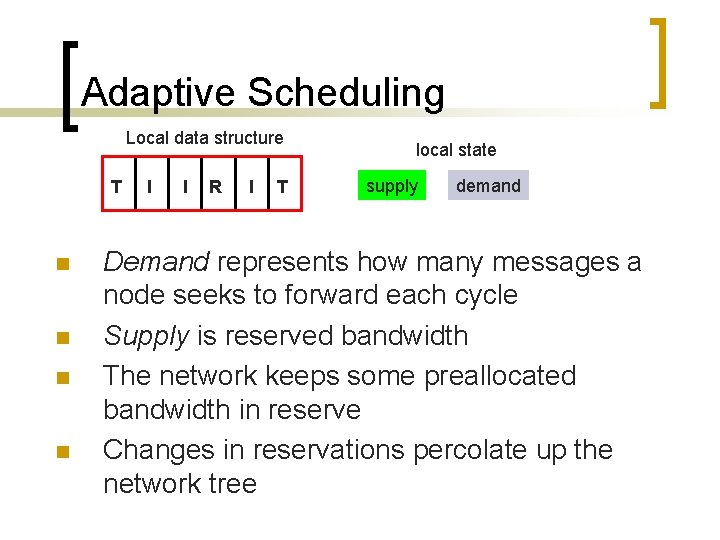

The power schedule
- Time is divided into cycles
- Each cycle is divided into slots
- Each node maintains a local power schedule of the operations it performs over a cycle

[Diagram: one cycle of six slots over time: T I I R I T]

T = Transmit, R = Receive, I = Idle
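
The local power schedule above can be sketched as a small data structure. This is an illustrative sketch only, not the TinyOS implementation; the names `Slot`, `parse_schedule`, `radio_on`, and `duty_cycle` are hypothetical, and the example cycle is the six-slot schedule from the slide.

```python
from enum import Enum


class Slot(Enum):
    """Operation a node performs in one time slot (labels from the slide)."""
    TRANSMIT = "T"
    RECEIVE = "R"
    IDLE = "I"


def parse_schedule(text):
    """Turn a string such as 'T I I R I T' into a list of Slot values."""
    return [Slot(symbol) for symbol in text.split()]


def radio_on(schedule, slot_index):
    """Assumption: the radio is on only in Transmit or Receive slots.

    The index wraps around because the schedule repeats every cycle.
    """
    return schedule[slot_index % len(schedule)] is not Slot.IDLE


def duty_cycle(schedule):
    """Fraction of slots per cycle in which the radio is on."""
    active = sum(1 for slot in schedule if slot is not Slot.IDLE)
    return active / len(schedule)


schedule = parse_schedule("T I I R I T")
print([radio_on(schedule, i) for i in range(len(schedule))])
print(duty_cycle(schedule))
```

With this representation, a change in a reservation is a change to one entry of the list, and the radio on/off decision is a lookup by slot number.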

Scheduling flows
- Schedule entire flows (not packets)
- Make reservations based on traffic demand
- Bandwidth is reserved from source to sink
  - (and partial flows from source to destination)
- Reservations remain in effect indefinitely and can adapt over time

Adaptive Scheduling

[Diagram: local data structure with the schedule (T I I R I T) and local state (supply, demand)]

- Demand represents how many messages a node seeks to forward each cycle
- Supply is reserved bandwidth
- The network keeps some preallocated bandwidth in reserve
- Changes in reservations percolate up the network tree

Supply and Demand

1. If supply < demand
   1. Request reservation
   2. If Conf -> Increment supply
2. If supply >= demand
   1. Offer reservation
   2. If Req -> Increment demand

| Cycle | Supply | Demand |
|---|---|---|
| 1 | 0 | 1 |
| 2 | 1 | 1 |
| 3 | 1 | 2 |

For the purposes of this example, one unit of demand counts as one message per cycle.
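
The two rules above can be traced in a few lines of code. This is a minimal sketch of the decision rule only; the function names and the string labels `"request"`, `"offer"`, `"CONF"`, and `"REQ"` are illustrative choices, and the replies fed in are picked to match the three cycles shown on the slide.

```python
def next_action(supply, demand):
    """Rule from the slide: request when short of supply, otherwise offer."""
    return "request" if supply < demand else "offer"


def apply_reply(supply, demand, reply):
    """Update (supply, demand) when the reply matching the current action arrives."""
    if supply < demand and reply == "CONF":
        return supply + 1, demand      # our request was confirmed
    if supply >= demand and reply == "REQ":
        return supply, demand + 1      # a neighbor accepted our offer
    return supply, demand              # no matching reply this cycle


# Start with one message to send and no reserved bandwidth.
state = (0, 1)
for cycle, reply in enumerate(["CONF", "REQ"], start=1):
    print(cycle, state, next_action(*state))
    state = apply_reply(*state, reply)
print(3, state, next_action(*state))
```

The printed states follow the example: (0, 1) in cycle 1, (1, 1) in cycle 2, and (1, 2) in cycle 3.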

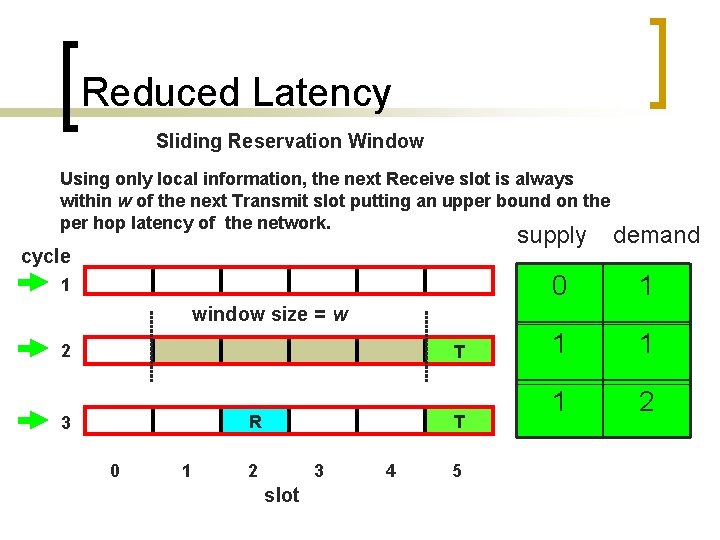

Reduced Latency: Sliding Reservation Window

Using only local information, the next Receive slot is always within w of the next Transmit slot, putting an upper bound on the per-hop latency of the network.

[Figure: supply and demand per cycle alongside a cycle of slots 0–5, with a reservation window of size w spanning the T and R slots]
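
The window property can be checked on a schedule with a short sketch. It assumes the distance is counted in slots from each Receive slot forward to the next Transmit slot, wrapping around the cycle; the slide does not spell out the direction, so treat that as an assumption. The function names are hypothetical.

```python
def gap_to_next_transmit(schedule, rx_slot):
    """Slots from rx_slot to the next 'T' slot, wrapping around the cycle.

    Returns None when the schedule has no Transmit slot at all.
    """
    n = len(schedule)
    for gap in range(1, n + 1):
        if schedule[(rx_slot + gap) % n] == "T":
            return gap
    return None


def within_window(schedule, w):
    """True when every Receive slot is followed by a Transmit slot within w slots."""
    for index, op in enumerate(schedule):
        if op == "R":
            gap = gap_to_next_transmit(schedule, index)
            if gap is None or gap > w:
                return False
    return True


schedule = list("TIIRIT")          # the six-slot example cycle used earlier
print(gap_to_next_transmit(schedule, 3))
print(within_window(schedule, 2), within_window(schedule, 1))
```

Under this reading, a message waits at most w slots at each hop before it is forwarded, which is the per-hop bound the slide refers to.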

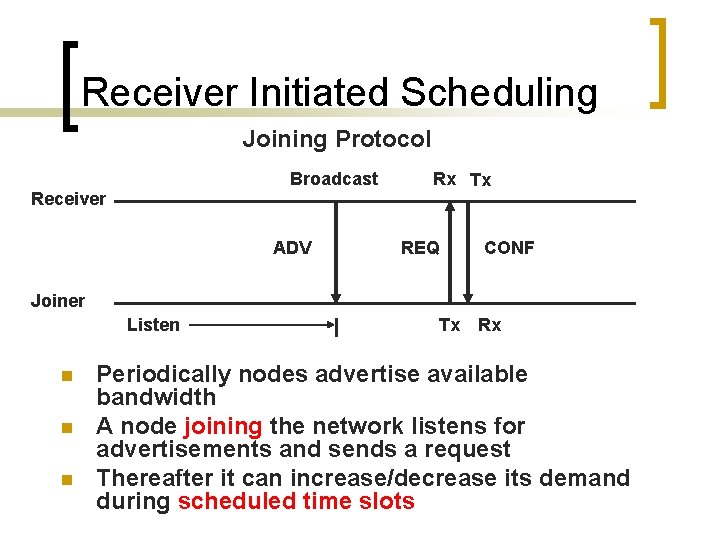

Receiver Initiated Scheduling: Joining Protocol

[Diagram: the receiver broadcasts ADV; the joiner listens, then sends REQ; the receiver answers with CONF]

- Periodically nodes advertise available bandwidth
- A node joining the network listens for advertisements and sends a request
- Thereafter it can increase/decrease its demand during scheduled time slots

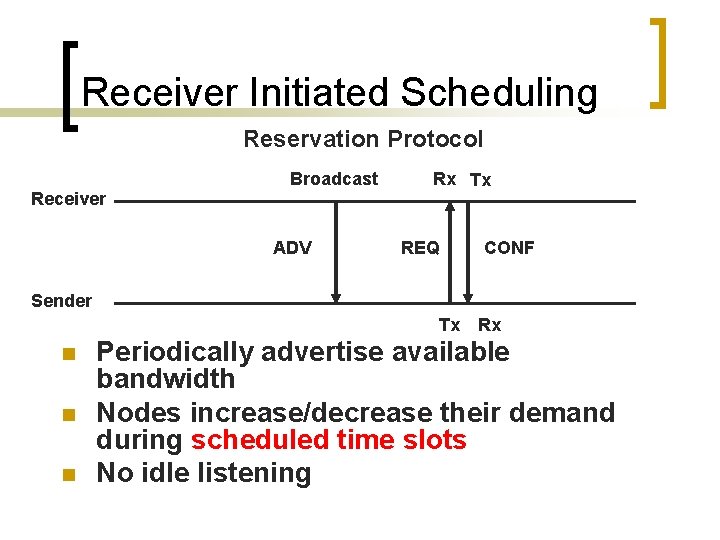

Receiver Initiated Scheduling: Reservation Protocol

[Diagram: the receiver broadcasts ADV; the sender transmits REQ; the receiver answers with CONF]

- Periodically advertise available bandwidth
- Nodes increase/decrease their demand during scheduled time slots
- No idle listening
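
The ADV/REQ/CONF exchange can be sketched as three message handlers. This is an illustrative model, not the protocol's actual packet format: the `Node` class, the dictionary fields, and the policy of advertising the first idle slot are all assumptions made for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    schedule: list = field(default_factory=lambda: ["I"] * 6)
    supply: int = 0
    demand: int = 0

    def advertise(self):
        """Receiver side: offer the first idle slot (hypothetical policy)."""
        for slot, op in enumerate(self.schedule):
            if op == "I":
                return {"type": "ADV", "from": self.name, "slot": slot}
        return None  # nothing left to offer this cycle

    def request(self, adv):
        """Sender side: ask for the advertised slot only when short of supply."""
        if self.supply < self.demand and self.schedule[adv["slot"]] == "I":
            return {"type": "REQ", "from": self.name, "slot": adv["slot"]}
        return None

    def confirm(self, req):
        """Receiver side: reserve the slot for receiving and take on the demand."""
        self.schedule[req["slot"]] = "R"
        self.demand += 1
        return {"type": "CONF", "from": self.name, "slot": req["slot"]}

    def on_confirm(self, conf):
        """Sender side: the slot is now a Transmit slot and counts as supply."""
        self.schedule[conf["slot"]] = "T"
        self.supply += 1


receiver = Node("parent")
sender = Node("child", demand=1)

adv = receiver.advertise()
req = sender.request(adv)
conf = receiver.confirm(req)
sender.on_confirm(conf)
print(receiver.schedule, sender.schedule)
```

After one exchange the two schedules agree on a slot: the receiver listens in it and the sender transmits in it, so neither side needs to listen outside reserved slots.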

Properties of supply/demand
- All network changes cast as demand
  - Joining
  - Failure
  - Lossy link
  - Multiple queries
  - Mobility
- 3 classes of node
  - Router and application
  - Router only
  - Application only
- Load balancing

Implementation
- HW
  - Mica2Dot
  - Mica2
- SW
  - Slackers
  - TinyDB/FPS (Twinkle)
  - GDI/FPS (Twinkle)

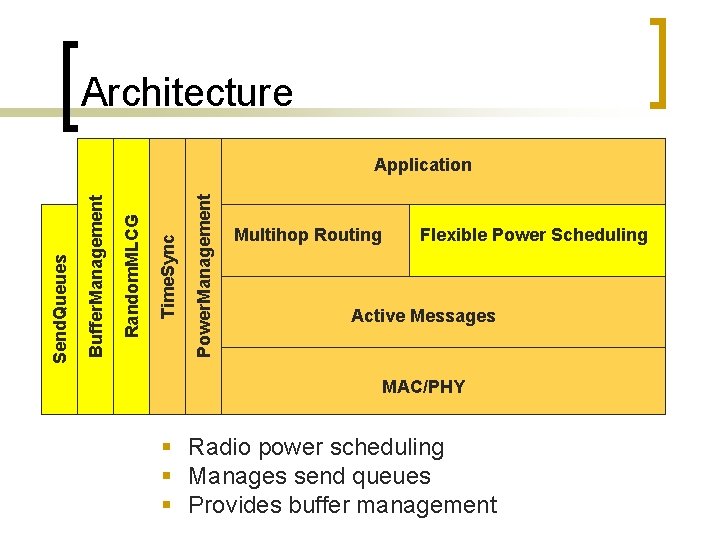

Architecture

[Diagram: components PowerManagement, TimeSync, RandomMLCG, BufferManagement, and SendQueues; protocol stack from top to bottom: Application, Multihop Routing, Flexible Power Scheduling, Active Messages, MAC/PHY]

- Radio power scheduling
- Manages send queues
- Provides buffer management

Micro Benchmarks (Mica)
- Power Consumption
- Fairness and Yield
- Contention

Power consumption

[Diagram: source node 3, then nodes 2 and 1, then gateway node 0]

- 4 TinyOS Mica motes
- 3-hop network
- Node 3 sends one 36-byte packet per cycle
- Measure the current at node 2

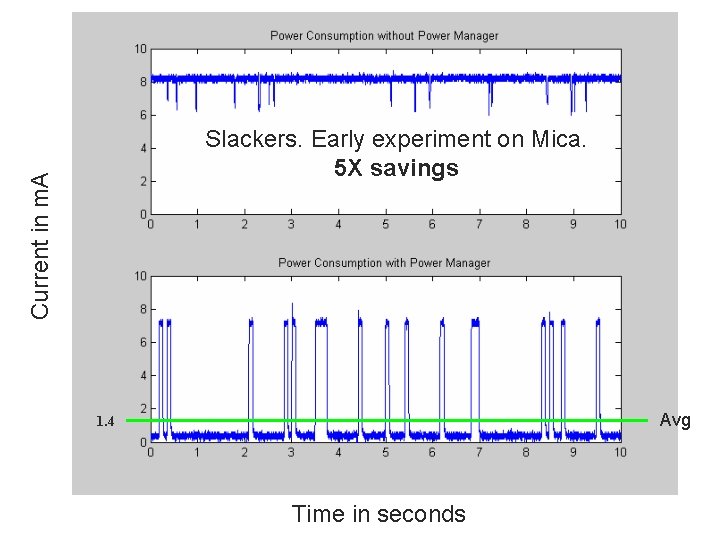

Slackers: early experiment on Mica. 5x savings. Avg 1.4.

[Plot: current in mA versus time in seconds]

Mica Experiments: Scheduled (FPS) vs Unscheduled (Naïve)
- 10 Mica motes plus base station
- 6 motes send 100 messages across 3 hops
- One message per cycle (3200 ms)
- Begin with injected start message
- Repeat 11 times
- Two topologies
  - Single area: one 8' x 3'4" area
  - Multiple area: five areas, motes are 9'–22' apart

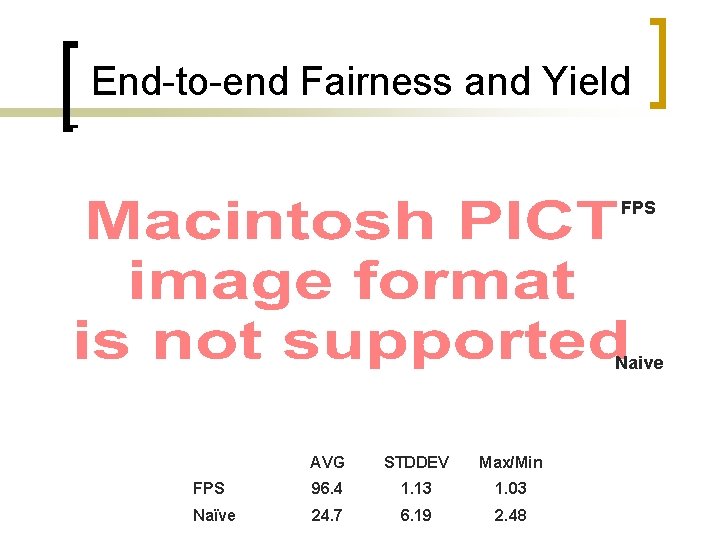

End-to-end Fairness and Yield

| | AVG | STDDEV | Max/Min |
|---|---|---|---|
| FPS | 96.4 | 1.13 | 1.03 |
| Naïve | 24.7 | 6.19 | 2.48 |
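
The three columns can be computed from per-source delivery counts, as in the sketch below. It assumes the table summarizes the number of messages received from each source out of 100 sent, and it uses the sample standard deviation; the slides do not say which form was used. The counts are made-up values for illustration, not the experiment's data.

```python
from statistics import mean, stdev


def fairness_summary(received_per_source):
    """Return (average, sample standard deviation, max/min ratio)."""
    average = mean(received_per_source)
    spread = stdev(received_per_source)
    ratio = max(received_per_source) / min(received_per_source)
    return average, spread, ratio


# Hypothetical counts for six sources, each sending 100 messages.
example_counts = [98, 96, 97, 95, 96, 97]
average, spread, ratio = fairness_summary(example_counts)
print(round(average, 2), round(spread, 2), round(ratio, 2))
```

A max/min ratio close to 1 means every source gets a similar share of the delivered traffic, which is how the table expresses end-to-end fairness.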

Contention is Reduced

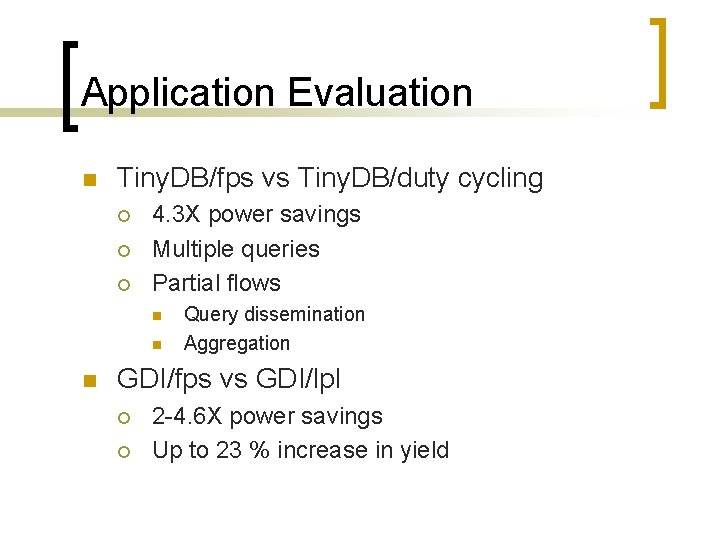

Application Evaluation
- TinyDB/FPS vs TinyDB/duty cycling
  - 4.3x power savings
  - Multiple queries
  - Partial flows
    - Query dissemination
    - Aggregation
- GDI/FPS vs GDI/LPL
  - 2–4.6x power savings
  - Up to 23% increase in yield

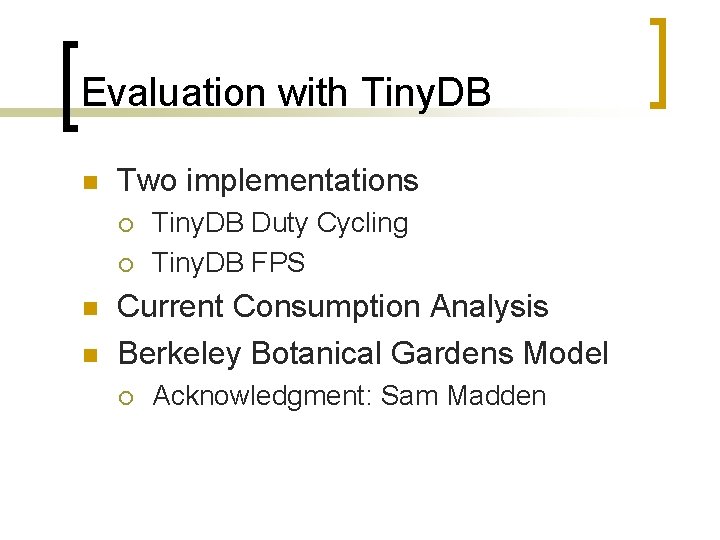

Evaluation with TinyDB
- Two implementations
  - TinyDB Duty Cycling
  - TinyDB FPS
- Current consumption analysis
- Berkeley Botanical Gardens model
  - Acknowledgment: Sam Madden

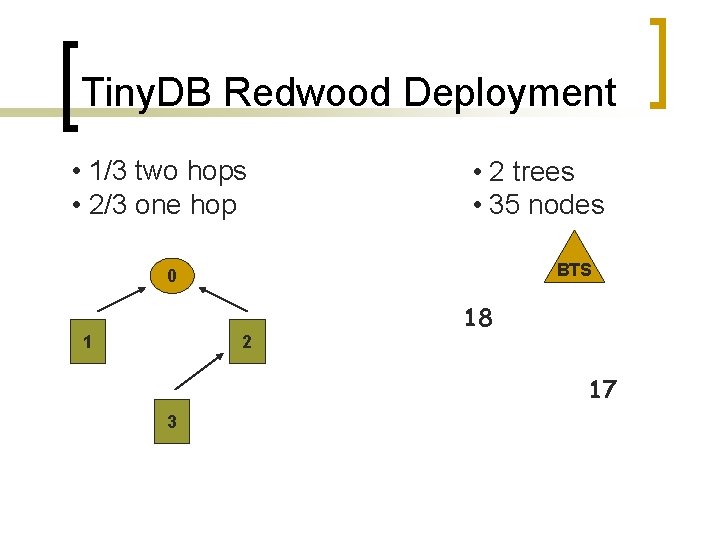

TinyDB Redwood Deployment
- 1/3 two hops
- 2/3 one hop
- 2 trees
- 35 nodes

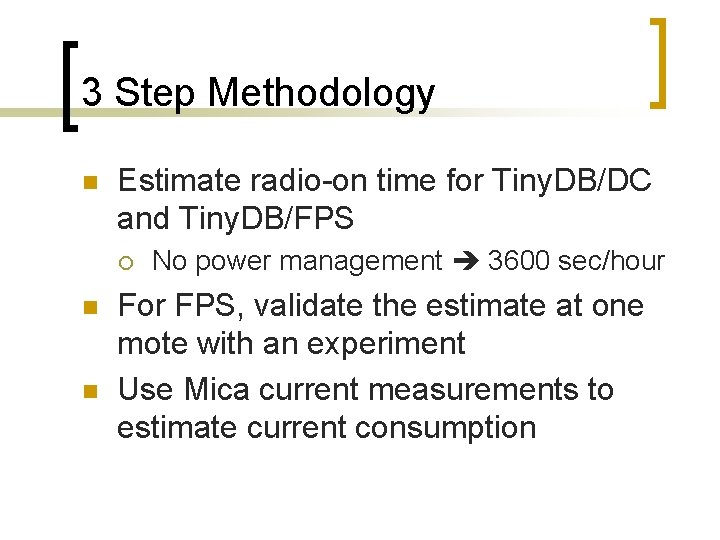

3 Step Methodology
- Estimate radio-on time for TinyDB/DC and TinyDB/FPS
  - No power management: 3600 sec/hour
- For FPS, validate the estimate at one mote with an experiment
- Use Mica current measurements to estimate current consumption

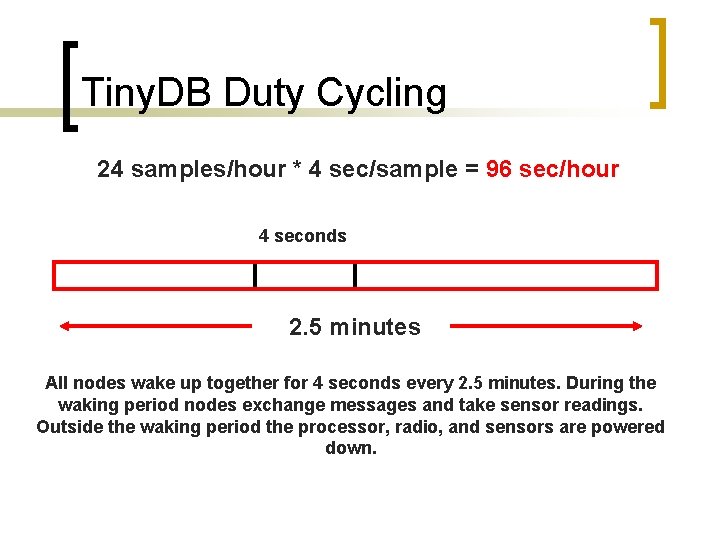

TinyDB Duty Cycling

24 samples/hour * 4 sec/sample = 96 sec/hour

[Diagram: a 4-second waking period every 2.5 minutes]

All nodes wake up together for 4 seconds every 2.5 minutes. During the waking period, nodes exchange messages and take sensor readings. Outside the waking period, the processor, radio, and sensors are powered down.
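
The radio-on estimate for duty cycling follows directly from the epoch length and the waking period. The short calculation below reproduces the slide's figure; the variable names are illustrative.

```python
SECONDS_PER_HOUR = 3600

epoch_s = 2.5 * 60        # nodes wake once every 2.5 minutes
waking_period_s = 4       # and stay awake for 4 seconds each time

samples_per_hour = SECONDS_PER_HOUR / epoch_s
radio_on_s_per_hour = samples_per_hour * waking_period_s
duty_cycle = radio_on_s_per_hour / SECONDS_PER_HOUR

print(samples_per_hour, radio_on_s_per_hour, round(duty_cycle, 4))
```

The result is 24 samples per hour and 96 seconds of radio-on time per hour, a duty cycle of roughly 2.7%.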

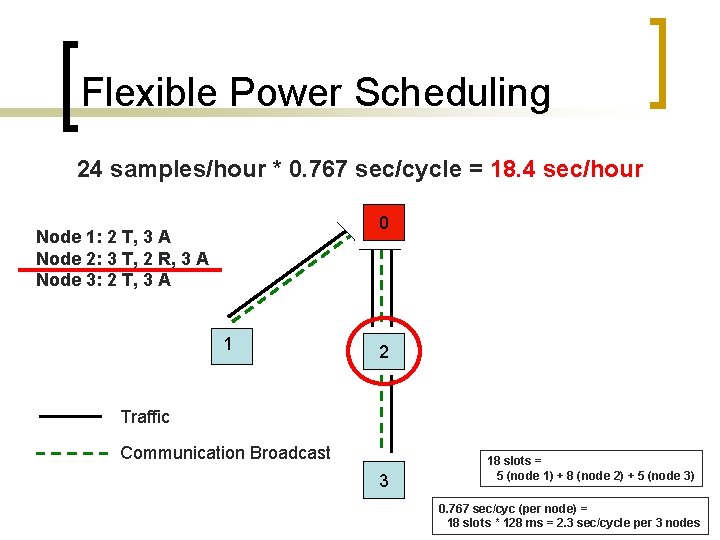

Flexible Power Scheduling

24 samples/hour * 0.767 sec/cycle = 18.4 sec/hour

[Diagram: nodes 0 to 3, with a legend for traffic, communication, and broadcast]

- Node 1: 2 T, 3 A
- Node 2: 3 T, 2 R, 3 A
- Node 3: 2 T, 3 A

18 slots = 5 (node 1) + 8 (node 2) + 5 (node 3)

18 slots * 128 ms = 2.3 sec/cycle for the 3 nodes, or 0.767 sec/cycle per node
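
The FPS radio-on estimate can be reproduced from the slot counts on this slide. The sketch below uses the slide's numbers (128 ms slots, 24 cycles per hour); the variable names are illustrative. The slide rounds 2.304 s down to 2.3 s before dividing, which is why it shows 0.767 rather than 0.768 sec/cycle.

```python
SLOT_MS = 128
CYCLES_PER_HOUR = 24      # one cycle per sample, 24 samples per hour

# Active (radio-on) slots per cycle for each node, from the slide.
active_slots = {"node 1": 5, "node 2": 8, "node 3": 5}

total_slots = sum(active_slots.values())
on_time_all_nodes_s = total_slots * SLOT_MS / 1000
on_time_per_node_s = on_time_all_nodes_s / len(active_slots)
radio_on_s_per_hour = CYCLES_PER_HOUR * on_time_per_node_s

print(total_slots, on_time_all_nodes_s)
print(round(on_time_per_node_s, 3), round(radio_on_s_per_hour, 1))
```

The per-node average comes to about 18.4 seconds of radio-on time per hour, compared with 96 seconds per hour for duty cycling.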

FPS Validation

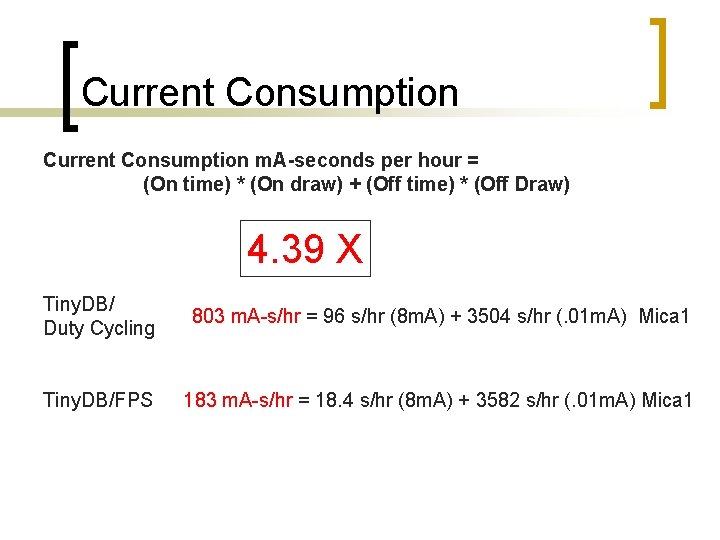

Current Consumption

mA-seconds per hour = (On time) * (On draw) + (Off time) * (Off draw)

- TinyDB/Duty Cycling (Mica 1): 803 mA-s/hr = 96 s/hr (8 mA) + 3504 s/hr (0.01 mA)
- TinyDB/FPS (Mica 1): 183 mA-s/hr = 18.4 s/hr (8 mA) + 3582 s/hr (0.01 mA)

Duty cycling draws 4.39x more than FPS.
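
The formula can be evaluated directly to check the two totals and their ratio. The sketch uses the slide's draws (8 mA on, 0.01 mA off) and takes the off time as the remainder of the hour; the function name is illustrative.

```python
SECONDS_PER_HOUR = 3600


def ma_seconds_per_hour(on_time_s, on_draw_ma=8.0, off_draw_ma=0.01):
    """(On time) * (On draw) + (Off time) * (Off draw), in mA-seconds per hour."""
    off_time_s = SECONDS_PER_HOUR - on_time_s
    return on_time_s * on_draw_ma + off_time_s * off_draw_ma


duty_cycling = ma_seconds_per_hour(96)    # TinyDB duty cycling
fps = ma_seconds_per_hour(18.4)           # TinyDB with FPS

print(round(duty_cycling), round(fps), round(duty_cycling / fps, 2))
```

Both totals round to the slide's values of 803 and 183 mA-s/hr, and their ratio rounds to 4.39.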

Evaluation with GDI
- Two implementations
  - GDI Low-Power Listening
  - GDI FPS
- Experiments
  - Yield
  - Power measurements
- Power consumption
  - Acknowledgment: Rob Szewczyk

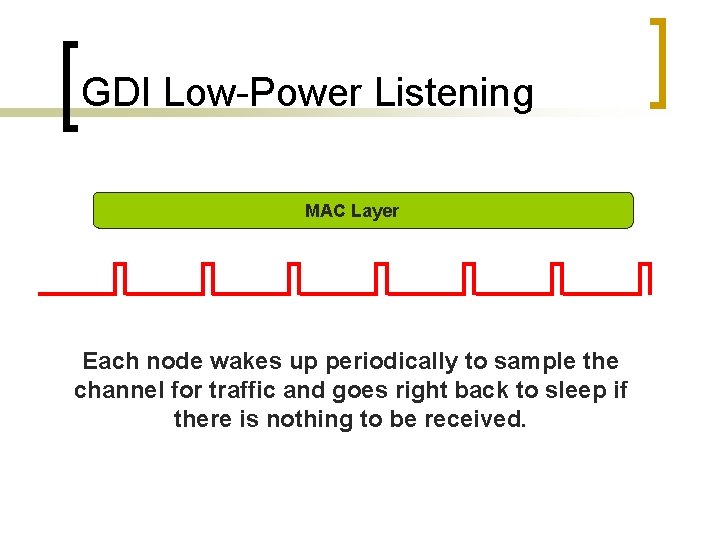

GDI Low-Power Listening (MAC Layer)

Each node wakes up periodically to sample the channel for traffic and goes right back to sleep if there is nothing to be received.

12 Experiments (Mica2Dot)
- 30-node Mica2Dot in-lab testbed
- 3 sets
  - GDI/lpl 100
  - GDI/lpl 485
  - GDI/Twinkle
- 4 sample rates
  - 30 seconds
  - 1 minute
  - 5 minutes
  - 20 minutes

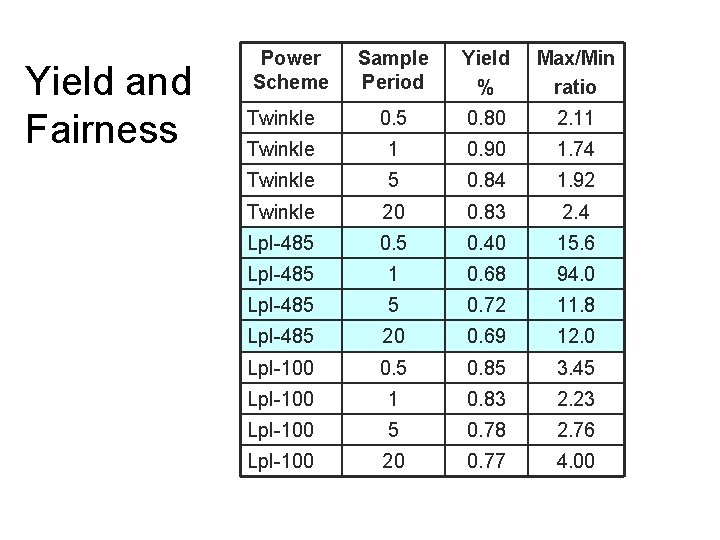

Yield and Fairness

| Power Scheme | Sample Period (min) | Yield | Max/Min ratio |
|---|---|---|---|
| Twinkle | 0.5 | 0.80 | 2.11 |
| Twinkle | 1 | 0.90 | 1.74 |
| Twinkle | 5 | 0.84 | 1.92 |
| Twinkle | 20 | 0.83 | 2.4 |
| Lpl-485 | 0.5 | 0.40 | 15.6 |
| Lpl-485 | 1 | 0.68 | 94.0 |
| Lpl-485 | 5 | 0.72 | 11.8 |
| Lpl-485 | 20 | 0.69 | 12.0 |
| Lpl-100 | 0.5 | 0.85 | 3.45 |
| Lpl-100 | 1 | 0.83 | 2.23 |
| Lpl-100 | 5 | 0.78 | 2.76 |
| Lpl-100 | 20 | 0.77 | 4.00 |

Measured Power Consumption

[Plots: one panel for a sample period of 5 minutes, one for a sample period of 20 minutes]

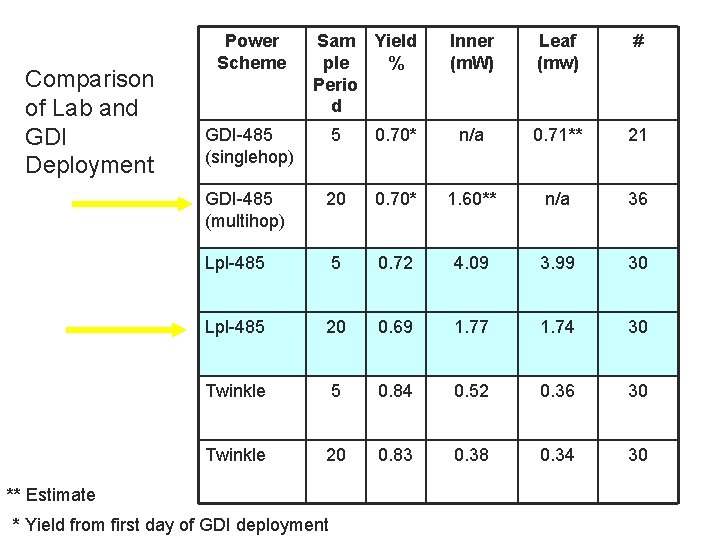

Comparison of Lab and GDI Deployment

| Power Scheme | Sample Period (min) | Yield | Inner (mW) | Leaf (mW) | # |
|---|---|---|---|---|---|
| GDI-485 (singlehop) | 5 | 0.70* | n/a | 0.71** | 21 |
| GDI-485 (multihop) | 20 | 0.70* | 1.60** | n/a | 36 |
| Lpl-485 | 5 | 0.72 | 4.09 | 3.99 | 30 |
| Lpl-485 | 20 | 0.69 | 1.77 | 1.74 | 30 |
| Twinkle | 5 | 0.84 | 0.52 | 0.36 | 30 |
| Twinkle | 20 | 0.83 | 0.38 | 0.34 | 30 |

\* Yield from first day of GDI deployment
\*\* Estimate

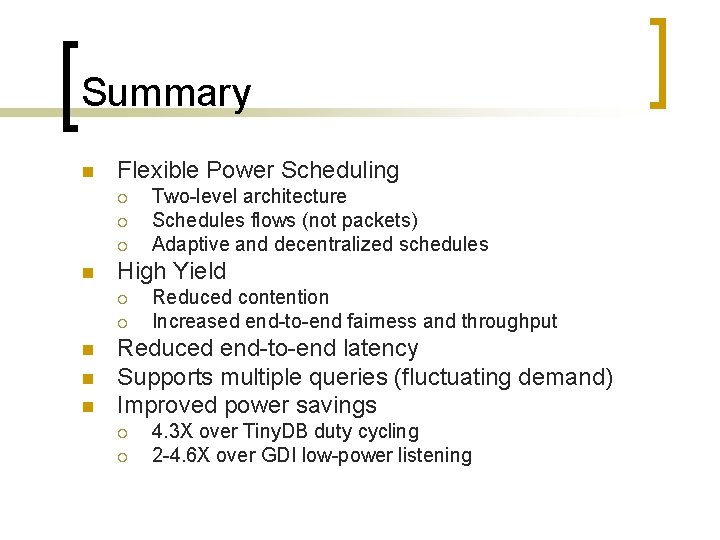

Summary
- Flexible Power Scheduling
  - Two-level architecture
  - Schedules flows (not packets)
  - Adaptive and decentralized schedules
- High yield
  - Reduced contention
  - Increased end-to-end fairness and throughput
- Reduced end-to-end latency
- Supports multiple queries (fluctuating demand)
- Improved power savings
  - 4.3x over TinyDB duty cycling
  - 2–4.6x over GDI low-power listening

Thank You

Barbara Hohlt
hohltb@cs.berkeley.edu
