Network Applications and Network Programming: Web and P2P

Slides are from Richard Yang (Yale); minor modifications have been made.

Recap: FTP, HTTP
- FTP: file transfer
  - ASCII (human-readable format) requests and responses
  - stateful server
  - one data channel and one control channel
- HTTP
  - Extensibility: ASCII requests, header lines, entity body, and response lines
  - Scalability/robustness
    - stateless server (each request should contain the full information); DNS load balancing
    - client caching; Web caches
  - one data channel

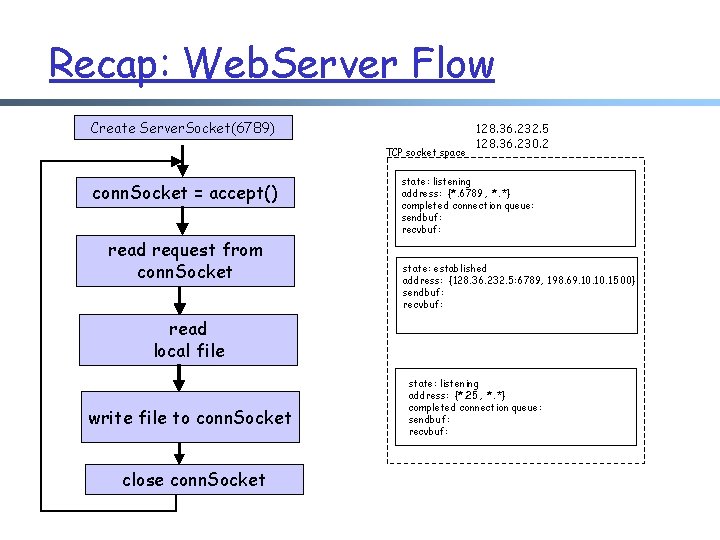

Recap: Web Server Flow
- Server steps:
  - Create ServerSocket(6789)
  - connSocket = accept()
  - read request from connSocket
  - read local file
  - write file to connSocket
  - close connSocket
- TCP socket space (figure; hosts 128.36.232.5 and 128.36.230.2):
  - state: listening; address: {*.6789, *.*}; completed connection queue; sendbuf; recvbuf
  - state: established; address: {128.36.232.5:6789, 198.69.10.1500}; sendbuf; recvbuf
  - state: listening; address: {*.25, *.*}; completed connection queue; sendbuf; recvbuf

Recap: Writing High Performance Servers
- Major issue: many socket/IO operations can cause processing to block, e.g.,
  - accept: waiting for a new connection
  - read on a socket: waiting for data or close
  - write on a socket: waiting for buffer space
  - disk I/O read/write: waiting for the disk to finish
- Thus a crucial perspective of network server design is the concurrency design (non-blocking)
  - for high performance
  - to avoid denial of service
- A technique to avoid blocking: threads

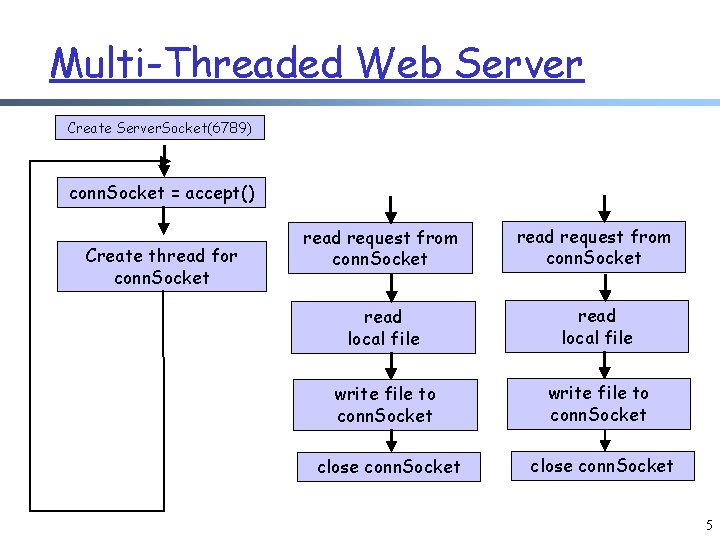

Multi-Threaded Web Server
- Create ServerSocket(6789)
- connSocket = accept()
- Create thread for connSocket; in that thread:
  - read request from connSocket
  - read local file
  - write file to connSocket
  - close connSocket

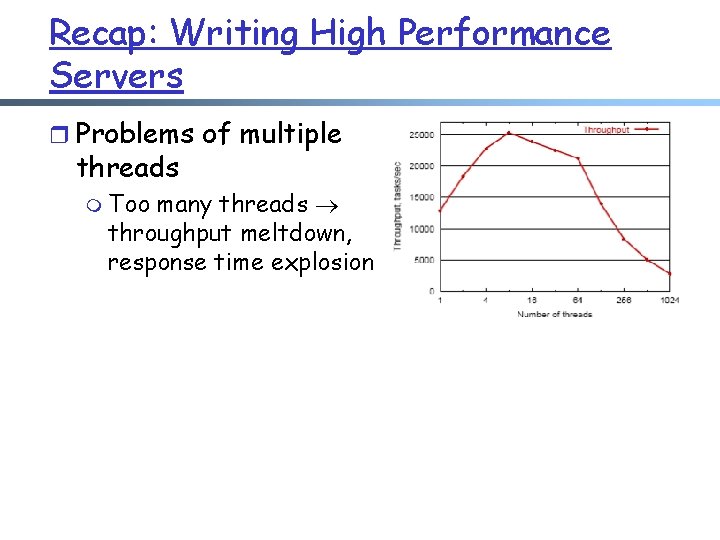

Recap: Writing High Performance Servers
- Problems of multiple threads
  - Too many threads lead to throughput meltdown and response time explosion

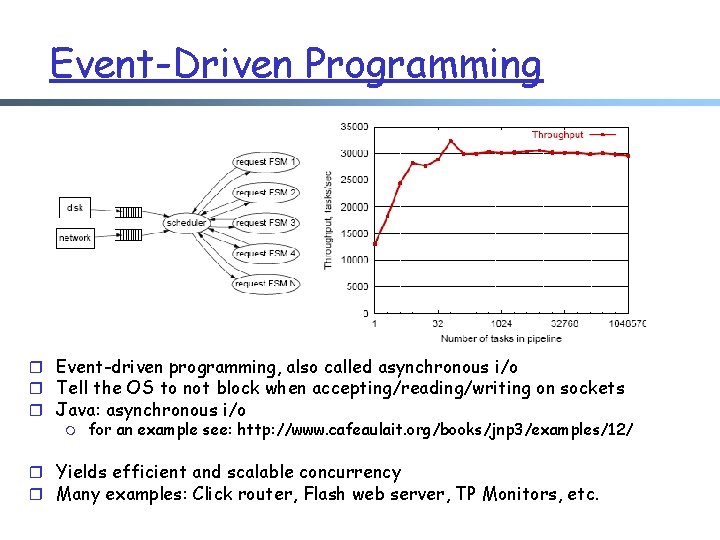

Event-Driven Programming
- Event-driven programming is also called asynchronous I/O
- Tell the OS not to block when accepting/reading/writing on sockets
- Java: asynchronous I/O
  - for an example see: http://www.cafeaulait.org/books/jnp3/examples/12/
- Yields efficient and scalable concurrency
- Many examples: Click router, Flash web server, TP Monitors, etc.

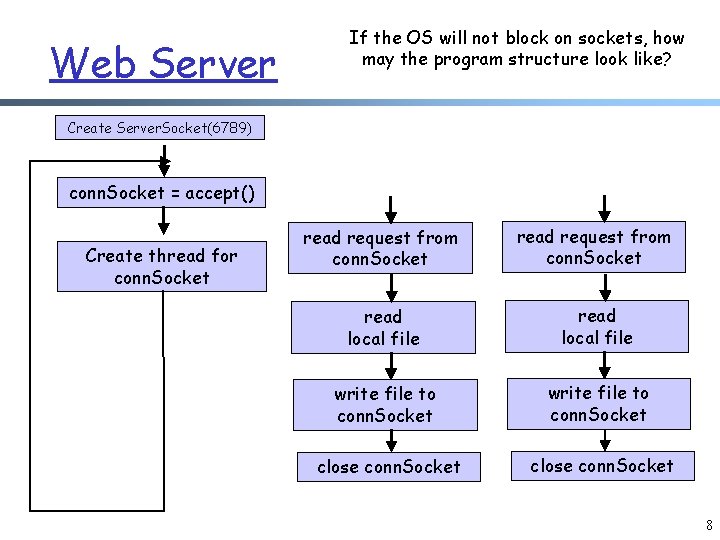

Web Server
- If the OS will not block on sockets, what might the program structure look like?
- The blocking, thread-based structure for comparison:
  - Create ServerSocket(6789)
  - connSocket = accept()
  - Create thread for connSocket
  - read request from connSocket
  - read local file
  - write file to connSocket
  - close connSocket

Typical Structure of Async I/O
- Typically, async I/O programs use Finite State Machines (FSMs) to monitor the progress of requests
  - The state info keeps track of the execution stage of processing each request, e.g., reading request, writing reply, …
- The program has a loop to check potential events at each state
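The per-request state machine described above can be sketched in C++ as follows. This is only an illustration under stated assumptions: the stage names, the `RequestFsm` type, the "blank line ends the request" rule, and the placeholder reply are all hypothetical and do not come from the slides. The event loop itself is omitted; it would call `on_readable` and `on_writable` when the OS reports the socket as ready.

```cpp
#include <cassert>
#include <cstddef>
#include <string>

// Execution stages of one request (illustrative names, not from the slides).
enum class Stage { ReadingRequest, WritingReply, Done };

// One state machine per connection; the event loop owns one per socket.
struct RequestFsm {
    Stage stage = Stage::ReadingRequest;
    std::string in;   // request bytes received so far
    std::string out;  // reply bytes not yet written

    // Called when the socket is readable and `data` was read without blocking.
    void on_readable(const std::string& data) {
        if (stage != Stage::ReadingRequest) return;
        in += data;
        // Assumption: the request is complete once a blank line arrives.
        if (in.find("\r\n\r\n") != std::string::npos) {
            out = "HTTP/1.0 200 OK\r\n\r\n";  // placeholder reply
            stage = Stage::WritingReply;
        }
    }

    // Called when the socket is writable; `capacity` is how many bytes the
    // send buffer can take right now. Returns the number of bytes consumed.
    std::size_t on_writable(std::size_t capacity) {
        if (stage != Stage::WritingReply) return 0;
        assert(!out.empty());  // invariant: WritingReply implies pending bytes
        std::size_t n = capacity < out.size() ? capacity : out.size();
        out.erase(0, n);
        if (out.empty()) stage = Stage::Done;
        return n;
    }
};
```

Because neither handler waits for anything, a single thread can interleave many such machines, which is the point of the FSM structure.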

Async I/O in Java
- An important class is the class Selector, which supports the event loop
- A Selector is a multiplexer of selectable channel objects
  - example channels: DatagramChannel, ServerSocketChannel, SocketChannel
  - use configureBlocking(false) to make a channel non-blocking
- A selector may be created by invoking the open method of this class

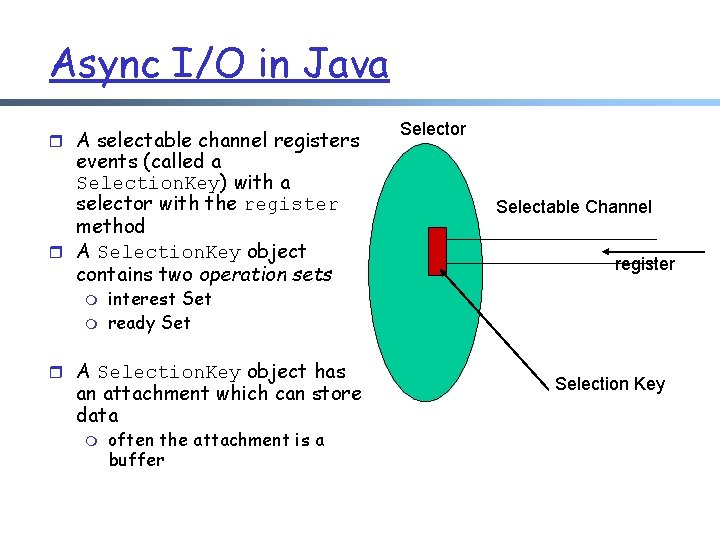

Async I/O in Java
- A selectable channel registers events (called a SelectionKey) with a selector using the register method
- A SelectionKey object contains two operation sets
  - interest set
  - ready set
- A SelectionKey object has an attachment which can store data
  - often the attachment is a buffer

(Figure labels: Selectable Channel, register, Selector, Selection Key)

Async I/O in Java
- Call select() (or selectNow(), or select(long timeout)) to check for ready events; the result is called the selected key set
- Iterate over the set to process all ready events

Problems of Event-Driven Server
- Difficult to engineer, modularize, and tune
- No performance/failure isolation between Finite-State-Machines (FSMs)
- FSM code can never block (but page faults, I/O, garbage collection may still force a block)
  - thus still need multiple threads

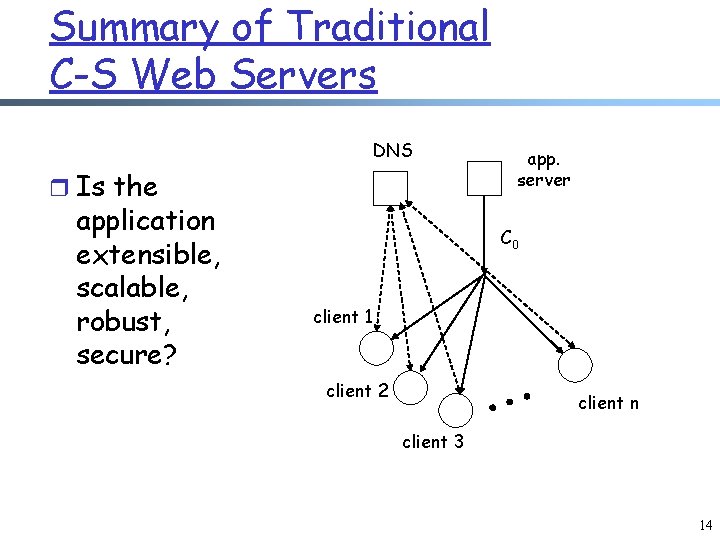

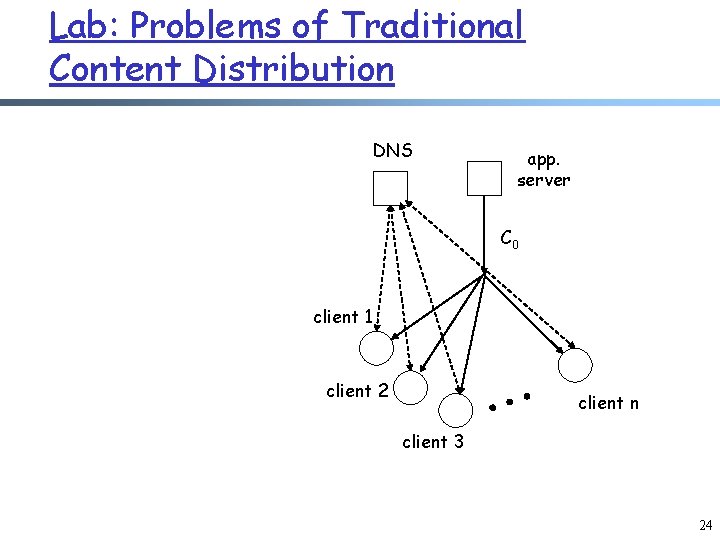

Summary of Traditional C-S Web Servers
- Is the application extensible, scalable, robust, secure?

(Figure: DNS; app. server C0; client 1, client 2, client 3, ..., client n)

Content Distribution History
"With 25 years of Internet experience, we've learned exactly one way to deal with the exponential growth: Caching." (Van Jacobson, 1997)

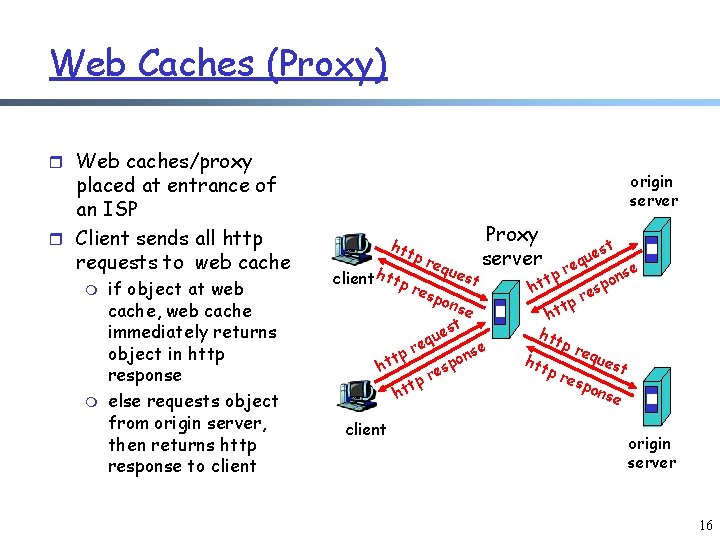

Web Caches (Proxy)
- Web caches/proxies are placed at the entrance of an ISP
- Client sends all HTTP requests to the web cache
  - if the object is at the web cache, the web cache immediately returns the object in an HTTP response
  - else it requests the object from the origin server, then returns the HTTP response to the client

(Figure: clients exchange HTTP requests/responses with the proxy server, which exchanges HTTP requests/responses with the origin servers)
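The hit/miss rule above can be sketched as a small C++ class. This is a minimal illustration, not how any real proxy is built: the `WebCache` name, the `Origin` callback that stands in for "request the object from the origin server", and the absence of expiry and size limits are all assumptions made for the example.

```cpp
#include <cassert>
#include <functional>
#include <string>
#include <unordered_map>
#include <utility>

// Minimal proxy cache: URL -> object body.
// Assumptions: no expiry, no size limit, no HTTP header handling.
class WebCache {
public:
    // Hypothetical stand-in for fetching an object from the origin server.
    using Origin = std::function<std::string(const std::string&)>;

    explicit WebCache(Origin origin) : origin_(std::move(origin)) {
        assert(origin_ && "an origin fetch function is required");
    }

    // Return the object for `url`, contacting the origin only on a miss.
    std::string get(const std::string& url) {
        auto it = store_.find(url);
        if (it != store_.end()) {
            ++hits_;
            return it->second;            // hit: answer from the cache
        }
        ++misses_;
        std::string body = origin_(url);  // miss: ask the origin server
        store_.emplace(url, body);        // keep a copy for later requests
        return body;
    }

    int hits() const { return hits_; }
    int misses() const { return misses_; }

private:
    Origin origin_;
    std::unordered_map<std::string, std::string> store_;
    int hits_ = 0;
    int misses_ = 0;
};
```

Passing the origin as a callback keeps the sketch free of networking code; a caller could supply a lambda that performs a real HTTP request.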

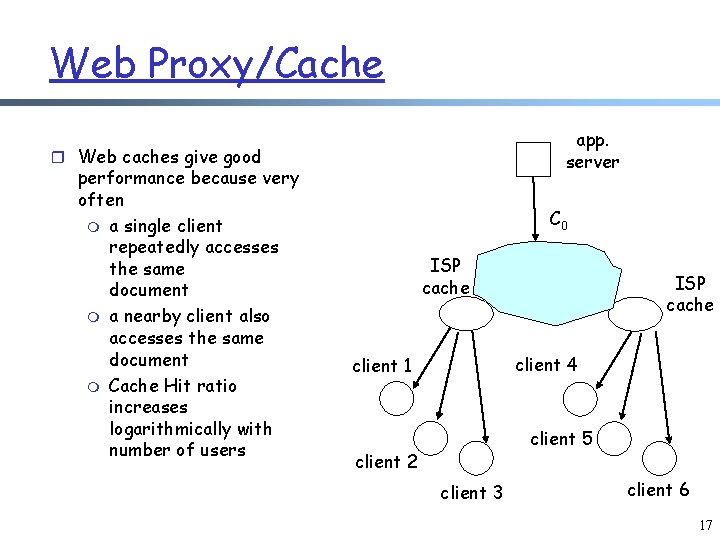

Web Proxy/Cache
- Web caches give good performance because very often
  - a single client repeatedly accesses the same document
  - a nearby client also accesses the same document
  - the cache hit ratio increases logarithmically with the number of users

(Figure: app. server C0; ISP cache; clients 1 to 6)

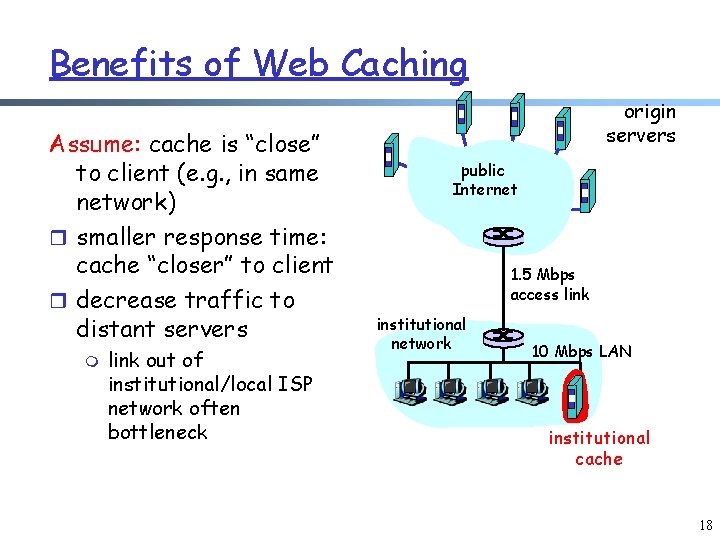

Benefits of Web Caching
Assume: cache is "close" to client (e.g., in same network)
- smaller response time: cache is "closer" to client
- decreased traffic to distant servers
  - the link out of the institutional/local ISP network is often the bottleneck

(Figure: origin servers; public Internet; 1.5 Mbps access link; institutional network; 10 Mbps LAN; institutional cache)

What went wrong with Web Caches?
- Web protocols evolved extensively to accommodate caching, e.g., HTTP 1.1
- However, Web caching was developed with a strong ISP perspective, leaving content providers out of the picture
  - It is the ISP who places a cache and controls it
  - The ISP's only interest in using Web caches is to reduce bandwidth
- In the USA: bandwidth is relatively cheap
- In Europe, there were many more Web caches
  - However, ISPs can arbitrarily tune Web caches to deliver stale content

Content Provider Perspective
- Content providers care about
  - User experience (latency)
  - Content freshness
  - Accurate access statistics
  - Avoiding flash crowds
  - Minimizing bandwidth usage in their access link

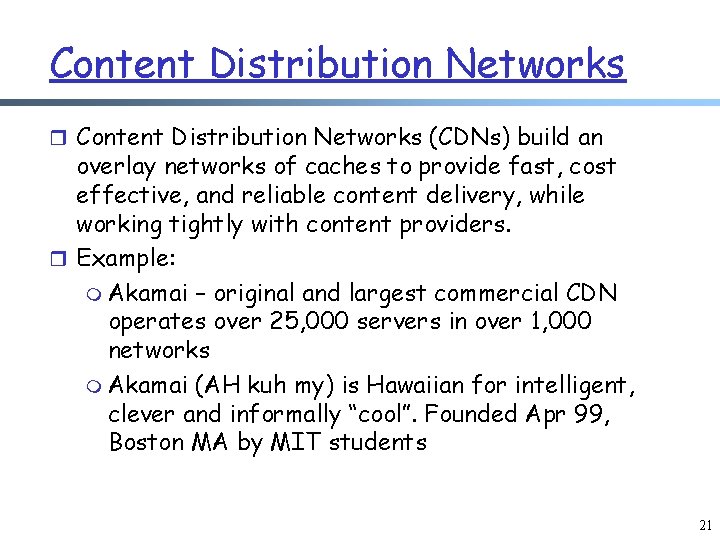

Content Distribution Networks
- Content Distribution Networks (CDNs) build an overlay network of caches to provide fast, cost-effective, and reliable content delivery, while working tightly with content providers.
- Example:
  - Akamai: the original and largest commercial CDN; operates over 25,000 servers in over 1,000 networks
  - Akamai (AH kuh my) is Hawaiian for intelligent, clever and, informally, "cool". Founded Apr 99, Boston MA by MIT students

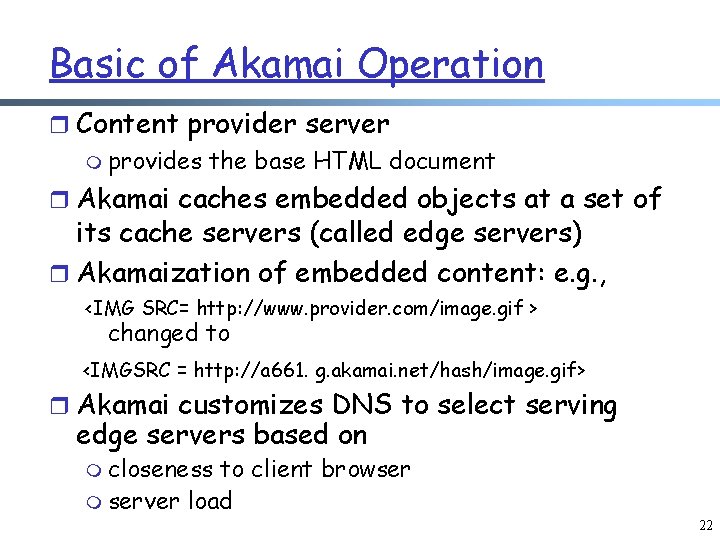

Basics of Akamai Operation
- Content provider server
  - provides the base HTML document
- Akamai caches embedded objects at a set of its cache servers (called edge servers)
- Akamaization of embedded content: e.g.,
  <IMG SRC=http://www.provider.com/image.gif>
  changed to
  <IMG SRC=http://a661.g.akamai.net/hash/image.gif>
- Akamai customizes DNS to select serving edge servers based on
  - closeness to client browser
  - server load

More Akamai information
- URL akamaization is becoming obsolete and is only supported for legacy reasons
  - Currently most content providers prefer to use DNS CNAME techniques to get all their content served from the Akamai servers
  - content providers still need to run their origin servers
- Akamai evolution:
  - Files/streaming
  - Secure pages and whole pages
  - Dynamic page assembly at the edge (ESI)
  - Distributed applications

Lab: Problems of Traditional Content Distribution

(Figure: DNS; app. server C0; client 1, client 2, client 3, ..., client n)

Objectives of P2P
- Share the resources (storage and bandwidth) of individual clients to improve scalability/robustness
- Bypass DNS to find clients with resources!
  - examples: instant messaging, Skype

P2P
- But P2P is not new
- The original Internet was a P2P system:
  - The original ARPANET connected UCLA, Stanford Research Institute, UCSB, and Univ. of Utah
  - No DNS or routing infrastructure, just connected by phone lines
  - Computers also served as routers
- P2P is simply an iteration of scalable distributed systems

P2P Systems
- File sharing: BitTorrent, LimeWire
- Streaming: PPLive, PPStream, Zattoo, …
- Research systems
- Collaborative computing:
  - SETI@Home project
  - Human genome mapping
  - Intel NetBatch: 10,000 computers in 25 worldwide sites for simulations, saved about 500 million

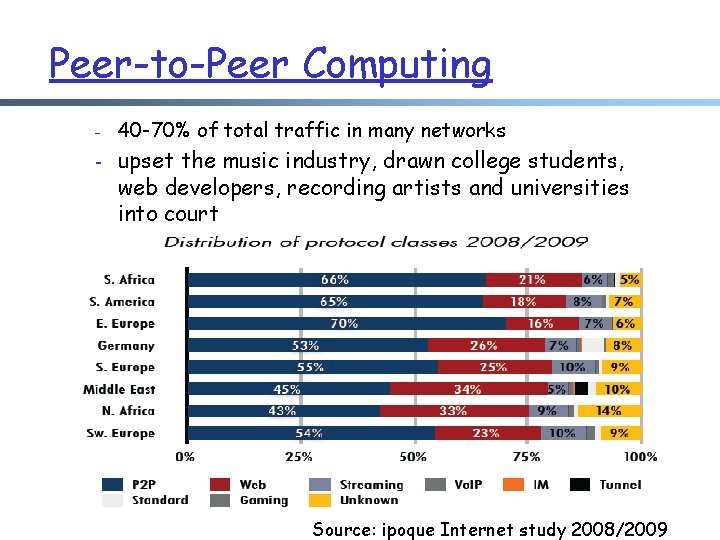

Peer-to-Peer Computing
- 40-70% of total traffic in many networks
- upset the music industry; drew college students, web developers, recording artists, and universities into court

Source: ipoque Internet study 2008/2009

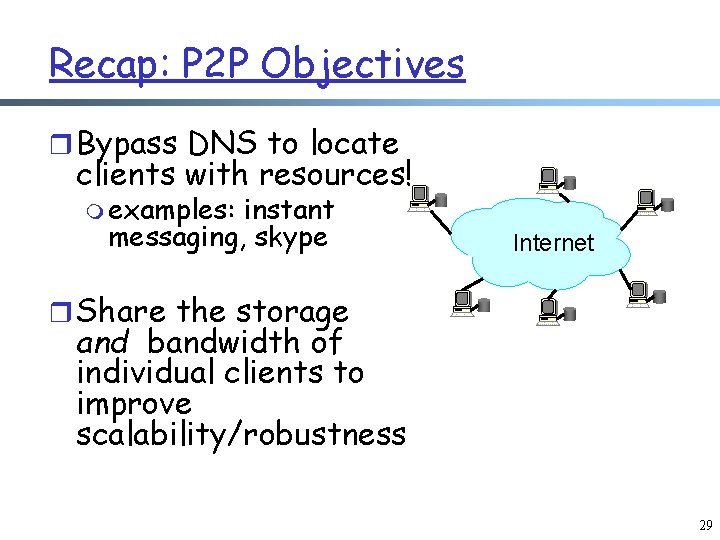

Recap: P2P Objectives
- Bypass DNS to locate clients with resources!
  - examples: instant messaging, Skype
- Share the storage and bandwidth of individual clients to improve scalability/robustness

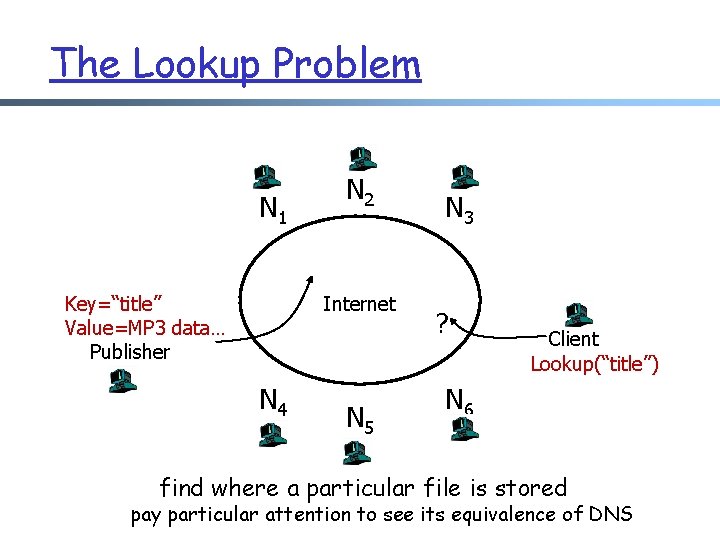

The Lookup Problem
- Find where a particular file is stored
- Pay particular attention to its equivalence with DNS

(Figure: a publisher at N1 holds Key="title", Value=MP3 data…; nodes N1 to N6 are connected through the Internet; a client issues Lookup("title"))

Outline
- Recap
- P2P
  - the lookup problem
  - Napster

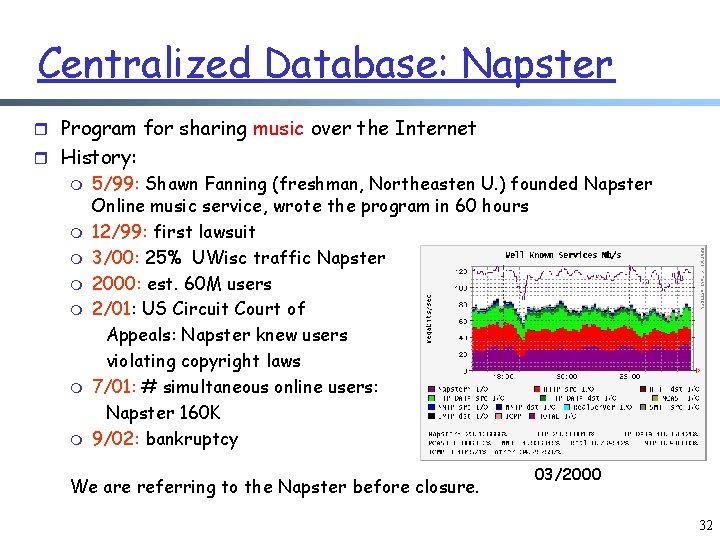

Centralized Database: Napster
- Program for sharing music over the Internet
- History:
  - 5/99: Shawn Fanning (freshman, Northeastern U.) founded the Napster online music service; wrote the program in 60 hours
  - 12/99: first lawsuit
  - 3/00: 25% of UWisc traffic is Napster
  - 2000: est. 60M users
  - 2/01: US Circuit Court of Appeals: Napster knew users were violating copyright laws
  - 7/01: # simultaneous online users: Napster 160K
  - 9/02: bankruptcy

We are referring to the Napster before closure. (Figure label: 03/2000)

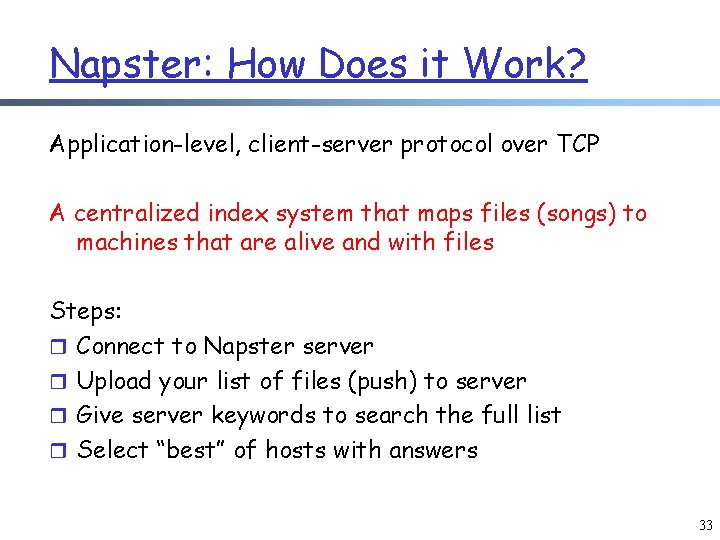

Napster: How Does it Work?
Application-level, client-server protocol over TCP
A centralized index system that maps files (songs) to machines that are alive and have the files
Steps:
- Connect to Napster server
- Upload your list of files (push) to server
- Give server keywords to search the full list
- Select "best" of hosts with answers
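The centralized index described in the steps above can be sketched as an in-memory map. This is a toy model under stated assumptions, not Napster's actual server: the `CentralIndex` class, its method names, and the substring-based keyword match are hypothetical, and the final step (probing the returned hosts to pick the "best" one) is left to the client.

```cpp
#include <cassert>
#include <iterator>
#include <map>
#include <set>
#include <string>
#include <vector>

// Central index: file name -> set of hosts that currently offer it.
// All names here are illustrative; the real protocol is not modeled.
class CentralIndex {
public:
    // A client pushes its file list after connecting.
    void publish(const std::string& host, const std::vector<std::string>& files) {
        assert(!host.empty());
        for (const std::string& f : files) index_[f].insert(host);
    }

    // Drop a host that went offline so only live machines stay listed.
    void remove_host(const std::string& host) {
        for (auto it = index_.begin(); it != index_.end();) {
            it->second.erase(host);
            it = it->second.empty() ? index_.erase(it) : std::next(it);
        }
    }

    // Keyword search; assumption: a plain substring match on the file name.
    std::set<std::string> search(const std::string& keyword) const {
        std::set<std::string> hosts;
        for (const auto& entry : index_) {
            if (entry.first.find(keyword) != std::string::npos)
                hosts.insert(entry.second.begin(), entry.second.end());
        }
        return hosts;
    }

private:
    std::map<std::string, std::set<std::string>> index_;
};
```

Only the index lives on the server; the file transfer itself would happen directly between the searching client and one of the returned hosts.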

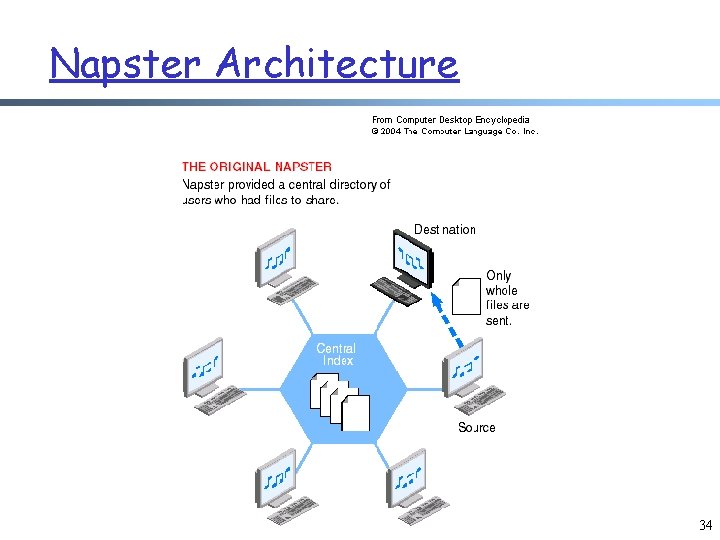

Napster Architecture

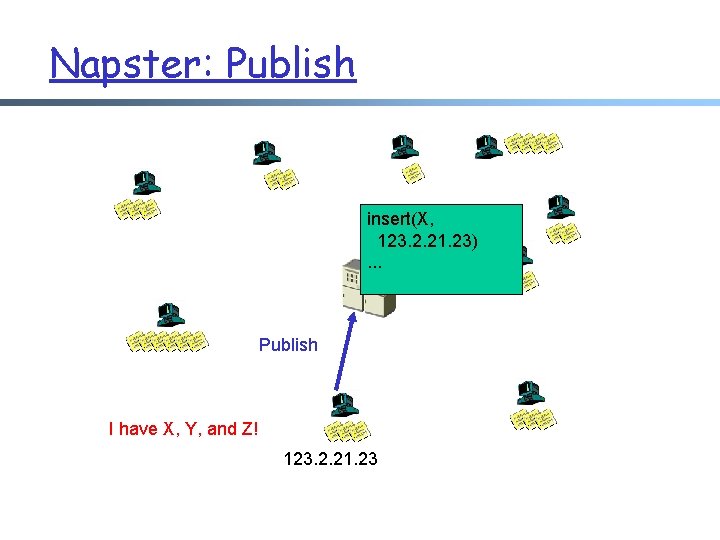

Napster: Publish
- Peer 123.2.21.23 publishes: "I have X, Y, and Z!"
- Server: insert(X, 123.2.21.23) ...

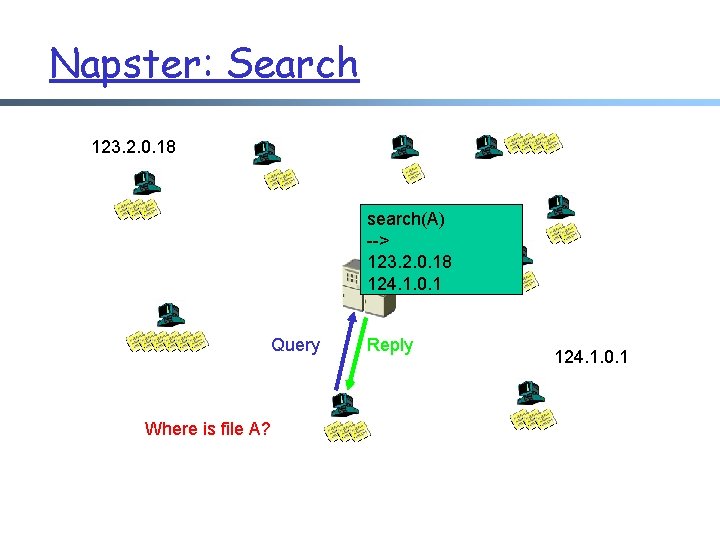

Napster: Search
- Query: "Where is file A?"
- Server: search(A) --> 123.2.0.18, 124.1.0.1
- Reply

(Figure: hosts 123.2.0.18 and 124.1.0.1)

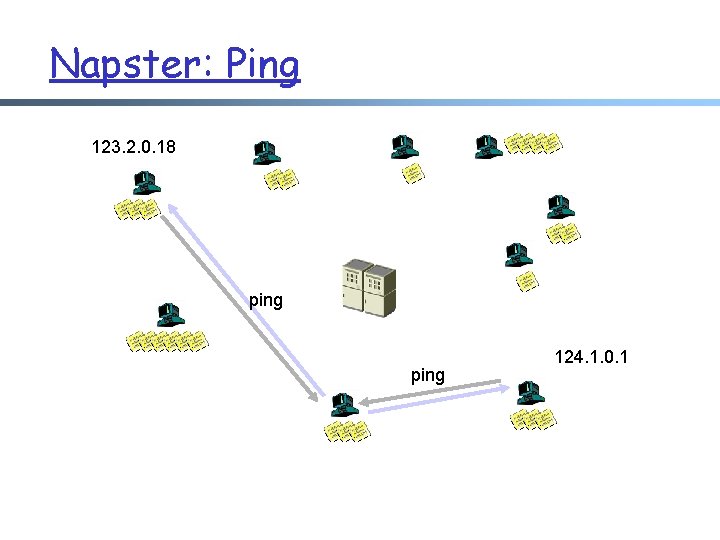

Napster: Ping

(Figure: ping; hosts 123.2.0.18 and 124.1.0.1)

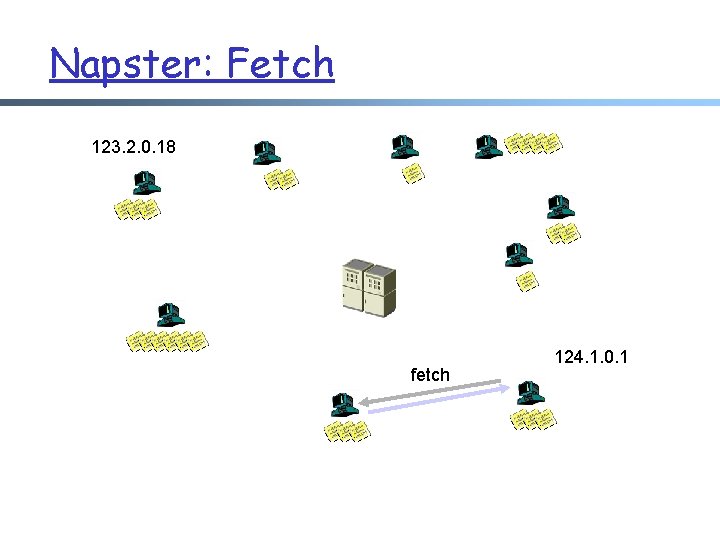

Napster: Fetch

(Figure: fetch; hosts 123.2.0.18 and 124.1.0.1)

Napster Messages
General packet format: [chunksize] [chunkinfo] [data...]
- CHUNKSIZE: Intel-endian 16-bit integer; size of [data...] in bytes
- CHUNKINFO: Intel-endian 16-bit integer (values in hex)
  - 00: login rejected
  - 02: login requested
  - 03: login accepted
  - 0D: challenge? (nuprin1715)
  - 2D: added to hotlist
  - 2E: browse error (user isn't online!)
  - 2F: user offline
  - 5B: whois query
  - 5C: whois result
  - 5D: whois: user is offline!
  - 69: list all channels
  - 6A: channel info
  - 90: join channel
  - 91: leave channel
  - …
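The packet layout above can be illustrated with a small encoder and decoder. This sketch assumes that "Intel-endian" means little-endian (low byte first); the `NapsterMessage` struct and the `encode`/`decode` function names are hypothetical, and the payload contents are treated as opaque bytes because the slide does not describe them.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// One message; field names are illustrative, not from the protocol.
struct NapsterMessage {
    std::uint16_t type;  // CHUNKINFO, e.g. 0x02 = login requested
    std::string data;    // opaque payload bytes
};

// Serialize as [chunksize][chunkinfo][data...].
// Assumption: "Intel-endian" means little-endian, so the low byte goes first.
std::vector<std::uint8_t> encode(const NapsterMessage& m) {
    assert(m.data.size() <= 0xFFFF && "payload must fit a 16-bit size field");
    const std::uint16_t size = static_cast<std::uint16_t>(m.data.size());
    std::vector<std::uint8_t> out;
    out.push_back(static_cast<std::uint8_t>(size & 0xFF));    // size, low byte
    out.push_back(static_cast<std::uint8_t>(size >> 8));      // size, high byte
    out.push_back(static_cast<std::uint8_t>(m.type & 0xFF));  // type, low byte
    out.push_back(static_cast<std::uint8_t>(m.type >> 8));    // type, high byte
    out.insert(out.end(), m.data.begin(), m.data.end());
    return out;
}

// Parse one message from the front of `buf`.
// Returns false if the buffer does not yet hold a complete message.
bool decode(const std::vector<std::uint8_t>& buf, NapsterMessage& m) {
    if (buf.size() < 4) return false;  // header incomplete
    const std::size_t size = static_cast<std::size_t>(buf[0] | (buf[1] << 8));
    if (buf.size() < 4 + size) return false;  // payload incomplete
    m.type = static_cast<std::uint16_t>(buf[2] | (buf[3] << 8));
    m.data.assign(buf.begin() + 4, buf.begin() + 4 + size);
    return true;
}
```

Returning `false` on a short buffer lets a caller keep reading from the TCP stream until a whole message has arrived, which is why the size field comes first.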

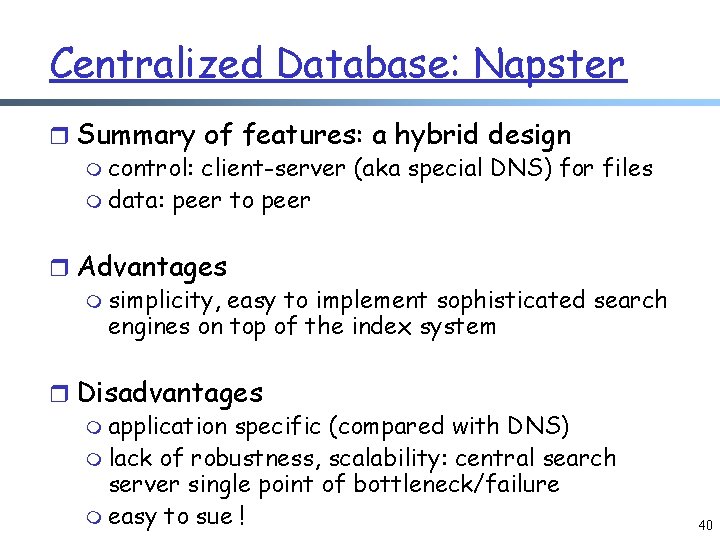

Centralized Database: Napster
- Summary of features: a hybrid design
  - control: client-server (aka special DNS) for files
  - data: peer to peer
- Advantages
  - simplicity; easy to implement sophisticated search engines on top of the index system
- Disadvantages
  - application specific (compared with DNS)
  - lack of robustness and scalability: the central search server is a single point of bottleneck/failure
  - easy to sue!

Variation: BitTorrent
- A global central index server is replaced by one tracker per file (called a swarm)
  - reduces centralization, but needs other means to locate trackers
- The bandwidth scalability management technique is more interesting
  - more later

Outline
- Recap
- P2P
  - the lookup problem
    - Napster (central query server; distributed data servers)
    - Gnutella

Gnutella
- On March 14th, 2000, J. Frankel and T. Pepper from AOL's Nullsoft division (also the developers of the popular Winamp mp3 player) released Gnutella
- Within hours, AOL pulled the plug on it
- It was quickly reverse-engineered, and soon many other clients became available: Bearshare, Morpheus, LimeWire, etc.

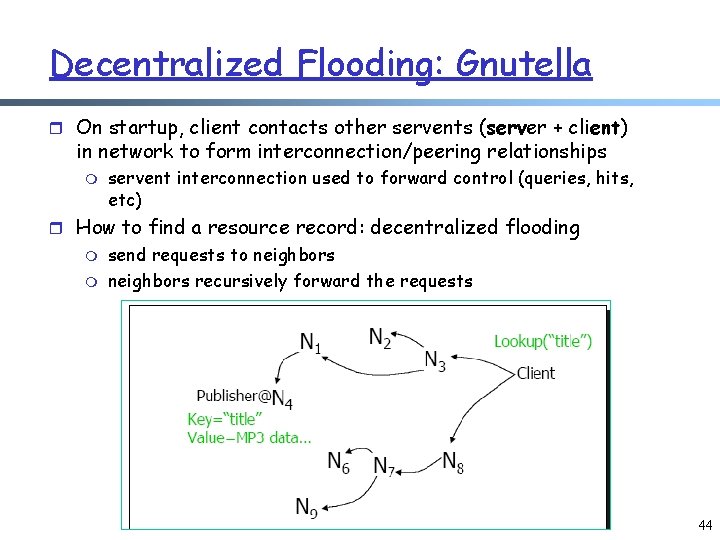

Decentralized Flooding: Gnutella
- On startup, a client contacts other servents (server + client) in the network to form interconnection/peering relationships
  - servent interconnections are used to forward control messages (queries, hits, etc.)
- How to find a resource record: decentralized flooding
  - send requests to neighbors
  - neighbors recursively forward the requests

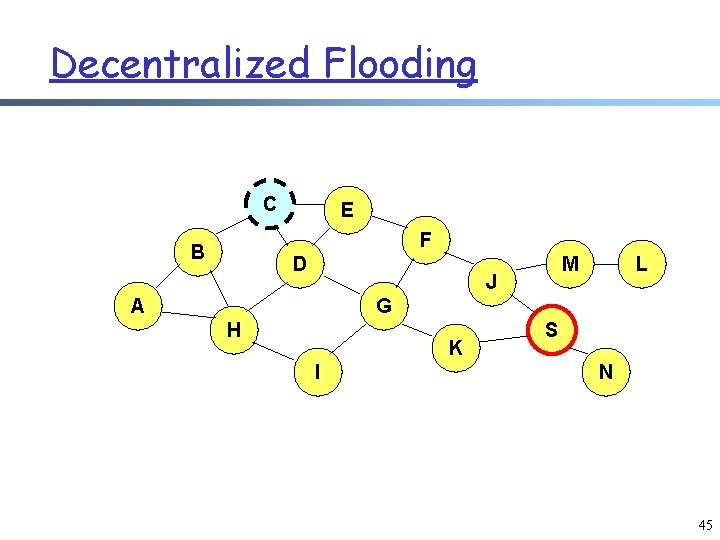

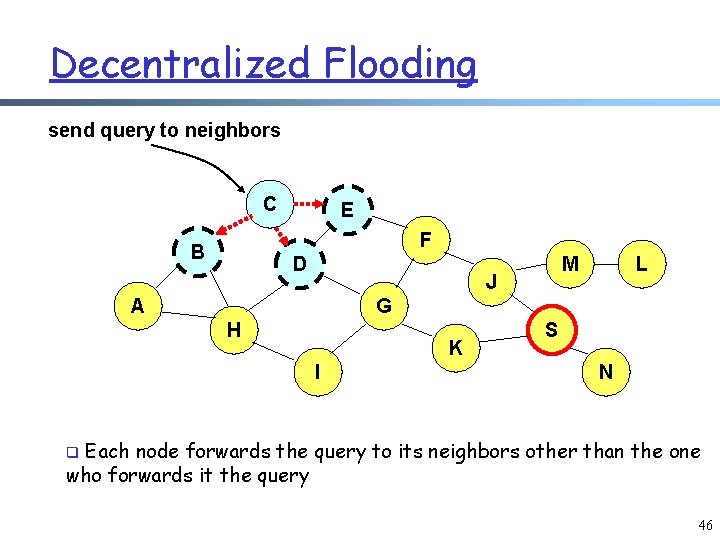

Decentralized Flooding

(Figure: overlay graph with nodes A to N and S)

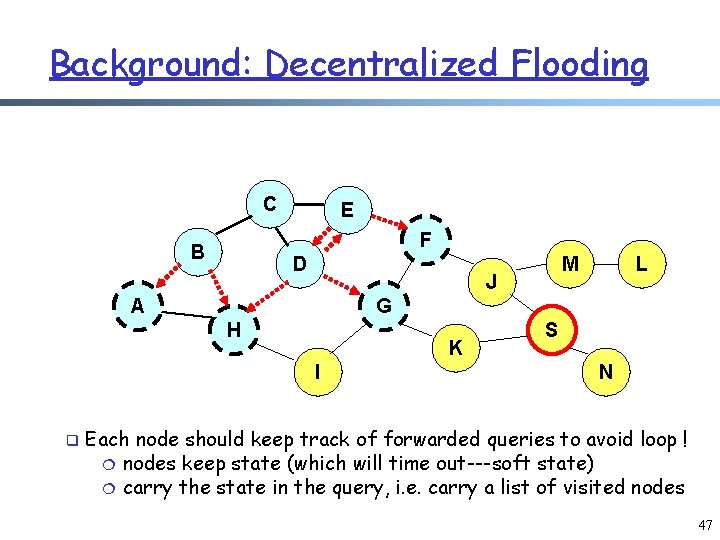

Decentralized Flooding
- Send query to neighbors
- Each node forwards the query to its neighbors other than the one that forwarded it the query

(Figure: overlay graph with nodes A to N and S; query q)

Background: Decentralized Flooding
- Each node should keep track of forwarded queries to avoid loops! Two options:
  - nodes keep state (which will time out: soft state)
  - carry the state in the query, i.e., carry a list of visited nodes

(Figure: overlay graph with nodes A to N and S; query q)

Decentralized Flooding: Gnutella
- Basic message header
  - Unique ID, TTL, Hops
- Message types
  - Ping: probes network for other servents
  - Pong: response to Ping; contains IP addr, # of files, etc.
  - Query: search criteria + speed requirement of servent
  - QueryHit: successful response to Query; contains addr + port to transfer from, speed of servent, etc.
- Ping and Query messages are flooded
- QueryHit and Pong messages follow the reverse path of the previous message

Advantages and Disadvantages of Gnutella
- Advantages:
  - totally decentralized, highly robust
- Disadvantages:
  - not scalable; the entire network can be swamped with flood requests
    - especially hard on slow clients; at some point broadcast traffic on Gnutella exceeded 56 kbps
  - to alleviate this problem, each request has a TTL to limit the scope
    - each query has an initial TTL, and each node forwarding it reduces it by one; if the TTL reaches 0, the query is dropped (consequence?)
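TTL-limited flooding with duplicate suppression can be simulated on a small graph. The sketch below is a simplified model, not the Gnutella wire protocol: the `Graph` alias and the `flood` function are hypothetical names, the "seen" set stands in for each node's soft state of already-forwarded queries, and message IDs, Hops counters, and reply routing are left out.

```cpp
#include <cassert>
#include <map>
#include <queue>
#include <set>
#include <string>
#include <utility>
#include <vector>

// Overlay graph: node -> list of neighbors (illustrative representation).
using Graph = std::map<std::string, std::vector<std::string>>;

// Flood a query from `source` and return the set of nodes that receive it.
// `ttl` is the initial TTL: every forwarding step uses up one unit, and a
// node holding the query with TTL 0 drops it instead of forwarding.
std::set<std::string> flood(const Graph& g, const std::string& source, int ttl) {
    assert(ttl >= 0);
    std::set<std::string> seen{source};  // nodes that already handled the query
    std::queue<std::pair<std::string, int>> pending;
    pending.push({source, ttl});
    while (!pending.empty()) {
        std::pair<std::string, int> cur = pending.front();
        pending.pop();
        if (cur.second == 0) continue;  // TTL exhausted: do not forward
        auto it = g.find(cur.first);
        if (it == g.end()) continue;    // node has no known neighbors
        for (const std::string& next : it->second) {
            // insert() fails for nodes that already saw the query, which
            // both avoids loops and skips the neighbor that sent it to us.
            if (seen.insert(next).second)
                pending.push({next, cur.second - 1});
        }
    }
    seen.erase(source);  // report only the nodes the query reached
    return seen;
}
```

In this model, a node more than `ttl` hops from the source never sees the query, so a file stored there is not found even though it exists; that is one consequence of bounding the flood.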

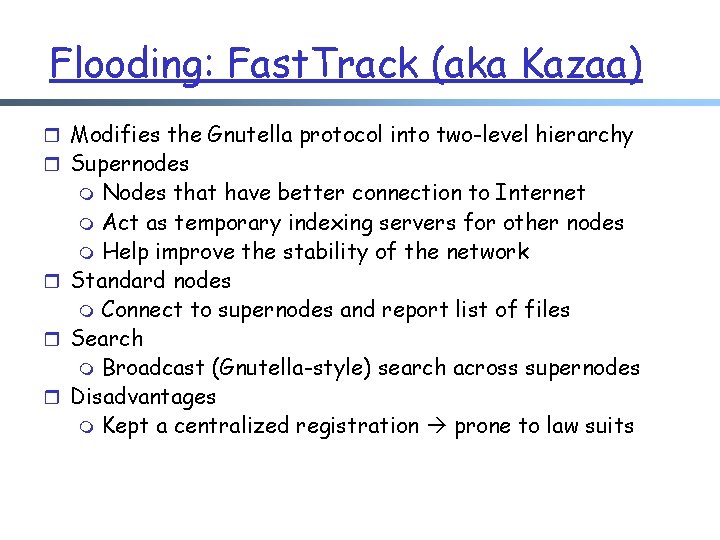

Flooding: FastTrack (aka Kazaa)
- Modifies the Gnutella protocol into a two-level hierarchy
- Supernodes
  - Nodes that have a better connection to the Internet
  - Act as temporary indexing servers for other nodes
  - Help improve the stability of the network
- Standard nodes
  - Connect to supernodes and report their list of files
- Search
  - Broadcast (Gnutella-style) search across supernodes
- Disadvantages
  - Kept a centralized registration, so it was prone to lawsuits

Optional Slides

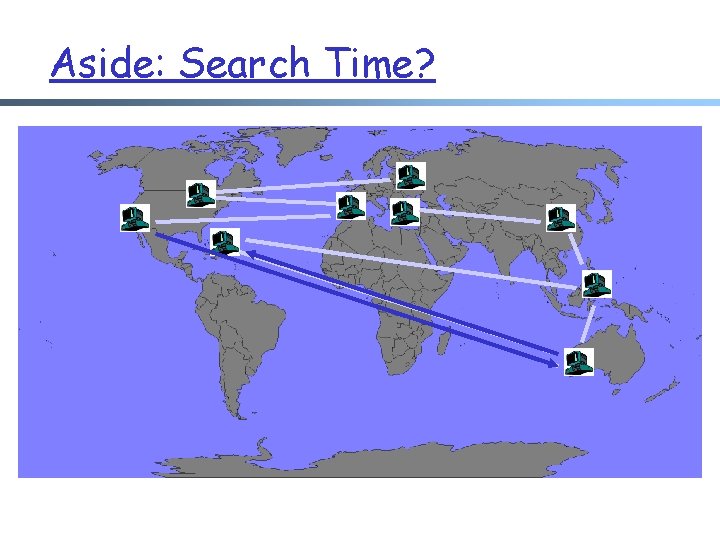

Aside: Search Time?

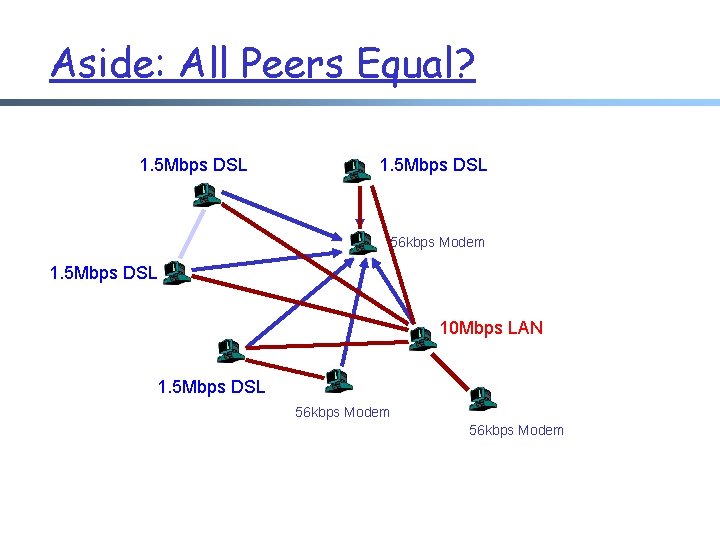

Aside: All Peers Equal?

(Figure: peers with different access links: 1.5 Mbps DSL, 56 kbps modem, 10 Mbps LAN)

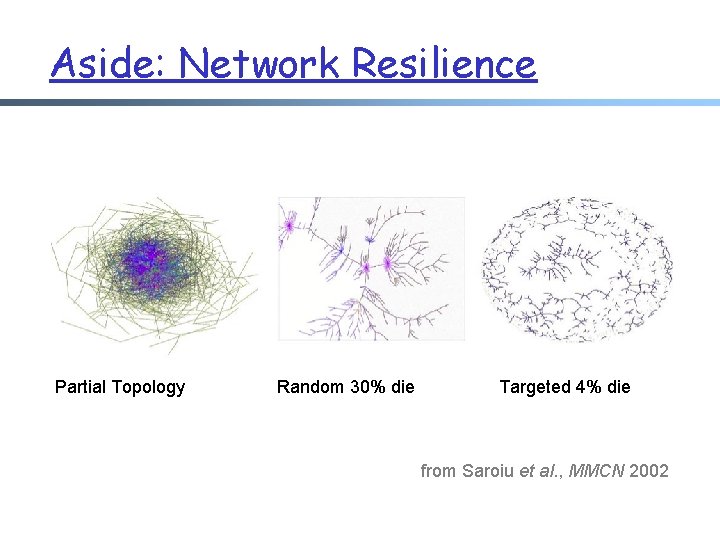

Aside: Network Resilience

(Figure panels: Partial Topology; Random 30% die; Targeted 4% die. From Saroiu et al., MMCN 2002)

Asynchronous Network Programming (C/C++)

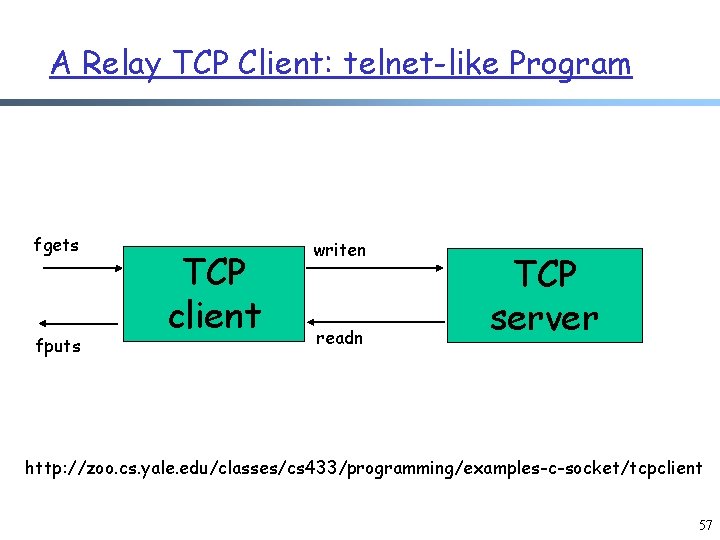

A Relay TCP Client: telnet-like Program

(Figure: stdin via fgets to the TCP client, then writen to the TCP server; from the TCP server via readn to the TCP client, then fputs to stdout)

http://zoo.cs.yale.edu/classes/cs433/programming/examples-c-socket/tcpclient

Method 1: Process and Thread
- Process
  - fork()
  - waitpid()
- Thread: lightweight process
  - pthread_create()
  - pthread_exit()

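pthread

    // Excerpt: sockfd, ss, debug, MAXLINE, err_quit() and the socketstream
    // class are not shown on the slide.
    void *copy_to(void *arg);

    int main() {
        char recvline[MAXLINE + 1];
        ss = new socketstream(sockfd);
        pthread_t tid;

        // A second thread copies stdin to the socket.
        if (pthread_create(&tid, NULL, copy_to, NULL)) {
            err_quit("pthread_create()");
        }

        // The main thread copies lines from the socket to stdout.
        while (ss->read_line(recvline, MAXLINE) > 0) {
            fprintf(stdout, "%s\n", recvline);
        }
    }

    void *copy_to(void *arg) {
        char sendline[MAXLINE];
        if (debug) cout << "Thread create()!" << endl;
        while (fgets(sendline, sizeof(sendline), stdin))
            ss->writen_socket(sendline, strlen(sendline));
        shutdown(sockfd, SHUT_WR);  // stdin reached EOF: close the write half
        if (debug) cout << "Thread done!" << endl;
        pthread_exit(0);
    }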

Method 2: Asynchronous I/O (Select)
- select: deal with blocking system calls
  - int select(int n, fd_set *readfds, fd_set *writefds, fd_set *exceptfds, struct timeval *timeout);
  - FD_CLR(int fd, fd_set *set);
  - FD_ZERO(fd_set *set);
  - FD_ISSET(int fd, fd_set *set);
  - FD_SET(int fd, fd_set *set);
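A minimal use of the select() call and FD_* macros listed above might look like the following POSIX sketch. The helper names `wait_readable` and `read_with_timeout` are made up for this example; only select(), the FD_ZERO/FD_SET/FD_ISSET macros, and read() are standard. A real server would place many descriptors in the set and loop, whereas this sketch watches a single descriptor to keep the mechanics visible.

```cpp
#include <cassert>
#include <sys/select.h>
#include <sys/time.h>
#include <sys/types.h>
#include <unistd.h>

// Wait up to `timeout_ms` for `fd` to become readable.
// Returns true if a read on `fd` would not block; false on timeout or error.
// (Hypothetical helper name; not part of any library.)
bool wait_readable(int fd, int timeout_ms) {
    assert(fd >= 0 && fd < FD_SETSIZE);

    fd_set readfds;
    FD_ZERO(&readfds);     // start from an empty set
    FD_SET(fd, &readfds);  // register interest in `fd`

    struct timeval tv;
    tv.tv_sec = timeout_ms / 1000;
    tv.tv_usec = (timeout_ms % 1000) * 1000;

    // The first argument is the highest descriptor in any set, plus one.
    int ready = select(fd + 1, &readfds, nullptr, nullptr, &tv);
    return ready > 0 && FD_ISSET(fd, &readfds);
}

// Read at most `len` bytes if data arrives in time; returns -1 on timeout.
// (Hypothetical helper name; not part of any library.)
ssize_t read_with_timeout(int fd, char* buf, size_t len, int timeout_ms) {
    if (!wait_readable(fd, timeout_ms)) return -1;
    return read(fd, buf, len);  // will not block: select reported readiness
}
```

The same pattern extends to sockets: add the listening socket and every connected socket to the read set, then handle whichever descriptors FD_ISSET reports after select() returns.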

Method 3: Signal and Select
- signal: events such as timeout

Examples of Network Programming
- Library to make life easier
- Four design examples
  - TCP client
  - TCP server using select
  - TCP server using process and thread
  - Reliable UDP
- Warning: It will be hard to listen to me reading through the code. Read the code.

Example 2: A Concurrent TCP Server Using Process or Thread
- Get a line, and echo it back
- Use select()
- For how to use process or thread, see later
- Check the code at: http://zoo.cs.yale.edu/classes/cs433/programming/examples-c-socket/tcpserver
- Are there potential denial of service problems with the code?

Example 3: A Concurrent HTTP TCP Server Using Process/Thread
- Use process-per-request or thread-per-request
- Check the code at: http://zoo.cs.yale.edu/classes/cs433/programming/examples-c-socket/simple_httpd
