Network Application Performance Deke Kassabian and Shumon Huque

ISC Networking & Telecommunications, February 2002 - Super Users Group

Introduction: what this talk is all about
- Network performance on the local area network and around campus
- Network performance in the wide area and for advanced applications
- Goal: acceptable performance and a positive user experience

Who needs to be involved?
- End users
- Researchers
- Local support providers
- Application developers
- System programmers/administrators
- Network engineers

What is performance?
“Performance” might mean:
- Elapsed time for file transfers
- Packet loss over a period of time
- Percentage of data needing retransmission
- Dropouts in video or audio
- A subjective “feeling” that feedback is “on time”

Throughput is the amount of data that arrives per unit time. “Goodput” is the amount of data that arrives per unit time, minus the amount of that data that was retransmitted.
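A minimal sketch of the two definitions above. The transfer figures are hypothetical and only illustrate how retransmitted bytes count toward throughput but not toward goodput.

```python
def throughput_bps(bytes_arrived: int, seconds: float) -> float:
    """Everything that arrived, retransmitted copies included, in bits/sec."""
    return bytes_arrived * 8 / seconds


def goodput_bps(bytes_arrived: int, bytes_retransmitted: int, seconds: float) -> float:
    """Only the data that did not have to be sent again, in bits/sec."""
    return (bytes_arrived - bytes_retransmitted) * 8 / seconds


# Hypothetical transfer: 10 MB arrived in 8 s, of which 0.5 MB were retransmissions.
print(throughput_bps(10_000_000, 8.0))         # 10000000.0
print(goodput_bps(10_000_000, 500_000, 8.0))   # 9500000.0
```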

Delay is a time measurement for data transfer:
- One-way network delay for a bit in transit (NIC to NIC)
- Delay for a total transfer (stack to stack)
- Time from a mouse click to a screen message that the “operation is complete” (eyeball to eyeball)

Jitter: variation in delay over time
- A non-issue for non-real-time applications
- May be problematic for applications with real-time interactive requirements, such as video conferencing
- End-to-end delay of 70 ms +/- 5 ms -> low jitter
- End-to-end delay of 35 ms +/- 20 ms -> higher jitter
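One common way to put a number on jitter is the running estimator used by RTP (RFC 3550); it is swapped in here as an illustration and is not something the talk prescribes. The delay samples below are made up to resemble the two cases above.

```python
def interarrival_jitter(transit_ms: list[float]) -> float:
    """Smoothed jitter estimate in the style of RTP (RFC 3550).

    transit_ms[i] is the arrival time minus the send timestamp of packet i.
    Only differences between consecutive transit times are used, so a fixed
    clock offset between sender and receiver cancels out.
    """
    jitter = 0.0
    for prev, cur in zip(transit_ms, transit_ms[1:]):
        jitter += (abs(cur - prev) - jitter) / 16   # exponential average, gain 1/16
    return jitter


steady = [70, 72, 68, 71, 69, 70, 74, 66]   # roughly 70 ms +/- 5 ms
bursty = [35, 55, 15, 50, 20, 45, 30, 15]   # roughly 35 ms +/- 20 ms
print(interarrival_jitter(steady) < interarrival_jitter(bursty))  # True
```

Note that the second stream has the lower average delay but the higher jitter, which is exactly why the two metrics are tracked separately.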

Some Contributors to Delay
- Slow networks
- Slow computers
- Poor TCP/IP stacks on end-stations
- Poorly written applications

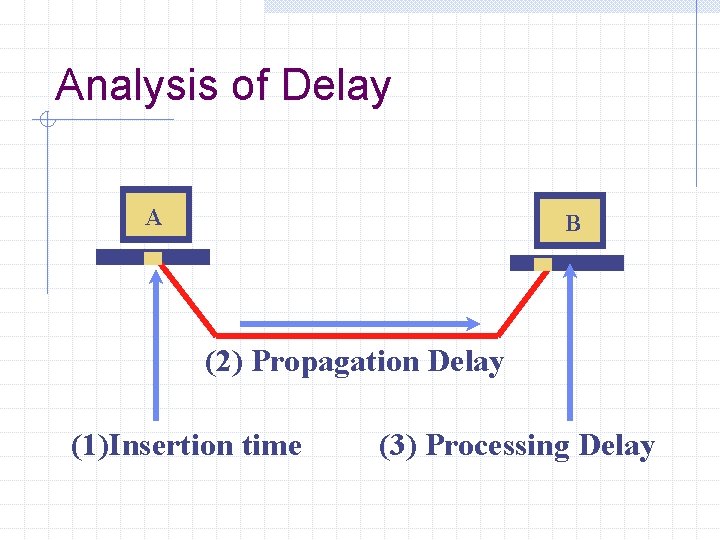

Analysis of Delay
Sending data from host A to host B incurs (1) insertion time, (2) propagation delay and (3) processing delay.

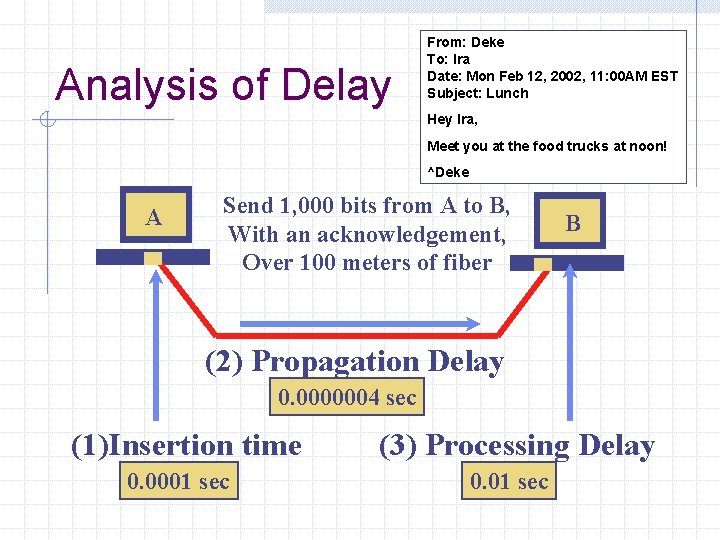

Analysis of Delay
Example: host A sends a short email to host B (From: Deke, To: Ira, Date: Mon Feb 12, 2002, 11:00 AM EST, Subject: Lunch. “Hey Ira, Meet you at the food trucks at noon! ^Deke”).
Send 1,000 bits from A to B, with an acknowledgement, over 100 meters of fiber:
- (1) Insertion time: 0.0001 sec
- (2) Propagation delay: 0.0000004 sec
- (3) Processing delay: 0.01 sec

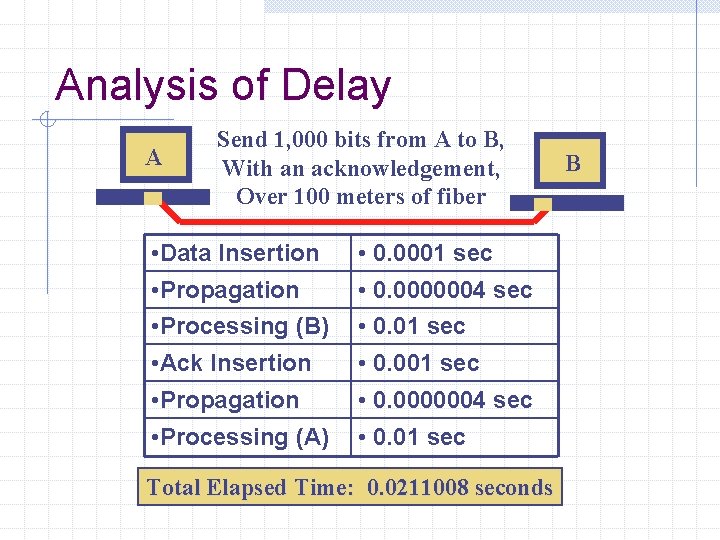

Analysis of Delay
Send 1,000 bits from A to B, with an acknowledgement, over 100 meters of fiber:
- Data insertion: 0.0001 sec
- Propagation: 0.0000004 sec
- Processing (B): 0.01 sec
- Ack insertion: 0.001 sec
- Propagation: 0.0000004 sec
- Processing (A): 0.01 sec

Total elapsed time: 0.0211008 seconds

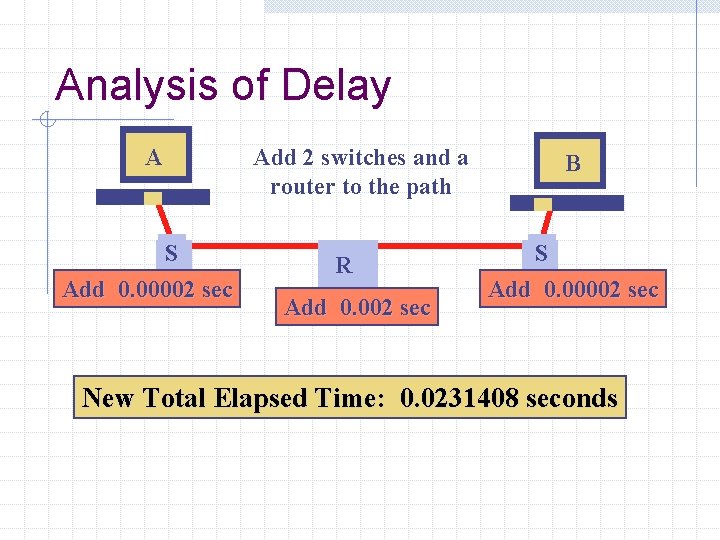

Analysis of Delay
Add 2 switches and a router to the path (A - S - R - S - B):
- Each switch (S): add 0.00002 sec
- Router (R): add 0.002 sec

New total elapsed time: 0.0231408 seconds
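The arithmetic on the last three slides can be reproduced as a simple delay budget. This is a sketch using the slides' example figures; as on the slide, the extra switch and router delays are counted once rather than on both the data and the ACK trips.

```python
# Delay figures (seconds) from the worked example on the slides.
BASE_PATH = {
    "data insertion": 0.0001,
    "propagation A->B": 0.0000004,
    "processing at B": 0.01,
    "ack insertion": 0.001,
    "propagation B->A": 0.0000004,
    "processing at A": 0.01,
}
EXTRA_HOPS = {"switch 1": 0.00002, "router": 0.002, "switch 2": 0.00002}


def total_delay(*budgets: dict[str, float]) -> float:
    """Add up every component of one or more delay budgets."""
    return sum(sum(budget.values()) for budget in budgets)


base = total_delay(BASE_PATH)
print(round(base, 7))                                  # 0.0211008
print(round(total_delay(BASE_PATH, EXTRA_HOPS), 7))    # 0.0231408
processing = BASE_PATH["processing at A"] + BASE_PATH["processing at B"]
print(f"processing share: {processing / base:.0%}")    # processing share: 95%
```

The last line makes the summary point concrete: in this example, end-station processing accounts for almost all of the elapsed time, while propagation is negligible.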

Summary of Delay Analysis
- Propagation delay is of little consequence in LANs; it matters more on long-distance WAN paths, especially high-bandwidth ones.
- Queueing delays are rarely major contributors.
- Processing delay is almost always an issue.
- Retransmission delays can be major contributors to poor network performance.

Speaker Change

What I’m going to talk about
- More on delay contributors, their causes and how to minimize them
- Protocol stack behavior and tuning
- Quality of Service (QoS)
- Performance measurement tools
- Operating system tuning examples
- General comments about things you can do

Recap: Delay Contributors
- Processing delay
- Retransmission delay
- Queueing delay
- Propagation delay

Processing Delay
The time it takes to process a packet at an end station or network node. It depends on:
- Network protocol complexity, application code, computational power at the node, NIC efficiency, etc.

Reduced through:
- Endstation tuning
- Application tuning

Endstation Tuning
- Good network hardware/NICs
- Correct speed/duplex settings
  - Auto-negotiation problems
- Sufficient CPU
- Sufficient memory
- Network protocol stack tuning
  - Path MTU discovery, jumbo frames, TCP window scaling, SACK, etc.

Ethernet Bandwidth/Duplex Mode
- Ethernet bandwidth: 10, 100, 1000 Mbps
  - 10 Gigabit Ethernet soon
- Duplex modes: half-duplex, full-duplex
- Auto-negotiation mismatch detection:
  - CRC/alignment errors
  - Late collisions
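The two mismatch symptoms above usually show up on opposite ends of the link. The helper below is a hypothetical sketch that interprets increases in an interface's error counters over some interval; how you read those counters (switch CLI, SNMP, netstat) is left out, and the counter values are made up.

```python
def duplex_mismatch_hint(late_collisions: int, crc_align_errors: int) -> str:
    """Interpret interface error-counter increases seen over the same interval.

    In a duplex mismatch the half-duplex end detects late collisions, while
    the full-duplex end sees CRC/alignment errors from frames the other end
    abandoned mid-transmission. CRC errors alone can also mean bad cabling.
    """
    if late_collisions > 0:
        return "late collisions: this end is probably the half-duplex side of a mismatch"
    if crc_align_errors > 0:
        return "CRC/alignment errors: possible full-duplex side of a mismatch, or bad cabling"
    return "no duplex-mismatch symptoms in these counters"


print(duplex_mismatch_hint(late_collisions=0, crc_align_errors=412))
```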

Application Tuning
- Optimize access to host resources
- Pay attention to disk I/O issues
- Pay attention to bus and memory issues
- Know what concurrent activity may be interfering with the app’s performance
- Tune application send/receive buffers
- Design the application protocol efficiently
- Give the end user positive feedback
  - Subjective perception of performance
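Tuning application send/receive buffers usually means requesting them per socket. A minimal sketch with the standard socket options follows; the 900,000-byte request is only an example size, and the kernel is free to adjust or cap what you ask for.

```python
import socket


def open_tuned_socket(bufsize: int) -> tuple[socket.socket, int, int]:
    """Create a TCP socket and request send/receive buffers of `bufsize` bytes.

    The kernel may adjust the request: Linux, for example, doubles it for its
    own bookkeeping and caps it at net.core.wmem_max / net.core.rmem_max.
    Set the buffers before connect()/listen() so TCP can take them into
    account (e.g. when choosing its window scale factor).
    """
    s = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    s.setsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF, bufsize)
    s.setsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF, bufsize)
    snd = s.getsockopt(socket.SOL_SOCKET, socket.SO_SNDBUF)
    rcv = s.getsockopt(socket.SOL_SOCKET, socket.SO_RCVBUF)
    return s, snd, rcv


sock, snd, rcv = open_tuned_socket(900_000)   # e.g. sized to a 900 KB BW*Delay product
print(f"kernel granted SO_SNDBUF={snd}, SO_RCVBUF={rcv}")
sock.close()
```

Always read the values back: if they are smaller than requested, the system-wide ceilings (see the OS tuning examples) need raising too.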

Retransmission Delay
Causes:
- Packet loss
  - Bad hardware: NICs, switches, routers, transmission lines
- Congestion and queue drops
- Out-of-order packet delivery
  - May be considered packet loss from the application’s perspective if it can’t re-order packets
- Untimely delivery (delay)
  - Some apps may consider a packet lost if they don’t receive it in a timely fashion

Retransmission Delay (cont)
Mitigating retransmission delay:
- Ensure working equipment
  - Although some packet loss is unavoidable; e.g. most transmission lines have a nonzero BER (Bit Error Rate)
- Reduce the time to recover from packet loss
  - E.g. a highly tuned network stack with more aggressive retransmission and recovery behavior
- Forward Error Correction (FEC)
  - Very useful for time/delay-sensitive applications
  - Also useful when it’s expensive to retransmit data
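The simplest form of FEC is a single XOR parity packet per group of equal-length packets: the receiver can rebuild any one lost packet in the group without waiting for a retransmission. This is a sketch of that generic technique, not a specific product's scheme; stronger codes (e.g. Reed-Solomon) can repair more losses at higher cost.

```python
from functools import reduce
from typing import Optional


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def make_parity(group: list[bytes]) -> bytes:
    """One parity packet protecting a group of equal-length packets."""
    return reduce(xor_bytes, group)


def recover(received: list[Optional[bytes]], parity: bytes) -> list[bytes]:
    """Rebuild at most one lost packet (marked None) without a retransmission."""
    missing = [i for i, pkt in enumerate(received) if pkt is None]
    if len(missing) > 1:
        raise ValueError("XOR parity can repair only one loss per group")
    repaired = list(received)
    if missing:
        present = [pkt for pkt in received if pkt is not None]
        repaired[missing[0]] = reduce(xor_bytes, present, parity)
    return repaired


group = [b"pkt1", b"pkt2", b"pkt3"]
parity = make_parity(group)
print(recover([b"pkt1", None, b"pkt3"], parity))  # [b'pkt1', b'pkt2', b'pkt3']
```

The trade-off is bandwidth: one parity packet per group of N adds 1/N overhead whether or not anything is lost.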

Bit Errors on WAN Paths
Bit Error Rate (BER) specs for networking interfaces/circuits may not be low enough:
- 1 bit error in 10 billion bits
- Assuming 1500-byte (12,000-bit) packets, the packet error rate is roughly 1 in 1 million
- Over 10 hops => roughly a 1 in 100,000 packet drop rate
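The slide's round numbers follow from a one-line probability calculation. This sketch assumes bit errors are independent and that every hop has the same BER, which real links need not satisfy.

```python
import math


def packet_error_rate(ber: float, packet_bytes: int = 1500, hops: int = 1) -> float:
    """Probability that a packet suffers at least one bit error somewhere on the path.

    Assumes independent bit errors at the same BER on every hop. Computed as
    1 - (1 - ber)**bits, using log1p/expm1 to stay accurate for tiny BERs.
    """
    bits = packet_bytes * 8 * hops
    return -math.expm1(bits * math.log1p(-ber))


one_hop = packet_error_rate(1e-10)             # about 1.2e-6: roughly 1 in 830,000
ten_hops = packet_error_rate(1e-10, hops=10)   # about 1.2e-5: roughly 1 in 83,000
print(f"1 in {1 / one_hop:,.0f} per hop, 1 in {1 / ten_hops:,.0f} over 10 hops")
```

The same function shows why bit errors hurt jumbo frames more: a 9000-byte packet has six times the bits, so roughly six times the chance of being hit.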

Queueing Delay
Long queueing delays could be caused by:
- Lame hardware (switches/routers)
  - Head-of-line blocking
  - Insufficient switching fabric
  - Insufficient horsepower
- Unfavorable QoS treatment

Queueing Delay (cont)
How to reduce it:
- Use good network hardware
- Improve the network architecture
  - Reduce the number of switching/routing elements on the network path
  - Richer network topology, more interconnections
  - The end user may not have influence over the architecture
- Employ preferential queue scheduling algorithms
  - Will discuss later in the QoS section of the talk
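Strict priority queueing is the simplest preferential scheduling algorithm: a delay-sensitive packet never waits behind bulk traffic. The class below is a toy model of the idea, not any router's implementation; the packet names are hypothetical.

```python
from collections import deque


class StrictPriorityScheduler:
    """Preferential queue scheduling: always serve the highest-priority
    non-empty queue (priority 0 is highest)."""

    def __init__(self, levels: int):
        self.queues = [deque() for _ in range(levels)]

    def enqueue(self, packet, priority: int) -> None:
        self.queues[priority].append(packet)

    def dequeue(self):
        for queue in self.queues:
            if queue:
                return queue.popleft()
        return None   # nothing waiting


sched = StrictPriorityScheduler(levels=2)
sched.enqueue("bulk-1", priority=1)
sched.enqueue("bulk-2", priority=1)
sched.enqueue("voice-1", priority=0)
print([sched.dequeue() for _ in range(3)])  # ['voice-1', 'bulk-1', 'bulk-2']
```

Strict priority can starve the lower queues if high-priority traffic is heavy, which is why weighted schemes (e.g. weighted round robin or weighted fair queueing) are often used instead.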

Propagation Delay
Restricted by the speed of light through the transmission medium:
- Can’t be changed, but rarely a concern in the campus/LAN environment
- A concern on long-distance (WAN) paths, but
  - Some steps can be taken to increase performance (throughput) on such paths
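Propagation delay is just distance divided by signal speed. The sketch assumes about 2 x 10^8 m/s in fiber (roughly two-thirds of c); the earlier delay example's 0.0000004 sec for 100 m corresponds to a slightly higher assumed speed, and either way the LAN figure is negligible. The 4,000 km distance is hypothetical.

```python
SPEED_IN_FIBER = 2.0e8   # metres/second; assumption: about two-thirds of c


def propagation_delay_s(distance_m: float, speed_m_per_s: float = SPEED_IN_FIBER) -> float:
    """One-way propagation delay: distance divided by signal speed."""
    return distance_m / speed_m_per_s


print(propagation_delay_s(100))         # 5e-07  (100 m campus fiber run)
print(propagation_delay_s(4_000_000))   # 0.02   (hypothetical 4,000 km WAN path, one way)
```

A round trip doubles the WAN figure to about 40 ms before any processing or queueing, which is why the wide-area tuning later in the talk focuses on keeping the pipe full rather than on reducing this delay.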

Other Delays and Bottlenecks
Intermediary systems:
- DNS
- Routing issues
  - Route availability, asymmetric routing, routing protocol stability and convergence time
- Firewalls
- Tunnels (IPsec VPNs, IP-in-IP tunnels, etc.)
  - Router hardware may be poor at encapsulation/decapsulation

Throughput
Influenced by a number of variables:
- All the delay factors we discussed
- Window size (for TCP)
- Bottleneck link capacity
- End station processing and buffering capacity
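Two of these variables combine into a simple upper bound for a single TCP connection: one window per round trip, and never more than the bottleneck link. This is a sketch; the path figures are hypothetical (they match the Penn-to-Stanford example used later in the talk).

```python
def max_tcp_throughput_bps(window_bytes: int, rtt_s: float, bottleneck_bps: float) -> float:
    """Upper bound for a single TCP connection.

    At most one window of unacknowledged data can be in flight per round
    trip, and no connection can go faster than the slowest link on the path.
    Ignores loss, which only lowers the result.
    """
    return min(window_bytes * 8 / rtt_s, bottleneck_bps)


# Hypothetical path: 64 KB window (no window scaling), 60 ms RTT, 120 Mbps bottleneck.
limit = max_tcp_throughput_bps(65_535, 0.060, 120e6)
print(f"{limit / 1e6:.1f} Mbps")   # 8.7 Mbps
```

On this path the window, not the 120 Mbps link, is the bottleneck, which motivates the window sizing and scaling discussion that follows.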

What I’m going to talk about next
- Brief description of the TCP/IP protocols
- How to improve TCP/IP performance

Transport: TCP vs UDP
Network apps use 2 main transport protocols:
- TCP (Transmission Control Protocol)
  - Connection-oriented (telephone-like service)
  - Reliable: retransmits lost data and delivers it in order
  - Flow control
  - Examples: Web (HTTP), email (SMTP, IMAP)
- UDP (User Datagram Protocol)
  - Connectionless (postal-system-like)
  - Unreliable: no guarantee of delivery
  - Examples: DNS, various types of streaming media
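The difference shows up directly in the sockets API. This loopback-only sketch contrasts a UDP datagram (no setup, one message in, one out) with a TCP byte stream (handshake first, no message boundaries). It is single-threaded, so it is only suitable for small payloads.

```python
import socket


def udp_roundtrip(payload: bytes) -> bytes:
    """UDP: no connection setup; each sendto() is one independent datagram."""
    rx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    rx.bind(("127.0.0.1", 0))              # let the OS choose a free port
    tx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    tx.sendto(payload, rx.getsockname())   # no handshake, no delivery guarantee
    data, _ = rx.recvfrom(65535)           # exactly one datagram comes back out
    tx.close()
    rx.close()
    return data


def tcp_roundtrip(payload: bytes) -> bytes:
    """TCP: a connection (three-way handshake) must exist before data flows."""
    srv = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    srv.bind(("127.0.0.1", 0))
    srv.listen(1)
    cli = socket.create_connection(srv.getsockname())
    conn, _ = srv.accept()
    cli.sendall(payload)
    cli.shutdown(socket.SHUT_WR)           # mark the end of the byte stream
    chunks = []
    while chunk := conn.recv(4096):        # a stream has no message boundaries
        chunks.append(chunk)
    for s in (conn, cli, srv):
        s.close()
    return b"".join(chunks)


print(udp_roundtrip(b"hello"), tcp_roundtrip(b"hello"))
```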

When to use TCP or UDP?
- Many common apps use TCP because it’s convenient
  - TCP handles reliable delivery, retransmission of lost packets, re-ordering, flow control, etc.
- You may want to use UDP if:
  - Delays introduced by ACKs are unacceptable
  - TCP congestion avoidance and flow control measures are unsuitable for your application
  - You want more control over how your data is transported over the network
  - Highly delay/jitter-sensitive apps often use UDP
    - Audio-video conferencing, etc.
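Choosing UDP means the application takes over whatever bookkeeping it still needs. A common minimum is a sequence number on each datagram so the receiver can notice loss and reordering; the 4-byte header layout below is a hypothetical choice for illustration, not a standard format.

```python
import struct

SEQ_HEADER = struct.Struct("!I")   # hypothetical 4-byte sequence number, network byte order


def frame(seq: int, payload: bytes) -> bytes:
    """Prefix a payload with its sequence number before sendto()."""
    return SEQ_HEADER.pack(seq) + payload


class LossTracker:
    """Receiver-side bookkeeping an application takes on when it uses UDP."""

    def __init__(self) -> None:
        self.expected = 0      # next sequence number we hope to see
        self.lost = set()      # gaps we have given up on (for now)
        self.late = 0          # reordered or duplicate arrivals

    def receive(self, datagram: bytes) -> bytes:
        (seq,) = SEQ_HEADER.unpack_from(datagram)
        if seq == self.expected:
            self.expected += 1
        elif seq > self.expected:            # a gap: treat the skipped numbers as lost
            self.lost.update(range(self.expected, seq))
            self.expected = seq + 1
        else:                                # arrived after we moved on
            self.lost.discard(seq)
            self.late += 1
        return datagram[SEQ_HEADER.size:]


tracker = LossTracker()
for seq in (0, 1, 3, 2, 5):                  # 2 arrives out of order, 4 never arrives
    tracker.receive(frame(seq, b"sample"))
print(sorted(tracker.lost), tracker.late)    # [4] 1
```

What to do about a gap is then the application's decision: a conferencing app might simply conceal the missing audio sample instead of waiting for it.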

Network Stack Tuning
- Jumbo frames
- Path MTU discovery
- TCP extensions:
  - Window scaling (RFC 1323)
  - Fast retransmit
  - Fast recovery
  - Selective acknowledgements

Jumbo Frames
- Increase the MTU used at the link layer, allowing larger maximum-sized frames (the default Ethernet MTU is 1500 bytes)
- Increase network throughput
- Fewer, larger frames mean:
  - Fewer CPU interrupts and less processing overhead for a given data transfer size
- Some studies have shown Gigabit Ethernet using 9000-byte jumbo frames provided 50% more throughput and used 50% less CPU!
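The “fewer frames” argument is easy to quantify. This sketch counts full-sized TCP segments for a 1 MB transfer, assuming 40 bytes of IPv4 + TCP headers with no options; per-frame costs such as interrupts scale with these counts, ignoring interrupt coalescing.

```python
import math

IP_TCP_HEADERS = 40   # assumption: IPv4 + TCP headers with no options


def frames_needed(transfer_bytes: int, mtu: int) -> int:
    """Full-sized TCP segments needed to carry transfer_bytes at this MTU."""
    mss = mtu - IP_TCP_HEADERS
    return math.ceil(transfer_bytes / mss)


for mtu in (1500, 9000):
    print(mtu, frames_needed(1_000_000, mtu))
# 1500 685
# 9000 112
```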

Jumbo Frames (cont)
Pitfalls:
- Not widely deployed yet
  - Many network devices may not be capable of jumbo frames (they’ll look like bad frames)
  - May cause excessive IP fragmentation
  - BER may have more impact on jumbo frames
    - E.g. a single bit error can cause a large amount of data to be lost and retransmitted
- May have a negative impact on host processing requirements:
  - More memory for buffering, newer NICs

Path MTU Discovery
- MTU (Maximum Transmission Unit)
  - Largest packet (frame payload) allowed on the link
- Path MTU
  - Minimum MTU on any network in the path between 2 hosts
- IP fragmentation and reassembly
- Path MTU discovery
- MSS (Maximum Segment Size)
- What happens without PMTU discovery?
  - Might select the wrong MTU and cause fragmentation
  - Suboptimal selection of TCP MSS (often the conservative 536-byte default)

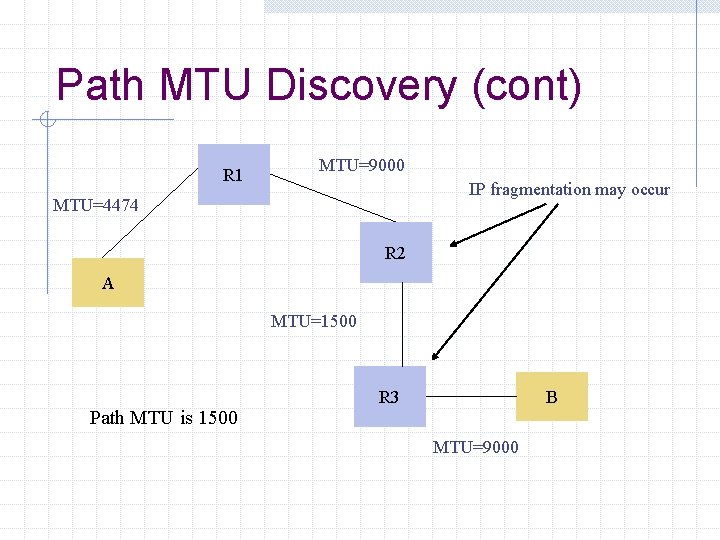

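Path MTU Discovery (cont)
Example: hosts A and B connected through routers R1, R2 and R3, over links with MTUs of 9000, 4474, 1500 and 9000 bytes. IP fragmentation may occur where a packet enters a smaller-MTU link; the path MTU is 1500 bytes.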
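A small simulation of how Path MTU discovery converges (the RFC 1191 mechanism), assuming the links run from A to B in the order 9000, 4474, 1500, 9000 bytes and that each router reports its next-hop MTU. If firewalls block the ICMP “fragmentation needed” messages, the probes are silently dropped instead and the connection can stall.

```python
def discover_path_mtu(link_mtus: list[int]) -> tuple[int, int]:
    """Simulate Path MTU discovery in the style of RFC 1191.

    The sender transmits with the Don't Fragment bit set, starting at its own
    link's MTU. The first router whose next link is smaller drops the packet
    and reports that MTU in an ICMP "fragmentation needed" message; the sender
    lowers its estimate and tries again. Returns (path MTU, probes sent).
    """
    estimate = link_mtus[0]
    probes = 0
    while True:
        probes += 1
        bottleneck = next((mtu for mtu in link_mtus if mtu < estimate), None)
        if bottleneck is None:       # the probe made it all the way to B
            return estimate, probes
        estimate = bottleneck


def tcp_mss(mtu: int) -> int:
    return mtu - 40                  # assumption: 20-byte IPv4 + 20-byte TCP headers


pmtu, probes = discover_path_mtu([9000, 4474, 1500, 9000])
print(pmtu, probes, tcp_mss(pmtu))   # 1500 3 1460
```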

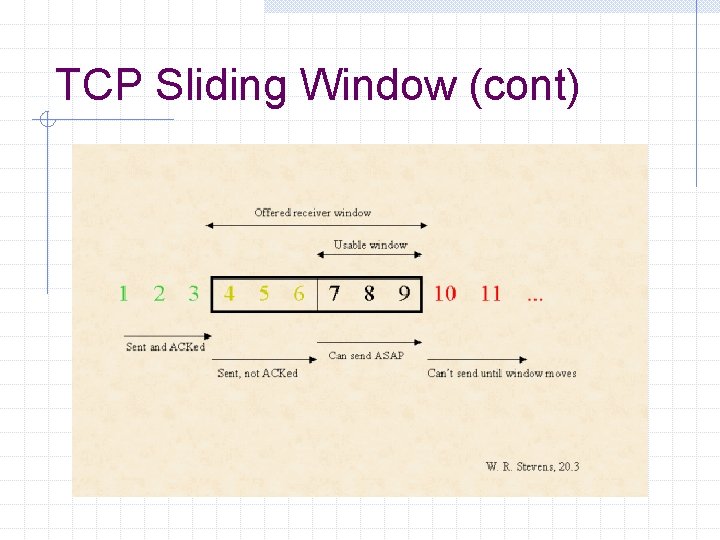

TCP Sliding Window
TCP uses a flow control method called “Sliding Window”:
- Allows the sender to send multiple segments before it has to wait for an ACK
- Results in a faster transfer rate because the sender doesn’t have to wait for an ACK each time a packet is sent
- The receiver advertises a window size that tells the sender how much data it can send without waiting for an ACK

TCP Sliding Window (cont)
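A toy model of the sender side of a sliding window, counted in whole segments rather than bytes as real TCP does. It shows several segments going out before any ACK, and the window sliding forward as cumulative ACKs arrive.

```python
class SlidingWindowSender:
    """Sender side of a sliding window, counted in whole segments
    (a simplification: real TCP counts bytes)."""

    def __init__(self, window: int) -> None:
        self.window = window   # receiver-advertised window, in segments
        self.base = 0          # oldest unacknowledged segment
        self.next_seq = 0      # next segment not yet sent

    def sendable(self) -> list[int]:
        """Everything the window allows right now, sent without waiting for ACKs."""
        limit = self.base + self.window
        batch = list(range(self.next_seq, limit))
        self.next_seq = max(self.next_seq, limit)
        return batch

    def on_ack(self, ack: int) -> None:
        """Cumulative ACK: `ack` is the next segment the receiver expects."""
        self.base = max(self.base, ack)


sender = SlidingWindowSender(window=4)
print(sender.sendable())   # [0, 1, 2, 3]  four segments in flight before any ACK
sender.on_ack(2)           # segments 0 and 1 acknowledged; the window slides by two
print(sender.sendable())   # [4, 5]
```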

Slow Start
In actuality, TCP starts with a small window and ramps it up (up to rwin, the receiver’s advertised window)
- Congestion window (cwnd)
  - Controls startup and limits throughput in the face of congestion
  - cwnd is initialized to 1 segment
  - cwnd gets larger after every new ACK
  - cwnd gets smaller when packet loss is detected
- Despite its name, slow start is actually exponential
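Why “one more segment per ACK” turns out to be exponential: a full window of cwnd segments returns cwnd ACKs within one round trip, so cwnd doubles every RTT. This per-RTT sketch assumes no loss and one ACK per segment (no delayed ACKs).

```python
def slow_start(rtts: int, rwin: int, cwnd: int = 1) -> list[int]:
    """cwnd (in segments) at the start of each round trip during slow start.

    Every ACK for new data adds one segment to cwnd. A full window of cwnd
    segments returns cwnd ACKs per round trip, so cwnd doubles each RTT,
    capped by the receiver's advertised window rwin.
    """
    history = []
    for _ in range(rtts):
        history.append(cwnd)
        acks_this_rtt = cwnd
        cwnd = min(cwnd + acks_this_rtt, rwin)
    return history


print(slow_start(rtts=8, rwin=64))  # [1, 2, 4, 8, 16, 32, 64, 64]
```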

Congestion Avoidance
Assumption: packet loss is caused by congestion.
When congestion occurs, slow down the transmission rate:
- Reset cwnd to 1 on a timeout
- Use slow start until we reach half of the window at which congestion occurred (the slow start threshold, ssthresh)
- Then use linear increase
  - Increase cwnd by ~1 segment/RTT
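Putting slow start and congestion avoidance together gives the sawtooth in the next graph. This sketch models timeout-driven (Tahoe-style) behavior at whole-RTT granularity; fast recovery is left out, and the initial ssthresh and timeout position are arbitrary example values.

```python
def cwnd_trace(rtts: int, timeout_at: set[int], ssthresh: int = 64, rwin: int = 64) -> list[int]:
    """cwnd (segments) per RTT with slow start, congestion avoidance and a
    timeout at each RTT index in timeout_at."""
    cwnd, trace = 1, []
    for rtt in range(rtts):
        trace.append(cwnd)
        if rtt in timeout_at:
            ssthresh = max(cwnd // 2, 2)    # remember half the window that hit congestion
            cwnd = 1                        # and restart from one segment
        elif cwnd < ssthresh:
            cwnd = min(cwnd * 2, ssthresh)  # slow start: exponential growth
        else:
            cwnd = min(cwnd + 1, rwin)      # congestion avoidance: +1 segment per RTT
    return trace


print(cwnd_trace(rtts=14, timeout_at={5}, ssthresh=32))
# [1, 2, 4, 8, 16, 32, 1, 2, 4, 8, 16, 17, 18, 19]
```

After the timeout, cwnd races back to 16 (half of 32) in four RTTs and then creeps up by one segment per RTT, which is why recovery is slow when the RTT is long.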

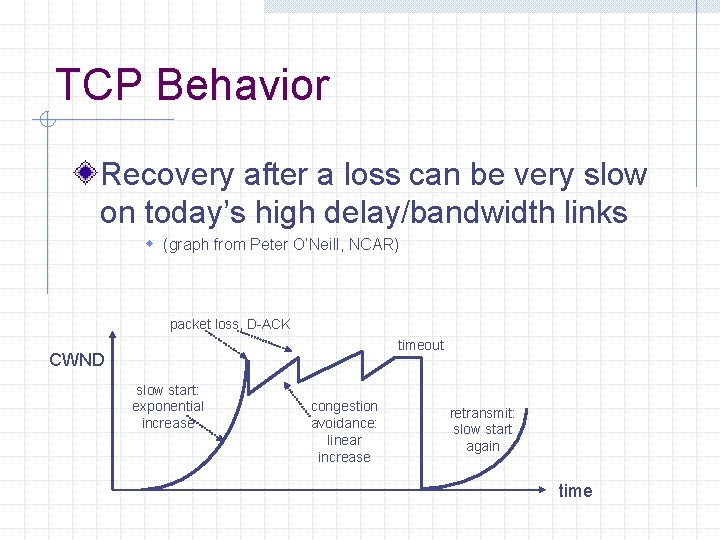

TCP Behavior
Recovery after a loss can be very slow on today’s high delay/bandwidth links.

(Graph from Peter O’Neill, NCAR: CWND over time, showing slow start (exponential increase), congestion avoidance (linear increase), then a packet loss and duplicate-ACK timeout, after which TCP retransmits and returns to slow start.)

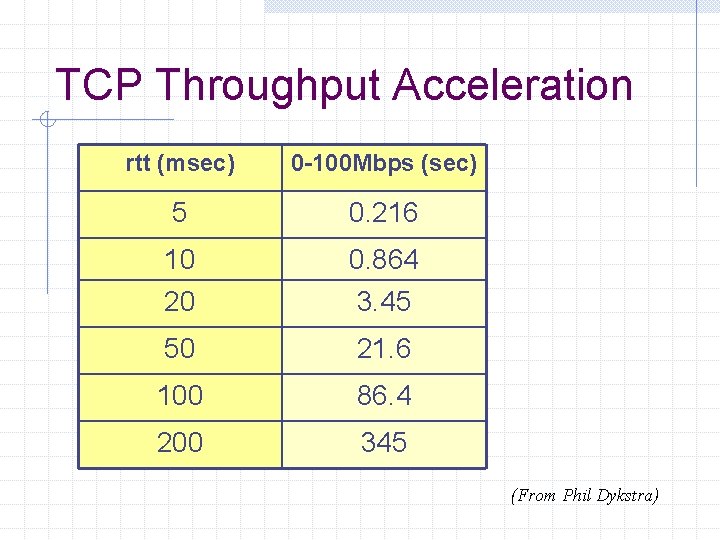

TCP Throughput Acceleration (from Phil Dykstra)

| RTT (msec) | 0-100 Mbps (sec) |
|---|---|
| 5 | 0.216 |
| 10 | 0.864 |
| 20 | 3.45 |
| 50 | 21.6 |
| 100 | 86.4 |
| 200 | 345 |
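The table’s figures grow with the square of the RTT (doubling the RTT quadruples the time), which is what linear, one-segment-per-RTT growth predicts: the window needed scales with RTT, and each step also takes one RTT. The back-of-envelope model below assumes an MSS of 1448 bytes (1460 minus 12 bytes of TCP timestamp option); it lands within about 0.1% of the table, but that does not mean it is how the table was computed.

```python
MSS_BYTES = 1448   # assumption: Ethernet MSS minus the 12-byte TCP timestamp option


def ramp_time_s(target_bps: float, rtt_s: float, mss: int = MSS_BYTES) -> float:
    """Time for linear growth (+1 MSS per RTT, starting near zero) to reach target_bps.

    The window needed is target_bps * rtt / (8 * mss) segments; adding one
    segment per RTT takes that many RTTs, so the time grows with rtt squared.
    """
    segments_needed = target_bps * rtt_s / (8 * mss)
    return segments_needed * rtt_s


for rtt_ms in (5, 10, 20, 50, 100, 200):
    print(rtt_ms, round(ramp_time_s(100e6, rtt_ms / 1000), 3))
```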

TCP Window Size Tuning
TCP performance depends on:
- Transfer rate (bandwidth)
- Round-trip time
- The BW*Delay product

The TCP window should be sized to be at least as large as the BW*Delay product.

BW*Delay Product
The BW*Delay product measures:
- The amount of data that would fill the network pipe
- The buffer space required at sender and receiver to achieve the maximum possible TCP throughput
- The amount of unacknowledged data that TCP must handle in order to keep the pipe full

BW*Delay Example
A path from Penn to Stanford has:
- Round-trip time: 60 ms
- Bandwidth: 120 Mbps

BW * Delay = 60/1000 sec * 120 * 1,000,000 bits/sec = 7,200,000 bits = 7,200 Kbits = 900 Kbytes

So the TCP window should be at least 900 KB.
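The same calculation as a small helper, using the Penn-to-Stanford figures from the slide. As on the slide, KB here means 1,000 bytes.

```python
def bdp_bytes(bandwidth_bps: float, rtt_ms: float) -> float:
    """Bandwidth * round-trip delay, converted from bits to bytes."""
    return bandwidth_bps * (rtt_ms / 1000) / 8


window = bdp_bytes(120e6, 60)       # Penn-to-Stanford figures from the slide
print(f"{window / 1000:.0f} KB")    # 900 KB
```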

TCP Window Scaling
- RFC 1323: TCP Extensions for High Performance
- Allows scaling of the TCP window size beyond 64 KB (the limit of the 16-bit window field)
  - Introduces a new TCP option
- Note: in the previous example, TCP needs to support window scaling to use a 900 KB window
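The window scale option tells the peer to shift the 16-bit window field left by a fixed count, at most 14 under RFC 1323. This sketch finds the smallest shift that can express a desired window, such as the 900 KB from the previous example.

```python
MAX_UNSCALED_WINDOW = 65_535   # largest value of the 16-bit TCP window field
MAX_SHIFT = 14                 # RFC 1323 limits the scale factor to 2**14


def window_scale_shift(window_bytes: int) -> int:
    """Smallest window-scale shift count that lets TCP advertise window_bytes."""
    shift = 0
    while (MAX_UNSCALED_WINDOW << shift) < window_bytes:
        shift += 1
        if shift > MAX_SHIFT:
            raise ValueError("window too large even with maximum scaling")
    return shift


print(window_scale_shift(900_000))  # 4  (65,535 * 16 = 1,048,560 bytes >= 900 KB)
```

The price of scaling is granularity: with a shift of 4 the advertised window moves in steps of 16 bytes.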

Window Scaling Pitfalls
Why not always use large windows?
- Might consume large memory resources
- May not be useful for all applications
- Often unnecessary in the campus/LAN environment, where short round-trip times keep the BW*Delay product small

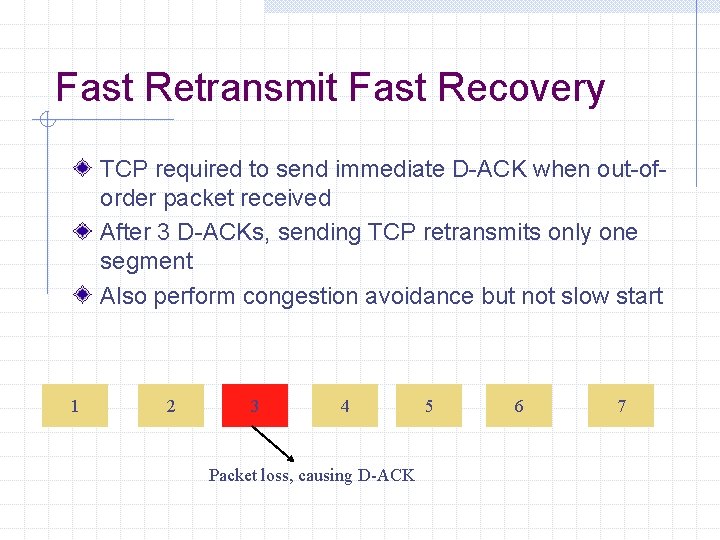

Fast Retransmit / Fast Recovery
- TCP is required to send an immediate duplicate ACK (D-ACK) when an out-of-order packet is received
- After 3 D-ACKs, the sending TCP retransmits only the one missing segment
- It then performs congestion avoidance, but not slow start

(Figure: segments 1 through 7 in flight; a packet loss causes duplicate ACKs.)
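A segment-level sketch of the duplicate-ACK mechanism: the receiver keeps re-ACKing the first missing segment, and the sender retransmits it on the third duplicate instead of waiting for a timeout. The example assumes segment 4 is the one lost; real TCP works in bytes, not segment numbers.

```python
from typing import Optional


def receiver_acks(arrivals: list[int]) -> list[int]:
    """Cumulative ACK sent for each arriving segment (ACK = next segment expected).
    An out-of-order arrival immediately re-sends the same ACK: a duplicate ACK."""
    received, next_expected, acks = set(), 1, []
    for seg in arrivals:
        received.add(seg)
        while next_expected in received:
            next_expected += 1
        acks.append(next_expected)
    return acks


def fast_retransmit(acks: list[int], threshold: int = 3) -> Optional[int]:
    """Return the segment to retransmit once `threshold` duplicate ACKs arrive."""
    dupes = 0
    for prev, ack in zip(acks, acks[1:]):
        dupes = dupes + 1 if ack == prev else 0
        if dupes == threshold:
            return ack          # the receiver keeps asking for this segment
    return None


acks = receiver_acks([1, 2, 3, 5, 6, 7])   # segment 4 lost in transit
print(acks)                    # [2, 3, 4, 4, 4, 4]
print(fast_retransmit(acks))   # 4
```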

TCP Selective Acks (SACK)
- RFC 2018
- Allows TCP to efficiently recover from multiple segment losses within a window
- Without retransmitting the entire window
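With SACK the receiver reports which out-of-order ranges it already holds, so the sender can fill exactly the holes. This is a simplified sketch using segment numbers and inclusive ranges; real SACK options carry byte sequence numbers and list the most recently received block first.

```python
def sack_view(received: set[int], max_blocks: int = 3) -> tuple[int, list[tuple[int, int]]]:
    """Cumulative ACK plus SACK blocks describing out-of-order data.

    Up to 3 blocks fit in the TCP option space alongside timestamps.
    """
    ack = 1
    while ack in received:
        ack += 1
    blocks: list[tuple[int, int]] = []
    for seg in sorted(s for s in received if s > ack):
        if blocks and seg == blocks[-1][1] + 1:
            blocks[-1] = (blocks[-1][0], seg)   # extend the current block
        else:
            blocks.append((seg, seg))           # start a new block
    return ack, blocks[:max_blocks]


def holes(ack: int, blocks: list[tuple[int, int]]) -> list[int]:
    """Segments the sender still needs to retransmit, given the SACK info."""
    missing, nxt = [], ack
    for start, end in blocks:
        missing.extend(range(nxt, start))
        nxt = end + 1
    return missing


ack, blocks = sack_view({1, 2, 3, 5, 6, 8})
print(ack, blocks, holes(ack, blocks))   # 4 [(5, 6), (8, 8)] [4, 7]
```

Two losses in one window (segments 4 and 7) are repaired with two retransmissions, where a sender relying only on cumulative ACKs would learn about the second hole only after the first was filled.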

Enough about TCP

Performance Depends on the App
So, understand the application’s requirements (high throughput, low latency, low jitter), e.g.:
- File transfer using TCP
  - Needs high throughput
  - Intolerant of packet loss
  - May be more tolerant of delay
- Interactive video conferencing application
  - Tolerant of some loss
  - More intolerant of delay and jitter

Quality of Service (QoS)
- A method to selectively allocate scarce network resources
- A mechanism to offer varying degrees of service to varying classes of traffic
- Service: delay, jitter, proportion of link bandwidth, etc.

Quality of Service (QoS) (cont)
Requires deployed QoS infrastructure
- Might require:
  - Traffic marking capabilities in hosts and network hardware
  - Traffic classification and identification capabilities
  - Multiple traffic queues with different service characteristics
  - Different queue servicing algorithms
  - Mechanisms to specify and enforce QoS policy
  - Signalling mechanisms
- IEEE 802.1p, IP precedence, IntServ/RSVP, DiffServ, MPLS
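Host-side traffic marking for DiffServ usually means setting the DSCP bits in outgoing IP headers. A minimal sketch using the standard IP_TOS socket option; it only helps if the network is configured to classify and honor the marks, and some operating systems may ignore or restrict the option.

```python
import socket

DSCP_EF = 46    # Expedited Forwarding (RFC 3246), commonly used for voice
DSCP_AF41 = 34  # Assured Forwarding class 4, low drop precedence (RFC 2597)


def dscp_to_tos(dscp: int) -> int:
    """DSCP occupies the upper 6 bits of the former IPv4 TOS byte."""
    return dscp << 2


def mark_socket(sock: socket.socket, dscp: int) -> int:
    """Ask the OS to stamp outgoing packets with `dscp`; returns the TOS byte now set."""
    sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(dscp))
    return sock.getsockopt(socket.IPPROTO_IP, socket.IP_TOS)


s = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
print(mark_socket(s, DSCP_AF41))
s.close()
```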

Performance Measurement Tools
- To measure the “real” performance of an app, you need to instrument the app with measurement code!
- However, some common network performance metrics can be measured independently
- Two kinds:
  - Active and passive measurement
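Instrumenting an application can be as light as timing its own transfer loop. This context manager is a hypothetical sketch: wrap it around whatever send or receive loop the application already has, and it reports bytes moved and elapsed wall-clock time from the application’s point of view.

```python
import time


class TransferTimer:
    """Record wall-clock time and bytes delivered, as the application sees them."""

    def __enter__(self):
        self.bytes = 0
        self.start = time.perf_counter()
        return self

    def add(self, n: int) -> None:
        self.bytes += n

    def __exit__(self, *exc):
        self.elapsed = time.perf_counter() - self.start
        return False   # don't swallow exceptions

    @property
    def rate_bps(self) -> float:
        return self.bytes * 8 / self.elapsed if self.elapsed > 0 else float("inf")


# Hypothetical use around an application's send loop:
with TransferTimer() as t:
    for chunk in (b"a" * 1500 for _ in range(1000)):
        t.add(len(chunk))          # e.g. right after sock.sendall(chunk)
print(f"{t.bytes} bytes in {t.elapsed:.6f} s")
```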

Active Measurement
- Ping
- Traceroute
- Netperf: http://www.netperf.org/
- Iperf: http://dast.nlanr.net/Projects/Iperf/
- Pathchar: ftp://ftp.ee.lbl.gov/pathchar/
- Pathrate: http://www.pathrate.org/
- Mping
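When ICMP is filtered and ping gets no answer, timing TCP handshakes is a common active substitute; it is swapped in here as an illustration and is not one of the tools listed above. connect() returns after the SYN and SYN/ACK exchange, about one round trip, and taking the minimum of several samples filters out queueing noise.

```python
import socket
import time


def tcp_connect_rtt(host: str, port: int, samples: int = 5) -> float:
    """Minimum time (seconds) to complete a TCP handshake with host:port.

    Pass an IP address rather than a name so DNS lookup time is not included.
    The target port must be open (listening) for the handshake to complete.
    """
    best = float("inf")
    for _ in range(samples):
        start = time.perf_counter()
        with socket.create_connection((host, port), timeout=5):
            best = min(best, time.perf_counter() - start)
    return best


# e.g. tcp_connect_rtt("192.0.2.10", 80)  # hypothetical host; use your own server's address
```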

Passive Measurement
- OCxMON/PCMon
- Router/switch stats collected via
  - SNMP
  - NetFlow, etc.
- tcpdump, snoop, etherfind
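A typical use of passively collected SNMP stats is link utilization from two polls of an interface octet counter. The sketch assumes a 32-bit counter such as IF-MIB ifInOctets that wrapped at most once between polls; the poll values are hypothetical.

```python
COUNTER32_MODULUS = 2 ** 32   # SNMP Counter32 (e.g. IF-MIB ifInOctets) wraps here


def link_utilization(octets_t0: int, octets_t1: int, interval_s: float, if_speed_bps: int) -> float:
    """Fraction of link capacity used between two polls of an octet counter.

    Assumes at most one counter wrap between polls; on fast links poll often
    or use the 64-bit ifHCInOctets counter instead.
    """
    delta = (octets_t1 - octets_t0) % COUNTER32_MODULUS   # handles a single wrap
    return delta * 8 / (interval_s * if_speed_bps)


# Hypothetical polls 300 s apart on a 100 Mbps interface; the counter wrapped in between.
print(round(link_utilization(4_294_000_000, 1_500_000_000, 300, 100_000_000), 3))  # 0.4
```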

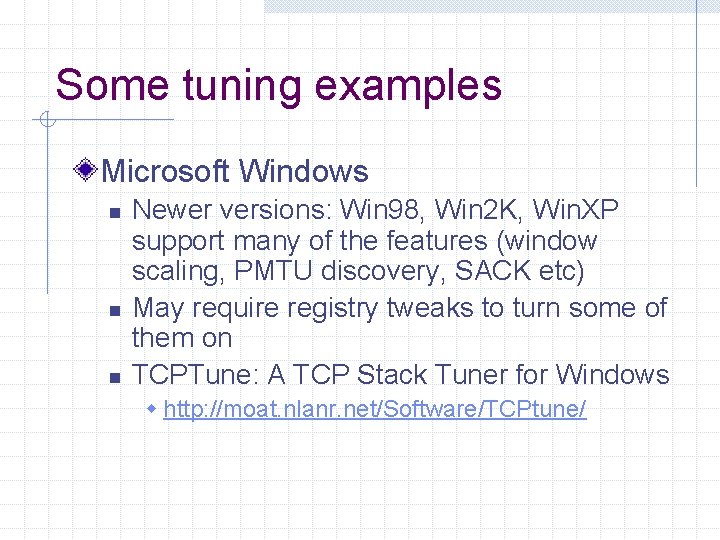

Some Tuning Examples
Microsoft Windows
- Newer versions (Win 98, Win 2K, Win XP) support many of the features (window scaling, PMTU discovery, SACK, etc.)
- May require registry tweaks to turn some of them on
- TCPTune: a TCP stack tuner for Windows
  - http://moat.nlanr.net/Software/TCPtune/

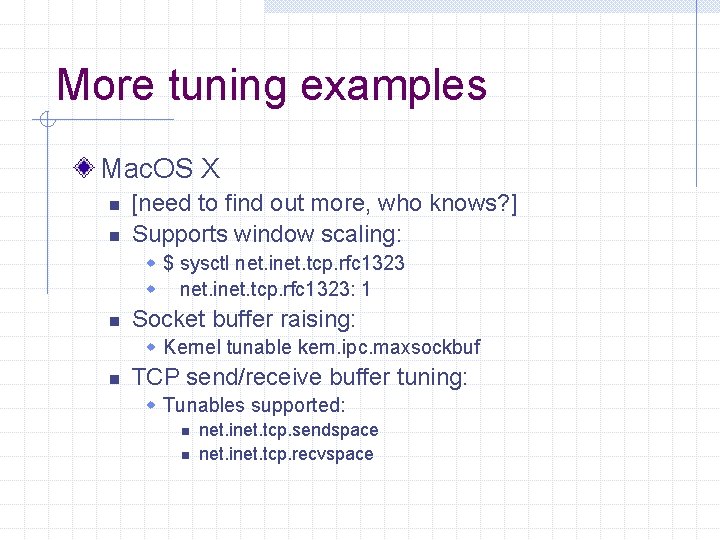

More Tuning Examples
Mac OS X
- Supports window scaling:
  - $ sysctl net.inet.tcp.rfc1323
  - net.inet.tcp.rfc1323: 1
- Socket buffer raising:
  - Kernel tunable kern.ipc.maxsockbuf
- TCP send/receive buffer tuning:
  - Tunables supported: net.inet.tcp.sendspace, net.inet.tcp.recvspace

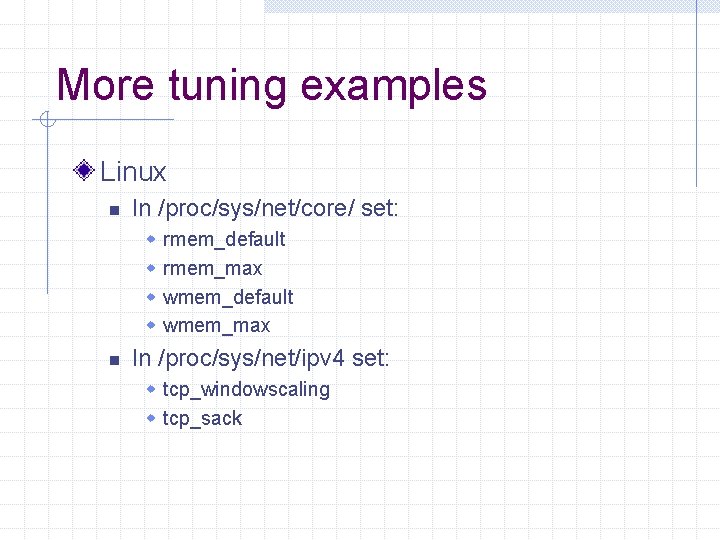

More Tuning Examples
Linux
- In /proc/sys/net/core/ set:
  - rmem_default, rmem_max, wmem_default, wmem_max
- In /proc/sys/net/ipv4/ set:
  - tcp_window_scaling
  - tcp_sack
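Before raising anything, it helps to read the current Linux settings and compare the buffer ceilings with the path’s BW*Delay product. This read-only sketch returns None for entries that do not exist (non-Linux systems, older kernels, some containers); changing the values requires root, typically by writing to the same /proc/sys files.

```python
from pathlib import Path
from typing import Optional

PROC_SYS = Path("/proc/sys")


def read_sysctl(name: str) -> Optional[str]:
    """Read a Linux tunable such as 'net/core/rmem_max'; None if unavailable."""
    path = PROC_SYS / name
    return path.read_text().strip() if path.exists() else None


def buffers_too_small(bdp_bytes: int) -> list[str]:
    """Names of core buffer ceilings smaller than the path's BW*Delay product."""
    short = []
    for name in ("net/core/rmem_max", "net/core/wmem_max"):
        value = read_sysctl(name)
        if value is not None and int(value) < bdp_bytes:
            short.append(name)
    return short


print(read_sysctl("net/ipv4/tcp_window_scaling"), read_sysctl("net/ipv4/tcp_sack"))
print(buffers_too_small(900_000))   # e.g. the 900 KB Penn-to-Stanford window
```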

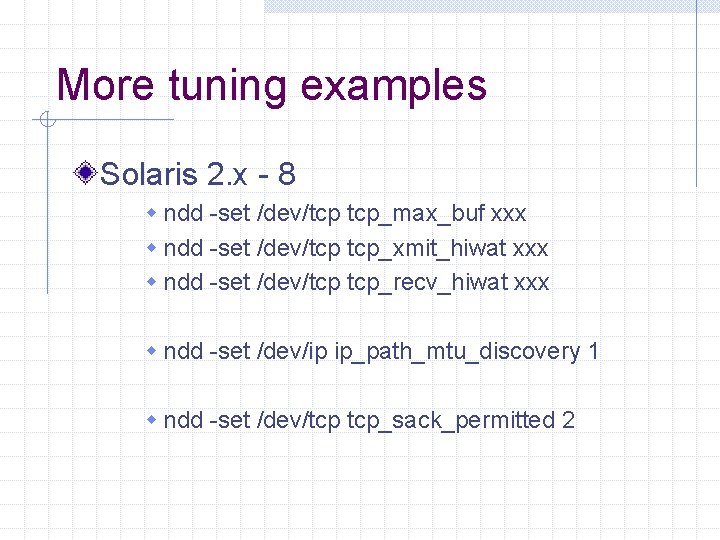

More Tuning Examples
Solaris 2.x - 8
- ndd -set /dev/tcp tcp_max_buf xxx
- ndd -set /dev/tcp tcp_xmit_hiwat xxx
- ndd -set /dev/tcp tcp_recv_hiwat xxx
- ndd -set /dev/ip ip_path_mtu_discovery 1
- ndd -set /dev/tcp tcp_sack_permitted 2

Web100 Project
http://www.web100.org/
Enhance TCP capabilities with:
- Better (finer-grained) kernel instrumentation
- Automatic controls

Availability:
- Today: Linux (patches for the 2.4.16 kernel)
- Being ported to other operating systems

Things You Can Do (WAN)
- Make sure your app offers adequately sized receive windows and send buffers
  - But don’t run your system out of memory
- Find out your path RTT with ping
- Check your path with traceroute
- Determine the bottleneck capacity and available bandwidth on the path
- Make sure your OS uses Path MTU discovery
- Make sure your OS uses TCP large windows, fast retransmit and SACK

Things You Can Do (Campus)
- Check your host (80% of the problems)
  - Bandwidth/duplex problems
  - Network stack tuning
  - Application tuning
- Talk to campus networking folks

Conclusion
- Understand the performance requirements of your application
- What are the issues?
  - Campus/LAN environment
- What can you do to ask for help?

Any Questions?
- Deke Kassabian: deke@isc.upenn.edu
- Shumon Huque: shuque@isc.upenn.edu
