NetLord: A Scalable Multi-Tenant Network Architecture for Virtualized Datacenters
SIGCOMM ’11
Authors: Jayaram Mudigonda, Praveen Yalagandula, Jeff Mogul (HP Labs, Palo Alto, CA); Bryan Stiekes, Yanick Pouffary (HP)
Speaker: Ming-Chao Hsu, National Cheng Kung University

Outline
• INTRODUCTION
• PROBLEM AND BACKGROUND
• NETLORD’S DESIGN
• BENEFITS AND DESIGN RATIONALE
• EXPERIMENTAL ANALYSIS
• DISCUSSION AND FUTURE WORK
• CONCLUSIONS

INTRODUCTION
• Cloud datacenters such as Amazon EC2 and Microsoft Azure are becoming increasingly popular
  – computing resources at a very low cost
  – pay-as-you-go model
  – eliminate the expense of buying and maintaining infrastructure
• Cloud datacenter operators can provide cost-effective Infrastructure as a Service (IaaS)
  – time-multiplex the physical infrastructure
  – CPU virtualization techniques (VMware and Xen)
• Resource sharing (scale to huge sizes)
  – the larger number of tenants
  – the larger number of VMs
  – IaaS networks need virtualization and multi-tenancy
    • at scales of tens of thousands of tenants and servers, and hundreds of thousands of VMs

INTRODUCTION
• Most existing datacenter network architectures suffer from the following drawbacks
  – expensive to scale:
    • require huge core switches with thousands of ports
    • require complex new protocols, hardware, and specific features (such as IP-in-IP decapsulation, MAC-in-MAC encapsulation, switch modifications)
    • require excessive switch resources (large forwarding tables)
  – provide limited support for multi-tenancy
    • a tenant should be able to define its own layer-2 and layer-3 addresses
    • performance guarantees and performance isolation
    • provide IP address space sharing
  – require complex configuration
    • careful manual configuration for setting up IP subnets and configuring OSPF

INTRODUCTION
• NetLord
  – Encapsulates a tenant’s L2 packets
    • to provide full address-space virtualization
  – Uses a light-weight agent
    • in the end-host hypervisors
    • to transparently encapsulate and decapsulate packets from and to the local VMs
  – Leverages SPAIN’s approach
    • to multi-pathing,
    • using VLAN tags to identify paths through the network
  – The encapsulating Ethernet header directs the packet to the destination server’s edge switch -> the correct server (hypervisor) -> the correct tenant
  – Does not expose any tenant MAC addresses to the switches
    • also hides most of the physical server addresses
    • the switches can use very small forwarding tables
    • reducing capital, configuration, and operational costs

PROBLEM AND BACKGROUND
• Scale at low cost
  – must scale, at low cost, to large numbers of tenants, hosts, and VMs
  – commodity network switches often have only limited resources and features
    • MAC forwarding information base (FIB) table entries in the data plane
    • e.g., 64K in the HP ProCurve and 55K in the Cisco Catalyst 4500 series
• Small data-plane FIB tables
  – MAC-address learning problem
  – when the working set of active MAC addresses exceeds the switch data-plane table
    • some entries will be lost, and a subsequent packet to those destinations will cause flooding
• The network must be easy to operate and must handle link failures automatically
• Flexible network abstraction
  – Different tenants will have different network needs
  – VM migration
    • without needing to change the network addresses of the VMs
• Fully virtualize the L2 and L3 address spaces for each tenant
  – without any restrictions on the tenant’s choice of L2 or L3 addresses
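
The flooding behaviour described on this slide can be illustrated with a toy MAC-learning switch whose table is smaller than the working set of active addresses. This is a minimal sketch: the class name `LearningSwitch`, the helper `count_floods`, the LRU eviction policy, and the tiny table sizes are all assumptions for illustration; real switch ASICs use hashed tables with their own replacement behaviour.

```python
from collections import OrderedDict


class LearningSwitch:
    """Toy L2 switch with a bounded MAC table (hypothetical; LRU eviction assumed)."""

    def __init__(self, fib_capacity):
        self.fib_capacity = fib_capacity
        self.fib = OrderedDict()  # MAC address -> port
        self.floods = 0

    def _learn(self, mac, port):
        # Learn (or refresh) the source address, evicting the oldest entry on overflow.
        self.fib[mac] = port
        self.fib.move_to_end(mac)
        if len(self.fib) > self.fib_capacity:
            self.fib.popitem(last=False)

    def forward(self, src_mac, dst_mac, in_port):
        """Return the output port, or None when the packet must be flooded."""
        self._learn(src_mac, in_port)
        if dst_mac in self.fib:
            self.fib.move_to_end(dst_mac)
            return self.fib[dst_mac]
        self.floods += 1
        return None


def count_floods(num_hosts, fib_capacity, rounds=3):
    """Each host sends to its neighbour in a ring, repeated for several rounds."""
    switch = LearningSwitch(fib_capacity)
    macs = [f"mac-{i}" for i in range(num_hosts)]
    for _ in range(rounds):
        for i, src in enumerate(macs):
            switch.forward(src, macs[(i + 1) % num_hosts], in_port=i)
    return switch.floods
```

With a table large enough for all eight hosts, flooding stops once every address has been learned; with a table of four entries, entries are evicted before they are reused and every packet floods.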

PROBLEM AND BACKGROUND
• Multi-tenant datacenters:
  – VLANs to isolate the different tenants on a single L2 network
    • the hypervisor encapsulates a VM’s packets with a VLAN tag
    • the VLAN tag is a 12-bit field in the VLAN header, limiting this to at most 4K tenants
    • the IEEE 802.1ad “QinQ” protocol allows stacking of VLAN tags, which would relieve the 4K limit, but QinQ is not yet widely supported
  – Spanning Tree Protocol
    • limits the bisection bandwidth for any given tenant
    • exposes all VM addresses to the switches, creating scalability problems
  – Amazon’s Virtual Private Cloud (VPC)
    • provides an L3 abstraction and full IP address space virtualization
    • VPC does not support multicast and broadcast -> implies that the tenants do not get an L2 abstraction
  – Greenberg et al. propose VL2
    • VL2 provides each tenant with a single IP subnet (L3) abstraction
    • requires support for IP-in-IP encapsulation/decapsulation
    • the approach assumes several features not common in commodity switches
    • VL2 handles a service’s L2 broadcast packets by transmitting them on an IP multicast address assigned to that service
  – Diverter
    • overwrites the MAC addresses of inter-subnet packets
    • accommodates multiple tenants’ logical IP subnets on a single physical topology
    • it assigns unique IP addresses to the VMs -> it does not provide L3 address virtualization

PROBLEM AND BACKGROUND
• Scalable network fabrics:
  – Many research projects and industry standards address the limitations of Ethernet’s Spanning Tree Protocol
    • TRILL (an IETF standard), Shortest Path Bridging (an IEEE standard), and Seattle support multipathing using a single-shortest-path approach
    • These three need new control-plane protocol implementations and data-plane silicon, so inexpensive commodity switches will not support them
  – Scott et al. proposed MOOSE
    • addresses Ethernet scaling issues by using hierarchical MAC addressing
    • also uses shortest-path routing
    • needs switches that forward packets based on MAC prefixes
  – Mudigonda et al. proposed SPAIN
    • SPAIN uses the VLAN support in existing commodity Ethernet switches to provide multipathing over arbitrary topologies
    • SPAIN uses VLAN tags to identify k edge-disjoint paths between pairs of endpoint hosts
    • The original SPAIN design may expose each end-point MAC address k times (once per VLAN)
    • this stresses data-plane tables, so it cannot scale to a large number of VMs

NETLORD’S DESIGN
• NetLord leverages our previous work on SPAIN
  – to provide a multi-path fabric using commodity switches
• The NetLord Agent (NLA)
  – a light-weight agent in the hypervisors
  – adds encapsulating L2 and L3 headers that direct the packet to the correct edge switch
  – the encapsulation lets the egress edge switch deliver the packet to the correct server, and allows the destination NLA to deliver the packet to the correct tenant
• With this encapsulation, tenant VM addresses are never exposed to the actual hardware switches
  – A single edge-switch MAC address is shared across a large number of physical and virtual machines
  – This resolves the problem of FIB-table pressure: switches no longer need to store millions of VM addresses in their FIBs
  – Edge-switch FIBs must also store the addresses of their directly connected server NICs

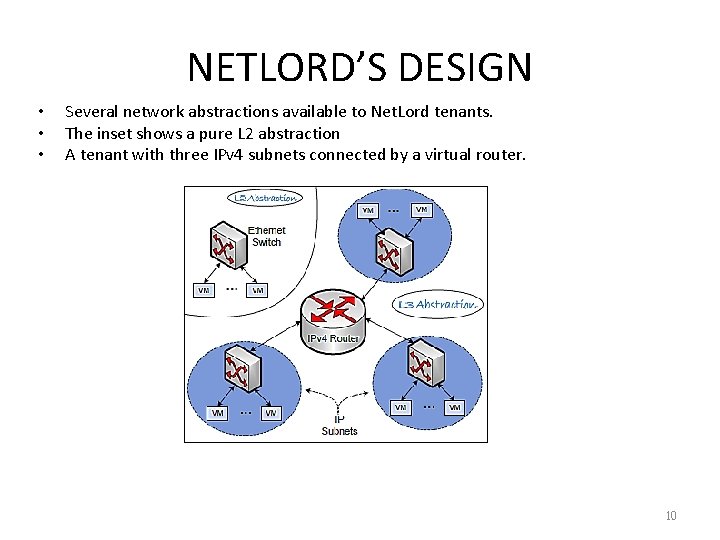

NETLORD’S DESIGN
• Several network abstractions available to NetLord tenants
• The inset shows a pure L2 abstraction
• A tenant with three IPv4 subnets connected by a virtual router

NETLORD’S DESIGN
• NetLord’s components
  – Fabric switches:
    • traditional, unmodified commodity switches
    • support VLANs (for multi-pathing) and basic IP forwarding
    • no routing protocols (RIP, OSPF) are needed
  – A NetLord Agent (NLA)
    • resides in the hypervisor of each physical server
    • encapsulates and decapsulates all packets from and to the local VMs
    • the NLA builds and maintains a table
      – VM’s Virtual Interface (VIF) ID -> edge-switch MAC address + port number
  – Configuration repository:
    • the repository resides at an address known to the NLAs
    • it is part of the VM manager system and maintains several databases
      – SPAIN-style multi-pathing information; per-tenant configuration information

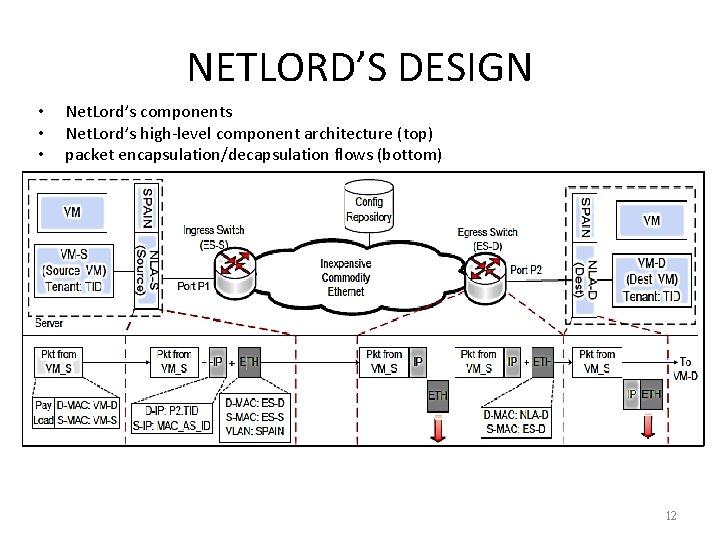

NETLORD’S DESIGN
• NetLord’s components
• NetLord’s high-level component architecture (top)
• Packet encapsulation/decapsulation flows (bottom)

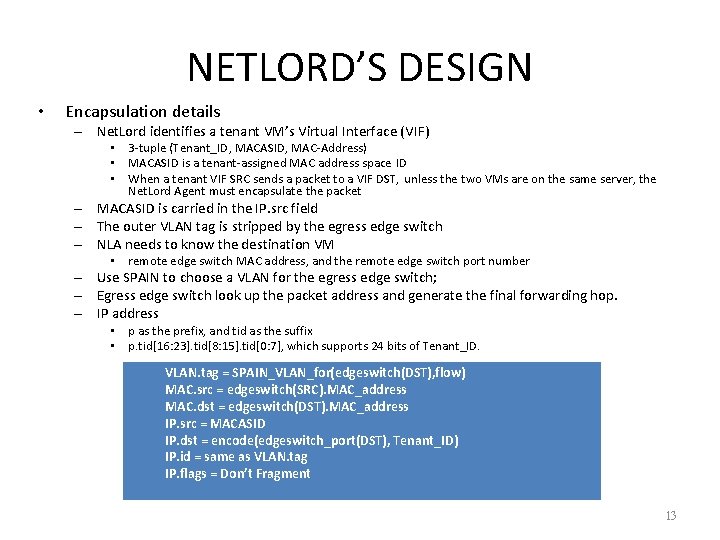

NETLORD’S DESIGN
• Encapsulation details
  – NetLord identifies a tenant VM’s Virtual Interface (VIF) by a
    • 3-tuple (Tenant_ID, MACASID, MAC-Address)
    • MACASID is a tenant-assigned MAC address space ID
• When a tenant VIF SRC sends a packet to a VIF DST, unless the two VMs are on the same server, the NetLord Agent must encapsulate the packet
  – MACASID is carried in the IP.src field
  – The outer VLAN tag is stripped by the egress edge switch
  – NLA needs to know the destination VM’s location
    • remote edge switch MAC address, and the remote edge switch port number
  – Use SPAIN to choose a VLAN for the egress edge switch
  – Egress edge switch looks up the packet’s destination IP address and generates the final forwarding hop
  – IP address
    • p as the prefix, and tid as the suffix
    • p.tid[16:23].tid[8:15].tid[0:7], which supports 24 bits of Tenant_ID
• Encapsulation header fields
    VLAN.tag = SPAIN_VLAN_for(edgeswitch(DST), flow)
    MAC.src = edgeswitch(SRC).MAC_address
    MAC.dst = edgeswitch(DST).MAC_address
    IP.src = MACASID
    IP.dst = encode(edgeswitch_port(DST), Tenant_ID)
    IP.id = same as VLAN.tag
    IP.flags = Don’t Fragment
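
The `encode(edgeswitch_port(DST), Tenant_ID)` step can be sketched as follows, using the layout p.tid[16:23].tid[8:15].tid[0:7] from the slide. The function names, the dictionary layout of the outer headers, and the assumption that the MACASID fits in 32 bits are illustrative choices, not taken from the NetLord implementation.

```python
import ipaddress


def encode_ip_dst(port, tenant_id):
    """Pack an edge-switch port (top 8 bits) and a 24-bit Tenant_ID into an IPv4 address."""
    if not 0 <= port <= 0xFF:
        raise ValueError("port must fit in the 8-bit prefix")
    if not 0 <= tenant_id <= 0xFFFFFF:
        raise ValueError("Tenant_ID must fit in 24 bits")
    return ipaddress.IPv4Address((port << 24) | tenant_id)


def decode_ip_dst(addr):
    """Inverse of encode_ip_dst: return (port, tenant_id)."""
    value = int(ipaddress.IPv4Address(addr))
    return value >> 24, value & 0xFFFFFF


def build_outer_headers(src_switch_mac, dst_switch_mac, dst_port,
                        tenant_id, macasid, spain_vlan):
    """Assemble the outer header fields listed on the slide (hypothetical dict layout)."""
    return {
        "vlan_tag": spain_vlan,
        "mac_src": src_switch_mac,
        "mac_dst": dst_switch_mac,
        "ip_src": ipaddress.IPv4Address(macasid),  # assumes a 32-bit MACASID
        "ip_dst": encode_ip_dst(dst_port, tenant_id),
        "ip_id": spain_vlan,                       # same as the VLAN tag
        "ip_flags": "DF",                          # Don't Fragment
    }
```

For example, port 5 and Tenant_ID 0x010203 encode to the address 5.1.2.3, and decoding that address returns the same pair.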

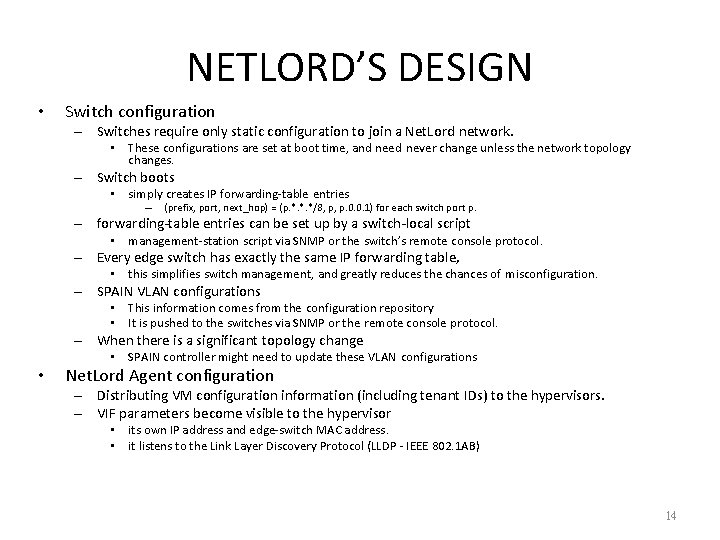

NETLORD’S DESIGN
• Switch configuration
  – Switches require only static configuration to join a NetLord network
    • these configurations are set at boot time, and need never change unless the network topology changes
  – When a switch boots
    • it simply creates IP forwarding-table entries
      – (prefix, port, next_hop) = (p.*.*.*/8, p, p.0.0.1) for each switch port p
  – Forwarding-table entries can be set up by a switch-local script
    • or by a management-station script via SNMP or the switch’s remote console protocol
  – Every edge switch has exactly the same IP forwarding table
    • this simplifies switch management, and greatly reduces the chances of misconfiguration
  – SPAIN VLAN configurations
    • this information comes from the configuration repository
    • it is pushed to the switches via SNMP or the remote console protocol
  – When there is a significant topology change
    • the SPAIN controller might need to update these VLAN configurations
• NetLord Agent configuration
  – Distributing VM configuration information (including tenant IDs) to the hypervisors
  – VIF parameters become visible to the hypervisor
  – The NLA also needs its own IP address and edge-switch MAC address
    • to learn them, it listens to the Link Layer Discovery Protocol (LLDP, IEEE 802.1AB)
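
The static table described above, one entry (p.*.*.*/8, p, p.0.0.1) per switch port p, can be generated mechanically. The sketch below is illustrative only: the function names and the tuple representation are assumptions, and a real switch would be configured through its own CLI, SNMP, or console protocol rather than through Python.

```python
import ipaddress


def edge_switch_forwarding_table(num_ports):
    """Build the (prefix, port, next_hop) entries for ports 1..num_ports."""
    if not 1 <= num_ports <= 255:
        raise ValueError("port numbers must fit in the first IPv4 octet")
    table = []
    for p in range(1, num_ports + 1):
        prefix = ipaddress.ip_network(f"{p}.0.0.0/8")
        next_hop = ipaddress.ip_address(f"{p}.0.0.1")
        table.append((prefix, p, next_hop))
    return table


def lookup(table, dst):
    """Return (port, next_hop) for a destination address, or None if nothing matches."""
    dst = ipaddress.ip_address(dst)
    for prefix, port, next_hop in table:
        if dst in prefix:
            return port, next_hop
    return None
```

Because the table depends only on the number of ports, two switches with the same port count produce identical tables, which is the property the slide highlights.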

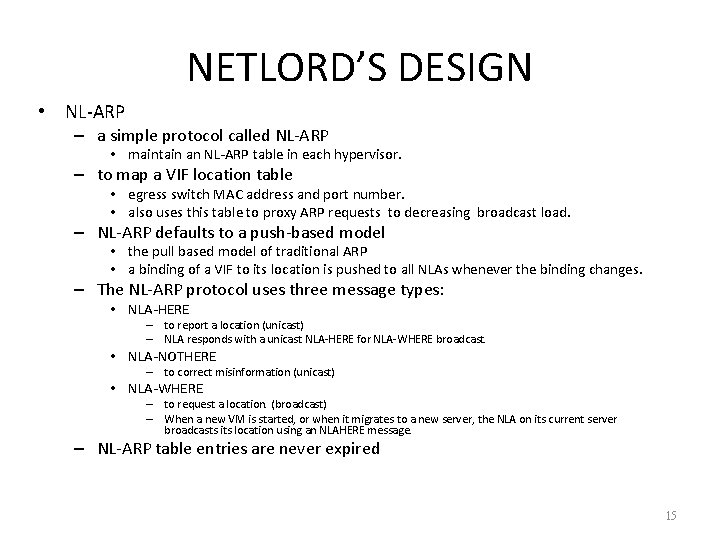

NETLORD’S DESIGN
• NL-ARP
  – a simple protocol called NL-ARP
    • maintains an NL-ARP table in each hypervisor
  – the table maps a VIF to its location
    • egress switch MAC address and port number
    • the NLA also uses this table to proxy ARP requests, decreasing broadcast load
  – NL-ARP defaults to a push-based model
    • rather than the pull-based model of traditional ARP
    • a binding of a VIF to its location is pushed to all NLAs whenever the binding changes
  – The NL-ARP protocol uses three message types:
    • NLA-HERE
      – to report a location (unicast)
      – an NLA responds to an NLA-WHERE broadcast with a unicast NLA-HERE
    • NLA-NOTHERE
      – to correct misinformation (unicast)
    • NLA-WHERE
      – to request a location (broadcast)
  – When a new VM is started, or when it migrates to a new server, the NLA on its current server broadcasts its location using an NLA-HERE message
  – NL-ARP table entries never expire
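
The three message types can be sketched as handlers on a per-hypervisor table. This is a simplified model, not the NetLord code: the class and field names are hypothetical, messages are plain tuples instead of packets, and the reaction to NLA-NOTHERE (drop the stale entry and ask again with NLA-WHERE) is an assumption consistent with "correct misinformation".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vif:
    tenant_id: int
    macasid: int
    mac: str


@dataclass(frozen=True)
class Location:
    switch_mac: str  # egress edge-switch MAC address
    port: int        # egress edge-switch port number


class NlArpAgent:
    """Hypothetical per-hypervisor NL-ARP state."""

    def __init__(self, local_vifs=None):
        self.local = dict(local_vifs or {})  # VIFs hosted on this server
        self.table = {}                      # VIF -> Location; entries never expire

    def on_here(self, vif, location):
        # NLA-HERE: record (or overwrite) the reported location.
        self.table[vif] = location

    def on_nothere(self, vif):
        # NLA-NOTHERE: the cached binding was wrong; forget it and request a new one.
        self.table.pop(vif, None)
        return ("NLA-WHERE", vif)

    def on_where(self, vif):
        # NLA-WHERE: answer with a unicast NLA-HERE only if the VIF lives here.
        location = self.local.get(vif)
        return ("NLA-HERE", vif, location) if location else None

    def lookup(self, vif):
        return self.table.get(vif)
```

In the push-based default, `on_here` is the common path: each binding change is announced to every agent, so `lookup` normally succeeds without any NLA-WHERE traffic.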

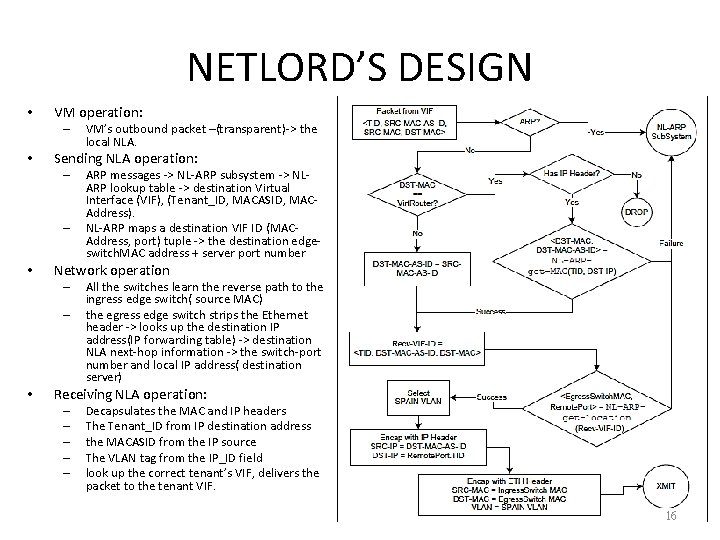

NETLORD’S DESIGN
• VM operation:
  – VM’s outbound packet –(transparent)-> the local NLA
• Sending NLA operation:
  – ARP messages -> NL-ARP subsystem -> NL-ARP lookup table -> destination Virtual Interface (VIF), identified by (Tenant_ID, MACASID, MAC-Address)
  – NL-ARP maps a destination VIF ID -> (MAC-Address, port) tuple: the destination edge-switch MAC address + server port number
• Network operation:
  – All the switches learn the reverse path to the ingress edge switch (source MAC)
  – The egress edge switch strips the Ethernet header -> looks up the destination IP address (IP forwarding table) -> next-hop information for the destination NLA -> the switch-port number and local IP address (destination server)
• Receiving NLA operation:
  – Decapsulates the MAC and IP headers
  – Extracts the Tenant_ID from the IP destination address, the MACASID from the IP source address, and the VLAN tag from the IP_ID field
  – Looks up the correct tenant’s VIF and delivers the packet to it
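
The receiving-side steps can be sketched as a small demultiplexing function. The dictionary keys, the function name `receive_packet`, and the representation of the local VIF table are assumptions for illustration; the field-to-value mapping (Tenant_ID in the low 24 bits of IP.dst, MACASID in IP.src, VLAN tag in IP.id) follows the slides.

```python
import ipaddress


def receive_packet(outer, inner_dst_mac, local_vifs):
    """Recover (Tenant_ID, MACASID, VLAN) from the outer headers and find the local VIF.

    outer         -- dict with "ip_src", "ip_dst", "ip_id" (hypothetical layout)
    inner_dst_mac -- destination MAC address of the tenant's original frame
    local_vifs    -- dict mapping (tenant_id, macasid, mac) -> VIF handle
    Returns (vif, vlan); vif is None when no matching VIF is hosted here.
    """
    tenant_id = int(ipaddress.IPv4Address(outer["ip_dst"])) & 0xFFFFFF
    macasid = int(ipaddress.IPv4Address(outer["ip_src"]))
    vlan = outer["ip_id"]  # the egress switch stripped the real VLAN tag
    vif = local_vifs.get((tenant_id, macasid, inner_dst_mac))
    return vif, vlan
```

Carrying the VLAN tag in IP.id matters because the outer VLAN tag is stripped by the egress edge switch before the packet reaches the server, so this copy is the only one the receiving NLA sees.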

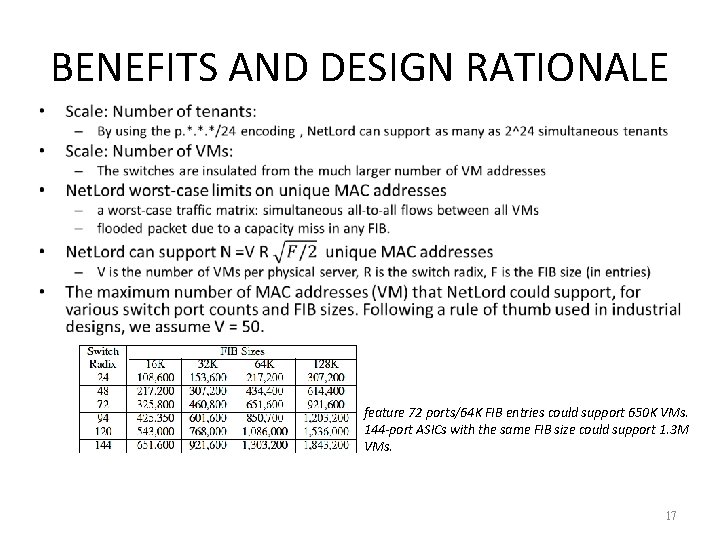

BENEFITS AND DESIGN RATIONALE
• Switches that feature 72 ports and 64K FIB entries could support 650K VMs
• 144-port ASICs with the same FIB size could support 1.3M VMs
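
The slide does not say how these figures are derived. A bound of the form V · R · sqrt(F / 2), with V VMs per server, switch radix R, and F FIB entries, reproduces both numbers when V = 50. Both the formula and the value V = 50 are assumptions here, not statements from the slide, so the sketch below is only a plausibility check.

```python
import math


def max_vms(vms_per_server, switch_radix, fib_entries):
    """Assumed worst-case bound: V * R * sqrt(F / 2)."""
    return vms_per_server * switch_radix * math.sqrt(fib_entries / 2)


FIB_64K = 64 * 1024

# Assumed V = 50 VMs per server.
vms_72_port = max_vms(50, 72, FIB_64K)    # roughly 650K
vms_144_port = max_vms(50, 144, FIB_64K)  # roughly 1.3M
```

Under this assumed bound, doubling the switch radix doubles the supported VM count, which matches the step from 650K to 1.3M on the slide.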

BENEFITS AND DESIGN RATIONALE
• Why encapsulate?
  – VM addresses can be hidden from switch FIBs either via encapsulation or via header rewriting
  – Drawbacks of encapsulation
    • (1) increased per-packet overhead: extra header bytes and CPU for processing them
    • (2) heightened chances of fragmentation and thus dropped packets
    • (3) increased complexity for in-network QoS processing
• Why not use MAC-in-MAC?
  – This would require the egress edge-switch FIB to map a VM destination MAC address
  – It also requires some mechanism to update the FIB when a VM starts, stops, or migrates
  – MAC-in-MAC is not yet widely supported in inexpensive datacenter switches
• NL-ARP overheads:
  – NL-ARP imposes two kinds of overheads:
    • bandwidth for its messages on the network
    • space on the servers for its tables
  – Assume each server has at least one 10 Gbps NIC; limiting NLA-HERE traffic to just 1% of that bandwidth
    • can sustain about 164,000 NLA-HERE messages per second
  – Each table entry is 32 bytes
    • a million entries can be stored in a 64 MB hash table
    • this table consumes about 0.8% of a server’s RAM (assuming 8 GB of RAM)
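
The NL-ARP overhead figures can be checked with a few lines of arithmetic. The per-message size is not given on the slide; the value computed below is only what the stated rate implies, and the hash-table load factor is likewise derived rather than quoted.

```python
# Bandwidth: 1% of a 10 Gbps NIC, spread over the stated message rate.
NIC_BPS = 10e9
budget_bps = 0.01 * NIC_BPS                 # 100 Mbps for NLA-HERE traffic
messages_per_second = 164_000
implied_bytes_per_message = budget_bps / messages_per_second / 8  # about 76 bytes

# Memory: one million 32-byte entries in a 64 MB hash table on an 8 GB server.
ENTRY_BYTES = 32
entries = 1_000_000
raw_bytes = entries * ENTRY_BYTES           # 32 million bytes of entries
table_bytes = 64 * 2**20                    # 64 MB table
load_factor = raw_bytes / table_bytes       # a little under one half
ram_fraction = table_bytes / (8 * 2**30)    # 1/128, about 0.8%
```

The memory figure is exact (64 MB is 1/128 of 8 GB); the message size is an inference from the two numbers on the slide, not a measured value.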

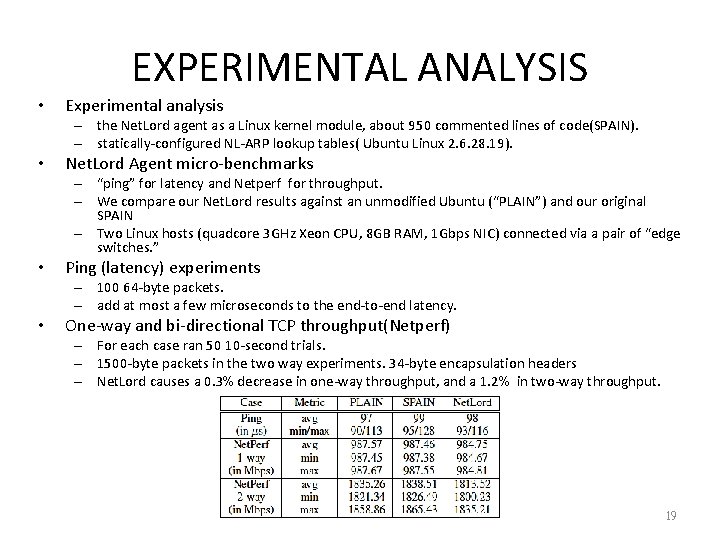

EXPERIMENTAL ANALYSIS
• Experimental analysis
  – implemented the NetLord agent as a Linux kernel module, adding about 950 commented lines of code to the SPAIN implementation
  – statically configured NL-ARP lookup tables (Ubuntu Linux 2.6.28.19)
• NetLord Agent micro-benchmarks
  – “ping” for latency and Netperf for throughput
  – We compare our NetLord results against an unmodified Ubuntu (“PLAIN”) and our original SPAIN
  – Two Linux hosts (quad-core 3 GHz Xeon CPU, 8 GB RAM, 1 Gbps NIC) connected via a pair of “edge switches”
• Ping (latency) experiments
  – 100 64-byte packets
  – NetLord adds at most a few microseconds to the end-to-end latency
• One-way and bi-directional TCP throughput (Netperf)
  – For each case we ran 50 10-second trials
  – 1500-byte packets in the two-way experiments; 34-byte encapsulation headers
  – NetLord causes a 0.3% decrease in one-way throughput, and a 1.2% decrease in two-way throughput

EXPERIMENTAL ANALYSIS
• Emulation methodology and testbed
  – testing on 74 servers
  – added a light-weight VM shim layer to the NLA kernel module
  – each TCP flow endpoint becomes an emulated VM
  – emulate up to V = 3000 VMs per server
  – 74 VMs per tenant on our 74 servers
• Metrics:
  – compute the goodput as the total number of bytes transferred by all tasks
• Testbed:
  – 74 servers that are part of a larger shared testbed
  – Each server has a quad-core 3 GHz Xeon CPU and 8 GB RAM
  – The 74 servers are distributed across 6 edge switches
  – All switch and NIC ports run at 1 Gbps, and the switches can hold 64K FIB table entries
• Topologies:
  – (i) FatTree topology
    • the entire testbed has 16 edge switches, plus another 8 switches to emulate 16 core switches
    • we constructed a two-level FatTree, with full bisection bandwidth
    • no oversubscription
  – (ii) Clique topology:
    • all 16 edge switches are connected to each other
    • oversubscribed by at most 2:1

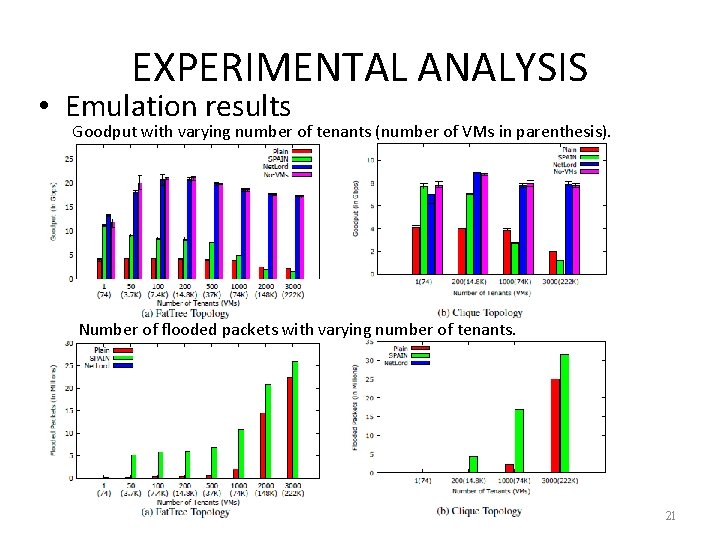

EXPERIMENTAL ANALYSIS
• Emulation results
  – Goodput with varying number of tenants (number of VMs in parentheses)
  – Number of flooded packets with varying number of tenants
