NEC 2011 - Varna, Bulgaria - September 12-19, 2011

Spanning from Data Acquisition to Grid: today, and a personal view of tomorrow

Livio Mapelli, CERN - Physics Dept.

Use the LHC experiments as the basis for analysis and future projections:
• Their requirements, present and future, are the most demanding in HEP
• The solutions developed are certainly valid in general in HEP, and possibly beyond

Outline
• The challenge for DAQ and Analysis (brief problem description)
• Concepts, models/architectures and designs
• Evolution?

Thanks to Sergio Cittolin, Ian Bird, Alexei Klimentov and Massimo Lamanna for their help and inspiring discussions. I have also enjoyed and consulted the LHC reports by P. Hristov, A. Klimentov, A. Tsaregorodtsev and P. Kreuzer.

NEC'11 - Varna, Bulgaria - 16.09.2011 - Livio Mapelli

Set the scene - Overall requirements

Any design must be requirements-driven. Physics at the LHC means:
• Identify and select single events out of 10 000 000
• with bunches crossing 40 000 000 times per second (once every 25 ns)
• producing several (>20) simultaneous p-p interactions every 25 ns, resulting in >1000 particles / 25 ns
• Transport and reduce in real time 100's of GB/s
• Store and retrieve efficiently 10's of PB of data per year

And at the end of the day ... be ready for big discoveries
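The rates behind these requirements follow from simple arithmetic on the 25 ns bunch spacing. A minimal sketch, assuming exactly 20 interactions and 1000 particles per crossing (the slide quotes both only as lower bounds); all variable names are illustrative:

```python
# Back-of-envelope rates from the requirements quoted above.
BUNCH_SPACING_NS = 25             # one bunch crossing every 25 ns
INTERACTIONS_PER_CROSSING = 20    # assumption: the slide says ">20"
PARTICLES_PER_CROSSING = 1000     # assumption: the slide says ">1000"

NS_PER_SECOND = 1_000_000_000

# Integer arithmetic keeps the results exact.
crossing_rate_hz = NS_PER_SECOND // BUNCH_SPACING_NS
interaction_rate_hz = crossing_rate_hz * INTERACTIONS_PER_CROSSING
particle_rate_hz = crossing_rate_hz * PARTICLES_PER_CROSSING

print(f"bunch crossings per second : {crossing_rate_hz:.1e}")
print(f"p-p interactions per second: {interaction_rate_hz:.1e}")
print(f"particles per second       : {particle_rate_hz:.1e}")
```

With these lower-bound inputs the detector sees 40 million crossings, at least 800 million interactions and at least 40 billion particles every second, which is why the selection has to happen in real time.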

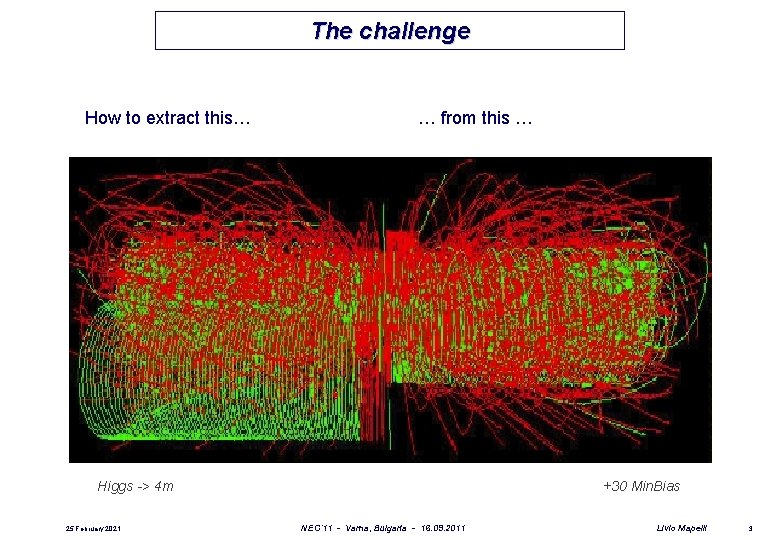

The challenge

How to extract this ... (Higgs -> 4μ)
... from this ... (+30 min. bias)

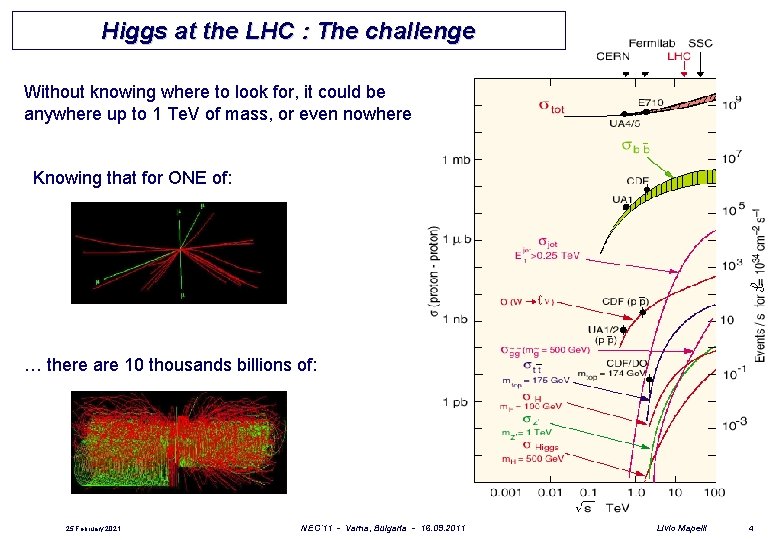

Higgs at the LHC: the challenge

• Without knowing where to look: it could be anywhere up to 1 TeV of mass, or even nowhere
• Knowing that for ONE of: ... there are ten thousand billion (10^13) of: ...

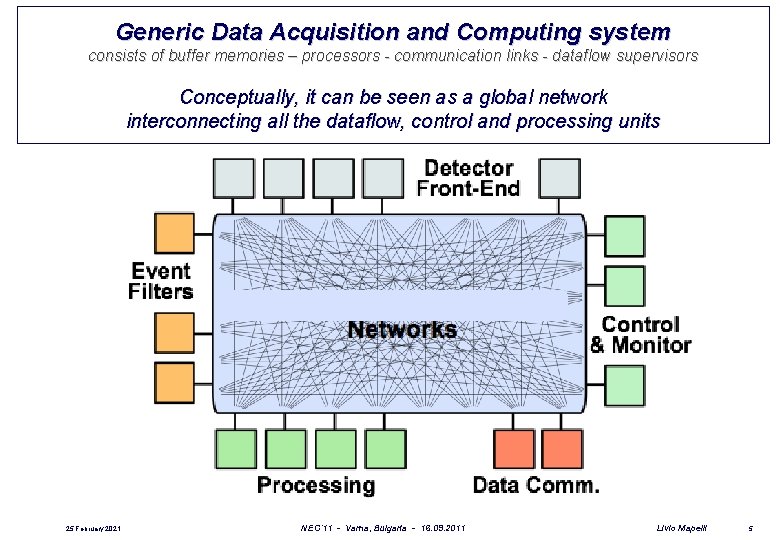

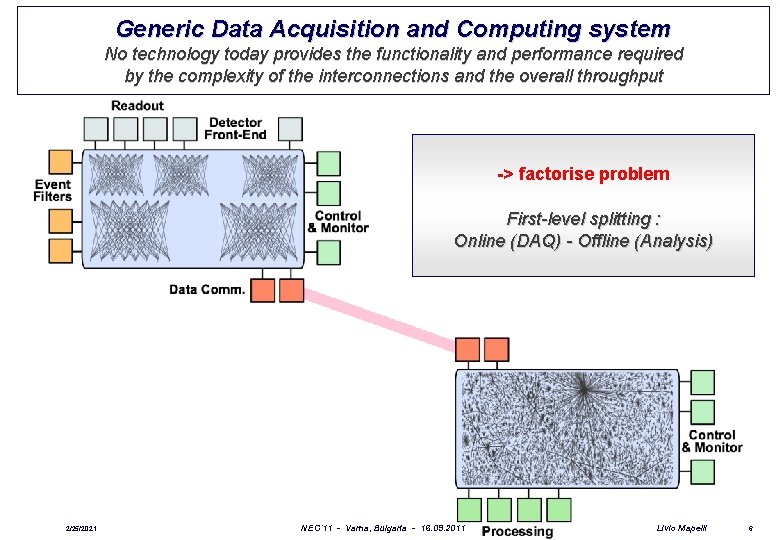

Generic Data Acquisition and Computing system

It consists of buffer memories, processors, communication links and dataflow supervisors. Conceptually, it can be seen as a global network interconnecting all the dataflow, control and processing units.

Generic Data Acquisition and Computing system

No technology today provides the functionality and performance required by the complexity of the interconnections and the overall throughput -> factorise the problem.

First-level splitting: Online (DAQ) - Offline (Analysis)

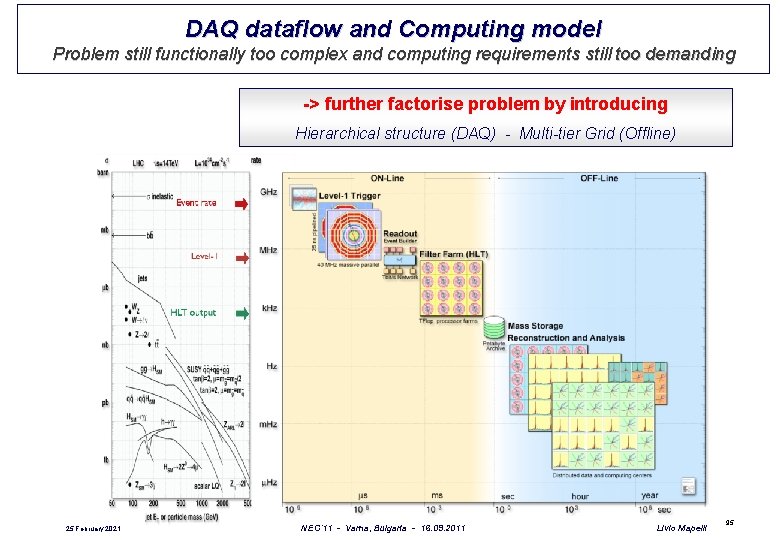

DAQ dataflow and Computing model

The problem is still functionally too complex and the computing requirements still too demanding -> factorise the problem further by introducing a hierarchical structure (DAQ) and a multi-tier Grid (Offline).

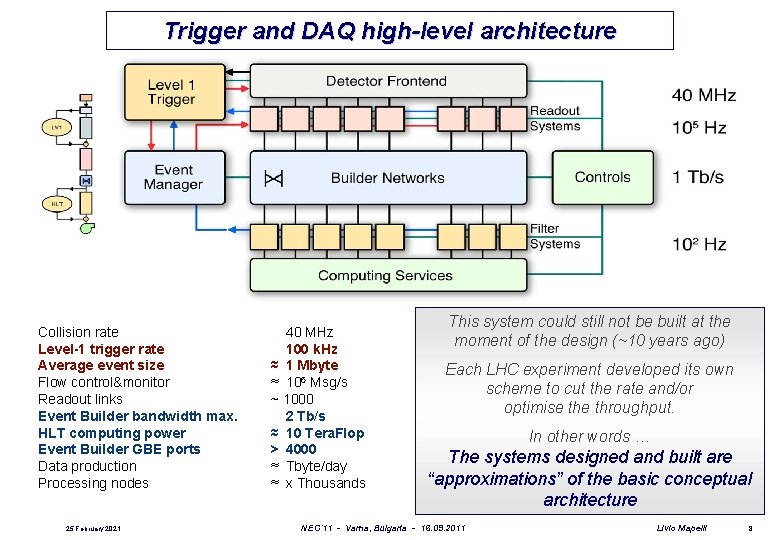

Trigger and DAQ high-level architecture

• Collision rate: 40 MHz
• Level-1 trigger rate: 100 kHz
• Average event size: ≈ 1 Mbyte
• Flow control & monitor: ≈ 10^6 Msg/s
• Readout links: ~1000
• Event Builder bandwidth (max.): 2 Tb/s
• HLT computing power: ≈ 10 TeraFlop
• Event Builder GBE ports: > 4000
• Data production: ≈ Tbyte/day
• Processing nodes: ≈ x Thousands

This system could still not be built at the moment of the design (~10 years ago). Each LHC experiment developed its own scheme to cut the rate and/or optimise throughput. In other words, the systems designed and built are "approximations" of the basic conceptual architecture.
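The event-building load implied by these parameters is the Level-1 rate times the average event size. A minimal sketch, assuming decimal units (1 Mbyte = 10^6 bytes); comparing the result with the quoted 2 Tb/s maximum is an illustration added here, not a statement from the slide:

```python
# Event-builder load implied by the parameters quoted above.
LEVEL1_RATE_HZ = 100_000                          # 100 kHz Level-1 accept rate
EVENT_SIZE_BYTES = 1_000_000                      # ~1 Mbyte (decimal units assumed)
EVENT_BUILDER_MAX_BITS_PER_S = 2_000_000_000_000  # 2 Tb/s quoted maximum


def event_builder_load_bits_per_s(rate_hz: int, event_size_bytes: int) -> int:
    """Bits per second that must cross the event-building network."""
    return rate_hz * event_size_bytes * 8


load_bits_per_s = event_builder_load_bits_per_s(LEVEL1_RATE_HZ, EVENT_SIZE_BYTES)
headroom = EVENT_BUILDER_MAX_BITS_PER_S / load_bits_per_s

print(f"required : {load_bits_per_s / 1e12:.1f} Tb/s")
print(f"headroom : x{headroom:.1f} against the quoted maximum")
```

Under these assumptions the network has to sustain 0.8 Tb/s (100 GB/s), which is the scale that motivates the experiment-specific "approximations" described next.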

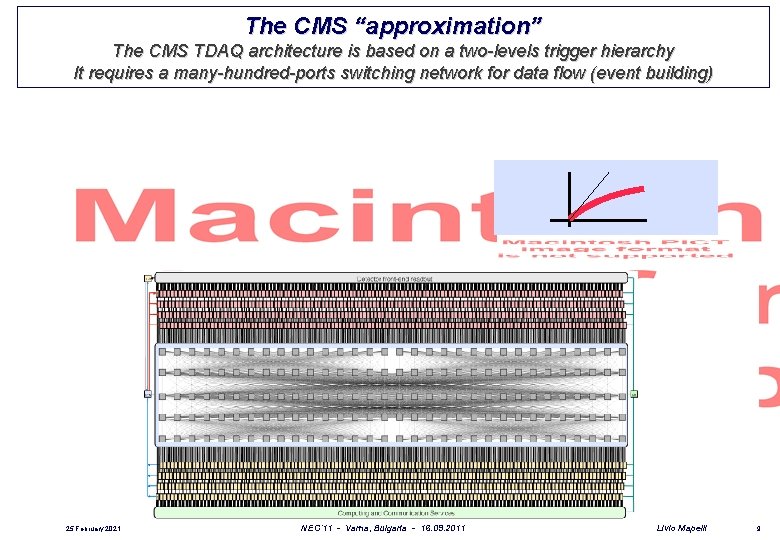

The CMS “approximation”

The CMS TDAQ architecture is based on a two-level trigger hierarchy. It requires a switching network with many hundreds of ports for data flow (event building).

The CMS “approximation”

Clever solution: introduce a two-step event-building mechanism that distributes events to separate, smaller networks (event multiplexing).

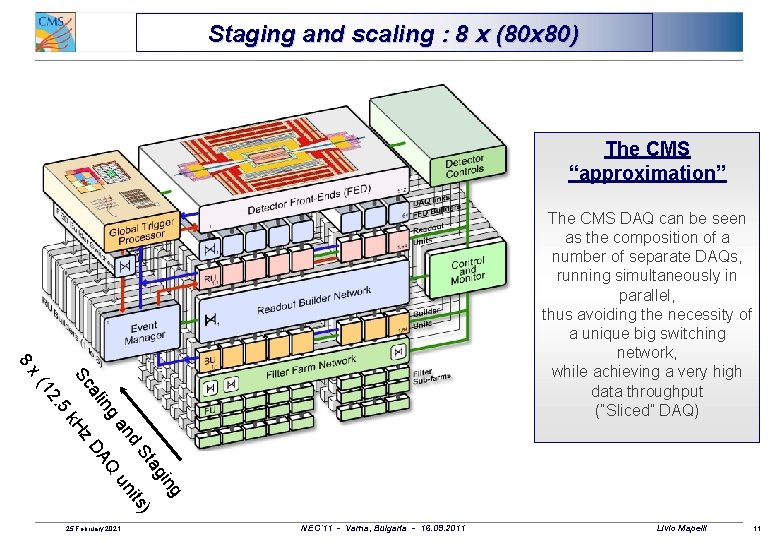

The CMS “approximation”

Staging and scaling: 8 x (80 x 80), i.e. 8 x (12.5 kHz DAQ units)

The CMS DAQ can be seen as the composition of a number of separate DAQs running in parallel, thus avoiding the need for a single big switching network while achieving a very high data throughput (“sliced” DAQ).
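The slicing idea can be illustrated with a toy event multiplexer. A minimal sketch, assuming a plain round-robin assignment by event number (a hypothetical rule chosen for illustration; the slide does not say how CMS actually assigns events to slices) and the 100 kHz Level-1 rate quoted earlier in the talk:

```python
from collections import Counter

N_SLICES = 8                # eight independent DAQ slices
LEVEL1_RATE_HZ = 100_000    # total Level-1 accept rate


def slice_for_event(event_number: int, n_slices: int = N_SLICES) -> int:
    """Hypothetical round-robin rule: consecutive events go to consecutive slices."""
    return event_number % n_slices


# Each slice only has to build events at 1/8 of the total rate.
per_slice_rate_hz = LEVEL1_RATE_HZ / N_SLICES

# Distribute a burst of events and count how many land in each slice.
events_per_slice = Counter(slice_for_event(n) for n in range(800))

print(f"rate per slice: {per_slice_rate_hz / 1e3} kHz")
print(dict(events_per_slice))
```

Each slice then needs only a moderate-size switch of its own, and slices can be installed one at a time, which is the "staging" part of the design.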

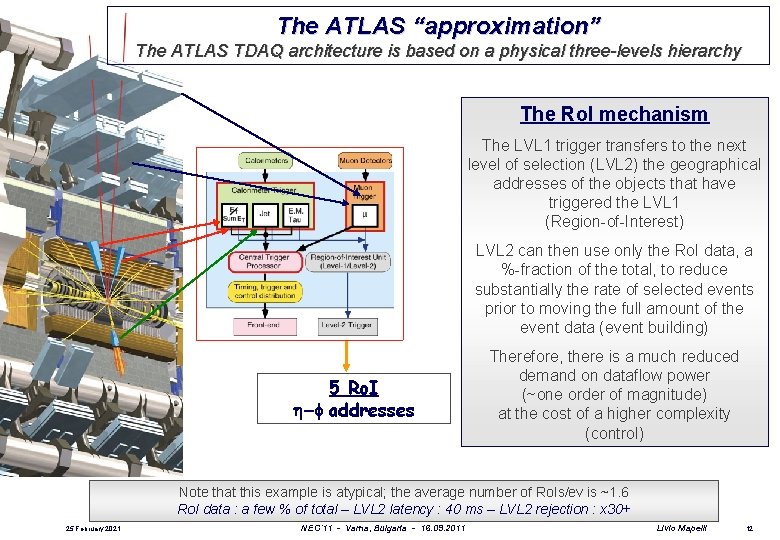

The ATLAS “approximation”

The ATLAS TDAQ architecture is based on a physical three-level hierarchy.

The RoI mechanism
• The LVL1 trigger transfers to the next level of selection (LVL2) the geographical addresses of the objects that fired LVL1 (Regions of Interest, RoIs)
• LVL2 can then use only the RoI data, a few % of the total, to substantially reduce the rate of selected events before the full event data are moved (event building)
• There is therefore a much reduced demand on dataflow power (~one order of magnitude), at the cost of higher (control) complexity

Figure: an event with 5 RoI η-φ addresses. Note that this example is atypical; the average number of RoIs per event is ~1.6.

RoI data: a few % of total - LVL2 latency: 40 ms - LVL2 rejection: x30+

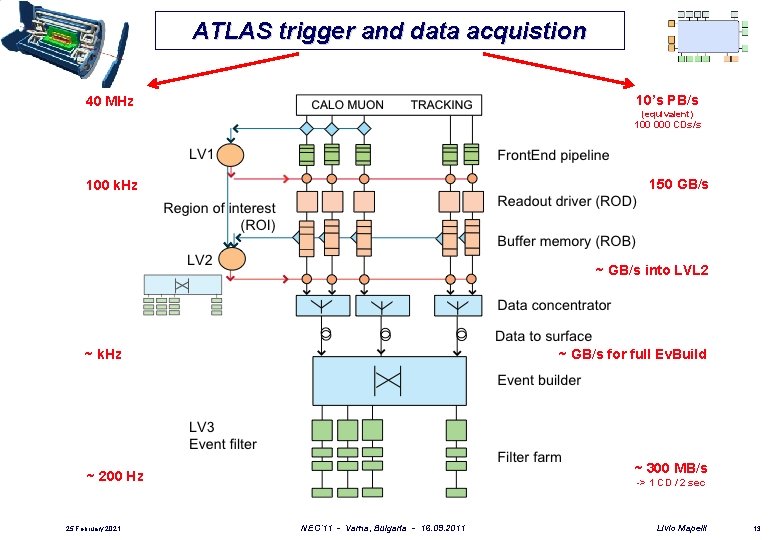

ATLAS trigger and data acquisition

• 40 MHz: 10’s PB/s (equivalent), i.e. 100 000 CDs/s
• 100 kHz: 150 GB/s, of which ~GB/s go into LVL2
• ~kHz: ~GB/s for full event building
• ~200 Hz: ~300 MB/s, i.e. 1 CD / 2 sec
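These figures are mutually consistent, as a short calculation shows. A minimal sketch, assuming an RoI fraction of exactly 2% and an LVL2 rejection of exactly 30 (the talk quotes only "a few %" and "x30+"); the event size is derived here from the quoted throughput and is not a number taken from the slides:

```python
# Rate cascade through the ATLAS trigger levels.
LVL1_RATE_HZ = 100_000                         # 100 kHz after LVL1
LVL1_THROUGHPUT_BYTES_PER_S = 150_000_000_000  # 150 GB/s at the LVL1 rate
STORAGE_RATE_HZ = 200                          # ~200 Hz written to storage
ROI_FRACTION_PERCENT = 2                       # assumption: "a few %"
LVL2_REJECTION = 30                            # assumption: "x30+"

# Derived quantity: average event size implied by the figures above.
event_size_bytes = LVL1_THROUGHPUT_BYTES_PER_S // LVL1_RATE_HZ

# LVL2 reads only the RoI fraction of each event.
lvl2_input_bytes_per_s = LVL1_THROUGHPUT_BYTES_PER_S * ROI_FRACTION_PERCENT // 100

# Only events accepted by LVL2 are fully built.
lvl2_output_rate_hz = LVL1_RATE_HZ / LVL2_REJECTION
event_building_bytes_per_s = lvl2_output_rate_hz * event_size_bytes

# Final output to permanent storage.
storage_bytes_per_s = STORAGE_RATE_HZ * event_size_bytes

print(f"event size      : {event_size_bytes / 1e6:.1f} MB")
print(f"into LVL2       : {lvl2_input_bytes_per_s / 1e9:.1f} GB/s")
print(f"LVL2 output rate: {lvl2_output_rate_hz / 1e3:.1f} kHz")
print(f"event building  : {event_building_bytes_per_s / 1e9:.1f} GB/s")
print(f"to storage      : {storage_bytes_per_s / 1e6:.0f} MB/s")
```

Under these assumptions the RoI mechanism cuts the data moved before the LVL2 decision from 150 GB/s to a few GB/s, in line with the "~GB/s into LVL2" and "~GB/s for full event building" entries.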

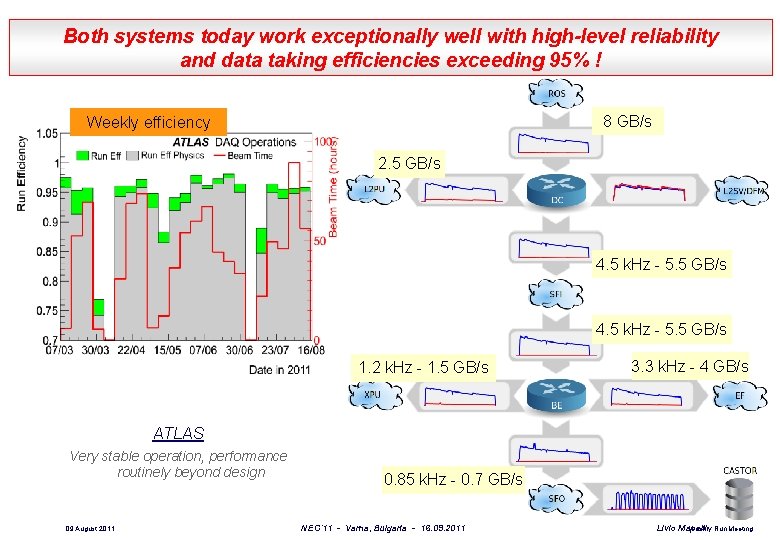

Both systems today work exceptionally well, with a high level of reliability and data-taking efficiencies exceeding 95%!

ATLAS: very stable operation, performance routinely beyond design (Weekly Run Meeting, 09 August 2011).

Figure labels (weekly efficiency plot and dataflow rates): 8 GB/s; 2.5 GB/s; 4.5 kHz - 5.5 GB/s; 1.2 kHz - 1.5 GB/s; 3.3 kHz - 4 GB/s; 0.85 kHz - 0.7 GB/s.

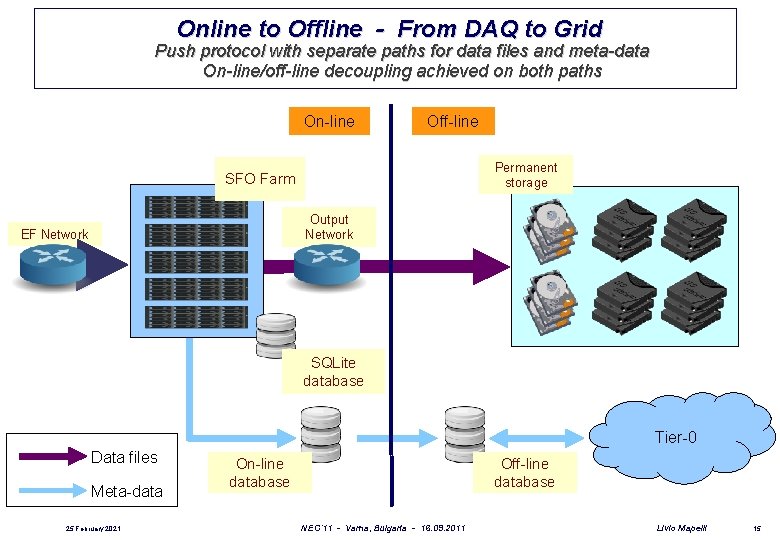

Online to Offline - From DAQ to Grid

• Push protocol with separate paths for data files and meta-data
• On-line/off-line decoupling achieved on both paths

Diagram labels: On-line / Off-line; EF Network; SFO Farm; Output Network; Tier-0; Permanent storage; Data files; Meta-data; On-line database; SQLite database; Off-line database.

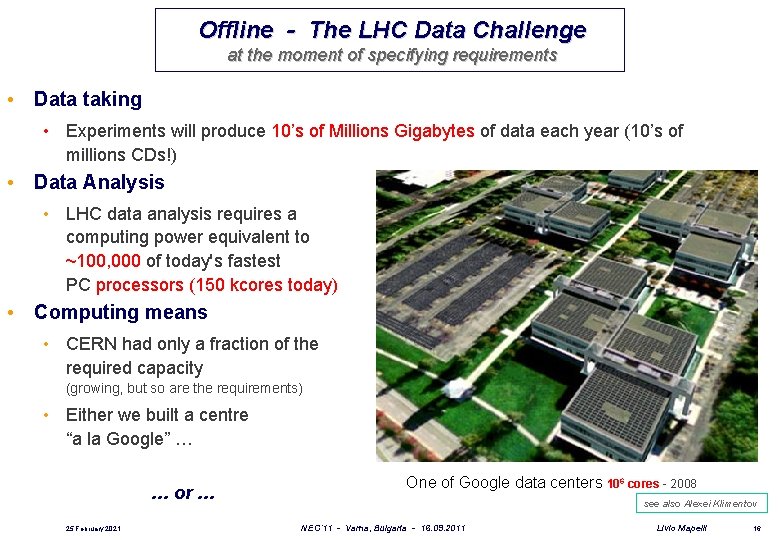

Offline - The LHC Data Challenge at the moment of specifying requirements

• Data taking: experiments will produce 10’s of millions of Gigabytes of data each year (10’s of millions of CDs!)
• Data analysis: LHC data analysis requires a computing power equivalent to ~100,000 of today's fastest PC processors (150 kcores today)
• Computing means: CERN had only a fraction of the required capacity (growing, but so are the requirements)
• Either we built a centre “à la Google” ... or ...

Figure: one of Google's data centres (10^6 cores, 2008). See also Alexei Klimentov.

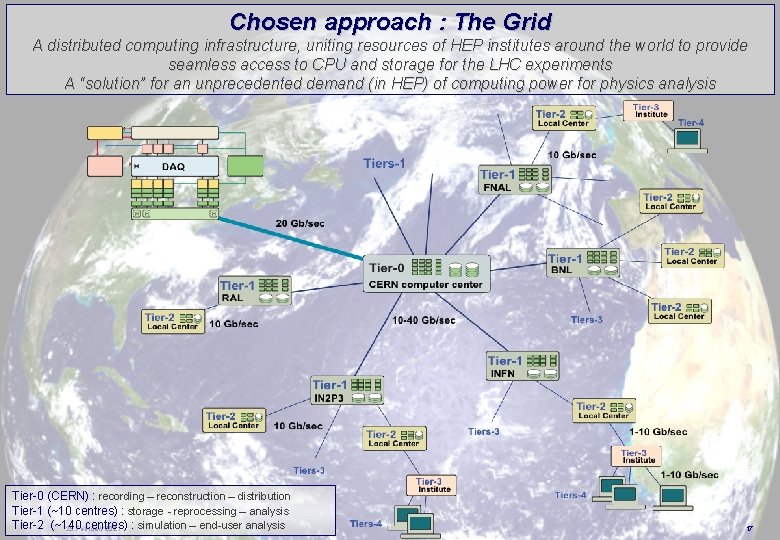

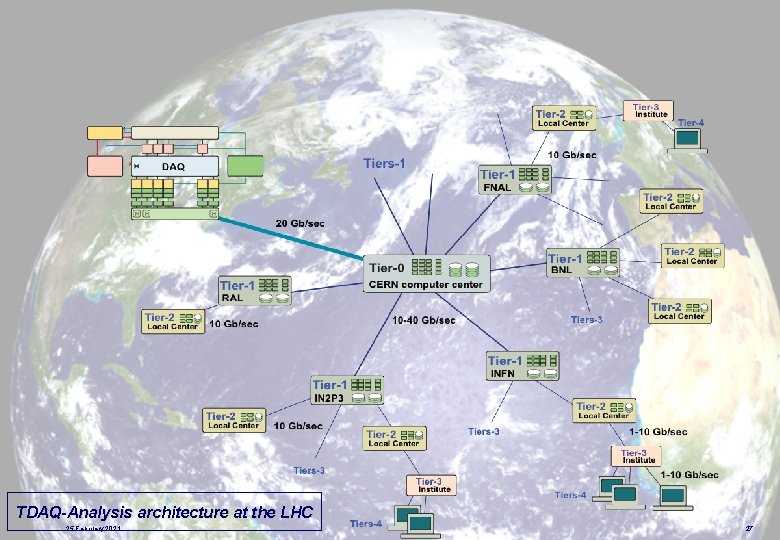

Chosen approach: The Grid

A distributed computing infrastructure, uniting resources of HEP institutes around the world to provide seamless access to CPU and storage for the LHC experiments. A “solution” for an unprecedented demand (in HEP) of computing power for physics analysis.

• Tier-0 (CERN): recording - reconstruction - distribution
• Tier-1 (~10 centres): storage - reprocessing - analysis
• Tier-2 (~140 centres): simulation - end-user analysis

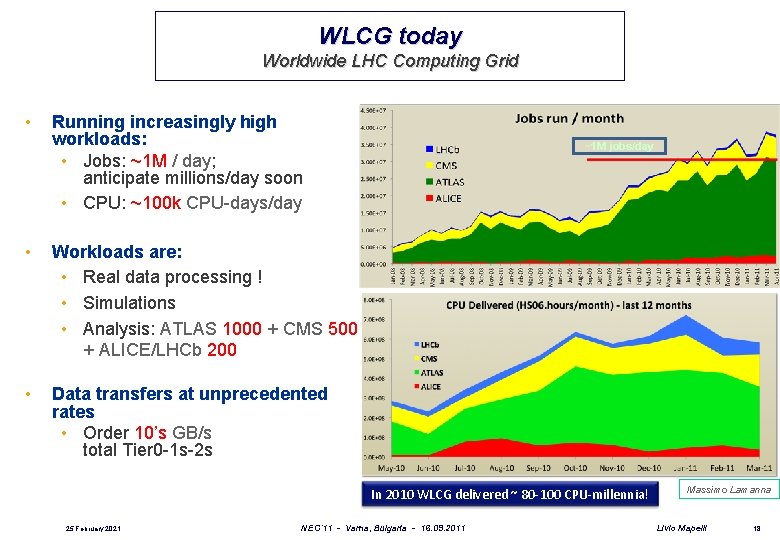

WLCG today

Worldwide LHC Computing Grid (Massimo Lamanna)

• Running increasingly high workloads:
  • Jobs: ~1 M/day; anticipate millions/day soon
  • CPU: ~100 k CPU-days/day
• Workloads are:
  • Real data processing!
  • Simulations
  • Analysis: ATLAS 1000 + CMS 500 + ALICE/LHCb 200
• Data transfers at unprecedented rates:
  • Order 10’s GB/s total Tier-0 - Tier-1s - Tier-2s

In 2010 WLCG delivered ~80-100 CPU-millennia!
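The daily and yearly figures can be cross-checked against each other. A minimal sketch, assuming the ~100 k CPU-days/day rate is sustained over a full 365-day year (an assumption made here; the quoted range of 80-100 suggests the real average was somewhat lower):

```python
# Cross-check of the WLCG workload figures quoted above.
CPU_DAYS_PER_DAY = 100_000   # ~100 k CPU-days delivered per day
JOBS_PER_DAY = 1_000_000     # ~1 M jobs per day
DAYS_PER_YEAR = 365          # assumption: rate sustained all year

# CPU time delivered in one year, expressed in CPU-years and CPU-millennia.
cpu_days_per_year = CPU_DAYS_PER_DAY * DAYS_PER_YEAR
cpu_years = cpu_days_per_year // DAYS_PER_YEAR
cpu_millennia = cpu_years / 1000

# Average CPU time consumed by a single job.
avg_cpu_hours_per_job = CPU_DAYS_PER_DAY * 24 / JOBS_PER_DAY

print(f"CPU-millennia per year : {cpu_millennia:.0f}")
print(f"average CPU hours / job: {avg_cpu_hours_per_job:.1f}")
```

In other words, ~100 k CPU-days/day is equivalent to about 100 000 cores busy around the clock, which matches the upper end of the "80-100 CPU-millennia" delivered in 2010.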

Where do we go from here?

The LHC programme (machine, experiments, computing) has taken 25 years to complete, from conception to operation. It is expected to operate for probably as long. 25 years spans several generations of technology: we must guarantee stability and sustainability, but also evolution at the same time.

For Data Acquisition and Offline Computing, I expect:
• a continuous “adiabatic” evolution, with optimisation, improvements and “smooth” adaptation to new tools and evolving technologies,
• alternating with (also major) structural technology changes at given times, as allowed by intervals in data taking and as required by detector changes (upgrades) and increasing demands.

Data Acquisition

• Evolution of existing systems
  • Study evolutions of DAQ systems based on current architectures (Nicoletta Garelli), preparing for the upgrades...
  • No big structural changes are possible during data taking, and anyway none are needed in the short term
• Hardware
  • Performance improvements from better products (CPUs...) and optimised use of resources (all systems have a degree of openness and scalability potential...)
  • Technology changes in major shutdowns? In any case, no R&D is needed in this area, but careful technology tracking is!
• Software
  • All experiments have mature systems, in many respects based on different solutions
  • ... unnecessarily so: at the moment of design and development we did not have a mature understanding of the problems and their possible solutions. We were pioneering, inventing solutions (and everybody pioneers his/her own way)
  • Today we are mature enough to analyse the different solutions to the same problems, with the aim of evolving towards systems incorporating the best of each: lots of room for improvement!
  • The main issue: can we “reverse engineer” in a non-disruptive way? Can we evolve towards more modern software for the major experiment upgrades?
  • This requires a management decision; I believe it should be done (at least the analysis)

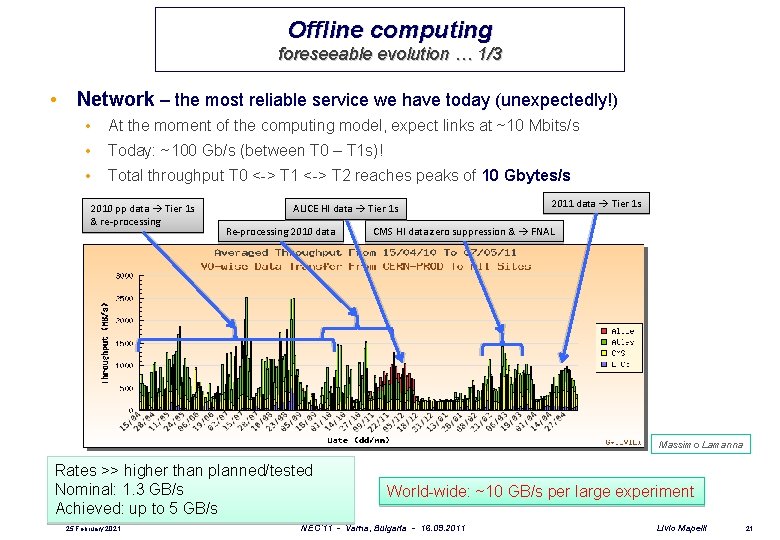

Offline computing foreseeable evolution ... 1/3

• Network: the most reliable service we have today (unexpectedly!)
  • When the computing model was designed, links were expected at ~10 Mbit/s
  • Today: ~100 Gb/s (between T0 and T1s)!
  • Total throughput T0 <-> T1 <-> T2 reaches peaks of 10 GB/s

Figure (Massimo Lamanna): transfer rates over time, with annotated periods: 2010 pp data, Tier-1s & re-processing; ALICE HI data, Tier-1s; re-processing 2010 data; 2011 data, Tier-1s; CMS HI data zero suppression & FNAL.

Rates much higher than planned/tested. Nominal: 1.3 GB/s. Achieved: up to 5 GB/s. World-wide: ~10 GB/s per large experiment.

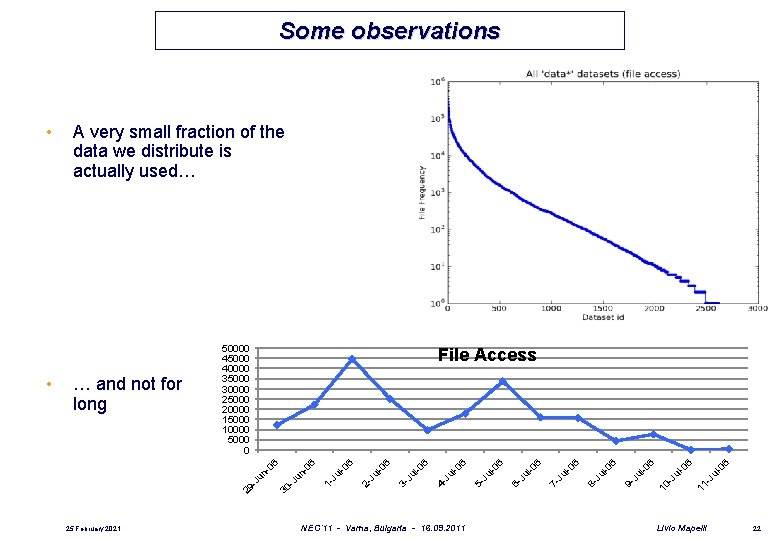

Some observations

• A very small fraction of the data we distribute is actually used...
• ... and not for long

Figure: “File Access” plot (vertical axis 0 to 50000; horizontal axis 29-Jun-06 to 11-Jul-06).

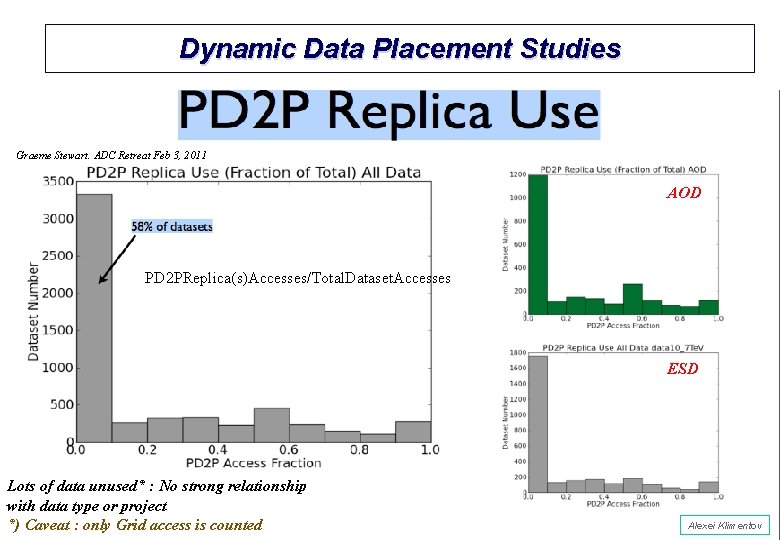

Dynamic Data Placement Studies

Graeme Stewart, ADC Retreat, Feb 3, 2011 (Alexei Klimentov)

Figure: PD2P, replica(s) accesses / total dataset accesses, for AOD and ESD.

Lots of data unused*: no strong relationship with data type or project.

*) Caveat: only Grid access is counted.

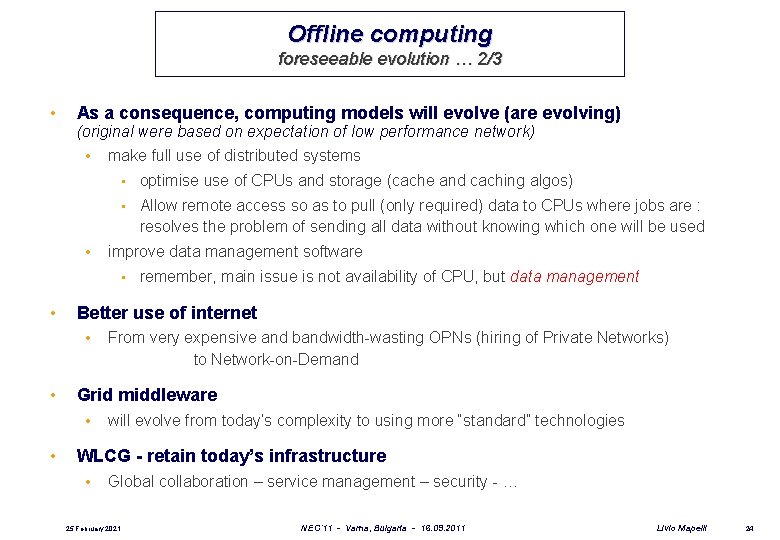

Offline computing foreseeable evolution ... 2/3

• As a consequence, computing models will evolve (are evolving); the original ones were based on the expectation of a low-performance network
  • Make full use of distributed systems
  • Optimise use of CPUs and storage (cache and caching algorithms)
  • Allow remote access so as to pull (only the required) data to the CPUs where the jobs are: this resolves the problem of sending all data without knowing which will be used
• Improve data management software
  • Remember, the main issue is not availability of CPU, but data management
• Better use of internet
  • From very expensive and bandwidth-wasting OPNs (hiring of Private Networks) to Network-on-Demand
• Grid middleware
  • Will evolve from today’s complexity to using more “standard” technologies
• WLCG: retain today’s infrastructure
  • Global collaboration - service management - security - ...
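The "pull only the required data, and cache it" idea can be illustrated with a toy site cache. A minimal sketch, assuming a least-recently-used eviction policy and a caller-supplied `fetch_remote` function; both are hypothetical choices made for illustration and do not describe any actual WLCG data management tool:

```python
from collections import OrderedDict
from typing import Callable


class SiteCache:
    """Toy pull-on-demand cache: data is fetched remotely only when a job asks for it."""

    def __init__(self, capacity: int, fetch_remote: Callable[[str], str]) -> None:
        self._capacity = capacity          # maximum number of datasets kept locally
        self._fetch_remote = fetch_remote  # hypothetical remote-read function
        self._store: OrderedDict[str, str] = OrderedDict()
        self.hits = 0
        self.remote_reads = 0

    def read(self, dataset: str) -> str:
        if dataset in self._store:
            # Local hit: mark the dataset as most recently used.
            self._store.move_to_end(dataset)
            self.hits += 1
            return self._store[dataset]
        # Miss: pull the dataset over the network, then keep a local copy.
        data = self._fetch_remote(dataset)
        self.remote_reads += 1
        self._store[dataset] = data
        if len(self._store) > self._capacity:
            # Evict the least recently used dataset.
            self._store.popitem(last=False)
        return data


# Usage: a site that can hold two datasets serves five job requests.
cache = SiteCache(capacity=2, fetch_remote=lambda name: f"payload:{name}")
for requested in ["a", "b", "a", "c", "b"]:
    cache.read(requested)
print(f"hits={cache.hits} remote_reads={cache.remote_reads}")
```

Nothing is transferred until a job actually asks for it, so unused datasets cost no bandwidth or disk; the price is a remote read on every miss, which is only affordable because the network turned out to be far better than the original models assumed.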

Offline computing foreseeable evolution ... 3/3

• Virtualisation
  • Grid -> Clouds, i.e. virtualisation will allow the WLCG distributed computing environment to use cloud technology (dynamic allocation of resources)
• “Globalisation”? (take advantage of it?)
  • HEP has been a leader in needing and building global collaborations
  • It is no longer unique: many other sciences now have similar needs (life sciences, astrophysics, ...)
  • Anticipate huge data volumes
  • We must also collaborate on common solutions where possible, also banking on the important lessons from our experience

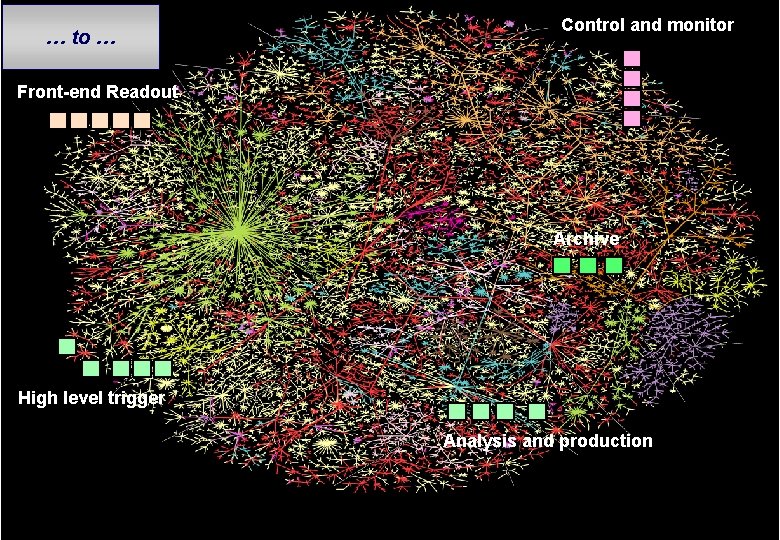

And next, for the more distant future?

The present is based on decisions taken ~10 years ago. In the meantime ... the internet exploded on us!

In fact, the current evolution trends (previous slides) all point in the direction of an increasingly optimised (and simplified!) use of the internet.

How far will we go towards implementing the basic on-/offline conceptual architecture purely on the internet and internet services? I.e. move from ...

TDAQ-Analysis architecture at the LHC

... to ...

Diagram labels: Control and monitor; Front-end readout; Archive; High-level trigger; Analysis and production.

Crazy idea? Probably. But if it ever happens, it will come “naturally” (certainly not tomorrow, though).

• Certainly, the 1st-level trigger and front-end readout will always be on a local, dedicated experiment network and make use of custom solutions in places
• But High-Level Triggers, dataflow, controls and monitoring farms (on-/off-line)? Already today trigger algorithms and online and offline monitoring are based on the same software, and operationally the separation is becoming fictitious...

Can we base everything on internet services? Even if it is technically feasible (bandwidth would still be an issue today), a few (non-minor) issues are to be addressed:
• Security
• Service availability (local buffering, and a lot of it! 100s of PB)
• Cost
• but also the online-offline cultural barrier, and the PHYSICISTS’ cultural barrier...
• Good software (data management, but not only...)

Conclusions

• Past experience teaches that any long-term projection is meaningless, and anyway unnecessary
  • One should not forget that today the “centralisation” of all resources is also technically feasible, in fact easier and cheaper, implementing equally well the basic conceptual on/offline model and serving a distributed community in an equally transparent way ... but ... politics ... (see discussion earlier in the session ... Clouds as a half-way solution?)
• Much more important is to choose the right path and strategy for evolution
• For DAQ and offline computing this is NOT R&D (with limited, very specific exceptions): it is rather TECHNOLOGY TRACKING and FUNCTIONAL PROTOTYPING
  • I.e., effort and resources must be invested in functional prototypes aimed at testing the suitability of new technologies to solve our problems, and also as support for learning how to write BETTER SOFTWARE
  • This is what we have been forced to do for many years during the development of the “moving target” LHC experiments, in a period of exceptionally rapid technology change
• We have learnt the technique, we have been successful, we should now bank on it!
