
Nearest Neighbor Search in High-Dimensional Spaces Alexandr Andoni (Microsoft Research Silicon Valley)

Nearest Neighbor Search (NNS)
- Preprocess: a set D of points
- Query: given a new point q, report a point p ∈ D with the smallest distance to q
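As a baseline for the problem statement above, here is a minimal sketch of exact NNS by linear scan, assuming Euclidean distance and points stored as tuples. The function name `nearest_neighbor` is hypothetical and not from the slides.

```python
import math

def nearest_neighbor(points, q):
    """Return the point in `points` closest to q (Euclidean), by linear scan.

    Hypothetical helper for illustration: O(d*n) time per query, no preprocessing.
    """
    return min(points, key=lambda p: math.dist(p, q))

D = [(0.0, 0.0), (3.0, 4.0), (1.0, 1.0)]
q = (0.9, 1.2)
print(nearest_neighbor(D, q))
```

Every other method in the talk can be read as an attempt to beat this scan in query time without paying exponentially in the dimension.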

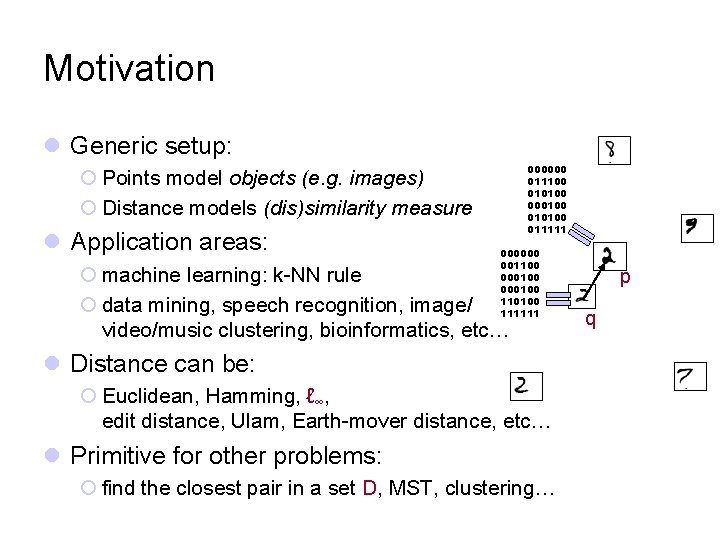

Motivation
- Generic setup:
  - Points model objects (e.g. images)
  - Distance models (dis)similarity measure
- Application areas:
  - machine learning: k-NN rule
  - data mining, speech recognition, image/video/music clustering, bioinformatics, etc.
- Distance can be:
  - Euclidean, Hamming, ℓ∞, edit distance, Ulam, Earth-mover distance, etc.
- Primitive for other problems:
  - find the closest pair in a set D, MST, clustering…

Further motivation? eHarmony: 29 Dimensions® of Compatibility

Plan for today
1. NNS for basic distances
2. NNS for advanced distances: reductions
3. NNS via composition


Euclidean distance

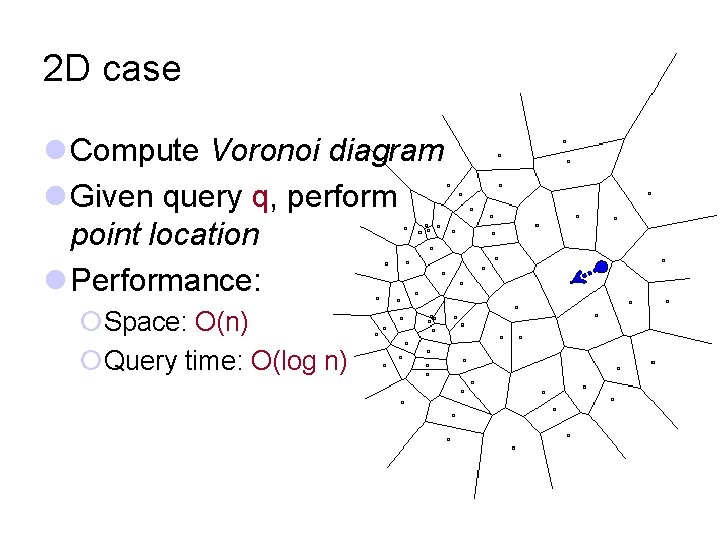

2D case
- Compute Voronoi diagram
- Given query q, perform point location
- Performance:
  - Space: O(n)
  - Query time: O(log n)

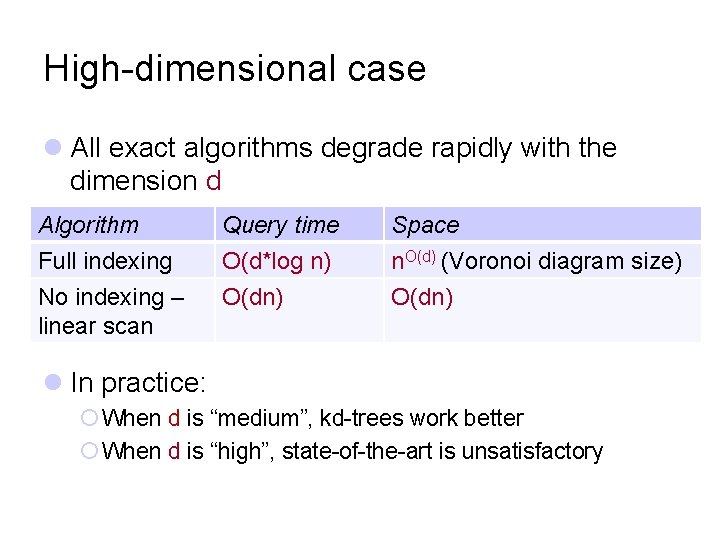

High-dimensional case
- All exact algorithms degrade rapidly with the dimension d

| Algorithm | Query time | Space |
| --- | --- | --- |
| Full indexing | O(d·log n) | n^{O(d)} (Voronoi diagram size) |
| No indexing – linear scan | O(dn) | |

- In practice:
  - When d is “medium”, kd-trees work better
  - When d is “high”, state-of-the-art is unsatisfactory

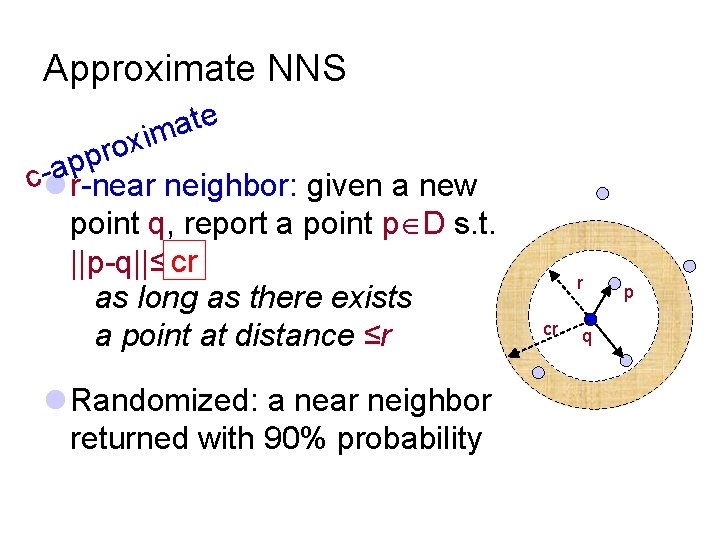

Approximate NNS
- c-approximate r-near neighbor: given a new point q, report a point p ∈ D s.t. ||p−q|| ≤ cr, as long as there exists a point at distance ≤ r
- Randomized: a near neighbor is returned with 90% probability

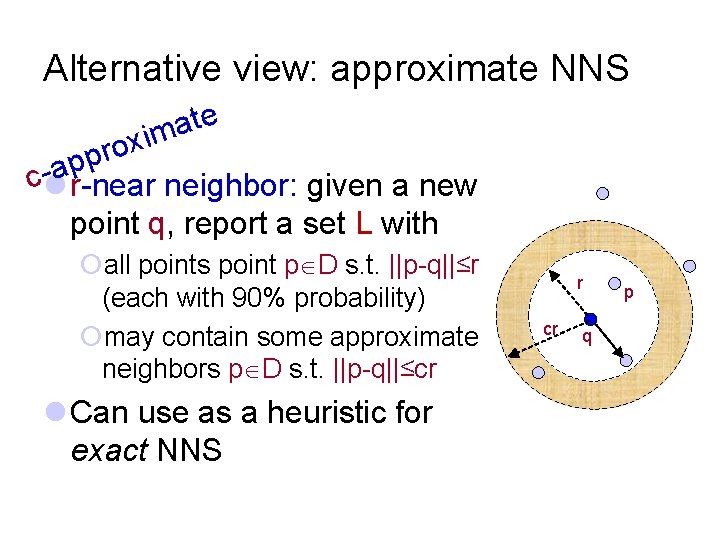

Alternative view: approximate NNS
- c-approximate r-near neighbor: given a new point q, report a set L with
  - all points p ∈ D s.t. ||p−q|| ≤ r (each with 90% probability)
  - may contain some approximate neighbors p ∈ D s.t. ||p−q|| ≤ cr
- Can use as a heuristic for exact NNS

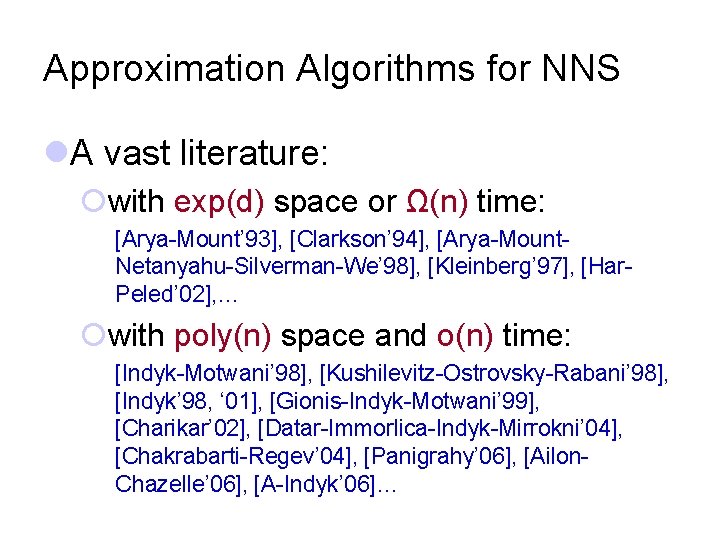

Approximation Algorithms for NNS
- A vast literature:
  - with exp(d) space or Ω(n) time: [Arya-Mount’93], [Clarkson’94], [Arya-Mount-Netanyahu-Silverman-Wu’98], [Kleinberg’97], [Har-Peled’02], …
  - with poly(n) space and o(n) time: [Indyk-Motwani’98], [Kushilevitz-Ostrovsky-Rabani’98], [Indyk’98, ’01], [Gionis-Indyk-Motwani’99], [Charikar’02], [Datar-Immorlica-Indyk-Mirrokni’04], [Chakrabarti-Regev’04], [Panigrahy’06], [Ailon-Chazelle’06], [A-Indyk’06], …

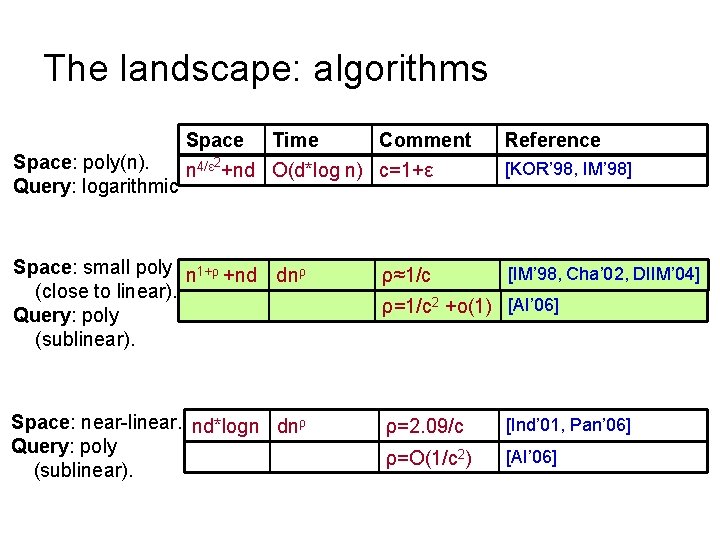

The landscape: algorithms

| Regime | Space | Time | Comment | Reference |
| --- | --- | --- | --- | --- |
| Space: poly(n). Query: logarithmic | n^{4/ε²} + nd | O(d·log n) | c = 1+ε | [KOR’98, IM’98] |
| Space: small poly (close to linear). Query: poly (sublinear) | n^{1+ρ} + nd | d·n^ρ | ρ ≈ 1/c | [IM’98, Cha’02, DIIM’04] |
| | | | ρ = 1/c² + o(1) | [AI’06] |
| Space: near-linear. Query: poly (sublinear) | nd·log n | d·n^ρ | ρ = 2.09/c | [Ind’01, Pan’06] |
| | | | ρ = O(1/c²) | [AI’06] |

![Locality-Sensitive Hashing [Indyk-Motwani’98]](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-14.jpg)

Locality-Sensitive Hashing [Indyk-Motwani’98]
- Random hash function g: R^d → Z s.t. for any points p, q:
  - For a close pair p, q (||p−q|| ≤ r): P1 = Pr[g(p)=g(q)] is “high” (“not-so-small”)
  - For a far pair p, q (||p−q|| > cr): P2 = Pr[g(p)=g(q)] is “small”
- Use several hash tables: n^ρ, where ρ < 1 s.t. ρ = log(1/P1) / log(1/P2)

[Figure: collision probability Pr[g(p)=g(q)] plotted against ||p−q||, with level P1 at distance r and level P2 at distance cr]
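To make the table count concrete, here is a small sketch that computes the exponent and the resulting number of hash tables. It assumes the standard definition ρ = log(1/P1) / log(1/P2); the function names and the sample values of P1, P2, and n are hypothetical, chosen only for illustration.

```python
import math

def lsh_exponent(p1, p2):
    """Exponent rho = log(1/P1) / log(1/P2); requires 0 < p2 < p1 < 1."""
    return math.log(1.0 / p1) / math.log(1.0 / p2)

def num_tables(n, p1, p2):
    """Roughly n^rho hash tables for a data set of n points (constants ignored)."""
    return math.ceil(n ** lsh_exponent(p1, p2))

# Illustrative values only: close pairs collide w.p. 0.5, far pairs w.p. 0.25.
rho = lsh_exponent(0.5, 0.25)
print(rho, num_tables(10**6, 0.5, 0.25))
```

Since P1 > P2, the exponent is below 1, so the number of tables (and hence the query time) grows sublinearly in n.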

![Example of hash functions: grids [Datar-Immorlica-Indyk-Mirrokni’04]](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-15.jpg)

Example of hash functions: grids [Datar-Immorlica-Indyk-Mirrokni’04]
- Pick a regular grid:
  - Shift and rotate randomly
- Hash function:
  - g(p) = index of the cell of p
- Gives ρ ≈ 1/c
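The grid hash can be sketched in a few lines. This is a simplified illustration, not the construction from [Datar-Immorlica-Indyk-Mirrokni’04]: it applies only a random shift (the random rotation is omitted), and the names `make_grid_hash`, `build_tables`, `query`, and the parameter `accept_radius` (playing the role of cr) are hypothetical.

```python
import math
import random
from collections import defaultdict

def make_grid_hash(dim, cell_width, rng):
    """Randomly shifted axis-aligned grid; g(p) is the index of p's cell.
    Assumption: rotation is skipped to keep the sketch short."""
    shift = [rng.uniform(0, cell_width) for _ in range(dim)]
    def g(p):
        return tuple(math.floor((x + s) / cell_width) for x, s in zip(p, shift))
    return g

def build_tables(points, dim, cell_width, num_tables, seed=0):
    """One hash table per independent random grid."""
    rng = random.Random(seed)
    tables = []
    for _ in range(num_tables):
        g = make_grid_hash(dim, cell_width, rng)
        buckets = defaultdict(list)
        for p in points:
            buckets[g(p)].append(p)
        tables.append((g, buckets))
    return tables

def query(tables, q, accept_radius):
    """Return some point in q's buckets within accept_radius, or None."""
    for g, buckets in tables:
        for p in buckets.get(g(q), []):
            if math.dist(p, q) <= accept_radius:
                return p
    return None

tables = build_tables([(0.0, 0.0), (10.0, 10.0)], dim=2, cell_width=4.0, num_tables=20)
print(query(tables, (0.1, 0.1), accept_radius=1.0))
```

Only points that share a cell with q in at least one table are ever compared against q, which is where the saving over a linear scan comes from.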

![Near-Optimal LSH [A-Indyk’06]](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-16.jpg)

Near-Optimal LSH [A-Indyk’06]
- Regular grid → grid of balls
  - p can hit empty space, so take more such grids until p is in a ball
- Need (too) many grids of balls
  - Start by projecting in dimension t
- Analysis gives ρ = 1/c² + o(1)
- Choice of reduced dimension t?
  - Tradeoff between
    - # hash tables, n^ρ, and
    - Time to hash, t^{O(t)}
  - Total query time: d·n^{1/c² + o(1)}

[Figure: 2D illustration of a point p in R^t covered by a grid of balls]

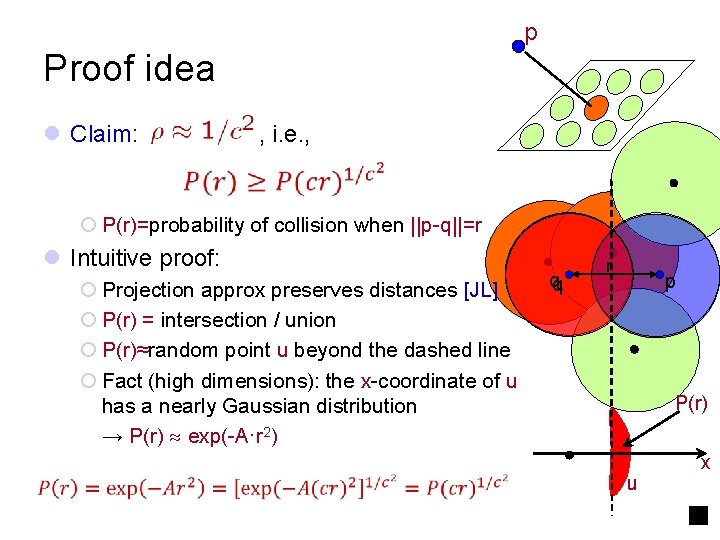

Proof idea
- Claim: …, i.e., …
  - P(r) = probability of collision when ||p−q|| = r
- Intuitive proof:
  - Projection approximately preserves distances [JL]
  - P(r) = intersection / union
  - P(r) ≈ probability that a random point u lies beyond the dashed line
  - Fact (high dimensions): the x-coordinate of u has a nearly Gaussian distribution → P(r) ≈ exp(−A·r²)
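A short worked step shows why a Gaussian-like collision probability yields the 1/c² exponent. It assumes the standard LSH exponent ρ = log(1/P1) / log(1/P2) with P1 = P(r) and P2 = P(cr), and treats the approximation P(r) ≈ exp(−A·r²) as exact; the constant A cancels.

```latex
\rho \;=\; \frac{\ln\bigl(1/P(r)\bigr)}{\ln\bigl(1/P(cr)\bigr)}
     \;\approx\; \frac{A\,r^{2}}{A\,(cr)^{2}}
     \;=\; \frac{1}{c^{2}}
```

The o(1) term in the stated bound accounts for the error in the approximation, which this sketch ignores.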

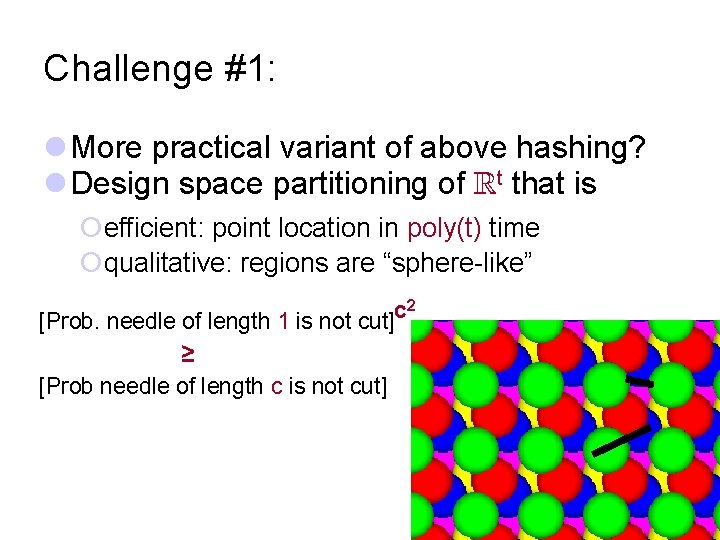

Challenge #1:
- More practical variant of above hashing?
- Design space partitioning of R^t that is
  - efficient: point location in poly(t) time
  - qualitative: regions are “sphere-like”, i.e. [Prob. needle of length 1 is not cut] ≥ [Prob. needle of length c is not cut]^{1/c²}

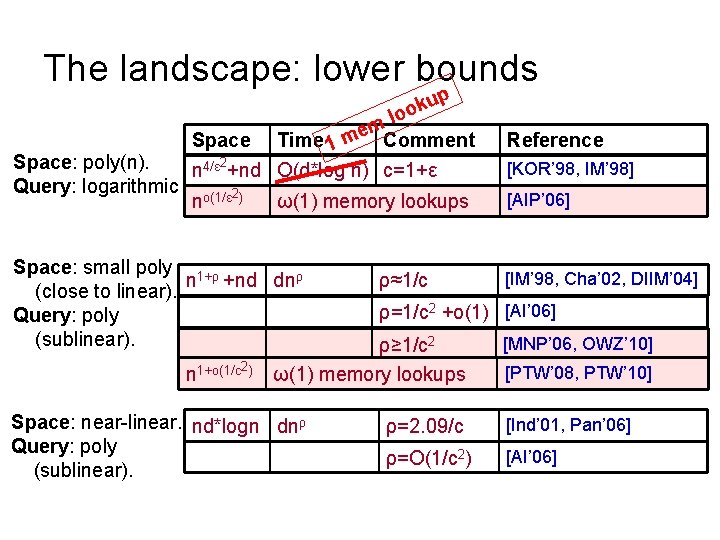

The landscape: lower bounds

| Regime | Space | Time | Comment | Reference |
| --- | --- | --- | --- | --- |
| Space: poly(n). Query: logarithmic | n^{4/ε²} + nd | O(d·log n) | c = 1+ε | [KOR’98, IM’98] |
| (lower bound) | n^{o(1/ε²)} | ω(1) memory lookups | | [AIP’06] |
| Space: small poly (close to linear). Query: poly (sublinear) | n^{1+ρ} + nd | d·n^ρ | ρ ≈ 1/c | [IM’98, Cha’02, DIIM’04] |
| | | | ρ = 1/c² + o(1) | [AI’06] |
| (lower bound) | | | ρ ≥ 1/c² | [MNP’06, OWZ’10] |
| (lower bound) | n^{1+o(1/c²)} | ω(1) memory lookups | | [PTW’08, PTW’10] |
| Space: near-linear. Query: poly (sublinear) | nd·log n | d·n^ρ | ρ = 2.09/c | [Ind’01, Pan’06] |
| | | | ρ = O(1/c²) | [AI’06] |

Slide annotation: “1 mem lookup”

Other norms
- Euclidean norm (ℓ2)
  - Locality sensitive hashing
- Hamming space (ℓ1)
  - also LSH
  - (in fact in original [IM98])
- Max norm (ℓ∞)
  - Don’t know of any LSH
  - next…

ℓ∞ = real space with distance: ||x−y||∞ = max_i |x_i − y_i|

![NNS for ℓ∞ distance [Indyk’98]](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-21.jpg)

NNS for ℓ∞ distance [Indyk’98]

ℓ∞ = real space with distance: ||x−y||∞ = max_i |x_i − y_i|
- Thm: for ρ > 0, NNS for ℓ∞^d with
  - O(d·log n) query time
  - n^{1+ρ} space
  - O(lg_{1+ρ} lg d) approximation
- The approach:
  - A deterministic decision tree
    - Similar to kd-trees
  - Each node of DT is “q_i < t”
  - One difference: the algorithm goes down the tree once (while tracking the list of possible neighbors)
- [ACP’08]: optimal for deterministic decision trees!

Challenge #2: Obtain O(1) approximation with n^{O(1)} space and sublinear query time NNS under ℓ∞.

[Figure: decision tree with nodes “q1<5?”, “q2<4?”, “q1<3?”, “q2<3?” and Yes/No branches]

Plan for today
1. NNS for basic distances
2. NNS for advanced distances: reductions
3. NNS via composition

What do we have?
- Classical ℓp distances:
  - Euclidean (ℓ2), Hamming (ℓ1), ℓ∞
- How about other distances?
- E.g.:
  - Edit (Levenshtein) distance: ed(x, y) = minimum number of insertion/deletion/substitution operations that transform x into y
    - Very similar to Hamming distance…
  - or Earth-Mover Distance…
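For reference, the edit distance defined above can be computed with the classic dynamic program. This is a textbook sketch with unit-cost insertions, deletions, and substitutions; the function name `edit_distance` is a hypothetical choice.

```python
def edit_distance(x, y):
    """Levenshtein distance between sequences x and y (unit-cost operations)."""
    prev = list(range(len(y) + 1))  # distances from the empty prefix of x
    for i, cx in enumerate(x, start=1):
        curr = [i]
        for j, cy in enumerate(y, start=1):
            curr.append(min(prev[j] + 1,                # delete cx
                            curr[j - 1] + 1,            # insert cy
                            prev[j - 1] + (cx != cy)))  # substitute or match
        prev = curr
    return prev[-1]

print(edit_distance("kitten", "sitting"))
```

The quadratic running time of this computation is one reason a cheap geometric proxy for edit distance is attractive for nearest neighbor search.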

Earth-Mover Distance
- Definition:
  - Given two sets A, B of points in a metric space
  - EMD(A, B) = min cost bipartite matching between A and B
- Which metric space?
  - Can be plane, ℓ2, ℓ1…
- Applications in image vision
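The definition can be checked directly on tiny inputs. The sketch below assumes equal-size point sets in the Euclidean plane and tries every matching by brute force, so it is only meant for very small sets; the function name `emd` is hypothetical.

```python
import itertools
import math

def emd(A, B):
    """Min-cost perfect matching between equal-size point sets A and B.
    Brute force over all |A|! matchings: for illustration only."""
    if len(A) != len(B):
        raise ValueError("this sketch assumes |A| == |B|")
    return min(
        sum(math.dist(a, b) for a, b in zip(A, perm))
        for perm in itertools.permutations(B)
    )

A = [(0.0, 0.0), (1.0, 0.0)]
B = [(0.0, 1.0), (1.0, 1.0)]
print(emd(A, B))
```

A practical implementation would use a polynomial-time assignment algorithm instead of enumerating permutations.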

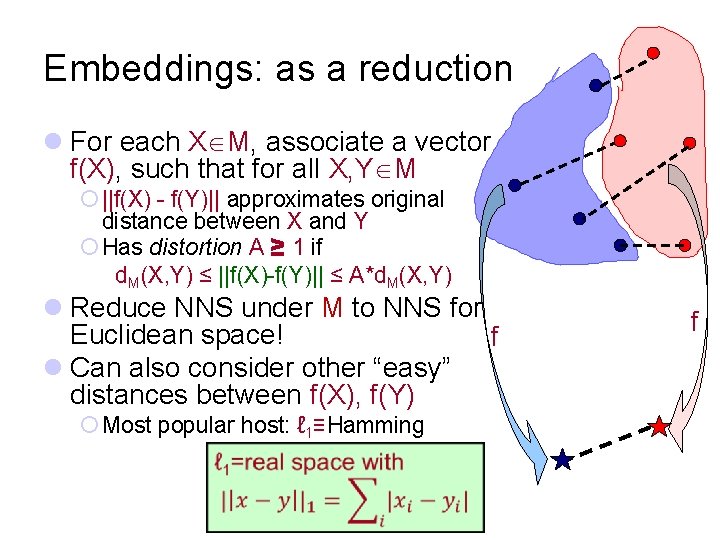

Embeddings: as a reduction
- For each X ∈ M, associate a vector f(X), such that for all X, Y ∈ M
  - ||f(X) − f(Y)|| approximates the original distance between X and Y
  - Has distortion A ≥ 1 if d_M(X, Y) ≤ ||f(X) − f(Y)|| ≤ A·d_M(X, Y)
- Reduce NNS under M to NNS for Euclidean space!
- Can also consider other “easy” distances between f(X), f(Y)
  - Most popular host: ℓ1 ≡ Hamming

![Earth-Mover Distance over 2D into ℓ1 [Charikar’02, Indyk-Thaper’03]](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-26.jpg)

Earth-Mover Distance over 2D into ℓ1 [Charikar’02, Indyk-Thaper’03]
- Sets of size s in [1…s]×[1…s] box
- Embedding of set A:
  - impose randomly-shifted grid
  - Each grid cell gives a coordinate: f(A)_c = # points in the cell c
  - Subpartition the grid recursively, and assign new coordinates for each new cell (on all levels)
- Distortion: O(log s)
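The recursive grid embedding can be sketched as follows. This is an illustration under assumptions, not the exact construction from the cited papers: points have integer coordinates, cell sides double from level to level, and each cell count is weighted by the cell's side length (the slide lists only the counts, so the weighting is an assumption here). The names `grid_embedding` and `l1_distance` are hypothetical.

```python
import random
from collections import Counter

def grid_embedding(points, side, shift):
    """Sparse vector: (level, cell_x, cell_y) -> cell_side * (# points in cell).
    Assumption: counts are weighted by cell side; both sets must use the same shift."""
    f = Counter()
    cell, level = 1, 0
    while cell <= side:
        for x, y in points:
            key = (level, (x + shift[0]) // cell, (y + shift[1]) // cell)
            f[key] += cell
        cell *= 2
        level += 1
    return f

def l1_distance(f, g):
    """l1 distance between two sparse vectors (missing keys count as 0)."""
    return sum(abs(f[k] - g[k]) for k in set(f) | set(g))

rng = random.Random(0)
side = 8
shift = (rng.randrange(side), rng.randrange(side))
A = [(1, 1), (3, 4)]
B = [(1, 2), (3, 4)]
print(l1_distance(grid_embedding(A, side, shift), grid_embedding(B, side, shift)))
```

Points that are close tend to fall in the same cell at all but the finest levels, so they contribute little to the ℓ1 distance, which is the intuition behind the logarithmic distortion.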

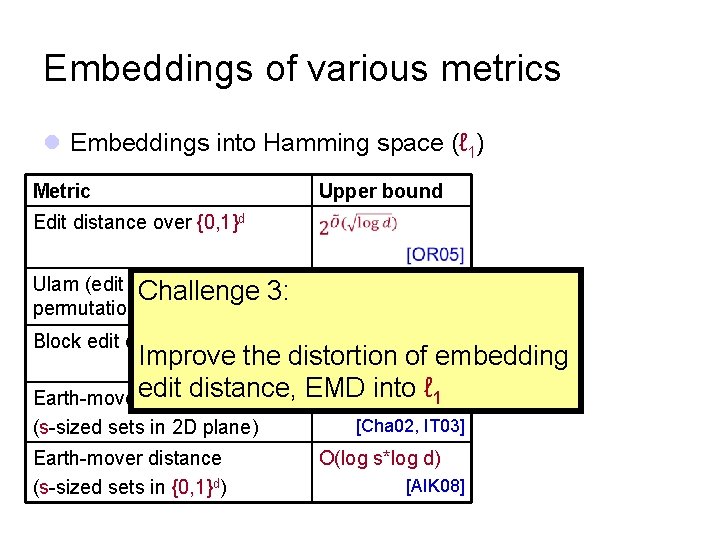

Embeddings of various metrics
- Embeddings into Hamming space (ℓ1)

| Metric | Upper bound | Reference |
| --- | --- | --- |
| Edit distance over {0,1}^d | | |
| Ulam (edit distance between permutations) | O(log d) | [CK06] |
| Block edit distance | Õ(log d) | [MS00, CM07] |
| Earth-mover distance (s-sized sets in 2D plane) | O(log s) | [Cha02, IT03] |
| Earth-mover distance (s-sized sets in {0,1}^d) | O(log s·log d) | [AIK08] |

Challenge 3: Improve the distortion of embedding edit distance, EMD into ℓ1

Are we done? “just” remains to find an embedding with low distortion… No, unfortunately

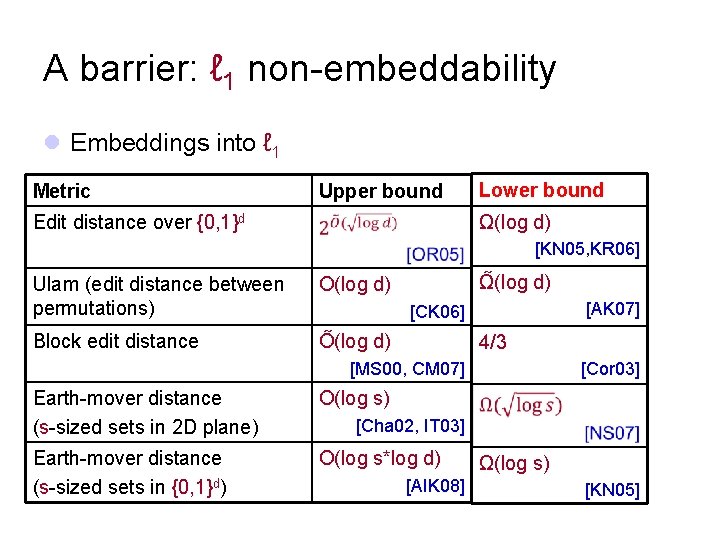

A barrier: ℓ1 non-embeddability
- Embeddings into ℓ1

| Metric | Upper bound | Lower bound |
| --- | --- | --- |
| Edit distance over {0,1}^d | | Ω(log d) [KN05, KR06] |
| Ulam (edit distance between permutations) | O(log d) [CK06] | Ω̃(log d) [AK07] |
| Block edit distance | Õ(log d) [MS00, CM07] | 4/3 [Cor03] |
| Earth-mover distance (s-sized sets in 2D plane) | O(log s) [Cha02, IT03] | |
| Earth-mover distance (s-sized sets in {0,1}^d) | O(log s·log d) [AIK08] | Ω(log s) [KN05] |

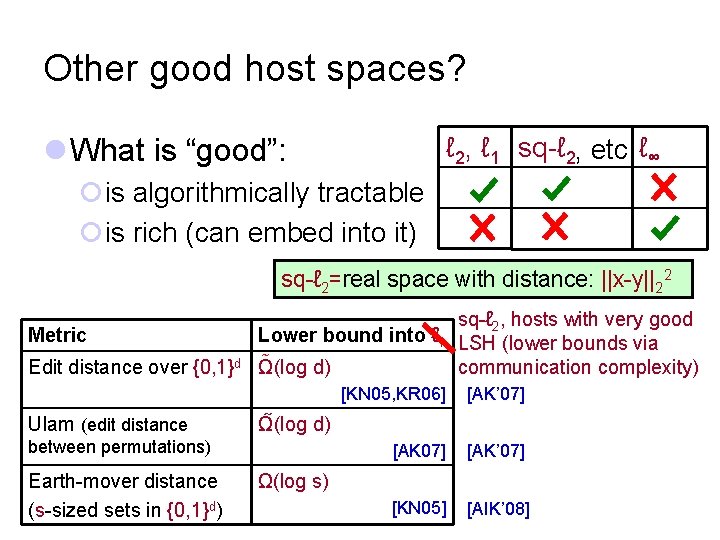

Other good host spaces?
- What is “good”:
  - is algorithmically tractable
  - is rich (can embed into it)
- Candidates: ℓ2, ℓ1, sq-ℓ2, ℓ∞, etc.

sq-ℓ2 = real space with distance: ||x−y||₂²

| Metric | Lower bound into ℓ1 |
| --- | --- |
| Edit distance over {0,1}^d | Ω(log d) [KN05, KR06] |
| Ulam (edit distance between permutations) | Ω̃(log d) |
| Earth-mover distance (s-sized sets in {0,1}^d) | Ω(log s) [KN05] |

sq-ℓ2, hosts with very good LSH: lower bounds via communication complexity [AK’07], [AIK’08]

Plan for today
1. NNS for basic distances
2. NNS for advanced distances: reductions
3. NNS via composition

![Meet our new host [A-Indyk-Krauthgamer’09]](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-32.jpg)

Meet our new host [A-Indyk-Krauthgamer’09]
- Iterated product space

[Figure: nested product space with dimensions α, β, γ; distances labeled d_1, d_{∞,1}, d_{2²,∞,1}]

![Why? [A-Indyk-Krauthgamer’09, Indyk’02]](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-33.jpg)

Why? [A-Indyk-Krauthgamer’09, Indyk’02]
- Rich
  - edit distance between permutations, e.g. ED(1234567, 7123456) = 2
- Algorithmically tractable

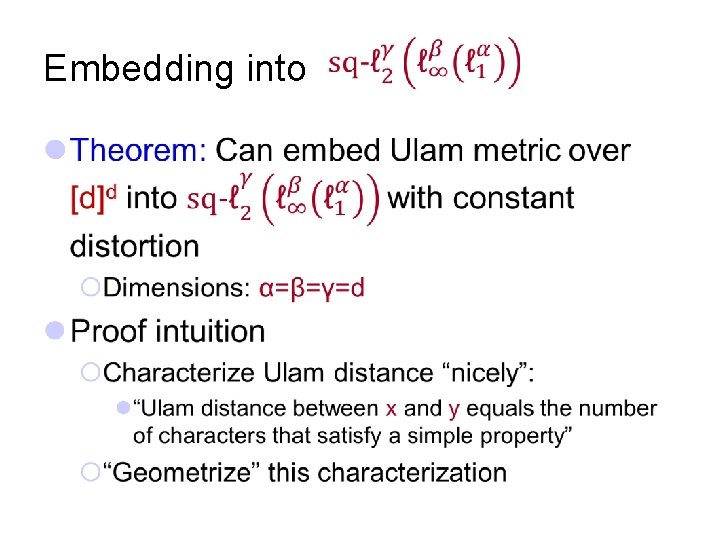

Embedding into l

![Ulam: a characterization](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-35.jpg)

Ulam: a characterization [Ailon-Chazelle-Comandur-Liu’04, Gopalan-Jayram-Krauthgamer-Kumar’07, A-Indyk-Krauthgamer’09]
- Lemma: Ulam(x, y) approximately equals the number of “faulty” characters a satisfying:
  - there exists K ≥ 1 (prefix-length) s.t.
  - the set of K characters preceding a in x differs much from the set of K characters preceding a in y
- E.g., a = 5, K = 4 with x = 123456789 and y = 123467895, where X[5;4] and Y[5;4] denote the sets of 4 characters preceding 5 in x and in y
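The characterization can be tried out on the slide's example. The sketch below needs a concrete meaning for “differs much”; as an assumption, it calls a character faulty when the symmetric difference of the two preceding sets has more than K elements for some K. The threshold and the names `preceding_set` and `faulty_characters` are hypothetical, not taken from the cited papers.

```python
def preceding_set(perm, a, k):
    """Set of (up to) k characters immediately preceding a in perm."""
    i = perm.index(a)
    return set(perm[max(0, i - k):i])

def faulty_characters(x, y):
    """Characters a for which some K has |X[a;K] symmetric-difference Y[a;K]| > K.
    Assumption: 'differs much' is modelled by this threshold."""
    faulty = []
    for a in x:
        for k in range(1, len(x) + 1):
            diff = preceding_set(x, a, k) ^ preceding_set(y, a, k)
            if len(diff) > k:
                faulty.append(a)
                break
    return faulty

x, y = "123456789", "123467895"
print(sorted(preceding_set(x, "5", 4)), sorted(preceding_set(y, "5", 4)))
print(faulty_characters(x, y))
```

For a = 5 and K = 4 the two sets are disjoint, so the moved character 5 is flagged; identical permutations produce no faulty characters.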

![Ulam: the embedding](http://slidetodoc.com/presentation_image_h/0347d7ab8163e1f1055b2f81feecfb9e/image-36.jpg)

Ulam: the embedding
- “Geometrizing” characterization: …
- Gives an embedding: …

[Figure: x = 123456789 and y = 123467895 with the sets X[5;4] and Y[5;4] marked]

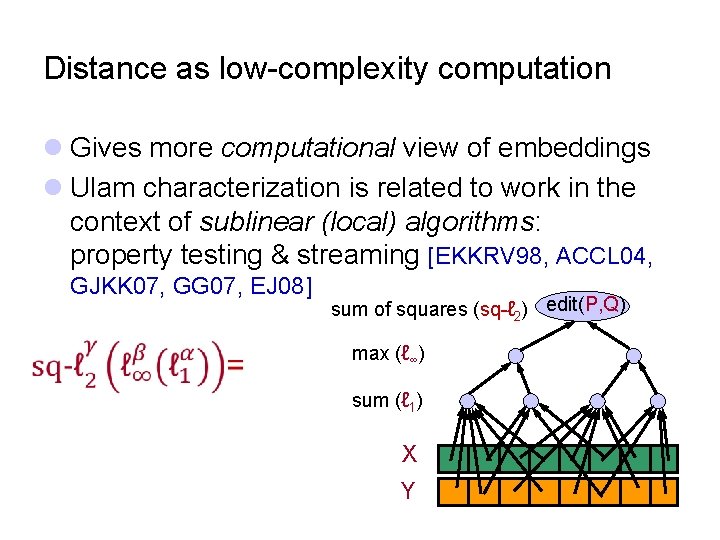

Distance as low-complexity computation
- Gives more computational view of embeddings
- Ulam characterization is related to work in the context of sublinear (local) algorithms: property testing & streaming [EKKRV98, ACCL04, GJKK07, GG07, EJ08]

[Figure: edit(P, Q) computed from X and Y in layers: sum (ℓ1), max (ℓ∞), sum of squares (sq-ℓ2)]
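The layered computation (sum, then max, then sum of squares) can be written out as a distance on nested vectors. This is a sketch assuming the composition order sq-ℓ2 over ℓ∞ over ℓ1, with points represented as lists of blocks of ℓ1 vectors; the names `l1` and `product_distance` and the nested-list layout are hypothetical.

```python
def l1(u, v):
    """Innermost layer: sum of absolute coordinate differences."""
    return sum(abs(a - b) for a, b in zip(u, v))

def product_distance(x, y):
    """Sum over outer blocks of (max over inner vectors of l1 distance) squared.
    x, y: list (sq-l2 layer) of lists (l_inf layer) of vectors (l1 layer)."""
    return sum(
        max(l1(u, v) for u, v in zip(xb, yb)) ** 2
        for xb, yb in zip(x, y)
    )

x = [[[0, 0], [1, 1]], [[2, 2]]]
y = [[[1, 0], [1, 3]], [[2, 5]]]
print(product_distance(x, y))  # (max(1, 2))^2 + 3^2 = 13
```

Each layer is a simple operation on the layer below, which is what makes the composed space a candidate for composed nearest neighbor data structures.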

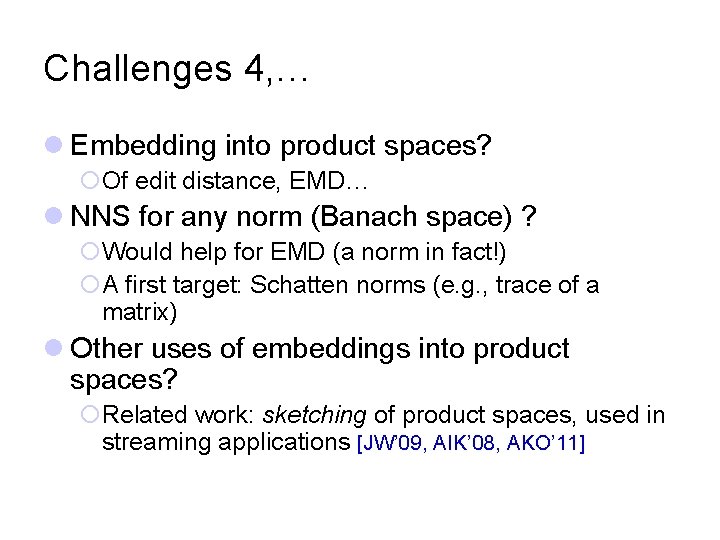

Challenges 4, …
- Embedding into product spaces?
  - Of edit distance, EMD…
- NNS for any norm (Banach space)?
  - Would help for EMD (a norm in fact!)
  - A first target: Schatten norms (e.g., trace of a matrix)
- Other uses of embeddings into product spaces?
  - Related work: sketching of product spaces, used in streaming applications [JW’09, AIK’08, AKO’11]

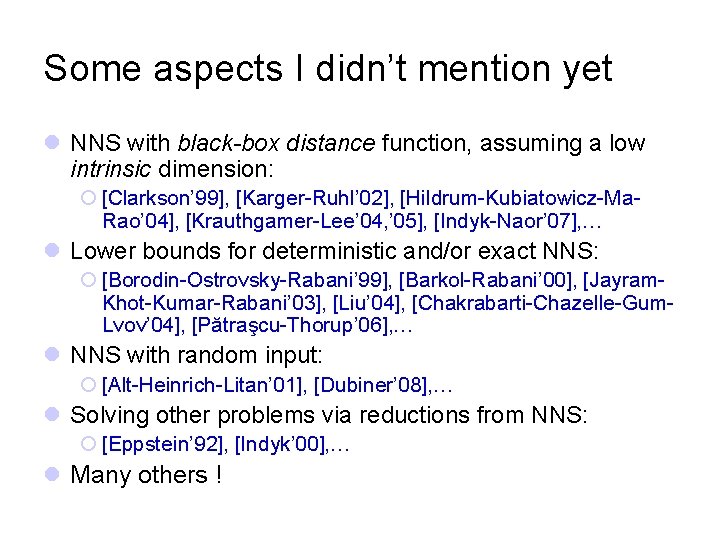

Some aspects I didn’t mention yet
- NNS with black-box distance function, assuming a low intrinsic dimension:
  - [Clarkson’99], [Karger-Ruhl’02], [Hildrum-Kubiatowicz-Ma-Rao’04], [Krauthgamer-Lee’04, ’05], [Indyk-Naor’07], …
- Lower bounds for deterministic and/or exact NNS:
  - [Borodin-Ostrovsky-Rabani’99], [Barkol-Rabani’00], [Jayram-Khot-Kumar-Rabani’03], [Liu’04], [Chakrabarti-Chazelle-Gum-Lvov’04], [Pătraşcu-Thorup’06], …
- NNS with random input:
  - [Alt-Heinrich-Litan’01], [Dubiner’08], …
- Solving other problems via reductions from NNS:
  - [Eppstein’92], [Indyk’00], …
- Many others!

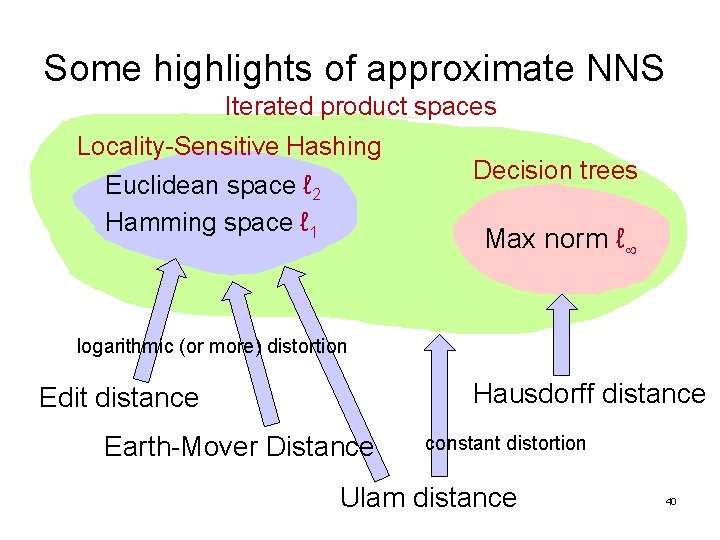

Some highlights of approximate NNS

[Figure: overview diagram. Techniques and host spaces: Locality-Sensitive Hashing (Euclidean space ℓ2, Hamming space ℓ1), decision trees (max norm ℓ∞), iterated product spaces. Embedded metrics: Hausdorff distance, edit distance, Earth-Mover Distance, Ulam distance. Edge labels: “logarithmic (or more) distortion”, “constant distortion”.]

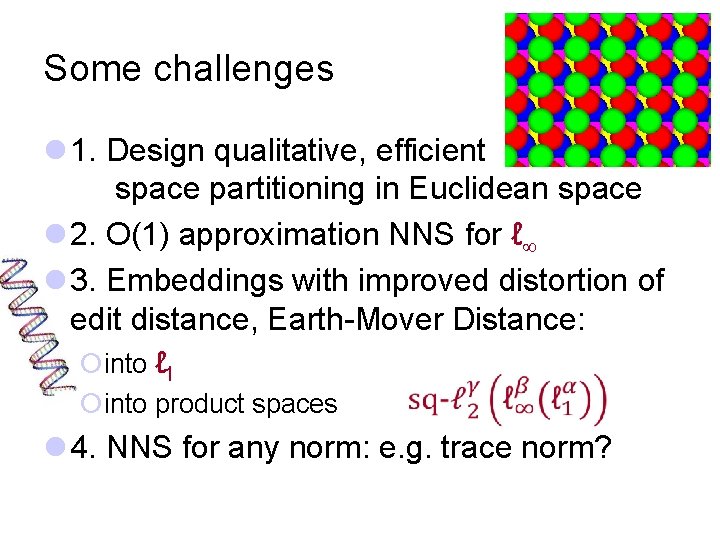

Some challenges
1. Design qualitative, efficient space partitioning in Euclidean space
2. O(1) approximation NNS for ℓ∞
3. Embeddings with improved distortion of edit distance, Earth-Mover Distance:
   - into ℓ1
   - into product spaces
4. NNS for any norm: e.g. trace norm?
