NeST: Network Storage
Flexible Commodity Storage Appliances

John Bent, Miron Livny, Andrea Arpaci-Dusseau, Remzi Arpaci-Dusseau
Computer Sciences Department, University of Wisconsin-Madison
johnbent@nestproject.com
http://www.nestproject.com

Terms
› Appliance (Merriam-Webster)
  • b : an instrument or device designed for a particular use; specifically a household or office device
› Storage appliance
  • Storage plus access methods
www.cs.wisc.edu/condor

What storage users want
› Reliability and availability
› Manageability
  • cost of management > cost of storage itself
  • “no futz” computing
› Scalability
› Performance

What storage vendors have
› NetApp, EMC, etc.
› Manageable
  • Just plug it in and it works
  • Administrative web interface
› Reliable and available
  • Standard RAID techniques
› High performance
  • Specialized, thin OS focused on serving files

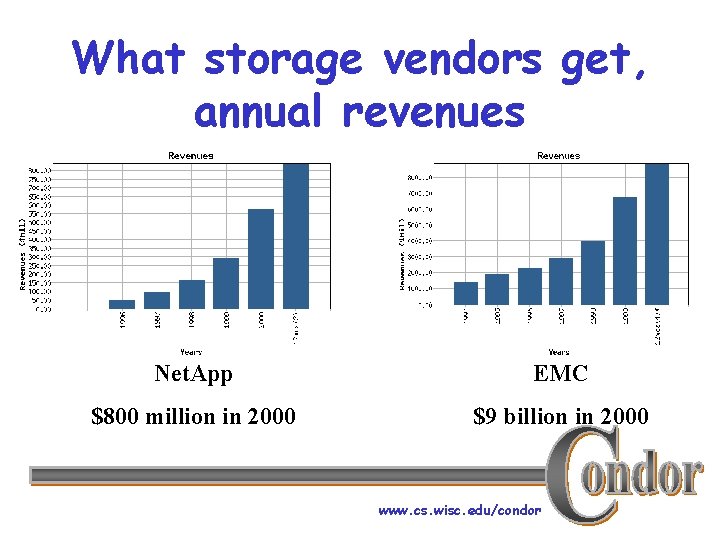

What storage vendors get, annual revenues
› NetApp: $800 million in 2000
› EMC: $9 billion in 2000

What’s the problem?
› False coupling between HW and SW
› “Playground syndrome”
› Myth of specialization

H/W and S/W are bundled
› Hardware decisions are imposed
› Hard to ride commodity curve
  • Example:
    – NetApp F720: $35,000.00, 252 GB, $139 / GB
    – Linux server: $18,000.00, 365 GB, $49 / GB
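The per-gigabyte figures on this slide follow from dividing price by capacity. A quick check in Python, using only the numbers quoted above (the variable names are illustrative):

```python
# Prices and capacities as quoted on the slide: (price in USD, capacity in GB).
systems = {
    "NetApp F720": (35_000.00, 252),
    "Linux server": (18_000.00, 365),
}

# Cost per gigabyte is simply price divided by capacity.
cost_per_gb = {name: price / gb for name, (price, gb) in systems.items()}

for name, cost in cost_per_gb.items():
    print(f"{name}: ${cost:.0f} / GB")
```

Rounded to whole dollars, this reproduces the $139 and $49 figures, so the bundled appliance costs a little under three times as much per gigabyte.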

“Playground syndrome”
› “We have storage appliances...
  • if you use these protocols,
  • if you use these security mechanisms,
  • if you are comfortable with our data semantics”
› Non-flexible software entity

Myth of specialization
› Specialize for one protocol on one machine
› Specialization decreases over time as
  • Protocols are added
  • Product line expands
› Example: NetApp software
  • Generation 1 fit on a single floppy
  • Generation 2 took six
  • Generation 3?

Alternatives?
› Appliance (Merriam-Webster)
  • a : a piece of equipment for adapting a tool or machine to a special purpose

Our game?
› Flexible, commodity-based, software-only storage appliances
› Goal
  • Find a networked machine
  • “Drop” some software on it
  • Have a ready-to-use storage appliance with flexible mechanisms

New worlds, new problems
› Diverse hardware, software platforms
  • NetApp, EMC advantage
    – fewer platforms, control over OS
  • Our approach
    – Automate configuration to each host system
      · Hardware example: use file system or self-manage
      · Software example: use either read/write or mmap
› Cost of flexibility
› Key is design of the software

Outline
› Introduction
› Building flexible storage modules
  • Big picture
  • Protocol layer
  • Concurrency architecture
  • Storage layer
› Motivations for flexible storage appliances
› Conclusion and current status

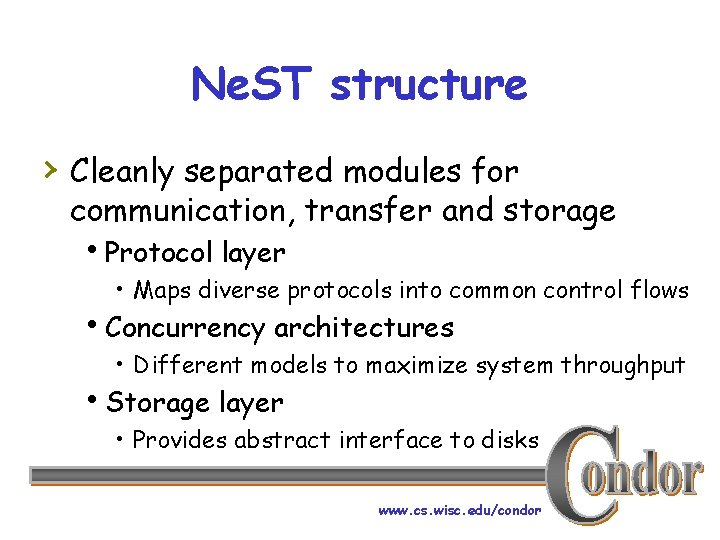

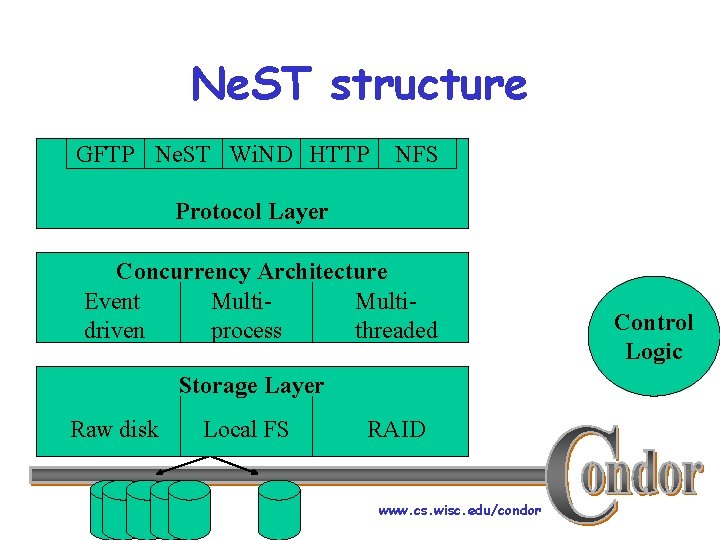

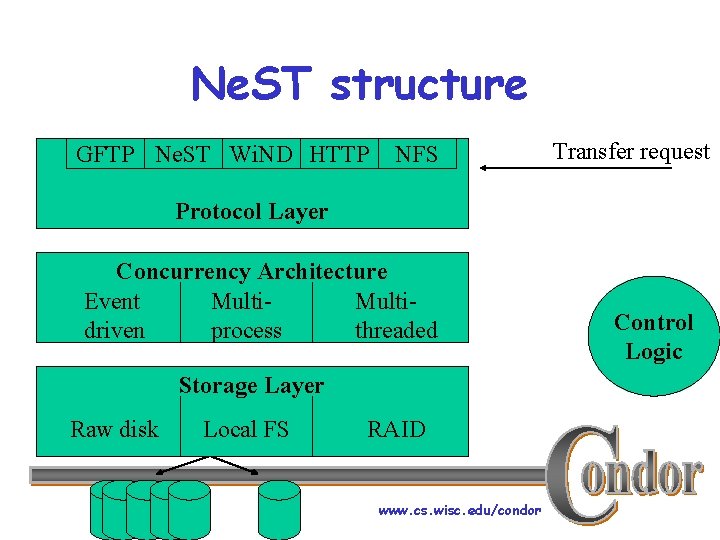

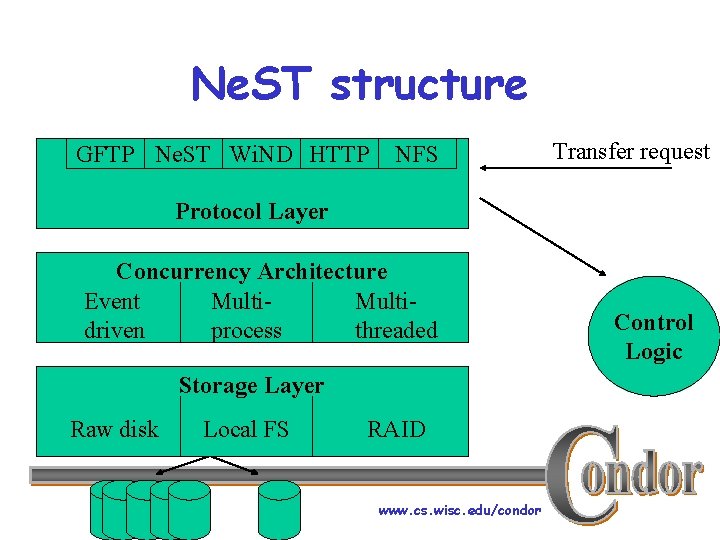

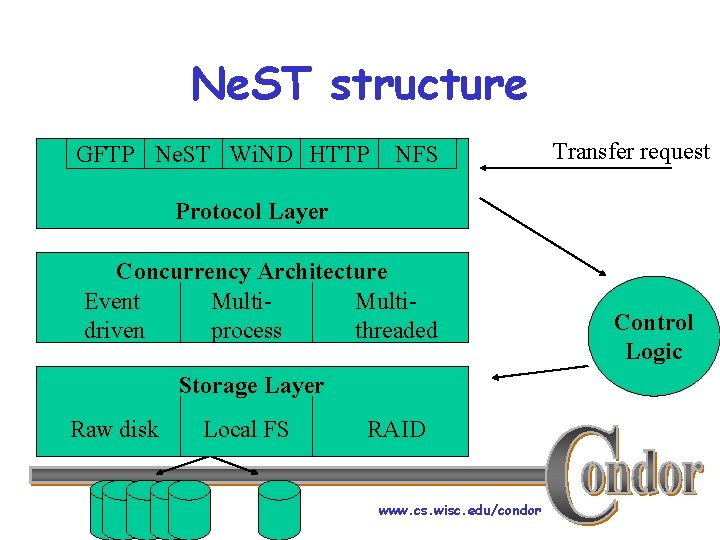

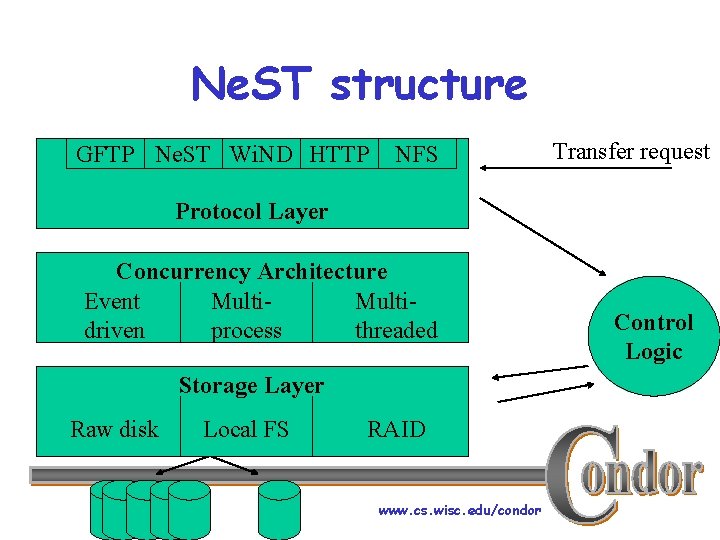

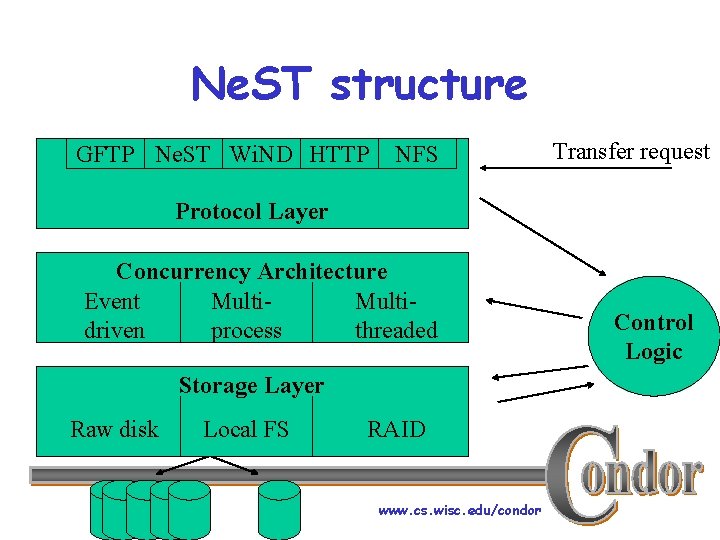

NeST structure
› Cleanly separated modules for communication, transfer and storage
  • Protocol layer
    – Maps diverse protocols into common control flows
  • Concurrency architectures
    – Different models to maximize system throughput
  • Storage layer
    – Provides abstract interface to disks

NeST structure (diagram)
› Protocol Layer: GFTP, NeST, WiND, HTTP, NFS
› Concurrency Architecture: event driven, multi-process, multi-threaded
› Storage Layer: raw disk, local FS, RAID
› Other labels: Control Logic, Transfer request

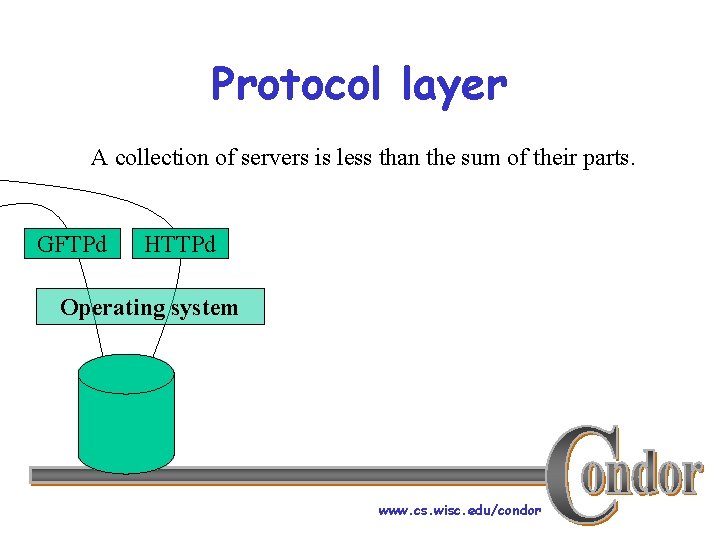

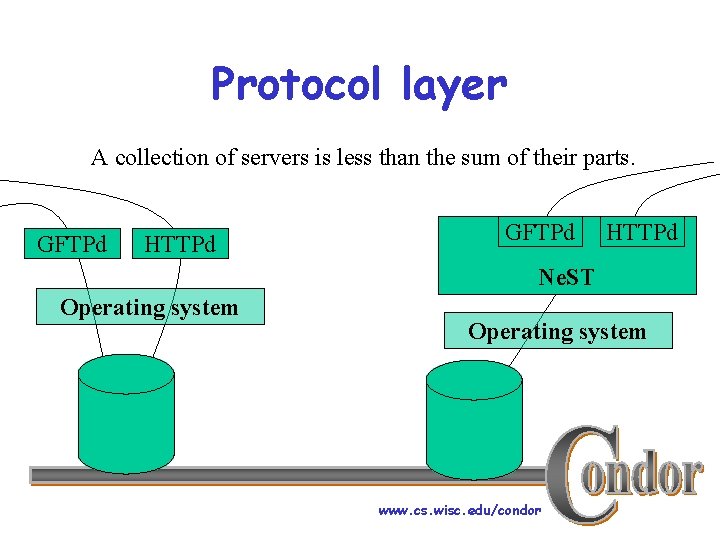

Protocol layer
A collection of servers is less than the sum of their parts.
› Diagram labels: GFTPd, HTTPd, NFS; GFTPd, HTTPd, NeST; Operating system

Consolidate protocols
› Single point of control
  • Storage quotas and guarantees can be supported across multiple protocols.
  • Bandwidth can be controlled and quality of service can be guaranteed.
› Single administrative interface
  • Set policies
  • Manage user accounts
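To make the "single point of control" idea concrete, here is a minimal Python sketch in which every protocol front end charges the same quota table. The class and method names are hypothetical and are not taken from NeST.

```python
class QuotaManager:
    """One shared quota table consulted by every protocol (hypothetical API)."""

    def __init__(self, limits):
        self.limits = dict(limits)                 # user -> byte limit
        self.used = {user: 0 for user in limits}   # user -> bytes charged so far
        self.by_protocol = {}                      # (user, protocol) -> bytes, for reporting

    def charge(self, user, nbytes, protocol):
        """Reserve nbytes for user; refuse if the combined total would exceed the limit."""
        if self.used[user] + nbytes > self.limits[user]:
            return False
        self.used[user] += nbytes
        key = (user, protocol)
        self.by_protocol[key] = self.by_protocol.get(key, 0) + nbytes
        return True


quota = QuotaManager({"alice": 100})
ftp_ok = quota.charge("alice", 60, "ftp")     # accepted: 60 of 100 bytes used
http_ok = quota.charge("alice", 60, "http")   # refused: 120 would exceed the shared limit
```

With separate per-protocol servers, each would see only its own 60 bytes and both writes would succeed. Routing both through one table is what lets the limit hold across protocols.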

Protocol layer implementation
› Each protocol listens on well-defined port
› Central control accepts connections
› Protocol layer reads from connection and returns generic request object
› Like Linux V-nodes
  • Add new protocol by writing a couple of methods
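A rough Python sketch of this idea follows: each protocol supplies a parse method and a reply formatter, and the control logic only ever sees a generic request object. All names here, and the numeric opcode table, are assumptions made for illustration; they are not NeST's actual interface, and the FTP-style parser is deliberately simplified.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass


@dataclass
class Request:
    """Generic request handed to the control logic (hypothetical shape)."""
    op: str     # e.g. "LIST"
    path: str


class Protocol(ABC):
    """The 'couple of methods' a new protocol would supply."""

    @abstractmethod
    def parse(self, raw):
        """Turn protocol-specific bytes on the wire into a Request."""

    @abstractmethod
    def render_listing(self, names):
        """Turn a generic directory listing into this protocol's reply format."""


class FtpLike(Protocol):
    # Simplified FTP-style text commands; not a full FTP implementation.
    def parse(self, raw):
        verb, _, arg = raw.strip().partition(" ")
        return Request(op=verb.upper(), path=arg or "/")

    def render_listing(self, names):
        return "".join(f"{name}\r\n" for name in names)


class NumericCode(Protocol):
    # Stand-in for a native protocol with numeric opcodes (table is invented).
    OPCODES = {"5": "LIST"}

    def parse(self, raw):
        code, _, arg = raw.strip().partition(" ")
        return Request(op=self.OPCODES[code], path=arg or "/")

    def render_listing(self, names):
        return ",".join(names)


def handle(protocol, raw, storage):
    """Control logic: works on Request objects, never on wire formats."""
    request = protocol.parse(raw)
    if request.op != "LIST":
        raise ValueError(f"unsupported op: {request.op}")
    return protocol.render_listing(storage[request.path])
```

For example, with `storage = {"/": ["a", "b"]}`, both `handle(FtpLike(), "LIST", storage)` and `handle(NumericCode(), "5", storage)` reach the same control path and differ only in how the reply is rendered.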

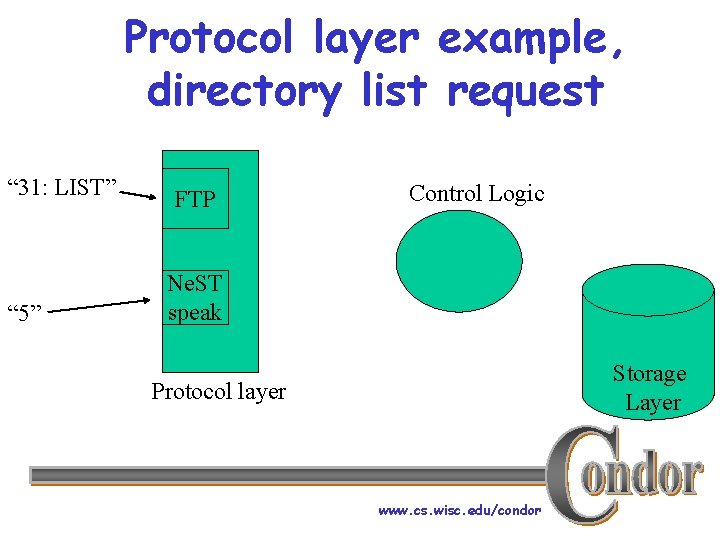

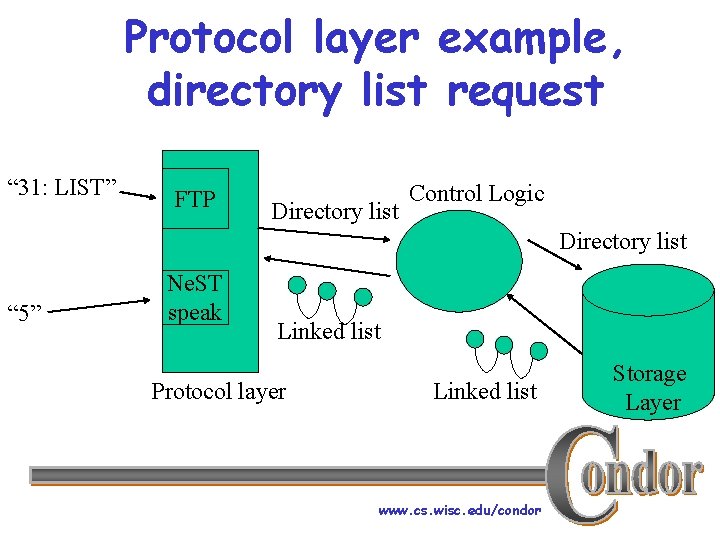

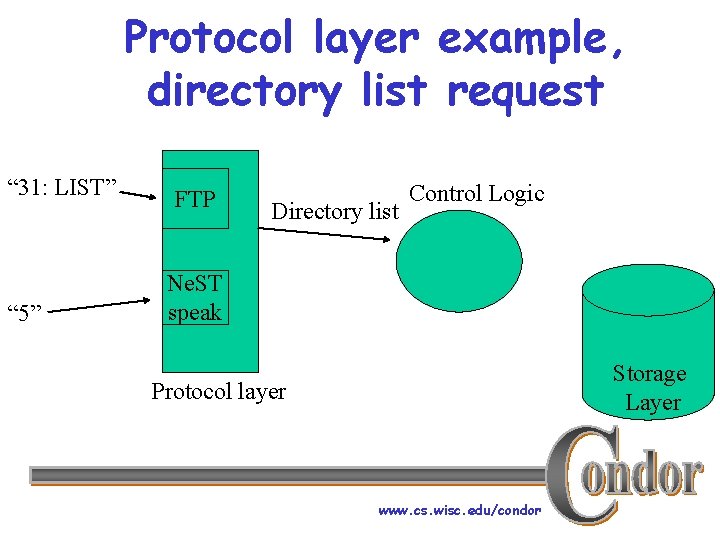

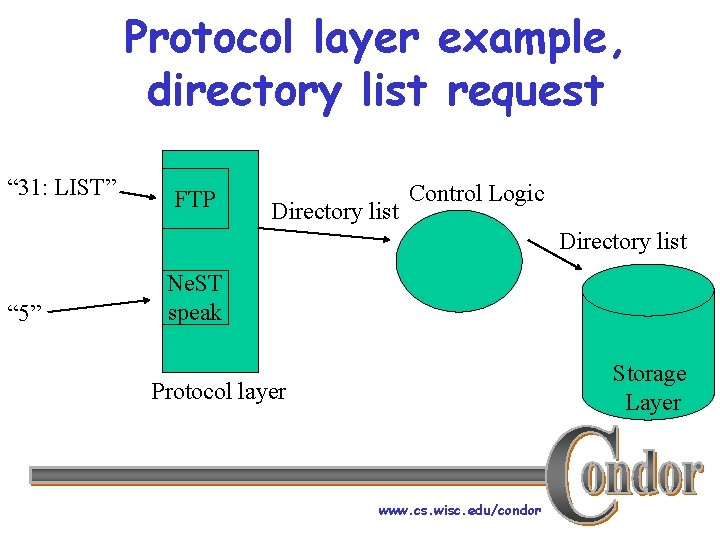

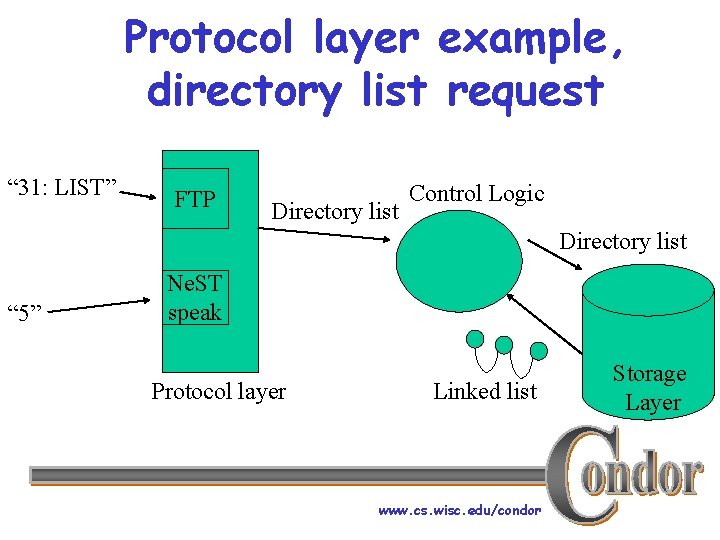

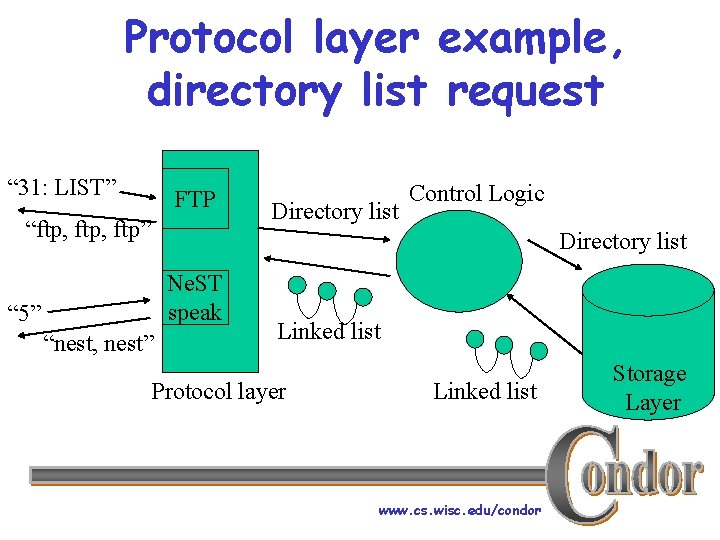

Protocol layer example, directory list request
› Requests: FTP “31: LIST”, NeST speak “5”
› Protocol layer: each becomes a generic “Directory list” request for the control logic
› Storage layer: returns a linked list
› Replies: “ftp, ftp” for FTP, “nest, nest” for NeST speak

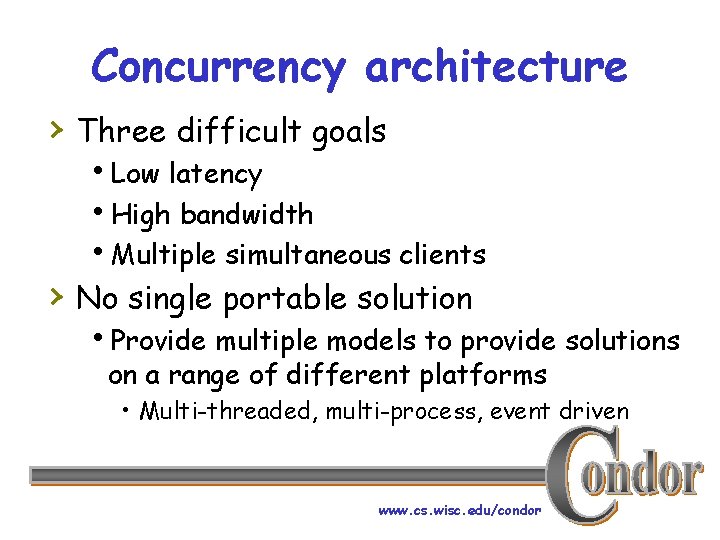

Concurrency architecture
› Three difficult goals
  • Low latency
  • High bandwidth
  • Multiple simultaneous clients
› No single portable solution
  • Provide multiple models to cover a range of different platforms
    – Multi-threaded, multi-process, event driven
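One way to picture "multiple models" is a set of interchangeable dispatch strategies behind a single call. The Python sketch below is an illustration only: the selection policy is invented, and the "event" entry is a sequential stand-in, since a real event-driven server would multiplex sockets with something like `select`.

```python
import os
from concurrent.futures import ThreadPoolExecutor


def serve_sequential(handler, requests):
    """Stand-in for a single-threaded event loop: one request at a time."""
    return [handler(request) for request in requests]


def serve_threaded(handler, requests, workers=4):
    """Thread-pool model: requests are handled concurrently."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(handler, requests))  # map preserves input order


MODELS = {"event": serve_sequential, "threaded": serve_threaded}


def pick_model(cpu_count=None):
    """Toy selection policy (an assumption): use threads on multi-core hosts."""
    cpus = cpu_count if cpu_count is not None else (os.cpu_count() or 1)
    return "threaded" if cpus > 1 else "event"


def serve(handler, requests, model=None):
    """Dispatch through whichever model was requested or selected for this host."""
    return MODELS[model or pick_model()](handler, requests)
```

Because every model has the same signature, callers such as `serve(handler, requests)` do not change when the server is dropped onto a platform where a different model performs better.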

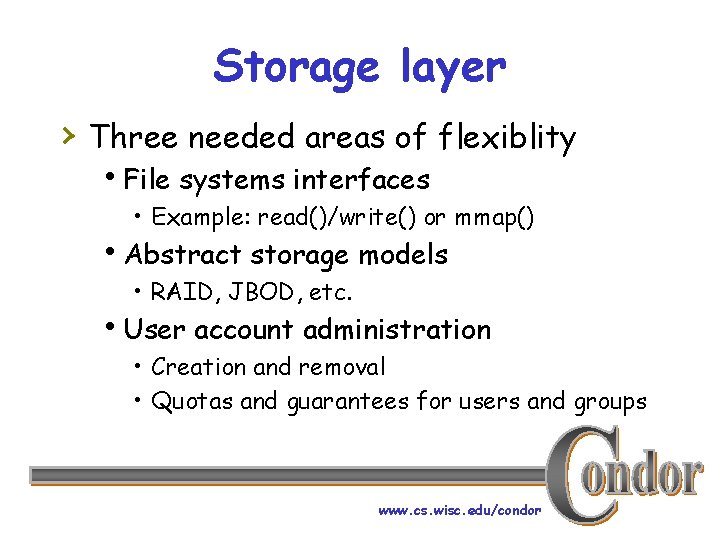

Storage layer
› Three needed areas of flexibility
  • File system interfaces
    – Example: read()/write() or mmap()
  • Abstract storage models
    – RAID, JBOD, etc.
  • User account administration
    – Creation and removal
    – Quotas and guarantees for users and groups
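The read()/write() versus mmap() choice can be hidden behind one function so the rest of the server does not care which is in use. The sketch below uses Python's standard `mmap` module; the function names and the `method` switch are assumptions made for illustration.

```python
import mmap


def read_with_read(path, offset, length):
    """Plain read(): seek to the offset and copy the bytes out."""
    with open(path, "rb") as f:
        f.seek(offset)
        return f.read(length)


def read_with_mmap(path, offset, length):
    """mmap(): map the whole file read-only and slice the mapping."""
    with open(path, "rb") as f:
        # Length 0 maps the entire file; this fails on an empty file.
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mapping:
            return mapping[offset:offset + length]


READERS = {"read": read_with_read, "mmap": read_with_mmap}


def read_block(path, offset, length, method="read"):
    """Single entry point; the access method is a per-host configuration choice."""
    return READERS[method](path, offset, length)
```

Both paths return the same bytes, so a per-host benchmark at install time could pick whichever is faster without changing any caller.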

Outline
› Introduction
› Building flexible storage modules
› Motivations for flexible storage appliances
  • Communication protocols
  • Replacement costs
  • Data semantics
  • Security and authentication
  • Condor NeSTs
› Conclusion and current status

Communication protocols
› The Esperanto problem
› Too many protocols to implement them all
› Too many clients use proprietary protocols
Storage must allow pluggable protocols.

Replacement costs
› Infinite cost to replace first class data.
› Variable cost to replace cached data depending on size and distance.
› Variable cost to replace job output files depending on computation cost.
› Diagram labels: Cheap cached files; First class data
Cost aware storage can effectively increase its own capacity.
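A small Python sketch of cost-aware eviction under these assumptions: each file carries a replacement cost, first class data has infinite cost, and space is reclaimed from the cheapest files first. The data model and function name are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class StoredFile:
    name: str
    size: int                 # bytes
    replacement_cost: float   # math.inf marks first class data


def choose_victims(files, bytes_needed):
    """Pick files to evict, cheapest to replace first; never evict infinite-cost data."""
    candidates = sorted(
        (f for f in files if math.isfinite(f.replacement_cost)),
        key=lambda f: f.replacement_cost,
    )
    victims, freed = [], 0
    for f in candidates:
        if freed >= bytes_needed:
            break
        victims.append(f)
        freed += f.size
    if freed < bytes_needed:
        raise RuntimeError("cannot free enough space without losing first class data")
    return victims
```

Given a first class file, a cheap cached file and a costlier job output file, a request for space evicts the cached file before the job output and never touches the first class file. This is the sense in which cost awareness stretches the usable capacity.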

Data semantics
› Must stored objects be protected from read and write dependencies?
› Is transaction support desired?
› Acceptable replies to storage requests.

Data semantics, example
› Problem
  • PFS on top of FTP fakes open
  • read may then return file not found
› Solution
  • Mechanisms are needed to support flexible semantics independent of the transfer protocol.
Divorce semantics from the protocol.

Security and authentication
› Ownership
› Privacy
› Encryption
› Authentication
› Access rights

Who, when, how and how much?
› Who is allowed to use the storage?
› Promiscuity and monogamy are easy
› Polygamy is also easy
› Diagram labels: Promiscuous, Abstinent

Do I know you?
› Problem
  • Migrant grid users may need temporary, preferential storage access
› Solution
  • Provide mechanisms to
    – advertise available storage
    – create self-destructing user accounts
Matchmake applications with storage opportunities.
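A "self-destructing" account can be approximated by storing an expiry time with each grant and purging lapsed entries on every check. The Python sketch below is only an illustration; the class name, methods, and the injectable clock are all assumptions.

```python
import time


class TemporaryAccounts:
    """Accounts that expire on their own (hypothetical sketch)."""

    def __init__(self, clock=time.time):
        self._clock = clock     # injectable so expiry can be tested without waiting
        self._expiry = {}       # user -> absolute expiry time in seconds

    def grant(self, user, lifetime_s):
        """Create or renew an account that lapses after lifetime_s seconds."""
        self._expiry[user] = self._clock() + lifetime_s

    def is_valid(self, user):
        """True while the account exists and has not yet expired."""
        self._purge()
        return user in self._expiry

    def _purge(self):
        # Collect expired users first so the dict is not mutated while iterating.
        now = self._clock()
        for user in [u for u, t in self._expiry.items() if t <= now]:
            del self._expiry[user]
```

A visiting grid user granted 60 seconds of access is accepted until the clock passes that mark and rejected afterwards, with no administrator action needed to remove the account.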

Condor NeSTs
› Better, smarter checkpoint servers
› Checkpoints are just another data file
› NeST transparently replicates and migrates data files
› Condor jobs access data files from closest NeST
› Flexible policy support for managing disk and network resources

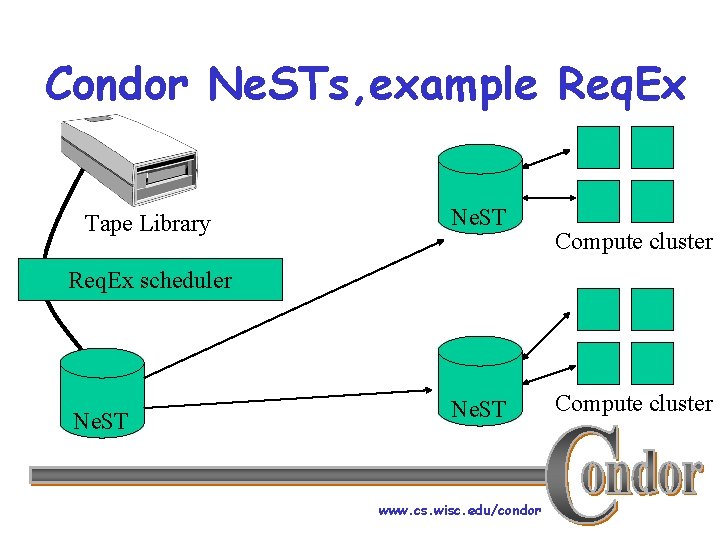

Condor NeSTs, example
› Diagram labels: ReqEx, Tape Library, NeST, Compute cluster, ReqEx scheduler, NeST, Compute cluster

Outline
› Introduction
› Building flexible storage solutions
› Motivations for flexible storage appliances
› Conclusion
  • Current status
  • Future work
  • Concluding remarks

Current status
› Concurrency architectures are done
  • Gets, puts, reads and writes perform well
› Virtual protocol class interface is built
  • NeST speak is fully implemented
  • GridFTP is partially implemented
› Simple first implementation of storage reservations and remote quota management is done

Future work
› Discovery process of client storage requirements
› Quality of service guarantees for bandwidth and storage
› Support for transient and opportunistic users
› Transparent inter-NeST cooperation

Concluding remarks
› Return storage to the commodity curve by creating software-only storage appliances
› Allow greater storage flexibility for a wide range of application needs
