
Navigating Nets: Simple algorithms for proximity search
Robert Krauthgamer (IBM Almaden)
Joint work with James R. Lee (UC Berkeley)

A classical problem
Fix a metric space $(X, d)$: $X$ is a set of points and $d$ is a distance function over $X$.
Near-neighbor search (NNS) [Minsky-Papert]:
1. Preprocess a given $n$-point subset $S \subseteq X$.
2. Given a query point $q \in X$, quickly compute the closest point to $q$ among $S$.

Variations on NNS
- $(1+\varepsilon)$-approximate nearest neighbor search: find $a \in S$ such that $d(q, a) \le (1+\varepsilon)\, d(q, S)$.
- Dynamic case: allow updates to $S$ (insertions and deletions).
- Distributed case: no central index (e.g., nodes in a network); other cost measures (e.g., communication, stretch, load).
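As a baseline for the problem statement, here is a minimal sketch of exact NNS by linear scan, plus a check of the $(1+\varepsilon)$ condition. The names `nearest` and `is_approx_nn` are illustrative and not from the talk; `d` stands for any distance oracle.

```python
def nearest(q, S, d):
    """Exact NNS by linear scan: one oracle call per point of S."""
    return min(S, key=lambda s: d(q, s))


def is_approx_nn(a, q, S, d, eps):
    """True if a is a (1+eps)-approximate nearest neighbor of q in S."""
    return d(q, a) <= (1 + eps) * d(q, nearest(q, S, d))


# Toy instance on the real line (an assumption, chosen only for illustration).
S = [0, 4, 10]
dist = lambda x, y: abs(x - y)
print(nearest(5, S, dist))
```

The scan costs $n$ distance computations per query, which is the cost the rest of the talk tries to beat.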

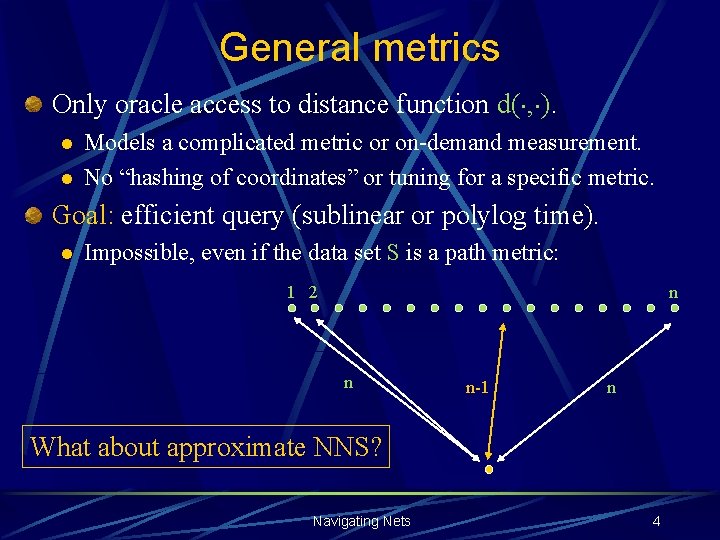

General metrics
Only oracle access to the distance function $d(\cdot, \cdot)$.
- Models a complicated metric or on-demand measurement.
- No "hashing of coordinates" or tuning for a specific metric.
Goal: efficient query (sublinear or polylog time).
- Impossible, even if the data set $S$ is a path metric. (Figure: path metric example.)
What about approximate NNS?

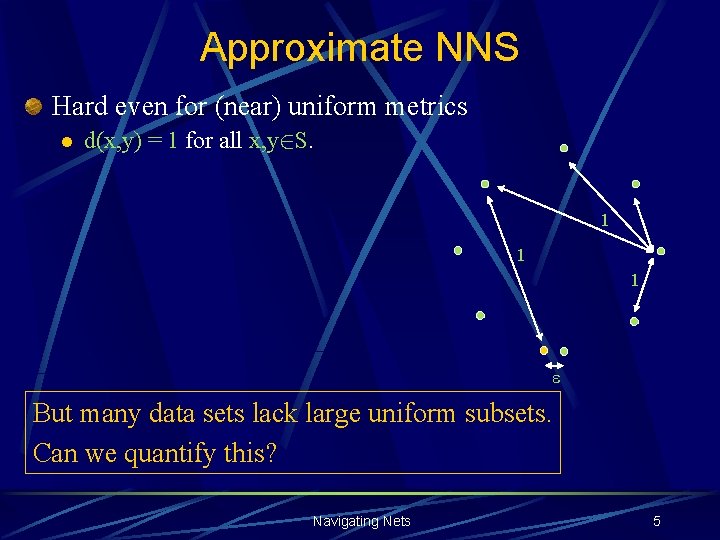

Approximate NNS
Hard even for (near) uniform metrics:
- $d(x, y) = 1$ for all distinct $x, y \in S$.
But many data sets lack large uniform subsets. Can we quantify this?

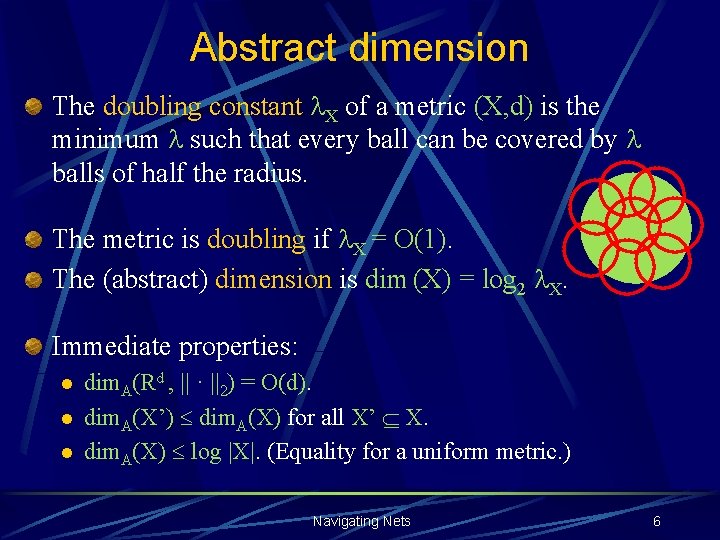

Abstract dimension
The doubling constant $\lambda_X$ of a metric $(X, d)$ is the minimum $\lambda$ such that every ball can be covered by $\lambda$ balls of half the radius.
The metric is doubling if $\lambda_X = O(1)$. The (abstract) dimension is $\dim_A(X) = \log_2 \lambda_X$, abbreviated $\dim(X)$.
Immediate properties:
- $\dim_A(\mathbb{R}^d, \|\cdot\|_2) = O(d)$.
- $\dim_A(X') \le \dim_A(X)$ for all $X' \subseteq X$.
- $\dim_A(X) \le \log_2 |X|$. (Equality for a uniform metric.)
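To make the definition concrete, the sketch below estimates the doubling constant of a small finite metric by greedy covering. It is a heuristic: it only looks at balls centered at data points, with radii equal to pairwise distances, and greedy covering gives an upper estimate, not the true minimum. The function name `doubling_estimate` is illustrative.

```python
from itertools import combinations


def doubling_estimate(points, d):
    """Greedy upper estimate of the doubling constant of a finite metric."""
    radii = sorted({d(x, y) for x, y in combinations(points, 2)})
    worst = 1
    for x in points:
        for r in radii:
            ball = [p for p in points if d(x, p) <= r]
            centers = []
            for p in ball:
                # p needs a new half-radius ball if no existing center covers it
                if all(d(p, c) > r / 2 for c in centers):
                    centers.append(p)
            worst = max(worst, len(centers))
    return worst


path = list(range(16))                      # 16 points on a line
uniform = list(range(8))                    # 8 points, all at distance 1
print(doubling_estimate(path, lambda x, y: abs(x - y)))
print(doubling_estimate(uniform, lambda x, y: 0 if x == y else 1))
```

On the uniform metric every half-radius ball covers a single point, so the estimate equals the number of points, matching the "equality for a uniform metric" remark.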

Illustration
- Grid with missing piece
- Low-dimensional manifold (bounded curvature)
- Union of curves in Euclidean space


Embedding doubling metrics
Theorem [Assouad, 1983] [Gupta, K., Lee, 2003]: Fix $0 < \varepsilon < 1$, and let $(X, d)$ be a doubling metric. Then $(X, d^{\varepsilon})$ can be embedded with $O(1)$ distortion into $\ell_2^{O(1)}$.
Not true for $\varepsilon = 1$ [Semmes, 1996].
Motivation: embed $S$ and then apply Euclidean NNS.

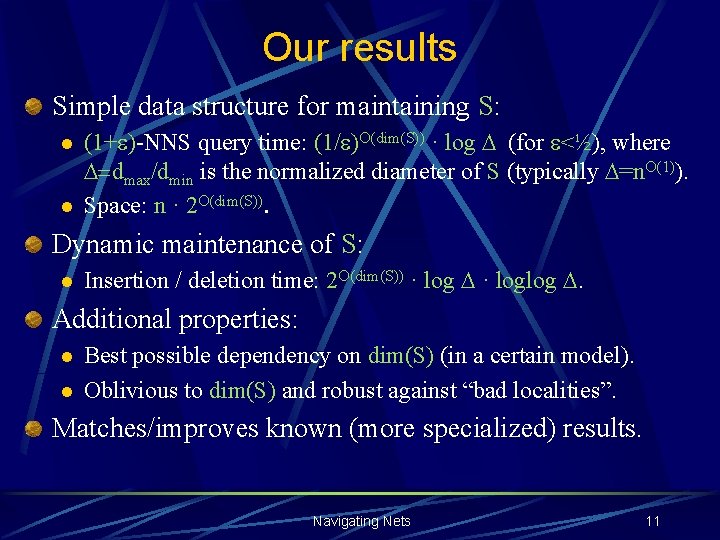

Our results
Simple data structure for maintaining $S$:
- $(1+\varepsilon)$-NNS query time: $(1/\varepsilon)^{O(\dim(S))} \cdot \log \Delta$ (for $\varepsilon < 1/2$), where $\Delta = d_{\max}/d_{\min}$ is the normalized diameter of $S$ (typically $\Delta = n^{O(1)}$).
- Space: $n \cdot 2^{O(\dim(S))}$.
Dynamic maintenance of $S$:
- Insertion / deletion time: $2^{O(\dim(S))} \cdot \log\log \Delta$.
Additional properties:
- Best possible dependency on $\dim(S)$ (in a certain model).
- Oblivious to $\dim(S)$ and robust against "bad localities".
- Matches/improves known (more specialized) results.

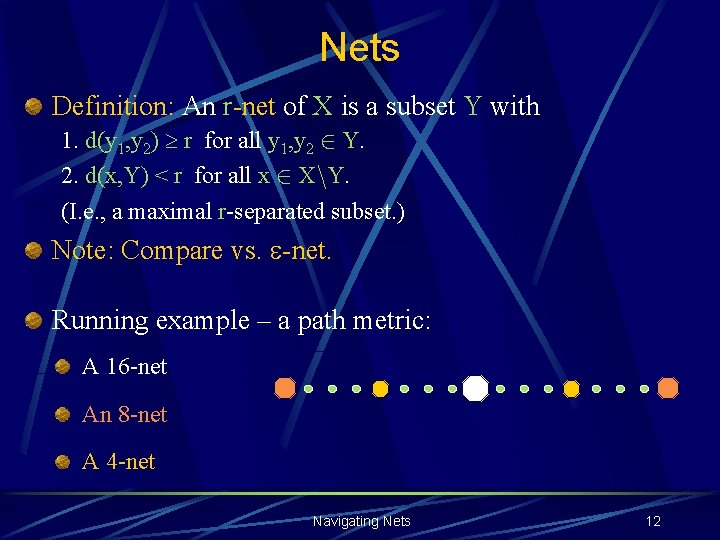

Nets
Definition: An $r$-net of $X$ is a subset $Y$ with
1. $d(y_1, y_2) \ge r$ for all distinct $y_1, y_2 \in Y$.
2. $d(x, Y) < r$ for all $x \in X \setminus Y$.
(I.e., a maximal $r$-separated subset.)
Note: Compare vs. $\varepsilon$-net.
Running example, a path metric: a 16-net, an 8-net, a 4-net.

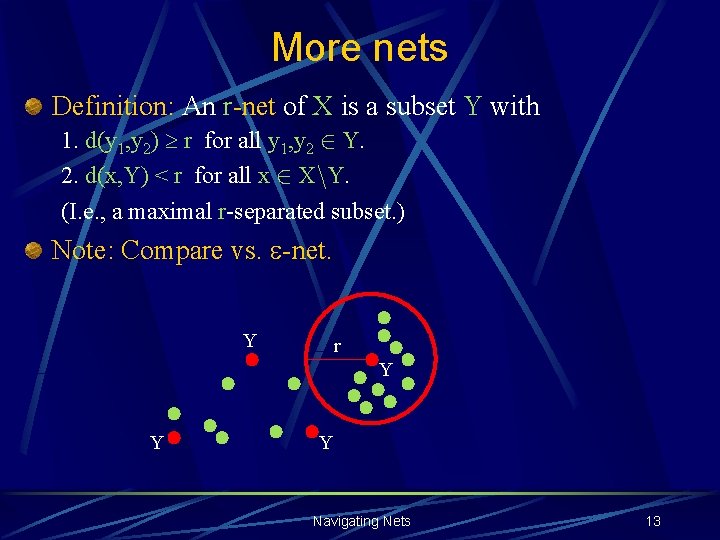

More nets
Definition: An $r$-net of $X$ is a subset $Y$ with
1. $d(y_1, y_2) \ge r$ for all distinct $y_1, y_2 \in Y$.
2. $d(x, Y) < r$ for all $x \in X \setminus Y$.
(I.e., a maximal $r$-separated subset.)
Note: Compare vs. $\varepsilon$-net.
(Figure: a net $Y$ with balls of radius $r$ around its points.)
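Since an $r$-net is a maximal $r$-separated subset, one simple way to obtain one is a greedy pass over the points. This is a minimal sketch of that idea, not the talk's dynamic construction; `greedy_net` is an illustrative name and the path metric on 32 integer points is an assumed stand-in for the running example.

```python
def greedy_net(points, d, r):
    """Return a maximal r-separated subset of points (an r-net)."""
    net = []
    for x in points:
        # keep x only if it is at distance >= r from every net point so far
        if all(d(x, y) >= r for y in net):
            net.append(x)
    return net


path = list(range(32))
dist = lambda x, y: abs(x - y)
for r in (16, 8, 4):
    print(r, greedy_net(path, dist, r))
```

Every rejected point is within distance less than $r$ of some net point, which is exactly condition 2 of the definition.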

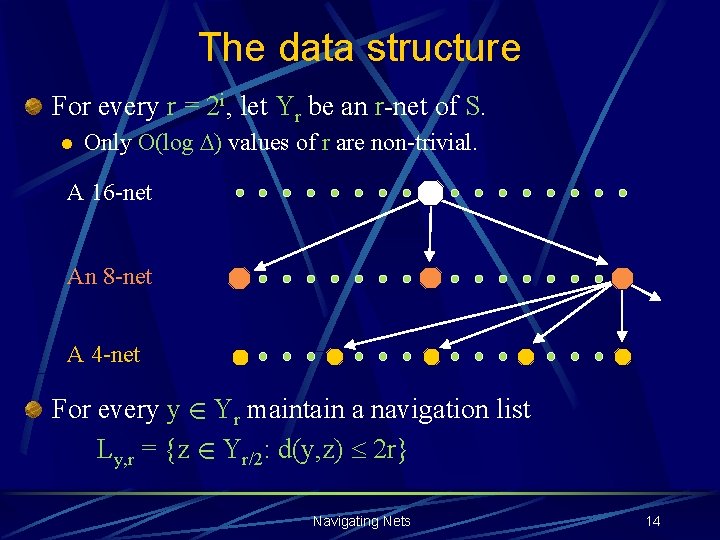

The data structure
For every $r = 2^i$, let $Y_r$ be an $r$-net of $S$.
- Only $O(\log \Delta)$ values of $r$ are non-trivial.
(Figure: a 16-net, an 8-net, a 4-net.)
For every $y \in Y_r$ maintain a navigation list $L_{y,r} = \{z \in Y_{r/2} : d(y, z) \le 2r\}$.

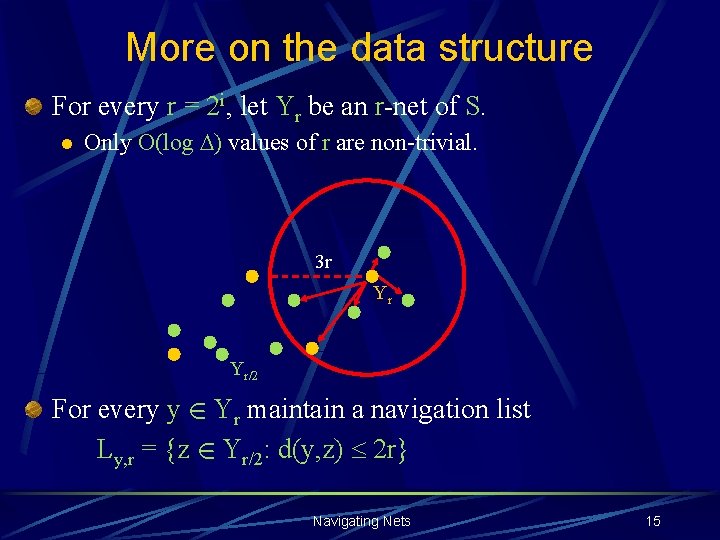

More on the data structure
For every $r = 2^i$, let $Y_r$ be an $r$-net of $S$.
- Only $O(\log \Delta)$ values of $r$ are non-trivial.
(Figure: points of $Y_r$ and $Y_{r/2}$; labels $3r$, $Y_r$, $Y_{r/2}$.)
For every $y \in Y_r$ maintain a navigation list $L_{y,r} = \{z \in Y_{r/2} : d(y, z) \le 2r\}$.
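The navigation lists can be read straight off the definition. The sketch below builds them for a single scale from two given nets; `navigation_lists` is an illustrative name, points are assumed hashable, and the two nets of an integer path are written out by hand.

```python
def navigation_lists(net_r, net_half, d, r):
    """Map each y in Y_r to L_{y,r} = {z in Y_{r/2} : d(y, z) <= 2r}."""
    return {y: [z for z in net_half if d(y, z) <= 2 * r] for y in net_r}


dist = lambda x, y: abs(x - y)
Y4 = list(range(0, 32, 4))   # a 4-net of the path 0..31
Y2 = list(range(0, 32, 2))   # a 2-net of the same path
lists = navigation_lists(Y4, Y2, dist, 4)
print(lists[16])
```

Each list only holds points of the next finer net within distance $2r$, so its size depends on the dimension of the data and not on $n$.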

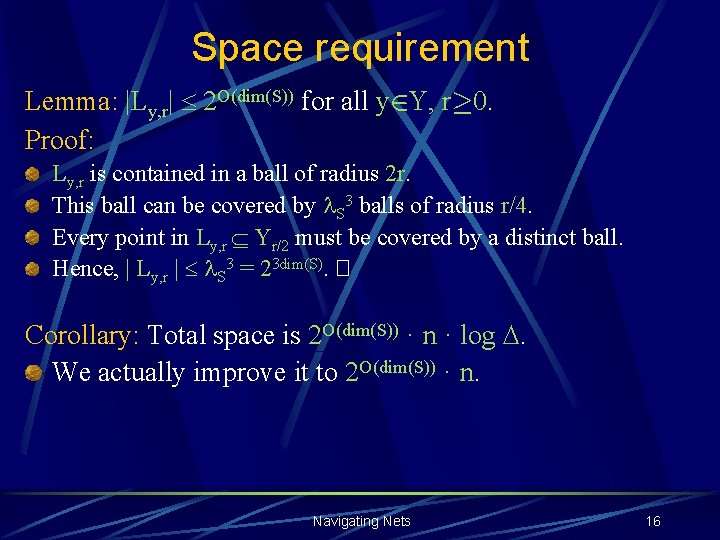

Space requirement
Lemma: $|L_{y,r}| \le 2^{O(\dim(S))}$ for all $y \in Y_r$, $r \ge 0$.
Proof: $L_{y,r}$ is contained in a ball of radius $2r$. This ball can be covered by $\lambda_S^3$ balls of radius $r/4$. Every point in $L_{y,r} \subseteq Y_{r/2}$ must be covered by a distinct ball (points of $Y_{r/2}$ are $r/2$-separated). Hence $|L_{y,r}| \le \lambda_S^3 = 2^{3\dim(S)}$. $\square$
Corollary: Total space is $2^{O(\dim(S))} \cdot n \cdot \log \Delta$.
We actually improve it to $2^{O(\dim(S))} \cdot n$.

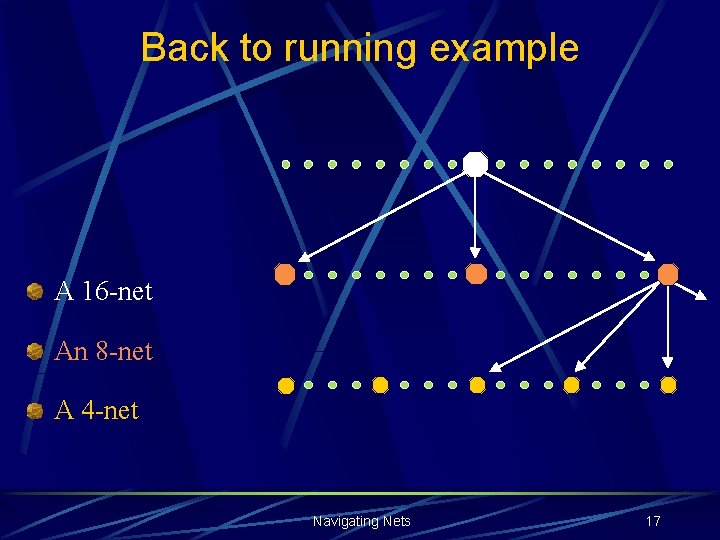

Back to running example
(Figure: a 16-net, an 8-net, a 4-net.)

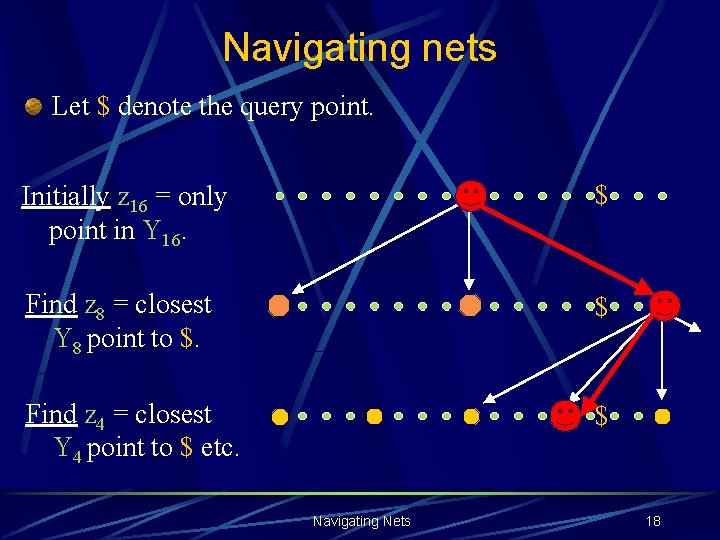

Navigating nets
Let $q$ denote the query point.
- Initially $z_{16}$ = the only point in $Y_{16}$.
- Find $z_8$ = closest $Y_8$ point to $q$.
- Find $z_4$ = closest $Y_4$ point to $q$, etc.

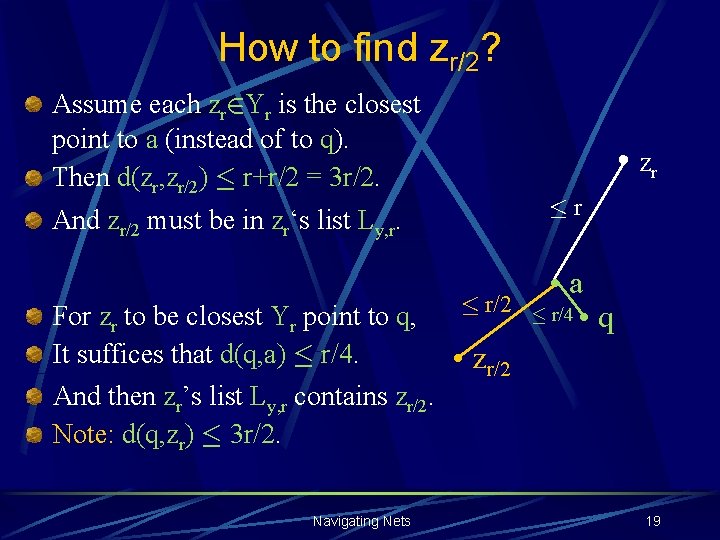

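How to find $z_{r/2}$?
Here $a$ is a point of $S$ close to $q$ (see figure). Assume each $z_r \in Y_r$ is the closest point to $a$ (instead of to $q$).
- Then $d(z_r, z_{r/2}) \le r + r/2 = 3r/2$,
- and $z_{r/2}$ must be in $z_r$'s list $L_{z_r,r}$.
For $z_r$ to be the closest $Y_r$ point to $q$, it suffices that $d(q, a) \le r/4$.
- And then $z_r$'s list $L_{z_r,r}$ contains $z_{r/2}$.
Note: $d(q, z_r) \le 3r/2$.
(Figure: points $z_r$, $a$, $q$, $z_{r/2}$; distance labels $\le r$, $\le r/2$, $\le r/4$.)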
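The bounds on this slide follow from the triangle inequality. A short derivation, assuming $a \in S$ satisfies $d(q, a) \le r/4$, each $Y_r$ is an $r$-net of $S$ (so $d(a, Y_r) \le r$), and $z_r$, $z_{r/2}$ are the points of $Y_r$, $Y_{r/2}$ closest to $q$:

```latex
\begin{align*}
d(q, z_r)       &\le d(q, a) + d(a, Y_r)     \le \tfrac{r}{4} + r            = \tfrac{5r}{4} \le \tfrac{3r}{2}, \\
d(q, z_{r/2})   &\le d(q, a) + d(a, Y_{r/2}) \le \tfrac{r}{4} + \tfrac{r}{2} = \tfrac{3r}{4}, \\
d(z_r, z_{r/2}) &\le d(z_r, q) + d(q, z_{r/2}) \le \tfrac{5r}{4} + \tfrac{3r}{4} = 2r .
\end{align*}
```

The last line is why a list radius of $2r$ is enough for $L_{z_r,r}$ to contain $z_{r/2}$.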

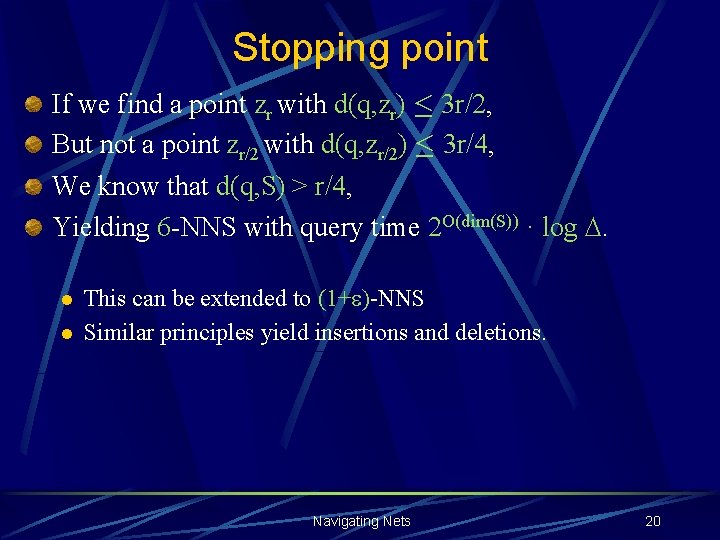

Stopping point
If we find a point $z_r$ with $d(q, z_r) \le 3r/2$, but not a point $z_{r/2}$ with $d(q, z_{r/2}) \le 3r/4$, we know that $d(q, S) > r/4$, yielding 6-NNS with query time $2^{O(\dim(S))} \cdot \log \Delta$.
- This can be extended to $(1+\varepsilon)$-NNS.
- Similar principles yield insertions and deletions.
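The descent and the stopping rule can be put together in a small static sketch. Several choices here are assumptions and not taken from the talk: nets are built greedily and independently per scale, points are hashable and distinct, the top scale is the first power of two above the diameter, and the bottom scale is the largest power of two at most $d_{\min}/3$. The names `build` and `query` are illustrative.

```python
import math
from itertools import combinations


def greedy_net(points, d, r):
    net = []
    for x in points:
        if all(d(x, y) >= r for y in net):
            net.append(x)
    return net


def build(points, d):
    """Nets Y_{2^i} and lists L_{y,2^i} for the non-trivial scales."""
    dists = [d(x, y) for x, y in combinations(points, 2)]
    top = math.floor(math.log2(max(dists))) + 1     # 2^top > diameter: a single net point
    bottom = math.floor(math.log2(min(dists) / 3))  # 2^bottom <= d_min / 3: net is all of S
    nets = {i: greedy_net(points, d, 2.0 ** i) for i in range(bottom, top + 1)}
    lists = {
        i: {y: [z for z in nets[i - 1] if d(y, z) <= 2 * 2.0 ** i] for y in nets[i]}
        for i in range(bottom + 1, top + 1)
    }
    return nets, lists, top, bottom


def query(q, nets, lists, top, bottom, d):
    """Descend the nets; stop when no list point is within 3r/4 of q."""
    z = nets[top][0]
    for i in range(top, bottom, -1):
        r = 2.0 ** i
        best = min(lists[i][z], key=lambda y: d(q, y))
        if d(q, best) > 3 * r / 4:
            return z            # stopping rule: d(q, S) > r/4
        z = best
    return z


path = list(range(32))
dist = lambda x, y: abs(x - y)
structure = build(path, dist)
print(query(21.2, *structure, dist))  # prints 21
```

Each step scans one navigation list, so the work per scale is bounded by the list size, and the number of scales is $O(\log \Delta)$.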

Near-optimality
The basic idea:
- Consider a uniform metric on $\lambda$ points.
- Let the query point be at distance 1 from all of them, except for one point whose distance is $1 - \varepsilon$.
- Finding this point requires (in an oracle model) computing all $\lambda$ distances to $q$.
Can happen at every distance scale $r$.
We get a lower bound of $2^{\Omega(\dim(S))} \log \Delta$.
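The lower-bound idea can be illustrated with an adaptive adversary: it answers 1 to every new distance query and only reveals the closer point when it is the last one left. This is a toy simulation under that assumption, not the talk's formal argument; `UniformAdversary` and `linear_scan` are hypothetical names.

```python
class UniformAdversary:
    """Adaptive oracle for distances from the query to n uniform points."""

    def __init__(self, n, eps):
        self.n, self.eps = n, eps
        self.answers = {}   # point index -> committed distance
        self.calls = 0

    def dist_to_query(self, i):
        self.calls += 1
        if i not in self.answers:
            # commit to 1 - eps only for the last point still unasked
            last = len(self.answers) == self.n - 1
            self.answers[i] = 1 - self.eps if last else 1.0
        return self.answers[i]


def linear_scan(oracle, n):
    return min(range(n), key=oracle.dist_to_query)


oracle = UniformAdversary(8, 0.1)
found = linear_scan(oracle, 8)
print(found, oracle.calls)
```

Whatever order an algorithm probes the points in, this adversary forces it to ask about all of them before the special point shows up.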

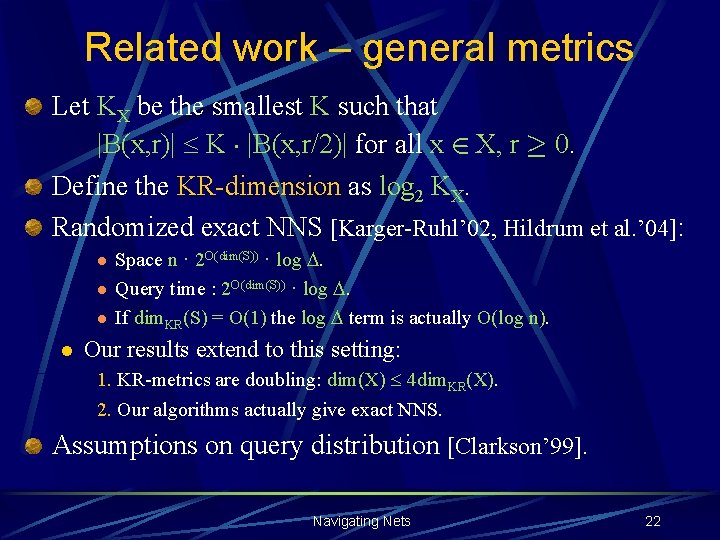

Related work – general metrics
Let $K_X$ be the smallest $K$ such that $|B(x, r)| \le K \cdot |B(x, r/2)|$ for all $x \in X$, $r \ge 0$. Define the KR-dimension as $\dim_{KR}(X) = \log_2 K_X$.
Randomized exact NNS [Karger-Ruhl '02, Hildrum et al. '04]:
- Space: $n \cdot 2^{O(\dim(S))} \cdot \log \Delta$.
- Query time: $2^{O(\dim(S))} \cdot \log \Delta$.
If $\dim_{KR}(S) = O(1)$, the $\log \Delta$ term is actually $O(\log n)$.
Our results extend to this setting:
1. KR-metrics are doubling: $\dim(X) \le 4 \dim_{KR}(X)$.
2. Our algorithms actually give exact NNS.
Assumptions on query distribution [Clarkson '99].
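For a finite point set the constant $K_X$ can be computed by brute force. The sketch below uses closed balls and, for each center, tries only radii equal to distances from that center, where the ratio can peak. `kr_constant` is an illustrative name and the test metrics are assumed toy data.

```python
import math


def kr_constant(points, d):
    """Largest ratio |B(x, r)| / |B(x, r/2)| over centers x and radii r."""
    worst = 1.0
    for x in points:
        for r in {d(x, y) for y in points if y != x}:
            big = sum(1 for p in points if d(x, p) <= r)
            small = sum(1 for p in points if d(x, p) <= r / 2)  # counts x itself
            worst = max(worst, big / small)
    return worst


path = list(range(16))
K = kr_constant(path, lambda x, y: abs(x - y))
print(K, math.log2(K))
```

On a uniform metric the ratio equals the number of points, so the KR-dimension there is as large as possible.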

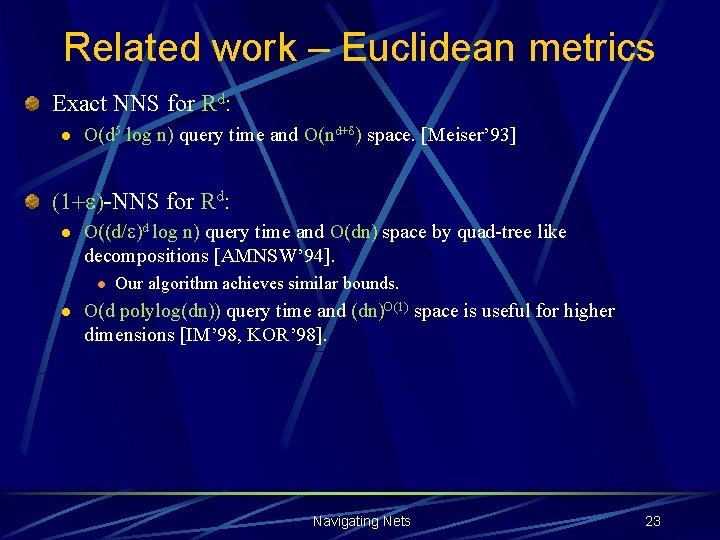

Related work – Euclidean metrics
Exact NNS for $\mathbb{R}^d$:
- $O(d^5 \log n)$ query time and $O(n^{d+\delta})$ space [Meiser '93].
$(1+\varepsilon)$-NNS for $\mathbb{R}^d$:
- $O((d/\varepsilon)^d \log n)$ query time and $O(dn)$ space by quad-tree like decompositions [AMNSW '94].
- Our algorithm achieves similar bounds.
- $O(d\,\mathrm{polylog}(dn))$ query time and $(dn)^{O(1)}$ space is useful for higher dimensions [IM '98, KOR '98].

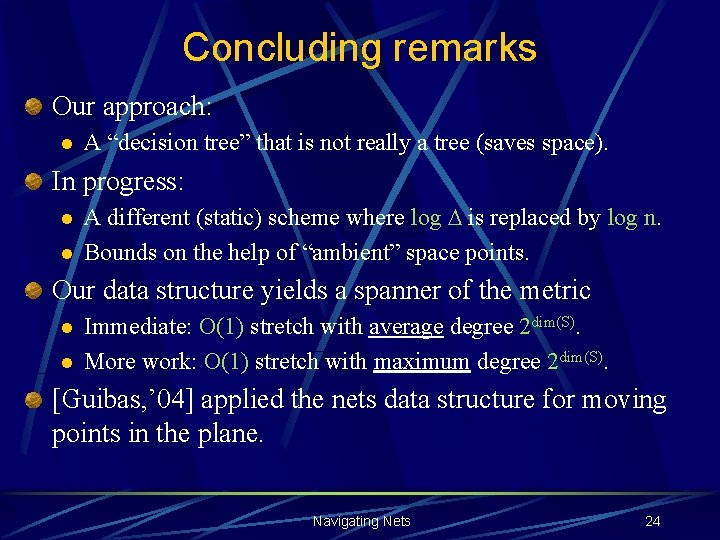

Concluding remarks
Our approach:
- A "decision tree" that is not really a tree (saves space).
In progress:
- A different (static) scheme where $\log \Delta$ is replaced by $\log n$.
- Bounds on the help of "ambient" space points.
Our data structure yields a spanner of the metric:
- Immediate: $O(1)$ stretch with average degree $2^{\dim(S)}$.
- More work: $O(1)$ stretch with maximum degree $2^{\dim(S)}$.
[Guibas, '04] applied the nets data structure for moving points in the plane.
