Natural Language Understanding and Generation for Dialogue Systems

- Slides: 33

Natural Language Understanding and Generation for Dialogue Systems Eduard Hovy USC/ISI institute for creative technologies 11/1/2020

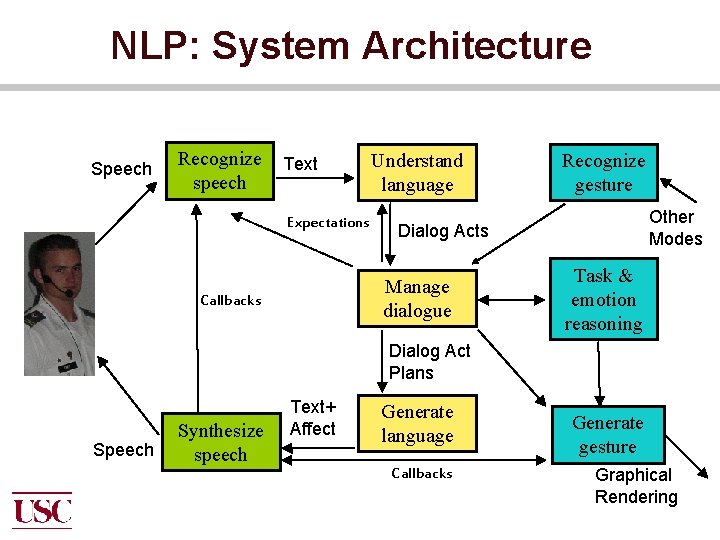

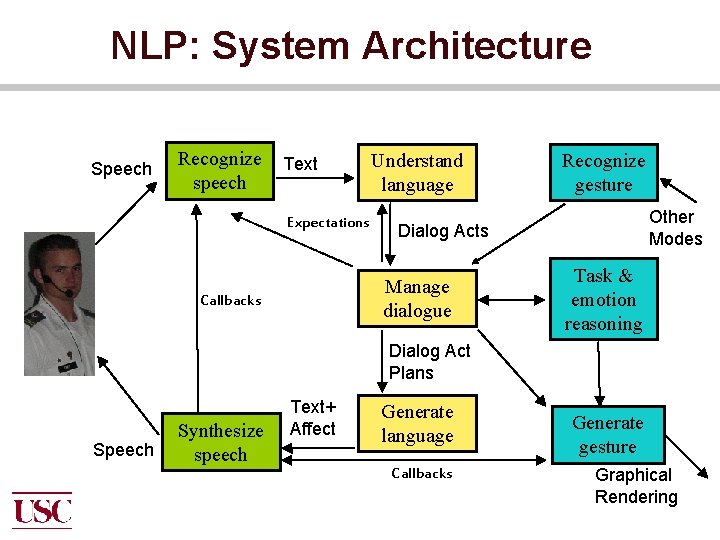

NLP: System Architecture

[Architecture diagram. Modules: Recognize speech, Understand language, Recognize gesture, Manage dialogue, Task & emotion reasoning, Generate language, Synthesize speech, Generate gesture. Data passed between modules: Speech, Text, Other Modes, Expectations, Dialog Acts, Callbacks, Dialog Act Plans, Text + Affect, Graphical Rendering.]

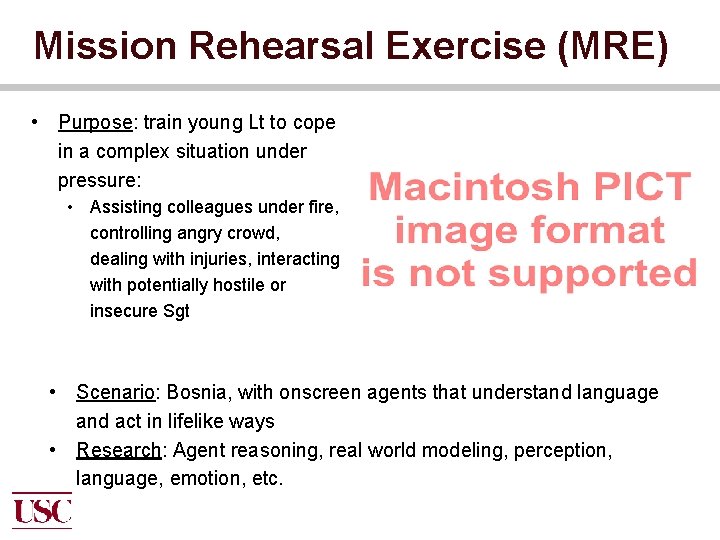

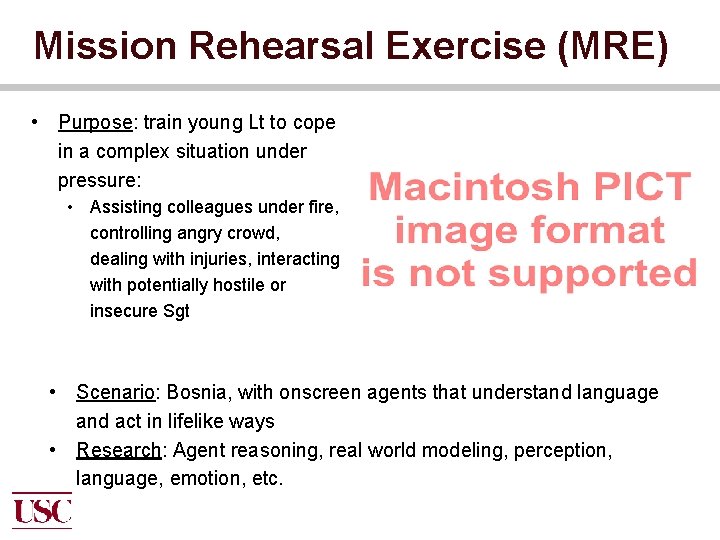

Mission Rehearsal Exercise (MRE)

- Purpose: train a young lieutenant (Lt) to cope with a complex situation under pressure:
  - assisting colleagues under fire, controlling an angry crowd, dealing with injuries, interacting with a potentially hostile or insecure sergeant (Sgt)
- Scenario: Bosnia, with onscreen agents that understand language and act in lifelike ways
- Research: agent reasoning, real-world modeling, perception, language, emotion, etc.

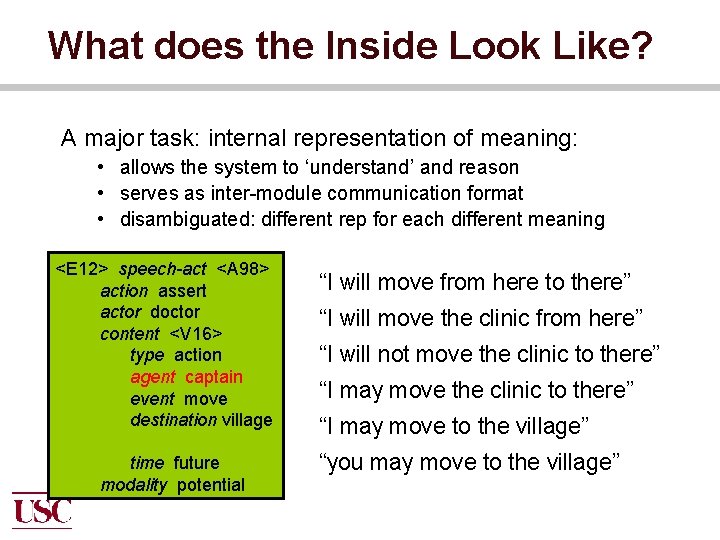

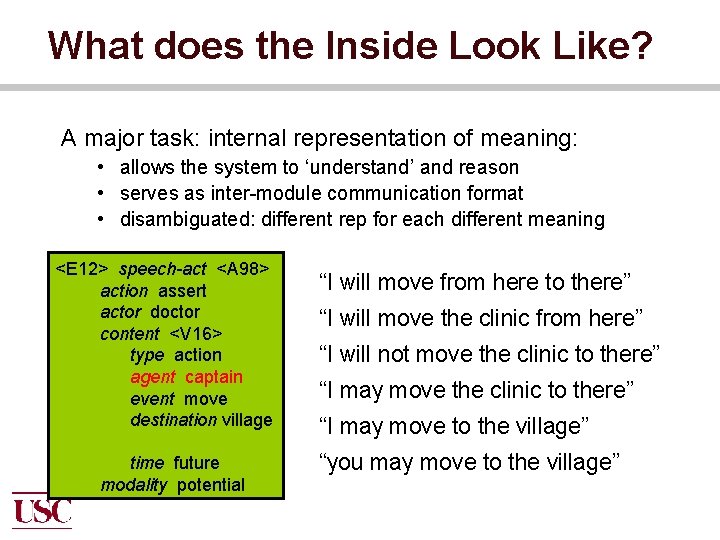

What does the Inside Look Like?

A major task: internal representation of meaning:
- allows the system to ‘understand’ and reason
- serves as inter-module communication format
- disambiguated: a different representation for each different meaning

Example frame (several variants are overlaid on the slide, so some slots show more than one filler):
- <E12>: speech-act <A98>
- <A98>: action assert; actor doctor; content <V16>
- <V16>: type action; agent doctor; agent captain; event move; source here village; destination there; theme clinic; time future; polarity negative; modality potential

Example sentences:
- “I will move from here to there”
- “I will move the clinic from here”
- “I will not move the clinic to there”
- “I may move to the village”
- “you may move to the village”

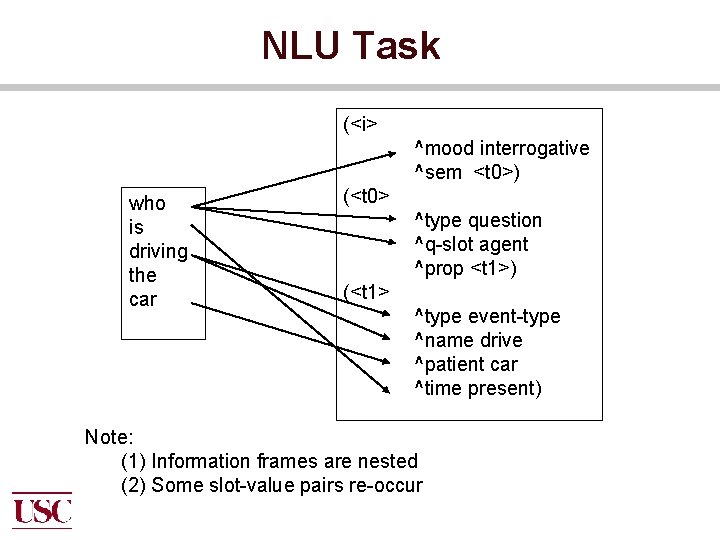

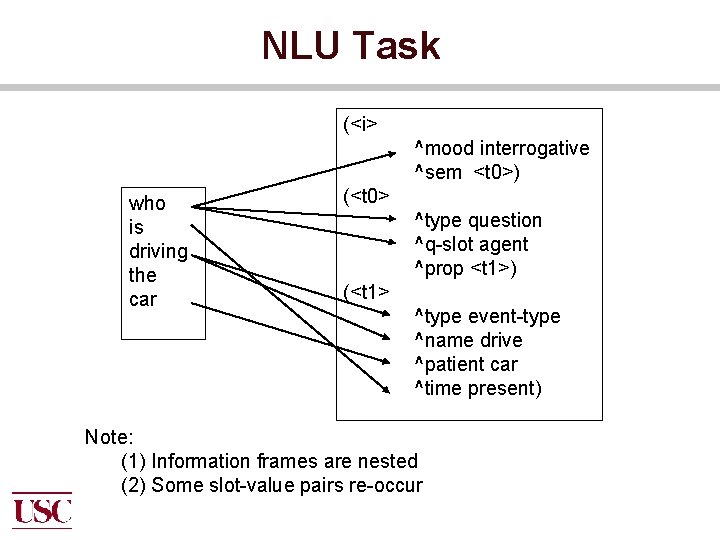

NLU Task

Input sentence: “who is driving the car”. Output frames:
- (<i> ^mood interrogative ^sem <t0>)
- (<t0> ^type question ^q-slot agent ^prop <t1>)
- (<t1> ^type event-type ^name drive ^patient car ^time present)

Note:
- (1) Information frames are nested
- (2) Some slot-value pairs re-occur
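Because the frames are nested, one way to hand them to a flat statistical model is to rewrite each slot-value pair with the chain of frame labels that leads to it, which is the form the scored list in the Understanding Procedure slide uses. The sketch below is only an illustration: the dict layout and the `flatten` helper are assumptions, not the system's actual data structures.

```python
# Hypothetical in-memory form of the frames for "who is driving the car".
FRAMES = {
    "<i>": {"mood": "interrogative", "sem": "<t0>"},
    "<t0>": {"type": "question", "q-slot": "agent", "prop": "<t1>"},
    "<t1>": {"type": "event-type", "name": "drive", "patient": "car", "time": "present"},
}

def flatten(frames, root="<i>"):
    """Return path-prefixed slot-value strings, descending into nested frames."""
    out = []

    def walk(label, path):
        for slot, value in frames[label].items():
            out.append(f"{' '.join(path)} ^{slot} {value}")
            if value in frames:  # the value names another frame: descend into it
                walk(value, path + [value])

    walk(root, [root])
    return out

for pair in flatten(FRAMES):
    print(pair)
```

Each printed line is one classification target, for example `<i> <t0> <t1> ^patient car`.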

Situation

What’s the problem?
- Need to produce a semantic representation
- Bad-quality input from speech recognition
- Very little training data

What to do?
- Combine multiple engines with different strengths

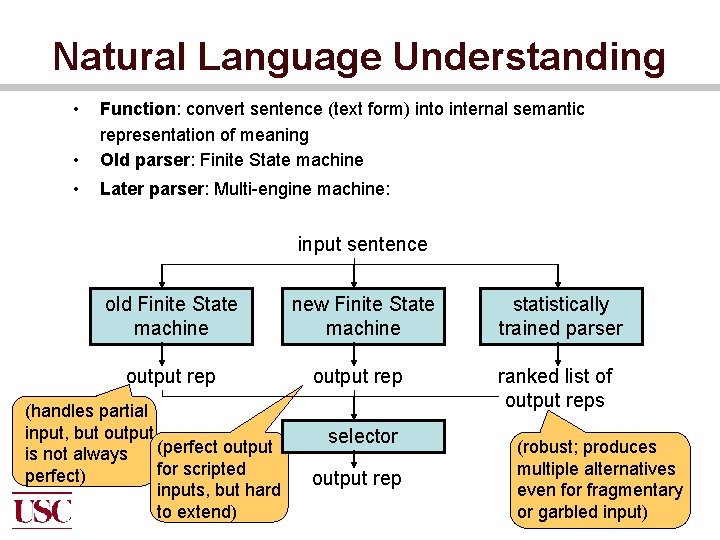

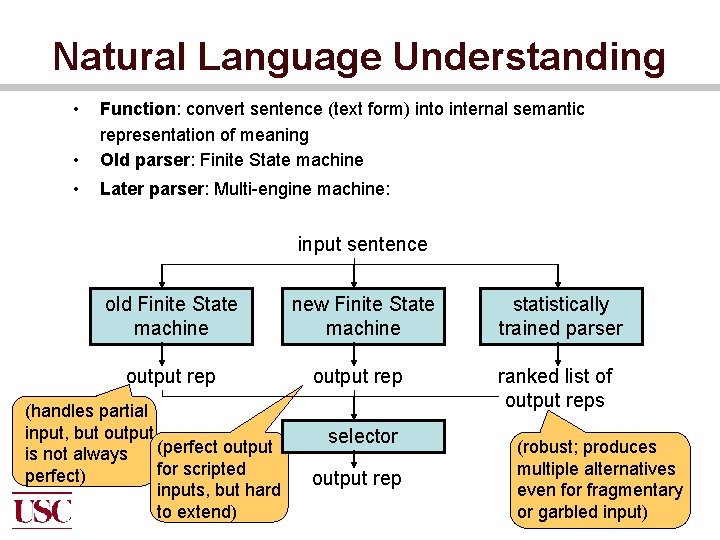

Natural Language Understanding

- Function: convert sentence (text form) into internal semantic representation of meaning
- Old parser: Finite State machine
- Later parser: multi-engine machine
  - The input sentence goes to three engines: old Finite State machine, new Finite State machine, statistically trained parser
  - The engines produce output reps (the statistical parser produces a ranked list of output reps); a selector then chooses the final output rep
  - Finite State machines: one gives perfect output for scripted inputs but is hard to extend; the other handles partial input, but its output is not always perfect
  - Statistically trained parser: robust; produces multiple alternatives even for fragmentary or garbled input

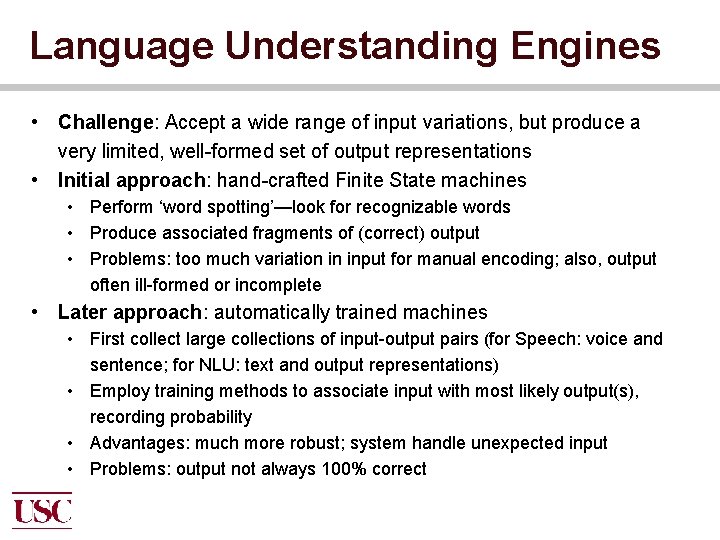

Language Understanding Engines

- Challenge: accept a wide range of input variations, but produce a very limited, well-formed set of output representations
- Initial approach: hand-crafted Finite State machines
  - Perform ‘word spotting’: look for recognizable words
  - Produce associated fragments of (correct) output
  - Problems: too much variation in input for manual encoding; also, output often ill-formed or incomplete
- Later approach: automatically trained machines
  - First collect large collections of input-output pairs (for Speech: voice and sentence; for NLU: text and output representations)
  - Employ training methods to associate input with most likely output(s), recording probability
  - Advantages: much more robust; system handles unexpected input
  - Problems: output not always 100% correct

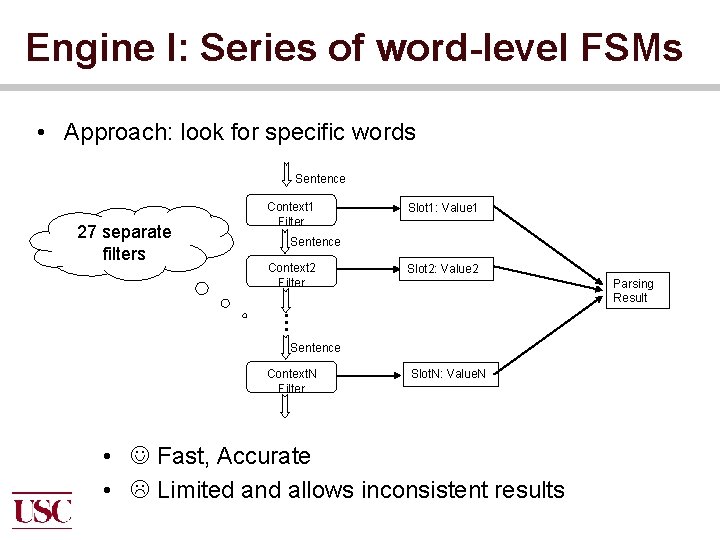

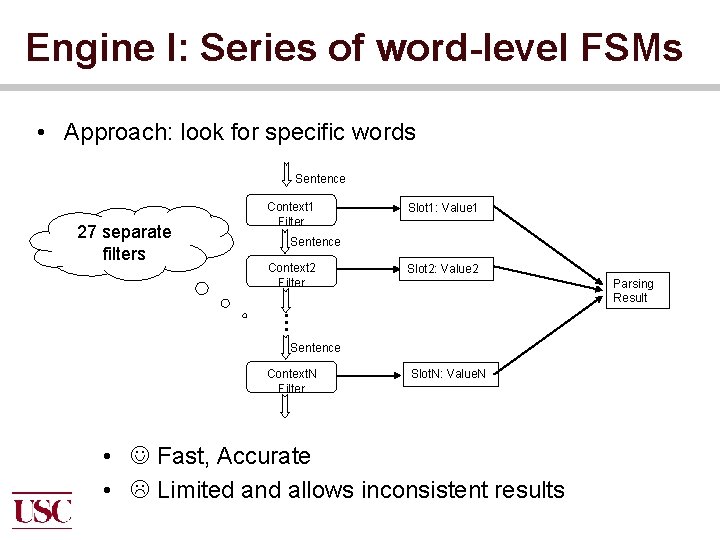

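Engine I: Series of word-level FSMs

- Approach: look for specific words
- 27 separate filters, each applied to the sentence with its own context and yielding one slot-value pair:
  - Sentence + Context 1 → Filter → Slot 1: Value 1
  - Sentence + Context 2 → Filter → Slot 2: Value 2
  - …
  - Sentence + Context N → Filter → Slot N: Value N
- The slot-value pairs together form the parsing result
- Fast, accurate
- Limited, and allows inconsistent results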
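A minimal sketch of the word-spotting idea, assuming each filter is a regular expression tied to one slot-value pair. The four filters and the `word_spot` helper are hypothetical stand-ins for the 27 hand-built filters, which the slides do not spell out.

```python
import re

# Hypothetical filters: each looks for trigger words and emits one slot-value pair.
FILTERS = [
    (re.compile(r"\b(who|what|where)\b"), ("mood", "interrogative")),
    (re.compile(r"\b(drive|drives|driving)\b"), ("name", "drive")),
    (re.compile(r"\bcar\b"), ("patient", "car")),
    (re.compile(r"\bambulance\b"), ("patient", "amb")),
]

def word_spot(sentence):
    """Run every filter independently and collect the pairs whose words were found."""
    text = sentence.lower()
    return [pair for pattern, pair in FILTERS if pattern.search(text)]

print(word_spot("Who is driving the car"))
print(word_spot("the car is behind the ambulance"))
```

The second call returns two different values for `patient`. Because the filters do not consult each other, this kind of engine can produce the inconsistent results noted on the slide.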

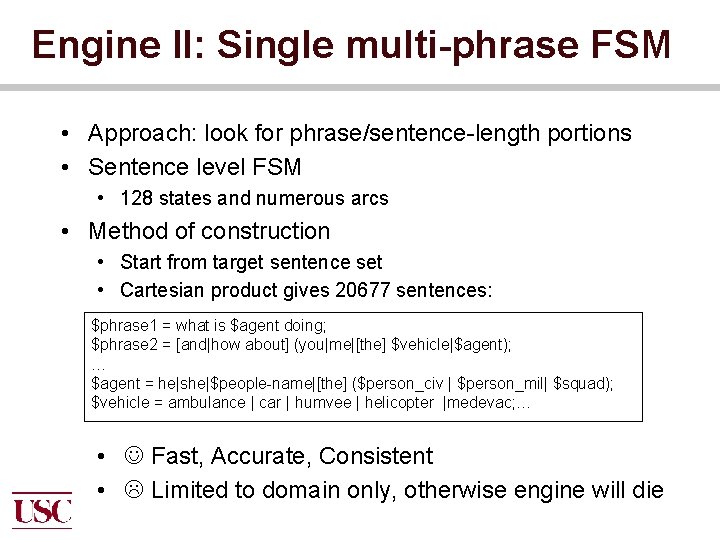

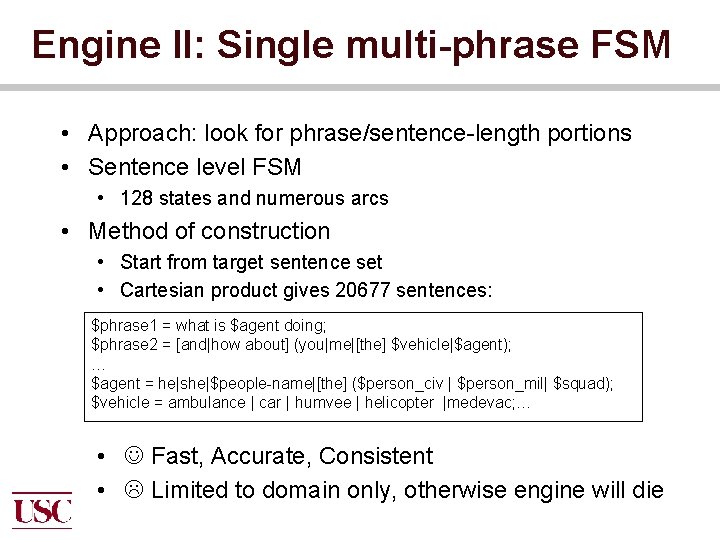

Engine II: Single multi-phrase FSM

- Approach: look for phrase/sentence-length portions
- Sentence-level FSM
  - 128 states and numerous arcs
- Method of construction
  - Start from target sentence set
  - Cartesian product gives 20677 sentences:
    - $phrase1 = what is $agent doing;
    - $phrase2 = [and|how about] (you|me|[the] $vehicle|$agent);
    - …
    - $agent = he|she|$people-name|[the] ($person_civ | $person_mil | $squad);
    - $vehicle = ambulance | car | humvee | helicopter | medevac;
    - …
- Fast, accurate, consistent
- Limited to the domain only; on out-of-domain input the engine fails
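The construction step can be illustrated with a toy expansion of two of the phrase templates. This sketch assumes that square brackets mark optional material and covers only part of the grammar (`$agent` is cut down to two pronouns), so the count it produces is far smaller than the 20677 sentences on the slide. The `expand` helper is hypothetical.

```python
from itertools import product

# Toy subset of the grammar; the real $agent rule has more alternatives.
VEHICLE = ["ambulance", "car", "humvee", "helicopter", "medevac"]
AGENT = ["he", "she"]

def expand(*slots):
    """Cartesian product of alternative lists; empty strings model optional parts."""
    return [" ".join(p for p in parts if p) for parts in product(*slots)]

# $phrase1 = what is $agent doing
phrase1 = expand(["what is"], AGENT, ["doing"])

# $phrase2 = [and|how about] (you|me|[the] $vehicle|$agent)
tail = ["you", "me"] + expand(["", "the"], VEHICLE) + AGENT
phrase2 = expand(["", "and", "how about"], tail)

sentences = phrase1 + phrase2
print(len(sentences))
print(sentences[:3])
```

Every generated sentence would become a path through the sentence-level FSM, which is why the engine is consistent on in-domain input and has nothing to fall back on outside it.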

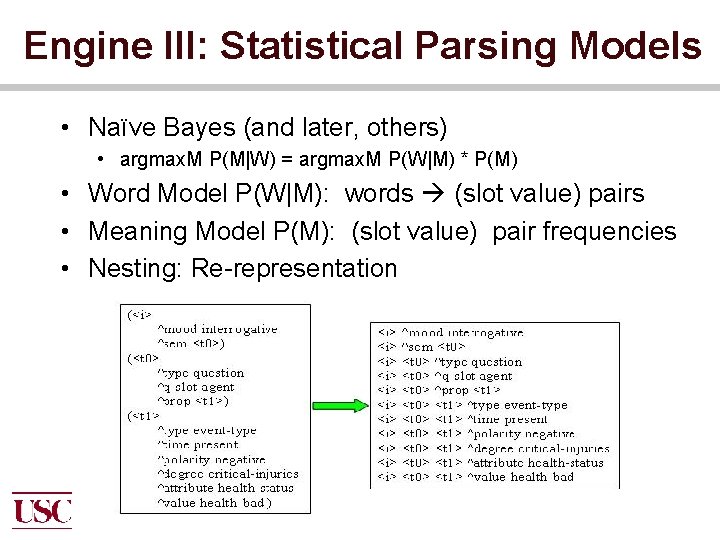

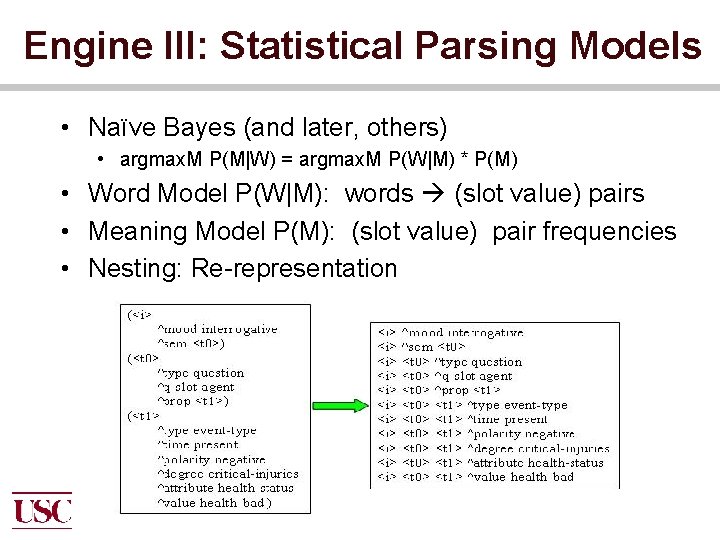

Engine III: Statistical Parsing Models

- Naïve Bayes (and later, others)
- $\arg\max_M P(M \mid W) = \arg\max_M P(W \mid M)\, P(M)$
- Word model $P(W \mid M)$: words given (slot value) pairs
- Meaning model $P(M)$: (slot value) pair frequencies
- Nesting: re-representation

Related Work

- A number of statistically trained semantic parsers have been reported recently
  - Ex: Gildea and Jurafsky (2002), Pradhan et al. (2004), Fleischman and Hovy (2003)
  - SRI system, trained on limited data (Goldwater et al., 2005)
- Frame-oriented partial parsing, trained either on FrameNet (Baker et al., 1998) or PropBank (Kingsbury et al., 2002)
- Hard to use these parsers in specific domains, especially dialogues
  - Dialogue domains sometimes involve unusual terminology
  - Apart from core case roles (Agent, Patient, Instrument, etc.), dialogue domains need additional information about things like addressees, modality, speech acts, etc.

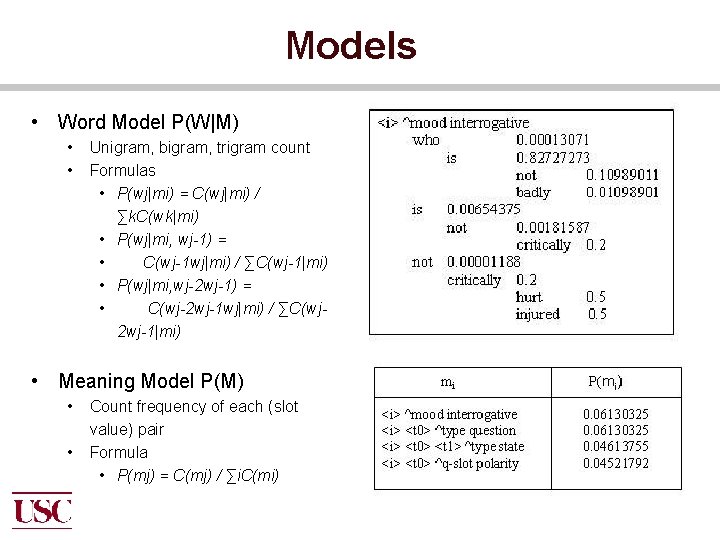

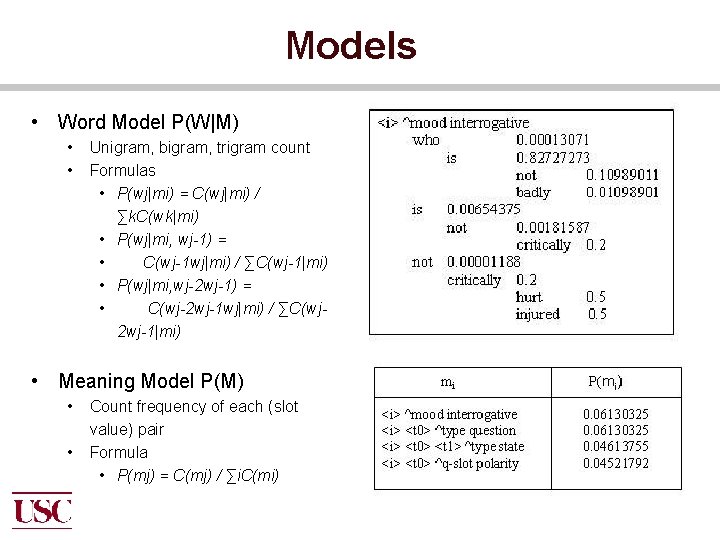

Models

- Word model $P(W \mid M)$
  - Unigram, bigram, trigram counts
  - Formulas:
    - $P(w_j \mid m_i) = C(w_j \mid m_i) \,/\, \sum_k C(w_k \mid m_i)$
    - $P(w_j \mid m_i, w_{j-1}) = C(w_{j-1} w_j \mid m_i) \,/\, \sum C(w_{j-1} \mid m_i)$
    - $P(w_j \mid m_i, w_{j-2} w_{j-1}) = C(w_{j-2} w_{j-1} w_j \mid m_i) \,/\, \sum C(w_{j-2} w_{j-1} \mid m_i)$
- Meaning model $P(M)$
  - Count frequency of each (slot value) pair
  - Formula: $P(m_j) = C(m_j) \,/\, \sum_i C(m_i)$
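The unigram word model and the meaning model reduce to relative-frequency counts. The sketch below assumes a tiny invented training set and applies no smoothing; `TRAIN`, `p_word`, `p_meaning` and `score` are illustrative names, not part of the original system.

```python
from collections import Counter, defaultdict

# Hypothetical training pairs: (sentence, set of slot-value pairs).
TRAIN = [
    ("who is driving the car", {"name drive", "patient car"}),
    ("who is driving the ambulance", {"name drive", "patient amb"}),
    ("treat the boy", {"name treat", "patient boy"}),
]

word_counts = defaultdict(Counter)  # C(w | m): word counts per slot-value pair
meaning_counts = Counter()          # C(m): slot-value pair frequencies

for sentence, pairs in TRAIN:
    for m in pairs:
        meaning_counts[m] += 1
        word_counts[m].update(sentence.split())

def p_word(w, m):
    """Unigram word model: P(w | m) = C(w | m) / sum_k C(w_k | m)."""
    total = sum(word_counts[m].values())
    return word_counts[m][w] / total if total else 0.0

def p_meaning(m):
    """Meaning model: P(m) = C(m) / sum_i C(m_i)."""
    return meaning_counts[m] / sum(meaning_counts.values())

def score(m, words):
    """Naive Bayes score P(W | m) * P(m), unsmoothed."""
    p = p_meaning(m)
    for w in words:
        p *= p_word(w, m)
    return p

print(p_word("car", "patient car"), p_meaning("name drive"))
```

Without smoothing, a single unseen word drives the score to zero, so a practical version would need some form of discounting or back-off.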

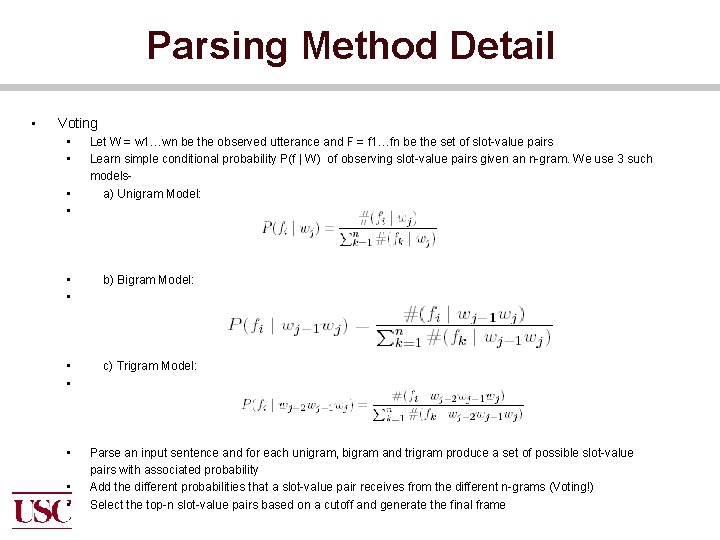

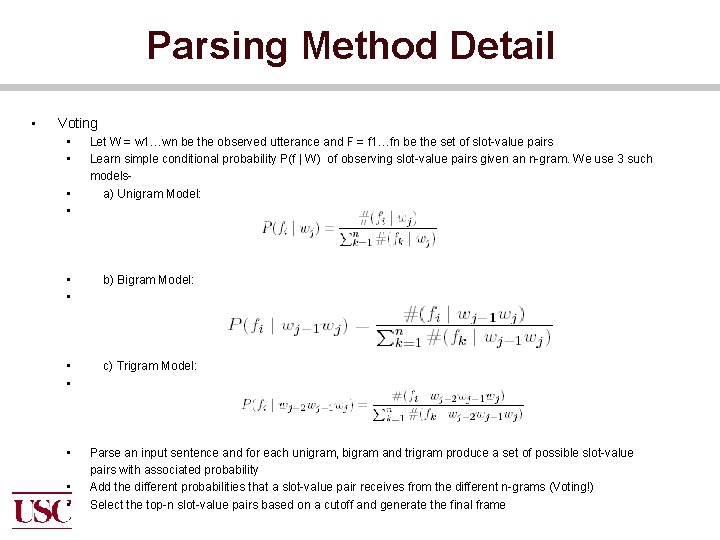

Parsing Method Detail

- Voting
  - Let W = w1…wn be the observed utterance and F = f1…fn be the set of slot-value pairs
  - Learn a simple conditional probability P(f | W) of observing a slot-value pair given an n-gram. We use 3 such models:
    - a) Unigram model
    - b) Bigram model
    - c) Trigram model
  - Parse an input sentence and, for each unigram, bigram and trigram, produce a set of possible slot-value pairs with associated probabilities
  - Add the different probabilities that a slot-value pair receives from the different n-grams (voting!)
  - Select the top-n slot-value pairs based on a cutoff and generate the final frame
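The voting steps above can be sketched as follows. The slide's model formulas are not reproduced in the text, so the estimate used here, P(f | g) = C(g, f) / C(g) for an n-gram g, is an assumption. The training pairs and function names are likewise invented for illustration.

```python
from collections import Counter, defaultdict

def ngrams(words, n_max=3):
    """All unigrams, bigrams and trigrams of a token list, as tuples."""
    return [tuple(words[i:i + n])
            for n in range(1, n_max + 1)
            for i in range(len(words) - n + 1)]

def train(pairs):
    """Estimate P(f | g) = C(g, f) / C(g) for each n-gram g and slot-value pair f."""
    gram_count, joint = Counter(), defaultdict(Counter)
    for sentence, frame in pairs:
        for g in set(ngrams(sentence.split())):  # count each n-gram once per sentence
            gram_count[g] += 1
            joint[g].update(frame)
    return {g: {f: c / gram_count[g] for f, c in fs.items()}
            for g, fs in joint.items()}

def vote(model, sentence):
    """Sum the probabilities each slot-value pair receives from every n-gram."""
    scores = Counter()
    for g in ngrams(sentence.split()):
        for f, p in model.get(g, {}).items():
            scores[f] += p
    return scores.most_common()

# Hypothetical training data.
TRAIN = [
    ("who is driving the car", {"name drive", "patient car"}),
    ("who is driving the ambulance", {"name drive", "patient amb"}),
]
MODEL = train(TRAIN)
print(vote(MODEL, "driving the car"))
```

N-grams never seen in training contribute nothing, so a fragment such as "driving the car" still yields a ranked list. A cutoff on the summed scores then decides which pairs enter the final frame.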

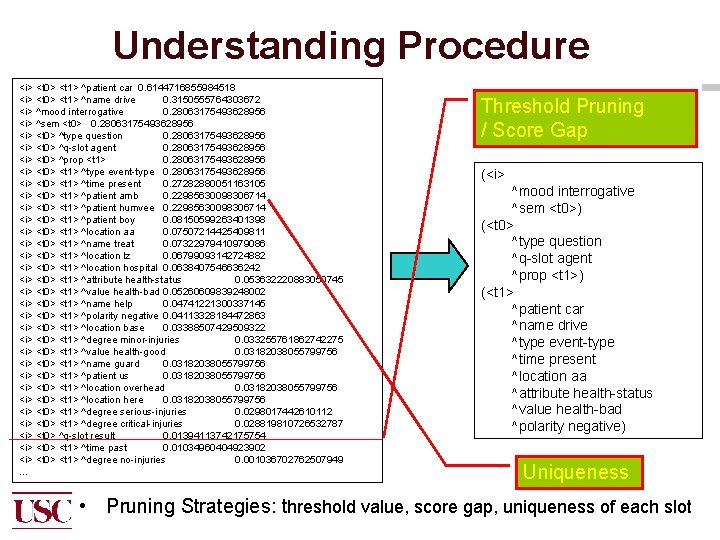

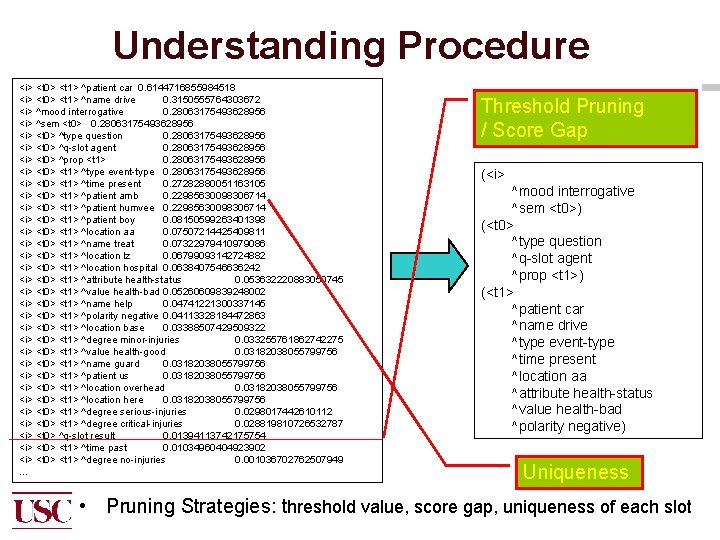

Understanding Procedure

Scored slot-value pairs (frame path, slot, value, score):

- <i> <t0> <t1> ^patient car: 0.6144716855984518
- <i> <t0> <t1> ^name drive: 0.3150555764303672
- <i> ^mood interrogative: 0.28063175493628956
- <i> ^sem <t0>: 0.28063175493628956
- <i> <t0> ^type question: 0.28063175493628956
- <i> <t0> ^q-slot agent: 0.28063175493628956
- <i> <t0> ^prop <t1>: 0.28063175493628956
- <i> <t0> <t1> ^type event-type: 0.28063175493628956
- <i> <t0> <t1> ^time present: 0.27282880051163105
- <i> <t0> <t1> ^patient amb: 0.22985630098306714
- <i> <t0> <t1> ^patient humvee: 0.22985630098306714
- <i> <t0> <t1> ^patient boy: 0.08150599263401398
- <i> <t0> <t1> ^location aa: 0.07507214425409811
- <i> <t0> <t1> ^name treat: 0.07322979410979086
- <i> <t0> <t1> ^location lz: 0.06799093142724882
- <i> <t0> <t1> ^location hospital: 0.0638407546636242
- <i> <t0> <t1> ^attribute health-status: 0.053632220883050745
- <i> <t0> <t1> ^value health-bad: 0.05260609839248002
- <i> <t0> <t1> ^name help: 0.04741221300337145
- <i> <t0> <t1> ^polarity negative: 0.04113328184472863
- <i> <t0> <t1> ^location base: 0.03388507429509322
- <i> <t0> <t1> ^degree minor-injuries: 0.033255761862742275
- <i> <t0> <t1> ^value health-good: 0.03182038055799756
- <i> <t0> <t1> ^name guard: 0.03182038055799756
- <i> <t0> <t1> ^patient us: 0.03182038055799756
- <i> <t0> <t1> ^location overhead: 0.03182038055799756
- <i> <t0> <t1> ^location here: 0.03182038055799756
- <i> <t0> <t1> ^degree serious-injuries: 0.0298017442610112
- <i> <t0> <t1> ^degree critical-injuries: 0.028819810726532787
- <i> <t0> ^q-slot result: 0.01394113742175754
- <i> <t0> <t1> ^time past: 0.01034960404923902
- <i> <t0> <t1> ^degree no-injuries: 0.001036702762507949
- …

Frames shown on the slide (labels: Threshold Pruning / Score Gap; Uniqueness):

- (<i> ^mood interrogative ^sem <t0>)
- (<t0> ^type question ^q-slot agent ^prop <t1>)
- (<t1> ^patient car ^name drive ^type event-type ^time present ^location aa ^attribute health-status ^value health-bad ^polarity negative)

Pruning strategies: threshold value, score gap, uniqueness of each slot
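The three pruning strategies can be combined in one pass over the ranked list. The slides give no parameter values or exact definitions, so everything specific here is an assumption: the threshold of 0.02, the relative gap test (stop when a score falls below 40% of the last kept score) and the `prune` function. The sample scores are a rounded subset of the list above.

```python
def prune(ranked, threshold=0.02, gap_ratio=0.4):
    """Keep top-scoring slot-value pairs.

    ranked: (path, slot, value, score) tuples sorted by descending score.
    """
    kept, seen, last = [], set(), None
    for path, slot, value, score in ranked:
        if score < threshold:
            break                      # threshold pruning
        if (path, slot) in seen:
            continue                   # uniqueness: this slot is already filled
        if last is not None and score < gap_ratio * last:
            break                      # score gap: a sharp drop ends the list
        seen.add((path, slot))
        kept.append((path, slot, value))
        last = score
    return kept

RANKED = [
    ("<i> <t0> <t1>", "patient", "car", 0.6145),
    ("<i> <t0> <t1>", "name", "drive", 0.3151),
    ("<i>", "mood", "interrogative", 0.2806),
    ("<i> <t0> <t1>", "time", "present", 0.2728),
    ("<i> <t0> <t1>", "patient", "amb", 0.2299),
    ("<i> <t0> <t1>", "location", "aa", 0.0751),
    ("<i> <t0>", "q-slot", "result", 0.0139),
]

for path, slot, value in prune(RANKED):
    print(path, f"^{slot}", value)
```

With these settings the second `patient` candidate is dropped by uniqueness and the list ends at the drop from 0.2728 to 0.0751. Other gap definitions, such as an absolute difference, would cut the list at a different place.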

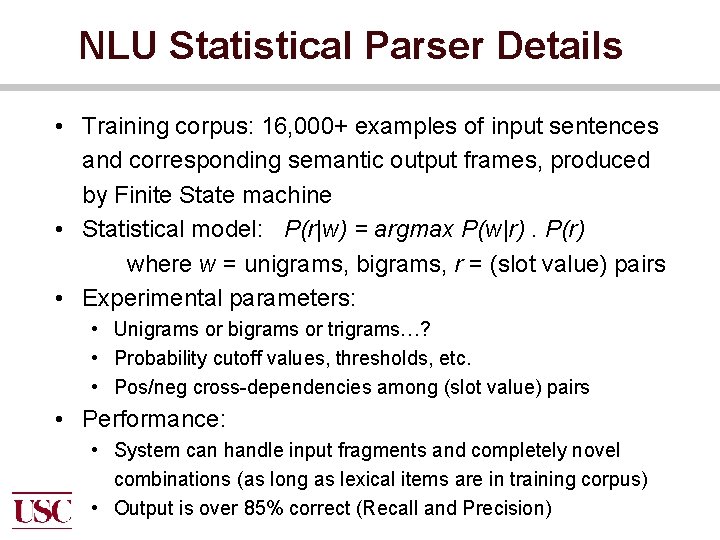

NLU Statistical Parser Details

- Training corpus: 16,000+ examples of input sentences and corresponding semantic output frames, produced by the Finite State machine
- Statistical model: $\hat{r} = \arg\max_r P(r \mid w) = \arg\max_r P(w \mid r)\, P(r)$, where w = unigrams, bigrams and r = (slot value) pairs
- Experimental parameters:
  - Unigrams or bigrams or trigrams…?
  - Probability cutoff values, thresholds, etc.
  - Pos/neg cross-dependencies among (slot value) pairs
- Performance:
  - System can handle input fragments and completely novel combinations (as long as lexical items are in training corpus)
  - Output is over 85% correct (Recall and Precision)

Evaluation: Measuring Correctness

We know what we want, and we see what the system produces:
- Recall: how many slot-value pairs are missing?
- Precision: how many slot-value pairs are wrong?

Procedure:
- Record Army trainees
- Analyze each utterance in detail

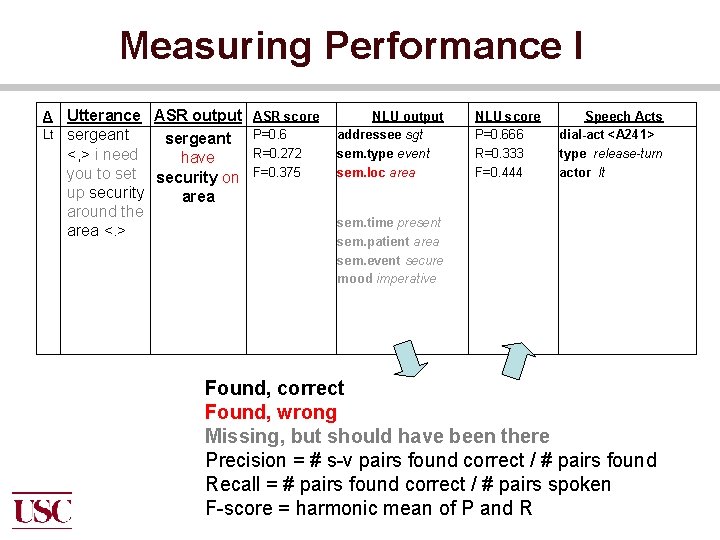

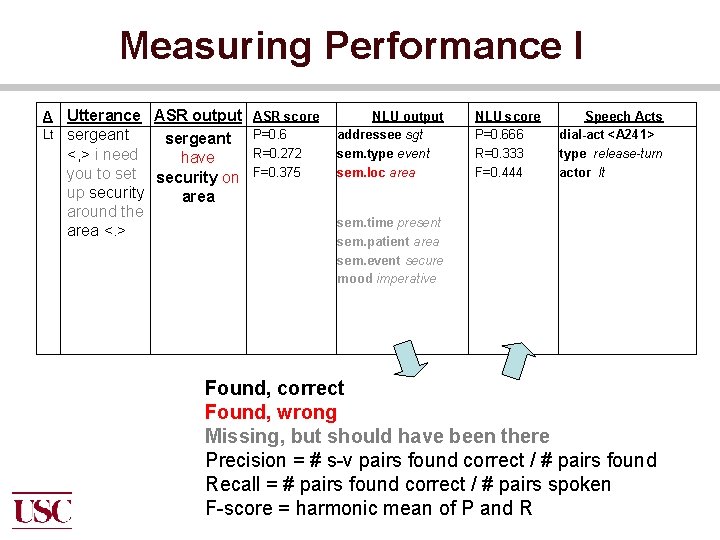

Measuring Performance I

- Lt utterance and ASR output (overlaid on the slide): sergeant <,> i need have you to set security on up security area around the area <.>
- ASR score: P=0.6, R=0.272, F=0.375
- NLU output: addressee sgt; sem.type event; sem.loc area
- NLU score: P=0.666, R=0.333, F=0.444
- Speech acts: dial-act <A241>; type release-turn; actor lt; sem.time present; sem.patient area; sem.event secure; mood imperative
- Legend: found, correct / found, wrong / missing, but should have been there
- Precision = # slot-value pairs found correct / # pairs found
- Recall = # pairs found correct / # pairs spoken
- F-score = harmonic mean of P and R

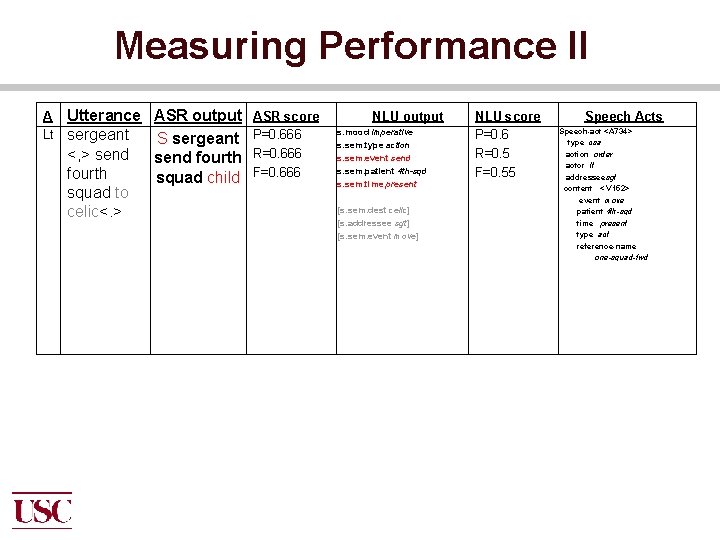

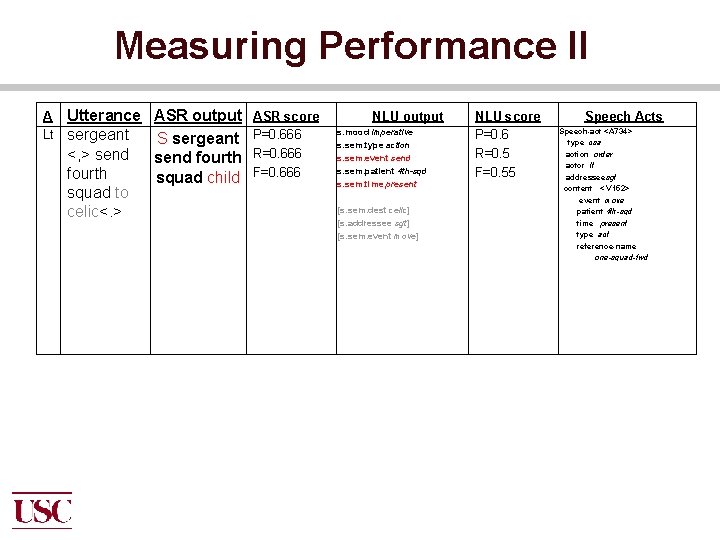

Measuring Performance II

- Lt utterance and ASR output (overlaid on the slide): sergeant S sergeant <,> send fourth squad child squad to celic <.>
- ASR score: P=0.666, R=0.666, F=0.666
- NLU output: s.mood imperative; s.sem.type action; s.sem.event send; s.sem.patient 4th-sqd; s.sem.time present; [s.sem.dest celic]; [s.addressee sgt]; [s.sem.event move]
- NLU score: P=0.6, R=0.5, F=0.55
- Speech acts: speech-act <A734>; type csa; action order; actor lt; addressee sgt; content <V152>; event move; patient 4th-sqd; time present; type act; reference-name one-squad-fwd

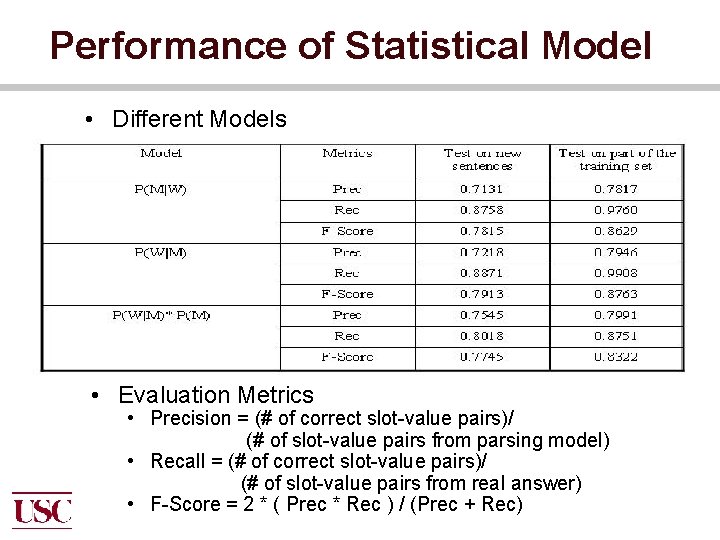

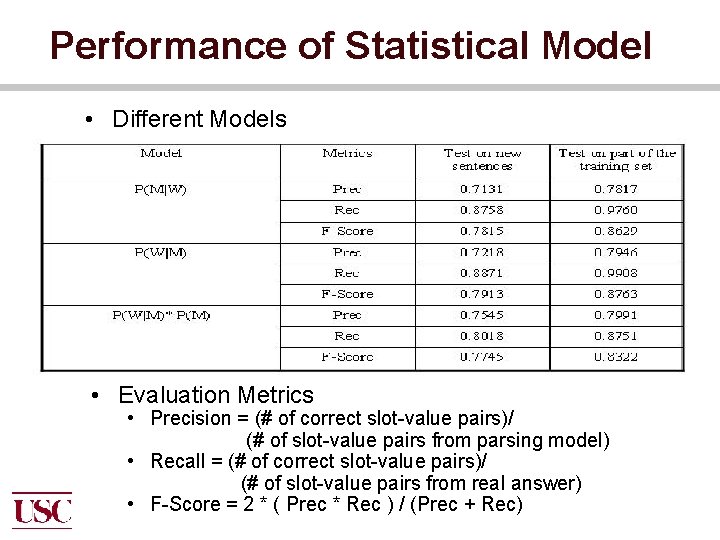

Performance of Statistical Model

- Different models
- Evaluation metrics
  - Precision = (# of correct slot-value pairs) / (# of slot-value pairs from parsing model)
  - Recall = (# of correct slot-value pairs) / (# of slot-value pairs from real answer)
  - F-Score = 2 * (Prec * Rec) / (Prec + Rec)
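The three metrics are straightforward to compute over sets of slot-value pairs. In the sketch below, the `found` set copies the NLU output from the Measuring Performance I slide, while the `reference` set is an assumed gold answer chosen to match that slide's counts of three pairs found, two correct and six expected. `prf` is a hypothetical helper.

```python
def prf(found, reference):
    """Precision, recall and F-score over sets of slot-value pairs."""
    correct = len(found & reference)
    precision = correct / len(found) if found else 0.0
    recall = correct / len(reference) if reference else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    return precision, recall, 2 * precision * recall / (precision + recall)

found = {"addressee sgt", "sem.type event", "sem.loc area"}
# Assumed gold answer for illustration only.
reference = {
    "addressee sgt", "sem.type event", "sem.time present",
    "sem.patient area", "sem.event secure", "mood imperative",
}

p, r, f = prf(found, reference)
print(f"P={p:.3f} R={r:.3f} F={f:.3f}")
```

Under these assumptions the values agree with the slide's P=0.666, R=0.333, F=0.444 up to rounding.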

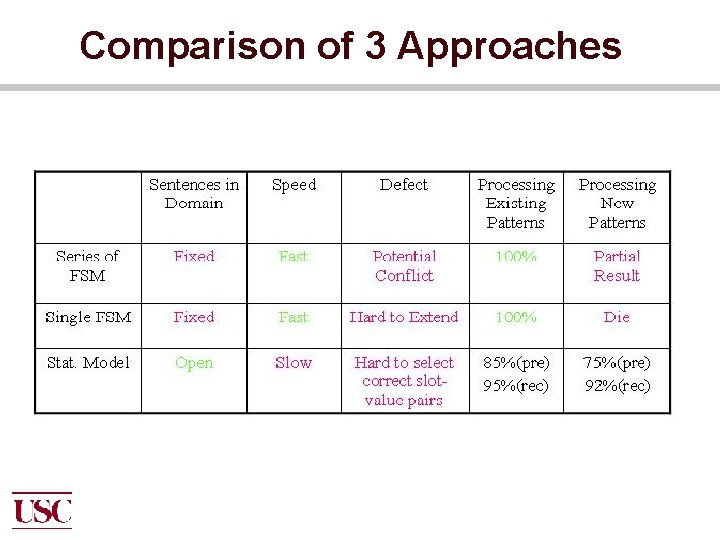

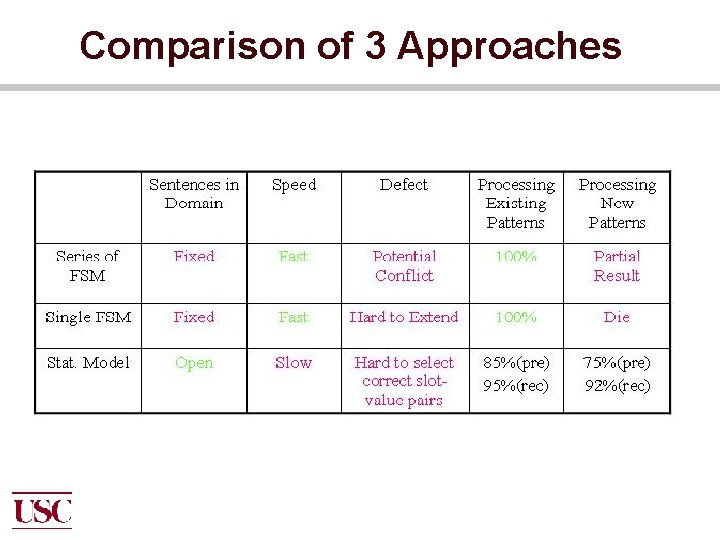

Comparison of 3 Approaches

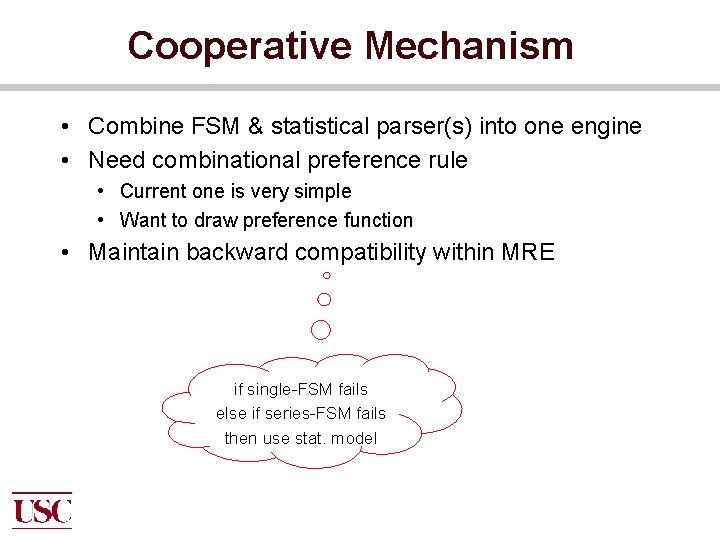

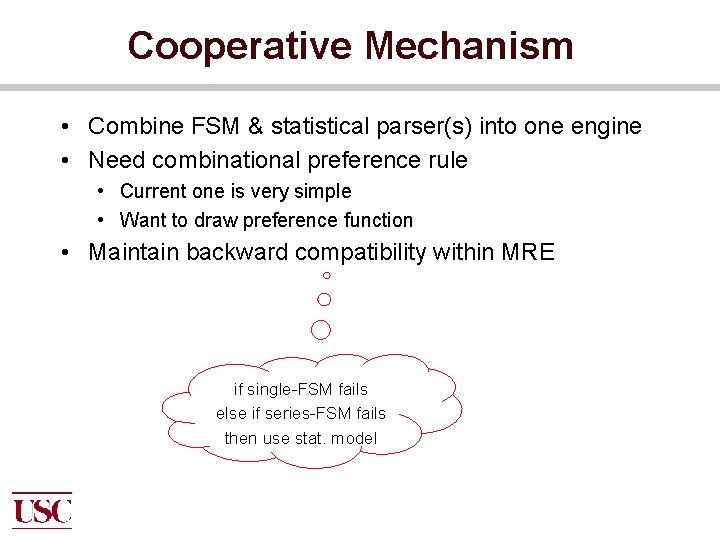

Cooperative Mechanism

- Combine FSM & statistical parser(s) into one engine
- Need a combinational preference rule
  - Current one is very simple
  - Want to draw up a preference function
- Maintain backward compatibility within MRE
- Current rule: if the single FSM fails, fall back to the series of FSMs; if the series of FSMs also fails, use the statistical model
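Read as a fallback cascade, the current preference rule might look like the sketch below. The three engine functions are stubs invented for illustration, and treating an empty or missing frame as "failure" is an assumption, since the slides do not define when an engine fails.

```python
def single_fsm(sentence):
    """Stub for the sentence-level FSM: only knows scripted sentences."""
    scripted = {"who is driving the car": {"name": "drive", "patient": "car"}}
    return scripted.get(sentence)

def series_fsm(sentence):
    """Stub for the word-level filters: spots individual known words."""
    frame = {}
    if "car" in sentence.split():
        frame["patient"] = "car"
    return frame or None

def stat_model(sentence):
    """Stub for the statistical parser: always returns some best guess."""
    return {"type": "event-type"}

ENGINES = [("single-FSM", single_fsm), ("series-FSM", series_fsm), ("stat-model", stat_model)]

def parse(sentence, engines=ENGINES):
    """Try each engine in preference order; the first non-empty frame wins."""
    for name, engine in engines:
        frame = engine(sentence)
        if frame:  # None or an empty frame counts as failure (assumption)
            return name, frame
    return None, None

for s in ["who is driving the car", "the car", "hello"]:
    print(s, "->", parse(s))
```

A learned preference function would replace the fixed ordering, for example by comparing engine confidences instead of taking the first engine that answers.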

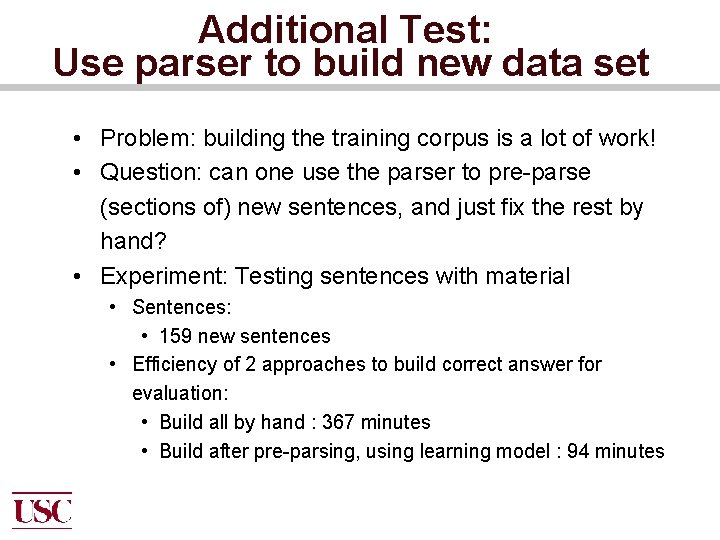

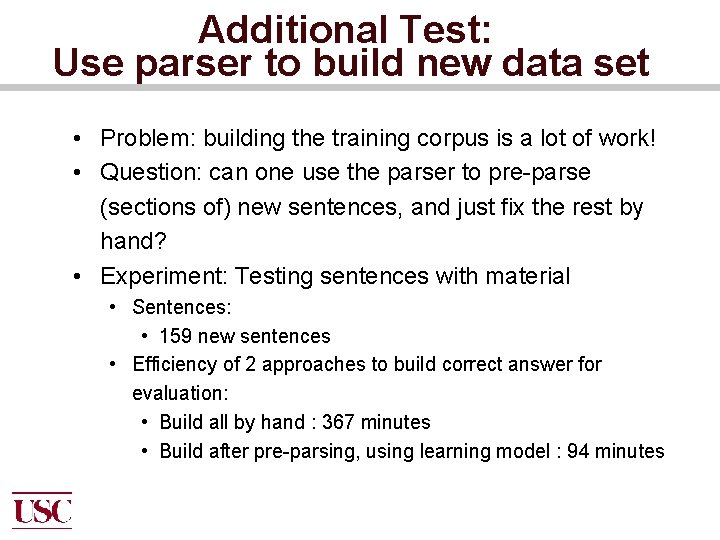

Additional Test: Use parser to build new data set

- Problem: building the training corpus is a lot of work!
- Question: can one use the parser to pre-parse (sections of) new sentences, and just fix the rest by hand?
- Experiment: testing sentences with material
  - Sentences: 159 new sentences
  - Efficiency of 2 approaches to building the correct answers for evaluation:
    - Build all by hand: 367 minutes
    - Build after pre-parsing, using the learning model: 94 minutes (about 74% less time)

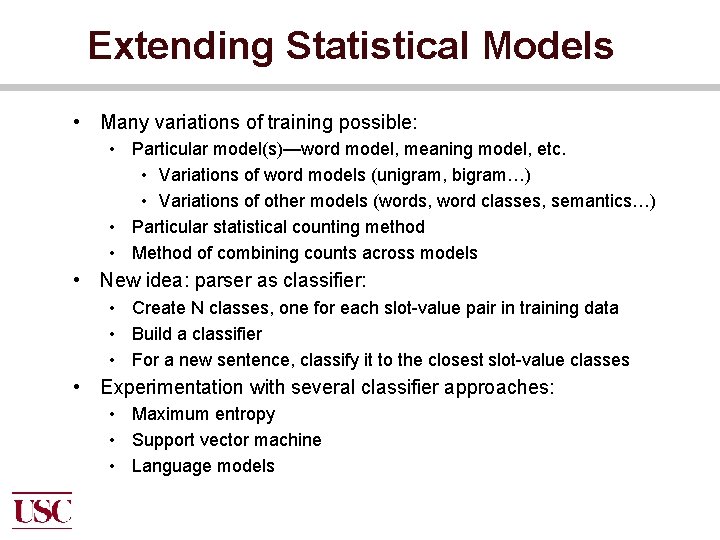

Extending Statistical Models

- Many variations of training possible:
  - Particular model(s): word model, meaning model, etc.
  - Variations of word models (unigram, bigram…)
  - Variations of other models (words, word classes, semantics…)
  - Particular statistical counting method
  - Method of combining counts across models
- New idea: parser as classifier:
  - Create N classes, one for each slot-value pair in training data
  - Build a classifier
  - For a new sentence, classify it to the closest slot-value classes
- Experimentation with several classifier approaches:
  - Maximum entropy
  - Support vector machine
  - Language models

Classifier Parsing Method 1

- Maximum Entropy
  - Treat the parsing problem as ranking using a classifier
  - Treat each slot-value pair occurring in the training data as a class
  - Treat the unigrams, bigrams and trigrams occurring in the input sentences as the classifier features and their corresponding tf*idf as the feature values
  - Generate a ranked list of slot-value pairs using the classifier
  - Select the top-n slot-value pairs based on a cutoff and generate the final frame

Classifier Parsing Method 2

- Support Vector Machine
  - Problem formulation is the same as for the Maximum Entropy method

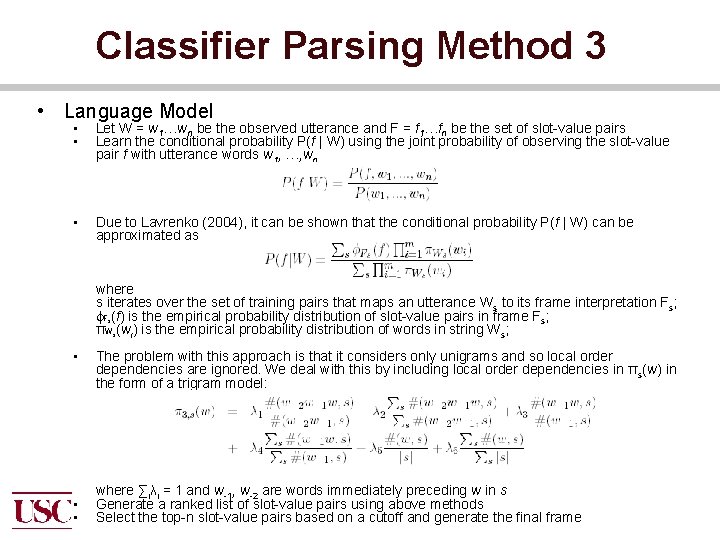

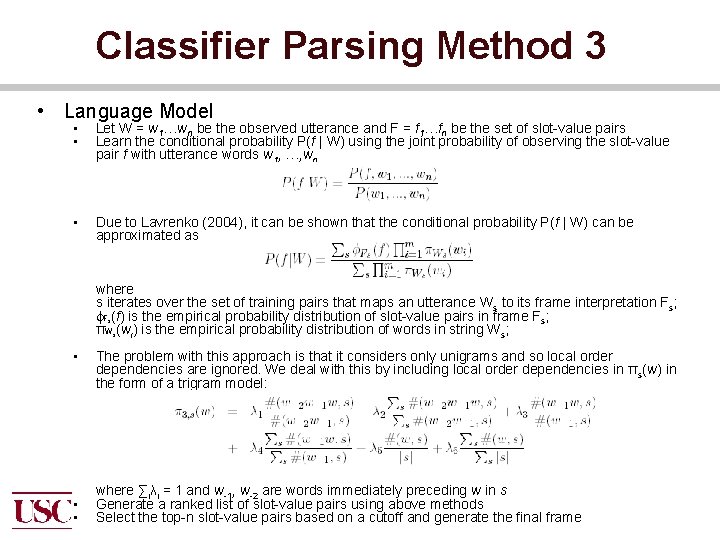

Classifier Parsing Method 3

- Language Model
  - Let W = w1…wn be the observed utterance and F = f1…fn be the set of slot-value pairs
  - Learn the conditional probability P(f | W) using the joint probability of observing the slot-value pair f with utterance words w1, …, wn
  - Due to Lavrenko (2004), it can be shown that the conditional probability P(f | W) can be approximated by an expression (shown as an image on the slide) in which:
    - s iterates over the set of training pairs that map an utterance Ws to its frame interpretation Fs
    - Fs(f) is the empirical probability distribution of slot-value pairs in frame Fs
    - πWs(wi) is the empirical probability distribution of words in string Ws
  - The problem with this approach is that it considers only unigrams, so local order dependencies are ignored. We deal with this by including local order dependencies in πs(w) in the form of a trigram model, where ∑i λi = 1 and w-1, w-2 are the words immediately preceding w in s
  - Generate a ranked list of slot-value pairs using the above methods
  - Select the top-n slot-value pairs based on a cutoff and generate the final frame
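The two formulas on this slide did not survive as text. The block below is a reconstruction from the definitions given, not a copy of the slide: the first line is a relevance-model form consistent with "s iterates over the training pairs", and the second is one common way to write a linearly interpolated trigram model. The hatted terms (relative-frequency estimates within string s) are notation introduced here.

```latex
% Assumed reconstruction; the symbols F_s, \pi_{W_s}, \lambda_i follow the slide text.
P(f \mid W) \;\approx\;
  \frac{\sum_{s} F_s(f) \, \prod_{i=1}^{n} \pi_{W_s}(w_i)}
       {\sum_{s} \prod_{i=1}^{n} \pi_{W_s}(w_i)}

\pi_s(w) \;=\; \lambda_1 \, \hat{\pi}_s(w)
         \;+\; \lambda_2 \, \hat{\pi}_s(w \mid w_{-1})
         \;+\; \lambda_3 \, \hat{\pi}_s(w \mid w_{-2} w_{-1}),
\qquad \sum_i \lambda_i = 1
```

Read this way, each training utterance votes for its own slot-value pairs with a weight equal to how well its word distribution explains the new utterance.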

Experimental Setup

- Train on 477 sentence-frame pairs
- Perform 4-fold cross-validation on the training data to set the cutoffs for each of the methods
- Test on 50 unseen sentence-frame pairs
- Compare the systems based on their Precision, Recall and F-scores

Classifier Results

- Language models perform the best, but scores for all the methods are very close
- A paired two-tailed t-test shows no statistically significant difference in the performance obtained by any of the methods

Output Processing: More Cascading

- Content planning:
  - Multiple communication goals from dialogue state
  - Action selection: arbitration using preference rules
- Sentence planning:
  - Input: utterance goals from Dialogue Manager
  - Technology: rep transformation rules (built by hand)
- Sentence generation:
  - Technology: cascaded grammar expansion (grammar built by hand; approx. 150 rules)
  - Word and phrase choice sensitive to emotional connotations
- Speech synthesis:
  - Method: assembly and smoothing of individual phonemes
  - Technology: FESTIVAL/Festvox system (from Edinburgh & CMU)
- Gesture control:
  - Technology: BEAT system (from MIT) to coordinate timing of speech, mouth movements, and hand gestures

Generating Language

- Approach:
  - Previous work (MRE): used a phrasal expansion generator: start with rep frame, choose sentence pattern, and fill in and expand each part
  - Current work (SASO): building a new statistical generator with a reversible parser-generator architecture; re-use NLU material
  - Work in progress; performance level ~80%
  - Plan to add controls to generate inflection and gestures; this would be the first time this has been done
- Major additional task: building supporting resources:
  - Lexicon: 1,204 lexical items
  - Ontology: approx. 150 concepts and relations

Natural Language Generation

- Function: convert semantic representation into English text string
- Old generator: produces just one variation per input
- New generator: produces different variations for a given input, depending on the speaker’s emotional stance:
  - “They crashed into us”
  - “There was an accident”
  - “They rammed into us”
  - “We bumped into them”
- Sentence planner (under construction): plans cross-sentence connections (pronouns, discourse links, etc.)

Emotion-Based Generation

- Two basic components:
  - Speaker’s emotional desire/stance (positive or negative toward mother/driver), obtained from the Emotion Module
  - Default affective connotations of words (“smash”/“bump”) and sentence forms / positions (agent of a smash is Bad)
- Research developed a new, more powerful way to compute the maximally effective sentence(s) to satisfy all stance constraints:
  - Euclidean distance measure between the speaker’s desire and all candidate words/sentences
  - Details in (Fleischman and Hovy, 2002)
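A minimal sketch of the distance-based selection, assuming stance and connotation are both encoded as two-dimensional vectors (attitude toward the speaker's side, attitude toward the other driver). The numeric values, the vector encoding and `best_sentence` are invented for illustration and are not taken from Fleischman and Hovy's model. `math.dist` requires Python 3.8 or later.

```python
import math

# Hypothetical affect vectors: (toward "us", toward "them"), each in [-1, 1].
CANDIDATES = {
    "They rammed into us": (0.5, -1.0),
    "They crashed into us": (0.2, -0.6),
    "There was an accident": (0.0, 0.0),
    "We bumped into them": (-0.2, 0.3),
}

def best_sentence(stance, candidates=CANDIDATES):
    """Pick the candidate whose connotation vector is closest to the desired stance."""
    return min(candidates, key=lambda s: math.dist(stance, candidates[s]))

print(best_sentence((0.4, -0.9)))  # strongly negative toward the other driver
print(best_sentence((0.0, 0.0)))   # neutral stance
```

The same comparison could be applied per word rather than per sentence, with the sentence score aggregated from its parts.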