Natural Language Processing: Finite State Transducers (CSA 3180 NLP, October 2006)

Acknowledgement
• Material in this lecture derived/copied from
 – Richard Sproat CL 46 Lectures
 – Lauri Karttunen LSA lectures 2005

Three Key Concepts
• Regular Relations
• Finite State Transducers
• Computational Morphology

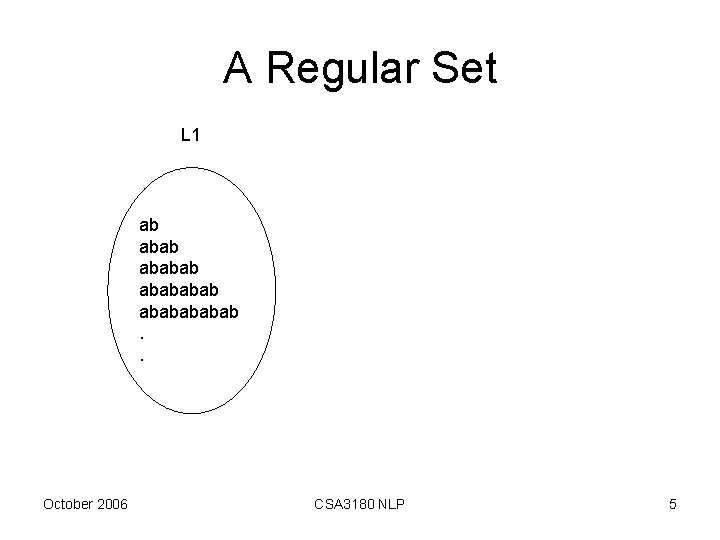

A Regular Set
L1 = { ab, ababababab, ... }

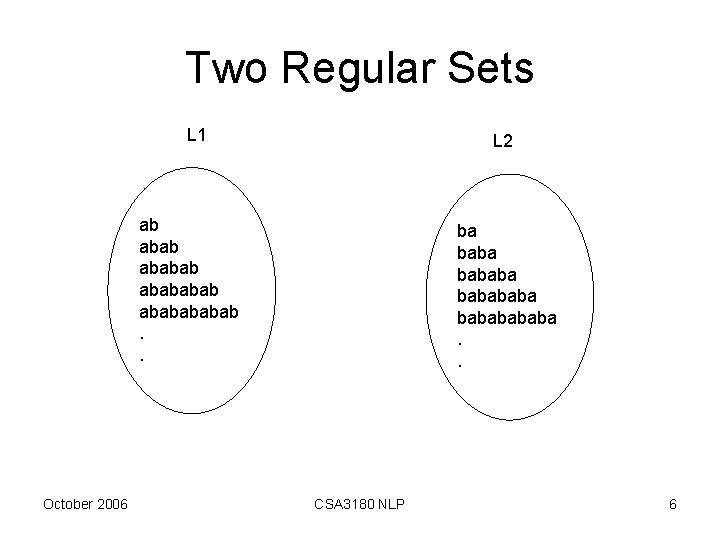

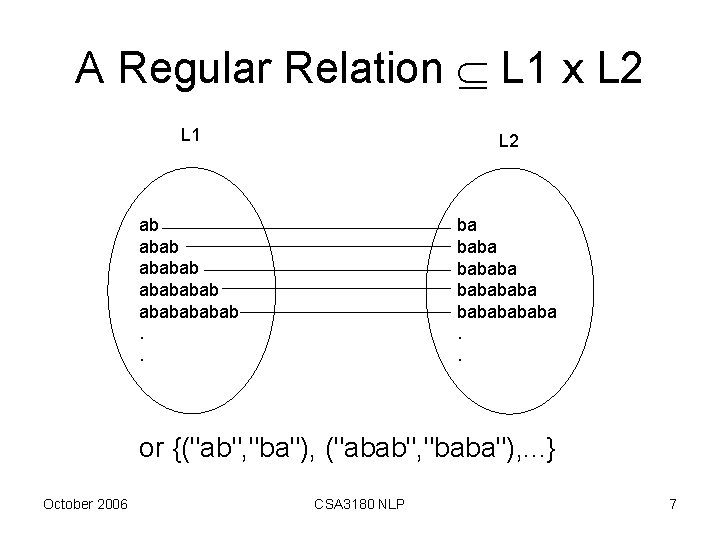

Two Regular Sets
L1 = { ab, ababababab, ... }
L2 = { ba, bababababa, ... }

A Regular Relation
A set of string pairs drawn from L1 x L2, where L1 = { ab, ababababab, ... } and L2 = { ba, bababababa, ... },
or {("ab", "ba"), ("abab", "baba"), ...}

Some closure properties for regular relations
• Concatenation [R1 R2]
• Power (R^n)
• Reversal
• Inversion (R^-1)
• Composition: R1 ○ R2

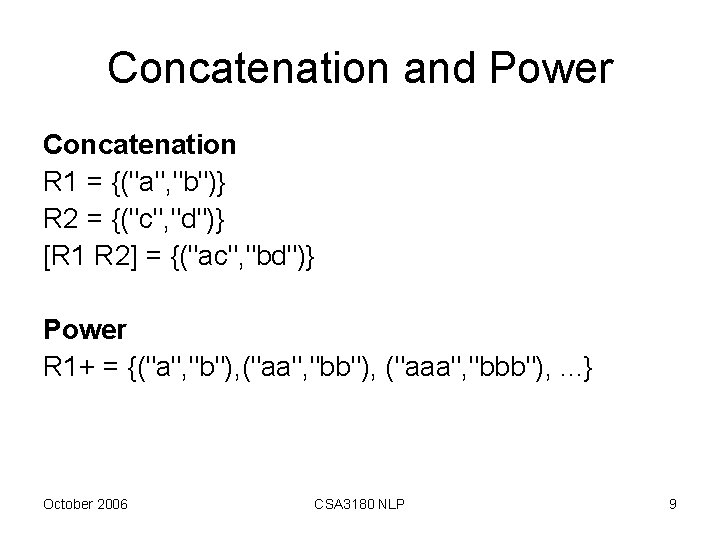

Concatenation and Power
• Concatenation
 – R1 = {("a", "b")}
 – R2 = {("c", "d")}
 – [R1 R2] = {("ac", "bd")}
• Power
 – R1+ = {("a", "b"), ("aa", "bb"), ("aaa", "bbb"), ...}
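The two operations above can be tried out on finite relations. The following is a minimal sketch, assuming a relation is represented as a Python set of string pairs; the helper names `concat` and `power_up_to` are illustrative only, and since R1+ is infinite the sketch enumerates just its first few powers.

```python
from itertools import product

def concat(r1, r2):
    """Concatenate two relations pairwise: (x1, y1), (x2, y2) -> (x1 x2, y1 y2)."""
    return {(x1 + x2, y1 + y2) for (x1, y1), (x2, y2) in product(r1, r2)}

def power_up_to(r, n):
    """Union of r^1 .. r^n, a finite slice of the infinite relation r+."""
    result, current = set(r), set(r)
    for _ in range(n - 1):
        current = concat(current, r)
        result |= current
    return result

R1 = {("a", "b")}
R2 = {("c", "d")}

print(concat(R1, R2))
print(sorted(power_up_to(R1, 3)))
```

Concatenation works on both sides of every pair at once, which is why ("a", "b") followed by ("c", "d") gives ("ac", "bd") rather than mixing the two sides.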

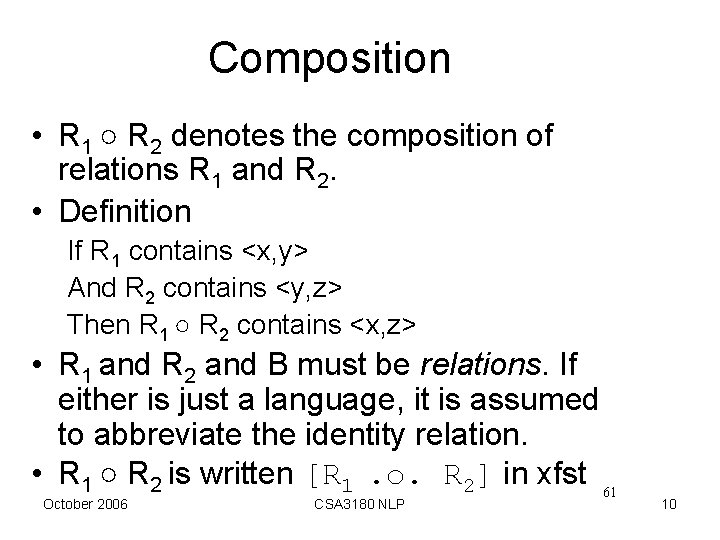

Composition
• R1 ○ R2 denotes the composition of relations R1 and R2.
• Definition: if R1 contains <x, y> and R2 contains <y, z>, then R1 ○ R2 contains <x, z>.
• R1 and R2 must be relations. If either is just a language, it is assumed to abbreviate the identity relation.
• R1 ○ R2 is written [R1 .o. R2] in xfst.
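The definition of composition translates almost word for word into code. This is a sketch over finite relations held as sets of pairs; `compose` and `identity` are hypothetical helper names, and the sample relations are made up for illustration.

```python
def compose(r1, r2):
    """R1 o R2: contains (x, z) whenever R1 has (x, y) and R2 has (y, z)."""
    return {(x, z) for (x, y1) in r1 for (y2, z) in r2 if y1 == y2}

def identity(language):
    """A plain language abbreviates the identity relation on its strings."""
    return {(w, w) for w in language}

R1 = {("ab", "ba"), ("abab", "baba")}
R2 = {("ba", "BA"), ("xx", "yy")}

print(compose(R1, R2))
print(compose(identity({"ab"}), R1))
```

Composing with the identity relation of a language acts as a filter: only the pairs whose first member is in the language survive.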

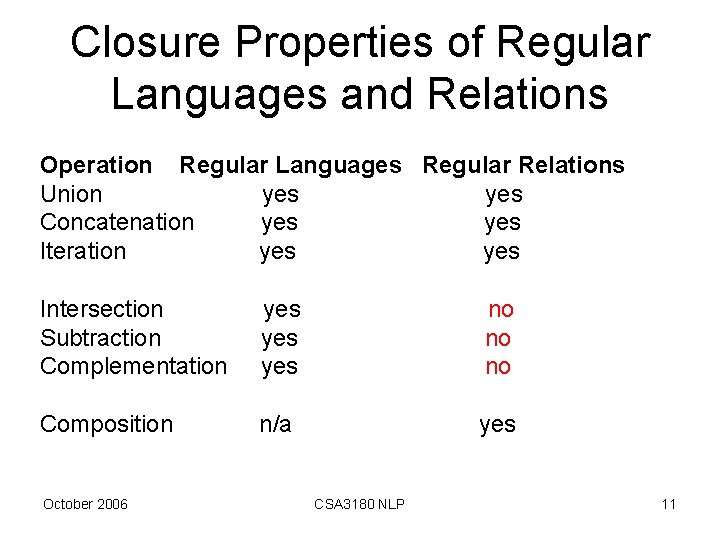

Closure Properties of Regular Languages and Relations

| Operation | Regular Languages | Regular Relations |
| --- | --- | --- |
| Union | yes | yes |
| Concatenation | yes | yes |
| Iteration | yes | yes |
| Intersection | yes | no |
| Subtraction | yes | no |
| Complementation | yes | no |
| Composition | n/a | yes |

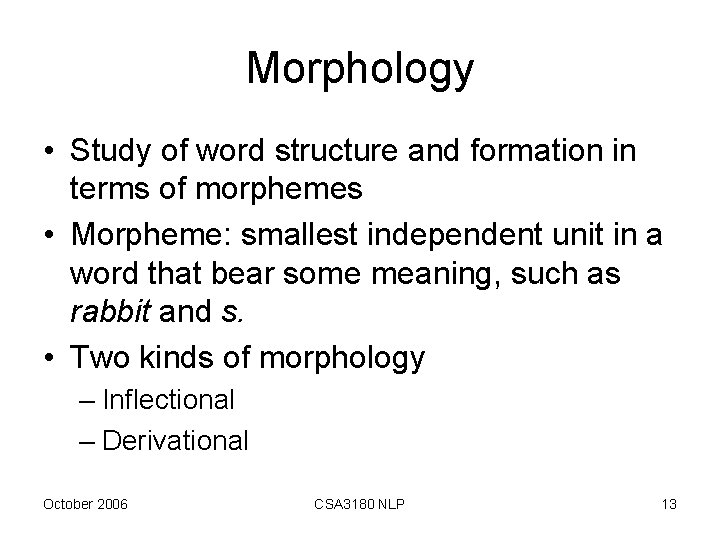

Morphology
• Study of word structure and formation in terms of morphemes
• Morpheme: smallest independent unit in a word that bears some meaning, such as rabbit and s.
• Two kinds of morphology
 – Inflectional
 – Derivational

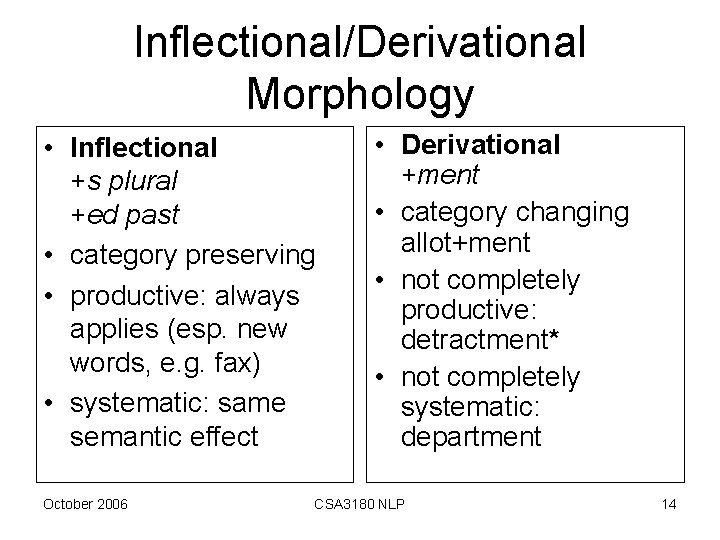

Inflectional/Derivational Morphology
• Inflectional: +s plural, +ed past
 – category preserving
 – productive: always applies (esp. new words, e.g. fax)
 – systematic: same semantic effect
• Derivational: +ment
 – category changing: allot+ment
 – not completely productive: detractment*
 – not completely systematic: department

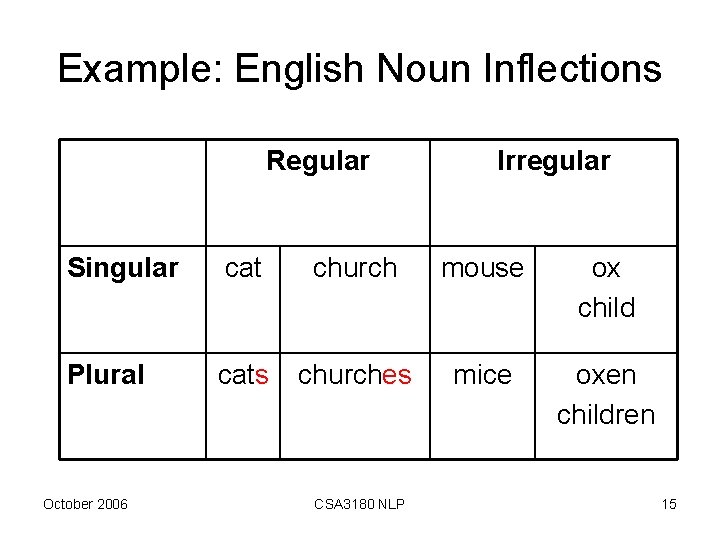

Example: English Noun Inflections

| | Regular | Irregular |
| --- | --- | --- |
| Singular | cat, church | mouse, ox, child |
| Plural | cats, churches | mice, oxen, children |

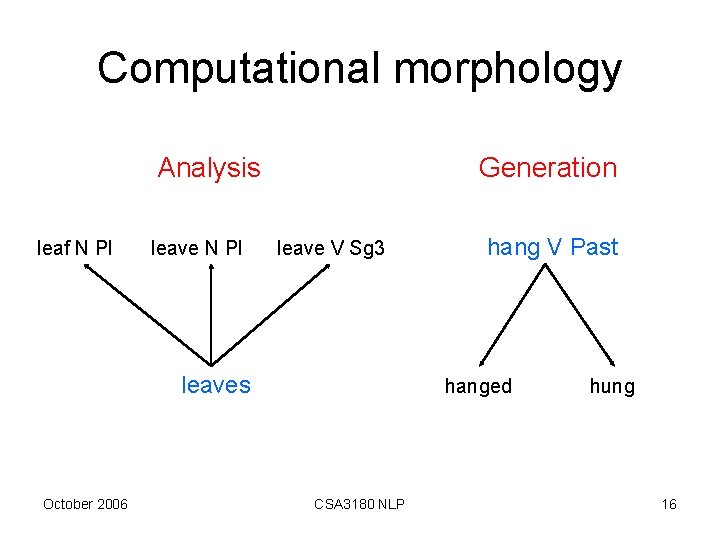

Computational morphology
• Analysis: leaves → leaf N Pl, leave N Pl, leave V Sg3
• Generation: hang V Past → hanged, hung

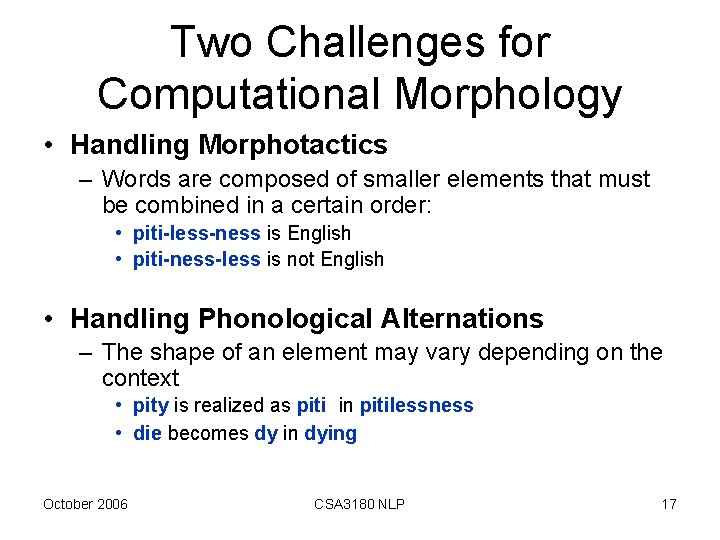

Two Challenges for Computational Morphology
• Handling Morphotactics
 – Words are composed of smaller elements that must be combined in a certain order:
   piti-less-ness is English
   piti-ness-less is not English
• Handling Phonological Alternations
 – The shape of an element may vary depending on the context:
   pity is realized as piti in pitilessness
   die becomes dy in dying

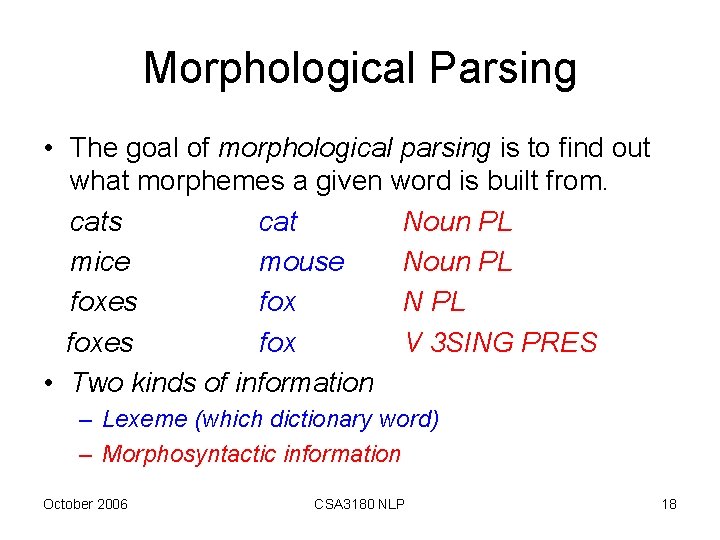

Morphological Parsing
• The goal of morphological parsing is to find out what morphemes a given word is built from.
 – cats → cat Noun PL
 – mice → mouse Noun PL
 – foxes → fox N PL
 – foxes → fox V 3SING PRES
• Two kinds of information
 – Lexeme (which dictionary word)
 – Morphosyntactic information

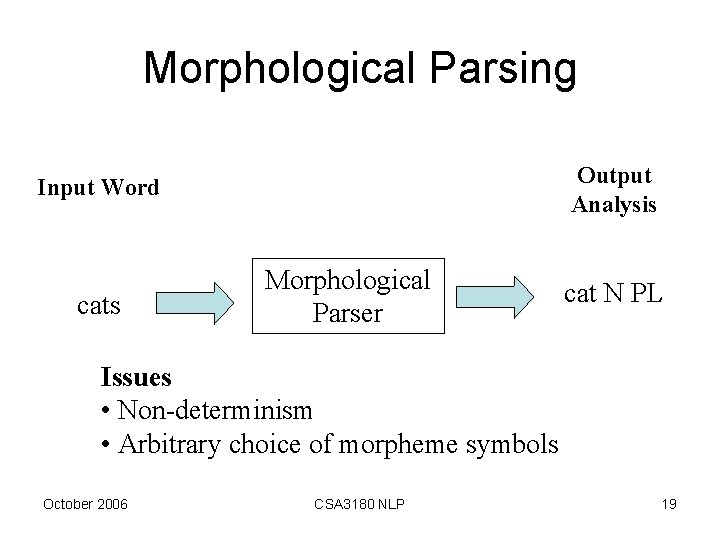

Morphological Parsing
Input word: cats → Morphological Parser → Output analysis: cat N PL
Issues
• Non-determinism
• Arbitrary choice of morpheme symbols

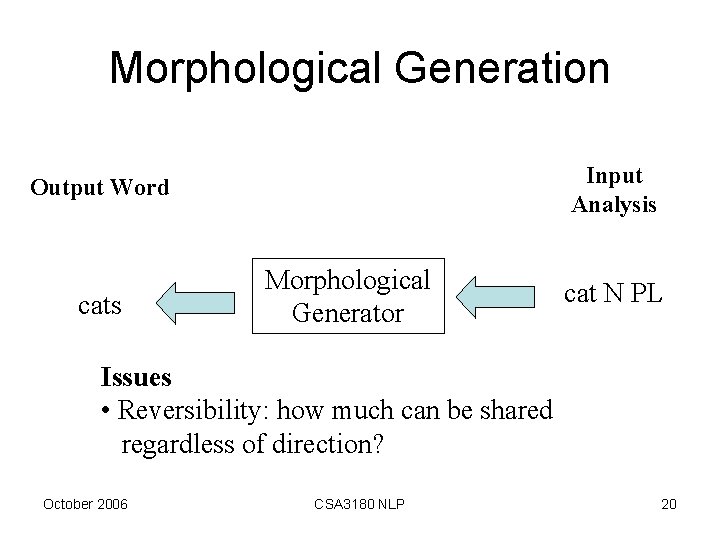

Morphological Generation
Input analysis: cat N PL → Morphological Generator → Output word: cats
Issues
• Reversibility: how much can be shared regardless of direction?

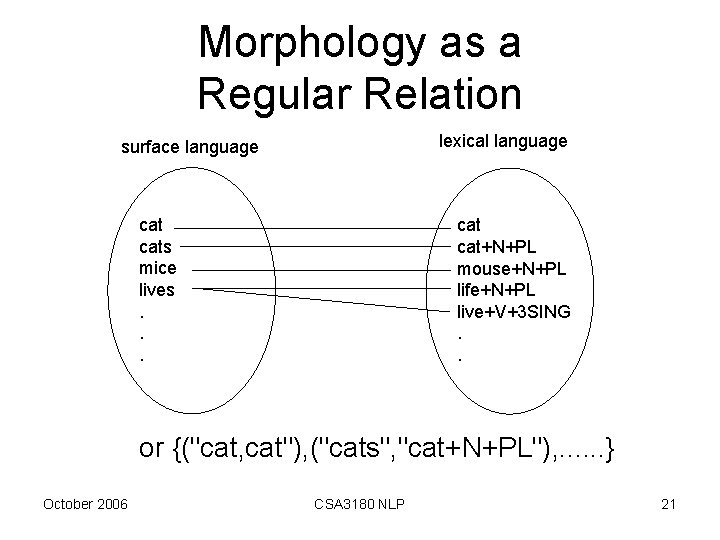

Morphology as a Regular Relation
• surface language: cats, mice, lives, ...
• lexical language: cat+N+PL, mouse+N+PL, life+N+PL, live+V+3SING, ...
or {("cat", "cat"), ("cats", "cat+N+PL"), ...}
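Treating morphology as a relation means analysis and generation are the same data read in opposite directions. The sketch below assumes a tiny hand-listed relation built from the surface and lexical forms on this slide; the function names `invert` and `apply` are illustrative.

```python
# (surface form, lexical form) pairs, following the pair order used on the slide.
RELATION = {
    ("cats", "cat+N+PL"),
    ("mice", "mouse+N+PL"),
    ("lives", "life+N+PL"),
    ("lives", "live+V+3SING"),
}

def invert(relation):
    """Swap the two sides of every pair (the inverse relation)."""
    return {(y, x) for (x, y) in relation}

def apply(relation, word):
    """Return every string the relation pairs with the given word."""
    return sorted(y for (x, y) in relation if x == word)

print(apply(RELATION, "lives"))               # analysis, non-deterministic
print(apply(invert(RELATION), "mouse+N+PL"))  # generation
```

The two analyses returned for "lives" are an instance of the non-determinism listed among the parsing issues.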

FSA
[Figure: automaton with a single arc labelled a]
Used for
• Recognition
• Generation

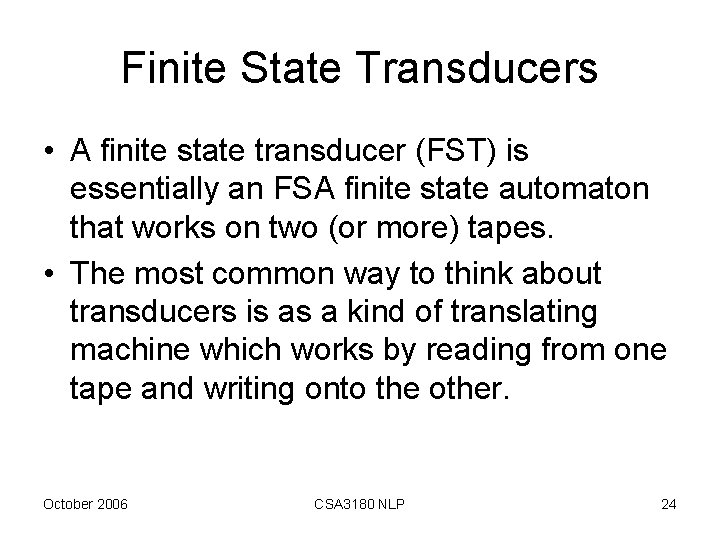

Finite State Transducers
• A finite state transducer (FST) is essentially a finite state automaton (FSA) that works on two (or more) tapes.
• The most common way to think about transducers is as a kind of translating machine which works by reading from one tape and writing onto the other.

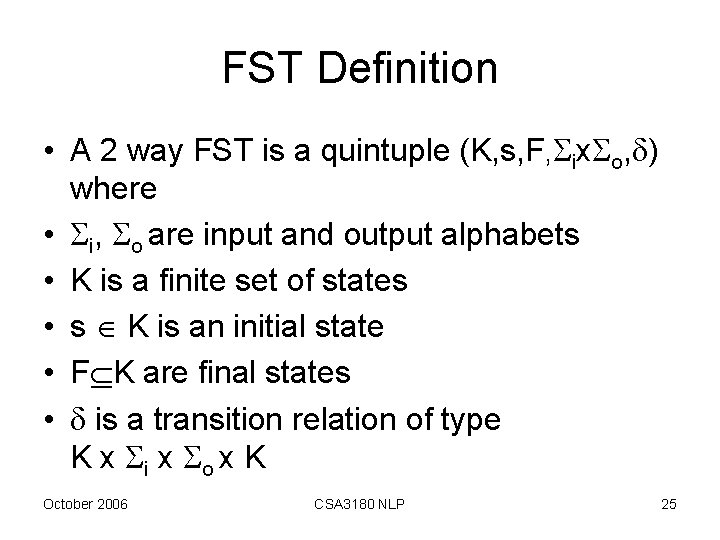

FST Definition
• A 2-way FST is a quintuple $(K, s, F, \Sigma_i \times \Sigma_o, \delta)$ where
• $\Sigma_i$, $\Sigma_o$ are input and output alphabets
• $K$ is a finite set of states
• $s \in K$ is an initial state
• $F \subseteq K$ are final states
• $\delta$ is a transition relation of type $K \times \Sigma_i \times \Sigma_o \times K$
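The quintuple can be written down directly as a data structure. The following is a minimal sketch, assuming string-named states and single-character symbols with no epsilon arcs; the class `FST` and the function `relates` are hypothetical names, not part of any library.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class FST:
    states: frozenset       # K
    start: str              # s, a member of K
    finals: frozenset       # F, a subset of K
    transitions: frozenset  # tuples (source, input_symbol, output_symbol, target)

def relates(fst, upper, lower):
    """True if the FST accepts the pair (upper, lower).

    Without epsilon arcs every transition consumes one symbol on each tape,
    so the two strings must have the same length.
    """
    if len(upper) != len(lower):
        return False
    current = {fst.start}
    for i, o in zip(upper, lower):
        current = {t for (s, a, b, t) in fst.transitions
                   if s in current and a == i and b == o}
    return bool(current & fst.finals)

# The one-arc transducer for the relation {("a", "b")}.
simple = FST(
    states=frozenset({"q0", "q1"}),
    start="q0",
    finals=frozenset({"q1"}),
    transitions=frozenset({("q0", "a", "b", "q1")}),
)

print(relates(simple, "a", "b"))
print(relates(simple, "a", "a"))
```

Keeping a set of current states makes the same function work for non-deterministic transducers as well.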

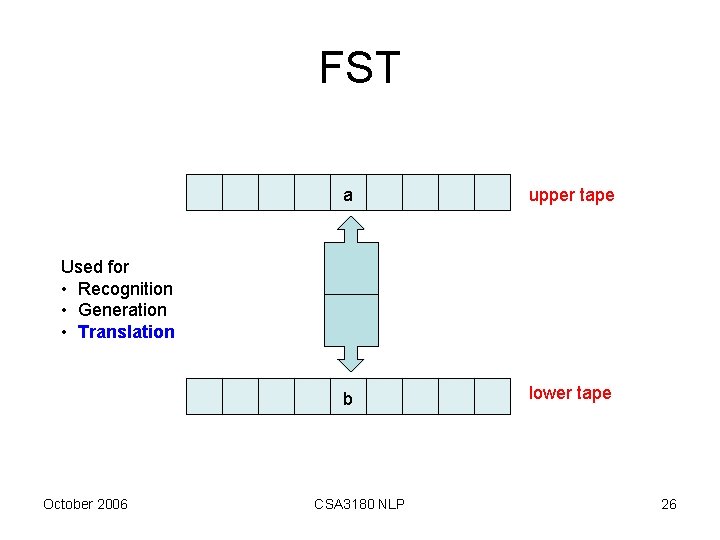

FST
[Figure: transducer with a single arc, a on the upper tape and b on the lower tape]
Used for
• Recognition
• Generation
• Translation

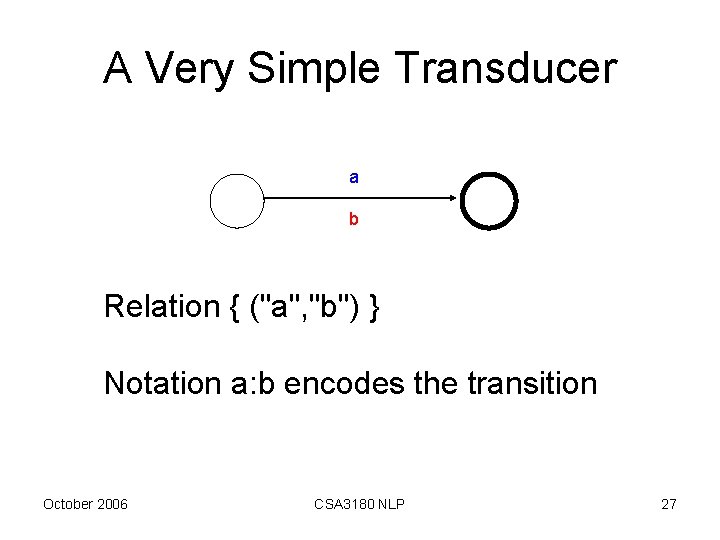

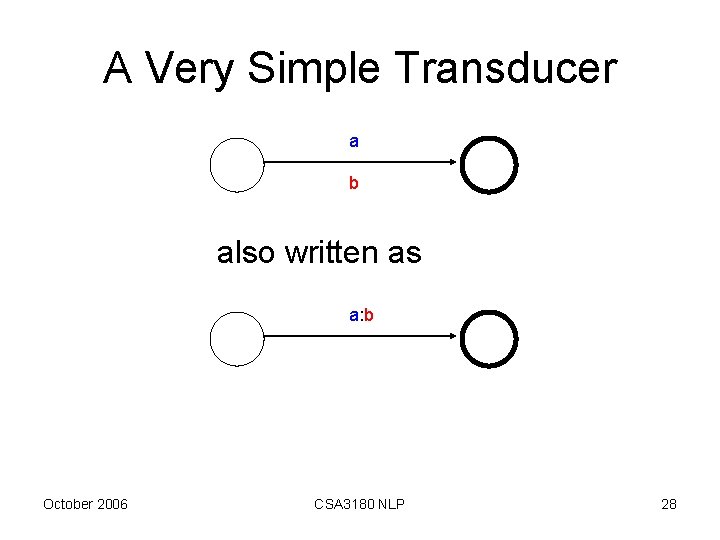

A Very Simple Transducer
• Relation: { ("a", "b") }
• Notation: a:b encodes the transition with a on the upper side and b on the lower side

A Very Simple Transducer
• The arc with a above b is also written as a:b

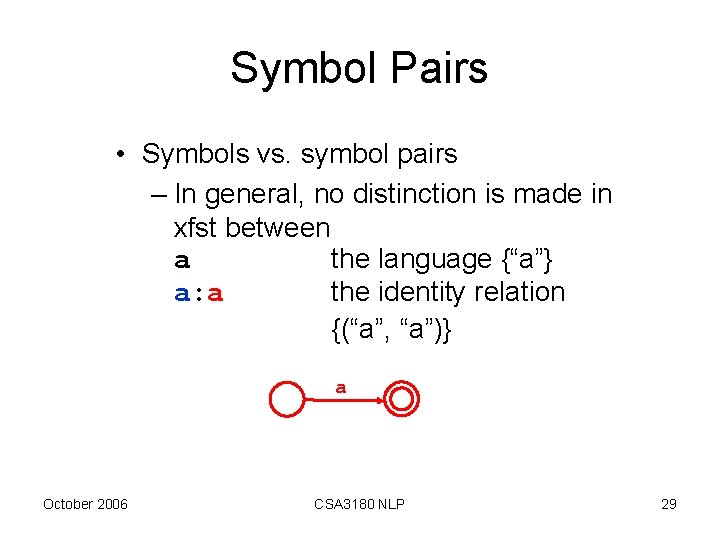

Symbol Pairs
• Symbols vs. symbol pairs
 – In general, no distinction is made in xfst between
   a, the language {"a"}
   a:a, the identity relation {("a", "a")}

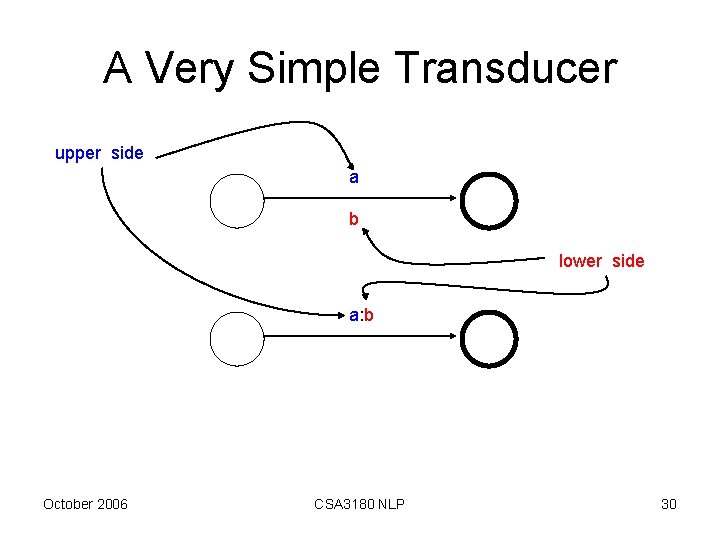

A Very Simple Transducer
• upper side: a
• lower side: b
• written a:b

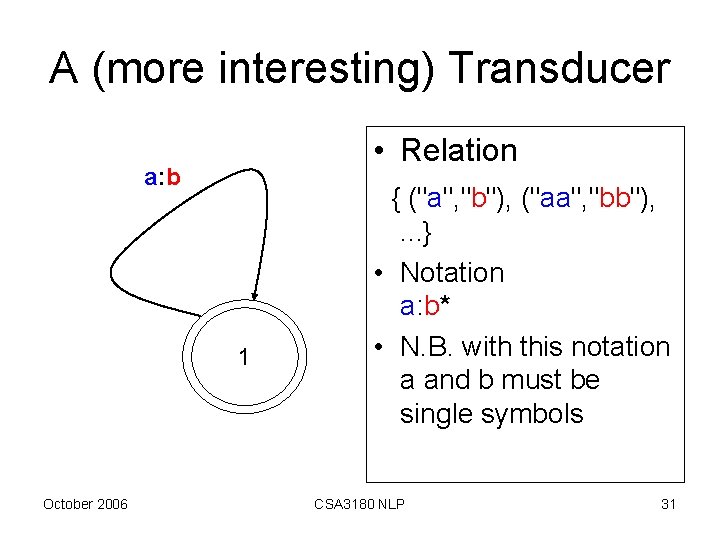

A (more interesting) Transducer
• Relation: { ("a", "b"), ("aa", "bb"), ... }
• Notation: a:b*
• N.B. with this notation a and b must be single symbols

Transducers Have Several Modes of Operation
• generation mode: It writes on both tapes: a string of a's on one tape and a string of b's on the other. Both strings have the same length.
• recognition mode: It accepts when the word on the first tape consists of exactly as many a's as the word on the second tape has b's.
• translation mode (left to right): It reads a's from the first tape and writes a b onto the second tape for every a that it reads.
• translation mode (right to left): It reads b's from the second tape and writes an a onto the first tape for every b that it reads.
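All four modes can be run over one and the same transition table. The following is a rough sketch for the a:b* transducer, assuming a single state q0 that is both initial and final (so the empty pair is accepted too); `translate`, `recognize` and `generate` are illustrative names.

```python
# One looping arc: read/write a on the upper tape, b on the lower tape.
AB_STAR = {("q0", "a", "b", "q0")}
START, FINALS = "q0", {"q0"}

def translate(text, direction="down"):
    """Translation mode: 'down' reads the upper tape, 'up' reads the lower tape."""
    configs = {(START, "")}
    for sym in text:
        nxt = set()
        for state, out in configs:
            for (s, up, low, t) in AB_STAR:
                read, write = (up, low) if direction == "down" else (low, up)
                if s == state and read == sym:
                    nxt.add((t, out + write))
        configs = nxt
    return {out for state, out in configs if state in FINALS}

def recognize(upper, lower):
    """Recognition mode: accept the pair if lower is a translation of upper."""
    return lower in translate(upper, "down")

def generate(max_len):
    """Generation mode: enumerate accepted pairs up to the given length."""
    pairs, frontier = set(), {(START, "", "")}
    for _ in range(max_len + 1):
        pairs |= {(u, l) for (q, u, l) in frontier if q in FINALS}
        frontier = {(t, u + up, l + low)
                    for (q, u, l) in frontier
                    for (s, up, low, t) in AB_STAR if s == q}
    return pairs

print(translate("aaa"))       # left to right
print(translate("bb", "up"))  # right to left
print(recognize("aa", "bb"))
print(sorted(generate(2)))
```

Nothing in the table changes between modes; only the choice of which tape is read and which is written differs.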

The Basic Idea
• Morphology is regular
• Morphology is finite state

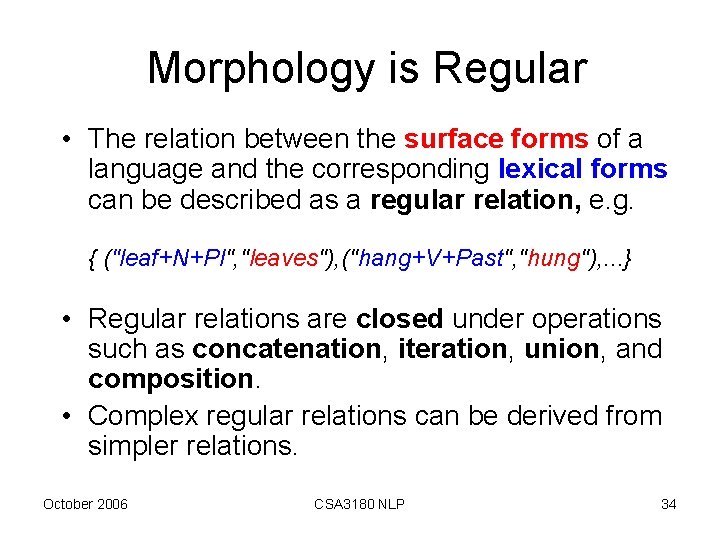

Morphology is Regular
• The relation between the surface forms of a language and the corresponding lexical forms can be described as a regular relation, e.g. { ("leaf+N+Pl", "leaves"), ("hang+V+Past", "hung"), ... }
• Regular relations are closed under operations such as concatenation, iteration, union, and composition.
• Complex regular relations can be derived from simpler relations.

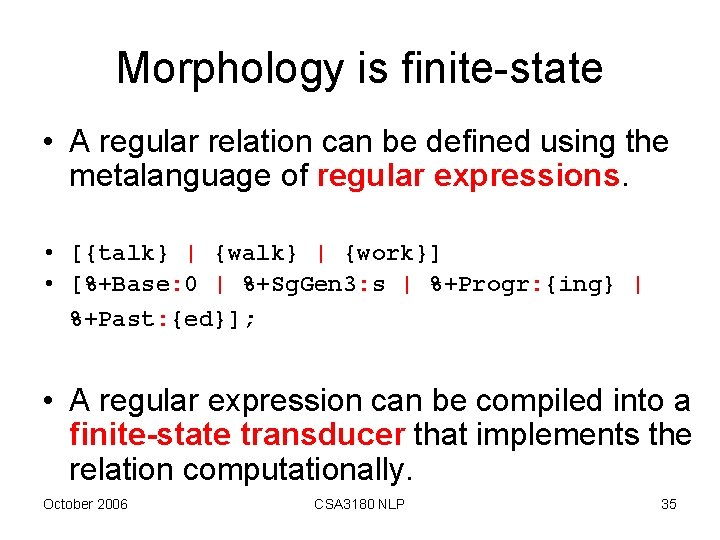

Morphology is finite-state
• A regular relation can be defined using the metalanguage of regular expressions.
 – [{talk} | {work}]
   [%+Base:0 | %+SgGen3:s | %+Progr:{ing} | %+Past:{ed}];
• A regular expression can be compiled into a finite-state transducer that implements the relation computationally.

Compilation
• Regular expression
 – [{talk} | {work}]
   [%+Base:0 | %+SgGen3:s | %+Progr:{ing} | %+Past:{ed}];
• Finite-state transducer
 – [Figure: from the initial state, paths spelling t a l k and w o r k, followed by the arcs +Base:ε, +3rdSg:s, +Progr:i ε:n ε:g, and +Past:e ε:d leading to the final state]

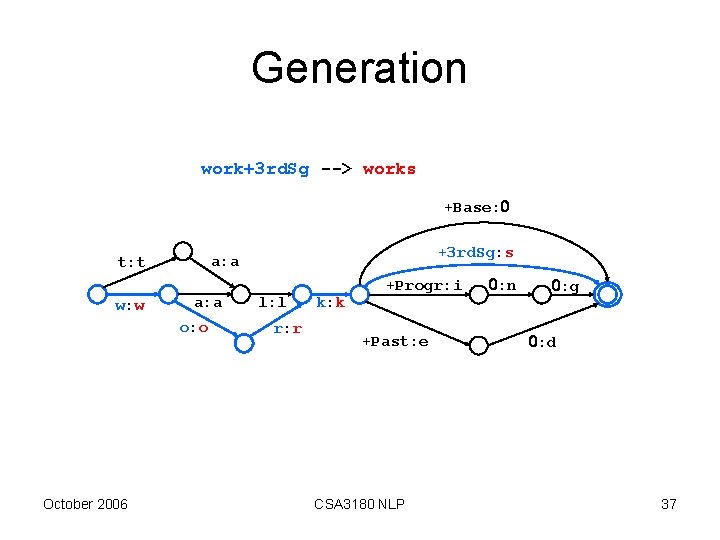

Generation
work+3rdSg --> works
[Figure: the transducer with identity arcs t:t a:a l:l k:k and w:w o:o r:r k:k, followed by +Base:ε, +3rdSg:s, +Progr:i ε:n ε:g, and +Past:e ε:d]

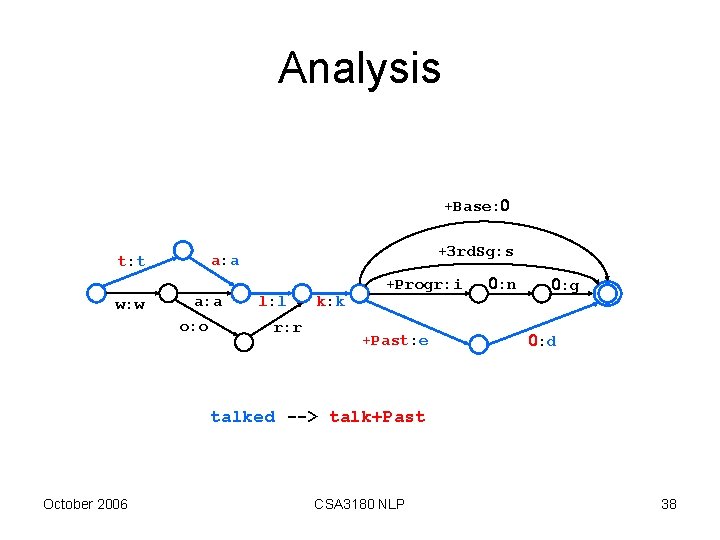

Analysis
talked --> talk+Past
[Figure: the same transducer, with identity arcs t:t a:a l:l k:k and w:w o:o r:r k:k, followed by +Base:ε, +3rdSg:s, +Progr:i ε:n ε:g, and +Past:e ε:d]

XFST Demo 2
• start xfst
   % xfst
• compile a regular expression
   xfst[0]: regex [{talk} | {work}]
            [%+Base:0 | %+SgGen3:s | %+Progr:{ing} | %+Past:{ed}];
• apply the result
   xfst[1]: apply up talked
   talk+Past
   xfst[1]: apply down talk+SgGen3
   talks
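For readers without xfst at hand, the behaviour of this demo can be imitated on the finite relation the expression denotes. This is only a sketch in Python, not xfst itself: it enumerates the stem and suffix pairs explicitly instead of compiling a transducer, and `apply_up` / `apply_down` are hypothetical functions named after the xfst commands.

```python
from itertools import product

STEMS = ["talk", "work"]
# (lexical tag, surface ending); the empty string plays the role of 0 (epsilon).
SUFFIXES = [("+Base", ""), ("+SgGen3", "s"), ("+Progr", "ing"), ("+Past", "ed")]

# Concatenating the stem language with the suffix relation gives
# (lexical form, surface form) pairs.
RELATION = {(stem + tag, stem + ending)
            for stem, (tag, ending) in product(STEMS, SUFFIXES)}

def apply_up(surface):
    """Analysis: surface form -> lexical forms."""
    return sorted(lex for (lex, surf) in RELATION if surf == surface)

def apply_down(lexical):
    """Generation: lexical form -> surface forms."""
    return sorted(surf for (lex, surf) in RELATION if lex == lexical)

print(apply_up("talked"))
print(apply_down("talk+SgGen3"))
```

A word whose stem is not listed, such as "walked", simply has no analysis in this relation.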

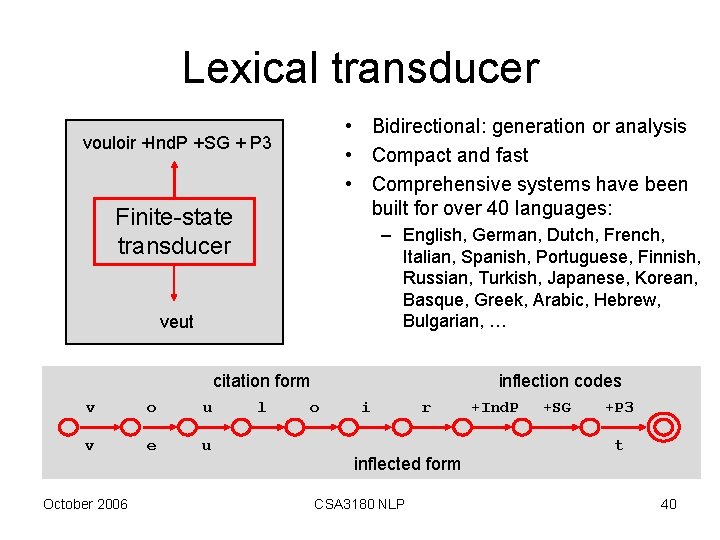

Lexical transducer
• Bidirectional: generation or analysis
• Compact and fast
• Comprehensive systems have been built for over 40 languages:
 – English, German, Dutch, French, Italian, Spanish, Portuguese, Finnish, Russian, Turkish, Japanese, Korean, Basque, Greek, Arabic, Hebrew, Bulgarian, …
[Figure: finite-state transducer relating the citation form plus inflection codes, vouloir +IndP +SG +P3, to the inflected form veut]

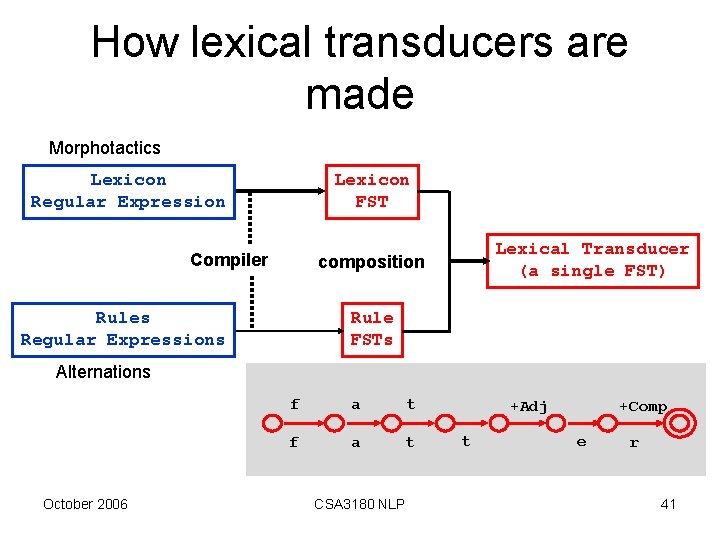

How lexical transducers are made
• Morphotactics: Lexicon Regular Expression → Compiler → Lexicon FST
• Alternations: Rules Regular Expressions → Compiler → Rule FSTs
• Lexicon FST and Rule FSTs → composition → Lexical Transducer (a single FST)
[Figure: example path with f a t +Adj +Comp on the upper side and f a t t e r on the lower side]

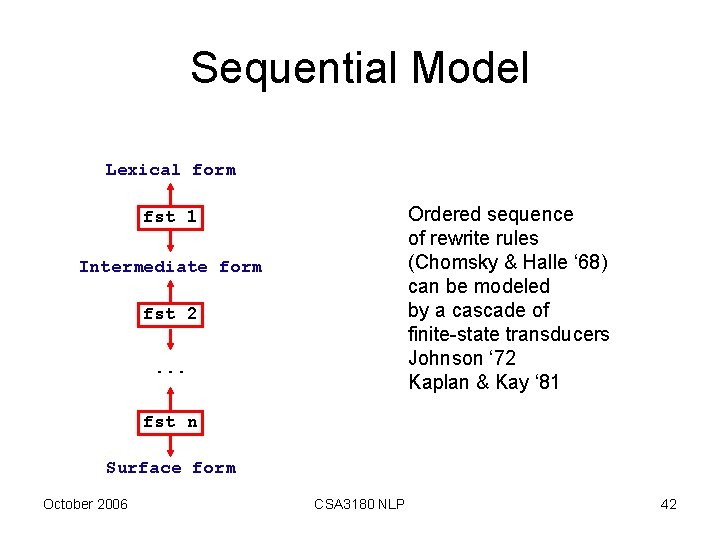

Sequential Model
• Lexical form → fst 1 → Intermediate form → fst 2 → ... → fst n → Surface form
• An ordered sequence of rewrite rules (Chomsky & Halle '68) can be modeled by a cascade of finite-state transducers (Johnson '72, Kaplan & Kay '81)

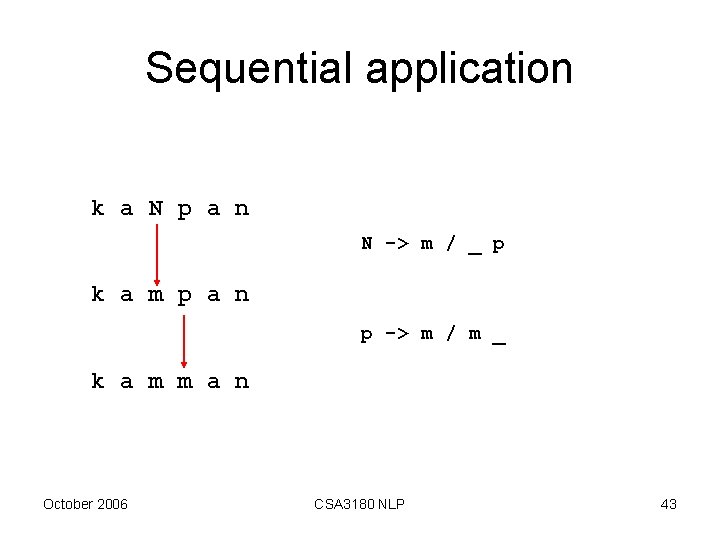

Sequential application
k a N p a n
   N -> m / _ p
k a m p a n
   p -> m / m _
k a m m a n
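The two-step derivation can be reproduced with ordinary string rewriting. The following sketch approximates each rule with a Python regular expression substitution (lookahead and lookbehind stand in for the contexts); it illustrates the ordering of the cascade and is not a finite-state compilation of the rules.

```python
import re

def rule_n_to_m(form):
    """N -> m / _ p : rewrite N as m when p follows."""
    return re.sub(r"N(?=p)", "m", form)

def rule_p_to_m(form):
    """p -> m / m _ : rewrite p as m when m precedes."""
    return re.sub(r"(?<=m)p", "m", form)

def cascade(form, rules):
    """Apply the rules in order, keeping every intermediate form."""
    steps = [form]
    for rule in rules:
        steps.append(rule(steps[-1]))
    return steps

print(cascade("kaNpan", [rule_n_to_m, rule_p_to_m]))
```

Order matters: if the second rule ran first, "kaNpan" would contain no p preceded by m and the derivation would stop at "kampan".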

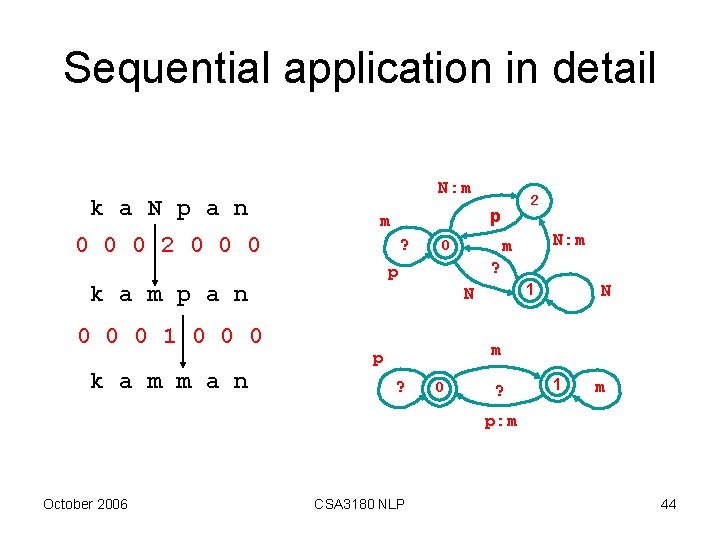

Sequential application in detail
[Figure: the two rule transducers (arcs N:m, p:m, N, m, p, ?) applied one after the other, with the state sequences traced over k a N p a n → k a m p a n → k a m m a n]

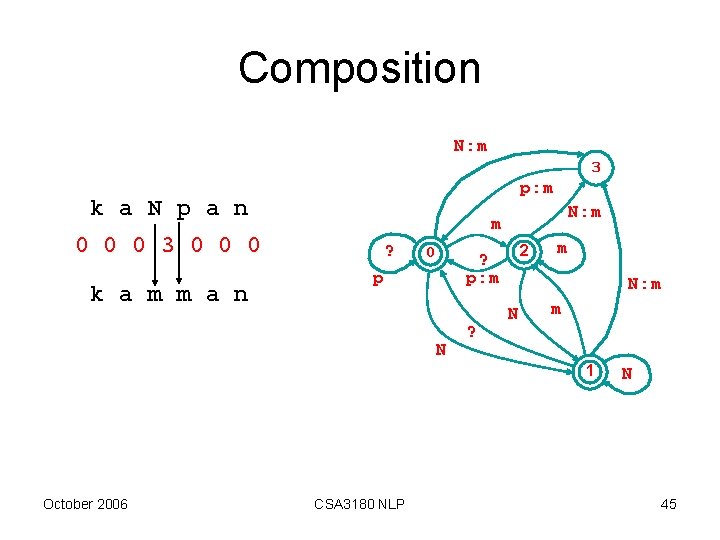

Composition
[Figure: the single composed transducer (arcs N:m, p:m, N, m, p, ?) mapping k a N p a n directly to k a m m a n, with the state sequence traced]

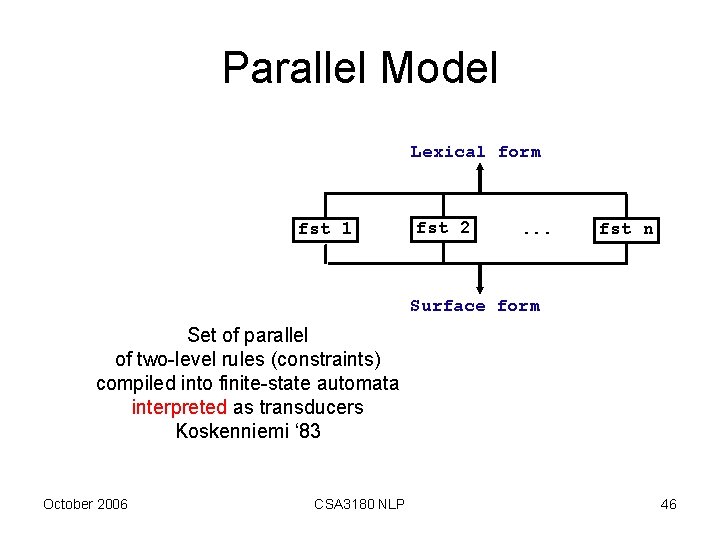

Parallel Model
• Lexical form ↔ fst 1, fst 2, ..., fst n ↔ Surface form
• A set of parallel two-level rules (constraints) compiled into finite-state automata interpreted as transducers (Koskenniemi '83)

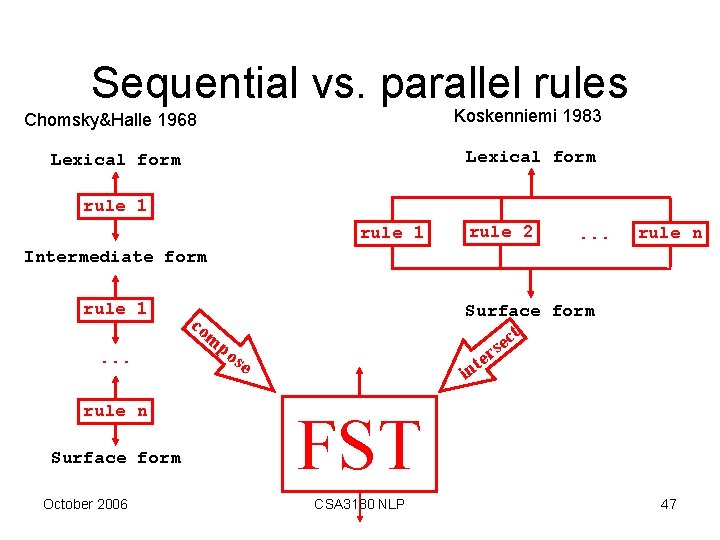

Sequential vs. parallel rules
• Sequential (Chomsky & Halle 1968): Lexical form → rule 1 → Intermediate form → rule 2 → ... → rule n → Surface form; the rules compose into a single FST
• Parallel (Koskenniemi 1983): Lexical form ↔ rule 1, ..., rule n ↔ Surface form; the rules intersect into a single FST

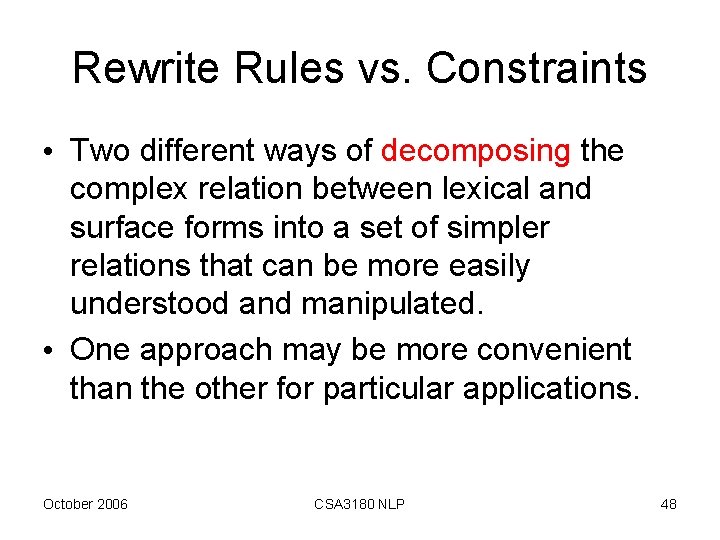

Rewrite Rules vs. Constraints
• Two different ways of decomposing the complex relation between lexical and surface forms into a set of simpler relations that can be more easily understood and manipulated.
• One approach may be more convenient than the other for particular applications.

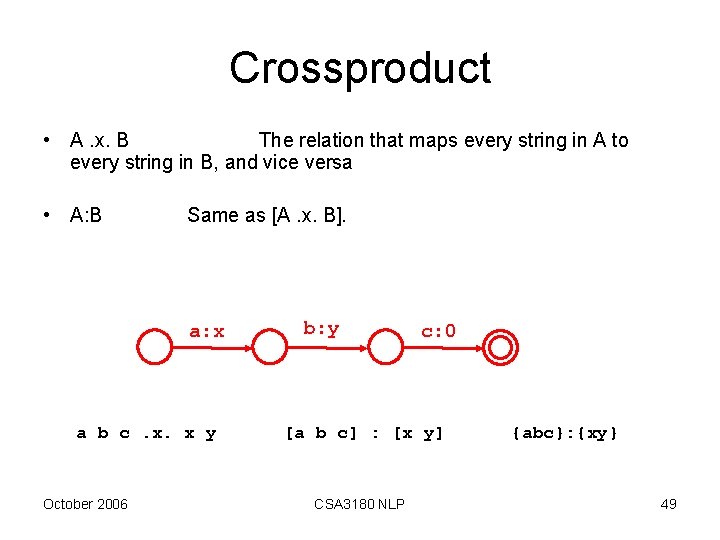

Crossproduct
• A .x. B: The relation that maps every string in A to every string in B, and vice versa
• A:B: Same as [A .x. B].
• Equivalent ways of writing the same relation, realized as the path a:x b:y c:0:
 – a b c .x. x y
 – [a b c]:[x y]
 – {abc}:{xy}
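On finite languages the crossproduct is just the Cartesian product of two string sets. The sketch below also shows one way to line up the symbols of a pair, padding the shorter side with 0; the left-aligned padding is an assumption chosen to match the a:x b:y c:0 path above, and `crossproduct` and `align` are illustrative names.

```python
from itertools import product, zip_longest

def crossproduct(a, b):
    """A .x. B on finite languages: every string of A paired with every string of B."""
    return set(product(a, b))

def align(upper, lower):
    """Pair symbols position by position, padding the shorter string with '0' (epsilon)."""
    return list(zip_longest(upper, lower, fillvalue="0"))

print(crossproduct({"abc"}, {"xy"}))
print(align("abc", "xy"))
```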

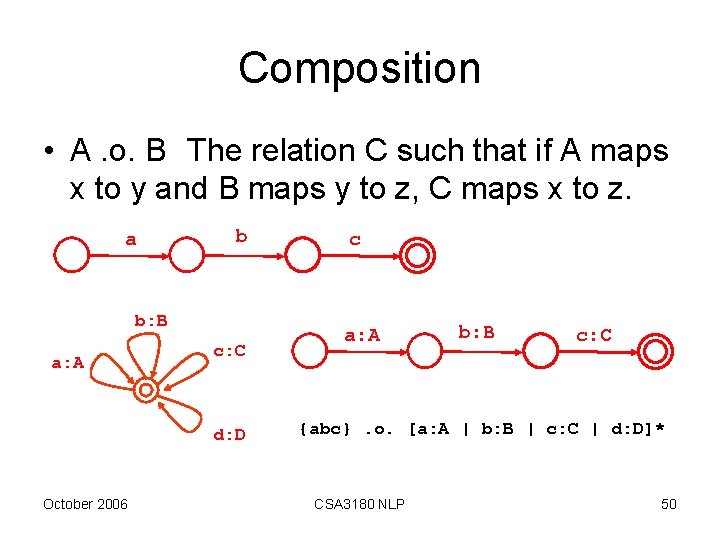

Composition
• A .o. B: The relation C such that if A maps x to y and B maps y to z, C maps x to z.
• Example: {abc} .o. [a:A | b:B | c:C | d:D]*
 – [Figure: the language a b c composed with a one-state transducer looping on a:A, b:B, c:C, d:D gives the single path a:A b:B c:C]

Xerox RE Operators
• $ containment
• => restriction
• -> @-> replacement
 – Make it easier to describe complex languages and relations without extending the formal power of finite-state systems.

Containment
• $a is equivalent to [?* a ?*]
[Figure: two-state automaton with arcs ? and a]

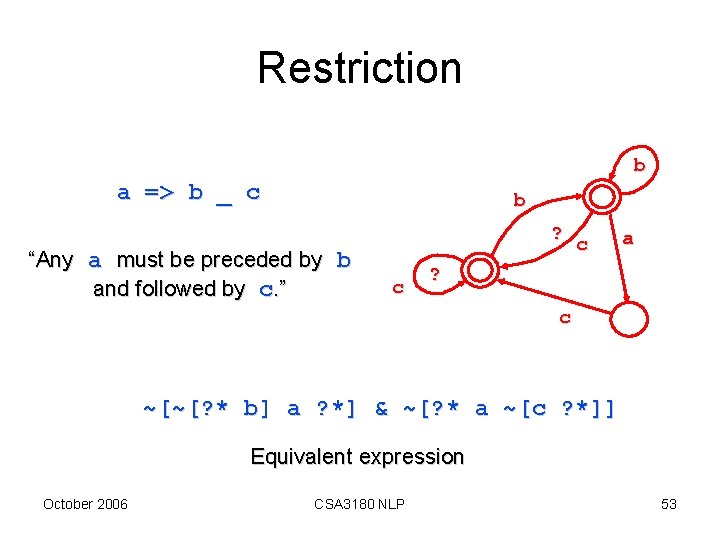

Restriction
• a => b _ c
• "Any a must be preceded by b and followed by c."
• Equivalent expression: ~[~[?* b] a ?*] & ~[?* a ~[c ?*]]
[Figure: automaton with arcs a, b, c, ?]
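The restriction and its expanded form can be checked against each other on small strings. This is a sketch, assuming single-character symbols: `satisfies_restriction` tests the plain-English reading directly, while `satisfies_expanded` mirrors the two negated conjuncts with Python regular expressions (not xfst syntax).

```python
import re
from itertools import product

def satisfies_restriction(word, target="a", left="b", right="c"):
    """Every occurrence of target is immediately preceded by left and followed by right."""
    for i, ch in enumerate(word):
        if ch == target:
            if i == 0 or word[i - 1] != left:
                return False
            if i + 1 >= len(word) or word[i + 1] != right:
                return False
    return True

# ~[~[?* b] a ?*]: no a whose preceding prefix fails to end in b.
BAD_LEFT = re.compile(r"(?:.*[^b])?a.*")
# ~[?* a ~[c ?*]]: no a whose following suffix fails to start with c.
BAD_RIGHT = re.compile(r".*a(?:[^c].*)?")

def satisfies_expanded(word):
    return not BAD_LEFT.fullmatch(word) and not BAD_RIGHT.fullmatch(word)

# Compare the two formulations on every string up to length 4 over a small alphabet.
words = ["".join(p) for n in range(5) for p in product("abcx", repeat=n)]
print(all(satisfies_restriction(w) == satisfies_expanded(w) for w in words))
print(satisfies_restriction("xbacx"), satisfies_restriction("bax"))
```

The exhaustive comparison is only evidence over short strings, not a proof that the two expressions are equivalent.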

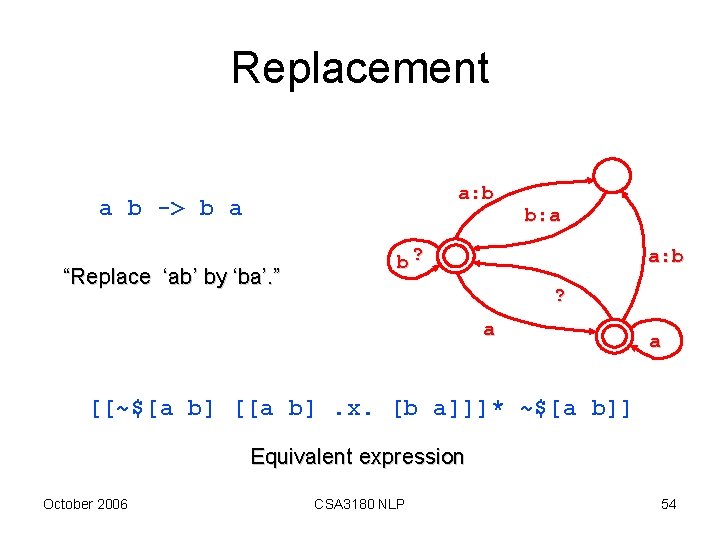

Replacement
• a b -> b a
• "Replace 'ab' by 'ba'."
• Equivalent expression: [[~$[a b] [[a b] .x. [b a]]]* ~$[a b]]
[Figure: transducer with arcs a:b, b:a, a, b, ?]

Marking
• a|e|i|o|u -> %[ ... %]
• Example: p o t a t o → p[o]t[a]t[o]
[Figure: transducer with arcs 0:[, the vowels a e i o u, 0:], and ?]
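The effect of the marking rule on the example can be mimicked with a regular expression substitution. This is a sketch in Python rather than xfst; `mark_vowels` is an illustrative name, and `\g<0>` refers back to the matched vowel in the same way the `...` in the rule stands for the matched string.

```python
import re

def mark_vowels(word):
    """Wrap every vowel in square brackets, leaving all other symbols unchanged."""
    return re.sub(r"[aeiou]", r"[\g<0>]", word)

print(mark_vowels("potato"))
```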

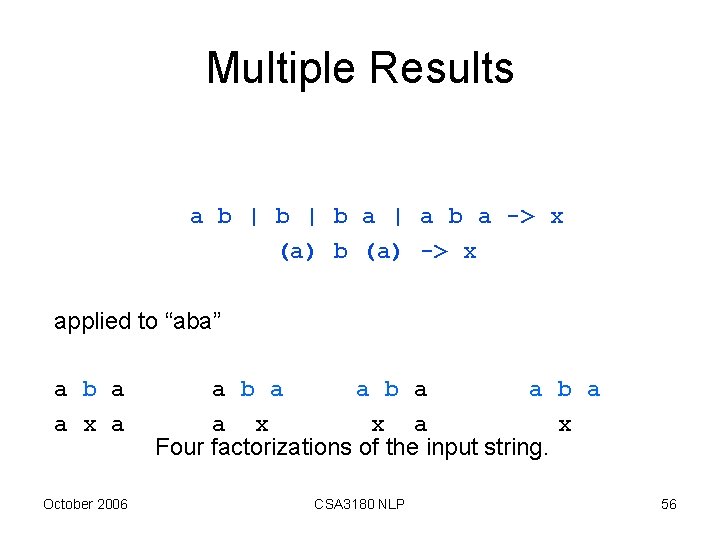

Multiple Results
• a b | b | b a | a b a -> x, or equivalently (a) b (a) -> x, applied to "aba"
• Four factorizations of the input string, each giving a different result:
 – a [b] a → a x a
 – a [b a] → a x
 – [a b] a → x a
 – [a b a] → x
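The four results can be enumerated mechanically. The sketch below assumes the following reading of unconditional replacement: the input is split into replaced stretches that match the pattern and untouched gaps that contain no match at all. The function name `replacements` and the use of Python's `re` for the pattern (a) b (a) are illustrative choices.

```python
import re

PATTERN = re.compile(r"a?ba?")  # (a) b (a)

def replacements(text, start=0):
    """All outputs obtained by replacing matches with x, leaving no match unreplaced."""
    results = set()
    rest = text[start:]
    # Case 1: the remainder is one untouched gap (allowed only if it holds no match).
    if not PATTERN.search(rest):
        results.add(rest)
    # Case 2: an untouched gap, then a replaced match text[i:j], then recurse.
    for i in range(start, len(text)):
        gap = text[start:i]
        if PATTERN.search(gap):
            break  # longer gaps would contain this match as well
        for j in range(i + 1, len(text) + 1):
            if PATTERN.fullmatch(text[i:j]):
                results |= {gap + "x" + tail for tail in replacements(text, j)}
    return results

print(sorted(replacements("aba")))
```

Each output corresponds to one factorization of "aba"; the ambiguity comes entirely from the choice of where the replaced stretch begins and ends.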

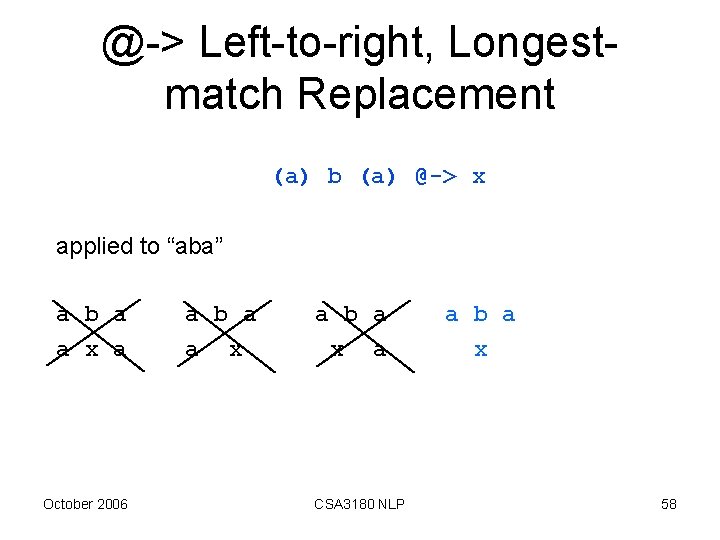

Directed Replace Operators
• guarantee a unique result by constraining the factorization of the input string by
 – Direction of the match (rightward or leftward)
 – Length (longest or shortest)

@-> Left-to-right, Longest-match Replacement
• (a) b (a) @-> x applied to "aba"
• Of the four factorizations (a x a, a x, x a, x), only the leftmost, longest match is kept: [a b a] → x
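A left-to-right, longest-match strategy is easy to write out by hand. The following is a sketch, assuming non-empty matches; `replace_longest_leftmost` is a hypothetical helper. It tries the longest candidate first at each position because Python's own `re.sub` takes the first alternative that matches, which is not always the longest.

```python
import re

def replace_longest_leftmost(pattern, replacement, text):
    """Scan left to right; at each position replace the longest match, if any."""
    compiled = re.compile(pattern)
    out, i = [], 0
    while i < len(text):
        # Try candidate end positions from the longest to the shortest.
        end = next((j for j in range(len(text), i, -1)
                    if compiled.fullmatch(text, i, j)), None)
        if end is None:
            out.append(text[i])  # no match starts here: copy the symbol
            i += 1
        else:
            out.append(replacement)
            i = end
    return "".join(out)

print(replace_longest_leftmost(r"a?ba?", "x", "aba"))
print(replace_longest_leftmost(r"b|ab|ba|aba", "x", "aba"))
print(re.sub(r"b|ab|ba|aba", "x", "aba"))  # first-alternative matching, for contrast
```

With the alternation spelled out, `re.sub` stops at "ab" and leaves the final "a" behind, whereas the longest-match scan consumes "aba" as a whole.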

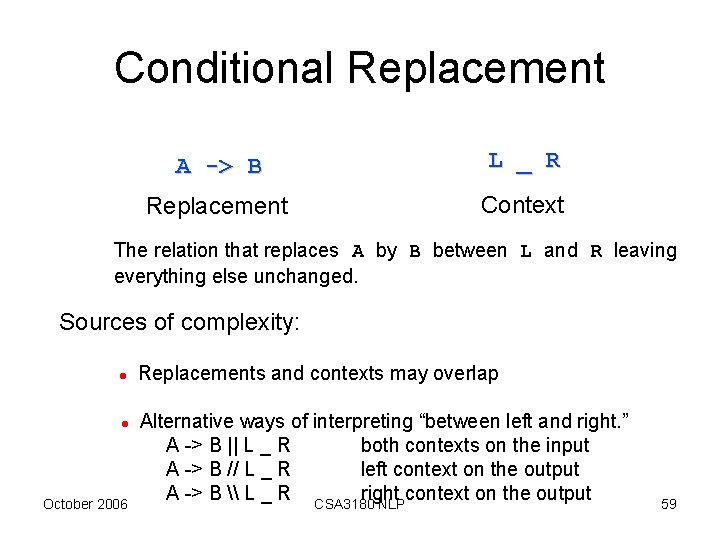

Conditional Replacement
• A -> B  L _ R, where A -> B is the replacement and L _ R is the context
• The relation that replaces A by B between L and R, leaving everything else unchanged.
• Sources of complexity:
 – Replacements and contexts may overlap
 – Alternative ways of interpreting "between left and right":
   A -> B || L _ R   both contexts on the input
   A -> B // L _ R   left context on the output
   A -> B \\ L _ R   right context on the output
