
Natural Language Processing Berlin Chen Department of Computer Science & Information Engineering National Taiwan Normal University References: 1. Foundations of Statistical Natural Language Processing 2. Speech and Language Processing

Motivation for NLP (1/2) • Academic: Explore the nature of linguistic communication – Obtain a better understanding of how languages work • Practical: Enable effective human-machine communication – Conversational agents are becoming an important form of human-computer communication – Revolutionize the way computers are used • More flexible and intelligent

Motivation for NLP (2/2) • Different Academic Disciplines: Problems and Methods – Electrical Engineering, Statistics – Computer Science – Linguistics – Psychology • Many of the techniques presented were first developed for speech and then spread into NLP – E.g., language models in speech recognition

Turing Test • Alan Turing, 1950 – Turing predicted that by the end of the 20th century a machine with 10 gigabytes of memory would have a 30% chance of fooling a human interrogator after 5 minutes of questions • Has it come true?

Hollywood Cinema • Computers/robots can listen, speak, and answer our questions – E.g.: the HAL 9000 computer in “2001: A Space Odyssey”

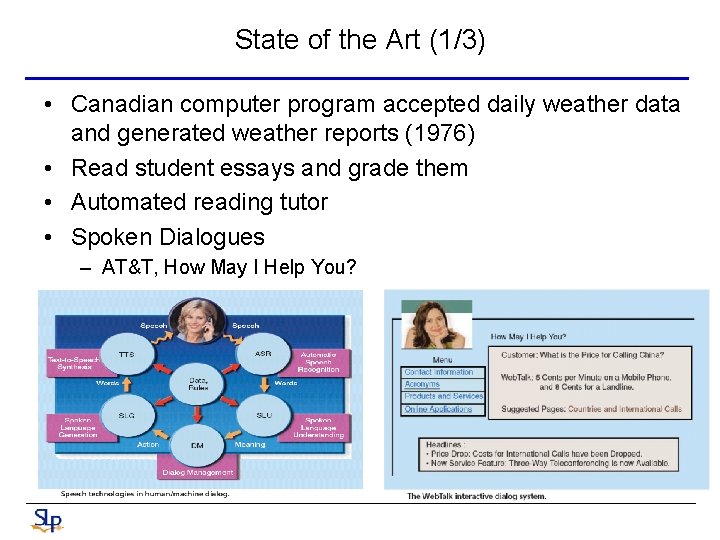

State of the Art (1/3) • Canadian computer program accepted daily weather data and generated weather reports (1976) • Read student essays and grade them • Automated reading tutor • Spoken Dialogues – AT&T, How May I Help You?

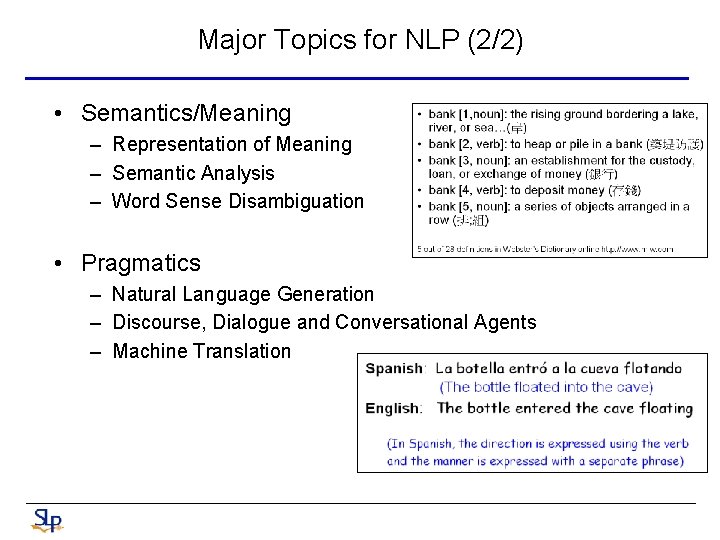

Major Topics for NLP (2/2) • Semantics/Meaning – Representation of Meaning – Semantic Analysis – Word Sense Disambiguation • Pragmatics – Natural Language Generation – Discourse, Dialogue and Conversational Agents – Machine Translation

Dissidences • Rationalists (e.g., Chomsky) – Humans have an innate language faculty – (Almost fully) encoded rules plus reasoning mechanisms – Dominant from the 1960s to the mid-1980s • Empiricists (e.g., Shannon) – The mind does not begin with detailed sets of principles and procedures for language components and cognitive domains – Rather, it is endowed only with general operations for association, pattern recognition, generalization, etc. • General language models plus machine learning approaches – Dominant from the 1920s to the mid-1960s and resurgent since the 1990s

Dissidences: Statistical and Non-Statistical NLP • The dividing line between the two has become much fuzzier recently – An increasing number of non-statistical researchers use corpus evidence and incorporate quantitative methods • Corpus: “a body of texts” (a large collection of written texts) – Statistical NLP needs to start with all the scientific knowledge available about a phenomenon when building a probabilistic model, rather than closing one’s eyes and taking a clean-slate approach • Probabilistic and data-driven • Statistical NLP → “Language Technology” or “Language Engineering”

(I) Part-of-Speech Tagging

Review • Tagging (part-of-speech tagging) – The process of assigning (labeling) a part-of-speech or other lexical class marker to each word in a sentence (or a corpus) • Decide whether each word is a noun, verb, adjective, or something else • E.g., The/AT representative/NN put/VBD chairs/NNS on/IN the/AT table/NN, or alternatively The/AT representative/JJ put/NN chairs/VBZ on/IN the/AT table/NN – An intermediate layer of representation of syntactic structure • When compared with syntactic parsing – Above 96% accuracy for the most successful approaches • Tagging can be viewed as a kind of syntactic disambiguation

Introduction • Parts-of-speech – Known as POS, word classes, lexical tags, morphological classes • Tag sets – Penn Treebank: 45 word classes used (Marcus et al., 1993) • Penn Treebank is a parsed corpus – Brown corpus: 87 word classes used (Francis, 1979) – … • E.g., The/DT grand/JJ jury/NN commented/VBD on/IN a/DT number/NN of/IN other/JJ topics/NNS ./.

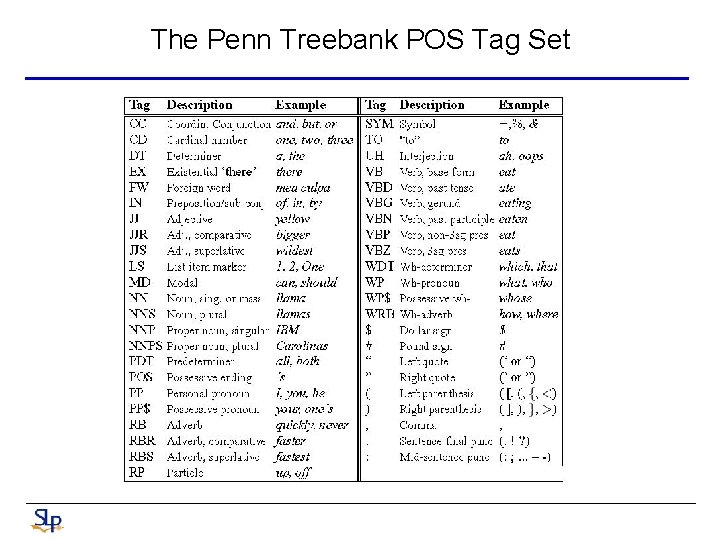

The Penn Treebank POS Tag Set

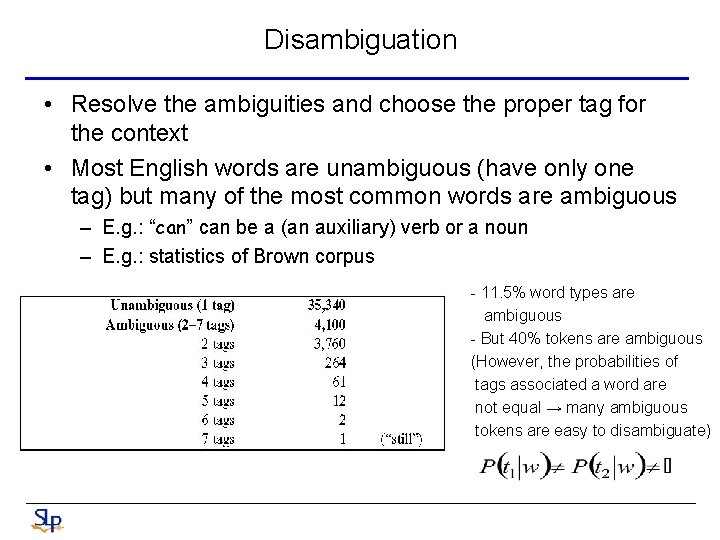

Disambiguation • Resolve the ambiguities and choose the proper tag for the context • Most English words are unambiguous (have only one tag) but many of the most common words are ambiguous – E.g.: “can” can be an (auxiliary) verb or a noun – E.g.: statistics of the Brown corpus - 11.5% of word types are ambiguous - But 40% of tokens are ambiguous (However, the probabilities of the tags associated with a word are not equal → many ambiguous tokens are easy to disambiguate)

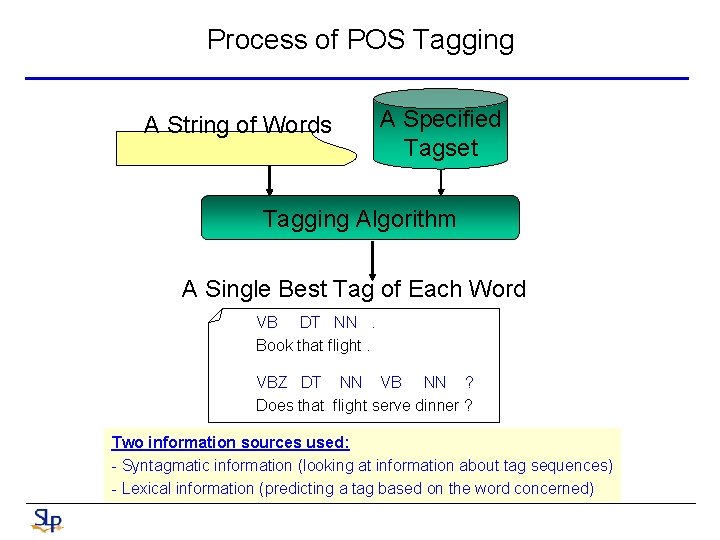
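The difference between ambiguous word types and ambiguous tokens can be made concrete with a small count. The sketch below is only an illustration: the toy corpus and the function name `ambiguity_stats` are made up here, and the figures it produces say nothing about the Brown corpus.

```python
from collections import defaultdict

def ambiguity_stats(tagged_tokens):
    """Return (share of ambiguous word types, share of ambiguous tokens)."""
    tags_by_word = defaultdict(set)
    for word, tag in tagged_tokens:
        tags_by_word[word.lower()].add(tag)
    # A word type is ambiguous when it was seen with more than one tag.
    ambiguous = {w for w, tags in tags_by_word.items() if len(tags) > 1}
    type_rate = len(ambiguous) / len(tags_by_word)
    token_hits = sum(1 for w, _ in tagged_tokens if w.lower() in ambiguous)
    token_rate = token_hits / len(tagged_tokens)
    return type_rate, token_rate

# Hypothetical toy corpus: "can" is used both as a noun and as a modal verb.
toy_corpus = [
    ("the", "DT"), ("can", "NN"), ("is", "VBZ"), ("red", "JJ"),
    ("I", "PRP"), ("can", "MD"), ("open", "VB"), ("the", "DT"), ("can", "NN"),
]
type_rate, token_rate = ambiguity_stats(toy_corpus)
print(f"ambiguous types: {type_rate:.2f}, ambiguous tokens: {token_rate:.2f}")
```

Because frequent words tend to be the ambiguous ones, the token share is higher than the type share, which is the same pattern as the corpus statistics quoted on the slide.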

Process of POS Tagging • Input: a string of words and a specified tagset • Tagging algorithm • Output: a single best tag for each word – Book/VB that/DT flight/NN ./. – Does/VBZ that/DT flight/NN serve/VB dinner/NN ?/. • Two information sources used: – Syntagmatic information (looking at information about tag sequences) – Lexical information (predicting a tag based on the word concerned)

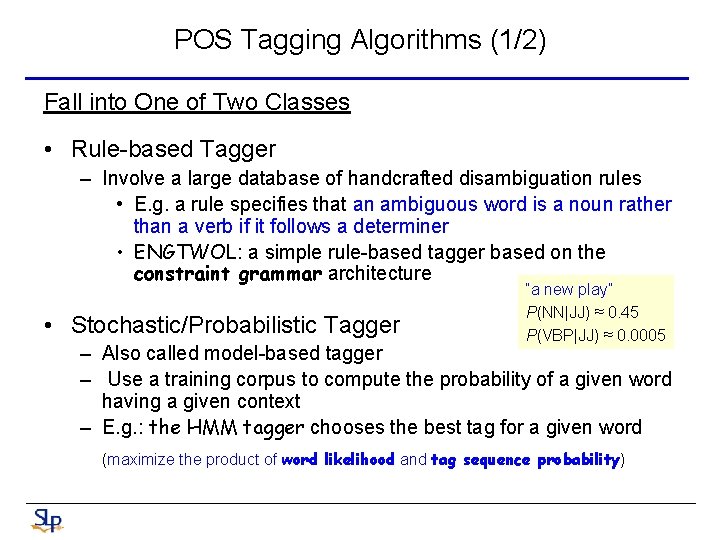

POS Tagging Algorithms (1/2) • Most fall into one of two classes • Rule-based Tagger – Involves a large database of handcrafted disambiguation rules • E.g., a rule specifies that an ambiguous word is a noun rather than a verb if it follows a determiner • ENGTWOL: a simple rule-based tagger based on the constraint grammar architecture • Stochastic/Probabilistic Tagger – Also called model-based tagger – Uses a training corpus to compute the probability of a given word having a given tag in a given context – E.g., for “a new play”: P(NN|JJ) ≈ 0.45, P(VBP|JJ) ≈ 0.0005 – E.g.: the HMM tagger chooses the best tag for a given word (maximizing the product of word likelihood and tag sequence probability)

POS Tagging Algorithms (2/2) • Transformation-based/Brill Tagger – A hybrid approach – Like the rule-based approach, it determines the tag of an ambiguous word based on rules – Like the stochastic approach, the rules are automatically induced from a previously tagged training corpus with a machine learning technique • Supervised learning

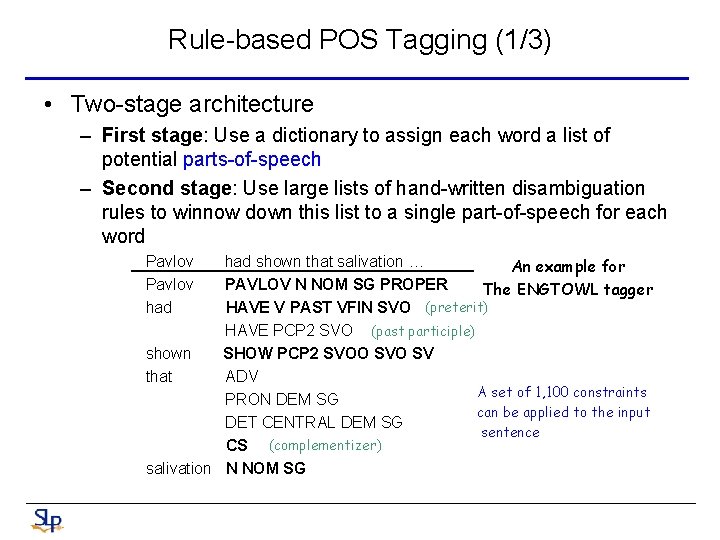

Rule-based POS Tagging (1/3) • Two-stage architecture – First stage: Use a dictionary to assign each word a list of potential parts-of-speech – Second stage: Use large lists of hand-written disambiguation rules to winnow down this list to a single part-of-speech for each word • An example for the ENGTWOL tagger: “Pavlov had shown that salivation …”
– Pavlov: PAVLOV N NOM SG PROPER
– had: HAVE V PAST VFIN SVO (preterit); HAVE PCP2 SVO (past participle)
– shown: SHOW PCP2 SVOO SV
– that: ADV; PRON DEM SG; DET CENTRAL DEM SG; CS (complementizer)
– salivation: N NOM SG
• A set of 1,100 constraints can be applied to the input sentence

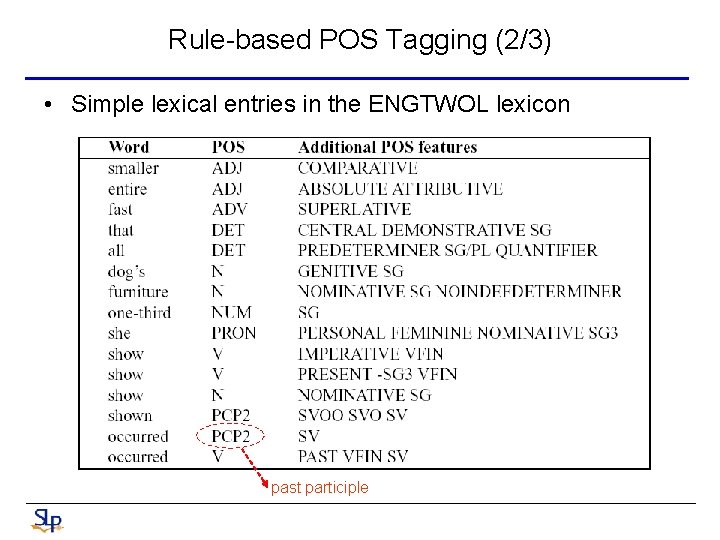
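The two-stage architecture can be sketched in a few lines. This is a toy illustration, not ENGTWOL: the lexicon, the default tag for unknown words, and the single constraint (drop verb readings right after an unambiguous determiner) are all assumptions made for the example.

```python
# Stage 1 resource (hypothetical): word -> list of candidate tags.
LEXICON = {
    "the": ["DT"],
    "book": ["NN", "VB"],
    "flight": ["NN"],
    "play": ["NN", "VB"],
}

def assign_candidates(words):
    """Stage 1: look up every word; unknown words default to NN (an assumption)."""
    return [list(LEXICON.get(w.lower(), ["NN"])) for w in words]

def apply_constraints(candidates):
    """Stage 2: one hand-written constraint that removes impossible readings."""
    result = [list(c) for c in candidates]
    for i in range(1, len(result)):
        follows_determiner = candidates[i - 1] == ["DT"]
        if follows_determiner and "NN" in result[i]:
            # After a determiner, keep the noun reading and drop verb readings.
            result[i] = [t for t in result[i] if not t.startswith("VB")]
    return result

def rule_based_tag(words):
    return apply_constraints(assign_candidates(words))

print(rule_based_tag(["the", "book"]))            # "book" keeps only NN
print(rule_based_tag(["book", "the", "flight"]))  # sentence-initial "book" stays ambiguous
```

A real constraint grammar needs many such constraints, since a single rule leaves words like the sentence-initial "book" unresolved.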

Rule-based POS Tagging (2/3) • Simple lexical entries in the ENGTWOL lexicon (figure annotation: past participle)

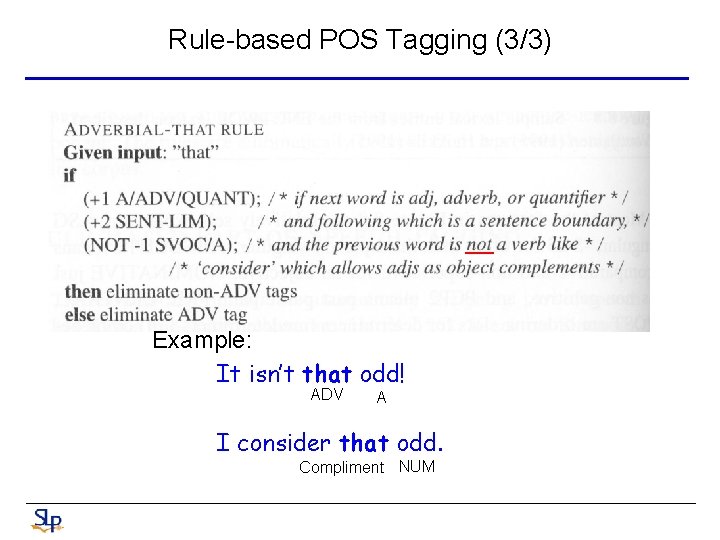

Rule-based POS Tagging (3/3) • Example: – “It isn’t that odd!” (that: ADV, odd: A) – “I consider that odd.” (figure labels: complement, NUM)

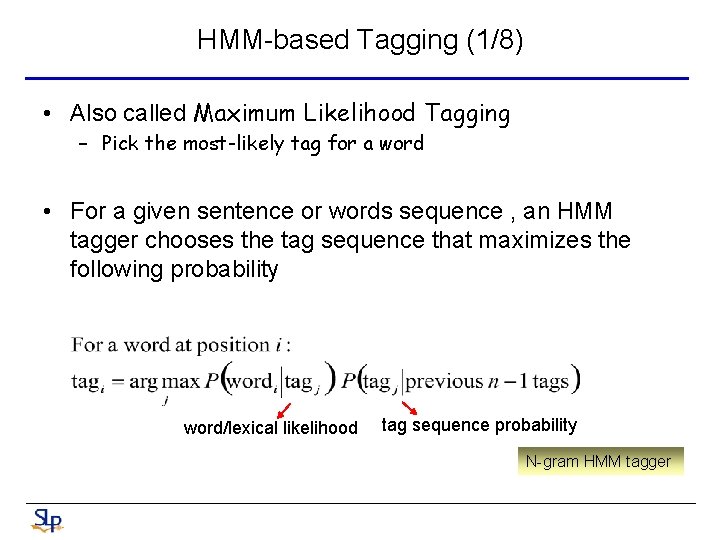

HMM-based Tagging (1/8) • Also called Maximum Likelihood Tagging – Pick the most likely tag for a word • For a given sentence or word sequence, an HMM tagger chooses the tag sequence that maximizes the product of two probabilities – Word/lexical likelihood – Tag sequence probability • N-gram HMM tagger

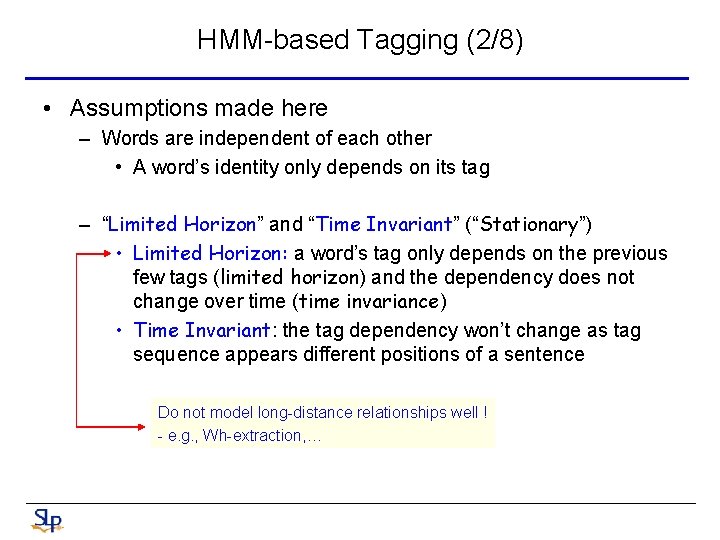

HMM-based Tagging (2/8) • Assumptions made here – Words are independent of each other • A word’s identity only depends on its tag – “Limited Horizon” and “Time Invariant” (“Stationary”) • Limited Horizon: a word’s tag only depends on the previous few tags • Time Invariant: the tag dependency does not change when the tag sequence appears at different positions of a sentence • Consequence: long-distance relationships are not modeled well, e.g., Wh-extraction, …

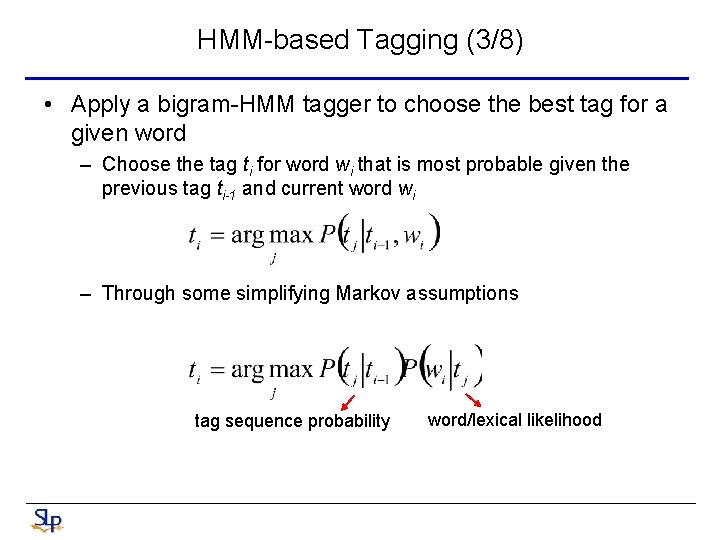

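HMM-based Tagging (3/8) • Apply a bigram-HMM tagger to choose the best tag for a given word – Choose the tag $t_i$ for word $w_i$ that is most probable given the previous tag $t_{i-1}$ and the current word $w_i$: $t_i = \arg\max_j P(t_j \mid t_{i-1}, w_i)$ – Through some simplifying Markov assumptions: $t_i = \arg\max_j P(t_j \mid t_{i-1})\, P(w_i \mid t_j)$, where $P(t_j \mid t_{i-1})$ is the tag sequence probability and $P(w_i \mid t_j)$ is the word/lexical likelihood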

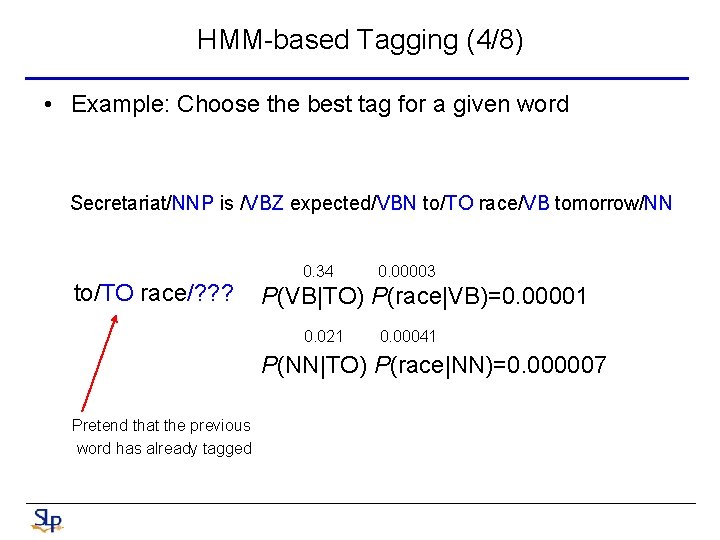

HMM-based Tagging (4/8) • Example: Choose the best tag for a given word – Secretariat/NNP is/VBZ expected/VBN to/TO race/VB tomorrow/NN – to/TO race/??? • P(VB|TO) P(race|VB) = 0.34 × 0.00003 ≈ 0.00001 • P(NN|TO) P(race|NN) = 0.021 × 0.00041 ≈ 0.000009 • Pretend that the previous word has already been tagged

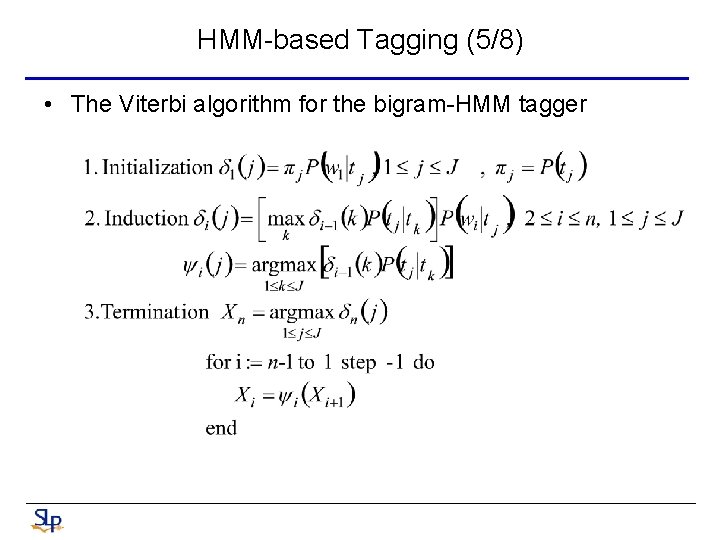
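The choice between VB and NN for "race" after to/TO can be reproduced with a few lines. The four probabilities are the ones on the slide; the dictionary layout and the function name `best_tag` are illustrative assumptions.

```python
# Tag transition probabilities P(tag | previous tag), values from the slide.
TRANSITION = {("TO", "VB"): 0.34, ("TO", "NN"): 0.021}
# Word likelihoods P(word | tag), values from the slide.
EMISSION = {("VB", "race"): 0.00003, ("NN", "race"): 0.00041}

def best_tag(prev_tag, word, candidates):
    """Pick the tag maximizing P(tag | prev_tag) * P(word | tag)."""
    scores = {
        tag: TRANSITION[(prev_tag, tag)] * EMISSION[(tag, word)]
        for tag in candidates
    }
    return max(scores, key=scores.get), scores

tag, scores = best_tag("TO", "race", ["VB", "NN"])
print(tag, scores)
```

Although "race" is more likely as a noun in isolation, the high probability of a verb after TO makes VB the winner.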

HMM-based Tagging (5/8) • The Viterbi algorithm for the bigram-HMM tagger

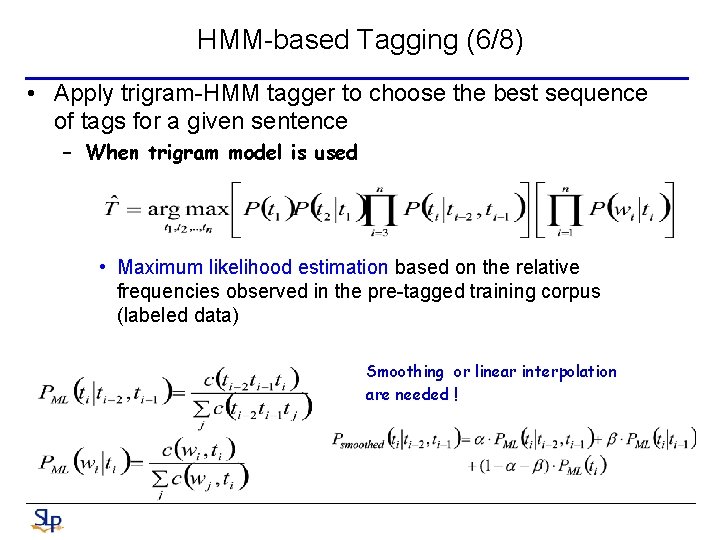
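A minimal sketch of Viterbi decoding for a bigram-HMM tagger follows, working in log space. Only the four "race" probabilities come from the earlier example; the start probabilities, the remaining transitions and the function signature are made-up assumptions for the toy model.

```python
import math

def viterbi(words, tags, start_p, trans_p, emit_p):
    """Return the most probable tag sequence under a bigram HMM."""
    def lp(p):
        return math.log(p) if p > 0 else float("-inf")

    # best[i][t]: best log-probability of any tag path ending in tag t at word i.
    best = [{t: lp(start_p.get(t, 0)) + lp(emit_p[t].get(words[0], 0)) for t in tags}]
    back = [{}]
    for i in range(1, len(words)):
        best.append({})
        back.append({})
        for t in tags:
            prev, score = max(
                ((p, best[i - 1][p] + lp(trans_p[p].get(t, 0))) for p in tags),
                key=lambda pair: pair[1],
            )
            best[i][t] = score + lp(emit_p[t].get(words[i], 0))
            back[i][t] = prev
    # Follow the back-pointers from the best final tag.
    path = [max(tags, key=lambda t: best[-1][t])]
    for i in range(len(words) - 1, 0, -1):
        path.append(back[i][path[-1]])
    return path[::-1]

# Toy model: values other than the four "race" probabilities are hypothetical.
tags = ["TO", "VB", "NN"]
start_p = {"TO": 0.8, "VB": 0.1, "NN": 0.1}
trans_p = {
    "TO": {"VB": 0.34, "NN": 0.021},
    "VB": {"NN": 0.3, "TO": 0.1},
    "NN": {"VB": 0.1, "TO": 0.1},
}
emit_p = {
    "TO": {"to": 1.0},
    "VB": {"race": 0.00003},
    "NN": {"race": 0.00041},
}
decoded = viterbi(["to", "race"], tags, start_p, trans_p, emit_p)
print(decoded)
```

Unlike the word-by-word choice, the dynamic program keeps the best path into every tag at every position, so an early decision can still be revised by later evidence.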

HMM-based Tagging (6/8) • Apply a trigram-HMM tagger to choose the best sequence of tags for a given sentence – When a trigram model is used • Maximum likelihood estimation based on the relative frequencies observed in the pre-tagged training corpus (labeled data) • Smoothing or linear interpolation is needed!

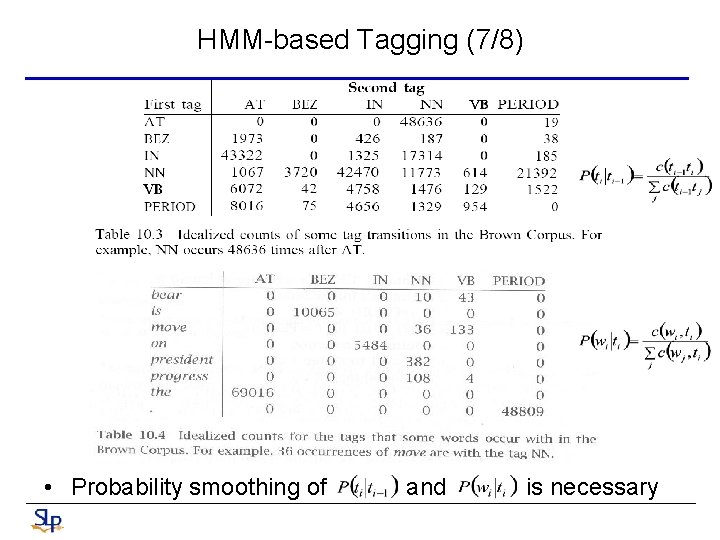

HMM-based Tagging (7/8) • Probability smoothing of the tag sequence probability and the word/lexical likelihood is necessary

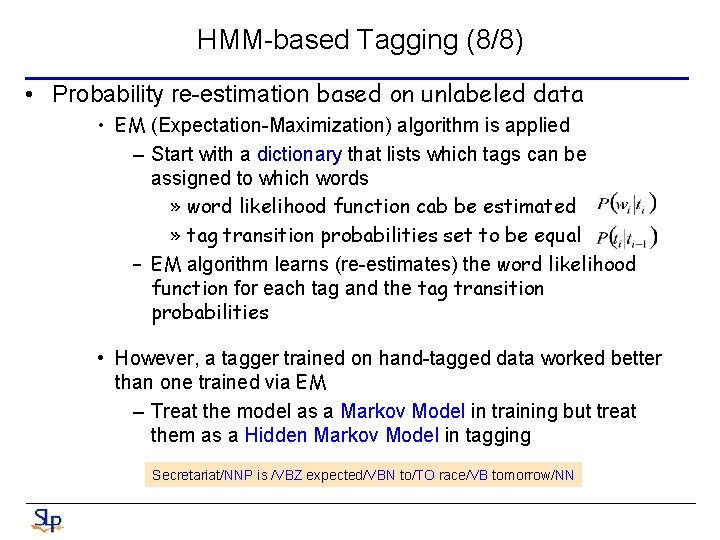
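One common way to smooth the tag sequence probability is linear interpolation of trigram, bigram and unigram relative frequencies. The sketch below assumes fixed interpolation weights and a tiny hand-made training set; in practice the weights would be tuned on held-out data, and the names `train_counts` and `interpolated_prob` are illustrative.

```python
from collections import Counter

def train_counts(tag_sequences):
    """Collect n-gram and history counts from pre-tagged sentences."""
    c = {name: Counter() for name in ("uni", "bi", "tri", "ctx1", "ctx2")}
    for seq in tag_sequences:
        padded = ["BOS", "BOS"] + list(seq)  # BOS is a sentence-start pad symbol
        for i in range(2, len(padded)):
            t1, t2, t3 = padded[i - 2], padded[i - 1], padded[i]
            c["uni"][t3] += 1
            c["bi"][(t2, t3)] += 1
            c["tri"][(t1, t2, t3)] += 1
            c["ctx1"][t2] += 1           # how often t2 was a bigram history
            c["ctx2"][(t1, t2)] += 1     # how often (t1, t2) was a trigram history
    return c

def interpolated_prob(c, t1, t2, t3, lambdas=(0.6, 0.3, 0.1)):
    """P(t3 | t1, t2) as a weighted mix of trigram, bigram and unigram MLEs."""
    l3, l2, l1 = lambdas  # assumed weights, must sum to 1
    total = sum(c["uni"].values())
    p_uni = c["uni"][t3] / total if total else 0.0
    p_bi = c["bi"][(t2, t3)] / c["ctx1"][t2] if c["ctx1"][t2] else 0.0
    p_tri = c["tri"][(t1, t2, t3)] / c["ctx2"][(t1, t2)] if c["ctx2"][(t1, t2)] else 0.0
    return l3 * p_tri + l2 * p_bi + l1 * p_uni

# Hypothetical training data: two tagged sentences.
counts = train_counts([["DT", "NN", "VBZ"], ["DT", "JJ", "NN"]])
unseen = interpolated_prob(counts, "VBZ", "DT", "NN")  # trigram never observed
print(unseen)
```

The trigram (VBZ, DT, NN) never occurs in the toy data, so its relative frequency is zero, yet the interpolated estimate stays positive because the bigram and unigram terms back it up.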

HMM-based Tagging (8/8) • Probability re-estimation based on unlabeled data • EM (Expectation-Maximization) algorithm is applied – Start with a dictionary that lists which tags can be assigned to which words » The word likelihood function can be estimated » Tag transition probabilities are set to be equal – The EM algorithm learns (re-estimates) the word likelihood function for each tag and the tag transition probabilities • However, a tagger trained on hand-tagged data worked better than one trained via EM – Treat the model as a Markov Model in training but as a Hidden Markov Model in tagging • E.g., Secretariat/NNP is/VBZ expected/VBN to/TO race/VB tomorrow/NN

Transformation-based Tagging (1/8) • Also called Brill tagging – An instance of Transformation-Based Learning (TBL) • Motivation – Like the rule-based approach, TBL is based on rules that specify which tags should be assigned to which words – Like the stochastic approach, rules are automatically induced from the data by a machine learning technique • Note that TBL is a supervised learning technique – It assumes a pre-tagged training corpus

Transformation-based Tagging (2/8) • How the TBL rules are learned – Three major stages 1. Label every word with its most likely tag using a set of tagging rules (use the broadest rules first) 2. Examine every possible transformation (rewrite rule), and select the one that results in the most improved tagging (supervised: the result is compared with the pre-tagged corpus) 3. Re-tag the data according to this rule – The above three stages are repeated until some stopping criterion is reached • Such as insufficient improvement over the previous pass – An ordered list of transformations (rules) is finally obtained

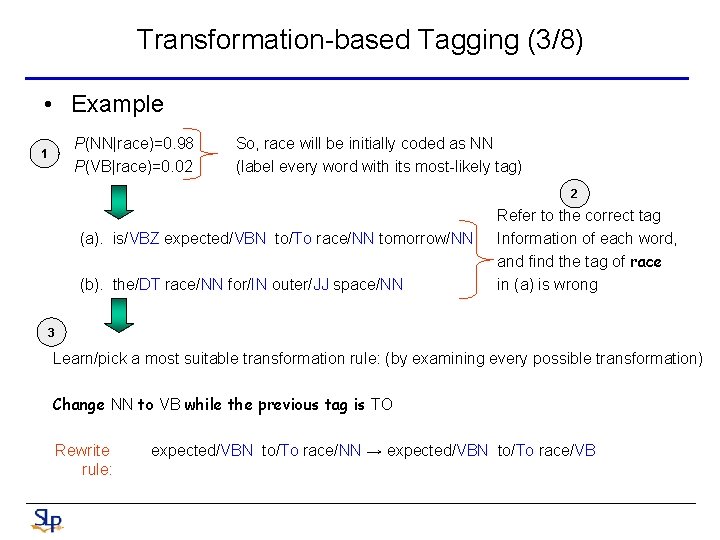

Transformation-based Tagging (3/8) • Example: P(NN|race) = 0.98, P(VB|race) = 0.02 1. Label every word with its most likely tag, so race is initially coded as NN 2. Refer to the correct tag information of each word: in (a) is/VBZ expected/VBN to/TO race/NN tomorrow/NN and (b) the/DT race/NN for/IN outer/JJ space/NN, the tag of race in (a) is wrong 3. Learn/pick the most suitable transformation rule (by examining every possible transformation): change NN to VB when the previous tag is TO – Rewrite rule: expected/VBN to/TO race/NN → expected/VBN to/TO race/VB
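Applying the learned rule "change NN to VB when the previous tag is TO" to the two example phrases can be sketched as follows. The function name `apply_rule` and the list-of-pairs representation are illustrative assumptions.

```python
def apply_rule(tagged, from_tag, to_tag, prev_tag):
    """Rewrite from_tag as to_tag wherever the preceding word carries prev_tag."""
    out = []
    for i, (word, tag) in enumerate(tagged):
        if tag == from_tag and i > 0 and tagged[i - 1][1] == prev_tag:
            tag = to_tag
        out.append((word, tag))
    return out

# Initial tagging: every word carries its most likely tag, so race is NN in both.
phrase_a = [("is", "VBZ"), ("expected", "VBN"), ("to", "TO"), ("race", "NN"), ("tomorrow", "NN")]
phrase_b = [("the", "DT"), ("race", "NN"), ("for", "IN"), ("outer", "JJ"), ("space", "NN")]

fixed_a = apply_rule(phrase_a, "NN", "VB", "TO")
fixed_b = apply_rule(phrase_b, "NN", "VB", "TO")
print(fixed_a)
print(fixed_b)
```

The rule corrects race in phrase (a) and leaves phrase (b) untouched, because there race follows a determiner rather than TO.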

Transformation-based Tagging (4/8) • Templates (abstracted transformations) – The set of possible transformations may be infinite • E.g., a strange rule like: transform NN to VB if the previous word was “IBM” and the word “the” occurs between 17 and 158 words before that – Should limit the set of transformations – The design of a small set of templates (abstracted transformations) is needed

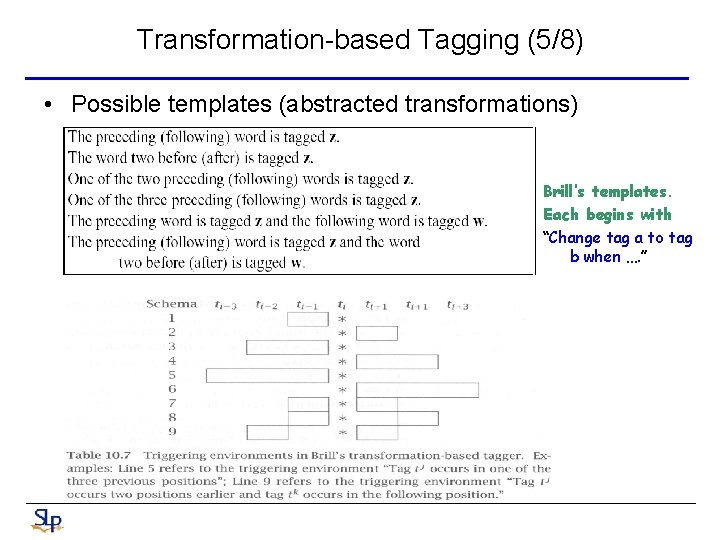

Transformation-based Tagging (5/8) • Possible templates (abstracted transformations) Brill’s templates. Each begins with “Change tag a to tag b when …. ”

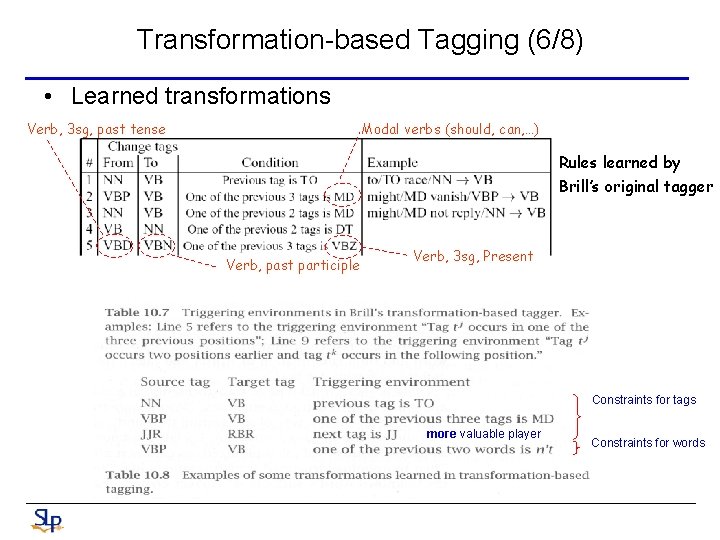

Transformation-based Tagging (6/8) • Learned transformations – Rules learned by Brill’s original tagger – Figure annotations: constraints for tags; constraints for words (e.g., “more valuable player”) – Tag glosses in the figure: verb, 3sg, past tense; verb, past participle; verb, 3sg, present; modal verbs (should, can, …)

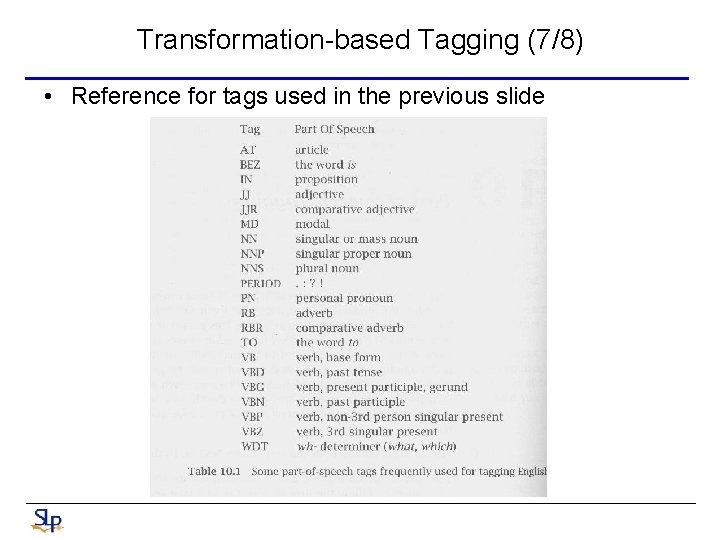

Transformation-based Tagging (7/8) • Reference for tags used in the previous slide

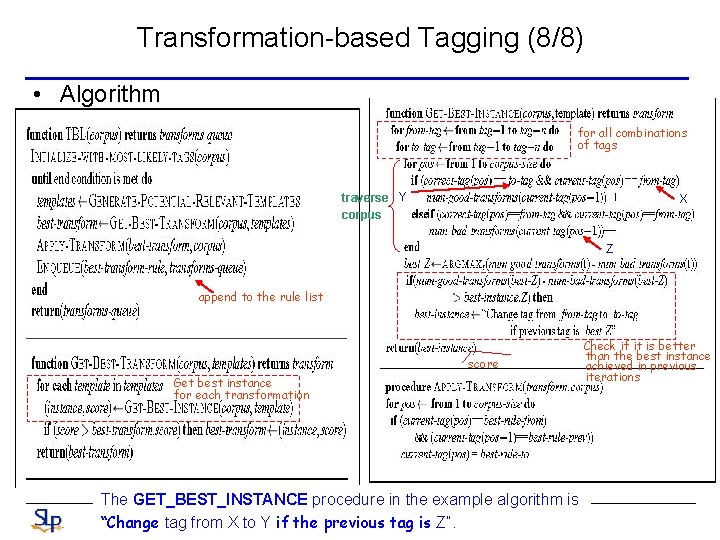

Transformation-based Tagging (8/8) • Algorithm – For all combinations of tags X, Y and Z, traverse the corpus and score the transformation – Get the best instance for each transformation and check whether it is better than the best instance achieved in previous iterations – Append the best transformation to the rule list • The template used by the GET_BEST_INSTANCE procedure in the example algorithm is “Change tag from X to Y if the previous tag is Z”
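The learning loop for the single template “Change tag from X to Y if the previous tag is Z” can be sketched as below. This is a simplified illustration, not Brill's implementation: the corpus is treated as one flat tag list (sentence boundaries ignored), the score is the net gain in correct tags, and the names `get_best_rule` and `learn_rules` are assumptions.

```python
from itertools import permutations

def apply_rule(tags, rule):
    """Apply (X, Y, Z): change X to Y wherever the previous tag is Z."""
    x, y, z = rule
    return [y if t == x and i > 0 and tags[i - 1] == z else t
            for i, t in enumerate(tags)]

def n_correct(tags, gold):
    return sum(t == g for t, g in zip(tags, gold))

def get_best_rule(current, gold, tagset):
    """Score every instantiation of the template and return the best one."""
    best_rule, best_gain = None, 0
    base = n_correct(current, gold)
    for x, y in permutations(tagset, 2):
        for z in tagset:
            gain = n_correct(apply_rule(current, (x, y, z)), gold) - base
            if gain > best_gain:
                best_rule, best_gain = (x, y, z), gain
    return best_rule, best_gain

def learn_rules(initial, gold, min_gain=1):
    """Greedily collect rules until no rule improves the tagging enough."""
    tagset = sorted(set(initial) | set(gold))
    current, rules = list(initial), []
    while True:
        rule, gain = get_best_rule(current, gold, tagset)
        if rule is None or gain < min_gain:
            break
        rules.append(rule)
        current = apply_rule(current, rule)
    return rules, current

# Tags for: is expected to race tomorrow / the race for outer space
initial = ["VBZ", "VBN", "TO", "NN", "NN", "DT", "NN", "IN", "JJ", "NN"]
gold = ["VBZ", "VBN", "TO", "VB", "NN", "DT", "NN", "IN", "JJ", "NN"]
rules, final = learn_rules(initial, gold)
print(rules)
```

The stopping criterion here is a minimum gain of one corrected tag per pass; a real tagger would use more templates and a threshold tuned to the corpus size.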

(II) Extractive Spoken Document Summarization - Models and Features

Introduction (1/3) • The World Wide Web has led to a renaissance in research on automatic document summarization, and has extended it to cover a wider range of new tasks • Speech is one of the most important sources of information about multimedia content • However, spoken documents associated with multimedia are unstructured, without titles and paragraphs, and thus are difficult to retrieve and browse – Spoken documents are merely audio/video signals or very long sequences of transcribed words that include errors – It is inconvenient and inefficient for users to browse through each of them from beginning to end

Introduction (2/3) • Spoken document summarization, which aims to generate a summary automatically for spoken documents, is the key to better speech understanding and organization • Extractive vs. Abstractive Summarization – Extractive summarization selects a number of indicative sentences or paragraphs from the original document and sequences them to form a summary – Abstractive summarization rewrites a concise abstract that reflects the key concepts of the document – Extractive summarization has gained much more attention in the recent past

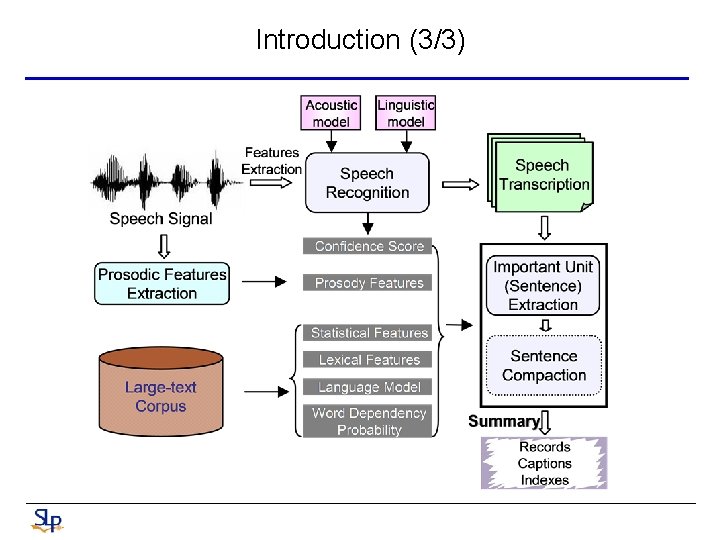

Introduction (3/3)

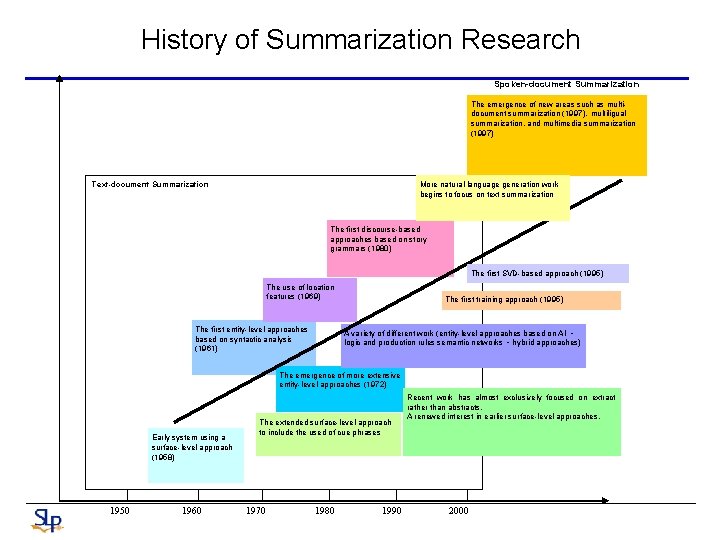

History of Summarization Research • Timeline from 1950 to 2000 (figure labels: text-document summarization, spoken-document summarization)
– 1958: Early system using a surface-level approach
– 1961: The first entity-level approaches based on syntactic analysis
– Between 1960 and 1970: The extended surface-level approach to include the use of cue phrases
– 1969: The use of location features
– 1972: The emergence of more extensive entity-level approaches
– 1980: The first discourse-based approaches based on story grammars
– 1995: The first training approach; the first SVD-based approach
– 1997: The emergence of new areas such as multidocument summarization (1997), multilingual summarization, and multimedia summarization (1997)
– Undated: A variety of different work (entity-level approaches based on AI, logic and production rules, semantic networks, hybrid approaches); more natural language generation work begins to focus on text summarization
– 1990s onward: Recent work has almost exclusively focused on extracts rather than abstracts, with a renewed interest in earlier surface-level approaches

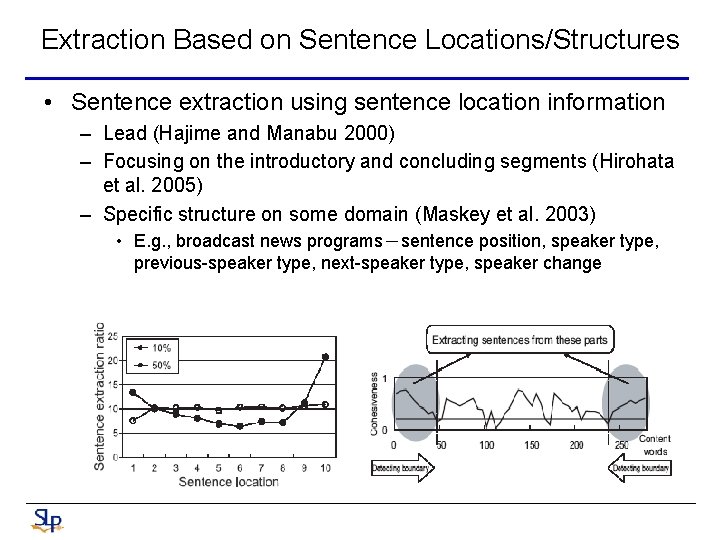

Extraction Based on Sentence Locations/Structures • Sentence extraction using sentence location information – Lead (Hajime and Manabu 2000) – Focusing on the introductory and concluding segments (Hirohata et al. 2005) – Specific structure of some domains (Maskey et al. 2003) • E.g., for broadcast news programs: sentence position, speaker type, previous-speaker type, next-speaker type, speaker change

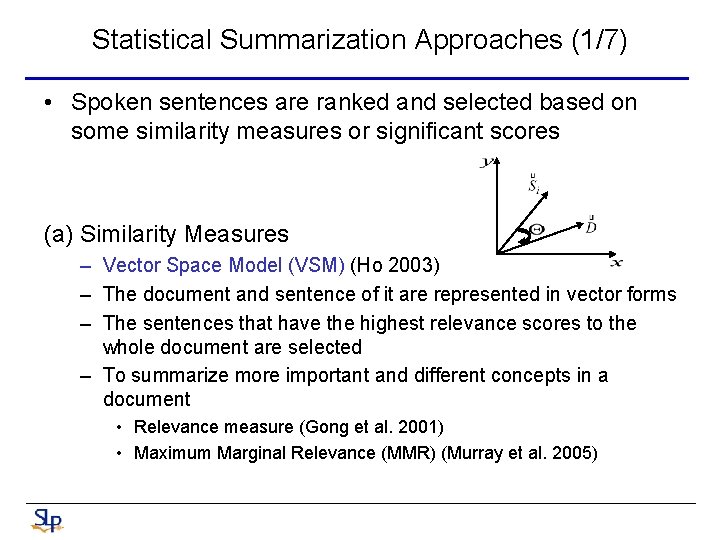

Statistical Summarization Approaches (1/7) • Spoken sentences are ranked and selected based on some similarity measures or significance scores (a) Similarity Measures – Vector Space Model (VSM) (Ho 2003) • The document and each of its sentences are represented as vectors • The sentences that have the highest relevance scores to the whole document are selected – To summarize more important and different concepts in a document • Relevance measure (Gong et al. 2001) • Maximum Marginal Relevance (MMR) (Murray et al. 2005)

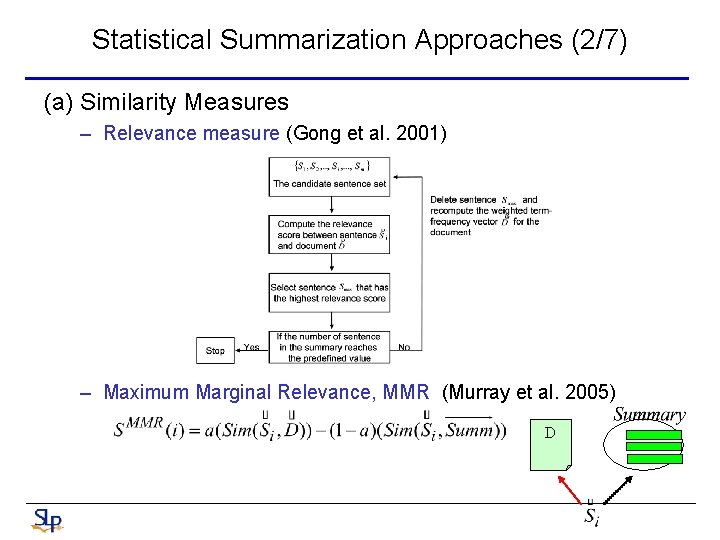
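VSM-based selection can be sketched as ranking sentences by cosine similarity to the whole document. The sketch assumes raw term-frequency weights (TF-IDF or other weightings could be substituted) and a made-up three-sentence document; the function names are illustrative.

```python
import math
from collections import Counter

def cosine(a, b):
    """Cosine similarity between two sparse term-weight vectors."""
    dot = sum(weight * b[term] for term, weight in a.items() if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def rank_sentences(sentences):
    """Return sentence indices sorted by similarity to the document vector."""
    doc_vec = Counter(word for sent in sentences for word in sent)
    scored = [(cosine(Counter(sent), doc_vec), i) for i, sent in enumerate(sentences)]
    return [i for _, i in sorted(scored, key=lambda pair: (-pair[0], pair[1]))]

# Hypothetical document, already split into tokenized sentences.
toy_doc = [
    ["stocks", "fell", "sharply"],
    ["stocks", "rose"],
    ["weather", "sunny"],
]
vsm_ranking = rank_sentences(toy_doc)
print(vsm_ranking)
```

Sentences sharing the document's frequent terms score highest, so the off-topic weather sentence is ranked last.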

Statistical Summarization Approaches (2/7) (a) Similarity Measures – Relevance measure (Gong et al. 2001) – Maximum Marginal Relevance, MMR (Murray et al. 2005)

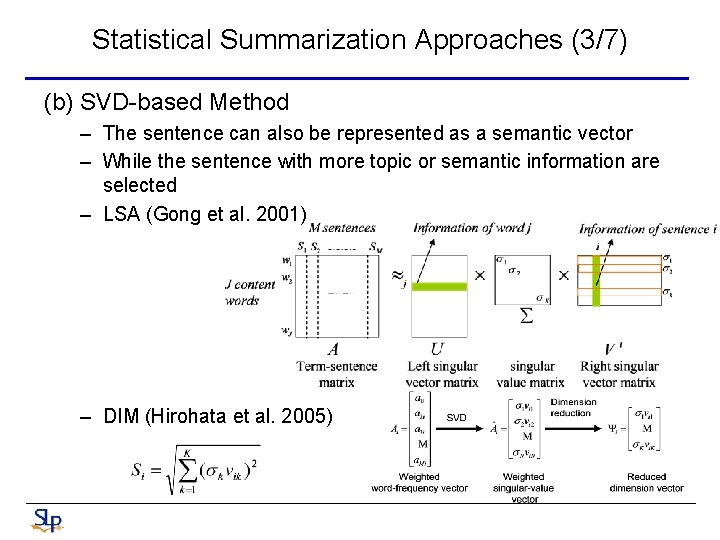
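MMR trades relevance to the document against redundancy with sentences already selected. The sketch below uses the usual formulation, score = λ · sim(sentence, document) − (1 − λ) · max sim(sentence, selected), with cosine similarity over raw term counts; the value of λ, the toy document and the function names are assumptions.

```python
import math
from collections import Counter

def cosine(a, b):
    dot = sum(weight * b[term] for term, weight in a.items() if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def mmr_select(sentences, k, lam=0.5):
    """Greedily pick k sentence indices by Maximum Marginal Relevance."""
    doc_vec = Counter(word for sent in sentences for word in sent)
    vecs = [Counter(sent) for sent in sentences]
    selected, remaining = [], list(range(len(sentences)))

    def score(i):
        # Redundancy is the similarity to the closest already selected sentence.
        redundancy = max((cosine(vecs[i], vecs[j]) for j in selected), default=0.0)
        return lam * cosine(vecs[i], doc_vec) - (1 - lam) * redundancy

    while remaining and len(selected) < k:
        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected

# Hypothetical document: the first two sentences are near-duplicates.
toy_doc = [
    ["stocks", "fell", "sharply"],
    ["stocks", "fell", "sharply", "today"],
    ["weather", "sunny"],
]
print(mmr_select(toy_doc, k=2, lam=0.5))  # redundancy penalty active
print(mmr_select(toy_doc, k=2, lam=1.0))  # pure relevance ranking
```

With λ = 1 the two near-duplicate sentences are both chosen; lowering λ makes the second pick a sentence that covers a different concept.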

Statistical Summarization Approaches (3/7) (b) SVD-based Method – Each sentence can also be represented as a semantic vector – Sentences carrying more topical or semantic information are selected – LSA (Gong et al. 2001) – DIM (Hirohata et al. 2005)

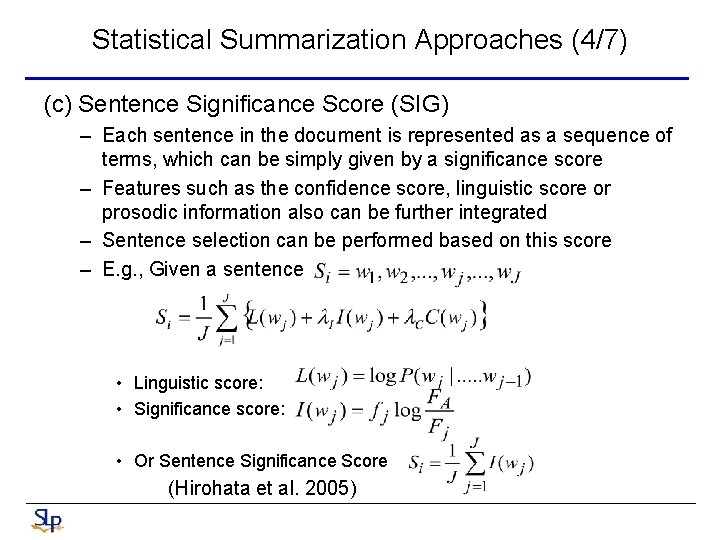

Statistical Summarization Approaches (4/7) (c) Sentence Significance Score (SIG) – Each sentence in the document is represented as a sequence of terms, each of which can simply be given a significance score – Features such as the confidence score, linguistic score or prosodic information can also be integrated – Sentence selection can be performed based on this score – E.g., given a sentence, the figure defines • a linguistic score • a significance score • or the Sentence Significance Score (Hirohata et al. 2005)

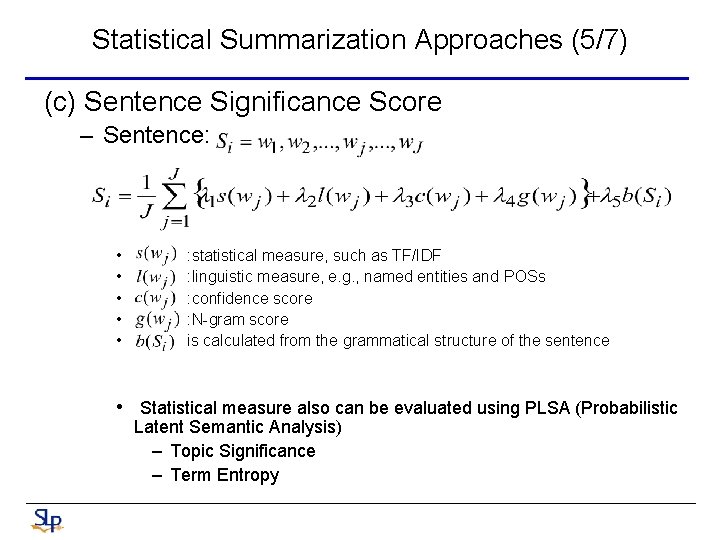

Statistical Summarization Approaches (5/7) (c) Sentence Significance Score – The score of a sentence combines the following measures (symbols as defined in the figure) • A statistical measure, such as TF/IDF • A linguistic measure, e.g., named entities and POSs • A confidence score • An N-gram score, calculated from the grammatical structure of the sentence • The statistical measure can also be evaluated using PLSA (Probabilistic Latent Semantic Analysis) – Topic Significance – Term Entropy

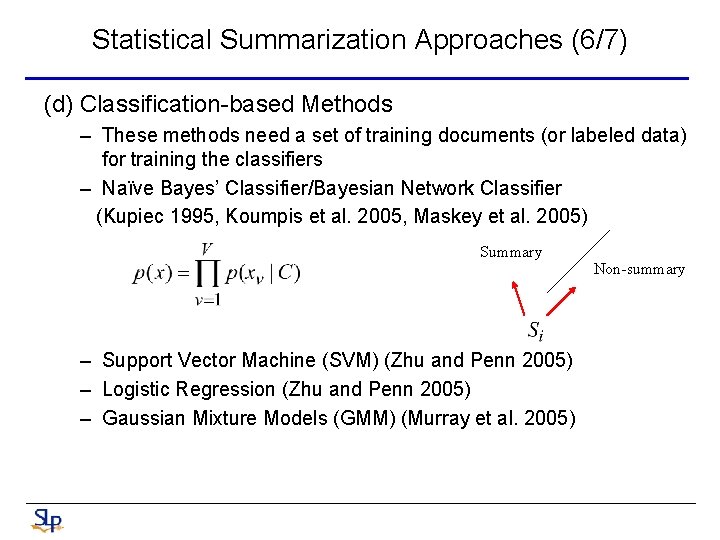

Statistical Summarization Approaches (6/7) (d) Classification-based Methods – These methods need a set of training documents (labeled data) for training classifiers that label each sentence as summary or non-summary – Naïve Bayes Classifier/Bayesian Network Classifier (Kupiec 1995, Koumpis et al. 2005, Maskey et al. 2005) – Support Vector Machine (SVM) (Zhu and Penn 2005) – Logistic Regression (Zhu and Penn 2005) – Gaussian Mixture Models (GMM) (Murray et al. 2005)

Statistical Summarization Approaches (7/7) (e) Combined Methods (Hirohata et al. 2005) – Sentence Significance Score (SIG) combined with Location Information – Latent semantic analysis (LSA) combined with Location Information – DIM combined with Location Information

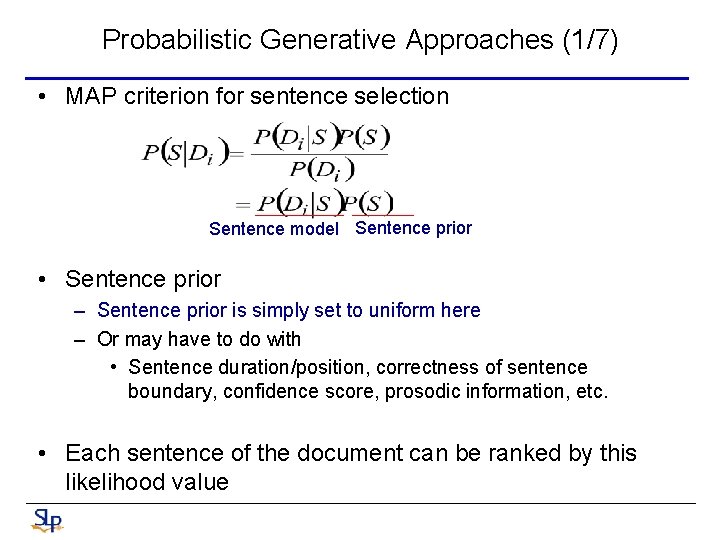

Probabilistic Generative Approaches (1/7) • MAP criterion for sentence selection: $P(S_i \mid D) = \frac{P(D \mid S_i)\, P(S_i)}{P(D)} \propto P(D \mid S_i)\, P(S_i)$, where $P(D \mid S_i)$ is the sentence model and $P(S_i)$ is the sentence prior • Sentence prior – The sentence prior is simply set to uniform here – Or it may depend on • Sentence duration/position, correctness of sentence boundary, confidence score, prosodic information, etc. • Each sentence of the document can be ranked by this likelihood value

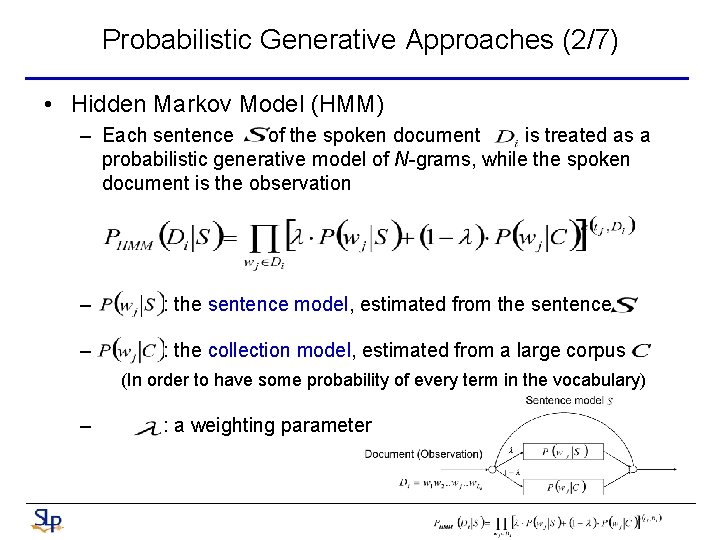

Probabilistic Generative Approaches (2/7) • Hidden Markov Model (HMM) – Each sentence of the spoken document is treated as a probabilistic generative model of N-grams, while the spoken document is the observation – In the unigram case: $P(D \mid S_i) = \prod_{w \in D} \left[ \lambda\, P(w \mid S_i) + (1 - \lambda)\, P(w \mid C) \right]^{c(w, D)}$ – $P(w \mid S_i)$: the sentence model, estimated from the sentence – $P(w \mid C)$: the collection model, estimated from a large corpus (in order to have some probability for every term in the vocabulary) – $\lambda$: a weighting parameter
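Ranking sentences by how well they generate the document can be sketched with a unigram sentence model interpolated with a collection model. The interpolation weight, the toy document, and the use of the document itself as a stand-in for a large collection are assumptions made only to keep the example self-contained.

```python
import math
from collections import Counter

def doc_log_likelihood(doc_words, sentence, collection_counts, lam=0.5):
    """log P(D | S) with P(w) = lam * P(w | S) + (1 - lam) * P(w | collection)."""
    sent_counts = Counter(sentence)
    coll_total = sum(collection_counts.values())
    log_likelihood = 0.0
    for word, count in Counter(doc_words).items():
        p_sentence = sent_counts[word] / len(sentence)
        p_collection = collection_counts[word] / coll_total
        # The collection model keeps the mixture positive for words
        # that the sentence itself does not contain.
        log_likelihood += count * math.log(lam * p_sentence + (1 - lam) * p_collection)
    return log_likelihood

def rank_by_likelihood(sentences, collection_counts, lam=0.5):
    doc_words = [word for sent in sentences for word in sent]
    scores = [doc_log_likelihood(doc_words, s, collection_counts, lam) for s in sentences]
    return sorted(range(len(sentences)), key=lambda i: -scores[i])

# Hypothetical document; the collection model is a stand-in built from it.
toy_doc = [
    ["stocks", "fell", "sharply"],
    ["stocks", "rose"],
    ["weather", "sunny"],
]
collection = Counter(word for sent in toy_doc for word in sent)
hmm_ranking = rank_by_likelihood(toy_doc, collection)
print(hmm_ranking)
```

With a uniform sentence prior, this ranking by document likelihood is also the MAP ranking.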

Probabilistic Generative Approaches (3/7) • Relevance Model (RM) – In the HMM, the true sentence model might not be accurately estimated (by MLE) • Since a sentence consists of only a few terms – In order to improve the estimation of the sentence model • Each sentence has its own associated relevance model, constructed from the subset of documents in the collection that are relevant to the sentence • The relevance model is then linearly combined with the original sentence model to form a more accurate sentence model

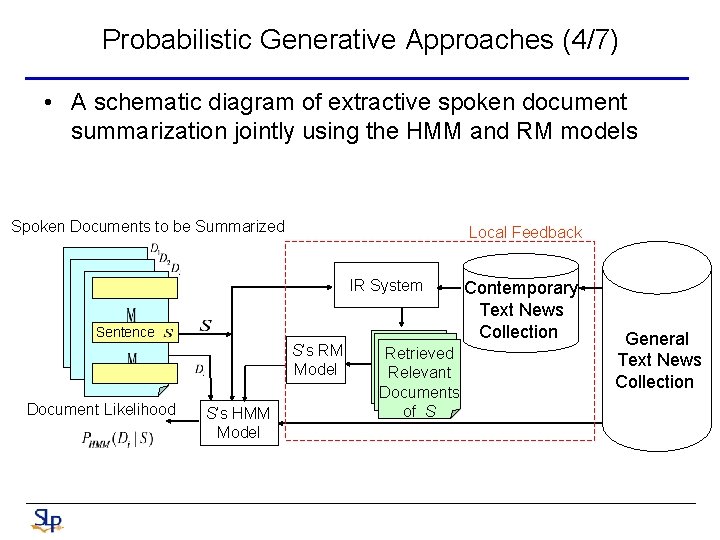

Probabilistic Generative Approaches (4/7) • A schematic diagram of extractive spoken document summarization jointly using the HMM and RM models (figure components: spoken documents to be summarized; sentence S; S’s HMM model; S’s RM model; document likelihood; IR system; local feedback; retrieved relevant documents of S; contemporary text news collection; general text news collection)

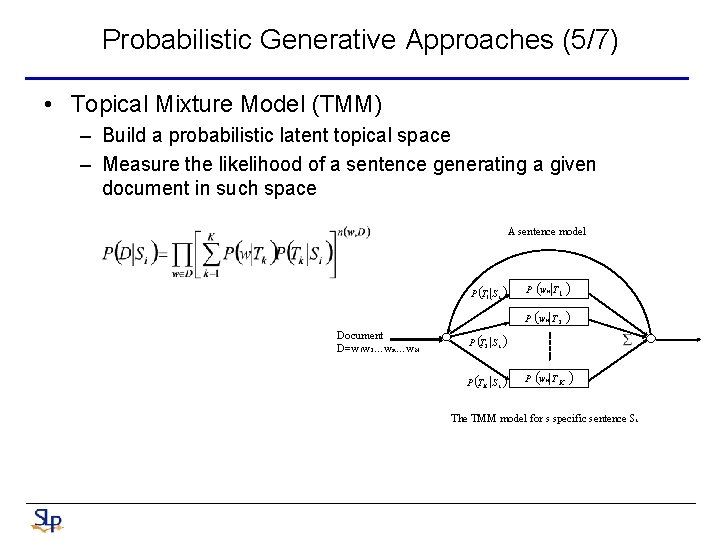

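Probabilistic Generative Approaches (5/7) • Topical Mixture Model (TMM) – Build a probabilistic latent topical space – Measure the likelihood of a sentence generating a given document in such a space • The TMM model for a specific sentence $S_i$ – Document: $D = w_1 w_2 \ldots w_n \ldots w_N$ – The sentence model has topic weights $P(T_1 \mid S_i), P(T_2 \mid S_i), \ldots, P(T_K \mid S_i)$ and topic word distributions $P(w_n \mid T_1), P(w_n \mid T_2), \ldots, P(w_n \mid T_K)$ – $P(D \mid S_i) = \prod_{n=1}^{N} \sum_{k=1}^{K} P(w_n \mid T_k)\, P(T_k \mid S_i)$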

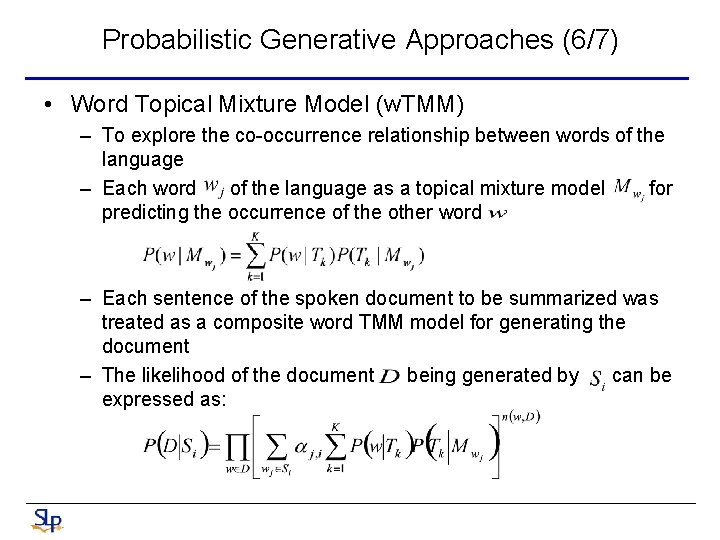

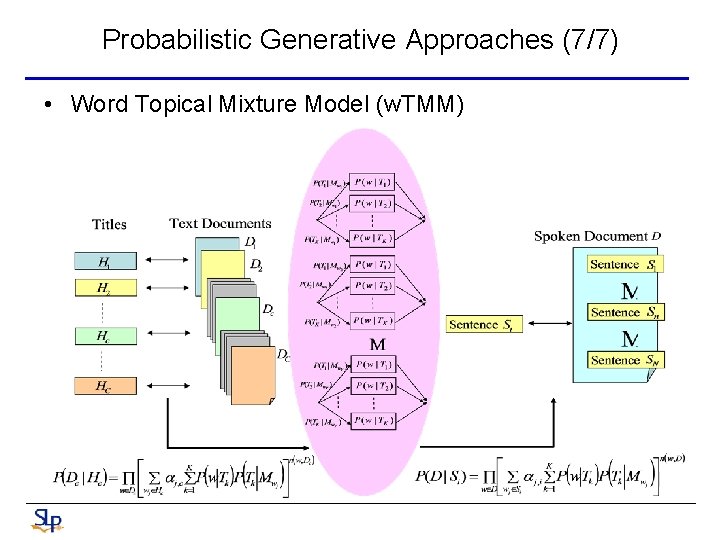

Probabilistic Generative Approaches (6/7) • Word Topical Mixture Model (wTMM) – To explore the co-occurrence relationship between words of the language – Each word of the language is treated as a topical mixture model for predicting the occurrence of other words – Each sentence of the spoken document to be summarized is treated as a composite word TMM model for generating the document – The likelihood of the document being generated by the sentence can be expressed as:

Probabilistic Generative Approaches (7/7) • Word Topical Mixture Model (wTMM)

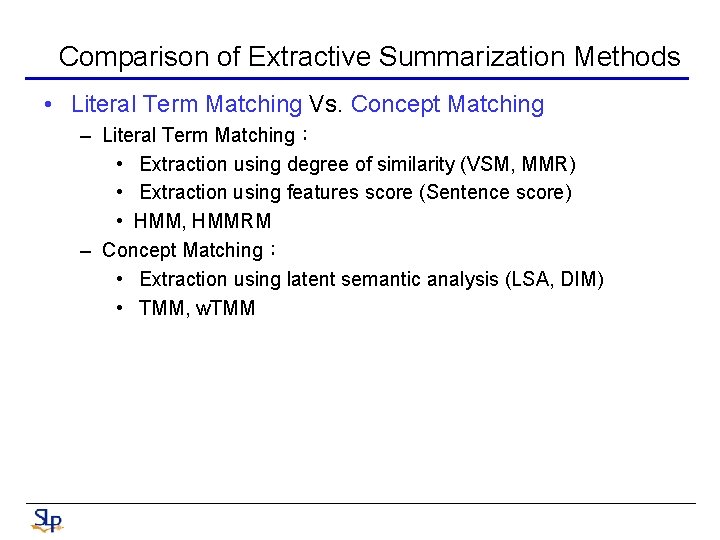

Comparison of Extractive Summarization Methods • Literal Term Matching vs. Concept Matching – Literal Term Matching: • Extraction using degree of similarity (VSM, MMR) • Extraction using feature scores (sentence score) • HMM, HMM+RM – Concept Matching: • Extraction using latent semantic analysis (LSA, DIM) • TMM, wTMM

Evaluation Metrics (1/3) • Subjective Evaluation Metrics (Direct evaluation) – Conducted by human subjects – Different levels • Objective Evaluation Metrics – Automatic summaries were evaluated by objective metrics • Automatic Evaluation – Summaries are evaluated by IR

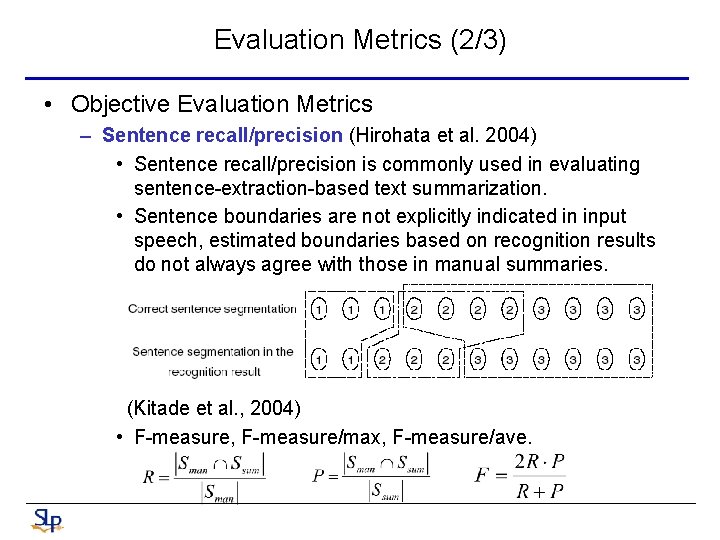

Evaluation Metrics (2/3) • Objective Evaluation Metrics – Sentence recall/precision (Hirohata et al. 2004) • Sentence recall/precision is commonly used in evaluating sentence-extraction-based text summarization • Since sentence boundaries are not explicitly indicated in input speech, estimated boundaries based on recognition results do not always agree with those in manual summaries (Kitade et al., 2004) • F-measure, F-measure/max, F-measure/ave.

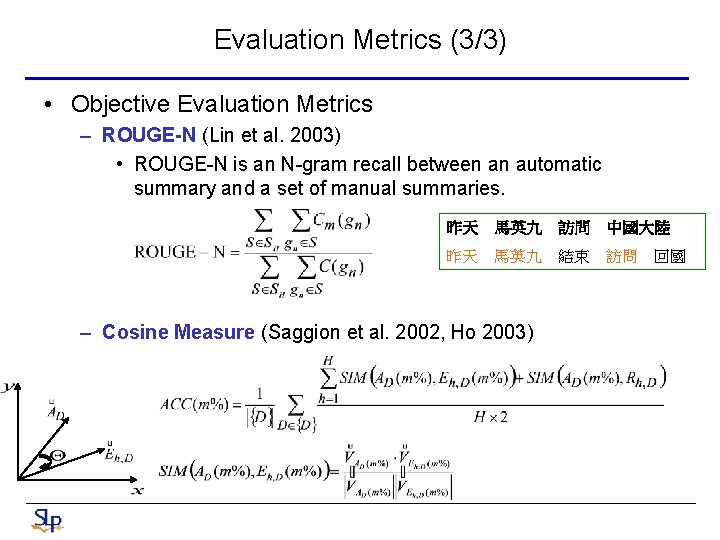

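Evaluation Metrics (3/3) • Objective Evaluation Metrics – ROUGE-N (Lin et al. 2003) • ROUGE-N is an N-gram recall between an automatic summary and a set of manual summaries • Example word sequences (translated word by word from Chinese): “yesterday / Ma Ying-jeou / visit / mainland China” and “yesterday / Ma Ying-jeou / conclude / visit / return home” – Cosine Measure (Saggion et al. 2002, Ho 2003)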
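ROUGE-N as an N-gram recall can be sketched as the number of reference N-grams that also occur in the automatic summary (with clipped counts), divided by the total number of reference N-grams. Which of the two example word sequences is the automatic summary is not stated on the slide, so the assignment below is an assumption, as are the function names.

```python
from collections import Counter

def ngrams(tokens, n):
    """Multiset of the n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def rouge_n(candidate, references, n):
    """N-gram recall of the candidate against a set of reference summaries."""
    cand_counts = ngrams(candidate, n)
    matched = total = 0
    for ref in references:
        ref_counts = ngrams(ref, n)
        total += sum(ref_counts.values())
        # Clip each match by how often the n-gram occurs in the candidate.
        matched += sum(min(count, cand_counts[gram]) for gram, count in ref_counts.items())
    return matched / total if total else 0.0

# Assumed roles: first sequence = automatic summary, second = manual summary.
automatic = ["yesterday", "Ma Ying-jeou", "visit", "mainland China"]
manual = ["yesterday", "Ma Ying-jeou", "conclude", "visit", "return home"]

rouge_1 = rouge_n(automatic, [manual], 1)
rouge_2 = rouge_n(automatic, [manual], 2)
print(rouge_1, rouge_2)
```

Three of the five reference unigrams and one of the four reference bigrams are matched, which shows how quickly the score drops as N grows.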

HW: Document Summarization

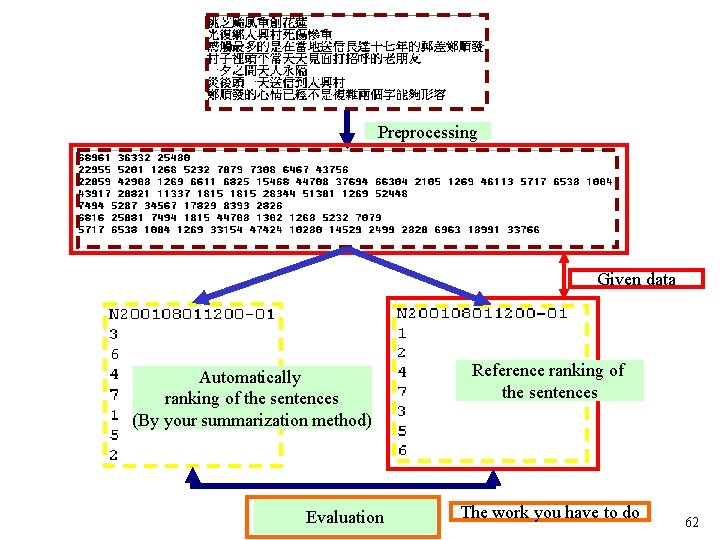

Homework workflow • Given data → preprocessing → automatic ranking of the sentences (by your summarization method) → evaluation against the reference ranking of the sentences (figure label: the work you have to do)

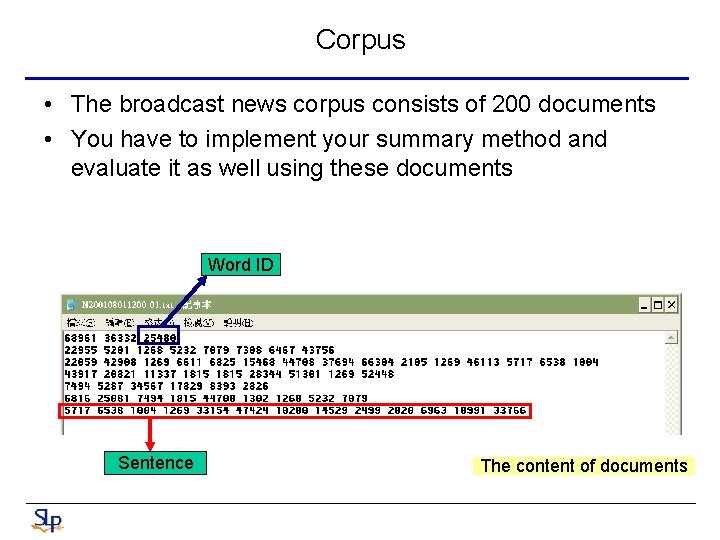

Corpus • The broadcast news corpus consists of 200 documents • You have to implement your summarization method and evaluate it using these documents (figure labels: the content of documents, sentence, word ID)

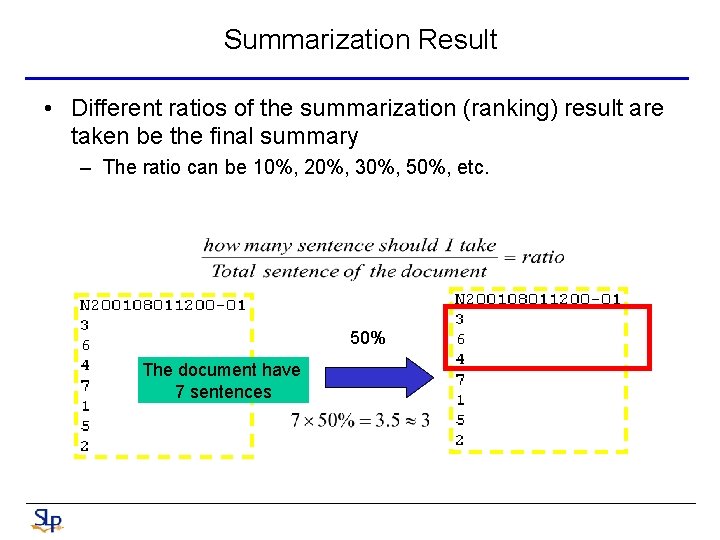

Summarization Result • Different ratios of the summarization (ranking) result are taken to be the final summary – The ratio can be 10%, 20%, 30%, 50%, etc. • Figure example: a 50% summary of a document that has 7 sentences

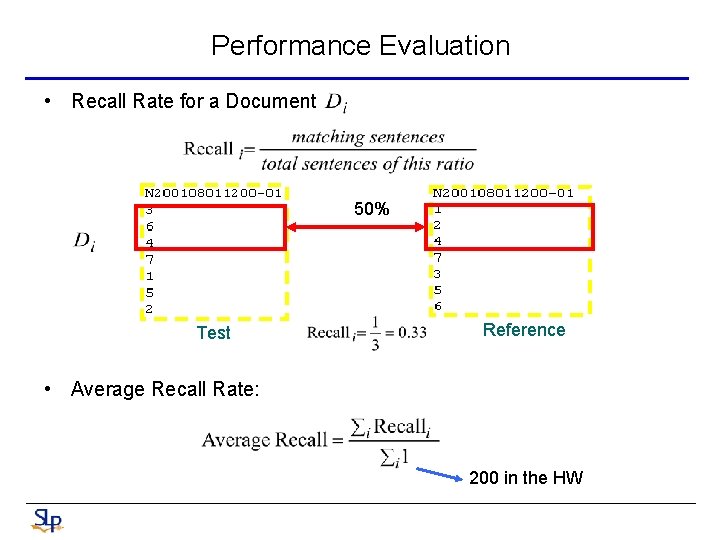

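Performance Evaluation • Recall rate for a document: compare the test (automatic) summary with the reference summary at a given ratio, e.g., 50% • Average recall rate: averaged over all documents (200 in the HW)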
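The recall computation can be sketched as follows, assuming that a summary at a given ratio consists of the top-ranked sentences, that the number of sentences is rounded up, and that recall is the share of reference-summary sentences also found in the test summary. The rounding rule, the toy rankings and the function names are assumptions; the homework's exact definition is given in the figure.

```python
import math

def top_ratio(ranking, ratio):
    """Sentence IDs forming the summary at the given ratio (rounded up)."""
    k = max(1, math.ceil(ratio * len(ranking)))
    return set(ranking[:k])

def recall_rate(test_ranking, ref_ranking, ratio):
    """Share of reference-summary sentences that the test summary contains."""
    test = top_ratio(test_ranking, ratio)
    ref = top_ratio(ref_ranking, ratio)
    return len(test & ref) / len(ref)

def average_recall(ranking_pairs, ratio):
    """Mean recall over (test_ranking, ref_ranking) pairs, one per document."""
    return sum(recall_rate(t, r, ratio) for t, r in ranking_pairs) / len(ranking_pairs)

# Hypothetical rankings for one document with 7 sentences.
test_ranking = [3, 1, 5, 2, 7, 4, 6]
ref_ranking = [1, 3, 2, 6, 5, 7, 4]
recall_50 = recall_rate(test_ranking, ref_ranking, 0.5)
print(recall_50)
```

At 50% both summaries hold four sentences, three of which coincide; averaging this value over all documents gives the average recall rate.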
