NATIONAL ENERGY RESEARCH SCIENTIFIC COMPUTING CENTER

Libraries and Their Performance

Frank V. Hale
Thomas M. DeBoni
NERSC User Services Group

March 17, 2003

Part I: Single Node Performance Measurement

• Use of hpmcount to measure the performance of the total code
• Use of HPM Toolkit to measure the performance of code sections
• Vector operations generally give better performance than scalar (indexed) operations
• Shared-memory (SMP) parallelism can be very effective and easy to use

Demonstration Problem

• Estimate π from random points in the unit square: the fraction of points that fall inside the inscribed circle (radius 0.5, centered at (0.5, 0.5)) approaches π/4, so π ≈ 4 × (points inside the circle) / (total points)
• Use an input file holding a sequence of 134,217,728 uniformly distributed random numbers in the range 0-1, stored as unformatted 8-byte floating-point numbers (1 gigabyte of data)

A first Fortran code

% cat estpi1.f
      implicit none
      integer i, points, circle
      real*8 x, y
      read(*,*)points
      open(10, file="runiform1.dat", status="old", form="unformatted")
      circle = 0
c     repeat for each (x,y) data point: read and compute
      do i=1,points
        read(10)x
        read(10)y
        if (sqrt((x-0.5)**2 + (y-0.5)**2) .le. 0.5) circle = circle + 1
      enddo
      write(*,*)"Estimated pi using ", points, " points as ",
     .     ((4.*circle)/points)
      end

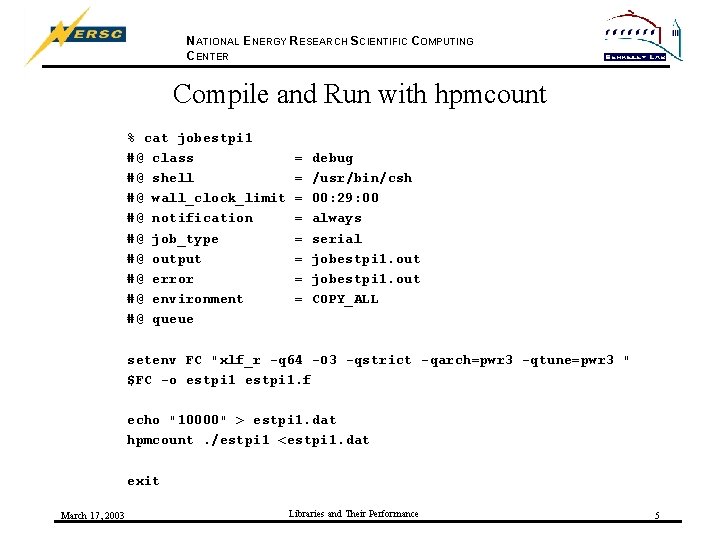

Compile and Run with hpmcount

% cat jobestpi1
#@ class            = debug
#@ shell            = /usr/bin/csh
#@ wall_clock_limit = 00:29:00
#@ notification     = always
#@ job_type         = serial
#@ output           = jobestpi1.out
#@ error            = jobestpi1.out
#@ environment      = COPY_ALL
#@ queue
setenv FC "xlf_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 "
$FC -o estpi1 estpi1.f
echo "10000" > estpi1.dat
hpmcount ./estpi1 <estpi1.dat
exit

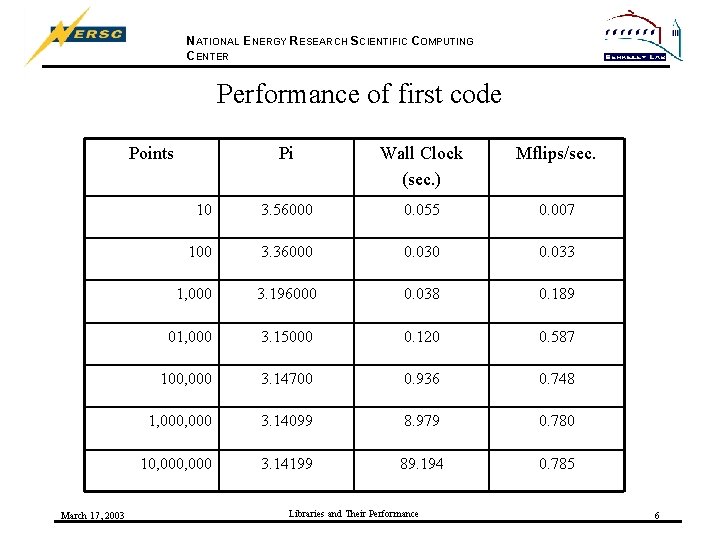

Performance of first code

| Points | Pi | Wall clock (sec.) | Mflip/s |
|---:|---:|---:|---:|
| 10 | 3.56000 | 0.055 | 0.007 |
| 100 | 3.36000 | 0.033 | |
| 1,000 | 3.19600 | 0.038 | 0.189 |
| 10,000 | 3.15000 | 0.120 | 0.587 |
| 100,000 | 3.14700 | 0.936 | 0.748 |
| 1,000,000 | 3.14099 | 8.979 | 0.780 |
| 10,000,000 | 3.14199 | 89.194 | 0.785 |

Performance of first code (figure)

Some Observations

• Performance is very poor: less than 1 Mflip/s (peak is 1,500 Mflip/s per processor)
• Scalar approach to computation
• Scalar I/O mixed with scalar computation

Suggestions:
– Separate I/O from computation
– Use vector operations on dynamically allocated vector data structures

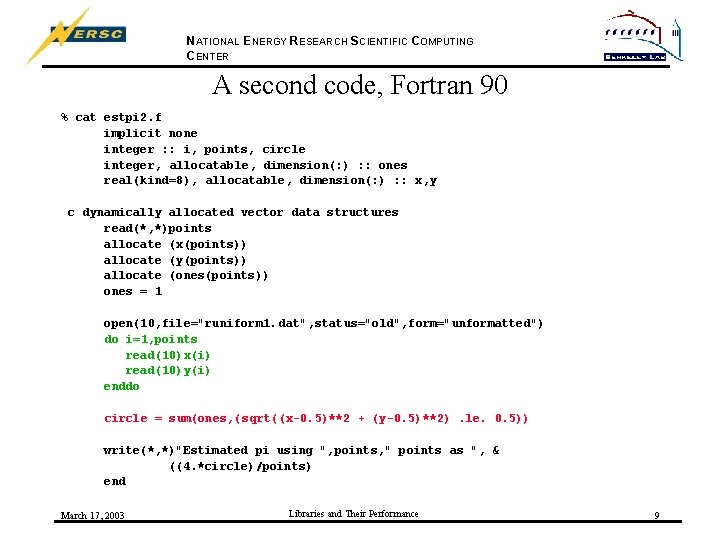

A second code, Fortran 90

% cat estpi2.f
      implicit none
      integer :: i, points, circle
      integer, allocatable, dimension(:) :: ones
      real(kind=8), allocatable, dimension(:) :: x, y
c     dynamically allocated vector data structures
      read(*,*)points
      allocate (x(points))
      allocate (y(points))
      allocate (ones(points))
      ones = 1
      open(10, file="runiform1.dat", status="old", form="unformatted")
      do i=1,points
        read(10)x(i)
        read(10)y(i)
      enddo
      circle = sum(ones, (sqrt((x-0.5)**2 + (y-0.5)**2) .le. 0.5))
      write(*,*)"Estimated pi using ", points, " points as ",
     &     ((4.*circle)/points)
      end
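
For comparison, a minimal standard-Fortran sketch of the same estimate (the module and function names are hypothetical, not taken from the original codes). The COUNT intrinsic tallies the true elements of a logical mask directly, so the auxiliary ones array used with sum(ones, mask) is unnecessary, and comparing the squared distance with 0.25 gives the equivalent test without a SQRT. This variant was not timed on seaborg, so any speed advantage is an assumption.

```fortran
module pi_estimate
  implicit none
  integer, parameter :: dp = kind(1.0d0)
contains
  ! Estimate pi from sample points (x(i), y(i)) uniform in the unit square:
  ! pi ~ 4 * (points inside the circle of radius 0.5 centred at (0.5, 0.5)) / n
  function estimate_pi(x, y) result(pi_est)
    real(dp), intent(in) :: x(:), y(:)
    real(dp) :: pi_est
    integer :: inside
    ! COUNT replaces sum(ones, mask); 0.25 = 0.5**2 replaces the sqrt test
    inside = count((x - 0.5_dp)**2 + (y - 0.5_dp)**2 <= 0.25_dp)
    pi_est = 4.0_dp * real(inside, dp) / real(size(x), dp)
  end function estimate_pi
end module pi_estimate
```

Called as estimate_pi(x, y) after the read loop, it would replace both the ones array and the sum(ones, ...) line.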

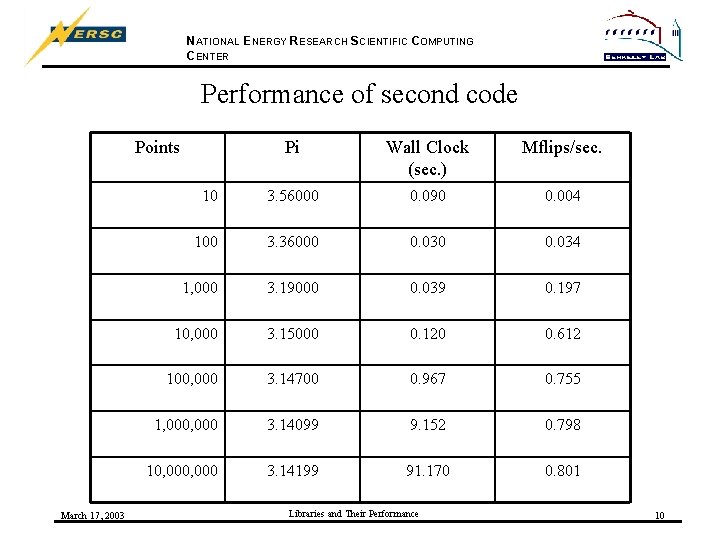

Performance of second code

| Points | Pi | Wall clock (sec.) | Mflip/s |
|---:|---:|---:|---:|
| 10 | 3.56000 | 0.090 | 0.004 |
| 100 | 3.36000 | 0.034 | |
| 1,000 | 3.19000 | 0.039 | 0.197 |
| 10,000 | 3.15000 | 0.120 | 0.612 |
| 100,000 | 3.14700 | 0.967 | 0.755 |
| 1,000,000 | 3.14099 | 9.152 | 0.798 |
| 10,000,000 | 3.14199 | 91.170 | 0.801 |

Performance of second code (figure)

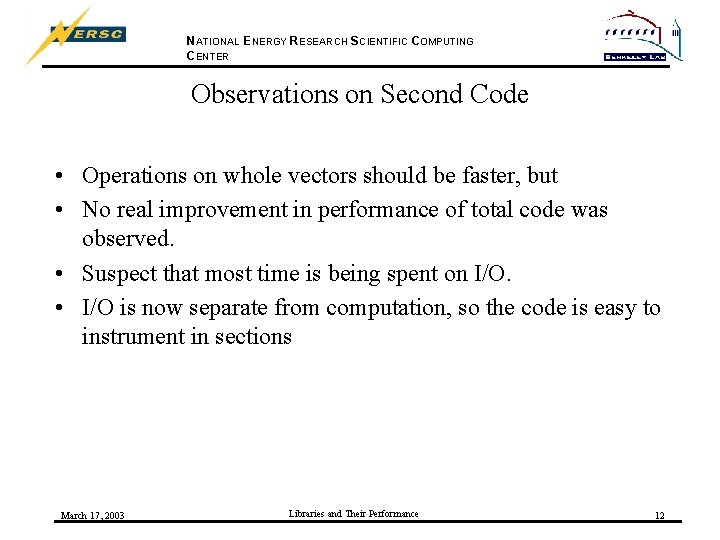

Observations on Second Code

• Operations on whole vectors should be faster, but
• No real improvement in the performance of the total code was observed
• Suspect that most of the time is being spent on I/O
• I/O is now separate from computation, so the code is easy to instrument in sections

Instrument code sections with HPM Toolkit

Four sections to be measured separately:
• Data structure initialization
• Read data
• Estimate π
• Write output

Calls to f_hpmstart and f_hpmstop are placed around each section.
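
HPM Toolkit reads hardware counters, which portable Fortran cannot do. The sketch below only illustrates the same start/stop bracketing pattern with the standard SYSTEM_CLOCK intrinsic, so it reports wall-clock seconds and no Mflip/s figures. The module and routine names are hypothetical and are not part of HPM Toolkit.

```fortran
module section_timer
  implicit none
  integer, parameter :: i8 = selected_int_kind(18)
  integer, parameter :: dp = kind(1.0d0)
  integer, parameter :: max_sections = 8
  ! Clock tick at the latest timer_start, and accumulated seconds, per section
  integer(i8) :: t_start(max_sections) = 0_i8
  real(dp)    :: elapsed(max_sections) = 0.0_dp
contains
  ! Plays the role of f_hpmstart(id, ...): remember when section id began
  subroutine timer_start(id)
    integer, intent(in) :: id
    call system_clock(count=t_start(id))
  end subroutine timer_start

  ! Plays the role of f_hpmstop(id): add the wall-clock time since timer_start(id)
  subroutine timer_stop(id)
    integer, intent(in) :: id
    integer(i8) :: t_now, rate
    call system_clock(count=t_now, count_rate=rate)
    elapsed(id) = elapsed(id) + real(t_now - t_start(id), dp) / real(rate, dp)
  end subroutine timer_stop
end module section_timer
```

A section is bracketed as call timer_start(2) ... call timer_stop(2), after which elapsed(2) holds its accumulated seconds.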

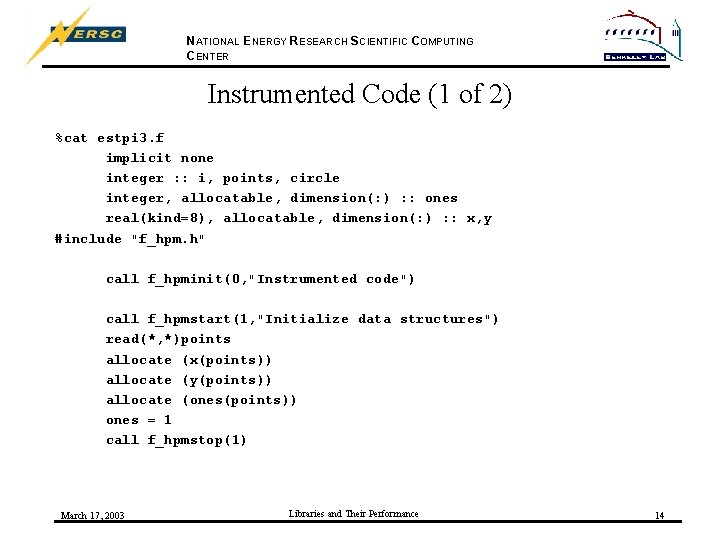

Instrumented Code (1 of 2)

% cat estpi3.f
      implicit none
      integer :: i, points, circle
      integer, allocatable, dimension(:) :: ones
      real(kind=8), allocatable, dimension(:) :: x, y
#include "f_hpm.h"
      call f_hpminit(0, "Instrumented code")
      call f_hpmstart(1, "Initialize data structures")
      read(*,*)points
      allocate (x(points))
      allocate (y(points))
      allocate (ones(points))
      ones = 1
      call f_hpmstop(1)

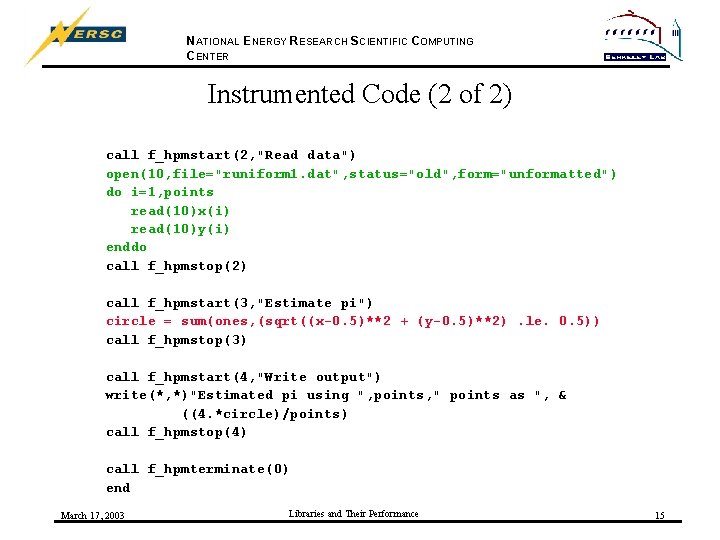

Instrumented Code (2 of 2)

      call f_hpmstart(2, "Read data")
      open(10, file="runiform1.dat", status="old", form="unformatted")
      do i=1,points
        read(10)x(i)
        read(10)y(i)
      enddo
      call f_hpmstop(2)
      call f_hpmstart(3, "Estimate pi")
      circle = sum(ones, (sqrt((x-0.5)**2 + (y-0.5)**2) .le. 0.5))
      call f_hpmstop(3)
      call f_hpmstart(4, "Write output")
      write(*,*)"Estimated pi using ", points, " points as ", &
        ((4.*circle)/points)
      call f_hpmstop(4)
      call f_hpmterminate(0)
      end

Notes on Instrumented Code

• The entire executable code is enclosed between f_hpminit and f_hpmterminate
• Code sections are enclosed between f_hpmstart and f_hpmstop
• The descriptive text labels appear in the output file(s)

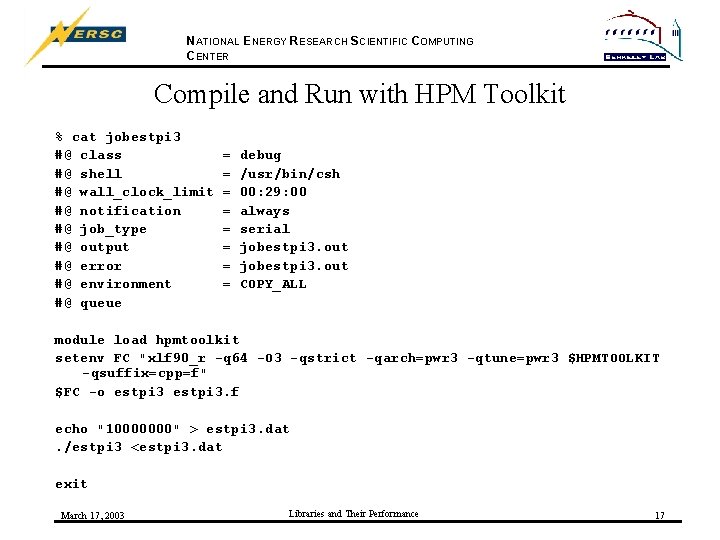

Compile and Run with HPM Toolkit

% cat jobestpi3
#@ class            = debug
#@ shell            = /usr/bin/csh
#@ wall_clock_limit = 00:29:00
#@ notification     = always
#@ job_type         = serial
#@ output           = jobestpi3.out
#@ error            = jobestpi3.out
#@ environment      = COPY_ALL
#@ queue
module load hpmtoolkit
setenv FC "xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f"
$FC -o estpi3 estpi3.f
echo "10000000" > estpi3.dat
./estpi3 <estpi3.dat
exit

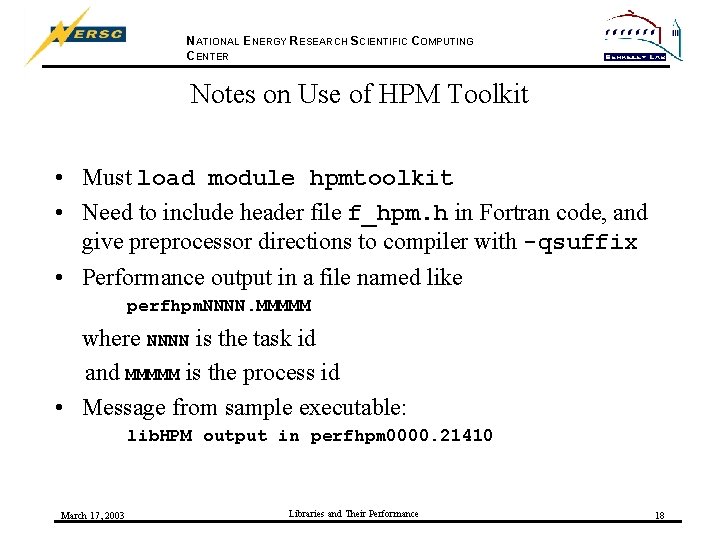

Notes on Use of HPM Toolkit

• Must load module hpmtoolkit
• Need to include the header file f_hpm.h in the Fortran code, and tell the compiler with -qsuffix=cpp=f to run the C preprocessor on .f files
• Performance output goes to a file named like perfhpmNNNN.MMMMM, where NNNN is the task id and MMMMM is the process id
• Message from the sample executable: libHPM output in perfhpm0000.21410

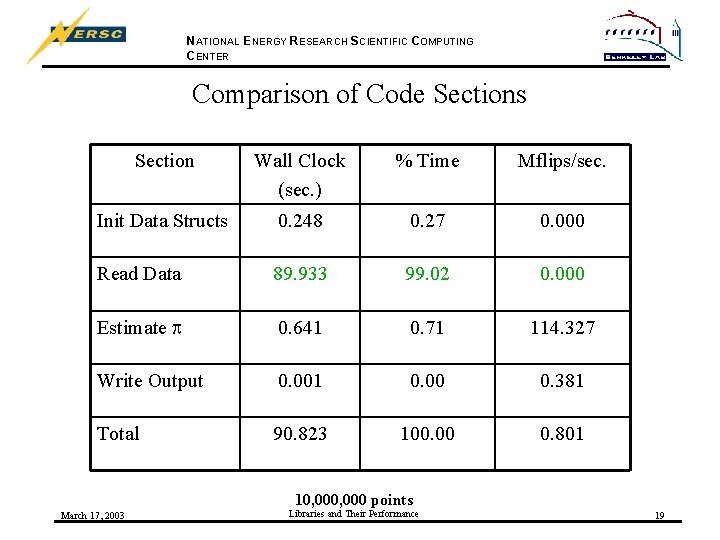

Comparison of Code Sections (10,000,000 points)

| Section | Wall clock (sec.) | % Time | Mflip/s |
|---|---:|---:|---:|
| Init data structs | 0.248 | 0.27 | 0.000 |
| Read data | 89.933 | 99.02 | 0.000 |
| Estimate pi | 0.641 | 0.71 | 114.327 |
| Write output | 0.001 | 0.00 | 0.381 |
| Total | 90.823 | 100.00 | 0.801 |

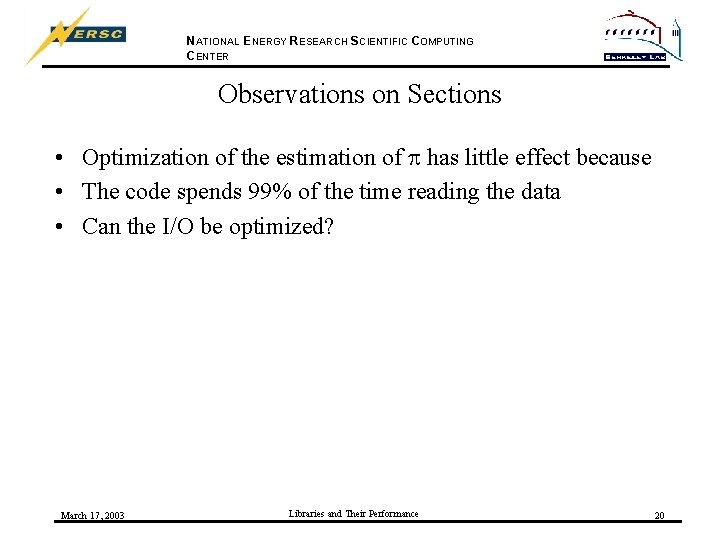

Observations on Sections

• Optimizing the estimation of π has little effect, because
• The code spends 99% of its time reading the data
• Can the I/O be optimized?

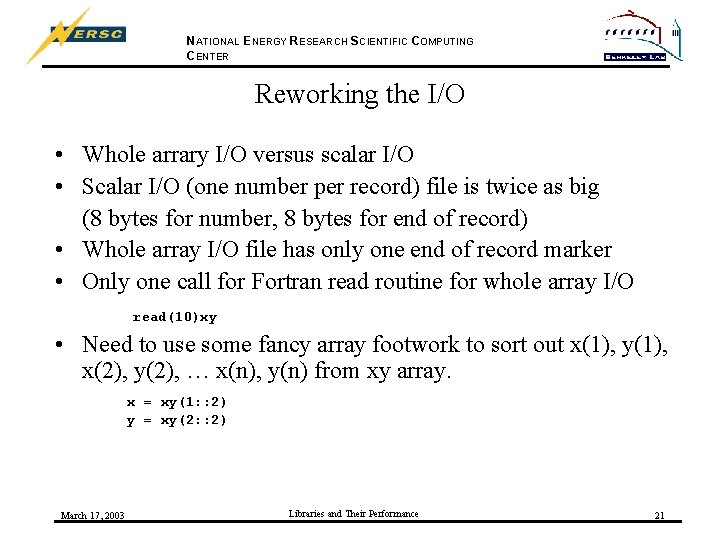

Reworking the I/O

• Whole-array I/O versus scalar I/O
• The scalar I/O file (one number per record) is twice as big: 8 bytes for each number plus 8 bytes of record overhead
• The whole-array I/O file is a single record, so it carries only one set of record markers
• Whole-array I/O needs only one call to the Fortran read routine:
  read(10)xy
• Some fancy array footwork sorts x(1), y(1), x(2), y(2), … x(n), y(n) out of the xy array:
  x = xy(1::2)
  y = xy(2::2)
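
A minimal sketch of the whole-array I/O idea in standard Fortran, assuming an unformatted sequential file whose single record holds the interleaved values x(1), y(1), x(2), y(2) and so on. The module and routine names are hypothetical. One READ fills the xy buffer, and the stride-2 array sections split it into x and y exactly as on the slide.

```fortran
module pair_io
  implicit none
  integer, parameter :: dp = kind(1.0d0)
contains
  ! Write interleaved pairs x(1), y(1), x(2), y(2), ... as ONE unformatted record
  subroutine write_pairs(filename, xy)
    character(len=*), intent(in) :: filename
    real(dp), intent(in) :: xy(:)
    integer :: u
    open(newunit=u, file=filename, status="replace", form="unformatted")
    write(u) xy
    close(u)
  end subroutine write_pairs

  ! Read the whole record with a single READ, then de-interleave with strides
  subroutine read_pairs(filename, x, y)
    character(len=*), intent(in) :: filename
    real(dp), intent(out) :: x(:), y(:)
    real(dp), allocatable :: xy(:)
    integer :: u
    allocate(xy(2*size(x)))
    open(newunit=u, file=filename, status="old", form="unformatted")
    read(u) xy
    close(u)
    x = xy(1::2)    ! odd positions
    y = xy(2::2)    ! even positions
  end subroutine read_pairs
end module pair_io
```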

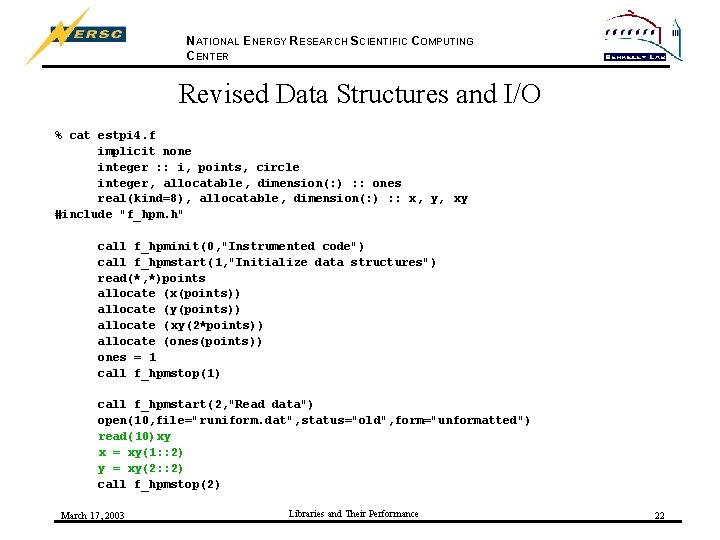

Revised Data Structures and I/O

% cat estpi4.f
      implicit none
      integer :: i, points, circle
      integer, allocatable, dimension(:) :: ones
      real(kind=8), allocatable, dimension(:) :: x, y, xy
#include "f_hpm.h"
      call f_hpminit(0, "Instrumented code")
      call f_hpmstart(1, "Initialize data structures")
      read(*,*)points
      allocate (x(points))
      allocate (y(points))
      allocate (xy(2*points))
      allocate (ones(points))
      ones = 1
      call f_hpmstop(1)
      call f_hpmstart(2, "Read data")
      open(10, file="runiform.dat", status="old", form="unformatted")
      read(10)xy
      x = xy(1::2)
      y = xy(2::2)
      call f_hpmstop(2)

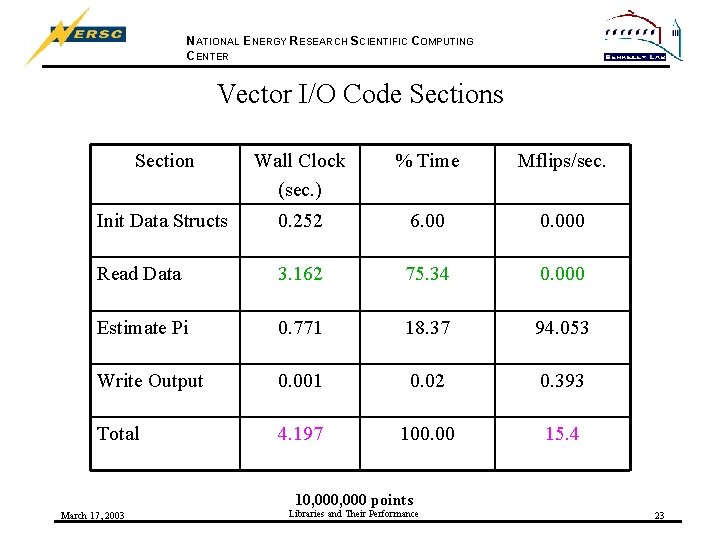

Vector I/O Code Sections (10,000,000 points)

| Section | Wall clock (sec.) | % Time | Mflip/s |
|---|---:|---:|---:|
| Init data structs | 0.252 | 6.00 | 0.000 |
| Read data | 3.162 | 75.34 | 0.000 |
| Estimate pi | 0.771 | 18.37 | 94.053 |
| Write output | 0.001 | 0.02 | 0.393 |
| Total | 4.197 | 100.00 | 15.4 |

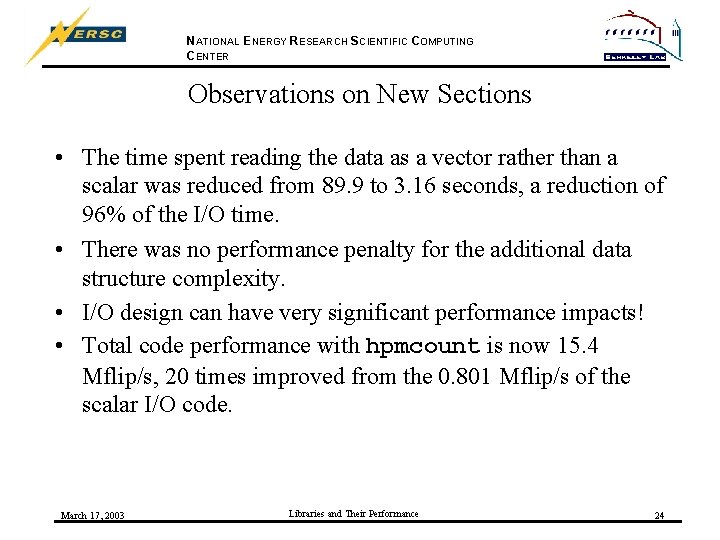

Observations on New Sections

• Reading the data as a vector rather than as scalars reduced the read time from 89.9 to 3.16 seconds, a 96% reduction in I/O time.
• There was no performance penalty for the additional data structure complexity.
• I/O design can have very significant performance impacts!
• Total code performance measured with hpmcount is now 15.4 Mflip/s, roughly 19 times the 0.801 Mflip/s of the scalar I/O code.

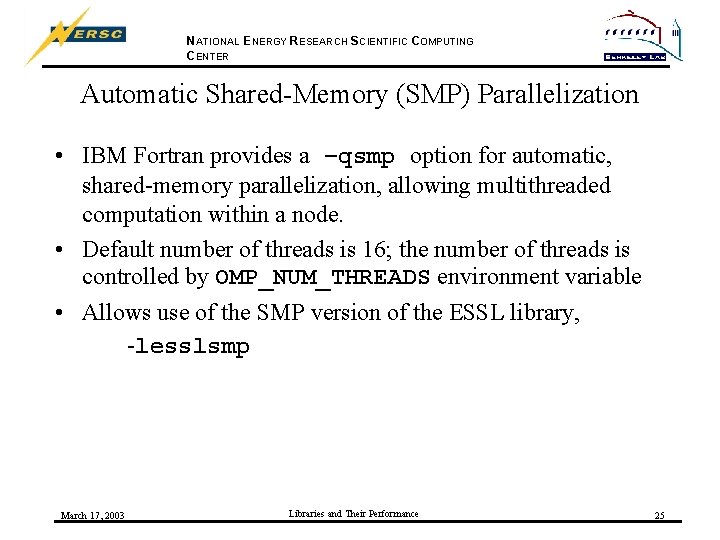

Automatic Shared-Memory (SMP) Parallelization

• IBM Fortran provides a -qsmp option for automatic shared-memory parallelization, allowing multithreaded computation within a node.
• The default number of threads is 16; the number of threads is controlled by the OMP_NUM_THREADS environment variable.
• It also allows use of the SMP version of the ESSL library, -lesslsmp.
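
The -qsmp option parallelizes the vector expression automatically. For comparison, an explicit OpenMP version of the counting loop is sketched below; this is a swapped-in technique, not what -qsmp generates, and the names are hypothetical. With OpenMP enabled (for example -qsmp=omp with XL Fortran, or -fopenmp with gfortran) each of the OMP_NUM_THREADS threads keeps a private partial count and the REDUCTION clause adds them at the end; without OpenMP the directives are treated as comments and the loop runs serially.

```fortran
module pi_omp
  implicit none
  integer, parameter :: dp = kind(1.0d0)
contains
  ! Number of points (x(i), y(i)) inside the circle of radius 0.5 at (0.5, 0.5)
  function count_inside(x, y) result(inside)
    real(dp), intent(in) :: x(:), y(:)
    integer :: inside
    integer :: i, n_in
    n_in = 0
    ! Each thread sums its share of the iterations into a private copy of n_in
    !$omp parallel do reduction(+:n_in)
    do i = 1, size(x)
      if ((x(i) - 0.5_dp)**2 + (y(i) - 0.5_dp)**2 <= 0.25_dp) n_in = n_in + 1
    end do
    !$omp end parallel do
    inside = n_in
  end function count_inside
end module pi_omp
```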

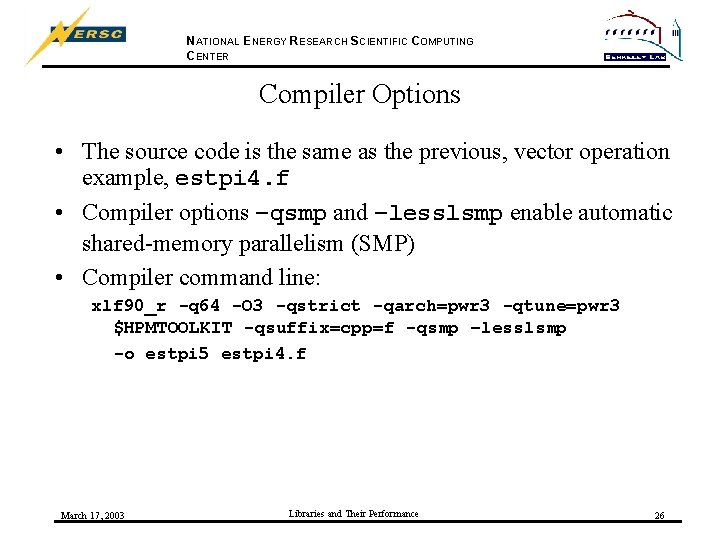

Compiler Options

• The source code is the same as in the previous vector-operation example, estpi4.f
• Compiler options -qsmp and -lesslsmp enable automatic shared-memory parallelism (SMP)
• Compiler command line:
  xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f -qsmp -lesslsmp -o estpi5 estpi4.f

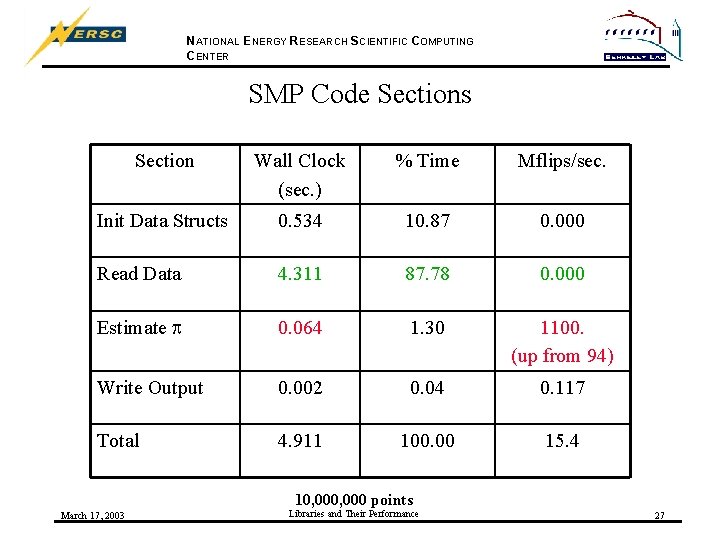

SMP Code Sections (10,000,000 points)

| Section | Wall clock (sec.) | % Time | Mflip/s |
|---|---:|---:|---:|
| Init data structs | 0.534 | 10.87 | 0.000 |
| Read data | 4.311 | 87.78 | 0.000 |
| Estimate pi | 0.064 | 1.30 | 1,100 (up from 94) |
| Write output | 0.002 | 0.04 | 0.117 |
| Total | 4.911 | 100.00 | 15.4 |

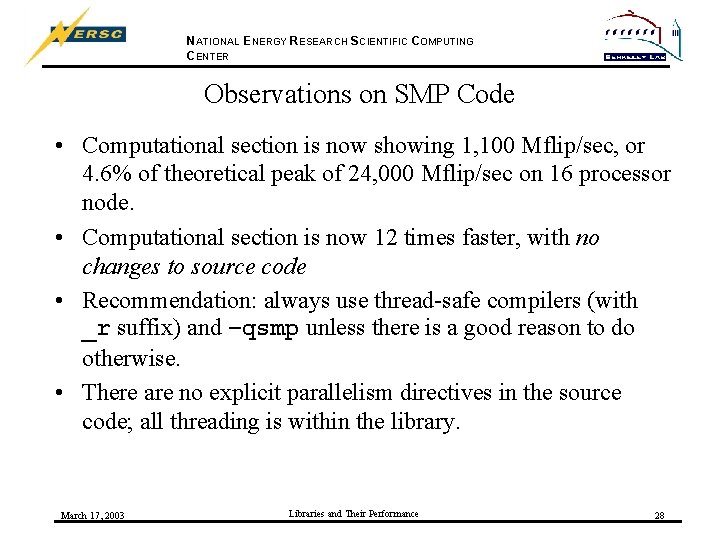

Observations on SMP Code

• The computational section now runs at 1,100 Mflip/s, or 4.6% of the theoretical peak of 24,000 Mflip/s for a 16-processor node.
• The computational section is now 12 times faster (0.064 s versus 0.771 s), with no changes to the source code.
• Recommendation: always use the thread-safe compilers (with the _r suffix) and -qsmp unless there is a good reason to do otherwise.
• There are no explicit parallelism directives in the source code; all threading is generated by the compiler under -qsmp and carried out by its SMP run-time library.

Too Many Threads Can Spoil Performance

• Each node has 16 processors; running more threads than processors usually does not improve performance

Sidebar: Cost of Misaligned Common Block

• User code with Fortran 77 style common blocks may receive an innocuous-looking warning:
  1514-008 (W) Variable … is misaligned. This may affect the efficiency of the code.
• How much can this affect the efficiency of the code?
• Test: put arrays x and y in a misaligned common block, with a 1-byte character variable in front of them

Potential Cost of Misaligned Common Blocks

• 10,000,000 points used for computing π
• Properly aligned, dynamically allocated x and y: 0.064 seconds at 1,100 Mflip/s
• Misaligned, statically allocated x and y in a common block: 0.834 seconds at 88.4 Mflip/s
• Common block misalignment slowed the computation by a factor of about 12
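
Since the fast case used dynamically allocated arrays, one way to avoid the misalignment is to replace the common block with allocatable module arrays. A minimal sketch with hypothetical names; it assumes the run-time allocator returns storage aligned for 8-byte reals, which is typical but not guaranteed by the Fortran standard.

```fortran
module xy_data
  ! Replacement for a Fortran 77 common block such as
  !   character*1 c
  !   real*8 x(n), y(n)
  !   common /xy/ c, x, y     ! x and y start 1 byte into the block: misaligned
  ! Allocatable module arrays get their storage from the run-time allocator
  ! instead of being laid out after the 1-byte character.
  implicit none
  integer, parameter :: dp = kind(1.0d0)
  real(dp), allocatable :: x(:), y(:)
contains
  ! (Re)allocate x and y with n elements each
  subroutine allocate_xy(n)
    integer, intent(in) :: n
    if (allocated(x)) deallocate(x, y)
    allocate(x(n), y(n))
  end subroutine allocate_xy
end module xy_data
```

Any routine that previously declared the common block would instead use xy_data and call allocate_xy once the number of points is known.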

Part I Conclusion

• hpmcount can be used to measure the performance of the total code
• HPM Toolkit can be used to measure the performance of discrete code sections
• Optimization effort must be focused effectively
• Fortran 90 vector operations are generally faster than Fortran 77 scalar operations
• Automatic SMP parallelization may provide an easy performance boost
• I/O may be the largest factor in “whole code” performance
• Misaligned common blocks can be very expensive

Part II: Comparing Libraries

• In the rich user environment on seaborg, there are many alternative ways to do the same computation
• The HPM Toolkit provides the tools to compare alternative approaches to the same computation

Dot Product Functions

• User coded scalar computation
• User coded vector computation
• Single processor ESSL ddot
• Multi-threaded SMP ESSL ddot
• Single processor IMSL ddot
• Single processor NAG f06eaf
• Multi-threaded SMP NAG f06eaf

Sample Problem

• Test the Cauchy-Schwarz inequality $(X \cdot Y)^2 \le (X \cdot X)(Y \cdot Y)$ for one vector X and N vectors Y, all of length N
• Generate 2N random numbers (array x2)
• Use the first N for X; $(X \cdot X)$ is computed once
• Vary the vector Y: for i=1,n, y = 2.0*x2(i:n+(i-1))
  The first Y is 2X, the second Y is 2(x2(2:N+1)), etc.
• Compute (2*N)+1 dot products of length N
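
The sample problem can be sketched in standard Fortran with the DOT_PRODUCT intrinsic standing in for ddot or f06eaf; this is an illustration under that assumption, and the module and routine names are hypothetical. It performs the same (2*N)+1 dot products: one for X·X and two for each shifted Y.

```fortran
module cauchy_schwarz
  implicit none
  integer, parameter :: dp = kind(1.0d0)
contains
  ! diffs(i) = (X.X)(Y.Y) - (X.Y)**2 for Y = 2*x2(i:n+i-1); Cauchy-Schwarz
  ! says every diffs(i) >= 0 (up to rounding)
  subroutine cs_diffs(n, x2, diffs)
    integer, intent(in) :: n
    real(dp), intent(in) :: x2(:)          ! needs at least 2*n - 1 values
    real(dp), intent(out) :: diffs(n)
    real(dp), allocatable :: x(:), y(:)
    real(dp) :: xx, yy, xy
    integer :: i
    allocate(x(n), y(n))
    x = x2(1:n)                            ! X is the first n values
    xx = dot_product(x, x)                 ! (X . X) computed once
    do i = 1, n
      y = 2.0_dp * x2(i:n+(i-1))           ! i-th Y
      yy = dot_product(y, y)
      xy = dot_product(x, y)
      diffs(i) = (xx*yy) - (xy*xy)
    end do
  end subroutine cs_diffs
end module cauchy_schwarz
```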

Instrumented Code Section for Dot Products

      call f_hpmstart(1, "Dot products")
      xx = ddot(n, x, 1, x, 1)
      do i=1,n
        y = 2.0*x2(i:n+(i-1))
        yy = ddot(n, y, 1, y, 1)
        xy = ddot(n, x, 1, y, 1)
        diffs(i) = (xx*yy)-(xy*xy)
      enddo
      call f_hpmstop(1)

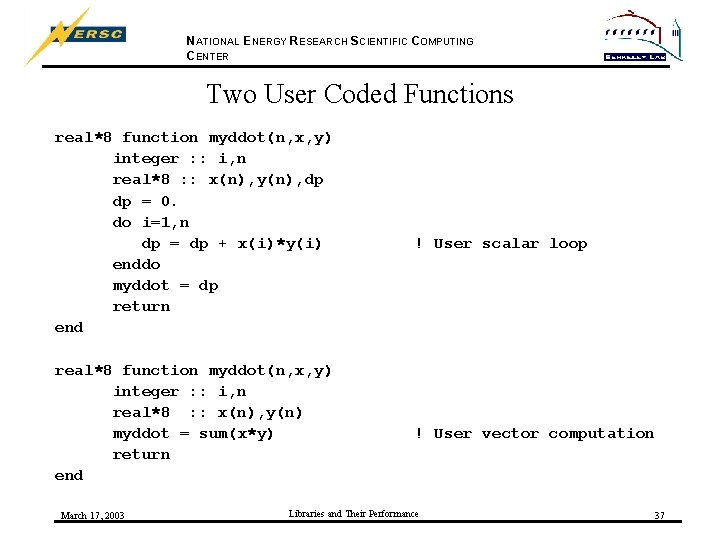

Two User Coded Functions

! User scalar loop
      real*8 function myddot(n, x, y)
      integer :: i, n
      real*8 :: x(n), y(n), dp
      dp = 0.
      do i=1,n
        dp = dp + x(i)*y(i)
      enddo
      myddot = dp
      return
      end

! User vector computation
      real*8 function myddot(n, x, y)
      integer :: i, n
      real*8 :: x(n), y(n)
      myddot = sum(x*y)
      return
      end

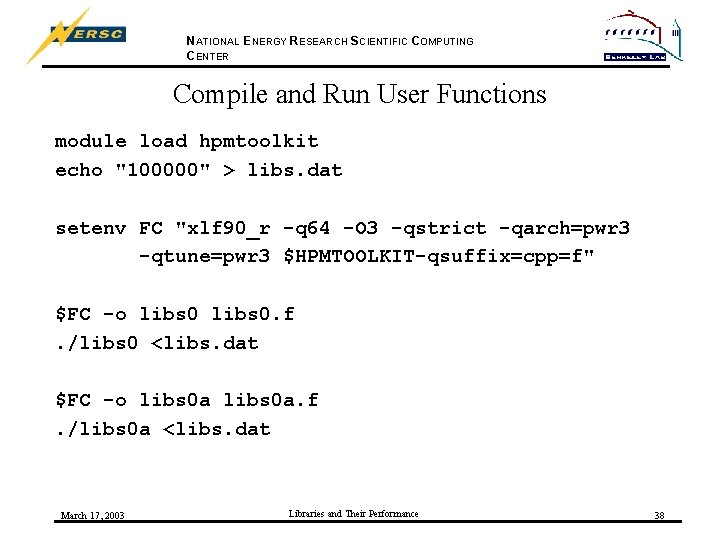

Compile and Run User Functions

module load hpmtoolkit
echo "100000" > libs.dat
setenv FC "xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f"
$FC -o libs0 libs0.f
./libs0 <libs.dat
$FC -o libs0a libs0a.f
./libs0a <libs.dat

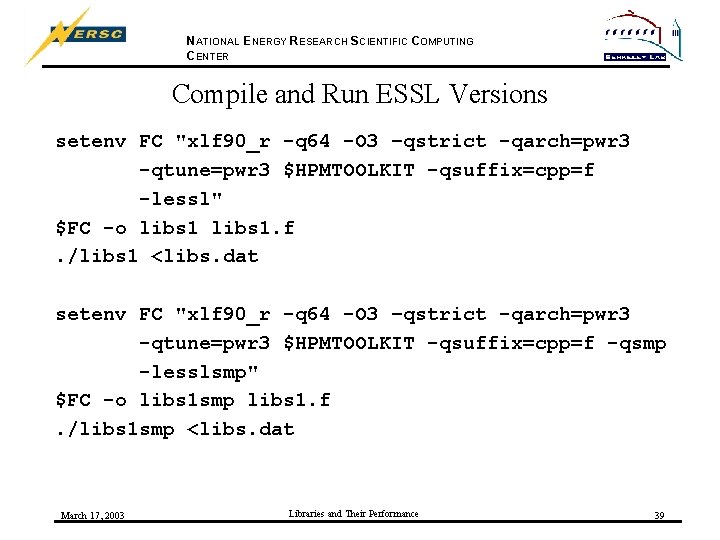

Compile and Run ESSL Versions

setenv FC "xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f -lessl"
$FC -o libs1 libs1.f
./libs1 <libs.dat
setenv FC "xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f -qsmp -lesslsmp"
$FC -o libs1smp libs1.f
./libs1smp <libs.dat

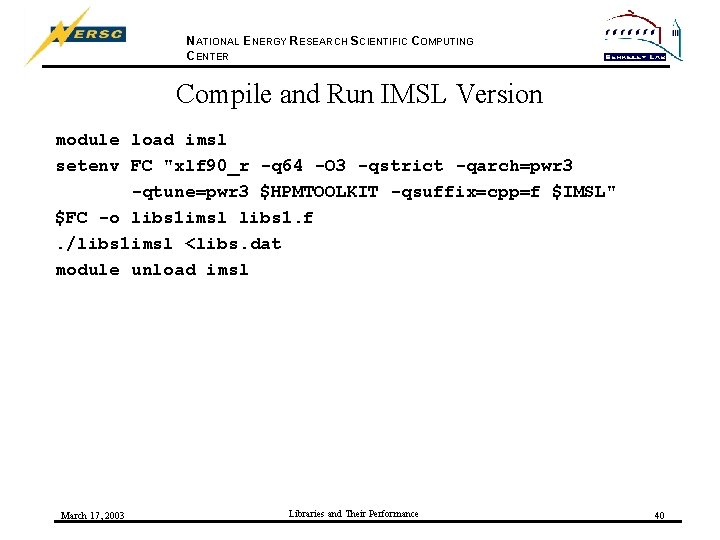

Compile and Run IMSL Version

module load imsl
setenv FC "xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f $IMSL"
$FC -o libs1imsl libs1.f
./libs1imsl <libs.dat
module unload imsl

Compile and Run NAG Versions

module load nag_64
setenv FC "xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f $NAG"
$FC -o libs1nag libsnag.f
./libs1nag <libs.dat
module unload nag
module load nag_smp64
setenv FC "xlf90_r -q64 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 $HPMTOOLKIT -qsuffix=cpp=f $NAG_SMP6 -qsmp=omp -qnosave "
$FC -o libs1nagsmp libsnag.f
./libs1nagsmp <libs.dat
module unload nag_smp64

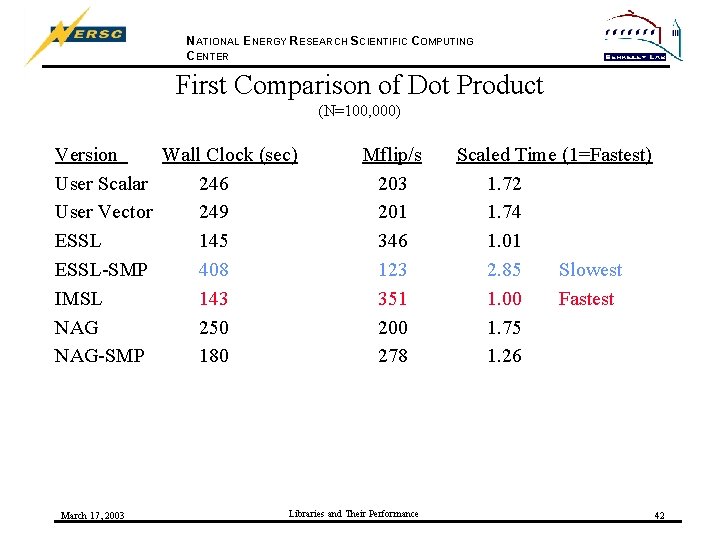

First Comparison of Dot Product (N=100,000)

| Version | Wall clock (sec) | Mflip/s | Scaled time (1 = fastest) |
|---|---:|---:|---:|
| User scalar | 246 | 203 | 1.72 |
| User vector | 249 | 201 | 1.74 |
| ESSL | 145 | 346 | 1.01 |
| ESSL-SMP | 408 | 123 | 2.85 (slowest) |
| IMSL | 143 | 351 | 1.00 (fastest) |
| NAG | 250 | 200 | 1.75 |
| NAG-SMP | 180 | 278 | 1.26 |

Comments on First Comparisons

• The best results, by just a little, were obtained with the IMSL library, with ESSL a close second
• Third best was the NAG-SMP routine, which benefits from multi-threaded computation
• The user coded routines and NAG were about 75% slower than the ESSL and IMSL routines. In general, library routines are highly optimized and faster than user coded routines.
• The ESSL-SMP library did very poorly on this computation; this unexpected result may be due to data structures in the library, or perhaps to the number of threads (default is 16).

ESSL-SMP Performance vs. Number of Threads

• All runs for N=100,000
• Number of threads controlled by the environment variable OMP_NUM_THREADS

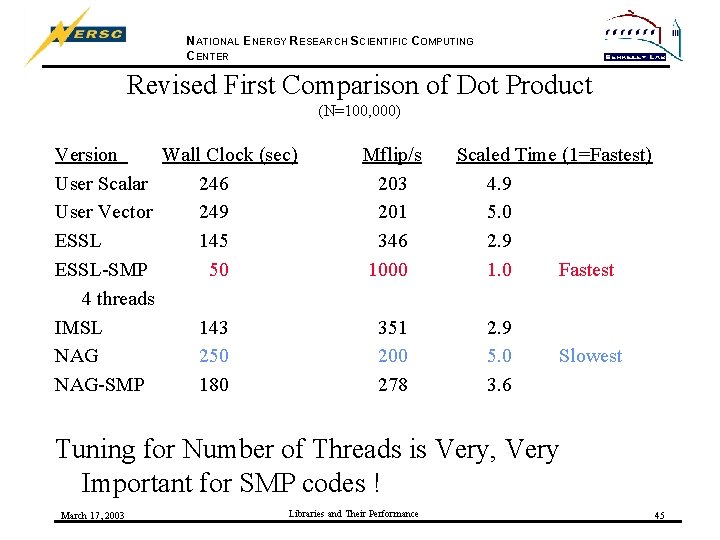

Revised First Comparison of Dot Product (N=100,000)

| Version | Wall clock (sec) | Mflip/s | Scaled time (1 = fastest) |
|---|---:|---:|---:|
| User scalar | 246 | 203 | 4.9 |
| User vector | 249 | 201 | 5.0 |
| ESSL | 145 | 346 | 2.9 |
| ESSL-SMP (4 threads) | 50 | 1,000 | 1.0 (fastest) |
| IMSL | 143 | 351 | 2.9 |
| NAG | 250 | 200 | 5.0 (slowest) |
| NAG-SMP | 180 | 278 | 3.6 |

Tuning the number of threads is very, very important for SMP codes!

Scaling up the Problem

• The first comparisons were for N=100,000, computing 200,001 dot products of vectors of length 100,000
• The second comparison, for N=200,000, computes 400,001 dot products of vectors of length 200,000
• This increases the computational complexity by a factor of 4
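
A rough operation count shows where the factor of 4 comes from. Assuming each length-N dot product is charged 2N floating-point operations and the scaling y = 2.0*x2(...) is charged N:

$$
F(N) \approx \underbrace{2N}_{X\cdot X} + N\left(\underbrace{N}_{\text{scale } Y} + \underbrace{2N}_{Y\cdot Y} + \underbrace{2N}_{X\cdot Y}\right) \approx 5N^2,
\qquad \frac{F(2N)}{F(N)} \approx 4
$$

Under this assumption Mflip/s ≈ 5N²/(10⁶ × wall-clock seconds); for N=100,000 that is 5×10¹⁰/246 s ≈ 203 Mflip/s, matching the user scalar entry in the first comparison.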

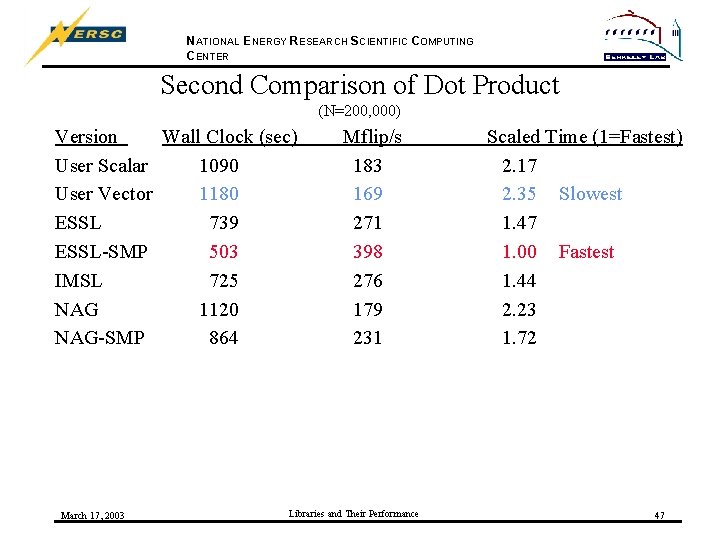

Second Comparison of Dot Product (N=200,000)

| Version | Wall clock (sec) | Mflip/s | Scaled time (1 = fastest) |
|---|---:|---:|---:|
| User scalar | 1090 | 183 | 2.17 |
| User vector | 1180 | 169 | 2.35 (slowest) |
| ESSL | 739 | 271 | 1.47 |
| ESSL-SMP | 503 | 398 | 1.00 (fastest) |
| IMSL | 725 | 276 | 1.44 |
| NAG | 1120 | 179 | 2.23 |
| NAG-SMP | 864 | 231 | 1.72 |

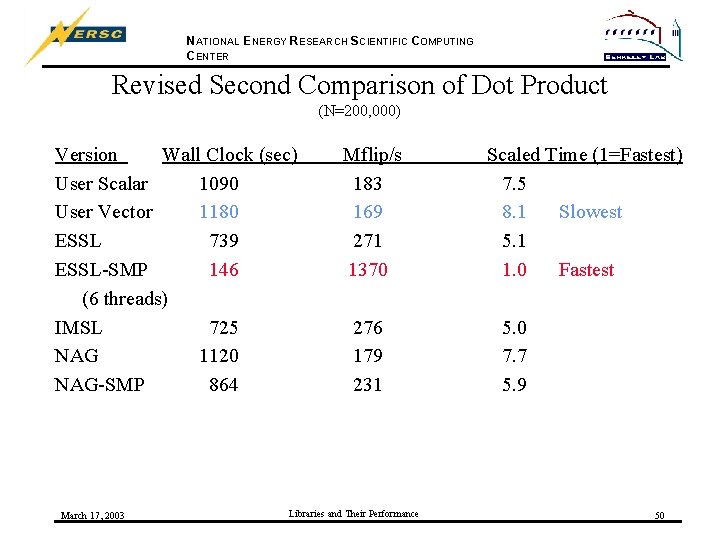

Comments on Second Comparisons (N=200,000)

• Now the best results come from the ESSL-SMP library, with the default 16 threads
• The next best group is ESSL, IMSL and NAG-SMP, taking 50-75% longer than the ESSL-SMP routine
• The worst results were from NAG (single thread) and the user coded routines
– What is the impact of the number of threads on ESSL-SMP library performance? It is already the best.

ESSL-SMP Performance vs. Number of Threads

• All runs for N=200,000
• Number of threads controlled by the environment variable OMP_NUM_THREADS

Revised Second Comparison of Dot Product (N=200,000)

| Version | Wall clock (sec) | Mflip/s | Scaled time (1 = fastest) |
|---|---:|---:|---:|
| User scalar | 1090 | 183 | 7.5 |
| User vector | 1180 | 169 | 8.1 (slowest) |
| ESSL | 739 | 271 | 5.1 |
| ESSL-SMP (6 threads) | 146 | 1,370 | 1.0 (fastest) |
| IMSL | 725 | 276 | 5.0 |
| NAG | 1120 | 179 | 7.7 |
| NAG-SMP | 864 | 231 | 5.9 |

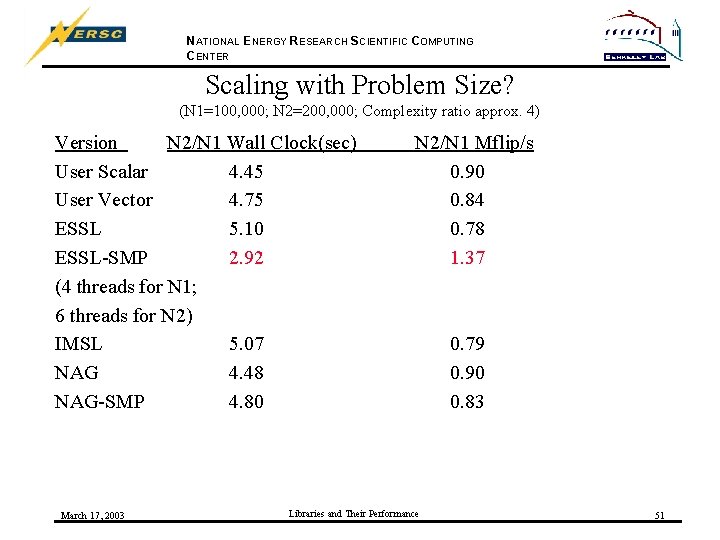

Scaling with Problem Size? (N1=100,000; N2=200,000; complexity ratio approx. 4)

| Version | Wall clock ratio N2/N1 | Mflip/s ratio N2/N1 |
|---|---:|---:|
| User scalar | 4.45 | 0.90 |
| User vector | 4.75 | 0.84 |
| ESSL | 5.10 | 0.78 |
| ESSL-SMP (4 threads for N1; 6 threads for N2) | 2.92 | 1.37 |
| IMSL | 5.07 | 0.79 |
| NAG | 4.48 | 0.90 |
| NAG-SMP | 4.80 | 0.83 |

Comments on Scaling Problem Size

• The ESSL-SMP performance, when tuned for the optimal number of threads, increased by almost 40% with the larger problem size.
• The untuned ESSL-SMP performance increased by a factor of 3.2 with the larger problem size.
• The user codes and the ESSL, IMSL, NAG and NAG-SMP routines all showed 10%-22% decreases in performance with the larger problem size.
• It is not possible to determine, a priori, how the performance of different, functionally equivalent routines will scale with problem size.

Matrix Multiplication

• User coded scalar computation
• Fortran intrinsic matmul
• Single processor ESSL dgemm
• Multi-threaded SMP ESSL dgemm
• Single processor IMSL dmrrrr (32-bit)
• Single processor NAG f01ckf
• Multi-threaded SMP NAG f01ckf

Sample Problem

• Multiply two dense N by N matrices, A and B
• A(i,j) = i + j
• B(i,j) = j - i
• Output C(N,N) to verify the result

Kernel of user matrix multiply

      do i=1,n
        do j=1,n
          a(i,j) = real(i+j)
          b(i,j) = real(j-i)
          c(i,j) = 0.0            ! c must start at zero before accumulating
        enddo
      enddo
      call f_hpmstart(1, "Matrix multiply")
      do j=1,n
        do k=1,n
          do i=1,n
            c(i,j) = c(i,j) + a(i,k)*b(k,j)
          enddo
        enddo
      enddo
      call f_hpmstop(1)
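
A self-contained sketch of the same j-k-i kernel, checked against the MATMUL intrinsic; the module and routine names are hypothetical. Because Fortran stores arrays by column, the innermost i loop walks down columns of c and a with unit stride while b(k,j) stays fixed, which is the cache-friendly order for this triple loop. It assumes square n by n arrays.

```fortran
module user_matmul
  implicit none
  integer, parameter :: dp = kind(1.0d0)
contains
  ! C = A*B with the j-k-i loop order; inner loop is stride-1 in c and a
  subroutine matmul_jki(a, b, c)
    real(dp), intent(in)  :: a(:,:), b(:,:)
    real(dp), intent(out) :: c(:,:)
    integer :: i, j, k, n
    n = size(a, 1)
    c = 0.0_dp
    do j = 1, n
      do k = 1, n
        do i = 1, n
          c(i,j) = c(i,j) + a(i,k)*b(k,j)
        end do
      end do
    end do
  end subroutine matmul_jki

  ! Fill A(i,j) = i + j and B(i,j) = j - i as in the sample problem
  subroutine init_ab(a, b)
    real(dp), intent(out) :: a(:,:), b(:,:)
    integer :: i, j
    do j = 1, size(a, 2)
      do i = 1, size(a, 1)
        a(i,j) = real(i + j, dp)
        b(i,j) = real(j - i, dp)
      end do
    end do
  end subroutine init_ab
end module user_matmul
```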

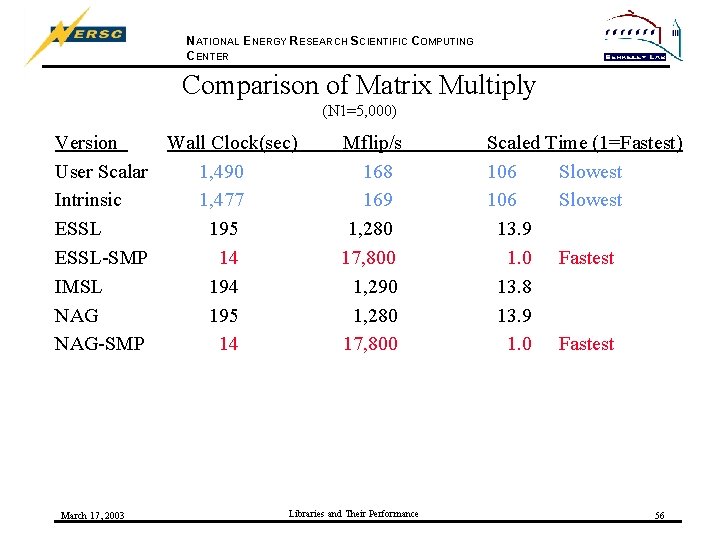

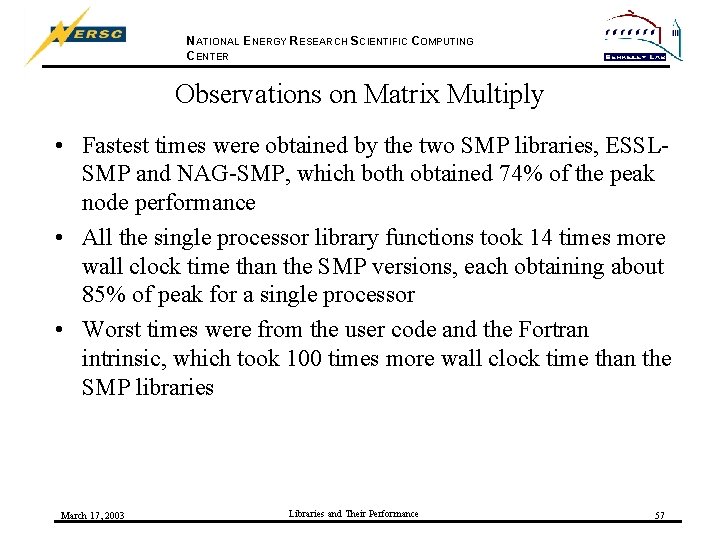

Comparison of Matrix Multiply (N1=5,000)

| Version | Wall clock (sec) | Mflip/s | Scaled time (1 = fastest) |
|---|---:|---:|---:|
| User scalar | 1,490 | 168 | 106 (slowest) |
| Intrinsic | 1,477 | 169 | |
| ESSL | 195 | 1,280 | 13.9 |
| ESSL-SMP | 14 | 17,800 | 1.0 (fastest) |
| IMSL | 194 | 1,290 | 13.8 |
| NAG | 195 | 1,280 | 13.9 |
| NAG-SMP | 14 | 17,800 | 1.0 (fastest) |

Observations on Matrix Multiply

• The fastest times were obtained by the two SMP libraries, ESSL-SMP and NAG-SMP, both of which reached 74% of peak node performance
• All the single-processor library functions took about 14 times more wall-clock time than the SMP versions, each reaching about 85% of peak for a single processor
• The worst times were from the user code and the Fortran intrinsic, which took about 100 times more wall-clock time than the SMP libraries

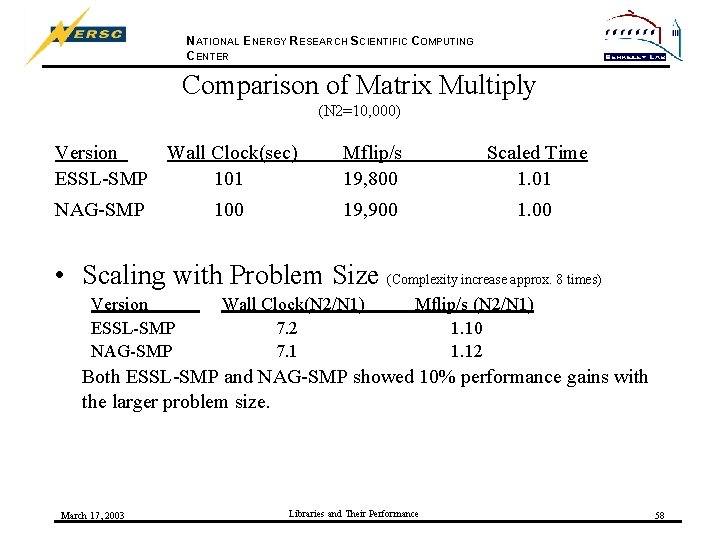

Comparison of Matrix Multiply (N2=10,000)

| Version | Wall clock (sec) | Mflip/s | Scaled time |
|---|---:|---:|---:|
| ESSL-SMP | 101 | 19,800 | 1.01 |
| NAG-SMP | 100 | 19,900 | 1.00 |

Scaling with problem size (complexity increase approx. 8 times):

| Version | Wall clock (N2/N1) | Mflip/s (N2/N1) |
|---|---:|---:|
| ESSL-SMP | 7.2 | 1.10 |
| NAG-SMP | 7.1 | 1.12 |

Both ESSL-SMP and NAG-SMP showed about 10% performance gains with the larger problem size.

Observations on Scaling

• Problem size was scaled up only for the SMP libraries, to keep run times reasonable.
• Doubling N increases the computational complexity of dense matrix multiplication by a factor of 8.
• Performance actually increased for both routines with the larger problem size.
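
Assuming the usual count of 2N³ floating-point operations for a dense N by N product (about N multiplications and N additions for each of the N² elements of C), the matrix multiply figures are consistent with one another:

$$
F_{\mathrm{mm}}(N) = 2N^3,\qquad F_{\mathrm{mm}}(5000) = 2.5\times 10^{11},\qquad
\frac{2.5\times 10^{11}}{195\ \mathrm{s}} \approx 1.28\times 10^{9}\ \mathrm{flip/s},\qquad
\frac{F_{\mathrm{mm}}(2N)}{F_{\mathrm{mm}}(N)} = 8
$$

The same count gives 1,280/1,500 ≈ 0.85 of a single processor's peak and 17,800/24,000 ≈ 0.74 of a 16-processor node's peak, matching the observations on the N=5,000 runs.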

ESSL-SMP Performance vs. Number of Threads

• All runs for N=10,000
• Number of threads controlled by the environment variable OMP_NUM_THREADS

Part II Conclusion

• The NERSC user environment provides a rich variety of mathematical libraries
• Performance can vary widely for the same computation from library to library, sometimes even for the same function name; performance also varies with problem size and, for the SMP libraries, with the number of threads
• It is not possible to know, a priori, which library will provide the best performance for a given function and problem size
• The HPM Toolkit provides a way to compare library routine performance and make informed choices

Part III: Moving to Multi-node Parallelism

• The examples so far have all used single-processor or multi-processor shared-memory (SMP-style) parallelism on a single 16-processor node
• The poe+ command is the multi-node equivalent of hpmcount; poe+ can be used with MPI codes or with multi-node, distributed-memory parallel libraries such as PESSL and ScaLAPACK.
• poe+ is a Perl script, developed by David Skinner of the NERSC User Services Group, that aggregates the hpmcount results for each distributed-memory process

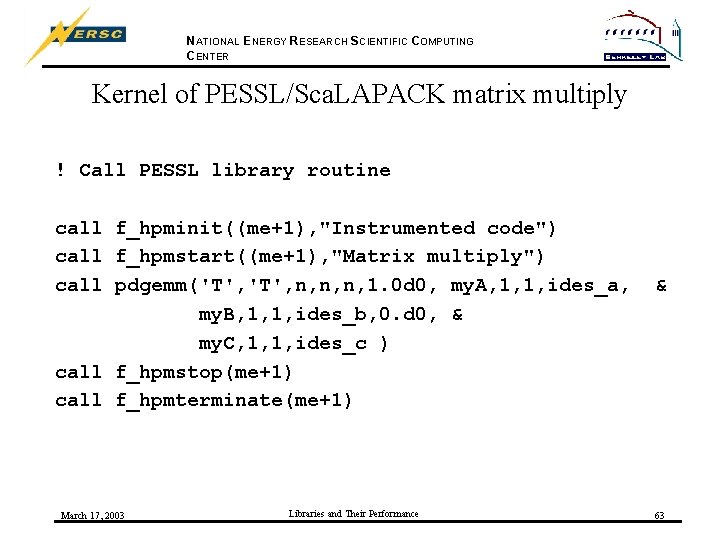

Kernel of PESSL/ScaLAPACK matrix multiply

! Call PESSL library routine
call f_hpminit((me+1), "Instrumented code")
call f_hpmstart((me+1), "Matrix multiply")
call pdgemm('T', 'T', n, n, n, 1.0d0, myA, 1, 1, ides_a, myB, 1, 1, ides_b, 0.d0, &
            myC, 1, 1, ides_c )
call f_hpmstop(me+1)
call f_hpmterminate(me+1)

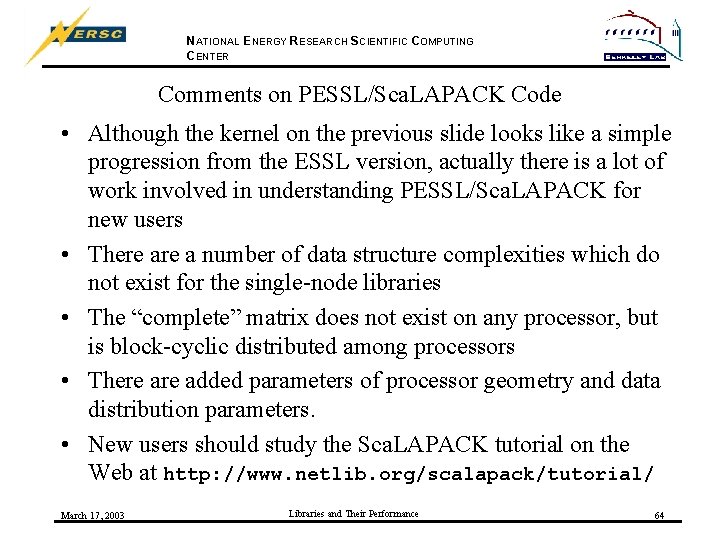

Comments on PESSL/ScaLAPACK Code

• Although the kernel on the previous slide looks like a simple progression from the ESSL version, there is actually a lot of work involved in understanding PESSL/ScaLAPACK for new users
• There are a number of data structure complexities that do not exist for the single-node libraries
• The “complete” matrix does not exist on any processor, but is distributed block-cyclically among the processors
• There are additional parameters for processor-grid geometry and data distribution
• New users should study the ScaLAPACK tutorial on the Web at http://www.netlib.org/scalapack/tutorial/

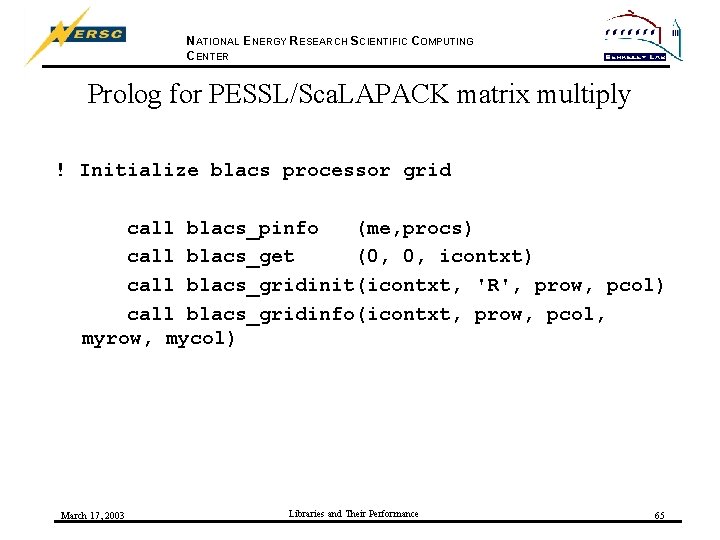

Prolog for PESSL/ScaLAPACK matrix multiply

! Initialize blacs processor grid
call blacs_pinfo (me, procs)
call blacs_get (0, 0, icontxt)
call blacs_gridinit(icontxt, 'R', prow, pcol)
call blacs_gridinfo(icontxt, prow, pcol, myrow, mycol)

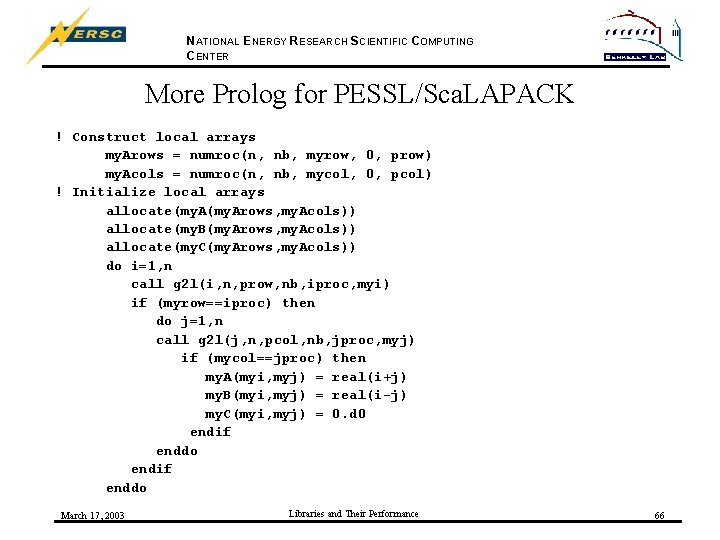

More Prolog for PESSL/ScaLAPACK

! Construct local arrays
myArows = numroc(n, nb, myrow, 0, prow)
myAcols = numroc(n, nb, mycol, 0, pcol)
! Initialize local arrays
allocate(myA(myArows, myAcols))
allocate(myB(myArows, myAcols))
allocate(myC(myArows, myAcols))
do i=1,n
  call g2l(i, n, prow, nb, iproc, myi)
  if (myrow==iproc) then
    do j=1,n
      call g2l(j, n, pcol, nb, jproc, myj)
      if (mycol==jproc) then
        myA(myi,myj) = real(i+j)
        myB(myi,myj) = real(i-j)
        myC(myi,myj) = 0.d0
      endif
    enddo
  endif
enddo
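
The block-cyclic bookkeeping in this prolog can be sketched in standard Fortran. my_numroc below follows the logic of the reference ScaLAPACK NUMROC function; g2l is a hypothetical reconstruction that matches the argument order used above (global index, global size, number of processes, block size, returned owner, returned local index), assuming the distribution starts at process 0 as in the descriptors. Neither is the PESSL or ScaLAPACK implementation.

```fortran
module block_cyclic
  implicit none
contains
  ! How many of the n rows (or columns), dealt out in blocks of nb round-robin
  ! over nprocs processes starting at isrcproc, land on process iproc
  integer function my_numroc(n, nb, iproc, isrcproc, nprocs)
    integer, intent(in) :: n, nb, iproc, isrcproc, nprocs
    integer :: mydist, nblocks, extrablks
    mydist = mod(nprocs + iproc - isrcproc, nprocs)  ! distance from source process
    nblocks = n / nb                                 ! number of whole blocks
    my_numroc = (nblocks / nprocs) * nb              ! full rounds of blocks
    extrablks = mod(nblocks, nprocs)
    if (mydist < extrablks) then
      my_numroc = my_numroc + nb                     ! one extra whole block
    else if (mydist == extrablks) then
      my_numroc = my_numroc + mod(n, nb)             ! the final partial block
    end if
  end function my_numroc

  ! Hypothetical global-to-local map: global index ig (1-based) -> owning
  ! process iproc (0-based) and local index il (1-based). n is unused here;
  ! it is kept only to match the call in the prolog.
  subroutine g2l(ig, n, nprocs, nb, iproc, il)
    integer, intent(in)  :: ig, n, nprocs, nb
    integer, intent(out) :: iproc, il
    integer :: iblk
    iblk  = (ig - 1) / nb                            ! 0-based global block number
    iproc = mod(iblk, nprocs)                        ! blocks are dealt round-robin
    il    = (iblk / nprocs) * nb + mod(ig - 1, nb) + 1
  end subroutine g2l
end module block_cyclic
```

For example, with n = 23, nb = 4 and three processes, the owners hold 8, 8 and 7 rows, which sum back to 23.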

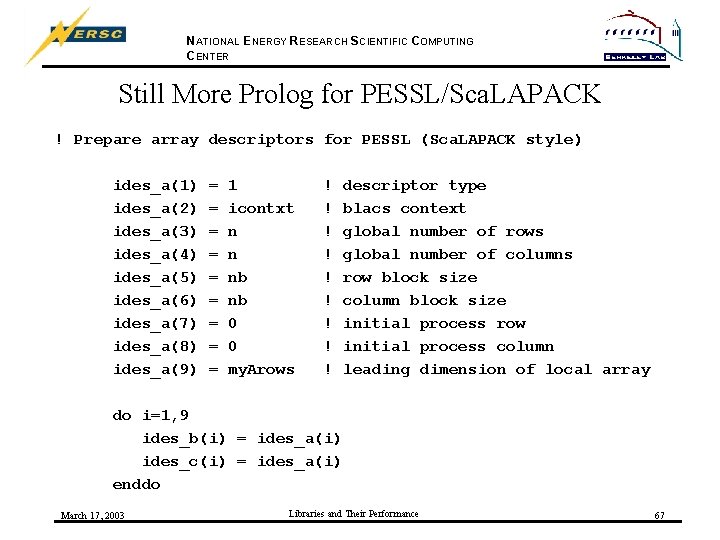

Still More Prolog for PESSL/ScaLAPACK

! Prepare array descriptors for PESSL (ScaLAPACK style)
ides_a(1) = 1          ! descriptor type
ides_a(2) = icontxt    ! blacs context
ides_a(3) = n          ! global number of rows
ides_a(4) = n          ! global number of columns
ides_a(5) = nb         ! row block size
ides_a(6) = nb         ! column block size
ides_a(7) = 0          ! initial process row
ides_a(8) = 0          ! initial process column
ides_a(9) = myArows    ! leading dimension of local array
do i=1,9
  ides_b(i) = ides_a(i)
  ides_c(i) = ides_a(i)
enddo

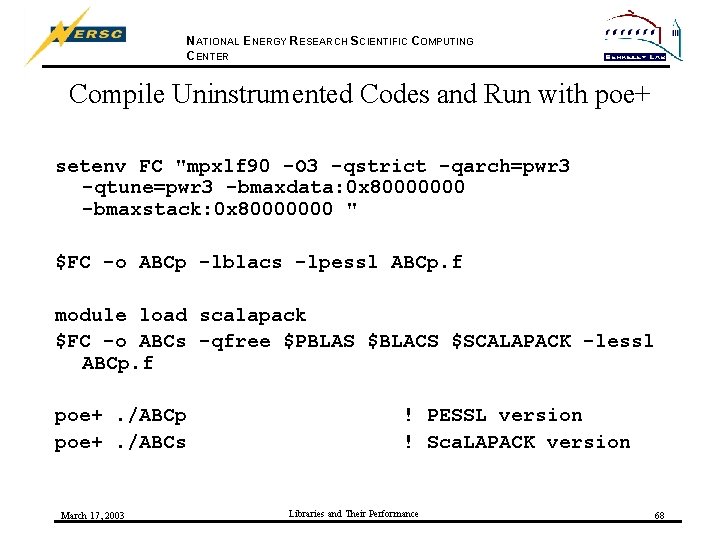

Compile Uninstrumented Codes and Run with poe+

setenv FC "mpxlf90 -O3 -qstrict -qarch=pwr3 -qtune=pwr3 -bmaxdata:0x80000000 -bmaxstack:0x80000000 "
$FC -o ABCp -lblacs -lpessl ABCp.f                           # PESSL version
module load scalapack
$FC -o ABCs -qfree $PBLAS $BLACS $SCALAPACK -lessl ABCp.f    # ScaLAPACK version
poe+ ./ABCp
poe+ ./ABCs

Four Runs for PESSL and ScaLAPACK Codes

• N=5,000, 16 processors (one node) in a 4 x 4 processor array
• N=10,000, 16 processors (one node) in a 4 x 4 processor array
• N=5,000, 64 processors (four nodes) in an 8 x 8 processor array
• N=10,000, 64 processors (four nodes) in an 8 x 8 processor array

Compare “whole code” performance measured with poe+ against “whole code” results for the single-node ESSL-SMP routine measured with hpmcount.
• poe+ returns the average wall-clock time across all processes, and the aggregate Mflip/s of all processes

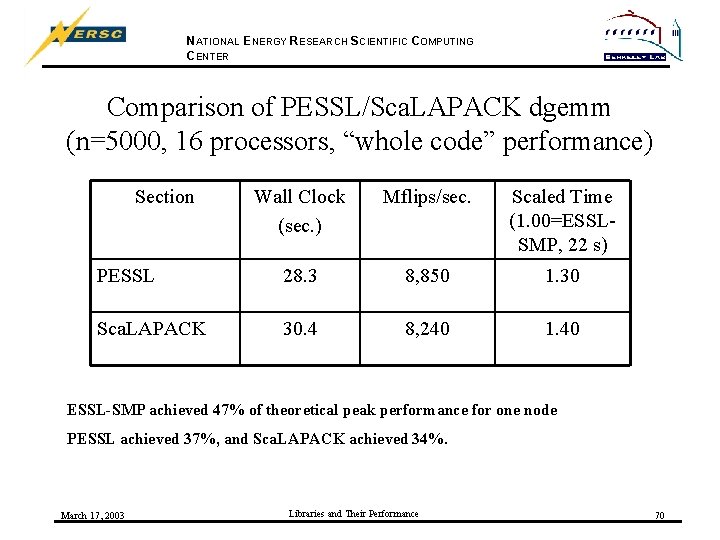

Comparison of PESSL/ScaLAPACK dgemm (n=5000, 16 processors, “whole code” performance)

| Library | Wall clock (sec.) | Mflip/s | Scaled time (1.00 = ESSL-SMP, 22 s) |
|---|---:|---:|---:|
| PESSL | 28.3 | 8,850 | 1.30 |
| ScaLAPACK | 30.4 | 8,240 | 1.40 |

ESSL-SMP achieved 47% of theoretical peak performance for one node; PESSL achieved 37%, and ScaLAPACK 34%.

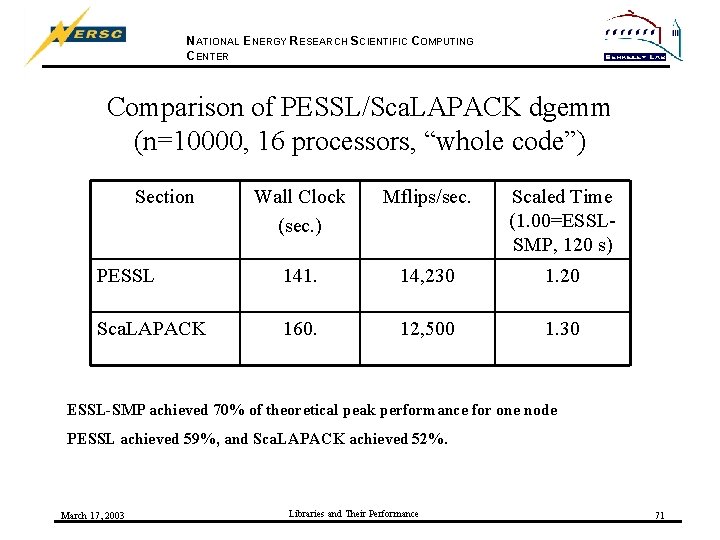

Comparison of PESSL/ScaLAPACK dgemm (n=10000, 16 processors, “whole code”)

| Library | Wall clock (sec.) | Mflip/s | Scaled time (1.00 = ESSL-SMP, 120 s) |
|---|---:|---:|---:|
| PESSL | 141 | 14,230 | 1.20 |
| ScaLAPACK | 160 | 12,500 | 1.30 |

ESSL-SMP achieved 70% of theoretical peak performance for one node; PESSL achieved 59%, and ScaLAPACK 52%.

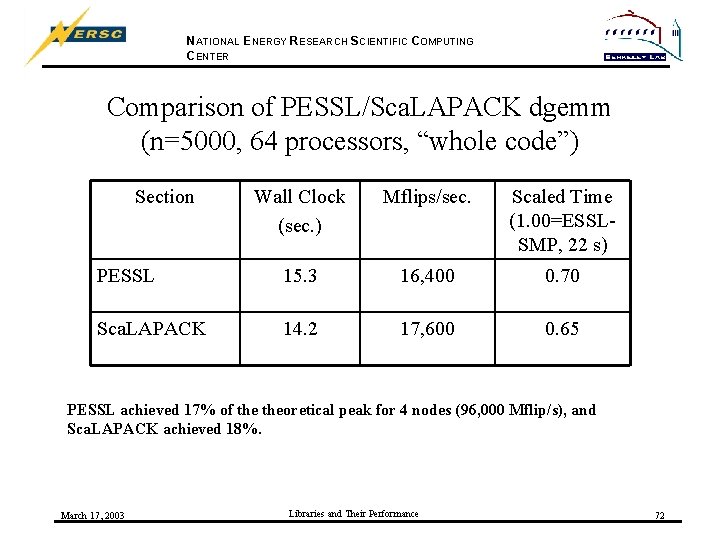

Comparison of PESSL/ScaLAPACK dgemm (n=5000, 64 processors, “whole code”)

| Library | Wall clock (sec.) | Mflip/s | Scaled time (1.00 = ESSL-SMP, 22 s) |
|---|---:|---:|---:|
| PESSL | 15.3 | 16,400 | 0.70 |
| ScaLAPACK | 14.2 | 17,600 | 0.65 |

PESSL achieved 17% of theoretical peak for 4 nodes (96,000 Mflip/s), and ScaLAPACK achieved 18%.

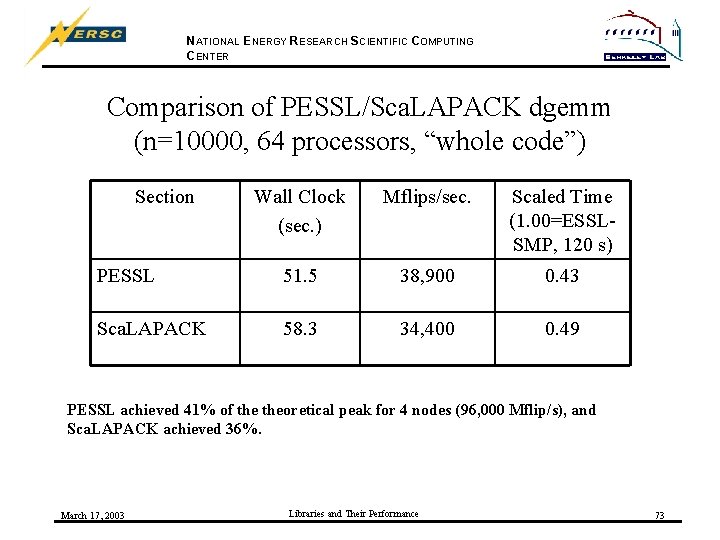

Comparison of PESSL/ScaLAPACK dgemm (n=10000, 64 processors, “whole code”)

| Library | Wall Clock (sec.) | Mflip/s | Scaled Time (1.00 = ESSL-SMP, 120 s) |
|---|---|---|---|
| PESSL | 51.5 | 38,900 | 0.43 |
| ScaLAPACK | 58.3 | 34,400 | 0.49 |

PESSL achieved 41% of theoretical peak for 4 nodes (96,000 Mflip/s), and ScaLAPACK achieved 36%.

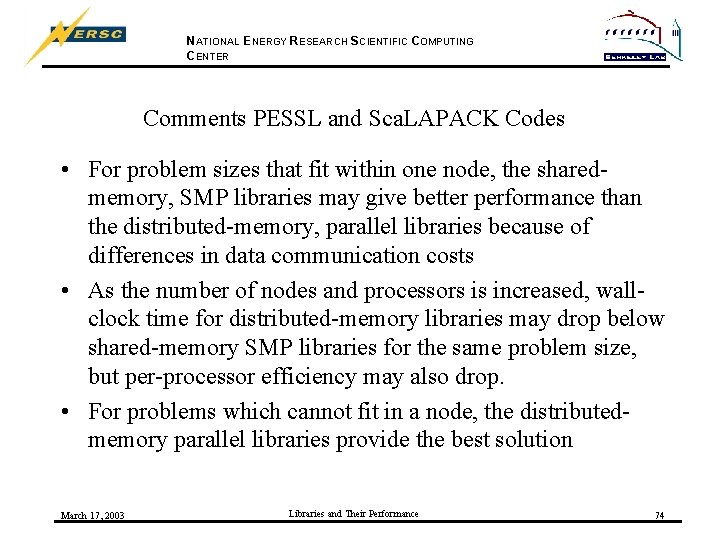

Comments on PESSL and ScaLAPACK Codes
• For problem sizes that fit within one node, the shared-memory SMP libraries may give better performance than the distributed-memory parallel libraries because of differences in data communication costs.
• As the number of nodes and processors increases, wall-clock time for the distributed-memory libraries may drop below that of the shared-memory SMP libraries for the same problem size, but per-processor efficiency may also drop. For n=5000 on 64 processors, for example, wall-clock time fell to 0.65 to 0.70 of the ESSL-SMP time, while the fraction of theoretical peak fell from 47% (ESSL-SMP, one node) to 17 to 18%.
• For problems that cannot fit in a node, the distributed-memory parallel libraries provide the best solution.

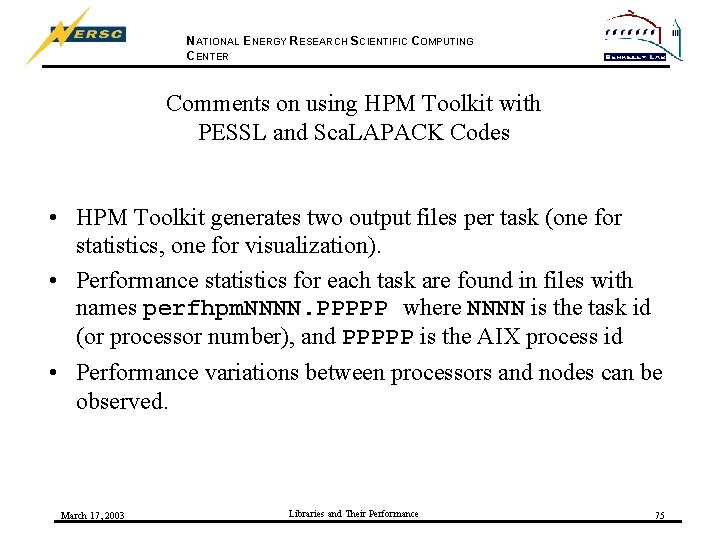
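The trade-off in the second bullet can be quantified from the PESSL whole-code times above. The worked example uses the conventional strong-scaling definitions of speedup S and parallel efficiency E (standard definitions, not figures from these slides) for the step from 16 to 64 processors:

$$
S = \frac{T_{16}}{T_{64}}, \qquad E = \frac{S}{64/16} = \frac{S}{4}
$$

$$
\begin{aligned}
n = 5000:&\quad S = \frac{28.3\ \text{s}}{15.3\ \text{s}} \approx 1.85, \qquad E \approx 0.46 \\
n = 10000:&\quad S = \frac{141\ \text{s}}{51.5\ \text{s}} \approx 2.74, \qquad E \approx 0.68
\end{aligned}
$$

The larger problem keeps the added processors busier, which is consistent with its higher fraction of peak on four nodes (41% versus 17%).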

Comments on Using the HPM Toolkit with PESSL and ScaLAPACK Codes
• The HPM Toolkit generates two output files per task (one for statistics, one for visualization).
• Performance statistics for each task are found in files named perfhpm.NNNN.PPPPP, where NNNN is the task id (or processor number) and PPPPP is the AIX process id.
• Performance variations between processors and nodes can be observed.

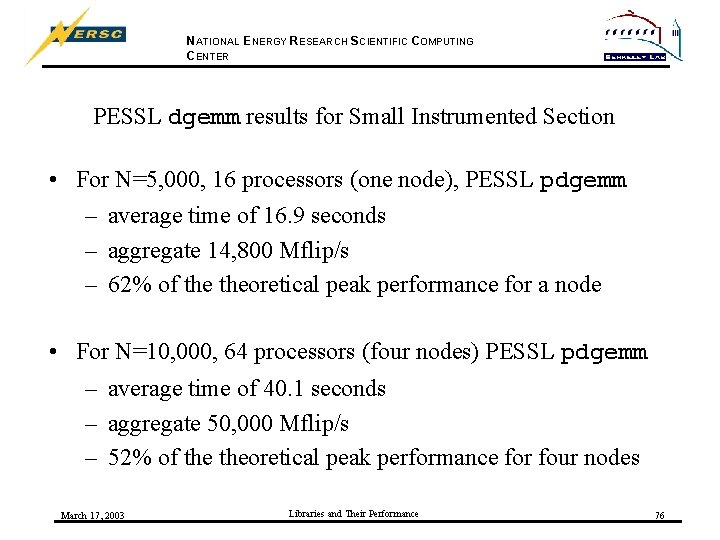
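When many perfhpm files are collected, it helps to map each file back to its task. The hypothetical function below is an illustrative sketch, not part of the HPM Toolkit: it extracts the NNNN field from a name of the form perfhpm.NNNN.PPPPP, assuming the task id sits between the first and last dots as the naming convention above describes. The module name `hpm_names` and the function name are assumptions.

```fortran
! Hypothetical helper (not part of the HPM Toolkit): recovers the task id
! NNNN from a statistics file name of the form perfhpm.NNNN.PPPPP.
module hpm_names
  implicit none
contains
  ! Returns the task id, or -1 if the name does not match the pattern.
  function task_id_from_name(fname) result(id)
    character(len=*), intent(in) :: fname
    integer :: id, first, last, ios
    id = -1
    ! The name must start with the "perfhpm." prefix.
    if (index(fname, 'perfhpm.') /= 1) return
    first = index(fname, '.')                 ! dot before NNNN
    last  = index(fname, '.', back=.true.)    ! dot before PPPPP
    if (last <= first + 1) return             ! no NNNN field present
    ! Internal read of the NNNN field; leading zeros are accepted.
    read(fname(first+1:last-1), *, iostat=ios) id
    if (ios /= 0) id = -1
  end function task_id_from_name
end module hpm_names
```

Parsing on the dots, rather than on fixed column positions, avoids assuming how many digits the task id and process id fields contain.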

PESSL dgemm Results for Small Instrumented Section
• For N=5,000, 16 processors (one node), PESSL pdgemm:
 – average time of 16.9 seconds
 – aggregate 14,800 Mflip/s
 – 62% of theoretical peak performance for a node
• For N=10,000, 64 processors (four nodes), PESSL pdgemm:
 – average time of 40.1 seconds
 – aggregate 50,000 Mflip/s
 – 52% of theoretical peak performance for four nodes

Variability in PESSL dgemm Small Instrumented Section
• For N=5,000, 16 processors (one node), PESSL pdgemm:
 – wall-clock time for each processor varies from 16.4 to 17.4 sec
 – Mflip/s for each processor varies from 850 to 1,000
• For N=10,000, 64 processors (four nodes), PESSL pdgemm:
 – wall-clock time for each processor varies from 39.25 to 40.75 sec
 – Mflip/s for each processor varies from 730 to 830
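Once per-task values have been read from the perfhpm files, variation like this can be summarized with two simple ratios. The module below is a hypothetical sketch (the names `task_variation`, `relative_spread` and `imbalance_ratio` are illustrative): it computes the relative spread (max − min)/mean and the imbalance ratio max/mean. For a synchronizing operation such as pdgemm, the slowest task largely determines the section's wall-clock time, which is why max/mean is a useful measure.

```fortran
! Hypothetical post-processing helper: summarizes per-task values
! (wall-clock seconds or Mflip/s, one per perfhpm file).
module task_variation
  implicit none
  integer, parameter :: dp = kind(1.0d0)
contains
  ! Relative spread: (max - min) / mean. Assumes at least one task.
  pure function relative_spread(t) result(s)
    real(dp), intent(in) :: t(:)
    real(dp) :: s
    s = (maxval(t) - minval(t)) / (sum(t) / real(size(t), dp))
  end function relative_spread

  ! Imbalance ratio: largest value / mean; 1.0 means perfectly balanced.
  pure function imbalance_ratio(t) result(r)
    real(dp), intent(in) :: t(:)
    real(dp) :: r
    r = maxval(t) / (sum(t) / real(size(t), dp))
  end function imbalance_ratio
end module task_variation
```

Using the endpoints and averages reported on these slides, the relative spread in wall-clock time is about 1.0/16.9 ≈ 5.9% for N=5,000 and 1.5/40.1 ≈ 3.7% for N=10,000.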

PESSL dgemm Task Variation (n=5000, 16 processors)

PESSL dgemm Task Variation (n=10000, 64 processors)

Part III Conclusion
• NERSC provides a variety of distributed-memory, multinode mathematical libraries (PESSL, ScaLAPACK and NAG Parallel).
• Performance of these libraries can be measured with “whole code” approaches using poe+, similar to hpmcount for single-node codes.
• The HPM Toolkit can be used to instrument small sections of code for more detailed analysis, including variation between tasks; however, it produces a number of output files that the user must analyze.

References
• Information on hpmcount and poe+ for whole-code performance measurement is available on the NERSC Website at http://hpcf.nersc.gov/software/ibm/hpmcount/
• Detailed information about the HPM Toolkit for measuring performance of discrete code sections is available on the NERSC Website at http://hpcf.nersc.gov/software/ibm/hpmcount/HPM_2_4_2.html
• The list of mathematical libraries available on Seaborg can be found on the NERSC Website at http://hpcf.nersc.gov/software/ibm/#mathlibs
