Nanjing University of Science & Technology: Pattern Recognition, Statistical and Neural

- Slides: 31

Nanjing University of Science & Technology

Pattern Recognition: Statistical and Neural

Lonnie C. Ludeman

Lecture 9, Sept 28, 2005

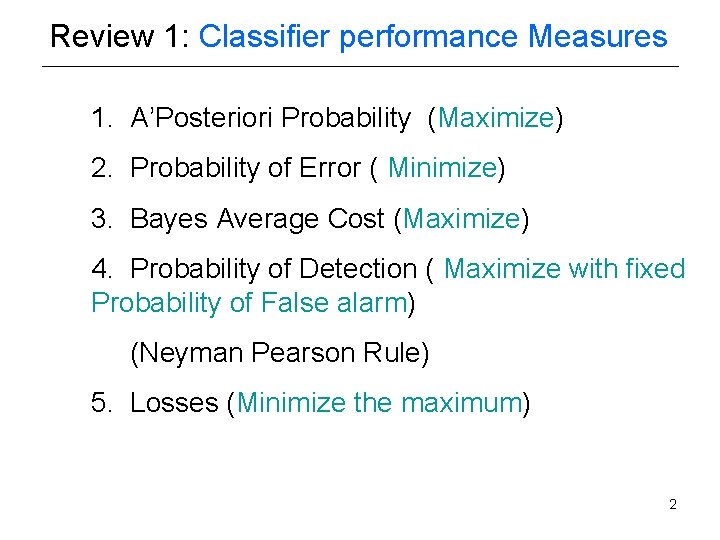

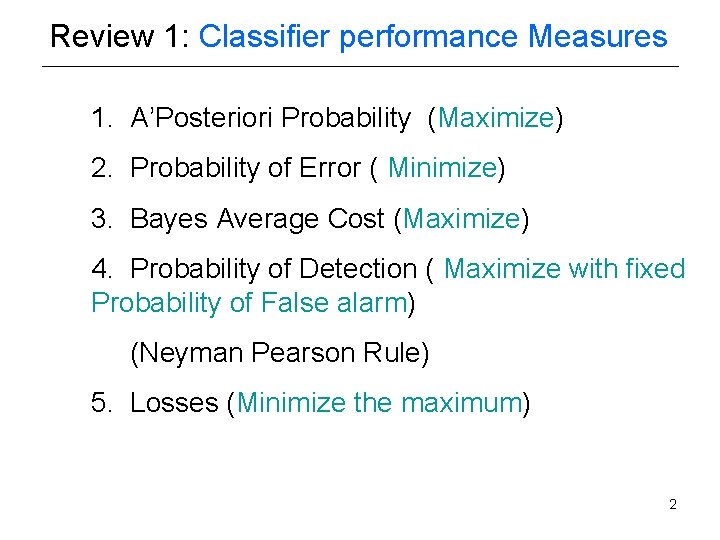

Review 1: Classifier Performance Measures

1. A posteriori probability (maximize)
2. Probability of error (minimize)
3. Bayes average cost (minimize)
4. Probability of detection (maximize, with a fixed probability of false alarm; Neyman-Pearson rule)
5. Losses (minimize the maximum)

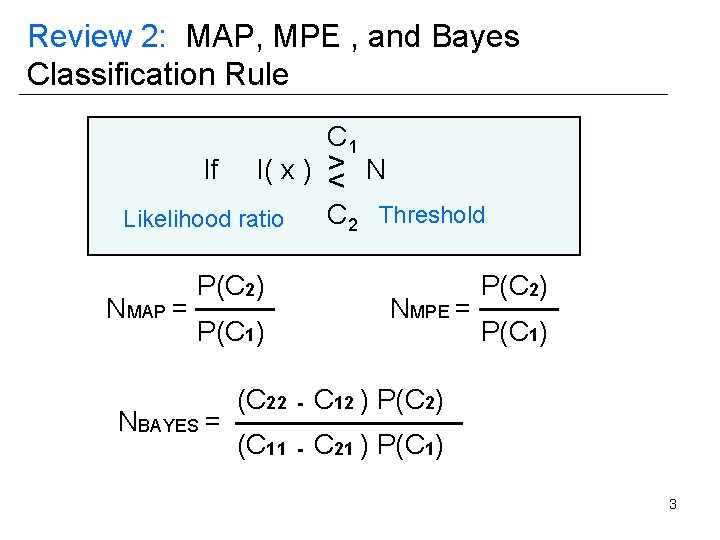

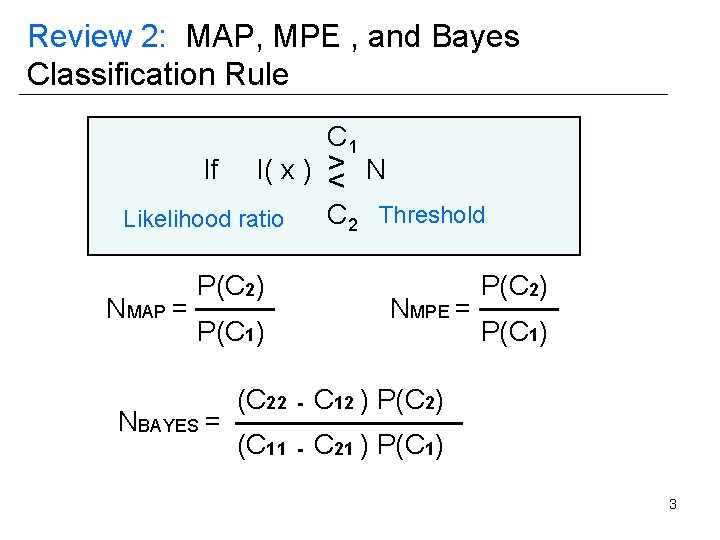

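Review 2: MAP, MPE, and Bayes Classification Rules

Compare the likelihood ratio $l(x) = p(x \mid C_1) / p(x \mid C_2)$ with a threshold $N$:

$$l(x) \underset{C_2}{\overset{C_1}{\gtrless}} N$$

That is, decide $C_1$ if $l(x) > N$ and decide $C_2$ if $l(x) < N$. The thresholds are

$$N_{MAP} = \frac{P(C_2)}{P(C_1)}, \qquad N_{MPE} = \frac{P(C_2)}{P(C_1)}, \qquad N_{BAYES} = \frac{(C_{22} - C_{12})\,P(C_2)}{(C_{11} - C_{21})\,P(C_1)}$$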
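The three thresholds differ only in how the priors and costs enter. A minimal sketch of that computation follows. The function names and the nested-list layout `costs[i][j]` for the cost $C_{(i+1)(j+1)}$ are illustrative assumptions, not part of the lecture.

```python
def likelihood_ratio_thresholds(p_c1, p_c2, costs):
    """Return (N_MAP, N_MPE, N_BAYES) for the two-class likelihood ratio test.

    costs[i][j] holds C_(i+1)(j+1), the cost of deciding class i+1 when the
    true class is j+1 (a hypothetical layout chosen for this sketch).
    """
    n_map = p_c2 / p_c1
    n_mpe = p_c2 / p_c1
    c11, c12 = costs[0]
    c21, c22 = costs[1]
    n_bayes = ((c22 - c12) * p_c2) / ((c11 - c21) * p_c1)
    return n_map, n_mpe, n_bayes


def decide(likelihood_ratio, threshold):
    """Return 1 (decide C1) if l(x) > N, otherwise 2 (decide C2).

    The slide does not say what to do at equality; this sketch assigns it to C2.
    """
    return 1 if likelihood_ratio > threshold else 2
```

With zero cost for correct decisions and unit cost for errors, `n_bayes` equals `n_map`, which matches the way the Bayes threshold reduces to the MAP and MPE threshold.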

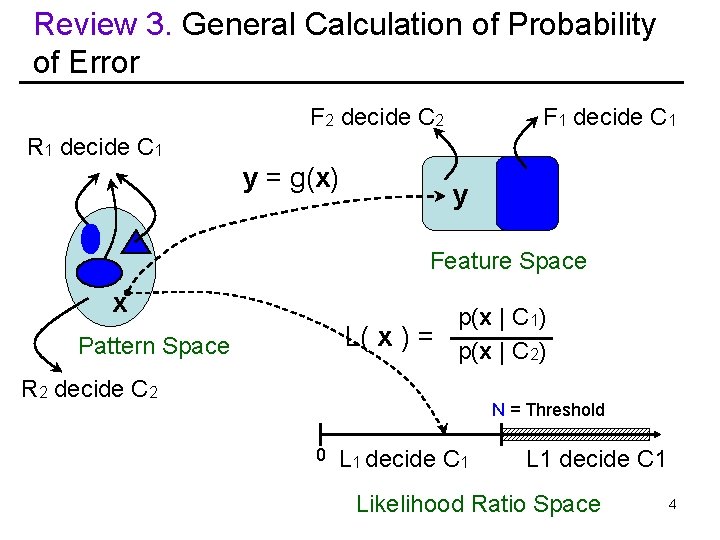

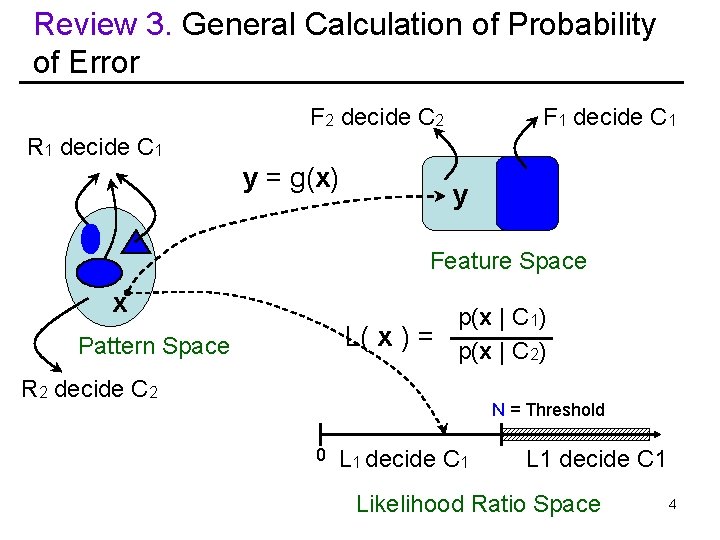

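Review 3: General Calculation of Probability of Error

The decision regions can be described in any of three spaces:

- Pattern space $x$: region $R_1$ (decide $C_1$) and region $R_2$ (decide $C_2$)
- Feature space $y = g(x)$: region $F_1$ (decide $C_1$) and region $F_2$ (decide $C_2$)
- Likelihood ratio space $L(x) = p(x \mid C_1) / p(x \mid C_2)$: an axis starting at 0 on which the threshold $N$ bounds the region $L_1$ (decide $C_1$)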

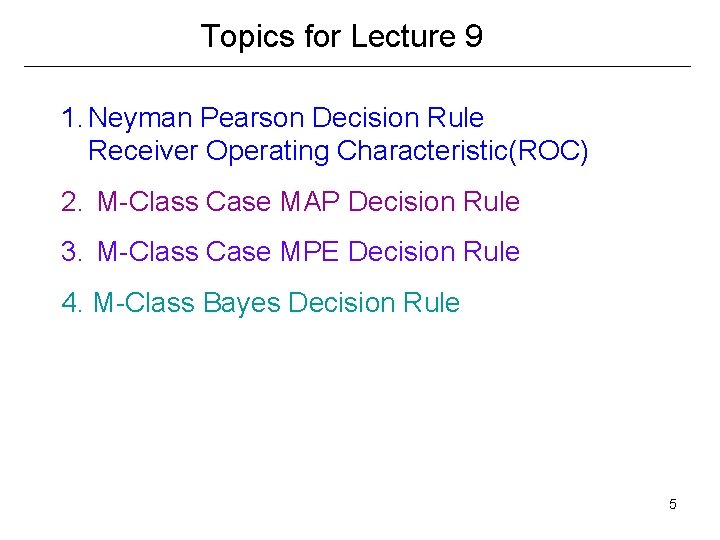

Topics for Lecture 9

1. Neyman-Pearson decision rule and the Receiver Operating Characteristic (ROC)
2. M-class case: MAP decision rule
3. M-class case: MPE decision rule
4. M-class case: Bayes decision rule

Motivation: Falling Rock

- Small probability of a falling rock
- Difficult to assign realistic costs to the consequences
- Very high cost of failing to detect
- Low cost of a false alarm

Definitions:

- Detection: $P(\text{decide target} \mid \text{target})$
- Miss: $P(\text{decide no target} \mid \text{target})$
- False alarm: $P(\text{decide target} \mid \text{no target})$
- Correct dismissal: $P(\text{decide no target} \mid \text{no target})$

Neyman-Pearson Classifier: 2 Classes

A. Assumptions:

- $C_1$ (target): known $p(x \mid C_1)$
- $C_2$ (no target): known $p(x \mid C_2)$
- No a priori probabilities specified
- Acceptable false alarm rate specified
- No cost assignment specified

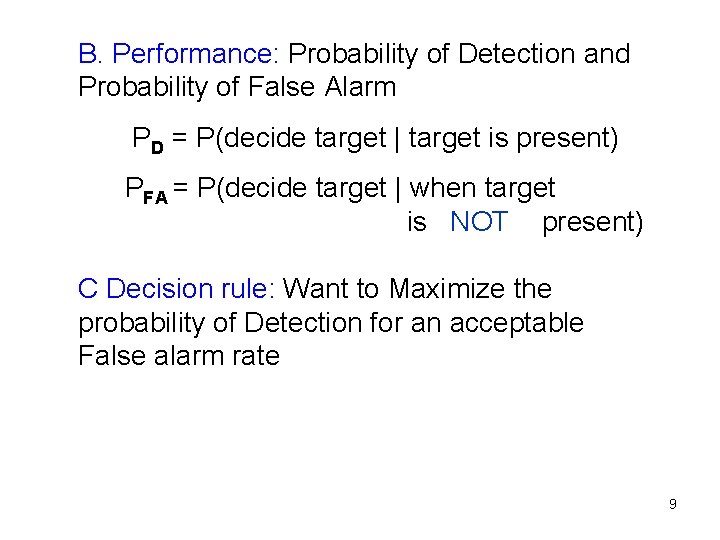

B. Performance: probability of detection and probability of false alarm

- $P_D = P(\text{decide target} \mid \text{target is present})$
- $P_{FA} = P(\text{decide target} \mid \text{target is not present})$

C. Decision rule: maximize the probability of detection for an acceptable false alarm rate.

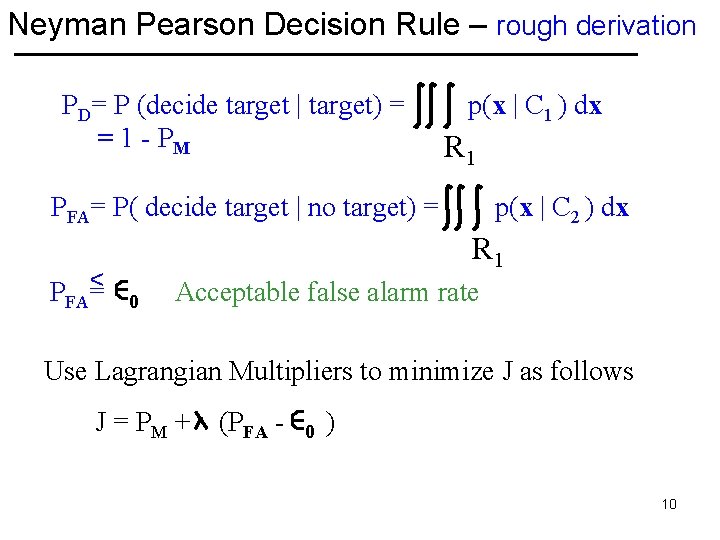

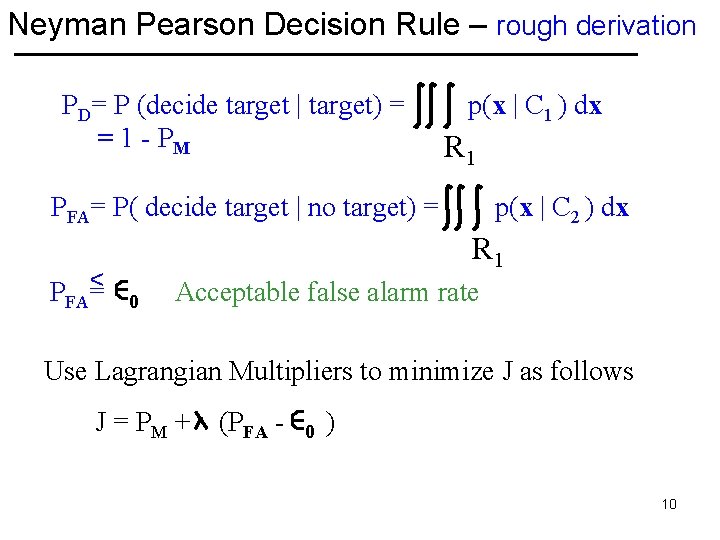

Neyman-Pearson Decision Rule: Rough Derivation

$$P_D = P(\text{decide target} \mid \text{target}) = \int_{R_1} p(x \mid C_1)\,dx = 1 - P_M$$

$$P_{FA} = P(\text{decide target} \mid \text{no target}) = \int_{R_1} p(x \mid C_2)\,dx \le \alpha_0$$

where $\alpha_0$ is the acceptable false alarm rate. Use a Lagrange multiplier $\lambda$ to minimize $J$, defined as

$$J = P_M + \lambda\,(P_{FA} - \alpha_0)$$

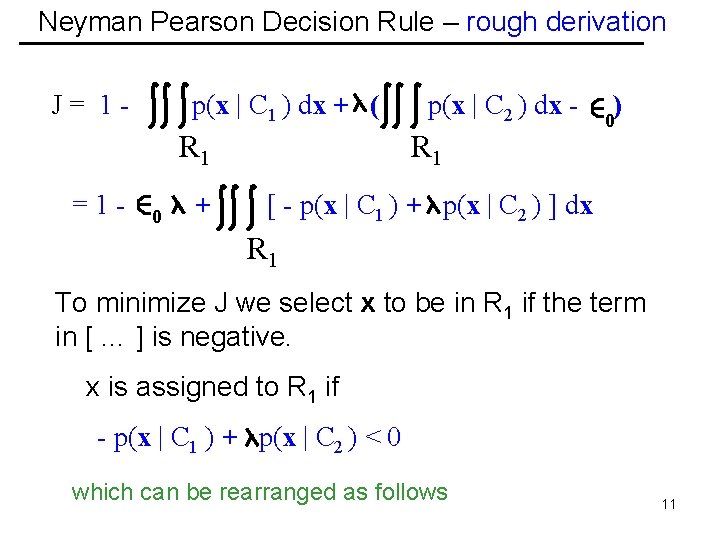

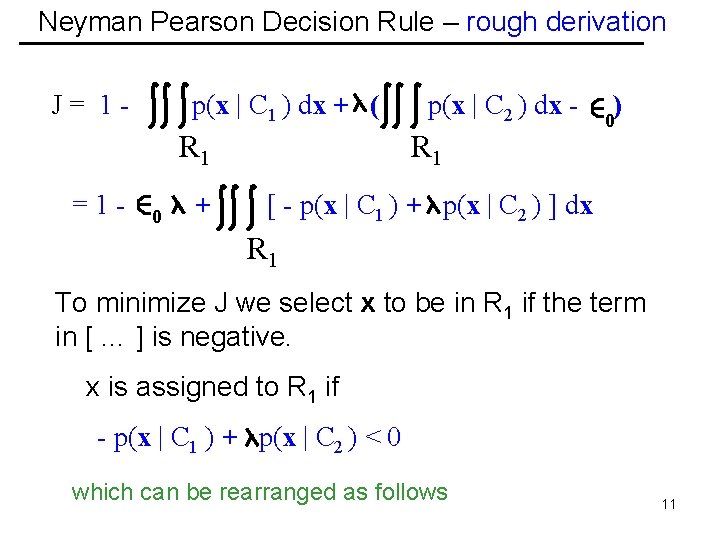

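Neyman-Pearson Decision Rule: Rough Derivation (continued)

$$J = 1 - \int_{R_1} p(x \mid C_1)\,dx + \lambda\left(\int_{R_1} p(x \mid C_2)\,dx - \alpha_0\right)$$

$$J = 1 - \lambda\,\alpha_0 + \int_{R_1} \left[\,-p(x \mid C_1) + \lambda\,p(x \mid C_2)\,\right] dx$$

Here $\lambda$ is the Lagrange multiplier and $\alpha_0$ is the acceptable false alarm rate. To minimize $J$, place $x$ in $R_1$ wherever the term in brackets is negative. That is, $x$ is assigned to $R_1$ if

$$-p(x \mid C_1) + \lambda\,p(x \mid C_2) < 0$$

which can be rearranged as follows.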

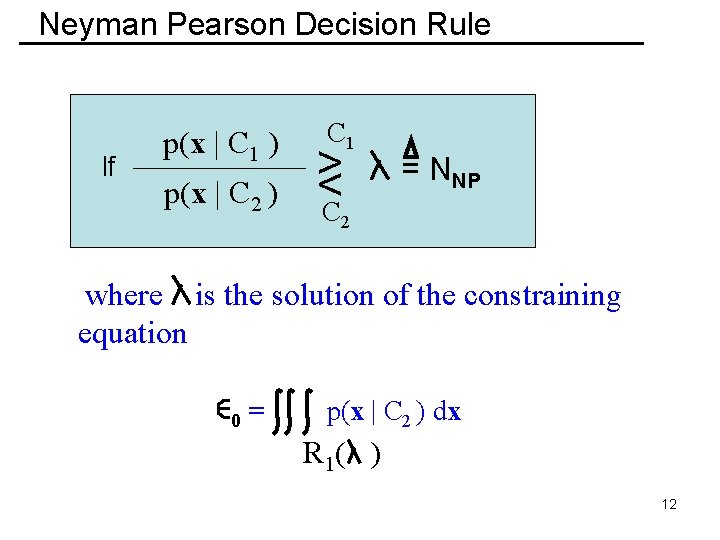

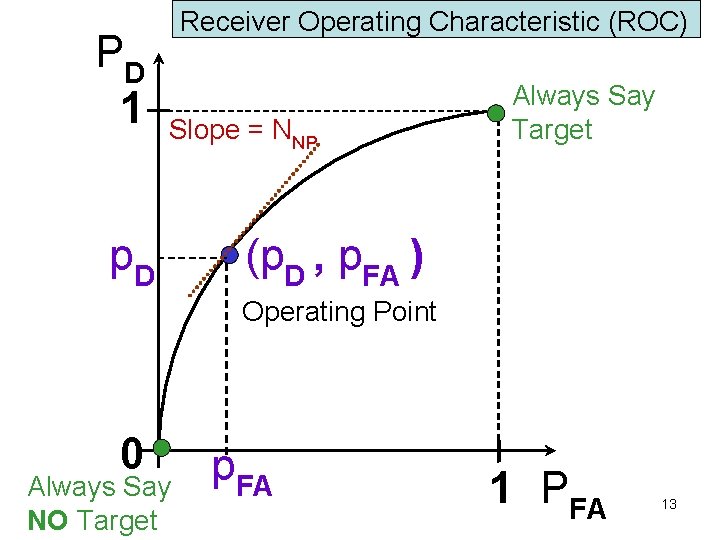

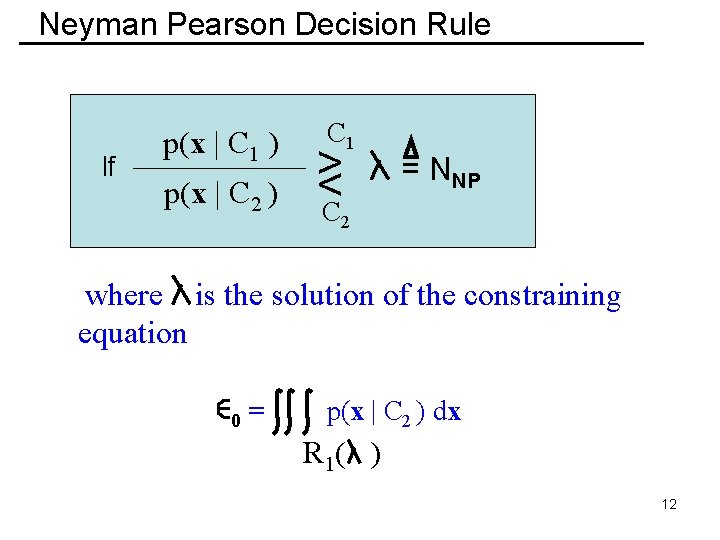

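Neyman-Pearson Decision Rule

$$\frac{p(x \mid C_1)}{p(x \mid C_2)} \underset{C_2}{\overset{C_1}{\gtrless}} \lambda = N_{NP}$$

That is, decide $C_1$ if the likelihood ratio exceeds $N_{NP}$ and decide $C_2$ if it is smaller, where $\lambda$ is the solution of the constraining equation

$$\alpha_0 = \int_{R_1(\lambda)} p(x \mid C_2)\,dx$$

and $\alpha_0$ is the acceptable false alarm rate.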
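Solving the constraining equation is easiest to see in a special case. The sketch below assumes scalar Gaussian class densities with equal standard deviation and a target mean above the no-target mean. That assumption is not in the lecture. It makes the likelihood ratio increasing in $x$, so the region $R_1$ is simply $x > t$. The function name and argument names are hypothetical.

```python
from statistics import NormalDist


def neyman_pearson_gaussian(mu_target, mu_noise, sigma, alpha0):
    """Neyman-Pearson design for two equal-variance scalar Gaussians.

    Assumes mu_target > mu_noise, so R1 = {x > t}. Returns the threshold t
    on x, the likelihood ratio threshold N_NP, and the achieved (P_FA, P_D).
    """
    if mu_target <= mu_noise:
        raise ValueError("this sketch assumes mu_target > mu_noise")
    target = NormalDist(mu_target, sigma)   # p(x | C1)
    noise = NormalDist(mu_noise, sigma)     # p(x | C2)
    # Constraint: alpha0 = integral of p(x | C2) over x > t.
    t = noise.inv_cdf(1.0 - alpha0)
    # The likelihood ratio evaluated at the boundary is the threshold N_NP.
    n_np = target.pdf(t) / noise.pdf(t)
    p_fa = 1.0 - noise.cdf(t)
    p_d = 1.0 - target.cdf(t)
    return t, n_np, p_fa, p_d


t, n_np, p_fa, p_d = neyman_pearson_gaussian(2.0, 0.0, 1.0, 0.05)
print(f"t={t:.4f}  N_NP={n_np:.4f}  P_FA={p_fa:.4f}  P_D={p_d:.4f}")
```

For densities without a monotone likelihood ratio, the region $R_1$ is no longer a single interval and the constraint generally has to be solved numerically.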

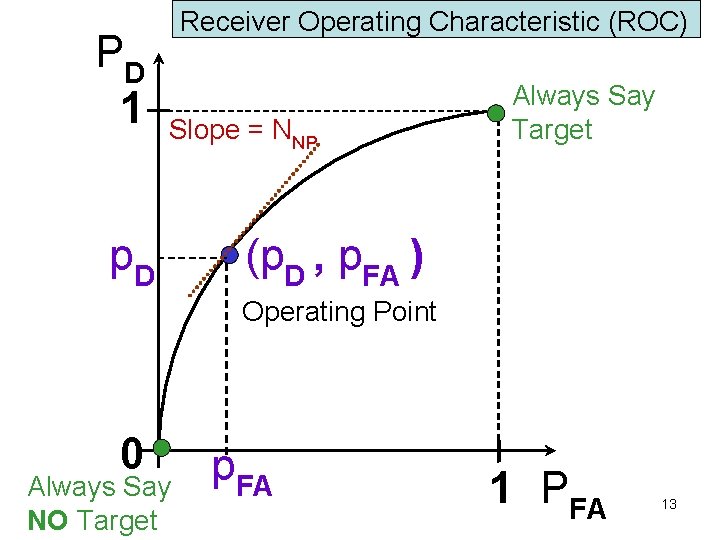

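Receiver Operating Characteristic (ROC)

Figure: plot of $P_D$ (vertical axis, 0 to 1) against $P_{FA}$ (horizontal axis, 0 to 1). The curve runs from the origin ("always say no target") to the point (1, 1) ("always say target"). At the operating point, where $P_{FA} = p_{FA}$ and $P_D = p_D$, the slope of the curve equals the threshold $N_{NP}$.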
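The slope property can be checked numerically. The sketch below assumes two equal-variance scalar Gaussians, declares "target" when $x$ exceeds a threshold, and compares a finite-difference slope of the ROC with the likelihood ratio at that threshold. The densities, parameter values, and function names are illustrative assumptions.

```python
from statistics import NormalDist


def roc_point(threshold, target, noise):
    """Return (P_FA, P_D) when 'target' is declared for x > threshold."""
    return 1.0 - noise.cdf(threshold), 1.0 - target.cdf(threshold)


def roc_slope(threshold, target, noise, h=1e-4):
    """Central-difference estimate of dP_D / dP_FA at the given threshold."""
    fa_lo, d_lo = roc_point(threshold + h, target, noise)
    fa_hi, d_hi = roc_point(threshold - h, target, noise)
    return (d_hi - d_lo) / (fa_hi - fa_lo)


target = NormalDist(2.0, 1.0)   # assumed p(x | C1)
noise = NormalDist(0.0, 1.0)    # assumed p(x | C2)

# Sweep the threshold from high to low: the curve moves from near (0, 0)
# toward (1, 1).
curve = [roc_point(t, target, noise) for t in (4.0, 3.0, 2.0, 1.0, 0.0, -1.0, -2.0)]

t0 = 1.5
slope = roc_slope(t0, target, noise)
ratio = target.pdf(t0) / noise.pdf(t0)   # likelihood ratio at the threshold
print(f"slope={slope:.4f}  likelihood ratio={ratio:.4f}")
```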

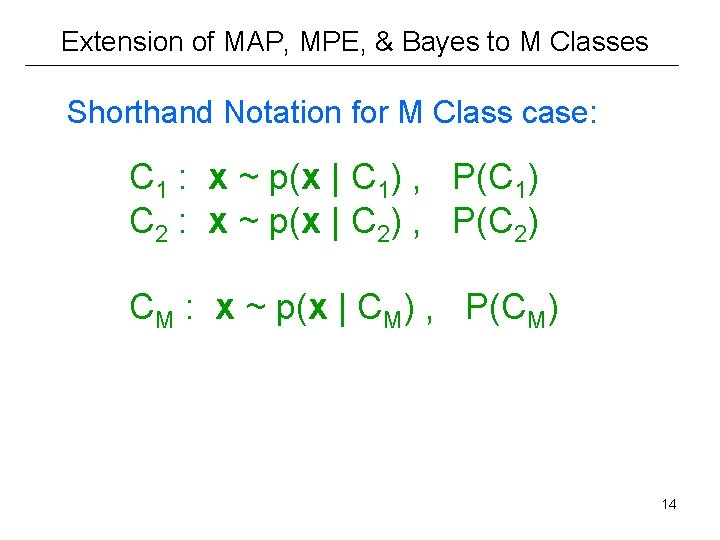

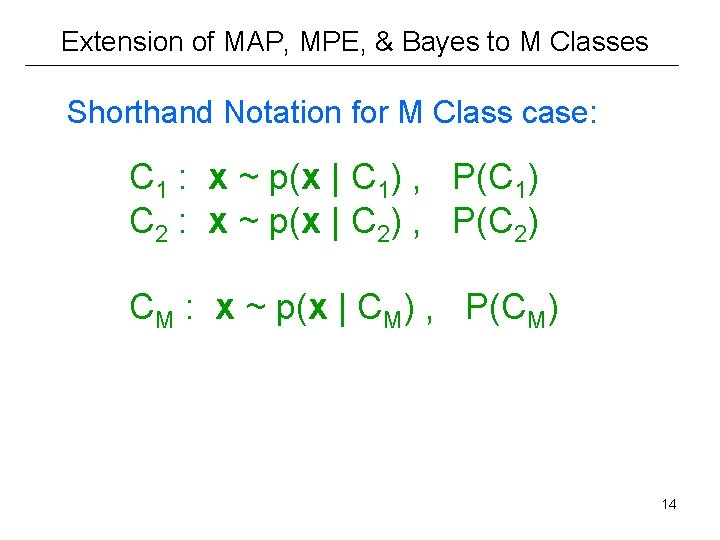

Extension of MAP, MPE, and Bayes to M Classes

Shorthand notation for the M-class case:

- $C_1$: $x \sim p(x \mid C_1)$, $P(C_1)$
- $C_2$: $x \sim p(x \mid C_2)$, $P(C_2)$
- $\vdots$
- $C_M$: $x \sim p(x \mid C_M)$, $P(C_M)$

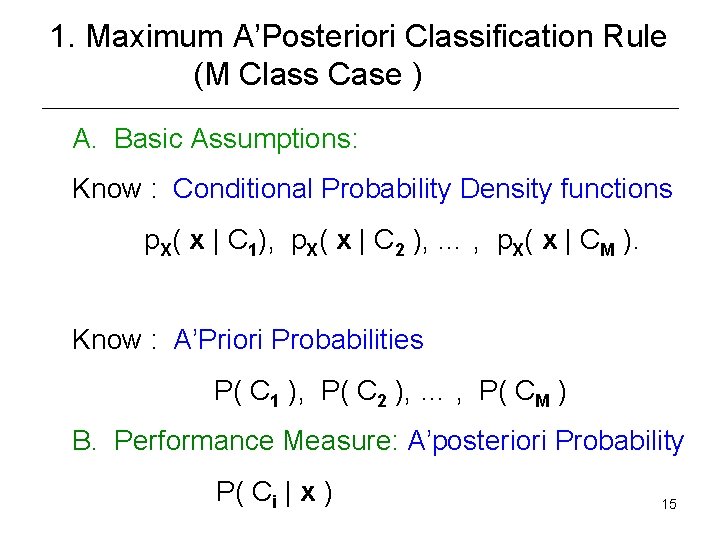

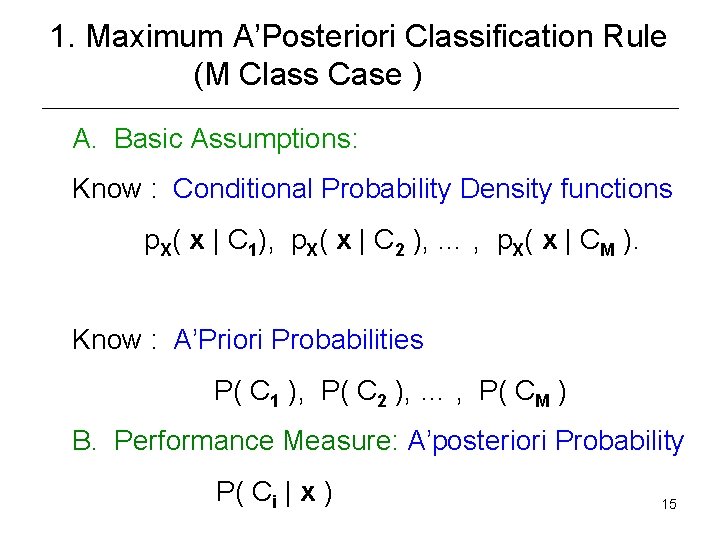

1. Maximum A Posteriori Classification Rule (M-Class Case)

A. Basic assumptions:

- Known: conditional probability density functions $p_X(x \mid C_1), p_X(x \mid C_2), \ldots, p_X(x \mid C_M)$
- Known: a priori probabilities $P(C_1), P(C_2), \ldots, P(C_M)$

B. Performance measure: a posteriori probability $P(C_i \mid x)$

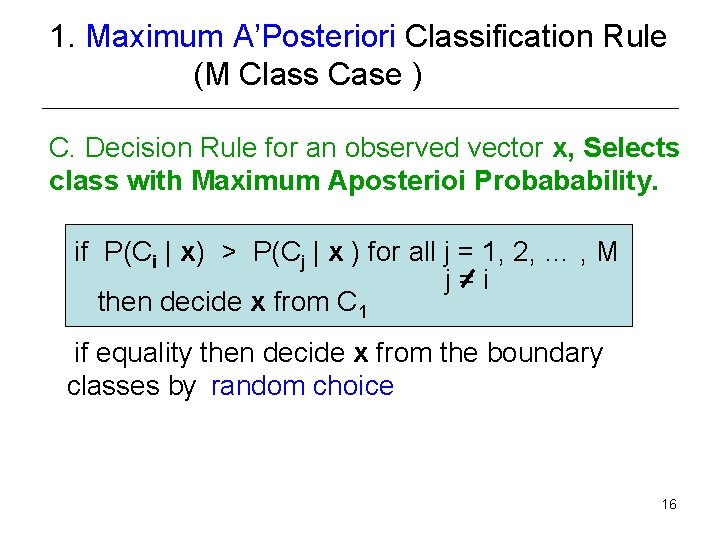

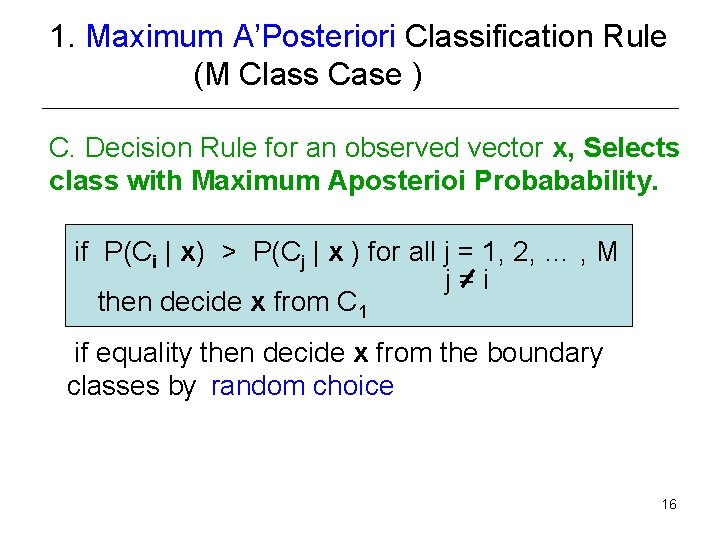

1. Maximum A Posteriori Classification Rule (M-Class Case)

C. Decision rule: for an observed vector $x$, select the class with the maximum a posteriori probability.

If $P(C_i \mid x) > P(C_j \mid x)$ for all $j = 1, 2, \ldots, M$ with $j \ne i$, then decide that $x$ is from $C_i$. If there is equality, choose among the tied (boundary) classes at random.

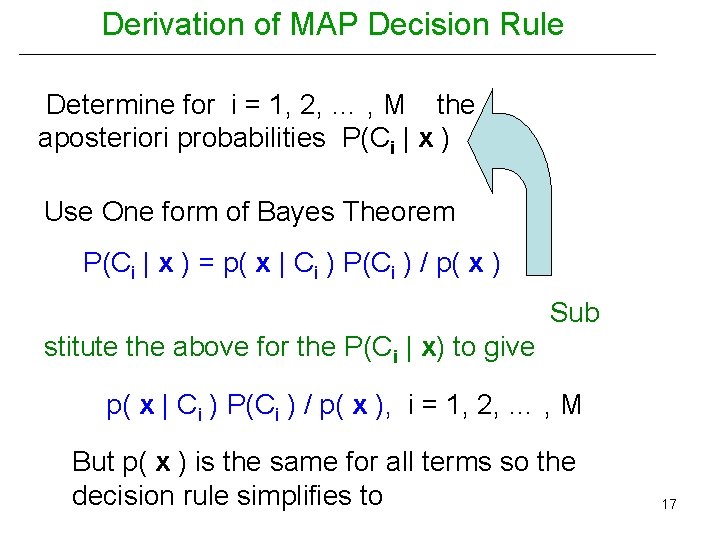

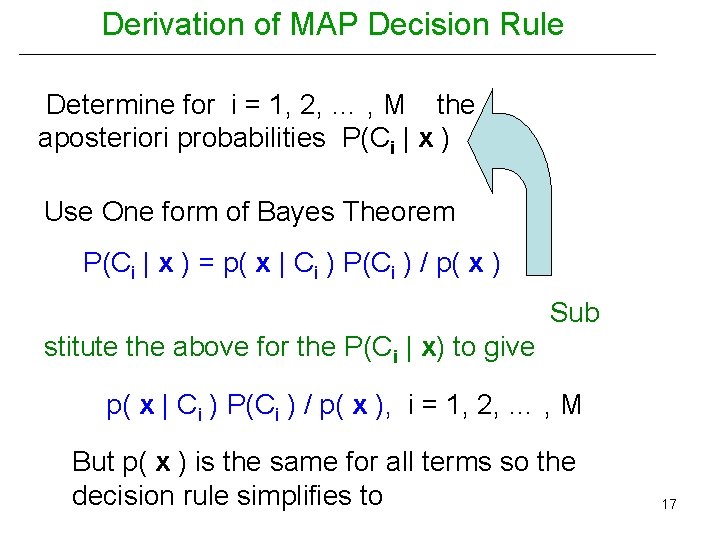

Derivation of MAP Decision Rule

Determine the a posteriori probabilities $P(C_i \mid x)$ for $i = 1, 2, \ldots, M$. Use one form of Bayes' theorem:

$$P(C_i \mid x) = \frac{p(x \mid C_i)\,P(C_i)}{p(x)}$$

Substituting this for each $P(C_i \mid x)$ gives the quantities to compare:

$$\frac{p(x \mid C_i)\,P(C_i)}{p(x)}, \quad i = 1, 2, \ldots, M$$

But $p(x)$ is the same for all terms, so it can be dropped and the decision rule simplifies to a comparison of the products $p(x \mid C_i)\,P(C_i)$.

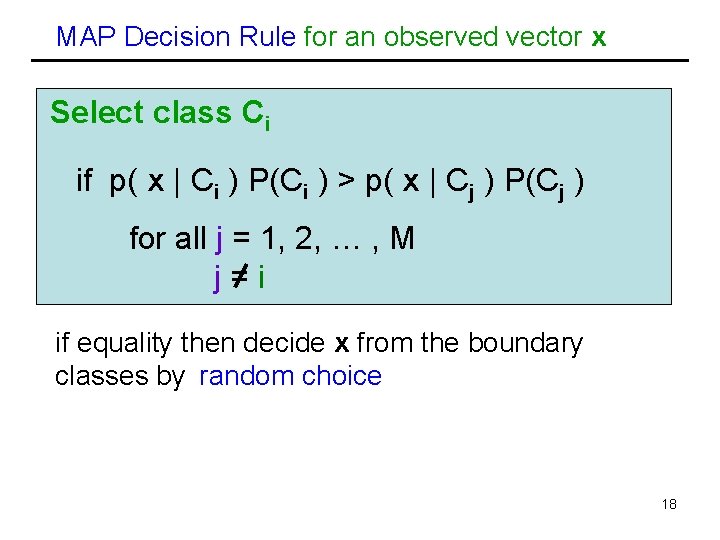

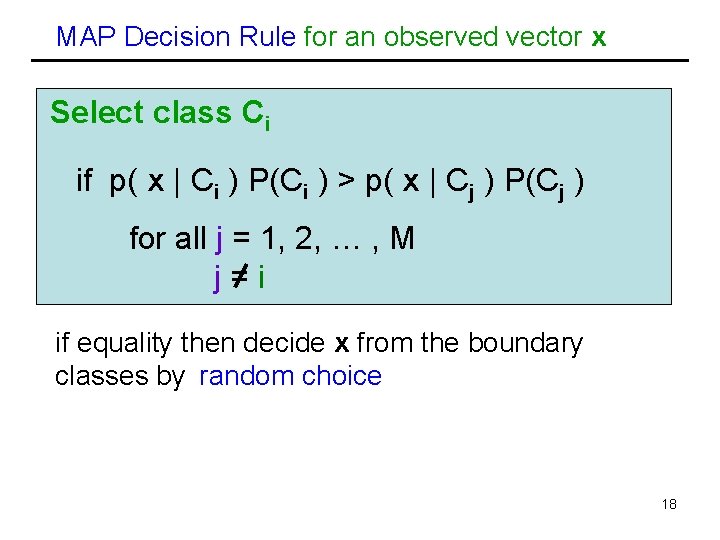

MAP Decision Rule for an observed vector $x$

Select class $C_i$ if

$$p(x \mid C_i)\,P(C_i) > p(x \mid C_j)\,P(C_j) \quad \text{for all } j = 1, 2, \ldots, M,\ j \ne i$$

If there is equality, choose among the tied (boundary) classes at random.
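A minimal sketch of the M-class MAP rule follows, including the random tie-break. The scalar Gaussian class densities, the priors, and the function name `map_decide` are illustrative assumptions. Classes are indexed from 0 in the code.

```python
import random
from statistics import NormalDist


def map_decide(x, densities, priors, rng=random):
    """Return the 0-based index i that maximizes p(x | C_i) P(C_i).

    densities is a list of callables p(x | C_i); priors is the matching
    list of P(C_i). Ties are broken by a random choice among the tied
    classes, as the rule specifies.
    """
    scores = [pdf(x) * prior for pdf, prior in zip(densities, priors)]
    best = max(scores)
    tied = [i for i, score in enumerate(scores) if score == best]
    return rng.choice(tied)


# Three assumed classes with unit-variance Gaussian densities.
densities = [NormalDist(-2.0, 1.0).pdf, NormalDist(0.0, 1.0).pdf, NormalDist(2.0, 1.0).pdf]
equal_priors = [1 / 3, 1 / 3, 1 / 3]
skewed_priors = [0.98, 0.01, 0.01]

print(map_decide(0.1, densities, equal_priors))
print(map_decide(0.0, densities, skewed_priors))
```

The second call illustrates how a strong prior can override the likelihood: the observation sits at the mean of the middle class, yet the heavily favored first class has the larger product.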

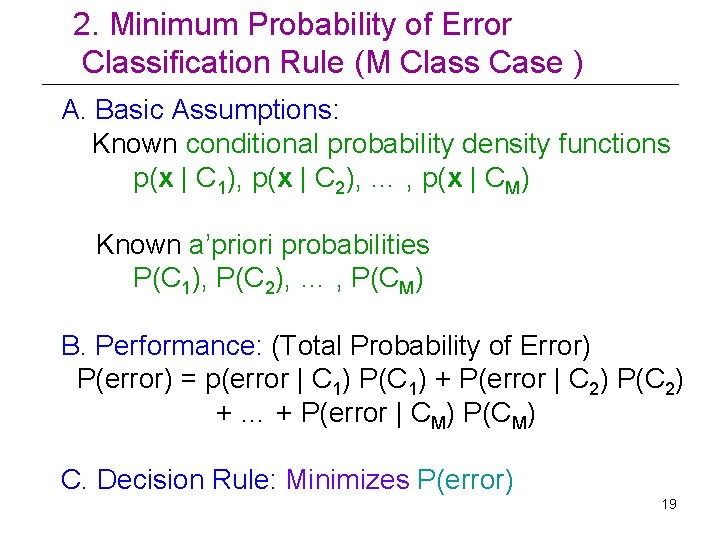

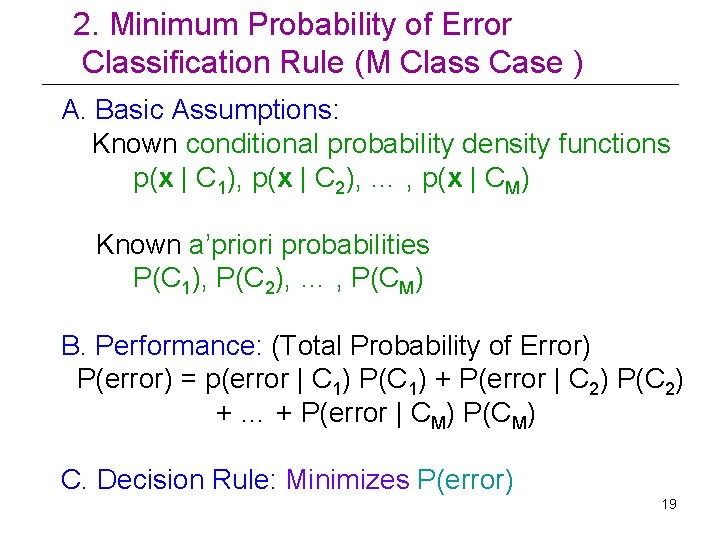

2. Minimum Probability of Error Classification Rule (M-Class Case)

A. Basic assumptions:

- Known conditional probability density functions $p(x \mid C_1), p(x \mid C_2), \ldots, p(x \mid C_M)$
- Known a priori probabilities $P(C_1), P(C_2), \ldots, P(C_M)$

B. Performance (total probability of error):

$$P(\text{error}) = P(\text{error} \mid C_1)\,P(C_1) + P(\text{error} \mid C_2)\,P(C_2) + \cdots + P(\text{error} \mid C_M)\,P(C_M)$$

C. Decision rule: minimizes $P(\text{error})$.

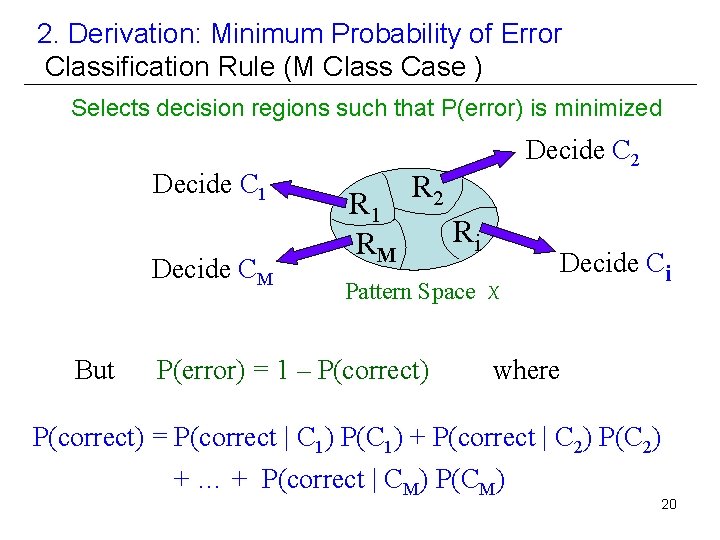

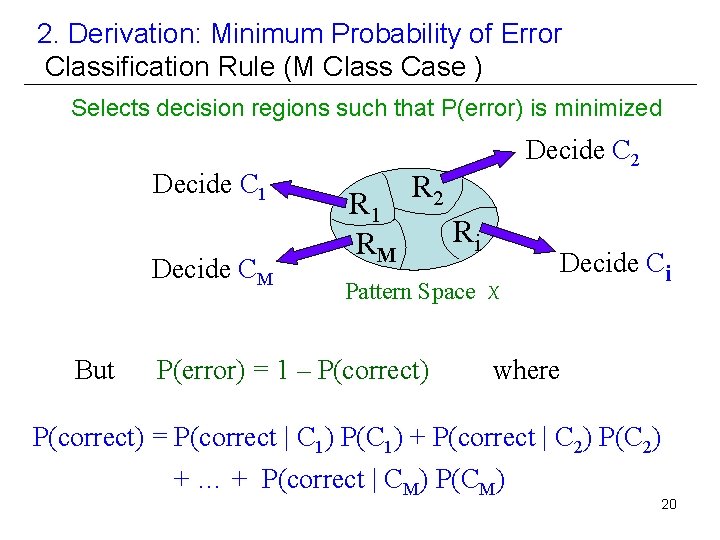

2. Derivation: Minimum Probability of Error Classification Rule (M-Class Case)

Select the decision regions such that $P(\text{error})$ is minimized. The pattern space $X$ is partitioned into regions $R_1, R_2, \ldots, R_i, \ldots, R_M$, and the rule decides $C_i$ when $x$ falls in $R_i$.

But $P(\text{error}) = 1 - P(\text{correct})$, where

$$P(\text{correct}) = P(\text{correct} \mid C_1)\,P(C_1) + P(\text{correct} \mid C_2)\,P(C_2) + \cdots + P(\text{correct} \mid C_M)\,P(C_M)$$

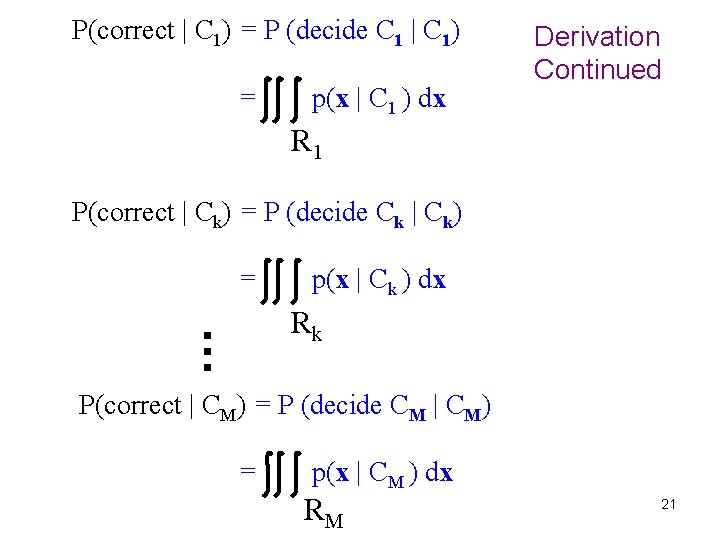

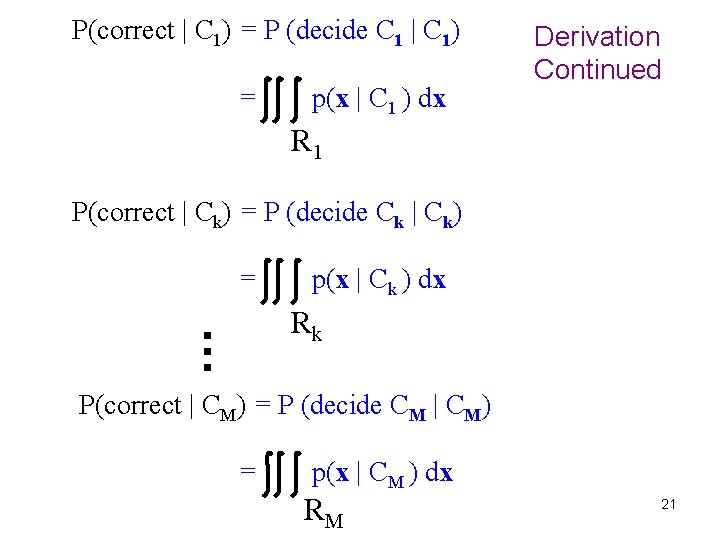

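Derivation Continued

$$\begin{aligned}
P(\text{correct} \mid C_1) &= P(\text{decide } C_1 \mid C_1) = \int_{R_1} p(x \mid C_1)\,dx \\
&\;\;\vdots \\
P(\text{correct} \mid C_k) &= P(\text{decide } C_k \mid C_k) = \int_{R_k} p(x \mid C_k)\,dx \\
&\;\;\vdots \\
P(\text{correct} \mid C_M) &= P(\text{decide } C_M \mid C_M) = \int_{R_M} p(x \mid C_M)\,dx
\end{aligned}$$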

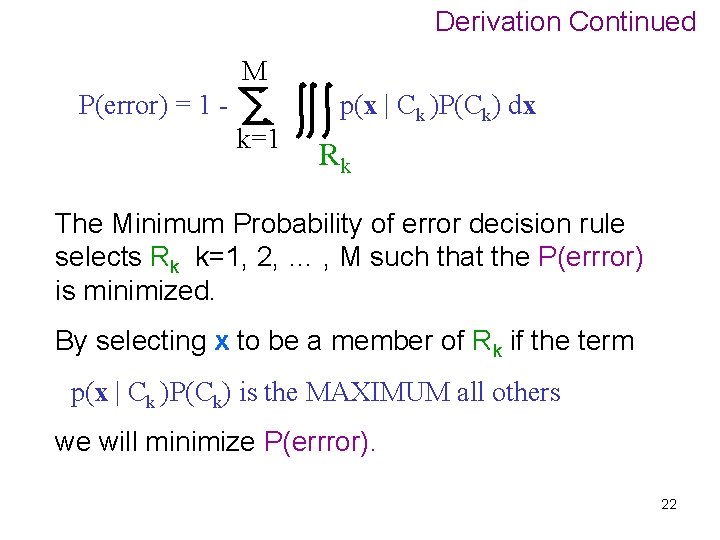

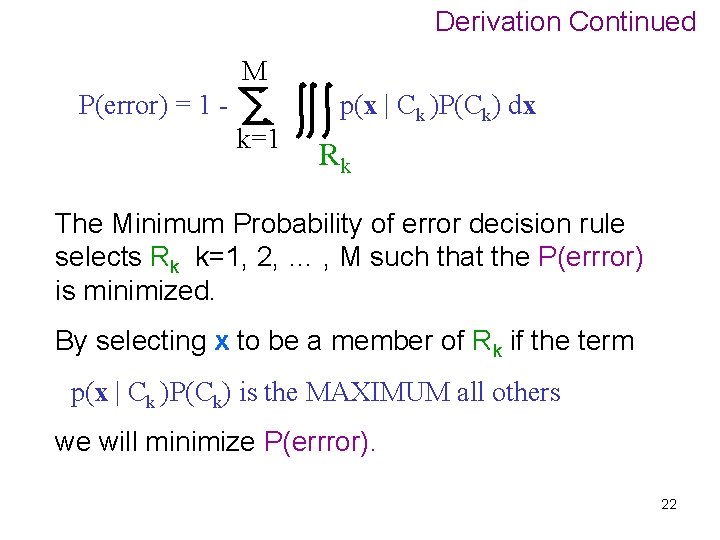

Derivation Continued

$$P(\text{error}) = 1 - \sum_{k=1}^{M} \int_{R_k} p(x \mid C_k)\,P(C_k)\,dx$$

The minimum probability of error decision rule selects the regions $R_k$, $k = 1, 2, \ldots, M$, such that $P(\text{error})$ is minimized. Assigning $x$ to $R_k$ whenever the term $p(x \mid C_k)\,P(C_k)$ is the maximum over all classes makes the sum as large as possible and therefore minimizes $P(\text{error})$.

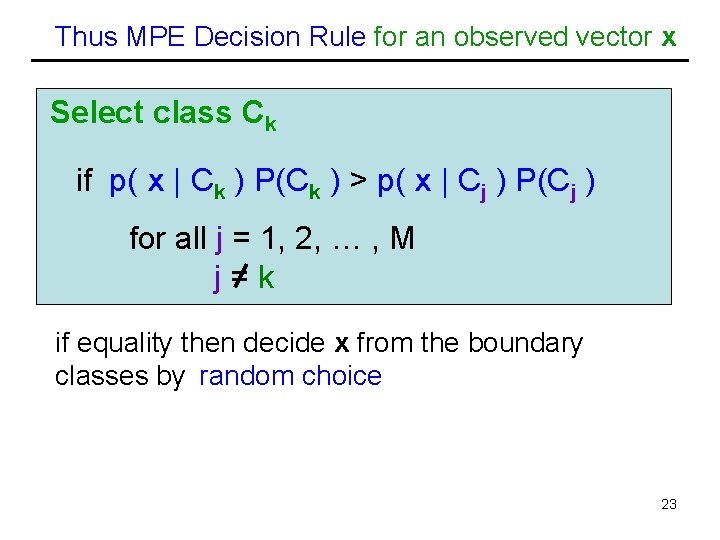

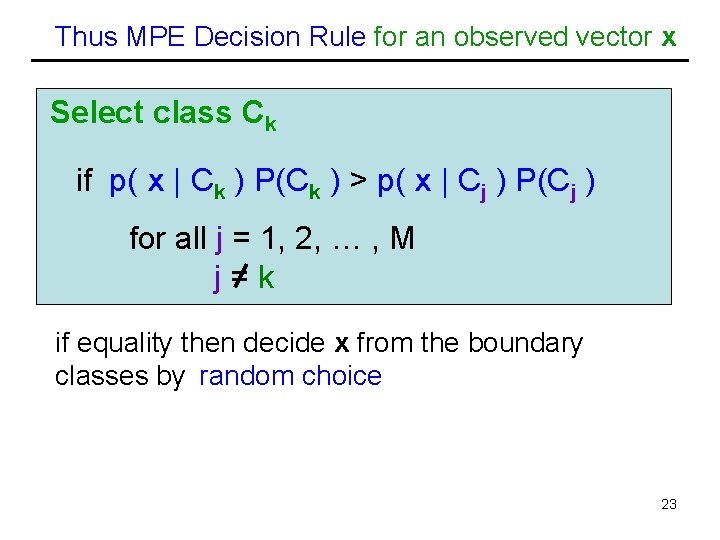

Thus, the MPE decision rule for an observed vector $x$:

Select class $C_k$ if

$$p(x \mid C_k)\,P(C_k) > p(x \mid C_j)\,P(C_j) \quad \text{for all } j = 1, 2, \ldots, M,\ j \ne k$$

If there is equality, choose among the tied (boundary) classes at random.
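The claim that this rule minimizes the error probability can be checked numerically on a small example. The sketch below approximates $P(\text{error}) = 1 - \sum_k \int_{R_k} p(x \mid C_k)\,P(C_k)\,dx$ with a midpoint rule and compares the MPE rule against a rule that ignores the priors. The two Gaussian densities, the priors, the integration interval, and all function names are illustrative assumptions. Ties are resolved by taking the first maximum rather than by random choice, which does not affect the integral.

```python
from statistics import NormalDist


def mpe_decide(x, densities, priors):
    """0-based index k that maximizes p(x | C_k) P(C_k)."""
    scores = [pdf(x) * p for pdf, p in zip(densities, priors)]
    return scores.index(max(scores))


def ml_decide(x, densities):
    """Comparison rule that ignores the priors (maximum likelihood)."""
    values = [pdf(x) for pdf in densities]
    return values.index(max(values))


def error_probability(decide, densities, priors, lo, hi, n=20000):
    """Midpoint-rule estimate of 1 - sum_k int_{R_k} p(x|C_k) P(C_k) dx.

    Probability mass outside [lo, hi] is counted as error, so the interval
    should comfortably cover every class density.
    """
    h = (hi - lo) / n
    p_correct = 0.0
    for i in range(n):
        x = lo + (i + 0.5) * h
        k = decide(x)                      # region R_k that contains x
        p_correct += densities[k](x) * priors[k] * h
    return 1.0 - p_correct


densities = [NormalDist(0.0, 1.0).pdf, NormalDist(2.0, 1.0).pdf]
priors = [0.8, 0.2]

err_mpe = error_probability(lambda x: mpe_decide(x, densities, priors),
                            densities, priors, -10.0, 12.0)
err_ml = error_probability(lambda x: ml_decide(x, densities),
                           densities, priors, -10.0, 12.0)
print(f"P(error) MPE rule: {err_mpe:.4f}   prior-blind rule: {err_ml:.4f}")
```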

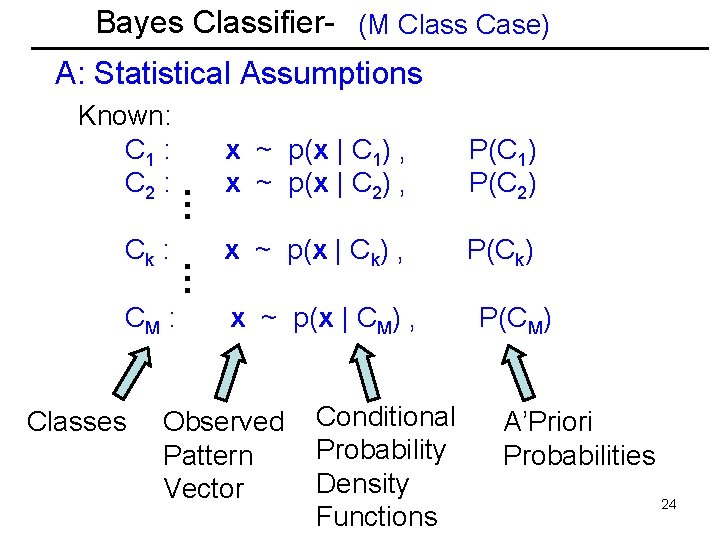

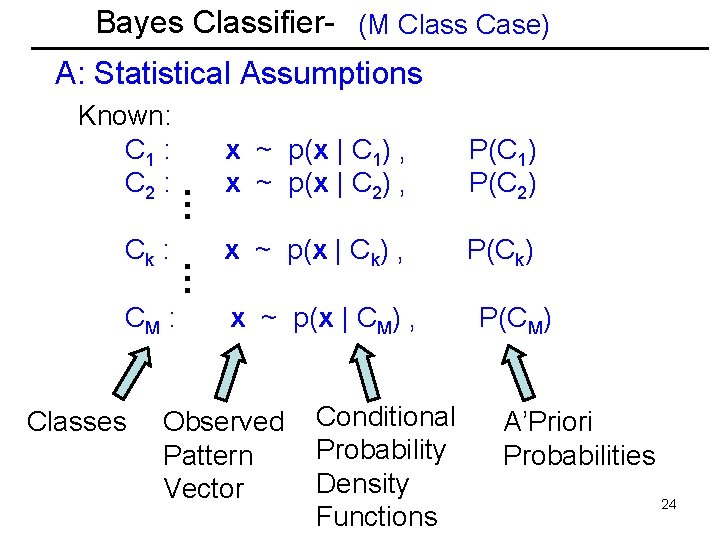

Bayes Classifier (M-Class Case)

A. Statistical assumptions. Known for each class:

- $C_1$: $x \sim p(x \mid C_1)$, $P(C_1)$
- $C_2$: $x \sim p(x \mid C_2)$, $P(C_2)$
- $\vdots$
- $C_k$: $x \sim p(x \mid C_k)$, $P(C_k)$
- $\vdots$
- $C_M$: $x \sim p(x \mid C_M)$, $P(C_M)$

Here $x$ is the observed pattern vector, $p(x \mid C_k)$ are the conditional probability density functions, and $P(C_k)$ are the a priori probabilities.

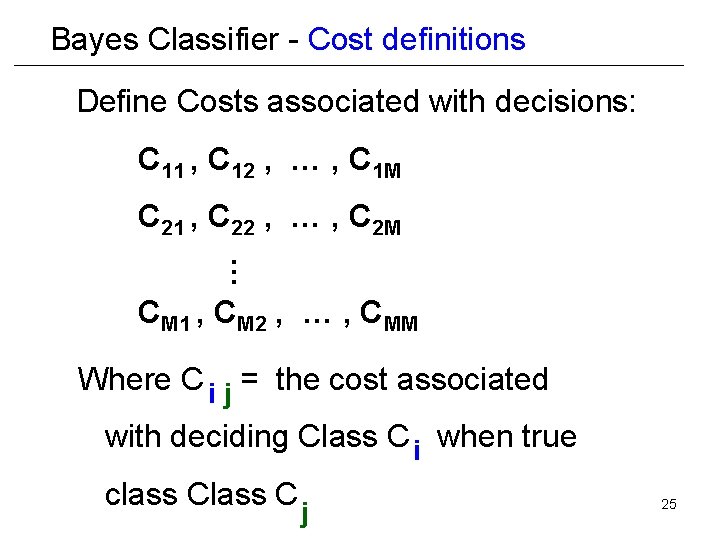

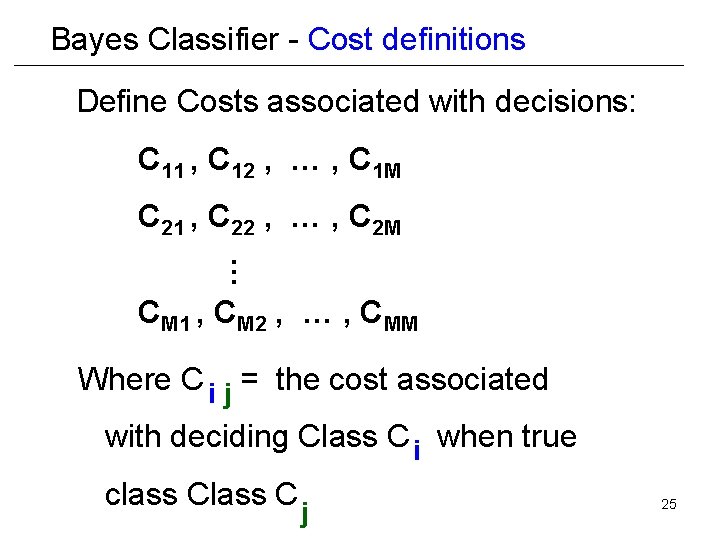

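Bayes Classifier: Cost Definitions

Define the costs associated with decisions:

$$\begin{matrix}
C_{11}, & C_{12}, & \ldots, & C_{1M} \\
C_{21}, & C_{22}, & \ldots, & C_{2M} \\
\vdots & & & \vdots \\
C_{M1}, & C_{M2}, & \ldots, & C_{MM}
\end{matrix}$$

where $C_{ij}$ is the cost associated with deciding class $C_i$ when the true class is $C_j$.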

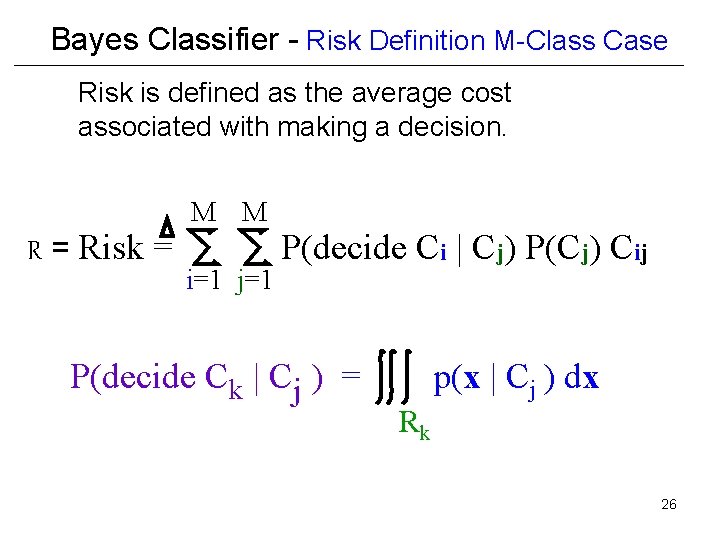

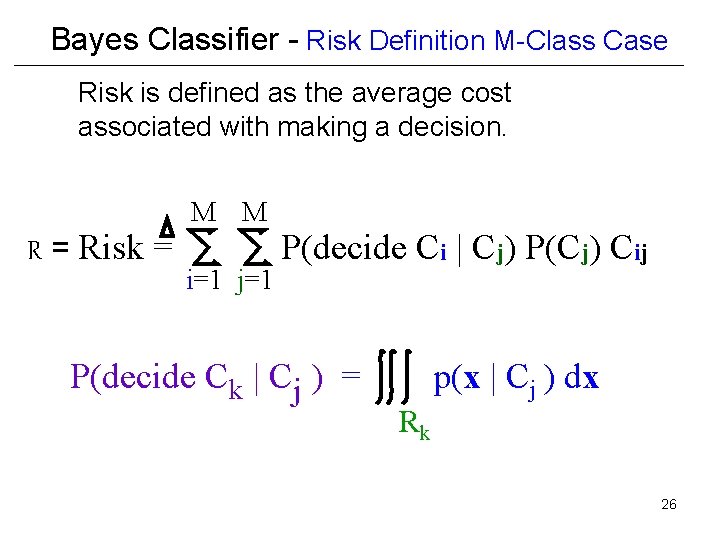

Bayes Classifier: Risk Definition (M-Class Case)

Risk is defined as the average cost associated with making a decision:

$$R = \text{Risk} = \sum_{i=1}^{M} \sum_{j=1}^{M} P(\text{decide } C_i \mid C_j)\,P(C_j)\,C_{ij}$$

where

$$P(\text{decide } C_k \mid C_j) = \int_{R_k} p(x \mid C_j)\,dx$$

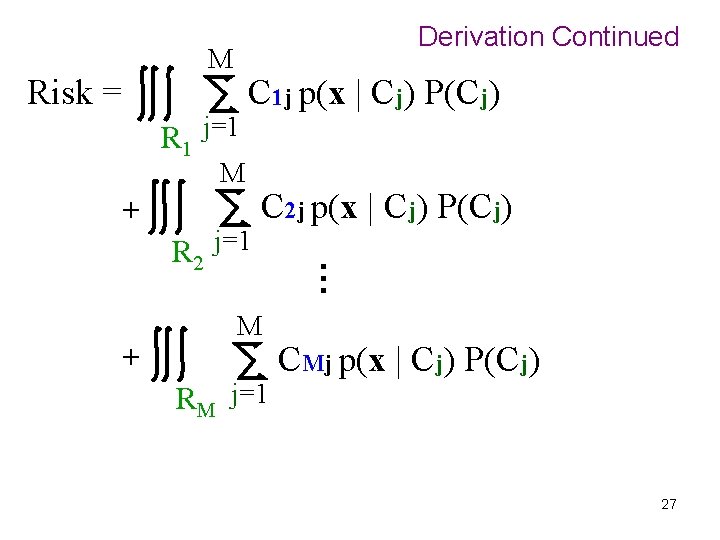

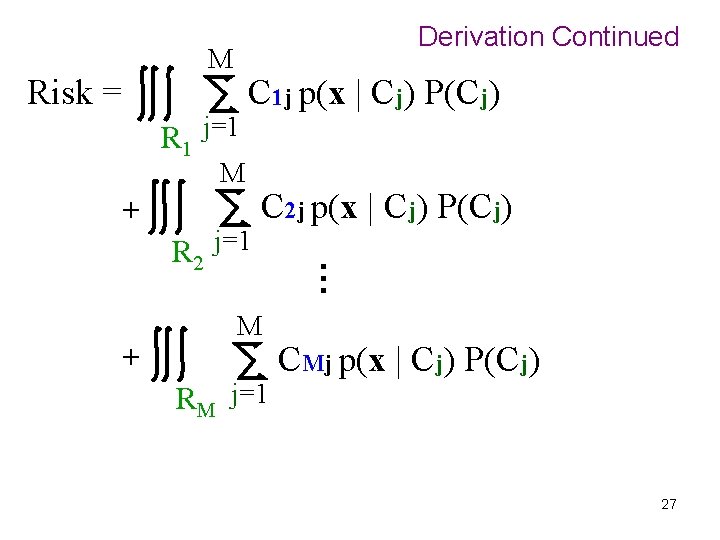

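Derivation Continued

$$\text{Risk} = \int_{R_1} \sum_{j=1}^{M} C_{1j}\,p(x \mid C_j)\,P(C_j)\,dx + \int_{R_2} \sum_{j=1}^{M} C_{2j}\,p(x \mid C_j)\,P(C_j)\,dx + \cdots + \int_{R_M} \sum_{j=1}^{M} C_{Mj}\,p(x \mid C_j)\,P(C_j)\,dx$$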

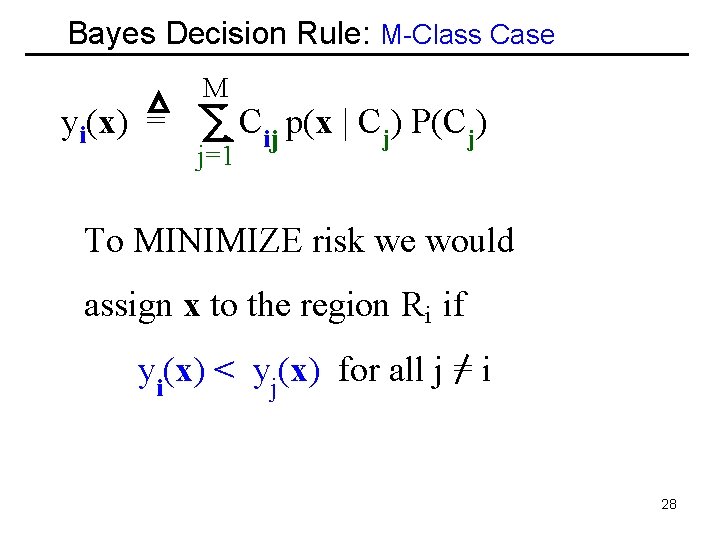

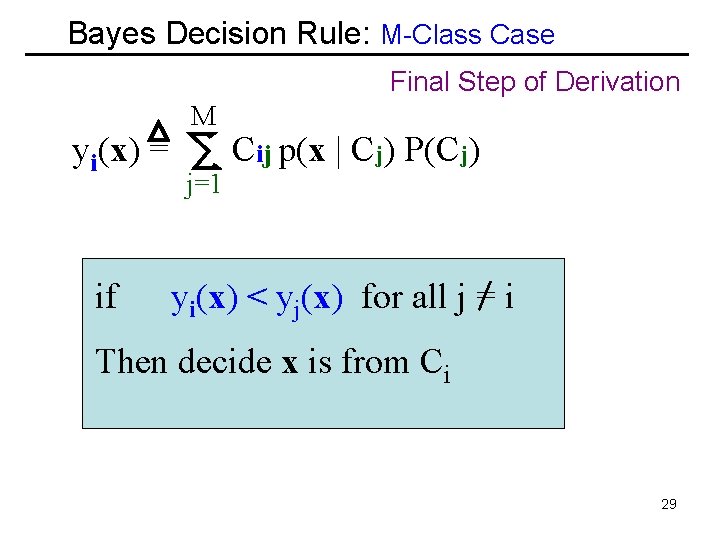

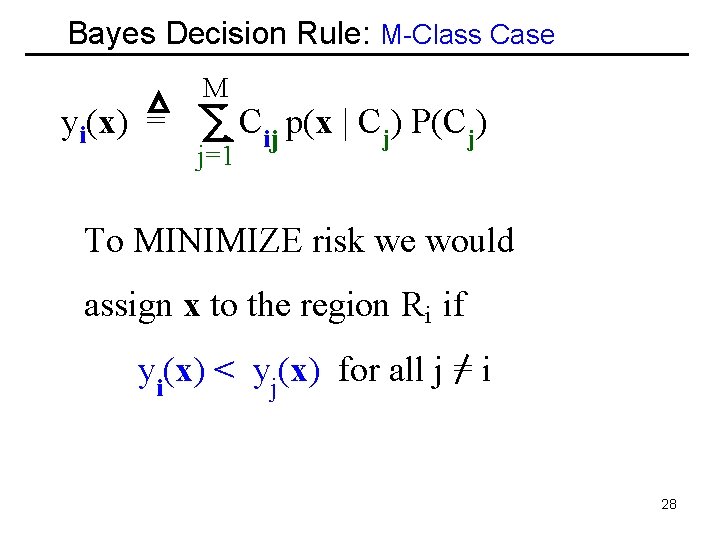

Bayes Decision Rule: M-Class Case

$$y_i(x) = \sum_{j=1}^{M} C_{ij}\,p(x \mid C_j)\,P(C_j)$$

To minimize the risk, assign $x$ to the region $R_i$ if $y_i(x) < y_j(x)$ for all $j \ne i$.

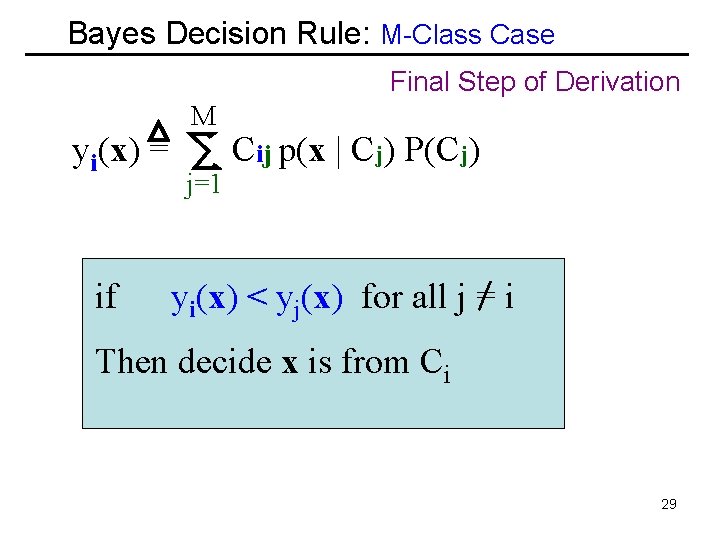

Bayes Decision Rule: M-Class Case, Final Step of Derivation

$$y_i(x) = \sum_{j=1}^{M} C_{ij}\,p(x \mid C_j)\,P(C_j)$$

If $y_i(x) < y_j(x)$ for all $j \ne i$, then decide that $x$ is from $C_i$.
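A minimal sketch of this rule follows. It computes $y_i(x)$ for every class and picks the smallest. The Gaussian class densities, the priors, both cost matrices, and the function name `bayes_decide` are illustrative assumptions. Classes are indexed from 0 in the code, and `costs[i][j]` plays the role of $C_{ij}$.

```python
from statistics import NormalDist


def bayes_decide(x, densities, priors, costs):
    """Return the 0-based index i that minimizes
    y_i(x) = sum_j C_ij p(x | C_j) P(C_j).

    costs[i][j] is the cost of deciding class i when the true class is j.
    The first minimum wins on a tie (a simplification in this sketch).
    """
    weighted = [pdf(x) * p for pdf, p in zip(densities, priors)]
    y = [sum(c_ij * w_j for c_ij, w_j in zip(row, weighted)) for row in costs]
    return y.index(min(y))


densities = [NormalDist(-2.0, 1.0).pdf, NormalDist(0.0, 1.0).pdf, NormalDist(2.0, 1.0).pdf]
priors = [1 / 3, 1 / 3, 1 / 3]

# Zero cost for correct decisions, unit cost for every error.
zero_one_costs = [[0, 1, 1],
                  [1, 0, 1],
                  [1, 1, 0]]

# Same, except that deciding class 1 when class 0 is true costs 10.
skewed_costs = [[0, 1, 1],
                [10, 0, 1],
                [1, 1, 0]]

x = -0.9
print(bayes_decide(x, densities, priors, zero_one_costs))
print(bayes_decide(x, densities, priors, skewed_costs))
```

With the zero-one cost matrix, minimizing $y_i(x)$ is the same as maximizing $p(x \mid C_i)\,P(C_i)$, so the rule coincides with the MAP and MPE rules. The skewed matrix moves the decision at the same observation toward the class whose misclassification is expensive.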

Summary

1. Neyman-Pearson decision rule and the Receiver Operating Characteristic (ROC)
2. M-class case: MAP decision rule
3. M-class case: MPE decision rule
4. M-class case: Bayes decision rule

End of Lecture 9