

NAMED DATA NETWORKING IN SCIENCE APPLICATIONS

Christos Papadopoulos, Colorado State University. CALTECH, Nov 20, 2017. Supported by NSF #1345236, #1340999 and #1659403.

Science Applications

- Scientific applications generate tremendous amounts of data
  - Climate: the current CMIP5 dataset is 3.5 PB, projected to grow into the exabytes with CMIP6
  - High Energy Physics (HEP): ATLAS produces 1 PB/s raw, filtered to 4 PB/yr
- Data is typically distributed to repositories
- Sciences develop custom software for dataset discovery, publishing, and retrieval, e.g. ESGF, xrootd

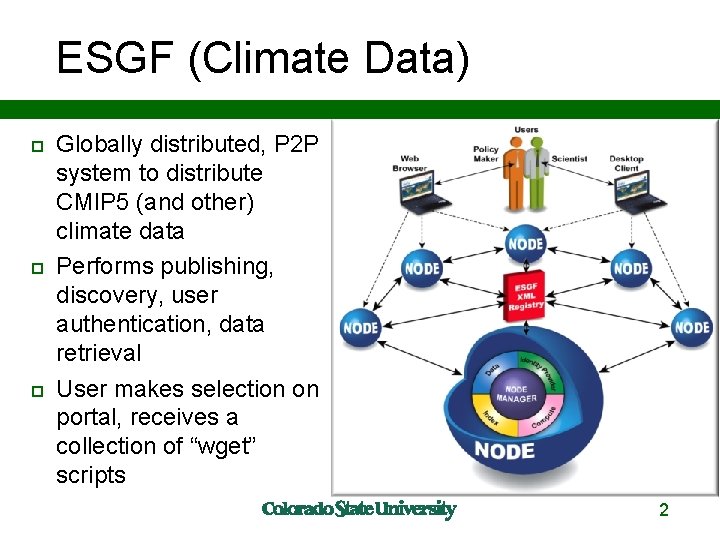

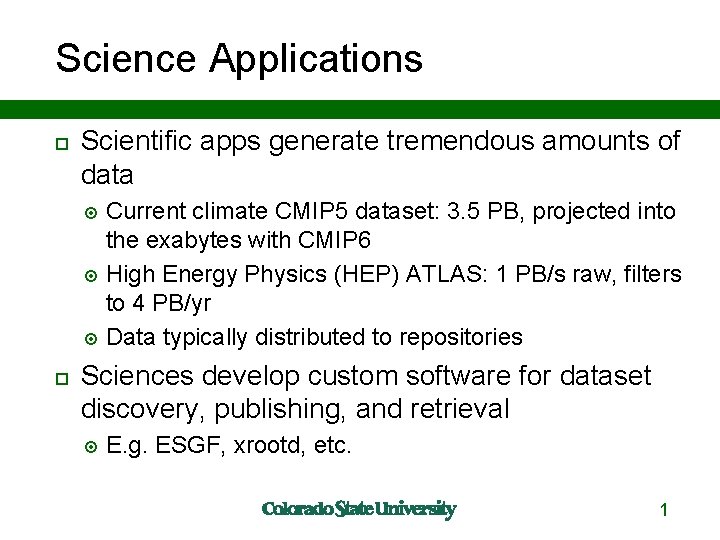

ESGF (Climate Data)

- Globally distributed P2P system for distributing CMIP5 (and other) climate data
- Performs publishing, discovery, user authentication, and data retrieval
- User makes a selection on the portal and receives a collection of "wget" scripts

ESGF Server Locations

- User specifies which ESGF server to connect to in order to receive data

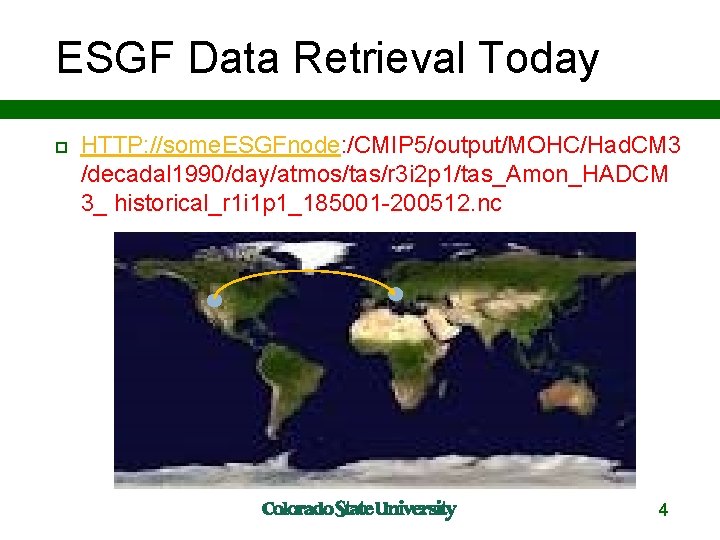

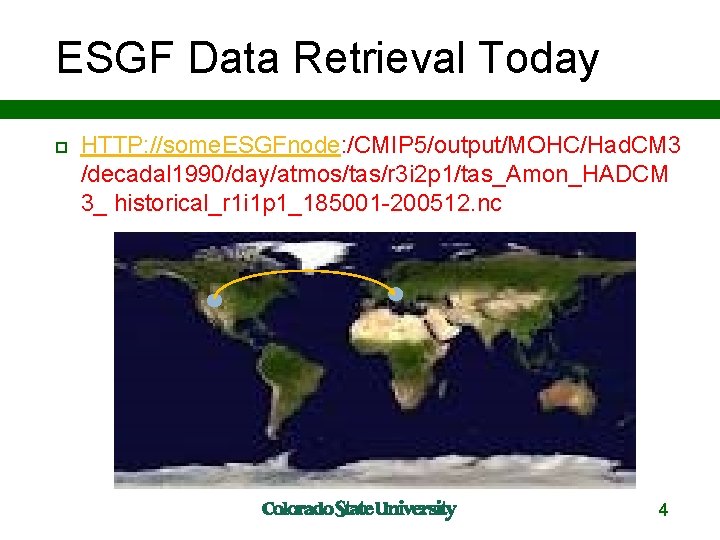

ESGF Data Retrieval Today

http://some.ESGFnode:/CMIP5/output/MOHC/HadCM3/decadal1990/day/atmos/tas/r3i2p1/tas_Amon_HADCM3_historical_r1i1p1_185001-200512.nc

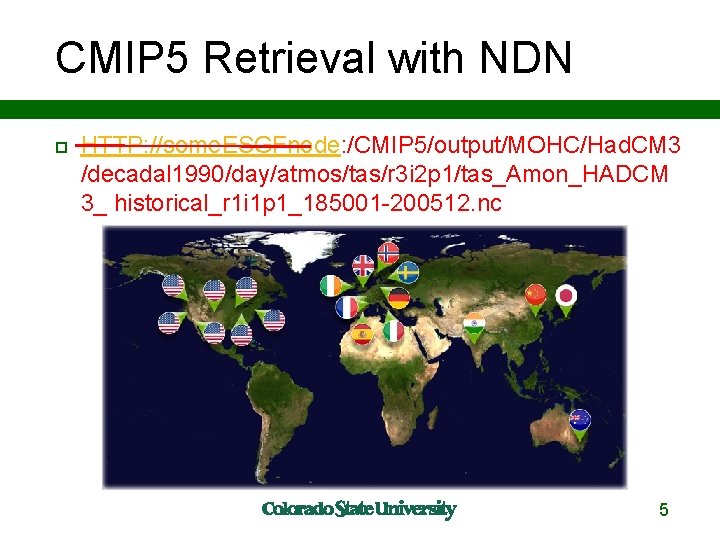

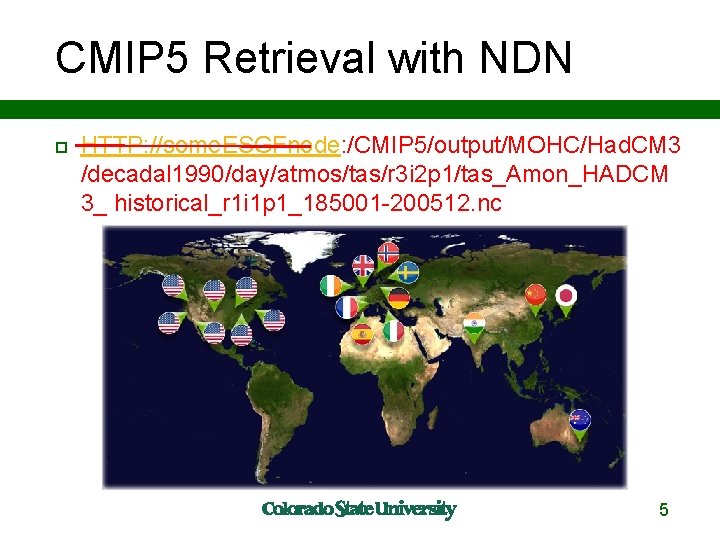

CMIP5 Retrieval with NDN

http://some.ESGFnode:/CMIP5/output/MOHC/HadCM3/decadal1990/day/atmos/tas/r3i2p1/tas_Amon_HADCM3_historical_r1i1p1_185001-200512.nc

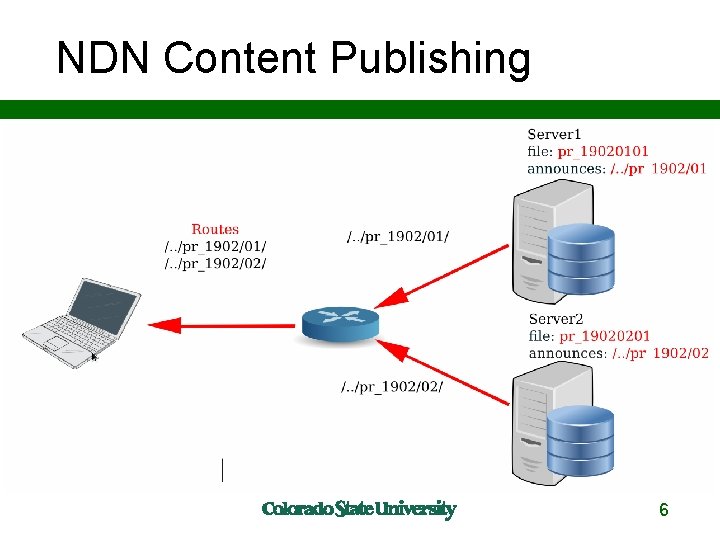

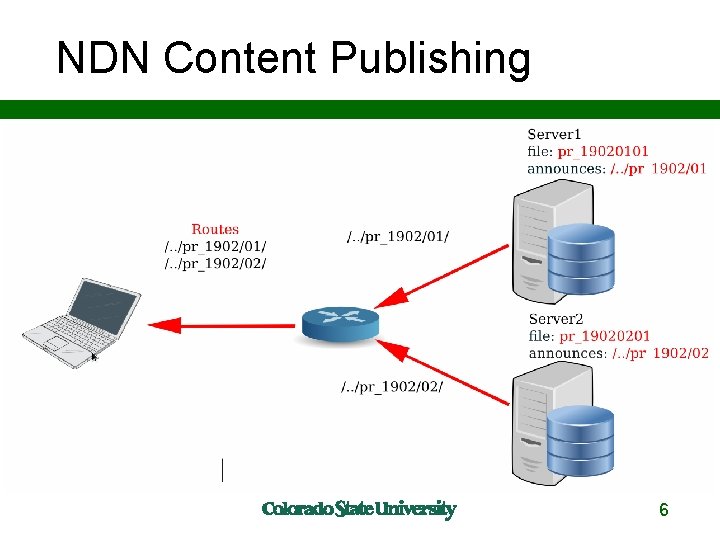

NDN Content Publishing

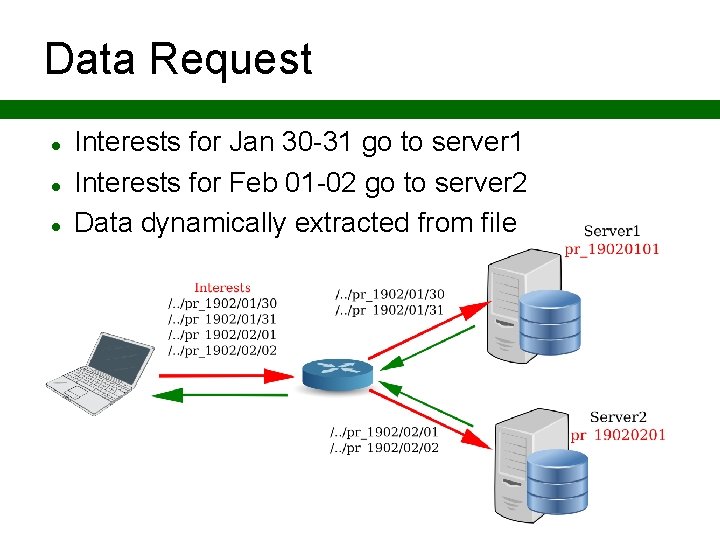

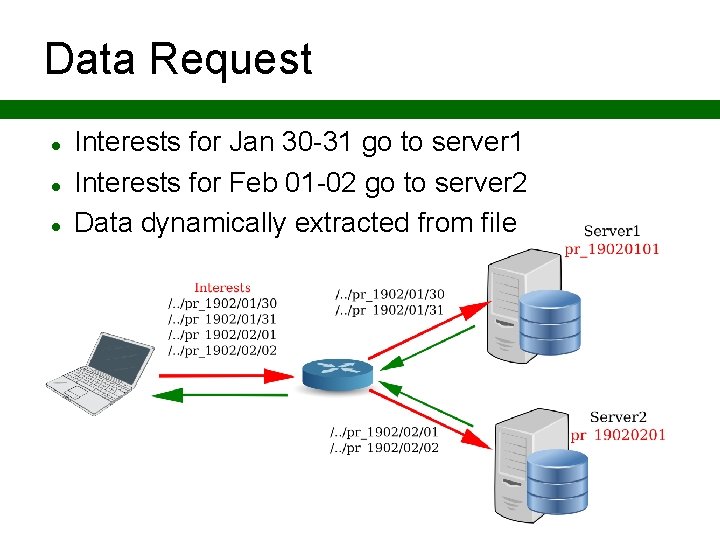

Data Request

- Interests for Jan 30-31 go to server 1
- Interests for Feb 01-02 go to server 2
- Data is dynamically extracted from the file
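A minimal sketch of how Interests for different dates could reach different servers, assuming each server announces the name prefixes for the dates it holds and forwarding uses longest-prefix match on name components. The prefixes, the year, and the server labels below are hypothetical; this is not the testbed's actual forwarding table.

```python
def longest_prefix_match(fib, name):
    """Return the next hop for the longest announced prefix of `name`, or None."""
    components = [c for c in name.split("/") if c]
    # Try the full name first, then drop one trailing component at a time.
    for end in range(len(components), 0, -1):
        prefix = "/" + "/".join(components[:end])
        if prefix in fib:
            return fib[prefix]
    return None


# Hypothetical forwarding table: each server announces the days it stores.
fib = {
    "/example/tas/2000-01-30": "server1",
    "/example/tas/2000-01-31": "server1",
    "/example/tas/2000-02-01": "server2",
    "/example/tas/2000-02-02": "server2",
}

for day in ("2000-01-31", "2000-02-01"):
    print(day, "->", longest_prefix_match(fib, f"/example/tas/{day}/segment0"))
```

Because the match is on whole components, a consumer never names a server; the date in the Interest name alone decides where the request goes.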

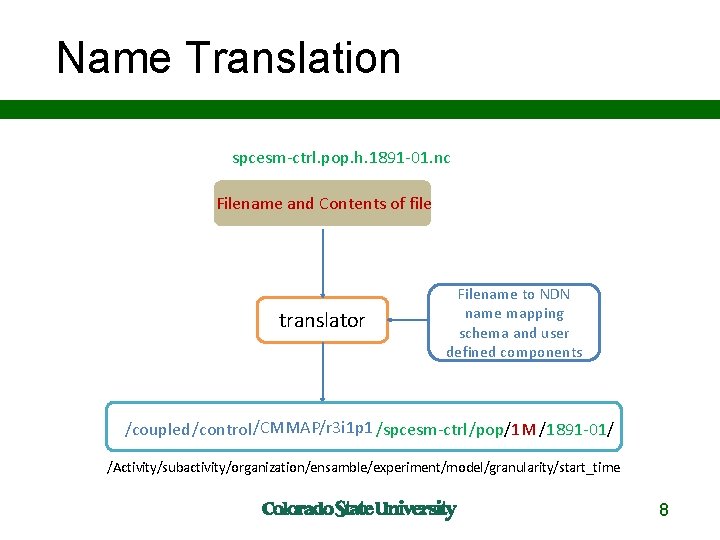

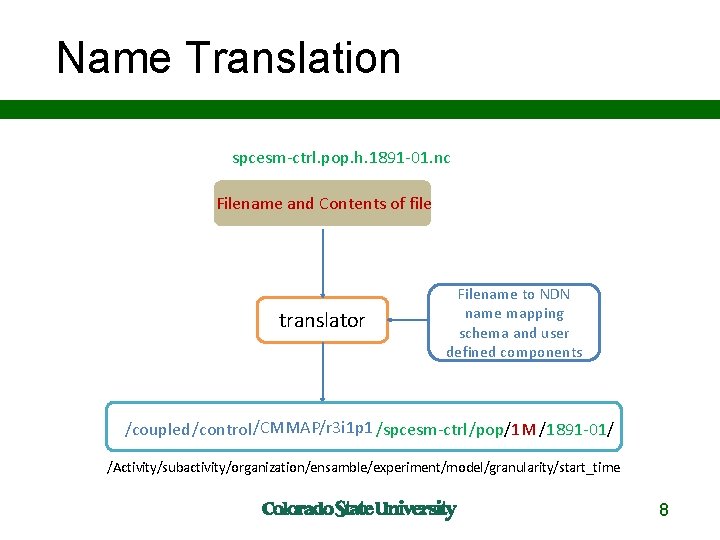

Name Translation

- Input: the filename and contents of the file, e.g. spcesm-ctrl.pop.h.1891-01.nc
- Translator: applies a filename-to-NDN-name mapping schema plus user-defined components
- Resulting NDN name: /coupled/control/CMMAP/r3i1p1/spcesm-ctrl/pop/1M/1891-01/
- Name schema: /Activity/subactivity/organization/ensemble/experiment/model/granularity/start_time
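The translation step can be sketched roughly as follows. This is an illustration only, not the project's translator: it assumes the experiment, model, and start time come from the dot-separated filename, that the remaining schema fields (including granularity) are supplied by the user, and that the "h" token in the filename is ignored. The function name `translate` and the field keys are hypothetical.

```python
# Order of components in the NDN name, following the schema on the slide.
SCHEMA = ["activity", "subactivity", "organization", "ensemble",
          "experiment", "model", "granularity", "start_time"]


def translate(filename, user_defined):
    """Build an NDN name from a filename plus user-defined components."""
    stem = filename[:-len(".nc")] if filename.endswith(".nc") else filename
    # Assumed filename layout: <experiment>.<model>.<stream>.<start_time>
    experiment, model, _stream, start_time = stem.split(".")
    fields = dict(user_defined, experiment=experiment, model=model,
                  start_time=start_time)
    missing = [key for key in SCHEMA if key not in fields]
    if missing:
        raise ValueError(f"missing name components: {missing}")
    return "/" + "/".join(fields[key] for key in SCHEMA)


name = translate(
    "spcesm-ctrl.pop.h.1891-01.nc",
    {"activity": "coupled", "subactivity": "control",
     "organization": "CMMAP", "ensemble": "r3i1p1", "granularity": "1M"},
)
print(name)
```

Raising on missing components rather than guessing defaults mirrors the concern in the talk that inconsistent original names cause trouble during translation.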

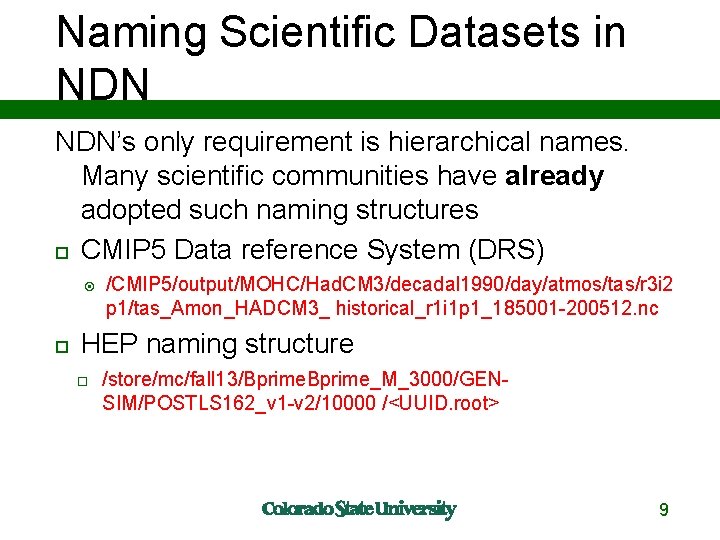

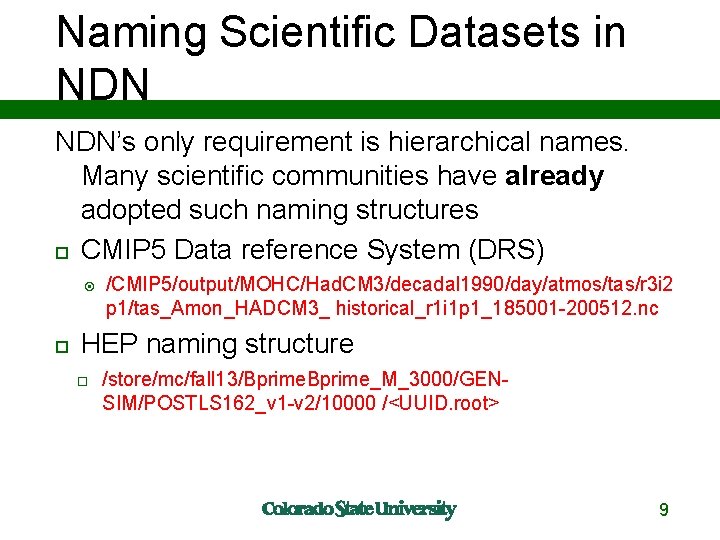

Naming Scientific Datasets in NDN

- NDN's only requirement is hierarchical names
- Many scientific communities have already adopted such naming structures
- CMIP5 Data Reference Syntax (DRS): /CMIP5/output/MOHC/HadCM3/decadal1990/day/atmos/tas/r3i2p1/tas_Amon_HADCM3_historical_r1i1p1_185001-200512.nc
- HEP naming structure: /store/mc/fall13/Bprime_M_3000/GENSIM/POSTLS162_v1-v2/10000/<UUID.root>
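To make "hierarchical" concrete, the sketch below splits the two example names on this slide into components and checks prefix relationships on whole components. The helper names `components` and `is_prefix` are hypothetical and are not part of any NDN library.

```python
def components(name):
    """Split a slash-separated hierarchical name into its components."""
    return [c for c in name.split("/") if c]


def is_prefix(prefix, name):
    """True if `prefix` matches the leading components of `name`."""
    p, n = components(prefix), components(name)
    return n[:len(p)] == p


CMIP5_NAME = ("/CMIP5/output/MOHC/HadCM3/decadal1990/day/atmos/tas/r3i2p1/"
              "tas_Amon_HADCM3_historical_r1i1p1_185001-200512.nc")
HEP_PREFIX = "/store/mc/fall13/Bprime_M_3000/GENSIM/POSTLS162_v1-v2/10000"

print(components(CMIP5_NAME)[:4])
print(is_prefix("/CMIP5/output/MOHC", CMIP5_NAME))
```

Matching on components rather than raw strings means /CMIP5/out is not treated as a prefix of /CMIP5/output.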

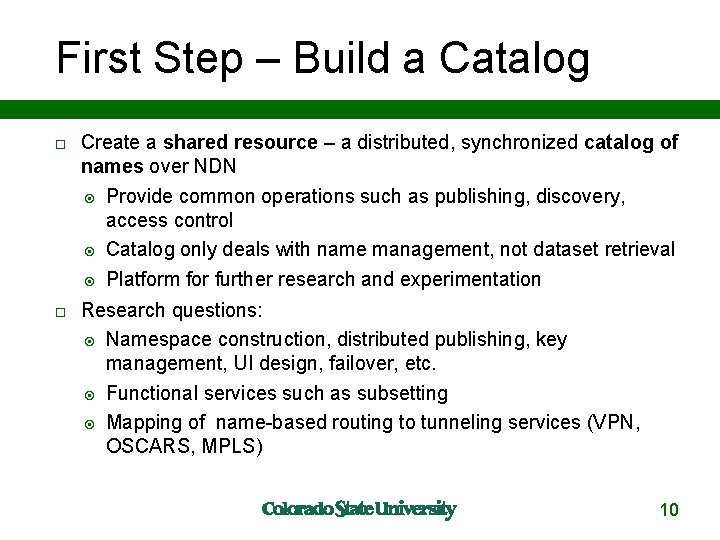

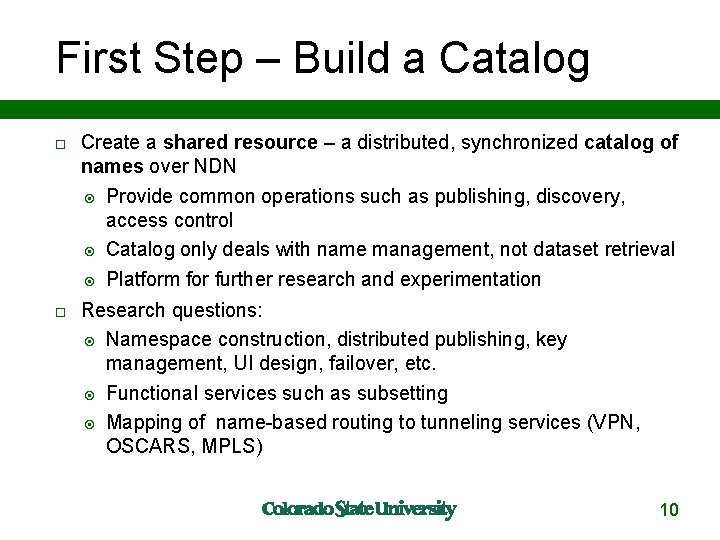

First Step: Build a Catalog

- Create a shared resource: a distributed, synchronized catalog of names over NDN
- Provide common operations such as publishing, discovery, and access control
- The catalog only deals with name management, not dataset retrieval
- Platform for further research and experimentation
- Research questions:
  - Namespace construction, distributed publishing, key management, UI design, failover, etc.
  - Functional services such as subsetting
  - Mapping of name-based routing to tunneling services (VPN, OSCARS, MPLS)

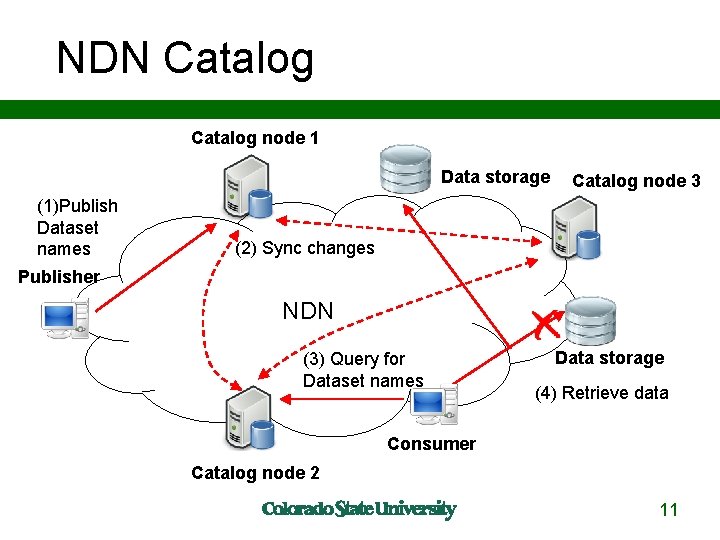

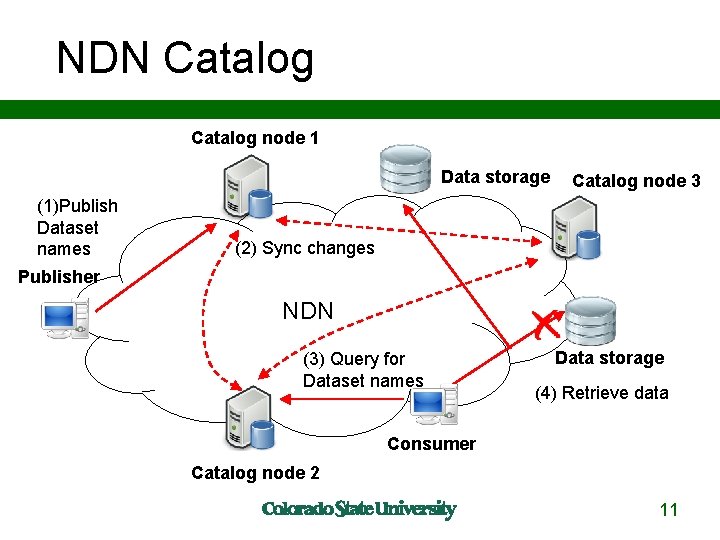

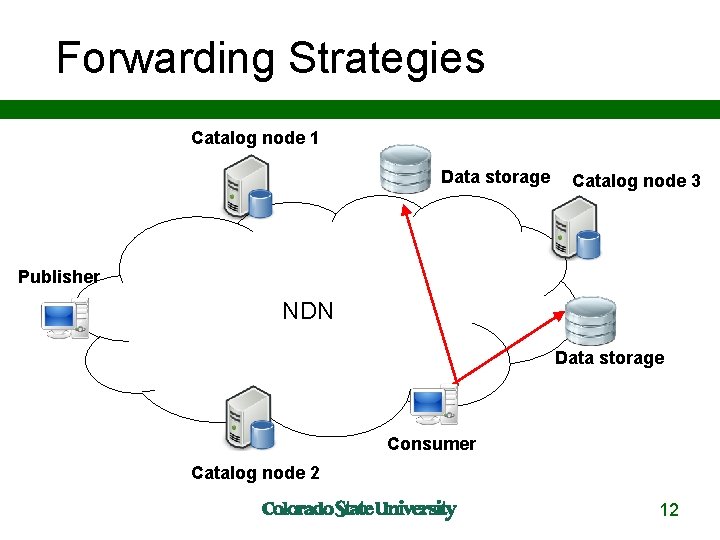

NDN Catalog

- Diagram components: publisher, consumer, catalog nodes 1-3, and data storage, connected over NDN
- Steps: (1) publish dataset names, (2) sync changes, (3) query for dataset names, (4) retrieve data
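The four steps in the diagram can be mimicked with a toy in-memory model. This is a sketch under strong assumptions: sync is modelled as a plain set union between two nodes, which stands in for (and is much simpler than) the NDN sync protocol, and the class, method names, and dataset names are hypothetical.

```python
class CatalogNode:
    """Toy catalog node that stores dataset names only, never the data."""

    def __init__(self):
        self.names = set()

    def publish(self, name):
        # Step 1: a publisher registers a dataset name.
        self.names.add(name)

    def sync_with(self, other):
        # Step 2: both nodes end up with the union of their names.
        merged = self.names | other.names
        self.names = set(merged)
        other.names = set(merged)

    def query(self, prefix):
        # Step 3: a consumer asks for all names under a prefix.
        base = prefix.rstrip("/")
        return sorted(n for n in self.names
                      if n == base or n.startswith(base + "/"))


node1, node2, node3 = CatalogNode(), CatalogNode(), CatalogNode()
node1.publish("/CMIP5/output/MOHC/example.nc")   # hypothetical name
node2.publish("/store/mc/example.root")          # hypothetical name
node1.sync_with(node2)
node3.sync_with(node1)
print(node3.query("/CMIP5"))
```

Step 4, retrieving the data itself, is deliberately absent: the consumer would send Interests for the returned names, and the catalog plays no part in that exchange.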

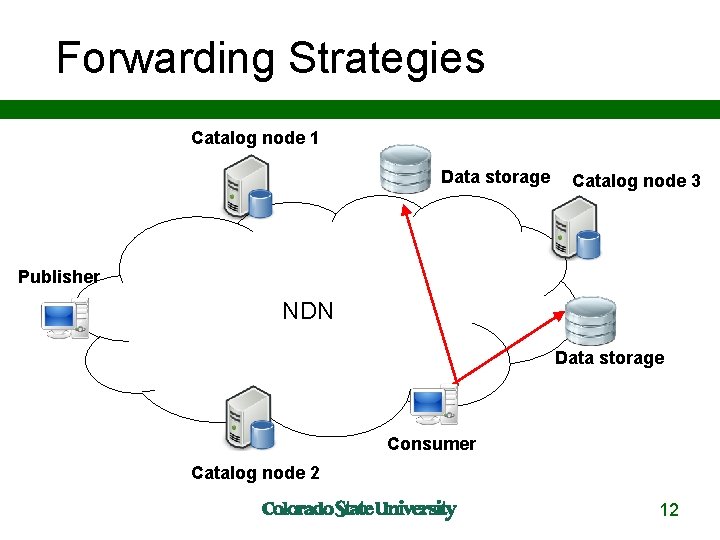

Forwarding Strategies

- Diagram components: publisher, consumer, catalog nodes 1-3, and data storage, connected over NDN

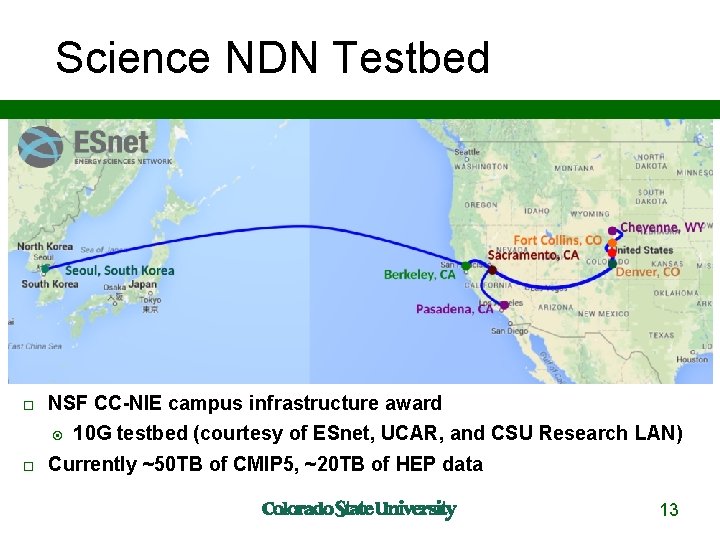

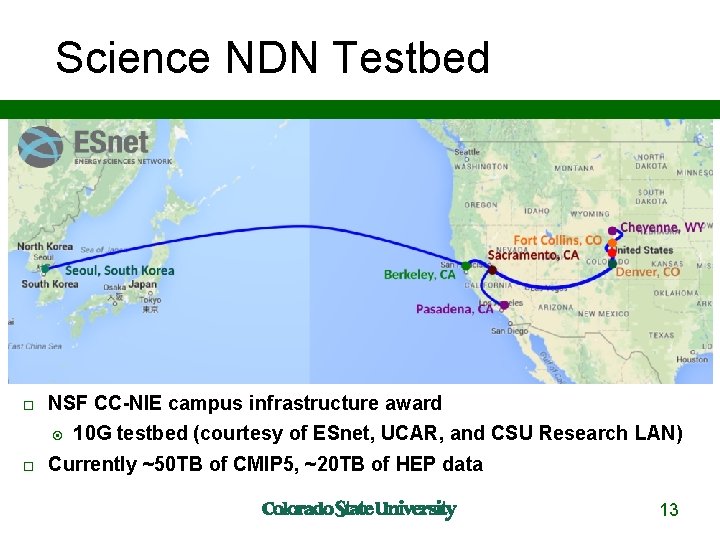

Science NDN Testbed

- NSF CC-NIE campus infrastructure award
- 10G testbed (courtesy of ESnet, UCAR, and CSU Research LAN)
- Currently ~50 TB of CMIP5 and ~20 TB of HEP data

REQUEST AGGREGATION, CACHING, AND FORWARDING STRATEGIES FOR IMPROVING LARGE CLIMATE DATA DISTRIBUTION WITH NDN: A CASE STUDY Susmit Shannigrahi, Chengyu Fan, Christos Papadopoulos ICN 2017

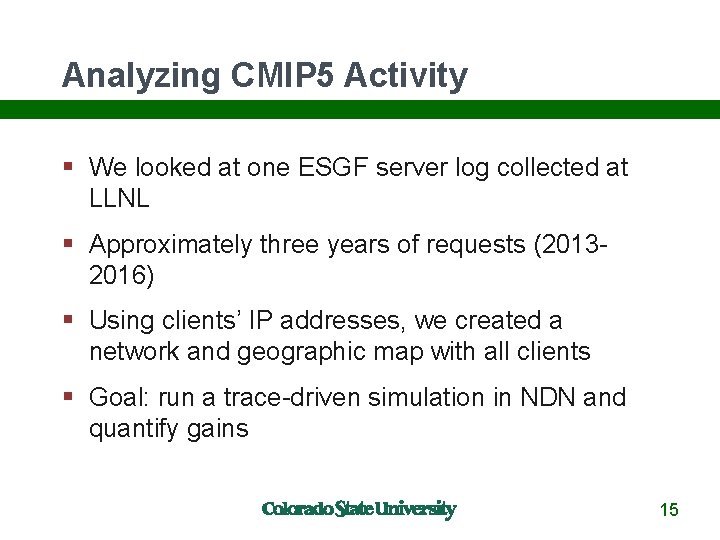

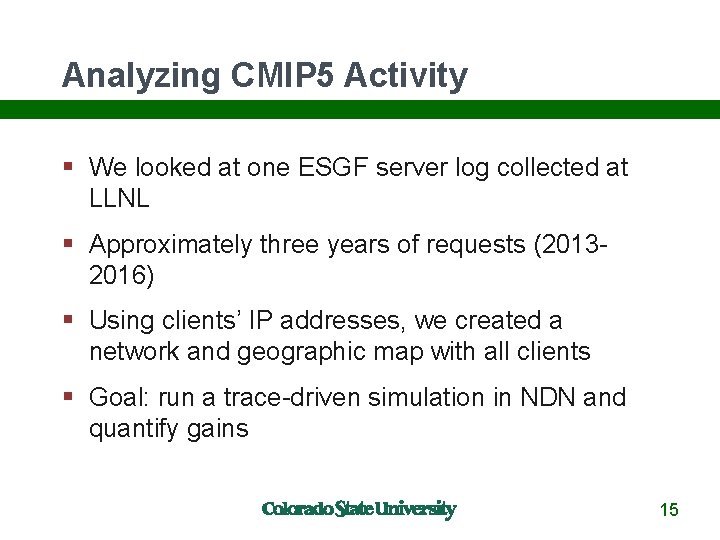

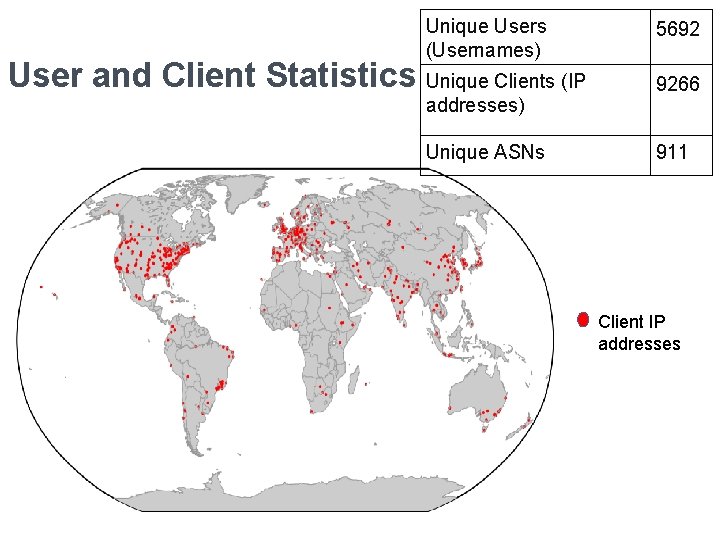

Analyzing CMIP5 Activity

- We looked at one ESGF server log collected at LLNL
- Approximately three years of requests (2013-2016)
- Using clients' IP addresses, we created a network and geographic map of all clients
- Goal: run a trace-driven simulation in NDN and quantify gains

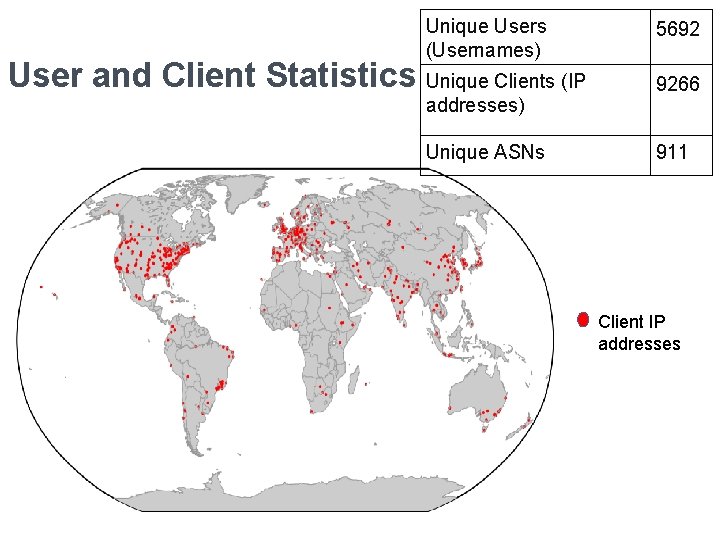

User and Client Statistics

- Unique users (usernames): 5692
- Unique clients (IP addresses): 9266
- Unique ASNs: 911
- Figure: client IP addresses

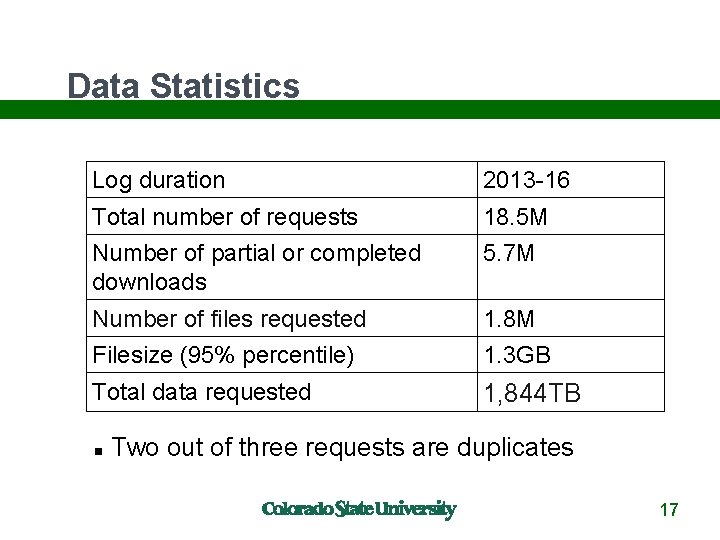

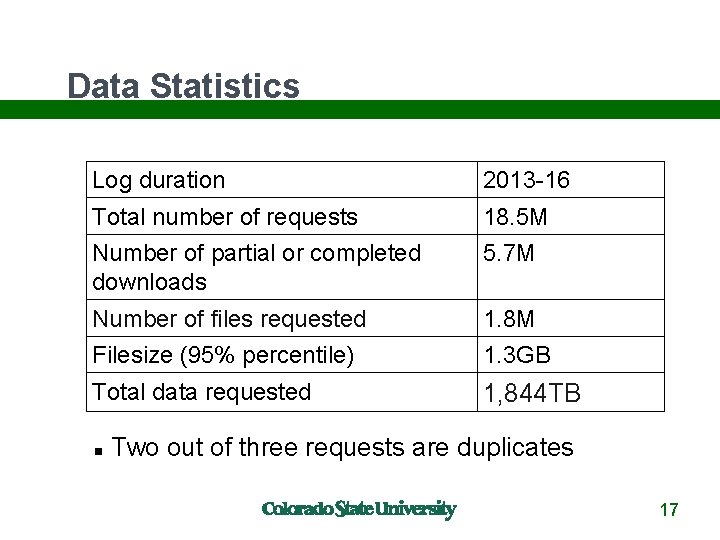

Data Statistics

- Log duration: 2013-16
- Total number of requests: 18.5 M
- Number of partial or completed downloads: 5.7 M
- Number of files requested: 1.8 M
- File size (95th percentile): 1.3 GB
- Total data requested: 1,844 TB
- Two out of three requests are duplicates
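A quick consistency check on these figures, assuming "duplicate" means a download of a file that was already requested earlier in the log (the slide does not define the term). Under that reading, the duplicate share follows from the download count and the number of distinct files; the variable names are illustrative.

```python
downloads = 5.7e6          # partial or completed downloads
distinct_files = 1.8e6     # files requested
total_bytes = 1844e12      # 1,844 TB, using decimal terabytes

# Every download beyond the first one of each file counts as a duplicate.
duplicate_share = 1 - distinct_files / downloads
mean_bytes_per_download = total_bytes / downloads

print(f"duplicate share: {duplicate_share:.2f}")
print(f"mean volume per download: {mean_bytes_per_download / 1e6:.0f} MB")
```

The share comes out close to two thirds, which agrees with the last line of the table.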

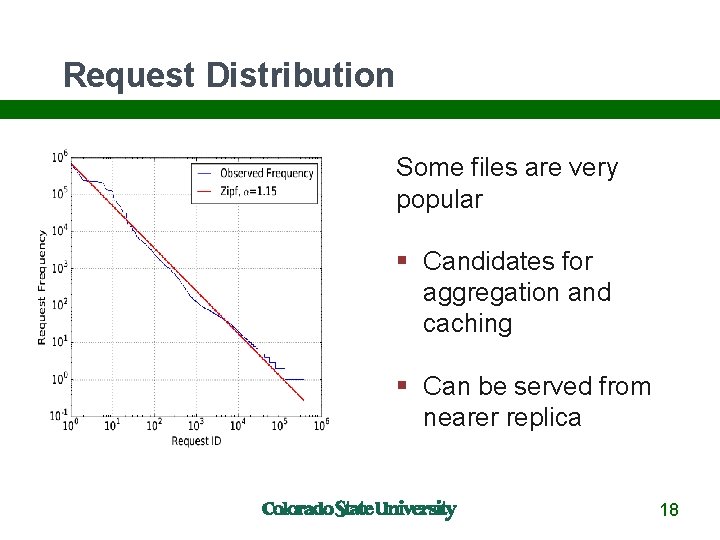

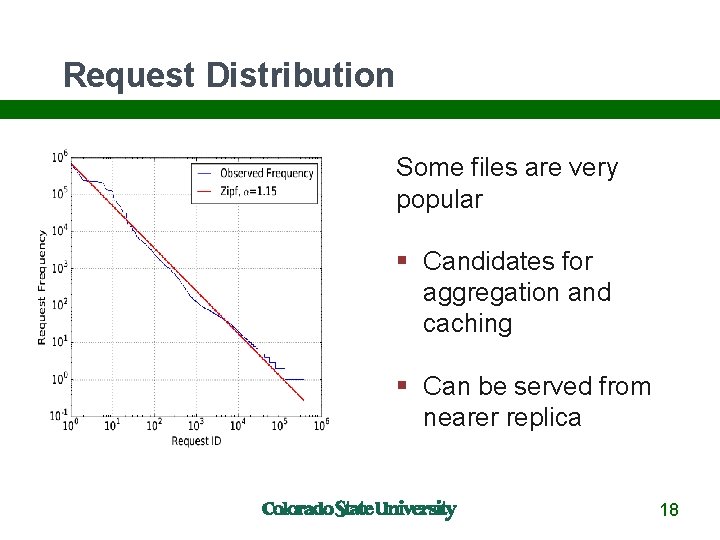

Request Distribution

- Some files are very popular
- They are candidates for aggregation and caching
- They can be served from a nearer replica

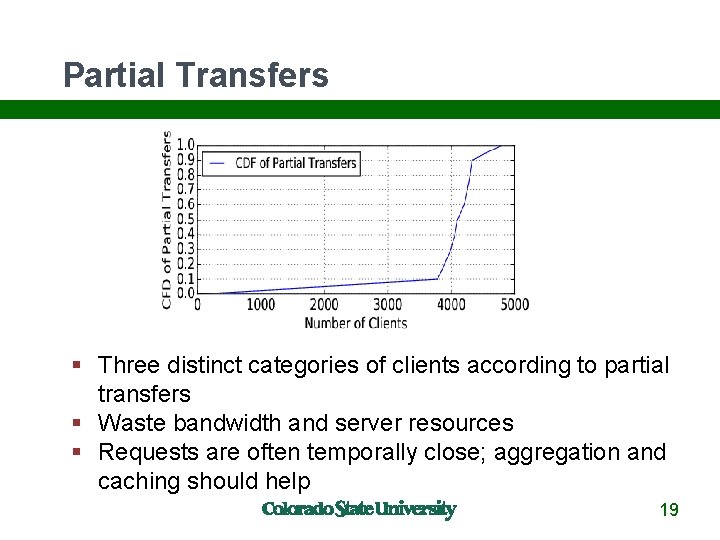

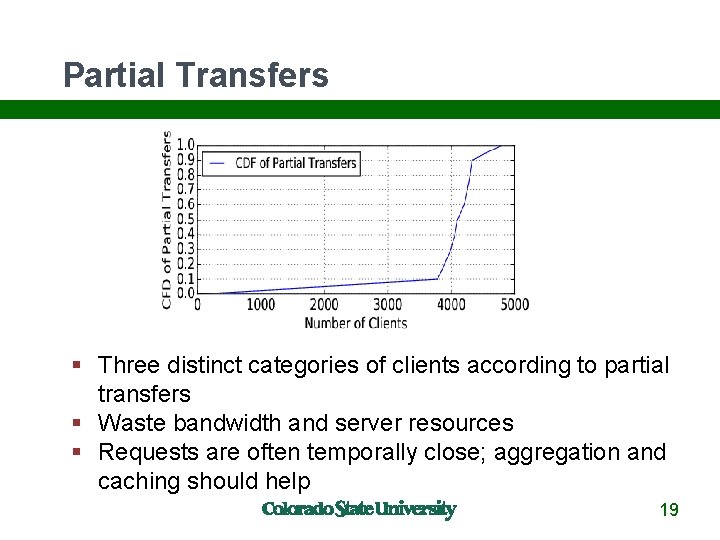

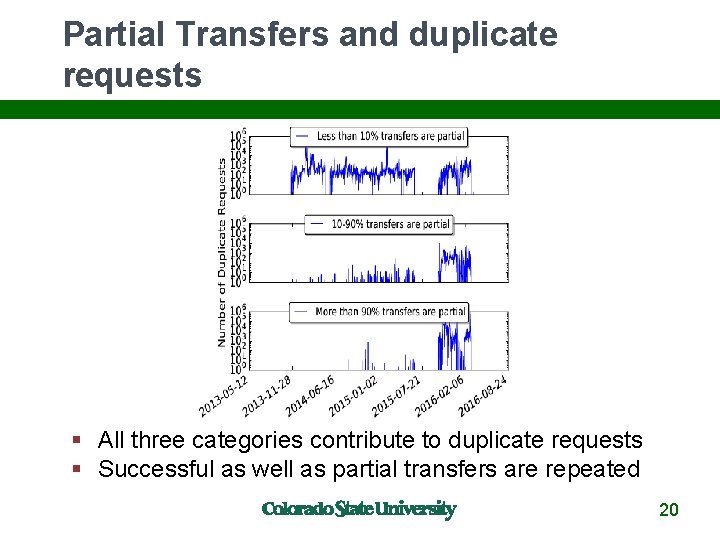

Partial Transfers

- Three distinct categories of clients according to partial transfers
- Partial transfers waste bandwidth and server resources
- Requests are often temporally close; aggregation and caching should help

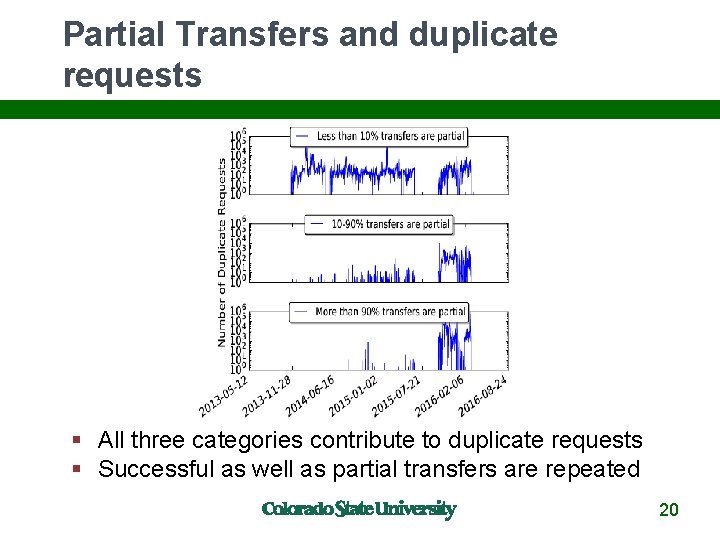

Partial Transfers and Duplicate Requests

- All three categories contribute to duplicate requests
- Successful as well as partial transfers are repeated

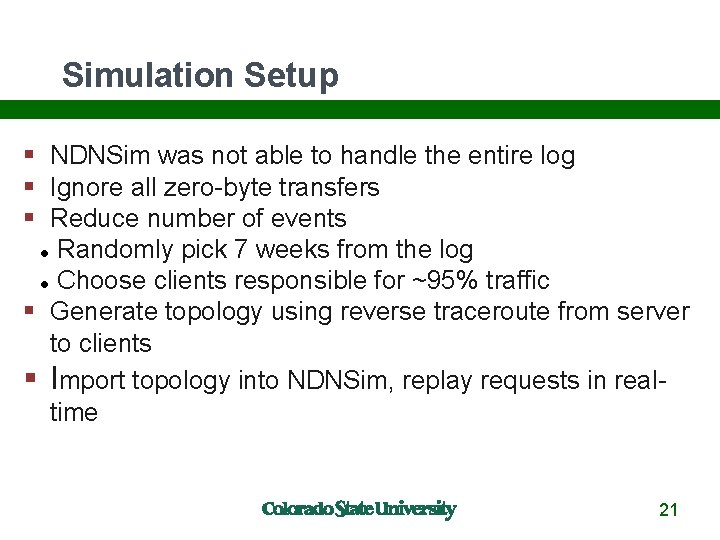

Simulation Setup

- ndnSIM was not able to handle the entire log
- Ignore all zero-byte transfers
- Reduce the number of events
- Randomly pick 7 weeks from the log
- Choose clients responsible for ~95% of traffic
- Generate topology using reverse traceroute from server to clients
- Import topology into ndnSIM, replay requests in real time
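One plausible way to "choose clients responsible for ~95% of traffic" is a greedy selection by descending volume, sketched below. The slide does not say how the selection was actually done; the function name and the sample byte counts are hypothetical.

```python
def top_clients(bytes_by_client, share=0.95):
    """Pick the heaviest clients until they cover `share` of total traffic."""
    total = sum(bytes_by_client.values())
    chosen, running = [], 0
    ranked = sorted(bytes_by_client.items(), key=lambda kv: kv[1], reverse=True)
    for client, nbytes in ranked:
        if running >= share * total:
            break  # target share already covered
        chosen.append(client)
        running += nbytes
    return chosen


# Hypothetical per-client traffic volumes.
sample = {"a": 90, "b": 6, "c": 3, "d": 1}
print(top_clients(sample))
```

With heavy-tailed traffic a small number of clients typically covers the target share, which is what makes this an effective way to shrink the number of simulated events.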

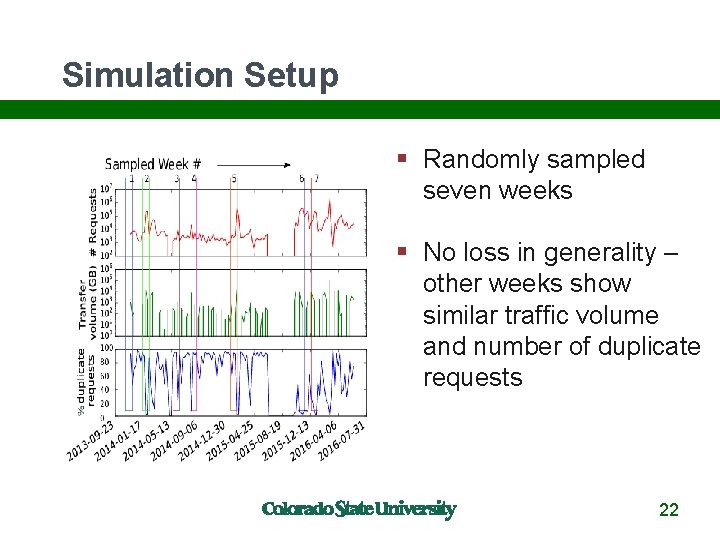

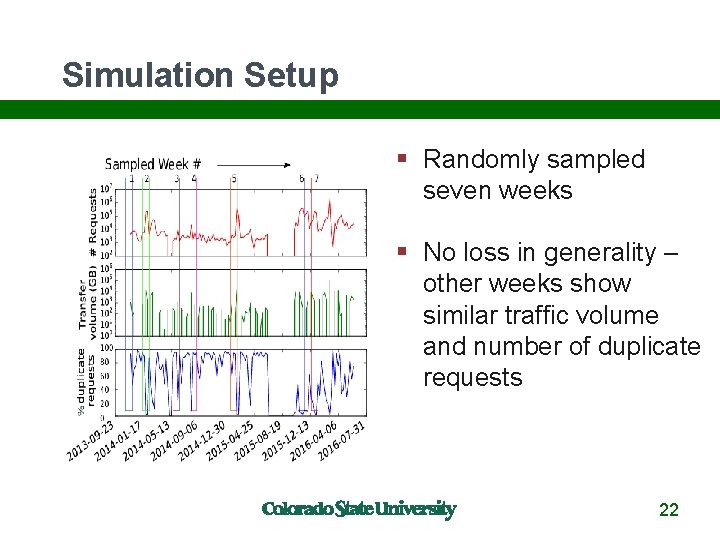

Simulation Setup

- Randomly sampled seven weeks
- No loss of generality: other weeks show similar traffic volume and number of duplicate requests

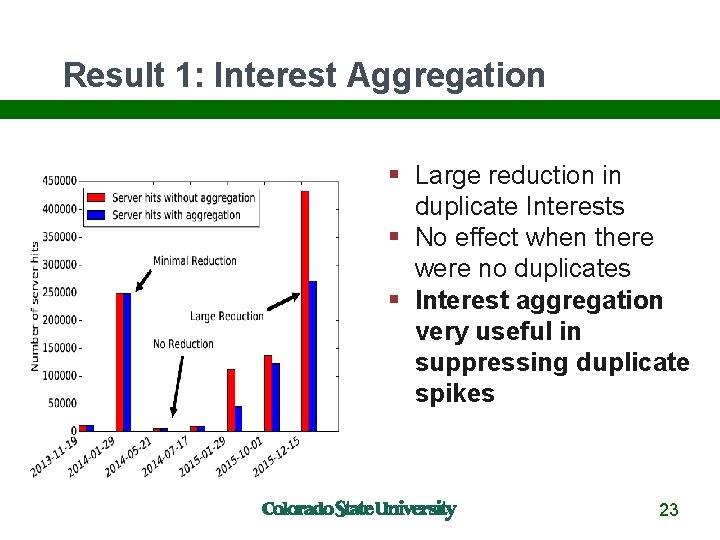

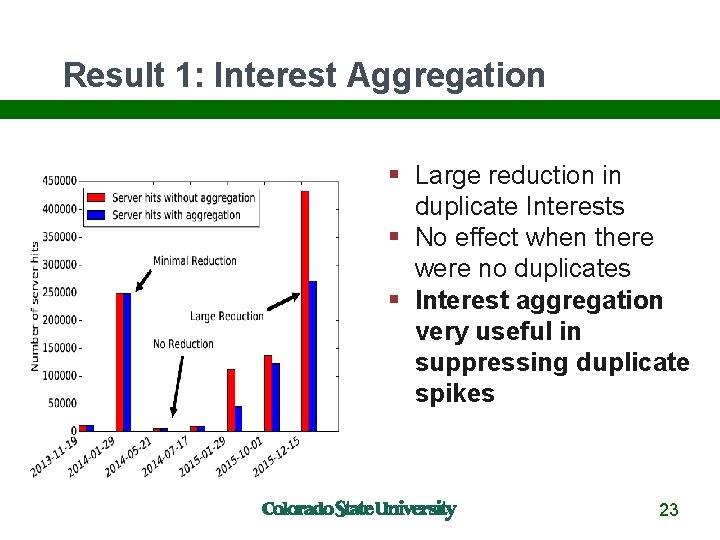

Result 1: Interest Aggregation

- Large reduction in duplicate Interests
- No effect when there were no duplicates
- Interest aggregation is very useful in suppressing duplicate spikes
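Interest aggregation can be illustrated with a toy Pending Interest Table (PIT): while an Interest for a name is outstanding, later Interests for the same name are recorded but not forwarded upstream. This is a simplified sketch, not ndnSIM or NFD code; the class name, method names, and the dataset name are hypothetical, and timeouts and nonces are omitted.

```python
class Router:
    """Toy router that aggregates Interests for the same name in a PIT."""

    def __init__(self):
        self.pit = {}        # name -> set of downstream faces waiting for it
        self.forwarded = 0   # Interests actually sent upstream

    def on_interest(self, name, face):
        """Return True if the Interest is forwarded, False if aggregated."""
        if name in self.pit:
            self.pit[name].add(face)   # duplicate: just remember the requester
            return False
        self.pit[name] = {face}
        self.forwarded += 1
        return True

    def on_data(self, name):
        """Return the faces the Data goes back to and clear the PIT entry."""
        return sorted(self.pit.pop(name, set()))


router = Router()
results = [router.on_interest("/CMIP5/example.nc", face) for face in (1, 2, 3)]
receivers = router.on_data("/CMIP5/example.nc")
print(results, router.forwarded, receivers)
```

Three requests arriving close together cost a single upstream fetch, which is why aggregation suppresses spikes of duplicates and has no effect when there are none.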

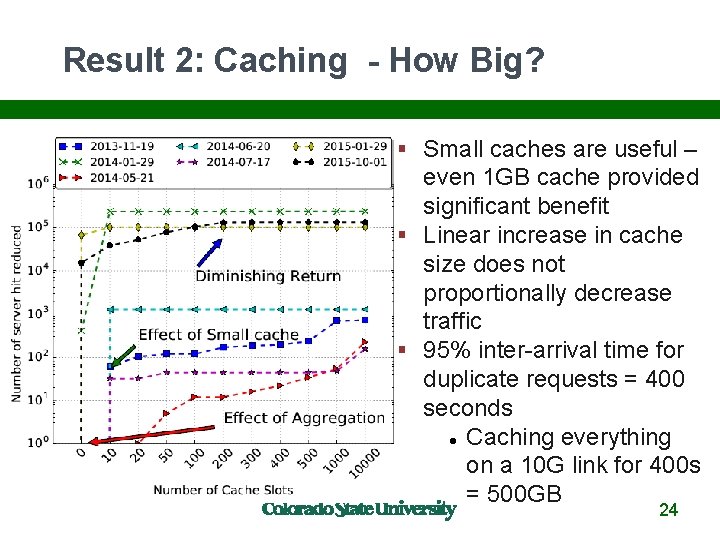

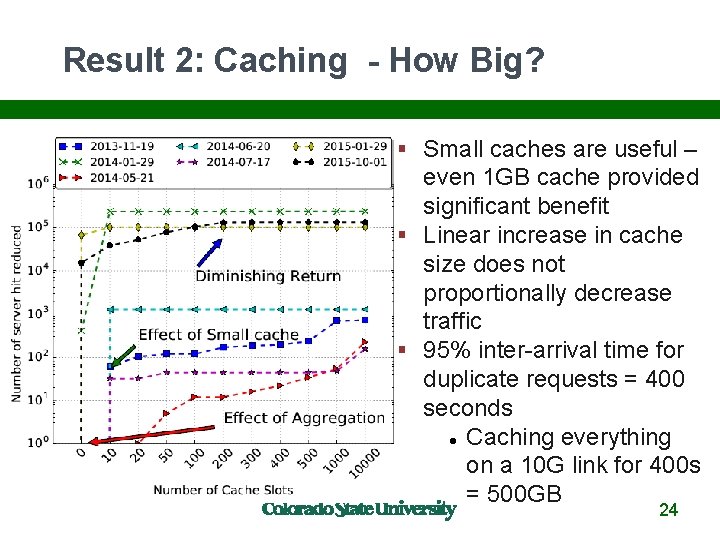

Result 2: Caching - How Big?

- Small caches are useful: even a 1 GB cache provided significant benefit
- A linear increase in cache size does not proportionally decrease traffic
- 95th-percentile inter-arrival time for duplicate requests = 400 seconds
- Caching everything on a 10G link for 400 s = 500 GB
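The 500 GB figure follows from the link rate and the 400-second window, assuming the link runs at a full 10 Gb/s and decimal units (1 GB = 10^9 bytes). The function name is illustrative.

```python
def cache_bytes(link_gbps, seconds):
    """Bytes needed to hold everything a link carries in `seconds`."""
    bits = link_gbps * 10**9 * seconds
    return bits // 8  # 8 bits per byte


needed = cache_bytes(link_gbps=10, seconds=400)
print(needed / 10**9, "GB")
```

In other words, a cache of this size at a 10G router would hold every byte that passed during the window in which 95% of duplicate requests arrive.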

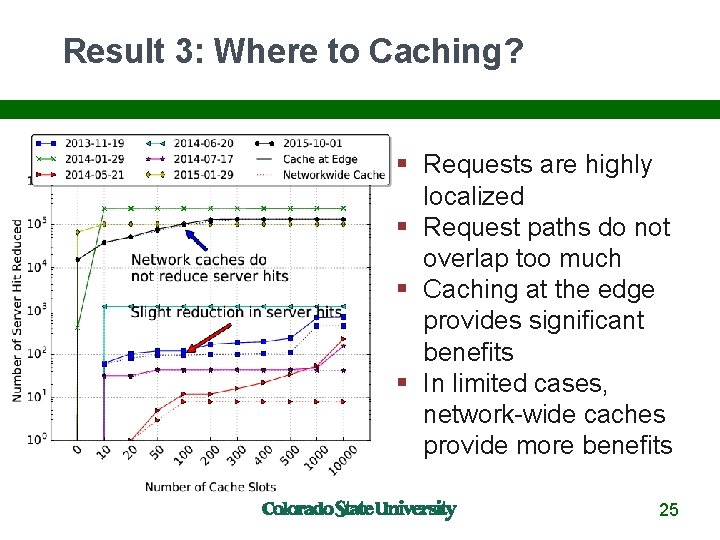

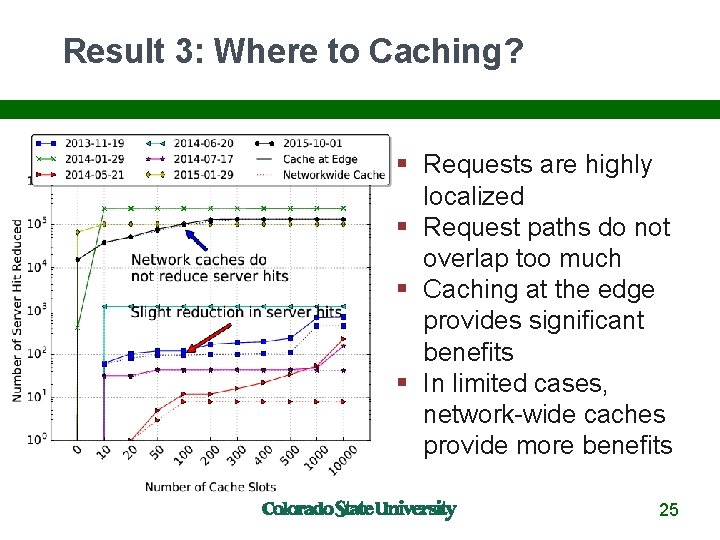

Result 3: Where to Cache?

- Requests are highly localized
- Request paths do not overlap much
- Caching at the edge provides significant benefits
- In limited cases, network-wide caches provide more benefit

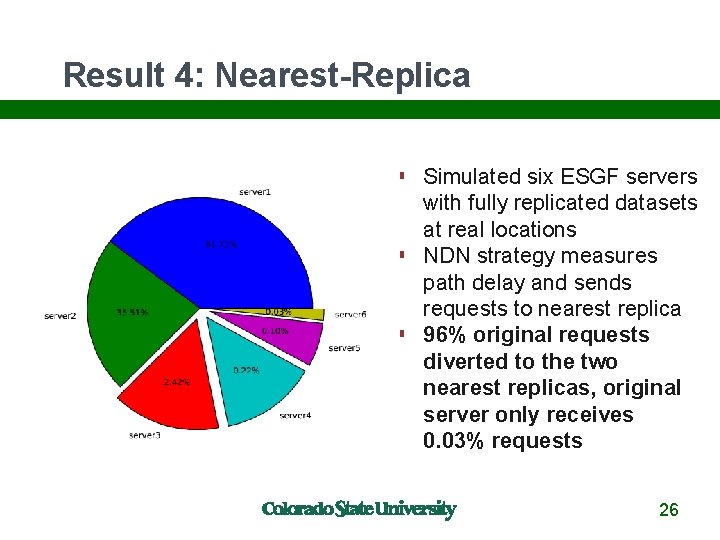

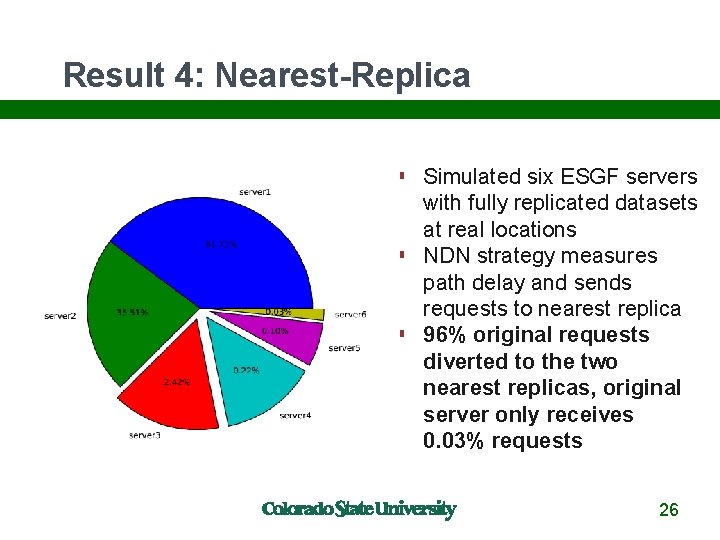

Result 4: Nearest-Replica

- Simulated six ESGF servers with fully replicated datasets at real locations
- NDN strategy measures path delay and sends requests to the nearest replica
- 96% of the original requests were diverted to the two nearest replicas; the original server received only 0.03% of requests
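A minimal sketch of a delay-based replica choice, assuming the strategy keeps a smoothed RTT estimate per replica and forwards to the lowest one. The smoothing factor, class name, and replica labels are assumptions for illustration; the strategy used in the study may differ in how it probes and updates delays.

```python
class NearestReplicaStrategy:
    """Toy strategy: track smoothed RTT per replica, pick the smallest."""

    def __init__(self, alpha=0.125):
        self.alpha = alpha   # weight of each new sample (assumed value)
        self.srtt_ms = {}    # replica -> smoothed RTT in milliseconds

    def record_rtt(self, replica, sample_ms):
        # First sample initialises the estimate; later ones are averaged in.
        old = self.srtt_ms.get(replica)
        if old is None:
            self.srtt_ms[replica] = float(sample_ms)
        else:
            self.srtt_ms[replica] = (1 - self.alpha) * old + self.alpha * sample_ms

    def choose(self):
        """Return the replica with the lowest smoothed RTT."""
        return min(self.srtt_ms, key=self.srtt_ms.get)


strategy = NearestReplicaStrategy()
strategy.record_rtt("original-server", 200)
strategy.record_rtt("nearby-replica", 25)
print(strategy.choose())
```

Smoothing keeps a single slow sample from flipping the choice, at the cost of reacting more slowly when the nearest replica really changes.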

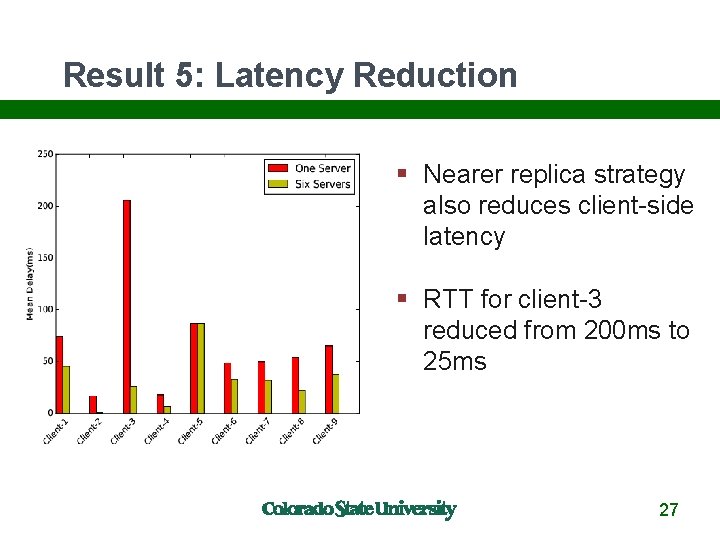

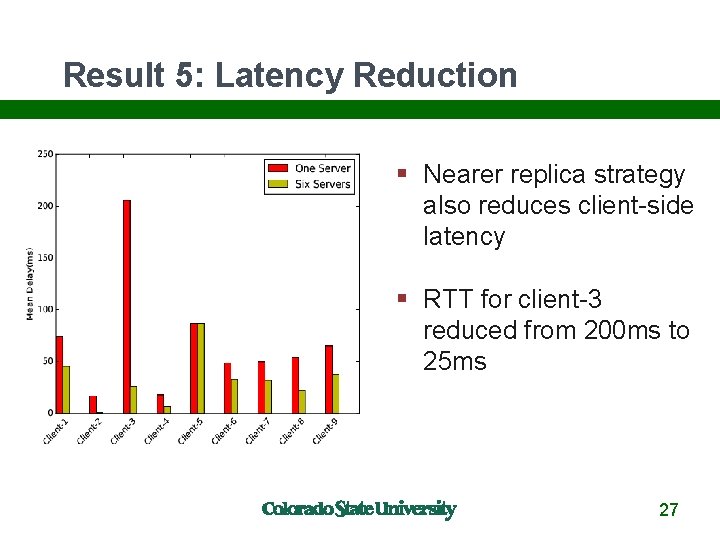

Result 5: Latency Reduction

- The nearest-replica strategy also reduces client-side latency
- RTT for client-3 reduced from 200 ms to 25 ms

Conclusions

- NDN is a great match for CMIP5 data distribution
- Name translation had a few bumps because of inconsistent original names
  - Found a few CMIP5 naming bugs along the way
- Catalog implementation over NDN was very easy:
  - publishing (with the help of NDN sync)
  - discovery
  - authentication
- NDN caching was very beneficial in simulation
- NDN strategies were shown to work on the testbed

For More Info

- christos@colostate.edu
- http://named-data.net
- http://github.com/named-data