
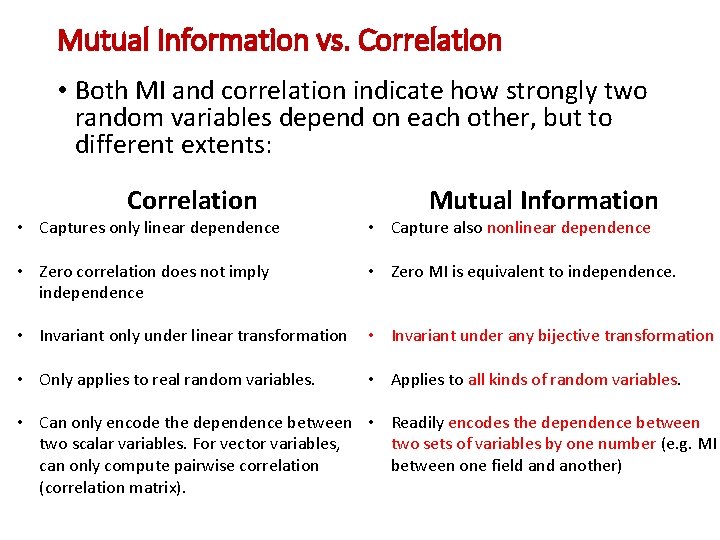

Mutual Information vs. Correlation

Both MI and correlation indicate how strongly two random variables depend on each other, but they capture different kinds of dependence:

| Correlation | Mutual Information |
|---|---|
| Captures only linear dependence. | Also captures nonlinear dependence. |
| Zero correlation does not imply independence. | Zero MI is equivalent to independence. |
| Invariant (up to sign) only under linear (affine) transformations of each variable. | Invariant under any bijective transformation of each variable. |
| Applies only to real-valued random variables. | Applies to all kinds of random variables. |
| Can only encode the dependence between two scalar variables; for vector variables, it can only compute pairwise correlations (a correlation matrix). | Readily encodes the dependence between two sets of variables in a single number (e.g., the MI between one field and another). |
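As a quick check of the first two rows of the table, the sketch below takes $Y = X^2$ with $X$ uniform on $[-1, 1]$. The Pearson correlation is essentially zero, yet $Y$ is a deterministic function of $X$. The MI here comes from a simple plug-in histogram estimator, which is an illustrative choice and not the method used in these slides. The helper `binned_mutual_information` and the bin count are hypothetical. Binned estimates are biased, so only the contrast with an independent pair should be read from the numbers.

```python
import numpy as np


def binned_mutual_information(a, b, bins=20):
    """Plug-in estimate of I(A; B) in nats from a 2-D histogram (illustrative only)."""
    joint, _, _ = np.histogram2d(a, b, bins=bins)
    p_ab = joint / joint.sum()                  # joint probabilities of the bin pairs
    p_a = p_ab.sum(axis=1, keepdims=True)       # marginal of A, shape (bins, 1)
    p_b = p_ab.sum(axis=0, keepdims=True)       # marginal of B, shape (1, bins)
    mask = p_ab > 0                             # skip empty cells (0 log 0 = 0)
    return float(np.sum(p_ab[mask] * np.log(p_ab[mask] / (p_a * p_b)[mask])))


rng = np.random.default_rng(0)
n = 100_000
x = rng.uniform(-1.0, 1.0, n)
y = x**2                                        # fully determined by x, but not linearly
z = rng.uniform(-1.0, 1.0, n)                   # independent of x

corr_xy = np.corrcoef(x, y)[0, 1]
mi_xy = binned_mutual_information(x, y)
mi_xz = binned_mutual_information(x, z)

print(f"corr(X, X^2) = {corr_xy:+.3f}")
print(f"MI(X, X^2)   = {mi_xy:.3f} nats")
print(f"MI(X, Z)     = {mi_xz:.3f} nats (independent pair)")
```

Judged by correlation alone, $(X, X^2)$ would look unrelated. The MI estimate clearly separates it from the truly independent pair $(X, Z)$.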

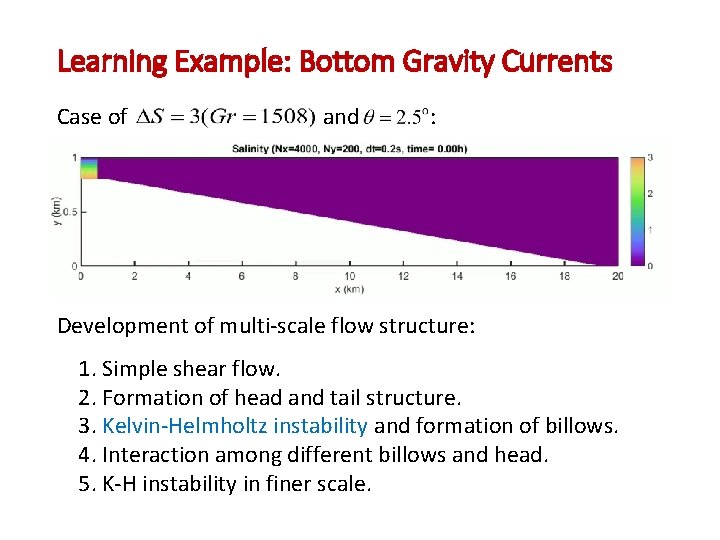

Learning Example: Bottom Gravity Currents

Case of and : Development of multi-scale flow structure:

1. Simple shear flow.
2. Formation of the head and tail structure.
3. Kelvin-Helmholtz (K-H) instability and formation of billows.
4. Interaction among the billows and with the head.
5. K-H instability at finer scales.

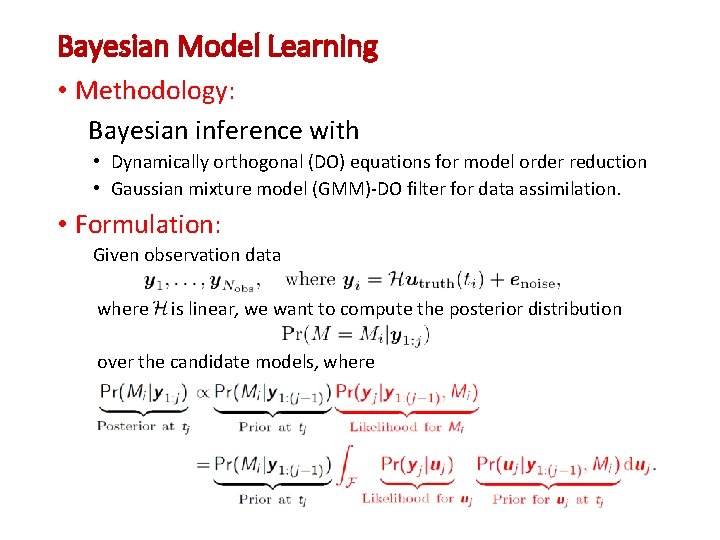

Bayesian Model Learning

- Methodology: Bayesian inference with
  - dynamically orthogonal (DO) equations for model-order reduction, and
  - a Gaussian mixture model (GMM)-DO filter for data assimilation.
- Formulation: Given observation data where is linear, we want to compute the posterior distribution over the candidate models, where
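For reference, the posterior over candidate models follows from Bayes' rule. The original symbols did not survive, so the notation below is assumed. There are candidate models $M_1, \dots, M_K$ with prior probabilities $p(M_i)$ and a state $\mathbf{x}$. The observations are $\mathbf{y} = H\mathbf{x} + \mathbf{v}$, where $H$ is a linear operator and the noise is $\mathbf{v} \sim \mathcal{N}(\mathbf{0}, R)$; Gaussian noise is also an assumption here. Suppose the state distribution under model $M_i$ is a Gaussian mixture $\sum_j w_{ij}\,\mathcal{N}(\mathbf{x}; \boldsymbol{\mu}_{ij}, \Sigma_{ij})$, which is the form a GMM-based filter provides. Then the model evidence has a closed form:

$$
p(M_i \mid \mathbf{y}) = \frac{p(\mathbf{y} \mid M_i)\, p(M_i)}{\sum_{k=1}^{K} p(\mathbf{y} \mid M_k)\, p(M_k)},
\qquad
p(\mathbf{y} \mid M_i) = \sum_{j} w_{ij}\, \mathcal{N}\!\left(\mathbf{y};\, H\boldsymbol{\mu}_{ij},\, H \Sigma_{ij} H^{\top} + R\right).
$$

This is the standard identity for a linear-Gaussian observation of a Gaussian mixture. Whether the slides used exactly this form cannot be recovered from the text.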

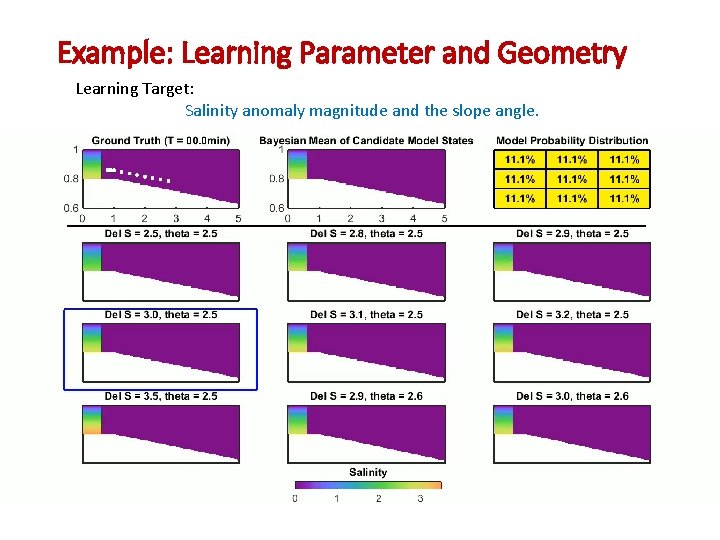

Example: Learning Parameter and Geometry

Learning target: the salinity anomaly magnitude and the slope angle.

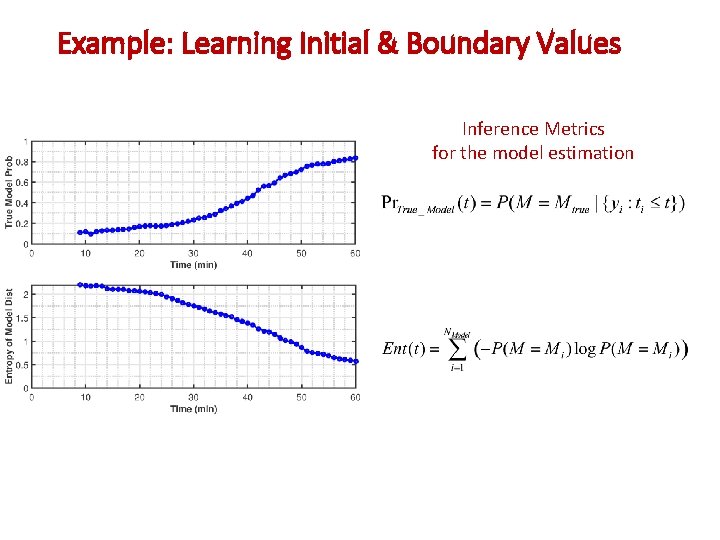

Example: Learning Initial & Boundary Values

Inference metrics for the model estimation.

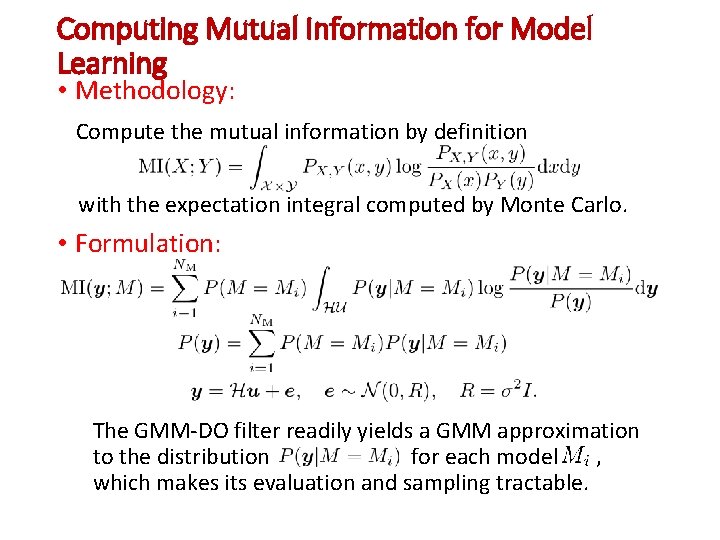

Computing Mutual Information for Model Learning

- Methodology: Compute the mutual information from its definition, evaluating the expectation integral by Monte Carlo sampling.
- Formulation: The GMM-DO filter readily yields a GMM approximation to the distribution for each candidate model, which makes that distribution tractable to evaluate and to sample from.
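The Monte Carlo estimate described above can be sketched as follows. The sketch assumes that each candidate model's state distribution is a Gaussian mixture and that the observation is $\mathbf{y} = H\mathbf{x} + \mathbf{v}$ with Gaussian noise $\mathbf{v} \sim \mathcal{N}(\mathbf{0}, R)$. By definition, $I(\mathbf{y}; M) = \mathbb{E}_{m, \mathbf{y}}[\ln p(\mathbf{y} \mid m) - \ln p(\mathbf{y})]$ with $p(\mathbf{y}) = \sum_m p(m)\,p(\mathbf{y} \mid m)$. The code draws $(m, \mathbf{y})$ pairs and averages that log ratio. The function names and mixture parameters are hypothetical placeholders, not output from an actual GMM-DO filter.

```python
import numpy as np


def gaussian_logpdf(y, mean, cov):
    """Log density of N(mean, cov) evaluated at the vector y."""
    diff = y - mean
    _, logdet = np.linalg.slogdet(cov)
    maha = diff @ np.linalg.solve(cov, diff)
    return -0.5 * (len(y) * np.log(2.0 * np.pi) + logdet + maha)


def log_evidence(y, gmm, H, R):
    """log p(y | model) for a GMM state prior (weights, means, covs) seen through y = H x + v."""
    weights, means, covs = gmm
    terms = [np.log(w) + gaussian_logpdf(y, H @ mu, H @ S @ H.T + R)
             for w, mu, S in zip(weights, means, covs)]
    return np.logaddexp.reduce(terms)


def mc_mutual_information(models, model_probs, H, R, n_samples=2000, seed=0):
    """Monte Carlo estimate of I(y; M) in nats (hypothetical helper)."""
    rng = np.random.default_rng(seed)
    log_p_m = np.log(model_probs)
    total = 0.0
    for _ in range(n_samples):
        m = rng.choice(len(models), p=model_probs)          # draw a model
        weights, means, covs = models[m]
        j = rng.choice(len(weights), p=weights)             # draw a mixture component
        x = rng.multivariate_normal(means[j], covs[j])      # draw a state
        y = H @ x + rng.multivariate_normal(np.zeros(R.shape[0]), R)  # noisy observation
        log_lik = np.array([log_evidence(y, g, H, R) for g in models])
        log_py = np.logaddexp.reduce(log_p_m + log_lik)     # log p(y) = log sum_m p(m) p(y|m)
        total += log_lik[m] - log_py
    return total / n_samples


# Hypothetical example: two 2-D single-Gaussian models that differ in their first component.
I2 = np.eye(2)
models = [(np.array([1.0]), [np.array([0.0, 0.0])], [I2]),
          (np.array([1.0]), [np.array([3.0, 0.0])], [I2])]
H = np.array([[1.0, 0.0]])     # observe the first state component only
R = np.array([[0.1]])          # observation-noise covariance
mi = mc_mutual_information(models, np.array([0.5, 0.5]), H, R)
print(f"I(y; M) ~ {mi:.3f} nats (at most ln 2 = {np.log(2):.3f} for two equally likely models)")
```

Each sampled log ratio is at most $-\ln p(m)$, so the estimate is bounded by the entropy of the model prior. Here that bound is $\ln 2$.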

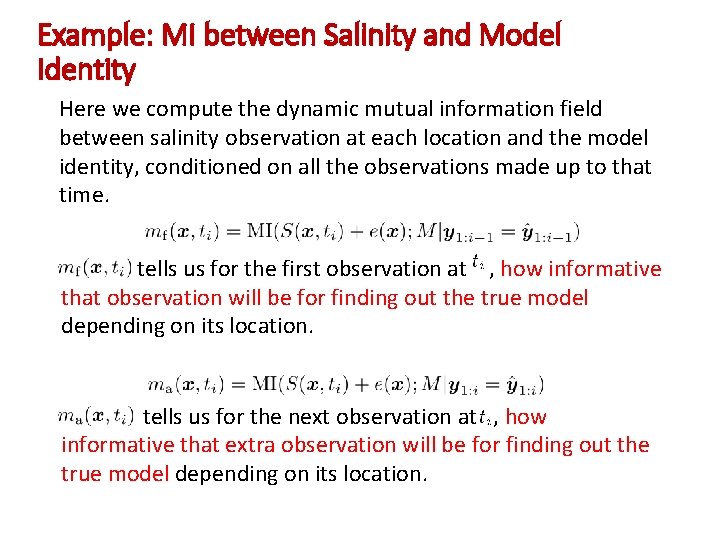

Example: MI between Salinity and Model Identity

Here we compute the dynamic mutual information field between the salinity observation at each location and the model identity, conditioned on all observations made up to that time. The field at the first observation time tells us how informative that first observation would be for identifying the true model, depending on where it is taken. The field at the next observation time tells us how informative that additional observation would be, again depending on its location.
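Conditioning on earlier observations means updating two things before computing the next MI field: each model's state distribution and the model probabilities. The sketch below makes several assumptions. State distributions are Gaussian mixtures, and the observation is linear, $\mathbf{y} = H\mathbf{x} + \mathbf{v}$ with $\mathbf{v} \sim \mathcal{N}(\mathbf{0}, R)$. The update is the generic per-component Kalman update for Gaussian mixtures. The GMM-DO filter performs its update in the reduced DO subspace, which this sketch does not model. The function `condition_models` is hypothetical.

```python
import numpy as np


def gaussian_logpdf(y, mean, cov):
    """Log density of N(mean, cov) evaluated at the vector y."""
    diff = y - mean
    _, logdet = np.linalg.slogdet(cov)
    return -0.5 * (len(y) * np.log(2.0 * np.pi) + logdet + diff @ np.linalg.solve(cov, diff))


def condition_models(models, model_probs, y, H, R):
    """Condition each model's GMM and the model probabilities on one observation y.

    models: list of (weights, means, covs) tuples, one Gaussian mixture per candidate model.
    Returns the updated mixtures and the posterior model probabilities.
    """
    new_models, log_evidences = [], []
    for weights, means, covs in models:
        log_terms, new_means, new_covs = [], [], []
        for w, mu, S in zip(weights, means, covs):
            innov_cov = H @ S @ H.T + R                         # predicted observation covariance
            gain = np.linalg.solve(innov_cov, H @ S).T          # Kalman gain S H^T innov_cov^-1
            new_means.append(mu + gain @ (y - H @ mu))
            new_covs.append((np.eye(len(mu)) - gain @ H) @ S)
            log_terms.append(np.log(w) + gaussian_logpdf(y, H @ mu, innov_cov))
        log_terms = np.array(log_terms)
        log_ev = np.logaddexp.reduce(log_terms)                 # log p(y | model)
        new_weights = np.exp(log_terms - log_ev)                # posterior component weights
        new_models.append((new_weights, new_means, new_covs))
        log_evidences.append(log_ev)
    log_post = np.log(model_probs) + np.array(log_evidences)
    post = np.exp(log_post - np.logaddexp.reduce(log_post))   # Bayes' rule over models
    return new_models, post


# Hypothetical example: two 2-D single-Gaussian models; observe the first component.
I2 = np.eye(2)
models = [(np.array([1.0]), [np.zeros(2)], [I2]),
          (np.array([1.0]), [np.array([3.0, 3.0])], [I2])]
H = np.array([[1.0, 0.0]])
R = np.array([[0.1]])
updated, post = condition_models(models, np.array([0.5, 0.5]), np.array([0.1]), H, R)
print(post)               # most of the probability moves to the first model
print(updated[0][1][0])   # updated mean of the first model
```

Passing `updated` and `post` to a Monte Carlo MI estimator, in place of the prior mixtures and prior model probabilities, gives the conditional MI field for the next observation.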

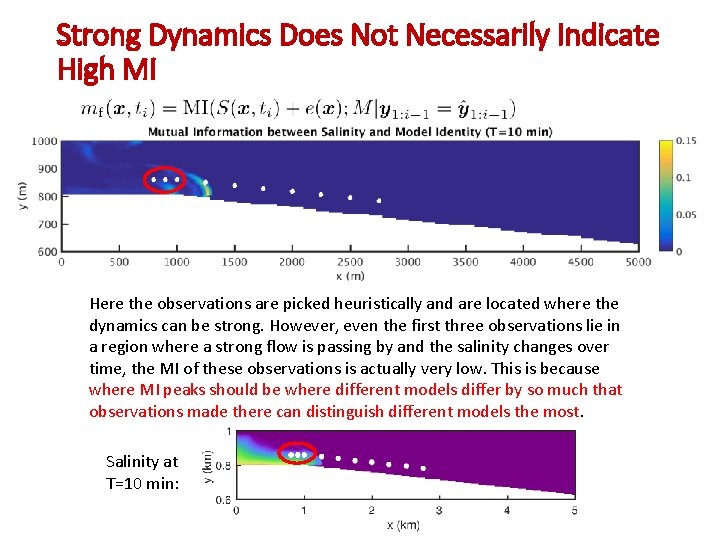

Strong Dynamics Does Not Necessarily Indicate High MI

Here the observations are chosen heuristically and placed where the dynamics can be strong. However, even though the first three observations lie in a region where a strong flow passes and the salinity changes over time, their MI is actually very low. This is because MI peaks where the candidate models differ the most, so observations made there can best distinguish among them. Variability that all models share says little about which model is true.

Salinity at T=10 min:

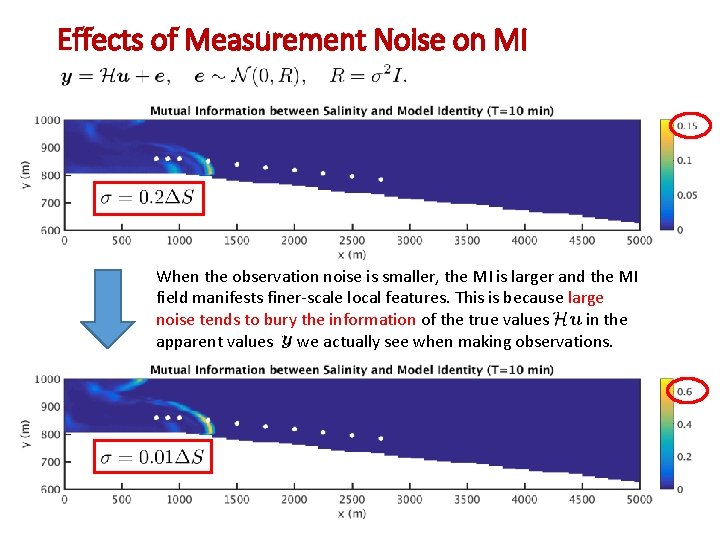

Effects of Measurement Noise on MI

Smaller observation noise yields larger MI, and the MI field shows finer-scale local features. This is because large noise buries the information carried by the true values in the noisy values we actually observe.
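Two standard information-theoretic facts support the first half of this observation. First, assume the observation noise is independent of the model and the state. Then $M \to \mathbf{x} \to \mathbf{y}$ is a Markov chain, and by the data-processing inequality the noisy observation carries no more information about the model than the state itself. Second, consider the simplest linear-Gaussian case: a scalar $x$ with variance $\sigma_x^2$, observed as $y = x + v$ with independent noise $v \sim \mathcal{N}(0, \sigma_v^2)$. There the information shrinks explicitly as the noise grows. This scalar case only illustrates the mechanism; it is not the model-identity MI computed in the slides.

$$
I(M; \mathbf{y}) \le I(M; \mathbf{x}),
\qquad
I(x; y) = \frac{1}{2}\ln\!\left(1 + \frac{\sigma_x^2}{\sigma_v^2}\right),
$$

The second expression decreases monotonically as $\sigma_v^2$ grows and tends to zero as $\sigma_v^2 \to \infty$.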

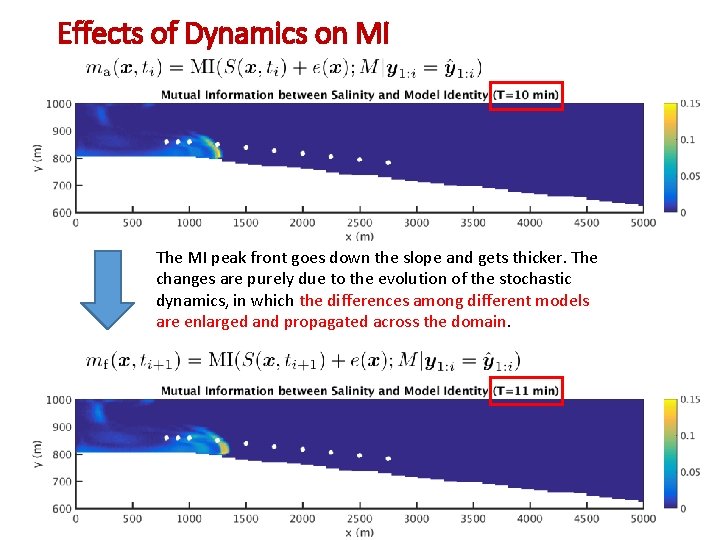

Effects of Dynamics on MI

The MI peak front moves down the slope and thickens over time. These changes are due purely to the evolution of the stochastic dynamics, which amplifies the differences among the candidate models and propagates them across the domain.

MI of More Than One Observation

- Show the MI of more than one observation.
- Show how this helps us identify redundant observations or poor combinations of observations.
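The chain rule of mutual information is the natural tool for this. For two observations $\mathbf{y}_1, \mathbf{y}_2$ and the model identity $M$ (notation assumed here):

$$
I(M; \mathbf{y}_1, \mathbf{y}_2) = I(M; \mathbf{y}_1) + I(M; \mathbf{y}_2 \mid \mathbf{y}_1) \le H(M).
$$

A second observation is redundant when $I(M; \mathbf{y}_2 \mid \mathbf{y}_1) \approx 0$. In that case, once $\mathbf{y}_1$ is known, $\mathbf{y}_2$ adds almost nothing about which model is true, even if $I(M; \mathbf{y}_2)$ on its own is large. A typical case is two nearby sensors measuring nearly the same salinity. Summing the individual MIs of such a pair would therefore overstate what the combination can tell us.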

Change Observations According to MI

- Show how the entropy of the distribution over models decreases differently for different observations.
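The entropy of the model distribution, $H(M) = -\sum_i p(M_i) \ln p(M_i)$, measures how uncertain we still are about the true model. Its expected reduction from an observation equals the MI: $\mathbb{E}_{\mathbf{y}}[H(M \mid \mathbf{y})] = H(M) - I(M; \mathbf{y})$. The sketch below tracks this entropy through sequential Bayesian updates for three hypothetical candidate models. Under model $i$, each scalar observation is assumed to be $y \sim \mathcal{N}(\mu_i, s^2)$, and observations are assumed conditionally independent given the model. This is a strong simplification of the GMM-DO setting. The predicted means, spread, measured values, and function names are invented for illustration.

```python
import numpy as np


def entropy(p):
    """Shannon entropy in nats of a discrete distribution."""
    p = np.asarray(p, dtype=float)
    p = p[p > 0]
    return float(-(p * np.log(p)).sum())


def update_model_probs(probs, y, predicted_means, spread):
    """One Bayes update of p(M_i) given scalar y ~ N(predicted_means[i], spread**2) under model i.

    The Gaussian normalizing constant is the same for every model, so it cancels.
    """
    log_post = np.log(probs) - 0.5 * ((y - np.asarray(predicted_means)) / spread) ** 2
    log_post -= log_post.max()                 # guard against underflow before exponentiating
    post = np.exp(log_post)
    return post / post.sum()


def entropy_trace(observations, spread, prior):
    """Entropy of p(M) before and after each observation; observations = [(y, predicted_means), ...]."""
    probs = np.asarray(prior, dtype=float)
    trace = [entropy(probs)]
    for y, predicted_means in observations:
        probs = update_model_probs(probs, y, predicted_means, spread)
        trace.append(entropy(probs))
    return trace, probs


prior = [1 / 3, 1 / 3, 1 / 3]
observed = [0.1, -0.05, 0.0]                   # hypothetical measurements
# Plan 1: locations where the three models predict clearly different values.
plan_informative = [(y, [0.0, 1.0, 2.0]) for y in observed]
# Plan 2: locations where the three models predict almost the same value.
plan_uninformative = [(y, [0.0, 0.1, 0.2]) for y in observed]

trace_inf, post_inf = entropy_trace(plan_informative, spread=0.3, prior=prior)
trace_uninf, post_uninf = entropy_trace(plan_uninformative, spread=0.3, prior=prior)
print("informative:  ", np.round(trace_inf, 3))
print("uninformative:", np.round(trace_uninf, 3))
```

Both plans use the same measured values. Only the informative plan drives the entropy close to zero, because its locations are where the models disagree.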

- Slides: 12