Multivariate Probability Distributions

Multivariate Random Variables

- In many settings, we are interested in two or more characteristics observed in experiments
- Often used to study the relationship among characteristics and the prediction of one based on the other(s)
- Three types of distributions:
  - Joint: Distribution of outcomes across all combinations of the variables' levels
  - Marginal: Distribution of outcomes for a single variable
  - Conditional: Distribution of outcomes for a single variable, given the level(s) of the other variable(s)
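
As a rough illustration of the joint and marginal distributions described above, the sketch below stores a small joint distribution as a table and obtains each marginal by summing over the other variable. The probability values and the names `joint` and `marginal` are made up for this example and do not come from the slides.

```python
from fractions import Fraction

# Hypothetical joint pmf p(y1, y2) for two binary variables (illustrative values only).
joint = {
    (0, 0): Fraction(1, 8), (0, 1): Fraction(1, 8),
    (1, 0): Fraction(1, 4), (1, 1): Fraction(1, 2),
}

def marginal(joint, axis):
    """Marginal pmf of the variable at position `axis` (0 for Y1, 1 for Y2)."""
    out = {}
    for key, prob in joint.items():
        # Summing over the other variable collapses the table to one dimension.
        out[key[axis]] = out.get(key[axis], 0) + prob
    return out

p1 = marginal(joint, 0)  # distribution of Y1 alone
p2 = marginal(joint, 1)  # distribution of Y2 alone
print(p1, p2)
```

Exact fractions are used here only so that the sums can be checked by hand; floating-point probabilities would work the same way.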

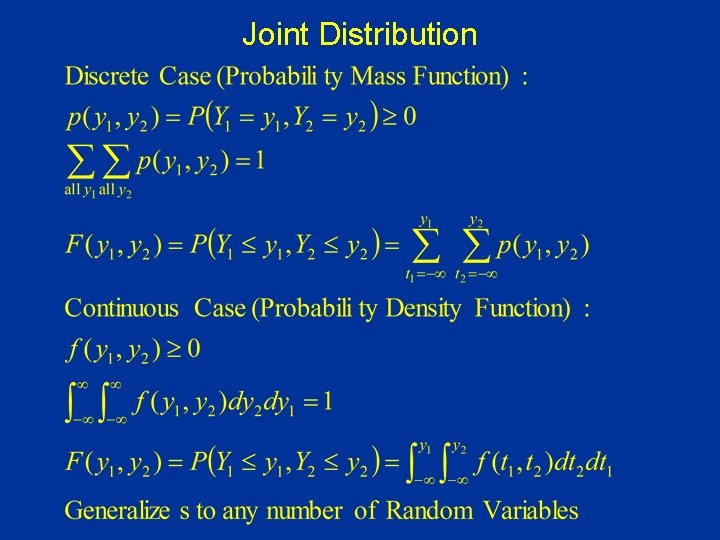

Joint Distribution

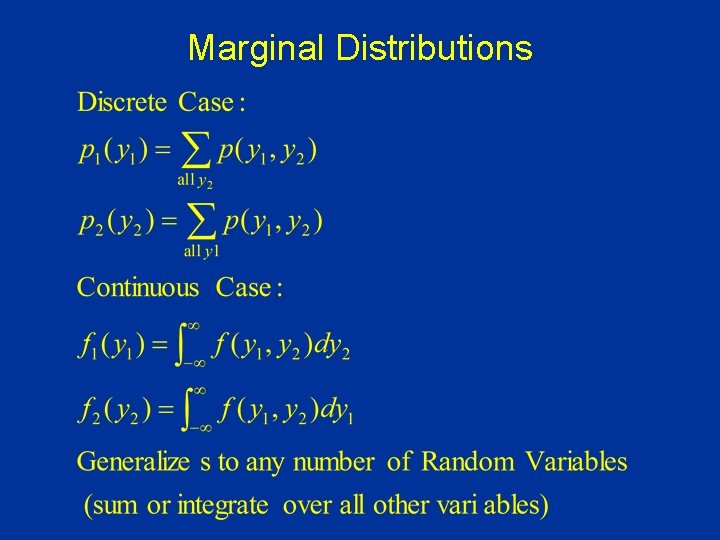

Marginal Distributions

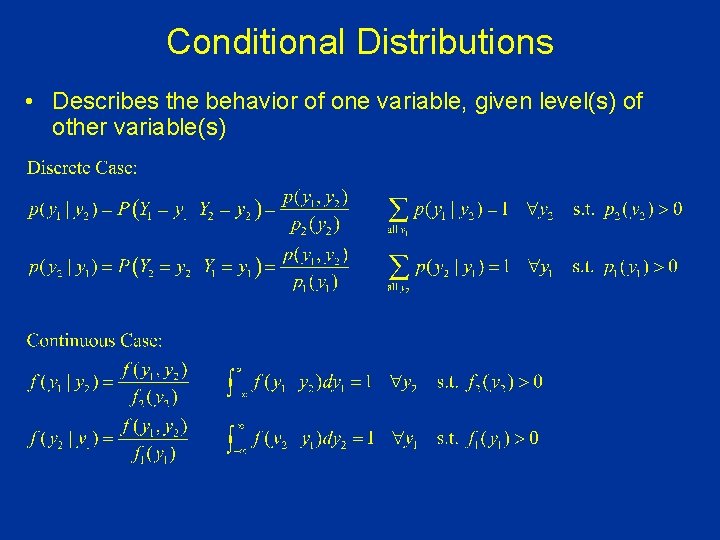

Conditional Distributions

- Describes the behavior of one variable, given the level(s) of the other variable(s)

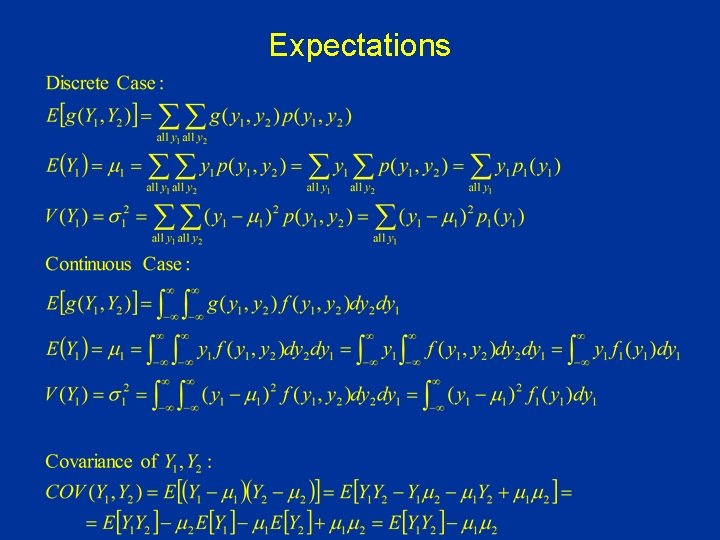
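
A minimal sketch of a conditional distribution for the discrete case, assuming the standard definition p(y1 | y2) = p(y1, y2) / p2(y2) when p2(y2) > 0. The joint table and the function name `conditional_of_y1` are hypothetical and chosen only for illustration.

```python
from fractions import Fraction

# Hypothetical joint pmf p(y1, y2) (illustrative values only).
joint = {
    (0, 0): Fraction(1, 8), (0, 1): Fraction(1, 8),
    (1, 0): Fraction(1, 4), (1, 1): Fraction(1, 2),
}

def conditional_of_y1(joint, y2):
    """Return p(y1 | y2) as a dict keyed by y1."""
    # Marginal probability of the conditioning event Y2 = y2.
    p2 = sum(prob for (_, b), prob in joint.items() if b == y2)
    if p2 == 0:
        raise ValueError("conditioning event has probability zero")
    # Rescale the matching column of the joint table so that it sums to 1.
    return {a: prob / p2 for (a, b), prob in joint.items() if b == y2}

cond = conditional_of_y1(joint, 1)
print(cond)
```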

Expectations

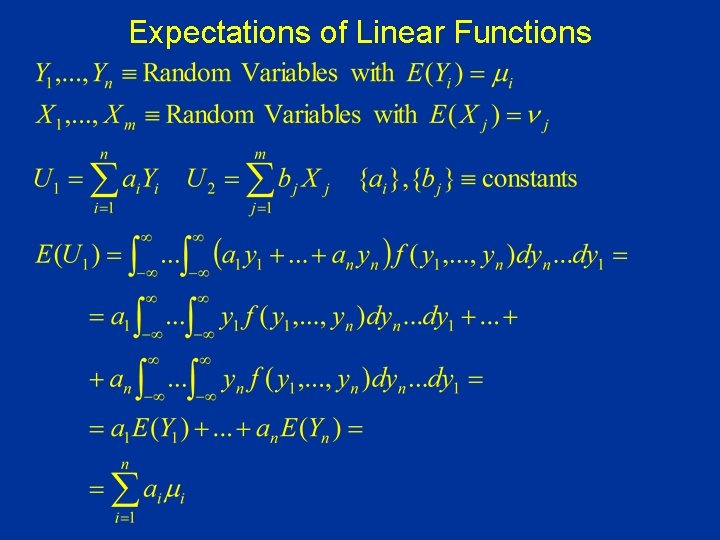

Expectations of Linear Functions

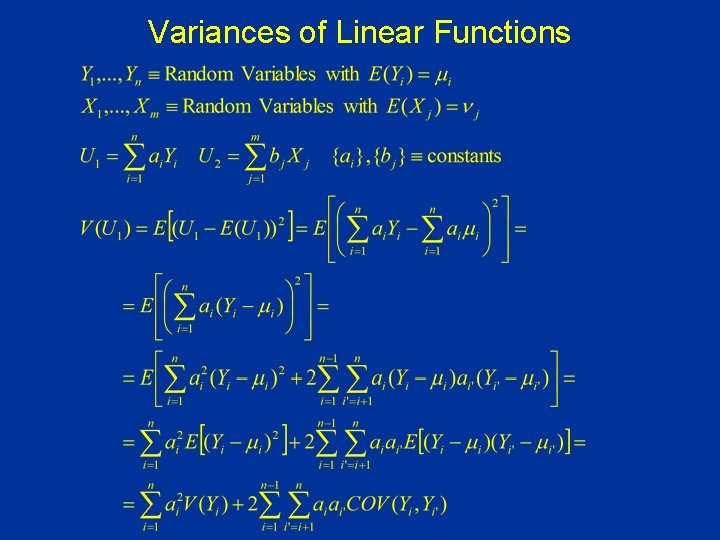

Variances of Linear Functions

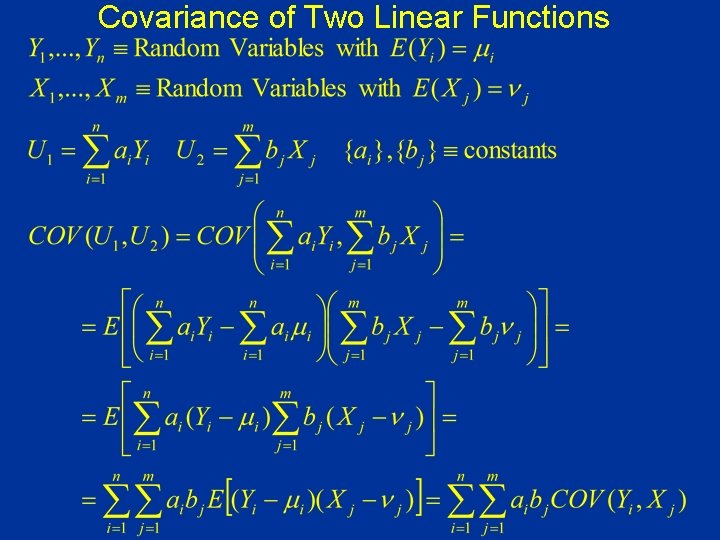
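
The slide formulas are not reproduced in this transcript, so the following is only a sketch of the standard results for a linear function W = a·Y1 + b·Y2: E[W] = a·E[Y1] + b·E[Y2] and Var(W) = a²·Var(Y1) + b²·Var(Y2) + 2ab·Cov(Y1, Y2). The joint table, the constants `a` and `b`, and the helper `expect` are assumptions made for the example.

```python
from fractions import Fraction

# Hypothetical joint pmf p(y1, y2) and constants (illustrative values only).
joint = {
    (0, 0): Fraction(1, 8), (0, 1): Fraction(1, 8),
    (1, 0): Fraction(1, 4), (1, 1): Fraction(1, 2),
}
a, b = 2, -3

def expect(g):
    """E[g(Y1, Y2)] computed directly from the joint pmf."""
    return sum(prob * g(y1, y2) for (y1, y2), prob in joint.items())

mean1 = expect(lambda y1, y2: y1)
mean2 = expect(lambda y1, y2: y2)
var1 = expect(lambda y1, y2: (y1 - mean1) ** 2)
var2 = expect(lambda y1, y2: (y2 - mean2) ** 2)
cov12 = expect(lambda y1, y2: (y1 - mean1) * (y2 - mean2))

# Direct computation on W = a*Y1 + b*Y2.
mean_w = expect(lambda y1, y2: a * y1 + b * y2)
var_w = expect(lambda y1, y2: (a * y1 + b * y2 - mean_w) ** 2)

# The same quantities from the linear-function formulas.
mean_formula = a * mean1 + b * mean2
var_formula = a**2 * var1 + b**2 * var2 + 2 * a * b * cov12
print(mean_w == mean_formula, var_w == var_formula)
```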

Covariance of Two Linear Functions

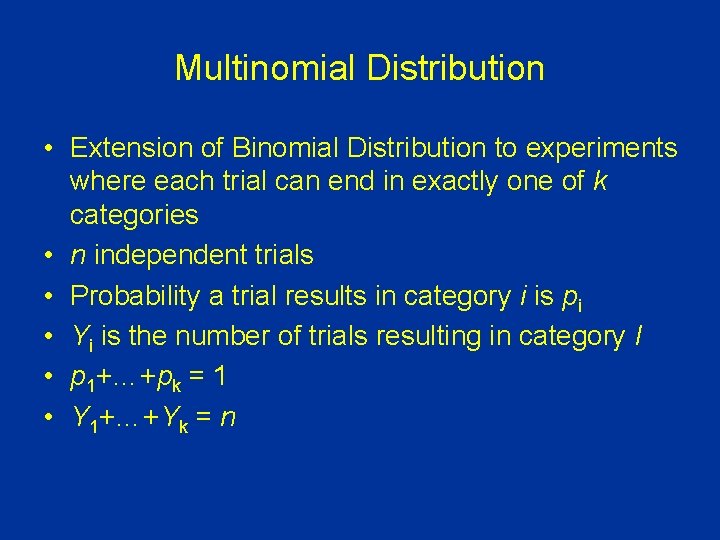

Multinomial Distribution

- Extension of the Binomial Distribution to experiments where each trial can end in exactly one of k categories
- n independent trials
- Probability that a trial results in category i is p_i
- Y_i is the number of trials resulting in category i
- p_1 + … + p_k = 1
- Y_1 + … + Y_k = n

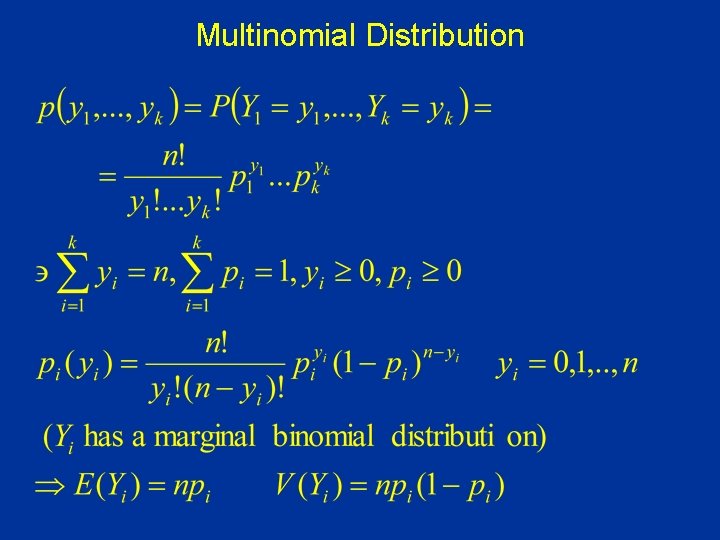

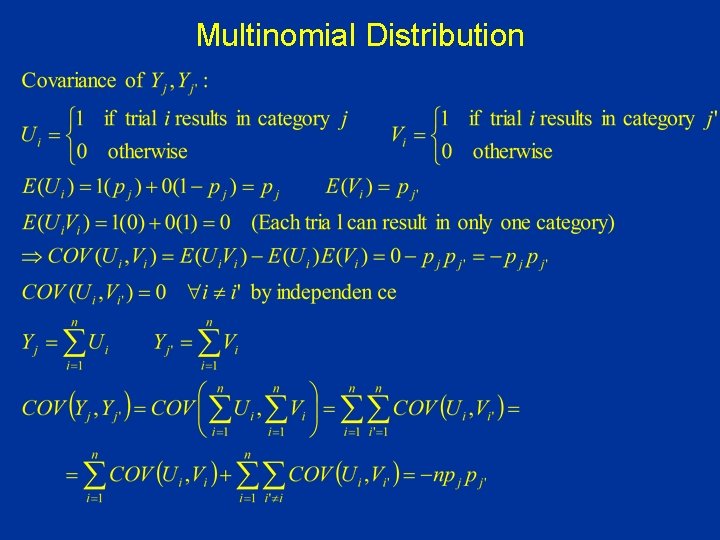
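
A small sketch of the multinomial probability function under the setup above, using the standard form n! / (y_1! … y_k!) · p_1^y_1 … p_k^y_k. The function name `multinomial_pmf` and the example probabilities are hypothetical.

```python
from fractions import Fraction
from math import factorial, prod

def multinomial_pmf(counts, probs):
    """P(Y_1 = y_1, ..., Y_k = y_k) for n = sum(counts) independent trials."""
    if len(counts) != len(probs):
        raise ValueError("counts and probs must have the same length")
    n = sum(counts)
    # Multinomial coefficient n! / (y_1! * ... * y_k!), kept as an exact integer.
    coef = factorial(n)
    for y in counts:
        coef //= factorial(y)
    return coef * prod(p ** y for p, y in zip(probs, counts))

# Hypothetical example: n = 3 trials, k = 3 categories.
probs = (Fraction(1, 2), Fraction(3, 10), Fraction(1, 5))
example = multinomial_pmf((1, 1, 1), probs)
print(example)  # 9/50
```

With k = 2 the same function reduces to the binomial probability, which is one way to sanity-check it.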

![Conditional Expectations](http://slidetodoc.com/presentation_image_h/7c14b15ec3feeeb12bc87d3aa20a0772/image-13.jpg)

Conditional Expectations

- When E[Y_1 | y_2] is a function of y_2, the function is called the regression of Y_1 on Y_2

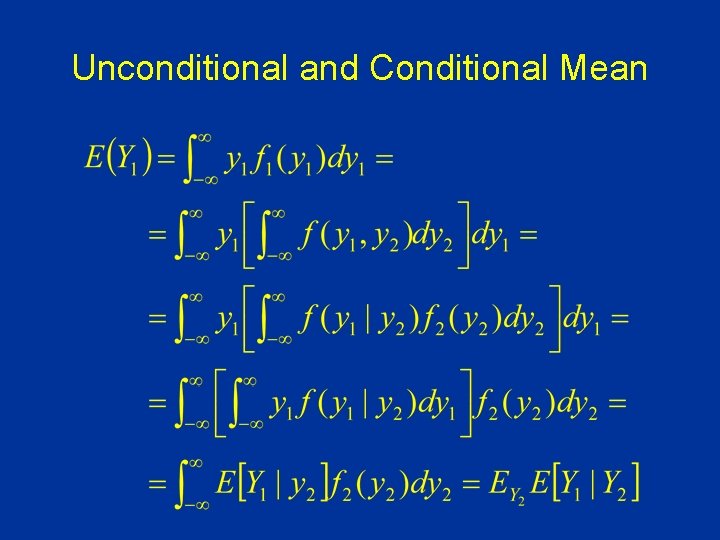
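
To make the idea of a regression function concrete, the sketch below tabulates E[Y_1 | Y_2 = y_2] for every value of y_2 in a small discrete joint distribution. The joint table and the name `regression_of_y1_on_y2` are assumptions made for the example.

```python
from fractions import Fraction

# Hypothetical joint pmf p(y1, y2) (illustrative values only).
joint = {
    (0, 0): Fraction(1, 8), (0, 1): Fraction(1, 8),
    (1, 0): Fraction(1, 4), (1, 1): Fraction(1, 2),
}

def regression_of_y1_on_y2(joint):
    """Map each y2 with positive probability to E[Y1 | Y2 = y2]."""
    num, den = {}, {}
    for (y1, y2), prob in joint.items():
        num[y2] = num.get(y2, 0) + y1 * prob  # sum of y1 * p(y1, y2)
        den[y2] = den.get(y2, 0) + prob       # marginal p2(y2)
    return {y2: num[y2] / den[y2] for y2 in den if den[y2] > 0}

regression = regression_of_y1_on_y2(joint)
print(regression)
```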

Unconditional and Conditional Mean

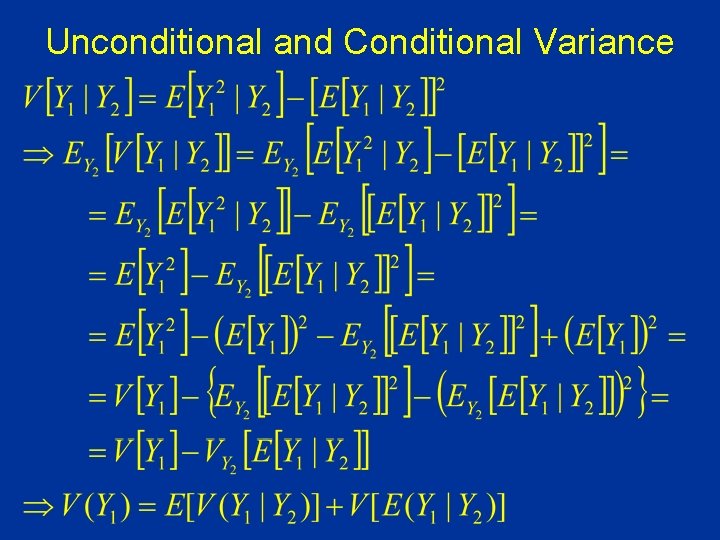

Unconditional and Conditional Variance

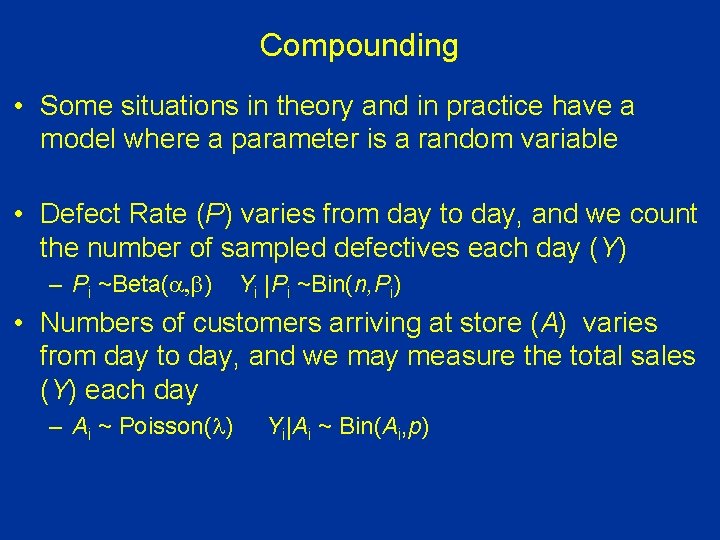

Compounding

- Some situations in theory and in practice have a model where a parameter is itself a random variable
- Defect rate (P) varies from day to day, and we count the number of sampled defectives each day (Y)
  - P_i ~ Beta(a, b), Y_i | P_i ~ Bin(n, P_i)
- Number of customers arriving at a store (A) varies from day to day, and we may measure the total sales (Y) each day
  - A_i ~ Poisson(λ), Y_i | A_i ~ Bin(A_i, p)
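
For the customer-arrival model, the unconditional mean and variance of Y can be obtained from the conditional ones, assuming the standard identities E[Y] = E[E[Y | A]] and Var(Y) = E[Var(Y | A)] + Var(E[Y | A]). The sketch below evaluates them numerically; the values of `lam` and `p` and the truncation point `max_a` are hypothetical choices for illustration.

```python
from math import exp, factorial, isclose

lam, p = 4.0, 0.3  # hypothetical arrival rate and purchase probability
max_a = 60         # truncation point; Poisson(4) mass beyond 60 is negligible

def poisson_pmf(a, lam):
    """P(A = a) for A ~ Poisson(lam)."""
    return exp(-lam) * lam ** a / factorial(a)

def cond_mean(a):
    """E[Y | A = a] for Y | A ~ Bin(a, p)."""
    return a * p

def cond_var(a):
    """Var(Y | A = a) for Y | A ~ Bin(a, p)."""
    return a * p * (1 - p)

weights = [poisson_pmf(a, lam) for a in range(max_a + 1)]

# Average the conditional moments over the distribution of A.
mean_of_cond_mean = sum(w * cond_mean(a) for a, w in enumerate(weights))
mean_of_cond_var = sum(w * cond_var(a) for a, w in enumerate(weights))
var_of_cond_mean = sum(
    w * (cond_mean(a) - mean_of_cond_mean) ** 2 for a, w in enumerate(weights)
)

mean_y = mean_of_cond_mean
var_y = mean_of_cond_var + var_of_cond_mean
print(mean_y, var_y)
```

Under these assumptions both results should be close to `lam * p`, which is consistent with Y itself following a Poisson distribution with mean λp.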
