
Multi-threading basics
- Main process forks additional processing threads
- Takes advantage of multiple processors, or of CPU dead time while waiting for data
- Synchronization: one thread needs the results of processing happening in another thread (i.e. one thread will wait)
- Locks: multiple threads might need to access the same data
  - They have to lock it, manipulate it, and unlock it (as quickly as possible)
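The two ideas above (waiting for another thread's result, and lock/manipulate/unlock) can be sketched in portable C++. This is a minimal illustration, not code from the slides: `SharedCounter`, `AddMany`, and `CountWithThreads` are hypothetical names, and `std::thread` / `std::mutex` stand in for whatever platform API is actually used.

```cpp
#include <cassert>
#include <functional>
#include <mutex>
#include <thread>
#include <vector>

// Hypothetical shared data, for illustration only.
struct SharedCounter {
    std::mutex lock;
    long value = 0;
};

// Each worker locks, manipulates, and unlocks as quickly as possible.
void AddMany(SharedCounter& counter, int times) {
    for (int i = 0; i < times; ++i) {
        std::lock_guard<std::mutex> guard(counter.lock);  // lock
        ++counter.value;                                  // manipulate
    }  // the guard unlocks at the end of each loop iteration
}

// Starts the workers, then waits for all of them before reading the result.
long CountWithThreads(int threadCount, int timesPerThread) {
    SharedCounter counter;
    std::vector<std::thread> workers;
    for (int t = 0; t < threadCount; ++t) {
        workers.emplace_back(AddMany, std::ref(counter), timesPerThread);
    }
    for (std::thread& worker : workers) {
        worker.join();  // synchronization: the caller waits for each worker
    }
    return counter.value;  // safe to read: every worker has finished
}
```

Calling `CountWithThreads(4, 1000)` returns 4000 because every increment happens under the lock; without the lock the result could be lower.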

Why multi-threading/multi-core?
- Clock rates are stagnant
- Future CPUs will be predominantly multithreaded/multi-core
  - Xbox 360 has 3 cores
  - PS3 has a stream architecture with eight cores
  - Almost all new PCs are dual or quad core
- Two performance possibilities:
  - Single-threaded? Minimal performance growth
  - Multi-threaded? Exponential performance growth

Design for Multithreading
- Good design is critical
- Bad multithreading can be worse than no multithreading
  - Deadlocks, synchronization bugs, poor performance, etc.
- Comments can help a lot!

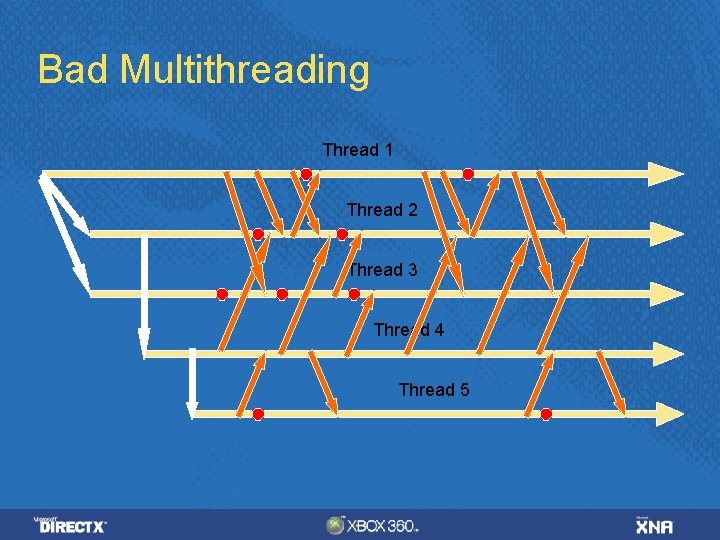

Bad Multithreading
(Diagram labels: Thread 1, Thread 2, Thread 3, Thread 4, Thread 5)

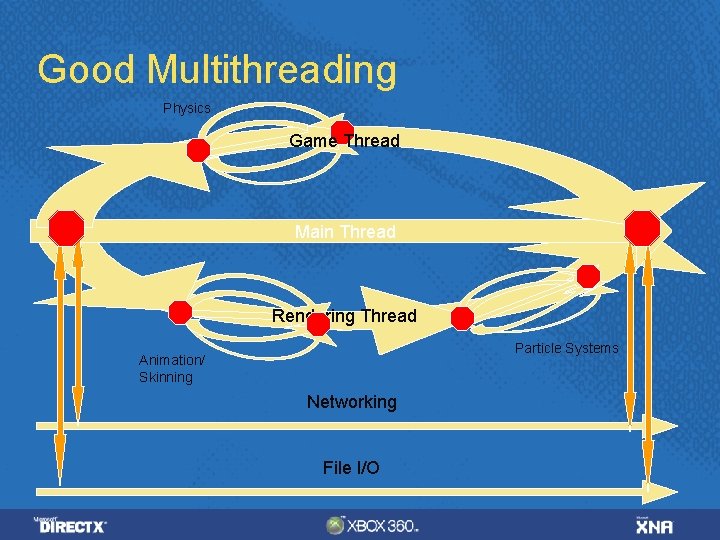

Good Multithreading
(Diagram labels: Physics, Game Thread, Main Thread, Rendering Thread, Particle Systems, Animation/Skinning, Networking, File I/O)

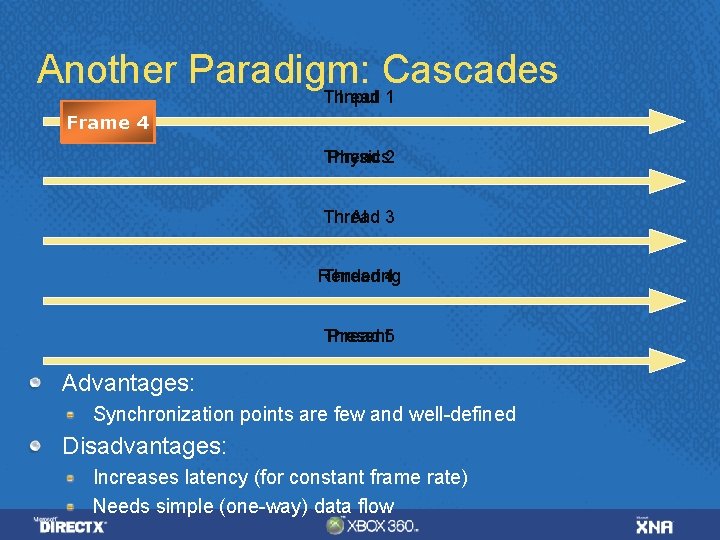

Another Paradigm: Cascades
(Diagram: frames 1 to 4 pass through one stage per thread. Thread 1: Input, Thread 2: Physics, Thread 3: AI, Thread 4: Rendering, Thread 5: Present)
- Advantages:
  - Synchronization points are few and well-defined
- Disadvantages:
  - Increases latency (for constant frame rate)
  - Needs simple (one-way) data flow
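A cascade can be sketched as stages connected by one-way queues, so the only synchronization points are the hand-offs. The sketch below is an assumption-laden illustration: `FrameQueue`, `RunCascade`, and the `kEndOfStream` sentinel are hypothetical, frames are plain integers, and only three stages (input, physics, render) are shown.

```cpp
#include <cassert>
#include <condition_variable>
#include <mutex>
#include <queue>
#include <thread>
#include <vector>

// Hypothetical one-way hand-off queue between two stages.
class FrameQueue {
public:
    void Push(int frame) {
        {
            std::lock_guard<std::mutex> guard(lock_);
            frames_.push(frame);
        }
        ready_.notify_one();
    }
    int Pop() {  // blocks until the previous stage has produced a frame
        std::unique_lock<std::mutex> guard(lock_);
        ready_.wait(guard, [this] { return !frames_.empty(); });
        int frame = frames_.front();
        frames_.pop();
        return frame;
    }
private:
    std::mutex lock_;
    std::condition_variable ready_;
    std::queue<int> frames_;
};

const int kEndOfStream = -1;  // hypothetical sentinel that shuts a stage down

// Runs input -> physics -> render and returns frames in the order rendered.
std::vector<int> RunCascade(int frameCount) {
    FrameQueue toPhysics, toRender;
    std::vector<int> rendered;  // written only by the render stage

    std::thread physics([&] {
        for (;;) {
            int frame = toPhysics.Pop();
            toRender.Push(frame);  // physics work would go before this line
            if (frame == kEndOfStream) break;
        }
    });
    std::thread render([&] {
        for (;;) {
            int frame = toRender.Pop();
            if (frame == kEndOfStream) break;
            rendered.push_back(frame);
        }
    });

    // The calling thread plays the input stage.
    for (int frame = 1; frame <= frameCount; ++frame) toPhysics.Push(frame);
    toPhysics.Push(kEndOfStream);

    physics.join();
    render.join();
    return rendered;
}
```

Data only flows forward, which is what keeps the design simple. The cost is the latency noted above: a frame is not rendered until it has passed through every earlier stage.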

Available Synchronization Objects
- Events
- Semaphores
- Mutexes
- Critical Sections
- Don't use SuspendThread()
  - Some titles have used this for synchronization
  - Can easily lead to deadlocks
  - Interacts badly with Visual Studio debugger

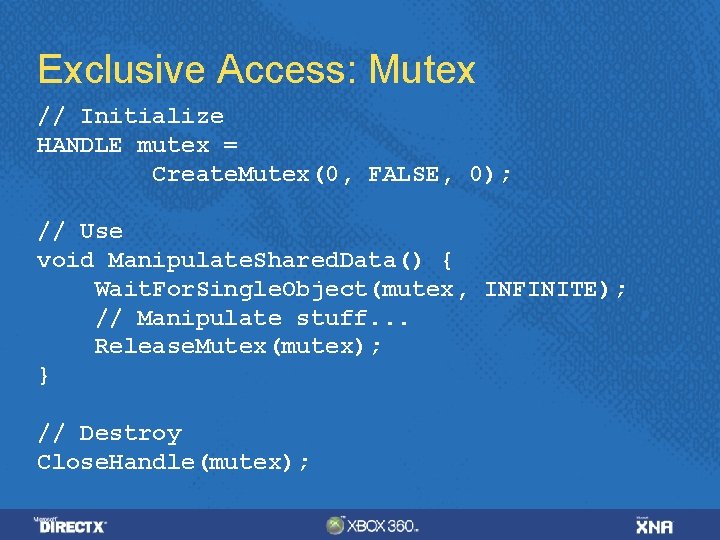

Exclusive Access: Mutex

    // Initialize
    HANDLE mutex = CreateMutex(0, FALSE, 0);

    // Use
    void ManipulateSharedData()
    {
        WaitForSingleObject(mutex, INFINITE);
        // Manipulate stuff...
        ReleaseMutex(mutex);
    }

    // Destroy
    CloseHandle(mutex);

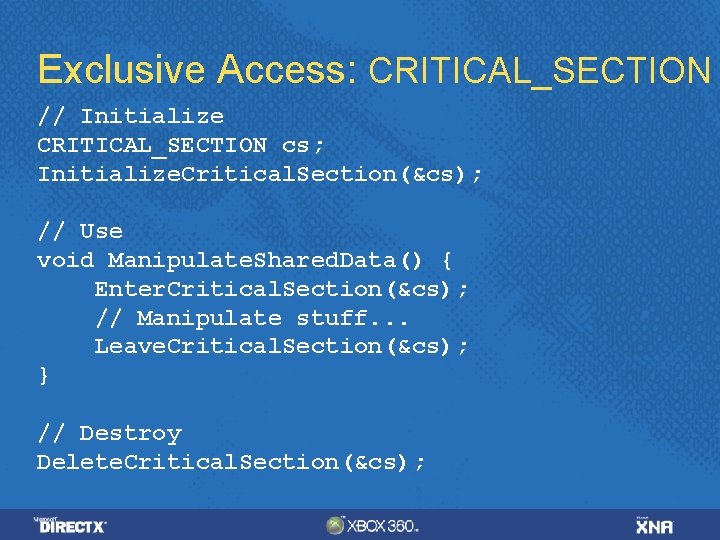

Exclusive Access: CRITICAL_SECTION

    // Initialize
    CRITICAL_SECTION cs;
    InitializeCriticalSection(&cs);

    // Use
    void ManipulateSharedData()
    {
        EnterCriticalSection(&cs);
        // Manipulate stuff...
        LeaveCriticalSection(&cs);
    }

    // Destroy
    DeleteCriticalSection(&cs);
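Both the mutex and the critical-section patterns pair an acquire with a manual release, which is easy to miss on an early return. One common way to avoid that is a scoped guard. The sketch below uses the portable `std::mutex` and `std::lock_guard` as a stand-in for the Win32 objects; `g_dataLock`, `g_data`, and `AppendIfPositive` are hypothetical names.

```cpp
#include <cassert>
#include <mutex>
#include <vector>

std::mutex g_dataLock;    // hypothetical lock protecting g_data
std::vector<int> g_data;  // hypothetical shared data

// Appends v only if it is positive. The guard releases the lock on every
// exit path, including the early return.
bool AppendIfPositive(int v) {
    std::lock_guard<std::mutex> guard(g_dataLock);
    if (v <= 0) {
        return false;  // lock is released here by the guard's destructor
    }
    g_data.push_back(v);
    return true;       // and here
}
```

The same idea could wrap `EnterCriticalSection` / `LeaveCriticalSection` in a small class whose constructor enters and whose destructor leaves.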

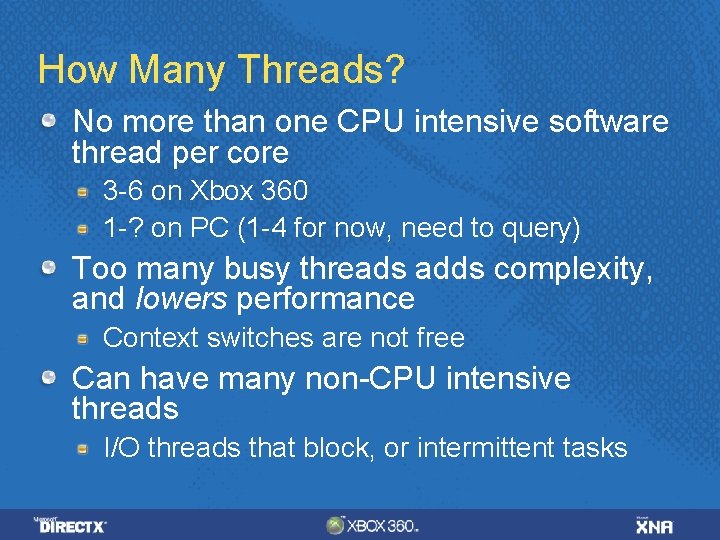

How Many Threads?
- No more than one CPU-intensive software thread per core
  - 3-6 on Xbox 360
  - 1-? on PC (1-4 for now, need to query)
- Too many busy threads add complexity and lower performance
  - Context switches are not free
- Can have many non-CPU-intensive threads
  - I/O threads that block, or intermittent tasks
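On PC the core count has to be queried at run time. A minimal portable sketch, assuming C++11 and a hypothetical helper name `ChooseWorkerCount`, could look like this. Note that `std::thread::hardware_concurrency()` reports logical processors (so SMT siblings are counted) and may return 0 when the value is unknown.

```cpp
#include <cassert>
#include <thread>

// Picks how many CPU-intensive worker threads to start, leaving
// `reservedForMain` hardware threads for the main/game thread.
// Always returns at least 1.
unsigned ChooseWorkerCount(unsigned reservedForMain = 1) {
    unsigned cores = std::thread::hardware_concurrency();
    if (cores == 0) {
        cores = 1;  // the query is allowed to fail; assume a single core
    }
    return cores > reservedForMain ? cores - reservedForMain : 1;
}
```

Threads that mostly block on I/O do not need to be counted against this budget.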

Typical Threaded Tasks
- File Decompression
- Rendering
- Graphics Fluff
- Physics

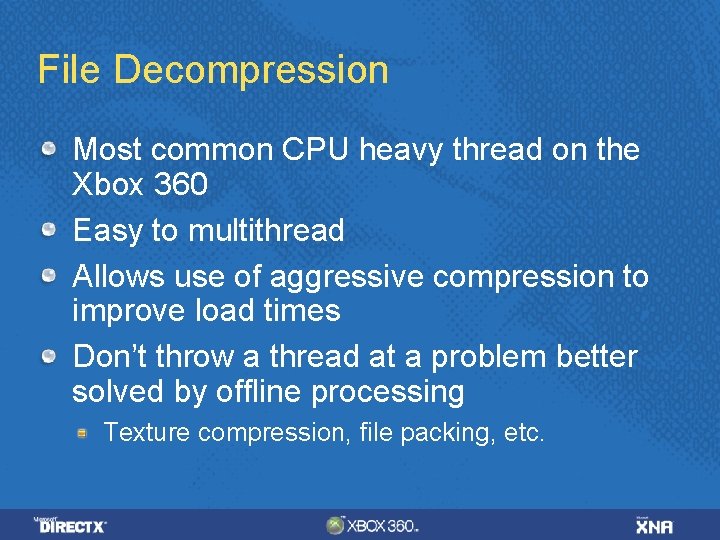

File Decompression
- Most common CPU-heavy thread on the Xbox 360
- Easy to multithread
- Allows use of aggressive compression to improve load times
- Don't throw a thread at a problem better solved by offline processing
  - Texture compression, file packing, etc.

Rendering
- Separate update and render threads
- Rendering on multiple threads (D3DCREATE_MULTITHREADED) works poorly
  - Exception: Xbox 360 command buffers
- Special case of cascades paradigm
  - Pass render state from update to render
  - With constant workload gives same latency, better frame rate
  - With increased workload gives same frame rate, worse latency

Graphics Fluff
- Extra graphics that don't affect play
  - Procedurally generated animating cloud textures
  - Cloth simulations
  - Dynamic ambient occlusion
  - Procedurally generated vegetation, etc.
  - Extra particles, better particle physics, etc.
- Easy to synchronize
- Potentially expensive, but if the core is otherwise idle...?

Physics?
- Could cascade from update to physics to rendering
  - Makes use of three threads
  - May be too much latency
- Could run physics on many threads
  - Uses many threads while doing physics
  - May leave threads mostly idle elsewhere

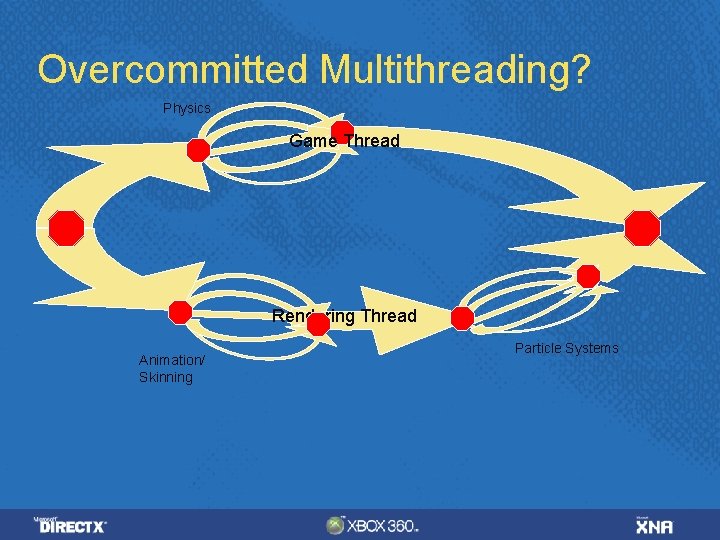

Overcommitted Multithreading?
(Diagram labels: Physics, Game Thread, Rendering Thread, Animation/Skinning, Particle Systems)

Synchronization tips/costs:
- Synchronization is moderately expensive when there is no contention
  - Hundreds to thousands of cycles
- Synchronization can be arbitrarily expensive when there is contention!
- Goals:
  - Synchronize rarely
  - Hold locks briefly
  - Minimize shared data
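"Hold locks briefly" usually means doing the expensive work before taking the lock and holding it only long enough to publish the result. A minimal sketch, with hypothetical names (`g_resultsLock`, `g_results`, `ExpensiveWork`, `ComputeAndPublish`) and `std::mutex` standing in for the platform lock:

```cpp
#include <cassert>
#include <mutex>
#include <vector>

std::mutex g_resultsLock;      // hypothetical lock protecting g_results
std::vector<long> g_results;   // hypothetical shared results

// Stand-in for real per-thread work: sum of squares 1..n.
long ExpensiveWork(int n) {
    long sum = 0;
    for (int i = 1; i <= n; ++i) {
        sum += static_cast<long>(i) * i;
    }
    return sum;
}

void ComputeAndPublish(int n) {
    long result = ExpensiveWork(n);  // no lock held during the heavy part
    std::lock_guard<std::mutex> guard(g_resultsLock);
    g_results.push_back(result);     // lock held only for the push
}
```

Taking the lock around `ExpensiveWork` as well would still be correct, but other threads would then wait for the whole computation instead of a single `push_back`.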

Threading File I/O & Decompression
- First: use large reads and asynchronous I/O
- Then: consider compression to accelerate loading
- Don't do format conversions etc. that are better done at build time!
- Have resource proxies to allow rendering to continue

File I/O Implementation Details

    vector<Resource*> g_resources;

- Worst design: decompressor locks g_resources while decompressing
- Better design: decompressor adds resources to vector after decompressing
  - Still requires renderer to sync on every resource access
- Best design: two Resource* vectors
  - Renderer has private vector, no locking required
  - Decompressor uses shared vector, syncs when adding new Resource*
  - Renderer moves Resource* from shared to private vector once per frame
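The "best design" above can be sketched as follows. This is one possible reading of the slide, not the presenter's implementation: `ResourceHandoff`, `Publish`, and `CollectInto` are hypothetical names, `Resource` is reduced to a stub, and `std::mutex` stands in for the platform lock.

```cpp
#include <cassert>
#include <mutex>
#include <thread>
#include <vector>

struct Resource { int id; };  // hypothetical stand-in for a real resource

class ResourceHandoff {
public:
    // Called by the decompressor thread once a resource is fully decompressed.
    void Publish(Resource* resource) {
        std::lock_guard<std::mutex> guard(lock_);
        shared_.push_back(resource);
    }

    // Called by the renderer once per frame. Moves everything published so
    // far into the renderer's private vector.
    void CollectInto(std::vector<Resource*>& privateResources) {
        std::vector<Resource*> incoming;
        {
            std::lock_guard<std::mutex> guard(lock_);
            incoming.swap(shared_);  // lock is held only for the swap
        }
        privateResources.insert(privateResources.end(),
                                incoming.begin(), incoming.end());
    }

private:
    std::mutex lock_;
    std::vector<Resource*> shared_;  // touched only under lock_
};
```

The renderer reads its private vector all frame without locking, and the two threads contend at most once per frame in `CollectInto`.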

Profiling multi-threaded apps
- Need thread-aware profilers
- Profiling may hide many synchronization stalls
- Home-grown spin locks make profiling harder
- Consider instrumenting calls to synchronization functions
  - Don't use locks in instrumentation
- Windows: Intel VTune, AMD CodeAnalyst, and the Visual Studio Team System Profiler
- Xbox 360: PIX, XbPerfView, etc.
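One way to instrument synchronization calls without adding locks is to wrap the acquire in a guard that records wait time in atomic counters. The sketch below is an illustration under assumptions: `TimedLock`, `g_lockWaitNanos`, and `g_lockAcquisitions` are hypothetical names, and `std::mutex` stands in for the lock being measured.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <mutex>

// Hypothetical global counters. They are atomics, so the instrumentation
// itself never takes a lock.
std::atomic<long long> g_lockWaitNanos{0};
std::atomic<long> g_lockAcquisitions{0};

// Scoped guard that measures how long the caller waited for the mutex.
class TimedLock {
public:
    explicit TimedLock(std::mutex& mutex) : mutex_(mutex) {
        auto start = std::chrono::steady_clock::now();
        mutex_.lock();
        auto waited = std::chrono::steady_clock::now() - start;
        g_lockWaitNanos.fetch_add(
            std::chrono::duration_cast<std::chrono::nanoseconds>(waited).count(),
            std::memory_order_relaxed);
        g_lockAcquisitions.fetch_add(1, std::memory_order_relaxed);
    }
    ~TimedLock() { mutex_.unlock(); }

    TimedLock(const TimedLock&) = delete;
    TimedLock& operator=(const TimedLock&) = delete;

private:
    std::mutex& mutex_;
};
```

Dividing `g_lockWaitNanos` by `g_lockAcquisitions` at the end of a frame gives a rough average wait per acquire, which helps show whether a lock is contended.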

Windows tips
- Avoid using wglMakeCurrent or this.Invoke()
  - Best to do all rendering calls from a single thread
- Test on multiple machines and configurations
  - Single-core, SMT (i.e. Hyper-Threading), dual-core, Intel and AMD chips, multi-socket multi-core (4+ cores)

Ogre-specific
- Ogre has a class to load resources in a background thread
  - http://www.ogre3d.org/docs/api/html/classOgre_1_1ResourceBackgroundQueue.html
