Multimodal Speech and Dialog Recognition

Stephen Levinson, Thomas Huang, Mark Hasegawa-Johnson, Ken Chen, Stephen Chu, Ashutosh Garg, Zhinian Jing, Danfeng Li, John Lin, Mohamed Omar, Zhen Wen

Outline

- Robust Features from Multimodal Sensors
  - Binaural Hearing
  - Robust Acoustic Features
  - Face Tracking
  - Gesture Recognition
- Recognition: Dynamic Bayesian Networks
  - Speechreading (CHMM)
  - Prosody (FHMM)
  - User State Recognition (HHMM)
  - Automatic Language Acquisition (Association)

Research Environment 1: Multimodal HS Physics Tutor

- User State Recognition determines:
  - System initiative vs. user initiative
  - Choice of topics, wording of explanations
- User State based on observation of:
  - Dialog content (correctness, complexity)
  - Emotion recognition (pitch, speaking rate, video)
- Usability Testers: Urbana HS Students

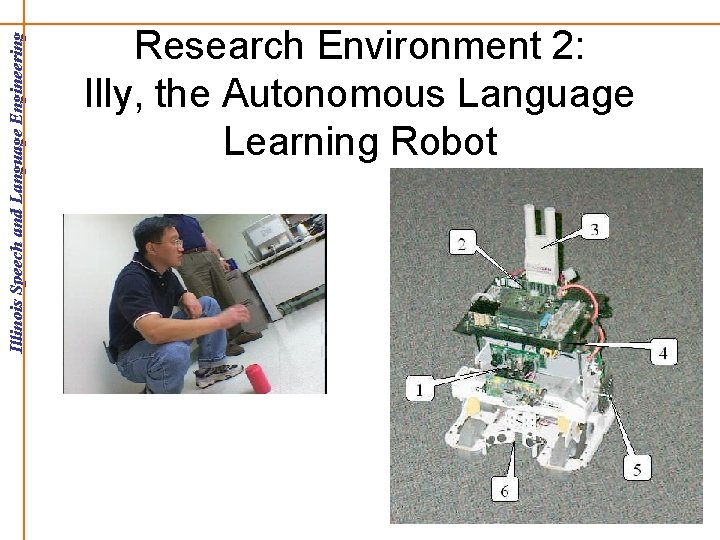

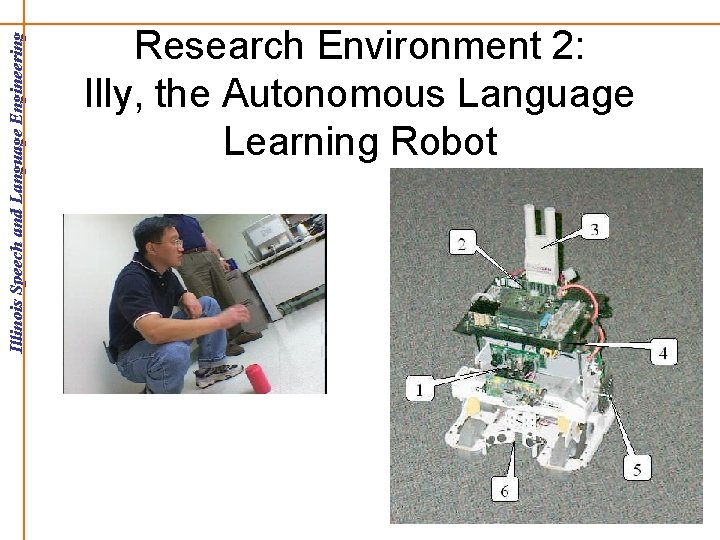

Research Environment 2: Illy, the Autonomous Language Learning Robot

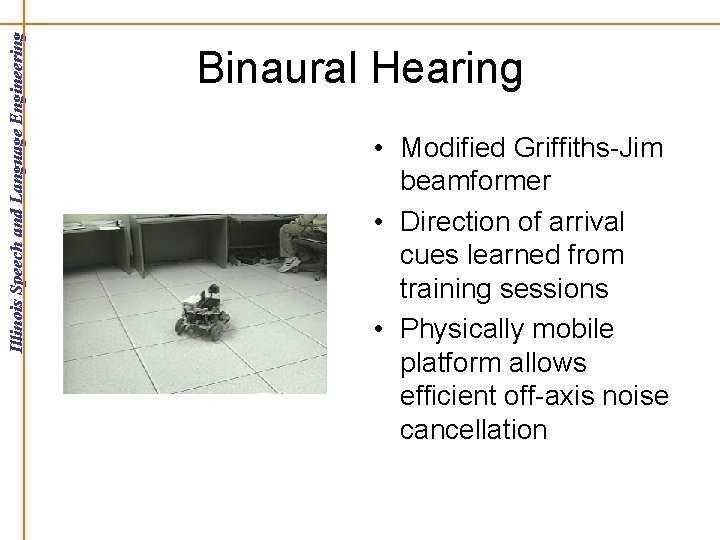

Binaural Hearing

- Modified Griffiths-Jim beamformer
- Direction of arrival cues learned from training sessions
- Physically mobile platform allows efficient off-axis noise cancellation

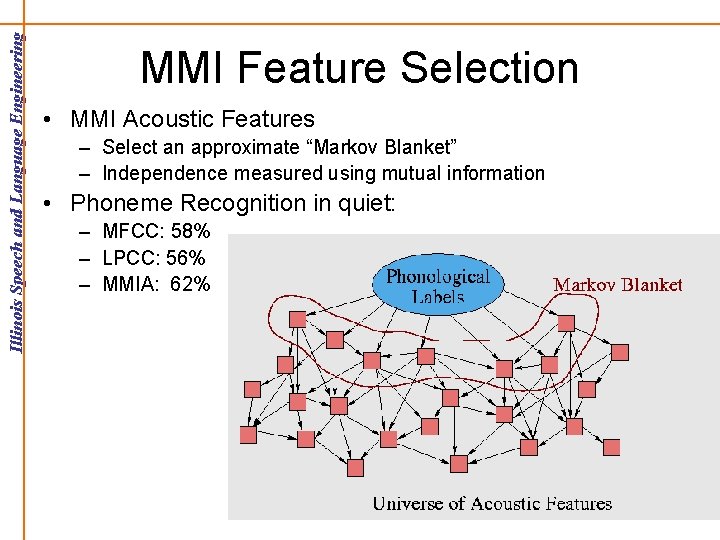

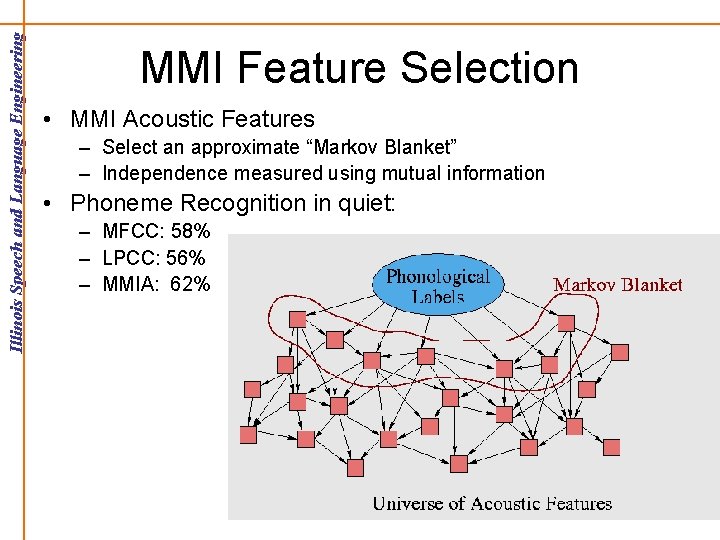
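The Binaural Hearing slide names a modified Griffiths-Jim beamformer but does not describe the modification. As a rough illustration only, the sketch below implements the textbook two-microphone Griffiths-Jim structure (a sum branch, a difference branch, and an NLMS noise canceller). The function name, tap count, and step size are assumptions chosen for the example, and the fixed-branch delay used in full implementations is omitted for brevity.

```python
import math
import random

def griffiths_jim(x1, x2, num_taps=8, mu=0.1, eps=1e-6):
    """Textbook two-microphone Griffiths-Jim beamformer (illustrative sketch).

    Assumes the target is on-axis, so it reaches both microphones in phase.
    All parameter values are hypothetical defaults, not taken from the slides.
    """
    w = [0.0] * num_taps            # adaptive noise-cancelling filter weights
    ref = [0.0] * num_taps          # most recent difference-branch samples
    out = []
    for a, b in zip(x1, x2):
        fixed = 0.5 * (a + b)       # sum branch: passes the on-axis target
        ref = [a - b] + ref[:-1]    # difference branch: target cancels, noise remains
        noise_est = sum(wi * ri for wi, ri in zip(w, ref))
        y = fixed - noise_est       # beamformer output, also the adaptation error
        norm = eps + sum(r * r for r in ref)
        # Normalized LMS update: reduce the output power explained by the reference
        w = [wi + mu * y * ri / norm for wi, ri in zip(w, ref)]
        out.append(y)
    return out
```

Because the on-axis target never appears in the difference branch, the adaptive filter can only remove components correlated with off-axis noise, which is why steering the platform toward the talker matters.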

MMI Feature Selection

- MMI Acoustic Features
  - Select an approximate "Markov Blanket"
  - Independence measured using mutual information
- Phoneme Recognition in quiet:
  - MFCC: 58%
  - LPCC: 56%
  - MMIA: 62%

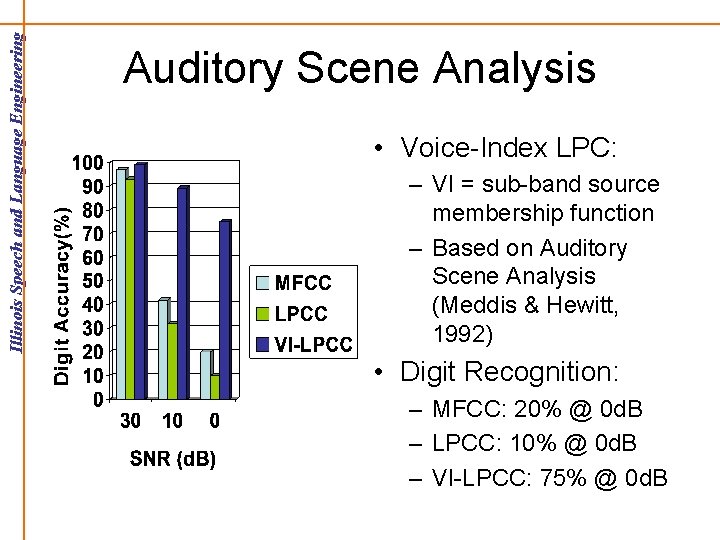

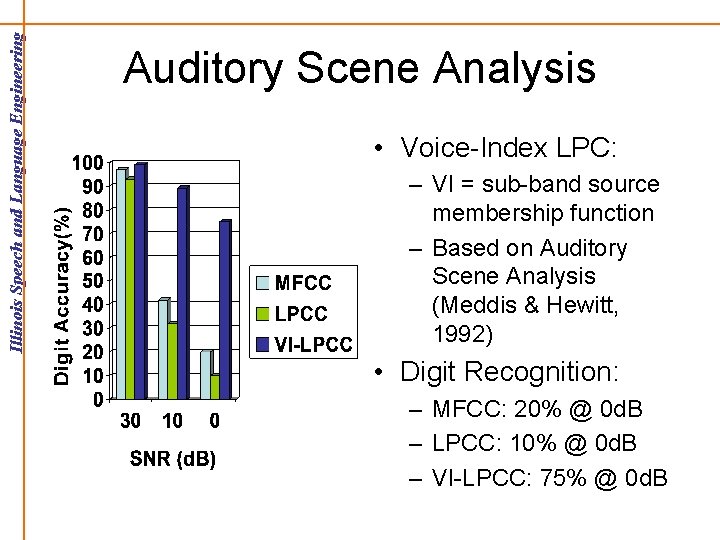
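The MMI Feature Selection slide measures dependence with mutual information. The sketch below shows only that building block: an empirical mutual information estimate for discrete sequences, plus a simple ranking of candidate features against the class labels. Plain ranking is a stand-in here and is not the approximate Markov blanket selection the slide refers to; the function names and the assumption of discretized features are hypothetical.

```python
import math
from collections import Counter

def mutual_information(xs, ys):
    """Empirical mutual information I(X; Y) in bits for two discrete sequences."""
    n = len(xs)
    joint = Counter(zip(xs, ys))
    px = Counter(xs)
    py = Counter(ys)
    mi = 0.0
    for (x, y), c in joint.items():
        # p(x,y) * log2(p(x,y) / (p(x) p(y))), written with raw counts
        mi += (c / n) * math.log2(c * n / (px[x] * py[y]))
    return mi

def rank_features(features, labels):
    """Sort feature names by decreasing mutual information with the labels.

    `features` maps a (hypothetical) feature name to its discretized values.
    """
    scores = {name: mutual_information(col, labels) for name, col in features.items()}
    return sorted(scores, key=scores.get, reverse=True)
```

A Markov blanket style selection would additionally condition on features already chosen, so that redundant features are dropped even when each is individually informative.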

Auditory Scene Analysis

- Voice-Index LPC:
  - VI = sub-band source membership function
  - Based on Auditory Scene Analysis (Meddis & Hewitt, 1992)
- Digit Recognition:
  - MFCC: 20% @ 0 dB
  - LPCC: 10% @ 0 dB
  - VI-LPCC: 75% @ 0 dB

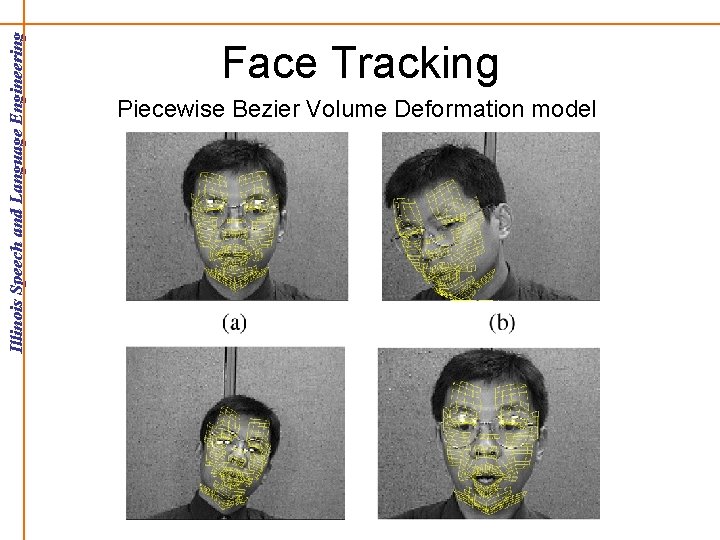

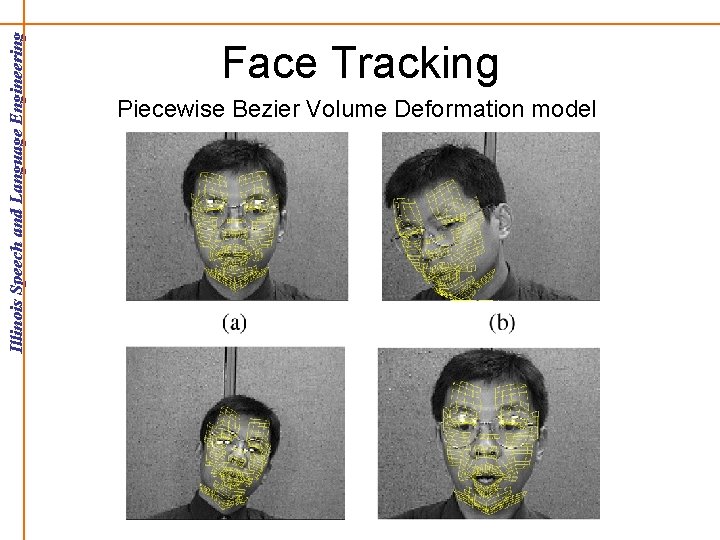

Face Tracking

- Piecewise Bezier Volume Deformation model

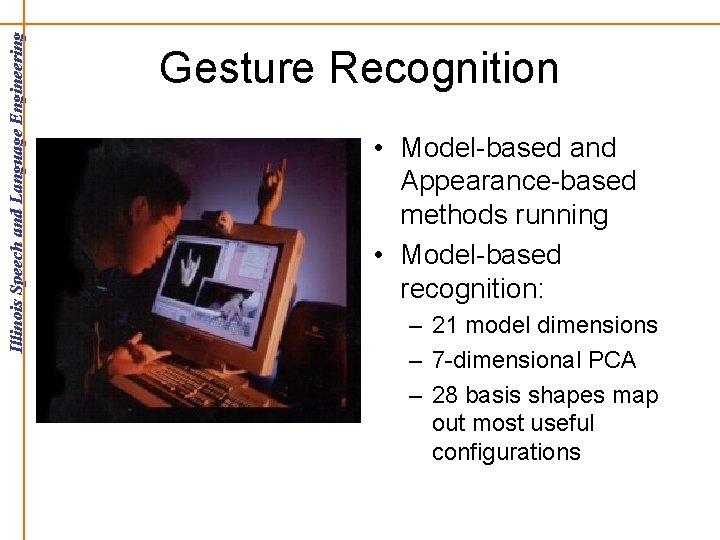

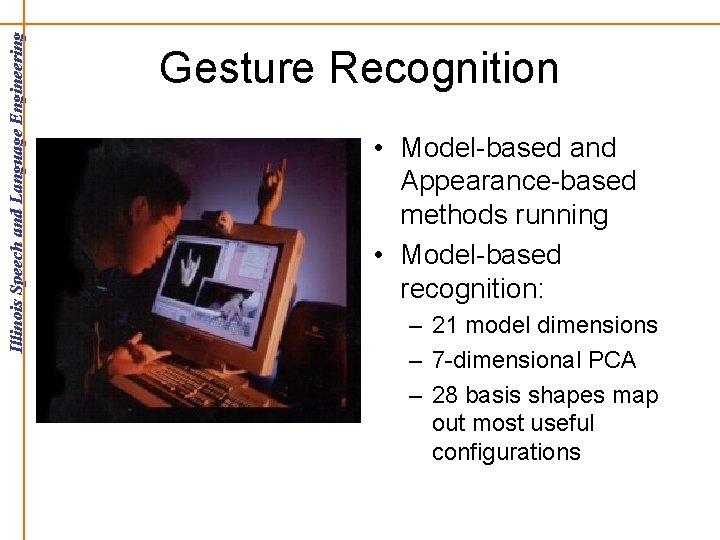

Gesture Recognition

- Model-based and appearance-based methods running
- Model-based recognition:
  - 21 model dimensions
  - 7-dimensional PCA
  - 28 basis shapes map out most useful configurations

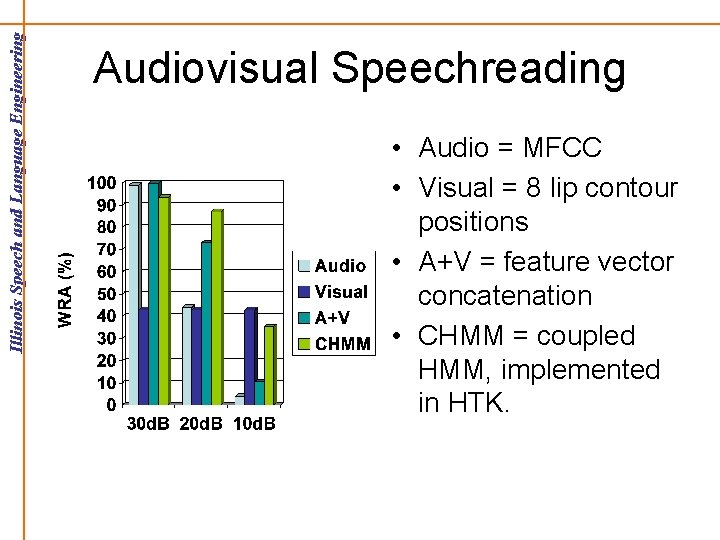

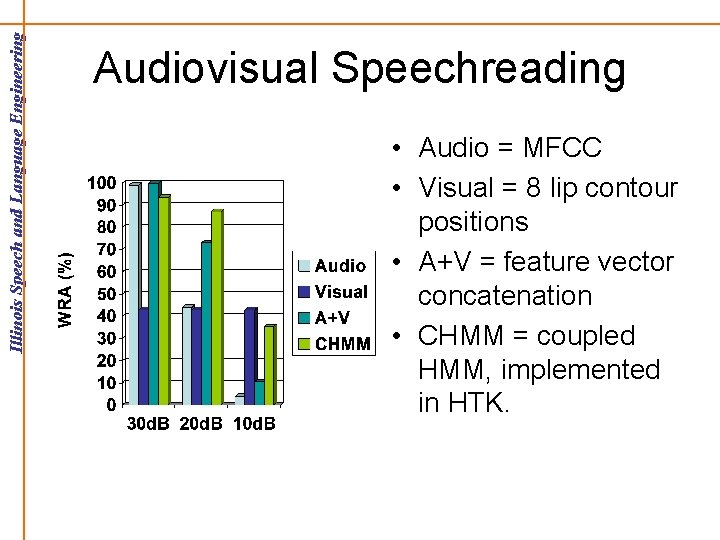

Audiovisual Speechreading

- Audio = MFCC
- Visual = 8 lip contour positions
- A+V = feature vector concatenation
- CHMM = coupled HMM, implemented in HTK

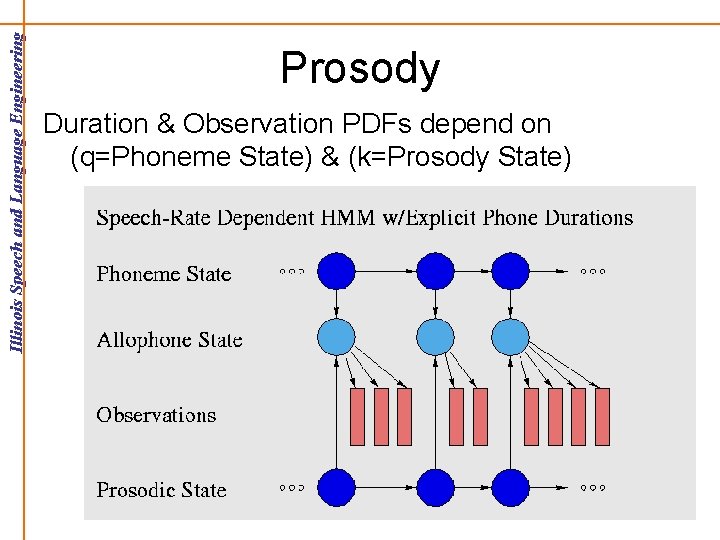

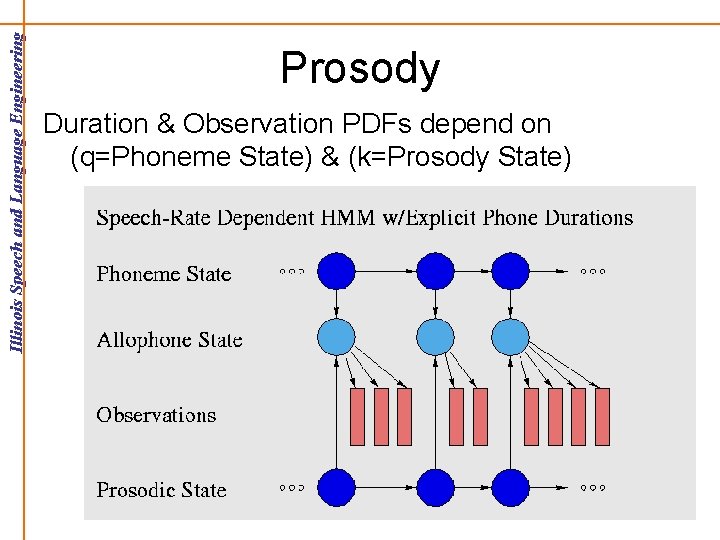
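The A+V condition on the Audiovisual Speechreading slide is described as feature vector concatenation. One detail the slide does not cover is how audio and video frames are aligned when their rates differ. The sketch below assumes a 100 Hz audio frame rate, a 30 Hz video rate, and nearest-earlier-frame alignment; those rates, the function name, and the vector sizes in the usage note are assumptions for illustration.

```python
def concatenate_av(audio_frames, visual_frames, audio_rate=100, video_rate=30):
    """Pair each audio frame with the video frame covering the same instant.

    Rates are integer frames per second (assumed values, not from the slides).
    Returns one fused vector per audio frame: audio features then visual features.
    """
    fused = []
    last = len(visual_frames) - 1
    for i, audio in enumerate(audio_frames):
        # Integer arithmetic avoids floating-point rounding at frame boundaries
        j = min(i * video_rate // audio_rate, last)
        fused.append(list(audio) + list(visual_frames[j]))
    return fused
```

With, say, 13 MFCC coefficients and 8 lip contour values per frame, each fused vector has 21 entries and is emitted at the audio frame rate.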

Prosody

- Duration and observation PDFs depend on both the phoneme state (q) and the prosody state (k)

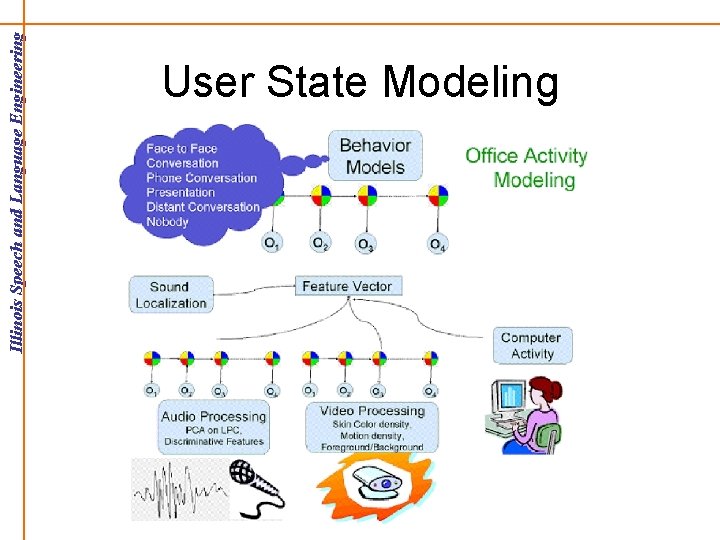

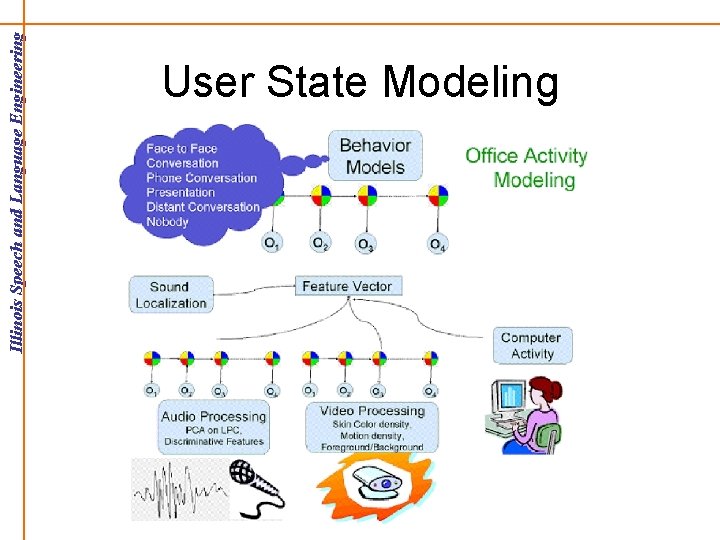
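The dependence stated on the Prosody slide can be written out as follows. The symbols $o_t$ (observation at frame $t$) and $\tau$ (state duration) are notation introduced here, and the factored transition in the last line is the usual factorial-HMM assumption rather than something the slide states.

```latex
\begin{align}
  b_{q,k}(o_t) &= p(o_t \mid q_t = q,\; k_t = k)
    && \text{observation PDF} \\
  d_{q,k}(\tau) &= p(\tau \mid q,\; k)
    && \text{duration PDF} \\
  p(q_t, k_t \mid q_{t-1}, k_{t-1}) &= p(q_t \mid q_{t-1})\, p(k_t \mid k_{t-1})
    && \text{assumed factorial transitions}
\end{align}
```

Under this reading, the two state chains evolve separately and interact only through the shared duration and observation densities.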

User State Modeling

Automatic Language Acquisition

Conclusions

- Objectives:
  - Theoretical Understanding
  - Practical Applications
- Noise robustness achieved through:
  - Audiovisual Feature Design
  - Multimodal Integration