Multimodal Interfaces: Robust interaction where graphical user interfaces fear to tread

Philip R. Cohen
Professor and Co-Director, Center for Human-Computer Communication
Oregon Health and Science University
http://www.cse.ogi.edu/CHCC
and Natural Interaction Systems, LLC

Team Effort
Co-PI: Sharon Oviatt
Rajah Annamalai, Alex Arthur, Paulo Barthelmess, Rachel Coulston, Marisa Flecha-Garcia, Xiao Huang, Ed Kaiser, Sanjeev Kumar, Rebecca Lunsford, Richard Wesson
Multidisciplinary research

Multimodal Interaction
• Use of one or more natural communication modalities, e.g., speech, gesture, sketch …
• Advantages over GUI and unimodal systems
  – Easier to use; less training
  – Robust, flexible
  – Preferred by users
  – Faster, more efficient
  – Supports new functionality
• Applies to many different environments and form factors that challenge GUIs, especially mobile ones

Potential Application Areas
• Architecture and Design
• Geographical Information Systems
• Emergency Operations
• Field-based Operations
• Mobile Computing and Telecommunications
• Virtual/Augmented Reality
• Pervasive/Ubiquitous Computing
• Computer-Supported Collaborative Work
• Education
• Entertainment

Challenges for multimodal interface design
• More than two modes
  – e.g., spoken, gestural, facial expression, gaze; various sensors
• Inputs are uncertain (vs. keyboard/mouse)
  – Corrupted by noise
  – Multiple people
• Recognition is probabilistic
• Meaning is ambiguous
Design for uncertainty

Approach
Gain robustness via:
– Fusion of inputs from multiple modalities
– Using the strengths of one mode to compensate for the weaknesses of others, at design time and at run time
– Avoiding/correcting errors
– Statistical architecture
– Confirmation
– Dialogue context
– Simplification of language in a multimodal context
– Output affecting/channeling input

Demo
Started with 50 MHz and 100 MHz 486 PCs

Multimodal Architecture

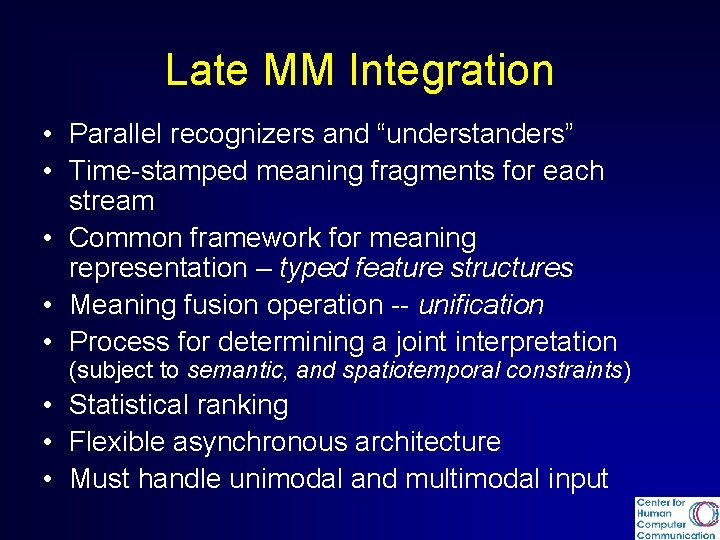

Late MM Integration
• Parallel recognizers and “understanders”
• Time-stamped meaning fragments for each stream
• Common framework for meaning representation: typed feature structures
• Meaning fusion operation: unification
• Process for determining a joint interpretation (subject to semantic and spatiotemporal constraints)
• Statistical ranking
• Flexible asynchronous architecture
• Must handle unimodal and multimodal input
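The temporal half of the spatiotemporal constraint can be illustrated with time-stamped fragments. This is a minimal sketch under stated assumptions: `Fragment`, `temporally_compatible`, and `candidate_pairs` are hypothetical names, and the symmetric 4-second window `max_lag` is an invented placeholder, not a value from the talk. Two fragments become fusion candidates only if they overlap in time or are separated by at most `max_lag` seconds.

```python
from dataclasses import dataclass, field


@dataclass
class Fragment:
    """Hypothetical time-stamped meaning fragment from one recognizer."""
    mode: str                 # e.g. "speech" or "gesture"
    start: float              # start time in seconds
    end: float                # end time in seconds
    score: float              # recognizer confidence
    meaning: dict = field(default_factory=dict)  # partial meaning representation


def temporally_compatible(a: Fragment, b: Fragment, max_lag: float = 4.0) -> bool:
    """True if a and b overlap in time, or if the gap between them
    (in either order) is at most max_lag seconds. max_lag is a placeholder."""
    if a.start <= b.end and b.start <= a.end:
        return True  # the two time intervals overlap
    gap = max(a.start - b.end, b.start - a.end)  # positive when disjoint
    return gap <= max_lag


def candidate_pairs(speech, gestures, max_lag=4.0):
    """All (speech, gesture) pairs that satisfy the temporal constraint."""
    return [(s, g) for s in speech for g in gestures
            if temporally_compatible(s, g, max_lag)]


s = Fragment("speech", 1.0, 2.5, 0.8)
gestures = [Fragment("gesture", 0.2, 1.2, 0.9),    # overlaps the speech
            Fragment("gesture", 10.0, 11.0, 0.7)]  # starts 7.5 s after speech ends
print(len(candidate_pairs([s], gestures)))  # 1
```

A fragment with no temporally compatible partner would fall through to unimodal interpretation, which the architecture must also support.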

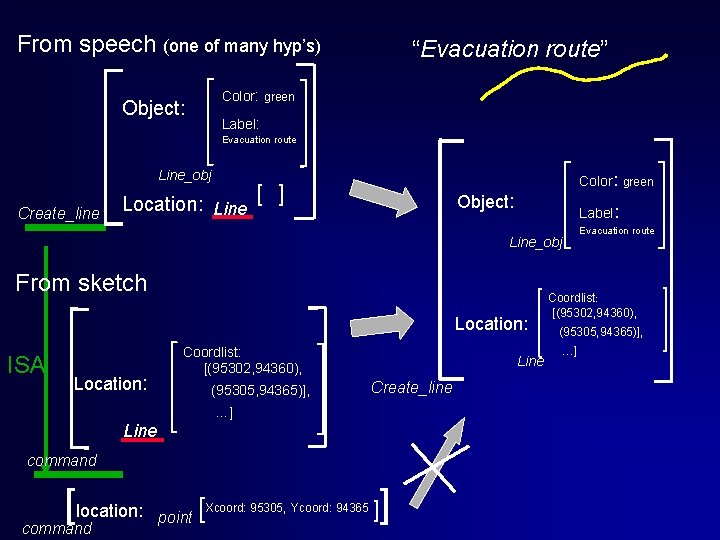

Feature structures for “Evacuation route”
• From speech (one of many hypotheses), “Evacuation route”:
  Create_line [ Object: Line_obj [ Label: Evacuation route, Color: green ], Location: Line [ ] ]
• From sketch (Line ISA Location):
  command [ Location: Line [ Coordlist: [(95302, 94360), (95305, 94365), …] ] ]
  command [ Location: point [ Xcoord: 95305, Ycoord: 94365 ] ]
• Unified result:
  Create_line [ Object: Line_obj [ Label: Evacuation route, Color: green ], Location: Line [ Coordlist: [(95302, 94360), (95305, 94365), …] ] ]
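A minimal sketch of unification over typed feature structures, assuming structures are Python dicts with a `"type"` key and that types are ordered by a hypothetical ISA table (the Point and Create_line/Command links are assumptions for illustration). Two types unify to the more specific one; atoms must match exactly. Real typed feature structure systems also support reentrancy (shared substructures), which this sketch omits. The speech fragment unifies with the line sketch but fails against the point sketch, because Line and Point are incompatible.

```python
from typing import Any

# Hypothetical ISA links (child type -> parent type), loosely following the figure.
ISA = {"Line": "Location", "Point": "Location", "Create_line": "Command"}

FAIL = object()  # sentinel for unification failure


def is_subtype(t: str, ancestor: str) -> bool:
    """Walk up the ISA chain from t looking for ancestor."""
    current = t
    while current is not None:
        if current == ancestor:
            return True
        current = ISA.get(current)
    return False


def unify_types(t1: str, t2: str):
    """Return the more specific of two compatible types, else None."""
    if is_subtype(t1, t2):
        return t1
    if is_subtype(t2, t1):
        return t2
    return None


def unify(a: Any, b: Any) -> Any:
    """Unify two feature structures (dicts) or atoms; return FAIL on conflict."""
    if isinstance(a, dict) and isinstance(b, dict):
        merged = {}
        for key in a.keys() | b.keys():
            if key not in a:
                merged[key] = b[key]
            elif key not in b:
                merged[key] = a[key]
            elif key == "type":
                t = unify_types(a["type"], b["type"])
                if t is None:
                    return FAIL
                merged["type"] = t
            else:
                value = unify(a[key], b[key])
                if value is FAIL:
                    return FAIL
                merged[key] = value
        return merged
    return a if a == b else FAIL  # atoms must match exactly


speech = {"type": "Create_line",
          "object": {"type": "Line_obj", "label": "Evacuation route", "color": "green"},
          "location": {"type": "Line"}}  # speech leaves the coordinates unspecified
# Only the two coordinates shown on the slide are used; the figure's list continues.
line_gesture = {"type": "Command",
                "location": {"type": "Line", "coordlist": [(95302, 94360), (95305, 94365)]}}
point_gesture = {"type": "Command",
                 "location": {"type": "Point", "xcoord": 95305, "ycoord": 94365}}

print(unify(speech, line_gesture)["location"])  # Line with the sketched coordinates
print(unify(speech, point_gesture) is FAIL)     # True: Line and Point do not unify
```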

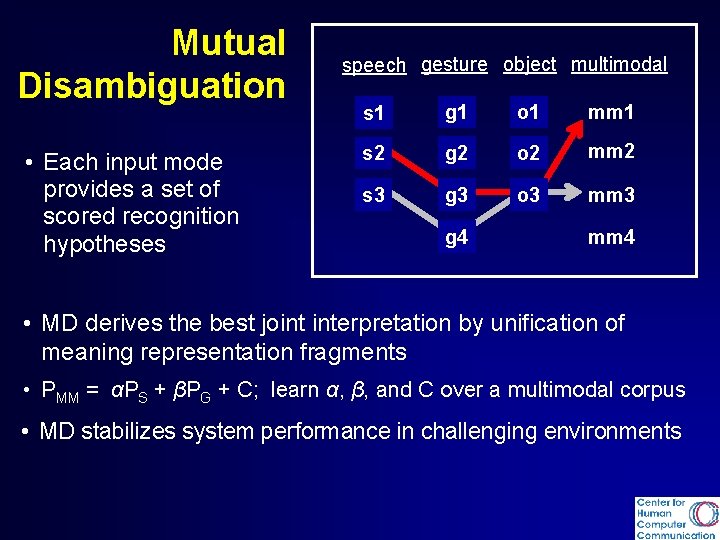

Mutual Disambiguation (MD)
• Each input mode provides a set of scored recognition hypotheses:

| speech | gesture | object | multimodal |
|---|---|---|---|
| s1 | g1 | o1 | mm1 |
| s2 | g2 | o2 | mm2 |
| s3 | g3 | o3 | mm3 |
|  | g4 |  | mm4 |

• MD derives the best joint interpretation by unification of meaning representation fragments
• $P_{MM} = \alpha P_S + \beta P_G + C$; learn $\alpha$, $\beta$, and $C$ over a multimodal corpus
• MD stabilizes system performance in challenging environments
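The joint scoring rule $P_{MM} = \alpha P_S + \beta P_G + C$ can be sketched over two n-best lists. This assumes each hypothesis is tagged with a location type and treats "compatible" as a simple type match, a stand-in for full unification; `Hyp`, `mutual_disambiguation`, and the weight values are hypothetical placeholders (the slide says $\alpha$, $\beta$, and $C$ are learned from a corpus). In the example, the speech recognizer's top hypothesis has no compatible gesture, so the second-ranked speech hypothesis wins.

```python
from dataclasses import dataclass


@dataclass
class Hyp:
    label: str   # recognized content
    kind: str    # location type the hypothesis requires (speech) or provides (gesture)
    prob: float  # recognizer score


def mutual_disambiguation(speech, gestures, alpha, beta, c):
    """Return (score, speech_hyp, gesture_hyp) for the best compatible pair,
    scoring each pair as alpha * P_S + beta * P_G + C; None if nothing fits."""
    best = None
    for s in speech:
        for g in gestures:
            if s.kind != g.kind:  # stand-in for a unification check
                continue
            score = alpha * s.prob + beta * g.prob + c
            if best is None or score > best[0]:
                best = (score, s, g)
    return best


speech = [Hyp("landing zone", "Point", 0.55),      # speech recognizer's top choice
          Hyp("evacuation route", "Line", 0.45)]
gestures = [Hyp("line", "Line", 0.70), Hyp("point", "Point", 0.20)]

# alpha, beta, and c are invented values for illustration only.
score, s, g = mutual_disambiguation(speech, gestures, alpha=0.6, beta=0.4, c=0.0)
print(s.label, g.label)  # evacuation route line
```

The gesture evidence pulls up the lower-ranked speech hypothesis, which is the sense in which each mode disambiguates the other.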

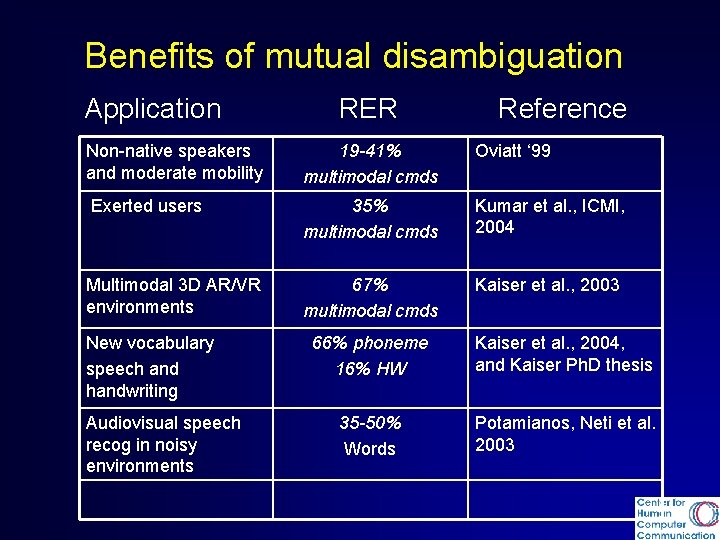

Benefits of mutual disambiguation

| Application | RER (relative error rate reduction) | Reference |
|---|---|---|
| Non-native speakers and moderate mobility | 19–41% (multimodal commands) | Oviatt ’99 |
| Exerted users | 35% (multimodal commands) | Kumar et al., ICMI 2004 |
| Multimodal 3D AR/VR environments | 67% (multimodal commands) | Kaiser et al., 2003 |
| New vocabulary, speech and handwriting | 66% (phonemes), 16% (handwriting) | Kaiser et al., 2004, and Kaiser Ph.D. thesis |
| Audiovisual speech recognition in noisy environments | 35–50% (words) | Potamianos, Neti et al., 2003 |
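A minimal sketch of the relative error rate reduction arithmetic, assuming the baseline is the error rate without mutual disambiguation. The function name and the numbers are hypothetical and do not come from the cited studies.

```python
def relative_error_reduction(baseline_errors: float, improved_errors: float) -> float:
    """Fraction of baseline errors eliminated: (baseline - improved) / baseline."""
    if baseline_errors <= 0:
        raise ValueError("baseline error rate must be positive")
    return (baseline_errors - improved_errors) / baseline_errors


# Invented numbers: 20 errors per 100 commands without MD, 13 with MD.
print(relative_error_reduction(20, 13))  # 0.35
```

A 35% RER therefore means 35% of the baseline errors are removed (here 20 become 13), not that the error rate falls by 35 percentage points.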

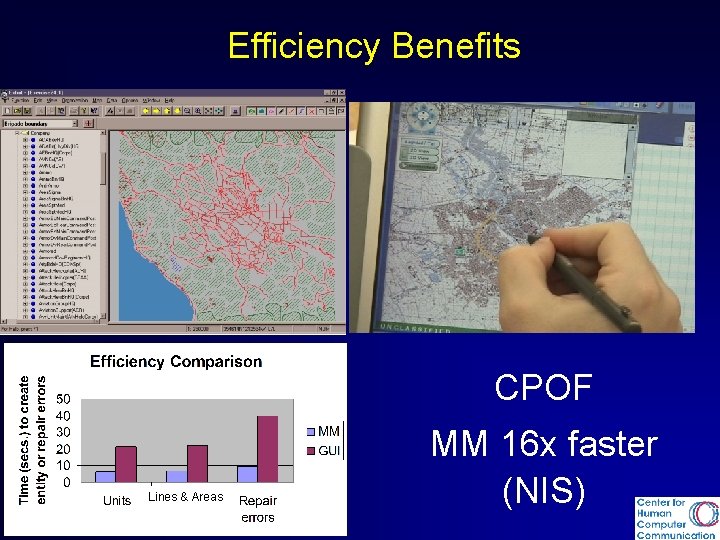

Efficiency Benefits
CPOF (Command Post of the Future), lines & areas: multimodal (MM) entry 16x faster (NIS)

Demonstration
• CMU: speech
• MIT: body tracking
• OHSU: multimodal fusion (speech + writing/sketch, 3D gesture)
• Stanford: NLP, dialogue

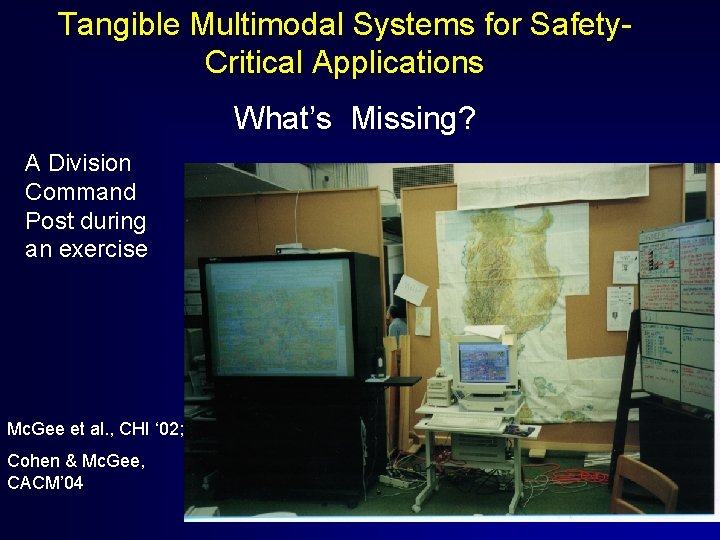

Tangible Multimodal Systems for Safety-Critical Applications
What’s Missing? A Division Command Post during an exercise (McGee et al., CHI ’02; Cohen & McGee, CACM ’04)

What they use

Many work practices rely on paper
• ATC (air traffic control): Mackay ’98
• ICU: Gorman et al., 2000

Why do they use paper?
• Already know the interface
• Poor computer interfaces
• Fail-safe; robust to power outages
• High resolution
• Large/small scale
• Cheap
• Lightweight
• Portable
• Collaboration

Clinical Data Entry
“Perhaps the single greatest challenge that has consistently confronted every clinical system developer is to engage clinicians in direct data entry” (IOM, 1997, p. 125)
“To make it simple for the practitioner to interact with the record, data entry must be almost as easy as writing.” (IOM, 1997, p. 88)

Multimodal Interaction with Paper (NIS)
Based on Anoto technology

Benefits
• Most people (including kids and seniors) know how to use a pen
• Portability (works over a cell phone)
• Ubiquity: paper is everywhere
• Collaborative: multiple simultaneous pens
• Next: use for note-taking, alone or in meetings; fuse with ongoing speech
• Many new applications, e.g., architecture, engineering, education, field data capture

Elementary Science Education
Sharon Oviatt

Quiet Interfaces that Help People Think
Sharon Oviatt
oviatt@cse.ogi.edu
http://www.cse.ogi.edu/CHCC/
