
MTracer: A Trace-oriented Monitoring Framework for Medium-scale Distributed Systems

Jingwen Zhou, Zhenbang Chen, Haibo Mi, and Ji Wang
{jwzhou, zbchen}@nudt.edu.cn
College of Computer, National University of Defense Technology

This work is supported by: iVCE, National Basic Research Program of China


Background

Target: a distributed system containing tens to hundreds of nodes, which is medium-scale.

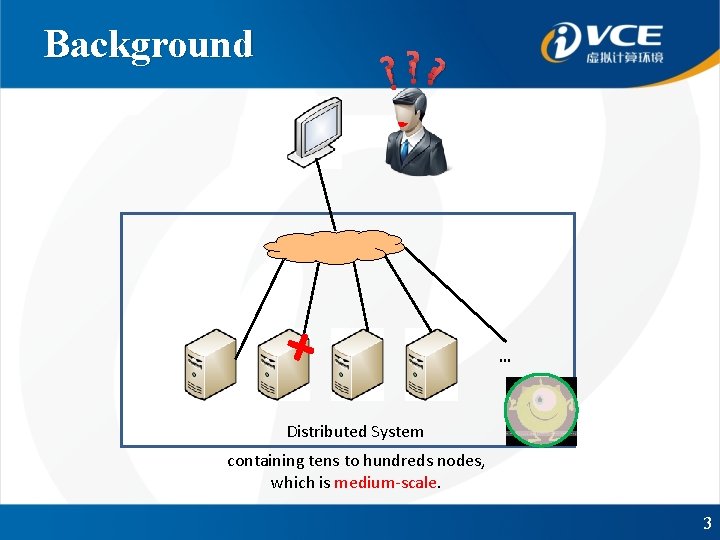

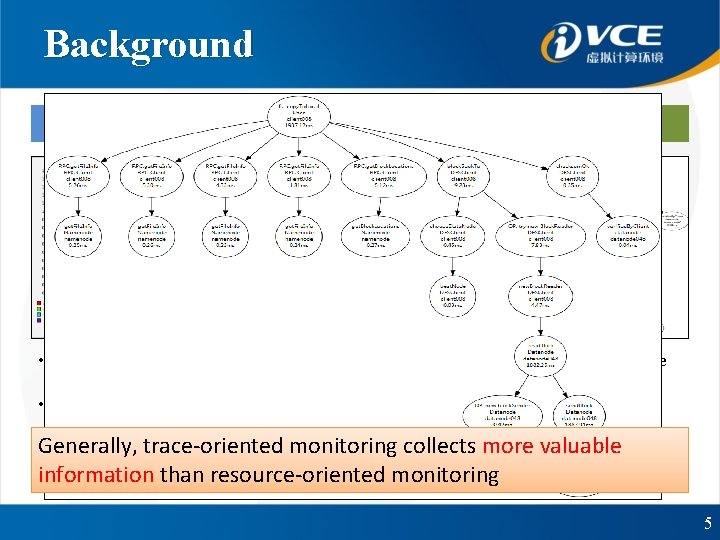


Background

Resource-oriented Monitoring
• Records resource consumption, such as CPU and memory
• Ganglia, Chukwa, …

Trace-oriented Monitoring
• Records execution paths, also called the traces of requests
• X-Trace, P-Tracer, Zipkin, …

Generally, trace-oriented monitoring collects more valuable information than resource-oriented monitoring.

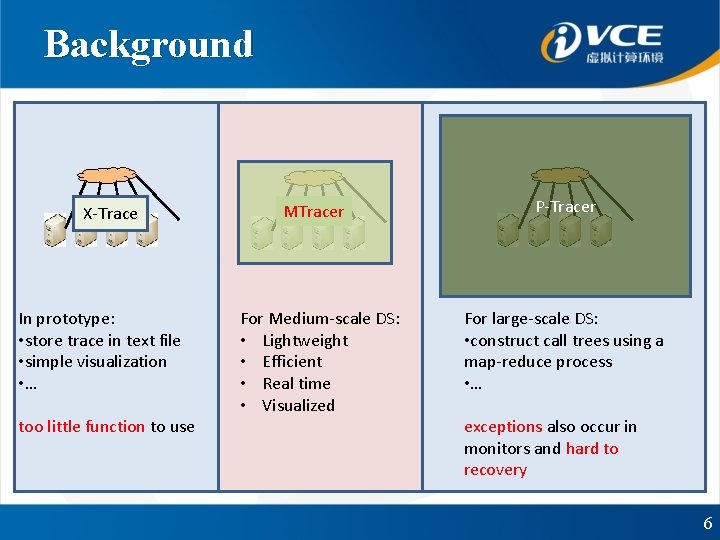

Background

X-Trace (in prototype):
• stores traces in text files
• simple visualization
• …
Too little functionality to use.

P-Tracer (for large-scale DS):
• constructs call trees using a map-reduce process
• …
Exceptions also occur in the monitors themselves and are hard to recover from.

MTracer (for medium-scale DS):
• Lightweight
• Efficient
• Real time
• Visualized

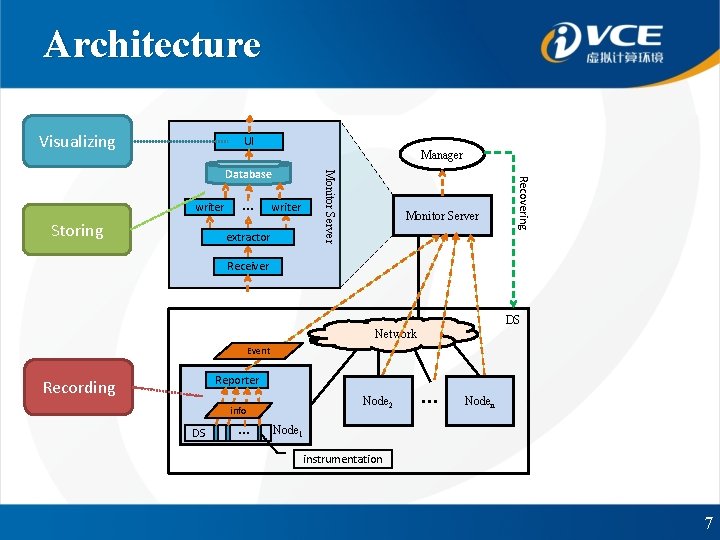

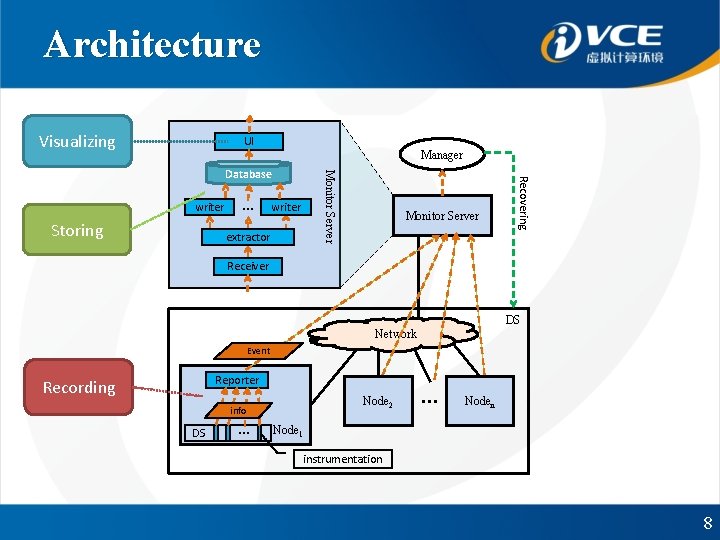

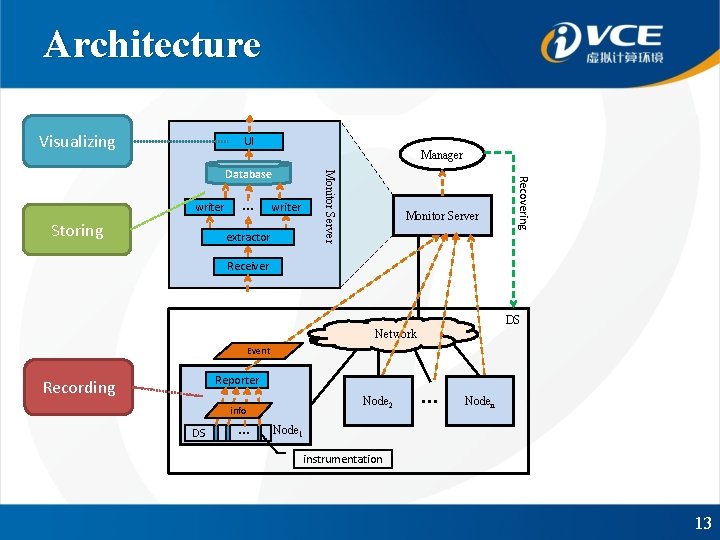

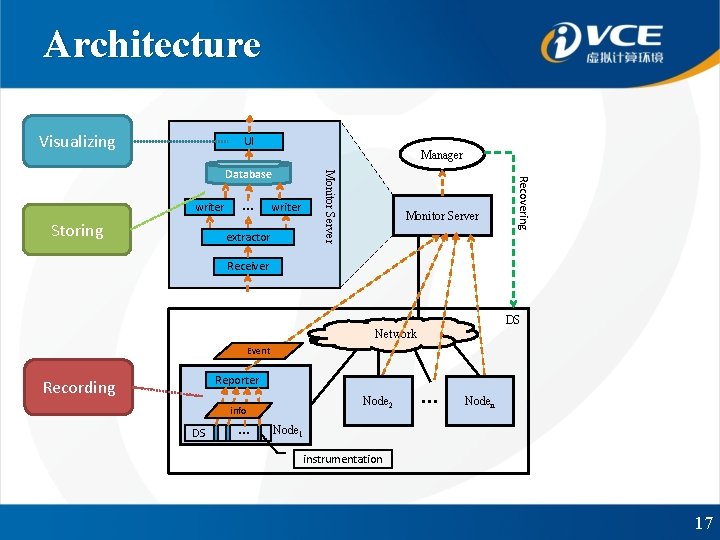

Architecture

[Figure: MTracer architecture. Stages: Recording, Recovering, Storing, Visualizing. Labeled elements: DS nodes (Node 1, Node 2, …, Node n) with instrumentation, Event, Reporter, Network, Receiver, Monitor Server, extractor, writer, Database, Manager, UI.]


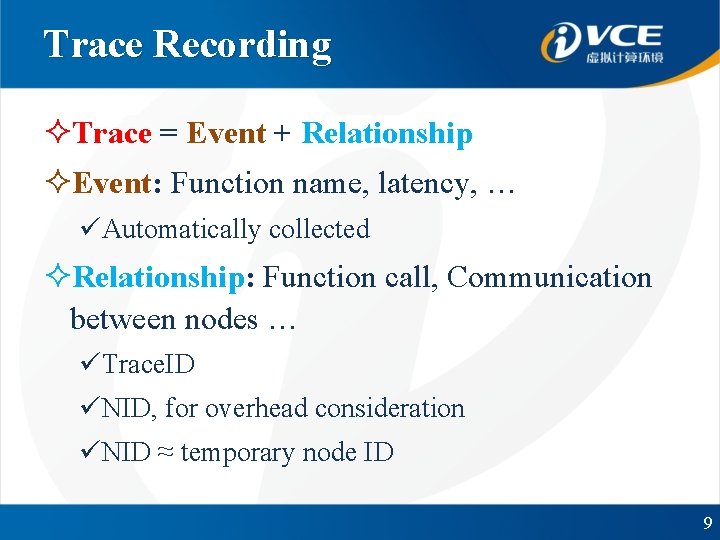

Trace Recording

• Trace = Event + Relationship
• Event: function name, latency, …
  ✓ Automatically collected
• Relationship: function call, communication between nodes, …
  ✓ TraceID
  ✓ NID, for overhead consideration
  ✓ NID ≈ temporary node ID

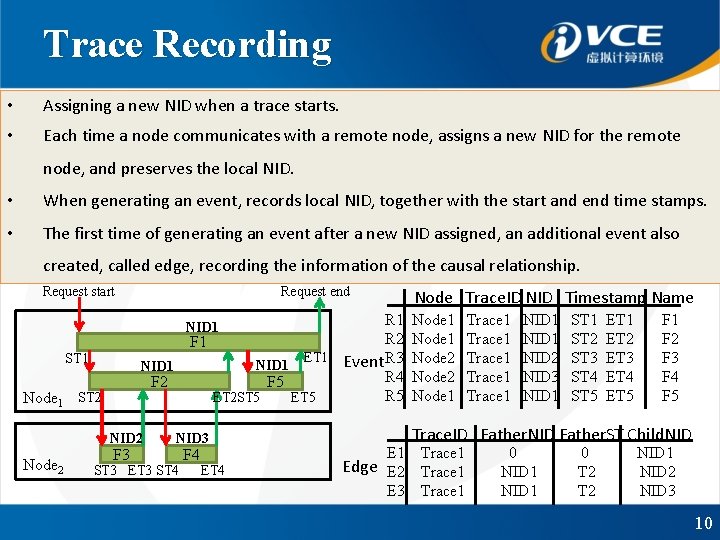

Trace Recording

• A new NID is assigned when a trace starts.
• Each time a node communicates with a remote node, it assigns a new NID to the remote node and preserves its local NID.
• When generating an event, the node records the local NID together with the start and end timestamps.
• The first time an event is generated after a new NID is assigned, an additional event, called an edge, is also created to record the causal relationship.

[Figure: an example request (Trace 1) from request start to request end, spanning Node 1 and Node 2, with functions F1 to F5, NID1 to NID3, start timestamps ST1 to ST5, and end timestamps ET1 to ET5. Event records R1 to R5 have the columns Node, TraceID, NID, Timestamp, Name. Edge records E1 to E3 have the columns TraceID, FatherNID, FatherST, ChildNID.]
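The recording rules can be illustrated with a minimal sketch. It is not MTracer's instrumentation code: the class names (`Recorder`, `Context`), the counter-based `new_nid`, and the reading of FatherST as the start time of the calling function are all assumptions made for illustration.

```python
import itertools
from dataclasses import dataclass

_nid_counter = itertools.count(1)  # hypothetical NID generator


def new_nid() -> int:
    return next(_nid_counter)


@dataclass
class Event:
    node: str
    trace_id: str
    nid: int
    start: float
    end: float
    name: str


@dataclass
class Edge:
    trace_id: str
    father_nid: int
    father_st: float
    child_nid: int


@dataclass
class Context:
    """Per-request state held on one node (hypothetical structure)."""
    trace_id: str
    nid: int
    father_nid: int = 0        # 0 marks the root of the trace
    father_st: float = 0.0     # assumed: start time of the calling function
    edge_pending: bool = True  # no edge has been emitted for this NID yet


class Recorder:
    def __init__(self, node: str):
        self.node = node
        self.records = []  # events and edges, in generation order

    def start_trace(self, trace_id: str) -> Context:
        # Rule 1: a new NID is assigned when a trace starts.
        return Context(trace_id, new_nid())

    def remote_context(self, ctx: Context, call_start: float) -> Context:
        # Rule 2: assign a new NID to the remote node; ctx keeps its own NID.
        return Context(ctx.trace_id, new_nid(),
                       father_nid=ctx.nid, father_st=call_start)

    def record(self, ctx: Context, name: str, start: float, end: float) -> None:
        # Rule 4: the first event under a new NID also produces an edge.
        if ctx.edge_pending:
            self.records.append(
                Edge(ctx.trace_id, ctx.father_nid, ctx.father_st, ctx.nid))
            ctx.edge_pending = False
        # Rule 3: the event carries the local NID and both timestamps.
        self.records.append(
            Event(self.node, ctx.trace_id, ctx.nid, start, end, name))
```

In this sketch the context returned by `remote_context` would travel with the remote call, so the callee never needs to know a global node identifier, only the temporary NID it was handed.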

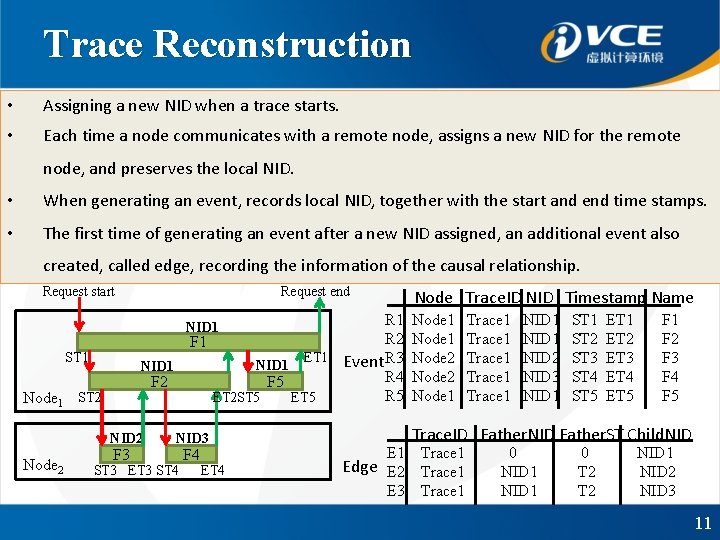


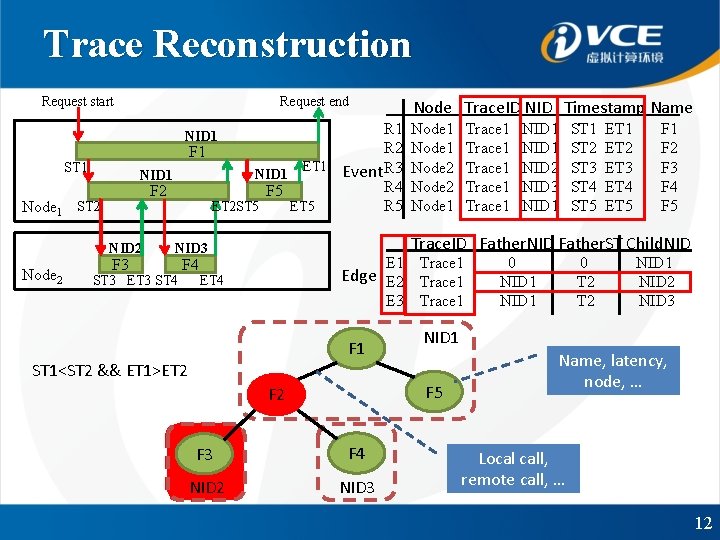

Trace Reconstruction

[Figure: the event records R1 to R5 and edge records E1 to E3 of the example request (Trace 1) are assembled into a trace tree over F1 to F5.]

• Example: F1 → F2 when ST1 < ST2 && ET1 > ET2, i.e., the interval of F2 is nested in that of F1.
• Tree nodes: name, latency, node, …
• Tree edges: local call, remote call, …
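One way to read the reconstruction step is sketched below. It assumes that events sharing an NID are nested by timestamp containment (the `ST1 < ST2 && ET1 > ET2` condition) and that an edge attaches the top-level events of its child NID to the event in the father NID whose start time equals FatherST. The function name `rebuild` and the field names are hypothetical, and the matching rule for FatherST is an assumption rather than the authors' stated algorithm.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Event:
    node: str
    trace_id: str
    nid: int
    st: float
    et: float
    name: str
    children: list = field(default_factory=list)  # (call_type, Event) pairs


@dataclass
class Edge:
    trace_id: str
    father_nid: int
    father_st: float
    child_nid: int


def rebuild(events, edges):
    """Return the root events of the trace tree for one trace."""
    by_nid = defaultdict(list)
    for ev in events:
        by_nid[ev.nid].append(ev)

    # Local calls: nest events of the same NID by timestamp containment.
    tops = {}  # NID -> events with no enclosing event in that NID
    for nid, evs in by_nid.items():
        evs.sort(key=lambda e: (e.st, -e.et))
        stack, tops[nid] = [], []
        for ev in evs:
            # Drop candidates that do not strictly enclose this event.
            while stack and not (stack[-1].st < ev.st and stack[-1].et > ev.et):
                stack.pop()
            if stack:
                stack[-1].children.append(("local", ev))
            else:
                tops[nid].append(ev)
            stack.append(ev)

    # Remote calls: follow the edges between NIDs.
    roots = []
    for edge in edges:
        heads = tops.get(edge.child_nid, [])
        if edge.father_nid == 0:  # 0 marks the start of the trace
            roots.extend(heads)
            continue
        caller = next((e for e in by_nid.get(edge.father_nid, [])
                       if e.st == edge.father_st), None)
        if caller is not None:
            for head in heads:
                caller.children.append(("remote", head))
    return roots
```

Timestamps are compared only between events that share an NID, so the sketch never compares clocks taken on different nodes.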

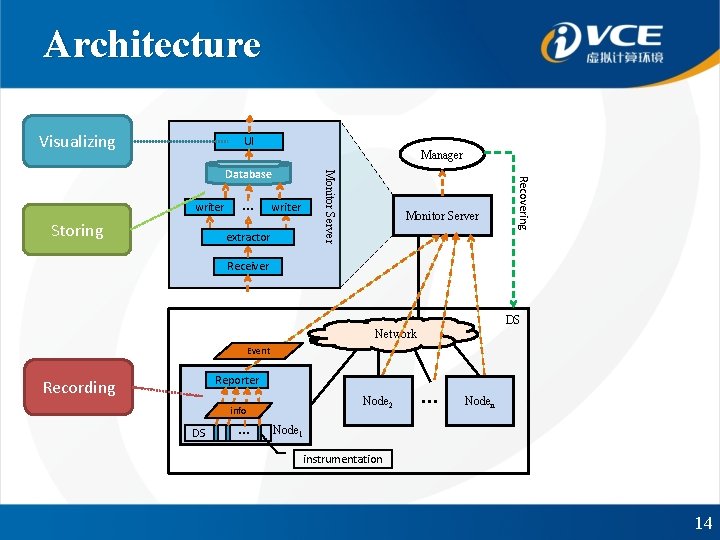

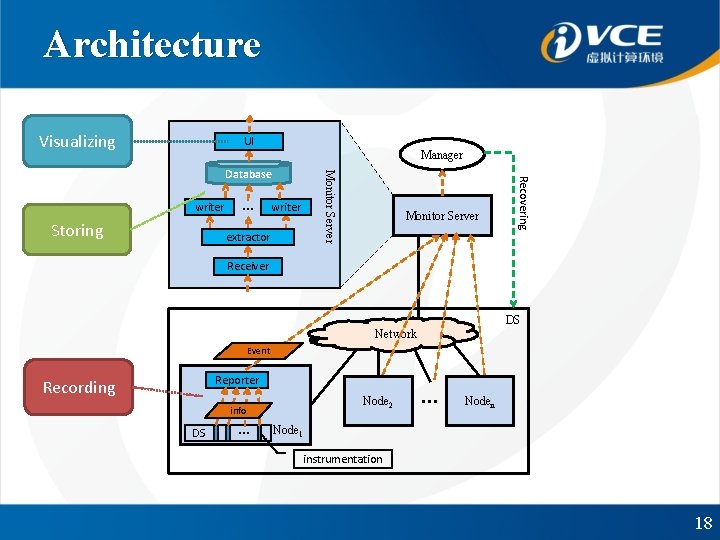



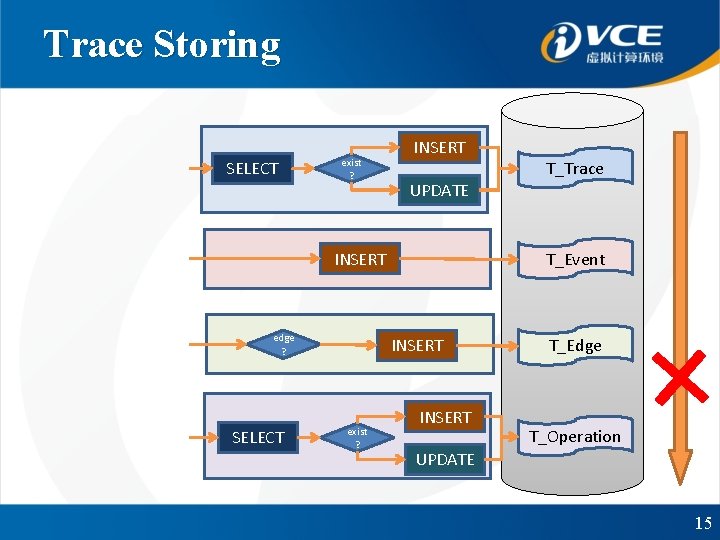

Trace Storing

[Figure: storing flowchart. Decision points: "edge?" and "exist?". SQL operations: SELECT, INSERT, UPDATE. Tables: T_Trace, T_Event, T_Edge, T_Operation.]
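The unoptimized storing flow can be approximated as follows. This is a sketch under several assumptions: the column layouts of the four tables, the idea that T_Trace and T_Operation hold per-trace and per-function summaries, and the use of SQLite are all hypothetical. Only the table names and the SELECT, "exist?", INSERT/UPDATE pattern come from the slide.

```python
import sqlite3

# Hypothetical schema; only the table names appear on the slide.
SCHEMA = """
CREATE TABLE T_Trace (trace_id TEXT PRIMARY KEY, events INTEGER,
                      first_st REAL, last_et REAL);
CREATE TABLE T_Event (trace_id TEXT, nid INTEGER, node TEXT,
                      name TEXT, st REAL, et REAL);
CREATE TABLE T_Edge (trace_id TEXT, father_nid INTEGER,
                     father_st REAL, child_nid INTEGER);
CREATE TABLE T_Operation (name TEXT PRIMARY KEY, calls INTEGER,
                          total_latency REAL);
"""


def open_db() -> sqlite3.Connection:
    db = sqlite3.connect(":memory:")
    db.executescript(SCHEMA)
    return db


def store(db: sqlite3.Connection, rec: dict) -> None:
    """Store one received record, issuing one statement per step."""
    if rec["kind"] == "edge":  # "edge?" branch: a plain INSERT
        db.execute("INSERT INTO T_Edge VALUES (?, ?, ?, ?)",
                   (rec["trace_id"], rec["father_nid"],
                    rec["father_st"], rec["child_nid"]))
        return

    db.execute("INSERT INTO T_Event VALUES (?, ?, ?, ?, ?, ?)",
               (rec["trace_id"], rec["nid"], rec["node"],
                rec["name"], rec["st"], rec["et"]))

    # "exist?" branch for the trace summary: SELECT, then INSERT or UPDATE.
    found = db.execute("SELECT 1 FROM T_Trace WHERE trace_id = ?",
                       (rec["trace_id"],)).fetchone()
    if found is None:
        db.execute("INSERT INTO T_Trace VALUES (?, 1, ?, ?)",
                   (rec["trace_id"], rec["st"], rec["et"]))
    else:
        db.execute("UPDATE T_Trace SET events = events + 1, "
                   "first_st = MIN(first_st, ?), last_et = MAX(last_et, ?) "
                   "WHERE trace_id = ?",
                   (rec["st"], rec["et"], rec["trace_id"]))

    # Same pattern for the per-function summary.
    latency = rec["et"] - rec["st"]
    found = db.execute("SELECT 1 FROM T_Operation WHERE name = ?",
                       (rec["name"],)).fetchone()
    if found is None:
        db.execute("INSERT INTO T_Operation VALUES (?, 1, ?)",
                   (rec["name"], latency))
    else:
        db.execute("UPDATE T_Operation SET calls = calls + 1, "
                   "total_latency = total_latency + ? WHERE name = ?",
                   (latency, rec["name"]))
```

Each event costs several round trips to the database in this form, which is the cost that batching and in-memory updating aim to reduce.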

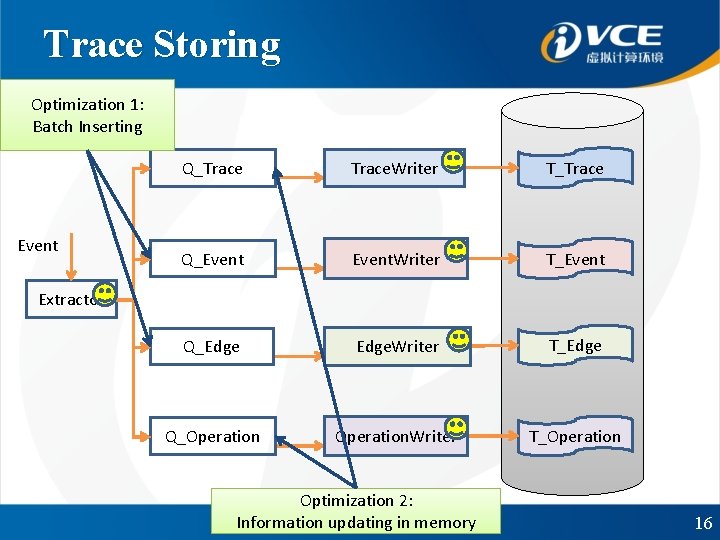

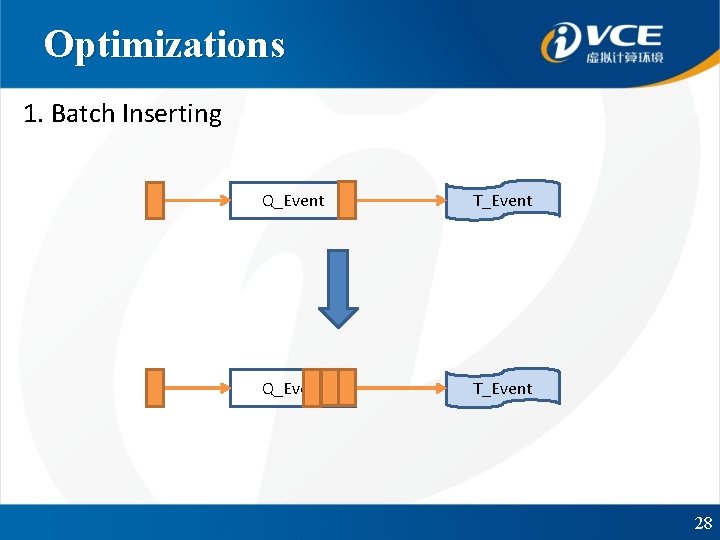

Trace Storing

• Optimization 1: Batch inserting
• Optimization 2: Information updating in memory

[Figure: the Extractor distributes incoming events to per-table queues, each served by a Writer: Q_Trace → Writer → T_Trace, Q_Event → Writer → T_Event, Q_Edge → Writer → T_Edge, Q_Operation → Writer → T_Operation.]
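The two optimizations can be sketched as follows. This is an illustrative, single-threaded approximation: `BatchWriter`, `TraceCache`, the batch size, and the summary columns are hypothetical, and SQLite stands in for whatever database the monitor server uses. In the real system each writer presumably runs concurrently, which this sketch does not model.

```python
import sqlite3
from collections import deque


class BatchWriter:
    """Optimization 1: queue rows and insert them in batches."""

    def __init__(self, db: sqlite3.Connection, insert_sql: str,
                 batch_size: int = 1000):
        self.db = db
        self.insert_sql = insert_sql
        self.batch_size = batch_size
        self.queue = deque()

    def put(self, row: tuple) -> None:
        self.queue.append(row)
        if len(self.queue) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        if not self.queue:
            return
        rows = list(self.queue)
        self.queue.clear()
        with self.db:  # one transaction per batch
            self.db.executemany(self.insert_sql, rows)


class TraceCache:
    """Optimization 2: update per-trace information in memory and
    hand it to the writer once, instead of SELECT + UPDATE per event."""

    def __init__(self, writer: BatchWriter):
        self.writer = writer
        self.info = {}  # trace_id -> (event count, first start, last end)

    def update(self, trace_id: str, st: float, et: float) -> None:
        count, first, last = self.info.get(trace_id, (0, st, et))
        self.info[trace_id] = (count + 1, min(first, st), max(last, et))

    def finish(self, trace_id: str) -> None:
        count, first, last = self.info.pop(trace_id)
        self.writer.put((trace_id, count, first, last))
```

A caller would create one `BatchWriter` per table and call `flush()` on shutdown or on a timer so that a partially filled batch is not held back indefinitely.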



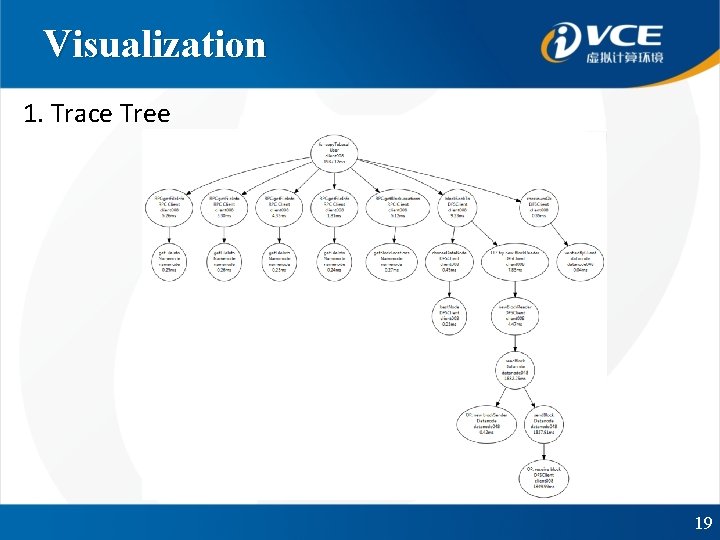

Visualization

1. Trace Tree

Visualization

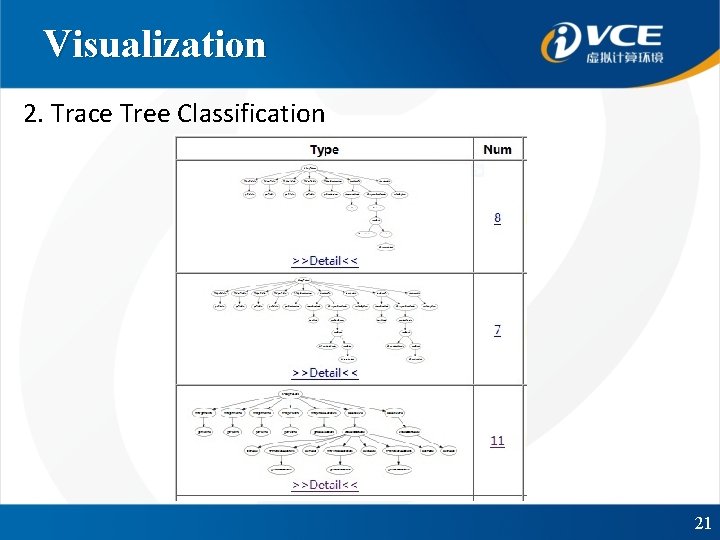

Visualization

2. Trace Tree Classification

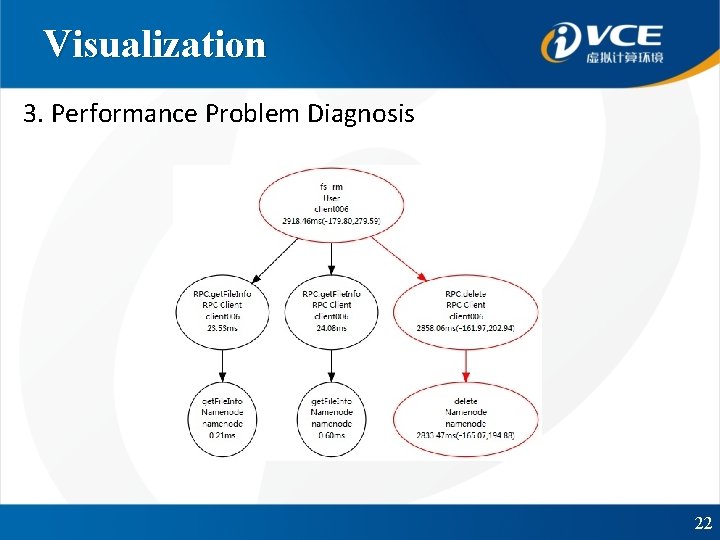

Visualization

3. Performance Problem Diagnosis

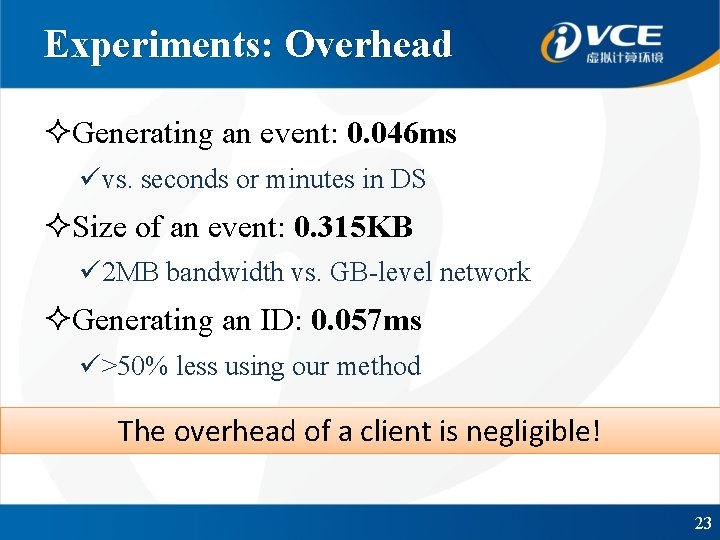

Experiments: Overhead

• Generating an event: 0.046 ms
  ✓ vs. seconds or minutes in DS
• Size of an event: 0.315 KB
  ✓ 2 MB bandwidth vs. GB-level network
• Generating an ID: 0.057 ms
  ✓ >50% less using our method

The overhead on a client is negligible!

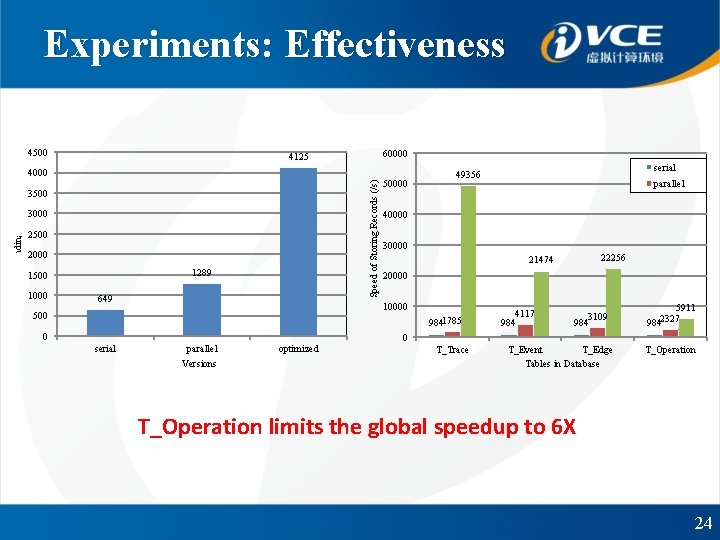

Experiments: Effectiveness

[Figure: bar charts of the speed of sending events (/s) and the speed of storing records (/s), comparing the serial, parallel, and optimized versions, with per-table results for T_Trace, T_Event, T_Edge, and T_Operation.]

T_Operation limits the global speedup to 6X.

Experiments: Usability

• HDFS: RPC and data accessing processes
• 50 clients + (50 + 1) HDFS
• 14 faults: functional + performance problems
• Easy handling, correct visualization
• Trace classifying + diagnosis algorithm
• > 200 nodes

Conclusion

• MTracer: a lightweight, efficient, real-time monitor for medium-scale DS, with a visualized frontend.
• Future work
  ✓ An easier way to instrument systems
  ✓ A dataset for trace-based monitoring research
  ✓ Fault detection
  ✓ …


Optimizations

1. Batch Inserting

[Figure: Q_Event → T_Event]

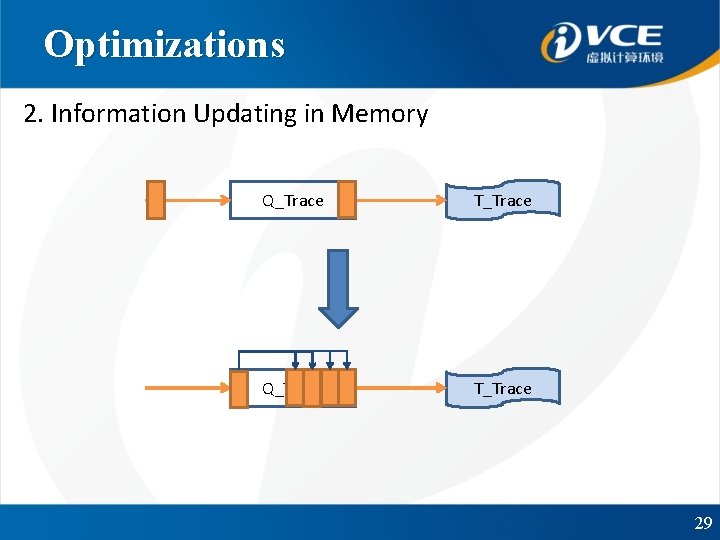

Optimizations

2. Information Updating in Memory

[Figure: Q_Trace → T_Trace]

- Slides: 29