
MSc Methods part II: Bayesian analysis
Dr. Mathias (Mat) Disney, UCL Geography
Office: 113, Pearson Building
Tel: 7670 0592
Email: mdisney@ucl.geog.ac.uk
www.geog.ucl.ac.uk/~mdisney

Lecture outline
• Intro to Bayes’ Theorem
– Science and scientific thinking
– Probability & Bayes’ Theorem: why is it important?
– Frequentist v Bayesian
– Background, rationale
– Methods: MCMC …
– Advantages / disadvantages
• Applications:
– Parameter estimation, uncertainty
– Practical: basic Bayesian estimation

Reading and browsing
• Gauch, H., 2002, Scientific Method in Practice, CUP.
• Sivia, D. S. with Skilling, J. (2008) Data Analysis, 2nd ed., OUP, Oxford.
• Monteith and Unsworth,
• Computational Numerical Methods in C (XXXX)
• Flake, W. G. (2000) Computational Beauty of Nature, MIT Press.
• Gershenfeld, N. (2002) The Nature of Mathematical Modelling, CUP.
• Mathematical texts
– Blah
• Kalman filters
– Welch and Bishop
– Maybeck
• Papers

So how do we do science?
• Carry out experiments?
• Collect observations?
• Test hypotheses (models)?
• Generate “understanding”?
• Objective knowledge?
• Induction? Deduction?

Induction and deduction
• Deduction
– Inference, by reasoning, from general to particular
– E.g. Premises: i) every mammal has a heart; ii) every horse is a mammal.
– Conclusion: Every horse has a heart.
– The argument is valid if the truth of the premises guarantees the truth of the conclusion, and invalid otherwise.
– Conclusion is either true or false

Induction and deduction
• Induction
– Process of inferring general principles from observation of particular cases
– E.g. Premise: every horse that has ever been observed has a heart
– Conclusion: Every horse has a heart.
– Conclusion goes beyond information present, even implicitly, in premises
– Conclusions have a degree of strength (from weak to near certain).

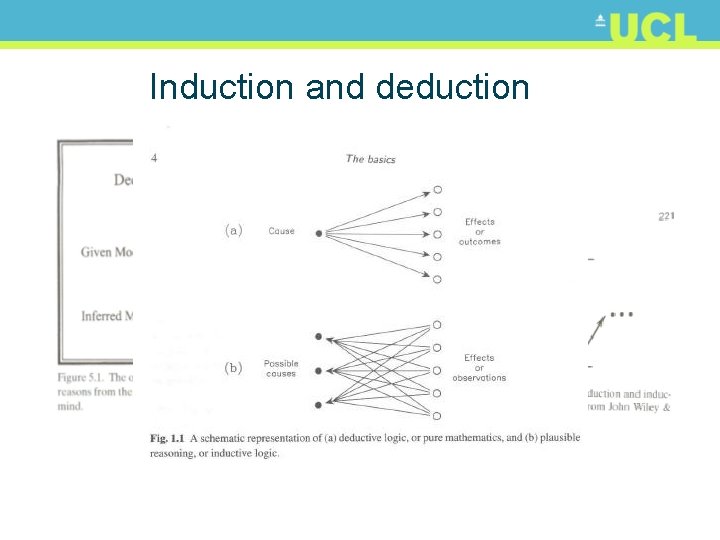

Induction and deduction

Aside: sound argument v fallacy
• If plants lack nitrogen, they become yellowish
– The plants are yellowish, therefore they lack N
– The plants do not lack N, so they do not become yellowish
– The plants lack N, so they become yellowish
– The plants are not yellowish, so they do not lack N
• Affirming the antecedent: p → q; p ∴ q ✓
• Denying the consequent: p → q; ~q ∴ ~p ✓
• Affirming the consequent: p → q; q ∴ p X
• Denying the antecedent: p → q; ~p ∴ ~q X
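The four argument forms can be checked mechanically with a truth table. The sketch below is illustrative only: the helper names `implies` and `is_valid` are hypothetical, p stands for "the plants lack N" and q for "the plants are yellowish". A form counts as valid when no assignment of truth values makes every premise true and the conclusion false.

```python
from itertools import product

def implies(p, q):
    """Material implication: 'p -> q' is false only when p is true and q is false."""
    return (not p) or q

def is_valid(premises, conclusion):
    """Valid if no truth assignment makes all premises true and the conclusion false."""
    for p, q in product([True, False], repeat=2):
        if all(prem(p, q) for prem in premises) and not conclusion(p, q):
            return False
    return True

# The shared first premise: "if p then q"
rule = lambda p, q: implies(p, q)

forms = {
    "affirming the antecedent": is_valid([rule, lambda p, q: p], lambda p, q: q),
    "denying the consequent": is_valid([rule, lambda p, q: not q], lambda p, q: not p),
    "affirming the consequent": is_valid([rule, lambda p, q: q], lambda p, q: p),
    "denying the antecedent": is_valid([rule, lambda p, q: not p], lambda p, q: not q),
}

for name, valid in forms.items():
    print(f"{name}: {'valid' if valid else 'fallacy'}")
```

The counterexample for both fallacies is the same case: p false and q true, i.e. plants that are yellowish for some reason other than lack of nitrogen.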

Aside: sound argument v fallacy
• Fallacies can be hard to spot in longer, more detailed arguments:
– Fallacies of composition; ambiguity; false dilemmas; circular reasoning; genetic fallacies (ad hominem)
• Gauch (2003) notes:
– For an argument to be accepted by any audience as proof, the audience MUST accept the premises and the validity
– That is: part of the responsibility for rational dialogue falls to the audience
– If the audience's data are lacking and / or its logic is weak, then a valid argument may be incorrectly rejected (or vice versa)

Gauch (2006): “Seven pillars of Science”
1. Realism: physical world is real;
2. Presuppositions: world is orderly and comprehensible;
3. Evidence: science demands evidence;
4. Logic: science uses standard, settled logic to connect evidence and assumptions with conclusions;
5. Limits: many matters cannot usefully be examined by science;
6. Universality: science is public and inclusive;
7. Worldview: science must contribute to a meaningful worldview.

What’s this got to do with methods?
• Fundamental laws of probability can be derived from statements of logic
• BUT there are different ways to apply them
• Two key ways
– Frequentist
– Bayesian, after Rev. Thomas Bayes (1702-1761)

Bayes: see Gauch (2003) ch 5
• Informally, the Bayesian Q is:
– “What is the probability (P) that a hypothesis (H) is true, given the data and any prior knowledge?”
– Weighs hypotheses (different models) in the light of data
• The frequentist Q is:
– “How reliable is an inference procedure, by virtue of not rejecting a true hypothesis or accepting a false hypothesis?”
– Weighs procedures (different sets of data) in the light of hypothesis

Bayes: see Gauch (2003) ch 5
• Prior knowledge?
– What is known beyond the particular experiment at hand, which may be substantial or negligible
• We all have priors: assumptions, experience, other pieces of evidence
• Bayesian approach explicitly requires you to assign a probability to your prior (somehow)
• Bayesian view: probability as degree of belief rather than a frequency of occurrence (in the long run…)

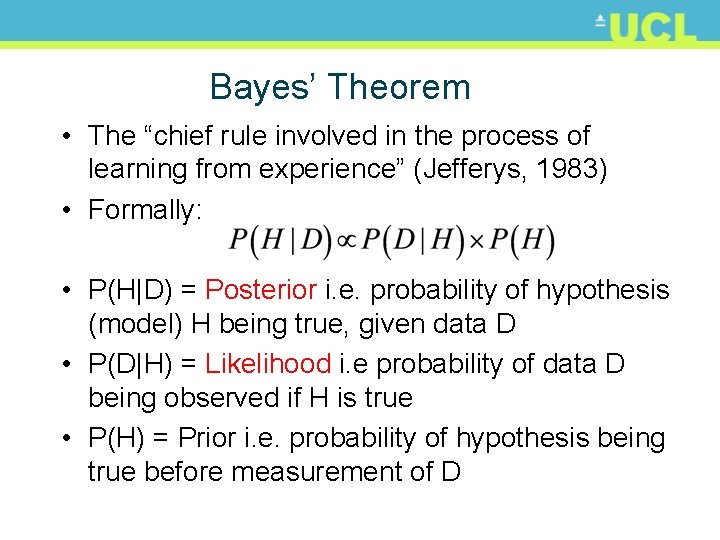

Bayes’ Theorem
• The “chief rule involved in the process of learning from experience” (Jeffreys, 1983)
• Formally: P(H|D) ∝ P(D|H) × P(H)
• P(H|D) = Posterior, i.e. probability of hypothesis (model) H being true, given data D
• P(D|H) = Likelihood, i.e. probability of data D being observed if H is true
• P(H) = Prior, i.e. probability of hypothesis being true before measurement of D

Bayes’ Theorem
• Importance? P(H|D) appears on the left of BT
• It solves the inverse (inductive) problem: probability of a hypothesis given some data
• This is how we do science in practice!
• We don’t have access to infinite repetitions of experiments (the ‘long run frequency’ view)

Bayes’ Theorem
• I is ‘background information’, as there is ‘no such thing as absolute probability’ (see S & S p 5)
• P(rain today) will depend on clouds this morning, whether we saw the forecast etc.
– I is usually left out, but ….
• Power of Bayes’ Theorem
– Relates the quantity of interest, i.e. P of H being true given D, to that which we might estimate in practice, i.e. P of observing D, given H is correct

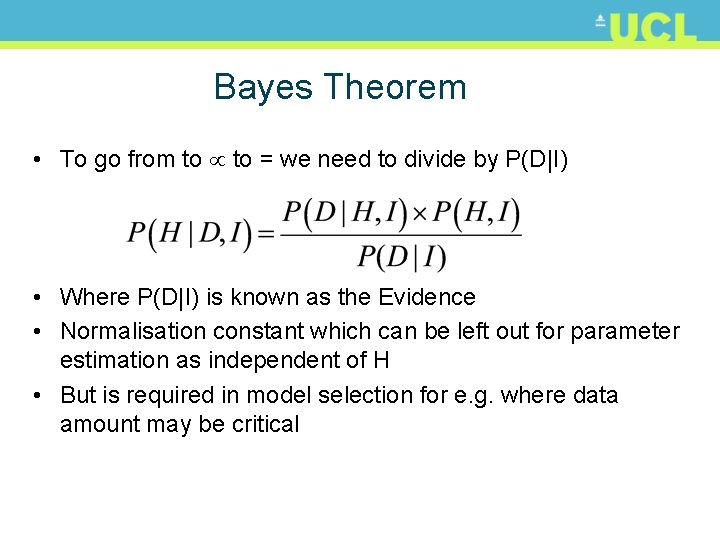

Bayes’ Theorem
• To go from ∝ to = we need to divide by P(D|I)
• P(D|I) is known as the Evidence
• It is a normalisation constant, which can be left out for parameter estimation as it is independent of H
• But it is required in model selection, for example, where data amount may be critical
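The point that the normalisation constant can be dropped for parameter estimation can be illustrated with a small grid calculation. This is a sketch under stated assumptions, not part of the lecture: the data (7 heads in 10 coin tosses), the flat prior and the grid of candidate biases are all hypothetical choices, and the binomial coefficient is omitted because it does not depend on the hypothesis.

```python
# Hypothetical data: 7 heads in 10 tosses of a coin with unknown bias h.
heads, tosses = 7, 10

# Candidate hypotheses H: h = 0.00, 0.01, ..., 1.00
grid = [i / 100 for i in range(101)]

# Flat prior P(H|I): an assumption made for illustration.
prior = [1.0 for _ in grid]

# Likelihood P(D|H,I), up to a constant that does not depend on h.
likelihood = [h**heads * (1 - h)**(tosses - heads) for h in grid]

# Unnormalised posterior: P(H|D,I) ∝ P(D|H,I) × P(H|I)
unnormalised = [l * p for l, p in zip(likelihood, prior)]

# Normalising sum over all hypotheses on the grid (plays the role of the Evidence).
evidence = sum(unnormalised)
posterior = [u / evidence for u in unnormalised]

# The best estimate of h is the same with or without normalisation.
best_unnormalised = grid[unnormalised.index(max(unnormalised))]
best_posterior = grid[posterior.index(max(posterior))]
print(best_unnormalised, best_posterior)
```

Dividing by the normalising sum rescales every value by the same factor, so the location of the maximum does not move. The sum only matters when posteriors from different models have to be compared on a common scale.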

Bayes Theorem & marginalisation

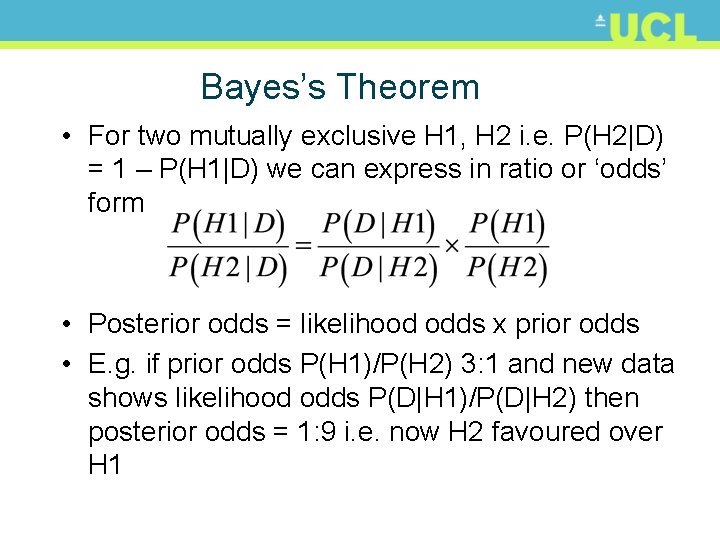

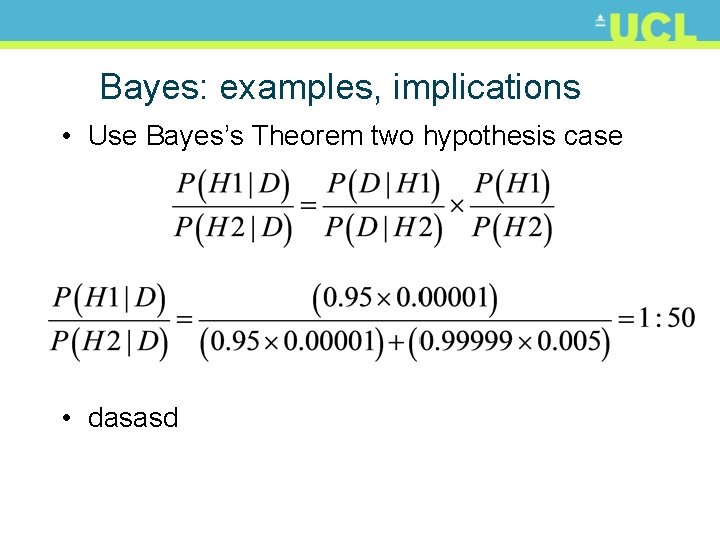

Bayes’ Theorem
• For two mutually exclusive (and exhaustive) hypotheses H1, H2, i.e. P(H2|D) = 1 – P(H1|D), we can express the theorem in ratio or ‘odds’ form
• Posterior odds = likelihood odds × prior odds
• E.g. if prior odds P(H1)/P(H2) are 3:1 and new data show likelihood odds P(D|H1)/P(D|H2) of 1:27 (the value implied by the stated prior and posterior odds), then posterior odds = 1:9, i.e. H2 is now favoured over H1
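The odds form can be worked through with exact fractions. In this sketch the function names are hypothetical, and the likelihood odds of 1:27 are an assumption: they are the value that takes prior odds of 3:1 to posterior odds of 1:9.

```python
from fractions import Fraction

def posterior_odds(prior_odds, likelihood_odds):
    """Odds form of Bayes' Theorem: posterior odds = likelihood odds x prior odds."""
    return likelihood_odds * prior_odds

def odds_to_probability(odds):
    """Convert odds for H1 against H2 into P(H1), assuming only H1 and H2 are possible."""
    return odds / (1 + odds)

prior = Fraction(3, 1)         # P(H1)/P(H2) = 3:1
likelihood = Fraction(1, 27)   # P(D|H1)/P(D|H2) = 1:27 (assumed, see lead-in)

post = posterior_odds(prior, likelihood)
p_h1 = odds_to_probability(post)
print(post, p_h1)
```

Posterior odds of 1:9 correspond to P(H1|D) = 1/10 and P(H2|D) = 9/10, so data that favour H2 strongly enough can overturn a prior that favoured H1.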

Bayes: examples, implications
• Ignored priors & the rare diseases
• Disease affects 1 in 100,000, at random
• If you have it, the test will correctly say so with P = 0.95
• Test gives incorrect positive diagnosis (false positive) with P = 0.005
• If the test is positive, what is P that the diagnosis is correct?

Bayes: examples, implications
• Use the two-hypothesis case of Bayes’ Theorem
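A minimal sketch of the two-hypothesis calculation for the rare-disease question, using the numbers given (prevalence 1 in 100,000, true positive rate 0.95, false positive rate 0.005). The variable names are hypothetical, and H1 = "has the disease", H2 = "does not have the disease" is the assumed reading of the two hypotheses.

```python
# Prior: P(H1), the probability of having the disease before testing.
p_disease = 1 / 100_000

# Likelihoods of a positive test under each hypothesis.
p_pos_given_disease = 0.95    # test correctly detects the disease
p_pos_given_healthy = 0.005   # false positive

# Evidence: total probability of a positive test, summed over both hypotheses.
evidence = (p_pos_given_disease * p_disease
            + p_pos_given_healthy * (1 - p_disease))

# Posterior: P(H1 | positive test) from Bayes' Theorem.
p_disease_given_pos = p_pos_given_disease * p_disease / evidence
print(f"{p_disease_given_pos:.4f}")
```

The posterior is about 0.0019, i.e. roughly a 0.2% chance that a positive diagnosis is correct. The disease is so rare that false positives from the healthy majority vastly outnumber true positives, which is what is lost when the prior is ignored.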
