
MS 101: Algorithms • Instructor: Neelima Gupta • ngupta@cs.du.ac.in

Table of Contents • Asymptotic Notations

Growth Functions • Big O Notation • In general, a function $f(n)$ is $O(g(n))$ if there exist positive constants $c$ and $n_0$ such that $f(n) \le c\,g(n)$ for all $n \ge n_0$. • Formally: $O(g(n)) = \{ f(n) : \exists\, c > 0,\ n_0 > 0 \text{ such that } f(n) \le c\,g(n)\ \forall\, n \ge n_0 \}$ • Intuitively, it means $f(n)$ grows no faster than $g(n)$. • Examples: – n^2, n^2 – n^3, n^3 – n^2 – n
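To make the role of the witnesses $c$ and $n_0$ concrete, here is a small Python sketch. The function name `holds_big_o_witness` and the example functions are hypothetical, chosen only for illustration. It checks a proposed pair $(c, n_0)$ over a finite range, so it can expose a wrong witness but it is not a proof that $f = O(g)$.

```python
def holds_big_o_witness(f, g, c, n0, n_max=10_000):
    """Check f(n) <= c * g(n) for every integer n in [n0, n_max].

    A finite check like this can only refute a claimed witness (c, n0);
    proving f = O(g) needs an argument that covers all n >= n0.
    """
    return all(f(n) <= c * g(n) for n in range(n0, n_max + 1))


# Hypothetical example: f(n) = 3n^2 + 10n is O(n^2) with c = 4, n0 = 10,
# because 3n^2 + 10n <= 4n^2 exactly when 10n <= n^2, i.e. when n >= 10.
f = lambda n: 3 * n**2 + 10 * n
g = lambda n: n**2

print(holds_big_o_witness(f, g, c=4, n0=10))  # True
print(holds_big_o_witness(f, g, c=4, n0=1))   # False: fails for n < 10
print(holds_big_o_witness(f, g, c=3, n0=1))   # False: 3n^2 + 10n > 3n^2 always
```

The same $f$ is $O(n^2)$ with many different witness pairs; a larger $c$ generally allows a smaller $n_0$.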

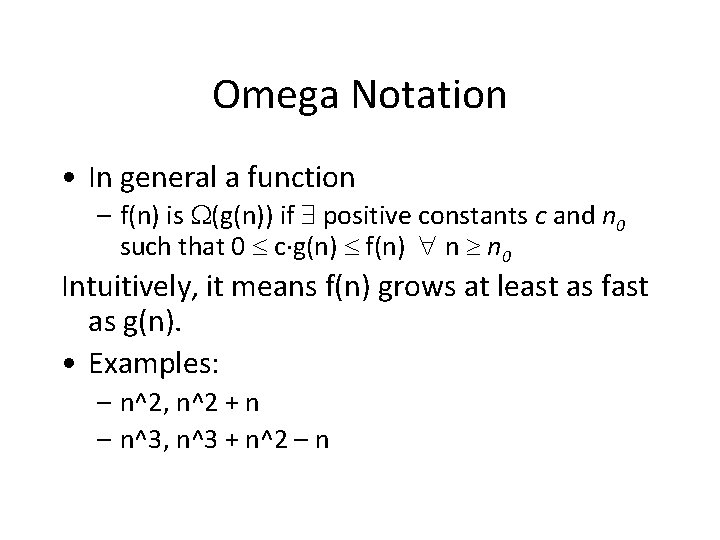

Omega Notation • In general, a function $f(n)$ is $\Omega(g(n))$ if there exist positive constants $c$ and $n_0$ such that $0 \le c\,g(n) \le f(n)$ for all $n \ge n_0$. • Intuitively, it means $f(n)$ grows at least as fast as $g(n)$. • Examples: – n^2, n^2 + n – n^3, n^3 + n^2 – n

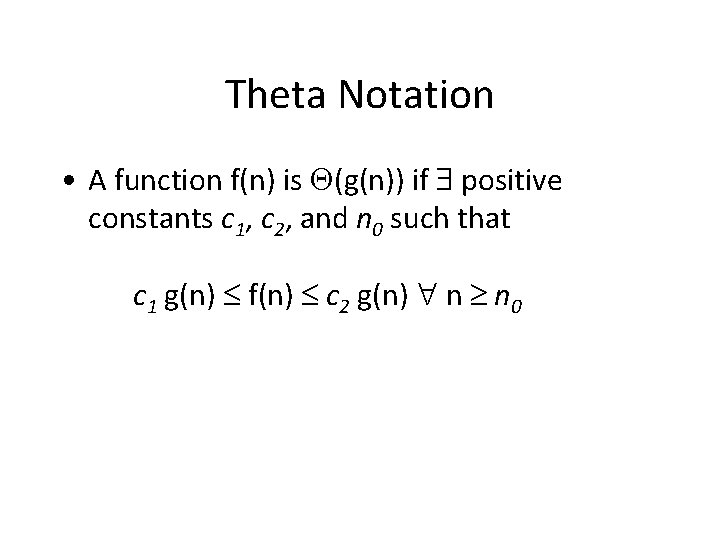

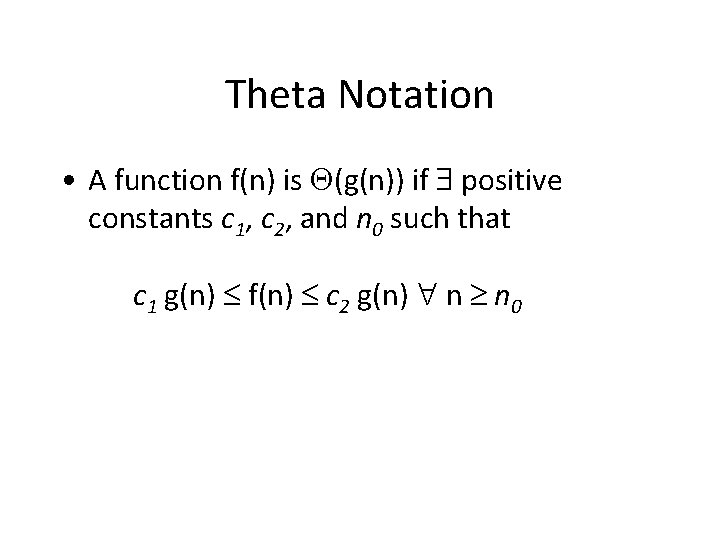

Theta Notation • A function $f(n)$ is $\Theta(g(n))$ if there exist positive constants $c_1$, $c_2$, and $n_0$ such that $c_1\,g(n) \le f(n) \le c_2\,g(n)$ for all $n \ge n_0$.
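A worked instance of the definition may help. The function $f(n) = \tfrac{1}{2}n^2 - 3n$ is a standard textbook example, chosen here for illustration and not taken from these slides. Dividing the required inequalities by $n^2 > 0$ reduces the problem to bounding $\tfrac{1}{2} - \tfrac{3}{n}$:

```latex
\begin{aligned}
c_1 n^2 \le \tfrac{1}{2}n^2 - 3n \le c_2 n^2
  &\iff c_1 \le \tfrac{1}{2} - \tfrac{3}{n} \le c_2 \qquad (\text{divide by } n^2 > 0) \\
\text{right inequality: } & \tfrac{1}{2} - \tfrac{3}{n} \le \tfrac{1}{2} = c_2 \quad \text{for all } n \ge 1 \\
\text{left inequality: } & \tfrac{1}{2} - \tfrac{3}{n} \ge \tfrac{1}{2} - \tfrac{3}{7} = \tfrac{1}{14} = c_1 \quad \text{for all } n \ge 7
\end{aligned}
```

So $c_1 = \tfrac{1}{14}$, $c_2 = \tfrac{1}{2}$ and $n_0 = 7$ witness $\tfrac{1}{2}n^2 - 3n = \Theta(n^2)$.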

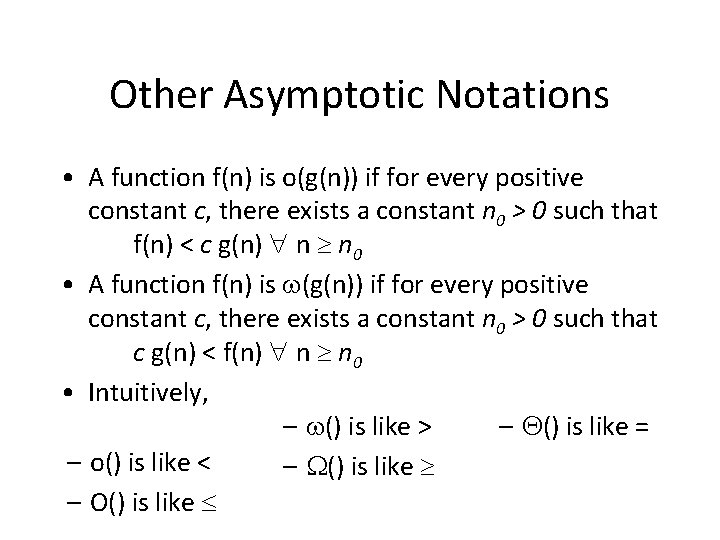

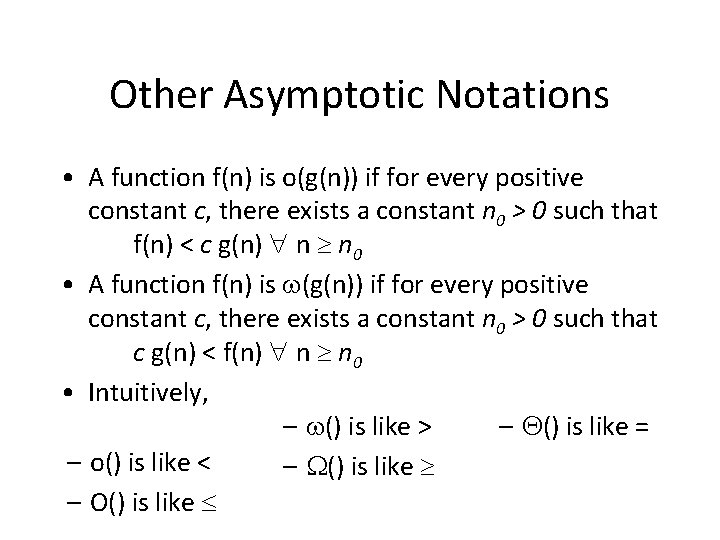

Other Asymptotic Notations • A function $f(n)$ is $o(g(n))$ if for every positive constant $c$ there exists a constant $n_0 > 0$ such that $f(n) < c\,g(n)$ for all $n \ge n_0$. • A function $f(n)$ is $\omega(g(n))$ if for every positive constant $c$ there exists a constant $n_0 > 0$ such that $c\,g(n) < f(n)$ for all $n \ge n_0$. • Intuitively, – $\omega()$ is like $>$ – $\Theta()$ is like $=$ – $o()$ is like $<$ – $\Omega()$ is like $\ge$ – $O()$ is like $\le$
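The difference between "there exists a $c$" ($O$) and "for every $c$" ($o$) shows up in two small examples, chosen here for illustration:

```latex
\begin{aligned}
&n < c\,n^2 \iff n > \tfrac{1}{c} \quad (n > 0), \text{ so } n_0 = \lfloor 1/c \rfloor + 1 \text{ works for every } c > 0: \quad n = o(n^2). \\
&n^2 < c\,n^2 \text{ fails for every } n \text{ when } c = \tfrac{1}{2}: \quad n^2 \ne o(n^2), \text{ although } n^2 = O(n^2).
\end{aligned}
```

In the first line $n_0$ depends on $c$, which the definition of $o$ allows; smaller $c$ simply forces a larger $n_0$.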

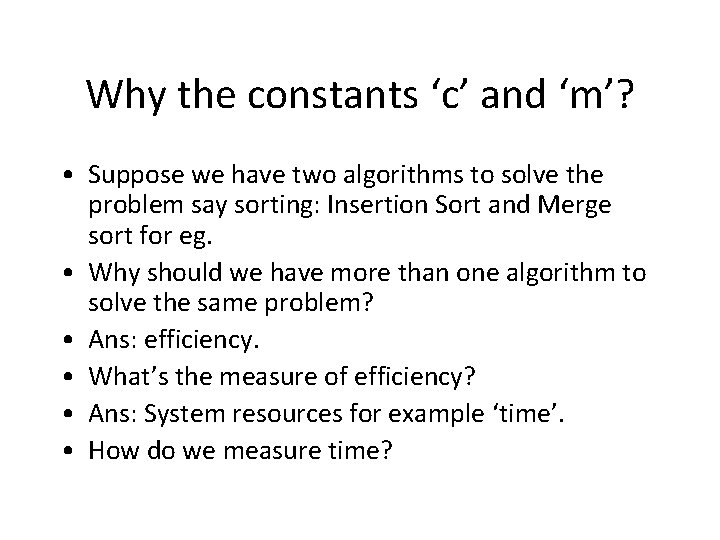

Why the constants ‘c’ and ‘m’? (Here ‘m’ plays the role of $n_0$.) • Suppose we have two algorithms that solve the same problem, say sorting: for example, Insertion Sort and Merge Sort. • Why should we have more than one algorithm for the same problem? • Ans: efficiency. • What is the measure of efficiency? • Ans: the system resources used, for example ‘time’. • How do we measure time?

Contd. • IS(n) = $O(n^2)$ and MS(n) = $O(n \log n)$; since both bounds are tight in the worst case, MS is faster than IS on large inputs. • Suppose we run IS on a fast machine and MS on a slow machine and measure the time (say, because they were developed by two different people living in different parts of the globe). We may then get less time for IS and more for MS, which leads to the wrong conclusion. • Solution: count the number of steps on a generic computational model.

Computational Model: Analysis of Algorithms • Analysis is performed with respect to a computational model • We will usually use a generic uniprocessor random-access machine (RAM) – All memory equally expensive to access – No concurrent operations – All reasonable instructions take unit time • Except, of course, function calls – Constant word size • Unless we are explicitly manipulating bits

Running Time • Number of primitive steps that are executed – Except for time of executing a function call, in this model most statements roughly require the same (within constant factor) amount of time • y=m*x+b • c = 5 / 9 * (t - 32 ) • z = f(x) + g(y) • We can be more exact if need be
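Counting steps instead of measuring wall-clock time makes the comparison machine-independent. A minimal Python sketch, assuming we count only key comparisons as the "steps" (a simplification of counting every primitive step); the function names are hypothetical:

```python
def insertion_sort_steps(a):
    """Sort a copy of `a` with insertion sort; return (sorted list, #comparisons)."""
    a = list(a)
    comparisons = 0
    for i in range(1, len(a)):
        key = a[i]
        j = i - 1
        while j >= 0:
            comparisons += 1          # one key comparison = one counted step
            if a[j] <= key:
                break
            a[j + 1] = a[j]           # shift the larger element one place right
            j -= 1
        a[j + 1] = key
    return a, comparisons


def merge_sort_steps(a):
    """Sort `a` with top-down merge sort; return (sorted list, #comparisons)."""
    if len(a) <= 1:
        return list(a), 0
    mid = len(a) // 2
    left, cl = merge_sort_steps(a[:mid])
    right, cr = merge_sort_steps(a[mid:])
    merged, comparisons = [], cl + cr
    i = j = 0
    while i < len(left) and j < len(right):
        comparisons += 1              # one comparison per element placed
        if left[i] <= right[j]:
            merged.append(left[i])
            i += 1
        else:
            merged.append(right[j])
            j += 1
    merged.extend(left[i:])           # at most one of these is non-empty
    merged.extend(right[j:])
    return merged, comparisons


for n in (10, 100, 1000):
    worst = list(range(n, 0, -1))     # reversed input: insertion sort's worst case
    _, is_steps = insertion_sort_steps(worst)
    _, ms_steps = merge_sort_steps(worst)
    print(n, is_steps, ms_steps)
```

On reversed input, insertion sort makes exactly $n(n-1)/2$ comparisons, while merge sort's count grows like $n \log n$; these numbers are the same on any machine.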

But why ‘c’ and ‘m’? • Because – we compare two algorithms on the basis of their number of steps, and – the actual time taken by an algorithm is at most (in the case of ‘O’) or at least (in the case of ‘Ω’) ‘c’ times its number of steps, where ‘c’ depends on the machine.

Why ‘m’? • We need efficient algorithms and computational tools to solve problems on big data. For example, it is not very difficult to sort a pack of 52 cards manually, but sorting all the books in a library by accession number would be tedious if done manually. • So we want to compare algorithms on large inputs, i.e., only for input sizes $n \ge m$.
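The threshold ‘m’ matters because a slower-growing step count can still be larger on small inputs. A minimal sketch with two hypothetical step counts, $100\,n\log_2 n$ and $n^2$ (the constant 100 and the function names are assumptions for illustration), that finds the first input size where the quadratic one becomes larger:

```python
import math


def crossover(cost_a, cost_b, start=2, limit=10**6):
    """Return the smallest n >= start with cost_b(n) > cost_a(n), or None."""
    for n in range(start, limit + 1):
        if cost_b(n) > cost_a(n):
            return n
    return None


# Hypothetical step counts: algorithm A does 100 * n * log2(n) steps,
# algorithm B does n^2 steps. B is cheaper on small inputs, A on large ones.
a_steps = lambda n: 100 * n * math.log2(n)
b_steps = lambda n: n * n

m = crossover(a_steps, b_steps)
print(m)
```

Below the crossover the $n^2$ algorithm wins; from there on the $n \log n$ algorithm wins, which is exactly the "for all $n \ge m$" part of the definitions.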

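Properties • Transitivity of $O$, $\Omega$ and $\Theta$: e.g., if $f = O(g)$ and $g = O(h)$, then $f = O(h)$. • If $f = O(h)$ and $g = O(h)$, then $f + g = O(h)$. • If $g = O(f)$, then $f + g = \Theta(f)$ (for nonnegative functions $f$ and $g$).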
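The sum property follows directly from the definition of $O$. A short derivation, writing $(c_1, n_1)$ and $(c_2, n_2)$ for the witnesses of the two hypotheses (names introduced here):

```latex
\begin{aligned}
&f(n) \le c_1\,h(n) \text{ for all } n \ge n_1, \qquad g(n) \le c_2\,h(n) \text{ for all } n \ge n_2 \\
&\Rightarrow\; f(n) + g(n) \le (c_1 + c_2)\,h(n) \text{ for all } n \ge \max(n_1, n_2)
\end{aligned}
```

So $f + g = O(h)$ with $c = c_1 + c_2$ and $n_0 = \max(n_1, n_2)$.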

Asymptotic bounds of some common functions • A polynomial of degree $d$ with positive leading coefficient is $\Theta(n^d)$. • For every $b > 1$ and $k > 0$, we have $\log_b n = O(n^k)$. • $\log_a n = \log_b n / \log_b a$, and hence $\log_b n = \Theta(\log_a n)$ (for constants $a, b > 1$). • For every $r > 1$ and $k > 0$, we have $n^k = O(r^n)$.
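Two of these facts can be watched numerically. The sketch below (function names hypothetical) checks that $\log_b n / \log_a n$ is the same constant $\log_b a$ for every $n$, and tabulates $\log_2 n / n^k$ for $n = 2^m$, which shrinks towards 0 only once $n$ is large. Numbers like these illustrate the claims; they do not prove them.

```python
import math


def log_ratio(n, a, b):
    """Return log_b(n) / log_a(n); by change of base this equals log_b(a) for n > 1."""
    return math.log(n, b) / math.log(n, a)


def log_over_power(m, k):
    """Return log2(n) / n**k for n = 2**m, computed as m / 2**(k*m) to avoid overflow."""
    return m / 2 ** (k * m)


for n in (10, 10**3, 10**6, 10**12):
    print(n, log_ratio(n, 2, 10), math.log(2, 10))   # same constant every time

for m in (10, 20, 100, 1000):
    print(m, log_over_power(m, 0.1))                 # eventually tends to 0
```

With $k = 0.1$ the ratio is still about 5 at $n = 2^{20}$, a reminder that $n_0$ for $\log n = O(n^k)$ can be large when $k$ is small.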

Exponential functions • For $r^n$ we are only interested in $r > 1$: by an exponential function we always mean one that grows faster than every polynomial, and this holds only when $r > 1$. So, for our purposes, we will always assume $r > 1$ when we talk about exponential functions. • Unlike log functions, the base cannot be ignored in exponential functions, i.e., $r^n \ne \Theta(s^n)$ whenever $r \ne s$.
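Why the base matters: $3^n \le c \cdot 2^n$ would need $(3/2)^n \le c$, and $(3/2)^n$ eventually exceeds any constant $c$. A minimal sketch (hypothetical function name) that finds, for a given bound, the first $n$ where the ratio $(r/s)^n$ passes it, using exact rational arithmetic:

```python
from fractions import Fraction


def first_n_exceeding(r, s, bound):
    """Smallest n >= 0 with (r/s)**n > bound, for integers r > s > 0."""
    ratio = Fraction(r, s)
    n, value = 0, Fraction(1)
    while value <= bound:
        value *= ratio                # value == (r/s)**n after n iterations
        n += 1
    return n


for c in (10, 1000, 10**6):
    print(c, first_n_exceeding(3, 2, c))
```

Since the loop terminates for every bound, no single $c$ works, so $3^n \ne O(2^n)$ and in particular $3^n \ne \Theta(2^n)$. Compare this with logarithms, where changing the base only multiplies by a constant.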

Assignment 2 • Show that a polynomial of degree $d$ with positive leading coefficient is $\Theta(n^d)$.

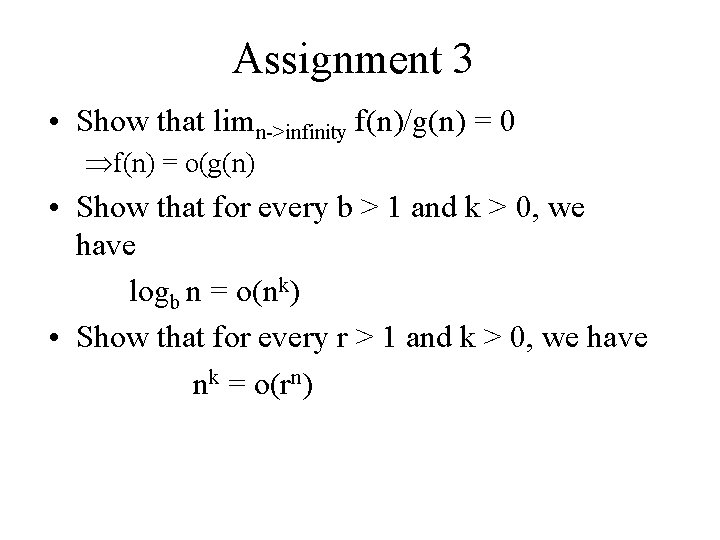

Assignment 3 • Show that if $\lim_{n \to \infty} f(n)/g(n) = 0$, then $f(n) = o(g(n))$. • Show that for every $b > 1$ and $k > 0$, we have $\log_b n = o(n^k)$. • Show that for every $r > 1$ and $k > 0$, we have $n^k = o(r^n)$.

Assignment 4 • Show that if $\lim_{n \to \infty} f(n)/g(n) = c$ for some constant $c > 0$, then $f(n) = \Theta(g(n))$.

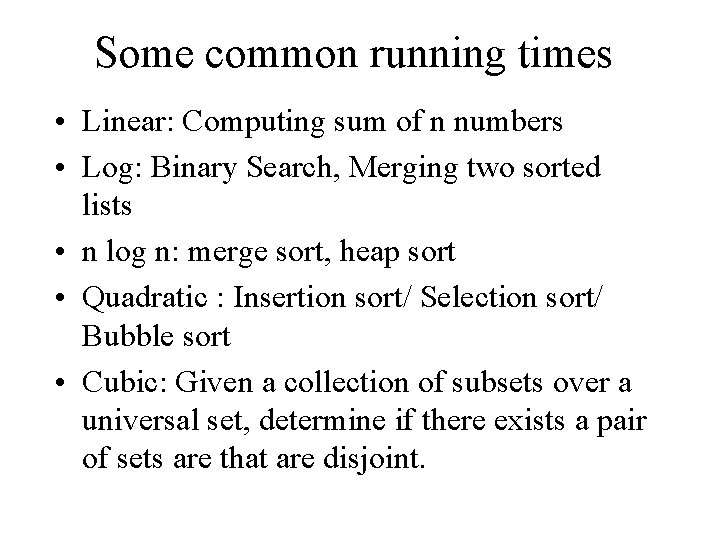

Some common running times • Linear: computing the sum of n numbers; merging two sorted lists • Logarithmic: binary search • n log n: merge sort, heap sort • Quadratic: insertion sort, selection sort, bubble sort • Cubic: given a collection of subsets of a universal set, determine whether some pair of the sets is disjoint.
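The difference between the logarithmic and linear entries shows up clearly if we count steps. A minimal Python sketch (function names hypothetical): binary search probes at most $\lfloor \log_2 n \rfloor + 1$ positions, while merging two sorted lists of total length $n$ can need up to $n - 1$ comparisons.

```python
def binary_search_probes(sorted_list, target):
    """Return (index or -1, number of probes) for iterative binary search."""
    lo, hi = 0, len(sorted_list) - 1
    probes = 0
    while lo <= hi:
        mid = (lo + hi) // 2
        probes += 1                   # each probe halves the remaining range
        if sorted_list[mid] == target:
            return mid, probes
        if sorted_list[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return -1, probes


def merge_with_count(xs, ys):
    """Merge two sorted lists; return (merged list, number of comparisons)."""
    merged, comparisons = [], 0
    i = j = 0
    while i < len(xs) and j < len(ys):
        comparisons += 1              # one comparison per element placed
        if xs[i] <= ys[j]:
            merged.append(xs[i])
            i += 1
        else:
            merged.append(ys[j])
            j += 1
    merged.extend(xs[i:])             # copy whatever remains (no comparisons)
    merged.extend(ys[j:])
    return merged, comparisons


data = list(range(1024))
print(binary_search_probes(data, 700))
print(merge_with_count([1, 3, 5], [2, 4, 6]))
```

Doubling the input adds one probe to binary search but roughly doubles the work of merging.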

Some more • General polynomial time, $n^k$: deciding whether a graph on n vertices has an independent set of size k (for a fixed k). • Beyond polynomial time: Maximum Independent Set.
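Why a fixed $k$ gives polynomial time: a brute-force search only has to look at $\binom{n}{k} = O(n^k)$ candidate vertex sets. A minimal sketch, assuming the graph is given as a vertex count plus an edge list (a representation chosen here for illustration; the function name is hypothetical):

```python
from itertools import combinations


def has_independent_set(n, edges, k):
    """Brute force: is there a set of k vertices (0..n-1) with no edge inside it?

    There are C(n, k) = O(n^k) candidate sets and each is checked with O(k^2)
    edge lookups, so for a fixed k the running time is O(k^2 * n^k).
    """
    edge_set = {frozenset(e) for e in edges}
    for candidate in combinations(range(n), k):
        if all(frozenset((u, v)) not in edge_set
               for u, v in combinations(candidate, 2)):
            return True
    return False


# A 5-cycle: 0-1-2-3-4-0. Its largest independent set has 2 vertices.
cycle5 = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 0)]
print(has_independent_set(5, cycle5, 2))  # True, e.g. {0, 2}
print(has_independent_set(5, cycle5, 3))  # False
```

For Maximum Independent Set, k is no longer fixed: trying every k up to n means examining up to $2^n$ subsets, which is where this approach leaves polynomial time.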

Arrange some functions • Let us arrange the following functions in ascending order of growth rate (assume $\log n = o(n)$ is known): – $n$, $n^2$, $n^3$, $\sqrt{n}$, $n^{\epsilon}$, $\log^2 n$, $n \log n$, $n/\log n$, $2^n$, $3^n$