Moving Object Detection and Tracking for Intelligent Outdoor Surveillance

Assoc. Prof. Dr. Kanappan Palaniappan (palaniappank@missouri.edu)
Dr. Filiz Bunyak (bunyak@missouri.edu)
Dr. Sumit Nath (naths@missouri.edu)
Department of Computer Science, University of Missouri-Columbia

Visual Surveillance and Monitoring

• Mounting video cameras is cheap, but finding available human resources to observe the output is expensive. According to a study by the US National Institute of Justice:
  – A person cannot pay attention to more than 4 cameras.
  – After only 20 minutes of watching and evaluating monitor screens, the attention of most individuals falls below acceptable levels.
• Although surveillance cameras are already prevalent in banks, stores, and parking lots, video data is currently used only "after the fact".

What is needed:
• Continuous 24-hour monitoring of surveillance video to alert security officers to a burglary in progress, or to a suspicious individual loitering in the parking lot, while there is still time to prevent the crime.

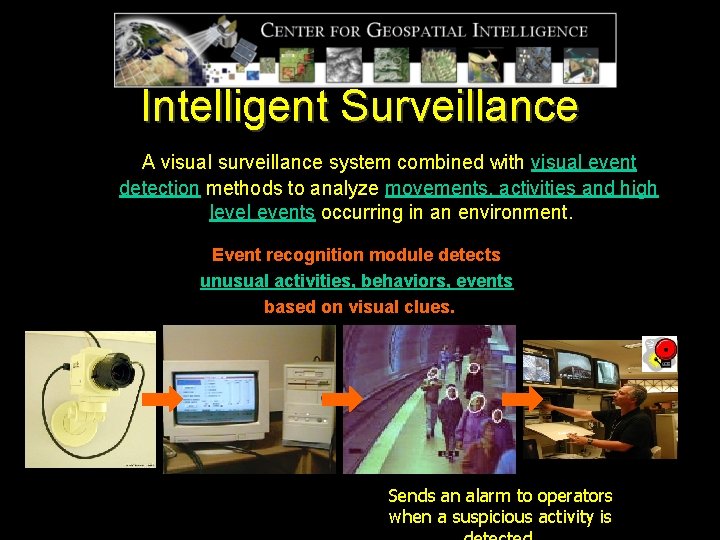

Intelligent Surveillance

A visual surveillance system combined with visual event detection methods to analyze movements, activities, and high-level events occurring in an environment. The event recognition module detects unusual activities, behaviors, and events based on visual cues, and sends an alarm to operators when a suspicious activity is detected.

Visual Event Detection Applications

Surveillance and Monitoring:
• Security (parking lots, airports, subway stations, banks, lobbies, etc.)
• Traffic (track vehicle movements and annotate action in traffic scenarios with natural language verbs)
• Commercial (understanding customer behavior in stores)
• Long-Term Analysis (statistics gathering for infrastructure change, e.g. crowding measurement)

Broadcast Video Indexing: sports video indexing for newscasters and coaches.

Interactive Environments: environments that respond to the activity of occupants.

Robotic Collaboration: robots that can effectively navigate their environment and interact with other people and robots.

Medical:
• Event-based analysis of cell motility
• Gait analysis, etc.

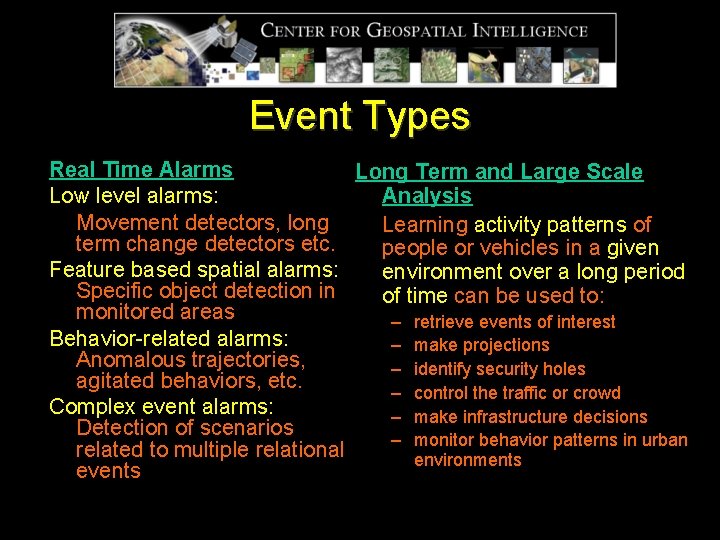

Event Types

Real Time Alarms:
• Low level alarms: movement detectors, long term change detectors, etc.
• Feature based spatial alarms: specific object detection in monitored areas
• Behavior-related alarms: anomalous trajectories, agitated behaviors, etc.
• Complex event alarms: detection of scenarios related to multiple relational events

Long Term and Large Scale Analysis:
Learning activity patterns of people or vehicles in a given environment over a long period of time can be used to:
– retrieve events of interest
– make projections
– identify security holes
– control the traffic or crowd
– make infrastructure decisions
– monitor behavior patterns in urban environments

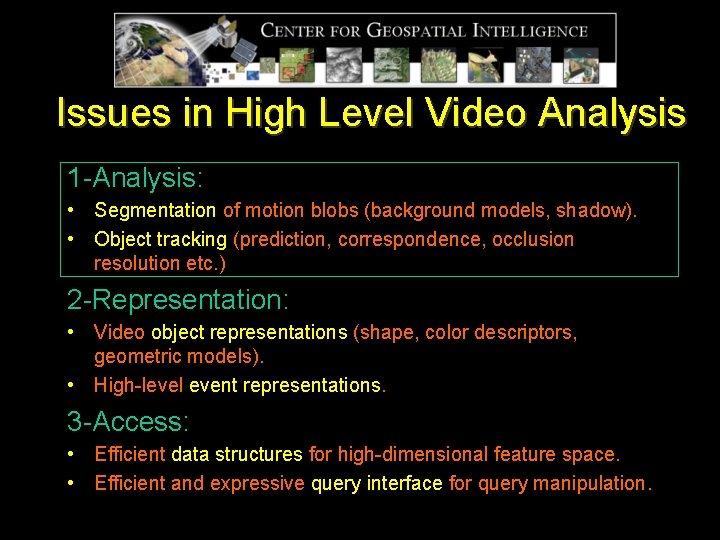

Issues in High Level Video Analysis

1. Analysis:
• Segmentation of motion blobs (background models, shadow).
• Object tracking (prediction, correspondence, occlusion resolution, etc.)

2. Representation:
• Video object representations (shape, color descriptors, geometric models).
• High-level event representations.

3. Access:
• Efficient data structures for high-dimensional feature space.
• Efficient and expressive query interface for query manipulation.

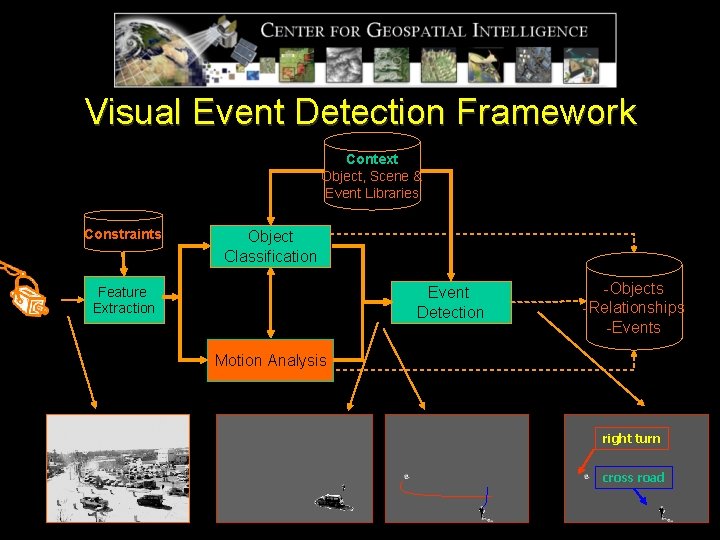

Visual Event Detection Framework

Labels in the diagram: Context; Constraints; Object, Scene & Event Libraries; Motion Analysis; Feature Extraction; Object Classification; Event Detection; Events; a list reading Objects, Relationships, Events; example events "right turn" and "cross road".

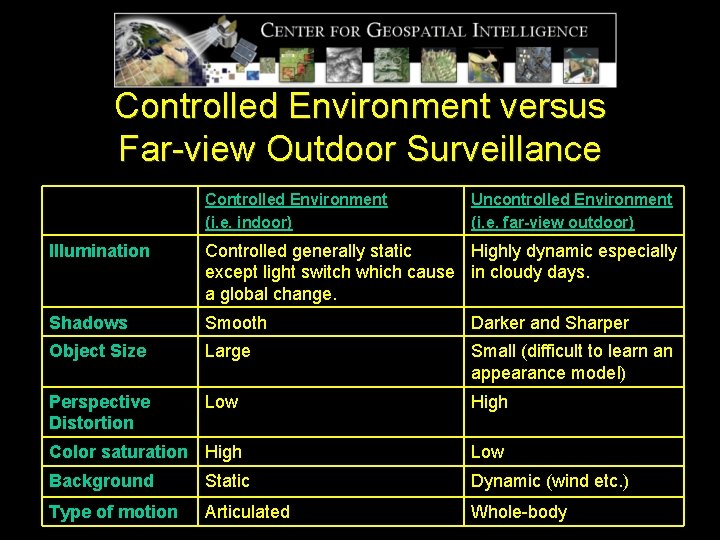

Controlled Environment versus Far-view Outdoor Surveillance

| | Controlled Environment (i.e. indoor) | Uncontrolled Environment (i.e. far-view outdoor) |
|---|---|---|
| Illumination | Controlled, generally static except for light switches, which cause a global change | Highly dynamic, especially on cloudy days |
| Shadows | Smooth | Darker and sharper |
| Object size | Large | Small (difficult to learn an appearance model) |
| Perspective distortion | Low | High |
| Color saturation | High | Low |
| Background | Static | Dynamic (wind etc.) |
| Type of motion | Articulated | Whole-body |

Our Current Capabilities

• Moving Object Detection
• Moving Cast Shadow Detection/Elimination
• Moving Object Tracking
• Sudden Illumination Change Detection
• Trajectory Filtering and Discontinuity Resolution

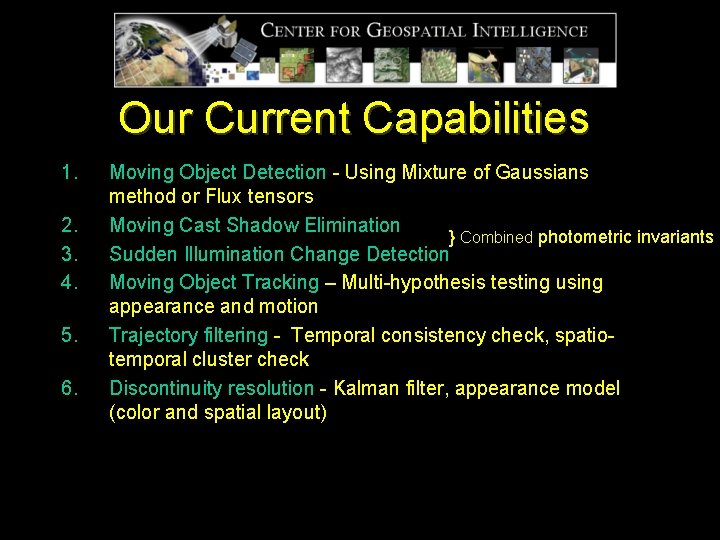

Our Current Capabilities

1. Moving Object Detection: using the Mixture of Gaussians method or flux tensors
2. Moving Cast Shadow Elimination: combined photometric invariants
3. Sudden Illumination Change Detection: combined photometric invariants
4. Moving Object Tracking: multi-hypothesis testing using appearance and motion
5. Trajectory Filtering: temporal consistency check, spatio-temporal cluster check
6. Discontinuity Resolution: Kalman filter, appearance model (color and spatial layout)

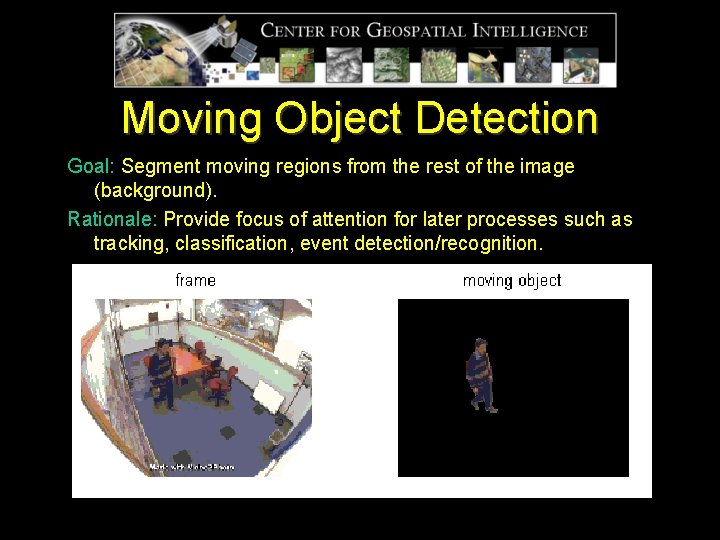

Moving Object Detection

Goal: Segment moving regions from the rest of the image (background).

Rationale: Provide focus of attention for later processes such as tracking, classification, and event detection/recognition.

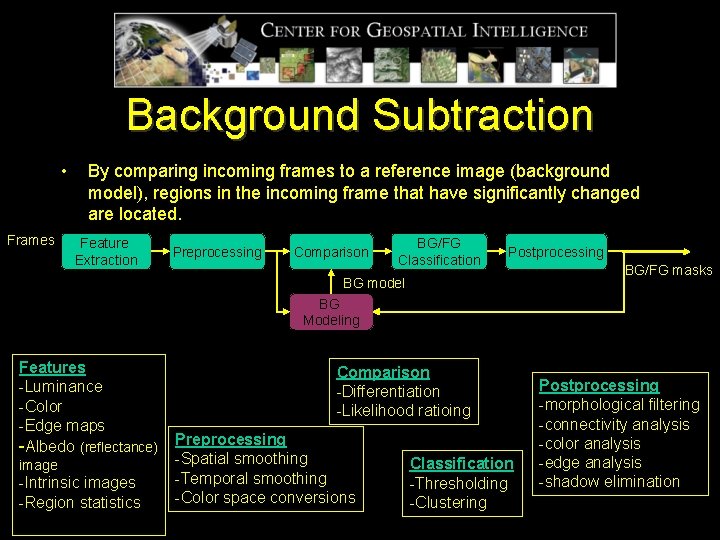

Background Subtraction

• By comparing incoming frames to a reference image (background model), regions in the incoming frame that have significantly changed are located.

Diagram blocks: Frames, Preprocessing, Feature Extraction, Comparison, BG/FG Classification, Postprocessing, BG/FG masks, BG Modeling, BG model.

Options at each stage:
• Preprocessing: spatial smoothing, temporal smoothing, color space conversions
• Features: luminance, color, edge maps, albedo (reflectance) image, intrinsic images, region statistics
• Comparison: differentiation, likelihood ratioing
• Classification: thresholding, clustering
• Postprocessing: morphological filtering, connectivity analysis, color analysis, edge analysis, shadow elimination
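The comparison and classification stages can be illustrated with the simplest possible choice at each step. The sketch below is not the presenters' implementation: it assumes grayscale frames, absolute differencing as the comparison, a fixed threshold as the classifier, and a running average as the background model. The function names, `threshold`, and `alpha` are hypothetical.

```python
import numpy as np

def subtract_background(frame, background, threshold=30.0):
    """Return a boolean foreground mask for one grayscale frame."""
    # Comparison: absolute difference from the reference image.
    diff = np.abs(frame.astype(np.float64) - background.astype(np.float64))
    # Classification: pixels that changed by more than the threshold are foreground.
    return diff > threshold

def update_background(background, frame, mask, alpha=0.05):
    """Running-average background update that leaves foreground pixels unchanged."""
    blended = (1.0 - alpha) * background + alpha * frame
    return np.where(mask, background, blended)

# Toy scene: a flat background and a bright 2x2 object entering it.
background = np.full((4, 4), 100.0)
frame = background.copy()
frame[1:3, 1:3] = 200.0
mask = subtract_background(frame, background)
background = update_background(background, frame, mask)
```

A single reference image with a fixed threshold is exactly the kind of basic method that struggles with illumination changes and moving backgrounds, which motivates the adaptive models discussed next.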

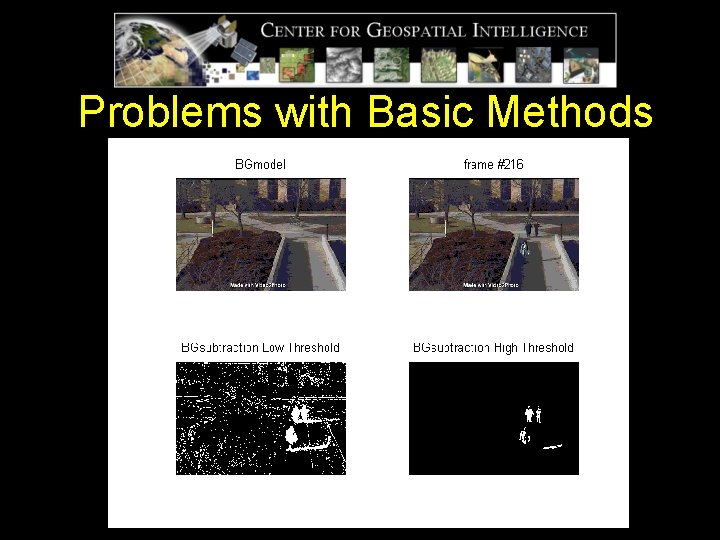

Problems with Basic Methods

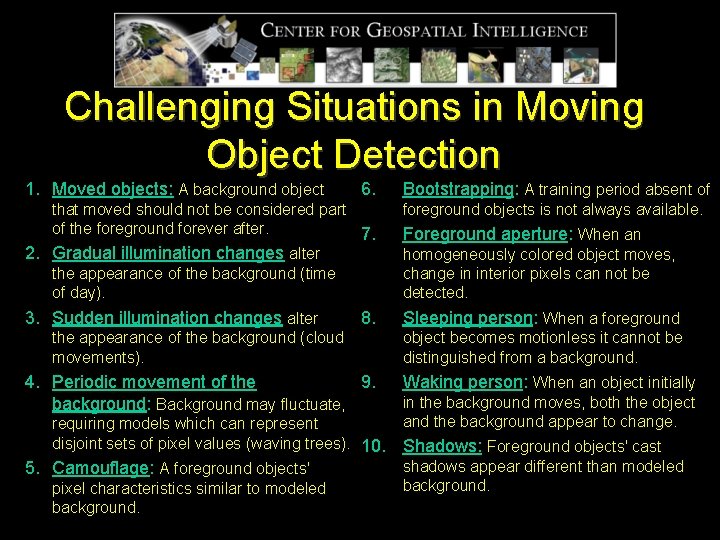

Challenging Situations in Moving Object Detection

1. Moved objects: A background object that moved should not be considered part of the foreground forever after.
2. Gradual illumination changes alter the appearance of the background (time of day).
3. Sudden illumination changes alter the appearance of the background (cloud movements).
4. Periodic movement of the background: The background may fluctuate, requiring models which can represent disjoint sets of pixel values (waving trees).
5. Camouflage: A foreground object's pixel characteristics are similar to the modeled background.
6. Bootstrapping: A training period absent of foreground objects is not always available.
7. Foreground aperture: When a homogeneously colored object moves, change in interior pixels cannot be detected.
8. Sleeping person: When a foreground object becomes motionless, it cannot be distinguished from the background.
9. Waking person: When an object initially in the background moves, both the object and the background appear to change.
10. Shadows: Foreground objects' cast shadows appear different from the modeled background.

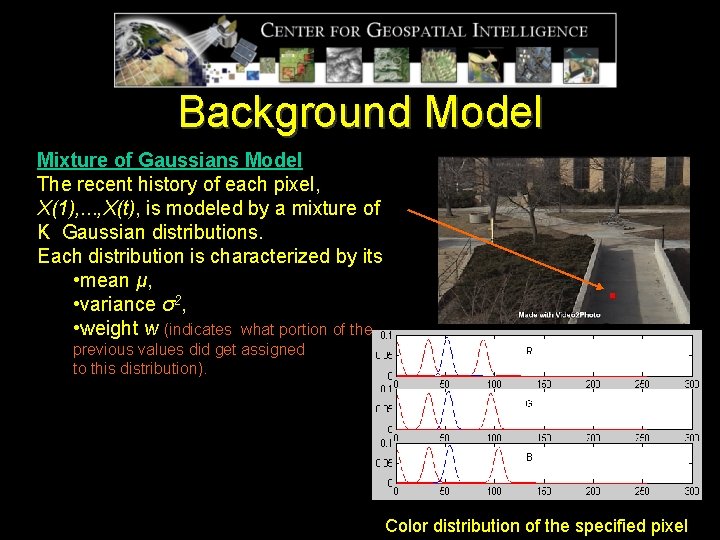

Background Model: Mixture of Gaussians Model

The recent history of each pixel, X(1), ..., X(t), is modeled by a mixture of K Gaussian distributions. Each distribution is characterized by its
• mean μ,
• variance σ²,
• weight w (indicates what portion of the previous values were assigned to this distribution).

Figure panels: color history of the specified pixel; color distribution of the specified pixel (intensity axis).
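A per-pixel mixture with mean, variance, and weight can be sketched as follows. This is a simplified version of the commonly used online update for one grayscale pixel, not the presenters' code. The class name `PixelMoG`, the single learning rate `alpha` used for all three parameters, the 2.5-sigma match test, the variance floor, and the `bg_fraction` rule for choosing background components are all assumptions made for illustration.

```python
import numpy as np

class PixelMoG:
    """Mixture of K Gaussians for the intensity history of one pixel."""

    def __init__(self, k=3, alpha=0.05, init_var=225.0, min_var=4.0,
                 match_sigmas=2.5, bg_fraction=0.7):
        self.alpha = alpha
        self.init_var = init_var
        self.min_var = min_var
        self.match_sigmas = match_sigmas
        self.bg_fraction = bg_fraction
        self.w = np.full(k, 1.0 / k)           # weights
        self.mu = np.linspace(0.0, 255.0, k)   # means
        self.var = np.full(k, init_var)        # variances

    def background_components(self):
        # Rank components by w / sigma and keep the top ones until their
        # cumulative weight reaches bg_fraction.
        order = np.argsort(-self.w / np.sqrt(self.var))
        cumulative = np.cumsum(self.w[order])
        n_bg = int(np.searchsorted(cumulative, self.bg_fraction)) + 1
        return set(order[:n_bg].tolist())

    def update(self, x):
        """Update the mixture with value x; return True if x looks like background."""
        bg = self.background_components()
        dist = np.abs(x - self.mu)
        matched = dist < self.match_sigmas * np.sqrt(self.var)
        self.w *= 1.0 - self.alpha
        if matched.any():
            # Adapt the closest matching component toward x.
            m = int(np.argmin(np.where(matched, dist, np.inf)))
            self.w[m] += self.alpha
            self.mu[m] += self.alpha * (x - self.mu[m])
            self.var[m] += self.alpha * ((x - self.mu[m]) ** 2 - self.var[m])
            self.var[m] = max(self.var[m], self.min_var)
            is_background = m in bg
        else:
            # No match: replace the least probable component with a new one.
            m = int(np.argmin(self.w))
            self.w[m] = self.alpha
            self.mu[m] = x
            self.var[m] = self.init_var
            is_background = False
        self.w /= self.w.sum()
        return is_background

# Train on a stable background intensity of 100.
pixel = PixelMoG()
for _ in range(200):
    pixel.update(100.0)
```

After training, a new value near 100 matches the dominant component and is treated as background, while a value far from every component starts a new low-weight component and is reported as foreground.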

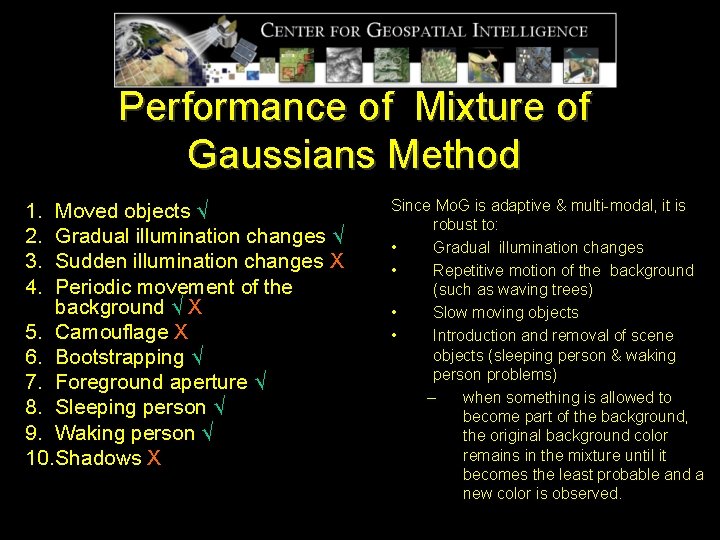

Performance of Mixture of Gaussians Method

1. Moved objects: √
2. Gradual illumination changes: √
3. Sudden illumination changes: X
4. Periodic movement of the background: √
5. Camouflage: X
6. Bootstrapping: √
7. Foreground aperture: √
8. Sleeping person: √
9. Waking person: √
10. Shadows: X

Since MoG is adaptive and multi-modal, it is robust to:
• Gradual illumination changes
• Repetitive motion of the background (such as waving trees)
• Slow moving objects
• Introduction and removal of scene objects (sleeping person and waking person problems)
  – when something is allowed to become part of the background, the original background color remains in the mixture until it becomes the least probable and a new color is observed.

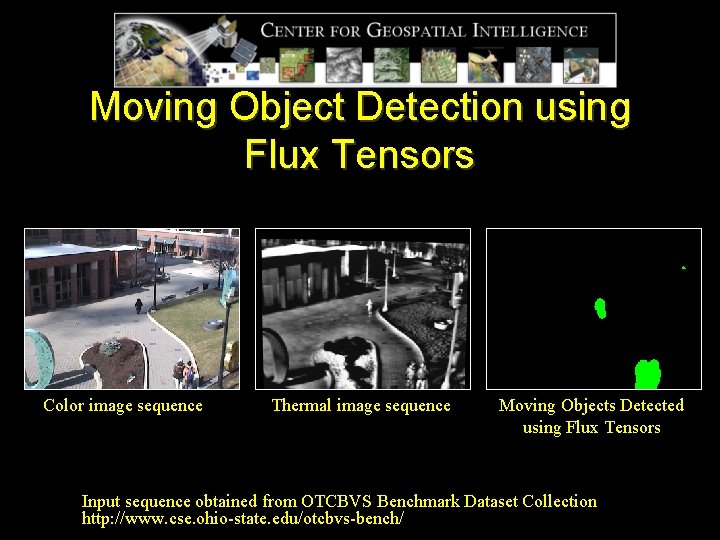

Moving Object Detection using Flux Tensors

Figure panels: color image sequence; thermal image sequence; moving objects detected using flux tensors.

Input sequence obtained from the OTCBVS Benchmark Dataset Collection: http://www.cse.ohio-state.edu/otcbvs-bench/

Shadow Problem

Figure labels: shadow creates "new" objects; static shadow; shadow merges separate objects.

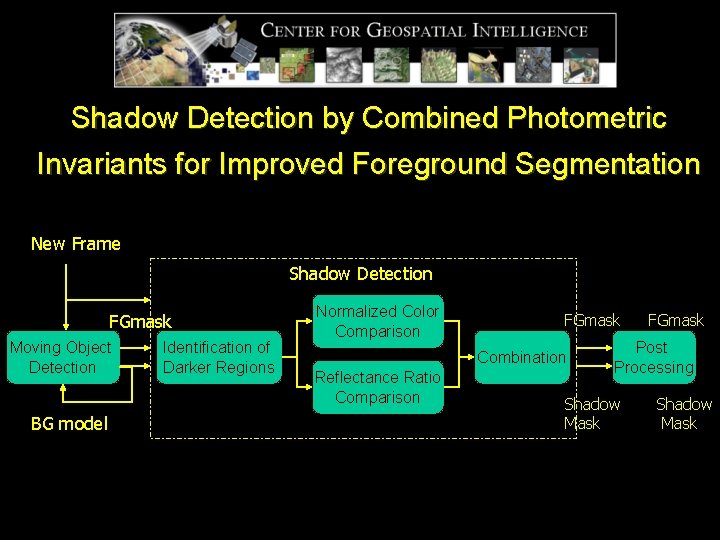

Shadow Detection by Combined Photometric Invariants for Improved Foreground Segmentation

Diagram blocks: New Frame; Moving Object Detection (BG model, FG mask); Shadow Detection, consisting of Identification of Darker Regions, Normalized Color Comparison, Reflectance Ratio Comparison, Combination, and Post Processing; output Shadow Mask.

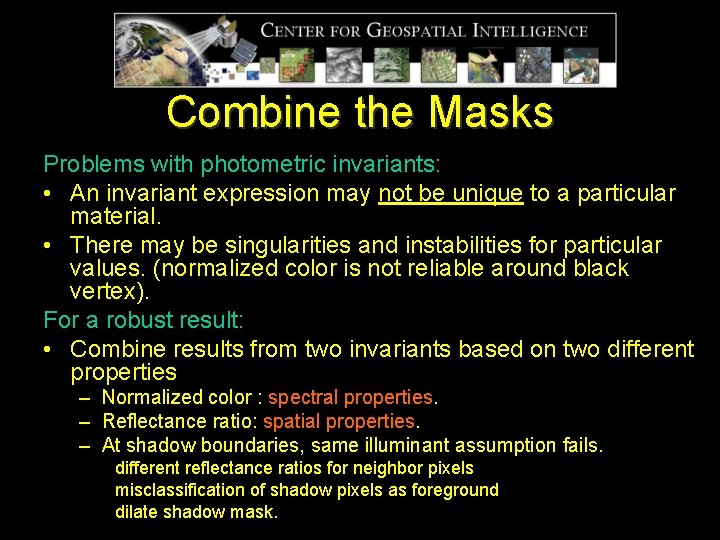

Combine the Masks

Problems with photometric invariants:
• An invariant expression may not be unique to a particular material.
• There may be singularities and instabilities for particular values (normalized color is not reliable around the black vertex).

For a robust result:
• Combine results from two invariants based on two different properties:
  – Normalized color: spectral properties.
  – Reflectance ratio: spatial properties.
• At shadow boundaries, the same-illuminant assumption fails: different reflectance ratios for neighboring pixels → misclassification of shadow pixels as foreground → dilate shadow mask.
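One way to read the combination step is sketched below. The slides do not give the exact rule, so several things here are assumptions: the three cues (darker than background, similar normalized color, similar reflectance ratio) are combined with a logical AND, the reflectance ratio is taken between each pixel and its right-hand neighbor, very dark pixels are excluded because normalized color is unstable near black, and the dilation uses a 3x3 window. All function names and tolerances are hypothetical.

```python
import numpy as np

def normalized_rgb(img, eps=1e-6):
    # Spectral cue: each channel divided by the channel sum.
    return img / (img.sum(axis=2, keepdims=True) + eps)

def reflectance_ratio(gray, eps=1e-6):
    # Spatial cue: (a - b) / (a + b) with b the right-hand neighbor.
    # np.roll wraps around, so the last column is compared with the first.
    neighbor = np.roll(gray, -1, axis=1)
    return (gray - neighbor) / (gray + neighbor + eps)

def dilate3x3(mask):
    # Binary dilation with a 3x3 window, written with plain NumPy slicing.
    h, w = mask.shape
    padded = np.pad(mask, 1, mode="constant", constant_values=False)
    out = np.zeros_like(mask)
    for dy in range(3):
        for dx in range(3):
            out |= padded[dy:dy + h, dx:dx + w]
    return out

def shadow_mask(frame, background, fg_mask,
                color_tol=0.03, ratio_tol=0.05, min_dark=10.0):
    frame = frame.astype(np.float64)
    background = background.astype(np.float64)
    f_gray, b_gray = frame.mean(axis=2), background.mean(axis=2)
    # Shadow candidates: foreground pixels darker than the background,
    # but not so dark that normalized color becomes unreliable.
    darker = fg_mask & (f_gray < b_gray) & (f_gray > min_dark)
    color_ok = np.abs(normalized_rgb(frame)
                      - normalized_rgb(background)).max(axis=2) < color_tol
    ratio_ok = np.abs(reflectance_ratio(f_gray)
                      - reflectance_ratio(b_gray)) < ratio_tol
    # Dilate to recover boundary pixels, then stay inside the foreground mask.
    return dilate3x3(darker & color_ok & ratio_ok) & fg_mask

# Toy scene: a 2x2 shadow (background at half brightness) and one object pixel.
background = np.tile(np.array([120.0, 100.0, 80.0]), (6, 6, 1))
frame = background.copy()
frame[2:4, 2:4] *= 0.5
frame[5, 5] = [200.0, 30.0, 30.0]
fg_mask = np.abs(frame - background).sum(axis=2) > 30.0
shadow = shadow_mask(frame, background, fg_mask)
```

In this toy scene the right-hand column of the shadow fails the reflectance ratio test because its neighbor is lit, and the dilation brings those boundary pixels back into the shadow mask.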

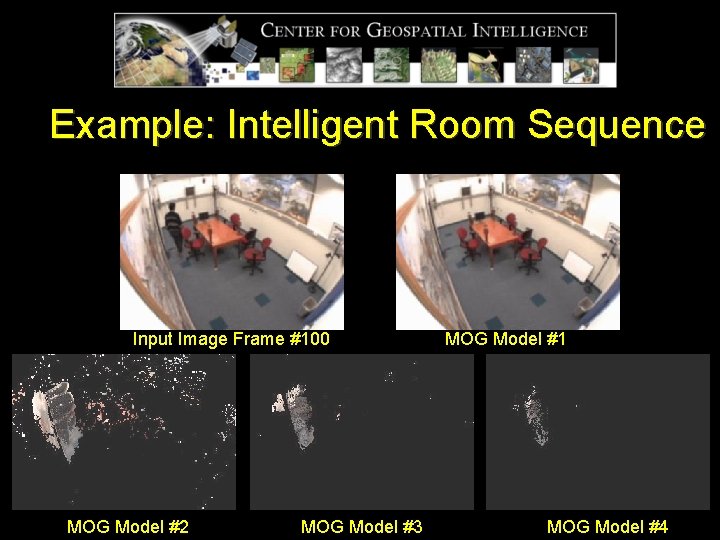

Example: Intelligent Room Sequence

Figure panels: Input Image Frame #100; MOG Model #1; MOG Model #2; MOG Model #3; MOG Model #4.

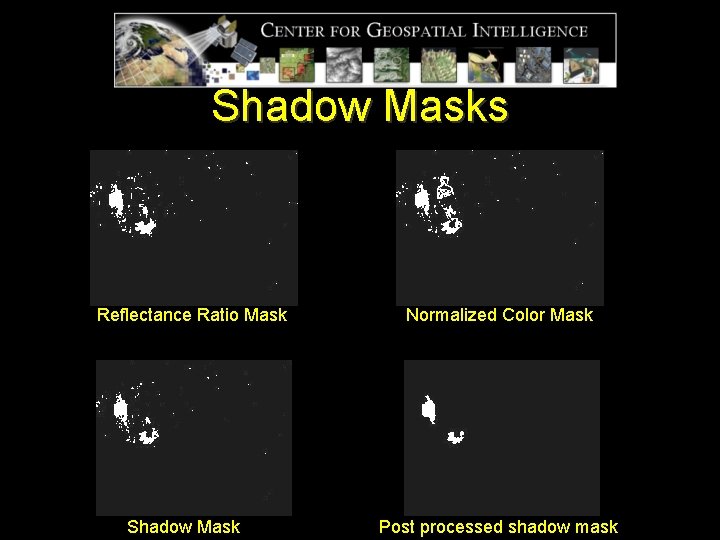

Shadow Masks

Figure panels: Normalized Color Mask; Reflectance Ratio Mask; Shadow Mask; Post Processed Shadow Mask.

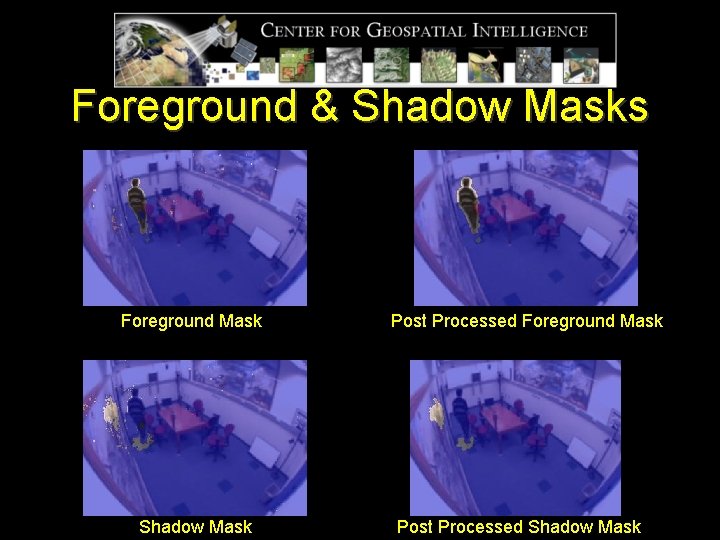

Foreground & Shadow Masks

Figure panels: Foreground Mask; Shadow Mask; Post Processed Foreground Mask; Post Processed Shadow Mask.

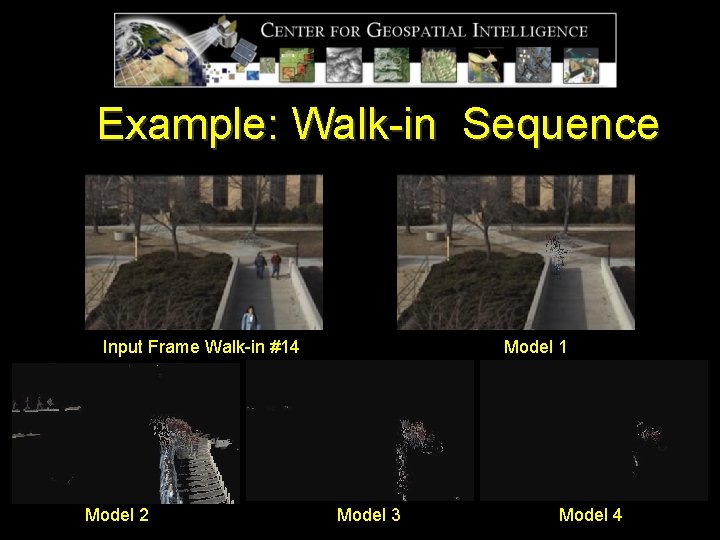

Example: Walk-in Sequence

Figure panels: Input Frame Walk-in #14; Model 1; Model 2; Model 3; Model 4.

Shadow Masks

Figure panels: Normalized Color Mask; Reflectance Ratio Mask; Shadow Mask; Post Processed Shadow Mask.

Foreground & Shadow Masks

Figure panels: Foreground Mask; Shadow Mask; Post Processed Foreground Mask; Post Processed Shadow Mask.

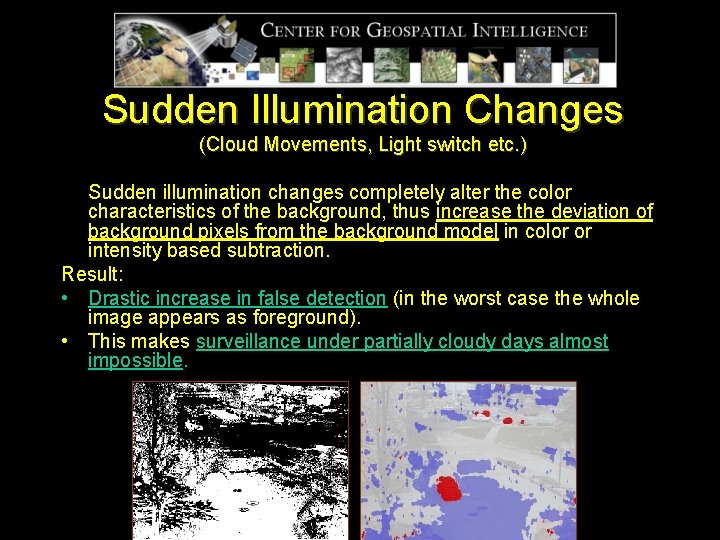

Sudden Illumination Changes (Cloud Movements, Light Switch, etc.)

Sudden illumination changes completely alter the color characteristics of the background and thus increase the deviation of background pixels from the background model in color- or intensity-based subtraction.

Result:
• Drastic increase in false detections (in the worst case the whole image appears as foreground).
• This makes surveillance on partly cloudy days almost impossible.

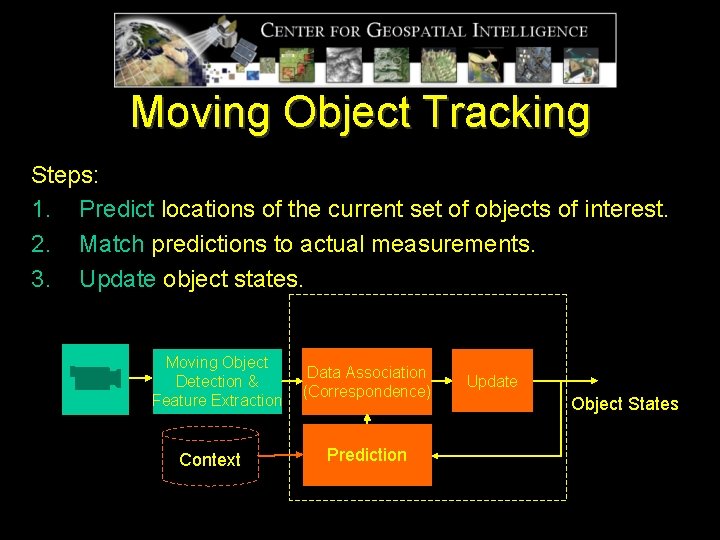

Moving Object Tracking

Steps:
1. Predict locations of the current set of objects of interest.
2. Match predictions to actual measurements.
3. Update object states.

Diagram blocks: Moving Object Detection & Feature Extraction; Tracking (Prediction, Data Association (Correspondence), Update Object States); Context.
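The predict, match, update cycle can be sketched in a few lines. This is a deliberately simplified illustration, not the multi-hypothesis method described later in the talk: it assumes a constant-velocity prediction, greedy nearest-neighbor matching on position only, and a fixed gating distance. The function name `track_step`, the track dictionary layout, and the `gate` value are hypothetical.

```python
import numpy as np

def track_step(tracks, detections, gate=20.0):
    """Run one predict / match / update cycle.

    tracks: dict mapping track id -> {"pos": array, "vel": array}
    detections: list of measured positions for the current frame
    Returns a dict mapping track id -> index of the matched detection.
    """
    # 1. Predict: constant-velocity guess for each track.
    predictions = {tid: t["pos"] + t["vel"] for tid, t in tracks.items()}

    # 2. Match: take prediction/detection pairs in order of increasing
    #    distance, skipping anything beyond the gate or already used.
    pairs = sorted(
        (float(np.linalg.norm(pred - det)), tid, j)
        for tid, pred in predictions.items()
        for j, det in enumerate(detections)
    )
    matches, used_dets = {}, set()
    for dist, tid, j in pairs:
        if dist > gate:
            break
        if tid in matches or j in used_dets:
            continue
        matches[tid] = j
        used_dets.add(j)

    # 3. Update: matched tracks take the measurement, others coast.
    for tid, t in tracks.items():
        if tid in matches:
            new_pos = detections[matches[tid]]
            t["vel"] = new_pos - t["pos"]
            t["pos"] = new_pos
        else:
            t["pos"] = predictions[tid]

    # Unmatched detections start new tracks.
    next_id = max(tracks, default=-1) + 1
    for j, det in enumerate(detections):
        if j not in used_dets:
            tracks[next_id] = {"pos": det, "vel": np.zeros(2)}
            next_id += 1
    return matches

tracks = {
    0: {"pos": np.array([0.0, 0.0]), "vel": np.array([5.0, 0.0])},
    1: {"pos": np.array([100.0, 100.0]), "vel": np.array([0.0, -5.0])},
}
detections = [np.array([100.0, 96.0]), np.array([6.0, 1.0]), np.array([300.0, 300.0])]
matches = track_step(tracks, detections)
```

Greedy matching commits to one assignment per frame; a multi-hypothesis tracker instead keeps several candidate assignments alive and resolves them with later evidence.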

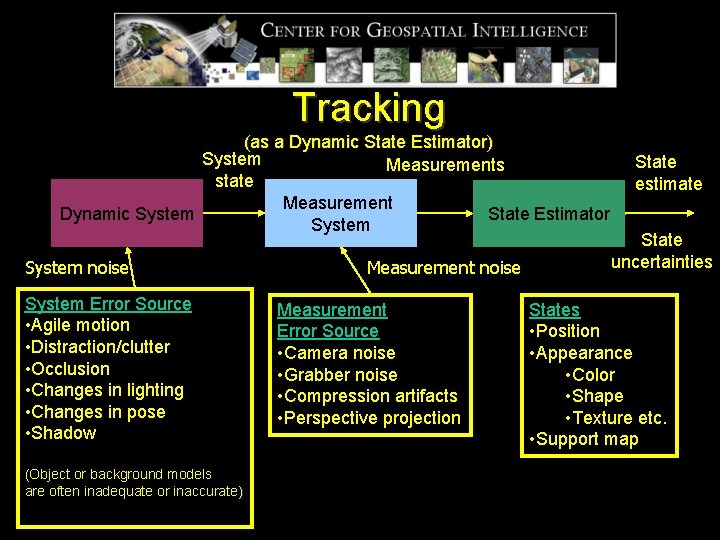

Tracking (as a Dynamic State Estimator)

Diagram blocks: Dynamic System (system state, system noise); Measurement (measurements, measurement noise); State Estimator (state estimate, state uncertainties).

System error sources (object or background models are often inadequate or inaccurate):
• Agile motion
• Distraction/clutter
• Occlusion
• Changes in lighting
• Changes in pose
• Shadow

Measurement error sources:
• Camera noise
• Grabber noise
• Compression artifacts
• Perspective projection

States:
• Position
• Appearance
  – Color
  – Shape
  – Texture, etc.
• Support map

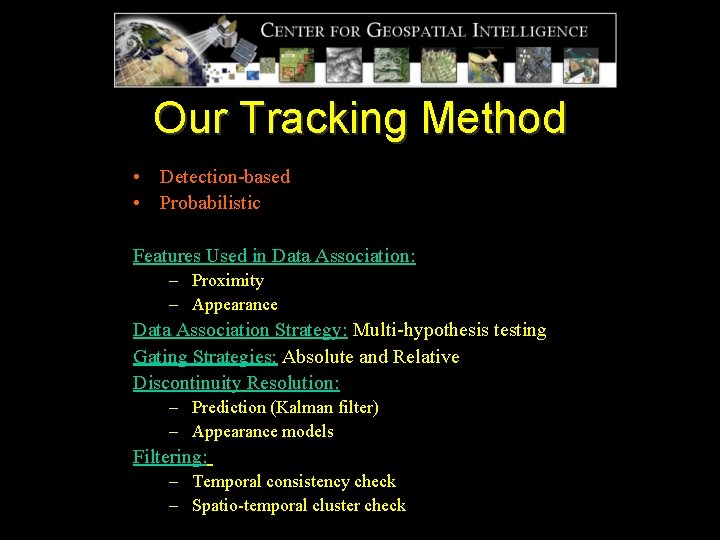

Our Tracking Method

• Detection-based
• Probabilistic

Features used in data association:
– Proximity
– Appearance

Data association strategy: multi-hypothesis testing

Gating strategies: absolute and relative

Discontinuity resolution:
– Prediction (Kalman filter)
– Appearance models

Filtering:
– Temporal consistency check
– Spatio-temporal cluster check

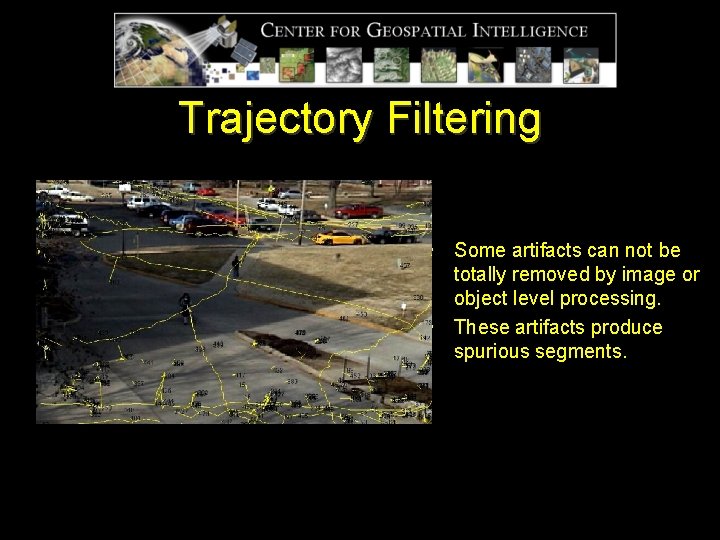

Trajectory Filtering

• Some artifacts cannot be totally removed by image or object level processing.
• These artifacts produce spurious segments.

Temporal Consistency Check

Source of the problem: segments resulting from
• Temporarily fragmented parts of an object
• Un-eliminated cast shadows

Effect: Short segments that split from or merge into a longer segment.

Proposed solution: Pruning short split or merge segments by a temporal consistency check. Elimination of short disconnected segments is delayed until after discontinuity resolution.
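The pruning rule can be sketched on a small segment graph. The data layout is an assumption: each segment records its length in frames plus the ids of the segments it came from (`parents`) and continues into (`children`). A short segment connected on only one side is treated as a split or merge branch and removed, while a short segment with no connections is kept for the later discontinuity resolution step. The `min_length` value is hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Segment:
    seg_id: int
    length: int                                   # duration in frames
    parents: list = field(default_factory=list)   # ids of preceding segments
    children: list = field(default_factory=list)  # ids of following segments

def prune_short_branches(segments, min_length=5):
    """Drop short segments that only split from or merge into another segment."""
    kept = []
    for seg in segments:
        short = seg.length < min_length
        split_branch = bool(seg.parents) and not seg.children
        merge_branch = bool(seg.children) and not seg.parents
        if short and (split_branch or merge_branch):
            continue  # temporally inconsistent branch: prune it
        kept.append(seg)  # includes short disconnected segments (handled later)
    return kept

segments = [
    Segment(0, 120, children=[1, 2]),
    Segment(1, 80, parents=[0]),
    Segment(2, 3, parents=[0]),   # short split, e.g. a shadow fragment
    Segment(3, 2),                # short and disconnected: kept for now
]
kept_ids = [s.seg_id for s in prune_short_branches(segments)]
```

This sketch does not rewrite the `children` lists of surviving segments; a fuller version would also remove references to pruned ids.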

Spatio-Temporal Cluster Check

Source of the problem:
• Repetitive motion of the background (e.g. moving branches or their cast shadows).
• Specular reflections (e.g. reflections from car windshields).

Effect: Temporally consistent and spatially clustered trajectories.

Proposed solution:
• Average Displacement to Length Ratio (ADLR)
• Diagonal to Length Ratio (DLR)
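The slides name the two ratios but do not define them, so the sketch below uses one plausible reading and should be treated as an assumption throughout. Length is taken as the total path length, the diagonal as the diagonal of the trajectory's bounding box, and the average displacement as the mean distance of the trajectory points from the first point. The thresholds in `is_clustered` are hypothetical.

```python
import numpy as np

def path_length(points):
    # Sum of distances between consecutive trajectory points.
    return float(np.linalg.norm(np.diff(points, axis=0), axis=1).sum())

def dlr(points):
    """Diagonal to Length Ratio: bounding-box diagonal over path length."""
    length = path_length(points)
    if length == 0.0:
        return 0.0
    extent = points.max(axis=0) - points.min(axis=0)
    return float(np.linalg.norm(extent)) / length

def adlr(points):
    """Average Displacement to Length Ratio (displacement measured from the start)."""
    length = path_length(points)
    if length == 0.0:
        return 0.0
    displacement = np.linalg.norm(points - points[0], axis=1)
    return float(displacement.mean()) / length

def is_clustered(points, dlr_min=0.3, adlr_min=0.15):
    # A long path that never leaves a small region gives small ratios.
    return dlr(points) < dlr_min or adlr(points) < adlr_min

# A vehicle moving in a straight line versus a branch swaying in place.
straight = np.array([[float(i), 0.0] for i in range(11)])
jitter = np.array([[float(i % 2), 0.0] for i in range(11)])
```

Under these definitions a straight trajectory gives a DLR of 1 and an ADLR of 0.5, while a point oscillating between two positions accumulates path length without displacement and scores far lower on both.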

Discontinuity Resolution

Discontinuities occur especially in low resolution outdoor sequences.

Source of the problem:
• Temporarily undetected objects due to
  – Low contrast
  – Partial or total occlusions
• Incorrect pruning in data association due to significant change in appearance or size caused by
  – Partial occlusion
  – Fragmentation

Discontinuity Resolution

1. Define source and sink locations where the objects are expected to appear and disappear.
2. Identify
   – Seg_dis: segments disappearing unexpectedly (at a non-sink location) → possible start of a discontinuity.
   – Seg_app: segments appearing unexpectedly (at a non-source location) → possible end of a discontinuity.
3. Identify possible matches based on a time constraint.
4. Use a Kalman filter to predict future positions of disappearing segments and past positions of appearing segments.
5. Check direction and position consistencies on
   – Disappearing segment
   – Appearing segment
   – Joining segment
6. Check color similarity.
7. Multiple possible matches for a single disappearing segment → select the appearing segment starting earliest.
8. Multiple possible matches for a single appearing segment → select the disappearing segment ending latest.
9. Match → the appearing segment inherits the disappearing segment's label and propagates this new label to its children.
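Steps 3 to 9 can be sketched as a small matching routine. To keep the example short, a constant-velocity extrapolation stands in for the Kalman filter predictions, a Euclidean distance between mean colors stands in for the color similarity check, and label propagation to children is omitted. The `Segment` fields, the function names, and all thresholds are hypothetical.

```python
from dataclasses import dataclass
import numpy as np

@dataclass(eq=False)  # identity-based hashing so segments can be dict keys
class Segment:
    label: int
    t_start: int
    t_end: int
    start_pos: np.ndarray
    end_pos: np.ndarray
    start_vel: np.ndarray   # velocity estimated over the first frames
    end_vel: np.ndarray     # velocity estimated over the last frames
    color: np.ndarray       # mean color of the tracked object

def is_candidate(dis, app, max_gap, max_dist, max_color_dist):
    gap = app.t_start - dis.t_end
    if not 0 < gap <= max_gap:                       # step 3: time constraint
        return False
    forward = dis.end_pos + gap * dis.end_vel        # step 4: predicted future
    backward = app.start_pos - gap * app.start_vel   # step 4: predicted past
    if np.linalg.norm(forward - app.start_pos) > max_dist:   # step 5
        return False
    if np.linalg.norm(backward - dis.end_pos) > max_dist:    # step 5
        return False
    return bool(np.linalg.norm(dis.color - app.color) <= max_color_dist)  # step 6

def resolve_discontinuities(disappearing, appearing,
                            max_gap=30, max_dist=25.0, max_color_dist=40.0):
    # Step 7: each disappearing segment picks the earliest-starting candidate.
    choice = {}
    for dis in disappearing:
        options = [a for a in appearing
                   if is_candidate(dis, a, max_gap, max_dist, max_color_dist)]
        if options:
            choice[dis] = min(options, key=lambda a: a.t_start)
    # Step 8: each appearing segment keeps the latest-ending claimant.
    winner = {}
    for dis, app in choice.items():
        if app not in winner or dis.t_end > winner[app].t_end:
            winner[app] = dis
    # Step 9: the appearing segment inherits the disappearing segment's label.
    for app, dis in winner.items():
        app.label = dis.label
    return [(dis, app) for app, dis in winner.items()]

v = np.array([2.0, 0.0])
gray = np.array([120.0, 120.0, 120.0])
d1 = Segment(1, 0, 10, np.array([30.0, 50.0]), np.array([50.0, 50.0]), v, v, gray)
a1 = Segment(7, 15, 40, np.array([60.0, 50.0]), np.array([110.0, 50.0]), v, v, gray)
a2 = Segment(8, 20, 45, np.array([70.0, 50.0]), np.array([120.0, 50.0]), v, v, gray)
pairs = resolve_discontinuities([d1], [a1, a2])
```

Both appearing segments are consistent with the disappearing one here, so the earliest-start rule from step 7 decides the match.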

Challenges in Tracking for Visual Event Detection

• Shadows
  – false detections, shape distortions, merges
• Sudden illumination changes (e.g. due to cloud movements)
  – difficulty in object detection, especially on partly cloudy days
• Glare from specular surfaces (e.g. car windshields)
  – spurious detections and trajectory segments
• Perspective distortion (objects far away from the camera look smaller and appear to move slower)
  – difficulty in filtering false detections
• Occlusion
  – discontinuities in trajectories
• Poor video quality (low resolution, low color saturation)
  – difficulty in moving object detection
  – difficulty in appearance modeling

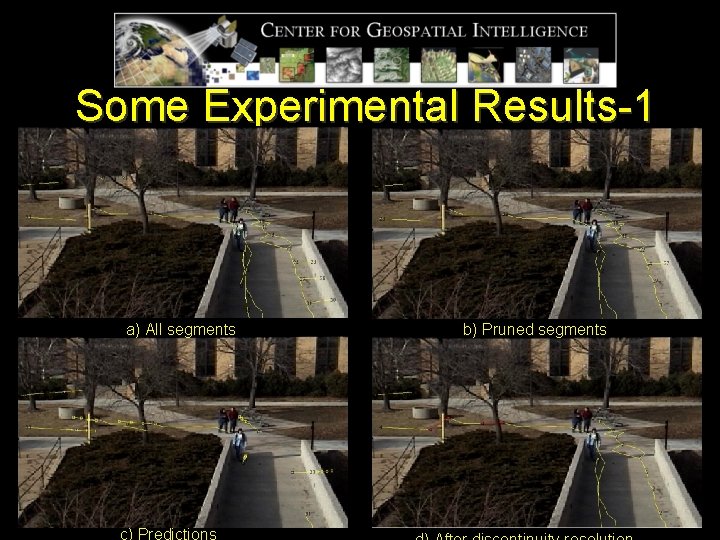

Some Experimental Results-1

Figure panels: a) All segments; b) Pruned segments; c) Predictions.

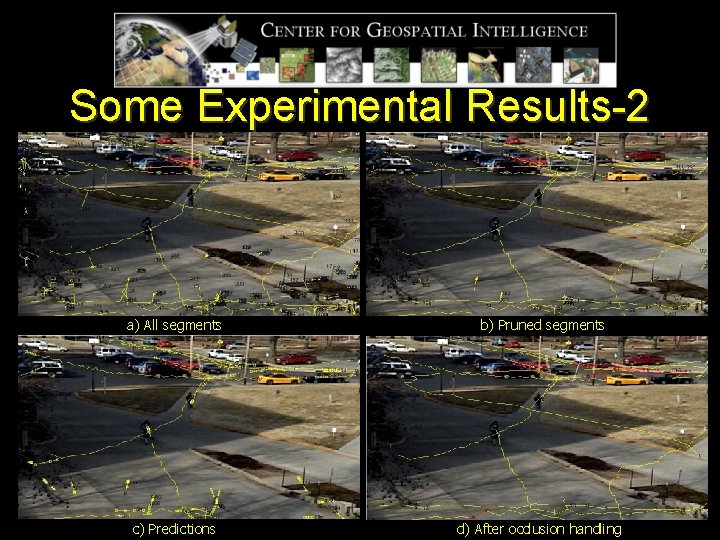

Some Experimental Results-2

Figure panels: a) All segments; b) Pruned segments; c) Predictions; d) After occlusion handling.

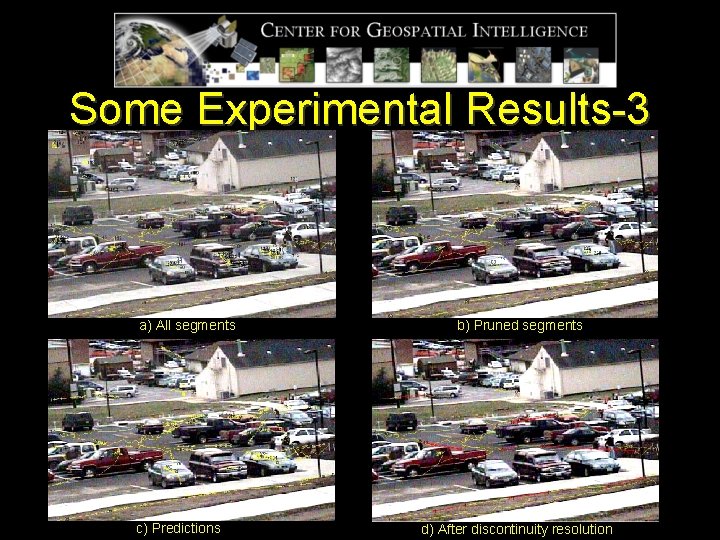

Some Experimental Results-3

Figure panels: a) All segments; b) Pruned segments; c) Predictions; d) After discontinuity resolution.

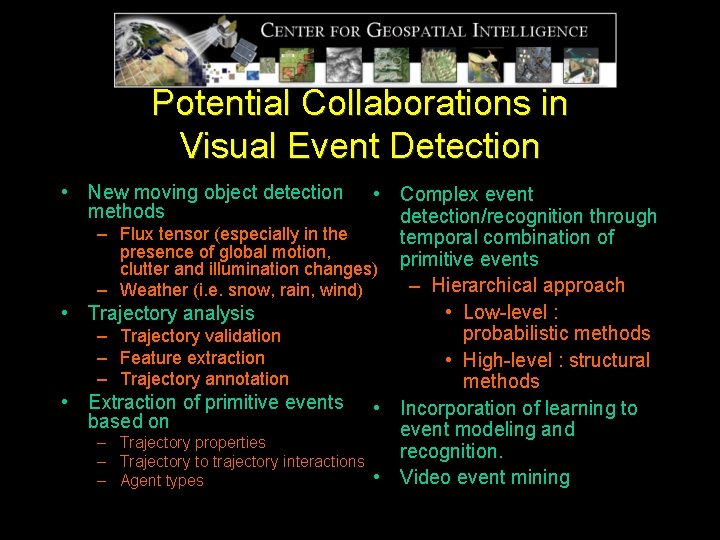

Potential Collaborations in Visual Event Detection

• New moving object detection methods
  – Flux tensor (especially in the presence of global motion, clutter and illumination changes)
  – Weather (i.e. snow, rain, wind)
• Trajectory analysis
  – Trajectory validation
  – Feature extraction
  – Trajectory annotation
• Extraction of primitive events based on
  – Trajectory properties
  – Trajectory to trajectory interactions
  – Agent types
• Complex event detection/recognition through temporal combination of primitive events
  – Hierarchical approach
    • Low-level: probabilistic methods
    • High-level: structural methods
• Incorporation of learning to event modeling and recognition.
• Video event mining

Questions?
