Moving beyond Frame-based Video Object Segmentation
Matthew Alighchi

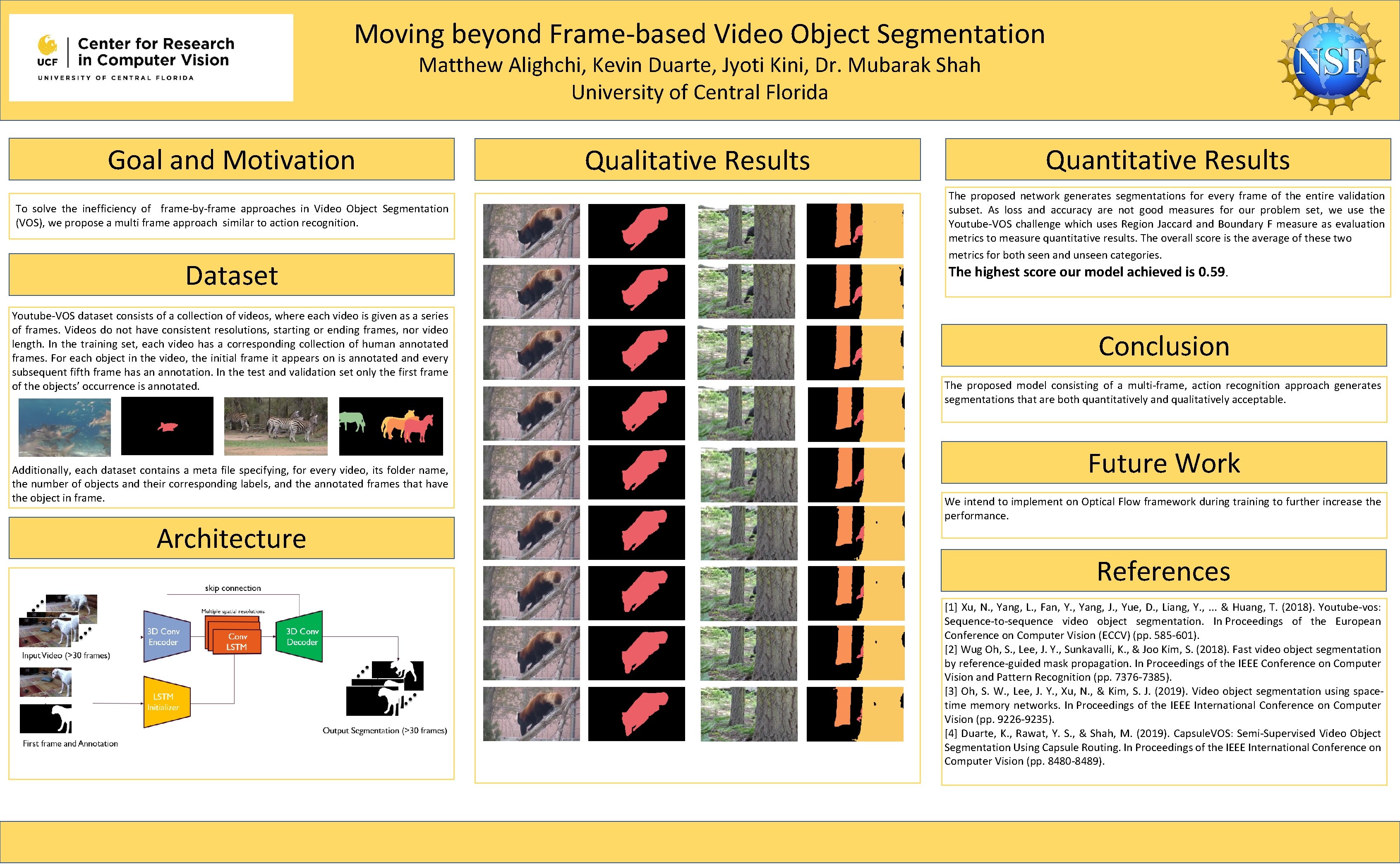

Matthew Alighchi, Kevin Duarte, Jyoti Kini, Dr. Mubarak Shah
University of Central Florida

Goal and Motivation
Frame-by-frame approaches to Video Object Segmentation (VOS) are inefficient. To address this, we propose a multi-frame approach similar to those used in action recognition.

Dataset
The YouTube-VOS dataset [1] consists of a collection of videos, each given as a series of frames. Videos vary in resolution, starting and ending frames, and length. In the training set, each video comes with a collection of human-annotated frames: for each object, the first frame in which it appears is annotated, as is every fifth frame thereafter. In the validation and test sets, only the first frame in which each object appears is annotated. Additionally, each split includes a meta file that specifies, for every video, its folder name, the number of objects and their labels, and the annotated frames in which each object appears.

Architecture

Qualitative Results

Quantitative Results
The proposed network generates segmentations for every frame of the entire validation subset. Because loss and accuracy are not good measures of segmentation quality for this problem, we evaluate with the YouTube-VOS challenge metrics: region similarity (Jaccard index, J) and boundary accuracy (F measure). The overall score is the average of J and F computed separately for seen and unseen categories, i.e. the mean of four values. The highest overall score our model achieved is 0.59.

Conclusion
The proposed multi-frame model, based on an action recognition approach, generates segmentations that are acceptable both quantitatively and qualitatively.

Future Work
We intend to incorporate optical flow during training to further improve performance.

References
[1] Xu, N., Yang, L., Fan, Y., Yang, J., Yue, D., Liang, Y., ... & Huang, T. (2018). YouTube-VOS: Sequence-to-sequence video object segmentation. In Proceedings of the European Conference on Computer Vision (ECCV) (pp. 585-601).
[2] Wug Oh, S., Lee, J. Y., Sunkavalli, K., & Joo Kim, S. (2018). Fast video object segmentation by reference-guided mask propagation. In Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (pp. 7376-7385).
[3] Oh, S. W., Lee, J. Y., Xu, N., & Kim, S. J. (2019). Video object segmentation using space-time memory networks. In Proceedings of the IEEE International Conference on Computer Vision (pp. 9226-9235).
[4] Duarte, K., Rawat, Y. S., & Shah, M. (2019). CapsuleVOS: Semi-supervised video object segmentation using capsule routing. In Proceedings of the IEEE International Conference on Computer Vision (pp. 8480-8489).
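The two YouTube-VOS evaluation metrics mentioned in this poster, region similarity J and boundary measure F, together with the overall score, can be sketched in plain Python. This is a minimal, simplified illustration under stated assumptions, not the official challenge evaluation code. Masks are assumed to be small binary lists of lists. A boundary pixel is taken to be an object pixel with a 4-neighbour that is background or off-image. Boundary pixels are matched within a hypothetical fixed tolerance `tol`, whereas the official benchmark scales its tolerance with image size. All function names here are hypothetical.

```python
from typing import List, Set, Tuple

Mask = List[List[int]]  # hypothetical format: binary mask, 1 = object, 0 = background
Pixel = Tuple[int, int]


def foreground(mask: Mask) -> Set[Pixel]:
    """Return the (row, col) coordinates of all object pixels."""
    return {(r, c) for r, row in enumerate(mask) for c, v in enumerate(row) if v}


def region_jaccard(pred: Mask, gt: Mask) -> float:
    """Region similarity J: intersection over union of the two masks."""
    p, g = foreground(pred), foreground(gt)
    union = p | g
    if not union:  # assumption: two empty masks count as a perfect match
        return 1.0
    return len(p & g) / len(union)


def boundary(mask: Mask) -> Set[Pixel]:
    """Simplified boundary: object pixels with a 4-neighbour outside the object."""
    fg = foreground(mask)
    steps = ((1, 0), (-1, 0), (0, 1), (0, -1))
    return {(r, c) for (r, c) in fg if any((r + dr, c + dc) not in fg for dr, dc in steps)}


def _matched(src: Set[Pixel], dst: Set[Pixel], tol: int) -> int:
    """Count pixels in src within `tol` (Chebyshev distance) of some pixel in dst."""
    return sum(
        1 for (r, c) in src
        if any(max(abs(r - r2), abs(c - c2)) <= tol for (r2, c2) in dst)
    )


def boundary_f(pred: Mask, gt: Mask, tol: int = 1) -> float:
    """Boundary measure F: harmonic mean of boundary precision and recall."""
    bp, bg = boundary(pred), boundary(gt)
    if not bp and not bg:  # assumption: no boundaries on either side is a perfect match
        return 1.0
    if not bp or not bg:
        return 0.0
    precision = _matched(bp, bg, tol) / len(bp)
    recall = _matched(bg, bp, tol) / len(bg)
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def overall_score(j_seen: float, j_unseen: float, f_seen: float, f_unseen: float) -> float:
    """Overall score: mean of J and F over seen and unseen categories (four values)."""
    return (j_seen + j_unseen + f_seen + f_unseen) / 4
```

For example, a 2×2 ground-truth square and a prediction that adds one extra adjacent pixel give J = 4/5 = 0.8. With `tol=1` the extra boundary pixel still lies next to the true boundary, so F = 1.0. With `tol=0` precision drops to 4/5, so F = 8/9. This shows why the boundary tolerance matters when comparing reported F values.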
