
Movement-based checkpointing and logging for failure recovery of database applications in mobile environments

Sapna E. George, Ing-Ray Chen

Presented by Yinan Li, Shuo Miao

April 14, 2009

Agenda

- Introduction
- The Proposed Approach
  - System Model
  - Checkpointing, Logging, and Recovery
- Performance Analysis
  - The SPN Model
  - Results and Analysis
- Summary and Applicability

Failure-Prone Mobile Computing

- Mobile computing is a type of distributed computing in which hosts move while retaining their network connection through unreliable wireless communication
- It is prone to failures. Why?
  - Host mobility
    - Link breakage
    - Limited use of a backend power source
    - Limited battery life
  - Wireless communication
    - Unreliable wireless links
    - Intermittent connectivity
    - Bandwidth limitations

Failure Recovery in Traditional Distributed Systems: Checkpointing

- Process state is periodically saved to stable storage such as hard disks. The saved state is called a checkpoint, sometimes also a snapshot
- Checkpoints may be large, e.g., several KB each
- Independent vs. coordinated
  - Independent checkpointing: each process independently and asynchronously takes local checkpoints
  - Coordinated checkpointing: all processes synchronize to jointly build global checkpoints
- Checkpointing is an expensive operation
  - The process must be suspended while a checkpoint is taken
  - Checkpoints may be large
- How can we reduce the number of checkpointing operations while still enabling rollback recovery?

Failure Recovery in Traditional Distributed Systems: Logging

- Proposed as a complement to checkpointing. It reduces the number of expensive checkpointing operations while still enabling effective recovery
- Records all non-deterministic events that happen in a process between two checkpoints and may change its state, together with the information needed to replay these events
  - Examples: receipt of a message, user input
- Asynchronous recovery is achieved by combining checkpointing with logging

Failure Recovery in Traditional Distributed Systems: Rollback Recovery

- Upon failure, the state of the failed process is rolled back to the most recent checkpoint
- Logged events that happened after the most recent checkpoint are replayed in their original order
- Independent recovery: each process recreates its pre-failure state independently (asynchronously), and computation restarts after rollback
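The checkpoint-plus-log idea from the last three slides can be sketched as a toy in-memory model. This is an illustration, not a real stable-storage implementation. Each logged event is assumed to be a deterministic Python function that mutates the state. `RecoverableProcess` and `deposit` are hypothetical names.

```python
import copy
from dataclasses import dataclass, field
from typing import Callable

# A logged event is modeled as a function that mutates the process state.
# This is a simplification: real logs store message contents or user inputs.
Event = Callable[[dict], None]


@dataclass
class RecoverableProcess:
    state: dict = field(default_factory=dict)       # volatile state
    checkpoint: dict = field(default_factory=dict)  # copy kept on "stable storage"
    log: list = field(default_factory=list)         # events since the checkpoint

    def take_checkpoint(self) -> None:
        # Save a snapshot; earlier events no longer need replaying.
        self.checkpoint = copy.deepcopy(self.state)
        self.log.clear()

    def handle(self, event: Event) -> None:
        # Log the non-deterministic event first, then apply it.
        self.log.append(event)
        event(self.state)

    def crash(self) -> None:
        # Volatile state is lost; the checkpoint and the log survive.
        self.state = {}

    def recover(self) -> None:
        # Roll back to the most recent checkpoint, then replay in original order.
        self.state = copy.deepcopy(self.checkpoint)
        for event in self.log:
            event(self.state)


def deposit(amount: int) -> Event:
    # Hypothetical example event: add `amount` to a balance.
    def apply(state: dict) -> None:
        state["balance"] = state.get("balance", 0) + amount
    return apply


p = RecoverableProcess()
p.handle(deposit(10))
p.take_checkpoint()
p.handle(deposit(5))
p.handle(deposit(7))
p.crash()
p.recover()
print(p.state)  # {'balance': 22}
```

Only the two events after the checkpoint are replayed. This is why logging lets checkpoints be taken less often without losing recoverability.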

New Challenges Brought by Mobile Computing

- Are traditional methods still directly applicable? Rethinking…
- Properties of mobile computing that complicate the situation
  - Mobility of hosts
  - Unreliable wireless network connectivity
  - Low wireless bandwidth
  - Limited battery life of mobile devices
  - Lack of stable storage on mobile hosts
- Different types of failures
  - Voluntary disconnection (to save battery life)
  - Hardware failure
  - Transmission errors due to noise
  - Software failure

The Proposed Approach (1/2)

- Why is it proposed?
  - Traditional recovery schemes may not work, due to the characteristics of mobile computing and the challenges imposed by mobile applications
- Limitations of existing methods
  - Checkpoints are taken periodically
    - If checkpointing is frequent, the additional overhead is large
    - If it is infrequent, the recovery cost may be very large: the current mobile support station (MSS) of the mobile host (MH) may be far from the MSS that stores the checkpoint, and the logs may be widely dispersed
  - One rigid mechanism is applied to the whole system, lacking the flexibility of per-user methods

The Proposed Approach (2/2)

- Proposed approach
  - Movement-based instead of periodic: a checkpoint is taken only when a mobile host has made M handoffs
  - Goal: find the optimal M that minimizes the total recovery cost
- Advantages
  - Minimization of the total recovery cost. The optimal threshold M ensures that:
    - checkpoints stay close to the recovery MSS
    - logs are not too widely dispersed
    - the overhead of unnecessary checkpoints and logs is avoided
  - Per-user: M is a function of the failure rate, log arrival rate, and mobility rate of each MH, so it adapts to specific user behavior
  - Wide-range applicability

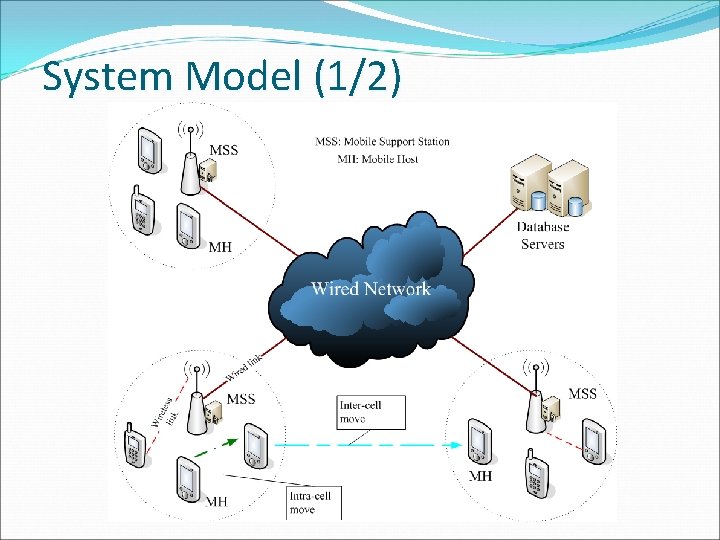

System Model (1/2)

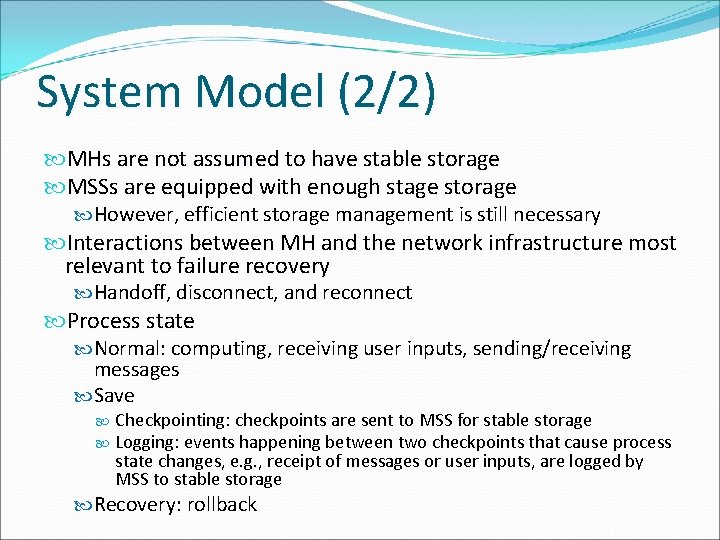

System Model (2/2)

- MHs are not assumed to have stable storage
- MSSs are equipped with enough stable storage; however, efficient storage management is still necessary
- Interactions between an MH and the network infrastructure most relevant to failure recovery: handoff, disconnect, and reconnect
- Process states
  - Normal: computing, receiving user inputs, sending/receiving messages
  - Save
    - Checkpointing: checkpoints are sent to the MSS for stable storage
    - Logging: events between two checkpoints that change the process state (e.g., receipt of messages or user inputs) are logged by the MSS to stable storage
  - Recovery: rollback

Checkpointing & Logging (1/3)

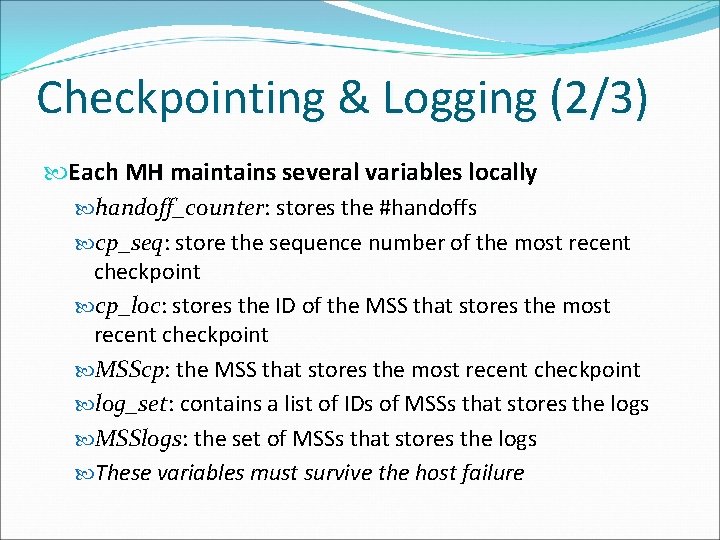

Checkpointing & Logging (2/3)

- Each MH maintains several variables locally
  - handoff_counter: the number of handoffs since the last checkpoint
  - cp_seq: the sequence number of the most recent checkpoint
  - cp_loc: the ID of the MSS that stores the most recent checkpoint, denoted MSScp
  - log_set: the list of IDs of the MSSs that store the logs; this set of MSSs is denoted MSSlogs
- These variables must survive a host failure

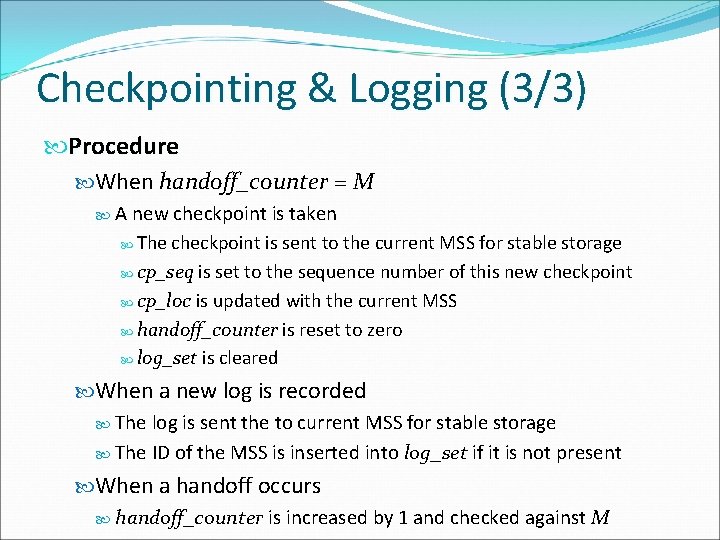

Checkpointing & Logging (3/3): Procedure

- When handoff_counter = M
  - A new checkpoint is taken and sent to the current MSS for stable storage
  - cp_seq is set to the sequence number of the new checkpoint
  - cp_loc is updated to the current MSS
  - handoff_counter is reset to zero
  - log_set is cleared
- When a new log entry is recorded
  - The entry is sent to the current MSS for stable storage
  - The ID of that MSS is inserted into log_set if it is not already present
- When a handoff occurs
  - handoff_counter is incremented by 1 and checked against M

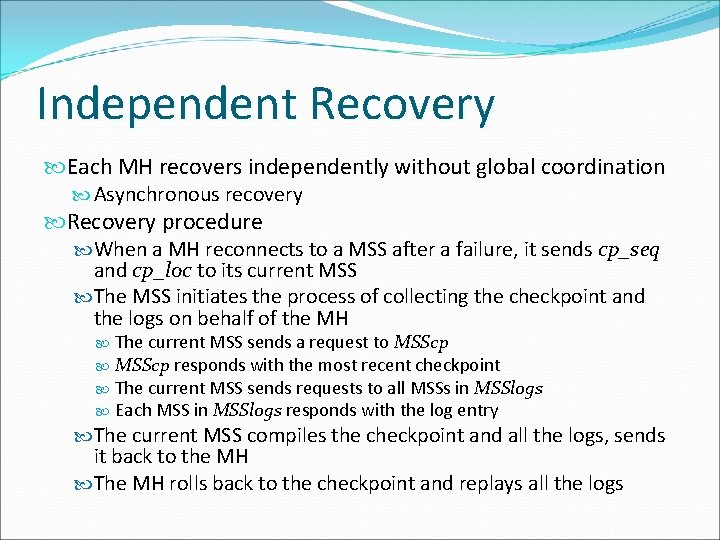
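The per-MH variables and the three triggers above can be sketched in a few classes. Several choices here are assumptions, not the authors' code:

- Stable storage at each MSS is modeled as in-memory dicts keyed by `(host_id, cp_seq)`.
- The classes `MSS` and `MobileHost` are hypothetical.
- Persisting the MH variables across a crash is not modeled.

```python
import copy
from dataclasses import dataclass, field
from typing import Any


@dataclass
class MSS:
    """Hypothetical mobile support station with stable storage."""
    mss_id: str
    checkpoints: dict = field(default_factory=dict)  # (host_id, cp_seq) -> state
    logs: dict = field(default_factory=dict)         # (host_id, cp_seq) -> entries


@dataclass
class MobileHost:
    host_id: str
    M: int               # movement threshold
    current: MSS         # MSS serving the MH's current cell
    state: Any = None    # application state (volatile)
    # The variables below must survive a host failure; persisting them
    # is outside the scope of this sketch.
    handoff_counter: int = 0
    cp_seq: int = 0
    cp_loc: str = ""     # ID of MSScp
    log_set: list = field(default_factory=list)  # IDs of MSSlogs

    def take_checkpoint(self) -> None:
        # Send a snapshot to the current MSS and reset the per-interval variables.
        self.cp_seq += 1
        self.current.checkpoints[(self.host_id, self.cp_seq)] = copy.deepcopy(self.state)
        self.cp_loc = self.current.mss_id
        self.handoff_counter = 0
        self.log_set.clear()

    def record_log(self, entry: Any) -> None:
        # Store the entry at the current MSS and remember which MSS holds it.
        key = (self.host_id, self.cp_seq)
        self.current.logs.setdefault(key, []).append(entry)
        if self.current.mss_id not in self.log_set:
            self.log_set.append(self.current.mss_id)

    def handoff(self, new_mss: MSS) -> None:
        # Movement-based trigger: checkpoint once M handoffs have accumulated.
        self.current = new_mss
        self.handoff_counter += 1
        if self.handoff_counter == self.M:
            self.take_checkpoint()


cells = [MSS(f"mss{k}") for k in range(3)]
mh = MobileHost("mh1", M=2, current=cells[0], state={"x": 0})
mh.take_checkpoint()         # initial checkpoint at mss0
mh.record_log("a")
mh.handoff(cells[1])
mh.record_log("b")
print(mh.log_set)            # ['mss0', 'mss1']
mh.handoff(cells[2])         # second handoff reaches M = 2
print(mh.cp_loc, mh.cp_seq)  # mss2 2
```

Keying logs by `cp_seq` ties each log entry to the checkpoint it follows. Stale entries from earlier intervals are therefore never mixed into a recovery.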

Independent Recovery

- Each MH recovers independently, without global coordination (asynchronous recovery)
- Recovery procedure
  1. When an MH reconnects to an MSS after a failure, it sends cp_seq and cp_loc to its current MSS
  2. The current MSS collects the checkpoint and the logs on behalf of the MH
  3. The current MSS sends a request to MSScp, which responds with the most recent checkpoint
  4. The current MSS sends requests to all MSSs in MSSlogs; each responds with its log entries
  5. The current MSS compiles the checkpoint and all the logs and sends them back to the MH
  6. The MH rolls back to the checkpoint and replays all the logs
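The recovery steps can be sketched as two functions: one runs at the current MSS (MSSrec) and one on the MH. Several assumptions apply:

- The storage layout is hypothetical.
- The MH is assumed to also pass log_set, since MSSrec must know MSSlogs.
- Each log entry carries a sequence number, so replay order is correct even if the MH revisited an MSS.

`collect` and `recover` are illustrative names.

```python
import copy
from typing import Any, Callable

# Hypothetical layout of stable storage at each MSS:
#   storage[mss_id]["checkpoints"][(host_id, cp_seq)] -> checkpointed state
#   storage[mss_id]["logs"][(host_id, cp_seq)]        -> [(log_seq, entry), ...]
Storage = dict


def collect(storage: Storage, host_id: str, cp_seq: int, cp_loc: str,
            log_set: list) -> tuple:
    """Run by the current MSS (MSSrec) on behalf of the recovering MH."""
    key = (host_id, cp_seq)
    # Request the most recent checkpoint from MSScp.
    checkpoint = storage[cp_loc]["checkpoints"][key]
    # Request log entries from every MSS in MSSlogs.
    entries = []
    for mss_id in log_set:
        entries.extend(storage[mss_id]["logs"].get(key, []))
    # Per-entry sequence numbers (an assumption) restore the original order.
    entries.sort(key=lambda e: e[0])
    return checkpoint, [entry for _, entry in entries]


def recover(checkpoint: Any, logs: list, apply: Callable[[Any, Any], Any]) -> Any:
    """Run on the MH: roll back to the checkpoint, then replay all logs in order."""
    state = copy.deepcopy(checkpoint)
    for entry in logs:
        state = apply(state, entry)
    return state


storage = {
    "mssA": {"checkpoints": {("mh1", 3): {"bal": 10}},
             "logs": {("mh1", 3): [(1, 5), (3, -2)]}},
    "mssB": {"checkpoints": {}, "logs": {("mh1", 3): [(2, 7)]}},
}
cp, logs = collect(storage, "mh1", cp_seq=3, cp_loc="mssA", log_set=["mssA", "mssB"])
state = recover(cp, logs, lambda s, amount: {"bal": s["bal"] + amount})
print(logs, state)  # [5, 7, -2] {'bal': 20}
```

No other MH takes part in this exchange, which is what makes the recovery independent.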

Performance Analysis: SPN Model

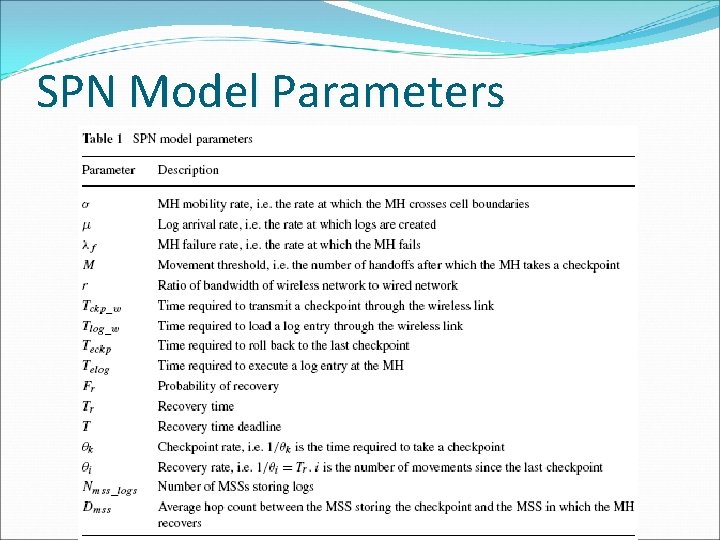

SPN Model Parameters

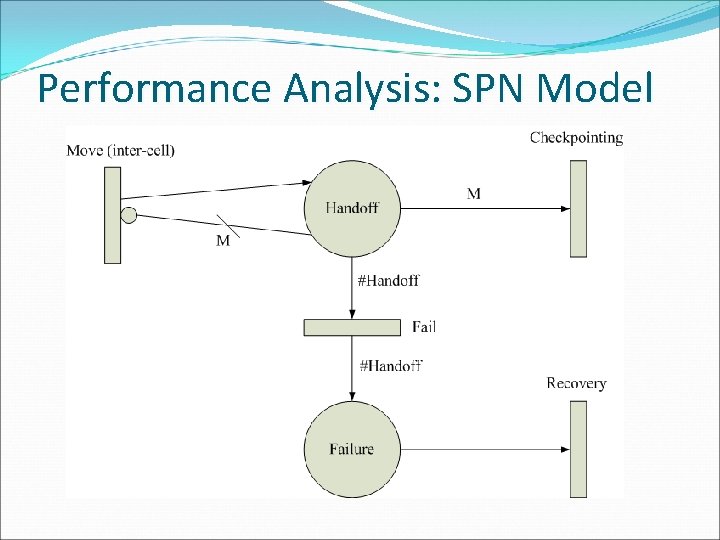

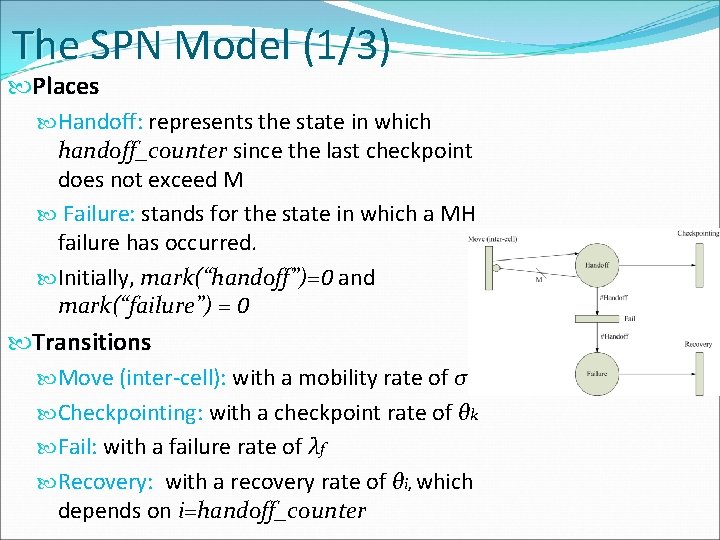

The SPN Model (1/3)

- Places
  - Handoff: the state in which the number of handoffs since the last checkpoint (handoff_counter) does not exceed M
  - Failure: the state in which an MH failure has occurred
  - Initially, mark("Handoff") = 0 and mark("Failure") = 0
- Transitions
  - Move (inter-cell): mobility rate σ
  - Checkpointing: checkpoint rate θk
  - Fail: failure rate λf
  - Recovery: recovery rate θi, which depends on i = handoff_counter

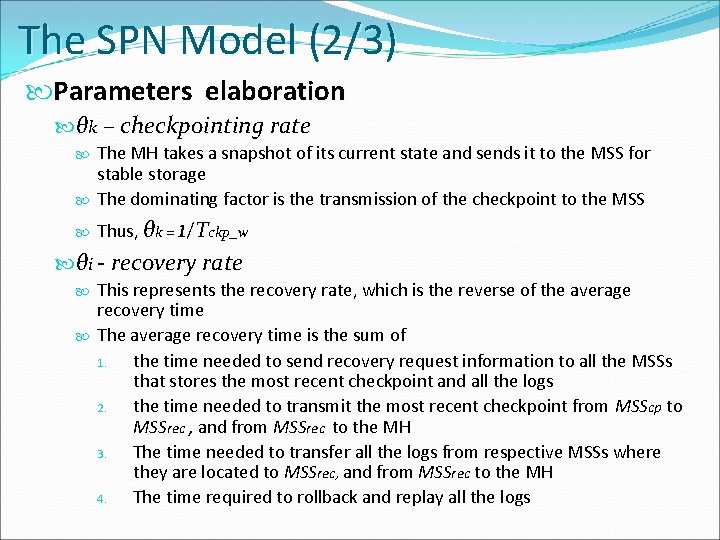

The SPN Model (2/3): Parameters

- θk: checkpointing rate
  - The MH takes a snapshot of its current state and sends it to the MSS for stable storage
  - The dominant factor is transmitting the checkpoint to the MSS, so θk = 1/Tckp_w, where Tckp_w is the time to transmit a checkpoint over the wireless link
- θi: recovery rate, the reciprocal of the average recovery time. The average recovery time is the sum of:
  1. the time needed to send recovery requests to all the MSSs that store the most recent checkpoint and the logs
  2. the time needed to transmit the most recent checkpoint from MSScp to MSSrec, and from MSSrec to the MH
  3. the time needed to transfer all the logs from the MSSs where they are located to MSSrec, and from MSSrec to the MH
  4. the time required to roll back and replay all the logs

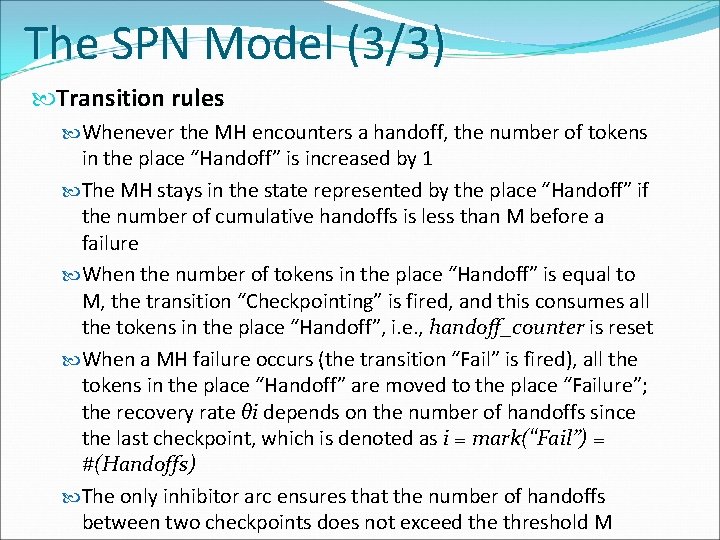

The SPN Model (3/3): Transition Rules

- Whenever the MH makes a handoff, the number of tokens in place "Handoff" increases by 1
- The MH stays in the state represented by "Handoff" as long as no failure occurs and the number of cumulative handoffs is less than M
- When the number of tokens in "Handoff" equals M, transition "Checkpointing" fires and consumes all tokens in "Handoff", i.e., handoff_counter is reset
- When an MH failure occurs (transition "Fail" fires), all tokens in "Handoff" move to place "Failure"
  - The recovery rate θi depends on the number of handoffs since the last checkpoint, i = mark("Failure")
- The only inhibitor arc ensures that the number of handoffs between two checkpoints does not exceed the threshold M

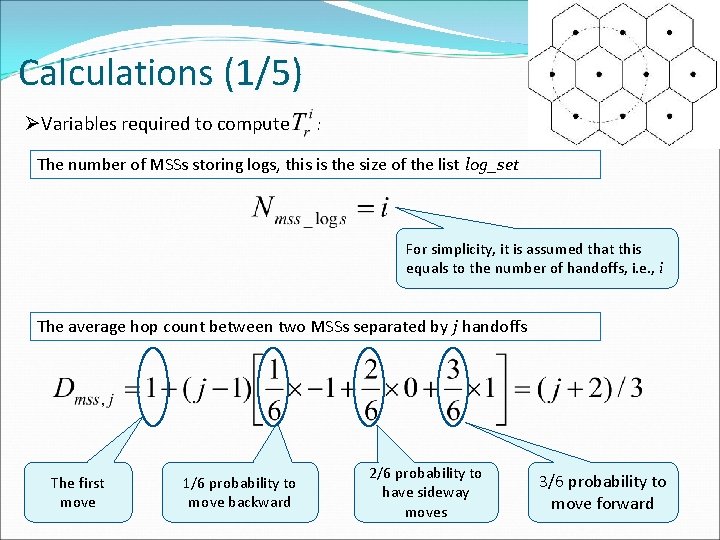

Calculations (1/5)

- Variables required for the computation
  - The number of MSSs storing logs, i.e., the size of log_set. For simplicity, it is assumed to equal the number of handoffs, i
  - The average hop count between two MSSs separated by j handoffs
    - For the first move: probability 1/6 of moving backward, 2/6 of moving sideways, and 3/6 of moving forward

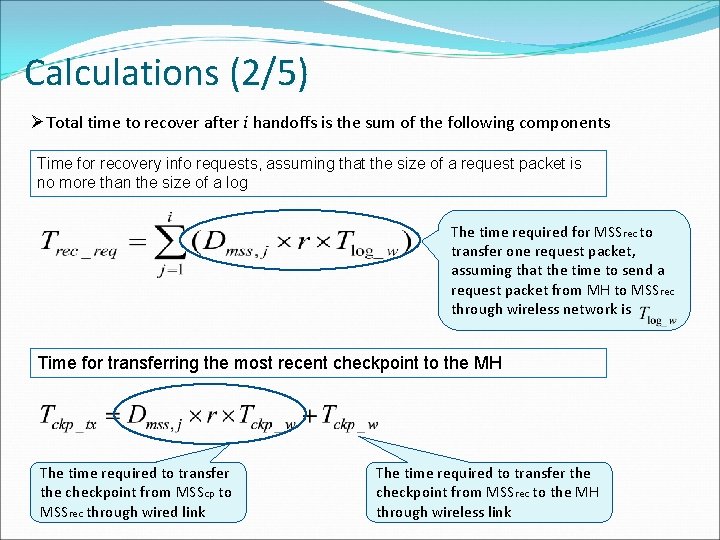
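The average hop count between MSSs j handoffs apart can be computed exactly by enumerating a random walk on a hexagonal cell grid. This sketch rests on two assumptions:

- Each handoff goes to one of the six neighboring cells with probability 1/6.
- The wired hop count between two MSSs equals the distance between their cells.

`average_hops` and `hex_distance` are hypothetical helpers. For a host one cell away from its start, enumeration reproduces the 1/6 backward, 2/6 sideways, 3/6 forward split.

```python
from collections import defaultdict

# Axial-coordinate offsets of the six neighbors of a hexagonal cell.
NEIGHBORS = [(1, 0), (1, -1), (0, -1), (-1, 0), (-1, 1), (0, 1)]


def hex_distance(q: int, r: int) -> int:
    """Number of cell crossings from the origin to axial cell (q, r)."""
    return (abs(q) + abs(r) + abs(q + r)) // 2


def average_hops(j: int) -> float:
    """Expected distance from the starting cell after j random handoffs,
    each to one of the six neighbors with probability 1/6 (assumed)."""
    dist = {(0, 0): 1.0}  # probability of occupying each cell
    for _ in range(j):
        nxt = defaultdict(float)
        for (q, r), p in dist.items():
            for dq, dr in NEIGHBORS:
                nxt[(q + dq, r + dr)] += p / 6
        dist = nxt
    return sum(p * hex_distance(q, r) for (q, r), p in dist.items())


# average_hops(2) == 4/3: from a neighbor cell, 1/6 back (0), 2/6 sideways (1),
# 3/6 forward (2), giving (0 + 2 + 6) / 6.
for j in range(5):
    print(j, round(average_hops(j), 4))
```

The expected distance grows much more slowly than j, which is one reason a checkpoint taken M handoffs ago can still be relatively close to the recovery MSS.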

Calculations (2/5)

- The total time to recover after i handoffs is the sum of the following components
  - Time for recovery information requests, assuming that a request packet is no larger than a log entry
    - The time required for MSSrec to transfer one request packet, assuming the time to send a request packet from the MH to MSSrec through the wireless network given in the figure below
  - Time for transferring the most recent checkpoint to the MH
    - The time required to transfer the checkpoint from MSScp to MSSrec through the wired link
    - The time required to transfer the checkpoint from MSSrec to the MH through the wireless link

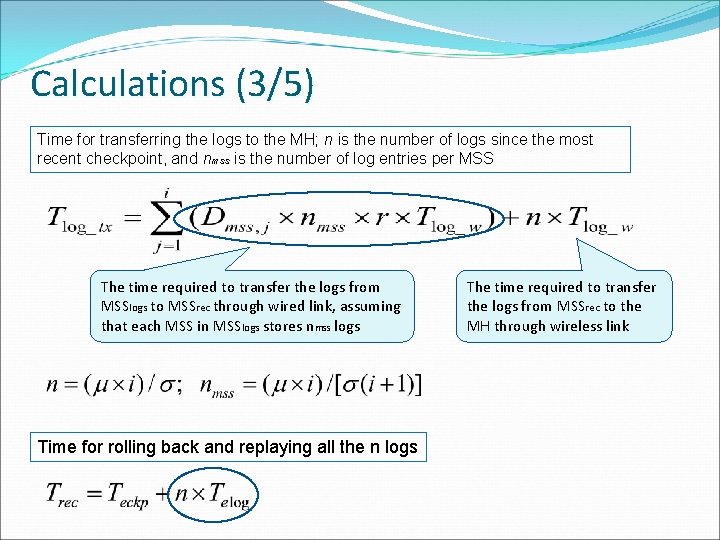

Calculations (3/5)

- Time for transferring the logs to the MH, where n is the number of logs since the most recent checkpoint and nmss is the number of log entries per MSS
  - The time required to transfer the logs from MSSlogs to MSSrec through the wired link, assuming that each MSS in MSSlogs stores nmss logs
  - The time required to transfer the logs from MSSrec to the MH through the wireless link
- Time for rolling back and replaying all n logs

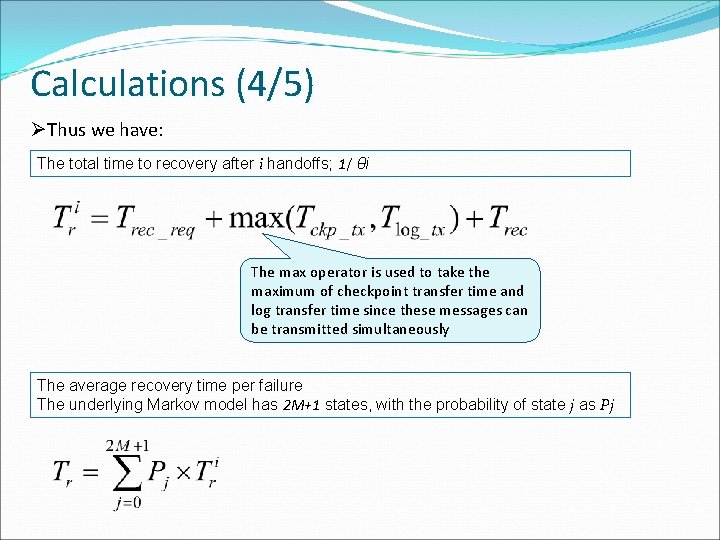

Calculations (4/5)

- Thus we have the total time to recover after i handoffs, 1/θi
  - The max operator takes the maximum of the checkpoint transfer time and the log transfer time, since these messages can be transmitted simultaneously
- The average recovery time per failure
  - The underlying Markov model has 2M+1 states; the probability of state j is Pj

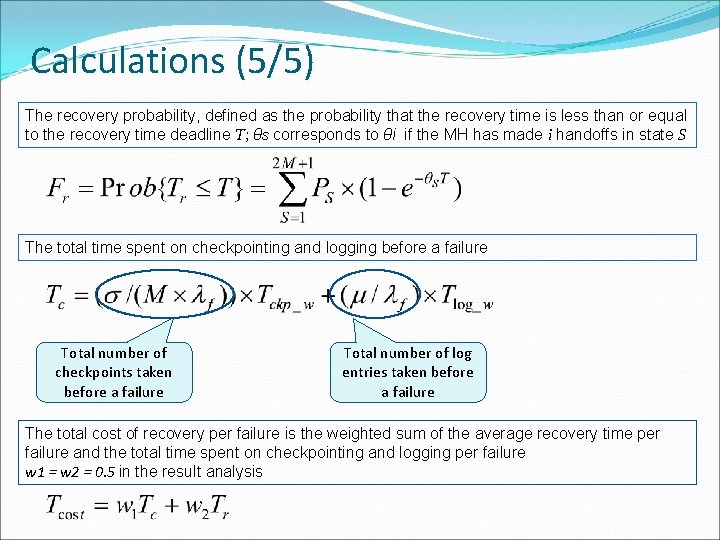
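The components listed on the last three slides combine into 1/θi roughly as follows. This is one reading of the slides, not the paper's exact equation. Two things are assumptions:

- The max is applied to the complete checkpoint path (wired + wireless) versus the complete log path.
- The request and replay times are added outside the max.

All function and parameter names are hypothetical. `transfer_time` is an assumed store-and-forward helper for turning sizes, bandwidths, and hop counts into times.

```python
def recovery_time(t_request: float,
                  t_ckp_wired: float, t_ckp_wireless: float,
                  t_logs_wired: float, t_logs_wireless: float,
                  t_replay: float) -> float:
    """Assumed reading of 1/theta_i, the recovery time after i handoffs."""
    # Checkpoint path: MSScp -> MSSrec (wired), then MSSrec -> MH (wireless).
    t_ckp = t_ckp_wired + t_ckp_wireless
    # Log path: MSSlogs -> MSSrec (wired), then MSSrec -> MH (wireless).
    t_logs = t_logs_wired + t_logs_wireless
    # Both paths run simultaneously, so only the slower one adds to the total.
    return t_request + max(t_ckp, t_logs) + t_replay


def recovery_rate(*components: float) -> float:
    """theta_i is the reciprocal of the recovery time."""
    return 1.0 / recovery_time(*components)


def transfer_time(size: float, bandwidth: float, hops: float = 1.0) -> float:
    """Hypothetical store-and-forward model: hops * size / bandwidth."""
    return hops * size / bandwidth


print(recovery_time(1.0, 2.0, 3.0, 1.0, 1.0, 0.5))  # 6.5
```

In this reading, growing i (more handoffs since the checkpoint) raises the wired hop counts and the number of logs. That lengthens one of the two paths inside the max and so lowers θi.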

Calculations (5/5)

- The recovery probability is the probability that the recovery time is less than or equal to the recovery deadline T
  - θS corresponds to θi if the MH has made i handoffs in state S
- The total time spent on checkpointing and logging before a failure
  - Total number of checkpoints taken before a failure
  - Total number of log entries recorded before a failure
- The total cost of recovery per failure is the weighted sum of the average recovery time per failure and the total time spent on checkpointing and logging per failure
  - w1 = w2 = 0.5 in the result analysis

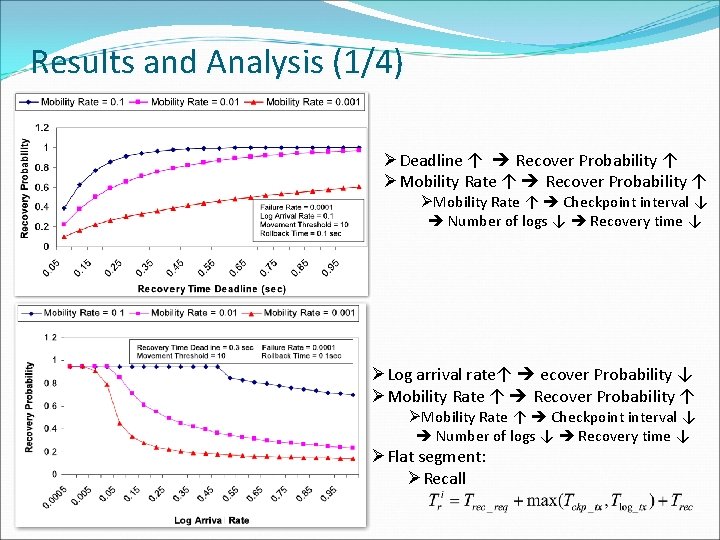
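Given steady-state probabilities P_S for the failure states and their recovery rates θS, the quantities on this slide can be evaluated mechanically. The sketch makes these assumptions:

- The recovery time in state S is exponentially distributed with rate θS, as for a timed SPN transition.
- Averages are conditioned on the MH being in some failure state.

The paper's exact normalization may differ. The function names are hypothetical.

```python
import math


def avg_recovery_time(failure_states: list) -> float:
    """failure_states: list of (P_S, theta_S), one pair per failure state S.
    Averages 1/theta_S, conditioned on being in a failure state (assumed)."""
    total = sum(p for p, _ in failure_states)
    return sum(p / theta for p, theta in failure_states) / total


def recovery_probability(failure_states: list, deadline: float) -> float:
    """P(recovery time <= deadline), assuming the recovery time in state S
    is exponential with rate theta_S."""
    total = sum(p for p, _ in failure_states)
    return sum(p * (1.0 - math.exp(-theta * deadline))
               for p, theta in failure_states) / total


def total_cost(t_r: float, t_c: float, w1: float = 0.5, w2: float = 0.5) -> float:
    """Weighted sum of recovery time per failure (Tr) and checkpoint/logging
    time per failure (Tc), with w1 = w2 = 0.5 as in the result analysis."""
    return w1 * t_r + w2 * t_c
```

Raising the deadline T pushes every term 1 - exp(-θS T) toward 1. This matches the "Deadline ↑, Recovery probability ↑" trend reported in the results.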

Results and Analysis (1/4)

- Deadline ↑: recovery probability ↑
- Mobility rate ↑: checkpoint interval ↓, number of logs ↓, recovery time ↓, recovery probability ↑
- Log arrival rate ↑: recovery probability ↓
- Flat segment: Recall

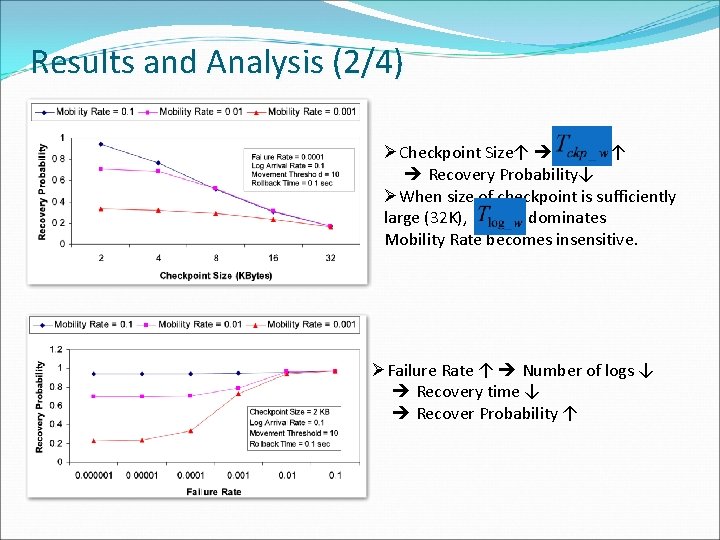

Results and Analysis (2/4)

- Checkpoint size ↑: recovery probability ↓
- When the checkpoint is sufficiently large (32 K), the checkpoint transfer time dominates and the results become insensitive to the mobility rate
- Failure rate ↑: number of logs ↓, recovery time ↓, recovery probability ↑

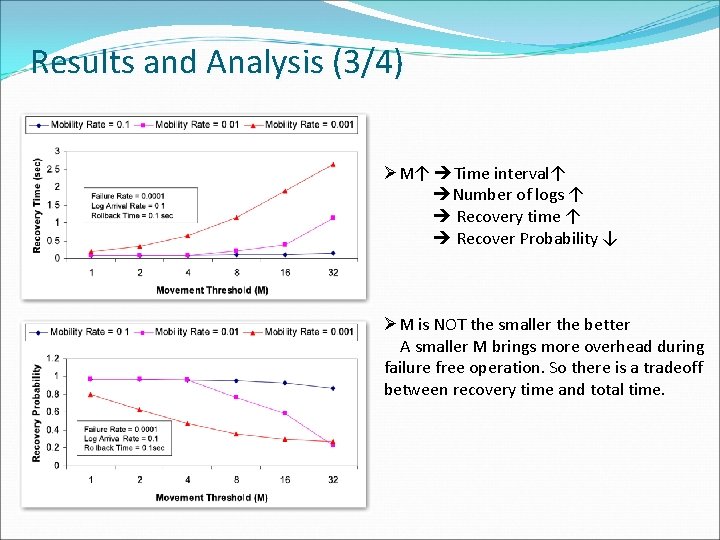

Results and Analysis (3/4)

- M ↑: time interval between checkpoints ↑, number of logs ↑, recovery time ↑, recovery probability ↓
- A smaller M is not always better: it brings more overhead during failure-free operation, so there is a tradeoff between recovery time and total time

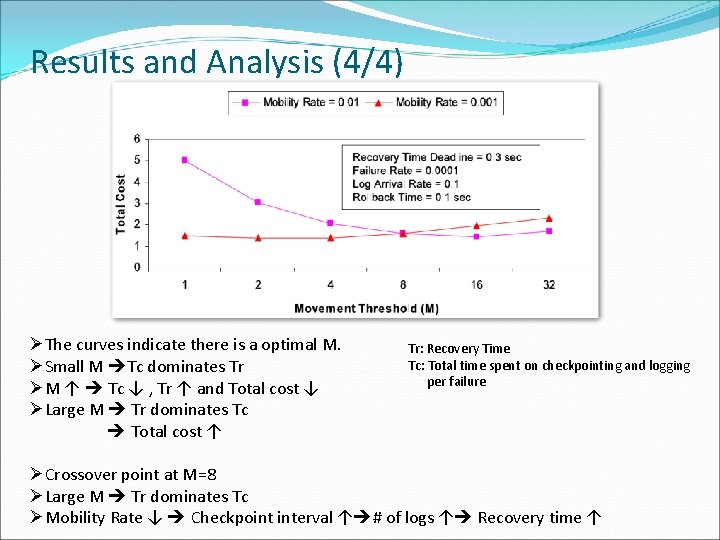

Results and Analysis (4/4)

- The curves indicate that there is an optimal M
- Small M: Tc dominates Tr; as M ↑, Tc ↓, Tr ↑, and total cost ↓
- Large M: Tr dominates Tc, and total cost ↑
- Crossover point at M = 8
- Mobility rate ↓: checkpoint interval ↑, number of logs ↑, recovery time ↑
- Tr: recovery time; Tc: total time spent on checkpointing and logging per failure

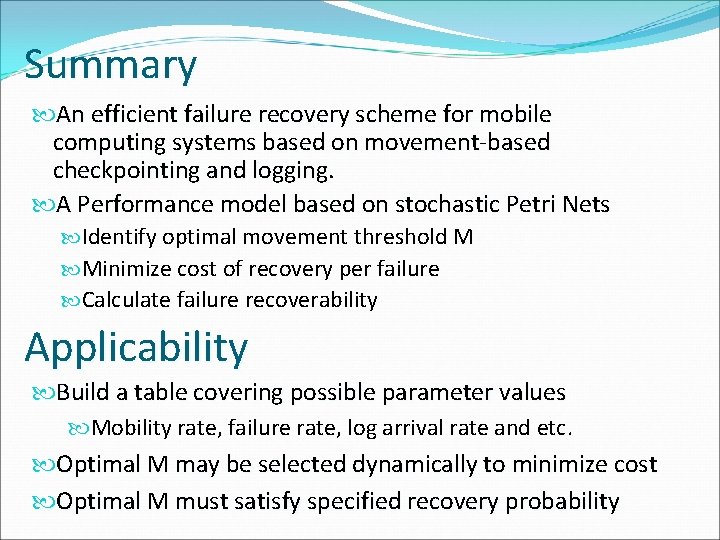

Summary

- An efficient failure recovery scheme for mobile computing systems based on movement-based checkpointing and logging
- A performance model based on stochastic Petri nets to
  - identify the optimal movement threshold M
  - minimize the cost of recovery per failure
  - calculate failure recoverability
- Applicability
  - Build a table covering possible parameter values: mobility rate, failure rate, log arrival rate, etc.
  - The optimal M may be selected dynamically to minimize cost
  - The optimal M must satisfy the specified recovery probability
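The table-driven selection of M described under Applicability can be sketched as follows, under these assumptions:

- The table was filled offline by evaluating the model at grid points of (mobility rate, failure rate, log arrival rate).
- An MH uses the nearest grid point. Raw Euclidean distance is used here; rates on very different scales would call for normalization first.

`Candidate`, `select_M`, and `lookup_M` are hypothetical names.

```python
from typing import NamedTuple, Optional


class Candidate(NamedTuple):
    M: int                # movement threshold
    cost: float           # total cost of recovery per failure
    recovery_prob: float  # probability of recovering within the deadline


def select_M(candidates: list, min_recovery_prob: float) -> Optional[int]:
    """Cheapest M whose recovery probability meets the requirement, or None."""
    feasible = [c for c in candidates if c.recovery_prob >= min_recovery_prob]
    if not feasible:
        return None
    return min(feasible, key=lambda c: c.cost).M


def lookup_M(table: dict, rates: tuple, min_recovery_prob: float) -> Optional[int]:
    """table maps (mobility rate, failure rate, log arrival rate) grid points
    to candidate lists; the nearest grid point to `rates` is used."""
    nearest = min(table, key=lambda k: sum((a - b) ** 2 for a, b in zip(k, rates)))
    return select_M(table[nearest], min_recovery_prob)
```

Filtering on recovery probability before minimizing cost enforces both stated conditions. The chosen M is the cheapest option that still meets the required recoverability, even when the unconstrained cost minimum would violate it.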

Questions?
