Morphosyntactic correspondence: a progress report on bitext parsing

Morphosyntactic correspondence: a progress report on bitext parsing § Alexander Fraser, Renjing Wang, Hinrich Schütze § Institute for NLP, University of Stuttgart § INFuture 2009: Digital Resources and Knowledge Sharing § Nov 4th 2009, Zagreb

Outline § The Institute for Natural Language Processing at the University of Stuttgart § Bitext parsing § Using morphosyntactic correspondence

IfNLP Stuttgart § The Institute for Natural Language Processing (IfNLP/IMS) at the University of Stuttgart § Dogil (Phonetics and Speech) § Large department § Kuhn/Rohrer (LFG syntax and semantics) § Cahill (LFG generation) § Heid (Terminology extraction, morphology) § Padó (Semantics, lexical semantics) § Schütze (Statistical NLP and Information Retrieval) § More on next slide

IfNLP – Statistical NLP Group § Hinrich Schütze (director since 2004) § Bernd Möbius – Speech recognition and synthesis § Helmut Schmid – Parsing, morphology (known for TreeTagger, BitPar) § Sabine Schulte im Walde – NLP and cognitive modeling of lexical semantics § Michael Walsh – Speech, exemplar theoretic syntax § Alex Fraser – Statistical machine translation, parsing, cross-lingual information retrieval § General department areas of research § New statistical NLP models and methods § Semi-supervised and active learning § Cognitive/linguistic representation models § Applied to: NLP, retrieval, MT, speech, e-learning, …

IfNLP – Partnerships § Stuttgart: large projects with linguistics, computer science, EE signal processing, high performance computing § Germany: Darmstadt, Tübingen, DSPIN/CLARIN consortium (UIMA-based German processing) § International: large French-led European project (6 universities, 4 industrial partners), collaborations on South African languages, Edinburgh, CLARIN § Industrial: various projects with publishers (many focusing on terminology)


What is bitext parsing? § Bitext: a text and its translation § Sentences and their translations are aligned § Sometimes called a parallel corpus § Syntactic parsing: automatically find the syntactic structure of a sentence (syntactic parse) § Bitext parsing: automatically find the syntactic structure of the parallel sentences in a bitext § We will use the complementarity of the syntax of the two languages to obtain improved parses

Motivation for bitext parsing § Many advances in syntactic parsing come from better modeling § But the overall bottleneck is the size of the treebank § Our research asks a different question: § Where can we (cheaply) obtain additional information, which helps to supplement the treebank? § A new information source for resolving ambiguity is a translation § The human translator understands the sentence and disambiguates for us! § Our research goal was to build large databases of improved parses to help establish preferences for difficult phenomena like PP-attachment

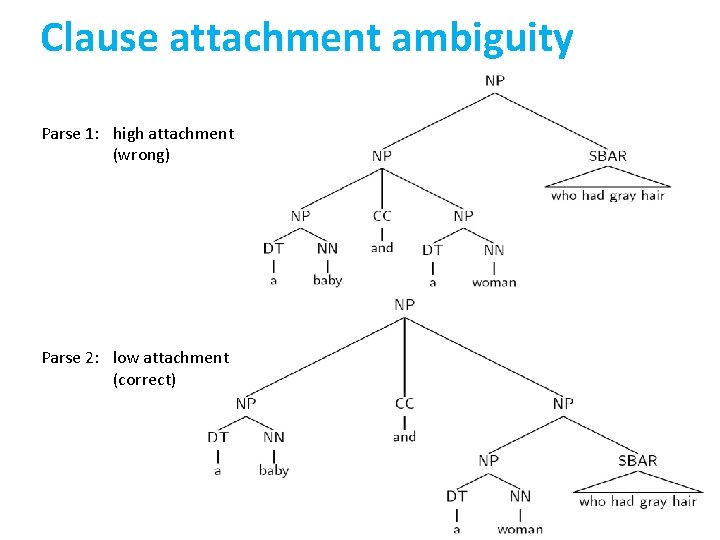

Clause attachment ambiguity § Parse 1: high attachment (wrong) § Parse 2: low attachment (correct)

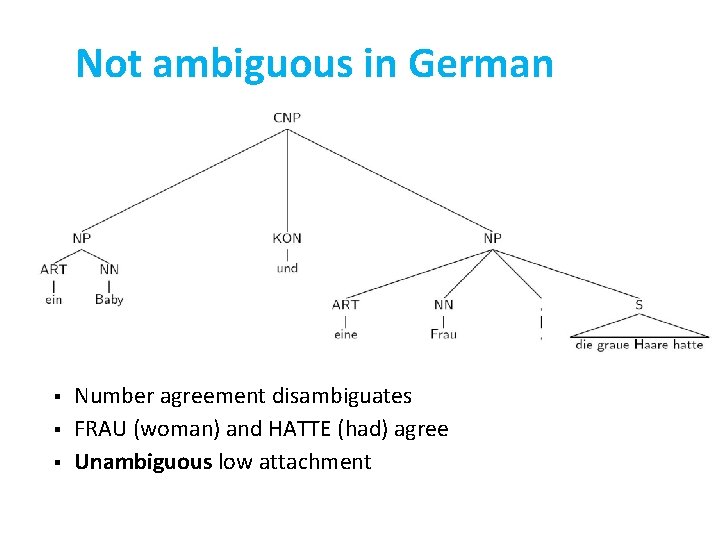

Not ambiguous in German § Number agreement disambiguates § FRAU (woman) and HATTE (had) agree § Unambiguous low attachment

Parse reranking of bitext § Goal: improve English parsing accuracy § Parse English sentence, obtain list of 100 best parse candidates § Parse German sentence, obtain single best parse § Determine the correspondence of German to English words using a word alignment § Calculate syntactic divergence of each English parse candidate and the projection of the German parse § Choose probable English parse candidate with low syntactic divergence
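The reranking steps can be sketched in a few lines of Python. This is a minimal illustration, not the system described in the talk: it assumes constituents are represented as word-index spans, it uses a single made-up divergence measure (the fraction of projected German constituents that are absent from the English candidate) in place of the actual feature set, and all names (`project_span`, `divergence`, `rerank`) and the toy data are hypothetical.

```python
def project_span(span, alignment):
    """Project a German span (start, end), end exclusive, onto English.

    alignment: set of (german_index, english_index) word links.
    Returns the smallest English span covering all linked words, or None
    if no word in the German span is aligned.
    """
    start, end = span
    targets = [e for g, e in alignment if start <= g < end]
    if not targets:
        return None
    return (min(targets), max(targets) + 1)


def divergence(english_spans, german_spans, alignment):
    """Fraction of projected German constituents missing from the English parse."""
    projected = [project_span(s, alignment) for s in german_spans]
    projected = [p for p in projected if p is not None]
    if not projected:
        return 0.0
    missing = sum(1 for p in projected if p not in english_spans)
    return missing / len(projected)


def rerank(candidates, german_spans, alignment, weight=1.0):
    """Return the index of the candidate with the best combined score.

    candidates: list of (log_prob, set_of_english_spans) pairs.
    The score is the parser log-probability minus the weighted divergence.
    """
    def score(candidate):
        log_prob, spans = candidate
        return log_prob - weight * divergence(spans, german_spans, alignment)

    return max(range(len(candidates)), key=lambda i: score(candidates[i]))


# Toy data (assumed): five words per sentence, monotone one-to-one alignment.
alignment = {(i, i) for i in range(5)}
german_spans = [(0, 5), (0, 2), (2, 5), (3, 5)]
candidates = [
    (-10.0, {(0, 5), (0, 3), (3, 5)}),          # more probable, diverges from German
    (-10.4, {(0, 5), (0, 2), (2, 5), (3, 5)}),  # less probable, matches German
]
best = rerank(candidates, german_spans, alignment)
```

With `weight=0.0` the baseline parser's first choice wins; with a positive weight the candidate that agrees with the projected German structure can overtake it.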

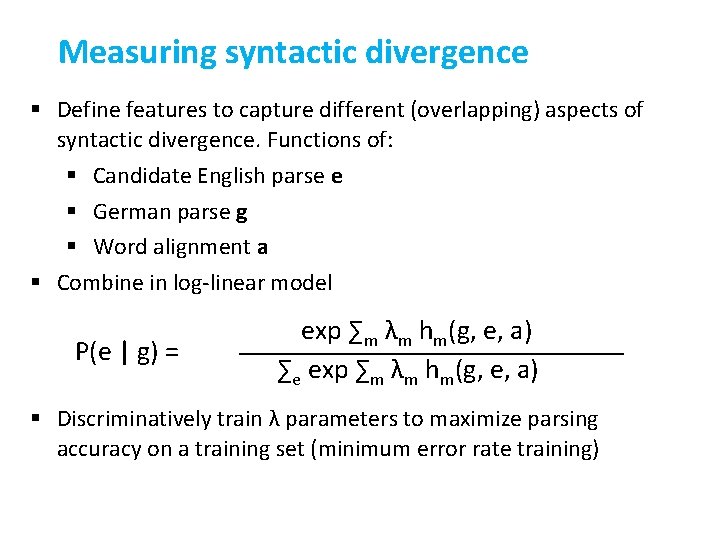

Measuring syntactic divergence § Define features to capture different (overlapping) aspects of syntactic divergence. Functions of: § Candidate English parse e § German parse g § Word alignment a § Combine in log-linear model: $P(e \mid g) = \frac{\exp \sum_m \lambda_m h_m(g, e, a)}{\sum_{e'} \exp \sum_m \lambda_m h_m(g, e', a)}$ § Discriminatively train λ parameters to maximize parsing accuracy on a training set (minimum error rate training)
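As a rough illustration of how a log-linear model turns feature values into a distribution over candidate parses, the sketch below computes the weighted feature sum for each candidate and normalizes over the candidate list. The function name, the two features, and all numbers are assumptions made for the example; they are not values from the talk.

```python
import math


def rerank_probabilities(feature_vectors, weights):
    """Return P(e | g) for each candidate parse under a log-linear model.

    feature_vectors: one list of feature values h_m(g, e, a) per candidate e.
    weights: the lambda_m values, one per feature.
    """
    scores = [sum(w * h for w, h in zip(weights, feats))
              for feats in feature_vectors]
    # Subtract the maximum score before exponentiating for numerical
    # stability; the normalized probabilities are unchanged.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [x / total for x in exps]


# Hypothetical candidates with two features each:
# [baseline parser log-probability, negated divergence measure]
candidates = [[-10.0, -3.0], [-10.5, -1.0], [-12.0, -0.5]]
weights = [1.0, 0.5]
probs = rerank_probabilities(candidates, weights)
best = max(range(len(probs)), key=probs.__getitem__)
```

Because the denominator is the same for every candidate, picking the most probable parse only requires comparing the weighted feature sums; the normalization matters when probabilities themselves are needed.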

Rich bitext projection features § Defined 36 features by looking at common English parsing errors § No monolingual features, except baseline parser probability § General features § Is there a probable label correspondence between German and the hypothesized English parse? § How expected is the size of each constituent in the hypothesized English parse given the German parse? § Specific features § Are coordinations realized identically? § Is the NP structure the same? § Mix of probabilistic and heuristic features

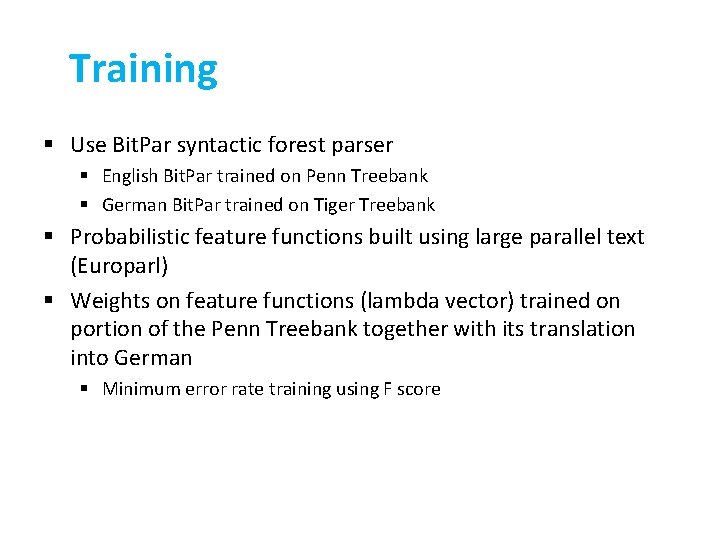

Training § Use BitPar syntactic forest parser § English BitPar trained on Penn Treebank § German BitPar trained on Tiger Treebank § Probabilistic feature functions built using large parallel text (Europarl) § Weights on feature functions (lambda vector) trained on portion of the Penn Treebank together with its translation into German § Minimum error rate training using F score
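To make the training objective concrete, here is a simplified sketch of tuning a weight to maximize bracket F score. It assumes a single divergence feature and uses a plain grid search, which is a stand-in for minimum error rate training rather than the procedure itself (MERT optimizes many weights with a more efficient line search). The function names and the toy candidates are hypothetical.

```python
def bracket_f1(predicted, gold):
    """F1 over labeled brackets, pooled over all sentences.

    predicted, gold: parallel lists with one set of (label, start, end)
    brackets per sentence.
    """
    matched = sum(len(p & g) for p, g in zip(predicted, gold))
    n_pred = sum(len(p) for p in predicted)
    n_gold = sum(len(g) for g in gold)
    if matched == 0:
        return 0.0
    precision = matched / n_pred
    recall = matched / n_gold
    return 2 * precision * recall / (precision + recall)


def tune_weight(corpus, gold, grid):
    """Grid-search one divergence weight to maximize corpus-level F1.

    corpus: per sentence, a list of (log_prob, divergence, brackets) candidates.
    Returns (best_weight, best_f1); ties keep the earliest grid value.
    """
    best_weight, best_f1 = None, -1.0
    for w in grid:
        chosen = [max(cands, key=lambda c: c[0] - w * c[1])[2]
                  for cands in corpus]
        f1 = bracket_f1(chosen, gold)
        if f1 > best_f1:
            best_weight, best_f1 = w, f1
    return best_weight, best_f1


# Toy training set (assumed): one sentence with two candidate parses.
gold = [{("S", 0, 5), ("NP", 0, 2), ("VP", 2, 5)}]
corpus = [[
    (-10.0, 0.5, {("S", 0, 5), ("NP", 0, 3), ("VP", 3, 5)}),
    (-10.4, 0.0, {("S", 0, 5), ("NP", 0, 2), ("VP", 2, 5)}),
]]
best_weight, best_f1 = tune_weight(corpus, gold, [0.0, 0.5, 1.0, 2.0])
```

The point of optimizing F score directly is that the weights are chosen for the quality of the selected parse, not for the likelihood of the training data.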

Reranking English parses § Difficult task § German is difficult to parse § Our knowledge source, the German parser, is out-of-domain (poor performance) § Baseline English parser we are trying to improve is in-domain (good performance) § Test set has long sentences § Result: 0.70% F1 improvement on test data (stat. significant)

New results § Reranking German parses § We needed German gold standard parses (and English translations) § Sebastian Padó has made a small parallel treebank for Europarl available § No engineering on German yet § We are using the same syntactic divergence features which were designed to improve English parsing § There are German-specific ambiguities which could be modeled, such as subject-object ambiguity (e.g., Die Maus jagt die Katze, “the mouse chases the cat” or “the cat chases the mouse”) § But easier task because the parser we are trying to improve is weaker (German is hard to parse, Europarl is out of domain) § 2.3% F1 improvement currently; we think this can be further improved

Summary: bitext parsing § I showed you an approach for bitext parsing § Reranking the parses of English to minimize syntactic divergence with an automatically generated German parse § I then showed our first results for reranking German parses using a single English parse § The approach we used for this kind of morphosyntactic correspondence is more general than just parse reranking § Machine translation involves morphosyntactic correspondence § And this is where we are interested in looking at Croatian


Morphosyntactic processing § I am co-PI of a new IfNLP project funded by the DFG (German Science Foundation) § Project: morphosyntactic modeling for statistical machine translation (SMT) § SMT research, up until recently, has been dominated by translation into English § English expresses a lot of information through word order, very little through inflection § Approaches to translating morphologically rich languages to English are preprocessing based

Present: linguistic preprocessing § Linguistic preprocessing for SMT (stat. machine translation) § From: freer syntax, morphologically rich language § To: rigid syntax, morphologically poor language § Existing examples: German to English, Czech to English

Present: linguistic preprocessing § How this works § Produce morphosyntactic analysis of German (or Czech) § Reorder words in the German/Czech sentence to be in English order § Reduce morphological inflection (for instance, remove case marking, remove all agreement on adjectives, etc.) § For Czech: insert pseudo-words (e.g., indicate PRO-drop pronouns) § Use statistics on this “simplified” German or Czech to map directly to English using SMT

Present: linguistic preprocessing § How well does this work? § German to English SMT with linguistic preprocessing (Stuttgart system) § Results from 2008 ACL workshop on machine translation (extensive human evaluation) § Only system limited to organizer’s data competitive with: § The best system of 5 rule-based MT systems § Saarbrücken hybrid rule-based/SMT system § Google Translate, which does not use linguistic preprocessing but does use vastly more data

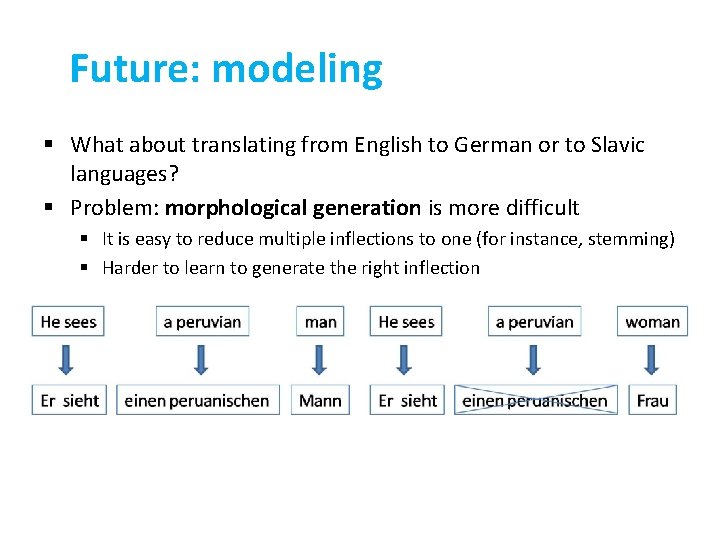

Future: modeling § What about translating from English to German or to Slavic languages? § Problem: morphological generation is more difficult § It is easy to reduce multiple inflections to one (for instance, stemming) § Harder to learn to generate the right inflection

Future: modeling § Current work on morphological generation § Work at Charles University in Prague on Czech § Tectogrammatical representation is not (yet) competitive with simple statistics (little explicit knowledge of morphology or syntax) § Best English to German SMT systems also use little or no morphological knowledge § And they are much worse than rule-based English to German systems § Challenge: to use morphosyntactic knowledge with statistical approaches requires more than just linguistic preprocessing § morphosyntactic modeling

Morphosyntactic correspondence § In fact, all multilingual problems involve morphosyntactic correspondence: § If we have a source parse tree, and source text, and we would like a target text, this is machine translation § If we have a source parse tree, source text and target text, and we would like a target parse, this is bitext parsing § If we would like to know which word in the target text is a translation of a particular word in the source text and we use morphosyntactic analysis, this is syntactic word alignment § The same thinking can be used for cross-lingual information retrieval § Very relevant when one of the languages is morphologically rich

Conclusion § I introduced the IfNLP Stuttgart § I presented a new approach to improving parsing using morphosyntactic correspondence: bitext parsing § I discussed the general challenge of using morphosyntactic correspondence, focusing on statistical machine translation § Biggest challenge is translating into freer word order, morphologically rich languages (e.g., German and particularly Slavic languages) § We are interested in the challenge of building systems to translate to Croatian § To do this: we need partners who are working on Croatian analysis! § We also request that you think about multilingual applications when producing Croatian NLP resources § The type of approach I showed for bitext parsing is useful for other multilingual applications

Thank you!


Statistical Approach § Using statistical models § Create many alternatives, called hypotheses § Give a score to each hypothesis § Find the hypothesis with the best score through search § Disadvantages § Difficulties handling structurally rich models (math and computation) § Need data to train the model parameters § Difficult to understand decision process made by system § Advantages § Avoid hard decisions § Speed can be traded with quality, no all-or-nothing § Works better in the presence of unexpected input § Learns automatically as more data becomes available § Modified from Vogel

Morphosyntactic knowledge § We use: morphological analyzers & treebanks, which are combined in parsing models learned from treebanks § English models have little morphological analysis (suffix analysis to determine POS for unknown words) § German syntactic parser BitPar (Schmid) uses SMOR (Stuttgart Morphological Analyzer) § Given inflected form, SMOR returns possible fine-grained POS tags § E.g., for nouns/adjectives: POS, case, gender, number, definiteness § BitPar puts possible analyses in the chart, and disambiguates § Slavic languages require even more morphological knowledge than German

Transferring syntactic knowledge § Need knowledge source! § English syntactic parser § About 90% bracketing accuracy § Mapping § Requires bitext § Work discussed here uses German/English Europarl (European Parliament Proceedings) § Resource for Croatian: Acquis Communautaire § Automatically generated word alignment

Additional details in the paper § Formalization of bitext parsing as a parse reranking task § Definitions of bitext feature functions § Analysis of feature functions through feature selection § Comparison of MERT (minimum error rate training) with SVMRank
