More Symbolic Learning CPSC 386 Artificial Intelligence Ellen Walker Hiram College

Ensemble Learning • Instead of learning exactly one hypothesis, learn several and combine them • Example – Learn 5 hypotheses (classification rules) from the same training set – For each element in the test set, let all hypotheses vote on its classification – Result: for an item to be misclassified, it has to be misclassified (in the same way) by at least 3 of the 5 hypotheses! • Assumption: the errors made by the individual hypotheses are independent (or at least different)
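A quick calculation shows why voting helps when the independence assumption holds. The Python sketch below assumes binary labels (so "misclassified in the same way" simply means "wrong") and assumes each of the 5 hypotheses errs independently with the same probability p. The value p = 0.1 and the function names are illustrative, not from the slides.

```python
from collections import Counter
from math import comb


def ensemble_error(n_hypotheses: int, p: float) -> float:
    """Probability that a majority vote of n independent hypotheses is wrong,
    when each hypothesis is wrong with probability p (binary labels)."""
    majority = n_hypotheses // 2 + 1  # e.g. 3 of 5
    return sum(
        comb(n_hypotheses, k) * p**k * (1 - p) ** (n_hypotheses - k)
        for k in range(majority, n_hypotheses + 1)
    )


def majority_vote(votes: list[str]) -> str:
    """Return the label that receives the most votes."""
    return Counter(votes).most_common(1)[0][0]


print(ensemble_error(5, 0.1))  # ≈ 0.00856, versus 0.1 for a single hypothesis
print(majority_vote(["+", "-", "+", "+", "-"]))  # +
```

If the errors are correlated (for example, all five rules make the same mistake), the sum above no longer applies and the ensemble can be no better than one hypothesis.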

Multiple Simple Hypotheses • Simple hypotheses – Require less time to compute – Require less space to represent – Limit the “expressiveness” of what can be learned • Multiple simple hypotheses (fixed number) – Don’t significantly increase time or space needs – Can significantly increase expressiveness • Example (next slide): Multiple linear thresholds

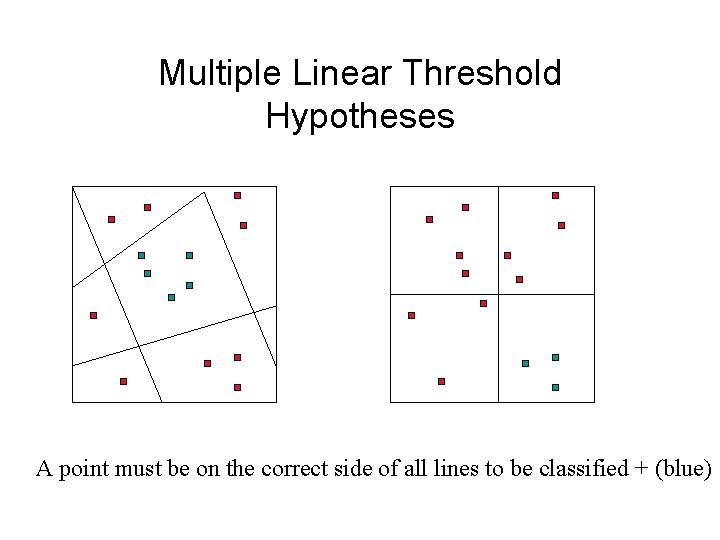

Multiple Linear Threshold Hypotheses • A point must be on the correct side of all lines to be classified + (blue)
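Requiring a point to be on the correct side of every line is an AND of linear threshold tests, so the + region is the intersection of half-planes. A minimal Python sketch; the three lines (a triangle) and the names `classify` and `triangle` are hypothetical, chosen only for illustration.

```python
# Each line is (w1, w2, b); a point (x, y) is on the "correct" side
# of that line when w1*x + w2*y + b > 0.
Line = tuple[float, float, float]


def classify(point: tuple[float, float], lines: list[Line]) -> str:
    """Return + only if the point is on the correct side of every line."""
    x, y = point
    inside = all(w1 * x + w2 * y + b > 0 for (w1, w2, b) in lines)
    return "+" if inside else "-"


# Hypothetical example: three lines bounding the triangle (0,0), (4,0), (0,4)
triangle = [
    (0.0, 1.0, 0.0),    # y > 0
    (1.0, 0.0, 0.0),    # x > 0
    (-1.0, -1.0, 4.0),  # x + y < 4
]

print(classify((1, 1), triangle))  # +
print(classify((3, 3), triangle))  # -
```

Each line alone is a very simple hypothesis, but three of them together describe a bounded region that no single line can.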

Boosting • Generate M hypotheses sequentially, each from a “weighted training set” • The first set gives every example equal weight • The second set weights the examples misclassified by the first hypothesis higher and the correctly classified examples lower • The third set revises the weights based on which examples the second hypothesis classified correctly or incorrectly, and so on • The result is a weighted-majority vote of all M hypotheses, each weighted according to how well it did on its training set

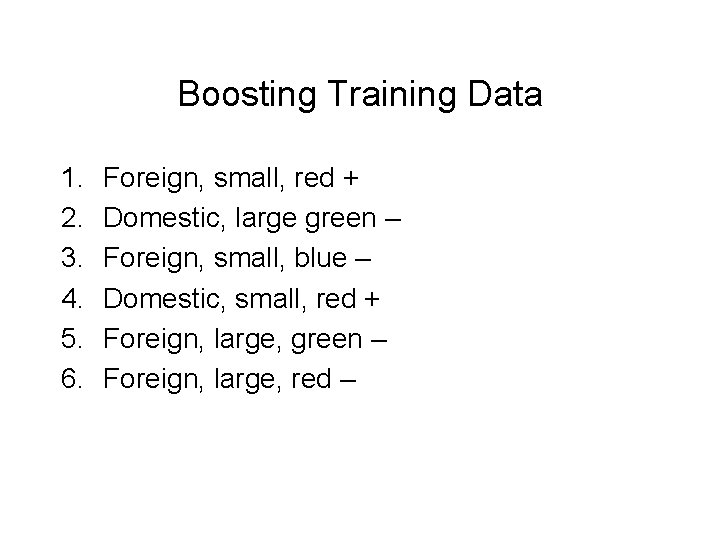

Boosting Training Data (origin, size, color: class)
1. Foreign, small, red: +
2. Domestic, large, green: –
3. Foreign, small, blue: –
4. Domestic, small, red: +
5. Foreign, large, green: –
6. Foreign, large, red: –

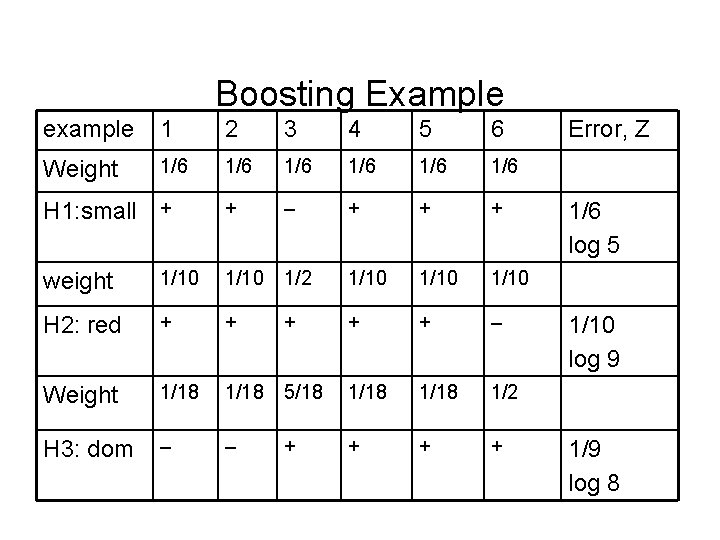

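Boosting Example • Each row shows the example weights used to evaluate that hypothesis (✗ = misclassified by it) • Hypothesis weight Z = log((1 − error) / error) • After each round, the weights of correctly classified examples are multiplied by error / (1 − error), and all weights are renormalized to sum to 1

| Hypothesis | Ex. 1 | Ex. 2 | Ex. 3 | Ex. 4 | Ex. 5 | Ex. 6 | Error | Z |
|---|---|---|---|---|---|---|---|---|
| H1: small → + | 1/6 | 1/6 | 1/6 ✗ | 1/6 | 1/6 | 1/6 | 1/6 | log 5 |
| H2: red → + | 1/10 | 1/10 | 1/2 | 1/10 | 1/10 | 1/10 ✗ | 1/10 | log 9 |
| H3: dom → + | 1/18 ✗ | 1/18 ✗ | 5/18 | 1/18 | 1/18 | 1/2 | 1/9 | log 8 |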
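The reweighting in this example can be reproduced with a short AdaBoost-style sketch in Python. In a real boosting run, each hypothesis would be learned from the current weighted set; here the three hypotheses are fixed to the slide's rules (small, red, dom) so the numbers can be checked against the example. `Fraction` keeps the weights exact; the names `DATA`, `HYPOTHESES`, and `boost` are illustrative.

```python
from fractions import Fraction
from math import log

# Training data from the "Boosting Training Data" slide: (origin, size, color, label)
DATA = [
    ("foreign", "small", "red", "+"),
    ("domestic", "large", "green", "-"),
    ("foreign", "small", "blue", "-"),
    ("domestic", "small", "red", "+"),
    ("foreign", "large", "green", "-"),
    ("foreign", "large", "red", "-"),
]

# The example's single-test hypotheses: predict + iff the test holds
HYPOTHESES = {
    "small": lambda ex: "+" if ex[1] == "small" else "-",
    "red": lambda ex: "+" if ex[2] == "red" else "-",
    "dom": lambda ex: "+" if ex[0] == "domestic" else "-",
}


def boost(data, hypotheses):
    """Return (name, weights used, weighted error, Z) for each round."""
    weights = [Fraction(1, len(data))] * len(data)
    rounds = []
    for name, h in hypotheses.items():
        wrong = [h(ex) != ex[3] for ex in data]
        error = sum(w for w, bad in zip(weights, wrong) if bad)
        # Hypothesis weight; assumes 0 < error < 1/2
        z = log(float((1 - error) / error))
        rounds.append((name, weights, error, z))
        # Shrink the weights of correctly classified examples ...
        factor = error / (1 - error)
        new = [w if bad else w * factor for w, bad in zip(weights, wrong)]
        # ... then renormalize so the weights sum to 1
        total = sum(new)
        weights = [w / total for w in new]
    return rounds


for name, w, err, z in boost(DATA, HYPOTHESES):
    print(name, [str(x) for x in w], err, round(z, 3))
```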

Test Classifications • Rules: small (log 5), red (log 9), dom (log 8); each rule votes YES if its test holds and NO otherwise • Domestic, large, blue: NO (log 5 + log 9) vs. YES (log 8) → NO • Domestic, large, red: NO (log 5) vs. YES (log 9 + log 8) → YES • Foreign, small, green: NO (log 8 + log 9) vs. YES (log 5) → NO
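The weighted vote on these three test items can be checked with a few lines of Python. The Z values come from the boosting example; representing items as dictionaries and breaking ties toward NO are assumptions of this sketch.

```python
from math import log

# Weighted rules from the boosting example: (name, test, weight Z)
RULES = [
    ("small", lambda ex: ex["size"] == "small", log(5)),
    ("red", lambda ex: ex["color"] == "red", log(9)),
    ("dom", lambda ex: ex["origin"] == "domestic", log(8)),
]


def weighted_vote(ex: dict) -> str:
    """Each rule votes YES if its test holds, NO otherwise; the heavier side wins."""
    yes = sum(z for _, test, z in RULES if test(ex))
    no = sum(z for _, test, z in RULES if not test(ex))
    return "YES" if yes > no else "NO"  # ties go to NO (an assumption)


print(weighted_vote({"origin": "domestic", "size": "large", "color": "blue"}))  # NO
print(weighted_vote({"origin": "domestic", "size": "large", "color": "red"}))   # YES
print(weighted_vote({"origin": "foreign", "size": "small", "color": "green"}))  # NO
```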

Knowledge in Learning • What you learn depends on what you know – Attributes for classification – Organizational structure • Relationships in structural learning (e.g., above, next to, touching) – Relevance • From one copper wire, we can generalize that copper conducts electricity, but not that all copper wire is 2 m long

Explanation-Based Learning • “Learning” from one example • Extract a general rule from an example – A penny conducts electricity – (Background knowledge: pennies are made of copper) – Therefore, copper conducts electricity • A generalization of the optimization technique called memoization – Memoization builds a table of results from prior computations
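For comparison, here is ordinary memoization in Python, using Fibonacci numbers as a hypothetical example. The memo table can only answer a query whose arguments exactly match an earlier computation; EBL goes further by storing a generalized rule that applies to any case satisfying its conditions.

```python
# Memo table: results of prior computations, keyed by the argument
memo: dict[int, int] = {}


def fib(n: int) -> int:
    """n-th Fibonacci number, reusing any result already in the table."""
    if n in memo:
        return memo[n]
    result = n if n < 2 else fib(n - 1) + fib(n - 2)
    memo[n] = result  # record the result for future calls
    return result


print(fib(30))  # 832040
```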

Steps in EBL 1. Build an explanation of the observation; for example, prove that the instance is a member of a query class (goal predicate). 2. In parallel, construct a generalized proof tree using the same inference steps (rules). 3. Transform the generalized proof tree into a new rule whose conditions are the leaves of the tree and whose conclusion is the generalized goal. This rule generalizes the explanation.

Operationality • Define an “easy” subgoal as operational • Use operationality to know when to stop the proof • Examples: – Operational concept is a structural constraint that can be easily observed – Operational concept is a LISP primitive that can be easily applied

EBL Example: Learn “cup” • Goal: A cup is stable, liftable, and holds liquid • Operationality: only structural criteria (geometry, topology) may be used • Example: a coffee-cup

Explain the Observation • Prove why the object is a cup: – Goal: • stable(x) & liftable(x) & holds-liquid(x) -> cup(x) – Facts: • flatbottom(cup1) & red(cup1) & attach(cup1, handle) & weight(cup1, 2 oz) & concave(cup1) & ceramic(cup1) – Proof: • The cup is stable because it has a flat bottom, liftable because it has a handle and weighs 2 oz (which is less than 16 oz), and holds liquid because it is concave.

Generalize • Replace all constants in the proof (e.g., cup1) with variables: cup(x) <- flatbottom(x) & attach(x, y) & weight(x, z) & concave(x) • Re-prove to find any variables that should really be constants! cup(x) <- flatbottom(x) & attach(x, handle) & weight(x, z) & (< z 16 oz) & concave(x) • A cup is an object that has a flat bottom and a handle, weighs less than 1 lb, and is concave
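Once learned, the generalized rule can be applied directly to new objects without redoing the proof. A minimal Python sketch; encoding an object's facts as a dictionary (with keys such as `weight_oz`) is an assumption made for illustration, and the bowl is a hypothetical second object.

```python
def is_cup(obj: dict) -> bool:
    """Learned rule: cup(x) <- flatbottom(x) & attach(x, handle)
    & weight(x, z) & (< z 16 oz) & concave(x)."""
    return bool(
        obj.get("flatbottom", False)
        and "handle" in obj.get("attached", ())
        and obj.get("weight_oz", float("inf")) < 16
        and obj.get("concave", False)
    )


# Facts about cup1 from the example; red and ceramic play no part in the rule
cup1 = {"flatbottom": True, "color": "red", "attached": ["handle"],
        "weight_oz": 2, "concave": True, "material": "ceramic"}
# Hypothetical object with no handle
bowl = {"flatbottom": True, "attached": [], "weight_oz": 8, "concave": True}

print(is_cup(cup1))  # True
print(is_cup(bowl))  # False
```

Because color and material did not appear in the proof, they are absent from the learned rule, which is exactly the kind of irrelevant detail EBL discards.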

Relevance-Based Learning • Find the simplest determination consistent with the observations – A determination relates sets of features P and Q so that if examples match on P, they also match on Q – Here, Q is the target predicate (the classification to be learned) – The simplest determination has the fewest attributes • Combining RBL with decision-tree learning can greatly improve learning – Use RBL to select a subset of attributes, then run DTL on that subset
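Finding the simplest determination can be sketched as a search over attribute subsets in order of increasing size. The Python below reuses the six boosting training examples as data (an illustrative choice); the function names are hypothetical. On these examples, no single attribute determines the class, but {size, color} does.

```python
from itertools import combinations

ATTRS = ["origin", "size", "color"]
DATA = [
    {"origin": "foreign", "size": "small", "color": "red", "label": "+"},
    {"origin": "domestic", "size": "large", "color": "green", "label": "-"},
    {"origin": "foreign", "size": "small", "color": "blue", "label": "-"},
    {"origin": "domestic", "size": "small", "color": "red", "label": "+"},
    {"origin": "foreign", "size": "large", "color": "green", "label": "-"},
    {"origin": "foreign", "size": "large", "color": "red", "label": "-"},
]


def is_determination(attrs, data, target="label"):
    """True if examples that match on attrs always match on target."""
    seen = {}
    for ex in data:
        key = tuple(ex[a] for a in attrs)
        if seen.setdefault(key, ex[target]) != ex[target]:
            return False
    return True


def minimal_determination(data, attrs, target="label"):
    """Smallest attribute subset consistent with the data (None if none)."""
    for size in range(len(attrs) + 1):
        for subset in combinations(attrs, size):
            if is_determination(subset, data, target):
                return subset
    return None


print(minimal_determination(DATA, ATTRS))  # ('size', 'color')
```

With only six examples, the result is merely consistent with the observations; more data could rule it out.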

Inductive Logic Programming • Combines inductive learning (from examples) with first-order logical representations • Allows relational concepts (like grandparent) to be learned, unlike attribute-based methods • Offers a rigorous, logic-based approach to learning with prior knowledge

Top-Down ILP • Start with a very general rule – Analogy: empty decision tree – Actual: empty left side – (empty) => grandfather(X, Y) • Gradually specialize the rule until it fits the data – Analogy: growing the decision tree – Actual: adding predicates to left side until all examples are correctly classified • father(X, Z) => grandfather(X, Y) • father(X, Z) and parent(Z, Y) => grandfather(X, Y)
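The specialization sequence can be checked by counting how many positive and negative examples each rule covers. The Python sketch below uses a small hypothetical family (not from the slides), treats every non-grandfather pair of people as a negative example, and evaluates the three rules in order. It is not a full search like FOIL, which chooses which literal to add using an information-gain heuristic.

```python
from itertools import product

# Hypothetical family facts (illustration only)
FATHER = {("abe", "homer"), ("homer", "bart"), ("homer", "lisa")}
MOTHER = {("mona", "homer"), ("marge", "bart"), ("marge", "lisa")}
PARENT = FATHER | MOTHER
PEOPLE = {p for pair in PARENT for p in pair}
GRANDFATHER = {("abe", "bart"), ("abe", "lisa")}  # positive examples


# Candidate rule bodies for grandfather(X, Y), most general first
def body_empty(x, y):
    return True


def body_father(x, y):
    return any((x, z) in FATHER for z in PEOPLE)


def body_father_parent(x, y):
    return any((x, z) in FATHER and (z, y) in PARENT for z in PEOPLE)


def coverage(body):
    """(positives covered, negatives covered) over all pairs of people."""
    pos = neg = 0
    for x, y in product(PEOPLE, repeat=2):
        if body(x, y):
            if (x, y) in GRANDFATHER:
                pos += 1
            else:
                neg += 1
    return pos, neg


for body in (body_empty, body_father, body_father_parent):
    print(body.__name__, coverage(body))
```

Each added predicate keeps both positives covered while removing negatives; the final rule covers no negatives, so specialization stops.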

Inverse Resolution • We know that resolution takes two clauses c1 and c2 and generates a resolvent c • Inverse resolution starts with c (and possibly c1) and determines c2 • Working “backwards” through a proof, generate the rules needed to derive it – This can be used for discovery, i.e., unsupervised symbolic learning!
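One inverse-resolution step can be sketched in the propositional case, with clauses as sets of literals and "~" marking negation. The slide's setting is first-order, where unification also has to be inverted; that part is omitted here, and the function names are hypothetical. The sketch returns the smallest c2; adding any literals of c1 other than the resolved one also works.

```python
def negate(lit: str) -> str:
    """Complementary literal: P <-> ~P."""
    return lit[1:] if lit.startswith("~") else "~" + lit


def resolve(c1: frozenset, c2: frozenset, lit: str) -> frozenset:
    """Resolve c1 (containing lit) with c2 (containing ~lit)."""
    if lit not in c1 or negate(lit) not in c2:
        raise ValueError("clauses do not resolve on this literal")
    return (c1 - {lit}) | (c2 - {negate(lit)})


def inverse_resolve(c: frozenset, c1: frozenset, lit: str) -> frozenset:
    """Smallest c2 such that resolve(c1, c2, lit) == c."""
    rest = c1 - {lit}
    if lit not in c1 or negate(lit) in c or not rest <= c:
        raise ValueError("no such c2")
    return (c - rest) | {negate(lit)}


c1 = frozenset({"P", "Q"})
c = frozenset({"Q", "R"})
c2 = inverse_resolve(c, c1, "P")
print(sorted(c2))                # ['R', '~P']
print(resolve(c1, c2, "P") == c)  # True
```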

Some Final Comments • Learning requires prior knowledge – E.g., which attributes are available? – E.g., current rules for EBL • Prior knowledge can bias a learning system – This can be good or bad • Existing learning systems are narrowly focused; we are a long way from creating a system that learns like an infant.
