More on Clustering: Density-based Clustering and DBSCAN

More on Clustering
1. Density-based Clustering
   1. DBSCAN
   2. DENCLUE
2. Hierarchical Clustering (will be discussed as the last topic of the clustering lectures)

Ch. Eick: Introduction to Hierarchical Clustering and DBSCAN

Density-based clustering algorithms use density-estimation techniques in one of two ways:
- They use a non-parametric density function over the space of the attributes; clusters are then identified as areas whose density is above a certain threshold (DENCLUE's approach).
- They create a proximity graph that connects each object whose density is above a certain threshold to the objects in its neighborhood; clustering algorithms then identify contiguous, connected subsets of the graph that are dense (DBSCAN's approach).

DBSCAN employs a naïve density-estimation approach to estimate the density at the points of a dataset.
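To illustrate the first (DENCLUE-style) approach, here is a minimal Python sketch, assuming a Gaussian kernel: the density at a location is the sum of Gaussian influences of all data points, and points whose density exceeds a threshold count as lying in a dense area. This is a simplification: it only evaluates the density at the data points themselves and omits DENCLUE's hill climbing to density attractors. The names gaussian_density and dense_points and the values of sigma and the threshold are hypothetical.

```python
import math

def gaussian_density(x, data, sigma):
    """Unnormalized Gaussian kernel density at x: sum of the influences of all data points."""
    total = 0.0
    for y in data:
        d2 = sum((a - b) ** 2 for a, b in zip(x, y))  # squared Euclidean distance
        total += math.exp(-d2 / (2 * sigma ** 2))
    return total

def dense_points(data, sigma, threshold):
    """Indices of data points whose estimated density is above the threshold."""
    return [i for i, x in enumerate(data) if gaussian_density(x, data, sigma) > threshold]

points = [(0.0, 0.0), (0.1, 0.0), (0.0, 0.1), (0.1, 0.1), (5.0, 5.0)]
print(dense_points(points, sigma=0.5, threshold=2.0))  # [0, 1, 2, 3]
```

The isolated point (5.0, 5.0) only "sees" its own influence (density close to 1), so it falls below the threshold, while the four nearby points reinforce each other.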

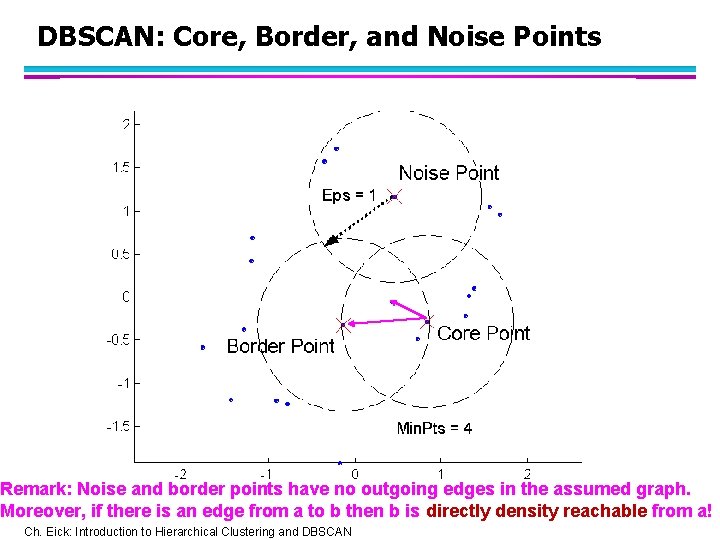

DBSCAN (http://www2.cs.uh.edu/~ceick/7363/Papers/dbscan.pdf)
- DBSCAN is a density-based algorithm.
  - Density = number of points within a specified radius (Eps).
  - Input parameters: MinPts and Eps.
  - A point is a core point if it has at least a specified number of points (MinPts) within Eps. These are points in the interior of a cluster.
  - A border point has fewer than MinPts points within Eps, but is in the neighborhood of a core point.
  - A noise point is any point that is not a core point or a border point.
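The three point types can be computed directly from these definitions. The following is a minimal Python sketch, assuming Euclidean distance and that a point's Eps-neighborhood includes the point itself (so a core point needs at least MinPts points, itself included, within Eps); the function names are hypothetical.

```python
import math

def region_query(data, i, eps):
    """Indices of all points within distance eps of data[i], including i itself."""
    return [j for j, q in enumerate(data) if math.dist(data[i], q) <= eps]

def classify_points(data, eps, min_pts):
    """Label every point as 'core', 'border' or 'noise'."""
    neighbors = [region_query(data, i, eps) for i in range(len(data))]
    is_core = [len(nb) >= min_pts for nb in neighbors]
    labels = []
    for i, nb in enumerate(neighbors):
        if is_core[i]:
            labels.append("core")
        elif any(is_core[j] for j in nb):  # not core, but inside a core point's neighborhood
            labels.append("border")
        else:
            labels.append("noise")
    return labels

data = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 0), (10, 10)]
print(classify_points(data, eps=1.5, min_pts=4))
# ['core', 'core', 'core', 'core', 'border', 'noise']
```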

DBSCAN: Core, Border, and Noise Points (MinPts = 7)

DBSCAN: Core, Border, and Noise Points

Remark: Noise and border points have no outgoing edges in the assumed graph. Moreover, if there is an edge from a to b, then b is directly density-reachable from a.

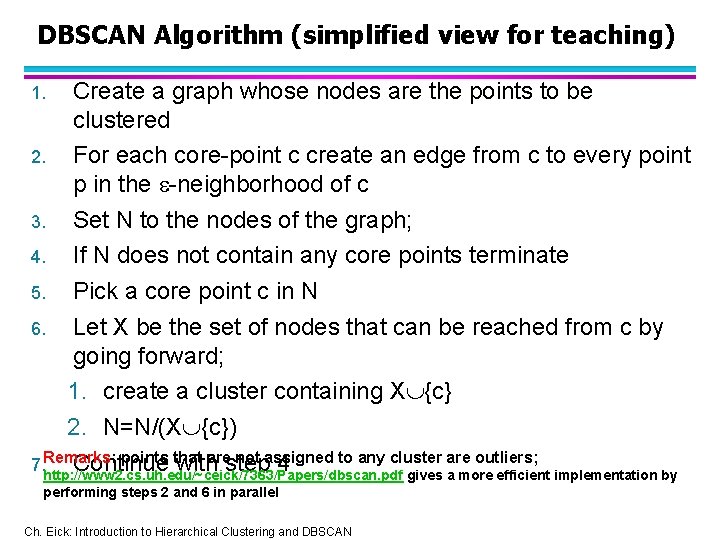

DBSCAN Algorithm (simplified view for teaching)
1. Create a graph whose nodes are the points to be clustered.
2. For each core point c, create an edge from c to every point p in the Eps-neighborhood of c.
3. Set N to the nodes of the graph.
4. If N does not contain any core points, terminate.
5. Pick a core point c in N.
6. Let X be the set of nodes that can be reached from c by going forward;
   1. create a cluster containing X ∪ {c};
   2. N = N \ (X ∪ {c}).
7. Continue with step 4.

Remarks: Points that are not assigned to any cluster are outliers. http://www2.cs.uh.edu/~ceick/7363/Papers/dbscan.pdf gives a more efficient implementation by performing steps 2 and 6 in parallel.
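The graph-based teaching view translates almost line by line into code. Below is a minimal Python sketch, assuming Euclidean distance and Eps-neighborhoods that include the point itself; the function name dbscan_graph is hypothetical. One design choice the steps leave open is what happens to a border point reachable from two clusters: here the traversal is restricted to nodes still in N, so such a point keeps its first assignment.

```python
import math
from collections import deque

def dbscan_graph(points, eps, min_pts):
    """Teaching-view DBSCAN on a directed graph; returns labels (-1 = outlier)."""
    n = len(points)
    # Eps-neighborhood of every point (includes the point itself).
    nbrs = [[j for j in range(n) if math.dist(points[i], points[j]) <= eps]
            for i in range(n)]
    is_core = [len(nb) >= min_pts for nb in nbrs]
    # Steps 1-2: only core points get outgoing edges, to every point in their neighborhood.
    edges = [nb if is_core[i] else [] for i, nb in enumerate(nbrs)]
    remaining = set(range(n))            # step 3: N = all nodes
    labels = [-1] * n
    cluster = 0
    while True:
        cores = sorted(i for i in remaining if is_core[i])
        if not cores:                    # step 4: no core point left in N
            break
        c = cores[0]                     # step 5: pick a core point
        reached, queue = {c}, deque([c])
        while queue:                     # step 6: follow edges forward from c
            u = queue.popleft()
            for v in edges[u]:
                if v in remaining and v not in reached:
                    reached.add(v)
                    queue.append(v)
        for v in reached:                # 6.1: the cluster is X ∪ {c}
            labels[v] = cluster
        remaining -= reached             # 6.2: N = N \ (X ∪ {c})
        cluster += 1                     # step 7: continue with step 4
    return labels                        # points left at -1 are outliers

pts = [(0, 0), (1, 0), (0, 1), (1, 1), (2, 0), (10, 10),
       (20, 20), (21, 20), (20, 21), (21, 21)]
print(dbscan_graph(pts, eps=1.5, min_pts=4))  # [0, 0, 0, 0, 0, -1, 1, 1, 1, 1]
```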

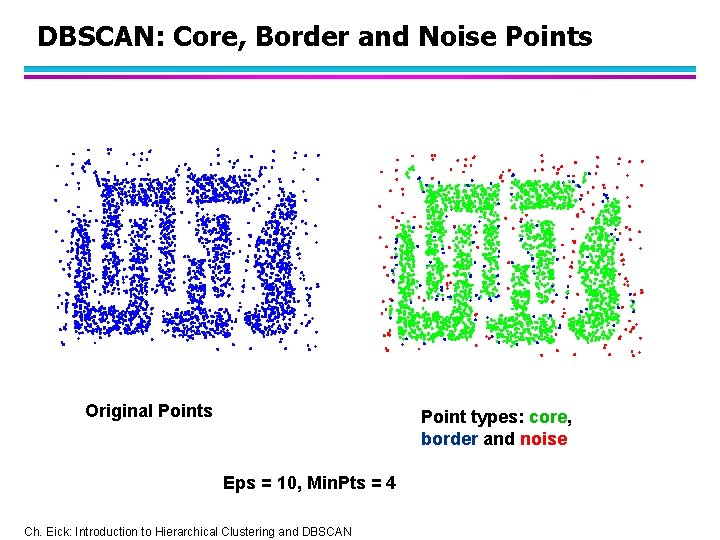

DBSCAN: Core, Border and Noise Points (figure panels: original points; point types: core, border and noise; Eps = 10, MinPts = 4)

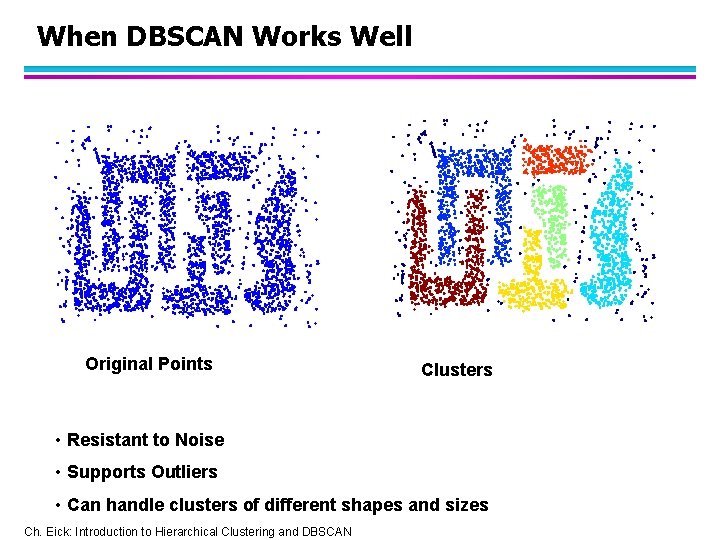

When DBSCAN Works Well (figure panels: original points; clusters)
- Resistant to noise
- Supports outliers
- Can handle clusters of different shapes and sizes

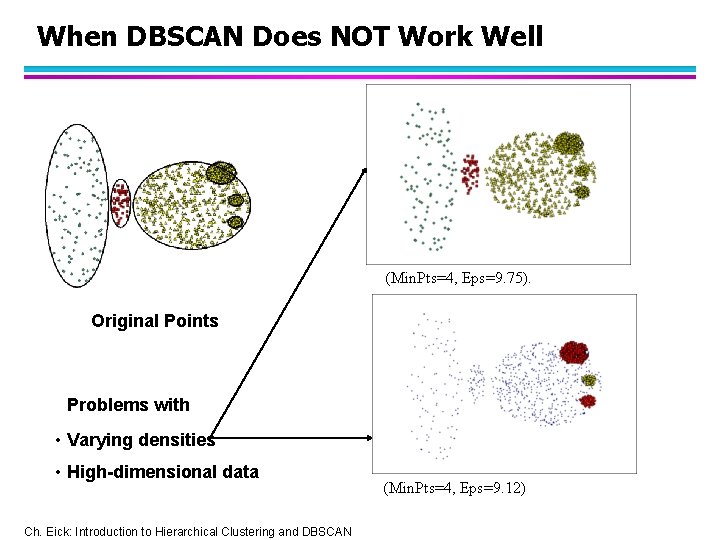

When DBSCAN Does NOT Work Well (figure panels: original points; results for MinPts = 4, Eps = 9.75 and for MinPts = 4, Eps = 9.12)

Problems with:
- Varying densities
- High-dimensional data

![DBSCAN in R: dbscan(iris[3:4], 0.15, 3, showplot=1)](http://slidetodoc.com/presentation_image_h2/b3b233f412e7f33bb291d5abc6077b41/image-10.jpg)

DBSCAN in R: dbscan(iris[3:4], 0.15, 3, showplot=1) prints

dbscan Pts=150 MinPts=3 eps=0.15

| | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
|---|---|---|---|---|---|---|---|
| border | 20 | 2 | 5 | 0 | 3 | 2 | 1 |
| seed | 0 | 46 | 54 | 3 | 9 | 1 | 4 |
| total | 20 | 48 | 59 | 3 | 12 | 3 | 5 |

- dbscan.r (demo)
- http://www.inside-r.org/node/59838
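To make the printout easier to read, here is a minimal Python sketch that builds the same kind of border/seed/total summary from a list of cluster labels and core-point flags. It assumes, as in the R printout (the fpc package's convention), that label 0 marks noise and that "seed" means core point; the function name summarize_clusters and the toy labels are hypothetical.

```python
from collections import Counter

def summarize_clusters(labels, is_seed):
    """Per cluster label: number of border (non-seed) points, seed (core) points, and total."""
    seed = Counter(l for l, s in zip(labels, is_seed) if s)
    border = Counter(l for l, s in zip(labels, is_seed) if not s)
    return {c: {"border": border[c], "seed": seed[c], "total": border[c] + seed[c]}
            for c in sorted(set(labels))}

labels = [0, 0, 1, 1, 1, 2, 2]                        # 0 = noise, 1 and 2 = clusters
is_seed = [False, False, True, True, False, True, True]
print(summarize_clusters(labels, is_seed))
```

Because noise points are never core points, the noise column always has seed = 0, which matches column 0 of the table above.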

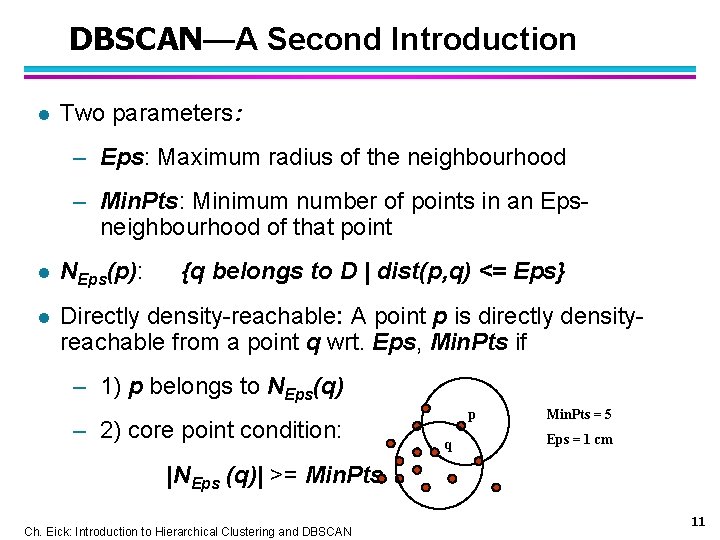

DBSCAN: A Second Introduction
- Two parameters:
  - Eps: maximum radius of the neighbourhood
  - MinPts: minimum number of points in an Eps-neighbourhood of that point
- $N_{Eps}(p) = \{q \in D \mid dist(p, q) \le Eps\}$
- Directly density-reachable: a point p is directly density-reachable from a point q wrt. Eps, MinPts if
  1. $p \in N_{Eps}(q)$, and
  2. core point condition: $|N_{Eps}(q)| \ge MinPts$
- (Figure: p is directly density-reachable from q; MinPts = 5, Eps = 1 cm)
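The two definitions can be checked mechanically. This minimal Python sketch, assuming Euclidean distance, implements $N_{Eps}$ and the directly density-reachable test, and shows that the relation is not symmetric: a border point is directly density-reachable from a core point, but not vice versa. The function names and the five-point example are hypothetical.

```python
import math

def eps_neighborhood(data, p, eps):
    """N_Eps(p) = {q in D | dist(p, q) <= Eps}, as indices; contains p itself."""
    return [q for q in range(len(data)) if math.dist(data[p], data[q]) <= eps]

def directly_density_reachable(data, p, q, eps, min_pts):
    """True if p is in N_Eps(q) and q satisfies the core point condition |N_Eps(q)| >= MinPts."""
    nq = eps_neighborhood(data, q, eps)
    return p in nq and len(nq) >= min_pts

# q = index 0 has 5 points (itself included) within Eps = 1, so it is a core point.
data = [(0, 0), (1, 0), (0, 1), (-1, 0), (0, -1)]
print(directly_density_reachable(data, 1, 0, eps=1.0, min_pts=5))  # True
print(directly_density_reachable(data, 0, 1, eps=1.0, min_pts=5))  # False: point 1 is not core
```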

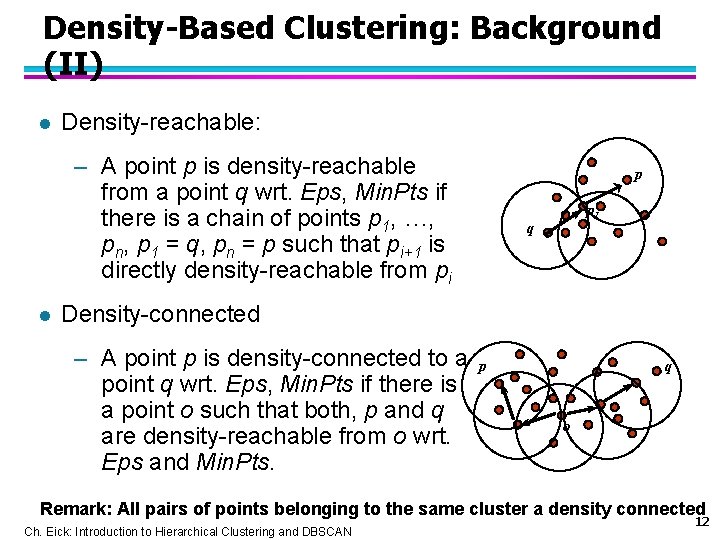

Density-Based Clustering: Background (II)
- Density-reachable: a point p is density-reachable from a point q wrt. Eps, MinPts if there is a chain of points $p_1, \dots, p_n$ with $p_1 = q$ and $p_n = p$ such that $p_{i+1}$ is directly density-reachable from $p_i$. (Figure: a chain from q via $p_1$ to p.)
- Density-connected: a point p is density-connected to a point q wrt. Eps, MinPts if there is a point o such that both p and q are density-reachable from o wrt. Eps and MinPts. (Figure: p and q both reached from o.)

Remark: All pairs of points belonging to the same cluster are density-connected.
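Since every link $p_i \to p_{i+1}$ of a chain requires $p_i$ to be a core point, the set of points density-reachable from q can be found by a breadth-first search that only expands through core points. Below is a minimal Python sketch under that reading, assuming Euclidean distance; density-connectivity is then checked by trying every point as the intermediate point o (quadratic, fine for illustration only). All names and the example data are hypothetical.

```python
import math
from collections import deque

def eps_neighbors(points, i, eps):
    """Indices of N_Eps(points[i]), including i itself."""
    return [j for j in range(len(points)) if math.dist(points[i], points[j]) <= eps]

def density_reachable_from(points, q, eps, min_pts):
    """Set of all points density-reachable from q (empty if q is not a core point)."""
    reached, seen, queue = set(), {q}, deque([q])
    while queue:
        u = queue.popleft()
        nb = eps_neighbors(points, u, eps)
        if len(nb) < min_pts:  # u is not a core point: nothing is directly reachable from it
            continue
        for v in nb:
            reached.add(v)
            if v not in seen:
                seen.add(v)
                queue.append(v)
    return reached

def density_connected(points, p, q, eps, min_pts):
    """True if some point o has both p and q density-reachable from it."""
    return any(p in r and q in r
               for r in (density_reachable_from(points, o, eps, min_pts)
                         for o in range(len(points))))

line = [(0, 0), (1, 0), (2, 0), (3, 0), (4, 0), (10, 0)]
# With Eps = 1 and MinPts = 3, points 1-3 are core; 0 and 4 are border points; 5 is noise.
print(density_connected(line, 0, 4, 1.0, 3))  # True (via o = 1, 2 or 3)
```

Note the asymmetry: point 4 is density-reachable from point 1, but nothing is density-reachable from the border point 4, whereas density-connectivity between 0 and 4 is symmetric.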

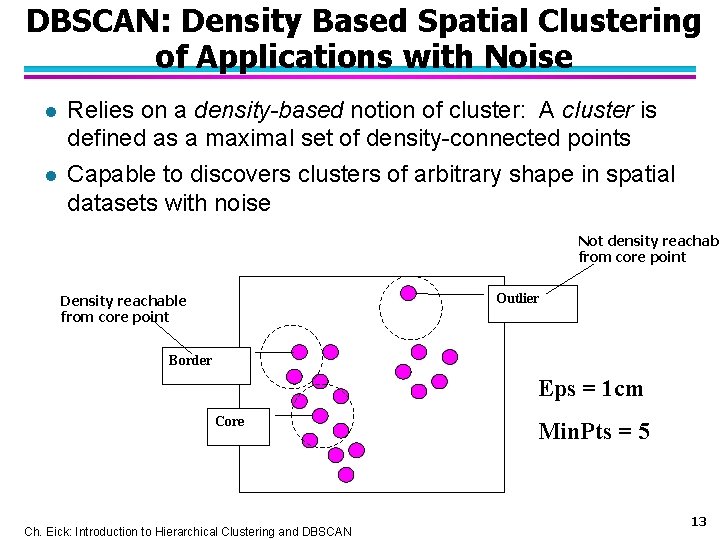

DBSCAN: Density-Based Spatial Clustering of Applications with Noise
- Relies on a density-based notion of cluster: a cluster is defined as a maximal set of density-connected points.
- Capable of discovering clusters of arbitrary shape in spatial datasets with noise.
- (Figure, Eps = 1 cm, MinPts = 5: core points; border points, which are density-reachable from a core point; outliers, which are not density-reachable from any core point.)

DBSCAN: The Algorithm
1. Arbitrarily select a point p.
2. Retrieve all points density-reachable from p wrt. Eps and MinPts.
3. If p is a core point, a cluster is formed.
4. If p is not a core point, no points are density-reachable from p, and DBSCAN visits the next point of the database.
5. Continue the process until all of the points have been processed.

Remark: Some bookkeeping is needed to make sure that only points that have not yet been assigned to a cluster are used in step 2.
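The five steps, including the bookkeeping mentioned in the remark, can be sketched as follows. This is a minimal, unindexed Python version (O(n²) distance computations), assuming Euclidean distance and neighborhoods that include the point itself; it is not the paper's implementation, and the names are hypothetical. A point first marked as noise is relabeled as a border point if a later cluster reaches it; a border point reachable from two clusters keeps its first label.

```python
import math
from collections import deque

NOISE = -1

def dbscan(data, eps, min_pts):
    """Sequential DBSCAN; returns one label per point (-1 = noise)."""
    n = len(data)

    def region_query(i):
        return [j for j in range(n) if math.dist(data[i], data[j]) <= eps]

    labels = [None] * n                    # None = not processed yet
    cluster_id = 0
    for p in range(n):                     # steps 1 and 5: visit every point once
        if labels[p] is not None:          # bookkeeping: already noise or in a cluster
            continue
        neighbors = region_query(p)
        if len(neighbors) < min_pts:       # step 4: p is not a core point
            labels[p] = NOISE              # may be relabeled as a border point later
            continue
        labels[p] = cluster_id             # step 3: p is a core point, start a cluster
        queue = deque(neighbors)
        while queue:                       # step 2: collect all density-reachable points
            q = queue.popleft()
            if labels[q] == NOISE:
                labels[q] = cluster_id     # former noise point becomes a border point
            if labels[q] is not None:
                continue
            labels[q] = cluster_id
            q_neighbors = region_query(q)
            if len(q_neighbors) >= min_pts:  # only core points extend the cluster
                queue.extend(q_neighbors)
        cluster_id += 1
    return labels

pts = [(2, 0), (0, 0), (1, 0), (0, 1), (1, 1), (10, 10)]
print(dbscan(pts, eps=1.5, min_pts=4))  # [0, 0, 0, 0, 0, -1]
```

In the usage example, point (2, 0) is visited first and marked as noise, then relabeled as a border point of cluster 0 once the core point (1, 0) is expanded.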

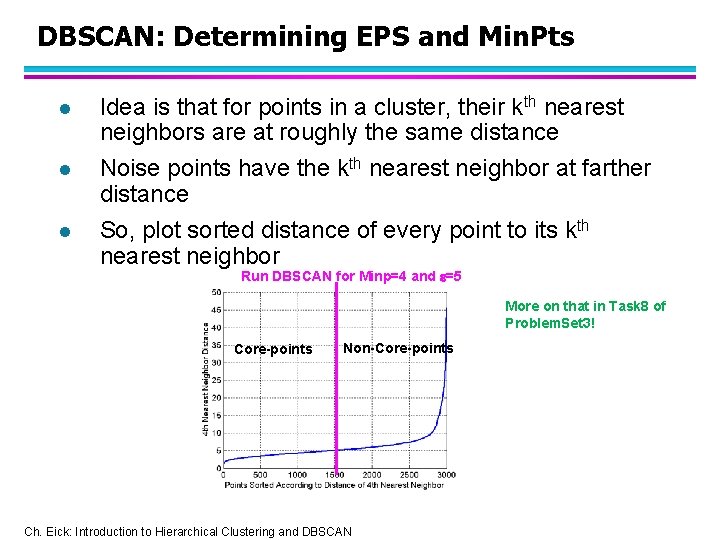

DBSCAN: Determining Eps and MinPts
- The idea is that for points in a cluster, their k-th nearest neighbors are at roughly the same distance.
- Noise points have their k-th nearest neighbor at a farther distance.
- So, plot the sorted distance of every point to its k-th nearest neighbor.
- Run DBSCAN for MinPts = 4 and Eps = 5. (Figure: sorted k-distance plot separating core points from non-core points.)
- More on that in Task 8 of ProblemSet 3!
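The values behind such a plot are easy to compute. The following minimal Python sketch returns the sorted k-distances, assuming Euclidean distance and that a point is not counted as its own neighbor; k is meant to play the role of MinPts, as in the MinPts = 4 example above. Plotting the returned list and looking for a sharp rise is left to the reader; the function name and data are hypothetical.

```python
import math

def sorted_k_distances(data, k):
    """Distance of every point to its k-th nearest neighbor (itself excluded), ascending."""
    kd = []
    for i, p in enumerate(data):
        dists = sorted(math.dist(p, q) for j, q in enumerate(data) if j != i)
        kd.append(dists[k - 1])
    return sorted(kd)

data = [(0, 0), (1, 0), (0, 1), (1, 1), (10, 10)]
print(sorted_k_distances(data, k=1))
# four values of 1.0, then about 12.73 for the outlying point (10, 10)
```

A good Eps candidate lies just before the sharp rise: points to the left of it would become core points, the ones after it would not.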

DENCLUE: Clustering Using Density Functions
- DENsity-based CLUstEring by Hinneburg & Keim (KDD'98)
- Paper: http://www2.cs.uh.edu/~ceick/DM/Denclue2.pdf
- Slides by Morteza H. Chehreghani from Sharif University: http://www2.cs.uh.edu/~ceick/DM/DENCLUE.pdf
- Randomized Hill Climbing slides: http://www2.cs.uh.edu/~ceick/DM/RHC.pptx

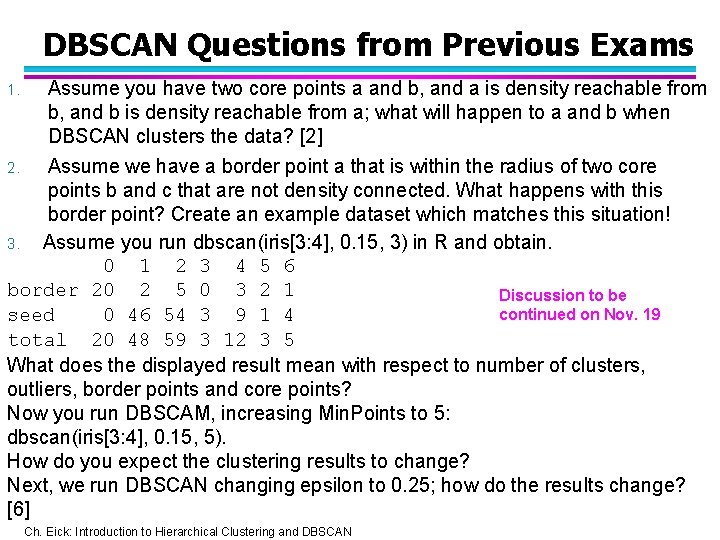

DBSCAN Questions from Previous Exams
1. Assume you have two core points a and b, and a is density-reachable from b, and b is density-reachable from a; what will happen to a and b when DBSCAN clusters the data? [2]
2. Assume we have a border point a that is within the radius of two core points b and c that are not density-connected. What happens with this border point? Create an example dataset that matches this situation!
3. Assume you run dbscan(iris[3:4], 0.15, 3) in R and obtain:

   | | 0 | 1 | 2 | 3 | 4 | 5 | 6 |
   |---|---|---|---|---|---|---|---|
   | border | 20 | 2 | 5 | 0 | 3 | 2 | 1 |
   | seed | 0 | 46 | 54 | 3 | 9 | 1 | 4 |
   | total | 20 | 48 | 59 | 3 | 12 | 3 | 5 |

   What does the displayed result mean with respect to the number of clusters, outliers, border points and core points? Now you run DBSCAN, increasing MinPts to 5: dbscan(iris[3:4], 0.15, 5). How do you expect the clustering results to change? Next, we run DBSCAN changing epsilon to 0.25; how do the results change? [6]

(Discussion to be continued on Nov. 19.)

DENCLUE Teaching Plan
- Source code of DENCLUE??
- Today: high-level introduction of DENCLUE
- Everybody reads the DENCLUE 2.0 paper
- Th., Nov. 19: Group N (Jennifer and Sunita) will lead a discussion (at most 25 minutes) of DENCLUE centering on producing initial answers to the following questions (Jennifer and Sunita are expected to produce initial answers to these questions):
  a. What is a density attractor, and how are density attractors computed by DENCLUE?
  b. What is a cluster in DENCLUE? How are clusters formed by DENCLUE?
  c. What is a path in DENCLUE? How are paths computed in DENCLUE? What algorithm is used to determine which clusters are merged?
  d. DENCLUE places a (hyper)grid on top of the dataset. Why?
  e. How does DENCLUE's hill-climbing procedure work? How was it enhanced in DENCLUE 2.0 compared to its older version?
  f. What objects in the dataset does DENCLUE classify as outliers?

Remark: Dr. Eick will only listen, and maybe make some comments after the discussion.

November 19 News
- There will be a peer-reviewing/Kritik discussion during the lecture on Tuesday, November 24 at 3:10 p.m.; there will be no lecture on Nov. 26!
- Topics we will likely still discuss, time permitting, on Nov. 24 and Dec. 1 and 3 include: Cluster Validity, Hierarchical Clustering, Brief Introduction to Spatial Data Mining and Preprocessing
- A draft Task 7 rubric has been posted in the ProblemSet channel
- Today's activities:
  1. Brief R demo K-Means
  2. Finish R demo DBSCAN
  3. Lecture "Randomized Hill Climbing"
  4. Brief discussion of Task 8 of ProblemSet 3
  5. Discussion and Q&A on DENCLUE led by Group N: Jennifer and Sunita
  6. Discussion of leftover slides of the "Density-based Clustering" lecture
  7. Brief look at Group L's running PAM solution
  8. Maybe, begin lecture "Cluster Validity and Cluster Evaluation"

Density-based Clustering: Pros and Cons
- +: can (potentially) discover clusters of arbitrary shape
- +: not sensitive to outliers and supports outlier detection
- +: can handle noise
- +/-: medium algorithm complexity, O(n^2) or O(n log n)
- -: finding good density-estimation parameters is frequently difficult; more difficult to use than K-means
- -: usually does not do well in clustering high-dimensional datasets
- -: cluster models are not well understood (yet)

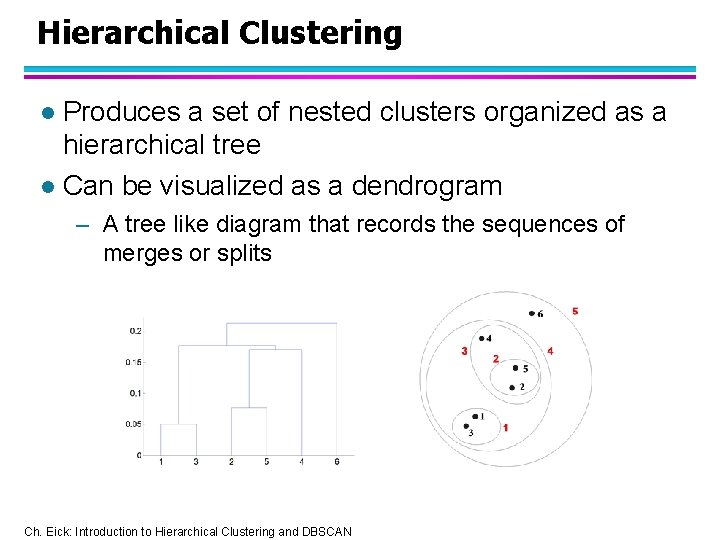

Hierarchical Clustering
- Produces a set of nested clusters organized as a hierarchical tree
- Can be visualized as a dendrogram: a tree-like diagram that records the sequences of merges or splits

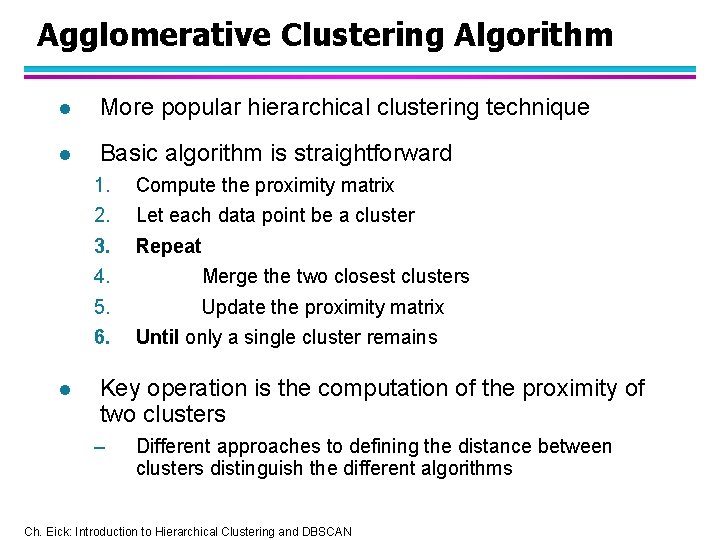

Agglomerative Clustering Algorithm
- The more popular hierarchical clustering technique
- The basic algorithm is straightforward:
  1. Compute the proximity matrix
  2. Let each data point be a cluster
  3. Repeat
  4. Merge the two closest clusters
  5. Update the proximity matrix
  6. Until only a single cluster remains
- The key operation is the computation of the proximity of two clusters
  - Different approaches to defining the distance between clusters distinguish the different algorithms
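The six steps can be sketched in a few lines of Python. This minimal, naive version (roughly O(n³)) assumes Euclidean distance and supports two hypothetical linkage options: "min" (single link), where the merged cluster's distance to another cluster is the smaller of the two old entries, and "max" (complete link), where it is the larger one. It returns the merge history that a dendrogram would draw; the function name and data are hypothetical.

```python
import math

def agglomerative(points, linkage="min"):
    """Naive agglomerative clustering; returns merges as (cluster_a, cluster_b, distance)."""
    combine = min if linkage == "min" else max      # MIN (single) or MAX (complete) link
    n = len(points)
    clusters = {i: [i] for i in range(n)}           # step 2: each point is a cluster
    prox = {(i, j): math.dist(points[i], points[j])  # step 1: proximity matrix, keys i < j
            for i in range(n) for j in range(i + 1, n)}
    merges, next_id = [], n
    while len(clusters) > 1:                         # steps 3-6
        (a, b), d = min(prox.items(), key=lambda kv: kv[1])  # two closest clusters
        merges.append((a, b, d))
        new, next_id = next_id, next_id + 1
        clusters[new] = clusters.pop(a) + clusters.pop(b)
        for c in clusters:                           # update the proximity matrix
            if c == new:
                continue
            d_ac = prox.pop((min(a, c), max(a, c)))
            d_bc = prox.pop((min(b, c), max(b, c)))
            prox[(c, new)] = combine(d_ac, d_bc)     # new id is larger than every c
        del prox[(a, b)]
    return merges

pts = [(0, 0), (1, 0), (3, 0), (7, 0)]
print(agglomerative(pts, "min"))  # [(0, 1, 1.0), (2, 4, 2.0), (3, 5, 4.0)]
print(agglomerative(pts, "max"))  # [(0, 1, 1.0), (2, 4, 3.0), (3, 5, 7.0)]
```

Merged clusters receive new ids (4, 5, ...), so (2, 4, 2.0) means "point 2 joins the cluster {0, 1} at distance 2.0". The two linkages produce the same merge order here but different merge heights.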

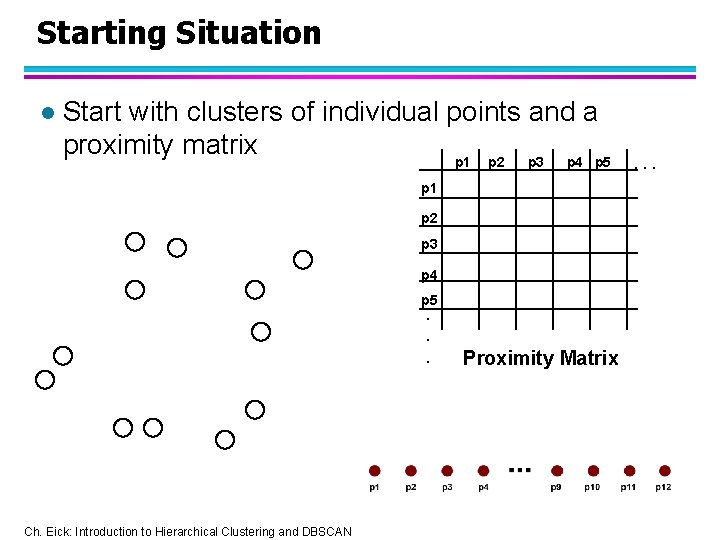

Starting Situation
- Start with clusters of individual points and a proximity matrix. (Figure: points p1, p2, p3, p4, p5, ... and their proximity matrix.)

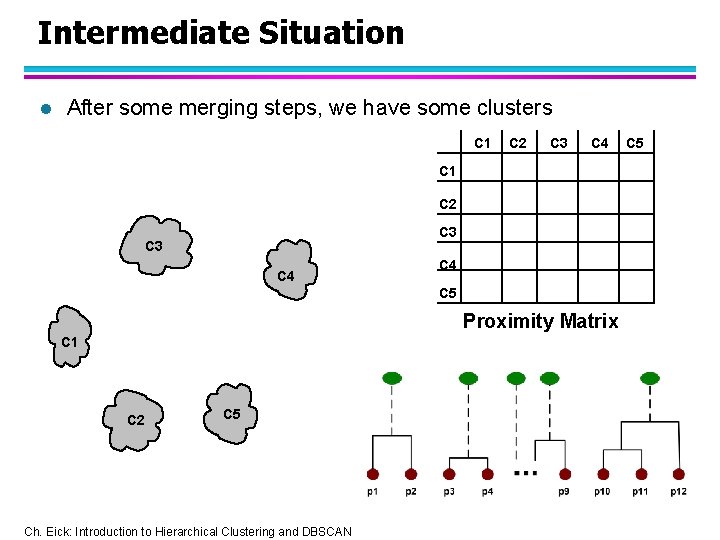

Intermediate Situation
- After some merging steps, we have some clusters. (Figure: clusters C1, C2, C3, C4, C5 and their proximity matrix.)

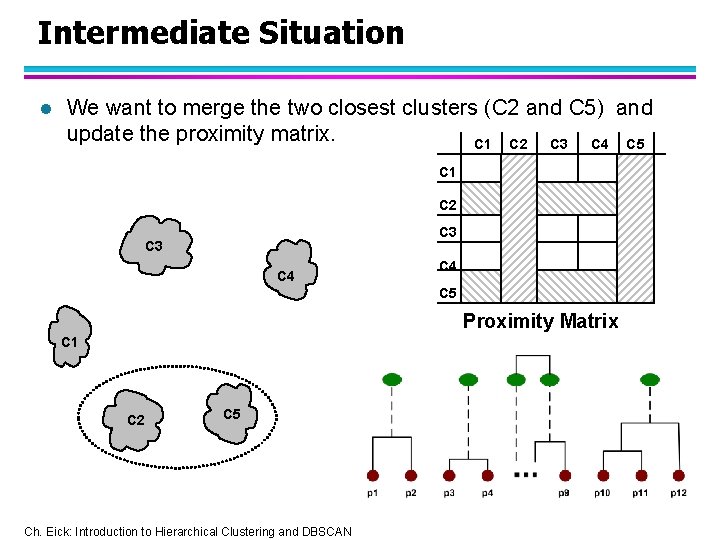

Intermediate Situation
- We want to merge the two closest clusters (C2 and C5) and update the proximity matrix. (Figure: clusters C1 to C5 and their proximity matrix.)

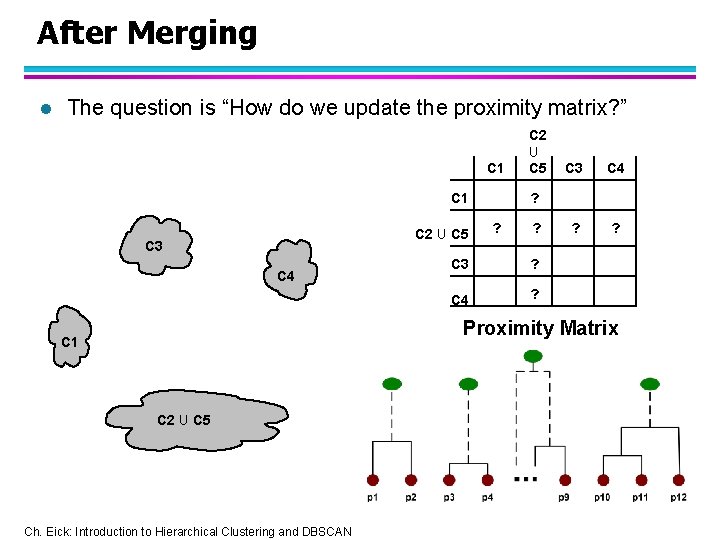

After Merging
- The question is: "How do we update the proximity matrix?" (Figure: proximity matrix over C1, C2 ∪ C5, C3, C4, in which the entries for C2 ∪ C5 are still unknown.)

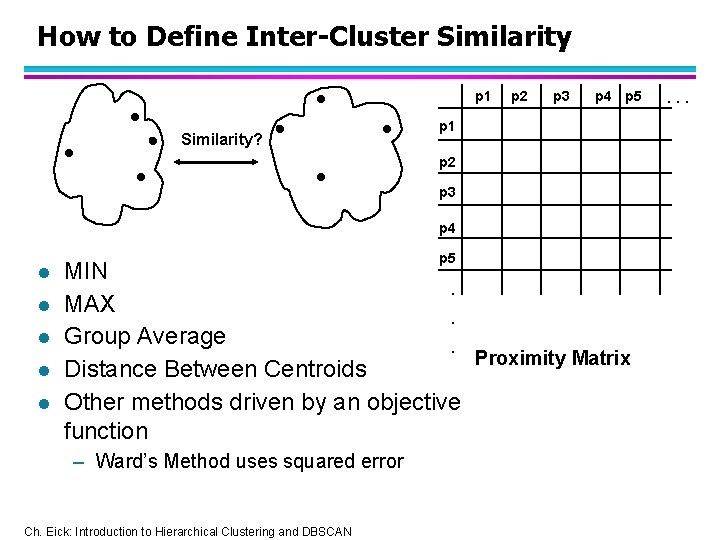

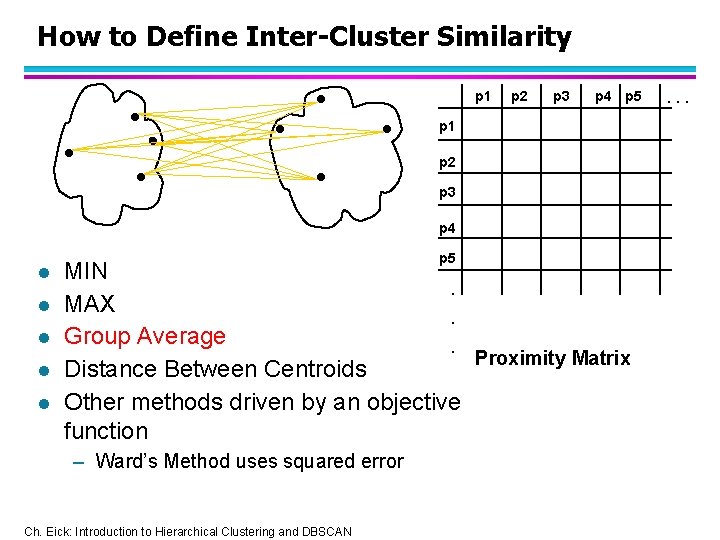

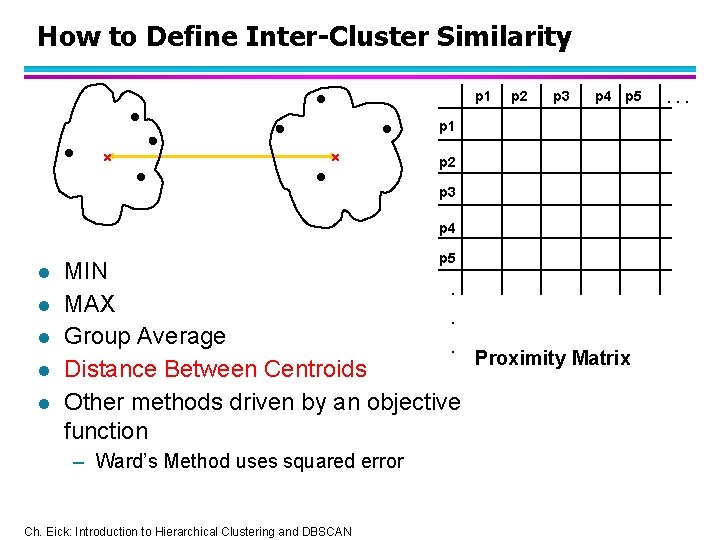

How to Define Inter-Cluster Similarity (figure: points p1, ..., p5 and their proximity matrix)
- MIN
- MAX
- Group Average
- Distance Between Centroids
- Other methods driven by an objective function
  - Ward's Method uses squared error
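The listed definitions can be compared side by side on two small clusters. This minimal Python sketch assumes Euclidean distance; for Ward's method it uses the standard expression for the increase in total squared error caused by a merge, $\frac{|C_1||C_2|}{|C_1|+|C_2|}\,\lVert c_1 - c_2 \rVert^2$ with centroids $c_1, c_2$, which is our reading of "uses squared error". The function names and example clusters are hypothetical.

```python
import math
from itertools import product

def centroid(cluster):
    """Component-wise mean of the points in a cluster."""
    return tuple(sum(xs) / len(cluster) for xs in zip(*cluster))

def cluster_distance(c1, c2, method):
    """Distance between clusters c1 and c2 (lists of points) under a given definition."""
    pair = [math.dist(p, q) for p, q in product(c1, c2)]   # all cross-cluster distances
    if method == "min":
        return min(pair)
    if method == "max":
        return max(pair)
    if method == "average":                                 # group average
        return sum(pair) / (len(c1) * len(c2))
    if method == "centroid":
        return math.dist(centroid(c1), centroid(c2))
    if method == "ward":                                    # increase in SSE when merging
        n1, n2 = len(c1), len(c2)
        return n1 * n2 / (n1 + n2) * math.dist(centroid(c1), centroid(c2)) ** 2
    raise ValueError(f"unknown method: {method}")

a = [(0, 0), (0, 2)]
b = [(3, 0), (3, 2)]
for m in ("min", "max", "average", "centroid", "ward"):
    print(m, round(cluster_distance(a, b, m), 3))
# min 3.0, max 3.606, average 3.303, centroid 3.0, ward 9.0
```

For Ward's value: each cluster has SSE 2 around its own centroid, the merged cluster has SSE 13 around (1.5, 1), so the increase is 13 - 4 = 9.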

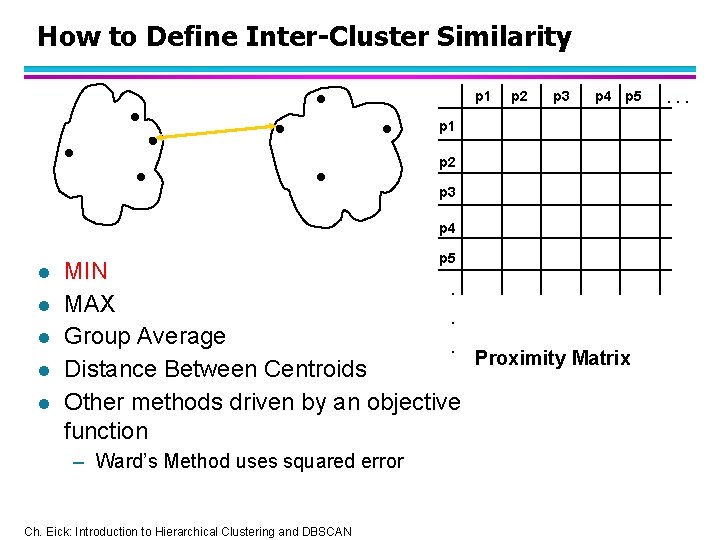


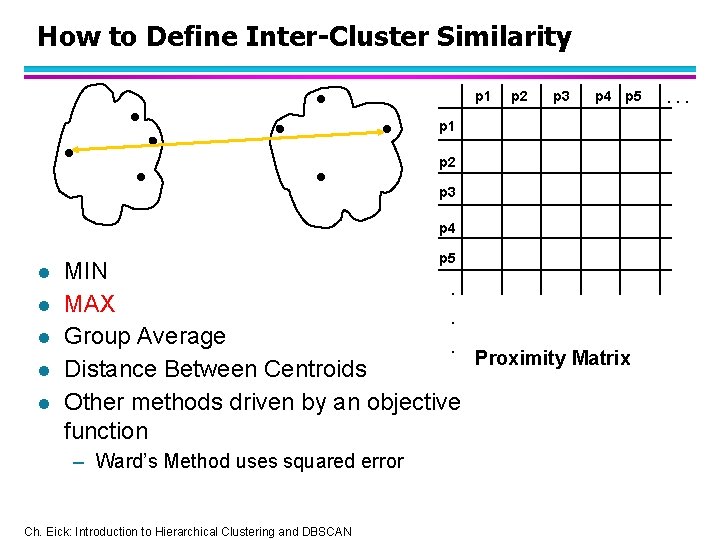


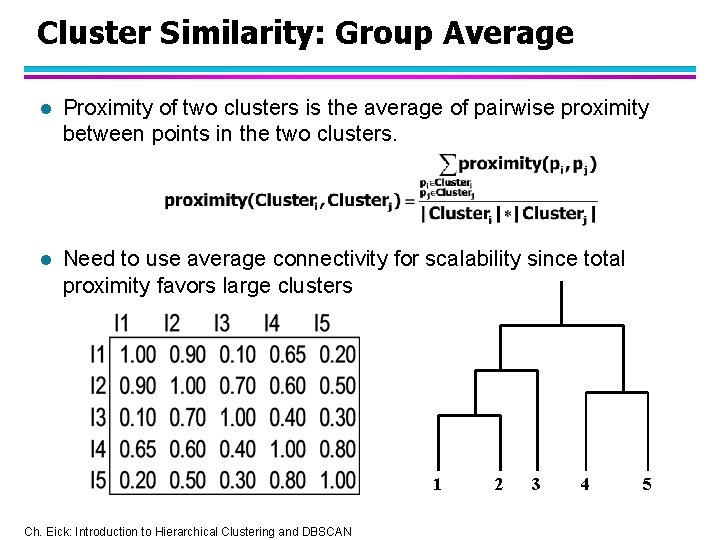

Cluster Similarity: Group Average
- Proximity of two clusters is the average of the pairwise proximities between points in the two clusters:
  $proximity(C_i, C_j) = \frac{\sum_{p_i \in C_i,\ p_j \in C_j} proximity(p_i, p_j)}{|C_i| \cdot |C_j|}$
- Average connectivity is needed for scalability, since total proximity favors large clusters. (Figure: example with points 1 to 5.)
