Monitoring and Evaluation (M&E) for Community Driven Development

- Slides: 14

Monitoring and Evaluation (M&E) for Community Driven Development (CDD) Programs

Introduction to Concepts and Examples

Susan Wong, CDD Global Lead

March 2, 2018

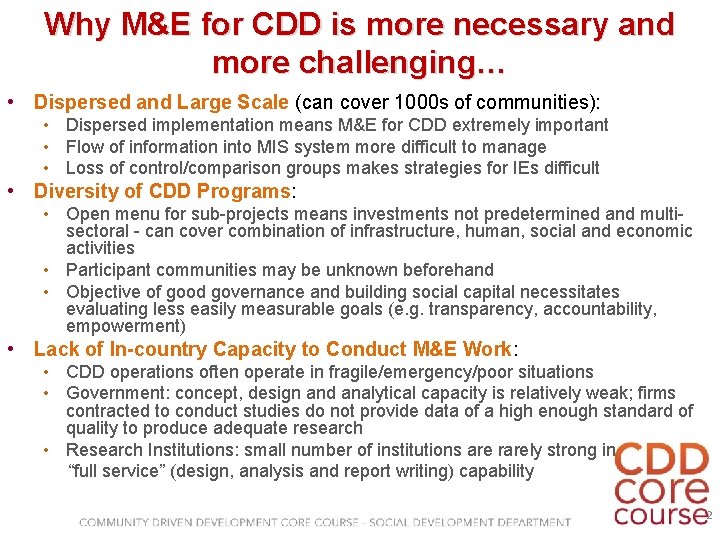

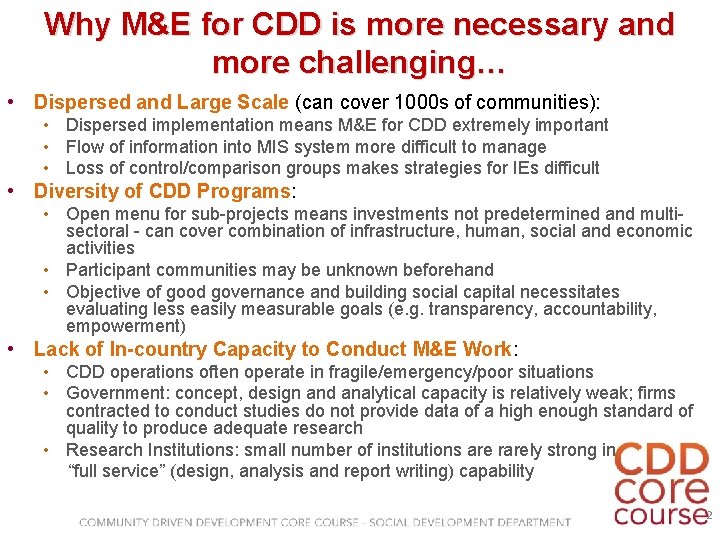

Why M&E for CDD is more necessary and more challenging…

- Dispersed and Large Scale (can cover 1000s of communities):
  - Dispersed implementation means M&E for CDD is extremely important
  - Flow of information into the MIS is more difficult to manage
  - Loss of control/comparison groups makes strategies for impact evaluations (IEs) difficult
- Diversity of CDD Programs:
  - Open menu for sub-projects means investments are not predetermined and are multisectoral; they can cover a combination of infrastructure, human, social and economic activities
  - Participant communities may be unknown beforehand
  - Objective of good governance and building social capital necessitates evaluating less easily measurable goals (e.g. transparency, accountability, empowerment)
- Lack of In-country Capacity to Conduct M&E Work:
  - CDD operations often operate in fragile/emergency/poor situations
  - Government: concept, design and analytical capacity is relatively weak; firms contracted to conduct studies do not provide data of high enough quality to support adequate research
  - Research Institutions: only a small number of institutions exist, and they are rarely strong in “full service” (design, analysis and report writing) capability

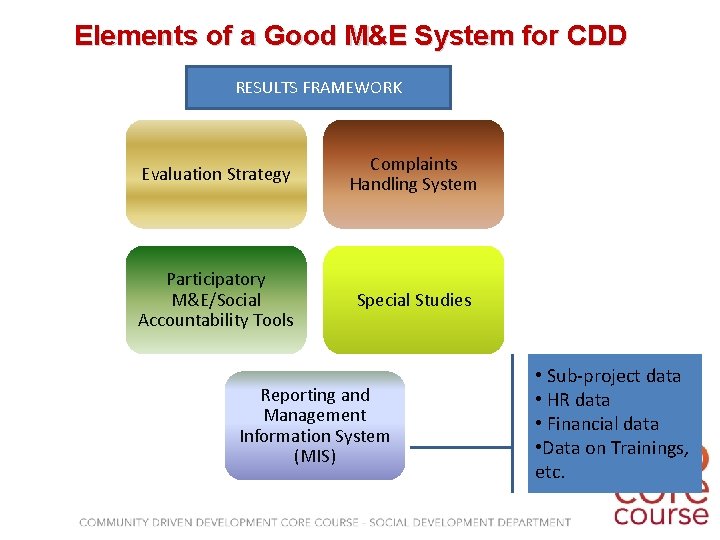

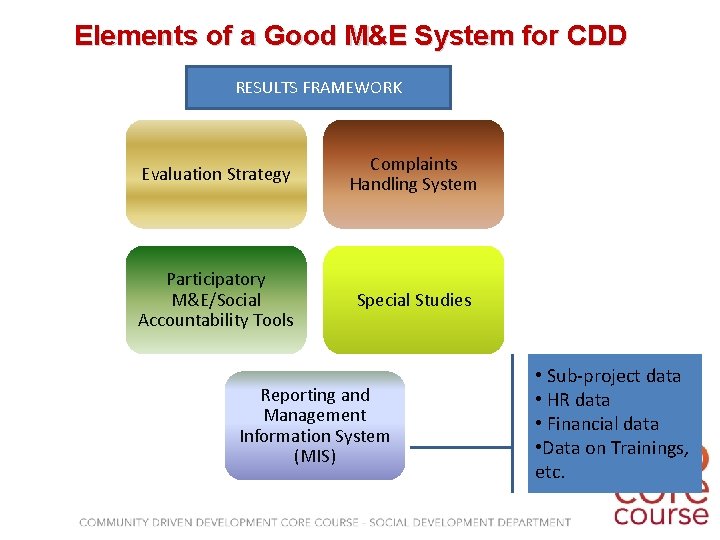

Elements of a Good M&E System for CDD

- RESULTS FRAMEWORK
- Evaluation Strategy
- Complaints Handling System
- Participatory M&E/Social Accountability Tools
- Special Studies
- Reporting and Management Information System (MIS)
  - Sub-project data
  - HR data
  - Financial data
  - Data on Trainings, etc.

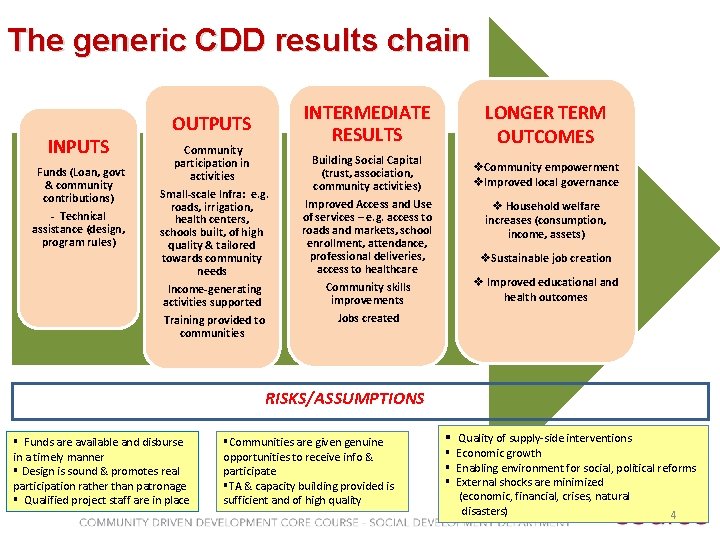

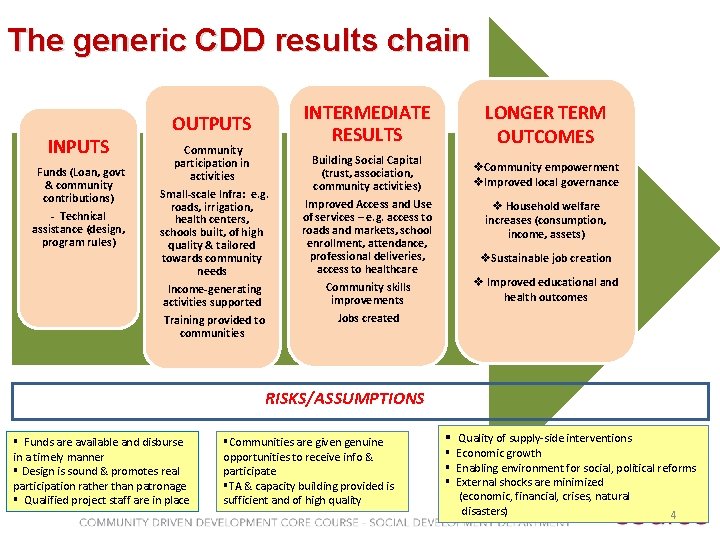

The generic CDD results chain

INPUTS

- Funds (loan, government and community contributions)
- Technical assistance (design, program rules)

OUTPUTS

- Community participation in activities
- Small-scale infrastructure: e.g. roads, irrigation, health centers, schools built, of high quality and tailored towards community needs
- Income-generating activities supported
- Training provided to communities

INTERMEDIATE RESULTS / LONGER TERM OUTCOMES

- Building social capital (trust, association, community activities)
- Community empowerment
- Improved local governance
- Improved access and use of services: e.g. access to roads and markets, school enrollment, attendance, professional deliveries, access to healthcare
- Community skills improvements
- Jobs created
- Household welfare increases (consumption, income, assets)
- Sustainable job creation
- Improved educational and health outcomes

RISKS/ASSUMPTIONS

- Funds are available and disbursed in a timely manner
- Design is sound and promotes real participation rather than patronage
- Qualified project staff are in place
- Communities are given genuine opportunities to receive information and participate
- TA and capacity building provided is sufficient and of high quality
- Quality of supply-side interventions
- Economic growth
- Enabling environment for social and political reforms
- External shocks are minimized (economic and financial crises, natural disasters)

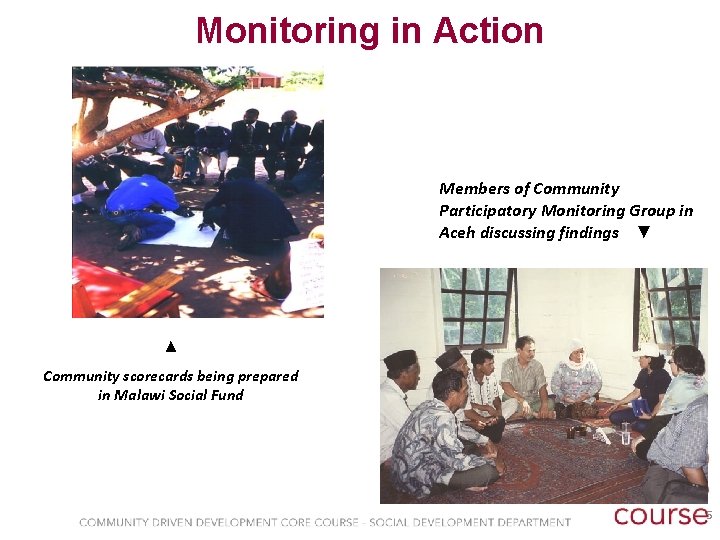

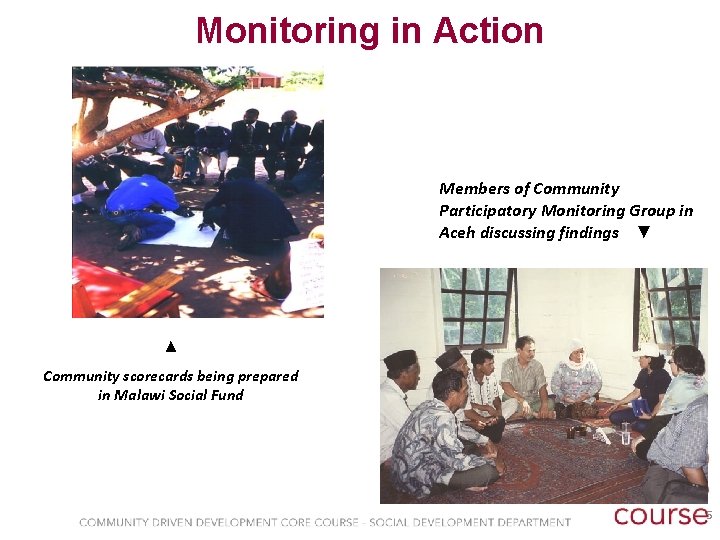

Monitoring in Action

- Members of a Community Participatory Monitoring Group in Aceh discussing findings
- Community scorecards being prepared in the Malawi Social Fund

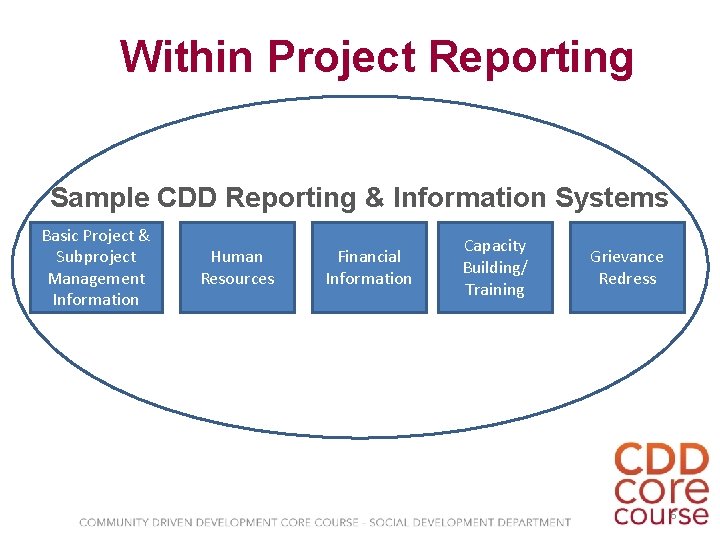

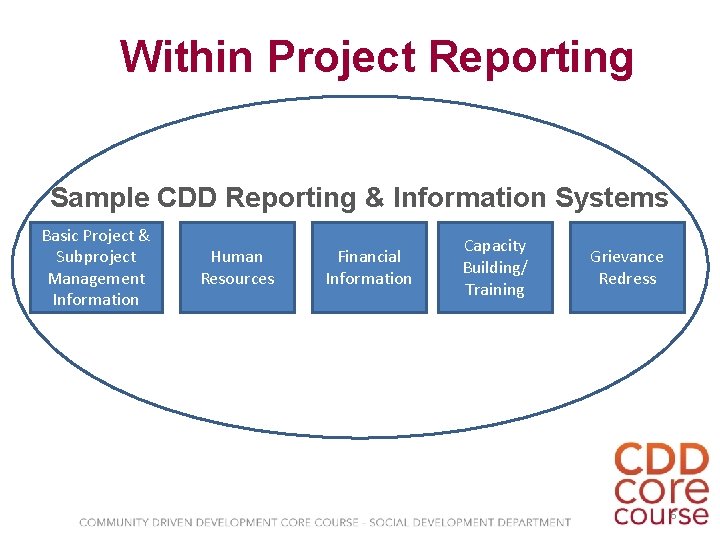

Within Project Reporting

Sample CDD Reporting & Information Systems

- Basic Project & Subproject Management Information
- Human Resources
- Financial Information
- Capacity Building/Training
- Grievance Redress

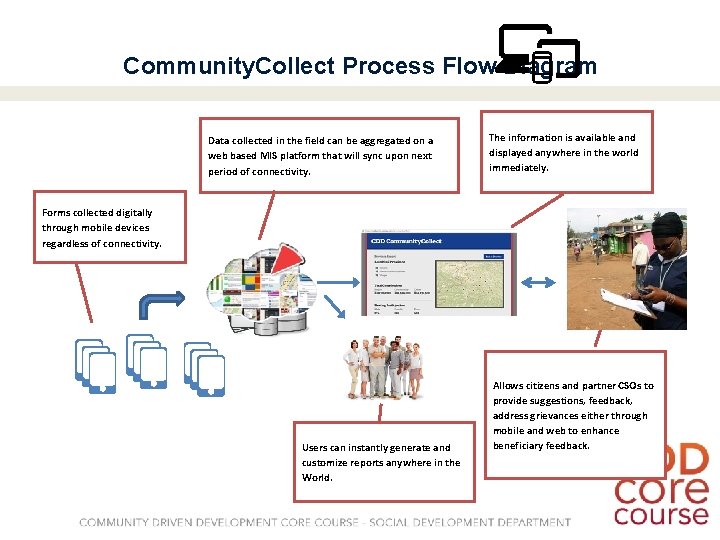

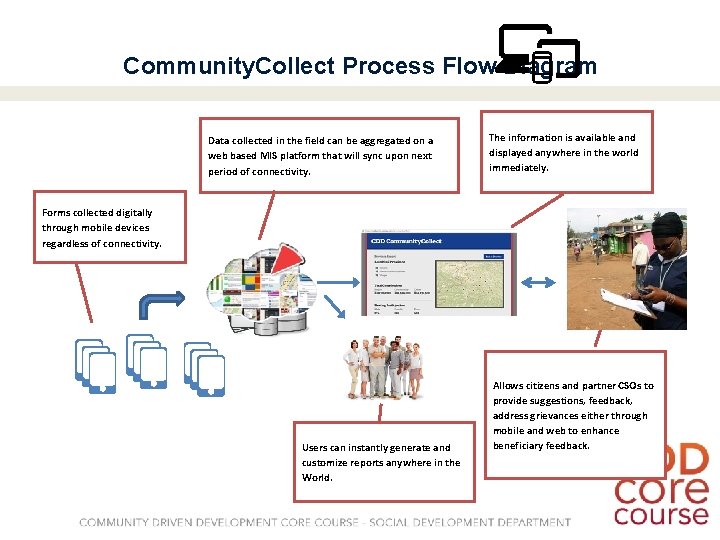

Community.Collect Process Flow Diagram

- Data collected in the field can be aggregated on a web-based MIS platform that will sync upon the next period of connectivity.
- The information is available and displayed anywhere in the world immediately.
- Forms are collected digitally through mobile devices regardless of connectivity.
- Users can instantly generate and customize reports anywhere in the world.
- Allows citizens and partner CSOs to provide suggestions and feedback and to raise grievances through either mobile or web, to enhance beneficiary feedback.

Global Programs Unit
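The collect-offline, sync-later flow described above can be sketched as a small local queue. This is only an illustration under stated assumptions, not the actual platform: `OfflineFormQueue`, `submit`, `sync`, and the `upload` callback are hypothetical names, and a lost connection is assumed to surface as a `ConnectionError` raised by `upload`.

```python
import json
import uuid
from typing import Callable, Dict, List


class OfflineFormQueue:
    """Hypothetical local store: forms are saved first, uploaded when possible."""

    def __init__(self, upload: Callable[[str], None]) -> None:
        # Assumed transport function; it raises ConnectionError when offline.
        self._upload = upload
        # Forms captured on the device but not yet received by the server.
        self._pending: List[Dict] = []

    def submit(self, form: Dict) -> str:
        """Record a form locally, regardless of connectivity, and return its id."""
        record = {"id": str(uuid.uuid4()), "data": form}
        self._pending.append(record)
        return record["id"]

    def sync(self) -> int:
        """Upload pending forms in order; stop at the first connection failure."""
        sent = 0
        while self._pending:
            record = self._pending[0]
            try:
                self._upload(json.dumps(record))
            except ConnectionError:
                break  # still offline: keep remaining forms for the next attempt
            self._pending.pop(0)
            sent += 1
        return sent

    @property
    def pending_count(self) -> int:
        """Number of forms still waiting for connectivity."""
        return len(self._pending)
```

A field device would call `submit` for every completed form and call `sync` whenever connectivity might be available. Forms leave the queue only after a successful upload, so a dropped connection delays data but does not lose it.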

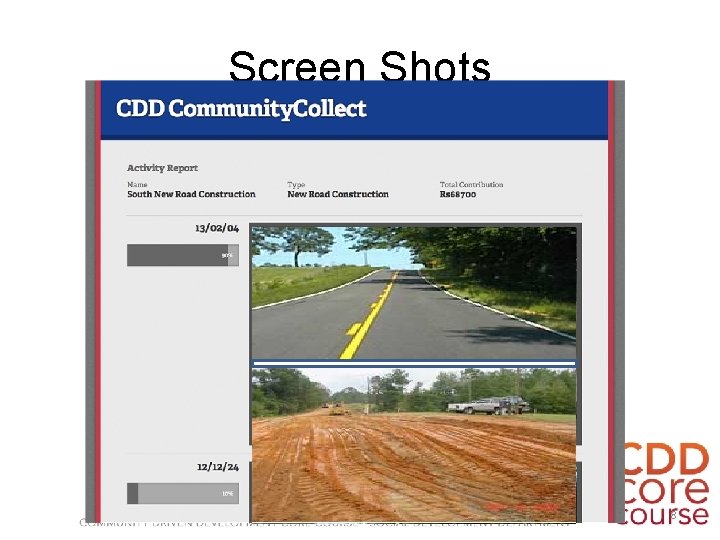

Screen Shots

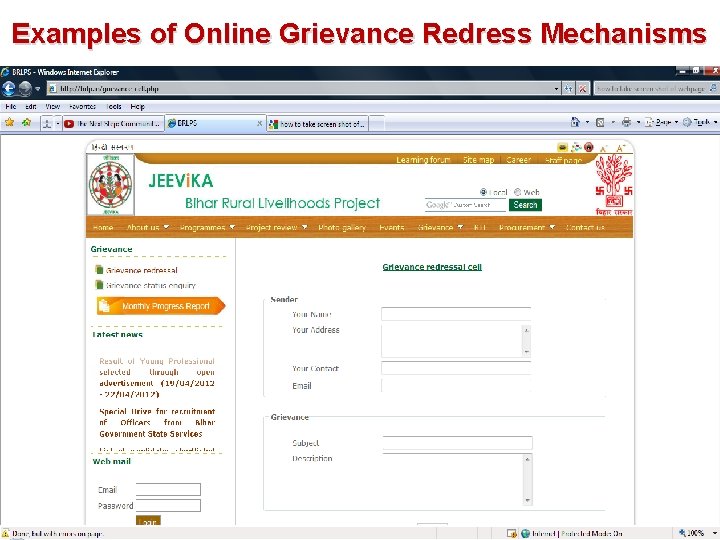

Examples of Online Grievance Redress Mechanisms

How Do We Evaluate?

Key Guiding Principles in Impact Evaluation:

- The counterfactual: comparison/control groups
- Sample size large enough to generate statistically significant results
- Baseline data
- Mix of quantitative and qualitative methods is ideal
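To make the sample-size principle above concrete, the sketch below uses the standard textbook formula for comparing the means of two equal-sized groups, inflated by a design effect for cluster sampling (for example, households sampled within communities). The slides do not prescribe a formula; the function name, parameters, and defaults here are assumptions chosen for illustration.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(effect: float, sd: float, alpha: float = 0.05,
                        power: float = 0.80, cluster_size: int = 1,
                        icc: float = 0.0) -> int:
    """Units needed in each of the treatment and control groups.

    effect       -- smallest difference in means worth detecting
    sd           -- standard deviation of the outcome
    alpha        -- two-sided significance level
    power        -- probability of detecting the effect if it exists
    cluster_size -- units sampled per cluster (1 means no clustering)
    icc          -- intra-cluster correlation of the outcome
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    # Two-sample comparison of means with equal group sizes.
    n = 2 * ((z_alpha + z_power) * sd / effect) ** 2
    # Clustered samples carry less information per unit than simple random ones.
    design_effect = 1 + (cluster_size - 1) * icc
    return math.ceil(n * design_effect)
```

The inputs drive the answer: halving the detectable effect roughly quadruples the required sample, and even a modest intra-cluster correlation raises it substantially when many households are drawn from each community.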

Types of Evaluation Work

- Impact Evaluation: Rigorous quantitative evaluations which attribute impact on outcome indicators to the project (e.g. Indonesia KDP/PNPM-Rural, Nepal PAF, or Afghanistan NSP)
  - Purpose: Establish effectiveness of the project in achieving development objectives
  - Best practice: Treatment and control groups measured ex-ante and ex-post project implementation; could be through:
    - Randomized Control Trials (RCTs)
    - Non/quasi-experimental techniques (e.g. Propensity Score Matching)
  - Outcome indicators based on overall project objectives (including per capita consumption, access to health care and education, employment)
  - Qualitative component to determine how and why impacts are occurring
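When treatment and control groups are both measured before and after implementation, as described above, one common way to use the four sets of measurements is a difference-in-differences estimate. The slides do not name this estimator, so the sketch below is an illustration rather than the method of any study cited; the function and argument names are hypothetical.

```python
from statistics import mean
from typing import Sequence


def difference_in_differences(treat_before: Sequence[float],
                              treat_after: Sequence[float],
                              control_before: Sequence[float],
                              control_after: Sequence[float]) -> float:
    """Change in the treatment group minus change in the control group."""
    treat_change = mean(treat_after) - mean(treat_before)
    # The control group's change stands in for the counterfactual trend:
    # what would have happened to treated communities without the project.
    control_change = mean(control_after) - mean(control_before)
    return treat_change - control_change
```

The estimate is only as credible as the assumption that both groups would have followed the same trend without the project, which is why baseline data and a well-chosen comparison group matter so much.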

Types of Evaluation Work (contd.)

- Infrastructure Studies: Rigorously developed methods using economists and engineers to assess sub-project infrastructure (e.g. Burkina Faso Community Based Rural Development Project)
  - Purpose: establish effectiveness of the project as an infrastructure delivery system
  - EIRR (economic internal rate of return): direct and indirect economic impact on the local economy
  - Quality: based on existing standards
  - Cost-effectiveness: relative to equivalent government construction
- Thematic Evaluations: Can be done on specific issues of interest (e.g. gender impacts, procurement, micro-finance, corruption, etc.), normally using qualitative approaches (e.g. PNPM Marginalized Groups study)
  - Smaller sample size allows methodological flexibility
  - Techniques include Focus Group Discussions, Key Informant Interviews and Direct Observation
- Randomized Pilots for new programs: Offer a mechanism to rigorously test new design options during the umbrella project’s implementation period, using smaller-scale interventions for which rigorous impact evaluation is built in (e.g. TASAF Community Based Conditional Cash Transfer Pilot)
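The EIRR criterion listed under Infrastructure Studies above is the discount rate at which the net present value of a sub-project's yearly net economic benefits equals zero. A minimal sketch of that calculation follows, using bisection. The function names, search interval, and tolerance are assumptions made for illustration, and valuing the benefits themselves is the hard part that the economists and engineers would supply.

```python
from typing import Sequence


def npv(rate: float, flows: Sequence[float]) -> float:
    """Net present value of yearly net benefits; flows[0] is year 0."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(flows))


def eirr(flows: Sequence[float], low: float = -0.99, high: float = 1.0,
         tol: float = 1e-7) -> float:
    """Rate in [low, high] at which NPV is zero, found by bisection."""
    f_low, f_high = npv(low, flows), npv(high, flows)
    if f_low * f_high > 0:
        raise ValueError("NPV does not change sign in the search interval")
    while high - low > tol:
        mid = (low + high) / 2
        f_mid = npv(mid, flows)
        if f_low * f_mid <= 0:
            high = mid  # the root lies in the lower half
        else:
            low, f_low = mid, f_mid  # the root lies in the upper half
    return (low + high) / 2
```

Here `flows` would hold the construction cost as a negative value in year 0, followed by the estimated net economic benefits for each later year. The result is then compared with a benchmark discount rate.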

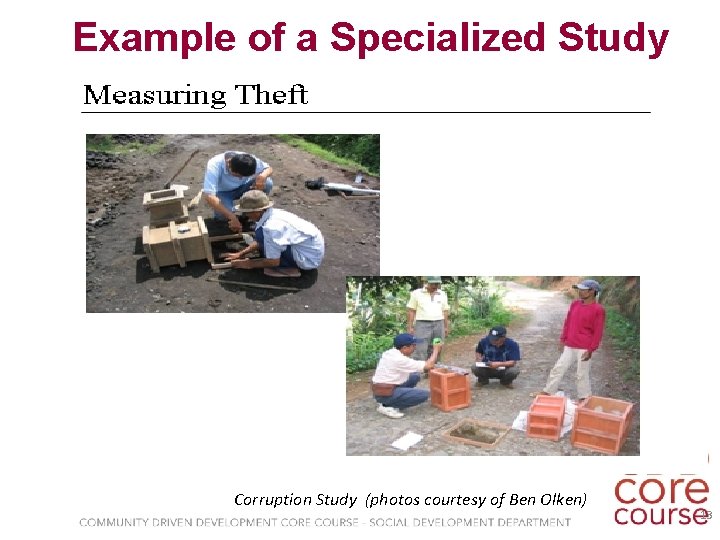

Example of a Specialized Study

Corruption Study (photos courtesy of Ben Olken)

Lessons Learned about CDD Evaluations

- Don’t mess around. These evaluations are tricky and more challenging than single-sector evaluations. Hire the expertise you need. Not all evaluators are alike.
- Do not compromise on rigor or quality. One rigorous study is better than several mediocre ones.
- Work closely with the evaluators to make sure they understand the project purpose and design.
- Be careful about what you measure (and what you promise). CDD projects are not a magic bullet. Evaluations should focus on whether the project achieved its objectives. Be realistic about what the impacts will be.