

Modular Neural Networks: SOM, ART, and CALM
Jaap Murre, University of Amsterdam, University of Maastricht
jaap@murre.com, http://www.neuromod.org

Modular neural networks • Why modularity? • Kohonen’s Self-Organizing Map (SOM) • Grossberg’s Adaptive Resonance Theory (ART) • Categorizing And Learning Module, CALM (Murre, Phaf, & Wolters, 1992)

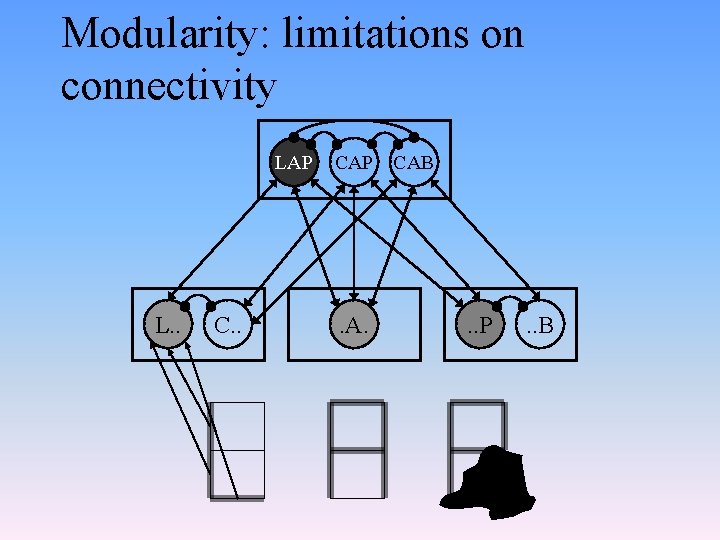

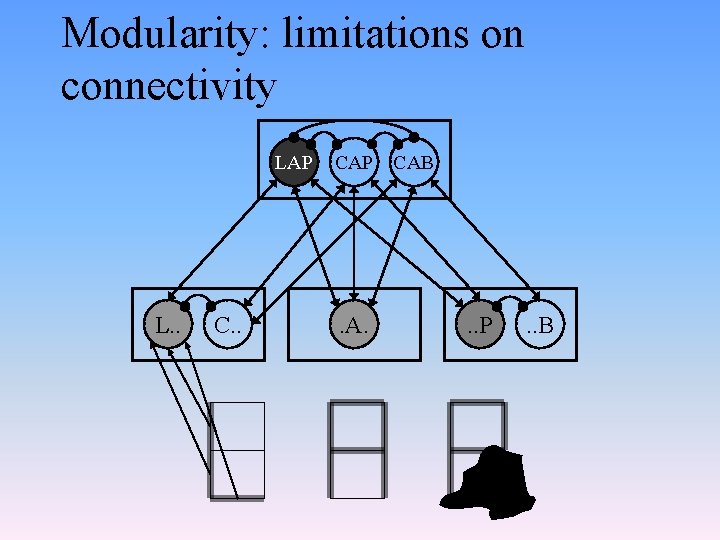

Modularity: limitations on connectivity [Figure: word nodes LAP, CAP, CAB and letter nodes L, A, P, B]

Modularity • Scalability • Re-use in design and evolution • Coarse steering of development; learning provides fine structure • Improved generalization because of fewer connections • Strong evidence from neurobiology
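To make the point about fewer connections concrete, here is a small connection count. It assumes, purely for illustration, that full connectivity holds only inside each module and that any inter-module pathways are added explicitly; the function names are hypothetical.

```python
def full_connections(n_nodes):
    """Directed connections when every node projects to every other node."""
    return n_nodes * (n_nodes - 1)


def modular_connections(n_modules, nodes_per_module, inter_module_links=0):
    """Connections when full connectivity is restricted to within modules,
    plus an explicitly given number of inter-module links (an assumption)."""
    return n_modules * full_connections(nodes_per_module) + inter_module_links


print(full_connections(1000))                      # 999000
print(modular_connections(10, 100))                # 99000
# Adding 9 fully connected 100 -> 100 pathways between successive modules:
print(modular_connections(10, 100, 9 * 100 * 100))  # 189000
```

Even with several dense pathways between modules, the modular network has far fewer free weights than the fully connected one with the same number of nodes.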

Self-Organizing Maps (SOMs): Topological Representations

Map formation in the brain • Topographic maps are omnipresent in the sensory and motor regions of the brain – retinotopic maps: neurons ordered according to the location of their receptive field on the retina – tonotopic maps: neurons ordered according to the tone to which they are sensitive – maps in somatosensory cortex: neurons ordered according to the body part to which they are sensitive – maps in motor cortex: neurons ordered according to the muscles they control

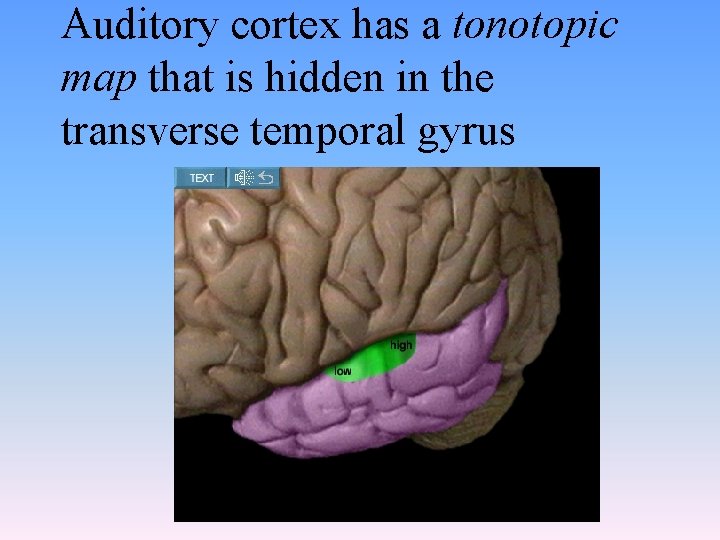

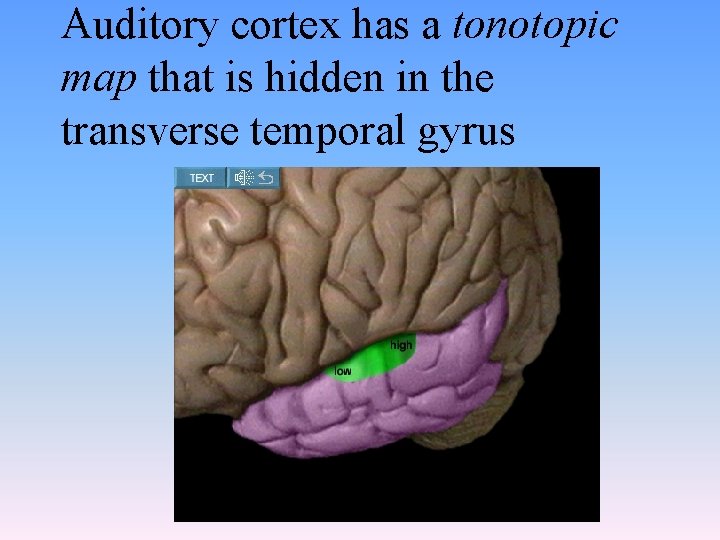

Auditory cortex has a tonotopic map that is hidden in the transverse temporal gyrus

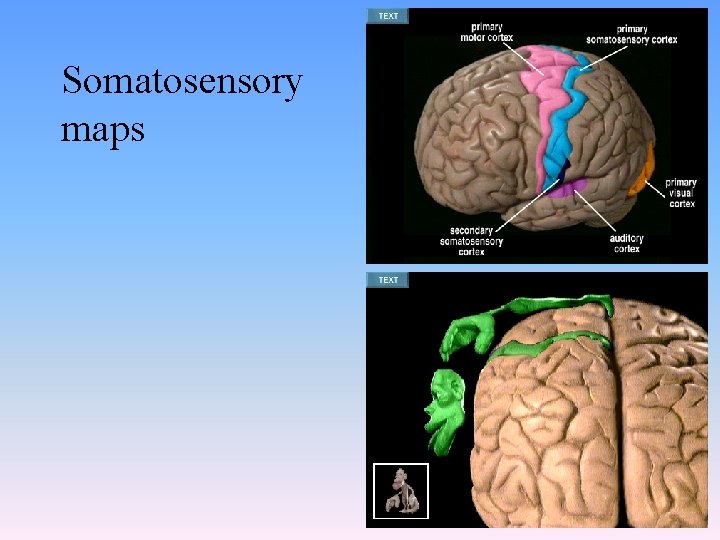

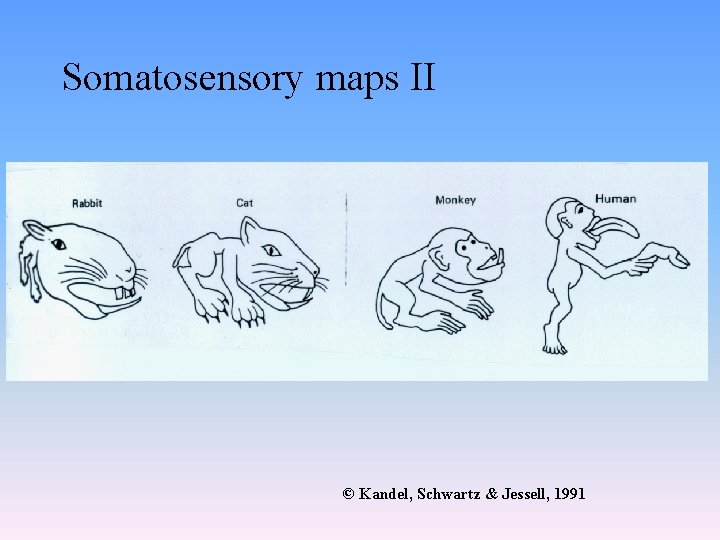

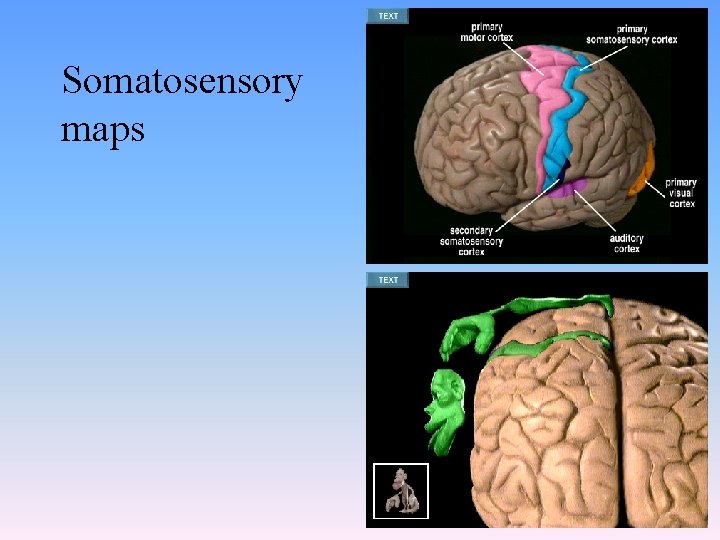

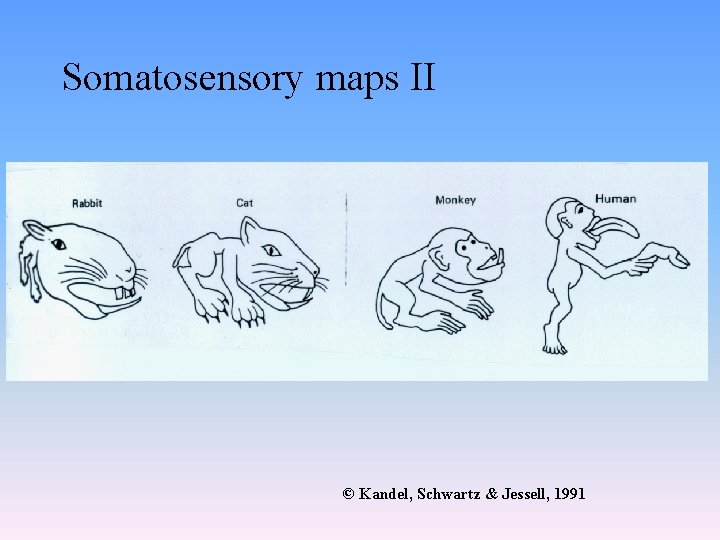

Somatosensory maps

Somatosensory maps II © Kandel, Schwartz & Jessell, 1991

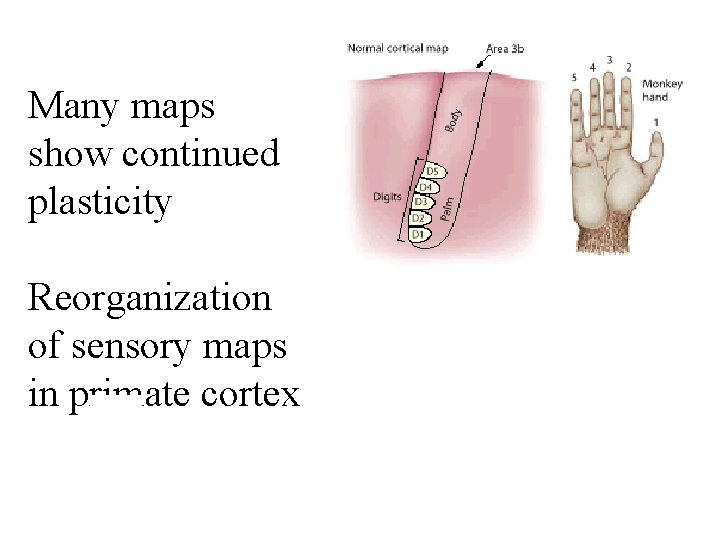

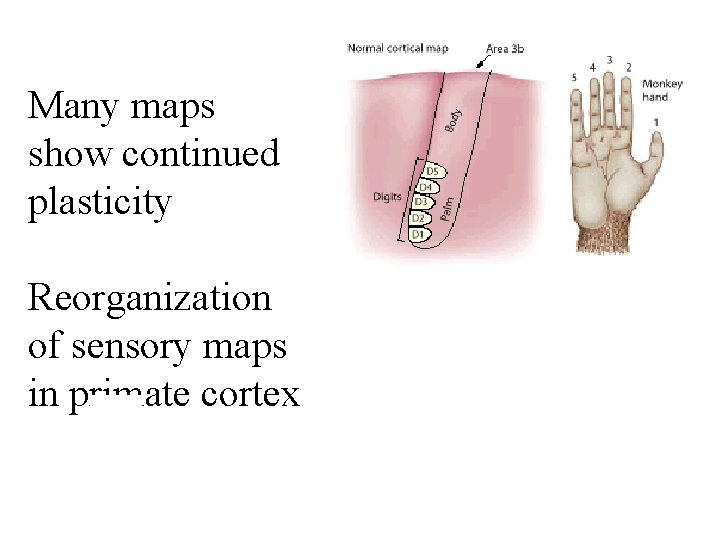

Many maps show continued plasticity: reorganization of sensory maps in primate cortex

Kohonen maps • Teuvo Kohonen was the first to show how such maps may develop • Self-Organizing Maps (SOMs) • Demonstration: the ordering of colors (colors are vectors in a 3-dimensional space of brightness, hue, and saturation).

Kohonen algorithm • Finding the activity bubble • Updating the weights for the nodes in the active bubble

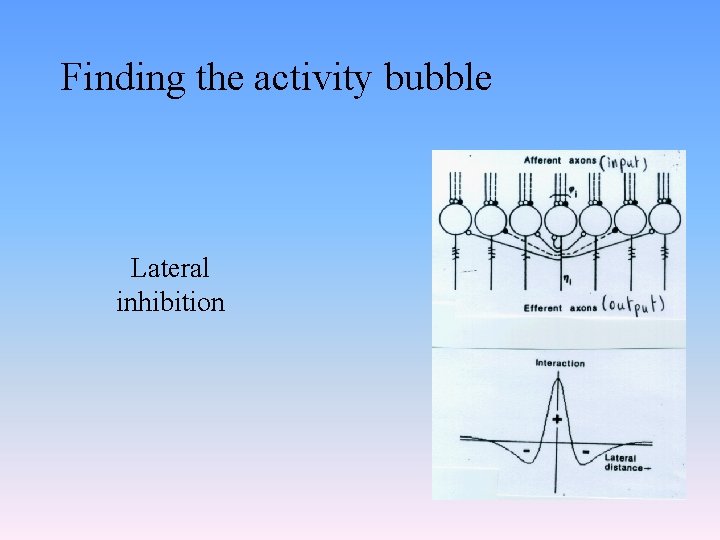

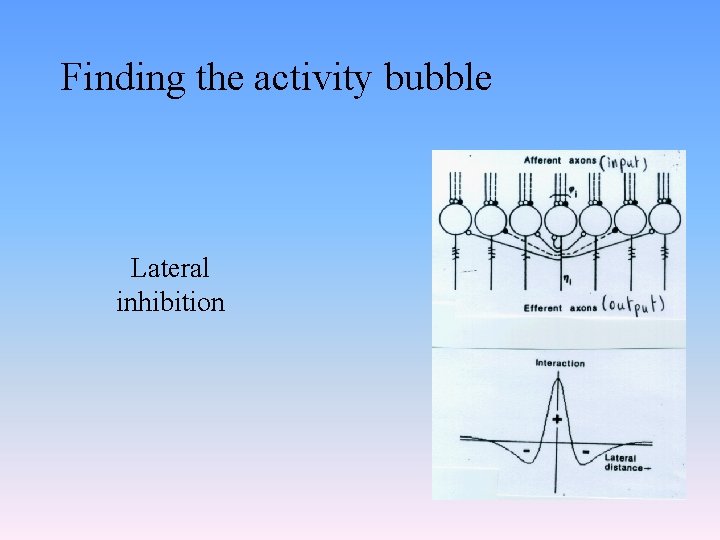

Finding the activity bubble Lateral inhibition

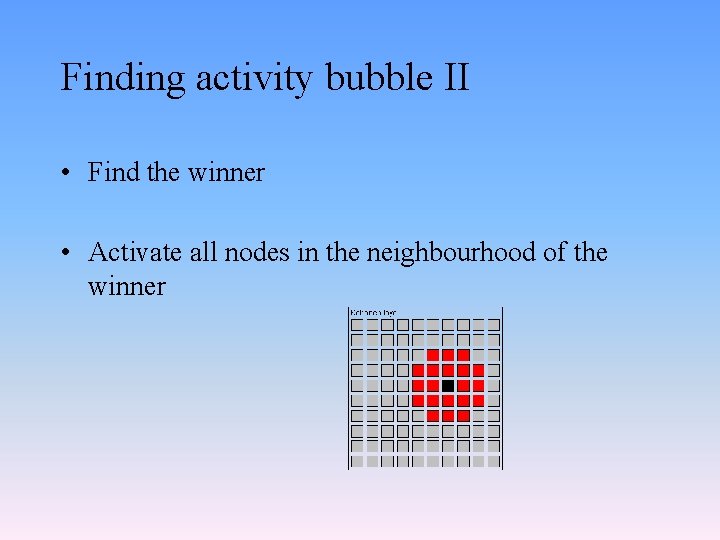

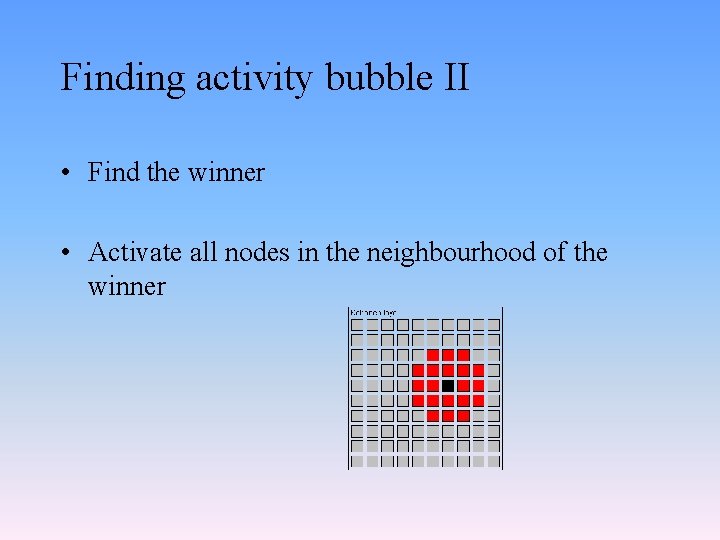

Finding the activity bubble II • Find the winner • Activate all nodes in the neighbourhood of the winner
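The two steps on this slide can be sketched as follows. This is a minimal illustration, not Kohonen's code: the nodes sit on a 2-D grid stored row by row, the neighbourhood is taken to be a Euclidean radius on that grid (square or hexagonal neighbourhoods are equally possible), and `find_winner` and `neighbourhood` are hypothetical helper names.

```python
import math


def find_winner(weights, x):
    """Index of the node whose weight vector has minimal squared distance to x."""
    def sq_dist(w):
        return sum((xj - wj) ** 2 for xj, wj in zip(x, w))
    return min(range(len(weights)), key=lambda i: sq_dist(weights[i]))


def neighbourhood(winner, grid_width, n_nodes, radius):
    """Nodes of a 2-D grid (stored row by row) within `radius` of the winner."""
    wr, wc = divmod(winner, grid_width)
    return [i for i in range(n_nodes)
            if math.hypot(i // grid_width - wr, i % grid_width - wc) <= radius]


# Usage: a 3 x 3 grid in which the centre node's weights are closest to the input.
weights = [[0.1 * i, 0.0] for i in range(9)]
winner = find_winner(weights, [0.42, 0.0])
print(winner)                              # 4
print(neighbourhood(winner, 3, 9, 1.0))    # [1, 3, 4, 5, 7]
```

With radius 1.0 the diagonal neighbours (at distance √2) are left out; a radius of 1.5 would activate the whole 3 x 3 grid.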

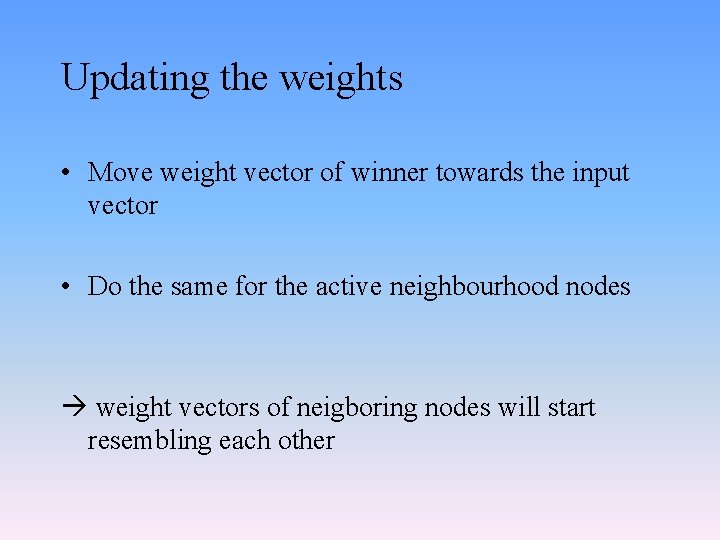

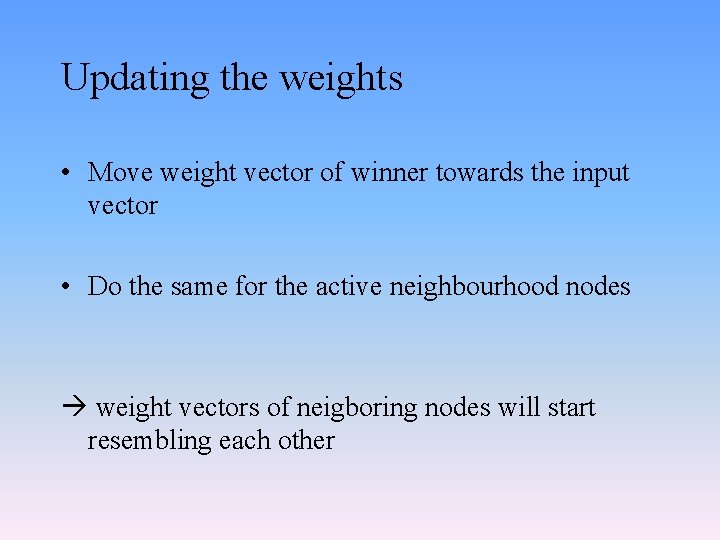

Updating the weights • Move the weight vector of the winner towards the input vector • Do the same for the weight vectors of the active neighbourhood nodes • As a result, the weight vectors of neighbouring nodes start to resemble each other
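A sketch of the update step, assuming the winner and its neighbours all use the same step size `alpha` (a simplification; the step may also fall off with distance from the winner). It also shows why neighbouring weight vectors come to resemble each other: moving two vectors a fraction alpha towards the same input scales the difference between them by (1 − alpha). The function names are hypothetical.

```python
def update_weights(weights, x, active, alpha):
    """Move each active node's weight vector a fraction alpha towards input x."""
    for i in active:
        weights[i] = [w + alpha * (xj - w) for w, xj in zip(weights[i], x)]
    return weights


def sq_dist(u, v):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


# The winner (node 0) and one neighbour (node 1) move towards the input;
# node 2 is outside the neighbourhood and keeps its weights.
weights = [[0.0, 0.0], [1.0, 1.0], [0.0, 1.0]]
before = sq_dist(weights[0], weights[1])
update_weights(weights, [1.0, 0.0], active=[0, 1], alpha=0.5)
after = sq_dist(weights[0], weights[1])
print(weights)          # [[0.5, 0.0], [1.0, 0.5], [0.0, 1.0]]
print(before, after)    # 2.0 0.5
```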

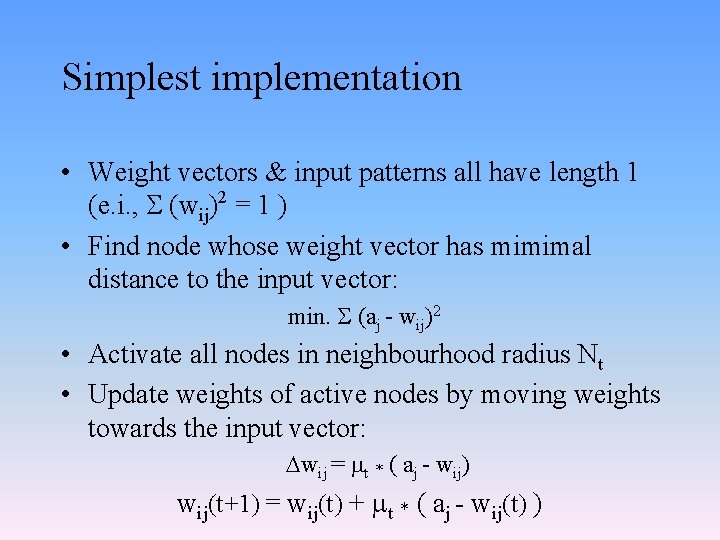

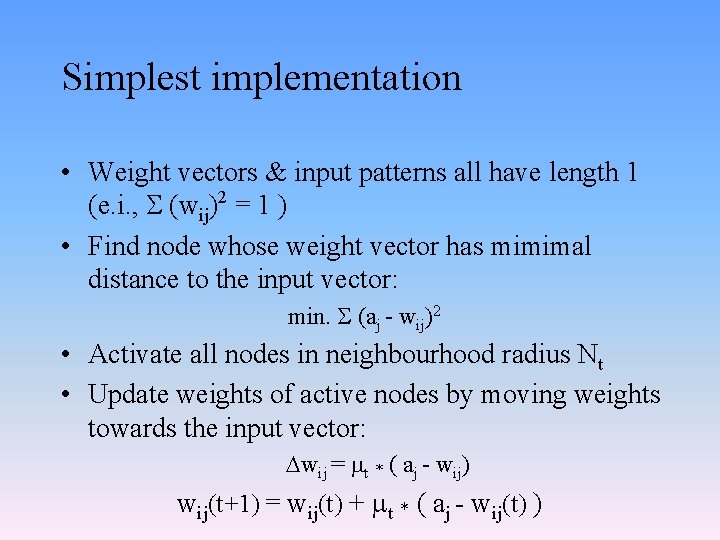

Simplest implementation • Weight vectors and input patterns all have length 1 (i.e., $\sum_j w_{ij}^2 = 1$) • Find the node $i$ whose weight vector has minimal distance to the input vector: $\min_i \sum_j (a_j - w_{ij})^2$ • Activate all nodes within neighbourhood radius $N_t$ of the winner • Update the weights of the active nodes by moving them towards the input vector: $\Delta w_{ij} = \alpha_t (a_j - w_{ij})$, i.e., $w_{ij}(t+1) = w_{ij}(t) + \alpha_t \big(a_j - w_{ij}(t)\big)$, where $\alpha_t$ is the learning rate at time $t$
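Putting the steps together, here is a minimal sketch of this simplest implementation for a 1-D chain of nodes. The decay schedules for the learning rate `alpha` and the neighbourhood `radius`, the quarter-circle training data, and all function names are assumptions chosen for illustration. After each update the weight vector is rescaled to length 1 so that the length-1 assumption keeps holding.

```python
import math
import random


def normalize(v):
    """Scale v to length 1, as the simplest implementation requires."""
    norm = math.sqrt(sum(c * c for c in v))
    return [c / norm for c in v]


def train_som(inputs, n_nodes, steps, seed=0):
    """Train a 1-D chain of SOM nodes on unit-length input vectors."""
    rng = random.Random(seed)
    dim = len(inputs[0])
    # Random unit-length starting weights with positive components (an assumption).
    weights = [normalize([rng.random() + 1e-9 for _ in range(dim)])
               for _ in range(n_nodes)]
    for t in range(steps):
        frac = t / steps
        alpha = 0.5 * (1.0 - frac) + 0.01           # assumed schedule for alpha_t
        radius = int((n_nodes // 2) * (1.0 - frac))  # assumed schedule for N_t
        x = rng.choice(inputs)
        # Winner: the node minimising sum_j (a_j - w_ij)^2.
        winner = min(range(n_nodes),
                     key=lambda i: sum((a - w) ** 2 for a, w in zip(x, weights[i])))
        # Every node within `radius` chain positions of the winner is active.
        lo, hi = max(0, winner - radius), min(n_nodes - 1, winner + radius)
        for i in range(lo, hi + 1):
            # w_ij(t+1) = w_ij(t) + alpha_t * (a_j - w_ij(t)), then back to length 1.
            weights[i] = normalize([w + alpha * (a - w)
                                    for w, a in zip(weights[i], x)])
    return weights


def mean_quantization_error(inputs, weights):
    """Average distance from each input to its closest weight vector."""
    return sum(min(math.dist(x, w) for w in weights) for x in inputs) / len(inputs)


# Usage: points on a quarter of the unit circle mapped onto a chain of 10 nodes.
data_rng = random.Random(1)
angles = [data_rng.uniform(0.0, math.pi / 2) for _ in range(200)]
inputs = [[math.cos(a), math.sin(a)] for a in angles]
weights = train_som(inputs, n_nodes=10, steps=3000)
node_angles = [math.atan2(w[1], w[0]) for w in weights]
print([round(a, 2) for a in node_angles])
print(round(mean_quantization_error(inputs, weights), 3))
```

With a neighbourhood that starts wide and shrinks, a 1-D chain like this typically ends up ordered, so that `node_angles` rise or fall monotonically along the chain; the mean quantization error gives a rough check that the nodes have spread out over the data.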

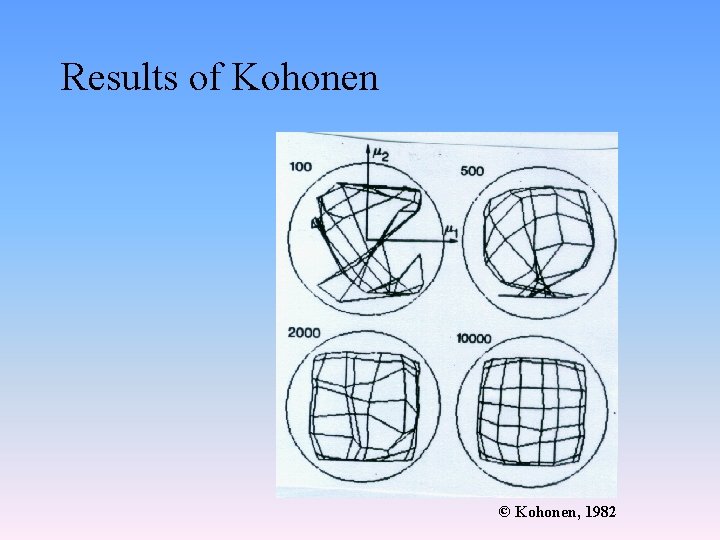

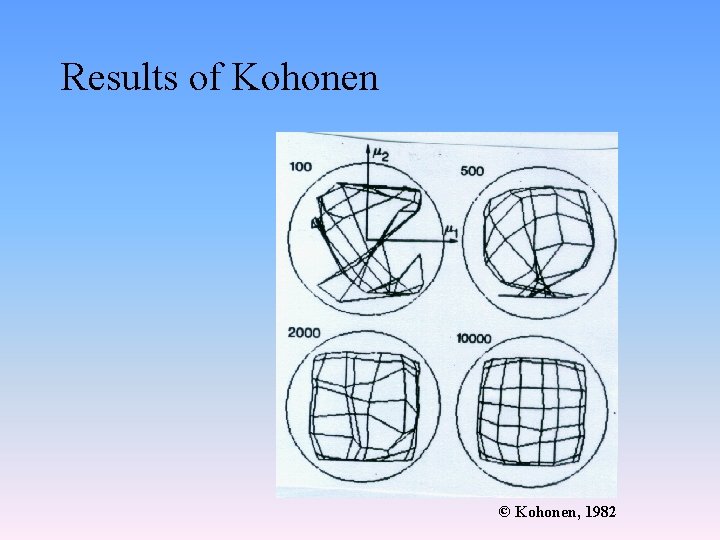

Results of Kohonen © Kohonen, 1982

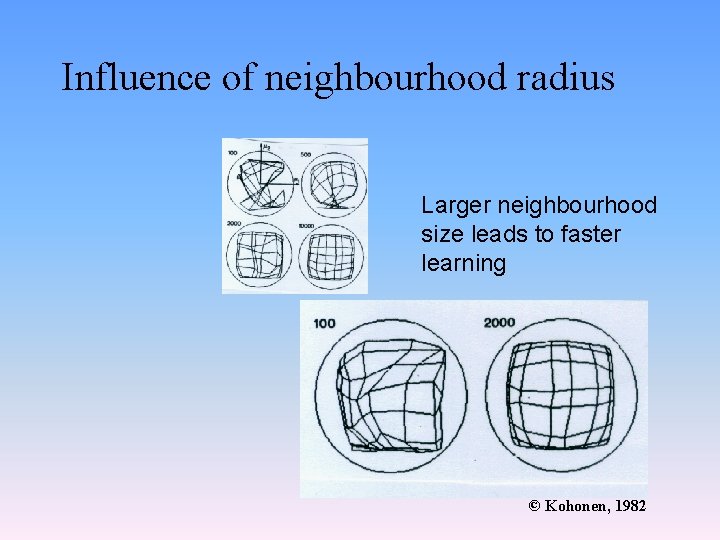

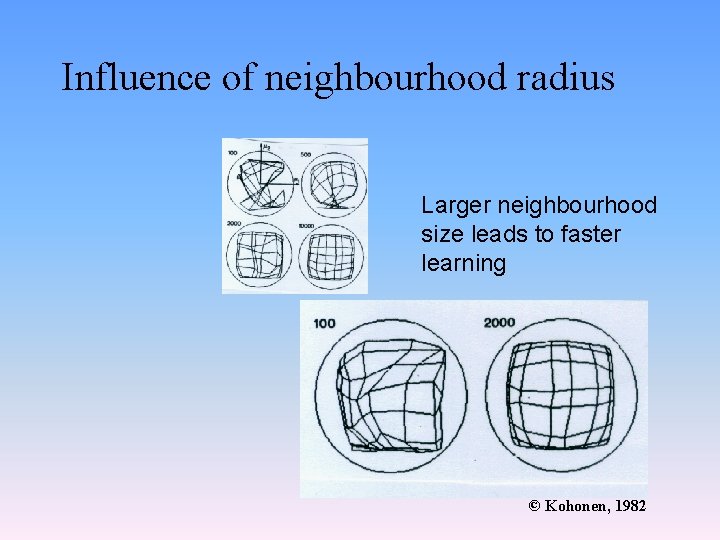

Influence of neighbourhood radius: a larger neighbourhood size leads to faster learning © Kohonen, 1982

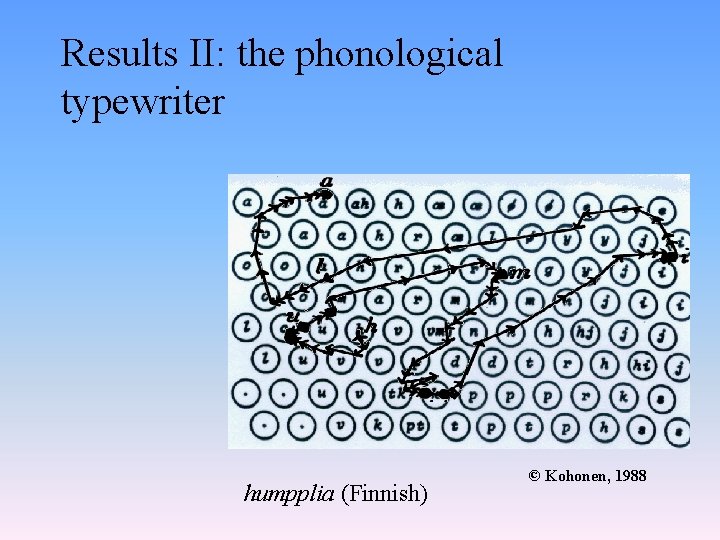

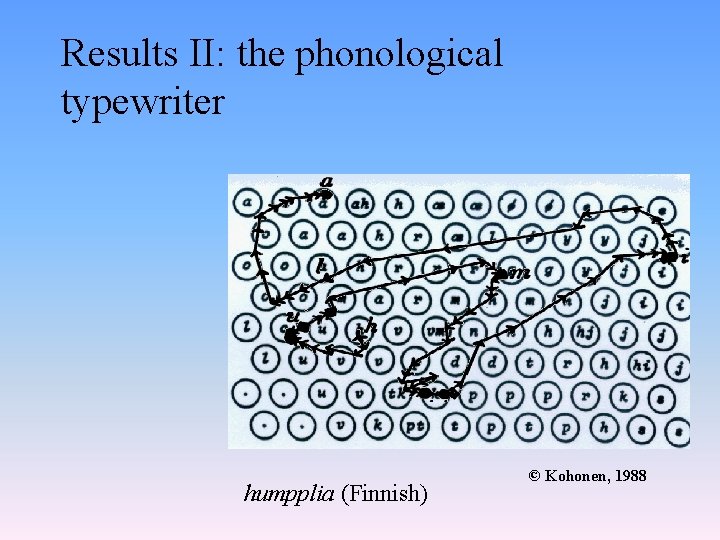

Results II: the phonological typewriter humpplia (Finnish) © Kohonen, 1988

Conclusions for SOM • Elegant • Prime example of unsupervised learning • Biologically relevant and plausible • Very good at discovering structure: – discovering categories – mapping the input onto a topographic map

Adaptive Resonance Theory (ART) Stephen Grossberg (1976)

Grossberg’s ART • Stability-Plasticity Dilemma • How to disentangle overlapping patterns?

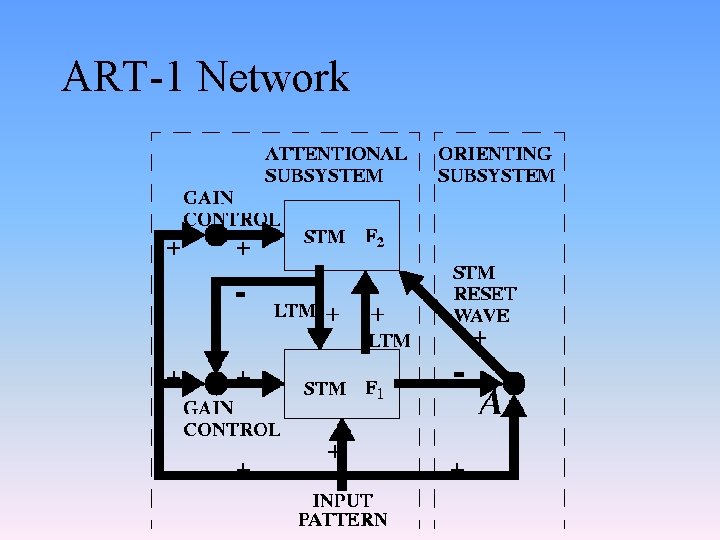

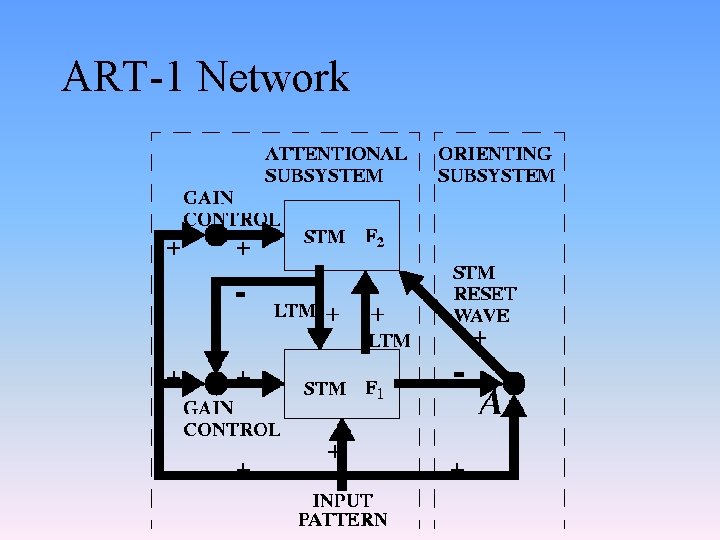

ART-1 Network

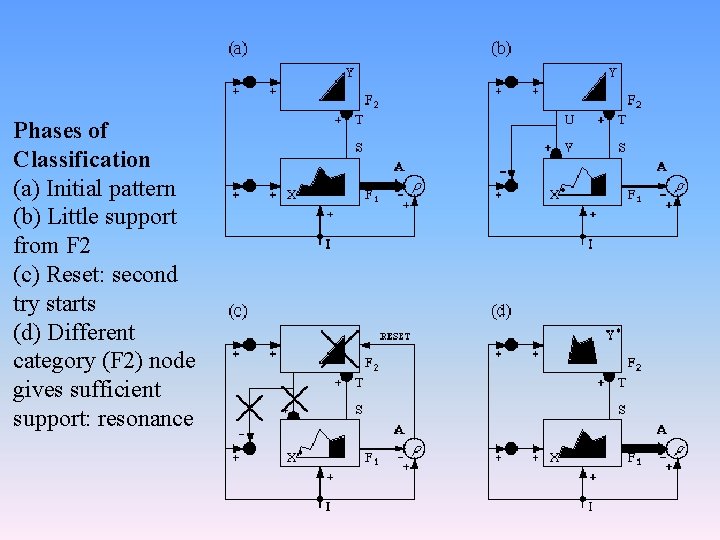

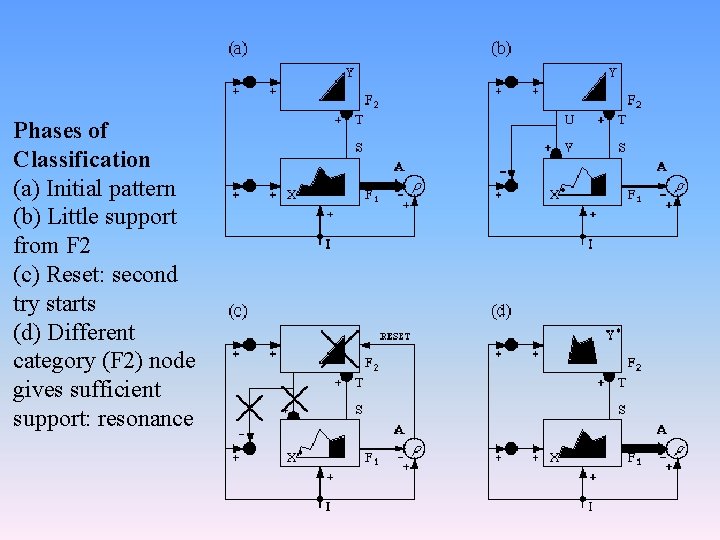

Phases of Classification (a) Initial pattern (b) Little support from F2 (c) Reset: second try starts (d) A different category (F2) node gives sufficient support: resonance
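The search cycle in these four phases can be sketched as below. This is a simplified, algorithmic version of ART-1 with binary inputs and fast learning, not Grossberg's differential-equation formulation: the vigilance `rho`, the choice parameter `beta`, and the choice and match functions follow the common textbook form of ART-1 and are assumptions here, as are the function names.

```python
def overlap(a, b):
    """Number of positions where both binary vectors are 1, i.e. |a AND b|."""
    return sum(x & y for x, y in zip(a, b))


def art1_present(pattern, templates, rho=0.7, beta=0.5):
    """Present one binary pattern; return the index of the resonating F2 node.

    `templates` holds one binary top-down template per committed F2 node and is
    updated in place (fast learning). rho is the vigilance, beta the choice
    parameter; both values are assumptions for illustration.
    """
    size = sum(pattern)
    reset = set()  # F2 nodes switched off after a failed match on this trial
    while True:
        candidates = [j for j in range(len(templates)) if j not in reset]
        if not candidates:
            # No committed node gives enough support: recruit a new F2 node.
            templates.append(list(pattern))
            return len(templates) - 1
        # Bottom-up choice: the strongest F2 node wins the competition.
        j = max(candidates,
                key=lambda c: overlap(pattern, templates[c]) / (beta + sum(templates[c])))
        # Top-down check: does the template support enough of the input?
        if size == 0 or overlap(pattern, templates[j]) / size >= rho:
            # Resonance: the template shrinks to its intersection with the input.
            templates[j] = [x & y for x, y in zip(pattern, templates[j])]
            return j
        reset.add(j)  # little support from F2: reset and search again


templates = []
for p in [(1, 1, 0, 0), (1, 1, 1, 0), (1, 1, 0, 0), (1, 1, 1, 0)]:
    print(p, "->", art1_present(p, templates))   # categories 0, 1, 0, 1
print(templates)                                 # [[1, 1, 0, 0], [1, 1, 1, 0]]
```

With the default vigilance of 0.7, the pattern (1, 1, 1, 0) fails the match test on the category learned for its subpattern (1, 1, 0, 0) and receives a category of its own, which is one way ART keeps overlapping patterns apart. With `rho=0.5` it would instead resonate with the first category.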

Categorizing And Learning Module (CALM) Murre, Phaf, and Wolters (1992)

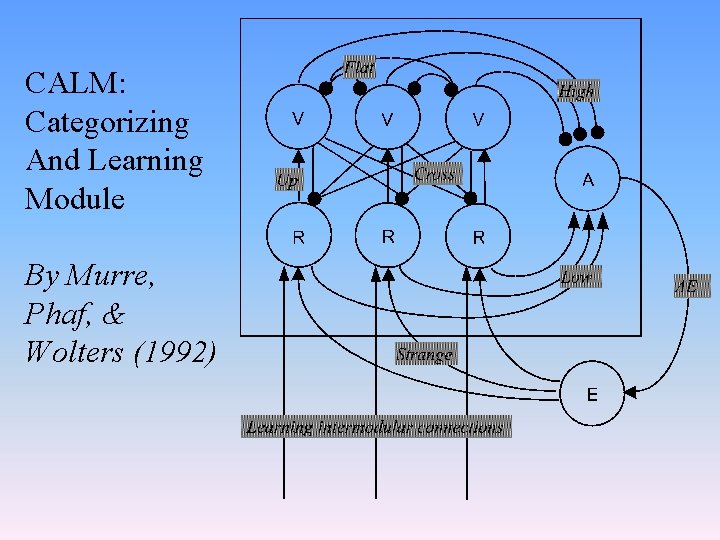

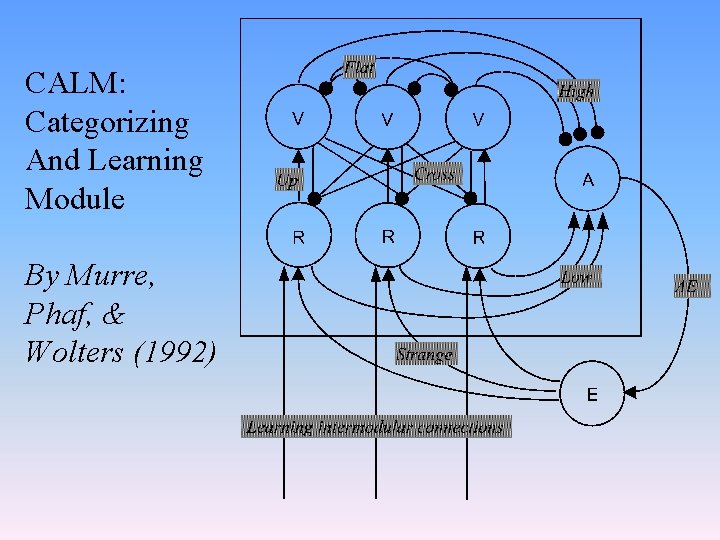

CALM: Categorizing And Learning Module • The CALM module is the basic unit in multimodular networks • It categorizes arbitrary input activation patterns and retains this categorization over time • CALM was developed for unsupervised learning but also works with supervision • Motivated by psychological, biological, and practical considerations

Important design principles in CALM • Modularity • Novelty dependent categorization and learning • Wiring scheme inspired by neocortical minicolumn

Elaboration versus activation • Novelty-dependent categorization and learning derived from memory psychology (Graf and Mandler, 1984) – Elaboration learning: active formation of new associations – Activation learning: passive strengthening of pre-existing associations • In CALM: the relative novelty of a pattern determines which type of learning takes place

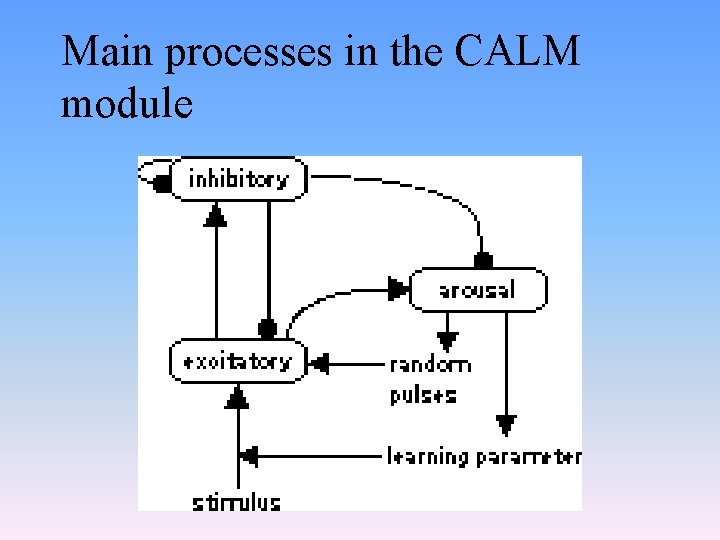

How elaboration learning is implemented in CALM • Novel pattern → much competition → high activation of the Arousal Node → high activation of the External Node → high learning parameter → high noise amplitude on the Representation Nodes • Elaboration learning drives: – self-induced noise – self-induced learning
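The last links of this chain, from the External (E) node's activation to the learning parameter and to the noise on the Representation (R) nodes, can be sketched as below. The linear form mu = d + wµE · a_E uses the `d` and `wµE` values listed with the CALM parameters; the uniform noise model scaled by the ER weight is a hypothetical simplification, and how competition drives the Arousal node is not modelled here.

```python
import random

D = 0.01       # d: base-rate learning (CALM parameter list)
W_MU_E = 0.05  # wµE: gain of the E-node on the learning parameter
W_ER = 0.25    # ER weight: E-node to Representation nodes


def learning_parameter(a_e):
    """Assumed form mu = d + wµE * a_E: base rate plus a novelty-driven boost."""
    return D + W_MU_E * a_e


def r_node_noise(a_e, n_r, rng):
    """Hypothetical noise model: uniform noise in [0, W_ER * a_E] per R-node."""
    return [rng.uniform(0.0, W_ER * a_e) for _ in range(n_r)]


rng = random.Random(0)
for label, a_e in [("familiar", 0.0), ("novel", 1.0)]:
    noise = r_node_noise(a_e, 4, rng)
    print(label, round(learning_parameter(a_e), 3), [round(v, 3) for v in noise])
```

A familiar pattern (E-node silent) gets only base-rate learning and no noise; a novel pattern raises both, which is the elaboration regime described above.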

Self-induced noise (cf. Boltzmann Machine) • Non-specific activations from sub-cortical structures in cortex • Optimal level of arousal for optimal learning performance (Yerkes-Dodson Law) • Noise drives the search for new representations • Noise breaks symmetry deadlocks • Noise may lead to convergence in deeper attractors

Self-induced learning • Possible role of the hippocampus and basal forebrain (cf. the modulatory system in TraceLink) • Shift from implicit to explicit memory • Remedy for the Stability-Plasticity Dilemma

Stability-Plasticity Dilemma, or the Problem of Real-Time Learning • “How can a learning system be designed to remain plastic, or adaptive, in response to significant events and yet remain stable in response to irrelevant events?” (Carpenter and Grossberg, 1988, p. 77)

Novelty-dependent categorization • A novel pattern implies a search for new representations • The search process is driven by novelty-dependent noise

Novelty dependent learning • Novel pattern: increased learning rate • Old pattern: base-rate learning

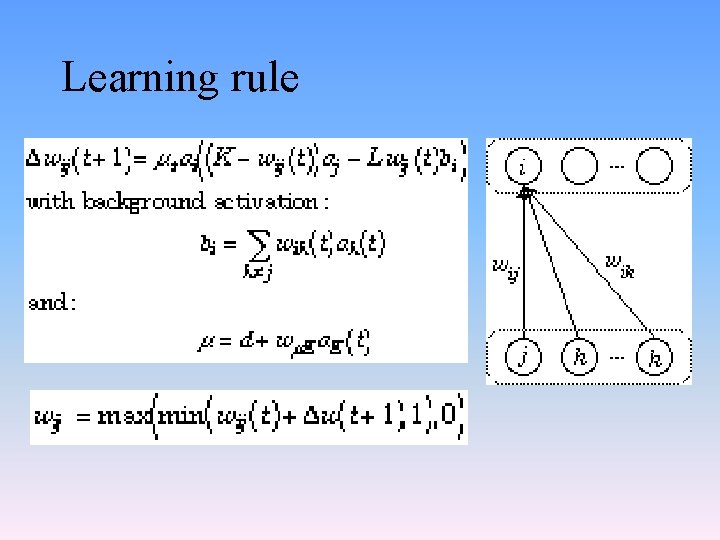

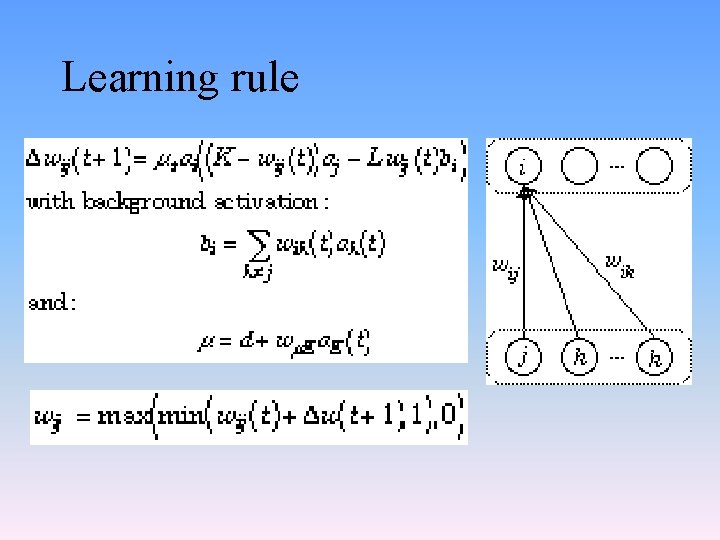

Learning rule derived from Grossberg’s ART • Extension of the Hebb rule • Allows both increases and decreases in weight • Only applied to excitatory connections (no sign changes allowed) • Weights are bounded between 0 and 1 • Allows separation of complex patterns from their composing subpatterns • In contrast to ART: the weight change is influenced by weighted neighbor activations

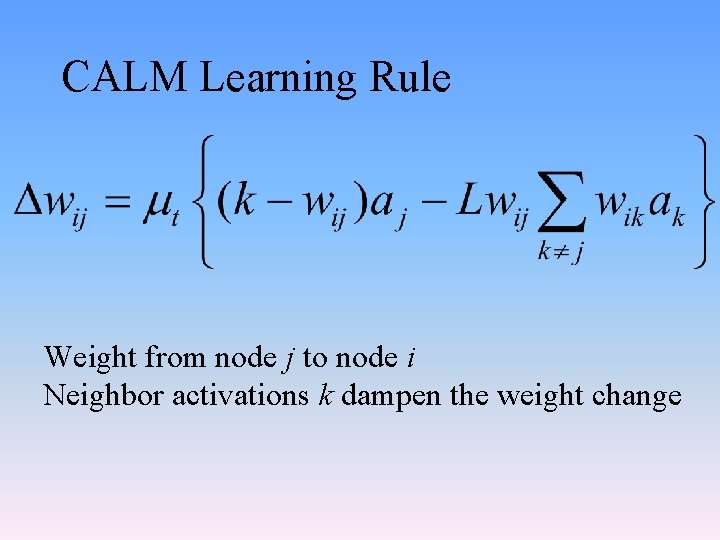

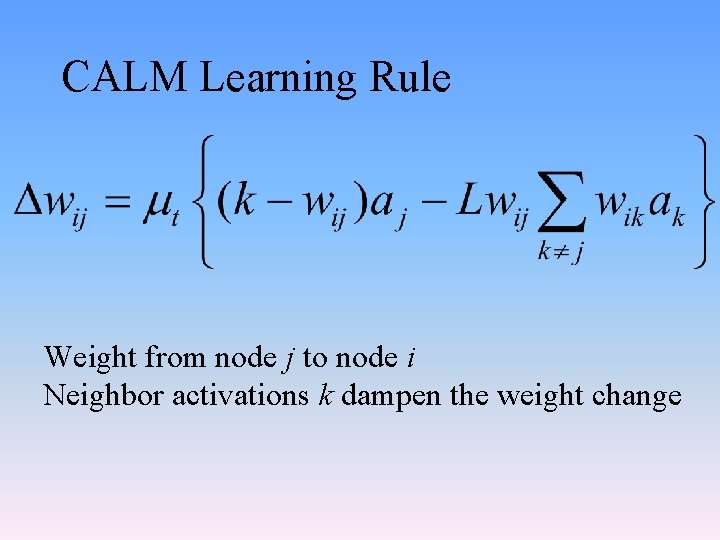

CALM Learning Rule: $w_{ij}$ is the weight from node $j$ to node $i$; the activations of neighbor nodes $k$ dampen the weight change
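A rule with the properties listed for CALM can be written as $\Delta w_{ij} = \mu\, a_i \left[(K - w_{ij})\, a_j - L\, w_{ij} \sum_{k \neq j} w_{ik} a_k\right]$. This is our reading of Murre, Phaf, and Wolters (1992) and should be checked against the paper. The first term is Hebbian growth bounded by K; the second lets the weighted activations of the neighbors k pull w_ij down. K and L default to the values in the CALM parameter list (both 1.0), mu is the learning parameter, and the function names are hypothetical.

```python
def calm_delta(w_row, a_in, a_i, j, mu, K=1.0, L=1.0):
    """Change of w_ij, the weight from input node j to receiving node i.

    w_row[k] holds w_ik for every input node k; a_in[k] is that node's activation.
    """
    w_ij = w_row[j]
    # Weighted activation of the neighbouring inputs k != j damps the change.
    neighbours = sum(w_row[k] * a_in[k] for k in range(len(a_in)) if k != j)
    return mu * a_i * ((K - w_ij) * a_in[j] - L * w_ij * neighbours)


def calm_update(w_row, a_in, a_i, mu, K=1.0, L=1.0):
    """Update all incoming weights of node i synchronously (old weights used)."""
    return [w + calm_delta(w_row, a_in, a_i, j, mu, K, L) for j, w in enumerate(w_row)]


w = [0.5, 0.5]
print(calm_update(w, a_in=[1.0, 0.0], a_i=1.0, mu=0.1))  # approx. [0.55, 0.475]
for _ in range(200):
    w = calm_update(w, a_in=[1.0, 0.0], a_i=1.0, mu=0.1)
print(w)  # w[0] close to K = 1, w[1] close to 0
```

With only input 0 active, w[0] grows towards K while w[1] shrinks towards 0, and for this step size neither leaves the interval [0, 1]. When the receiving node is silent (a_i = 0) nothing changes.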

Learning rule

Avoid neurobiologically implausible architectures • Random organization of excitatory and inhibitory connections • Learning may change a connection’s sign • Single nodes may give off both excitatory and inhibitory connections

Neurons form a dichotomy (Dale’s Law) • Neurons involved in long-range connections in cortex give off excitatory connections • Inhibitory neurons in cortex give off only inhibitory connections

CALM: Categorizing And Learning Module By Murre, Phaf, & Wolters (1992)

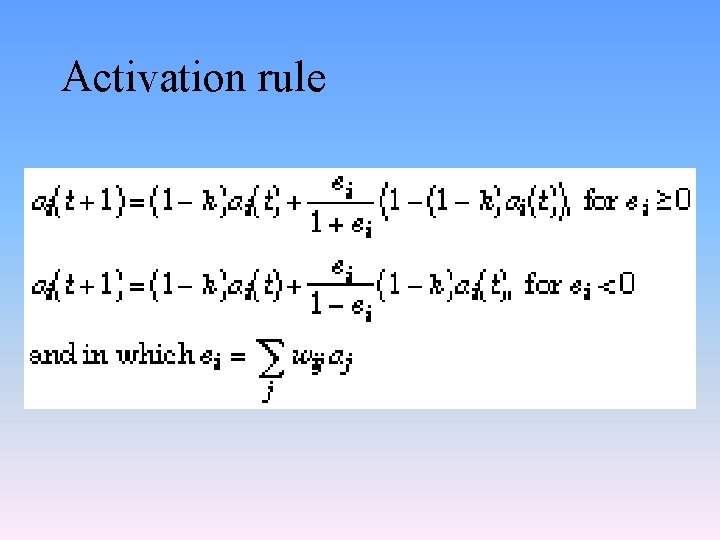

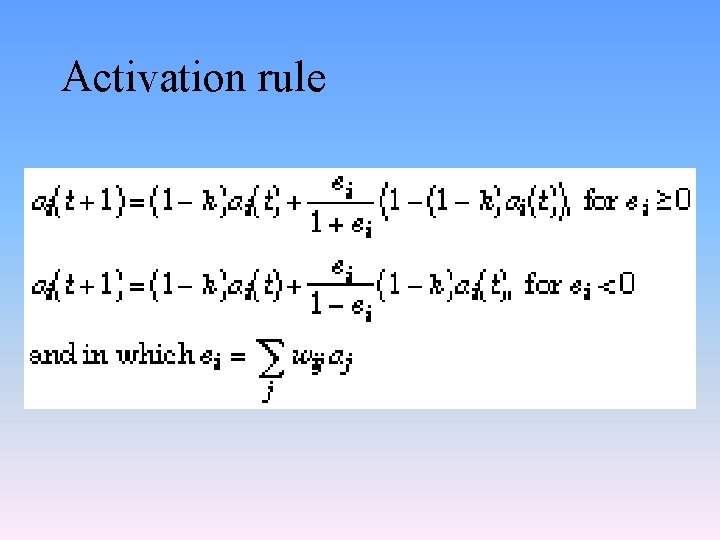

Activation rule
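The activation rule itself appears only as a figure. Below is a sketch of a Grossberg-style rule of the kind used in CALM, written from our understanding of Murre, Phaf, and Wolters (1992) rather than copied from the slide: the net input e_i = Σ_j w_ij a_j pushes the activation towards 1 when positive and towards 0 when negative, while k (0.05 in the CALM parameter list) acts as a decay. Treat the exact form and the function names as assumptions.

```python
def net_input(weights_i, activations):
    """e_i = sum_j w_ij * a_j"""
    return sum(w * a for w, a in zip(weights_i, activations))


def calm_activation(a_i, e_i, k=0.05):
    """One step of the assumed Grossberg-style activation rule; k is the decay."""
    decayed = (1.0 - k) * a_i
    if e_i >= 0.0:
        # Excitation drives the activation towards the ceiling of 1.
        return decayed + e_i / (1.0 + e_i) * (1.0 - decayed)
    # Inhibition drives the activation towards the floor of 0.
    return decayed + e_i / (1.0 - e_i) * decayed


a = 0.0
for _ in range(100):
    a = calm_activation(a, net_input([0.5, 0.5], [1.0, 1.0]))
print(round(a, 4))                 # 0.9524: settles below 1 for constant e = 1
print(calm_activation(0.5, -1.0))  # approx. 0.2375
```

Because the excitatory term is scaled by (1 − decayed) and the inhibitory term by the decayed activation itself, activations stay between 0 and 1 whatever the size of the net input.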

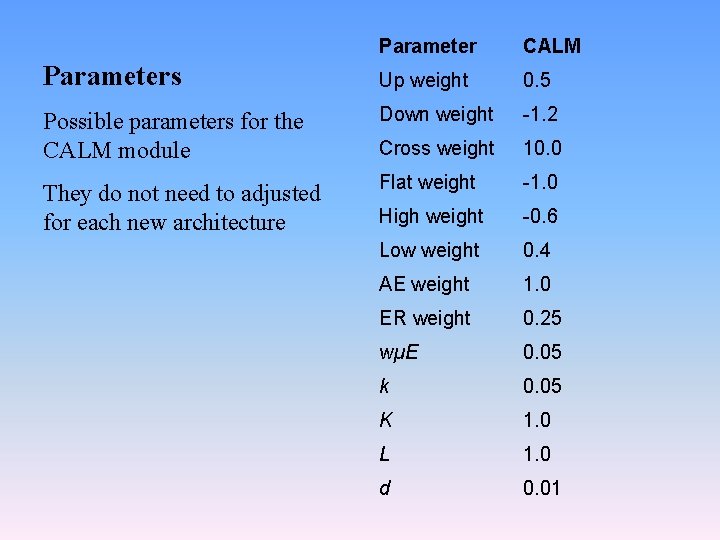

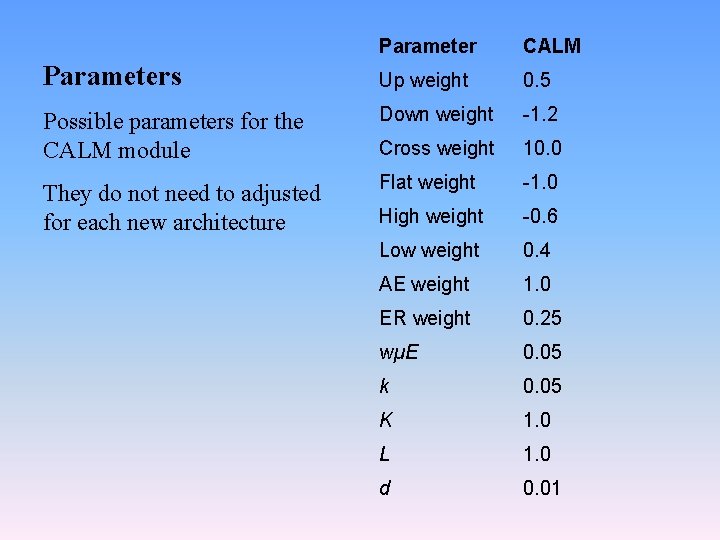

CALM Parameters: possible parameters for the CALM module. They do not need to be adjusted for each new architecture.

| Parameter | Value |
|---|---|
| Up weight | 0.5 |
| Down weight | -1.2 |
| Cross weight | 10.0 |
| Flat weight | -1.0 |
| High weight | -0.6 |
| Low weight | 0.4 |
| AE weight | 1.0 |
| ER weight | 0.25 |
| wµE | 0.05 |
| k | 0.05 |
| K | 1.0 |
| L | 1.0 |
| d | 0.01 |

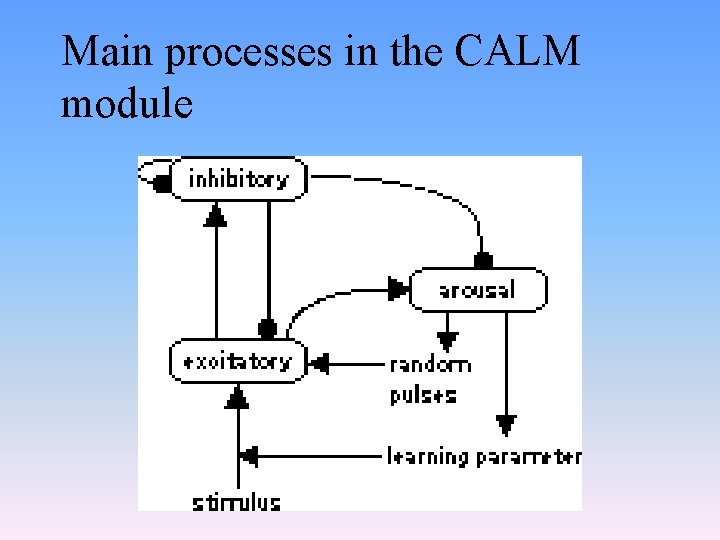

Main processes in the CALM module

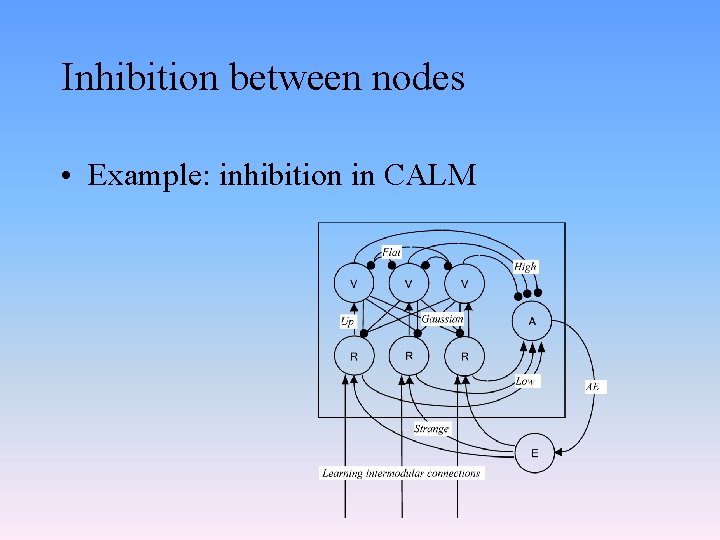

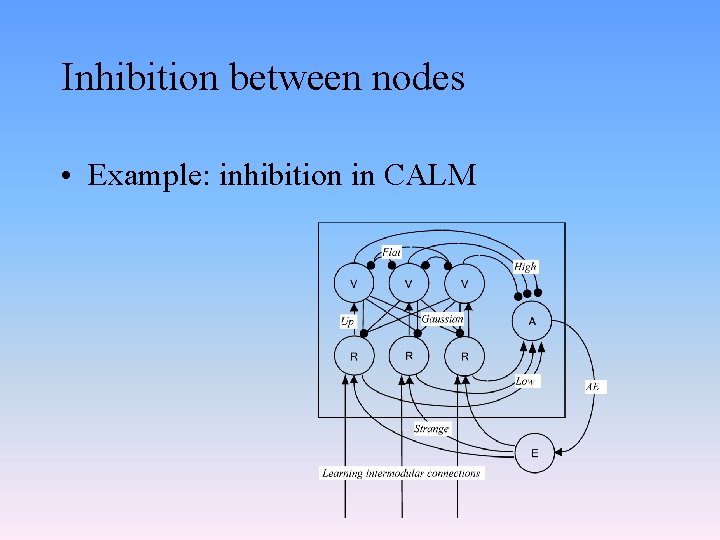

Inhibition between nodes • Example: inhibition in CALM