Modeling Information Seeking Behavior in Social Media

Eugene Agichtein, Emory University

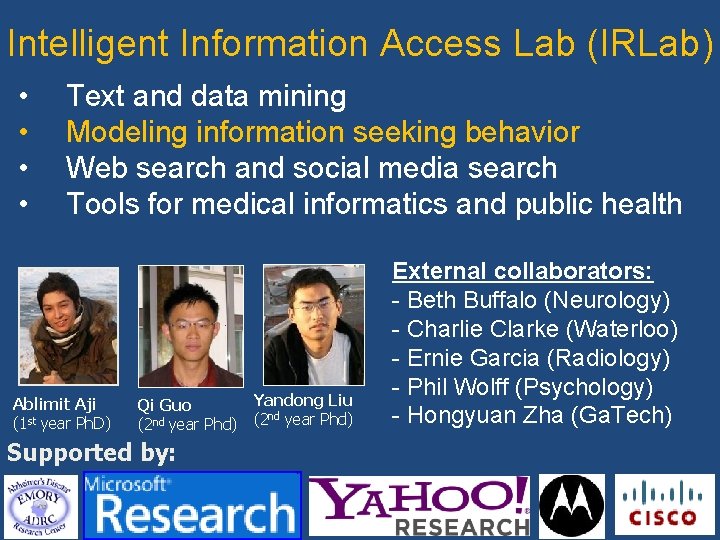

Intelligent Information Access Lab (IRLab)

• Text and data mining
• Modeling information seeking behavior
• Web search and social media search
• Tools for medical informatics and public health

Ablimit Aji (1st-year Ph.D.), Yandong Liu (2nd-year Ph.D.), Qi Guo (2nd-year Ph.D.)

External collaborators:
- Beth Buffalo (Neurology)
- Charlie Clarke (Waterloo)
- Ernie Garcia (Radiology)
- Phil Wolff (Psychology)
- Hongyuan Zha (Ga. Tech)

Supported by: (sponsor logos on the slide)

Online Behavior and Interactions

• Information sharing: blogs, forums, discussions
• Search logs: queries, clicks
• Client-side behavior: gaze tracking, mouse movement, scrolling

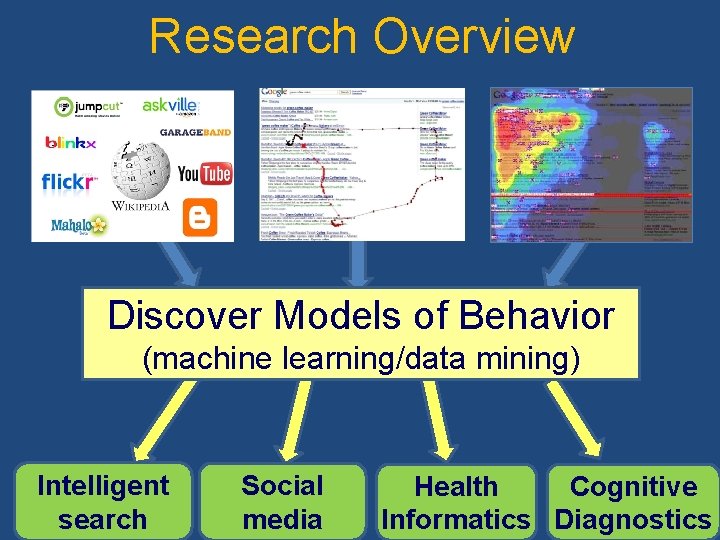

Research Overview

Discover models of behavior (machine learning/data mining) for:
• Intelligent search
• Social media
• Health informatics
• Cognitive diagnostics

Applications that Affect Millions

• Search: ranking, evaluation, advertising, search interfaces, medical search (clinicians, patients)
• Collaboratively generated content: searcher intent, success, expertise, content quality
• Health informatics: self-reporting of drug side effects, co-morbidity, outreach/education
• Automatic cognitive diagnostics: stress, frustration, Alzheimer’s, Parkinson’s, ADHD, …

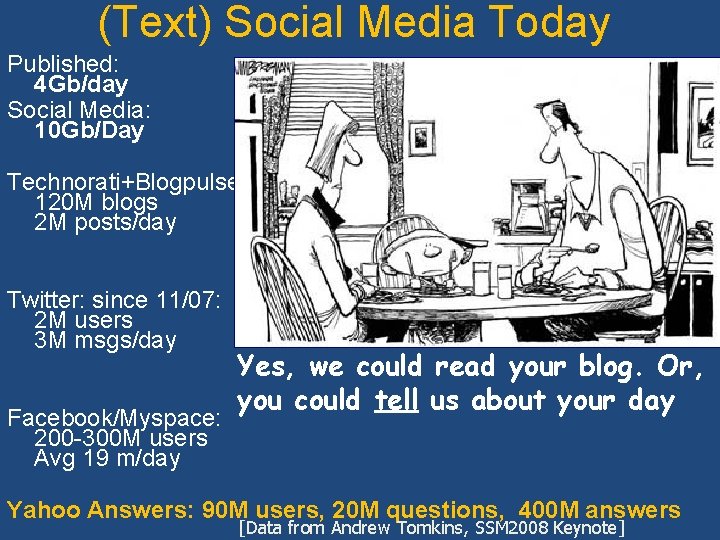

(Text) Social Media Today

• Published: 4 Gb/day; social media: 10 Gb/day
• Technorati + BlogPulse: 120M blogs, 2M posts/day
• Twitter (since 11/07): 2M users, 3M msgs/day
• Facebook/Myspace: 200-300M users, avg. 19 m/day
• Yahoo! Answers: 90M users, 20M questions, 400M answers

“Yes, we could read your blog. Or, you could tell us about your day.”

[Data from Andrew Tomkins, SSM 2008 Keynote]

Total time: 7-10 minutes, active “work”

Someone must know this…

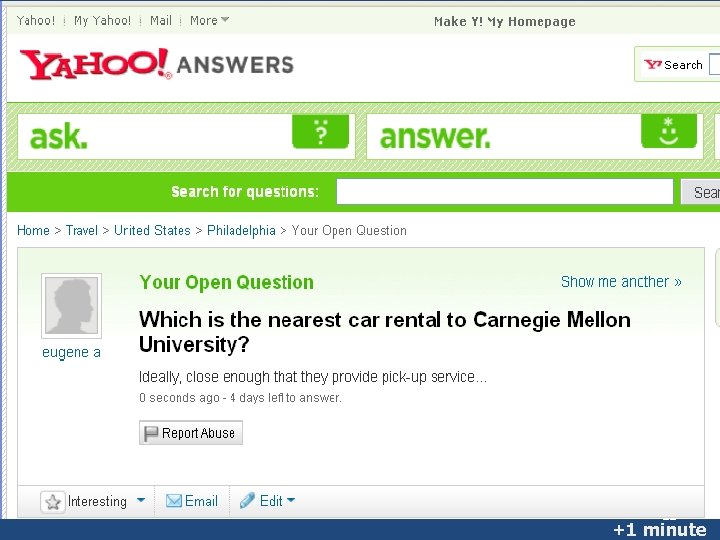

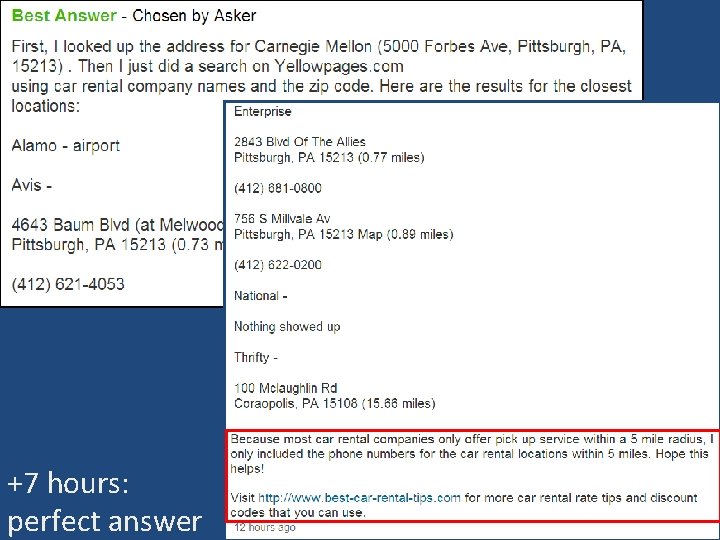

+1 minute

+7 hours: perfect answer

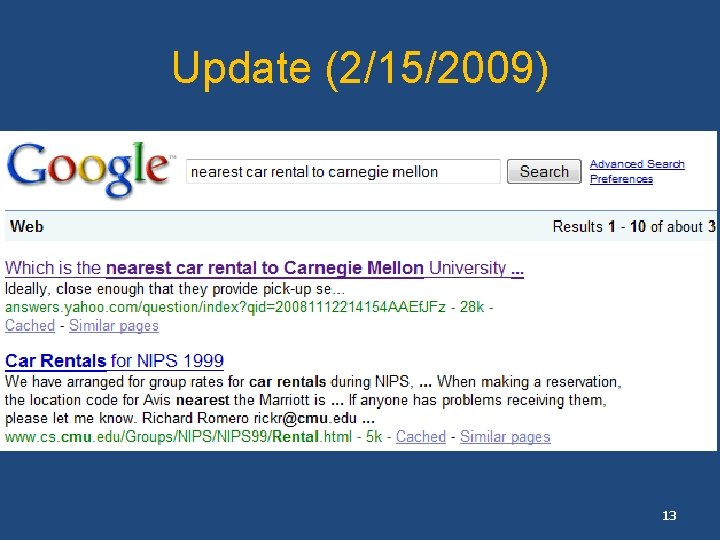

Update (2/15/2009)

http://answers.yahoo.com/question/index;_ylt=3?qid=20071008115118AAh1HdO

Finding Information Online (Revisited)

Next generation of search: algorithmically mediated information exchange

CQA (collaborative question answering):
• Realistic information exchange
  – Content quality, asker satisfaction
• Searching archives
• Train NLP, IR, QA systems
• Study of social behavior, norms

Current and future work

(Some) Related Work

• Adamic et al., WWW 2007, WWW 2008:
  – Expertise sharing, network structure
• Elsas et al., SIGIR 2008:
  – Blog search
• Glance et al.:
  – BlogPulse, popularity, information sharing
• Harper et al., CHI 2008, 2009:
  – Answer quality across multiple CQA sites
• Kraut et al.:
  – Community participation
• Kumar et al., WWW 2004, KDD 2008, …:
  – Information diffusion in blogspace, network evolution

SIGIR 2009 Workshop on Searching Social Media: http://ir.mathcs.emory.edu/SSM2009/

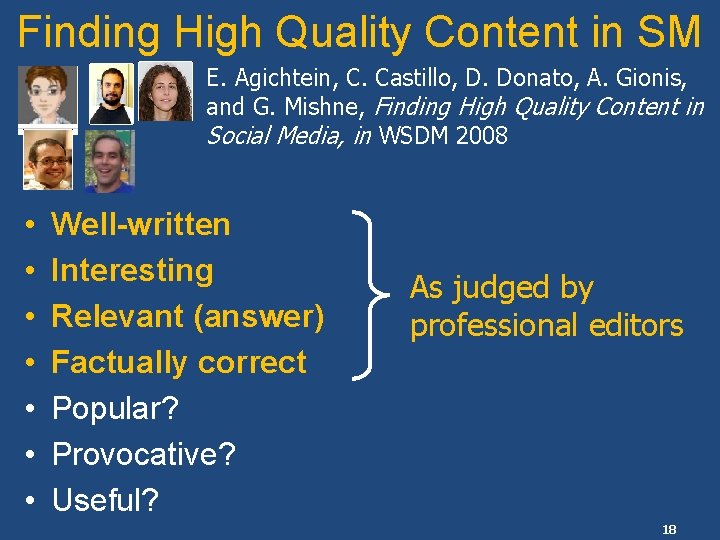

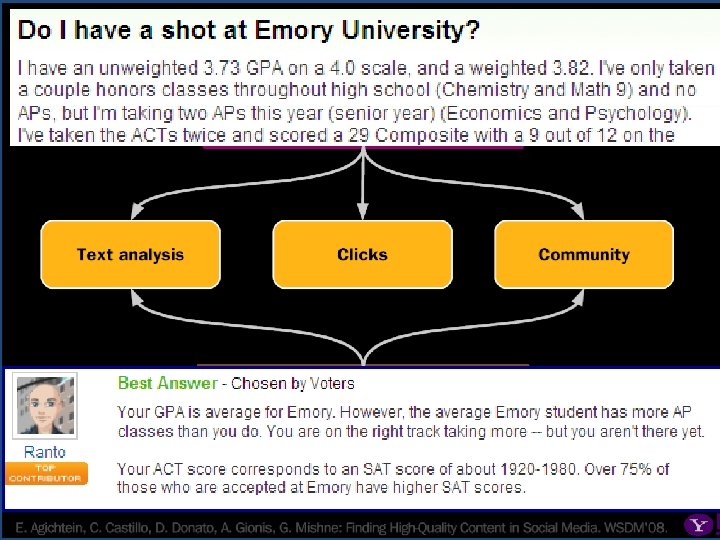

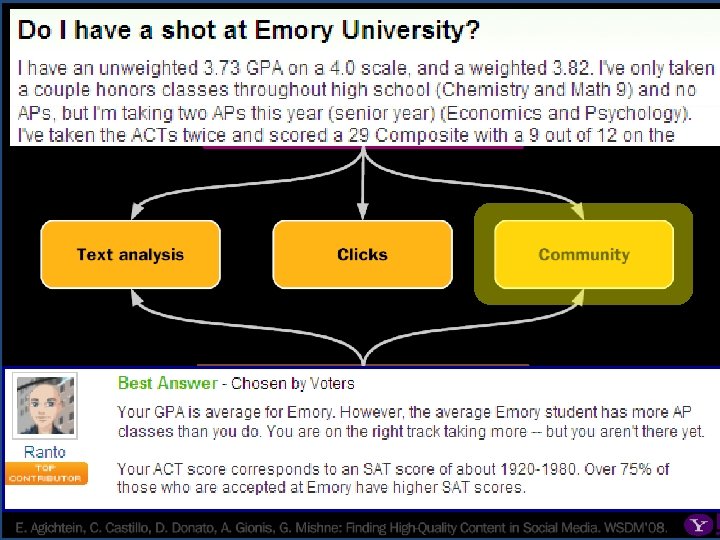

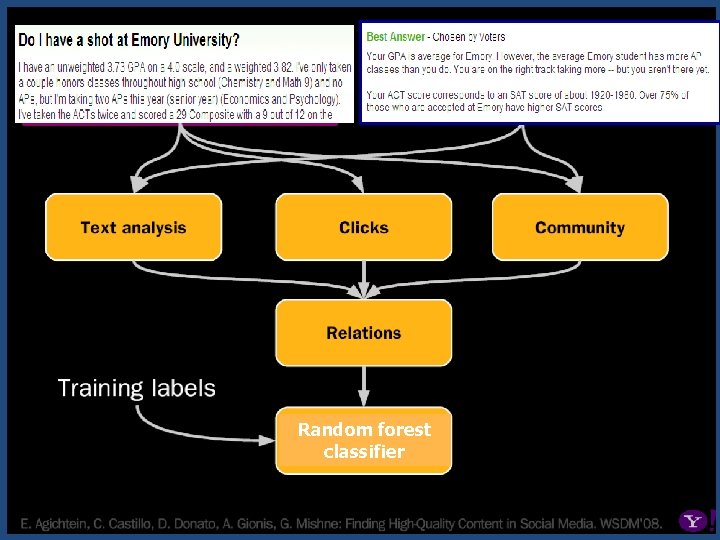

Finding High Quality Content in SM

E. Agichtein, C. Castillo, D. Donato, A. Gionis, and G. Mishne, Finding High Quality Content in Social Media, in WSDM 2008

• Well-written
• Interesting
• Relevant (answer)
• Factually correct
• Popular? Provocative? Useful?

As judged by professional editors

Social Media Content Quality

E. Agichtein, C. Castillo, D. Donato, A. Gionis, G. Mishne, Finding High Quality Content in Social Media, WSDM 2008

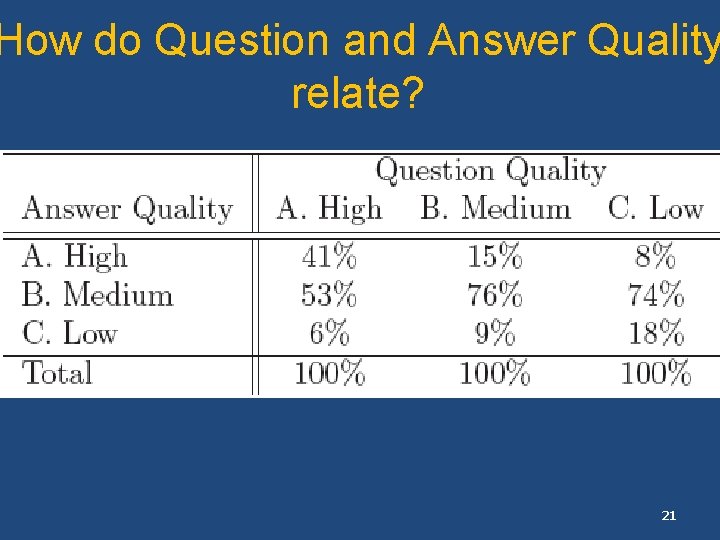

How do Question and Answer Quality relate?

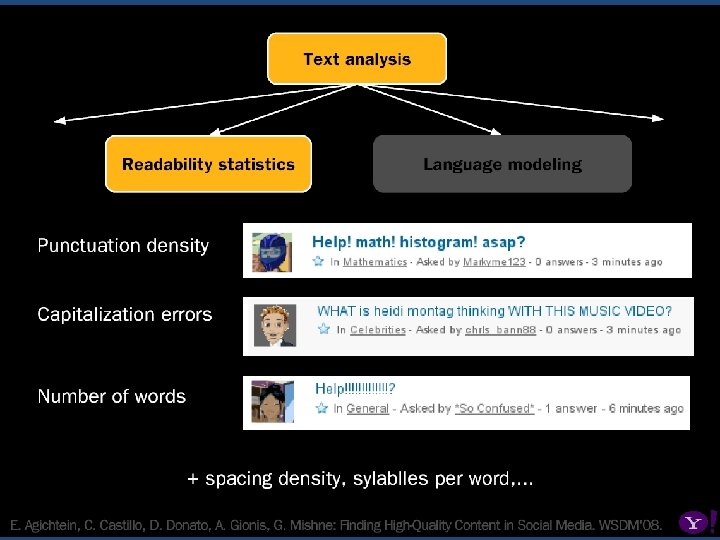

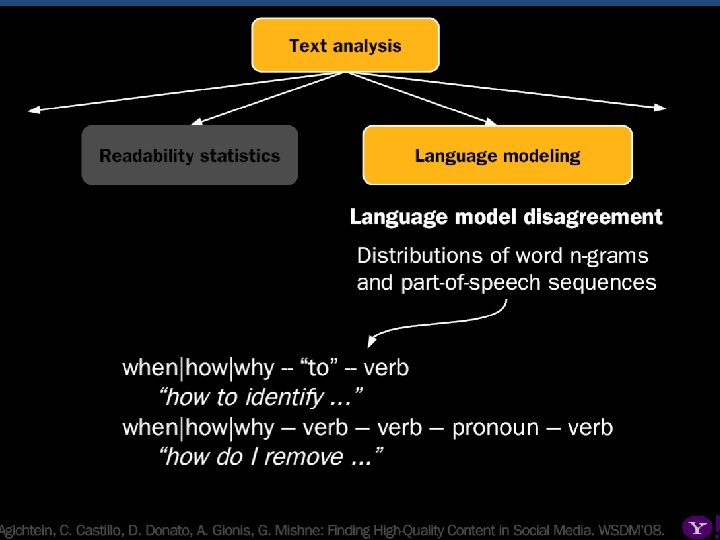

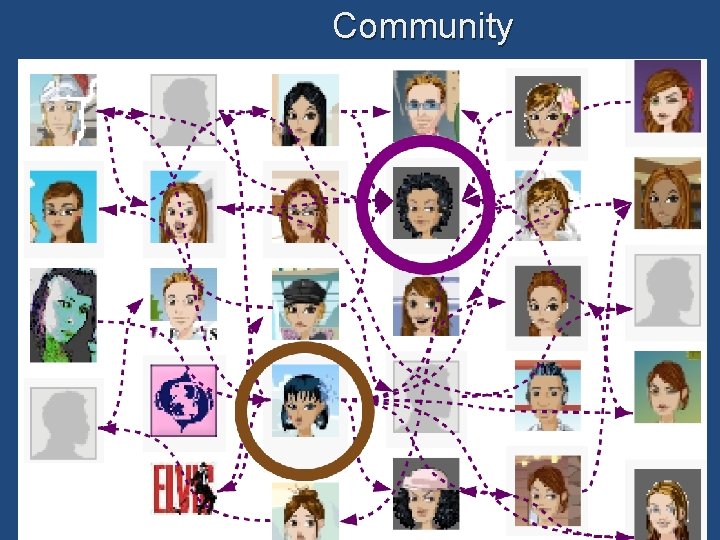

Community

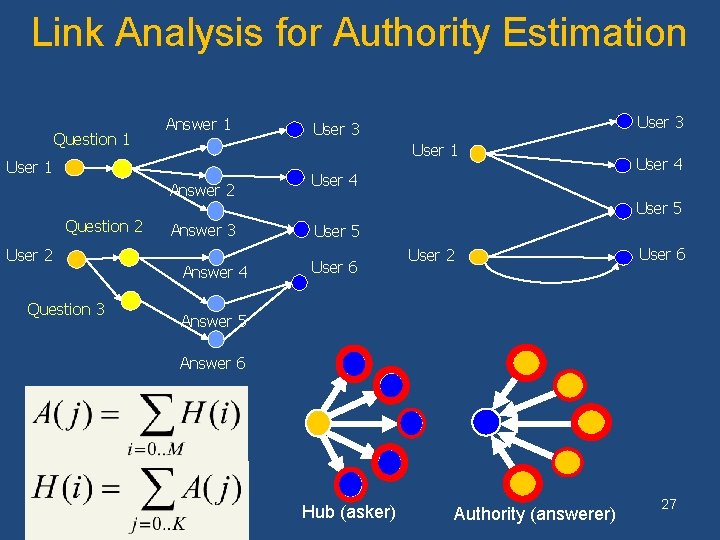

Link Analysis for Authority Estimation

[Figure: graph linking users to the questions they ask and the answers they post. Hub = asker; authority = answerer.]
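The hub (asker) and authority (answerer) roles on this slide match the HITS algorithm named on the next slide. The following is a minimal sketch, assuming the question/answer graph is collapsed into directed asker-to-answerer edges; the user names and the `hits` function are illustrative, not taken from the talk.

```python
from collections import defaultdict


def hits(edges, iterations=50):
    """Run HITS on directed (asker, answerer) edges.

    An edge (u, v) means user v answered a question asked by user u.
    Returns (hub, authority) score dicts, each L2-normalized.
    """
    users = {u for edge in edges for u in edge}
    hub = {u: 1.0 for u in users}
    auth = {u: 1.0 for u in users}
    out_links = defaultdict(list)  # asker -> answerers
    in_links = defaultdict(list)   # answerer -> askers
    for asker, answerer in edges:
        out_links[asker].append(answerer)
        in_links[answerer].append(asker)

    for _ in range(iterations):
        # Authority: sum of hub scores of the askers this user answered.
        auth = {u: sum(hub[a] for a in in_links[u]) for u in users}
        norm = sum(v * v for v in auth.values()) ** 0.5 or 1.0
        auth = {u: v / norm for u, v in auth.items()}
        # Hub: sum of authority scores of the users who answered this asker.
        hub = {u: sum(auth[a] for a in out_links[u]) for u in users}
        norm = sum(v * v for v in hub.values()) ** 0.5 or 1.0
        hub = {u: v / norm for u, v in hub.items()}
    return hub, auth


# Hypothetical toy graph: user2 answers three different askers.
edges = [("user1", "user2"), ("user1", "user3"),
         ("user4", "user2"), ("user5", "user2")]
hub, auth = hits(edges)
top_answerer = max(auth, key=auth.get)
```

In this toy graph the user who answers the most askers ends up with the highest authority score, and a user who only asks gets an authority score of zero.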

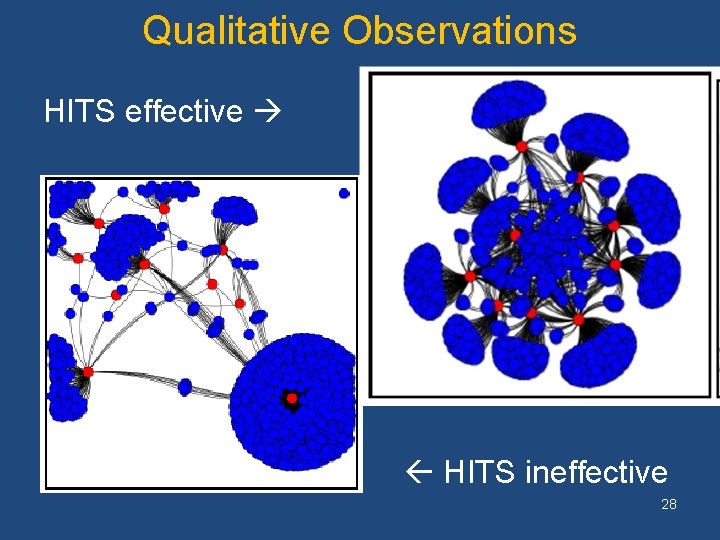

Qualitative Observations

[Figure panels: HITS effective; HITS ineffective]

Random forest classifier

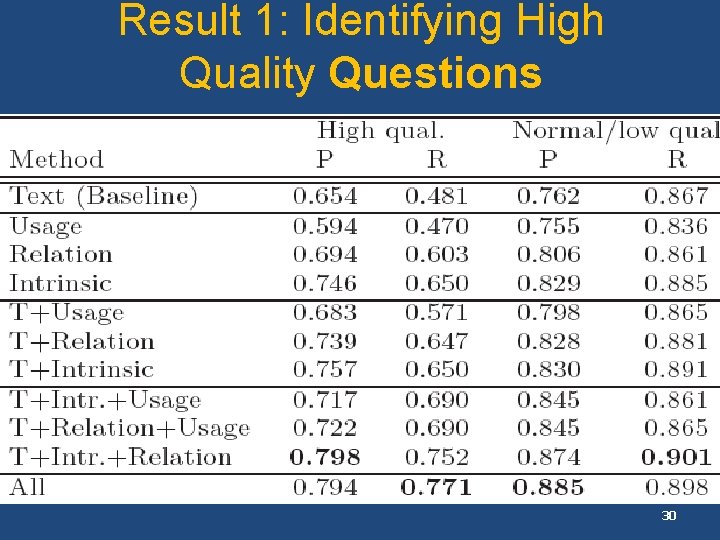

Result 1: Identifying High Quality Questions

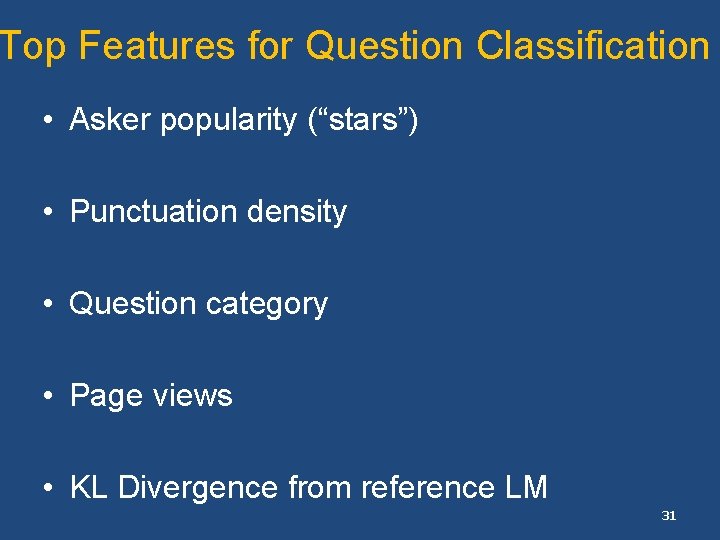

Top Features for Question Classification

• Asker popularity (“stars”)
• Punctuation density
• Question category
• Page views
• KL divergence from reference LM
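Two of these features can be computed from the question text alone. The sketch below is one plausible reading, not the paper's exact definitions: punctuation density is taken as the fraction of punctuation characters, and the language models are assumed to be unigram models with add-one smoothing on the reference. The function names and the tiny reference corpus are hypothetical.

```python
import math
import string
from collections import Counter


def punctuation_density(text):
    """Fraction of characters that are ASCII punctuation."""
    if not text:
        return 0.0
    return sum(ch in string.punctuation for ch in text) / len(text)


def kl_divergence(text, reference_counts, smoothing=1.0):
    """KL(P_text || P_ref) over unigrams.

    The reference model is smoothed so that words unseen in the
    reference corpus still get non-zero probability.
    """
    tokens = text.lower().split()
    if not tokens:
        return 0.0
    text_counts = Counter(tokens)
    vocab = set(reference_counts) | set(text_counts)
    ref_total = sum(reference_counts.values()) + smoothing * len(vocab)
    kl = 0.0
    for word, count in text_counts.items():
        p = count / len(tokens)
        q = (reference_counts.get(word, 0) + smoothing) / ref_total
        kl += p * math.log(p / q)
    return kl


# Hypothetical reference corpus of well-formed questions.
reference = Counter(
    "how do i fix my computer how do i install windows".split())
on_topic = kl_divergence("how do i fix windows", reference)
off_topic = kl_divergence("omg lol wtf", reference)
```

A question whose vocabulary resembles the reference corpus gets a lower divergence than one that shares no words with it.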

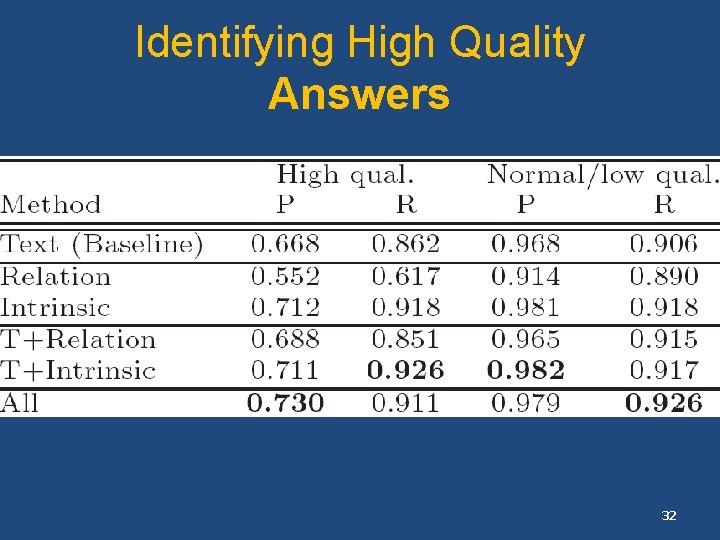

Identifying High Quality Answers

Top Features for Answer Classification

• Answer length
• Community ratings
• Answerer reputation
• Word overlap
• Kincaid readability score
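The text-based features in this list are simple to compute. The sketch below makes several assumptions the slide does not settle: length is counted in whitespace tokens, word overlap is Jaccard overlap with the question, and the Kincaid score is the standard Flesch-Kincaid grade level with a crude vowel-run syllable counter. All function names are illustrative.

```python
import re


def count_syllables(word):
    """Crude heuristic: count runs of vowels, with a minimum of one."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def kincaid_grade(text):
    """Flesch-Kincaid grade level:
    0.39 * words/sentences + 11.8 * syllables/words - 15.59.
    """
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * len(words) / len(sentences)
            + 11.8 * syllables / len(words) - 15.59)


def word_overlap(question, answer):
    """Jaccard overlap between the question's and answer's word sets."""
    q = set(re.findall(r"\w+", question.lower()))
    a = set(re.findall(r"\w+", answer.lower()))
    return len(q & a) / len(q | a) if q | a else 0.0


def answer_features(question, answer):
    """Text-only features; ratings and reputation need site metadata."""
    return {
        "answer_length": len(answer.split()),
        "word_overlap": word_overlap(question, answer),
        "kincaid_grade": kincaid_grade(answer),
    }


features = answer_features(
    "How do I reset my router?",
    "Hold the reset button for ten seconds.")
```

Community ratings and answerer reputation are left out because they come from site metadata rather than from the answer text.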

Finding Information Online (Revisited)

• Next generation of search: human-machine-human
• CQA: a case study in complex IR
  ✓ Content quality
  • Asker satisfaction
  • Understanding the interactions

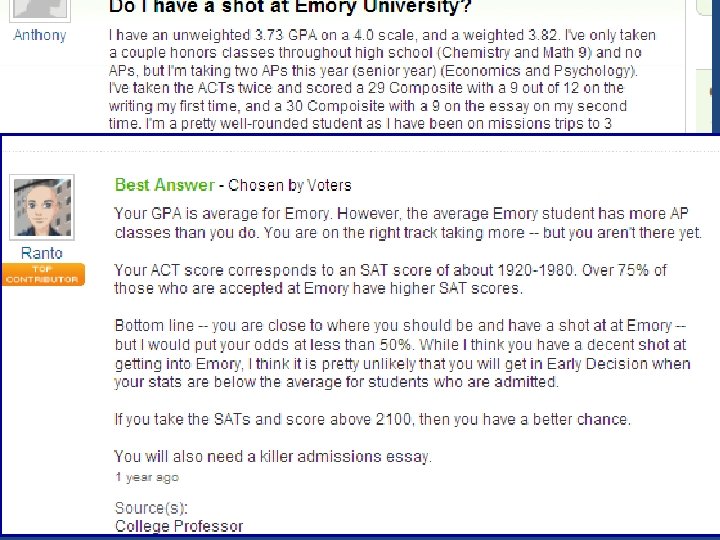

Dimensions of “Quality”

• Well-written
• Interesting
• Relevant (answer)
• Factually correct
• Popular? Timely? Provocative? Useful?

As judged by the asker (or community)

Are Editor Labels “Meaningful” for CGC?

• Information seeking process: the user wants to find useful information about a topic with incomplete knowledge
  – N. Belkin: “Anomalous states of knowledge”
• Want to model directly whether the user found satisfactory information
• Specific (amenable) case: CQA

Yahoo! Answers: The Good News

• Active community of millions of users in many countries and languages
• Effective for subjective information needs
  – Great forum for socialization/chat
• Can be invaluable for hard-to-find information not available on the web

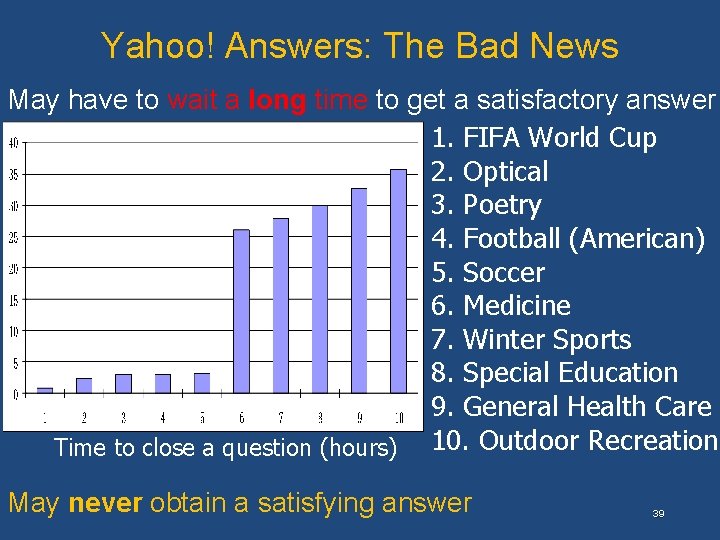

Yahoo! Answers: The Bad News

• May have to wait a long time to get a satisfactory answer
• May never obtain a satisfying answer

[Chart: time to close a question (hours), by category: 1. FIFA World Cup, 2. Optical, 3. Poetry, 4. Football (American), 5. Soccer, 6. Medicine, 7. Winter Sports, 8. Special Education, 9. General Health Care, 10. Outdoor Recreation]

Predicting Asker Satisfaction

Y. Liu, J. Bian, and E. Agichtein, in SIGIR 2008 (Yandong Liu, Jiang Bian)

Given a question submitted by an asker in CQA, predict whether the user will be satisfied with the answers contributed by the community.

– “Satisfied”:
  • The asker has closed the question AND
  • Selected the best answer AND
  • Rated the best answer >= 3 “stars” (# not important)
– Else, “Unsatisfied”
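The label definition above is a conjunction of three conditions, which can be written down directly. This is a minimal sketch: the `QuestionThread` record and its field names are hypothetical and do not reflect the schema of the actual dataset.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class QuestionThread:
    # Hypothetical fields; the real dataset schema may differ.
    closed_by_asker: bool
    best_answer_selected: bool
    best_answer_rating: Optional[int]  # stars; None if the asker gave no rating


def is_satisfied(thread: QuestionThread, min_stars: int = 3) -> bool:
    """Satisfied only if the asker closed the question, selected a best
    answer, and rated it at least `min_stars`; otherwise unsatisfied."""
    return (
        thread.closed_by_asker
        and thread.best_answer_selected
        and thread.best_answer_rating is not None
        and thread.best_answer_rating >= min_stars
    )


satisfied = is_satisfied(QuestionThread(True, True, 4))
low_rating = is_satisfied(QuestionThread(True, True, 2))
never_closed = is_satisfied(QuestionThread(False, False, None))
```

Every thread that fails any one condition, including questions the asker never closed, falls into the “Unsatisfied” class.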

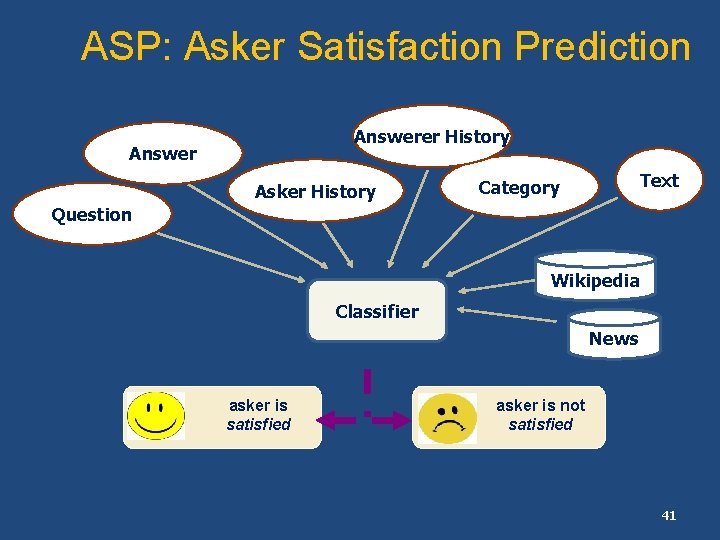

ASP: Asker Satisfaction Prediction

[Diagram: inputs labeled Question, Answer, Asker History, Answerer History, Category, Text, Wikipedia, and News feed a Classifier, which outputs “asker is satisfied” or “asker is not satisfied”.]

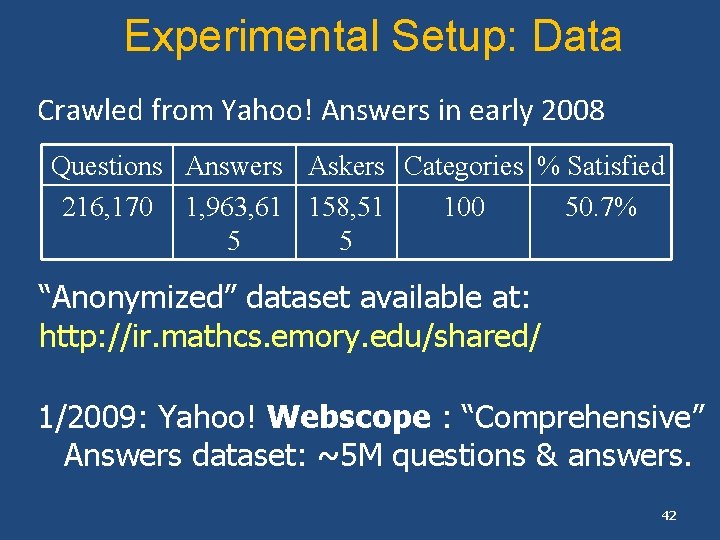

Experimental Setup: Data

Crawled from Yahoo! Answers in early 2008

| Questions | Answers | Askers | Categories | % Satisfied |
|---|---|---|---|---|
| 216,170 | 1,963,615 | 158,515 | 100 | 50.7% |

“Anonymized” dataset available at: http://ir.mathcs.emory.edu/shared/

1/2009: Yahoo! Webscope: “Comprehensive” Answers dataset: ~5M questions & answers.

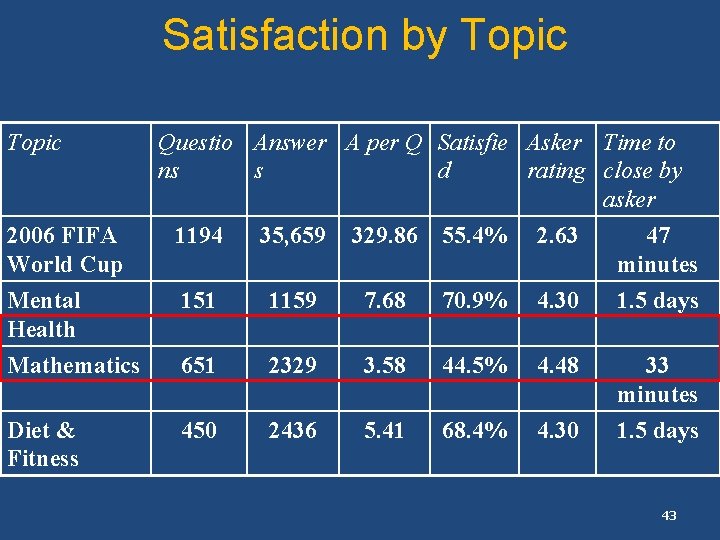

Satisfaction by Topic

| Topic | Questions | Answers | A per Q | Satisfied | Asker rating | Time to close by asker |
|---|---|---|---|---|---|---|
| 2006 FIFA World Cup | 1194 | 35,659 | 29.86 | 55.4% | 2.63 | 47 minutes |
| Mental Health | 151 | 1159 | 7.68 | 70.9% | 4.30 | 1.5 days |
| Mathematics | 651 | 2329 | 3.58 | 44.5% | 4.48 | 33 minutes |
| Diet & Fitness | 450 | 2436 | 5.41 | 68.4% | 4.30 | 1.5 days |

Satisfaction Prediction: Human Judges

• Truth: asker’s rating
• A random sample of 130 questions
• Researchers
  – Agreement: 0.82; F1: 0.45, where F1 = 2·P·R/(P+R)
• Amazon Mechanical Turk
  – Five workers per question
  – Agreement: 0.9; F1: 0.61
  – Best when at least 4 out of 5 raters agree
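The F1 figures on this slide combine precision (P) and recall (R) on the “satisfied” class. As a small illustration of the formula 2·P·R/(P+R), the sketch below computes it from predicted and true labels, and shows one possible way to turn five worker votes into a label. The `turk_label` rule is an assumption; the slide does not spell out how the votes were combined.

```python
def f1_score(predicted, actual, positive=True):
    """F1 = 2*P*R / (P + R) for the `positive` class."""
    pairs = list(zip(predicted, actual))
    tp = sum(p == positive and a == positive for p, a in pairs)
    fp = sum(p == positive and a != positive for p, a in pairs)
    fn = sum(p != positive and a == positive for p, a in pairs)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def turk_label(votes, min_agree=4):
    """Assumed aggregation rule: predict "satisfied" only when at least
    `min_agree` workers voted "satisfied"."""
    return sum(votes) >= min_agree


# Hypothetical judgments for four questions.
predicted = [True, True, False, False]
actual = [True, False, True, False]
score = f1_score(predicted, actual)
label = turk_label([True, True, True, True, False])
```

Because F1 ignores true negatives, a judge who rarely predicts “satisfied” can agree with the asker on most questions and still score a low F1 on the satisfied class.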

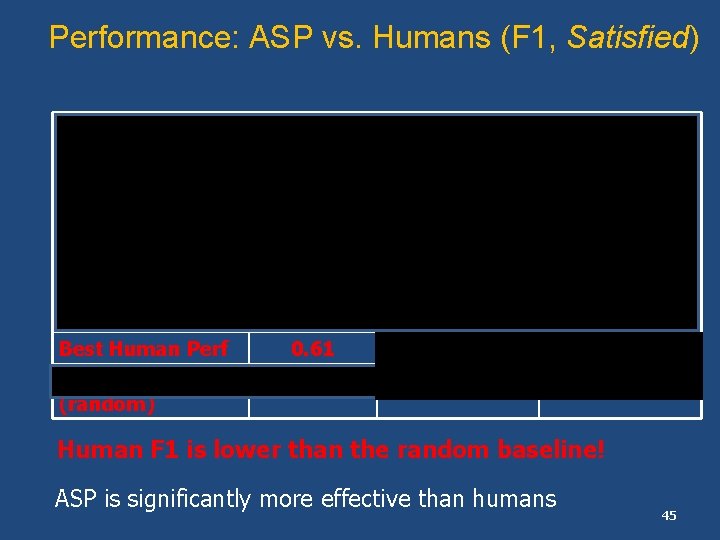

Performance: ASP vs. Humans (F1, Satisfied)

F1 by classifier (with text / without text / selected features):
• ASP_SVM: 0.69 / 0.72 / 0.62
• ASP_C4.5: 0.76, 0.77 (two values shown)
• ASP_RandomForest: 0.70 / 0.74 / 0.68
• ASP_Boosting: 0.67 (one value shown)
• ASP_NB: 0.61 / 0.65 / 0.58
• Best Human Perf: 0.61
• Baseline (random): 0.66

Human F1 is lower than the random baseline! ASP is significantly more effective than humans.

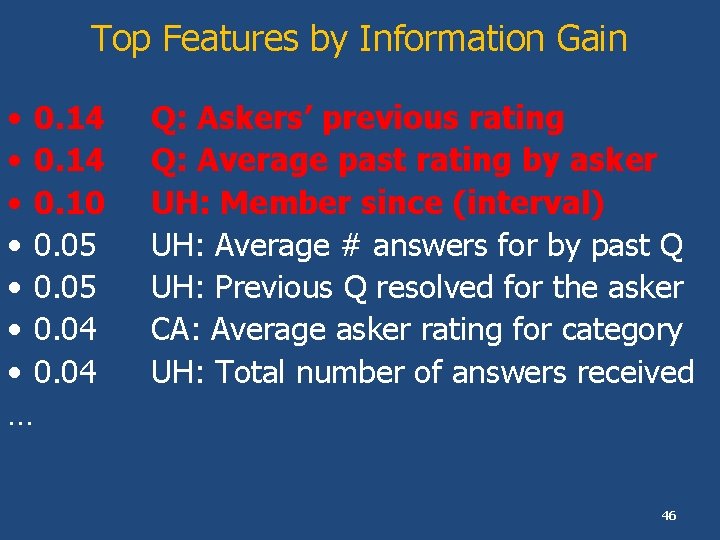

Top Features by Information Gain

Information gain values on the slide: 0.14, 0.10, 0.05, 0.04, …

Features listed:
• Q: Asker’s previous rating
• Q: Average past rating by asker
• UH: Member since (interval)
• UH: Average # answers for past Q
• UH: Previous Q resolved for the asker
• CA: Average asker rating for category
• UH: Total number of answers received
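Information gain measures how much knowing a feature's value reduces the entropy of the satisfied/unsatisfied label. A minimal sketch for discrete feature values follows; continuous features such as past ratings would first need to be binned into intervals. The toy data and variable names are hypothetical.

```python
import math
from collections import Counter, defaultdict


def entropy(labels):
    """Shannon entropy (in bits) of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())


def information_gain(feature_values, labels):
    """H(labels) - H(labels | feature) for a discrete feature."""
    if not labels:
        return 0.0
    groups = defaultdict(list)
    for value, label in zip(feature_values, labels):
        groups[value].append(label)
    conditional = sum(len(g) / len(labels) * entropy(g)
                      for g in groups.values())
    return entropy(labels) - conditional


# Hypothetical toy data: binned previous rating vs. satisfaction label.
labels = [1, 1, 0, 0]
informative = information_gain(["high", "high", "low", "low"], labels)
uninformative = information_gain(["a", "a", "a", "a"], labels)
```

A feature that splits the labels perfectly recovers the full label entropy (1 bit for a balanced binary label), while a constant feature has zero gain.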

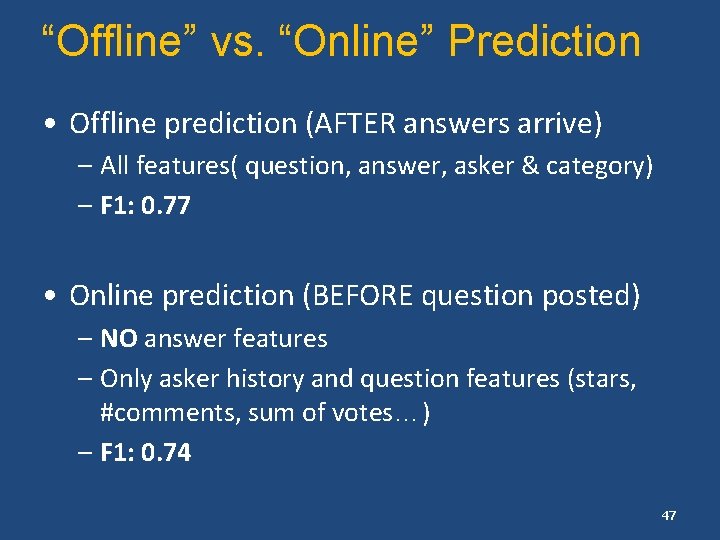

“Offline” vs. “Online” Prediction

• Offline prediction (AFTER answers arrive)
  – All features (question, answer, asker & category)
  – F1: 0.77
• Online prediction (BEFORE question posted)
  – NO answer features
  – Only asker history and question features (stars, #comments, sum of votes…)
  – F1: 0.74

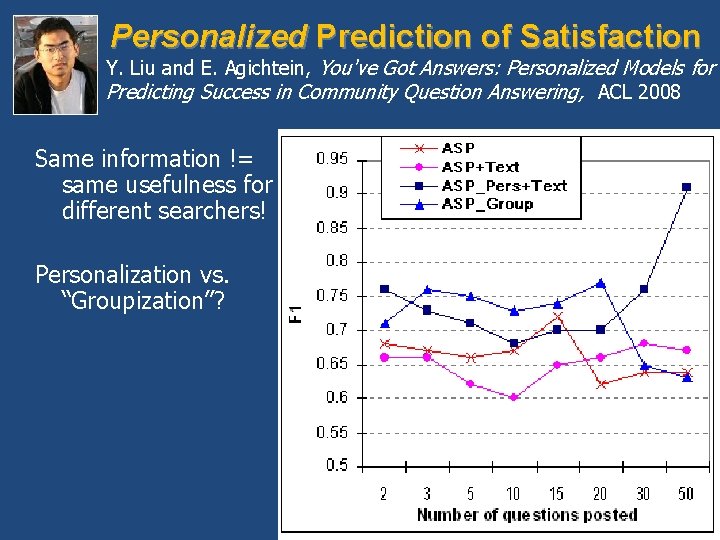

Personalized Prediction of Satisfaction

Y. Liu and E. Agichtein, You've Got Answers: Personalized Models for Predicting Success in Community Question Answering, ACL 2008

• Same information != same usefulness for different searchers!
• Personalization vs. “Groupization”?

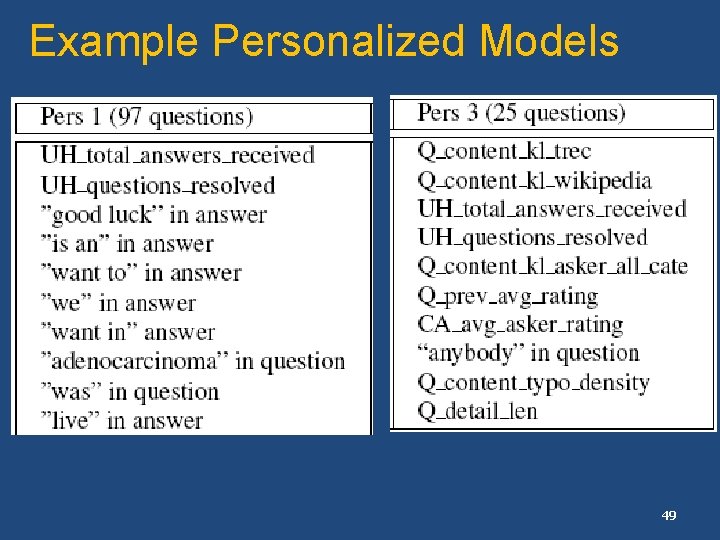

Example Personalized Models

Outline

• Next generation of search: algorithmically mediated information exchange
• CQA: a case study in complex IR
  ✓ Content quality
  ✓ Asker satisfaction

Current Work (in Progress)

• Partially supervised models of expertise (Bian et al., WWW 2009)
• Real-time CQA
• Sentiment, temporal sensitivity analysis
• Understanding social media dynamics

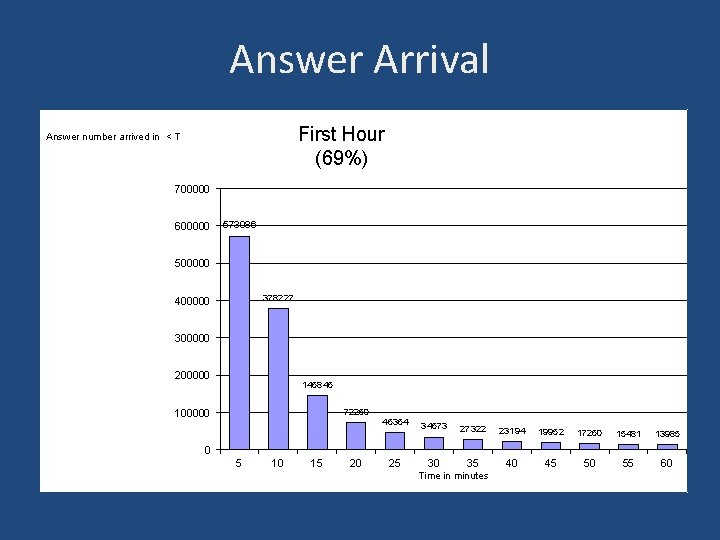

Answer Arrival: First Hour (69%)

[Chart: number of answers arrived in < T, by time in minutes (0 to 60, in 5-minute steps). Data labels, largest to smallest: 573086, 378227, 146845, 72260, 46364, 34573, 27322, 23194, 19952, 17260, 15481, 13985.]

![Exponential Decay Model [Lerman 2007]](http://slidetodoc.com/presentation_image_h2/721eb4f09fc46b6d14174db9f4d0c66b/image-53.jpg)

Exponential Decay Model [Lerman 2007]
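The model's formula is not preserved in this transcript, so the following is only a generic sketch of exponential decay, N(t) = N0 · exp(−λt), and not necessarily the formulation in [Lerman 2007]. It fits N0 and λ by least squares on the log of the counts; the function name and the synthetic data are assumptions for illustration.

```python
import math


def fit_exponential_decay(times, counts):
    """Least-squares fit of log(count) = log(n0) - rate * t.

    Returns (n0, rate). All counts must be positive.
    """
    logs = [math.log(c) for c in counts]
    n = len(times)
    mean_t = sum(times) / n
    mean_y = sum(logs) / n
    cov = sum((t - mean_t) * (y - mean_y) for t, y in zip(times, logs))
    var = sum((t - mean_t) ** 2 for t in times)
    slope = cov / var
    intercept = mean_y - slope * mean_t
    return math.exp(intercept), -slope


# Synthetic arrivals generated with n0 = 1000 and rate = 0.1 per minute.
times = [5, 10, 15, 20, 25, 30]
counts = [1000 * math.exp(-0.1 * t) for t in times]
n0, rate = fit_exponential_decay(times, counts)
half_life = math.log(2) / rate  # minutes until the arrival rate halves
```

Fitting in log space keeps the sketch dependency-free, at the cost of weighting small counts more heavily than a direct nonlinear fit would.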

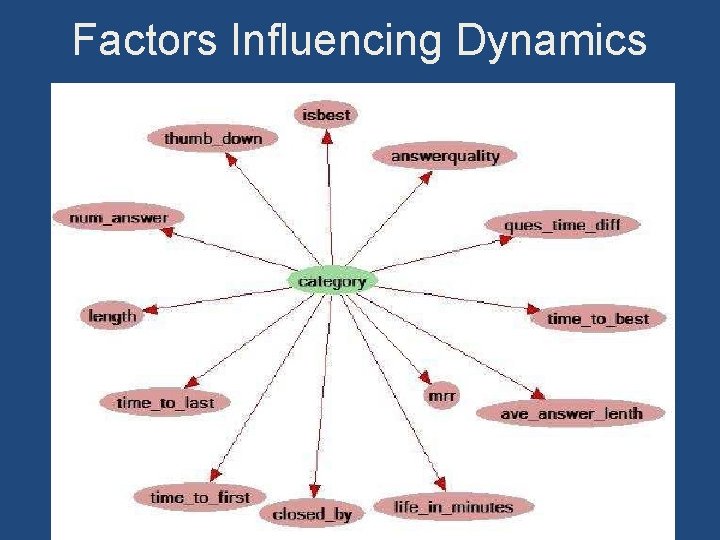

Factors Influencing Dynamics

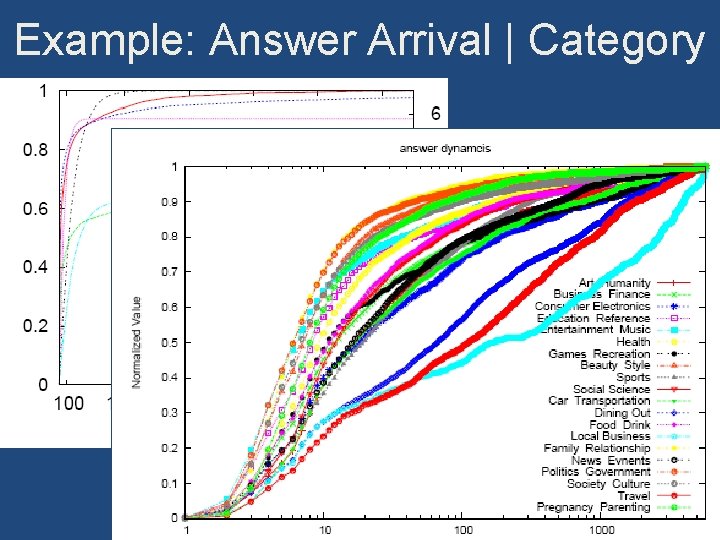

Example: Answer Arrival | Category

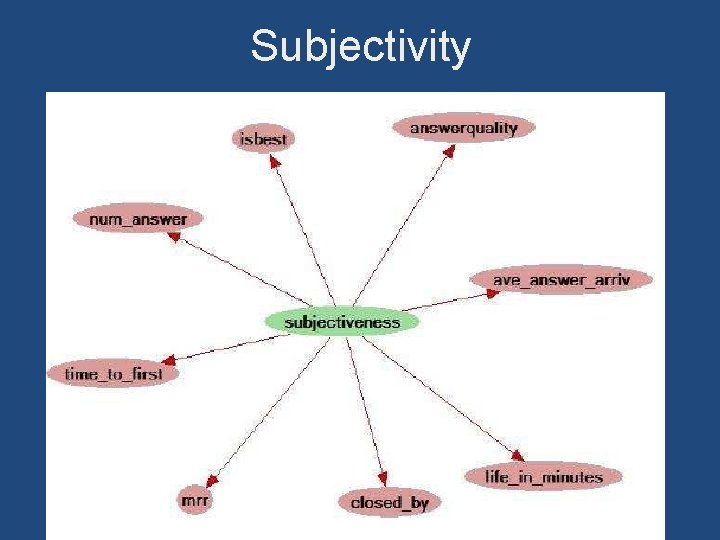

Subjectivity

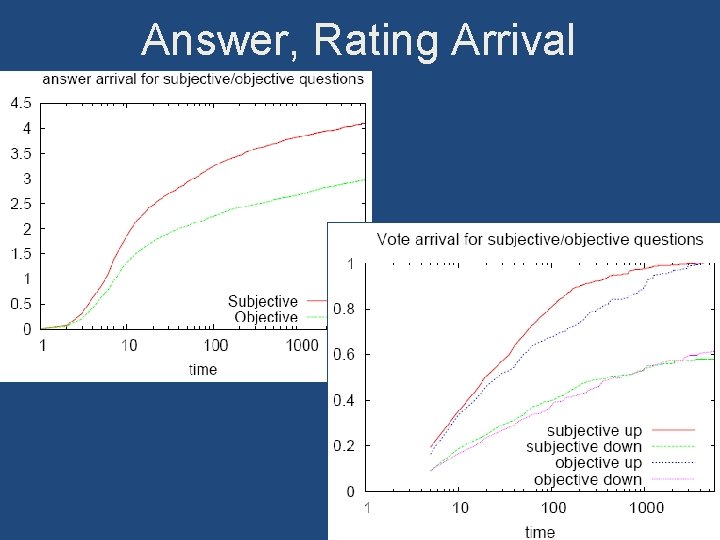

Answer, Rating Arrival

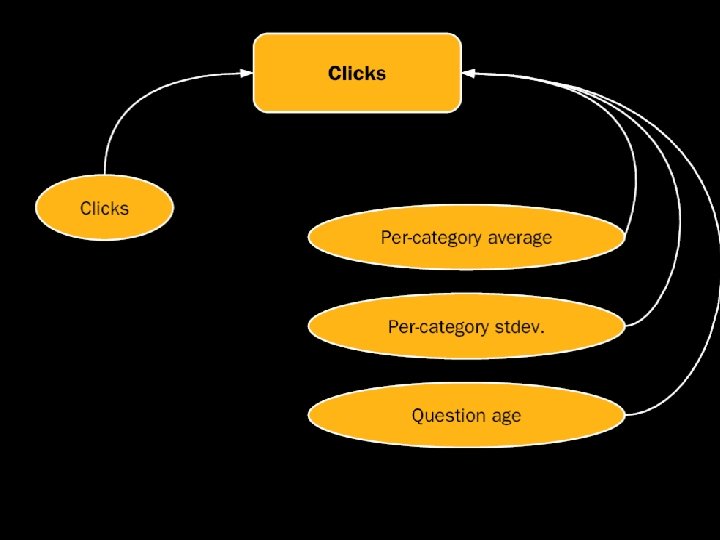

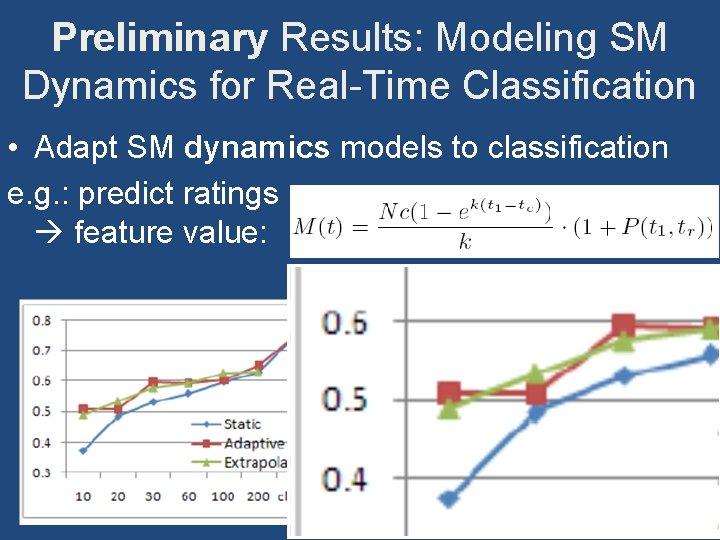

Preliminary Results: Modeling SM Dynamics for Real-Time Classification

• Adapt SM dynamics models to classification, e.g., predict ratings feature value:

Outline

• Next generation of search: algorithmically mediated information exchange
• CQA: a case study in complex IR
  ✓ Content quality
  ✓ Asker satisfaction
  ✓ Understanding social media dynamics

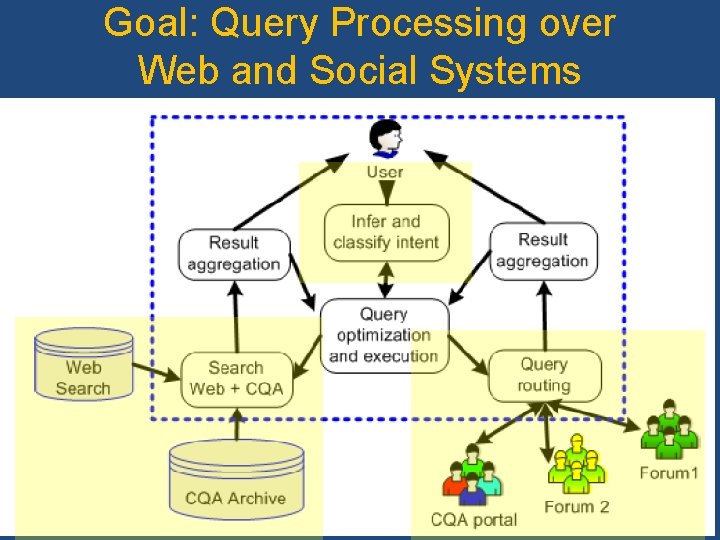

Goal: Query Processing over Web and Social Systems

Takeaways

• Robust machine learning over behavior data: system improvements, insights into behavior
• Contextualized models for NLP and text mining: system improvements, insights into interactions
• Mining social media: potential for transformative impact for IR, sociology, psychology, medical informatics, public health, …

More information, datasets, papers, slides: http://www.mathcs.emory.edu/~eugene/

References

• Modeling web search behavior [SIGIR 2006, 2007]
• Estimating content quality [WSDM 2008]
• Estimating contributor authority [CIKM 2007]
• Searching CQA archives [WWW 2008, WWW 2009]
• Inferring asker intent [EMNLP 2008]
• Predicting satisfaction [SIGIR 2008, ACL 2008, TKDE]
• Coping with spam [AIRWeb 2008]
