Modeling Action-level Satisfaction for Search Task Satisfaction Prediction

Hongning Wang, Department of Computer Science, University of Illinois at Urbana-Champaign, Urbana, IL 61801, USA, wang296@illinois.edu

Yang Song, Ming-Wei Chang, Xiaodong He, Ahmed Hassan, Ryen W. White, Microsoft Research, Redmond, WA 98004, USA, {yangsong, minchang, xiaohe, hassanam, ryenw}@microsoft.com

![How to assess search result quality?](https://slidetodoc.com/presentation_image_h2/f2a2c8eac6819772c6d4b583e76ea706/image-2.jpg)

How to assess search result quality?

- Query-level relevance evaluation [Baeza-Yates and Ribeiro-Neto 1999]
  - Metrics: MAP, NDCG, MRR
- Task-level satisfaction evaluation [Hassan et al. WSDM'10]
  - Users' satisfaction with the whole search task
- Goal: find existing work for "action-level search satisfaction prediction"

10/27/2021 SIGIR'2014 @ Gold Coast

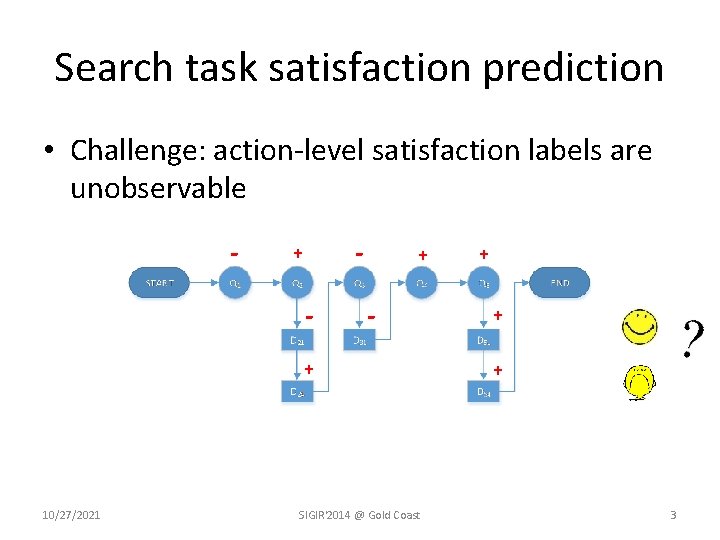

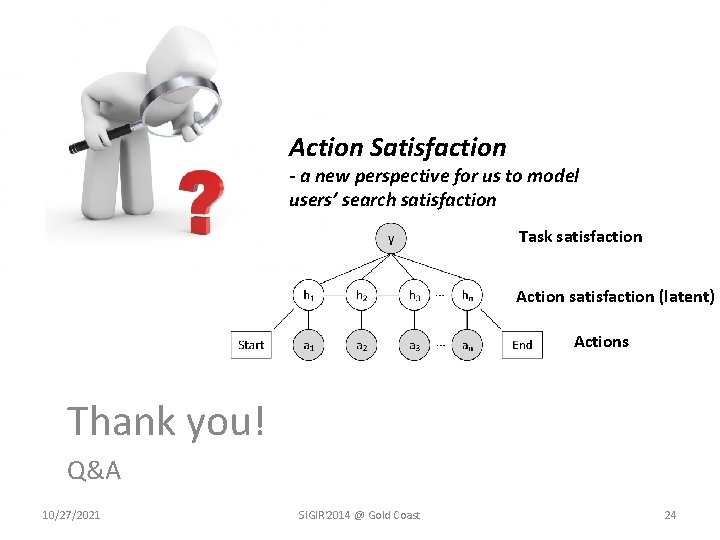

Search task satisfaction prediction

- Challenge: action-level satisfaction labels are unobservable

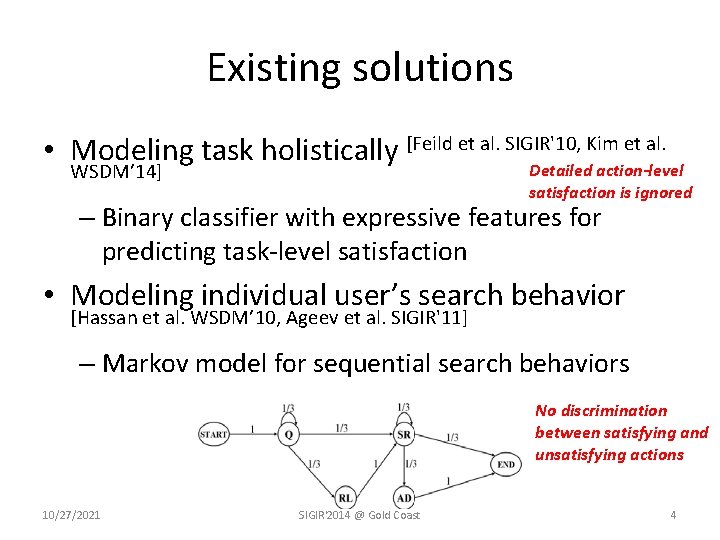

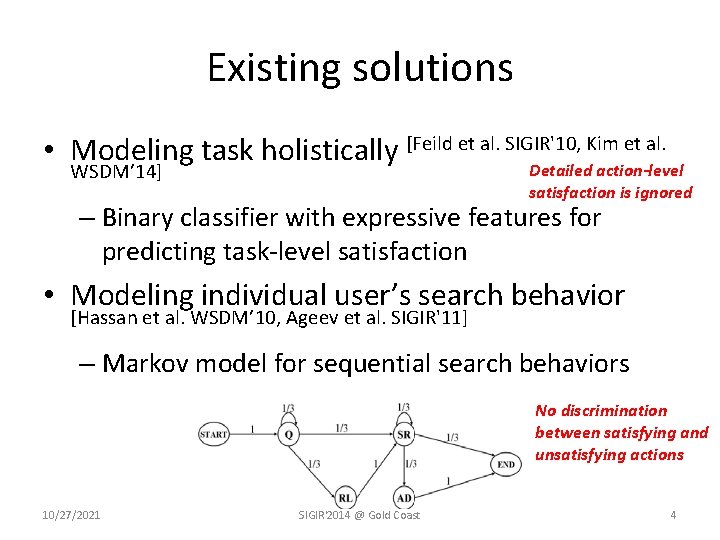

Existing solutions

- Modeling task holistically [Feild et al. SIGIR'10, Kim et al. WSDM'14]
  - Binary classifier with expressive features for predicting task-level satisfaction
  - Limitation: detailed action-level satisfaction is ignored
- Modeling individual users' search behavior [Hassan et al. WSDM'10, Ageev et al. SIGIR'11]
  - Markov model for sequential search behaviors
  - Limitation: no discrimination between satisfying and unsatisfying actions

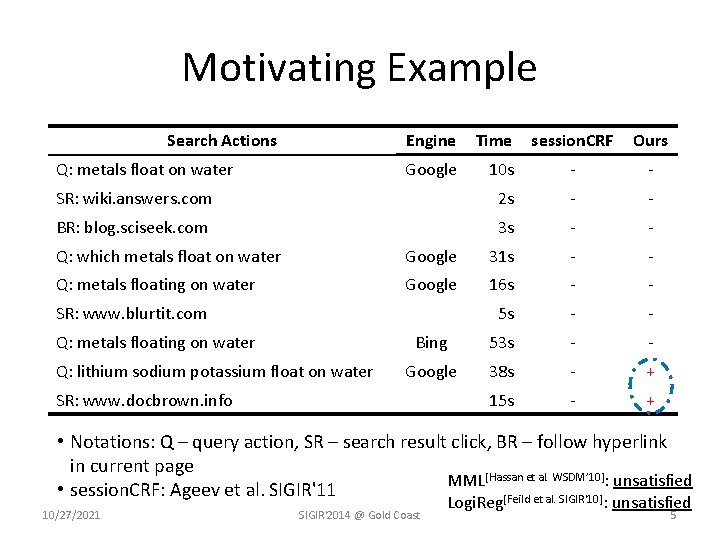

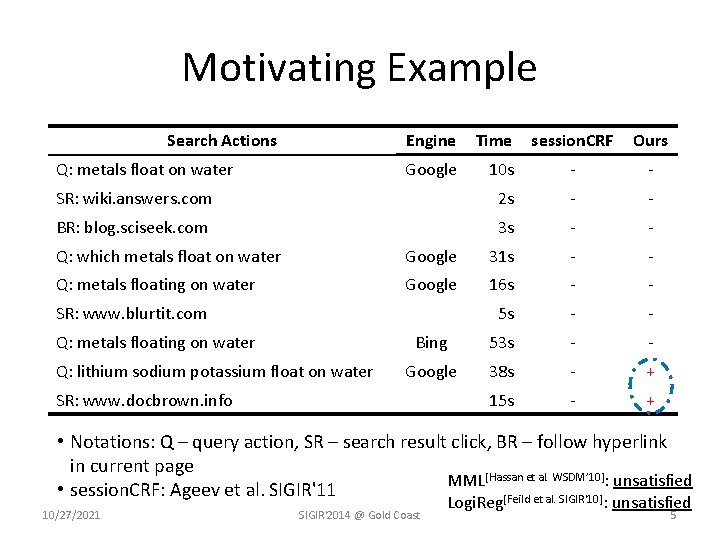

Motivating Example

| Search action | Engine | Time | session-CRF | Ours |
|---|---|---|---|---|
| Q: metals float on water | Google | 10 s | - | - |
| SR: wiki.answers.com | | 2 s | - | - |
| BR: blog.sciseek.com | | 3 s | - | - |
| Q: which metals float on water | Google | 31 s | - | - |
| Q: metals floating on water | Google | 16 s | - | - |
| SR: www.blurtit.com | | 5 s | - | - |
| Q: metals floating on water | Bing | 53 s | - | - |
| Q: lithium sodium potassium float on water | Google | 38 s | - | + |
| SR: www.docbrown.info | | 15 s | - | + |

- Notations: Q = query action, SR = search result click, BR = follow hyperlink in current page
- session-CRF: Ageev et al. SIGIR'11
- MML [Hassan et al. WSDM'10]: unsatisfied
- LogiReg [Feild et al. SIGIR'10]: unsatisfied

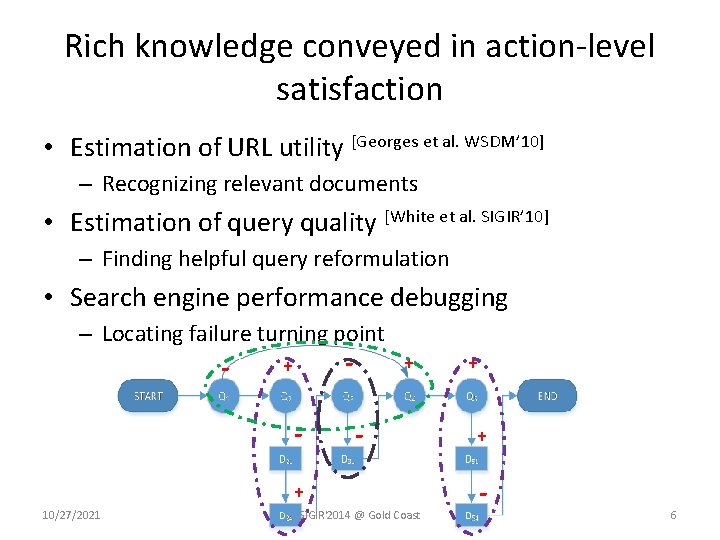

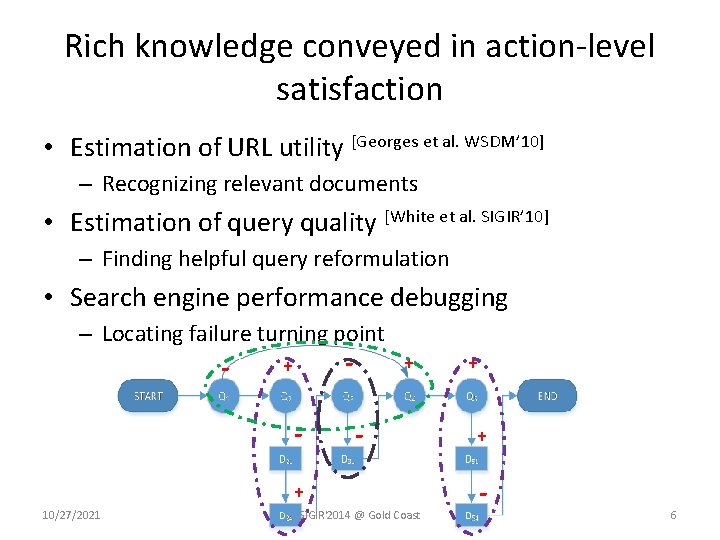

Rich knowledge conveyed in action-level satisfaction

- Estimation of URL utility [Dupret and Liao, WSDM'10]
  - Recognizing relevant documents
- Estimation of query quality [White et al. SIGIR'10]
  - Finding helpful query reformulation
- Search engine performance debugging
  - Locating failure turning point

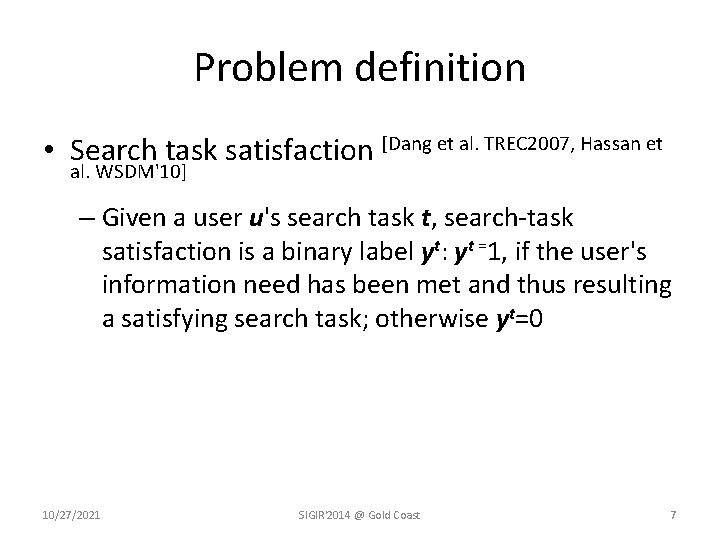

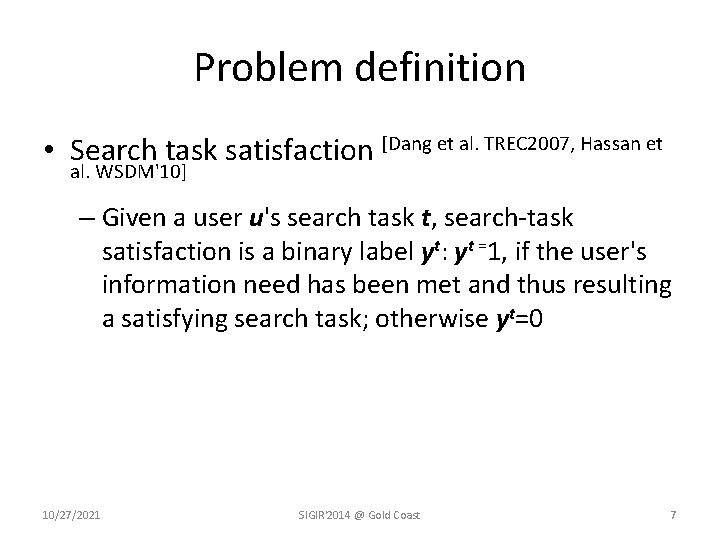

Problem definition

- Search task satisfaction [Dang et al. TREC 2007, Hassan et al. WSDM'10]
  - Given a user u's search task t, search-task satisfaction is a binary label y_t: y_t = 1 if the user's information need has been met, resulting in a satisfying search task; otherwise y_t = 0

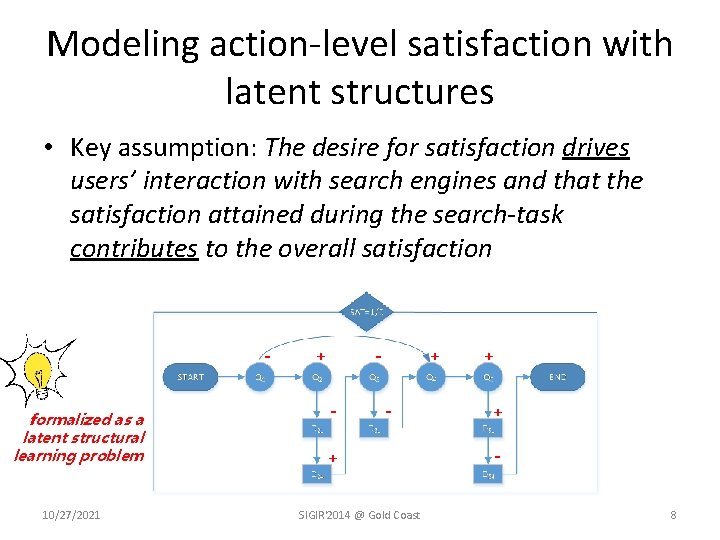

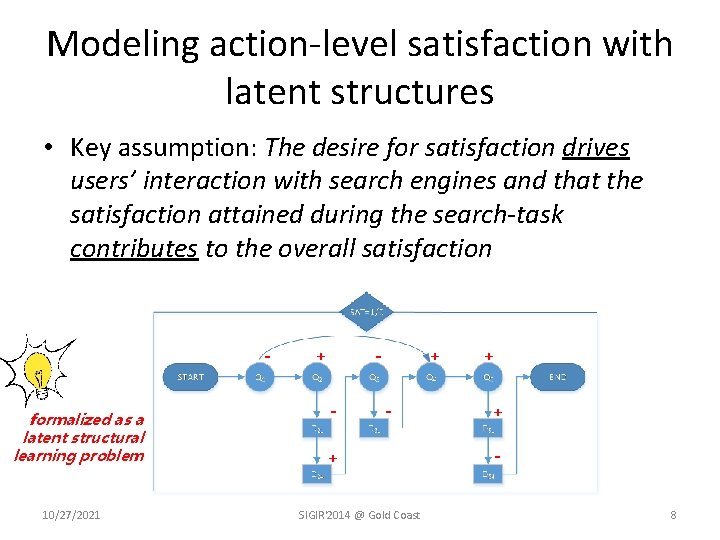

Modeling action-level satisfaction with latent structures

- Key assumption: the desire for satisfaction drives users' interaction with search engines, and the satisfaction attained during the search task contributes to the overall satisfaction
- Formalized as a latent structural learning problem

Modeling Action-level Satisfaction for Search Task Satisfaction Prediction

- Action-aware Task Satisfaction model (AcTS)
  - A latent structured learning approach
- Short-range features:
  1. #clicks, #queries, last action
  2. Dwell time, query-URL match, domain
- Long-range features:
  1. existSatQ, allSatQ
  2. Action transitions
- Model layers: task satisfaction, action satisfaction (latent), actions
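To make the two feature groups on this slide more concrete, the sketch below computes a few of them for a single task. The `Action` record, its field names, and the exact feature definitions (for example, reading existSatQ as "at least one query is labeled satisfying") are assumptions for illustration, not the authors' implementation.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Action:
    kind: str     # "Q" (query), "SR" (result click) or "BR" (browse), as in the slides
    dwell: float  # dwell time in seconds
    sat: bool     # hypothesized action-level label (latent in the actual model)


def short_range_features(task: List[Action]) -> Dict[str, float]:
    """Features that do not depend on the latent action labels."""
    return {
        "n_queries": float(sum(a.kind == "Q" for a in task)),
        "n_clicks": float(sum(a.kind == "SR" for a in task)),
        "last_is_click": float(task[-1].kind == "SR"),
        "mean_dwell": sum(a.dwell for a in task) / len(task),
    }


def long_range_features(task: List[Action]) -> Dict[str, float]:
    """Features defined over a full assignment of action labels."""
    query_labels = [a.sat for a in task if a.kind == "Q"]
    feats = {
        "existSatQ": float(any(query_labels)),
        "allSatQ": float(bool(query_labels) and all(query_labels)),
    }
    # Count labeled action transitions, e.g. "trans:Q->SR-".
    pairs = Counter(
        (p.kind, p.sat, n.kind, n.sat) for p, n in zip(task, task[1:])
    )
    for (k1, s1, k2, s2), count in pairs.items():
        key = f"trans:{k1}{'+' if s1 else '-'}>{k2}{'+' if s2 else '-'}"
        feats[key] = float(count)
    return feats


# Hypothetical four-action task used only to exercise the functions.
example_task = [
    Action("Q", 10, False),
    Action("SR", 2, False),
    Action("Q", 38, True),
    Action("SR", 15, True),
]
short_feats = short_range_features(example_task)
long_feats = long_range_features(example_task)
```

Because the long-range features depend on the whole label assignment, a model using them has to score complete labelings of a task rather than each action in isolation.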

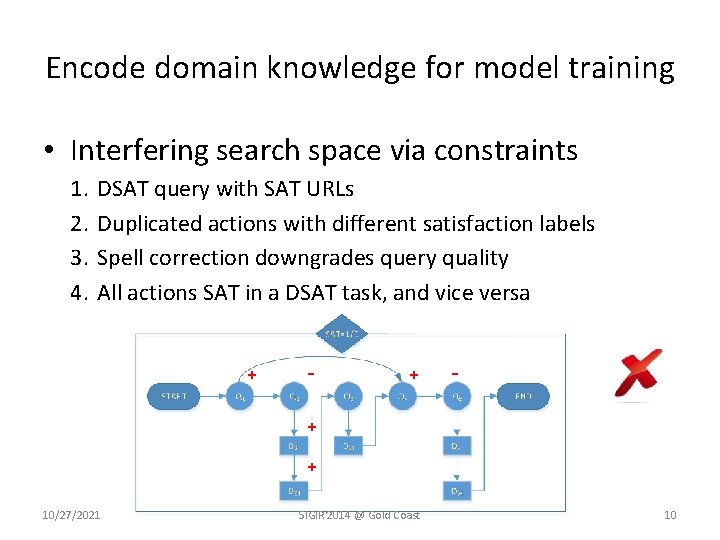

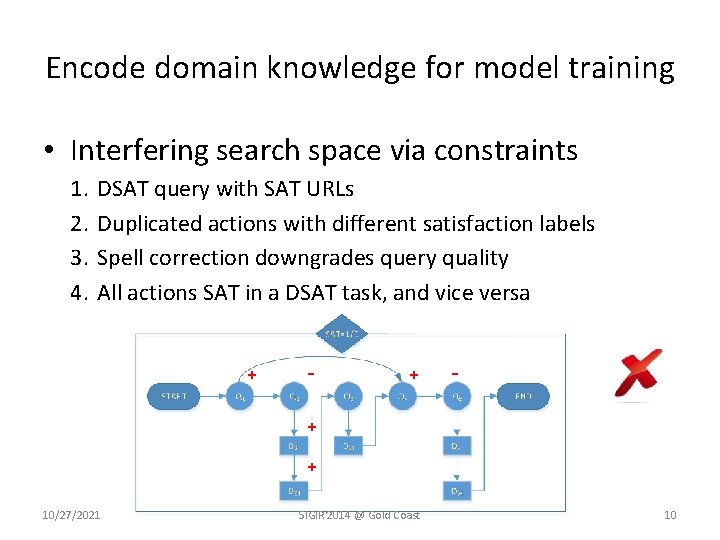

Encode domain knowledge for model training

- Interfering search space via constraints
  1. DSAT query with SAT URLs
  2. Duplicated actions with different satisfaction labels
  3. Spell correction downgrades query quality
  4. All actions SAT in a DSAT task, and vice versa
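As a rough illustration of how such constraints could rule out candidate labelings, the sketch below checks constraints 1, 2 and 4 for one task. The data layout, the function name, and the specific reading of each constraint are assumptions; constraint 3 is omitted because it needs spell-correction information that the slides do not describe.

```python
from typing import List, Tuple

ActionKey = Tuple[str, str]  # (kind, text), e.g. ("Q", "metals float on water")


def violated_constraints(
    actions: List[ActionKey], labels: List[bool], task_sat: bool
) -> List[str]:
    """Return the names of the (assumed) constraints a labeling violates."""
    violated = []

    # Constraint 1 (assumed reading): a click labeled SAT under a query labeled DSAT.
    current_query_sat = None
    for (kind, _), sat in zip(actions, labels):
        if kind == "Q":
            current_query_sat = sat
        elif kind == "SR" and sat and current_query_sat is False:
            violated.append("dsat_query_with_sat_url")
            break

    # Constraint 2: identical actions must receive the same label.
    seen = {}
    for key, sat in zip(actions, labels):
        if seen.setdefault(key, sat) != sat:
            violated.append("duplicate_actions_disagree")
            break

    # Constraint 4: all actions SAT in a DSAT task, or all DSAT in a SAT task.
    if labels and ((not task_sat and all(labels)) or (task_sat and not any(labels))):
        violated.append("all_actions_contradict_task")

    return violated


# Hypothetical task with a repeated query.
demo_actions = [("Q", "a"), ("SR", "u1"), ("Q", "a"), ("SR", "u2")]
```

A labeling that returns an empty list would remain in the search space; any other labeling could be discarded before scoring.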

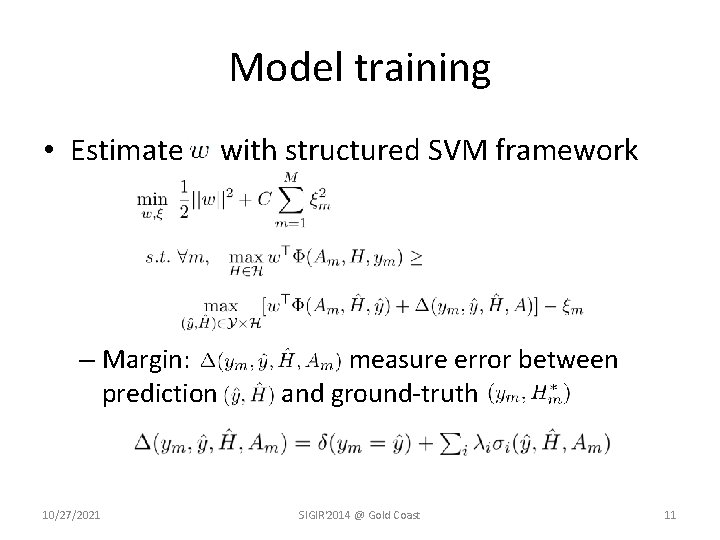

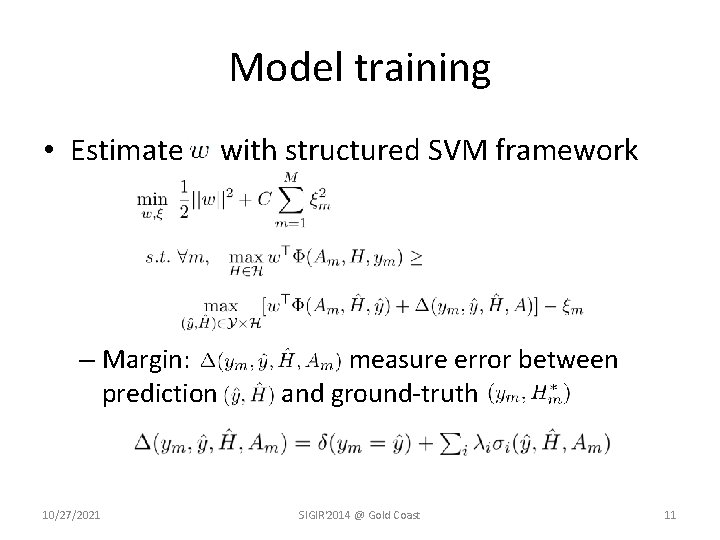

Model training

- Estimate the model parameters with the structured SVM framework
  - Margin: measures the error between the prediction and the ground truth
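The equations on this slide did not survive extraction. For orientation only, the generic latent structural SVM objective (in the style of Yu and Joachims, 2009) is written out below. The symbols are standard notation assumed here, not taken from the slides: $x_i$ is a task's action sequence, $y_i$ its task-level label, $h$ the latent action-level labels, $\Phi$ the joint feature map, $\Delta$ the margin (loss) between predicted and true task labels, and $C$ a trade-off constant. The authors' exact objective and constraints may differ.

```latex
\min_{w}\; \frac{1}{2}\lVert w\rVert^{2}
+ C \sum_{i=1}^{n} \Big[
  \max_{(\hat{y},\hat{h})} \big( w^{\top}\Phi(x_i,\hat{y},\hat{h}) + \Delta(y_i,\hat{y}) \big)
  - \max_{h}\, w^{\top}\Phi(x_i,y_i,h)
\Big]
```

The first inner maximization is loss-augmented inference over both the task label and the latent labels; the second fills in the best latent labels for the observed task label.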

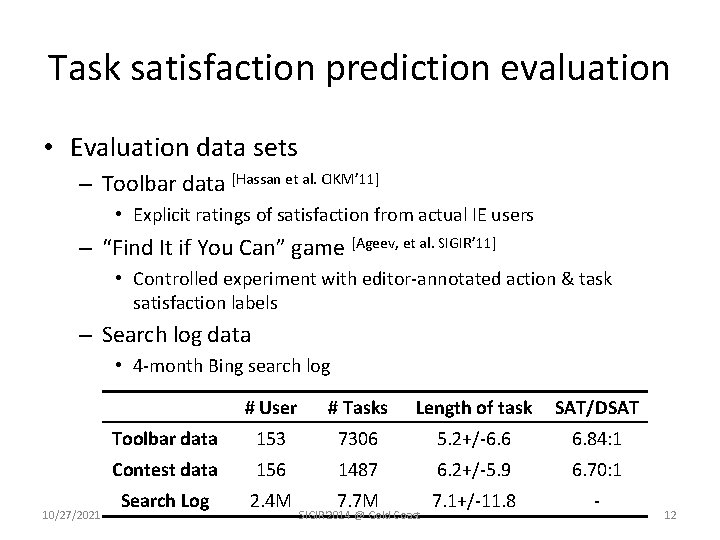

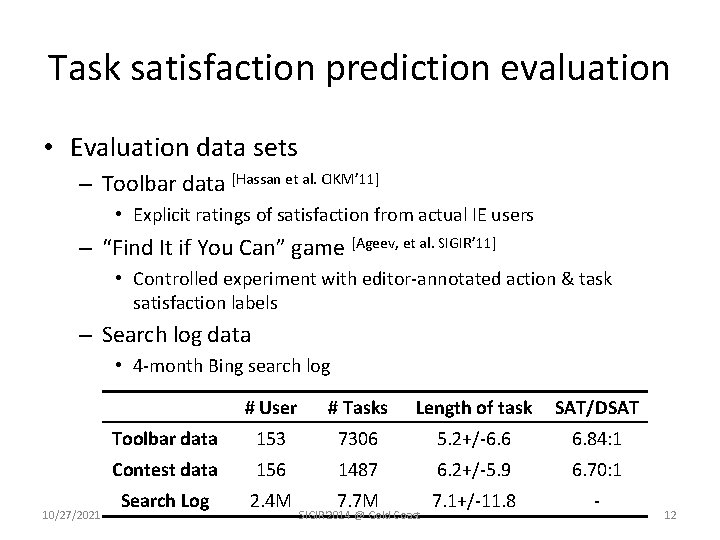

Task satisfaction prediction evaluation

- Evaluation data sets
  - Toolbar data [Hassan et al. CIKM'11]
    - Explicit ratings of satisfaction from actual IE users
  - "Find It if You Can" game [Ageev et al. SIGIR'11]
    - Controlled experiment with editor-annotated action and task satisfaction labels
  - Search log data
    - 4-month Bing search log

| Data set | # Users | # Tasks | Length of task | SAT/DSAT |
|---|---|---|---|---|
| Toolbar data | 153 | 7306 | 5.2 ± 6.6 | 6.84:1 |
| Contest data | 156 | 1487 | 6.2 ± 5.9 | 6.70:1 |
| Search log | 2.4M | 7.7M | 7.1 ± 11.8 | - |

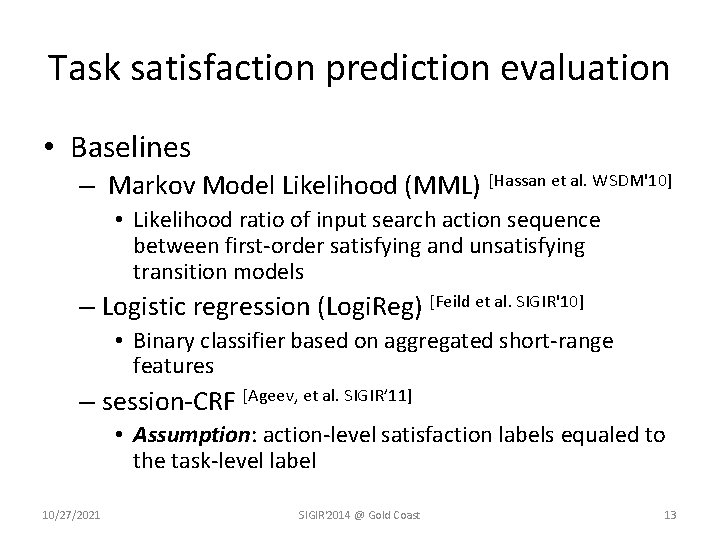

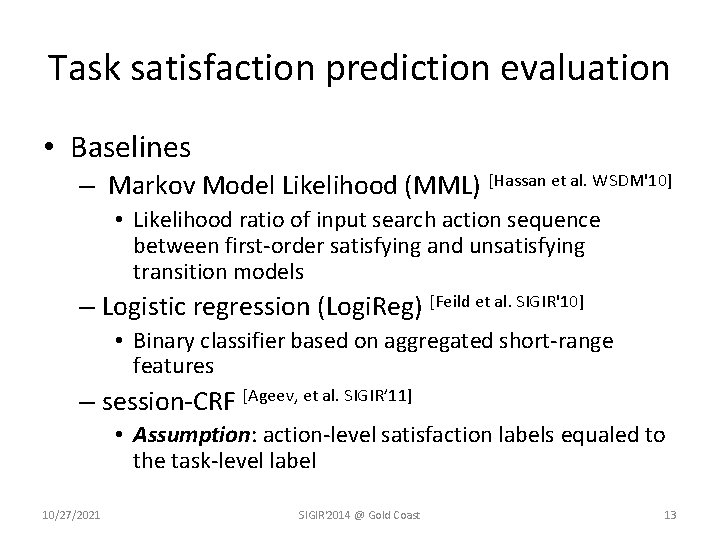

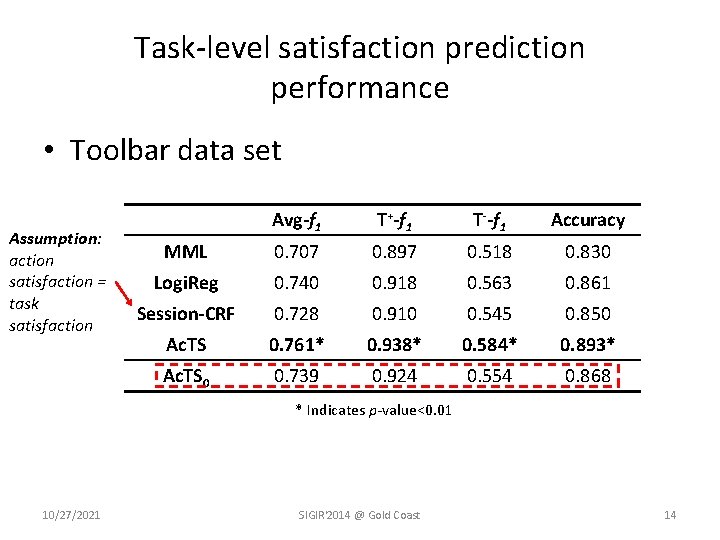

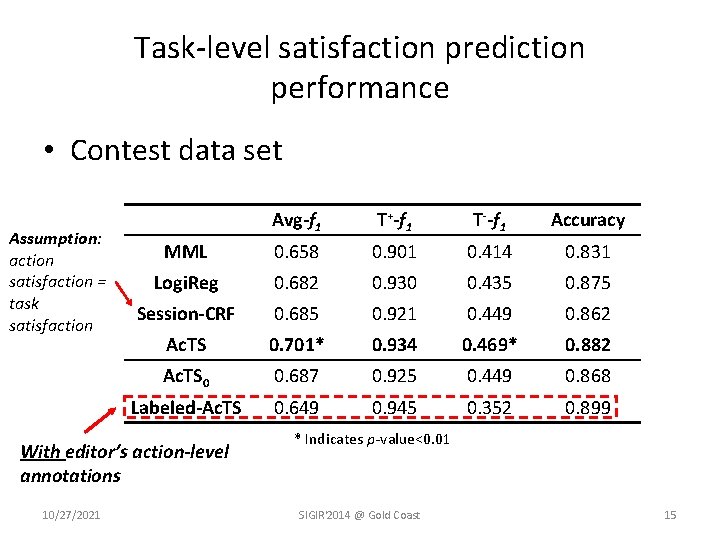

Task satisfaction prediction evaluation

- Baselines
  - Markov Model Likelihood (MML) [Hassan et al. WSDM'10]
    - Likelihood ratio of the input search action sequence between first-order satisfying and unsatisfying transition models
  - Logistic regression (LogiReg) [Feild et al. SIGIR'10]
    - Binary classifier based on aggregated short-range features
  - session-CRF [Ageev et al. SIGIR'11]
    - Assumption: action-level satisfaction labels equal the task-level label
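The MML baseline can be pictured as scoring an action sequence under two first-order Markov models and comparing the results. The sketch below is a minimal version under stated assumptions: the transition probabilities are made-up toy values, the function names are hypothetical, unseen transitions get a small floor probability, and initial-state probabilities are ignored.

```python
import math
from typing import Dict, List, Tuple

Transitions = Dict[Tuple[str, str], float]


def sequence_log_likelihood(
    seq: List[str], trans: Transitions, floor: float = 1e-6
) -> float:
    """Log-likelihood of the transitions in seq under a first-order Markov model."""
    return sum(math.log(trans.get(pair, floor)) for pair in zip(seq, seq[1:]))


def mml_score(seq: List[str], sat_trans: Transitions, dsat_trans: Transitions) -> float:
    """Log-likelihood ratio; a positive value favors the satisfying model."""
    return sequence_log_likelihood(seq, sat_trans) - sequence_log_likelihood(
        seq, dsat_trans
    )


# Toy transition tables (illustrative numbers only, not from the paper).
toy_sat = {("Q", "SR"): 0.7, ("Q", "Q"): 0.3, ("SR", "Q"): 0.4, ("SR", "SR"): 0.6}
toy_dsat = {("Q", "SR"): 0.3, ("Q", "Q"): 0.7, ("SR", "Q"): 0.7, ("SR", "SR"): 0.3}

click_heavy = mml_score(["Q", "SR", "SR"], toy_sat, toy_dsat)
requery_heavy = mml_score(["Q", "Q", "Q"], toy_sat, toy_dsat)
```

With these toy tables, a sequence dominated by clicks scores above zero and one dominated by repeated queries scores below zero; thresholding the score gives a task-level prediction.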

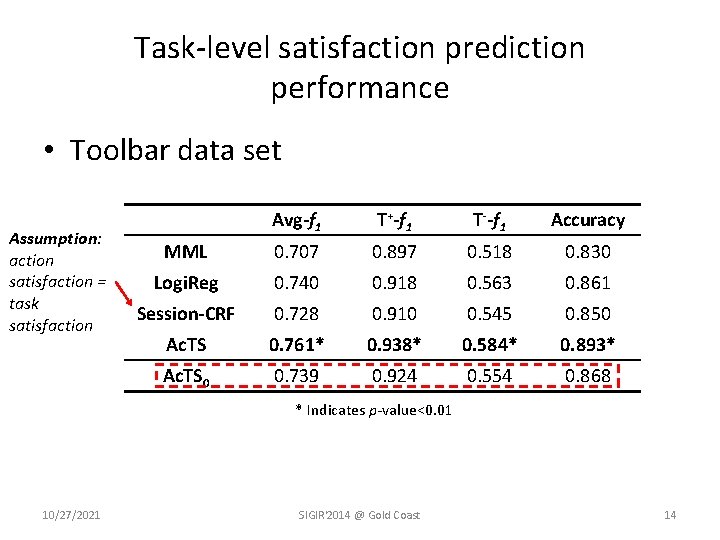

Task-level satisfaction prediction performance

- Toolbar data set

| Method | Avg-F1 | T+ F1 | T- F1 | Accuracy |
|---|---|---|---|---|
| MML | 0.707 | 0.897 | 0.518 | 0.830 |
| LogiReg | 0.740 | 0.918 | 0.563 | 0.861 |
| Session-CRF | 0.728 | 0.910 | 0.545 | 0.850 |
| AcTS | 0.761* | 0.938* | 0.584* | 0.893* |
| AcTS0 | 0.739 | 0.924 | 0.554 | 0.868 |

Note: * indicates p-value < 0.01. Session-CRF assumes action satisfaction = task satisfaction.
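The Avg-F1 column appears to be the unweighted mean of the two per-class F1 scores. That reading is an inference from the reported numbers, not something the slide states; the short check below compares the reported averages with the recomputed ones for the toolbar results.

```python
# (reported Avg-F1, F1 on satisfied tasks, F1 on unsatisfied tasks) from the toolbar table
toolbar_rows = {
    "MML": (0.707, 0.897, 0.518),
    "LogiReg": (0.740, 0.918, 0.563),
    "Session-CRF": (0.728, 0.910, 0.545),
    "AcTS": (0.761, 0.938, 0.584),
    "AcTS0": (0.739, 0.924, 0.554),
}


def macro_f1(f1_pos: float, f1_neg: float) -> float:
    """Unweighted mean of the two class-wise F1 scores."""
    return (f1_pos + f1_neg) / 2


# Largest absolute gap between the reported and recomputed averages.
max_gap = max(
    abs(reported - macro_f1(f1_pos, f1_neg))
    for reported, f1_pos, f1_neg in toolbar_rows.values()
)
```

The gaps stay within rounding of three decimals, which is consistent with a macro average. Since satisfied tasks outnumber unsatisfied ones by roughly 7 to 1, a macro average weights the minority class far more than accuracy does.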

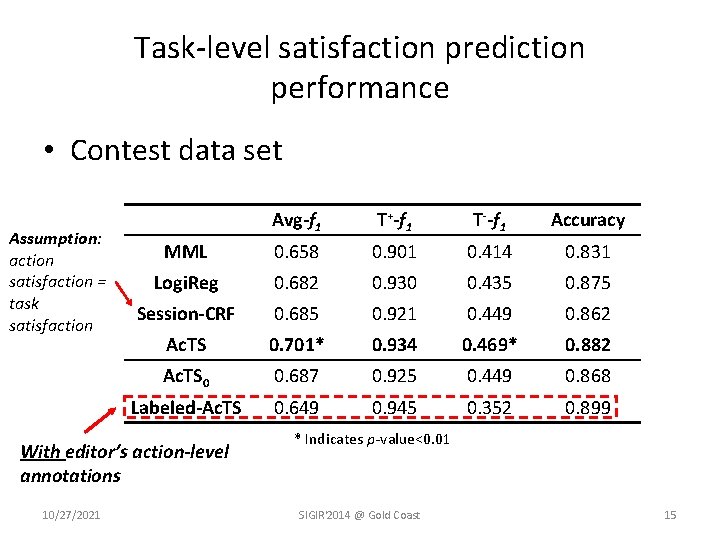

Task-level satisfaction prediction performance

- Contest data set

| Method | Avg-F1 | T+ F1 | T- F1 | Accuracy |
|---|---|---|---|---|
| MML | 0.658 | 0.901 | 0.414 | 0.831 |
| LogiReg | 0.682 | 0.930 | 0.435 | 0.875 |
| Session-CRF | 0.685 | 0.921 | 0.449 | 0.862 |
| AcTS | 0.701* | 0.934 | 0.469* | 0.882 |
| AcTS0 | 0.687 | 0.925 | 0.449 | 0.868 |
| Labeled-AcTS | 0.649 | 0.945 | 0.352 | 0.899 |

Note: * indicates p-value < 0.01. Session-CRF assumes action satisfaction = task satisfaction. Labeled-AcTS is trained with the editors' action-level annotations.

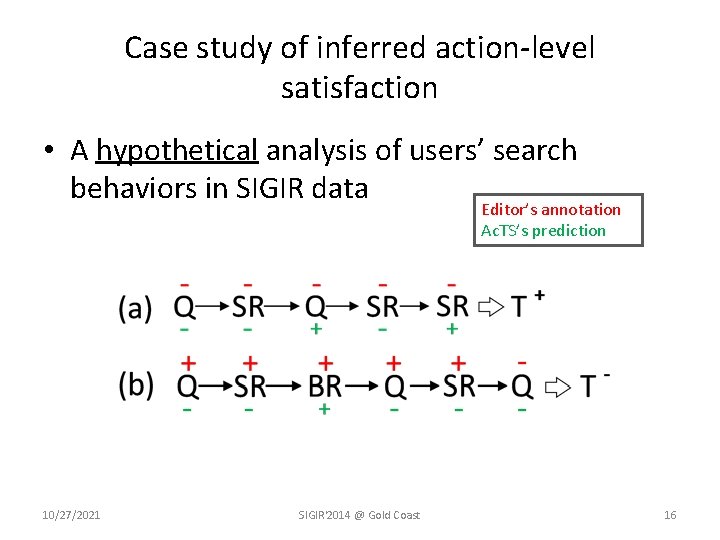

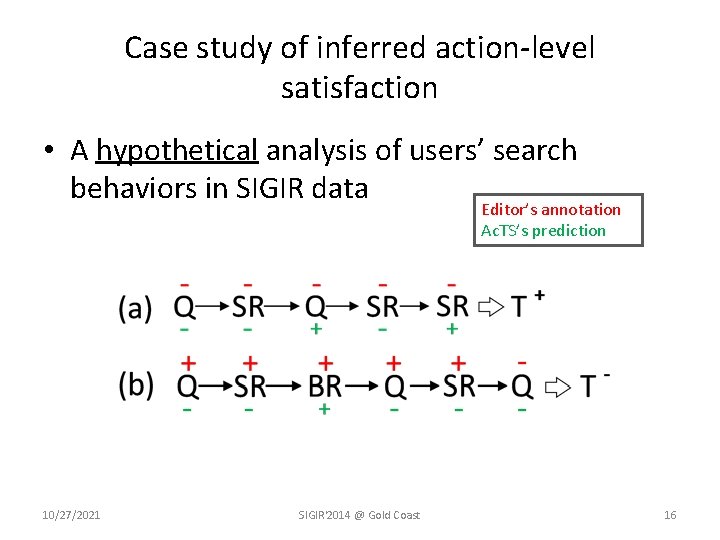

Case study of inferred action-level satisfaction

- A hypothetical analysis of users' search behaviors in SIGIR data
- Panels: editor's annotation, AcTS's prediction

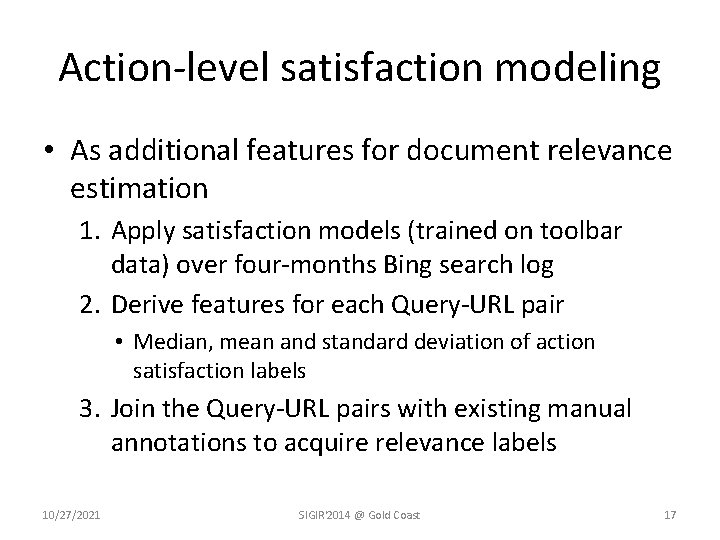

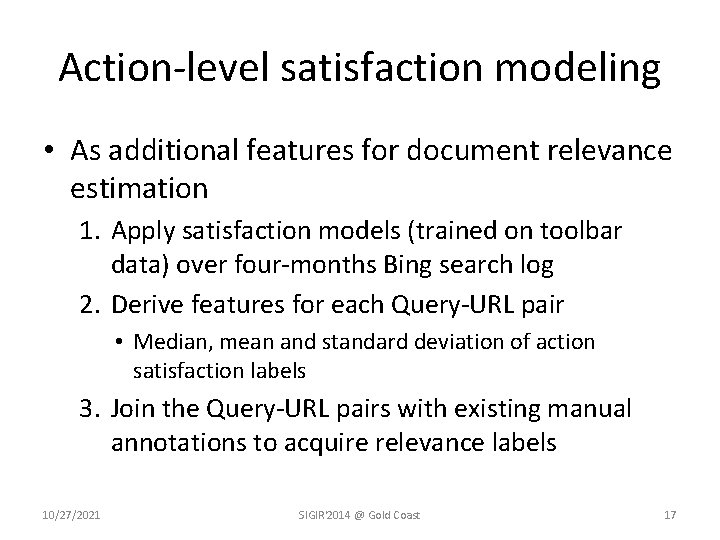

Action-level satisfaction modeling

- As additional features for document relevance estimation
  1. Apply satisfaction models (trained on toolbar data) over the four-month Bing search log
  2. Derive features for each Query-URL pair
     - Median, mean and standard deviation of action satisfaction labels
  3. Join the Query-URL pairs with existing manual annotations to acquire relevance labels
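Step 2 above amounts to a group-by over Query-URL pairs. The following sketch shows one way to do it; the record layout `(query, url, satisfaction)`, the function name, and the choice of population standard deviation are assumptions, since the slides do not specify them.

```python
from collections import defaultdict
from statistics import mean, median, pstdev
from typing import Dict, Iterable, Tuple

Pair = Tuple[str, str]


def query_url_features(
    records: Iterable[Tuple[str, str, float]]
) -> Dict[Pair, Dict[str, float]]:
    """Aggregate inferred action satisfaction labels per (query, url) pair."""
    grouped = defaultdict(list)
    for query, url, sat in records:
        grouped[(query, url)].append(sat)
    return {
        pair: {
            "median": median(values),
            "mean": mean(values),
            "std": pstdev(values),  # population std; defined even for one sample
            "count": float(len(values)),
        }
        for pair, values in grouped.items()
    }


# Hypothetical inferred labels: 1.0 = satisfied click, 0.0 = unsatisfied click.
demo_records = [
    ("metals float on water", "www.docbrown.info", 1.0),
    ("metals float on water", "www.docbrown.info", 0.0),
    ("metals float on water", "www.docbrown.info", 1.0),
    ("metals float on water", "www.blurtit.com", 0.0),
]
demo_features = query_url_features(demo_records)
```

The resulting dictionary can then be joined with relevance annotations on the same `(query, url)` key, as in step 3.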

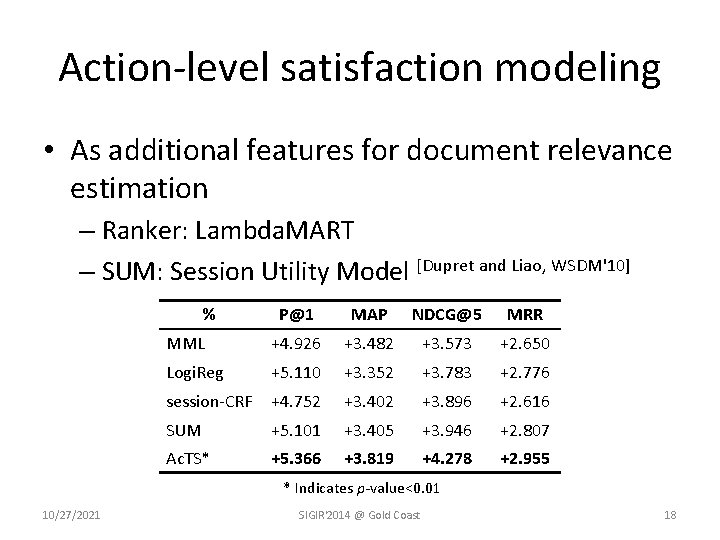

Action-level satisfaction modeling

- As additional features for document relevance estimation
  - Ranker: LambdaMART
  - SUM: Session Utility Model [Dupret and Liao, WSDM'10]

| % | P@1 | MAP | NDCG@5 | MRR |
|---|---|---|---|---|
| MML | +4.926 | +3.482 | +3.573 | +2.650 |
| LogiReg | +5.110 | +3.352 | +3.783 | +2.776 |
| session-CRF | +4.752 | +3.402 | +3.896 | +2.616 |
| SUM | +5.101 | +3.405 | +3.946 | +2.807 |
| AcTS* | +5.366 | +3.819 | +4.278 | +2.955 |

Note: * indicates p-value < 0.01.

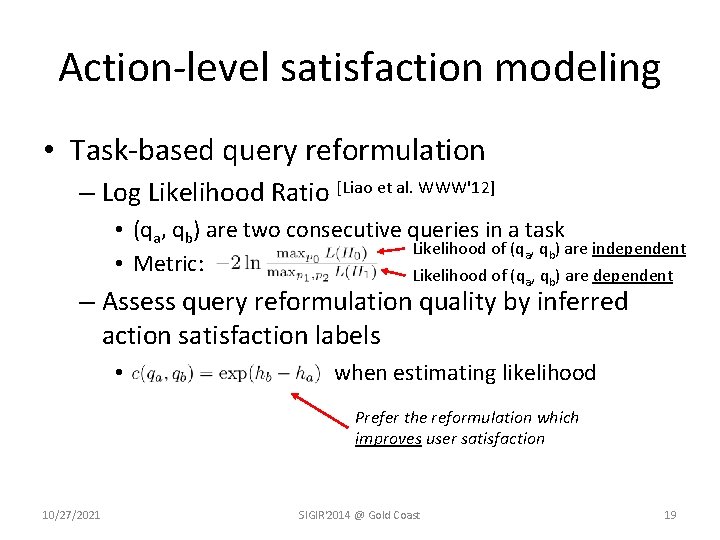

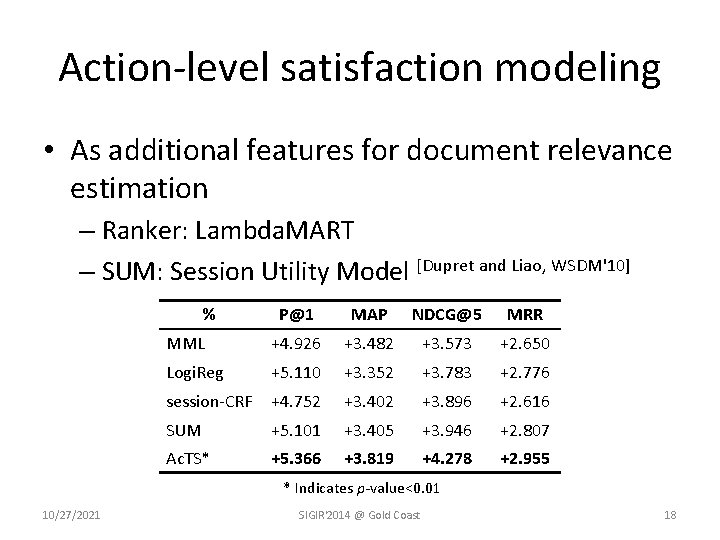

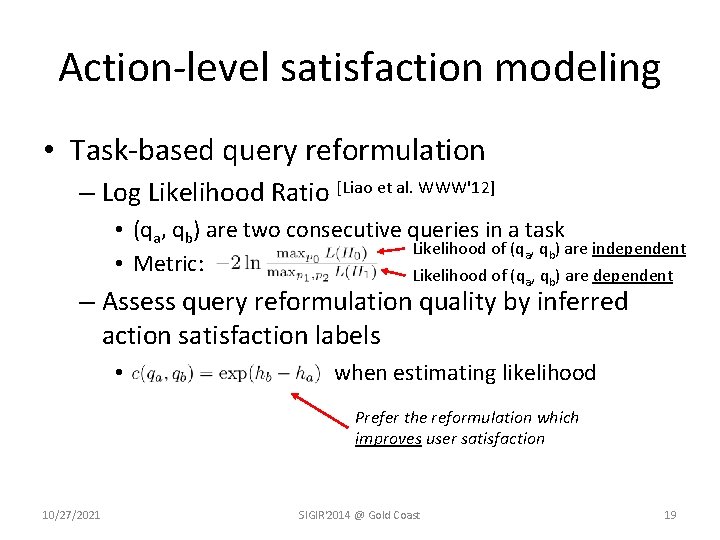

Action-level satisfaction modeling

- Task-based query reformulation
  - Log Likelihood Ratio (LLR) [Liao et al. WWW'12]
    - (q_a, q_b) are two consecutive queries in a task
    - Metric: ratio of the likelihood that (q_a, q_b) are independent to the likelihood that (q_a, q_b) are dependent
  - Assess query reformulation quality by inferred action satisfaction labels
    - When estimating the likelihood, prefer the reformulation which improves user satisfaction
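One common way to compute a log likelihood ratio for a pair of consecutive queries is Dunning's G² statistic over a 2x2 table of transition counts. Whether this work or Liao et al. use exactly this form is an assumption, and the way satisfaction labels enter the estimate is not recoverable from the slide, so the sketch below covers only the plain, unweighted statistic.

```python
import math


def log_likelihood_ratio(k11: float, k12: float, k21: float, k22: float) -> float:
    """Dunning-style G^2 statistic for a 2x2 contingency table.

    k11: times q_a is followed by q_b
    k12: times q_a is followed by some other query
    k21: times some other query is followed by q_b
    k22: all remaining consecutive query pairs
    """
    total = k11 + k12 + k21 + k22
    row_sums = (k11 + k12, k21 + k22)
    col_sums = (k11 + k21, k12 + k22)
    observed = ((k11, k12), (k21, k22))
    g2 = 0.0
    for i in range(2):
        for j in range(2):
            o = observed[i][j]
            if o > 0:  # the 0 * log(0) terms are taken as 0
                g2 += o * math.log(o * total / (row_sums[i] * col_sums[j]))
    return 2.0 * g2


independent_pair = log_likelihood_ratio(10, 10, 10, 10)
dependent_pair = log_likelihood_ratio(10, 0, 0, 10)
```

A value near zero suggests the two queries co-occur about as often as chance predicts, while a large value suggests a strong association between q_a and q_b.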

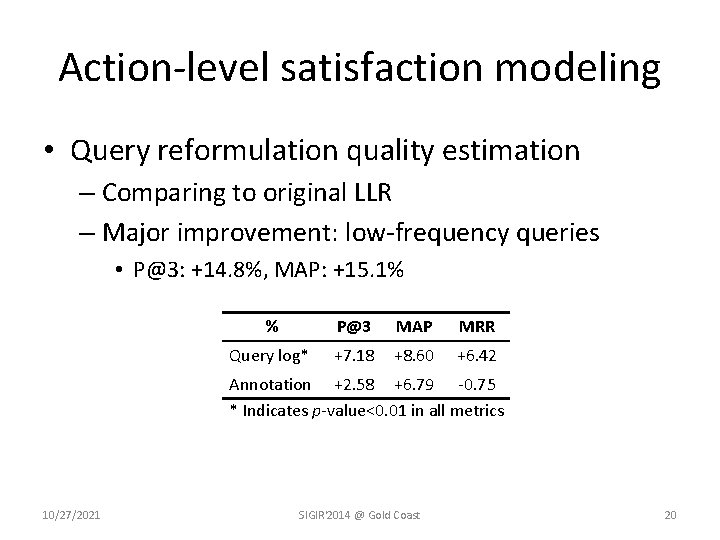

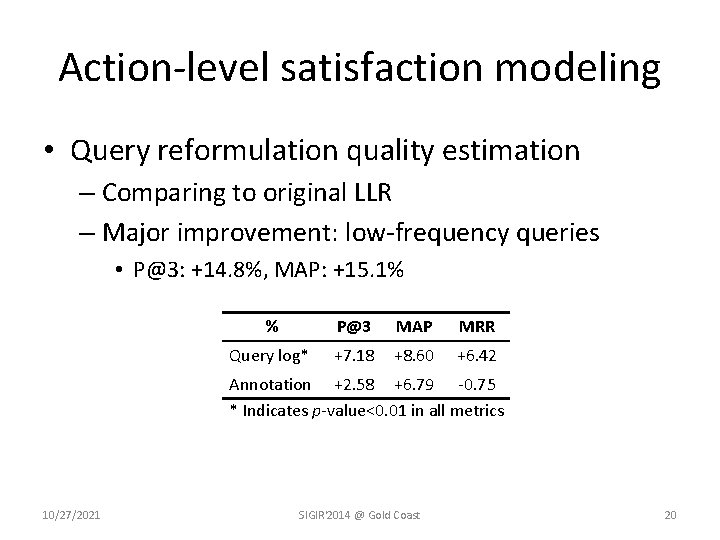

Action-level satisfaction modeling

- Query reformulation quality estimation
  - Comparing to original LLR
  - Major improvement: low-frequency queries
    - P@3: +14.8%, MAP: +15.1%

| % | P@3 | MAP | MRR |
|---|---|---|---|
| Query log* | +7.18 | +8.60 | +6.42 |
| Annotation | +2.58 | +6.79 | -0.75 |

Note: * indicates p-value < 0.01 in all metrics.

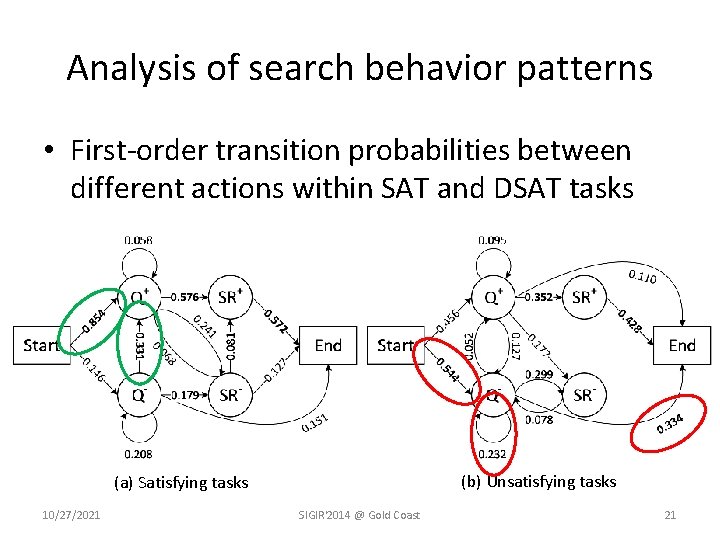

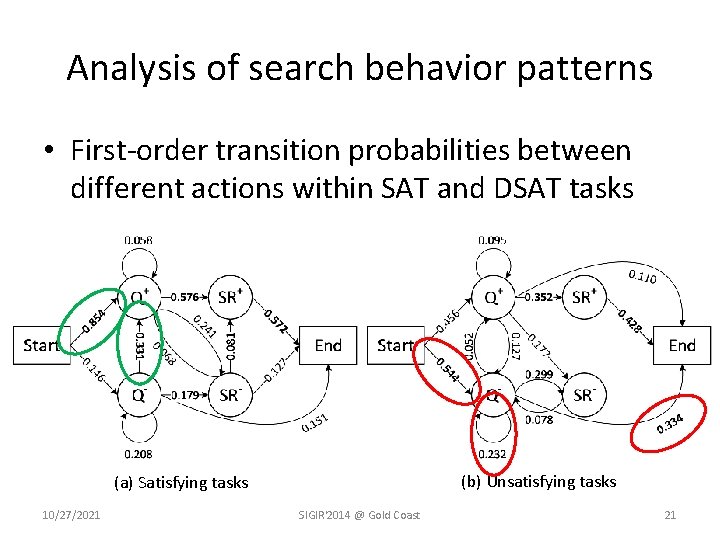

Analysis of search behavior patterns

- First-order transition probabilities between different actions within SAT and DSAT tasks
  - (a) Satisfying tasks
  - (b) Unsatisfying tasks
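First-order transition probabilities of this kind can be estimated by counting consecutive action pairs and normalizing per source action. The sketch below assumes each task is a list of action symbols and uses a hypothetical "END" marker for task termination; running it separately on SAT and DSAT tasks would give the two panels.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, List


def transition_probabilities(tasks: Iterable[List[str]]) -> Dict[str, Dict[str, float]]:
    """Maximum-likelihood estimate of P(next action | current action)."""
    counts = defaultdict(Counter)
    for seq in tasks:
        for current, nxt in zip(seq, seq[1:]):
            counts[current][nxt] += 1
    return {
        current: {nxt: c / sum(nexts.values()) for nxt, c in nexts.items()}
        for current, nexts in counts.items()
    }


# Hypothetical satisfying tasks: Q = query, SR = result click, END = task end.
demo_sat_tasks = [["Q", "SR", "END"], ["Q", "SR", "SR", "END"]]
demo_probs = transition_probabilities(demo_sat_tasks)
```

Each row of the result sums to one, so the rows can be compared directly between the satisfying and unsatisfying groups.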

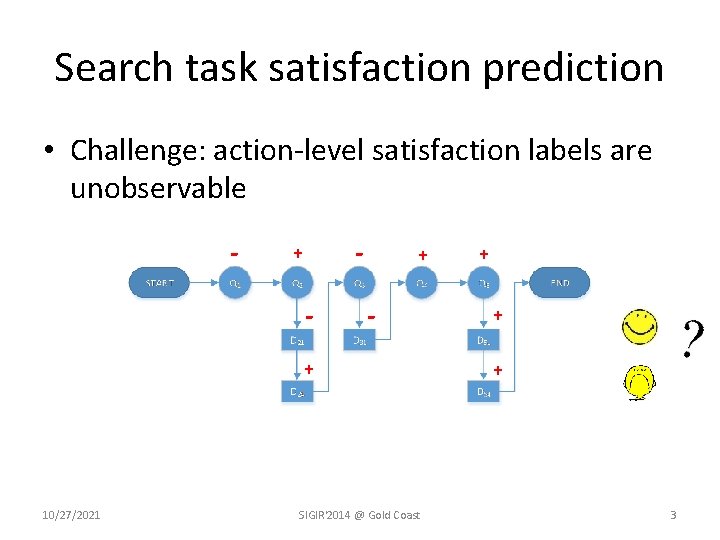

Conclusions

- Search task satisfaction prediction
  - Explicitly modeling action-level satisfaction
  - Explore rich structured features and dependency relations via latent structural modeling
  - Improved task satisfaction prediction performance and clear utility in other search applications
- Future direction
  - Real-time search satisfaction prediction
  - Leverage the inferred action-level satisfaction in more application contexts

References

- Baeza-Yates, Ricardo, and Berthier Ribeiro-Neto. Modern information retrieval. Vol. 463. New York: ACM Press, 1999.
- Feild, Henry A., James Allan, and Rosie Jones. "Predicting searcher frustration." Proceedings of the 33rd international ACM SIGIR conference on Research and development in information retrieval. ACM, 2010.
- Kim, Youngho, et al. "Modeling dwell time to predict click-level satisfaction." Proceedings of the 7th ACM international conference on Web search and data mining. ACM, 2014.
- Hassan, Ahmed, Rosie Jones, and Kristina Lisa Klinkner. "Beyond DCG: user behavior as a predictor of a successful search." Proceedings of the third ACM international conference on Web search and data mining. ACM, 2010.
- Ageev, Mikhail, et al. "Find it if you can: a game for modeling different types of web search success using interaction data." Proceedings of the 34th international ACM SIGIR conference on Research and development in Information Retrieval. ACM, 2011.
- Dupret, Georges, and Ciya Liao. "A model to estimate intrinsic document relevance from the clickthrough logs of a web search engine." Proceedings of the third ACM international conference on Web search and data mining. ACM, 2010.
- White, Ryen W., and Jeff Huang. "Assessing the scenic route: measuring the value of search trails in web logs." Proceedings of the 33rd international ACM SIGIR conference on Research and development in information retrieval. ACM, 2010.
- Dang, Hoa Trang, Diane Kelly, and Jimmy J. Lin. "Overview of the TREC 2007 Question Answering Track." TREC. Vol. 7. 2007.
- Hassan, Ahmed, Yang Song, and Li-wei He. "A task level metric for measuring web search satisfaction and its application on improving relevance estimation." Proceedings of the 20th ACM international conference on Information and knowledge management. ACM, 2011.
- Liao, Zhen, et al. "Evaluating the effectiveness of search task trails." Proceedings of the 21st international conference on World Wide Web. ACM, 2012.

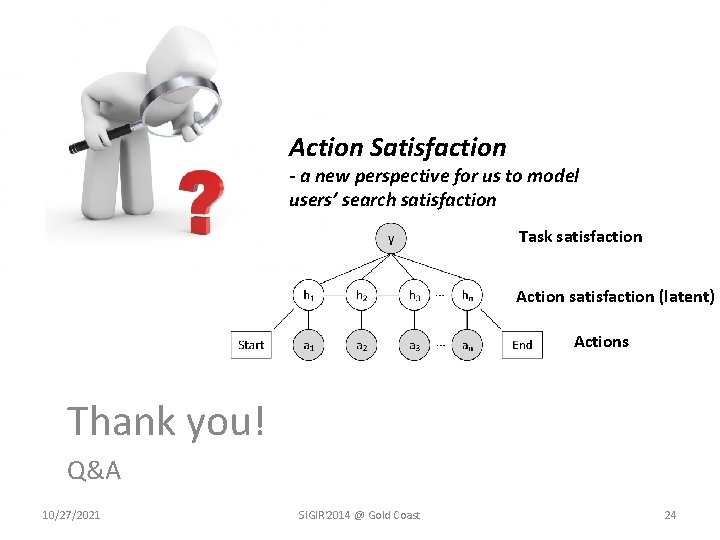

Action Satisfaction: a new perspective for us to model users' search satisfaction

Thank you! Q&A