Model-driven Performance Analysis Methodology for Scalable Performance Analysis of Distributed Systems

Model-driven Performance Analysis Methodology for Scalable Performance Analysis of Distributed Systems

• Swapna Gokhale, ssg@engr.uconn.edu, Asst. Professor of CSE, University of Connecticut, Storrs, CT
• Aniruddha Gokhale, a.gokhale@vanderbilt.edu, Asst. Professor of EECS, Vanderbilt University, Nashville, TN
• Jeff Gray, gray@cis.uab.edu, Asst. Professor of CIS, Univ. of Alabama at Birmingham, AL

Presented at NSF NGS/CSR PI Meeting, Rhodes, Greece, April 25-26, 2006

CSR CNS-0406376, CNS-0509271, CNS-0509296, CNS-0509342

Distributed Performance Sensitive Software Systems

Military/civilian distributed performance-sensitive software systems:
• Network-centric & larger-scale "systems of systems"
• Stringent simultaneous QoS demands, e.g., dependability, security, scalability, throughput
• Dynamic context

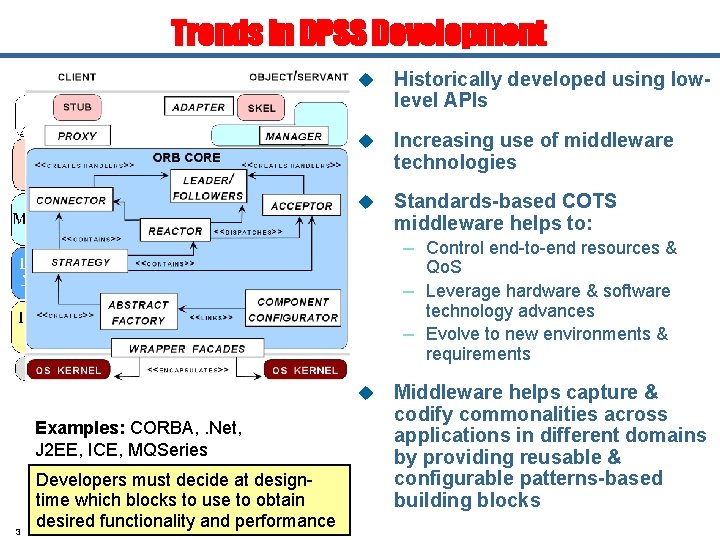

Trends in DPSS Development

• Historically developed using low-level APIs
• Increasing use of middleware technologies
• Standards-based COTS middleware helps to:
  – Control end-to-end resources & QoS
  – Leverage hardware & software technology advances
  – Evolve to new environments & requirements
• Examples: CORBA, .NET, J2EE, ICE, MQSeries

Middleware helps capture & codify commonalities across applications in different domains by providing reusable & configurable patterns-based building blocks.

Developers must decide at design time which blocks to use to obtain the desired functionality and performance.

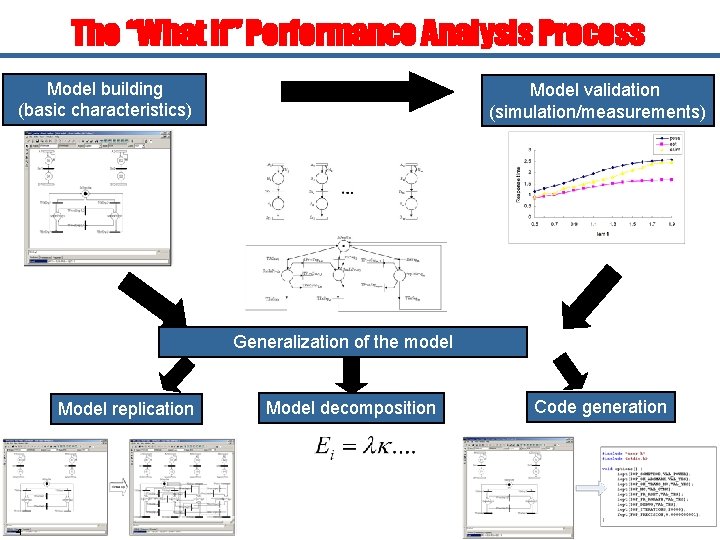

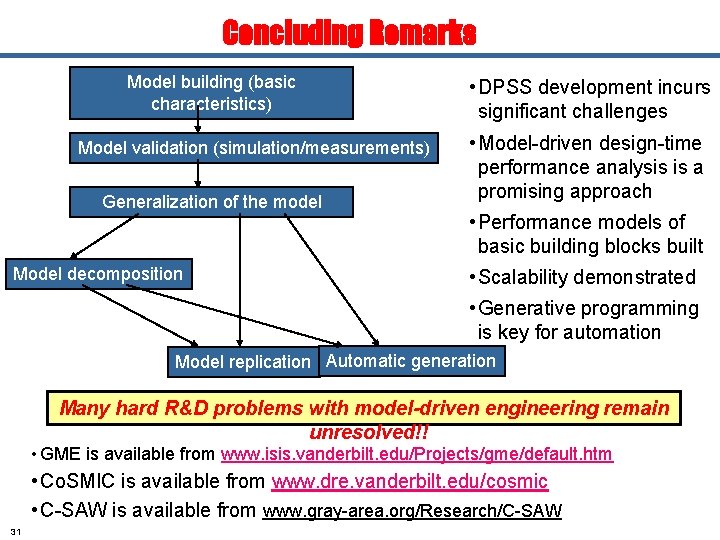

The "What If" Performance Analysis Process

• Model building (basic characteristics)
• Model validation (simulation/measurements)
• Generalization of the model
• Model replication
• Model decomposition
• Code generation

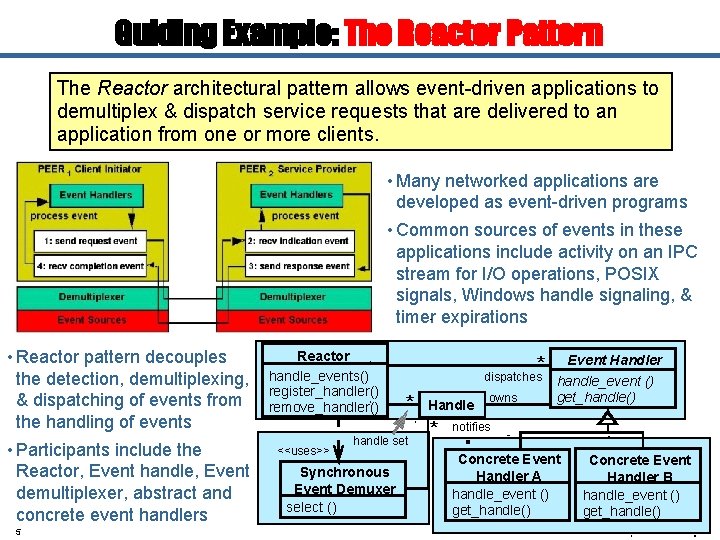

Guiding Example: The Reactor Pattern

The Reactor architectural pattern allows event-driven applications to demultiplex & dispatch service requests that are delivered to an application from one or more clients.

• Many networked applications are developed as event-driven programs
• Common sources of events in these applications include activity on an IPC stream for I/O operations, POSIX signals, Windows handle signaling, & timer expirations
• Reactor pattern decouples the detection, demultiplexing, & dispatching of events from the handling of events
• Participants include the Reactor, Event handle, Event demultiplexer, abstract and concrete event handlers

Class diagram (figure): Reactor (handle_events(), register_handler(), remove_handler()); Synchronous Event Demuxer (select()); Handle; Event Handler (handle_event(), get_handle()); Concrete Event Handler A and Concrete Event Handler B (handle_event(), get_handle()). Relationships labeled in the figure: <<uses>>, dispatches, handle set, owns, notifies.

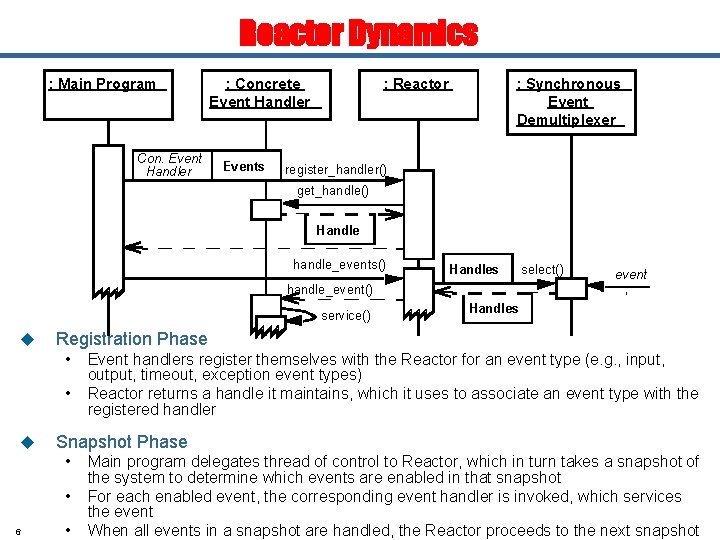
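The participants listed above can be sketched in a few lines of Python. This is a minimal illustration, not the implementation analyzed in these slides: `EchoHandler` and `demo` are hypothetical names, and the sketch assumes a single-threaded reactor that demultiplexes with `select.select` over sockets.

```python
import select
import socket


class EventHandler:
    """Abstract event handler: owns a handle and services events on it."""

    def get_handle(self):
        raise NotImplementedError

    def handle_event(self):
        raise NotImplementedError


class EchoHandler(EventHandler):
    """Hypothetical concrete handler: records the bytes read from its socket."""

    def __init__(self, sock):
        self.sock = sock
        self.received = []

    def get_handle(self):
        return self.sock

    def handle_event(self):
        self.received.append(self.sock.recv(1024))


class Reactor:
    """Single-threaded reactor that uses select() as the event demultiplexer."""

    def __init__(self):
        self.handlers = {}  # handle -> registered event handler

    def register_handler(self, handler):
        self.handlers[handler.get_handle()] = handler

    def remove_handler(self, handler):
        self.handlers.pop(handler.get_handle(), None)

    def handle_events(self, timeout=None):
        """Take one snapshot of ready handles and dispatch each handler."""
        if not self.handlers:
            return 0
        ready, _, _ = select.select(list(self.handlers), [], [], timeout)
        for handle in ready:
            self.handlers[handle].handle_event()
        return len(ready)


def demo():
    """Register one handler, make its handle readable, run one snapshot."""
    client, server = socket.socketpair()
    try:
        handler = EchoHandler(server)
        reactor = Reactor()
        reactor.register_handler(handler)
        client.sendall(b"ping")
        dispatched = reactor.handle_events(timeout=1.0)
        return dispatched, handler.received
    finally:
        client.close()
        server.close()
```

Calling `demo()` registers the handler, sends four bytes, and lets the reactor dispatch once. The handler never calls `select` itself, which is the decoupling the pattern is meant to provide.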

Reactor Dynamics

Sequence diagram (figure): participants are Main Program, Concrete Event Handler, Reactor, and Synchronous Event Demultiplexer; messages are register_handler(), get_handle(), handle_events(), select(), handle_event(), and service().

• Registration Phase
  – Event handlers register themselves with the Reactor for an event type (e.g., input, output, timeout, exception event types)
  – Reactor returns a handle it maintains, which it uses to associate an event type with the registered handler
• Snapshot Phase
  – Main program delegates thread of control to Reactor, which in turn takes a snapshot of the system to determine which events are enabled in that snapshot
  – For each enabled event, the corresponding event handler is invoked, which services the event
  – When all events in a snapshot are handled, the Reactor proceeds to the next snapshot

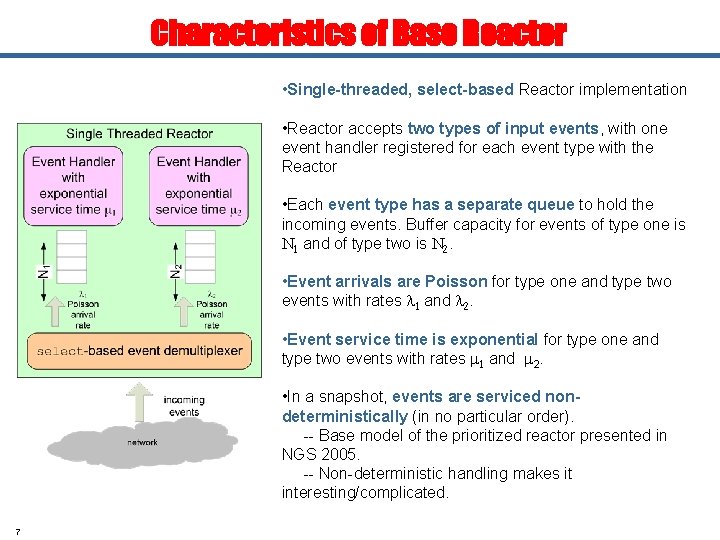

Characteristics of Base Reactor

• Single-threaded, select-based Reactor implementation
• Reactor accepts two types of input events, with one event handler registered for each event type with the Reactor
• Each event type has a separate queue to hold the incoming events. Buffer capacity for events of type one is N1 and of type two is N2.
• Event arrivals are Poisson for type one and type two events with rates λ1 and λ2.
• Event service time is exponential for type one and type two events with rates μ1 and μ2.
• In a snapshot, events are serviced nondeterministically (in no particular order).
  -- Base model of the prioritized reactor presented in NGS 2005.
  -- Non-deterministic handling makes it interesting/complicated.

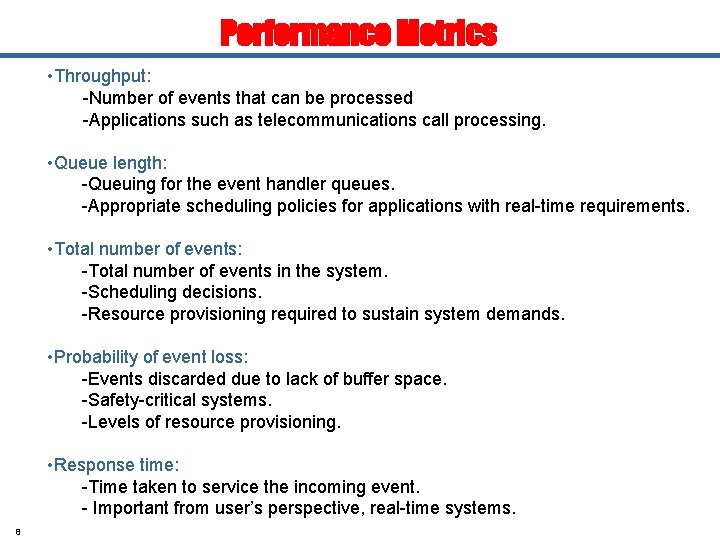
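The characteristics above are enough to write a small discrete-event simulation. The sketch below is illustrative only and is not the CSIM model used for validation in these slides. It assumes that each snapshot removes one event from every non-empty queue and services those events one after another in a random order, and that arrivals finding a full buffer are discarded. The function name and its parameters are hypothetical.

```python
import random


def simulate_base_reactor(lam, mu, capacity, horizon, seed=0):
    """Simulate a single-threaded reactor with one finite queue per event type.

    lam, mu, capacity are per-type lists: Poisson arrival rates, exponential
    service rates, and buffer capacities. Returns per-type throughput and
    loss fraction measured over the simulated time.
    """
    rng = random.Random(seed)
    m = len(lam)
    queue = [0] * m
    next_arrival = [rng.expovariate(rate) for rate in lam]
    arrived, lost, served = [0] * m, [0] * m, [0] * m
    now = 0.0

    def admit_arrivals(until):
        # Admit every arrival with a timestamp <= until; drop it if the buffer is full.
        for i in range(m):
            while next_arrival[i] <= until:
                arrived[i] += 1
                if queue[i] < capacity[i]:
                    queue[i] += 1
                else:
                    lost[i] += 1
                next_arrival[i] += rng.expovariate(lam[i])

    while now < horizon:
        admit_arrivals(now)
        enabled = [i for i in range(m) if queue[i] > 0]
        if not enabled:
            now = min(next_arrival)  # idle until the next event arrives
            continue
        for i in enabled:
            queue[i] -= 1            # assumption: one event per enabled type per snapshot
        rng.shuffle(enabled)         # non-deterministic service order
        for i in enabled:
            now += rng.expovariate(mu[i])
            served[i] += 1
        # Arrivals during the snapshot are admitted at the top of the loop; no
        # event leaves a queue mid-snapshot, so batching them is equivalent.

    return {
        "throughput": [s / now for s in served],
        "loss": [l / max(a, 1) for l, a in zip(lost, arrived)],
    }
```

For example, `simulate_base_reactor([0.4, 0.4], [2.0, 2.0], [5, 5], horizon=20000.0)` uses the arrival and service rates quoted later in the validation slide. The numbers it produces depend on the snapshot assumptions stated above and should not be read as a reproduction of the reported results.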

Performance Metrics

• Throughput:
  - Number of events that can be processed
  - Applications such as telecommunications call processing.
• Queue length:
  - Queuing for the event handler queues.
  - Appropriate scheduling policies for applications with real-time requirements.
• Total number of events:
  - Total number of events in the system.
  - Scheduling decisions.
  - Resource provisioning required to sustain system demands.
• Probability of event loss:
  - Events discarded due to lack of buffer space.
  - Safety-critical systems.
  - Levels of resource provisioning.
• Response time:
  - Time taken to service the incoming event.
  - Important from user's perspective, real-time systems.

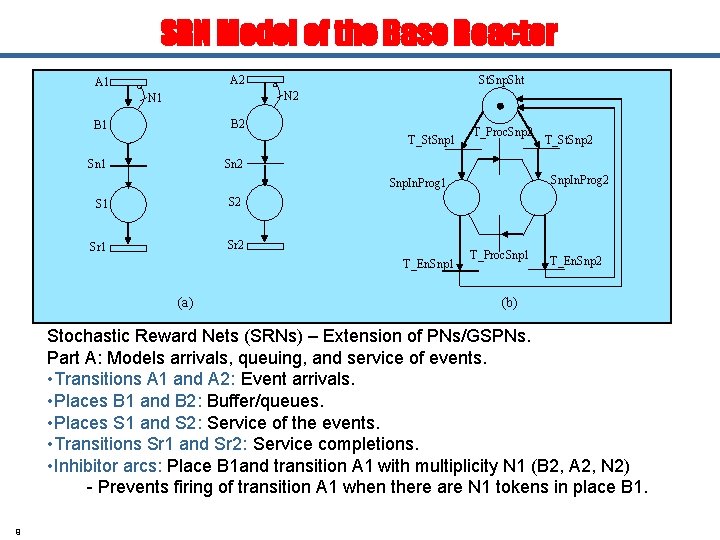

SRN Model of the Base Reactor

Figure: SRN model of the base Reactor, part (a) with A1, A2, B1, B2 (capacities N1, N2), Sn1, S2, Sr1, Sr2, and part (b) with StSnpSht, SnpInProg1, T_StSnp1, T_StSnp2, T_ProcSnp1, T_ProcSnp2, T_EnSnp1, T_EnSnp2.

Stochastic Reward Nets (SRNs): extension of PNs/GSPNs.

Part A: Models arrivals, queuing, and service of events.
• Transitions A1 and A2: Event arrivals.
• Places B1 and B2: Buffer/queues.
• Places S1 and S2: Service of the events.
• Transitions Sr1 and Sr2: Service completions.
• Inhibitor arcs: Place B1 and transition A1 with multiplicity N1 (B2, A2, N2)
  - Prevents firing of transition A1 when there are N1 tokens in place B1.

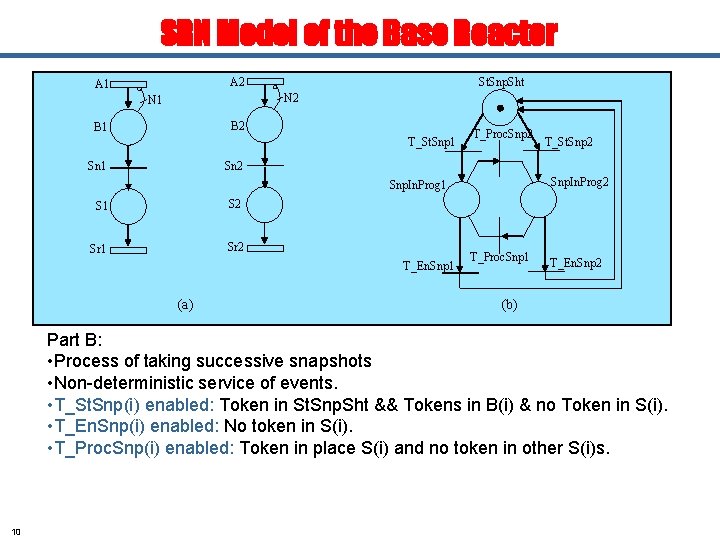
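The inhibitor-arc rule in Part A can be expressed as a tiny enabling check. The following sketch uses hypothetical helper names (`is_enabled`, `fire_arrival`) and represents a marking as a dictionary from place name to token count. It only illustrates the enabling rule, not a full SRN solver.

```python
def is_enabled(marking, inputs=None, inhibitors=None):
    """Return True if a transition may fire in the given marking.

    inputs:     place -> tokens required on an input arc
    inhibitors: place -> multiplicity; the transition is disabled once the
                place holds at least that many tokens
    """
    inputs = inputs or {}
    inhibitors = inhibitors or {}
    has_inputs = all(marking.get(p, 0) >= k for p, k in inputs.items())
    not_inhibited = all(marking.get(p, 0) < k for p, k in inhibitors.items())
    return has_inputs and not_inhibited


def fire_arrival(marking, buffer_place, capacity):
    """Fire an arrival transition (e.g. A1) into its buffer place (e.g. B1).

    The inhibitor arc with multiplicity `capacity` blocks the arrival when
    the buffer is full, so the marking is returned unchanged in that case.
    """
    if not is_enabled(marking, inhibitors={buffer_place: capacity}):
        return dict(marking)
    updated = dict(marking)
    updated[buffer_place] = updated.get(buffer_place, 0) + 1
    return updated
```

With `capacity = 2`, three successive calls to `fire_arrival` on an empty `B1` leave two tokens in the place: the third arrival is blocked, which corresponds to a lost event in the model.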

SRN Model of the Base Reactor

Figure: SRN model of the base Reactor, parts (a) and (b), as on the previous slide.

Part B:
• Process of taking successive snapshots
• Non-deterministic service of events.
• T_StSnp(i) enabled: Token in StSnpSht && Tokens in B(i) & no Token in S(i).
• T_EnSnp(i) enabled: No token in S(i).
• T_ProcSnp(i) enabled: Token in place S(i) and no token in other S(i)s.

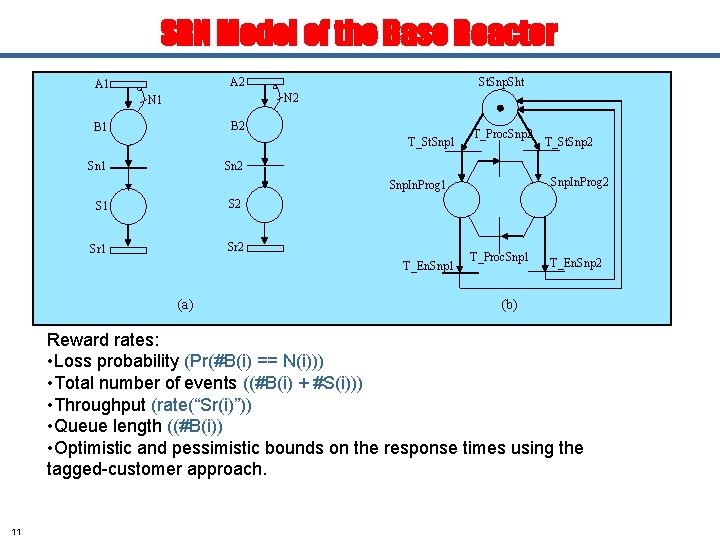

SRN Model of the Base Reactor

Figure: SRN model of the base Reactor, parts (a) and (b), as on the previous slides.

Reward rates:
• Loss probability (Pr(#B(i) == N(i)))
• Total number of events (#B(i) + #S(i))
• Throughput (rate("Sr(i)"))
• Queue length (#B(i))
• Optimistic and pessimistic bounds on the response times using the tagged-customer approach.

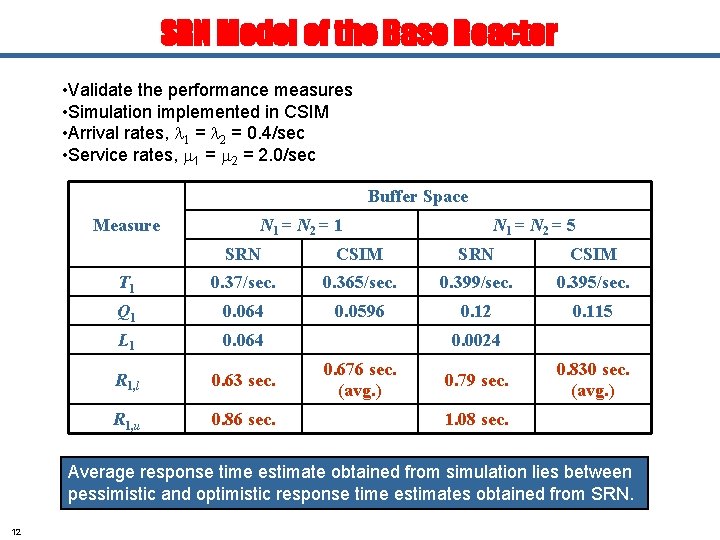
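Three of the reward rates above are expectations of simple functions of the marking, so they can be illustrated with a steady-state distribution given as (marking, probability) pairs. The sketch below assumes such a distribution is already available from a solver; the function names and the toy distribution in the usage note are hypothetical.

```python
def expected_reward(distribution, reward):
    """Expected steady-state reward: sum over markings of probability * reward."""
    return sum(prob * reward(marking) for marking, prob in distribution)


def loss_probability(distribution, i, capacity):
    """Pr(#B(i) == N(i)): probability that the buffer of type i is full."""
    place = f"B{i}"
    return expected_reward(
        distribution, lambda mk: 1.0 if mk.get(place, 0) == capacity else 0.0
    )


def queue_length(distribution, i):
    """Expected #B(i): mean number of queued events of type i."""
    return expected_reward(distribution, lambda mk: mk.get(f"B{i}", 0))


def total_events(distribution, i):
    """Expected #B(i) + #S(i): mean number of type-i events in the system."""
    return expected_reward(
        distribution, lambda mk: mk.get(f"B{i}", 0) + mk.get(f"S{i}", 0)
    )
```

As a usage example, for the toy distribution `[({"B1": 0, "S1": 0}, 0.5), ({"B1": 1, "S1": 0}, 0.3), ({"B1": 1, "S1": 1}, 0.2)]` with `N1 = 1`, the loss probability and the queue length both evaluate to 0.5 and the total number of events to 0.7, up to floating-point rounding. Throughput is left out because it depends on the firing rate of `Sr(i)`, which the marking alone does not determine.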

SRN Model of the Base Reactor

• Validate the performance measures
• Simulation implemented in CSIM
• Arrival rates, λ1 = λ2 = 0.4/sec
• Service rates, μ1 = μ2 = 2.0/sec

| Measure | SRN (N1 = N2 = 1) | CSIM (N1 = N2 = 1) | SRN (N1 = N2 = 5) | CSIM (N1 = N2 = 5) |
|---|---|---|---|---|
| T1 | 0.37/sec | 0.365/sec | 0.399/sec | 0.395/sec |
| Q1 | 0.064 | 0.0596 | 0.12 | 0.115 |
| L1 | 0.064 | | 0.0024 (column uncertain) | |
| R1,l | 0.63 sec | 0.676 sec (avg.) | 0.79 sec | 0.830 sec (avg.) |
| R1,u | 0.86 sec | | 1.08 sec | |

Average response time estimate obtained from simulation lies between pessimistic and optimistic response time estimates obtained from SRN.

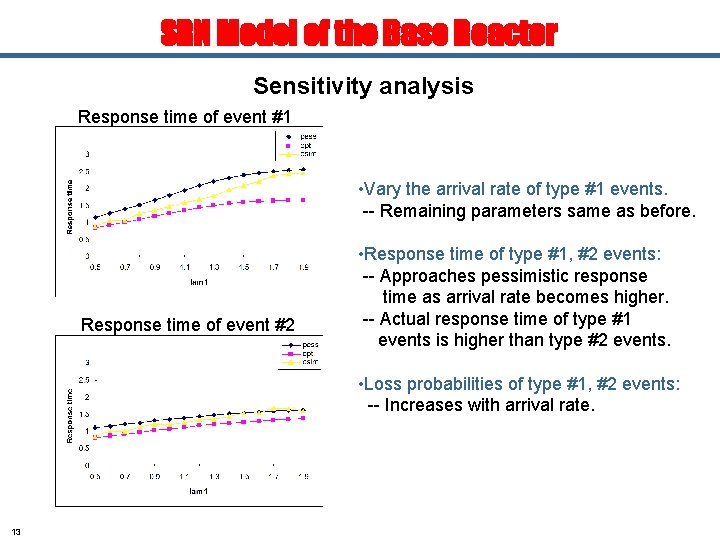

SRN Model of the Base Reactor

Sensitivity analysis

Figure: plots titled "Response time of event #1" and "Response time of event #2".

• Vary the arrival rate of type #1 events.
  -- Remaining parameters same as before.
• Response time of type #1, #2 events:
  -- Approaches pessimistic response time as arrival rate becomes higher.
  -- Actual response time of type #1 events is higher than type #2 events.
• Loss probabilities of type #1, #2 events:
  -- Increases with arrival rate.

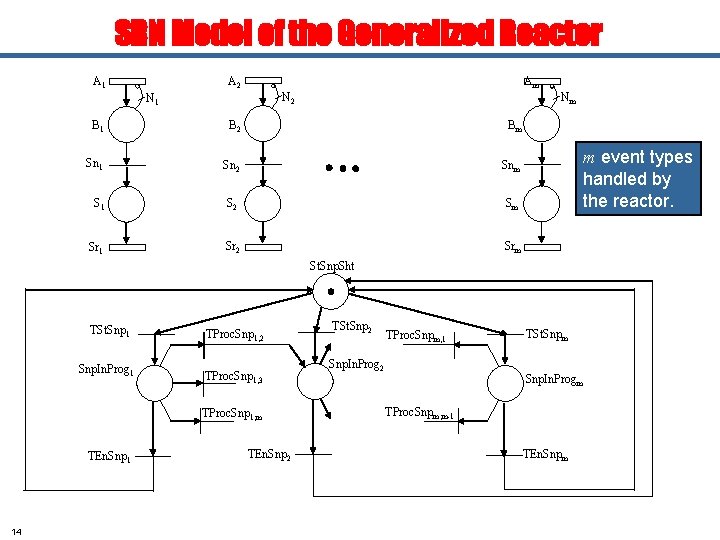

SRN Model of the Generalized Reactor

m event types handled by the reactor.

Figure: generalized SRN with, for each event type i = 1, ..., m, the elements Ai, Ni, Bi, Sni, Si, and Sri, plus the snapshot subnet with StSnpSht, TStSnpi, SnpInProgi, TEnSnpi, and the transitions TProcSnpi,j between pairs of event types (TProcSnp1,2, TProcSnp1,3, ..., TProcSnp1,m, ..., TProcSnpm,1, ..., TProcSnpm,m-1).

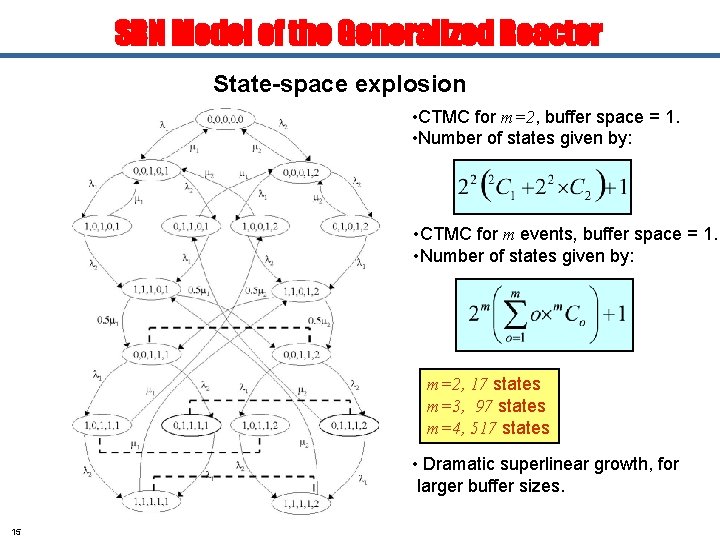

SRN Model of the Generalized Reactor

State-space explosion
• CTMC for m = 2, buffer space = 1. Number of states given by: (formula shown on the slide image)
• CTMC for m events, buffer space = 1. Number of states given by: (formula shown on the slide image)
• m = 2: 17 states; m = 3: 97 states; m = 4: 517 states
• Dramatic superlinear growth for larger buffer sizes.

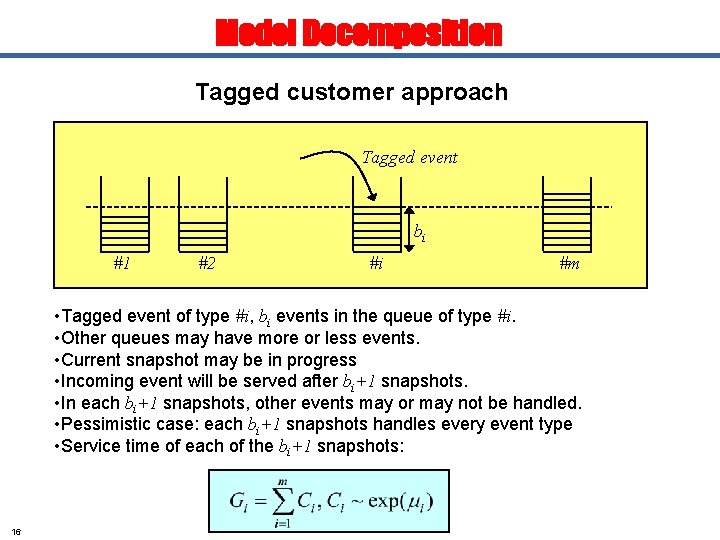

Model Decomposition

Tagged customer approach

Figure: queues for event types #1, #2, ..., #i, ..., #m, with the tagged event arriving behind b_i events in queue #i.

• Tagged event of type #i, b_i events in the queue of type #i.
• Other queues may have more or fewer events.
• Current snapshot may be in progress.
• Incoming event will be served after b_i + 1 snapshots.
• In each of the b_i + 1 snapshots, other events may or may not be handled.
• Pessimistic case: each of the b_i + 1 snapshots handles every event type.
• Service time of each of the b_i + 1 snapshots: (formula shown on the slide image)

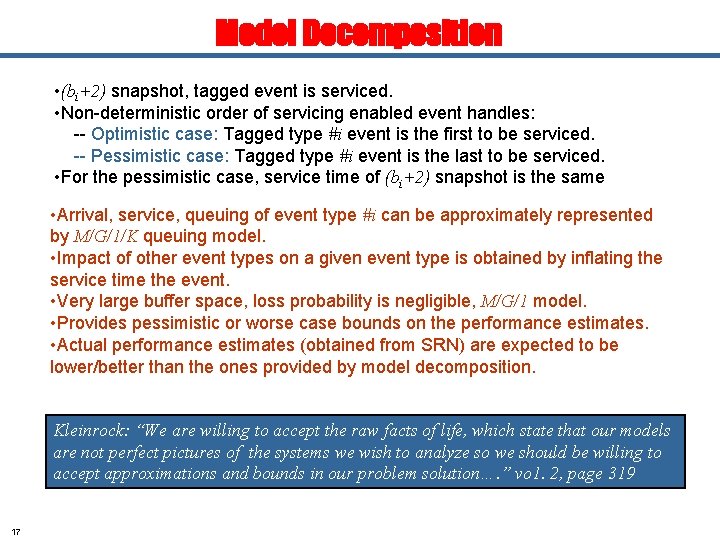

Model Decomposition

• In the (b_i + 2)th snapshot, the tagged event is serviced.
• Non-deterministic order of servicing enabled event handles:
  -- Optimistic case: Tagged type #i event is the first to be serviced.
  -- Pessimistic case: Tagged type #i event is the last to be serviced.
• For the pessimistic case, service time of the (b_i + 2)th snapshot is the same.
• Arrival, service, and queuing of event type #i can be approximately represented by an M/G/1/K queuing model.
• Impact of other event types on a given event type is obtained by inflating the service time of the event.
• With very large buffer space, loss probability is negligible: M/G/1 model.
• Provides pessimistic or worst-case bounds on the performance estimates.
• Actual performance estimates (obtained from SRN) are expected to be lower/better than the ones provided by model decomposition.

Kleinrock: "We are willing to accept the raw facts of life, which state that our models are not perfect pictures of the systems we wish to analyze so we should be willing to accept approximations and bounds in our problem solution…." vol. 2, page 319

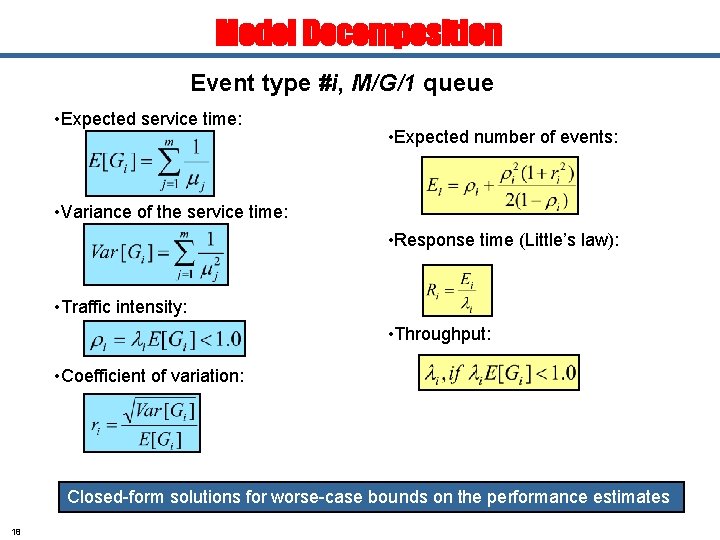

Model Decomposition

Event type #i, M/G/1 queue. The slide gives a formula (shown on the slide image) for each of the following:
• Expected service time
• Expected number of events
• Variance of the service time
• Response time (Little's law)
• Traffic intensity
• Throughput
• Coefficient of variation

Closed-form solutions for worst-case bounds on the performance estimates.

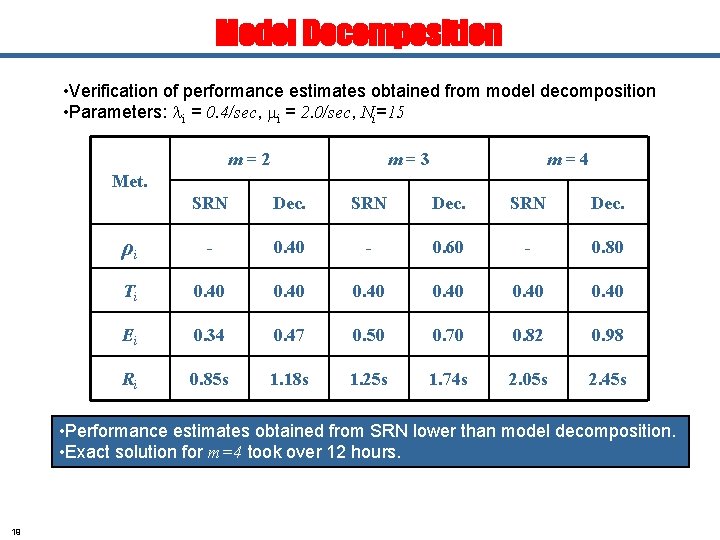

Model Decomposition

• Verification of performance estimates obtained from model decomposition
• Parameters: λi = 0.4/sec, μi = 2.0/sec, Ni = 15

| Metric | SRN (m=2) | Dec. (m=2) | SRN (m=3) | Dec. (m=3) | SRN (m=4) | Dec. (m=4) |
|---|---|---|---|---|---|---|
| ρi | - | 0.40 | - | 0.60 | - | 0.80 |
| Ei | 0.34 | 0.47 | 0.50 | 0.70 | 0.82 | 0.98 |
| Ri | 0.85 s | 1.18 s | 1.25 s | 1.74 s | 2.05 s | 2.45 s |

Ti is listed once on the slide, as 0.40.

• Performance estimates obtained from SRN are lower than those from model decomposition.
• Exact solution for m=4 took over 12 hours.

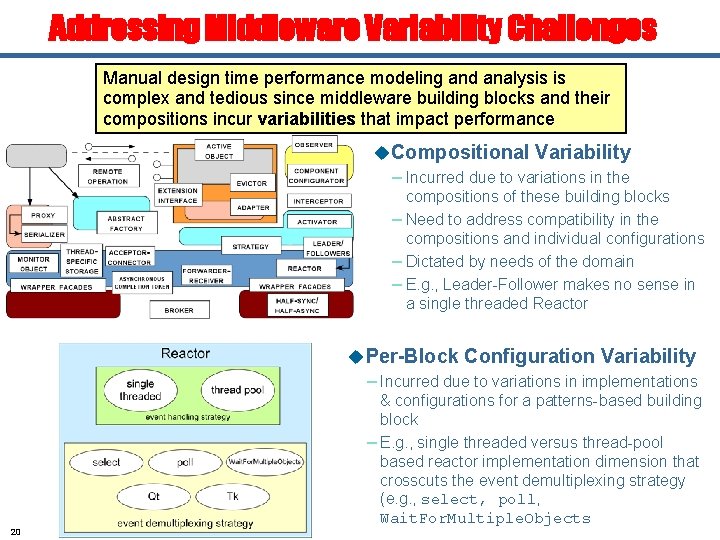
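The verification numbers above can be cross-checked with two lines of arithmetic. The sketch below makes two assumptions that are not stated explicitly in this transcript: that the response time follows Little's law with the throughput Ti = 0.40, and that the pessimistic expected service time is the sum of the m per-type mean service times, so that ρi = λi · m / μi. The helper names are hypothetical; the numeric values are copied from the slide.

```python
ARRIVAL_RATE = 0.4   # lambda_i, events per second (from the slide)
SERVICE_RATE = 2.0   # mu_i, events per second (from the slide)

# (E_i, R_i) pairs reported on the slide, keyed by number of event types m.
REPORTED = {
    2: {"srn": (0.34, 0.85), "dec": (0.47, 1.18)},
    3: {"srn": (0.50, 1.25), "dec": (0.70, 1.74)},
    4: {"srn": (0.82, 2.05), "dec": (0.98, 2.45)},
}
REPORTED_RHO = {2: 0.40, 3: 0.60, 4: 0.80}


def little_response_time(expected_events, throughput):
    """Little's law: R = E / T."""
    return expected_events / throughput


def pessimistic_traffic_intensity(arrival_rate, service_rate, m):
    """Assumed form: every snapshot services all m types, so E[S] = m / mu."""
    return arrival_rate * m / service_rate


def check_table(tolerance=0.02):
    """Return True if every reported R_i and rho_i matches the formulas."""
    for m, methods in REPORTED.items():
        for expected_events, response_time in methods.values():
            predicted = little_response_time(expected_events, ARRIVAL_RATE)
            if abs(predicted - response_time) > tolerance:
                return False
        rho = pessimistic_traffic_intensity(ARRIVAL_RATE, SERVICE_RATE, m)
        if abs(rho - REPORTED_RHO[m]) > 1e-9:
            return False
    return True
```

Every reported Ri equals Ei / 0.4 to within rounding, and the assumed traffic-intensity formula gives 0.40, 0.60, and 0.80 for m = 2, 3, 4, matching the ρi row.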

Addressing Middleware Variability Challenges

Manual design-time performance modeling and analysis is complex and tedious since middleware building blocks and their compositions incur variabilities that impact performance.

• Compositional Variability
  – Incurred due to variations in the compositions of these building blocks
  – Need to address compatibility in the compositions and individual configurations
  – Dictated by needs of the domain
  – E.g., Leader-Follower makes no sense in a single-threaded Reactor
• Per-Block Configuration Variability
  – Incurred due to variations in implementations & configurations for a patterns-based building block
  – E.g., single-threaded versus thread-pool based reactor implementation dimension that crosscuts the event demultiplexing strategy (e.g., select, poll, WaitForMultipleObjects)

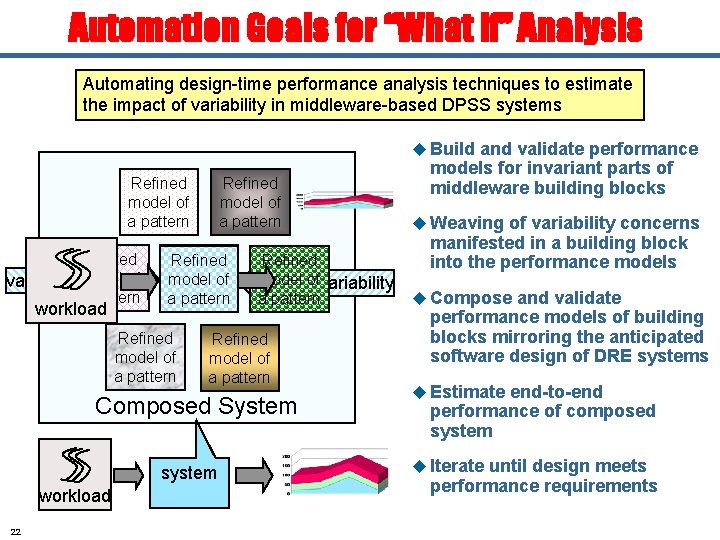

Automation Goals for "What if" Analysis

Automating design-time performance analysis techniques to estimate the impact of variability in middleware-based DPSS systems.

Figure: invariant and refined models of patterns, with variability woven in, composed into a system model driven by a workload.

• Build and validate performance models for invariant parts of middleware building blocks
• Weaving of variability concerns manifested in a building block into the performance models
• Compose and validate performance models of building blocks mirroring the anticipated software design of DRE systems
• Estimate end-to-end performance of composed system
• Iterate until design meets performance requirements

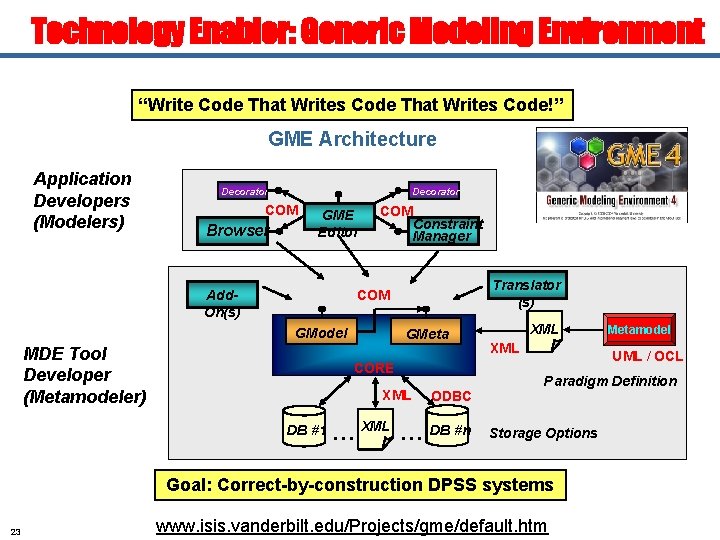

Technology Enabler: Generic Modeling Environment

"Write Code That Writes Code!"

Figure: GME architecture. Application developers (modelers) and MDE tool developers (metamodelers) work through the GME Editor, Browser, Constraint Manager, Translator(s), Decorator, and Add-On(s), which connect via COM to GModel and GMeta on top of CORE. Paradigm definition: metamodel in UML/OCL. Storage options: XML, or databases DB #1 ... DB #n via ODBC.

Goal: Correct-by-construction DPSS systems

www.isis.vanderbilt.edu/Projects/gme/default.htm

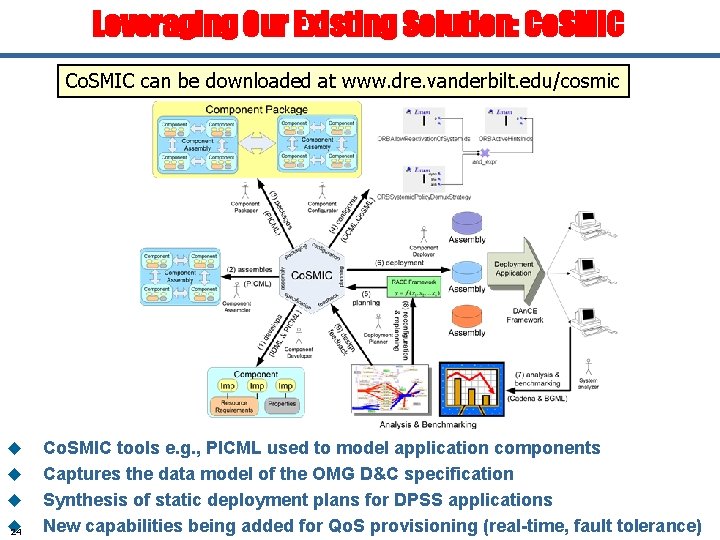

Leveraging Our Existing Solution: CoSMIC

CoSMIC can be downloaded at www.dre.vanderbilt.edu/cosmic

• CoSMIC tools, e.g., PICML, used to model application components
• Captures the data model of the OMG D&C specification
• Synthesis of static deployment plans for DPSS applications
• New capabilities being added for QoS provisioning (real-time, fault tolerance)

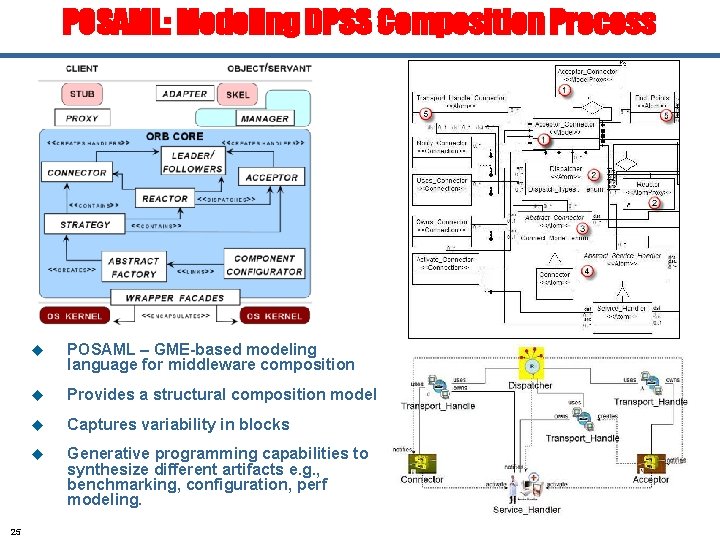

POSAML: Modeling DPSS Composition Process

• POSAML: GME-based modeling language for middleware composition
• Provides a structural composition model
• Captures variability in blocks
• Generative programming capabilities to synthesize different artifacts, e.g., benchmarking, configuration, performance modeling

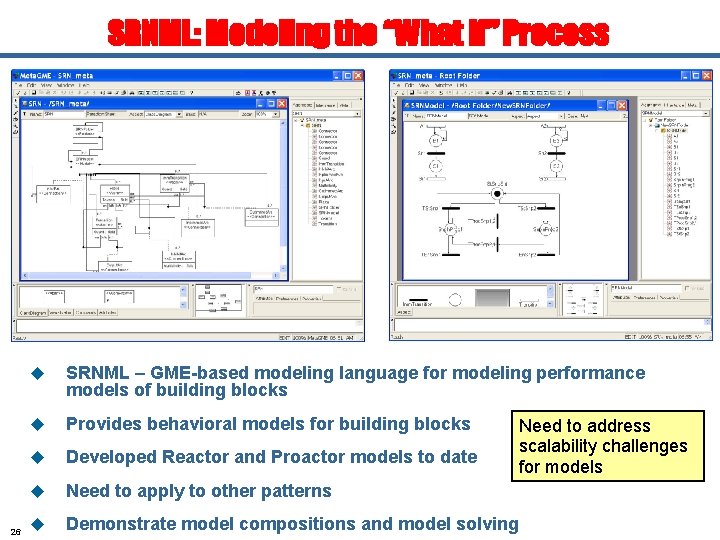

SRNML: Modeling the "What if" Process

• SRNML: GME-based modeling language for performance models of building blocks
• Provides behavioral models for building blocks
• Developed Reactor and Proactor models to date
• Need to apply to other patterns
• Demonstrate model compositions and model solving
• Need to address scalability challenges for models

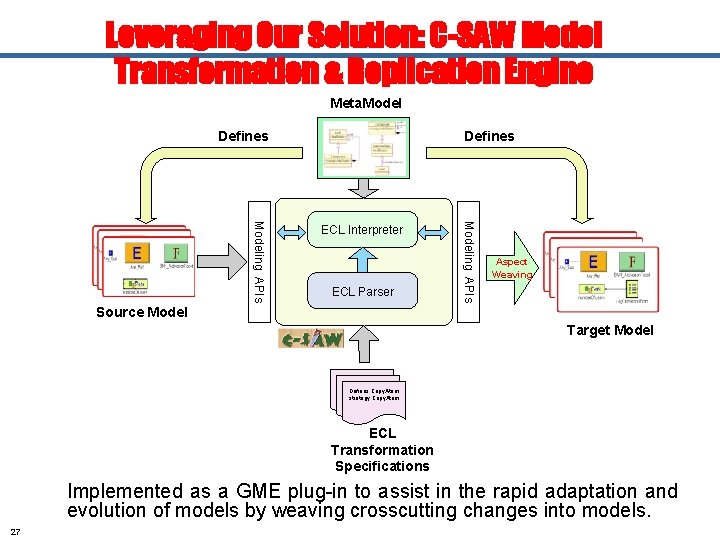

Leveraging Our Solution: C-SAW

Model Transformation & Replication Engine

Figure: the C-SAW aspect weaver takes a source model and ECL transformation specifications (strategies such as CopyAtom), processes them with the ECL Parser and ECL Interpreter through the modeling APIs, and produces a target model; the metamodel defines both source and target models.

Implemented as a GME plug-in to assist in the rapid adaptation and evolution of models by weaving crosscutting changes into models.

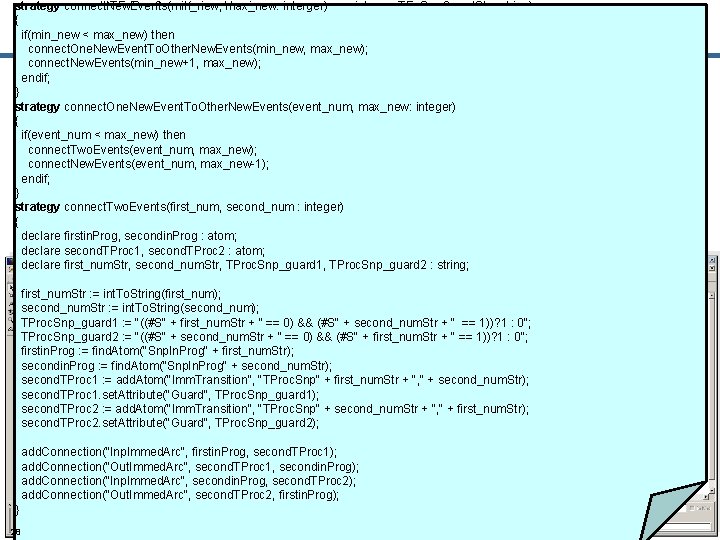

Scaling a Base SRN Model

    strategy computeTEnSnpGuard(min_old, min_new, max_new : integer; TEnSnpGuardStr : string)
    {
      if (min_old < max_new) then
        computeTEnSnpGuard(min_old + 1, min_new, max_new,
                           TEnSnpGuardStr + "(#S" + intToString(min_old) + " == 0)&&");
      else
        addEventswithGuard(min_new, max_new,
                           TEnSnpGuardStr + "(#S" + intToString(min_old) + "== 0))? 1: 0");
      endif;
    }

    ... // several strategies not shown here (e.g., addEventswithGuard)

    strategy addEvents(min_new, max_new : integer; TEnSnpGuardStr : string)
    {
      if (min_new <= max_new) then
        addNewEvent(min_new, TEnSnpGuardStr);
        addEvents(min_new+1, max_new, TEnSnpGuardStr);
      endif;
    }

    strategy addNewEvent(event_num : integer; TEnSnpGuardStr : string)
    {
      declare start, stTran, inProg, endTran : atom;
      declare TStSnp_guard : string;

      start := findAtom("StSnpSht");
      stTran := addAtom("ImmTransition", "TStSnp" + intToString(event_num));
      TStSnp_guard := "(#S" + intToString(event_num) + " == 1)? 1 : 0";
      stTran.setAttribute("Guard", TStSnp_guard);
      inProg := addAtom("Place", "SnpInProg" + intToString(event_num));
      endTran := addAtom("ImmTransition", "TEnSnp" + intToString(event_num));
      endTran.setAttribute("Guard", TEnSnpGuardStr);
      addConnection("InpImmedArc", start, stTran);
      addConnection("OutImmedArc", stTran, inProg);
      addConnection("InpImmedArc", inProg, endTran);
      addConnection("OutImmedArc", endTran, start);
    }

    strategy connectNewEvents(min_new, max_new : integer)
    {
      if (min_new < max_new) then
        connectOneNewEventToOtherNewEvents(min_new, max_new);
        connectNewEvents(min_new+1, max_new);
      endif;
    }

    strategy connectOneNewEventToOtherNewEvents(event_num, max_new : integer)
    {
      if (event_num < max_new) then
        connectTwoEvents(event_num, max_new);
        connectNewEvents(event_num, max_new-1);
      endif;
    }

    strategy connectTwoEvents(first_num, second_num : integer)
    {
      declare firstinProg, secondinProg : atom;
      declare secondTProc1, secondTProc2 : atom;
      declare first_numStr, second_numStr, TProcSnp_guard1, TProcSnp_guard2 : string;

      first_numStr := intToString(first_num);
      second_numStr := intToString(second_num);
      TProcSnp_guard1 := "((#S" + first_numStr + " == 0) && (#S" + second_numStr + " == 1))? 1 : 0";
      TProcSnp_guard2 := "((#S" + second_numStr + " == 0) && (#S" + first_numStr + " == 1))? 1 : 0";
      firstinProg := findAtom("SnpInProg" + first_numStr);
      secondinProg := findAtom("SnpInProg" + second_numStr);
      secondTProc1 := addAtom("ImmTransition", "TProcSnp" + first_numStr + ", " + second_numStr);
      secondTProc1.setAttribute("Guard", TProcSnp_guard1);
      secondTProc2 := addAtom("ImmTransition", "TProcSnp" + second_numStr + ", " + first_numStr);
      secondTProc2.setAttribute("Guard", TProcSnp_guard2);
      addConnection("InpImmedArc", firstinProg, secondTProc1);
      addConnection("OutImmedArc", secondTProc1, secondinProg);
      addConnection("InpImmedArc", secondinProg, secondTProc2);
      addConnection("OutImmedArc", secondTProc2, firstinProg);
    }
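To make the guard strings built by the ECL strategies easier to inspect, the string construction can be mirrored in Python. This is only a sketch: the function names are hypothetical, and the opening parenthesis used as the initial value of the accumulated guard string is an assumption, because the initial call to `computeTEnSnpGuard` is not shown on the slide.

```python
def ten_snp_guard(max_event, prefix="("):
    """Build the TEnSnp guard for event types 1..max_event.

    Mirrors the recursion in computeTEnSnpGuard: one "(#Sk == 0)&&" term for
    every k below max_event, then the closing term for max_event itself.
    The prefix "(" is an assumed initial value.
    """
    terms = "".join(f"(#S{k} == 0)&&" for k in range(1, max_event))
    return prefix + terms + f"(#S{max_event}== 0))? 1: 0"


def tproc_snp_guards(first_num, second_num):
    """Build the two TProcSnp guards that connectTwoEvents assigns."""
    guard1 = f"((#S{first_num} == 0) && (#S{second_num} == 1))? 1 : 0"
    guard2 = f"((#S{second_num} == 0) && (#S{first_num} == 1))? 1 : 0"
    return guard1, guard2


def tst_snp_guard(event_num):
    """Build the TStSnp guard that addNewEvent assigns."""
    return f"(#S{event_num} == 1)? 1 : 0"
```

Under that assumption, `ten_snp_guard(3)` yields `((#S1 == 0)&&(#S2 == 0)&&(#S3== 0))? 1: 0`, a guard that enables the end-of-snapshot transition only when no service place holds a token. The number of terms grows with the number of event types, which is why the slides generate these guards rather than writing them by hand.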

Project Status and Work in Progress

• Ongoing integration of SRNML (behavioral) and POSAML (structural)
• Incorporate SRNML & POSAML in CoSMIC and release the software as open source
• Integrate with C-SAW scalability engine
• Performance analysis of different building blocks (patterns):
  – Non-deterministic reactor (all steps).
  – Prioritized reactor, Active Object, Proactor (Steps #1, #2: Basic model, Model validation)
• Compose DPSS systems and performance analysis (analytical and simulation) of composed systems
• Validate via automated empirical benchmarking
• Demonstrate on real systems

Selected Publications

1. U. Praphamontripong, S. Gokhale, A. Gokhale, and J. Gray, "Performance Analysis of an Asynchronous Web Server," Proc. of 30th COMPSAC, to appear.
2. J. Gray, Y. Lin, and J. Zhang, "Automating Change Evolution in Model-Driven Engineering," IEEE Computer (Special Issue on Model-Driven Engineering), vol. 39, no. 2, February 2006, pp. 51-58.
3. Invited (Under Review): J. Gray, Y. Lin, J. Zhang, S. Nordstrom, A. Gokhale, S. Neema, and S. Gokhale, "Replicators: Transformations to Address Model Scalability," voted one of the Best Papers of MoDELS 2005 and invited for an extended submission to the Journal of Software and Systems Modeling.
4. S. Gokhale, A. Gokhale, and J. Gray, "Response Time Analysis of an Event Demultiplexing Pattern in Middleware for Network Services," IEEE GlobeCom, St. Louis, MO, December 2005.
5. Best Paper Award: J. Gray, Y. Lin, J. Zhang, S. Nordstrom, A. Gokhale, S. Neema, and S. Gokhale, "Replicators: Transformations to Address Model Scalability," Model Driven Engineering Languages and Systems (MoDELS) (formerly the UML series of conferences), Springer-Verlag LNCS 3713, Montego Bay, Jamaica, October 2005, pp. 295-308. Voted one of the Best Papers of MoDELS 2005 and invited for an extended submission to the Journal of Software and Systems Modeling.
6. P. Vandal, U. Praphamontripong, S. Gokhale, A. Gokhale, and J. Gray, "Performance Analysis of the Reactor Pattern in Network Services," 5th International Workshop on Performance Modeling, Evaluation, and Optimization of Parallel and Distributed Systems (PMEO-PDS), held at IPDPS, Rhodes Island, Greece, April 2006.
7. A. Kogekar, D. Kaul, A. Gokhale, P. Vandal, U. Praphamontripong, S. Gokhale, J. Zhang, Y. Lin, J. Gray, "Model-driven Generative Techniques for Scalable Performability Analysis of Distributed Systems," Next Generation Software Workshop, held at IPDPS, Rhodes Island, Greece, April 2006.
8. S. Gokhale, A. Gokhale, and J. Gray, "A Model-Driven Performance Analysis Framework for Distributed Performance-Sensitive Software Systems," Next Generation Software Workshop, held at IPDPS, Denver, CO, April 2005.

Concluding Remarks

Process steps (figure): Model building (basic characteristics), Model validation (simulation/measurements), Generalization of the model, Model decomposition, Model replication, Automatic generation.

• DPSS development incurs significant challenges
• Model-driven design-time performance analysis is a promising approach
• Performance models of basic building blocks built
• Scalability demonstrated
• Generative programming is key for automation

Many hard R&D problems with model-driven engineering remain unresolved!

• GME is available from www.isis.vanderbilt.edu/Projects/gme/default.htm
• CoSMIC is available from www.dre.vanderbilt.edu/cosmic
• C-SAW is available from www.gray-area.org/Research/C-SAW
