Model Evaluation Model Selection Modeling process Problem identification

Model Evaluation & Model Selection

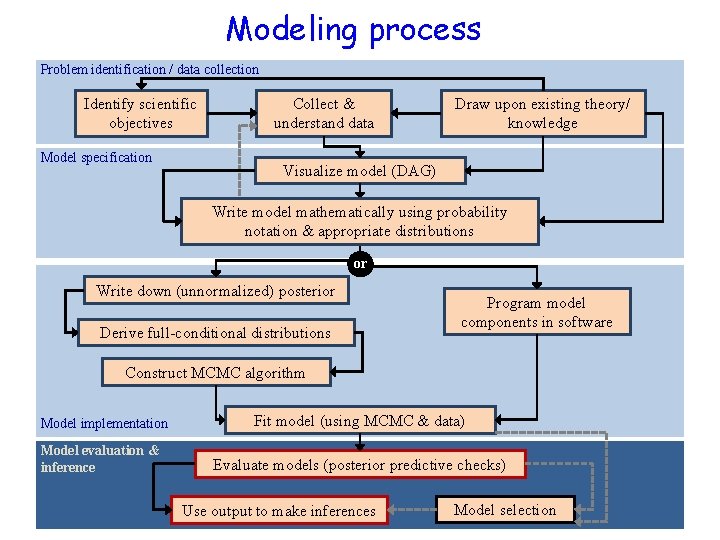

Modeling process Problem identification / data collection Identify scientific objectives Model specification Collect & understand data Draw upon existing theory/ knowledge Visualize model (DAG) Write model mathematically using probability notation & appropriate distributions or Write down (unnormalized) posterior Derive full-conditional distributions Program model components in software Construct MCMC algorithm Model implementation Model evaluation & inference Fit model (using MCMC & data) Evaluate models (posterior predictive checks) Use output to make inferences Model selection

![Motivating issues • How well does my model(s) fit my data? [evaluation] – Just Motivating issues • How well does my model(s) fit my data? [evaluation] – Just](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-3.jpg)

Motivating issues • How well does my model(s) fit my data? [evaluation] – Just because the MCMC procedure “went smoothly, ” doesn’t mean you have a “good model” – Just because you got posterior stats for your parameters of interest doesn’t mean you have a “good model” – Check ability of model to “replicate” observed data – Potentially check ability of model to “predict” observed data (via cross -validation) • Alternative model formulations = alternative hypotheses about system. Which model? [selection] – – Which model agrees the best with my data? Which model is simpler to interpret? Which model satisfies both criteria? Which model should I chose? Combine alternative models? Model averaging 3

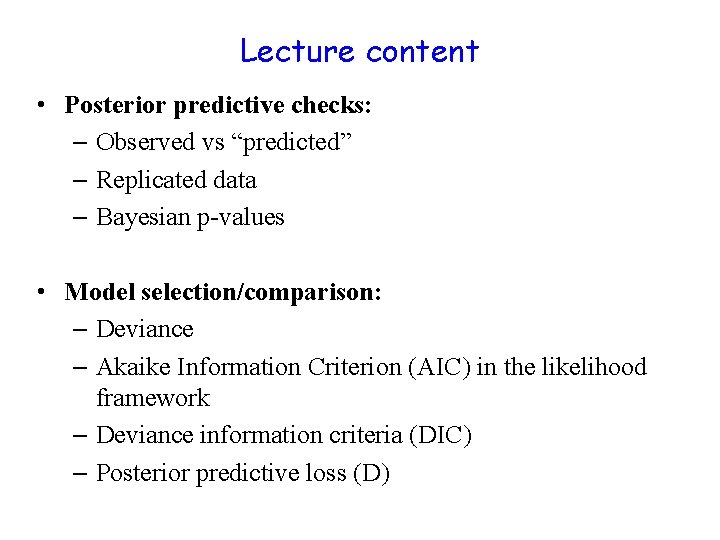

Lecture content • Posterior predictive checks: – Observed vs “predicted” – Replicated data – Bayesian p-values • Model selection/comparison: – Deviance – Akaike Information Criterion (AIC) in the likelihood framework – Deviance information criteria (DIC) – Posterior predictive loss (D)

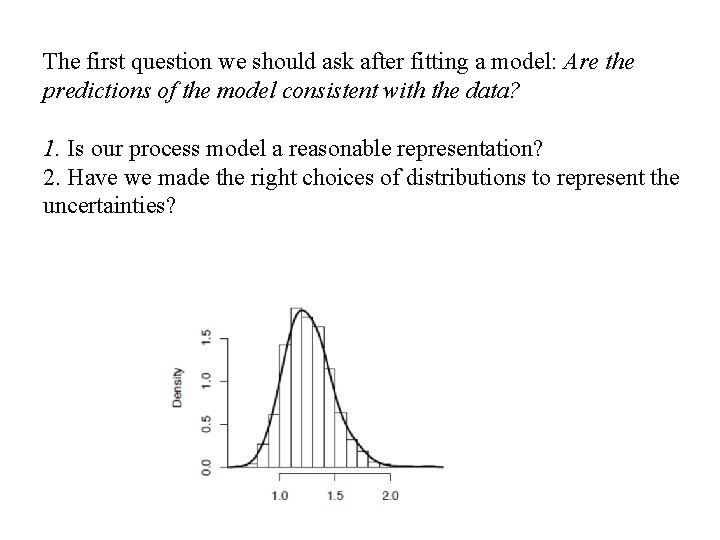

The first question we should ask after fitting a model: Are the predictions of the model consistent with the data? 1. Is our process model a reasonable representation? 2. Have we made the right choices of distributions to represent the uncertainties?

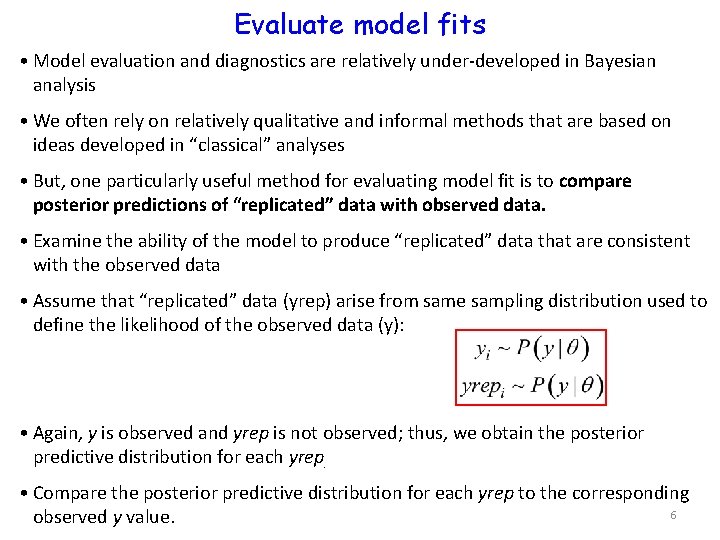

Evaluate model fits • Model evaluation and diagnostics are relatively under-developed in Bayesian analysis • We often rely on relatively qualitative and informal methods that are based on ideas developed in “classical” analyses • But, one particularly useful method for evaluating model fit is to compare posterior predictions of “replicated” data with observed data. • Examine the ability of the model to produce “replicated” data that are consistent with the observed data • Assume that “replicated” data (yrep) arise from same sampling distribution used to define the likelihood of the observed data (y): • Again, y is observed and yrep is not observed; thus, we obtain the posterior predictive distribution for each yrep. • Compare the posterior predictive distribution for each yrep to the corresponding 6 observed y value.

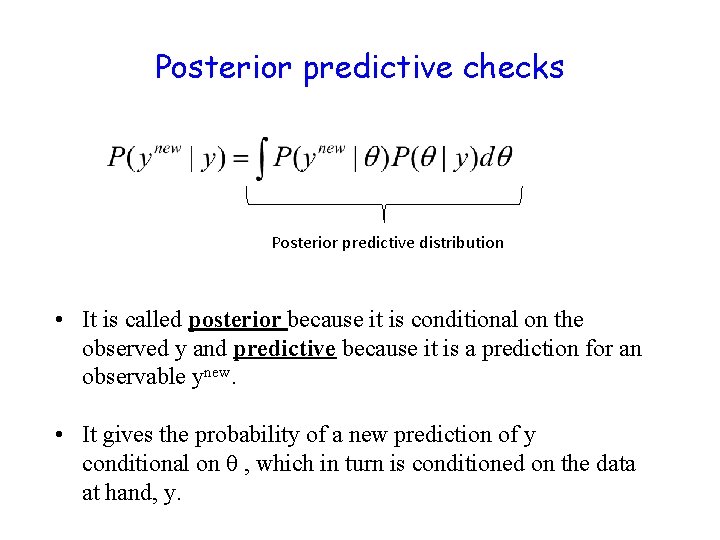

Posterior predictive checks Posterior predictive distribution • It is called posterior because it is conditional on the observed y and predictive because it is a prediction for an observable ynew. • It gives the probability of a new prediction of y conditional on θ , which in turn is conditioned on the data at hand, y.

The mechanics • We have a scientific model g(θ, x) that predicts a response y. We estimate the posterior distribution, P(θ|y). For any given value of x, we can simulate the posterior predictive distribution ynew by making a draw of θ= θ’ from P(θ|y) and estimating ynew ~P(g(θ’|x), σ). • In MCMC, this simply means making draws from the data model because each draw is conditional on the current value of the parameters. These draws define the posterior predictive distribution in exactly the same way that draws allow us to define the posterior probability of the parameters.

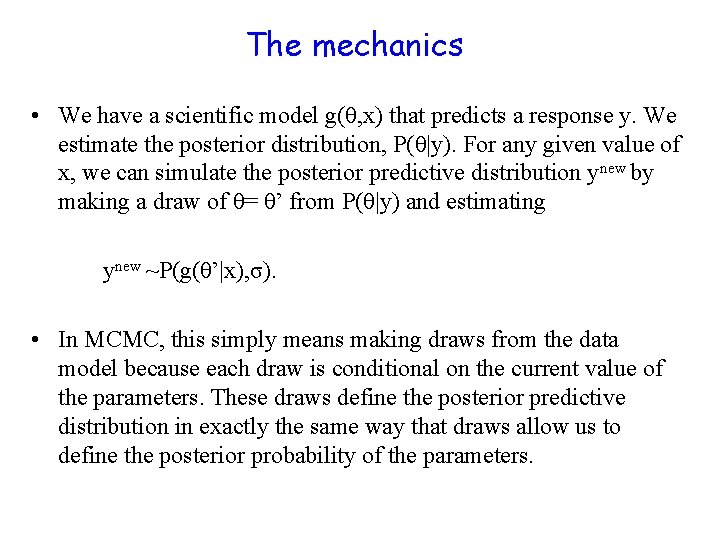

DAG: Back to hemlock trees yijis the observed growth. μi is the true value of growth. It is “latent”, i. e. not observable. Data model Process model Parameter model

![model{ for(i in 1: length(y)){ mu[i] <- b 0 + b 1*diam[i] y[i] ~ model{ for(i in 1: length(y)){ mu[i] <- b 0 + b 1*diam[i] y[i] ~](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-10.jpg)

model{ for(i in 1: length(y)){ mu[i] <- b 0 + b 1*diam[i] y[i] ~ dnorm(mu[i], tau) #posterior predictive distribution of y. new[i], unobserved trees y. sim[i] ~ dnorm(mu[i], tau) } # Priors b 0 ~ dnorm(0, . 0001) b 1 ~ dnorm(0, . 0001) tau ~ dgamma(. 0001, . 0001) sigma<-1/sqrt(tau) }

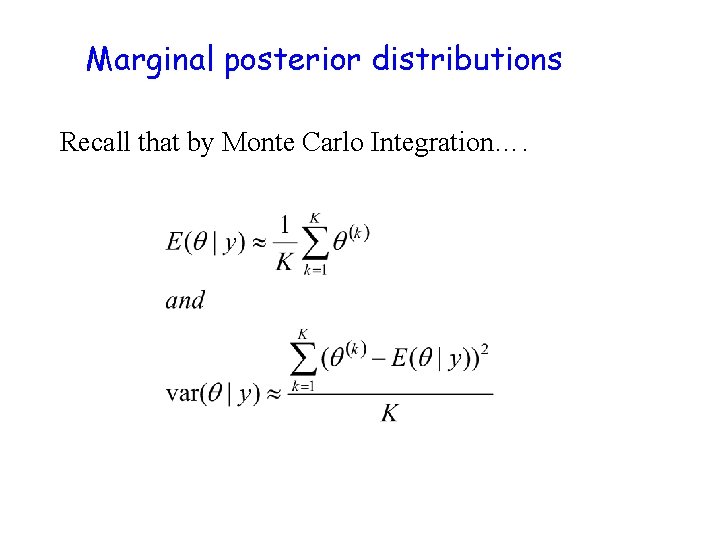

Marginal posterior distributions Recall that by Monte Carlo Integration….

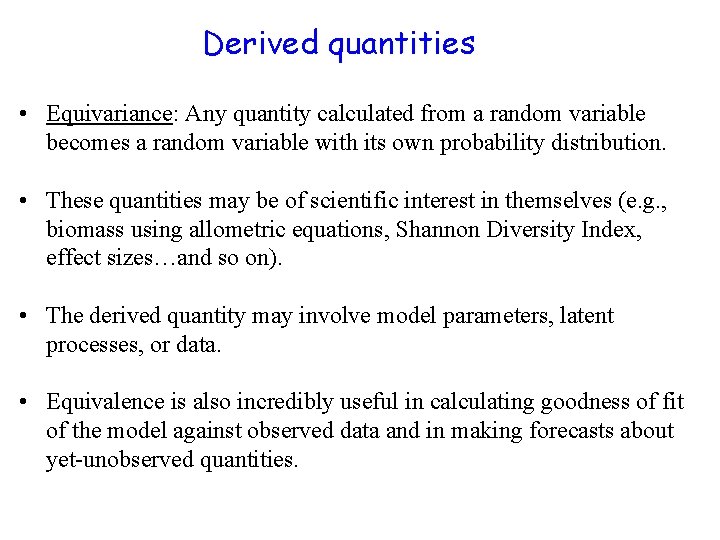

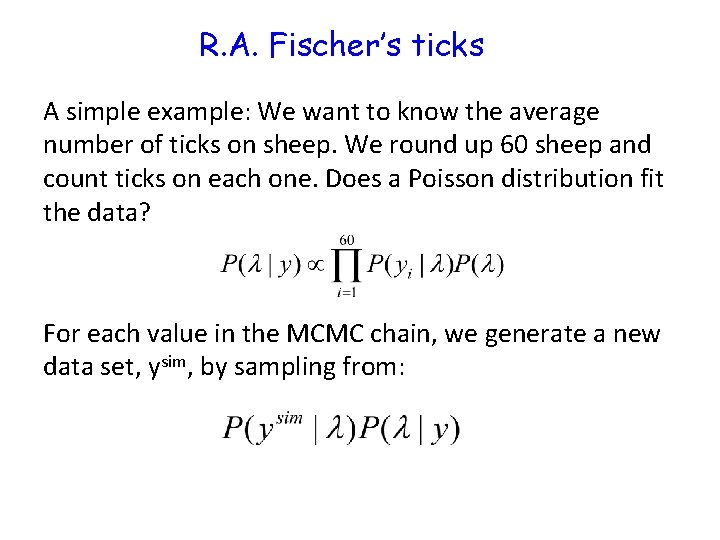

Derived quantities • Equivariance: Any quantity calculated from a random variable becomes a random variable with its own probability distribution. • These quantities may be of scientific interest in themselves (e. g. , biomass using allometric equations, Shannon Diversity Index, effect sizes…and so on). • The derived quantity may involve model parameters, latent processes, or data. • Equivalence is also incredibly useful in calculating goodness of fit of the model against observed data and in making forecasts about yet-unobserved quantities.

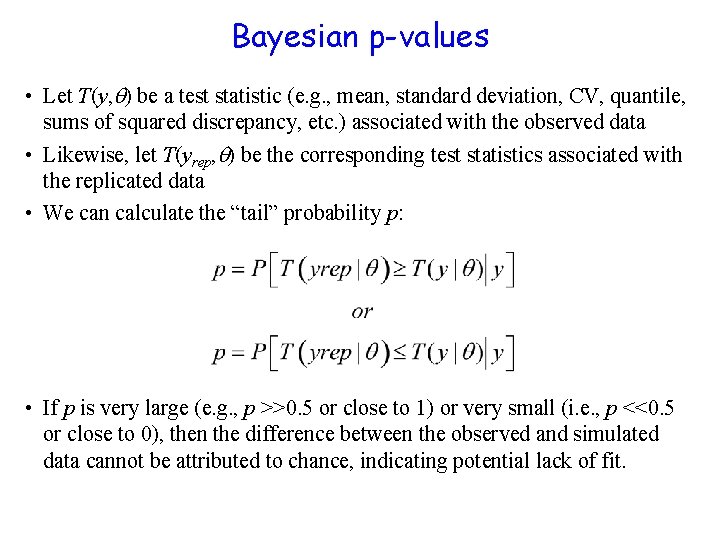

Bayesian p-values • Let T(y, ) be a test statistic (e. g. , mean, standard deviation, CV, quantile, sums of squared discrepancy, etc. ) associated with the observed data • Likewise, let T(yrep, ) be the corresponding test statistics associated with the replicated data • We can calculate the “tail” probability p: • If p is very large (e. g. , p >>0. 5 or close to 1) or very small (i. e. , p <<0. 5 or close to 0), then the difference between the observed and simulated data cannot be attributed to chance, indicating potential lack of fit.

R. A. Fischer’s ticks A simple example: We want to know the average number of ticks on sheep. We round up 60 sheep and count ticks on each one. Does a Poisson distribution fit the data? For each value in the MCMC chain, we generate a new data set, ysim, by sampling from:

Data Parameter A single mean governs the pattern

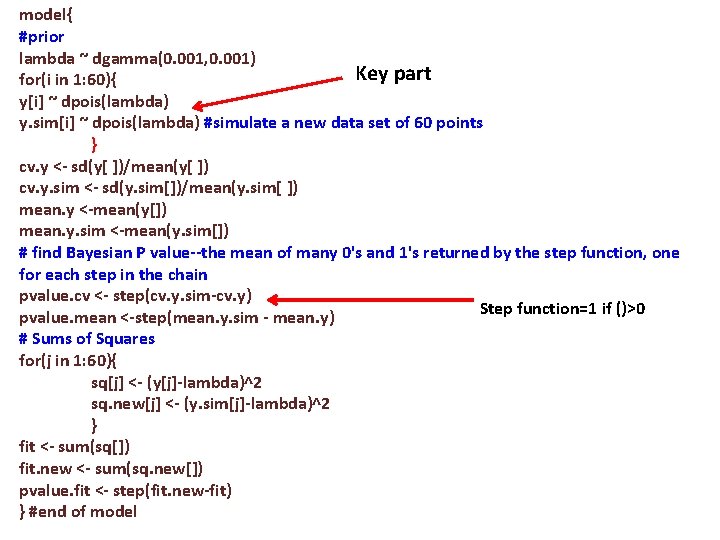

model{ #prior lambda ~ dgamma(0. 001, 0. 001) Key part for(i in 1: 60){ y[i] ~ dpois(lambda) y. sim[i] ~ dpois(lambda) #simulate a new data set of 60 points } cv. y <- sd(y[ ])/mean(y[ ]) cv. y. sim <- sd(y. sim[])/mean(y. sim[ ]) mean. y <-mean(y[]) mean. y. sim <-mean(y. sim[]) # find Bayesian P value--the mean of many 0's and 1's returned by the step function, one for each step in the chain pvalue. cv <- step(cv. y. sim-cv. y) Step function=1 if ()>0 pvalue. mean <-step(mean. y. sim - mean. y) # Sums of Squares for(j in 1: 60){ sq[j] <- (y[j]-lambda)^2 sq. new[j] <- (y. sim[j]-lambda)^2 } fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue. fit <- step(fit. new-fit) } #end of model

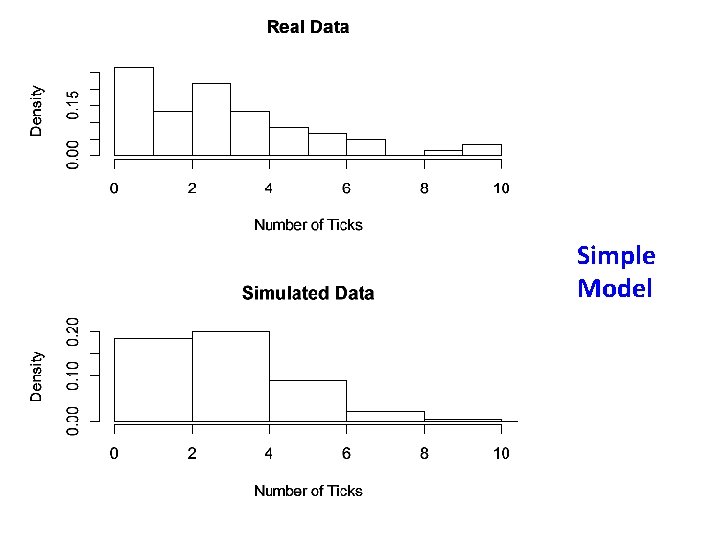

Simple Model

![Posterior predictive check Simple Model fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue. Posterior predictive check Simple Model fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue.](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-18.jpg)

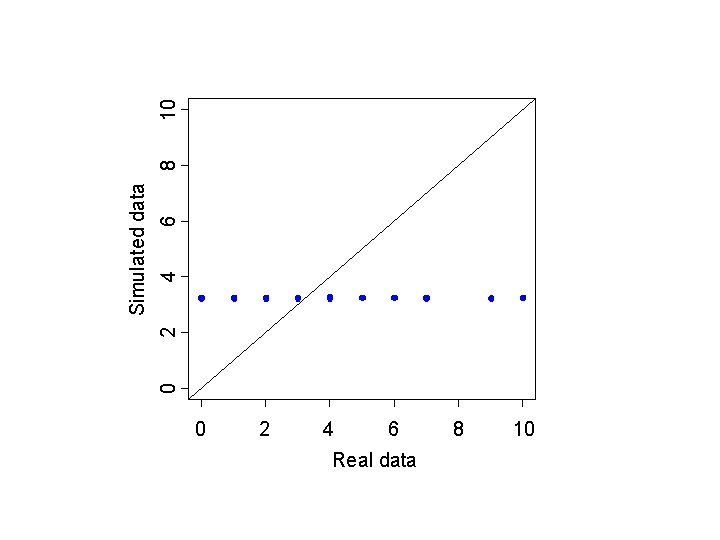

Posterior predictive check Simple Model fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue. fit <- step(fit. new-fit) } #end of model p-value for CV=0. 0015 P-value for mean=0. 51 Remember, this is a twotailed probability, so values close to 0 and 1 indicate lack of fit.

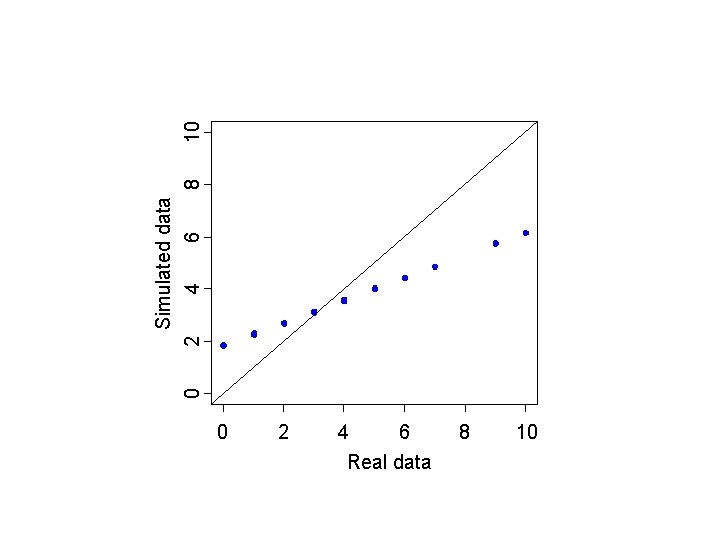

0 2 4 6 Real data 8 10 0 2 4 6 Simulated data 8 10

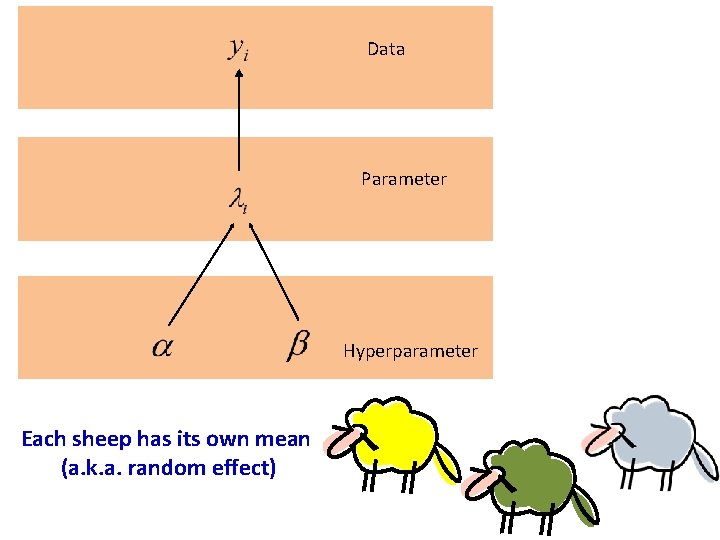

Data Parameter Hyperparameter Each sheep has its own mean (a. k. a. random effect)

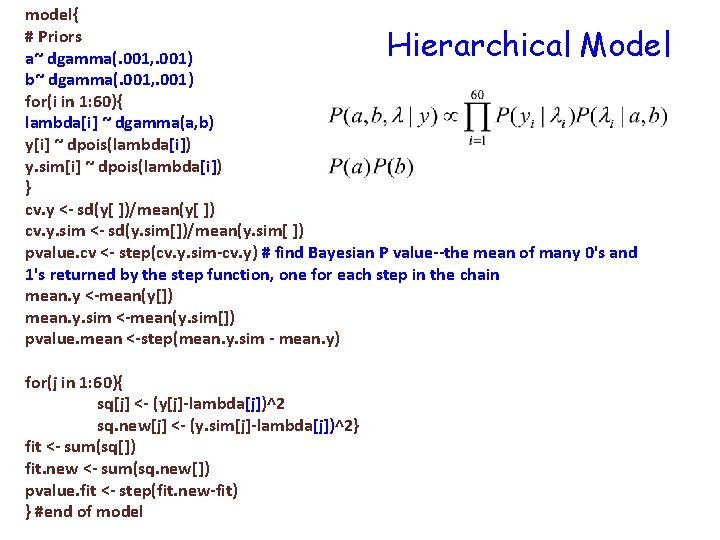

model{ # Priors a~ dgamma(. 001, . 001) b~ dgamma(. 001, . 001) for(i in 1: 60){ lambda[i] ~ dgamma(a, b) y[i] ~ dpois(lambda[i]) y. sim[i] ~ dpois(lambda[i]) } cv. y <- sd(y[ ])/mean(y[ ]) cv. y. sim <- sd(y. sim[])/mean(y. sim[ ]) pvalue. cv <- step(cv. y. sim-cv. y) # find Bayesian P value--the mean of many 0's and 1's returned by the step function, one for each step in the chain mean. y <-mean(y[]) mean. y. sim <-mean(y. sim[]) pvalue. mean <-step(mean. y. sim - mean. y) Hierarchical Model for(j in 1: 60){ sq[j] <- (y[j]-lambda[j])^2 sq. new[j] <- (y. sim[j]-lambda[j])^2} fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue. fit <- step(fit. new-fit) } #end of model

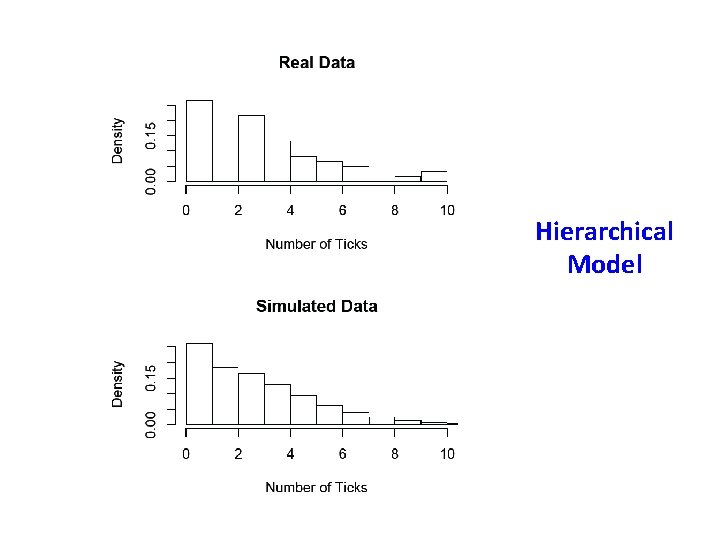

Hierarchical Model

![Posterior predictive check Hierarchical Model fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue. Posterior predictive check Hierarchical Model fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue.](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-23.jpg)

Posterior predictive check Hierarchical Model fit <- sum(sq[]) fit. new <- sum(sq. new[]) pvalue. fit <- step(fit. new-fit) } #end of model p-value for CV=0. 46 p-value for mean=0. 51 Remember, this is a twotailed probability, so values close to 0 and 1 indicate lack of fit.

0 2 4 6 Real data 8 10 0 Simulated data 2 4 6 8 10

Posterior predictive checks • Gelman, A. , and J. Hill. 2009. Data analysis using regression and multilevel / hierarchical models. Cambridge University Press, Cambridge, UK. • Link, W. A. , and R. J. Barker. 2010. Bayesian Inference with Ecological Applications. Academic Press. • Kery, M. 2010. Introduction to Win. BUGS for Ecologists: A Bayesian approach to regression, ANOVA, mixed models and related analyses. Academic Press.

![Motivating issues • How well does my model(s) fit my data? [evaluation] – Just Motivating issues • How well does my model(s) fit my data? [evaluation] – Just](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-26.jpg)

Motivating issues • How well does my model(s) fit my data? [evaluation] – Just because the MCMC procedure “went smoothly, ” doesn’t mean you have a “good model” – Just because you got posterior stats for your parameters of interest doesn’t mean you have a “good model” – Check ability of model to “replicate” observed data – Potentially check ability of model to “predict” observed data (via cross -validation) • Alternative model formulations = alternative hypotheses about system. Which model? [selection] – – Which model agrees the best with my data? Which model is simpler to interpret? Which model satisfies both criteria? Which model should I chose? Combine alternative models? Model averaging 26

Lecture content • Posterior predictive checks: – Observed vs “predicted” – Replicated data – Bayesian p-values • Model selection/comparison: – Deviance – Akaike Information Criterion (AIC) in the likelihood framework – Deviance information criteria (DIC) – Posterior predictive loss (D) 27

“Model selection and model averaging are deep waters, mathematically, and no consensus has emerged in the substantial literature on a single approach. Indeed, our only criticism of the wide use of AIC weights in wildlife and ecological statistics is with their uncritical acceptance and the view that this challenging problem has been simply resolved” Link, W. A. , and R. J. Barker. 2006. Model weights and the foundations of multi-model inference. Ecology 87: 2626

The problem of model selection • Up until now, we have been concerned with the uncertainties associated with a given model. • What about the uncertainty that arises from our choice of models? • How do we decide which model is best? • How do we make inferences based on multiple models?

Parsimony==Ockham’s razor William of Ockham (1285 -1349) “Pluralitas non est ponenda sine neccesitate” “entities should not be multiplied unnecessarily” “Parsimony: . . . 2 : economy in the use of means to an end; especially : economy of explanation in conformity with Occam's razor” (Merriam-Webster Online Dictionary)

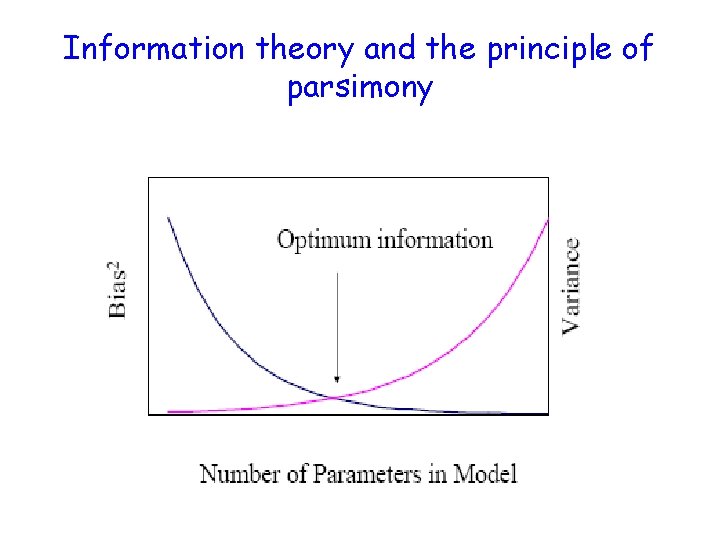

Information theory and the principle of parsimony

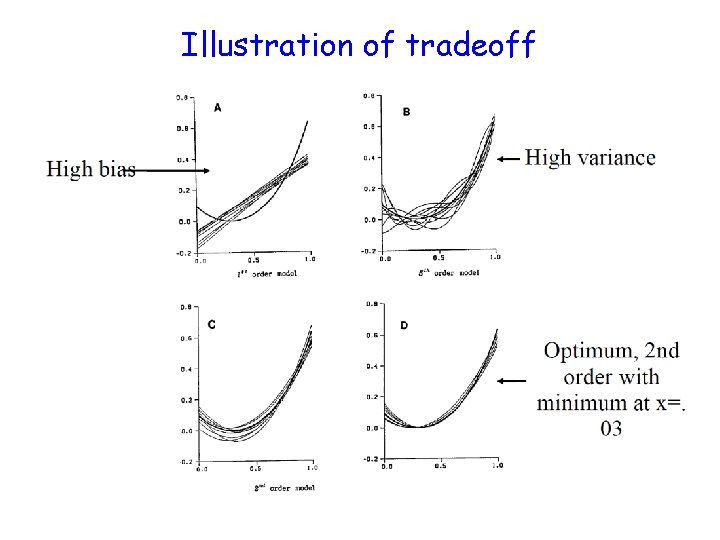

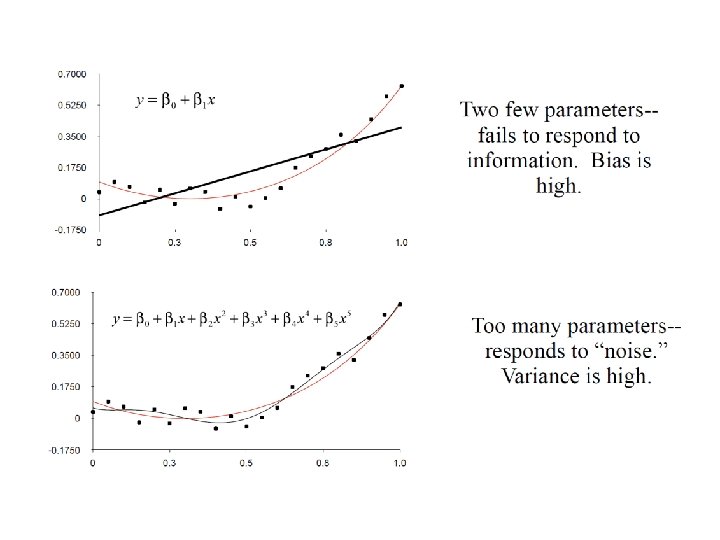

True model: Generated ten datasets sampling from normal distribution with mean=0 and var=0. 01. Fit five models to these ten datasets.

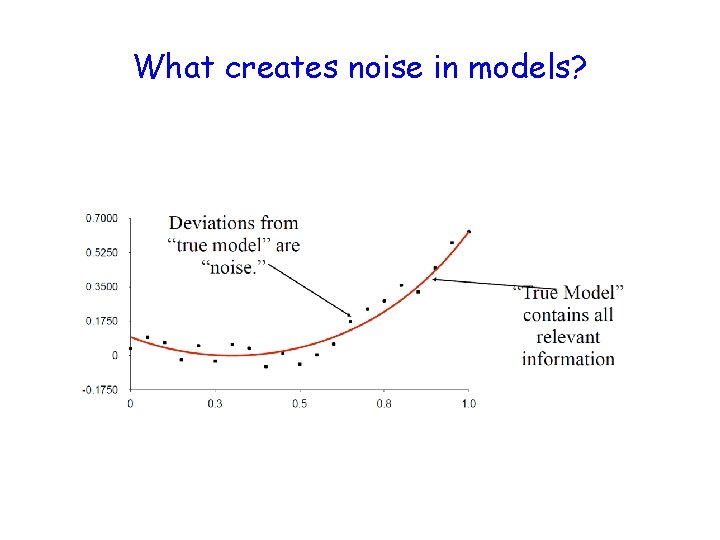

What creates noise in models?

Illustration of tradeoff

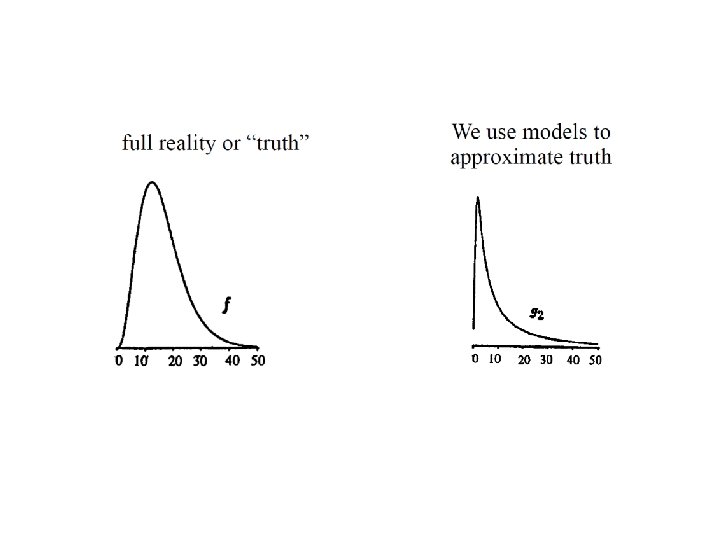

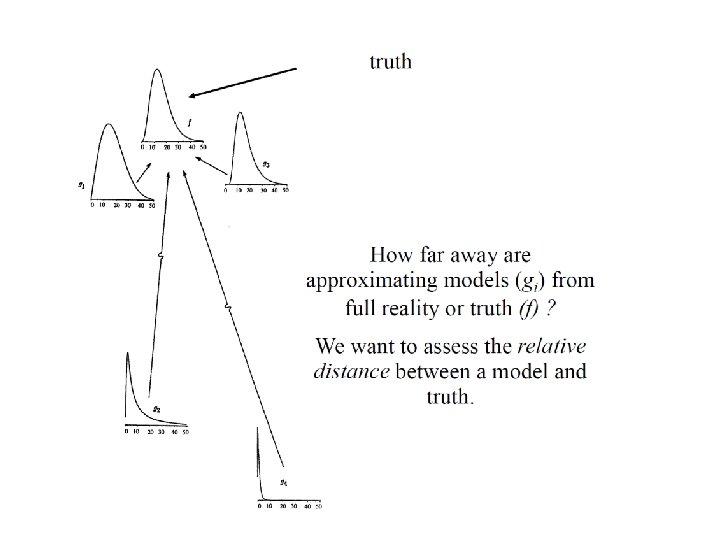

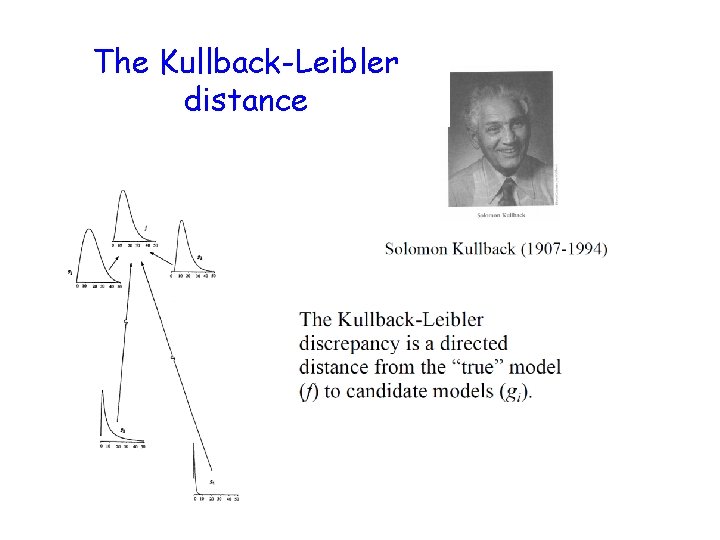

The Kullback-Leibler distance

Interpretation of Kullblack-Leibler Information (aka. distance between 2 models) • Given truth represented by f and a model approximating truth g, the K-distance measures the information lost by using model g to approximate f.

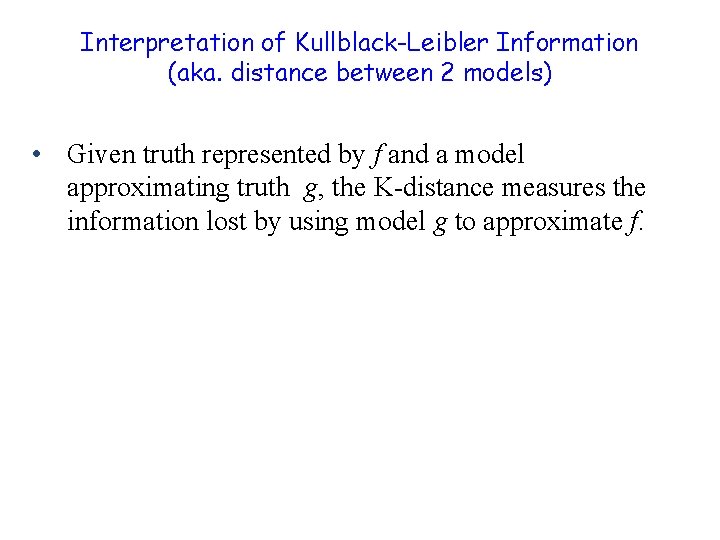

Interpretation of Kullblack-Leibler Information (aka. distance between 2 models) 650 Count 520 f(x) Truth 390 260 650 130 520 0 0 5 10 GAMMA 15 20 g 2(x) 390 260 130 650 0 0 520 390 10 WEIBULL 15 20 Approximations to truth g 1(x) 260 5 130 0 0 5 10 15 LOGNORMAL 20 Measures the (asymmetric) distance between two models. Minimizing the information lost when using g(x) to approximate f(x) is the same as maximizing the likelihood.

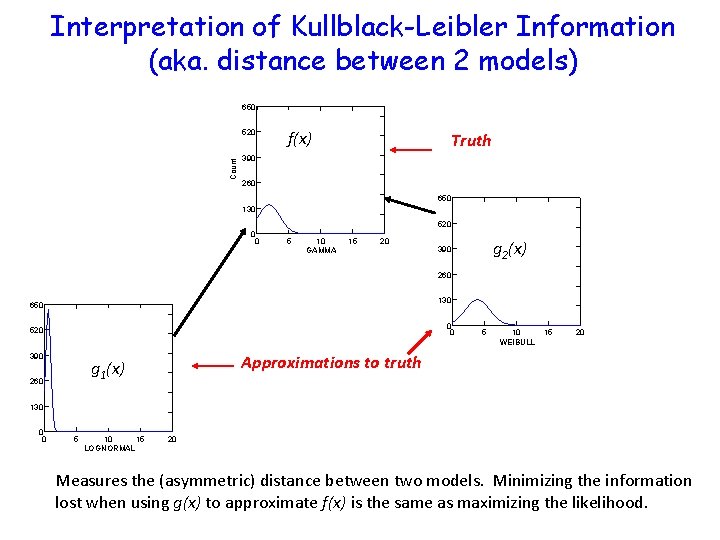

Heuristic interpretation of K-L

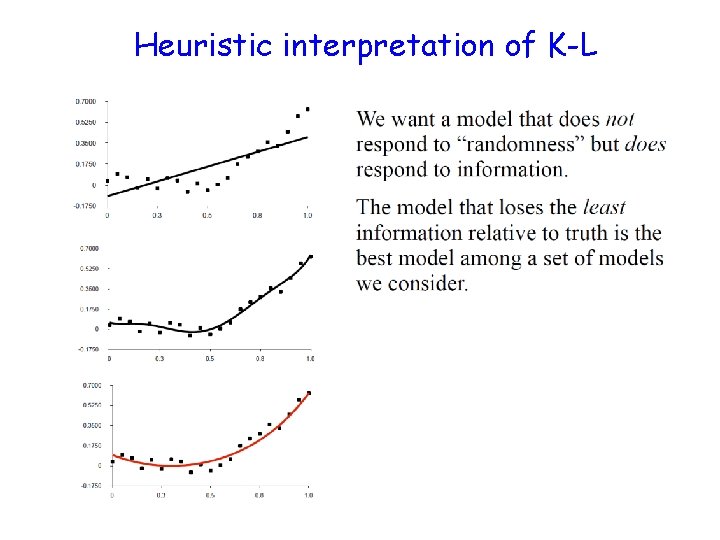

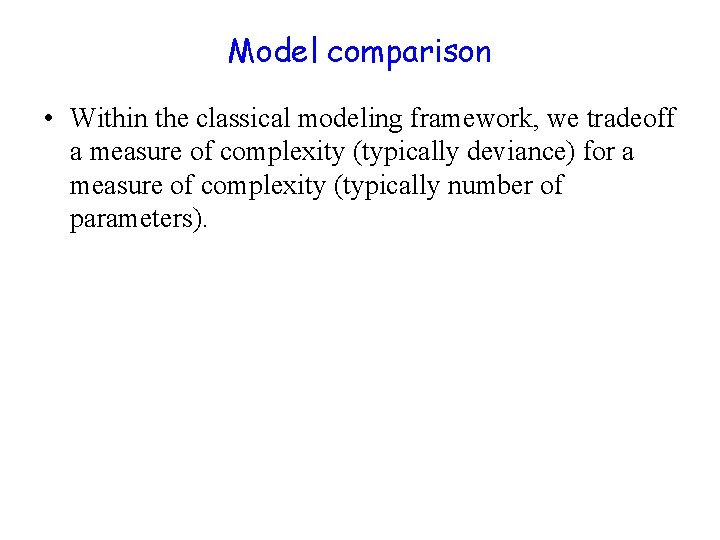

Model comparison • Within the classical modeling framework, we tradeoff a measure of complexity (typically deviance) for a measure of complexity (typically number of parameters).

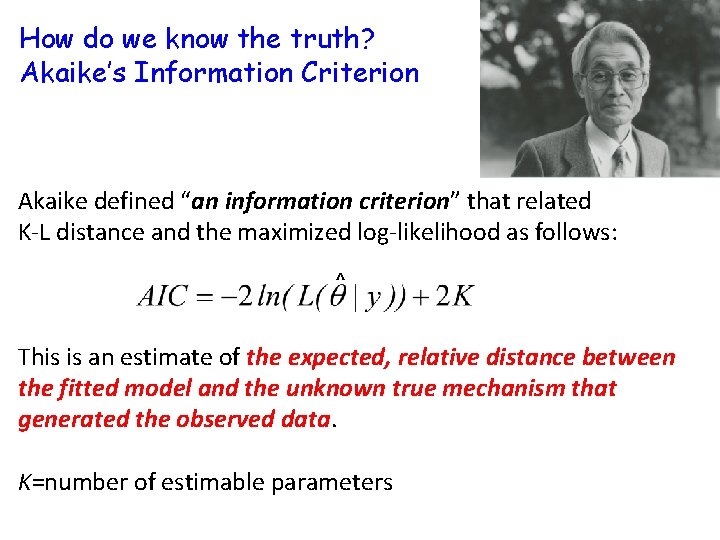

How do we know the truth? Akaike’s Information Criterion Akaike defined “an information criterion” that related K-L distance and the maximized log-likelihood as follows: ^ This is an estimate of the expected, relative distance between the fitted model and the unknown true mechanism that generated the observed data. K=number of estimable parameters

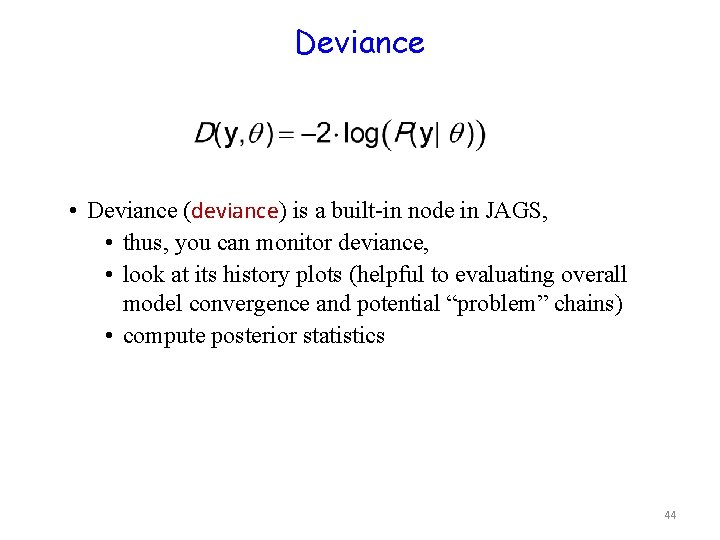

Deviance • Deviance (deviance) is a built-in node in JAGS, • thus, you can monitor deviance, • look at its history plots (helpful to evaluating overall model convergence and potential “problem” chains) • compute posterior statistics 44

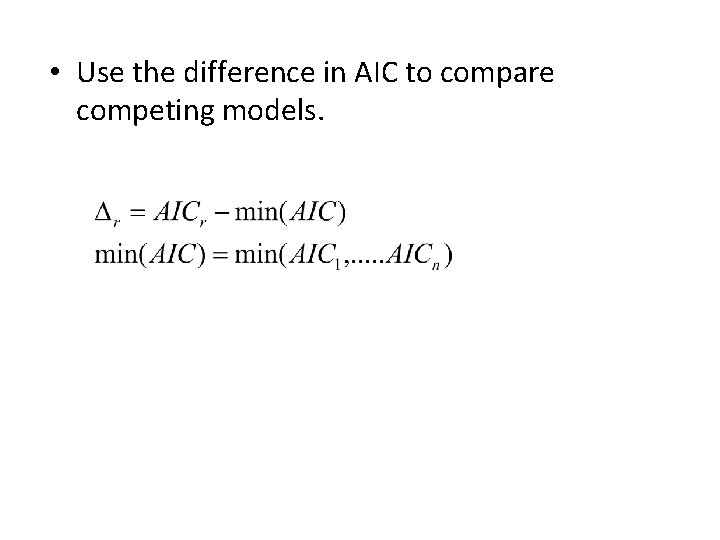

• Use the difference in AIC to compare competing models.

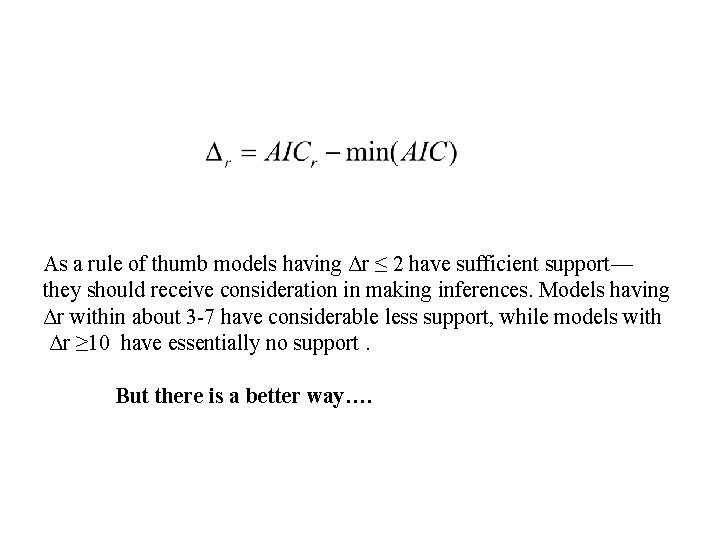

As a rule of thumb models having Δr ≤ 2 have sufficient support— they should receive consideration in making inferences. Models having Δr within about 3 -7 have considerable less support, while models with Δr ≥ 10 have essentially no support. But there is a better way….

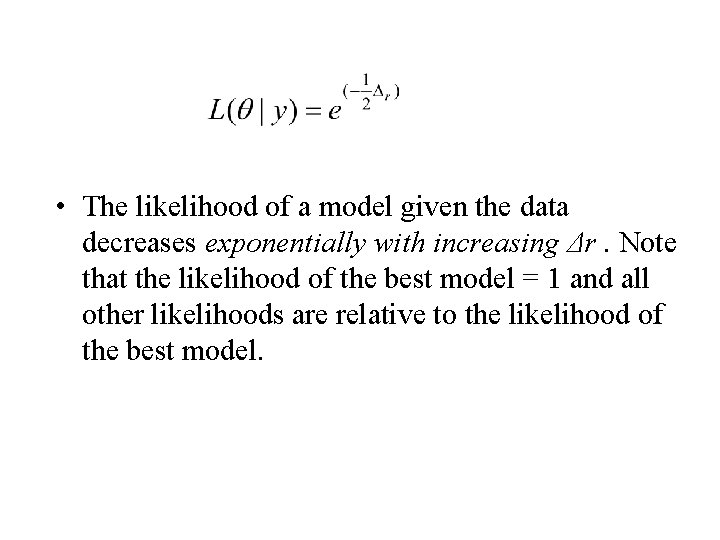

• The likelihood of a model given the data decreases exponentially with increasing Δr. Note that the likelihood of the best model = 1 and all other likelihoods are relative to the likelihood of the best model.

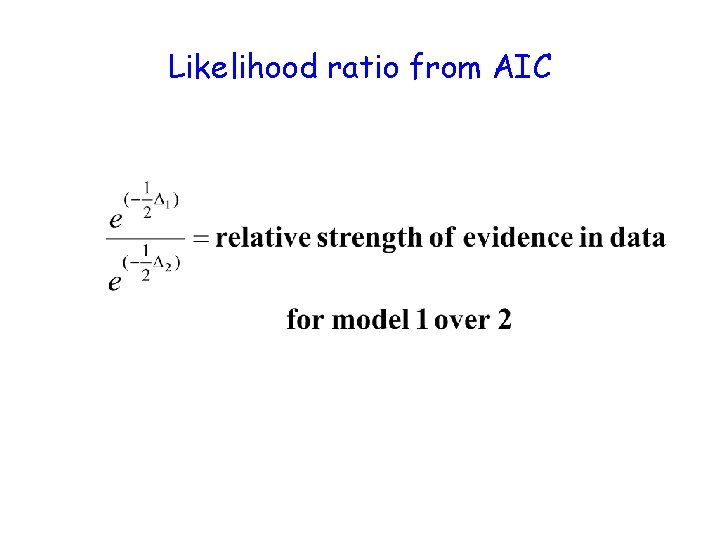

Likelihood ratio from AIC

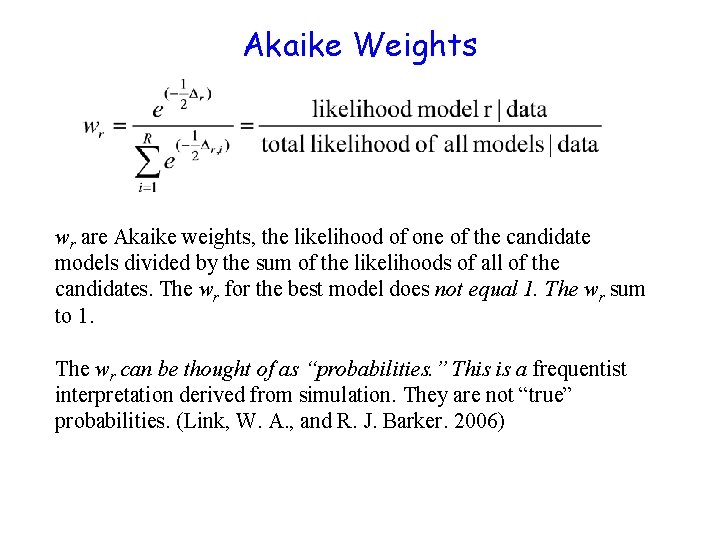

Akaike Weights wr are Akaike weights, the likelihood of one of the candidate models divided by the sum of the likelihoods of all of the candidates. The wr for the best model does not equal 1. The wr sum to 1. The wr can be thought of as “probabilities. ” This is a frequentist interpretation derived from simulation. They are not “true” probabilities. (Link, W. A. , and R. J. Barker. 2006)

Interpretation of Akaike Weights • wi is the weight of evidence in favor of model i being the actual best K-L model given that one of the R models must be the K-L best model of the candidate set. • “probability” that model i is the actual best K-L model • Last statement is quite controversial.

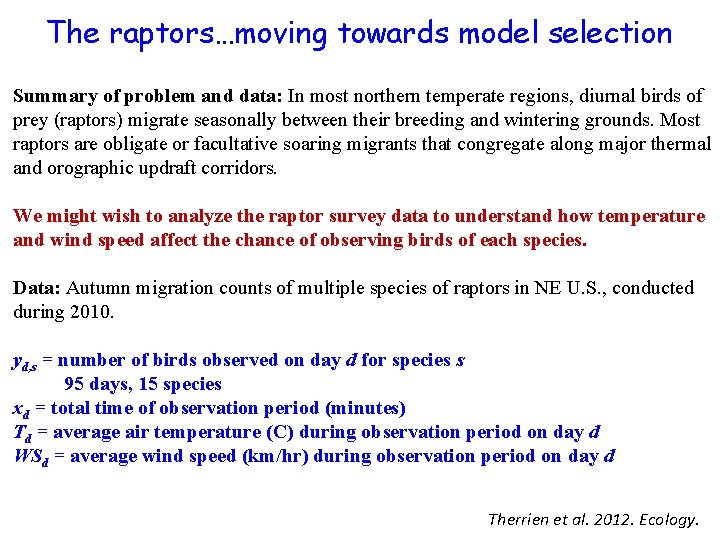

The raptors…moving towards model selection Summary of problem and data: In most northern temperate regions, diurnal birds of prey (raptors) migrate seasonally between their breeding and wintering grounds. Most raptors are obligate or facultative soaring migrants that congregate along major thermal 0. Identify scientific problem/objectives; understand data; draw-up and orographic updraft corridors. existing theory/knowledge. We might wish to analyze the raptor survey data to understand how temperature and wind speed affect the chance of observing birds of each species. Data: Autumn migration counts of multiple species of raptors in NE U. S. , conducted during 2010. yd, s = number of birds observed on day d for species s 95 days, 15 species xd = total time of observation period (minutes) Td = average air temperature (C) during observation period on day d WSd = average wind speed (km/hr) during observation period on day d Therrien et al. 2012. Ecology.

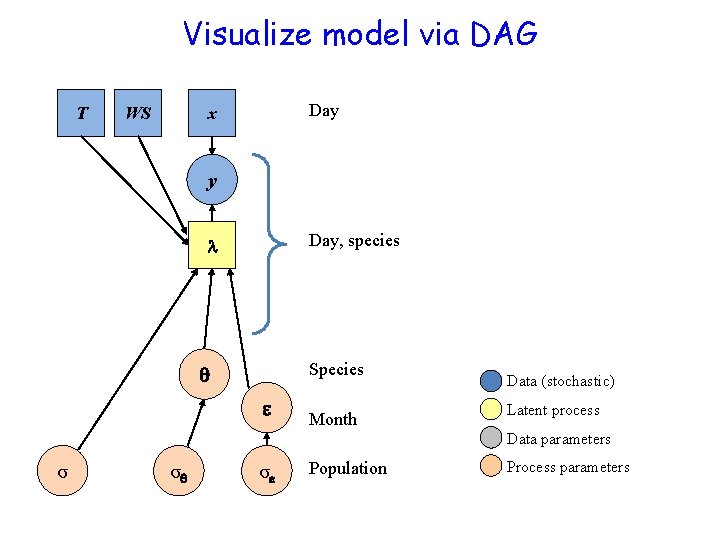

Visualize model via DAG T WS Day x y Day, species Species Month Data (stochastic) Latent process Data parameters σ σ σ Population Process parameters

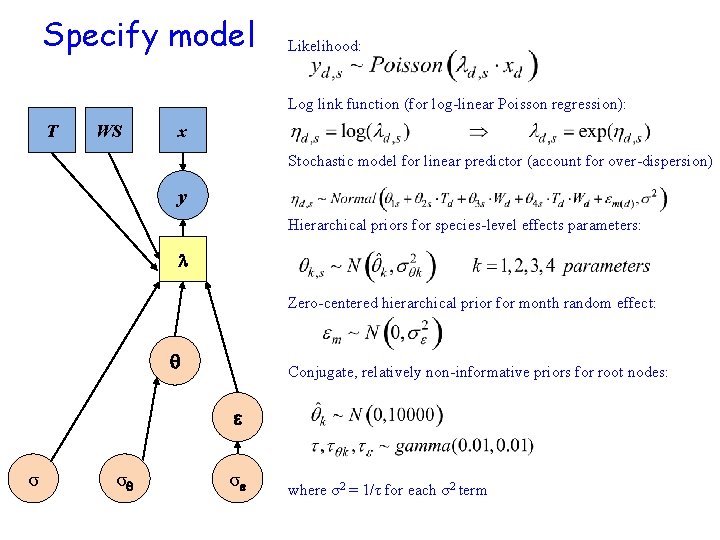

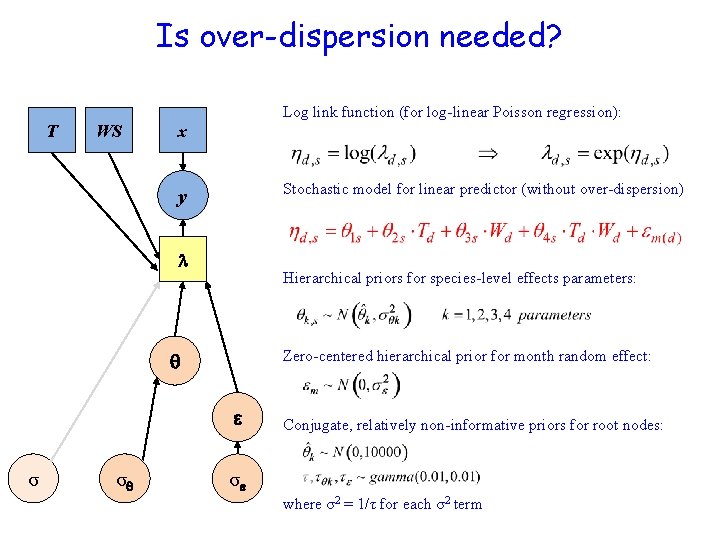

Specify model Likelihood: Log link function (for log-linear Poisson regression): T WS x Stochastic model for linear predictor (account for over-dispersion) y Hierarchical priors for species-level effects parameters: Zero-centered hierarchical prior for month random effect: Conjugate, relatively non-informative priors for root nodes: σ σ σ where σ2 = 1/τ for each σ2 term

Is over-dispersion needed? T WS Log link function (for log-linear Poisson regression): x Stochastic model for linear predictor (without over-dispersion) y Hierarchical priors for species-level effects parameters: Zero-centered hierarchical prior for month random effect: σ σ Conjugate, relatively non-informative priors for root nodes: σ where σ2 = 1/τ for each σ2 term

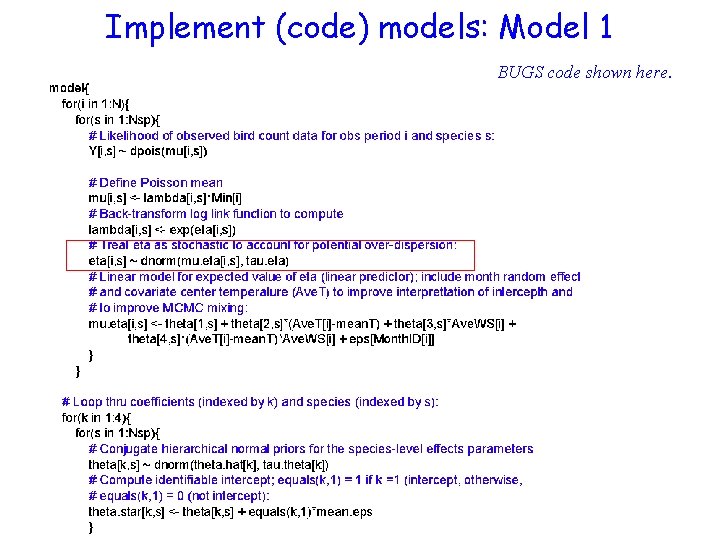

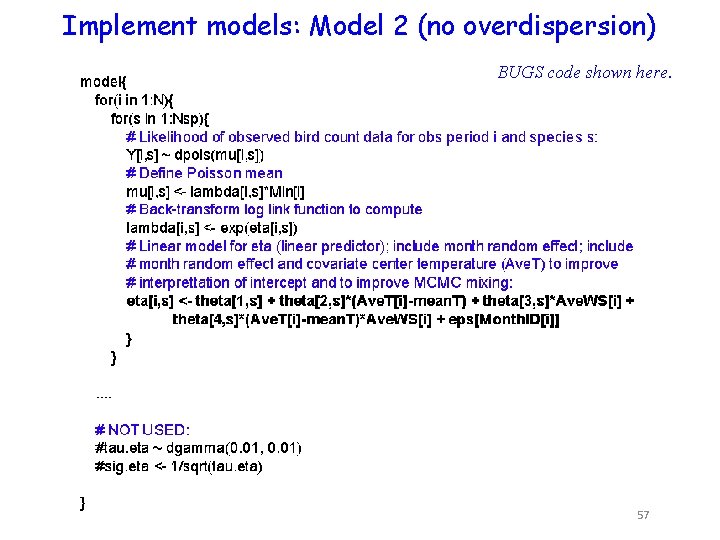

Implement (code) models: Model 1 BUGS code shown here.

BUGS code shown here.

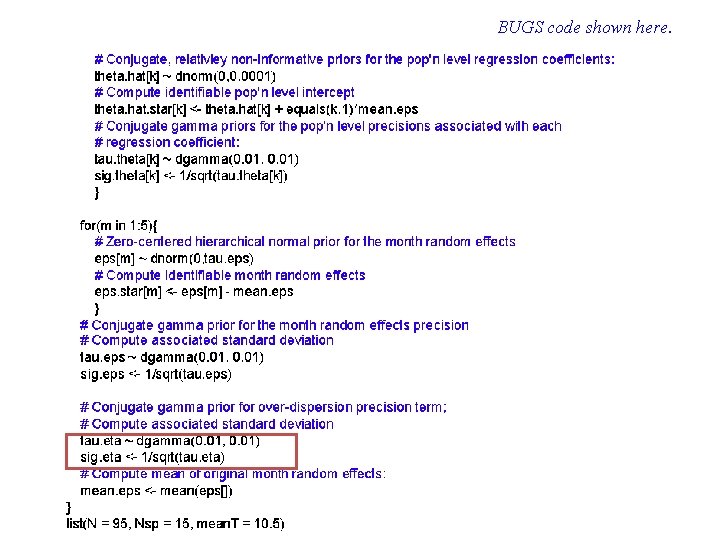

Implement models: Model 2 (no overdispersion) BUGS code shown here. 57

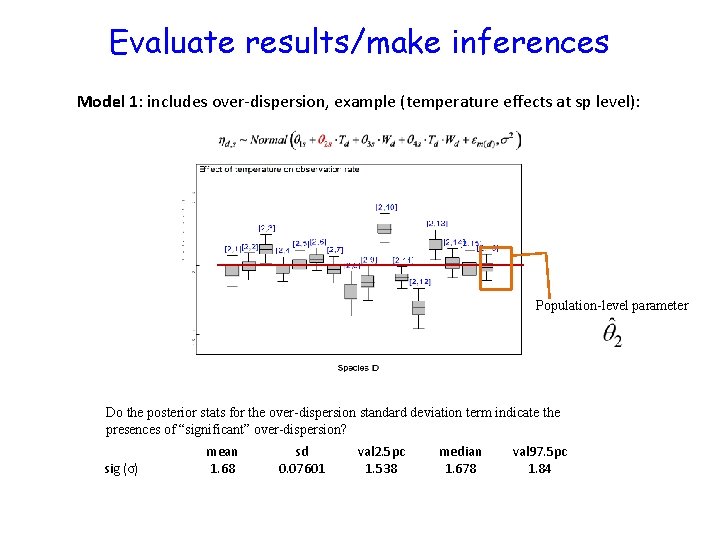

Evaluate results/make inferences Model 1: includes over-dispersion, example (temperature effects at sp level): Population-level parameter Do the posterior stats for the over-dispersion standard deviation term indicate the presences of “significant” over-dispersion? sig (σ) mean 1. 68 sd 0. 07601 val 2. 5 pc 1. 538 median 1. 678 val 97. 5 pc 1. 84

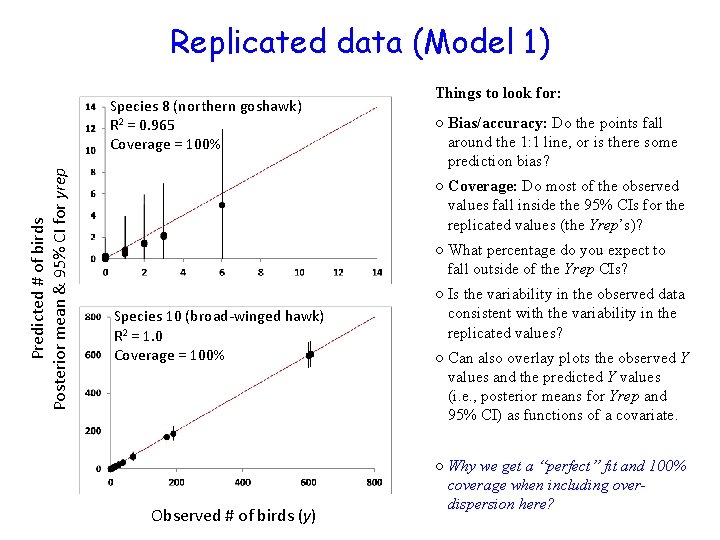

Replicated data (Model 1) Predicted # of birds Posterior mean & 95% CI for yrep Species 8 (northern goshawk) R 2 = 0. 965 Coverage = 100% Things to look for: ○ Bias/accuracy: Do the points fall around the 1: 1 line, or is there some prediction bias? ○ Coverage: Do most of the observed values fall inside the 95% CIs for the replicated values (the Yrep’s)? ○ What percentage do you expect to fall outside of the Yrep CIs? Species 10 (broad-winged hawk) R 2 = 1. 0 Coverage = 100% Observed # of birds (y) ○ Is the variability in the observed data consistent with the variability in the replicated values? ○ Can also overlay plots the observed Y values and the predicted Y values (i. e. , posterior means for Yrep and 95% CI) as functions of a covariate. ○ Why we get a “perfect” fit and 100% coverage when including overdispersion here?

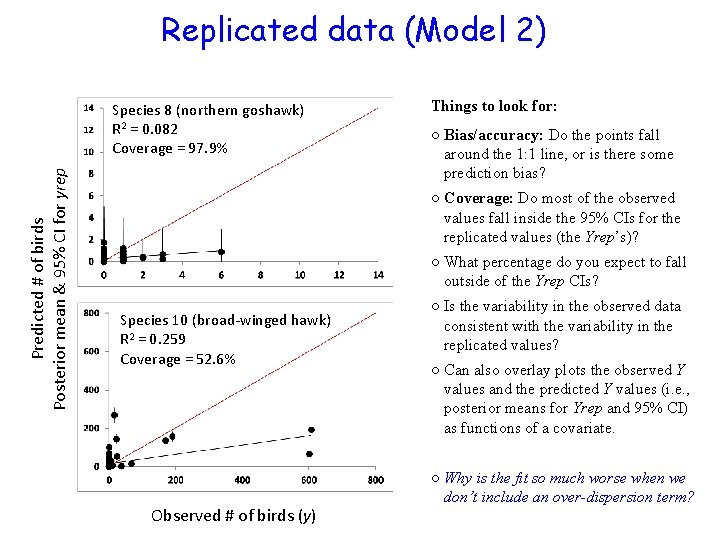

Replicated data (Model 2) Predicted # of birds Posterior mean & 95% CI for yrep Species 8 (northern goshawk) R 2 = 0. 082 Coverage = 97. 9% Things to look for: ○ Bias/accuracy: Do the points fall around the 1: 1 line, or is there some prediction bias? ○ Coverage: Do most of the observed values fall inside the 95% CIs for the replicated values (the Yrep’s)? ○ What percentage do you expect to fall outside of the Yrep CIs? Species 10 (broad-winged hawk) R 2 = 0. 259 Coverage = 52. 6% Observed # of birds (y) ○ Is the variability in the observed data consistent with the variability in the replicated values? ○ Can also overlay plots the observed Y values and the predicted Y values (i. e. , posterior means for Yrep and 95% CI) as functions of a covariate. ○ Why is the fit so much worse when we don’t include an over-dispersion term?

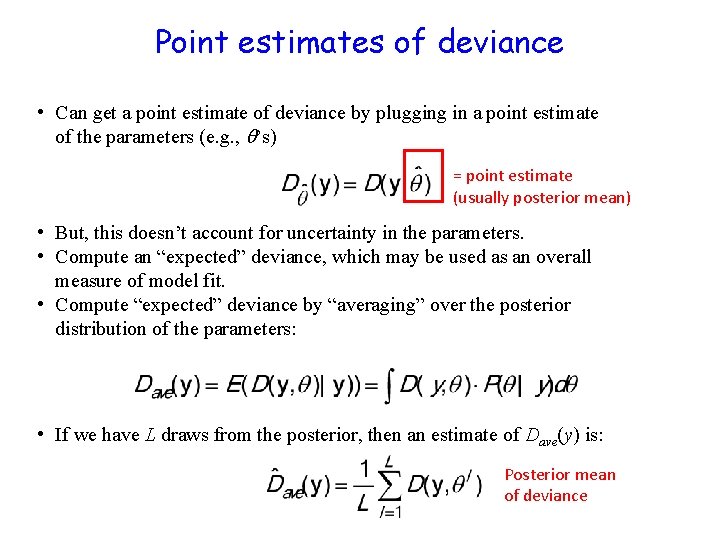

Point estimates of deviance • Can get a point estimate of deviance by plugging in a point estimate of the parameters (e. g. , ’s) = point estimate (usually posterior mean) • But, this doesn’t account for uncertainty in the parameters. • Compute an “expected” deviance, which may be used as an overall measure of model fit. • Compute “expected” deviance by “averaging” over the posterior distribution of the parameters: • If we have L draws from the posterior, then an estimate of Dave(y) is: Posterior mean of deviance

Model complexity • To account for model complexity, compute the effective number of parameters. To do this we compute deviance in two ways: • The posterior mean of the deviance. • The deviance evaluated at the posterior mean values of model parameters. • Why should first component be larger than the second component? • In some situations, the above solution for p. D can lead to p. D < 0 (i. e. , a negative # of effective parameters), which renders DIC and p. D useless.

Computing DIC • Thus, DIC is given by: The deviance of the model evaluated at the means of the posterior distribution of parameters The effective number of parameters model fit (lower better) + model penalty (lower better) • Lower DIC -> “better” model, but what is an “important difference” in DIC? • Interpretation of DIC values same as AIC • DIC difference of 1 -2: “best” model deserves consideration • DIC differences of 3 -7: “considerably” more support for best model • Differences can be affected by Monte Carlo error • I look for differences > 10

![# JAGS model{ for (i in 1: n){ mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) y[i]~ dnorm(mu[i], tau)} tau~dgamma(0. 001, # JAGS model{ for (i in 1: n){ mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) y[i]~ dnorm(mu[i], tau)} tau~dgamma(0. 001,](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-64.jpg)

# JAGS model{ for (i in 1: n){ mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) y[i]~ dnorm(mu[i], tau)} tau~dgamma(0. 001, . 001) alpha~dgamma(0. 001, . 001) gama~dgamma(. 001, . 001) In R } # end of model #In R jm=jags. model("Bugs_light_example. R", data=data, mod. inits, n. chains=3, n. adapt = n. adapt) update(jm, n. iter=5000) #generate coda object for parameters and deviance. zm=coda. samples(jm, variable. names=c("alpha", "gama", "c", "sigma", "deviance"), n. iter=5000) dic. samples(jm, n. iter, type="p. D") #Mean deviance: 529. 8 #penalty 2. 97 =pd # Penalized deviance: 532. 8 =dic # Another way: summary(zm[, "deviance"])$stat[, ] #pd* = (1/2)*Var(deviance) pd= 0. 5*summary(zm[, "deviance"])$stat[2]^2

Why DIC? • DIC, AIC, and BIC all have the same general form: x. IC = model fit + model penalty • So, why focus on DIC? Why not use AIC or BIC? • BIC and AIC require us to count the number of parameters in a model, but an informative prior, or hierarchical priors makes it impossible to count the number of “effective” parameters • Thus, Spiegelhalter et al. (2002) developed DIC, which computes an “effective” number of parameters that (should) capture the effect of “shrinkage” or “borrowing of strength” due to informative priors or hierarchical priors

Some intuition for DIC • The problem is parameters that are “free” to be influenced by noise in the data. How free are they? • If a prior on a parameter is very informative—the parameter is not free to respond to the data, it does not contribute to the effective number of parameters. • If a prior is uninformative, the opposite is the case. It is free to respond and contributes to the effective number of parameters in the same way as in a likelihood analysis. • If a parameter is part of a hierarchy, should it count to same way as a parameter that is part of a simpler model?

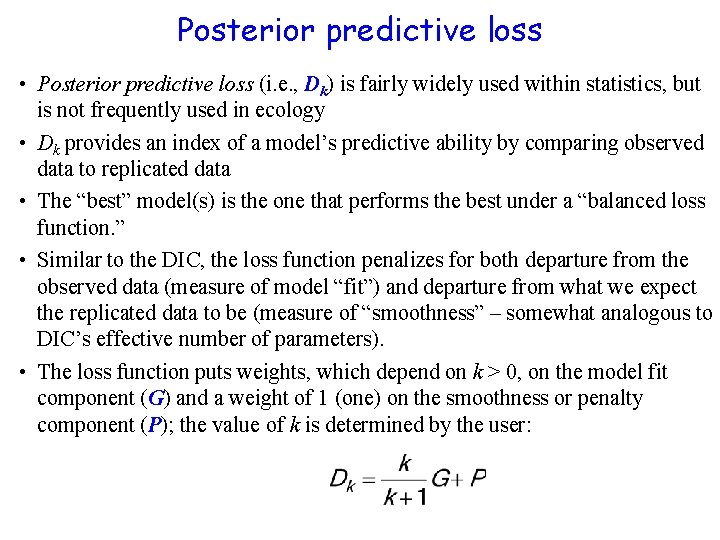

Posterior predictive loss • Posterior predictive loss (i. e. , Dk) is fairly widely used within statistics, but is not frequently used in ecology • Dk provides an index of a model’s predictive ability by comparing observed data to replicated data • The “best” model(s) is the one that performs the best under a “balanced loss function. ” • Similar to the DIC, the loss function penalizes for both departure from the observed data (measure of model “fit”) and departure from what we expect the replicated data to be (measure of “smoothness” – somewhat analogous to DIC’s effective number of parameters). • The loss function puts weights, which depend on k > 0, on the model fit component (G) and a weight of 1 (one) on the smoothness or penalty component (P); the value of k is determined by the user:

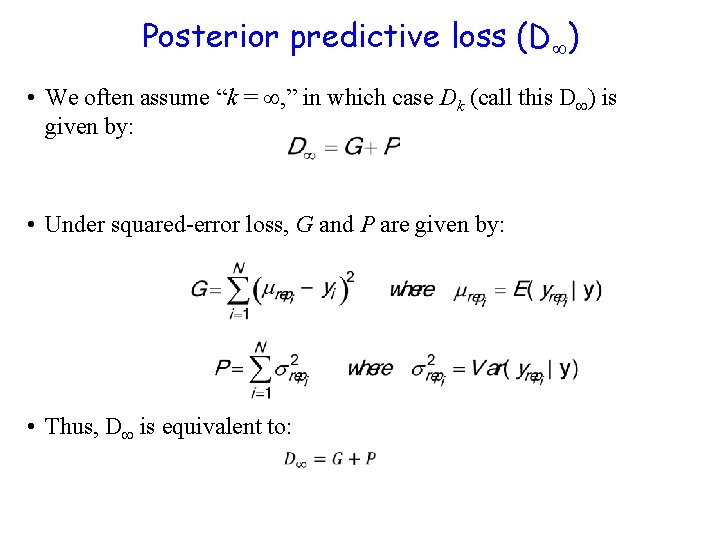

Posterior predictive loss (D ) • We often assume “k = , ” in which case Dk (call this D ) is given by: • Under squared-error loss, G and P are given by: • Thus, D is equivalent to:

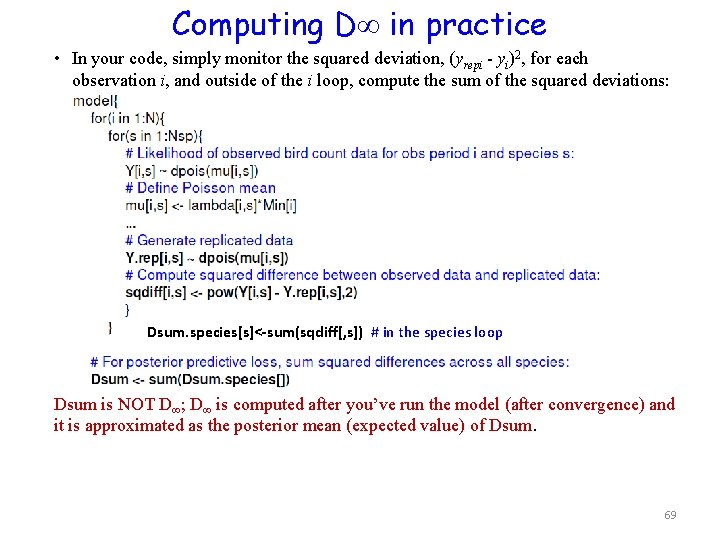

Computing D in practice • In your code, simply monitor the squared deviation, (yrepi - yi)2, for each observation i, and outside of the i loop, compute the sum of the squared deviations: Dsum. species[s]<-sum(sqdiff[, s]) # in the species loop Dsum is NOT D ; D is computed after you’ve run the model (after convergence) and it is approximated as the posterior mean (expected value) of Dsum. 69

![Back to the tree and the light Model 1: mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) Model 2: mu[i]<-alpha*x[i] Back to the tree and the light Model 1: mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) Model 2: mu[i]<-alpha*x[i]](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-70.jpg)

Back to the tree and the light Model 1: mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) Model 2: mu[i]<-alpha*x[i]

![# JAGS model{ for (i in 1: n){ mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) y[i]~ dnorm(mu[i], tau) yrep[i]~dnorm(mu[i], tau)) # JAGS model{ for (i in 1: n){ mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) y[i]~ dnorm(mu[i], tau) yrep[i]~dnorm(mu[i], tau))](http://slidetodoc.com/presentation_image_h/df6e3eb51a7c9cbc2da99568fa416063/image-71.jpg)

# JAGS model{ for (i in 1: n){ mu[i]<-(alpha*x[i])/((alpha/gama)+x[i]) y[i]~ dnorm(mu[i], tau) yrep[i]~dnorm(mu[i], tau)) } tau~dgamma(0. 001, . 001) alpha~dgamma(0. 001, . 001) gama~dgamma(. 001, . 001)} # end of model In R zm=coda. samples(jm, variable. names=c("alpha", "gama", "sigma", "deviance", yrep"), n. iter=5000) y<-tree. data$Observed. growth. rate # assign response variable ntree=nrow(tree. data) yrep. stats=summary(zm[, paste("yrep[", 1: ntree, "]", sep="")])$stat G <- sum((yrep. stats[, 1]-y)^2)# sq diff (yrep. mean-y) P <- sum((yrep. stats[, 2])^2)# var for each yrep Dinf <- G + P

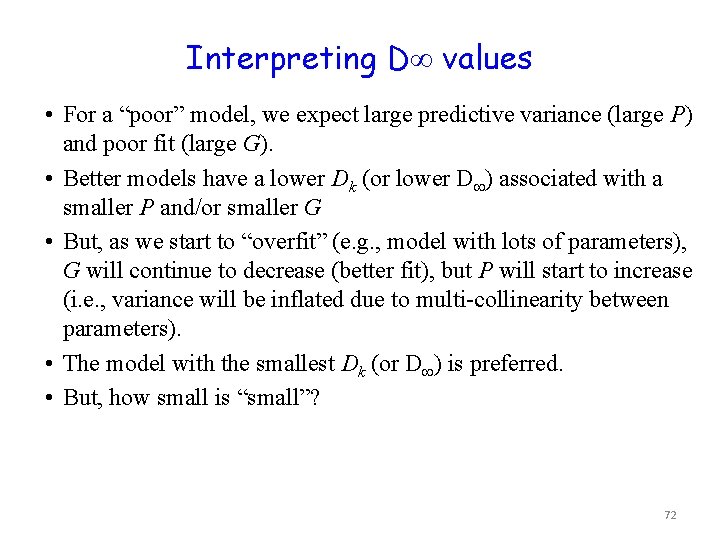

Interpreting D values • For a “poor” model, we expect large predictive variance (large P) and poor fit (large G). • Better models have a lower Dk (or lower D ) associated with a smaller P and/or smaller G • But, as we start to “overfit” (e. g. , model with lots of parameters), G will continue to decrease (better fit), but P will start to increase (i. e. , variance will be inflated due to multi-collinearity between parameters). • The model with the smallest Dk (or D ) is preferred. • But, how small is “small”? 72

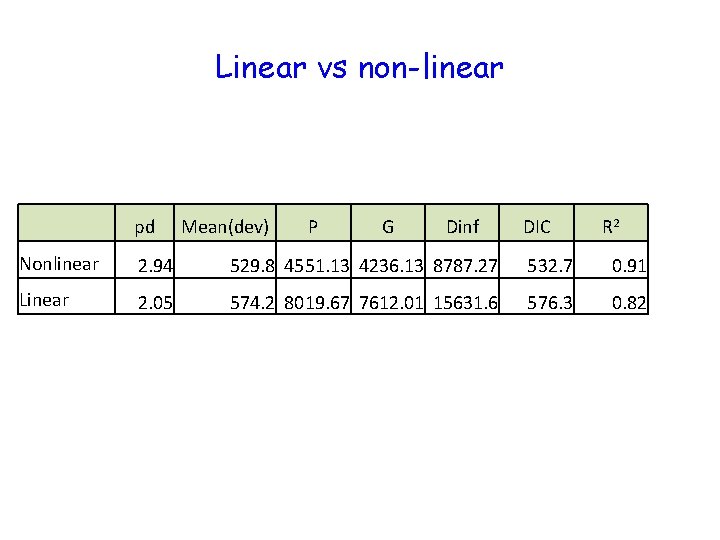

Linear vs non-linear pd Mean(dev) P G Dinf DIC R 2 Nonlinear 2. 94 529. 8 4551. 13 4236. 13 8787. 27 532. 7 0. 91 Linear 2. 05 574. 2 8019. 67 7612. 01 15631. 6 576. 3 0. 82

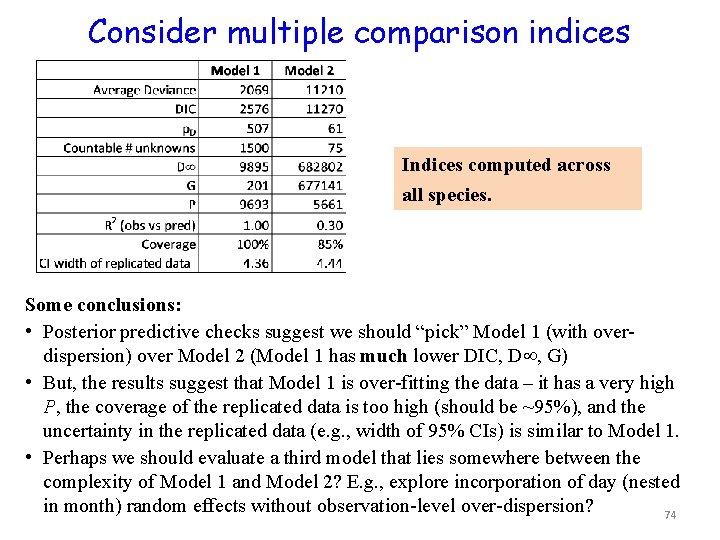

Consider multiple comparison indices Indices computed across all species. Some conclusions: • Posterior predictive checks suggest we should “pick” Model 1 (with overdispersion) over Model 2 (Model 1 has much lower DIC, D , G) • But, the results suggest that Model 1 is over-fitting the data – it has a very high P, the coverage of the replicated data is too high (should be ~95%), and the uncertainty in the replicated data (e. g. , width of 95% CIs) is similar to Model 1. • Perhaps we should evaluate a third model that lies somewhere between the complexity of Model 1 and Model 2? E. g. , explore incorporation of day (nested in month) random effects without observation-level over-dispersion? 74

• In the index of Gelman et al. 1995: “Model selection, why we do not do it” • In the index of Gelman et al. 2004: “Model selection, why we avoid it. ” – Gelman, A. , J. B. Carlin, H. S. Stern, and D. B. Rubin. 2004. Bayesian data analysis. Chapman and Hall / CRC, London.

When should we avoid model selection? • When we have a firm, theoretical / mechanistic basis for a particular model formulation. • When our objectives for insight determine the form of the model. • When we want to make forecasts and must include known influences on future behavior of the system.

Concluding remarks • Use multiple approaches for comparing / selecting between models • Selection criteria may depend on scientific objectives: • Use model to learn (heuristic tool) • Use model to predict under novel conditions • Other topics not covered • Model averaging & Bayes factors • E. g. , can use posterior model weights to average derived or predicted quantities obtained from each model • Can use BIC to approximate the Bayes factors (BF) • See Link & Barber (2006) or Kass (1993) • Evaluation of model assumptions • Appropriate choice of distributions? • Appropriate model structure • E. g. : linear vs non-linear; choice of covariates; random vs fixed effects; hierarchical vs non-hierarchical priors, etc 77

References • Posterior predictive loss: Gelfand & Ghosh (1998) Model choice: A minimum posterior predictive loss approach. Biometrika 85: 1 -11. • Bayes factors & BIC: Link & Barker (2006) Model weights and the foundations of multimodel inference. Ecology 87: 2626 -2635. • Model checking & improvement: Gelman, Carlin, Stern, Rubin (2004) Bayesian Data Analysis. Chapman & Hall/CRC. (Chapter 6) • Bayes factors: Kass (1993) Bayes factors in practice. The Statistician 42: 551 -560. • Elements of hierarchical Bayes, including Bayes factors, DIC, D : Carlin et al. (2006) Elements of hierarchical Bayesian inference, in Hierarchical Modelling for the Environmental Sciences: Statistical Methods and Applications, J. S. Clark & A. E. Gelfand (eds. ) Oxford Univ Press. 78

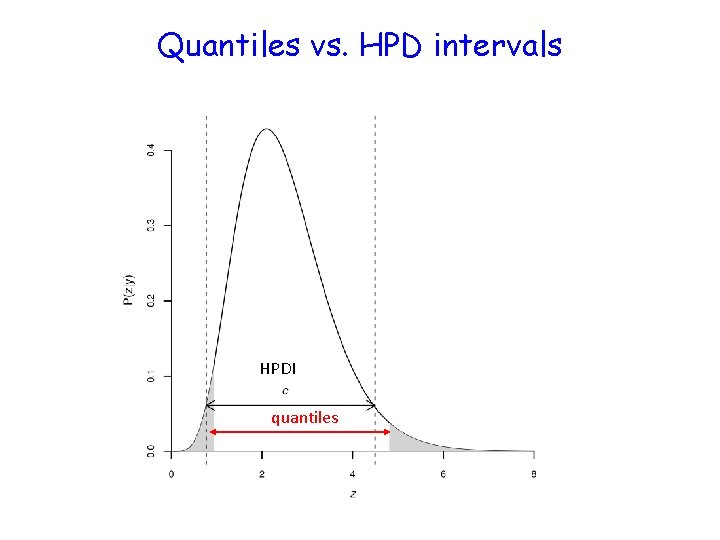

Quantiles vs. HPD intervals HPDI quantiles

Quantiles vs. HPD intervals • HPDI: The longest horizontal line that can be placed within the distribution such that the area between the vertical dashed lines and beneath the distribution curve = 1 –alpha. • Equal tailed intervals: quantiles of distribution (1 alpha/2).

Likelihood Ratio Test • Ratios of llikelihoods (R) follow a chi-square distribution with degrees of freedom equal to the difference in the number of parameters between models A and B.

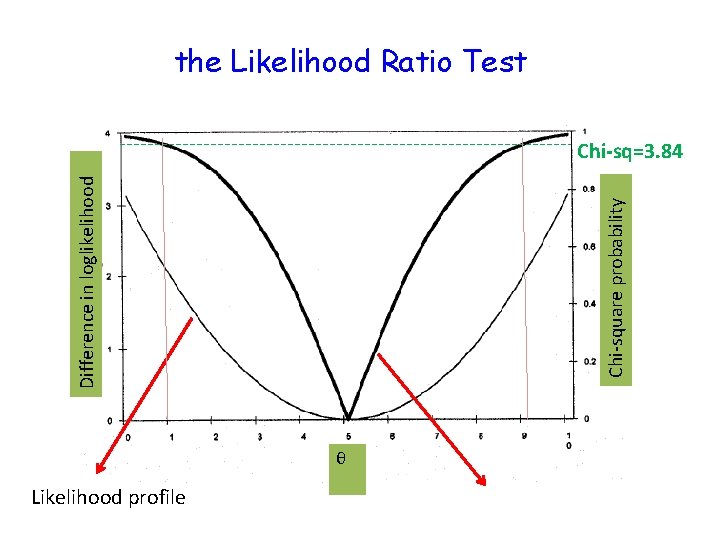

the Likelihood Ratio Test Chi-square probability Difference in loglikelihood Chi-sq=3. 84 θ Likelihood profile

- Slides: 82