Model Adequacy Checking in the ANOVA

- Slides: 15

Model Adequacy Checking in the ANOVA

Text reference: Section 3-4, pg. 75

- Checking assumptions is important
- Have we fit the right model?
  - Normality
  - Independence
  - Constant variance
- Later we will talk about what to do if some of these assumptions are violated

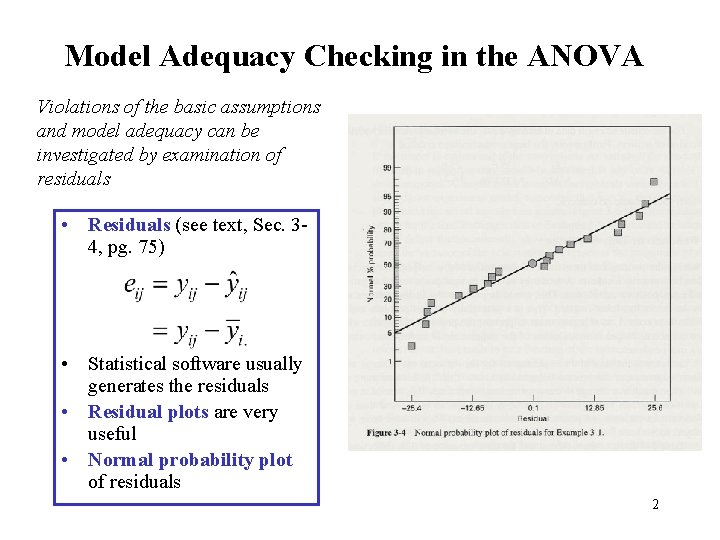

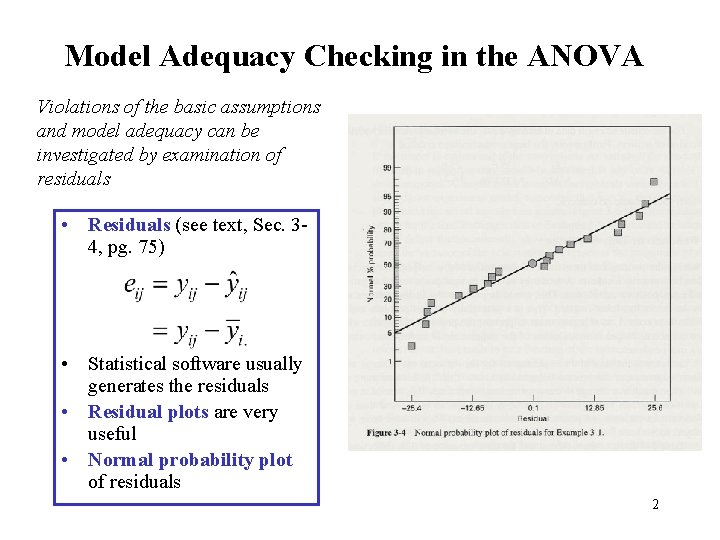

Model Adequacy Checking in the ANOVA

Violations of the basic assumptions, and model adequacy in general, can be investigated by examining the residuals.

- Residuals (see text, Sec. 3-4, pg. 75): $e_{ij} = y_{ij} - \hat{y}_{ij} = y_{ij} - \bar{y}_{i.}$
- Statistical software usually generates the residuals
- Residual plots are very useful
- Normal probability plot of residuals

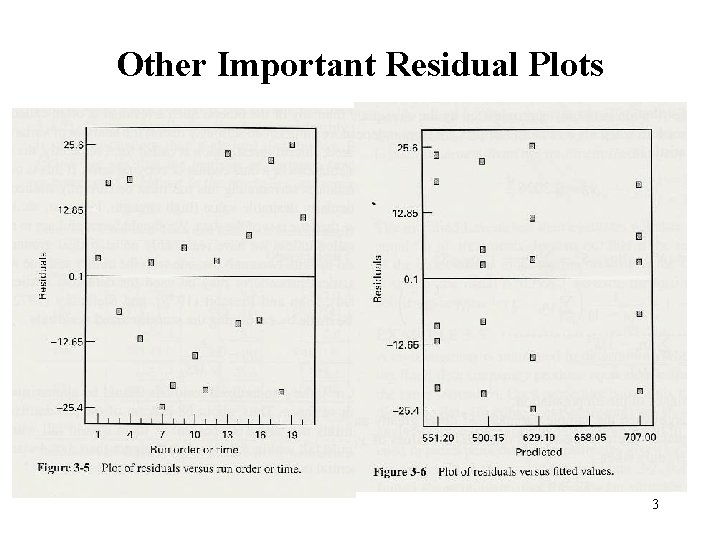

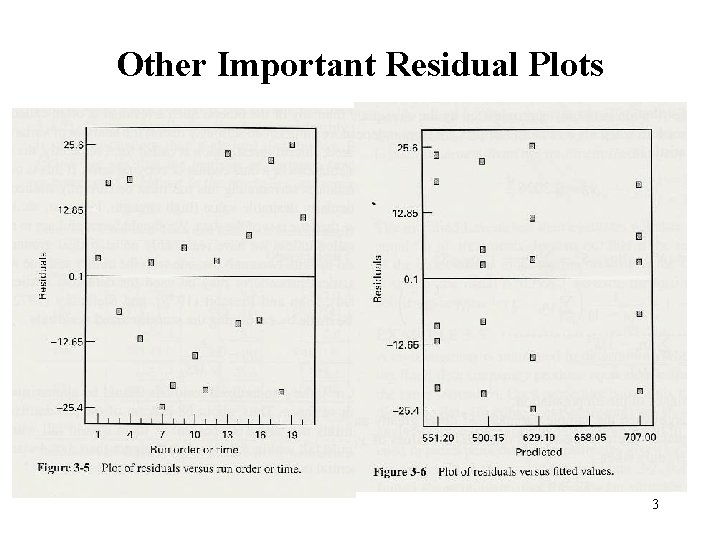
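The residual calculation and the coordinates of a normal probability plot can be sketched in a few lines of Python. This is a minimal illustration, not the output of any particular statistics package. The raw etch rate observations are an assumption: they are taken from the textbook's etch rate example and are not listed on the slides (the slides show only the treatment means). The plotting position $(k - 0.5)/N$ is one common convention among several.

```python
from statistics import NormalDist, mean

# ASSUMPTION: etch rate observations by RF power level (W), as tabulated in
# the textbook's etch rate example; the slides show only the treatment means.
etch_rate = {
    160: [575, 542, 530, 539, 570],
    180: [565, 593, 590, 579, 610],
    200: [600, 651, 610, 637, 629],
    220: [725, 700, 715, 685, 710],
}

def residuals(groups):
    """Return (fitted value, residual) pairs, where e_ij = y_ij - ybar_i."""
    pairs = []
    for ys in groups.values():
        ybar = mean(ys)  # fitted value for every observation in this treatment
        pairs.extend((ybar, y - ybar) for y in ys)
    return pairs

def normal_plot_points(resids):
    """Pair the k-th smallest residual with the normal quantile of (k - 0.5)/N."""
    ordered = sorted(resids)
    n = len(ordered)
    std_normal = NormalDist()
    return [(std_normal.inv_cdf((k - 0.5) / n), e)
            for k, e in enumerate(ordered, start=1)]

pairs = residuals(etch_rate)
points = normal_plot_points([e for _, e in pairs])
for z, e in points:
    print(f"{z:6.2f}  {e:7.1f}")  # roughly linear if the errors are normal
```

Plotting the second column against the first gives the normal probability plot; the `pairs` list can be used the same way for a residuals-versus-fitted plot.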

Other Important Residual Plots

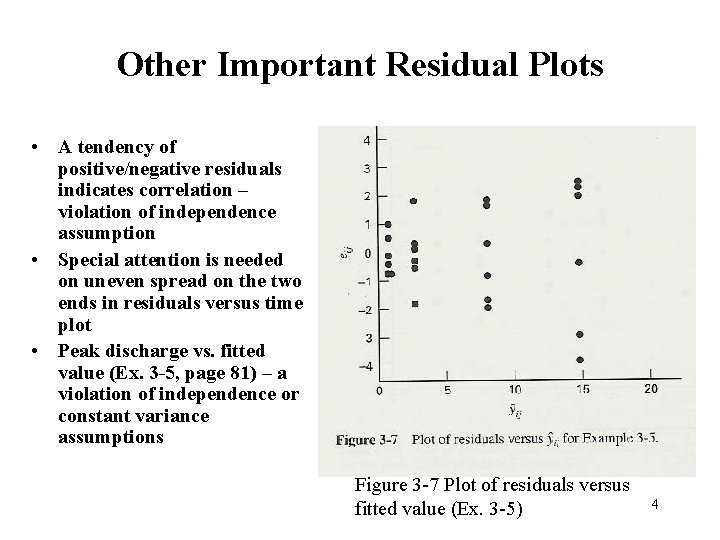

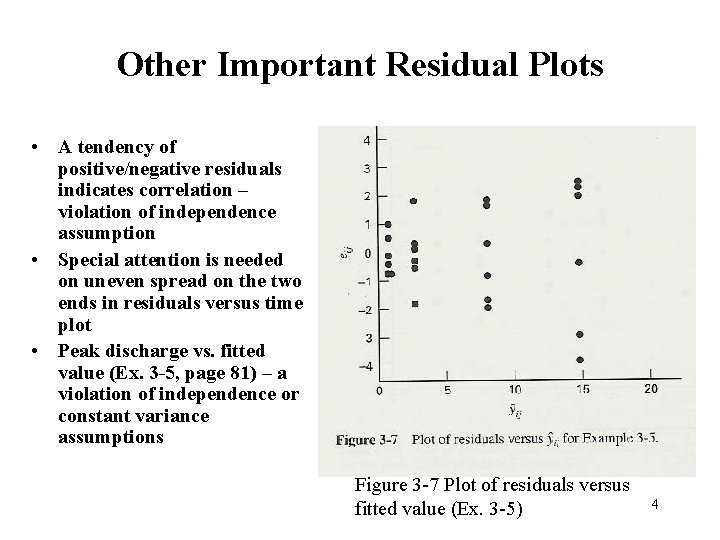

Other Important Residual Plots

- A tendency to have runs of positive and negative residuals indicates correlation, a violation of the independence assumption
- In a plot of residuals versus time, pay special attention to a spread that is uneven at the two ends
- Peak discharge versus fitted value (Ex. 3-5, page 81): a violation of the independence or constant variance assumptions

Figure 3-7: Plot of residuals versus fitted value (Ex. 3-5)

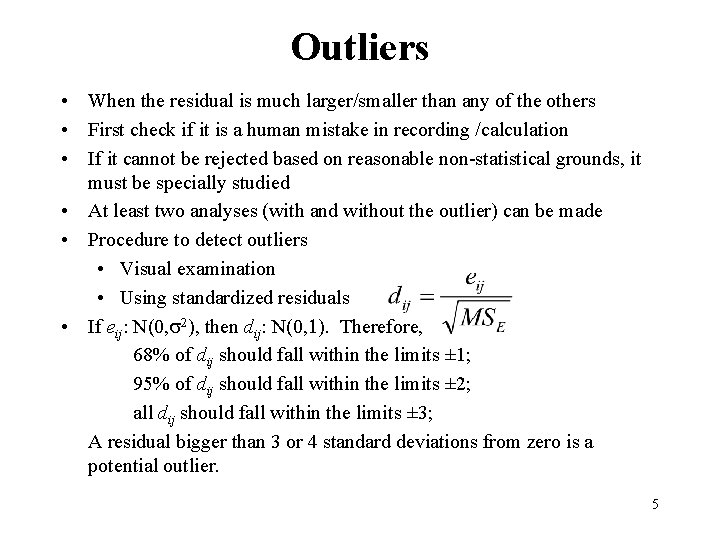

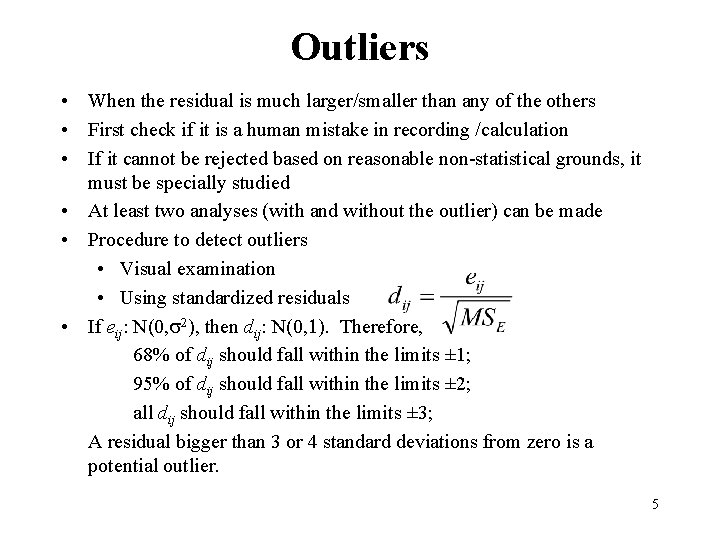

Outliers

- An outlier is a residual that is much larger or smaller than any of the others
- First check whether it is a human mistake in recording or calculation
- If it cannot be rejected on reasonable non-statistical grounds, it must be specially studied
- At least two analyses (with and without the outlier) can be made
- Procedures to detect outliers:
  - Visual examination
  - Using standardized residuals, $d_{ij} = e_{ij} / \sqrt{MS_E}$
- If $e_{ij} \sim N(0, \sigma^2)$, then $d_{ij}$ is approximately $N(0, 1)$. Therefore about 68% of the $d_{ij}$ should fall within ±1, about 95% within ±2, and virtually all within ±3. A residual more than 3 or 4 standard deviations from zero is a potential outlier.

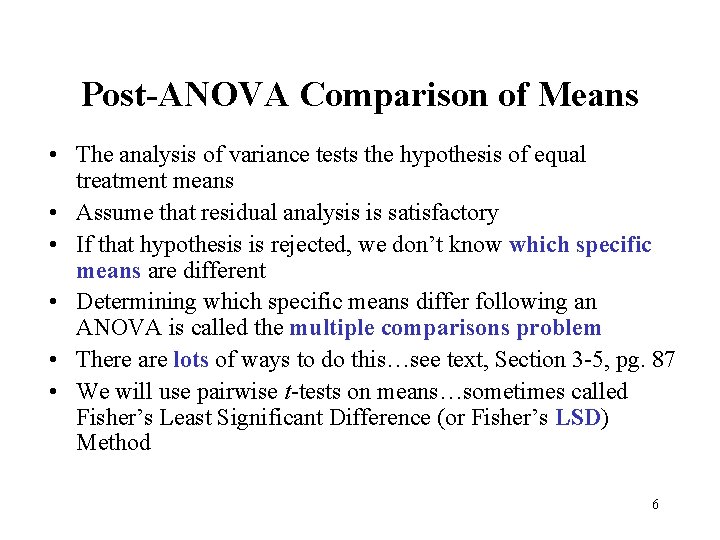

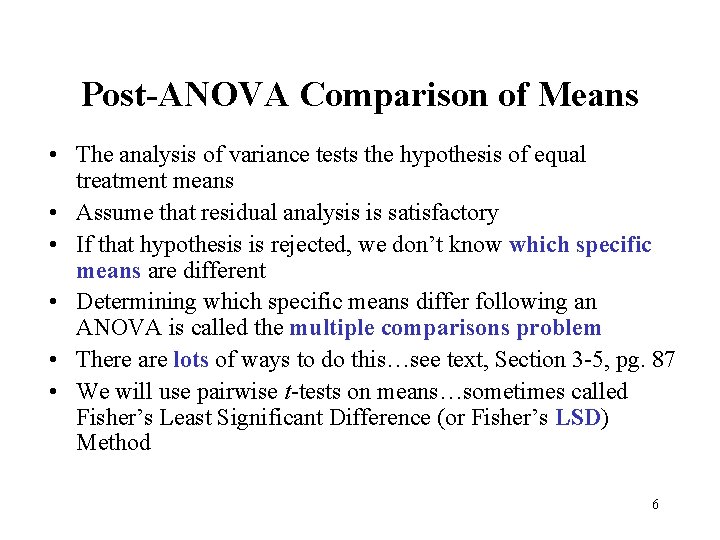
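The standardized-residual check can be sketched as follows. This is an illustrative sketch under stated assumptions: the raw etch rate observations come from the textbook's etch rate example (they are not on the slides), and the cutoff of 3 is the rule of thumb quoted above rather than a formal test.

```python
from math import sqrt
from statistics import mean

# ASSUMPTION: etch rate observations by RF power level (W), as tabulated in
# the textbook's etch rate example; the slides show only the treatment means.
etch_rate = {
    160: [575, 542, 530, 539, 570],
    180: [565, 593, 590, 579, 610],
    200: [600, 651, 610, 637, 629],
    220: [725, 700, 715, 685, 710],
}

def standardized_residuals(groups):
    """Return MS_E and a list of (level, d_ij) with d_ij = e_ij / sqrt(MS_E)."""
    a = len(groups)                              # number of treatments
    n_total = sum(len(ys) for ys in groups.values())
    resid = [(level, y - mean(ys))
             for level, ys in groups.items() for y in ys]
    ms_e = sum(e * e for _, e in resid) / (n_total - a)  # error mean square
    return ms_e, [(level, e / sqrt(ms_e)) for level, e in resid]

ms_e, d = standardized_residuals(etch_rate)
largest = max(abs(dij) for _, dij in d)
flagged = [(level, round(dij, 2)) for level, dij in d if abs(dij) > 3]

print(f"MS_E = {ms_e:.2f}")
print(f"largest |d_ij| = {largest:.2f}")
print("potential outliers:", flagged)
```

With these data no standardized residual comes close to 3, so nothing is flagged.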

Post-ANOVA Comparison of Means

- The analysis of variance tests the hypothesis of equal treatment means
- Assume that residual analysis is satisfactory
- If that hypothesis is rejected, we don’t know which specific means are different
- Determining which specific means differ following an ANOVA is called the multiple comparisons problem
- There are lots of ways to do this; see text, Section 3-5, pg. 87
- We will use pairwise t-tests on means, sometimes called Fisher’s Least Significant Difference (or Fisher’s LSD) Method

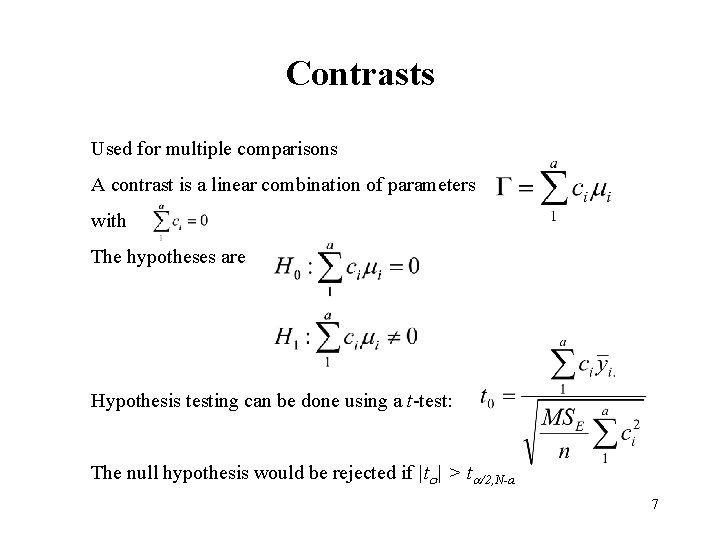

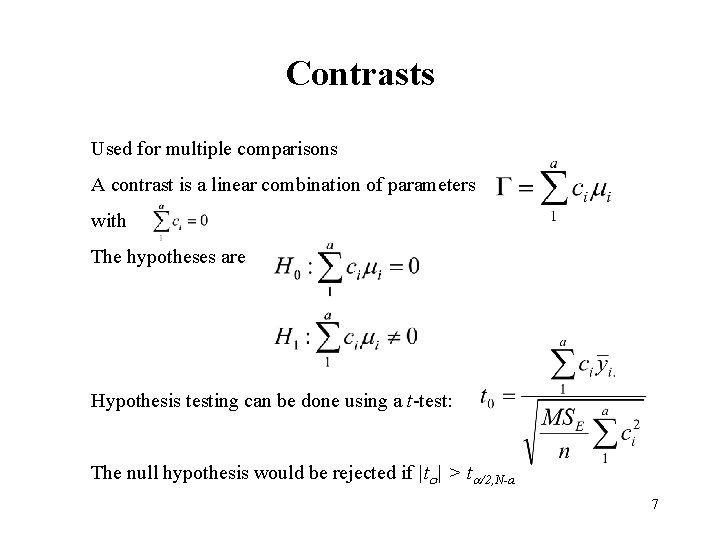

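Contrasts

Used for multiple comparisons.

A contrast is a linear combination of parameters

$$\Gamma = \sum_{i=1}^{a} c_i \mu_i \quad \text{with} \quad \sum_{i=1}^{a} c_i = 0$$

The hypotheses are

$$H_0: \sum_{i=1}^{a} c_i \mu_i = 0, \qquad H_1: \sum_{i=1}^{a} c_i \mu_i \neq 0$$

Hypothesis testing can be done using a t-test (equal sample sizes $n$):

$$t_0 = \frac{\sum_{i=1}^{a} c_i \bar{y}_{i.}}{\sqrt{\dfrac{MS_E}{n} \sum_{i=1}^{a} c_i^2}}$$

The null hypothesis would be rejected if $|t_0| > t_{\alpha/2,\, N-a}$.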

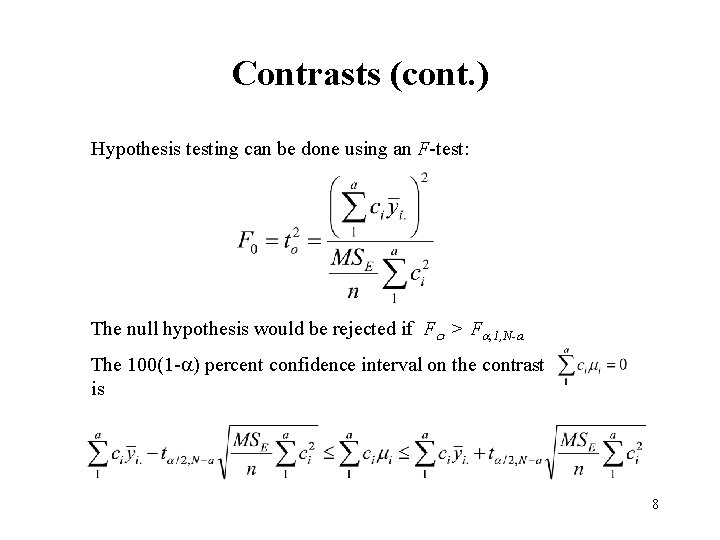

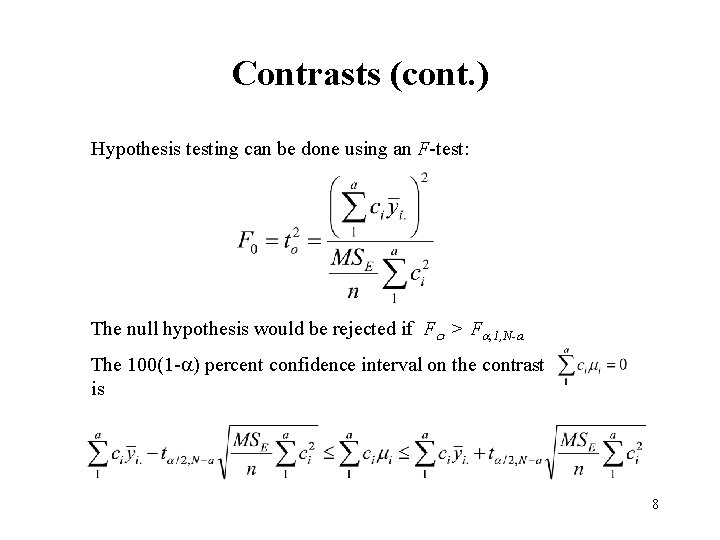
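A contrast t-test can be sketched as below. The treatment means are the ones reported in the Design-Expert output of this deck. Everything else is an assumption made for illustration: the contrast coefficients (1, 1, -1, -1) are a hypothetical choice comparing the two lower power settings with the two higher ones, $MS_E = 333.70$ is the textbook's error mean square (consistent with the reported standard error $8.17 \approx \sqrt{MS_E/5}$), and 2.120 is the standard table value of $t_{0.025,16}$.

```python
from math import sqrt

def contrast_t(means, coeffs, ms_e, n):
    """Return (estimate, standard error, t0) for a contrast with equal n."""
    if abs(sum(coeffs)) > 1e-12:
        raise ValueError("contrast coefficients must sum to zero")
    estimate = sum(c * m for c, m in zip(coeffs, means))
    std_err = sqrt(ms_e / n * sum(c * c for c in coeffs))
    return estimate, std_err, estimate / std_err

means = [551.20, 587.40, 625.40, 707.00]  # treatment means from the slides
coeffs = [1, 1, -1, -1]                   # HYPOTHETICAL contrast: low vs high power
ms_e, n = 333.70, 5                       # ASSUMPTION: textbook MS_E, n = 5 per level
t_crit = 2.120                            # t_{0.025,16} from a standard t table

estimate, std_err, t0 = contrast_t(means, coeffs, ms_e, n)
reject = abs(t0) > t_crit

print(f"contrast estimate = {estimate:.2f}")
print(f"t0 = {t0:.2f}, reject H0: {reject}")
```

The function refuses coefficient sets that do not sum to zero, since such a combination is not a contrast.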

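Contrasts (cont.)

For a contrast $\sum_{i=1}^{a} c_i \mu_i$ estimated from the treatment averages $\bar{y}_{i.}$ (equal sample sizes $n$), hypothesis testing can also be done using an F-test:

$$F_0 = t_0^2 = \frac{\left( \sum_{i=1}^{a} c_i \bar{y}_{i.} \right)^2}{\dfrac{MS_E}{n} \sum_{i=1}^{a} c_i^2}$$

The null hypothesis would be rejected if $F_0 > F_{\alpha,\, 1,\, N-a}$.

The $100(1-\alpha)$ percent confidence interval on the contrast is

$$\sum_{i=1}^{a} c_i \bar{y}_{i.} \pm t_{\alpha/2,\, N-a} \sqrt{\frac{MS_E}{n} \sum_{i=1}^{a} c_i^2}$$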

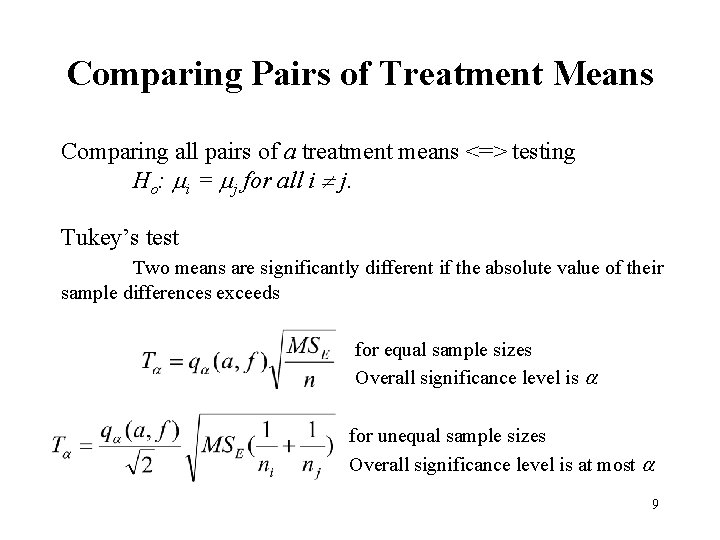

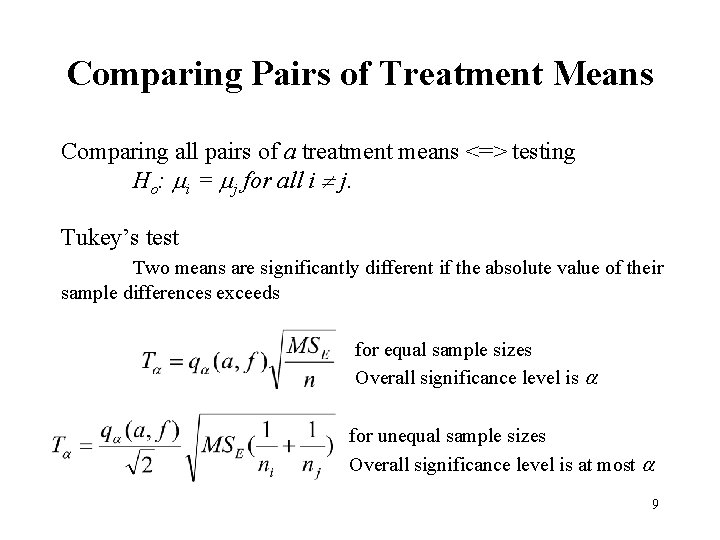

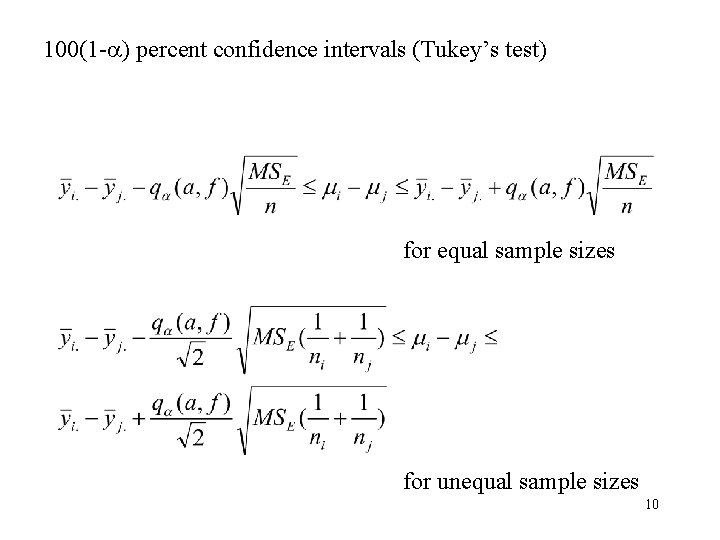
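The F statistic and the confidence interval for a contrast can be computed from the same ingredients as the t-test. The sketch below is illustrative only. The treatment means come from the Design-Expert output in this deck; the coefficients (1, 1, -1, -1) are a hypothetical contrast, $MS_E = 333.70$ is assumed from the textbook, and 2.120 is the standard table value of $t_{0.025,16}$. The F critical value is obtained from the identity $F_{\alpha,1,\nu} = t_{\alpha/2,\nu}^2$ rather than from a separate table.

```python
from math import sqrt

means = [551.20, 587.40, 625.40, 707.00]  # treatment means from the slides
coeffs = [1, 1, -1, -1]                   # HYPOTHETICAL contrast, sums to zero
ms_e, n = 333.70, 5                       # ASSUMPTION: textbook MS_E, n = 5 per level
t_crit = 2.120                            # t_{0.025,16} from a standard t table

estimate = sum(c * m for c, m in zip(coeffs, means))
std_err = sqrt(ms_e / n * sum(c * c for c in coeffs))

# F-test: F0 is the square of the t statistic for the same contrast.
f0 = estimate ** 2 / std_err ** 2
f_crit = t_crit ** 2                      # F_{0.05,1,16} = t_{0.025,16}^2
reject = f0 > f_crit

# 95 percent confidence interval on the contrast.
lower = estimate - t_crit * std_err
upper = estimate + t_crit * std_err

print(f"F0 = {f0:.2f} (critical value {f_crit:.2f}), reject H0: {reject}")
print(f"95% CI on the contrast: ({lower:.2f}, {upper:.2f})")
```

An interval that excludes zero leads to the same conclusion as rejecting the null hypothesis with the t-test or the F-test.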

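Comparing Pairs of Treatment Means

Comparing all pairs of $a$ treatment means is equivalent to testing $H_0: \mu_i = \mu_j$ for all $i \neq j$.

Tukey’s test: two means are significantly different if the absolute value of their sample difference exceeds

- for equal sample sizes (overall significance level is exactly $\alpha$):

$$T_\alpha = q_\alpha(a, f) \sqrt{\frac{MS_E}{n}}$$

- for unequal sample sizes (overall significance level is at most $\alpha$):

$$T_\alpha = \frac{q_\alpha(a, f)}{\sqrt{2}} \sqrt{MS_E \left( \frac{1}{n_i} + \frac{1}{n_j} \right)}$$

Here $f$ is the number of degrees of freedom for error.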

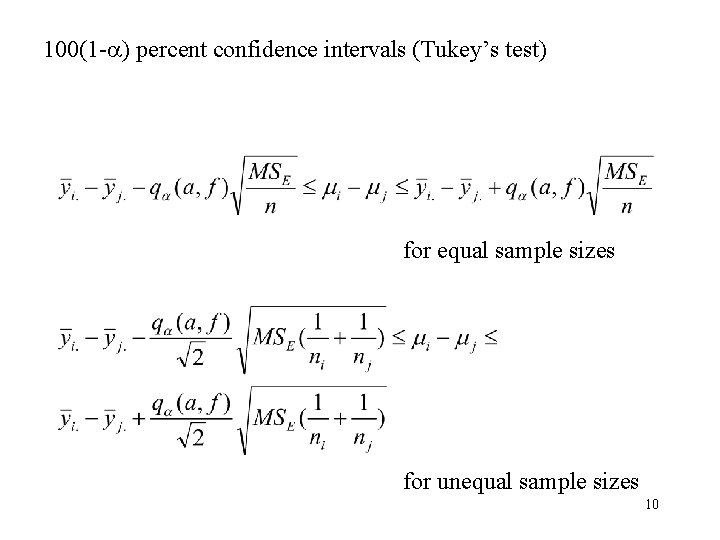

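$100(1-\alpha)$ percent confidence intervals (Tukey’s test)

- for equal sample sizes:

$$\bar{y}_{i.} - \bar{y}_{j.} \pm q_\alpha(a, f) \sqrt{\frac{MS_E}{n}}, \quad i \neq j$$

- for unequal sample sizes:

$$\bar{y}_{i.} - \bar{y}_{j.} \pm \frac{q_\alpha(a, f)}{\sqrt{2}} \sqrt{MS_E \left( \frac{1}{n_i} + \frac{1}{n_j} \right)}, \quad i \neq j$$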

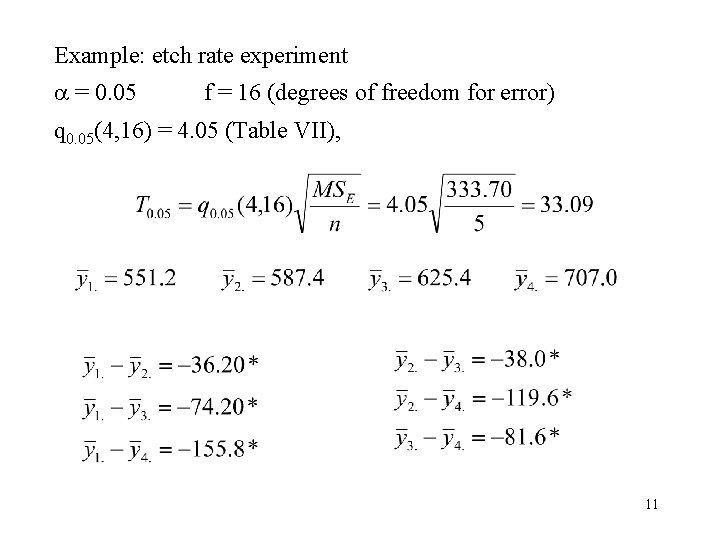

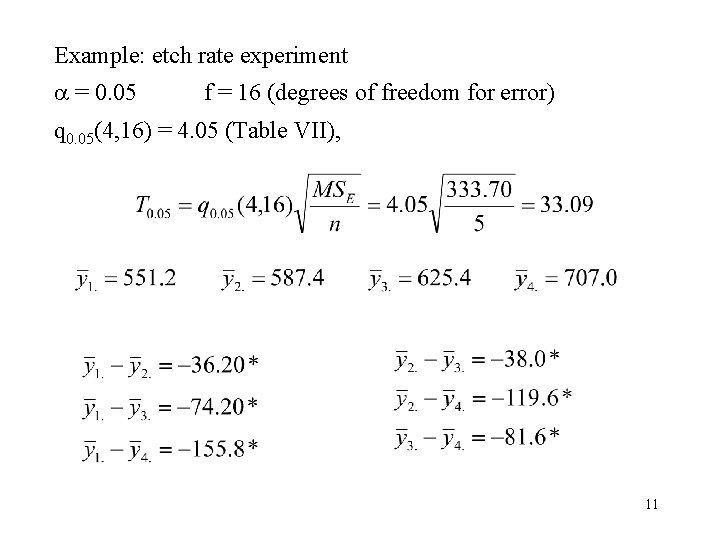

Example: etch rate experiment

$\alpha = 0.05$, $f = 16$ (degrees of freedom for error), $q_{0.05}(4, 16) = 4.05$ (Table VII)

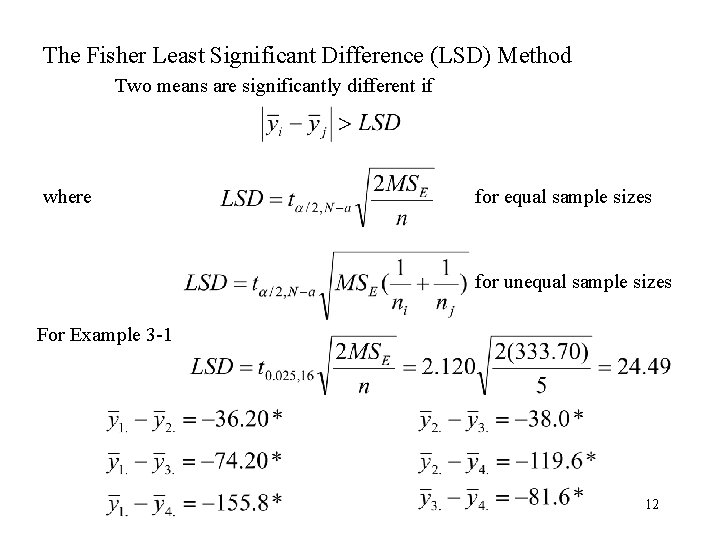

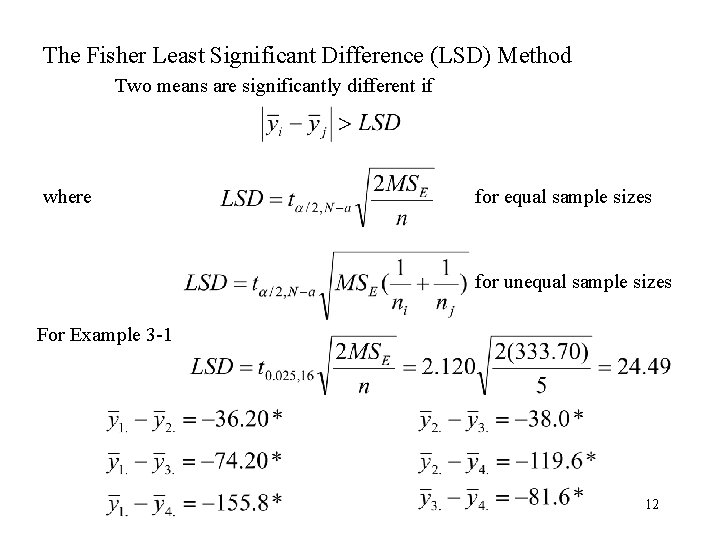
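Tukey's procedure for the etch rate example can be sketched with numbers that already appear in this deck: $q_{0.05}(4, 16) = 4.05$, the treatment means, and the standard error of a treatment mean, 8.17, from the Design-Expert output. Treating 8.17 as $\sqrt{MS_E/n}$ is an assumption of this sketch, and the variable names are illustrative.

```python
from itertools import combinations

# Treatment means by RF power level (W), from the Design-Expert output.
means = {160: 551.20, 180: 587.40, 200: 625.40, 220: 707.00}
q_crit = 4.05    # q_0.05(4, 16) from Table VII
se_mean = 8.17   # ASSUMPTION: reported standard error equals sqrt(MS_E / n)

# Critical difference for equal sample sizes: T = q * sqrt(MS_E / n).
t_alpha = q_crit * se_mean

results = []
for (level_i, mean_i), (level_j, mean_j) in combinations(means.items(), 2):
    diff = mean_i - mean_j
    results.append({
        "pair": (level_i, level_j),
        "diff": diff,
        "significant": abs(diff) > t_alpha,
        "ci": (diff - t_alpha, diff + t_alpha),  # simultaneous 95% interval
    })

print(f"T_0.05 = {t_alpha:.2f}")
for r in results:
    lo, hi = r["ci"]
    print(f"{r['pair']}: diff = {r['diff']:8.2f}, "
          f"CI = ({lo:8.2f}, {hi:8.2f}), significant: {r['significant']}")
```

Every pairwise difference exceeds the critical difference in absolute value, so under these assumptions all four power settings differ.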

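The Fisher Least Significant Difference (LSD) Method

Two means are significantly different if

$$|\bar{y}_{i.} - \bar{y}_{j.}| > \mathrm{LSD}$$

where

- for equal sample sizes:

$$\mathrm{LSD} = t_{\alpha/2,\, N-a} \sqrt{\frac{2\, MS_E}{n}}$$

- for unequal sample sizes:

$$\mathrm{LSD} = t_{\alpha/2,\, N-a} \sqrt{MS_E \left( \frac{1}{n_i} + \frac{1}{n_j} \right)}$$

For Example 3-1, the equal-sample-size form applies.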
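As a worked illustration for the etch rate data, the least significant difference at $\alpha = 0.05$ can be evaluated from the standard error of a difference reported in the Design-Expert output, 11.55, which is taken here to equal $\sqrt{2\,MS_E/n}$. The value $t_{0.025,16} = 2.120$ comes from a standard t table and is not shown on the slides.

$$\mathrm{LSD} = t_{0.025,\,16} \sqrt{\frac{2\, MS_E}{n}} \approx 2.120 \times 11.55 \approx 24.49$$

The smallest observed difference between treatment means is $|{-36.20}| = 36.20$ (1 vs 2), which exceeds 24.49, so under these assumptions every pair of means differs significantly.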

Duncan’s Multiple Range Test (page 100)

The Newman-Keuls Test (page 102)

Are they the same? Which one to use?

- Opinions differ
- The LSD method and Duncan’s multiple range test are the most powerful ones
- The Tukey method controls the overall error rate

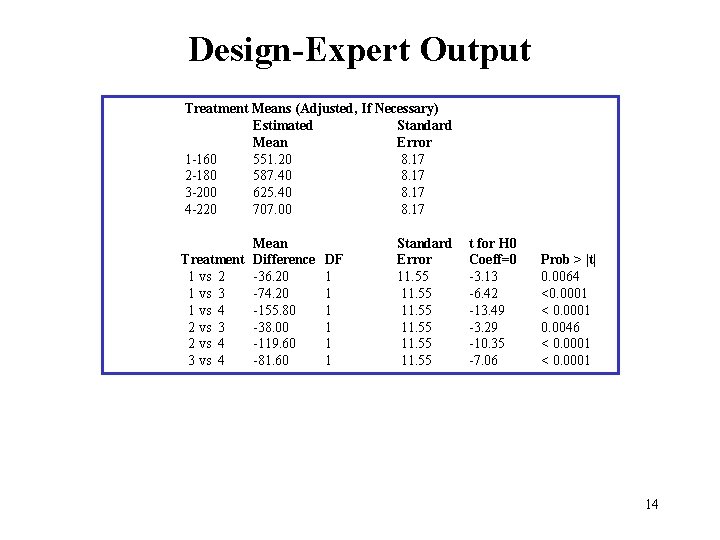

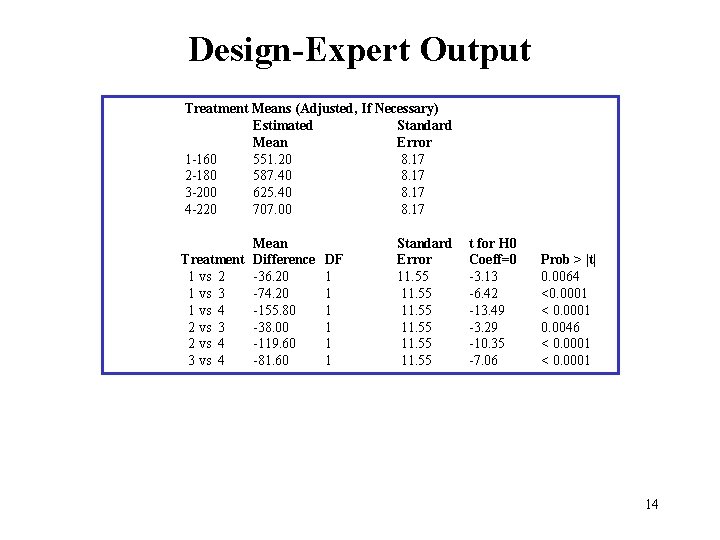

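Design-Expert Output

Treatment Means (Adjusted, If Necessary)

| Treatment | Estimated Mean | Standard Error |
|-----------|----------------|----------------|
| 1-160     | 551.20         | 8.17           |
| 2-180     | 587.40         | 8.17           |
| 3-200     | 625.40         | 8.17           |
| 4-220     | 707.00         | 8.17           |

| Treatment | Mean Difference | DF | Standard Error | t for H0 Coeff=0 | Prob > \|t\| |
|-----------|-----------------|----|----------------|------------------|--------------|
| 1 vs 2    | -36.20          | 1  | 11.55          | -3.13            | 0.0064       |
| 1 vs 3    | -74.20          | 1  | 11.55          | -6.42            | < 0.0001     |
| 1 vs 4    | -155.80         | 1  | 11.55          | -13.49           | < 0.0001     |
| 2 vs 3    | -38.00          | 1  | 11.55          | -3.29            | 0.0046       |
| 2 vs 4    | -119.60         | 1  | 11.55          | -10.35           | < 0.0001     |
| 3 vs 4    | -81.60          | 1  | 11.55          | -7.06            | < 0.0001     |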

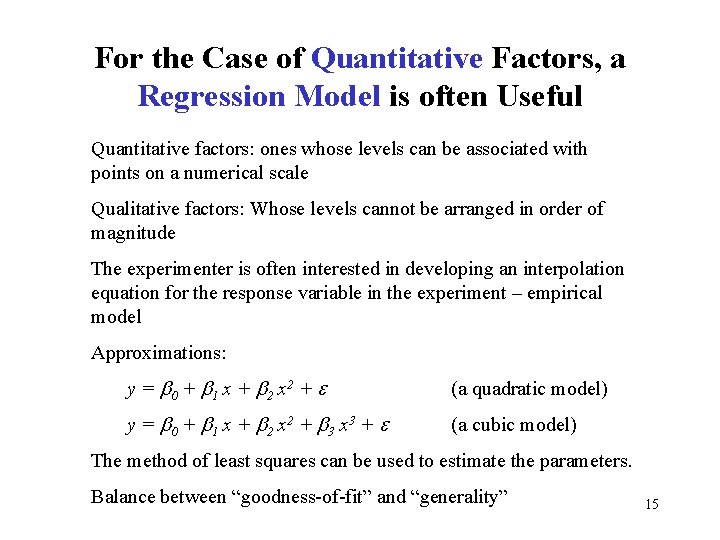

For the Case of Quantitative Factors, a Regression Model is Often Useful

- Quantitative factors: ones whose levels can be associated with points on a numerical scale
- Qualitative factors: ones whose levels cannot be arranged in order of magnitude
- The experimenter is often interested in developing an interpolation equation for the response variable in the experiment, that is, an empirical model
- Approximations:
  - $y = \beta_0 + \beta_1 x + \beta_2 x^2 + \varepsilon$ (a quadratic model)
  - $y = \beta_0 + \beta_1 x + \beta_2 x^2 + \beta_3 x^3 + \varepsilon$ (a cubic model)
- The method of least squares can be used to estimate the parameters
- Balance between “goodness-of-fit” and “generality”
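A least-squares fit of the quadratic model can be sketched with the normal equations. The example uses the four treatment means from the Design-Expert output in this deck; with equal sample sizes, fitting the means should give the same coefficients as fitting the individual observations. Two choices here are assumptions of the sketch rather than anything stated on the slides: the coded variable $z = (x - 190)/10$, used to keep the normal equations well conditioned, and the hand-written Gaussian elimination, used only to avoid external libraries.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations (X'X) b = X'y."""
    m = degree + 1
    # Augmented matrix [X'X | X'y]; entry (r, c) of X'X is sum of x^(r+c).
    aug = [[sum(x ** (r + c) for x in xs) for c in range(m)]
           + [sum(y * x ** r for x, y in zip(xs, ys))]
           for r in range(m)]
    # Gaussian elimination with partial pivoting.
    for col in range(m):
        pivot = max(range(col, m), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, m):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, m + 1):
                aug[r][c] -= factor * aug[col][c]
    # Back substitution.
    beta = [0.0] * m
    for r in reversed(range(m)):
        tail = sum(aug[r][c] * beta[c] for c in range(r + 1, m))
        beta[r] = (aug[r][m] - tail) / aug[r][r]
    return beta

power = [160, 180, 200, 220]                  # RF power levels (W)
mean_etch = [551.20, 587.40, 625.40, 707.00]  # treatment means from the slides
z = [(p - 190) / 10 for p in power]           # coded levels: -3, -1, 1, 3

b0, b1, b2 = fit_polynomial(z, mean_etch, 2)
print(f"y_hat = {b0:.4f} + {b1:.4f} z + {b2:.4f} z^2, with z = (x - 190) / 10")

# Interpolated prediction at an untested power setting.
x_new = 190
z_new = (x_new - 190) / 10
print(f"predicted etch rate at {x_new} W: {b0 + b1 * z_new + b2 * z_new ** 2:.1f}")
```

Raising `degree` to 3 fits the cubic model, which passes exactly through the four means; that is the trade-off between goodness-of-fit and generality noted above.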